U.S. Pat. No. 9,805,196

TRUSTED ENTITY BASED ANTI-CHEATING MECHANISM

Assignee: Microsoft Technology Licensing LLC

Issue Date: February 27, 2009


Abstract

An anti-cheating system may comprise a combination of a modified environment, such as a modified operating system, in conjunction with a trusted external entity that verifies that the modified environment is running on a particular device. The modified environment may be modified in a particular manner to create a restricted environment as compared with the original environment it replaces. The modifications may comprise alterations to the original environment to, for example, detect and/or prevent changes to the hardware and/or software intended to allow cheating or other undesirable user behavior.

Description

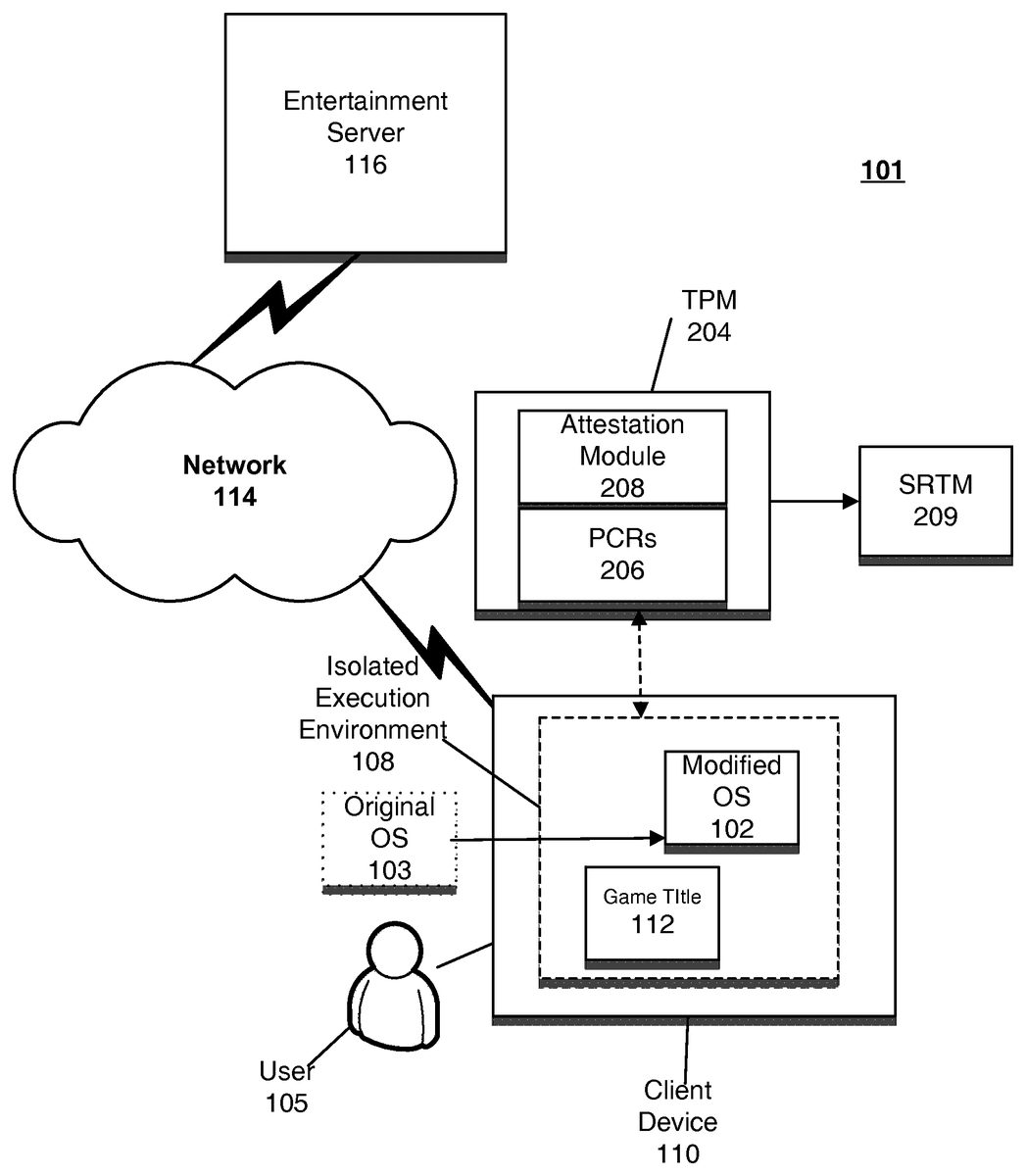


DETAILED DESCRIPTION OF ILLUSTRATIVE EMBODIMENTS

FIG. 1a depicts an anti-cheating system according to one embodiment. According to one embodiment, anti-cheating system 101 may comprise a combination of a purposely engineered modified environment, such as modified operating system 102 on client device 110, and the operations of trusted external entity 104, which verifies that the modified environment (e.g., modified operating system 102) is actually running on client device 110. The modified environment, e.g., modified operating system 102, may be modified in a particular manner to create a restricted environment as compared with original operating system 103, which it has replaced. According to one embodiment, the modifications in modified operating system 102 may comprise alterations to original operating system 103 to prevent cheating, for example, modifications that prevent cheating behavior by user 105.

Modified operating system 102 may create isolated execution environment 108 on client device 110. Isolated execution environment 108 may fall anywhere on a spectrum ranging from requiring that all software be directly signed by a single vendor to a more flexible system in which third-party software can become certified, by satisfying certain restrictive requirements, and thereby be allowed to run in the isolated execution environment. According to one embodiment, a TPM (trusted platform module) may serve as a root of trust, and isolated execution environment 108 may be established primarily through code measurements and the unlocking of secrets only if the code measures correctly.

Isolated execution environment 108 may comprise an environment in which the software running on client device 110 can be controlled and identified. In a fully isolated environment, an attacker cannot run any code on client device 110 and must resort to more costly and cumbersome hardware-based attacks. External entity 104 may verify that isolated execution environment 108 is installed and intact on client device 110 by performing monitoring functions on client device 110 that confirm isolated execution environment 108 is operating, i.e., that modified operating system 102 is installed and executing.

Referring again to FIG. 1a, user 105 may utilize client device 110 to play games such as game title 112 or to run other entertainment software. User 105 may utilize client device 110 in both an online and an offline mode. While interacting with game title 112 in either mode, user 105 may garner achievement awards or other representations of game play. Achievement awards may represent, for example, a level obtained, the number of enemies overcome, etc. In addition, user 105 may set various parameters of game title 112 that control its features. Features may comprise various functions related to game play, such as difficulty levels.

During an online mode, user 105 may interact with other players (not shown in FIG. 1a) in multiplayer game play. In particular, client device 110 may be coupled to entertainment server 116 via network 114 to allow user 105 to interact with other users (not shown) in multiplayer game play. During a multiplayer game session, user 105 may enjoy various features of game title 112 that affect game play, such as whether the user's player is invisible or has certain invulnerabilities.

User 105 may also interact with client device 110 in an offline mode, for example, during single-player game play. Subsequently, user 105 may cause client device 110 to go online. After this transition from offline to online mode, achievements that user 105 garnered during offline game play may be represented to other players who are online.

According to one embodiment, a pre-existing or original environment on client device 110, e.g., original operating system 103, may be replaced by modified operating system 102, which may then support running game title 112, but only in a restricted manner. Modified operating system 102 may be, for example, an operating system that hosts entertainment or gaming software but restricts user 105 from engaging in particular undesirable cheating behavior.

According to one embodiment, modified operating system 102 may be an operating system that creates a restricted environment with respect to running game titles, e.g., game title 112. That is, modified operating system 102 may be engineered from original operating system 103 in such a way as to restrict user 105 from performing certain cheating behavior.

For example, original operating system 103 may allow the installation of any device driver without restriction, so long as the driver has been signed by a key certified by one of several root certificate authorities. Modified operating system 102 may be modified to prevent certain types of cheating behavior by requiring every device driver to be signed by a particular key of a centralized authority, and may further prohibit a local administrator from updating device drivers.
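The difference between the two driver policies can be sketched as a load-time and update-time check. This is a minimal sketch under stated assumptions, not the patent's implementation: the `Driver` record, the root and key names, and the `can_load` and `can_update_driver` helpers are all hypothetical, and cryptographic signature verification is assumed to have already succeeded and been reduced to a signer identity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Driver:
    name: str
    signer_key: str      # key that signed the driver (hypothetical identifier)
    signer_root_ca: str  # root authority that certified that key (hypothetical)


# Hypothetical trust configuration for the two OS variants.
ORIGINAL_TRUSTED_ROOTS = {"RootCA-A", "RootCA-B", "RootCA-C"}
CENTRAL_AUTHORITY_KEY = "central-authority-key"


def can_load(driver: Driver, modified_os: bool) -> bool:
    """Return True if the given OS variant would allow this driver to load."""
    if modified_os:
        # Modified OS 102: only drivers signed by the single central key.
        return driver.signer_key == CENTRAL_AUTHORITY_KEY
    # Original OS 103: a key certified by any of several roots is enough.
    return driver.signer_root_ca in ORIGINAL_TRUSTED_ROOTS


def can_update_driver(requested_by_local_admin: bool, modified_os: bool) -> bool:
    """Modified OS 102 refuses driver updates initiated by a local administrator."""
    return not (modified_os and requested_by_local_admin)


# A driver signed by a third-party key under a recognized root.
overlay = Driver("overlay.sys", "vendor-x-key", "RootCA-B")
print(can_load(overlay, modified_os=False))  # True
print(can_load(overlay, modified_os=True))   # False
```

The design choice is to narrow trust from "any certified key" to one named key, which shrinks the set of signers an attacker could abuse to load a cheating driver.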

Trusted external entity 104 may perform functions with respect to client device 110 to verify that modified operating system 102 is actually installed and executing on client device 110. Trusted external entity 104 is trusted in the sense that its operations are trusted to a higher degree than the entity it is verifying, i.e., modified operating system 102. That is, the operations of trusted external entity 104 are trusted to a much greater degree than user 105's own representation that modified operating system 102 has been installed.

FIG. 1b is a flowchart depicting an operation of an anti-cheating process according to one embodiment. The process is initiated in step 120. In step 124, an original operating system is modified to create an isolated execution environment, which may be a restricted execution environment that precludes a user from engaging in various cheating behaviors. In step 126, a trusted external entity performs a verification to determine whether the modified operating system is in fact installed and running on a particular client device. The process ends in step 128.

FIG. 2 is a block diagram of a trusted platform module and a static root of trust module for performing an anti-cheating function. In particular, as shown in FIG. 2, trusted external entity 104 of FIG. 1a has been replaced by TPM 204, which performs its functions. According to one embodiment, TPM 204 may perform an attestation function or operation.

In particular, TPM 204 may perform an attestation function via attestation module 208. Attestation refers to a process of vouching for the accuracy of information, here the installation and execution of modified operating system 102 on client device 110. TPM 204 may attest, for example, to the boot environment and to the specific boot chain that was loaded onto client device 110.

Attestation allows changes to the software running on the client device; however, all such changes are measured, allowing an authorization decision to release secrets or to allow access to network functionality. Attestation may be achieved by having hardware associated with client device 110 generate a certificate stating what software is currently running, e.g., modified operating system 102. Client device 110 may then present this certificate to a remote party, such as entertainment server 116, to show that its software, e.g., modified operating system 102, is intact on client device 110 and has not been tampered with. Attestation may, although need not, be combined with encryption techniques, such as public-key encryption, so that the information sent can be read only by the programs that presented and requested the attestation, and not by an eavesdropper. Such encryption techniques can protect both the privacy of the user and the confidentiality of the protocol used by the system.
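A nonce-based challenge is one common way to make such a certificate fresh, so that a quote captured while the modified operating system was running cannot be replayed later. The sketch below is illustrative only and assumes a pre-shared device key: a real TPM signs quotes with an asymmetric attestation key, and HMAC-SHA-256 stands in for that signature here so the example runs with the Python standard library. `make_quote` and `verify_quote` are hypothetical names.

```python
import hashlib
import hmac
import os

# Stand-in for the device's attestation key (assumption: shared with the verifier).
DEVICE_ATTESTATION_KEY = os.urandom(32)


def make_quote(key: bytes, measurement: bytes, nonce: bytes) -> bytes:
    """Device side: bind the current 32-byte software measurement to the server's nonce."""
    return hmac.new(key, measurement + nonce, hashlib.sha256).digest()


def verify_quote(key: bytes, quote: bytes, reported: bytes,
                 nonce: bytes, approved: bytes) -> bool:
    """Server side: the quote must be authentic, fresh, and name the approved software."""
    expected = hmac.new(key, reported + nonce, hashlib.sha256).digest()
    return hmac.compare_digest(quote, expected) and hmac.compare_digest(reported, approved)


modified_os = hashlib.sha256(b"modified operating system 102").digest()
original_os = hashlib.sha256(b"original operating system 103").digest()

nonce = os.urandom(16)  # fresh for every challenge
quote = make_quote(DEVICE_ATTESTATION_KEY, modified_os, nonce)
print(verify_quote(DEVICE_ATTESTATION_KEY, quote, modified_os, nonce, modified_os))  # True
```

A device running the original operating system produces a quote over a different measurement, so it fails the `approved` comparison even though the quote itself is authentic.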

According to one embodiment, attestation may be applied in the context of anti-cheating to determine whether a user is running a particular version of a system or operating system. That is, attestation may be performed by attestation module 208 on TPM 204 to verify that modified operating system 102 has been installed and is executing on client device 110. For example, it may be desirable to force user 105 to run modified operating system 102 rather than original operating system 103, because the modified version may itself have enhanced security and anti-cheating protection. That is, as described with respect to FIG. 1a, modified operating system 102 may comprise a restricted environment represented by isolated execution environment 108.

However, user 105 may attempt to cheat by pretending to run modified operating system 102 while actually running an old version of the operating system, i.e., original operating system 103. This might be accomplished by gleaning certain bits from the new version (modified operating system 102) while running the old version (original operating system 103), thereby falsely representing that user 105 is running the new version. Such techniques are common today: after a patch is made to a breached DRM system, the attacker either extracts the new secret data from the patched system and places it into the old breached system, falsely making the old breached system appear to be the new patched one, or applies the same attack, or a slightly modified form of it, to the new patched system, creating a new breached system. In both cases the attacker is not running the newly patched DRM system. Making either of those actions more difficult for the attacker represents the majority of the work in releasing a patch: the developer must keep in mind both how easily the new secrets can be extracted and how easily the same class of attack can be applied to the new patched system. Thus, it may be desirable to have TPM 204 attest to the fact that user 105 is running modified operating system 102 rather than original operating system 103 or a combination of both. Alternatively, the desired component may be a hardware component. In this case, it may be desirable for a trusted component to attest to the fact that a user has the particular hardware component. An additional alternative is for the hardware component to directly enforce restrictions on what software can run, preventing direct modification of the environment.
A further alternative is for the hardware component both to enforce restrictions on what software can load and to measure and provide attestations of the software that did load. Likewise, a hypervisor can perform either or both of these actions.

TPM 204 may comprise a secure cryptoprocessor (not shown in FIG. 2) that may store cryptographic keys that protect information. TPM 204 may provide facilities for the secure generation of cryptographic keys and limitation of their use, in addition to a hardware pseudo-random number generator. Furthermore, as described, TPM 204 may also provide capabilities such as attestation and sealed storage. Attestation may be achieved by TPM 204 creating a nearly unforgeable hash key summary of a hardware and software configuration, a hardware configuration alone, or a software configuration alone, such as the configuration of client device 110 (e.g., modified operating system 102 and game title 112). According to one embodiment, the unforgeable hash key summaries may be stored in platform configuration registers (“PCRs”) 206.
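The "nearly unforgeable" property comes from the fact that software cannot write a PCR directly; it can only extend it, folding a new measurement into the previous value. The sketch below models that operation in the style of a TPM 2.0 SHA-256 PCR bank; the component byte strings and the helper names are placeholders, not part of the patent.

```python
import hashlib

PCR_SIZE = 32  # bytes in a SHA-256 PCR bank


def extend(pcr: bytes, component: bytes) -> bytes:
    """TPM-style extend: new PCR = SHA-256(old PCR || SHA-256(component))."""
    measurement = hashlib.sha256(component).digest()
    return hashlib.sha256(pcr + measurement).digest()


def measure_boot_chain(components: list[bytes]) -> bytes:
    """Measure each stage in load order, starting from the all-zero reset value."""
    pcr = bytes(PCR_SIZE)
    for component in components:
        pcr = extend(pcr, component)
    return pcr


# Placeholder stand-ins for the binaries in each boot chain.
modified_chain = [b"bootloader", b"modified OS 102 kernel", b"game title 112"]
original_chain = [b"bootloader", b"original OS 103 kernel", b"game title 112"]

print(measure_boot_chain(modified_chain) == measure_boot_chain(original_chain))  # False
```

Because each value depends on every earlier measurement and on their order, copying secret data from the new version into the old one (the attack described above) does not change the old kernel's measurement.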

A third party such as entertainment server 116 may then use that attestation to verify that software such as modified operating system 102 is intact on client device 110 and has not been changed, for example as described with respect to FIG. 1a. TPM 204 may then perform a sealing process, which encrypts data in such a way that it may be decrypted only if TPM 204 releases the associated decryption key, which it does only for software that has the correct identity as measured into the PCRs of TPM 204. By specifying during the sealing process which software may unseal the secrets, entertainment server 116 can allow offline use of those secrets while still maintaining the protections.
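Sealing can be modeled as encryption under a key only the TPM holds, plus a policy check against the current PCR before decryption. The following toy model is an assumption-laden sketch, not a real TPM interface and not secure cryptography: `ToyTPM`, `SealedBlob`, and the SHA-256 counter-mode keystream are illustrative stand-ins (a real TPM uses its own storage hierarchy and standard ciphers), and the PCR is assigned directly rather than extended.

```python
import hashlib
import hmac
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class SealedBlob:
    policy_pcr: bytes  # 32-byte PCR value that must be current to unseal
    nonce: bytes       # 16 bytes, fresh per seal
    ciphertext: bytes
    tag: bytes         # MAC binding the policy to the ciphertext


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy SHA-256 counter-mode keystream (stand-in for the TPM's real cipher)."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(4, "big")).digest()
        counter += 1
    return out[:length]


def _xor(data: bytes, stream: bytes) -> bytes:
    return bytes(a ^ b for a, b in zip(data, stream))


class ToyTPM:
    def __init__(self) -> None:
        root = os.urandom(32)  # storage root secret; never leaves the "chip"
        self._enc_key = hmac.new(root, b"enc", hashlib.sha256).digest()
        self._mac_key = hmac.new(root, b"mac", hashlib.sha256).digest()
        self.pcr = bytes(32)   # assigned directly here in place of extend()

    def _tag(self, policy_pcr: bytes, nonce: bytes, ciphertext: bytes) -> bytes:
        return hmac.new(self._mac_key, policy_pcr + nonce + ciphertext, hashlib.sha256).digest()

    def seal(self, secret: bytes, policy_pcr: bytes) -> SealedBlob:
        nonce = os.urandom(16)
        ciphertext = _xor(secret, _keystream(self._enc_key, nonce, len(secret)))
        return SealedBlob(policy_pcr, nonce, ciphertext, self._tag(policy_pcr, nonce, ciphertext))

    def unseal(self, blob: SealedBlob) -> bytes:
        if not hmac.compare_digest(self._tag(blob.policy_pcr, blob.nonce, blob.ciphertext), blob.tag):
            raise ValueError("sealed blob was modified")
        if not hmac.compare_digest(self.pcr, blob.policy_pcr):
            raise PermissionError("current PCR does not match the sealing policy")
        return _xor(blob.ciphertext, _keystream(self._enc_key, blob.nonce, len(blob.ciphertext)))


tpm = ToyTPM()
approved = hashlib.sha256(b"modified OS 102 boot chain").digest()  # placeholder PCR value
blob = tpm.seal(b"offline license key", approved)
tpm.pcr = approved
print(tpm.unseal(blob))  # b'offline license key'
```

The MAC covers the policy as well as the ciphertext, so an attacker holding the blob offline cannot relax the policy to match the original operating system's measurement.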

While SRTM 209 provides strong measurement, it may become cumbersome given the number of individual binaries in a modern operating system. To address this issue, TPM 204 may perform measurement up to a predetermined point, after which the security and integrity of the remainder of the system may be managed through another mechanism, such as code integrity, disk integrity, or another integrity-based mechanism (described below).

FIG. 3a depicts a code integrity operation according to one embodiment. Code integrity may require that any binary loaded be cryptographically signed by a trusted root authority. Thus, as depicted in FIG. 3a, binary files 304(1)-304(N) as well as OS kernel 302 are cryptographically signed, i.e., exist in cryptographically secure environment 315. Before files 304(1)-304(N) and 302 can be utilized, they must be verified by trusted root authority 306.
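One way to realize such a check is a signed catalog of approved binary hashes that the loader consults before loading any file. This is a hedged sketch, not the patent's mechanism: the catalog format, key, and function names are hypothetical, and HMAC-SHA-256 stands in for the public-key signature a real trusted root authority would use (with HMAC the verifier holds the signing key, which a real design must avoid).

```python
import hashlib
import hmac

# Hypothetical root-authority key; HMAC stands in for a public-key signature.
ROOT_AUTHORITY_KEY = b"trusted-root-authority-306-demo"


def _catalog_bytes(catalog: frozenset[str]) -> bytes:
    return "\n".join(sorted(catalog)).encode()


def sign_catalog(key: bytes, binaries: list[bytes]) -> tuple[frozenset[str], bytes]:
    """Authority side: list the SHA-256 of each approved binary and sign the list."""
    catalog = frozenset(hashlib.sha256(b).hexdigest() for b in binaries)
    return catalog, hmac.new(key, _catalog_bytes(catalog), hashlib.sha256).digest()


def may_load(binary: bytes, catalog: frozenset[str], signature: bytes, key: bytes) -> bool:
    """Loader side: the catalog must be authentic and must list this exact binary."""
    expected = hmac.new(key, _catalog_bytes(catalog), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, signature):
        return False
    return hashlib.sha256(binary).hexdigest() in catalog


kernel = b"OS kernel 302"
driver = b"binary file 304(1)"
catalog, sig = sign_catalog(ROOT_AUTHORITY_KEY, [kernel, driver])
print(may_load(kernel, catalog, sig, ROOT_AUTHORITY_KEY))             # True
print(may_load(b"patched kernel", catalog, sig, ROOT_AUTHORITY_KEY))  # False
```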

For example, some operating systems may include a code integrity mechanism for kernel mode components. According to one embodiment, this existing mechanism may be leveraged by measuring the responsible component (and the contents of the boot path up to that point) and then inferring the security of the operating system kernel. According to one embodiment, the binary signature may be rooted to a new key to limit the set of drivers that can load. In addition, an extension may be grafted onto this system to require that user mode binaries possess the same signatures. The use of code integrity may nevertheless still leave a set of non-binary files that are essential to security such as the registry or other configuration files.

According to one embodiment, a disk integrity mechanism may provide a method to patch those remaining holes. FIG. 3b depicts an operation of a disk integrity mechanism according to one embodiment. Disk integrity may attempt to ensure an isolated execution environment through control of the persisted media, such as the hard disk. Thus, as depicted in FIG. 3b, integrity-protected disk files 320(1)-320(N) in environment 324 are integrity checked using module 322 before they can be utilized. By assuring that data read from the disk is cryptographically protected (e.g., digitally signed), the difficulty of injecting attacking software into the system may be increased, making it particularly difficult to persist an attack once it is established.

According to the disk integrity model, files on a partition, e.g., files 320(1)-320(N), may be signed by a root key. This may require that the disk be effectively read-only, since files cannot be signed on the client. The disk integrity model may address a vulnerability of code integrity by ensuring that data, and not only code, is signed.
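The patent describes signing files on a read-only partition with a root key. A Merkle hash tree, as used by Linux dm-verity, is one established way to do this at block granularity with a single signature over the root hash; it is a swapped-in technique for illustration, not something the patent specifies. In the sketch, signing of the root is assumed and omitted, and the block contents are placeholders.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_tree(blocks: list[bytes]) -> list[list[bytes]]:
    """Return hash levels, leaves first; levels[-1][0] is the root. Needs >= 1 block."""
    level = [_h(b"\x00" + block) for block in blocks]  # 0x00 prefix marks leaves
    levels = [level]
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 else level  # pair odd node with itself
        level = [_h(b"\x01" + padded[i] + padded[i + 1]) for i in range(0, len(padded), 2)]
        levels.append(level)
    return levels


def proof(levels: list[list[bytes]], index: int) -> list[bytes]:
    """Sibling hashes from the leaf at `index` up to (not including) the root."""
    path = []
    for level in levels[:-1]:
        sibling = index ^ 1
        path.append(level[sibling] if sibling < len(level) else level[index])
        index //= 2
    return path


def verify_block(block: bytes, index: int, path: list[bytes], root: bytes) -> bool:
    """Recompute the root from one block read off disk; any modification changes it."""
    node = _h(b"\x00" + block)
    for sibling in path:
        if index & 1:
            node = _h(b"\x01" + sibling + node)
        else:
            node = _h(b"\x01" + node + sibling)
        index //= 2
    return node == root


disk = [b"registry hive", b"config.ini", b"game.exe"]  # placeholder file contents
levels = build_tree(disk)
root = levels[-1][0]  # in practice this root would be signed by the root key
print(verify_block(b"config.ini", 1, proof(levels, 1), root))  # True
print(verify_block(b"god_mode=1", 1, proof(levels, 1), root))  # False
```

Because the client cannot produce a valid signature over a new root, any write to the partition invalidates it, which is consistent with the requirement that the disk be effectively read-only.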

According to an alternative embodiment, a proxy execution mechanism may be employed. Proxy execution may refer to running code somewhere other than the main central processing unit (“CPU”). For example, code may be run on a security processor, which may be more tamper and snooping resistant than the main CPU. In this manner, the hardware may be leveraged by moving the execution of certain parts of the code to a security processor in various ways.

According to one embodiment, proxy execution may be further strengthened by requiring that the channel between the CPU and the security processor be licensed. In particular, FIG. 4a depicts a proxy execution process according to one embodiment. Code 406 may be executed on security processor 404. Security processor 404 may communicate with CPU 402 via licensed channel 410.

According to one embodiment, a proxy execution mechanism may be used to tie the security processor to a particular SRTM code measurement and, if the license expires periodically, may be used to force rechecks of the system as a form of recovery. According to this embodiment, all communication between the security processor and the CPU (other than the communication needed to establish a channel) may require knowledge of a session key. To establish this key, the security processor may directly check the attestation from the TPM if it has that capability. If it does not, or if more flexibility in versioning is required, a trusted third party such as entertainment server 116 can negotiate between the TPM and the security processor: the entertainment server checks the attestation from the TPM and then provides proofs and key material that allow a cryptographically protected channel to be established between the security processor and the TPM. The TPM can then provide those secrets to the CPU on subsequent reboots of the device, in the same way that the TPM protects all secrets to authorized PCR values.
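A minimal sketch of such a licensed channel follows, assuming a symmetric issuer key pre-provisioned to the security processor. `ChannelLicense`, `issue_license`, and `SecurityProcessor` are hypothetical names; HMAC-SHA-256 stands in for whatever authentication a real design would use, and the session key travels in the clear inside the license object only for brevity (in practice it would be wrapped for the security processor and sealed by the TPM to the authorized PCR values for the CPU).

```python
import hashlib
import hmac
import os
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChannelLicense:
    pcr: bytes          # 32-byte SRTM measurement the license is bound to
    expires_at: int     # expiry time in seconds; forces periodic re-attestation
    session_key: bytes  # 32-byte key the CPU must use on every request
    tag: bytes          # issuer's MAC over the fields above


def _payload(pcr: bytes, expires_at: int, session_key: bytes) -> bytes:
    return pcr + expires_at.to_bytes(8, "big") + session_key


def issue_license(issuer_key: bytes, attested_pcr: bytes, approved_pcr: bytes,
                  lifetime_s: int, now: int) -> ChannelLicense:
    """Entertainment-server side: license only an attested, approved measurement."""
    if not hmac.compare_digest(attested_pcr, approved_pcr):
        raise PermissionError("attestation does not match the approved measurement")
    session_key = os.urandom(32)
    expires_at = now + lifetime_s
    tag = hmac.new(issuer_key, _payload(attested_pcr, expires_at, session_key),
                   hashlib.sha256).digest()
    return ChannelLicense(attested_pcr, expires_at, session_key, tag)


class SecurityProcessor:
    def __init__(self, issuer_key: bytes) -> None:
        self._issuer_key = issuer_key
        self._license: Optional[ChannelLicense] = None

    def install(self, lic: ChannelLicense) -> None:
        expected = hmac.new(self._issuer_key, _payload(lic.pcr, lic.expires_at, lic.session_key),
                            hashlib.sha256).digest()
        if not hmac.compare_digest(expected, lic.tag):
            raise ValueError("license was not issued by the trusted third party")
        self._license = lic

    def execute(self, request: bytes, mac: bytes, now: int) -> bytes:
        lic = self._license
        if lic is None or now >= lic.expires_at:
            raise PermissionError("no valid license; re-attestation required")
        expected = hmac.new(lic.session_key, request, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, mac):
            raise PermissionError("request not authenticated with the session key")
        return hashlib.sha256(b"result:" + request).digest()  # stand-in for running code 406


issuer_key = os.urandom(32)  # assumption: provisioned to the security processor
approved = hashlib.sha256(b"approved SRTM measurement").digest()
sp = SecurityProcessor(issuer_key)
lic = issue_license(issuer_key, approved, approved, lifetime_s=3600, now=0)
sp.install(lic)
request = b"compute move"
mac = hmac.new(lic.session_key, request, hashlib.sha256).digest()  # CPU side
sp.execute(request, mac, now=10)  # accepted; at now=3600 it would be refused
```

Letting the license expire is what turns the channel into a recovery mechanism: once it lapses, the CPU can no longer reach code 406 until the system is re-attested.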

According to an alternative embodiment, an individualization mechanism may also be employed. Individualization may refer to a process of building and delivering code that is bound to a single machine or user. For example, necessary code for the operation of the applications may be built (either on-demand or in advance and pooled) on a server and downloaded to the client as part of an activation process.

According to one embodiment, individualization may attempt to mitigate “Break Once Run Everywhere” breaches by making the attacker perform substantial work for each machine on which he desires to use the pirated content. Since the binaries produced by the individualization process are unique (or at least “mostly unique”), it becomes more difficult to distribute a simple patcher that can compromise all machines.

Individualization may require some form of strong identity; for machine binding, this would be a hardware identifier. In traditional DRM systems this identifier has been derived by combining the IDs of various pieces of hardware in the system. According to one embodiment, the security processor may be leveraged to provide a unique and strong hardware identifier.
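The traditional combined-identifier approach, and a simple form of machine binding built on it, can be sketched as follows. This is illustrative only: the component ID strings, `SERVER_SECRET`, and the code template are hypothetical, and embedding a per-machine key is a deliberately simplified stand-in for real individualization, which would typically also diversify the code itself. A security-processor-backed identifier would replace `hardware_id`.

```python
import hashlib
import hmac


def hardware_id(component_ids: list[str]) -> str:
    """Traditional approach: combine the IDs of several hardware parts (order-independent)."""
    canonical = "\n".join(sorted(component_ids)).encode()
    return hashlib.sha256(canonical).hexdigest()


def individualize(server_secret: bytes, hw_id: str, code_template: bytes) -> bytes:
    """Server side: emit a per-machine build by embedding a key derived from the hardware ID."""
    machine_key = hmac.new(server_secret, hw_id.encode(), hashlib.sha256).hexdigest()
    return code_template + b"|machine_key=" + machine_key.encode()


SERVER_SECRET = b"activation-server-secret"  # hypothetical
template = b"license_check_routine()"       # placeholder for the code being individualized

machine_a = hardware_id(["cpu:1234", "disk:ABCD", "nic:00-11-22"])
machine_b = hardware_id(["cpu:9999", "disk:ABCD", "nic:00-11-22"])
print(individualize(SERVER_SECRET, machine_a, template)
      != individualize(SERVER_SECRET, machine_b, template))  # True
```

Because each machine receives a different build, a patch crafted against one machine's binary no longer applies byte-for-byte to another's, which is the "Break Once Run Everywhere" mitigation described above.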

In certain systems, such as those with a poor isolation environment, a method for detecting cheating (and, to a lesser degree, piracy) may employ a watchdog process that responds to challenges from entertainment server 116. The data collected by entertainment server 116 may later be used to determine whether a machine has been compromised, and if so, the machine can be banned from entertainment server 116 and prevented from acquiring additional licenses for content.

In one embodiment the watchdog process may itself be integrity protected by the same mechanisms which protect the operating system or execution environment as described in previous embodiments above.

FIG. 4b depicts an operation of a watchdog process according to one embodiment. As depicted in FIG. 4b, anti-cheating system 101 has been further adapted to include watchdog process 430 on client device 110. Entertainment server 116 may generate security challenge 432, which is transmitted over network 114 and received by watchdog process 430. Watchdog process 430 in turn may generate response 434, which it may then transmit over network 114 to entertainment server 116. In addition to executing within modified operating system 102, watchdog process 430 may also execute in a hypervisor.

Implementation of watchdog process 430 may require enforcement of several criteria. First, it should not be easy to create a codebook of responses to these challenges, which means that the set of challenges should be large and the correct answers must not be obviously available to the client. Second, watchdog process 430 may require sufficient agility that new challenges can be added at will. In the worst case, an attacker could set up a clean system somewhere on the Internet so that clients' systems running attack software could use it as an oracle. To prevent this, encryption may be applied to the channel between entertainment server 116 and watchdog process 430 (to prevent trivial snooping and man-in-the-middle attacks), and responses may potentially be tied in some way to a security processor, machine identity, or other security hardware, such as a TPM.
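A challenge-response of the kind described might look like the following sketch, in which the server asks the watchdog for a keyed hash over a randomly chosen region of a known image. The region-hashing scheme, the per-device key, and all names are hypothetical illustrations of the criteria above, not the patent's protocol; channel encryption is omitted. Binding the response to a per-device key is what defeats the clean-oracle attack: a clean machine with a different key produces answers the server rejects.

```python
import hashlib
import hmac
import os
import secrets


def make_challenge(image_size: int, length: int = 64) -> tuple[bytes, int, int]:
    """Server side: a fresh nonce plus a random region makes a response codebook impractical."""
    nonce = os.urandom(16)
    offset = secrets.randbelow(image_size - length + 1)
    return nonce, offset, length


def respond(device_key: bytes, image: bytes, nonce: bytes, offset: int, length: int) -> bytes:
    """Watchdog side: MAC the requested slice of code or memory, bound to nonce and device."""
    region = image[offset:offset + length]
    return hmac.new(device_key, nonce + region, hashlib.sha256).digest()


def check(device_key: bytes, reference_image: bytes, nonce: bytes,
          offset: int, length: int, response: bytes) -> bool:
    """Server side: recompute the answer from a known-good image and compare."""
    expected = respond(device_key, reference_image, nonce, offset, length)
    return hmac.compare_digest(expected, response)


device_key = os.urandom(32)                            # assumption: per-device key known to the server
reference = bytes(range(256)) * 4                      # placeholder known-good image (1024 bytes)
patched = reference[:100] + b"\x90" + reference[101:]  # one byte altered by a cheat

nonce, offset, length = make_challenge(len(reference))
print(check(device_key, reference, nonce, offset, length,
            respond(device_key, reference, nonce, offset, length)))  # True
```

On its own this does not stop a compromised watchdog from hashing a stored clean copy, which is why the watchdog itself may be integrity protected as described above.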

A layered protection scheme may also be employed, wherein multiple technologies are layered in various combinations. For example, a “dual boot” solution may be employed that makes use of a reduced attack footprint, disk integrity and SRTM.

According to an alternative embodiment, rather than utilizing trusted external entity 104 as shown in FIG. 1a, which may be a TPM as described previously, the trusted entity may comprise the very hardware device on which modified operating system 102 is hosted, so long as that hardware device can enforce that modified operating system 102 is in fact running on it. Thus, as shown in FIG. 4c, anti-cheating system 101 is implemented via restricted hardware device 430, which can internally enforce the operation and execution of a desired operating system such as modified operating system 102. Restricted hardware device 430 may be, for example, a cellular telephone or a cable set-top box.

According to yet another alternative embodiment, rather than employing trusted external entity 104, hypervisor 440 may be provided internally to client device 110. FIG. 4d depicts an operation of an anti-cheating system via a hypervisor according to one embodiment. Hypervisor 440 may take on the burden of measurement instead of SRTM in a TPM environment as described previously. According to one embodiment, hypervisor 440 may be a measured boot hypervisor. According to this embodiment, a mechanism may be provided to determine the identity of the particular hypervisor 440 that is running, e.g., hypervisor identity module 442. Thus, for example, entertainment server 116 may communicate with hypervisor identity module 442 to identify the nature of hypervisor 440. Hypervisor 440 in turn may communicate with entertainment server 116 to inform it of the existence or non-existence of modified operating system 102. The installation of modified operating system 102 ensures a non-cheating context for the operation of game title 112.
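The order of the two checks matters: the hypervisor's report about modified operating system 102 is only meaningful once the hypervisor itself has been identified. The following is a sketch of the server-side decision, assuming the report has already been authenticated (for example, by an attestation like the one discussed for FIG. 2); the allowlist values and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HypervisorReport:
    hypervisor_measurement: str  # identity obtained via hypervisor identity module 442
    modified_os_running: bool    # hypervisor 440's statement about modified OS 102


# Hypothetical allowlist of measured-boot hypervisor identities.
APPROVED_HYPERVISORS = {"hv-measured-boot-1.0", "hv-measured-boot-1.1"}


def admit_to_online_play(report: HypervisorReport) -> bool:
    """Trust the OS statement only when it comes from a recognized hypervisor."""
    if report.hypervisor_measurement not in APPROVED_HYPERVISORS:
        return False
    return report.modified_os_running


print(admit_to_online_play(HypervisorReport("hv-measured-boot-1.1", True)))  # True
print(admit_to_online_play(HypervisorReport("unknown-hv", True)))            # False
```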

FIG. 5 shows an exemplary computing environment in which aspects of the example embodiments may be implemented. Computing system environment 500 is only one example of a suitable computing environment and is not intended to suggest any limitation as to the scope of use or functionality of the described example embodiments. Neither should computing environment 500 be interpreted as having any dependency or requirement relating to any one or combination of components illustrated in exemplary computing environment 500.

The example embodiments are operational with numerous other general purpose or special purpose computing system environments or configurations. Examples of well known computing systems, environments, and/or configurations that may be suitable for use with the example embodiments include, but are not limited to, personal computers, server computers, hand-held or laptop devices, multiprocessor systems, microprocessor-based systems, set top boxes, programmable consumer electronics, network PCs, minicomputers, mainframe computers, embedded systems, distributed computing environments that include any of the above systems or devices, and the like.

The example embodiments may be described in the general context of computer-executable instructions, such as program modules, being executed by a computer. Generally, program modules include routines, programs, objects, components, data structures, etc. that perform particular tasks or implement particular abstract data types. The example embodiments also may be practiced in distributed computing environments where tasks are performed by remote processing devices that are linked through a communications network or other data transmission medium. In a distributed computing environment, program modules and other data may be located in both local and remote computer storage media including memory storage devices.

With reference to FIG. 5, an exemplary system for implementing the example embodiments includes a general purpose computing device in the form of a computer 510. Components of computer 510 may include, but are not limited to, a processing unit 520, a system memory 530, and a system bus 521 that couples various system components including the system memory to processing unit 520. Processing unit 520 may represent multiple logical processing units such as those supported on a multi-threaded processor. System bus 521 may be any of several types of bus structures including a memory bus or memory controller, a peripheral bus, and a local bus using any of a variety of bus architectures. By way of example, and not limitation, such architectures include Industry Standard Architecture (ISA) bus, Micro Channel Architecture (MCA) bus, Enhanced ISA (EISA) bus, Video Electronics Standards Association (VESA) local bus, and Peripheral Component Interconnect (PCI) bus (also known as Mezzanine bus). System bus 521 may also be implemented as a point-to-point connection, switching fabric, or the like, among the communicating devices.

Computer 510 typically includes a variety of computer readable media. Computer readable media can be any available media that can be accessed by computer 510 and includes both volatile and nonvolatile media, removable and non-removable media. By way of example, and not limitation, computer readable media may comprise computer storage media and communication media. Computer storage media includes volatile and nonvolatile, removable and non-removable media implemented in any method or technology for storage of information such as computer readable instructions, data structures, program modules or other data. Computer storage media includes, but is not limited to, RAM, ROM, EEPROM, flash memory or other memory technology, CD-ROM, digital versatile disks (DVD) or other optical disk storage, magnetic cassettes, magnetic tape, magnetic disk storage or other magnetic storage devices, or any other medium which can be used to store the desired information and which can be accessed by computer 510. Communication media typically embodies computer readable instructions, data structures, program modules or other data in a modulated data signal such as a carrier wave or other transport mechanism and includes any information delivery media. The term “modulated data signal” means a signal that has one or more of its characteristics set or changed in such a manner as to encode information in the signal. By way of example, and not limitation, communication media includes wired media such as a wired network or direct-wired connection, and wireless media such as acoustic, RF, infrared and other wireless media. Combinations of any of the above should also be included within the scope of computer readable media.

System memory 530 includes computer storage media in the form of volatile and/or nonvolatile memory such as read only memory (ROM) 531 and random access memory (RAM) 532. A basic input/output system 533 (BIOS), containing the basic routines that help to transfer information between elements within computer 510, such as during start-up, is typically stored in ROM 531. RAM 532 typically contains data and/or program modules that are immediately accessible to and/or presently being operated on by processing unit 520. By way of example, and not limitation, FIG. 5 illustrates operating system 534, application programs 535, other program modules 536, and program data 537.

Computer 510 may also include other removable/non-removable, volatile/nonvolatile computer storage media. By way of example only, FIG. 5 illustrates a hard disk drive 541 that reads from or writes to non-removable, nonvolatile magnetic media, a magnetic disk drive 551 that reads from or writes to a removable, nonvolatile magnetic disk 552, and an optical disk drive 555 that reads from or writes to a removable, nonvolatile optical disk 556, such as a CD-ROM or other optical media. Other removable/non-removable, volatile/nonvolatile computer storage media that can be used in the exemplary operating environment include, but are not limited to, magnetic tape cassettes, flash memory cards, digital versatile disks, digital video tape, solid state RAM, solid state ROM, and the like. Hard disk drive 541 is typically connected to system bus 521 through a non-removable memory interface such as interface 540, and magnetic disk drive 551 and optical disk drive 555 are typically connected to system bus 521 by a removable memory interface, such as interface 550.

The drives and their associated computer storage media discussed above and illustrated in FIG. 5 provide storage of computer readable instructions, data structures, program modules and other data for computer 510. In FIG. 5, for example, hard disk drive 541 is illustrated as storing operating system 544, application programs 545, other program modules 546, and program data 547. Note that these components can either be the same as or different from operating system 534, application programs 535, other program modules 536, and program data 537. Operating system 544, application programs 545, other program modules 546, and program data 547 are given different numbers here to illustrate that, at a minimum, they are different copies. A user may enter commands and information into computer 510 through input devices such as a keyboard 562 and pointing device 561, commonly referred to as a mouse, trackball or touch pad. Other input devices (not shown) may include a microphone, joystick, game pad, satellite dish, scanner, or the like. These and other input devices are often connected to processing unit 520 through a user input interface 560 that is coupled to the system bus, but may be connected by other interface and bus structures, such as a parallel port, game port or a universal serial bus (USB). A monitor 591 or other type of display device is also connected to system bus 521 via an interface, such as a video interface 590. In addition to the monitor, computers may also include other peripheral output devices such as speakers 597 and printer 596, which may be connected through an output peripheral interface 595.

Computer 510 may operate in a networked environment using logical connections to one or more remote computers, such as a remote computer 580. Remote computer 580 may be a personal computer, a server, a router, a network PC, a peer device or other common network node, and typically includes many or all of the elements described above relative to computer 510, although only a memory storage device 581 has been illustrated in FIG. 5. The logical connections depicted in FIG. 5 include a local area network (LAN) 571 and a wide area network (WAN) 573, but may also include other networks. Such networking environments are commonplace in offices, enterprise-wide computer networks, intranets and the Internet.

When used in a LAN networking environment, computer 510 is connected to LAN 571 through a network interface or adapter 570. When used in a WAN networking environment, computer 510 typically includes a modem 572 or other means for establishing communications over WAN 573, such as the Internet. Modem 572, which may be internal or external, may be connected to system bus 521 via user input interface 560, or other appropriate mechanism. In a networked environment, program modules depicted relative to computer 510, or portions thereof, may be stored in the remote memory storage device. By way of example, and not limitation, FIG. 5 illustrates remote application programs 585 as residing on memory device 581. It will be appreciated that the network connections shown are exemplary and other means of establishing a communications link between the computers may be used.

Computing environment 500 typically includes at least some form of computer readable media. Computer readable media can be any available media that can be accessed by computing environment 500. By way of example, and not limitation, computer readable media may comprise computer storage media and communication media. Computer storage media includes volatile and nonvolatile, removable and non-removable media implemented in any method or technology for storage of information such as computer readable instructions, data structures, program modules or other data. Computer storage media includes, but is not limited to, RAM, ROM, EEPROM, flash memory or other memory technology, CD-ROM, digital versatile disks (DVD) or other optical storage, magnetic cassettes, magnetic tape, magnetic disk storage or other magnetic storage devices, or any other medium which can be used to store the desired information and which can be accessed by computing environment 500. Communication media typically embodies computer readable instructions, data structures, program modules or other data in a modulated data signal such as a carrier wave or other transport mechanism and includes any information delivery media. The term "modulated data signal" means a signal that has one or more of its characteristics set or changed in such a manner as to encode information in the signal. By way of example, and not limitation, communication media includes wired media such as a wired network or direct-wired connection, and wireless media such as acoustic, RF, infrared and other wireless media. Combinations of any of the above should also be included within the scope of computer readable media.

Although the subject matter has been described in language specific to structural features and/or methodological acts, it is to be understood that the subject matter defined in the appended claims is not necessarily limited to the specific features or acts described above. Rather, the specific features and acts described above are disclosed as example forms of implementing the claims.

The inventive subject matter is described with specificity to meet statutory requirements. However, the description itself is not intended to limit the scope of this patent. Rather, it is contemplated that the claimed subject matter might also be embodied in other ways, to include different steps or combinations of steps similar to the ones described in this document, in conjunction with other present or future technologies.

Claims

1. A method for preventing cheating comprising: monitoring, by a trusted component, a device and a modified operating system executing on the device; performing a proxy execution operation that includes execution of code on a tamper resistant security processor, wherein the proxy execution operation utilizes a licensed channel between the tamper resistant security processor and a central processing unit of the device, the trusted component usable to check the licensed channel's attestation, and an associated static root of trust measurement usable to validate that the modified operating system is running in an untampered form, and wherein the tamper resistant security processor is associated with a particular static root of trust measurement usable to cause a recovery check when the licensed channel expires; and restricting access to resources based on results of the monitoring and the performing of the proxy execution operation.

2. The method of claim 1, wherein the resources are necessary secrets.

3. The method of claim 1, wherein the resources are network services.

4. The method of claim 1, wherein the resources are additional hardware.

5. The method of claim 1, wherein the trusted component is a trusted platform module.

6. The method of claim 5, wherein the trusted platform module combined with software running on the device generate a static root of trust measurement.

7. The method of claim 6, further comprising performing a code integrity operation.

8. The method of claim 7, further comprising performing a disk integrity operation.

9. The method of claim 1, wherein a first operating system executing on the device is modified to provide the modified operating system.

10. The method of claim 7, further comprising performing an individualization mechanism.

11. The method of claim 1, further comprising performing a watchdog operation.

12. A computer readable storage device comprising instructions for preventing cheating, the instructions for performing operations comprising: monitoring, by a trusted component, a device and a modified operating system executing on the device; performing a proxy execution operation that includes execution of code on a tamper resistant security processor, wherein the proxy execution operation utilizes a licensed channel between the tamper resistant security processor and a central processing unit of the device, the trusted component usable to check the licensed channel's attestation, and an associated static root of trust measurement usable to validate that the modified operating system is running in an untampered form, and wherein the tamper resistant security processor is associated with a particular static root of trust measurement usable to cause a recovery check when the licensed channel expires; and restricting access to resources based on results of the monitoring and the performing of the proxy execution operation.

13. The computer readable storage device of claim 12, wherein the resources are necessary secrets.

14. The computer readable storage device of claim 12, wherein the resources are network services.

15. The computer readable storage device of claim 12, wherein the resources are additional hardware.

16. The computer readable storage device of claim 12, wherein a first operating system executing on the device is modified to provide the modified operating system.

17. A system for preventing cheating comprising: a device; a modified operating system executing on the device, a tamper resistant security processor; a memory storing computer-executable instructions that, when executed, cause the system to perform operations comprising: instantiate a licensed channel operable to perform a proxy execution operation that includes execution of code on the tamper resistant security processor, wherein the licensed channel is between the tamper resistant security processor and a central processing unit of the device, and including a trusted component usable to check the licensed channel's attestation and an associated static root of trust measurement usable to validate that the modified operating system is running in an untampered form, and wherein the tamper resistant security processor is associated with a particular static root of trust measurement usable to perform a recovery check when the licensed channel expires; monitor the device and the modified operating system; and restrict access to resources based on results of the monitoring and performing of the proxy execution operation.

18. The system of claim 17, wherein the resources are necessary secrets.

19. The system of claim 17, wherein the resources are network services.

20. The system of claim 17, wherein the resources are additional hardware.

21. The system of claim 17, wherein a first operating system executing on the device is modified to provide the modified operating system.
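The flow recited in claims 1, 12 and 17 (measure the modified operating system, check the licensed channel's attestation, run code by proxy only over a valid channel, re-check when the channel expires, and gate resources on the outcome) can be illustrated with a small model. This is a minimal sketch under stated assumptions, not the patented implementation: the static root of trust measurement is modeled as a TPM-style SHA-256 register extend over an ordered list of boot components, "proxy execution" is modeled as a local callable rather than code running on a real security processor, and every name (`LicensedChannel`, `TrustedComponent`, `recovery_check`, `proxy_execute`, `allowed_resources`, `RESOURCES`) is hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable, List, Optional, Set, TypeVar

T = TypeVar("T")

# Hypothetical resource names standing in for the claims' "necessary secrets",
# "network services", and "additional hardware".
RESOURCES = {"secrets", "network_services", "additional_hardware"}


def extend(register: bytes, component: bytes) -> bytes:
    """TPM-style extend (assumed model): new = SHA-256(old || SHA-256(component))."""
    return hashlib.sha256(register + hashlib.sha256(component).digest()).digest()


def measure_boot_chain(components: List[bytes]) -> bytes:
    """Fold each boot component, in order, into a zero-initialized 32-byte register."""
    register = bytes(32)
    for component in components:
        register = extend(register, component)
    return register


@dataclass
class LicensedChannel:
    """Hypothetical model of the licensed channel between security processor and CPU."""
    attestation: bytes  # measurement reported over the channel
    expires_at: float   # time at or after which a recovery check is required

    def expired(self, now: float) -> bool:
        return now >= self.expires_at


class TrustedComponent:
    """Hypothetical verifier holding the expected measurement of the untampered OS."""

    def __init__(self, expected_measurement: bytes) -> None:
        self.expected = expected_measurement

    def check_attestation(self, channel: LicensedChannel) -> bool:
        # compare_digest avoids leaking the mismatch position through timing.
        return hmac.compare_digest(channel.attestation, self.expected)


def recovery_check(trusted: TrustedComponent, components: List[bytes],
                   now: float, lifetime: float) -> Optional[LicensedChannel]:
    """Re-measure the boot chain; issue a fresh channel only if it still matches."""
    measurement = measure_boot_chain(components)
    if not hmac.compare_digest(measurement, trusted.expected):
        return None
    return LicensedChannel(attestation=measurement, expires_at=now + lifetime)


def channel_valid(trusted: TrustedComponent, channel: Optional[LicensedChannel],
                  now: float) -> bool:
    """A channel is usable only if it exists, has not expired, and attests correctly."""
    return (channel is not None and not channel.expired(now)
            and trusted.check_attestation(channel))


def proxy_execute(trusted: TrustedComponent, channel: Optional[LicensedChannel],
                  now: float, task: Callable[[], T]) -> Optional[T]:
    """Local stand-in for running `task` on the security processor; refused if invalid."""
    if not channel_valid(trusted, channel, now):
        return None
    return task()


def allowed_resources(trusted: TrustedComponent, channel: Optional[LicensedChannel],
                      now: float, proxy_ok: bool) -> Set[str]:
    """Grant resources only when monitoring and proxy execution both succeeded."""
    if proxy_ok and channel_valid(trusted, channel, now):
        return set(RESOURCES)
    return set()


if __name__ == "__main__":
    golden = [b"bootloader-v1", b"modified-os-kernel-v1"]  # hypothetical components
    trusted = TrustedComponent(measure_boot_chain(golden))
    channel = recovery_check(trusted, golden, now=0.0, lifetime=3600.0)
    answer = proxy_execute(trusted, channel, 10.0, lambda: 6 * 7)
    print(answer, sorted(allowed_resources(trusted, channel, 10.0, answer is not None)))
```

In this model, once `now` reaches `expires_at` every resource is withheld until `recovery_check` re-measures the boot chain and issues a new channel, and a tampered component list yields a different measurement, so no new channel is issued. Because the extend operation is order-sensitive, reordering otherwise genuine components also fails the check.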

Disclaimer: Data collected from the USPTO may be malformed, incomplete, and/or otherwise inaccurate.