U.S. Pat. No. 8,419,545

Method and system for controlling movements of objects in a videogame

Assignee: AiLive, Inc.

Issue Date: April 26, 2009

Abstract

Techniques for controlling movements of an object in a videogame are disclosed. At least one video camera is used at a location where at least one player plays the videogame, and the video camera captures various movements of the player. A designated device (e.g., a game console or computer) is configured to process the video data to derive the movements of the player, and to cause the object to respond to the movements of the player. When the designated device receives video data from more than one location, players at the respective locations can play a networked videogame that may be built upon a shared space representing some or all of the real-world spaces of the locations. The videogame is embedded with objects, some of which respond to the movements of the players and interact with other objects in accordance with rules of the videogame.

Description

DETAILED DESCRIPTION OF THE INVENTION

The detailed description of the invention is presented largely in terms of procedures, steps, logic blocks, processing, and other symbolic representations that directly or indirectly resemble the operations of data processing devices coupled to networks. These process descriptions and representations are typically used by those skilled in the art to most effectively convey the substance of their work to others skilled in the art. Reference herein to “one embodiment” or “an embodiment” means that a particular feature, structure, or characteristic described in connection with the embodiment can be included in at least one embodiment of the invention. The appearances of the phrase “in one embodiment” in various places in the specification are not necessarily all referring to the same embodiment, nor are separate or alternative embodiments mutually exclusive of other embodiments. Further, the order of blocks in process flowcharts or diagrams representing one or more embodiments of the invention does not inherently indicate any particular order, nor does it imply any limitations in the invention.

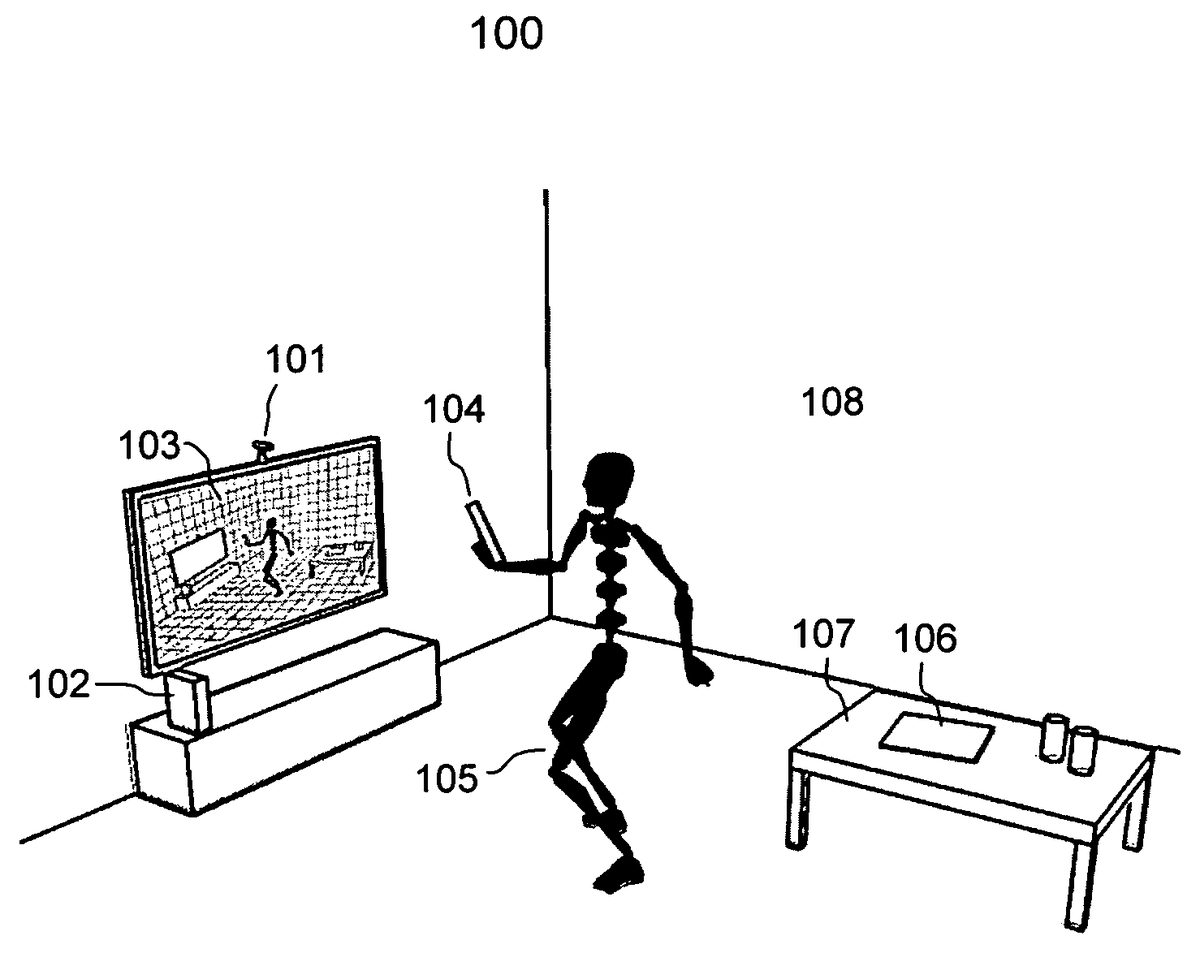

Referring now to the drawings, in which like numerals refer to like parts throughout the several views. FIG. 1A shows an exemplary configuration 100 for one embodiment of the current invention. The configuration 100 resembles a living room in which a player 105 is playing a videogame via a game console 102 and a display 103. A camera 101 is monitoring the player 105. The camera 101 may be an active or passive infra-red camera, or a camera that responds to visible light only (or the camera 101 may be capable of operating in different modes). The camera 101 may also include regular or infra-red lights to help illuminate the scene, or there may be separate lights to illuminate the scene being monitored by the camera 101. The camera 101 may also include the ability to measure time-of-flight information in order to determine depth information. The camera 101 may also consist of one or more cameras so that stereoscopic vision techniques can be applied. The camera may be motorized (perhaps with auto-focus capability) to be able to follow a player within a predefined range to capture the movements of the player.

Depending on implementation, the game console 102 may be a dedicated computer device (e.g., a videogame system such as the Wii system) or a regular computer configured to run as a videogame system. The game console 102 may be a virtual machine running on a PC elsewhere. In one embodiment, the motion-sensitive device 104 used as a controller may also be embedded with the necessary capabilities to execute a game. The player may have two separate motion-sensitive controllers, one in each hand. In another embodiment, a mobile phone/PDA may be configured to act as a motion-sensitive device. In still another embodiment, the motion-sensitive device might be embedded in a garment that the player wears, or it might be in a hat, or strapped to the body, or attached to the body by various means. Alternatively, there may be multiple motion-sensitive devices attached to different parts of the body.

Unless specifically stated, a game console as used in the disclosure herein may mean any one of a dedicated base unit for a videogame, a generic computer running a gaming software module, or a portable device configured to act as a base unit for a videogame. In reality, the game console 102 does not have to be in the vicinity of the display 103 and may communicate with the display 103 via a wired or wireless network. For example, the game console 102 may be a virtual console running on a computing device communicating with the display 103 wirelessly using a protocol such as wireless HDMI. According to one embodiment, the game console is a network-capable box that receives various data (e.g., image and sensor data) and transports the data to a server. In return, the game console receives constantly updated display data from the server that is configured to integrate the data and create/update the game space for a network game being played by a plurality of other participating game consoles.

It should be noted that the current invention is described for video games. Those skilled in the art may appreciate that the embodiments of the current invention may be applicable in other non-game applications to create a shared feeling of physical proximity and physical interaction over a network among one or more people who are actually far apart. For example, a rendezvous may be created among some users registered with a social networking website for various activities. Video conferencing or phone calls between friends and families could be enhanced by providing the feeling that an absent person is made present in a virtual 3D space created by using one embodiment of the present invention. Likewise, various collaborations on virtual projects such as building 3D virtual worlds and engineering design could be realized in a virtual 3D space created by using one embodiment of the present invention. For some applications, a motion-sensitive controller may be unnecessary and the camera-based motion tracking alone could be sufficient.

FIG. 1B shows two players 122 and 124 playing a video game together. A camera 126 is monitoring both of the players 122 and 124. According to one embodiment, each of the players 122 and 124 is holding two controllers, one in each hand. To make it easy to distinguish one from another, each of the controllers has one or more (infra-red) LEDs thereon. The camera 126 may also be an infra-red (or other non-visible light) camera. In another embodiment, more cameras are used. Examples of the cameras include an infra-red (or other non-visible light) camera and a visible light camera. Alternatively, a single camera capable of operating in different modes, visible-light mode and/or infra-red (or other non-visible light) mode, may also be used. In still another embodiment, there are lights 128 to help illuminate the scene so as to ameliorate problematic variations in lighting. The lights might be infra-red (or other non-visible light). The lights might also be strobe lights so as to create different lightings of the same scene. The movements of the controllers are used to control corresponding movements of objects in the videogame and/or the movements of the players are used to control movements of the objects respectively corresponding to the players.

FIG. 1C shows an embodiment in which a player 105 wears virtual reality (VR) goggles/glasses with display/augmented reality (e.g., available from a website www.vuzix.com). Instead of looking at the display 103, the player 105 may interact with a videogame being displayed in the goggles/glasses. The player may also use 3D glasses to view an external display. Or the display may be an autostereoscopic display or a 3D holographic display. According to one embodiment, the game console, the display, and the controller are all part of the same device or system.

Referring back to FIG. 1A, the motion sensitive device 104, or simply a controller, may include at least two inertial sensors, one being a tri-axis accelerometer and the other being a tri-axis gyroscope. An accelerometer is a device for measuring acceleration along one or more axes at a point on a moving object. A gyroscope is a device for measuring angular velocity around one or more axes at a point on a rotating object. Besides accelerometers and gyroscopes, there are other inertial sensors that may be used in the controller 104. Examples of such inertial sensors include a compass and a magnetometer. In general, signals from the inertial sensors (i.e., sensor data) are electronically captured and transmitted to a base unit (e.g., a game console 102) to derive a kind of relative movement of the controller 104.
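
As a rough illustration of how a base unit might turn such sensor data into a relative movement, the following minimal sketch integrates gyroscope and accelerometer samples over time. The function names, the fixed sample interval `dt`, and the assumption that gravity has already been removed from the accelerometer readings are illustrative choices, not details taken from the disclosure. Each axis is integrated independently, which is only reasonable for small rotations.

```python
import math


def integrate_gyro(samples, dt):
    """Accumulate per-axis angular velocity (rad/s) into a relative rotation (rad).

    Simplification (assumption): axes are integrated independently, which is
    only reasonable for small rotations; a fuller tracker would compose rotations.
    """
    angles = [0.0, 0.0, 0.0]
    for sample in samples:
        for axis, rate in enumerate(sample):
            angles[axis] += rate * dt
    return tuple(angles)


def integrate_accel(samples, dt):
    """Double-integrate acceleration (m/s^2) into a relative displacement (m).

    Assumes gravity has already been subtracted from each sample.
    """
    velocity = [0.0, 0.0, 0.0]
    position = [0.0, 0.0, 0.0]
    for sample in samples:
        for axis, acc in enumerate(sample):
            velocity[axis] += acc * dt              # first integration: velocity
            position[axis] += velocity[axis] * dt   # second integration: position
    return tuple(position)


# One second of data at 100 Hz: a steady 0.5 rad/s turn about the z axis.
rotation = integrate_gyro([(0.0, 0.0, 0.5)] * 100, dt=0.01)
displacement = integrate_accel([(1.0, 0.0, 0.0)] * 100, dt=0.01)
```

Because integration errors accumulate, the result is only a relative estimate that drifts over time, which is consistent with the text's use of image data to help determine absolute motions.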

Depending on the implementation, sensor signals from the inertial sensors may or may not be sufficient to derive all six relative translational and angular motions of the motion sensitive device 104. In one embodiment, the motion sensitive device 104 includes fewer inertial sensors than are required to derive all six relative translational and angular motions, in which case the motion sensitive device 104 may only detect and track some but not all of the six translational and angular motions (e.g., there are only three inertial sensors therein). In another embodiment, the motion sensitive device 104 includes at least the number of inertial sensors needed to derive all six relative translational and angular motions, in which case the motion sensitive device 104 may detect and track all of the six translational and angular motions (e.g., there are at least six inertial sensors therein).

In any case, a camera 101 is provided to image the player 105 and his/her surrounding environment. The image data may be used to empirically derive the effective play area. For example, when the player moves out of the effective play area, the maximum extent of the effective play area can be determined. The effective play area can be used to determine a 3D representation of a real-world space in which the player plays a videogame.

Other factors known in advance about the camera might be used in determining the effective play area, for example, a field of view. Alternatively, there may be a separate calibration phase based on empirical data to define an effective play area.

Those skilled in the art know that there are a number of ways to derive a 3D representation of a 3D space from image data, any one of which may be used to derive such a 3D representation. Further, the image data may be used to facilitate the determination of absolute motions of the controller in conjunction with the sensor data from the inertial sensors. According to one embodiment, the player may wear or have attached a number of specially colored tags, dots, or lights to facilitate the determination of the movements of the player from the image data.

FIG. 2A shows an exemplary game space 200 that is composed to resemble a real-world space in which a player is located. The game space 200 resembling the real world of FIG. 1A includes a number of objects 203-207, some of them (e.g., referenced by 203 and 205) corresponding to actual objects in the real-world space while others (e.g., referenced by 204 and 207) are artificially placed in the game space 200 for gaming purposes. The game space 200 also includes an object 202 holding something 201 that may correspond to the player holding a controller. Movement in the game space 200, also called a 3D virtual world, resembles movement in the real world the player is in, but includes various objects to make a game space for a videogame.

FIG. 2B shows a flowchart or process 210 of generating a game space resembling a real-world space including and surrounding one or more players. The process 210 may be implemented in software, hardware or a combination of software and hardware. According to one embodiment, the process 210 is started when a player has decided to start a videogame at 212. In accordance with the setting illustrated in FIG. 1A, the camera operates to image the environment surrounding the player at 214. The camera may be a 3D camera or a stereo-camera system generating data that can be used to reconstruct a 3D image or representation of the real world of the player at 216. Those skilled in the art know that there are different ways to generate a 3D representation of a 3D real world or movements of a player in the 3D real-world space, the details of which are omitted herein to avoid obscuring aspects of the current invention.

At 218, with the 3D representation of the real world, a game space is created to include virtual objects and representative objects. The virtual objects are those that do not correspond to anything in the real world; examples of virtual objects include icons that may be picked up for scores or various weapons that may be picked up to fight against other objects or figures. The representative objects are those that correspond to something in the real world; examples of the representative objects include an object corresponding to a player or players (e.g., avatars) or major things (e.g., tables) in the real world of the player. The representative objects may also be predefined. For example, a game is shipped with a game character that is designated to be the player's avatar. The avatar moves in response to the player's real-world movements, but there is otherwise no other correspondence. Alternatively, a pre-defined avatar might be modified by video data. For example, the player's face might be applied to the avatar as a texture, or the avatar might be scaled according to the player's own body dimensions. At 220, depending on the exact game, various rules or scoring mechanisms are embedded in a videogame using the game space to set objectives, various interactions that may happen among different objects, and ways to count score or declare an outcome. A videogame using the created game space, which to some extent resembles the real world of the player, is ready to play at 222. It should be noted that the process 210 may be performed in a game console or any other computing device executing a videogame, such as a mobile phone, a PC or a remote server.

One of the features, objects and advantages in the current invention is the ability to create a gaming space in which there is at least an approximate correspondence between a game object and a corresponding player in his/her own real-world space in terms of, for example, one or more of action, movement, location and orientation. The gaming space is possibly populated with avatars that move as the player does, or as other people do. FIG. 3A shows a configuration 300 according to one embodiment of this invention. A camera 301 is placed in a play area surrounding one player, and the play area may be in a living room, or bedroom, or any suitable area. In one set-up, a player may have multiple cameras in his/her house, for example, one in the living room and another in the bedroom. As the player moves around the house and is recognized as being visible by different cameras, the game space is updated in accordance with the change of the real-world space, and could reflect a current location of the player in some manner.

The camera 301 has a field of view 302. Depending on factors that include the camera parameters, the camera setup, the camera calibration, and lighting conditions, there is an effective play area 303 within which the movements of the player can be tracked with a predefined level of reliability and fidelity for an acceptable duration. The effective play area 303 can optionally be enhanced and/or extended 304 by using INS sensors to help track and identify players and their movements. The effective play area 303 essentially defines a space in which the player can move and the movements thereof may be imaged and derived for interacting with the videogame. It should be noted that an effective play area 303 typically contains a single player, but depending on the game and tracking capabilities, may contain one or more players.

The effective play area 303 may change over time, for example, as lighting conditions change, or as the camera is moved or re-calibrated. The effective play area 303 may be determined from many factors such as simple optical properties of the camera (e.g., the field of view, or focal length). Experimentation may also be required to pre-determine likely effective play areas. Alternatively, the effective play area may be implicitly determined during the game or explicitly determined during a calibration phase in which the player is asked to perform various tasks at various points in order to map out the effective play area.
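
One way such a calibration phase could be sketched is to record where the player was tracked reliably and take the bounding box of those positions as the effective play area. The per-sample confidence score, the `min_confidence` threshold and the function name below are assumptions made for illustration; the disclosure does not specify how reliability is measured.

```python
def effective_play_area(samples, min_confidence=0.8):
    """Return (min_corner, max_corner) of reliably tracked positions, or None.

    samples: iterable of ((x, y, z), confidence) pairs gathered while the
    player performs calibration tasks. The confidence score is a hypothetical
    tracker output in the range 0..1.
    """
    reliable = [pos for pos, conf in samples if conf >= min_confidence]
    if not reliable:
        return None  # nothing was tracked well enough to define an area
    # zip(*reliable) groups all x values, all y values and all z values.
    min_corner = tuple(min(axis) for axis in zip(*reliable))
    max_corner = tuple(max(axis) for axis in zip(*reliable))
    return min_corner, max_corner


calibration = [
    ((0.0, 0.0, 1.0), 0.90),
    ((2.0, 1.0, 3.0), 0.95),
    ((5.0, 5.0, 5.0), 0.20),  # tracked poorly, so it falls outside the area
]
area = effective_play_area(calibration)
```

Re-running the same routine when lighting changes or the camera is moved would yield an updated area, matching the observation that the effective play area may change over time.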

A mapping 305 specifies some transformation, warping, or morphing of the effective play area 303 into a virtualized 3D representation 307 of the effective play area 303. The 3D representation 307 may be snapped or clipped into some idealized regular shape or, if present, may preserve some of the irregular shape of the original real-world play area. There may also be more than one 3D representation. For example, there may be different representations for different players, or for different parts of the play area with different tracking accuracies, or for different games, or different parts of a game. There might also be more than one representation of a single player. For example, the player might play the role of the hero in one part of the game, and of the hero's enemy in a different part of the game, or a player might choose to control different members of a party of characters, switching freely between them as the game is played.

The 3D representation is embedded in the shared game space 306. Another 3D representation 308 of other real-world spaces is also embedded in the game space 306. These other 3D representations (only 3D representations 308 are shown) are typically of real-world spaces that are located remotely, physically far apart from one another or physically apart under the same roof.

Those skilled in the art will realize that the 3D representation and/or embedding may be implicit in some function that is applied to the image and/or sensor data streams. The function effectively re-interprets the data stream in the context of the game space. For example, suppose that an avatar corresponding to a player is in a room of dimensions a×b×c; this room could be made to correspond to a bounding box of unit dimension around the player. If the player then moves half-way across the effective play area in the x-dimension, the avatar moves a/2 units in the game space.
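
The re-interpretation described above can be sketched as a two-step function: normalize a tracked position into a unit bounding box, then scale it by the room dimensions. The `Box` type, the function names and the clamping at the edges of the play area are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Box:
    """Axis-aligned box described by its minimum corner and its size."""
    origin: Vec3
    size: Vec3


def normalize(point: Vec3, play_area: Box) -> Vec3:
    """Map a real-world point into the unit box [0, 1]^3, clamping at the edges."""
    return tuple(
        min(1.0, max(0.0, (p - o) / s))
        for p, o, s in zip(point, play_area.origin, play_area.size)
    )


def to_game_space(point: Vec3, play_area: Box, room: Box) -> Vec3:
    """Re-interpret a tracked real-world point as a position in the avatar's room."""
    unit = normalize(point, play_area)
    return tuple(o + u * s for u, o, s in zip(unit, room.origin, room.size))


# Effective play area 2 m wide in x; game room with a = 10, b = 4, c = 8.
play_area = Box(origin=(0.0, 0.0, 0.0), size=(2.0, 2.0, 3.0))
room = Box(origin=(0.0, 0.0, 0.0), size=(10.0, 4.0, 8.0))

# The player stands half-way across the play area in x.
avatar_position = to_game_space((1.0, 0.0, 0.0), play_area, room)
```

With these example values the avatar's x coordinate is 5.0, that is, a/2 for a = 10, which matches the worked example in the text.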

FIG. 3B shows a system configuration 310 that may be used to create a game space based on data coming from at least two game consoles. There are three exemplary game consoles 312, 314 and 316 in FIG. 3B. Each of the game consoles 312, 314 and 316 is coupled to at least one camera and possibly a motion-sensitive controller, thus providing image data and sensor data. According to one embodiment, each of the game consoles 312, 314 and 316 is coupled to a data network. In one embodiment, each of the game consoles 312, 314 and 316 is equipped with a WiFi interface allowing a wireless connection to the Internet. In another embodiment, each of the game consoles 312, 314 and 316 is equipped with an Ethernet interface allowing a wired connection to the Internet. In operation, one of the game consoles 312, 314 and 316 is configured as a hosting machine executing a software module implementing one embodiment of the present invention.

The mapping from the effective play areas required to create the 3D representations of the play areas may be applied on one or more of the participating consoles or a designated computing device. In one embodiment, the relevant parameters (e.g., camera parameters, camera calibration, lighting) are communicated to a hosting machine where the mapping takes place. The hosting machine is configured to determine how to embed each of the 3D representations into the game space.

The hosting machine receives the (image and/or sensor) data streams from all the participating game consoles and updates a game space based on the received data. Depending on where the mapping takes place, the data streams will either have been transformed on the console, or need to be transformed on the hosting machine. In the context of the present invention, the game space contains at least a virtualized 3D representation of the real-world space, within which the movements of the players in the real world are interpreted as movements of game world objects that resemble those of the players in the networked game whose game consoles are participating. Depending on which game is being played, or even for different points in the same game, the 3D representations of the real-world spaces are combined in different ways with different rules, for example, stitching, merging or warping the available 3D representations of the real-world spaces. The created game space is also embedded with various rules and scoring mechanisms that may be predefined according to a game theme. The hosting game console feeds the created game space to each of the participating game consoles for the player to play the videogame. Those skilled in the art can appreciate that one of the features, benefits and advantages in the present invention is that the movements of all players in their real-world spaces are interpreted naturally within the game space to create a shared feeling of physical proximity and physical interaction.

FIG. 3C shows an exemplary game space 320 incorporating two real-world spaces of two participating players that may be physically remote from each other or in separate rooms under one roof. The game space 320, created based on a combination of the two real-world spaces of the two participating players, includes various objects, some corresponding to the actual objects in the real-world spaces and others artificially added to enhance the game space 320 for various actions or interactions. As an example, the game space 320 includes two different levels: the setting on the first floor may be from one real-world space and the setting on the second floor may be from another real-world space, and a stair is artificially created to connect the two levels. The game space 320 further includes two objects or avatars 322 and 324, each corresponding to one of the players. The avatars 322 and 324 move in accordance with the movements of the players, respectively. In one embodiment, one of the avatars 322 and 324 may hold a widget (e.g., a weapon or sword) and may wave the widget in accordance with the movement of the controller by the corresponding player. Given the game space 320, each of the avatars 322 and 324 may be manipulated to enter or move on each of the two levels and interact with each other and with other objects on either one of the two levels.

Referring back to FIG. 3B, according to another embodiment, instead of having one of the game consoles 312, 314 and 316 act as a hosting machine, a server computer 318 is provided as a hosting machine to receive all (image and sensor) data streams from the participating game consoles 312, 314 and 316. The server computer 318 executes a software module to create and update a game space based on the received data streams. The participating consoles 312, 314 and 316 may also be running on some other remote server, or on some computing devices located elsewhere. In the context of the present invention, movements in the game space resemble the movements of the players within a real-world space combining part or all of the real-world space of each of the players whose game console is participating. Depending on the exact game or even the exact phase in a game, the 3D representations of the real-world spaces are combined in different ways with different rules for gaming, for example, stitching, merging or warping two or more 3D representations of the real-world spaces. The server computer 318 feeds the created game space to each of the participating game consoles, or other interested party, to play the videogame jointly. As a result, not only do those participating players play in the videogame with a shared feeling of physical proximity and physical interaction, but other game players may be invited to view or play the videogame as well.

FIG. 3D shows a flowchart or process 330 of generating a game space combining one or more real-world spaces respectively surrounding participating players. The process 330 may be implemented in software, hardware or a combination of software and hardware. According to one embodiment, the process 330 is started when one or more players have decided to start a videogame at 332.

As described above, each of the players is ready to play the videogame in front of at least one camera being set up to image a player and his/her surrounding space (real-world space). It is assumed that each of the players is holding a motion-sensitive controller, or is wearing, or has attached to their body, at least one set of inertial sensors. In some embodiments, it is expected that the motion-sensing device or sensors may be unnecessary. There may be cases where two or more players are at one place, in which case special settings may be used to facilitate the separation of the players. For example, each of the players may wear or have attached one or more specially colored tags, or their controllers may be labeled differently in appearance, or the controllers may include lights that glow with different colors.

At334, the number of game players is determined. It should be noted that the number of game players may be different from the number of players that are participating in their own real-world spaces. For example, there may be three game players, two are together being imaged in one participating real-world space, and the third one is alone. As a result, there are two real-world spaces to be used to create a game space for the video game. Accordingly, such a number of real-world spaces is determined at336.
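
The distinction between the two counts can be sketched as follows, assuming each registered player is tagged with an identifier for the real-world space in which he or she is being imaged. The identifiers and the function name are hypothetical.

```python
def count_players_and_spaces(registrations):
    """Return (number_of_players, number_of_real_world_spaces).

    registrations: iterable of (player_id, space_id) pairs, where space_id
    names the real-world space whose camera images that player.
    """
    players = {player_id for player_id, _ in registrations}
    spaces = {space_id for _, space_id in registrations}
    return len(players), len(spaces)


# Three players: two share one living room, the third plays alone elsewhere.
registrations = [
    ("player_1", "living_room_A"),
    ("player_2", "living_room_A"),
    ("player_3", "bedroom_B"),
]
num_players, num_spaces = count_players_and_spaces(registrations)
```

For the example in the text this yields three players and two real-world spaces.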

At 338, a data stream representing a real-world space must be received. In one embodiment, two game consoles are used, each at one location and connected to a camera imaging one real-world space surrounding one or more players. It is assumed that one of the game consoles is set as a hosting game console to receive two data streams, one from a remote site and the other from itself. Alternatively, each console is configured to maintain a separate copy of the game space that it updates with information from the other console as often as possible to maintain a reasonably close correspondence. If one of the two data streams is not received, the process 330 may wait or proceed with only one data stream. If there is only one data stream coming, the game space would temporarily be built upon one real-world space. In the event of missing data, for example, when a player performs a sword swipe and the data for the torso movement is missing or incomplete, the game will fill in the movement with some context-dependent motion it decides is suitable, may enhance the motion to make the sword stroke look more impressive, or may subtly modify the sword stroke so that it makes contact with an opponent character in the case that the stroke might otherwise have missed the target.
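
A very simple stand-in for this kind of fill-in is sketched below: each joint takes its observed value if present, otherwise the last known value, otherwise a rest pose. This fallback order is an assumption chosen for illustration; it is far cruder than the context-dependent motion the text describes, and the joint names are hypothetical.

```python
def fill_missing_joints(frame, previous, rest_pose):
    """Return a complete pose for every joint named in rest_pose.

    frame:     joints observed in the current frame (value None means missing)
    previous:  the last complete or partial pose that was known
    rest_pose: default position for every joint the game tracks
    """
    completed = {}
    for joint, fallback in rest_pose.items():
        if frame.get(joint) is not None:
            completed[joint] = frame[joint]      # use fresh data when available
        elif previous.get(joint) is not None:
            completed[joint] = previous[joint]   # otherwise hold the last value
        else:
            completed[joint] = fallback          # otherwise use the rest pose
    return completed


rest_pose = {"hand": (0.0, 0.0, 0.0), "torso": (0.0, 0.0, 0.0), "head": (0.0, 1.0, 0.0)}
previous = {"torso": (0.1, 0.0, 0.0)}
# During the sword swipe the hand is tracked but the torso data is missing.
frame = {"hand": (1.0, 1.0, 0.0), "torso": None}
pose = fill_missing_joints(frame, previous, rest_pose)
```

A game could apply the enhancement or subtle correction of the sword stroke mentioned above as a further step on the completed pose.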

At 340, a game space is created by embedding respective 3D representations of real-world spaces in a variety of possible ways that may include one or any combination of stitching 3D representations together, superimposing or morphing, or any other mathematical transformation. Transformations (e.g., morphing) may be applied before the 3D representations are embedded, possibly followed by image processing to make the game space look smoother and more realistic. Exemplary transformations include translation, projection, rotation about any axis, scaling, shearing, reflection, or any other mathematical transformation. The combined 3D representations may be projected onto 2 of the 3 dimensions. The projection onto 2 dimensions may also be applied to the 3D representations before they are combined. The game space is also embedded with various other structures or scenes, virtual or representative objects and rules for interactions among the objects. At this time, the videogame is ready to play as the game space is being sent back to the game consoles and registered to jointly play the videogame.
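
A few of the listed transformations, and one possible way of stitching two representations together, can be sketched on plain lists of 3D points. Representing a 3D representation as a list of corner points, and stitching by placing one space beside the other along the x axis, are assumptions made for illustration.

```python
import math


def translate(points, offset):
    """Shift every point by the same offset."""
    return [tuple(p + o for p, o in zip(pt, offset)) for pt in points]


def scale(points, factors):
    """Scale every point per axis."""
    return [tuple(p * f for p, f in zip(pt, factors)) for pt in points]


def rotate_z(points, angle_rad):
    """Rotate every point about the z axis by angle_rad (counter-clockwise)."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in points]


def project_xy(points):
    """Project 3D points onto 2 of the 3 dimensions by dropping z."""
    return [(x, y) for x, y, _ in points]


def stitch_along_x(space_a, space_b, gap=0.0):
    """Place space_b beside space_a along +x so that they do not overlap."""
    max_ax = max(x for x, _, _ in space_a)
    min_bx = min(x for x, _, _ in space_b)
    return space_a + translate(space_b, (max_ax - min_bx + gap, 0.0, 0.0))


# Two rooms described by opposite corners of their bounding boxes (metres).
room_a = [(0.0, 0.0, 0.0), (4.0, 3.0, 2.5)]
room_b = [(0.0, 0.0, 0.0), (3.0, 3.0, 2.5)]

game_space = stitch_along_x(room_a, room_b)  # combine first ...
floor_plan = project_xy(game_space)          # ... then project onto 2 dimensions
```

Applying `project_xy` to each room before stitching would correspond to the alternative order mentioned in the text, in which the projection is applied before the representations are combined.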

As the videogame is being played, the image and sensor data keep feeding from the respective game consoles to the host game console, which updates the game space at 342 in reference to the data so that the game space being displayed is updated in a timely manner. At 344, as the data is being received from the respective game consoles, the game space is constantly updated at 342. FIG. 3E provides an illustration of creating a game space based on two real-world spaces of two separate play areas, which can be readily modified to generalize to multiple real-world spaces.

FIG. 3F shows a flowchart or process 350 of controlling representative objects in a networked game, where at least some of the representative objects move in accordance with the movements of the corresponding players. The process 350 may be implemented in software, hardware or a combination of software and hardware. According to one embodiment, the process 350 is started when one or more players have decided to start a videogame at 352.

As described above, each of the players is ready to play the videogame in front of at least one camera being set up to image a player and his/her surrounding space (real-world space). It is assumed that each of the players is holding a motion-sensitive controller or is wearing, or has attached to their body, at least one set of inertial sensors. In some embodiments it is expected that the motion-sensing device or sensors may be unnecessary. There may be cases where two or more players are at one place in which case special settings may be used to facilitate the separation of the players, for example, each of the players may wear or have attached one or more specially colored tags, or their controllers may be labeled differently in appearance, or the controllers may include lights that glow with different colors.

At 354, the number of game players is determined so as to determine how many representative objects in the game can be controlled. Regardless of where the game is being rendered, there are a number of video data streams coming from the players. However, it should be noted that the number of game players may be different from the number of video data streams that are participating in the game. For example, there may be three game players, two together being imaged by one video camera, and the third one alone being imaged by another video camera. As a result, there are two video data streams from the three players. In one embodiment, a player uses more than one camera to image his/her play area, resulting in multiple video streams from the player for the video game. Accordingly, the number of game players as well as the number of video data streams shall be determined at 354.

At 356, the video data streams representing the movements of all the participating players are received. For example, there are two players located remotely with respect to each other. Two game consoles are used, each at a location and being connected to a camera imaging a player. It is assumed that one of the game consoles is set as a hosting game console (or there is a separate dedicated computing machine) to receive two data streams, one from a remote site and the other from itself. Alternatively, each console is configured to maintain a separate copy of the game space that it updates with information from the other console as often as possible to maintain a reasonably close correspondence. If one of the two data streams is not received, the process at 356 may wait or proceed with only one data stream. If there is only one data stream coming, the movement of a corresponding representative object will be temporarily taken over by the hosting game console configured to cause the representative object to move in the best interest of the player. In the event of missing data, for example, if a player performs a sword swipe and the data for the torso movement is missing or incomplete, the game will fill in the missing movement with a context-dependent motion that it decides is as consistent as possible with the known data. For example, a biomechanically plausible model of a human body and how it can move could be used to constrain the possible motions of unknown elements. There are many known techniques for subsequently selecting a particular motion from a set of plausible motions, such as picking the motion that minimizes energy consumption or the motion most likely to be faithfully executed by noisy muscle actuators.
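One possible way to fill in a missing movement can be sketched as below, under strong simplifying assumptions: the unknown element is reduced to a single joint angle sampled over time, the biomechanical model is reduced to a fixed angle range, and "energy" is approximated by the sum of squared frame-to-frame changes. The function names, the range of plus or minus 45 degrees, and the candidate trajectories are all hypothetical.

```python
def energy(trajectory):
    """Crude energy proxy: sum of squared changes between samples."""
    return sum((b - a) ** 2 for a, b in zip(trajectory, trajectory[1:]))

def within_limits(trajectory, lo, hi):
    """Stand-in for a biomechanical model: every angle must stay in range."""
    return all(lo <= angle <= hi for angle in trajectory)

def fill_missing(candidates, start, end, lo=-45.0, hi=45.0):
    """Pick the lowest-energy candidate that agrees with the known data.

    'start' and 'end' are the known angles on either side of the gap.
    Returns None when no candidate is plausible.
    """
    plausible = [
        c for c in candidates
        if c[0] == start and c[-1] == end and within_limits(c, lo, hi)
    ]
    if not plausible:
        return None
    return min(plausible, key=energy)

# Hypothetical torso-angle candidates (degrees) spanning the missing frames.
candidates = [
    [0.0, 10.0, 20.0, 30.0],   # smooth
    [0.0, 40.0, 10.0, 30.0],   # jerky but within limits
    [0.0, 60.0, 30.0, 30.0],   # violates the assumed joint limit
]
chosen = fill_missing(candidates, start=0.0, end=30.0)
print(chosen)
```

A real system would use a full-body model and a richer cost, but the structure is the same: constrain the candidates by what is known and what is plausible, then select one by a chosen criterion.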

At 357, a mapping to a shared game space is determined. The movements of the players need to be embedded in the game space, and that embedding is determined. For example, it is assumed that there are 2 players, player A and player B. Player A is playing in his/her living room while player B is remotely located and playing in his/her own living room. As player A moves toward a display (e.g., with a camera on top), the game must decide in advance how that motion is to be interpreted in the shared game space. In a sword fighting game, the game may decide to map the forward motion of player A in the real-world space into rightward motion in the shared game space, backward motion into leftward motion, and so on. Similarly, the game may decide to map the forward motion of player B into leftward motion, backward motion into rightward motion, and so on. The game may further decide to place an object that is representative of player A (e.g., an avatar of player A) on the left of the shared game space and player B's avatar on the right of the space. The result is that as player A moves toward the camera, the corresponding avatar moves to the right on the display, closer to player B. If player B moves away from the camera in response, then player A sees that the avatar of player B moves to the right on the display, backing away from the advancing avatar of player A.
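The sword fighting example can be sketched in a few lines. The sketch assumes a one-dimensional shared game space whose x axis increases to the right on the display, and a signed forward displacement for each player (positive toward the camera, negative away from it); the table name, the function name and the starting positions are illustrative only.

```python
# Assumed per-player mapping: forward motion of player A becomes rightward
# motion (+x), forward motion of player B becomes leftward motion (-x).
FORWARD_TO_GAME_X = {"A": +1.0, "B": -1.0}

def update_avatar_x(avatar_x, player, forward_displacement):
    """Move an avatar along the shared x axis.

    forward_displacement > 0 means the player moved toward the camera,
    forward_displacement < 0 means the player moved away from it.
    """
    return avatar_x + FORWARD_TO_GAME_X[player] * forward_displacement

# Avatar A starts on the left, avatar B on the right.
a_x, b_x = -3.0, 3.0

# Player A advances one unit toward the camera: avatar A moves right.
a_x = update_avatar_x(a_x, "A", 1.0)
# Player B backs one unit away from the camera: avatar B also moves right.
b_x = update_avatar_x(b_x, "B", -1.0)
print(a_x, b_x)
```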

Mapping forward motion in the real world to rightward or leftward motion in the shared game space is only one of many possibilities. Any direction of motion in the real world may be mapped to a direction in the shared game space. Motion can also be modified in a large variety of other ways. For example, motion could be scaled so that small translations in the real world correspond to large translations in the game world, or vice versa. The scaling could also be non-linear so that small motions are mapped almost faithfully, but large motions are damped.
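One non-linear scaling with the stated property is a saturating curve. The sketch below uses a hyperbolic tangent, which is a choice made here for illustration and is not named in the description; the function name and the saturation limit of 2.0 units are likewise assumptions.

```python
import math

def damped_displacement(real_displacement, limit=2.0):
    """Map a real-world displacement to a game-space displacement.

    Near zero the curve has slope 1, so small motions pass through almost
    unchanged; as the input grows the output approaches +/- limit, so large
    motions are damped.
    """
    return limit * math.tanh(real_displacement / limit)

for d in (0.1, 1.0, 10.0):
    print(d, round(damped_displacement(d), 4))
```

A linear scaling is the special case of multiplying by a constant factor; the saturating form is useful when a large lunge in a small room should not carry the avatar across the whole game space.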

Any other aspect of a real-world motion could also be mapped. A player may rotate his/her forearm about the elbow joint toward the shoulder, and the corresponding avatar could also rotate its forearm toward its shoulder. Or the avatar may be subject to an “opposite motion” effect from a magic spell so that when the player rotates his/her forearm toward the shoulder, the avatar rotates its forearm away from the shoulder.

The player's real-world motion can also map to other objects. For example, as a player swings his/her arm sideways from the shoulder perhaps that causes a giant frog being controlled to shoot out its tongue. The player's gross-level translational motion of their center of mass may still control the frog's gross-level translational motion of its center of mass in a straightforward way.

Other standard mathematical transformations of one space to another, known to those skilled in the art, could be used; these include, but are not limited to, any kind of reflecting, scaling, translating, rotating, shearing, projecting, or warping.

The transformation applied to the real-world motions can also depend on the game context and the player. For example, in one level of a game, a player's avatar might have to walk on the ceiling with magnetic boots so that the player's actual motion is inverted. But once that level is completed, the inversion mapping is no longer applied to the player's real-world motion. The players might also be able to express preferences on how their motions are mapped. For example, a player might prefer that his/her forward motion is mapped to rightward motion and another player might prefer that his/her motion is mapped to leftward motion. Or a player might decide that his/her avatar is to be on the left of a game space, thus implicitly determining that the forward motion will correspond to a rightward motion. If both players in a two-player game have the same preference, for example, they both want to be on the left of a shared game space, it might be possible to accommodate their wishes with two separate mappings so that on each of their respective displays their avatar's position and movement are displayed as they desire.

Alternatively the game may make some or all of these determinations automatically based on determinations of the player's height, skill, or past preferences. Or the game context might implicitly determine the mapping. For example, if two or more players are on the same team fighting a common enemy monster then all forward motions of the players in the real-world could be mapped to motions of each player's corresponding avatar toward the monster. The direction that is toward the monster may be different for each avatar. In the example, movement to the left or right may not necessarily be determined by the game context so that aspect could still be subject to player choice, or be assigned by the game based on some criteria. All motions should however be consistent. For example, if moving to the left in the real-world space causes a player's avatar to appear to move further away at one instant, then it should not happen, for no good reason, that at another instant the same player's leftward motion in the real world should make the corresponding avatar appear to move closer.

The game can maintain separate mappings for each player and for different parts of the game. The mappings could also be a function of real-world properties such as lighting conditions so that in poor light the motion is mapped with a higher damping factor to alleviate any wild fluctuations caused by inaccurate tracking.
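A lighting-dependent damping factor might be sketched as an exponential smoothing filter whose gain falls as the light level falls. The normalised light level in the range 0 to 1, the gain bounds, and the function names are assumptions made for this sketch.

```python
def damping_gain(light_level, min_gain=0.2, max_gain=1.0):
    """Return the smoothing gain for a light level clamped to [0, 1].

    Good light gives a gain near max_gain (tracked motion passes through);
    poor light gives a gain near min_gain (heavier damping).
    """
    level = min(max(light_level, 0.0), 1.0)
    return min_gain + (max_gain - min_gain) * level

def smooth(positions, light_level):
    """Exponentially smooth a sequence of tracked positions."""
    gain = damping_gain(light_level)
    out = [positions[0]]
    for raw in positions[1:]:
        out.append(out[-1] + gain * (raw - out[-1]))
    return out

# A jittery track, as might result from inaccurate tracking in poor light.
noisy = [0.0, 1.0, -1.0, 1.0]
bright = smooth(noisy, light_level=1.0)
dim = smooth(noisy, light_level=0.0)
print(bright)
print(dim)
```

The trade-off is latency: a lower gain suppresses wild fluctuations but also makes the avatar slower to follow genuine movement.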

Those skilled in the art would recognize that there are a wide variety of possible representations for the mapping between motion in the real world and motion in the game space. The particular representation chosen is not central to the invention. Some possibilities include representing transformations as matrices that are multiplied together with the position and orientation information from the real-world tracking. Rotations can be represented as matrices, quaternions, Euler angles, or angles and axis. Translations, reflections, scaling, and shearing can all be represented as matrices. Warps and other transformations can be represented as explicit or implicit equations. Another alternative is that the space around the player is explicitly represented as a 3D space (e.g. a bounding box) and the mapping is expressed as the transformation that takes this 3D space into the corresponding 3D space as it is embedded in the game world. The shape of the real-world 3D space could be assumed a priori or it could be an explicitly determined effective play area inferred from properties of the camera, or from some calibration step, or dynamically from the data streams.
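The bounding-box alternative can be sketched as a per-axis affine map that carries the box around the player onto the box it occupies in the game world. The box coordinates and the function name are hypothetical; the sketch assumes axis-aligned boxes and ignores rotation.

```python
def box_map(point, real_min, real_max, game_min, game_max):
    """Map a point in the real-world box to the corresponding game-world box.

    Each axis is normalised to [0, 1] within the real-world box and then
    rescaled into the game-world box. Swapping game_min and game_max on an
    axis reflects motion along that axis.
    """
    return tuple(
        g0 + (p - r0) / (r1 - r0) * (g1 - g0)
        for p, r0, r1, g0, g1 in zip(point, real_min, real_max, game_min, game_max)
    )

# Assumed effective play area: 2 wide, 2 high, 4 deep.
real_min, real_max = (0.0, 0.0, 0.0), (2.0, 2.0, 4.0)
# Assumed region of the game world into which it is embedded.
game_min, game_max = (10.0, 0.0, -5.0), (20.0, 5.0, 5.0)

centre = box_map((1.0, 1.0, 2.0), real_min, real_max, game_min, game_max)
print(centre)
```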

Those skilled in the art would recognize that the mapping from real-world motion to game-world motion can potentially be applied at various points, or even spread around and partially applied at more than one point. For example, the raw data from the cameras and motion sensors could be transformed prior to any other processing. In the preferred embodiment the motion of the human players is first extracted from the raw data and then cleaned up using knowledge about typical human motion. Only after the real-world motion has been satisfactorily determined is it mapped onto its game space equivalent. Additional game-dependent mapping may then subsequently be applied. For example, if the player is controlling a spider, the motion in the game space of how the player would have moved had they been embedded in that space instead of the real world is first determined. Only then is any game-specific mapping applied, such as how bipedal movement is mapped to 8 legs, or how certain hand motions might be mapped to special attacks and so forth.

At 358, as the data streams come in, the hosting game console is configured to analyze the video data and infer the respective movements of the players, and at the same time, to cause the corresponding objects representing the players to move accordingly. Depending on an exact game and/or its rules, the movements of the representative objects may be enhanced or modified to make the game look more exciting or to make the players feel more involved. For example, the game may enhance a motion to make a sword stroke look more impressive, or may subtly modify a sword stroke so that it makes contact with an opponent character in the case where that stroke might otherwise have missed the target.

As the videogame is being played, the video data keeps feeding from the respective game consoles to the host game console, which updates/modifies/controls the corresponding objects at 360 and 362.

Referring now to FIG. 4A, there is shown a case 400 in which an artificially added element 408 in a game space may cause one of the two players 411 to physically move backward in their effective play area to a new location 412. It is assumed that a game space includes two 3D representations 402 and 403 of the real-world play areas that are initially stitched together as shown. As the element 408 (e.g., a monster) approaches an avatar 413 corresponding to the player, the player sees the monster approaching (on a display) and moves backwards to avoid it, causing the avatar to reposition to 414 in the 3D representation. The player who is in the space represented by the 3D representation 402 will see (on the display) the other player's avatar move back and will feel as if they really are inhabiting the same virtual space together. As a result of a player's movement, it is also possible that a corresponding 3D representation may be changed; for example, it might be made longer so as to dampen the effect of the player's real-world movement.

FIG. 4B shows a game space 410 that embeds two 3D representations of two respective real-world play areas 412 and 414, combined face to face. As the player who controls an avatar in 412 moves toward the camera, the avatar will be seen (in the display) to move to the right, and vice versa. As the player who controls an avatar in 414 moves away from the camera, the corresponding avatar will be seen to move to the right, and vice versa. In another videogame, or perhaps later on in the same videogame, the 3D representations 412 and 414 can be combined in a different way, where one is rotated and the other is mirrored. In the mirrored case, as a player moves toward their camera, their avatar may unexpectedly move in the opposite direction to the player's expectation. In the rotation case, the rotation can be about any axis, including rotating the space up or down as well as side to side. Exactly how the 3D representations are embedded in the game space and what, if any, functions are applied to modify the game space and the data streams depends on the game and the exact point in the game.

FIG. 5 shows a system configuration 500 according to one embodiment of this invention. The system includes a memory unit 501, one or more controllers 502, a data processor 503, a set of inertial sensors 504, one or more cameras 505, and a display driver 506. There are two types of data, one from the inertial sensors 504 and the other from the camera 505, both of which are input to the data processor 503. As described above, the inertial sensors 504 provide sensor data for the data processor 503 to derive up to six degrees of freedom of angular and translational motions with or without the sensor data from the camera 505.

The data processor 503 is configured to display a video sequence via the display driver 506. In operation, the data processor 503 executes code stored in the memory 501, where the code has been implemented in accordance with one embodiment of the invention described herein. In conjunction with signals from the control unit 502 that interprets actions of the player on the controller or desired movements of a controller being manipulated by the player, the data processor 503 updates the video signal to reflect the actions or movements. In one embodiment, the video signal or data is transported to a hosting game console or another computing device to create or update a game space that is in return displayed on a display screen via the display driver 506.

According to one embodiment, data streams from one or more game consoles are received to derive respective 3D representations of environments surrounding the players. Using augmented reality that is concerned with the use of live video imagery which is digitally processed and “augmented” by the addition of computer-generated graphics, a scene or a game space is created to allow various objects to interact with people or objects represented in the real-world (referred to as representative objects) or other virtual objects. A player may place an object in front of a virtual object and the game will interpret what the object is and respond to it. For example, if the player rolls a ball via the controller towards a virtual object (e.g., a virtual pet), it will jump out of the way to avoid being hurt. It will also react to actions from the player to allow the player to, for example, tickle the pet or clap their hands to startle it.

According to one embodiment, the sensor data is correlated with the image data from the camera to allow an easier identification of elements such as a player's hand in a real-world space. As may be known to those skilled in the art, it is difficult to track an orientation of a controller to a certain degree of accuracy from data generated purely by a camera. Relative orientation tracking of a controller may be done using some of the inertial sensors. The depth information from the camera gives the location change, which can then be factored out of the readings from the inertial sensors to derive the absolute orientation of the controller due to the possible changes in angular motions.

One skilled in the art will recognize that elements of the present invention may be implemented in software, but can also be implemented in hardware or a combination of hardware and software. The invention can also be embodied as computer-readable code on a computer-readable medium. The computer-readable medium can be any data-storage device that can store data which can thereafter be read by a computer system. Examples of the computer-readable medium may include, but not be limited to, read-only memory, random-access memory, CD-ROMs, DVDs, magnetic tape, hard disks, optical data-storage devices, or carrier waves. The computer-readable media can also be distributed over network-coupled computer systems so that the computer-readable code is stored and executed in a distributed fashion.

The present invention has been described in sufficient detail with a certain degree of particularity. It is understood by those skilled in the art that the present disclosure of embodiments has been made by way of examples only and that numerous changes in the arrangement and combination of parts may be resorted to without departing from the spirit and scope of the invention as claimed. While the embodiments discussed herein may appear to include some limitations as to the presentation of the information units, in terms of the format and arrangement, the invention has applicability well beyond such embodiments, which can be appreciated by those skilled in the art. Accordingly, the scope of the present invention is defined by the appended claims rather than the foregoing description of embodiments.

Claims

- A method for controlling movements of two or more objects in a shared game space for a networked videogame being played by at least two players separately located from each other, the method comprising: receiving in a host machine at least a first video stream from at least a first camera associated with a first location capturing movements of at least a first player at the first location, and a second video stream from at least a second camera associated with a second location capturing movements of at least a second player at the second location; modeling from the first and second video streams a first 3D representation of some or all of the first location and a second 3D representation of some or all of the second location, respectively; updating the shared game space to include the first and second 3D representations, virtual objects embedded by the host machine, and representative objects corresponding respectively to the at least first and second players; deriving the movements of the first and second players respectively from the first and second video data streams; causing at least a first object in the shared game space to respond to the derived movements of the first player and at least a second object in the shared game space to respond to the derived movements of the second player; and displaying a depiction of the shared game space on at least one display at each of the first and second locations.

- The method as recited in claim 1 , wherein at least one of the first and second players holds a device as a controller containing one or more inertial sensors, and sensor data from the sensors is combined with one of the first and second video streams to derive the respective movements of the first or second player.

- The method as recited in claim 1 , wherein more video cameras are being set up at the first location to monitor an effective play area in which the movements of the first player are captured, the video cameras produce one or more of full color images, depth image or infrared imaging data.

- The method as recited in claim 3 , wherein the effective play area is defined by one or more of: a field of view of the cameras, optical parameters of the cameras and surrounding lighting condition.

- The method as recited in claim 1 , wherein at least one of the first and second players has a device containing one or more inertial sensors, and sensor data from the sensors is combined with the video streams to derive the respective movements of the first or second player.

- The method as recited in claim 5 , wherein the effective play area goes beyond the field of view of the cameras, the movements of either one of the first and second players falling into a portion of the effective play area beyond the field of view of the camera are tracked by the inertial sensors.

- The method as recited in claim 6, wherein the device is one or more of: hand-held, wearable, or attachable to the human body.

- The method as recited in claim 6 , wherein the device is a hand-held motion-sensitive game controller.

- The method as recited in claim 1 , further comprising: embedding gaming rules in the videogame to enable various interactions between the first and second objects, and among the first and second objects and virtual objects.

- The method as recited in claim 9 , further comprising: mapping the movements of the first and second players to motions of the first and second objects in accordance with a predefined rule in the videogame.

- The method as recited in claim 10 , wherein the mapping of the movements maps the movements of the first player toward the first camera into the motions of the first object in a particular direction.

- The method as recited in claim 10 , wherein the mapping of the movements maps the movements of the first player and second players so that two corresponding objects in the shared game space move complementarily.

- The method as recited in claim 10 , wherein the mapping of the movements maps the movements of the first player and second players in accordance with a choice made respectively by the first and second players.

- The method as recited in claim 10 , wherein the mapping of the movements maps the movements of either one of the first player and second players towards a camera in a way that a corresponding object is displayed moving left or right on a display.

- The method as recited in claim 10 , wherein the mapping of the movements maps the movements of either one of the first player and second players in a way that a corresponding object moves to avoid a predefined virtual object.

- The method as recited in claim 10 , wherein the mapping of the movements maps the movements of either one of the first player and second players in a way that a 3D human-like figure moves substantially similar to either one of the first player and second players.

- The method as recited in claim 10 , wherein the mapping of the movements scales the derived movements in a way that small translations in a real-world space correspond to large translations in the shared game space of the videogame, or vice versa.

- The method as recited in claim 10 , wherein the mapping of the movements depends on one or more of game context of the videogame, the player, gaming history or experience of one or more of the first and second players with the videogame.

- A method for controlling movements of an object in a videogame, the method comprising: receiving in a computing device at least one video stream from a video camera capturing various movements of a player of the videogame within a field of view of the camera; modeling from the video stream a 3D representation of a location of the player; creating a virtual space in the videogame to include the 3D representation, virtual objects embedded by the host machine, and at least a representative object corresponding to the player; embedding a virtual source of sound in the game space in such a way that the sound the player hears gets louder as the representative object corresponding to the player gets closer to the sound source, and vice versa; deriving the movements of the player from the video data; deriving the movements of the player from sensor data when the player moves beyond the field of view of the camera, wherein the sensor data is generated from at least one controller being manipulated by the user to interact with the videogame; and causing the representative object to respond to the movements of the player.

- The method as recited in claim 19 , further comprising: mapping the movements of the player to motions of the representative object in accordance with a predefined rule in the videogame.

- The method as recited in claim 20 , wherein the motions are scaled so that small translations in a real-world space of the player correspond to large translations in a game space of the videogame, or vice versa.

- The method as recited in claim 21 , wherein the motions are scaled non-linearly so that small movements of the player are mapped almost faithfully, but large movements of the player are damped.

- The method as recited in claim 21, wherein how the motions are mapped to motions of the object depends on one or more of game context of the videogame, the player, gaming history or experience of the player with the videogame.

- The method as recited in claim 19 , wherein the video camera is provided to monitor an effective play area in which the movements of the player are captured.

- The method as recited in claim 24 , wherein the effective play area is defined by one or more of: a field of view of the camera, optical parameters of the camera or surrounding lighting conditions.

- The method as recited in claim 25 , wherein the effective play area goes beyond the field of view of the camera, the movements of the player falling into a portion of the effective play area beyond the field of view of the camera are tracked by inertial sensors in a controller being held by the player.

- The method as recited in claim 19 , further comprising: embedding gaming rules in the videogame to enable various interactions between the object and other virtual objects, wherein each of the interactions happens as the player manipulates the at least one controller.

- The method as recited in claim 27 , wherein the sensor data is from inertial sensors and used to derive relative translational and angular movements of the at least one controller, both of the sensor data and the video data are combined to derive absolute translational and angular movements of the at least one controller.

- A system for controlling movements of two or more objects in a shared game space for a networked videogame being played by at least two players remotely located with respect to each other, the system comprising: at least a first camera and a second camera, respectively, with a first location and a second location, the first camera producing a first video stream capturing movements of at least a first player at the first location, the second camera producing a second video stream capturing movements of at least a second player at the second location; a designated computing device configured to derive the movements of the first and second players respectively from the first and second video data streams and cause at least a first object in the shared game space to respond to the derived movements of the first player and at least a second object in the shared game space to respond to the derived movements of the second player, wherein the first and second locations are not co-located, wherein the shared game space includes a first 3D representation of some or all of the first location, and a second 3D representation of some or all of the second location, wherein the shared game space further includes virtual objects embedded by the designated computing device, and at least two representative objects respectively representing the first and second players; and first and second displays respectively displaying the networked videogame including the shared game space.

- The system as recited in claim 29 , wherein the at least one of the first and second 3D representations is morphed over a course of the networked videogame to create new and interesting scenes for the videogame.

- The system as recited in claim 30 , wherein at least one of the first and second players holds a device containing one or more inertial sensors, and sensor data from the sensors is combined with one of the first and second video streams to derive the movements of the first or second player.

- The system as recited in claim 29 , wherein more video cameras are being set up in the first location to monitor an effective play area in which the movements of the first player are captured.

- The system as recited in claim 32 , wherein the effective play area is defined by one or more of: a field of view of the cameras, optical parameters of the cameras and surrounding lighting conditions.

- The system as recited in claim 29 , wherein at least one of the first and second players has a device containing one or more inertial sensors, and sensor data from the sensors is combined with the video streams to derive the movements of the first or second player.

- The system as recited in claim 34 , wherein the effective play area goes beyond a field of view of the first or second camera, the movements of the first or second player falling into a portion of the effective play area beyond the field of view of the first or second camera are tracked by the inertial sensors.

- The system as recited in claim 35, wherein the device is one or more of: hand-held, wearable, or attachable to the human body.

- The system as recited in claim 35 , wherein the device is a hand-held motion-sensitive game controller.

- The system as recited in claim 29 , wherein the designated computing device is configured to embed gaming rules in the videogame to enable various interactions between the first and second objects, and among the first and second objects and virtual objects.

- The system as recited in claim 29 , wherein the designated computing device is configured to map the movements of the first and second players to motions of the first and second objects in accordance with a predefined rule in the videogame.

- The system as recited in claim 29 , wherein the designated computing device is configured to map the movements of the first player toward the first camera into the motions of the first object in a particular direction.

- The system as recited in claim 29, wherein the designated computing device is configured to map the movements of the first player and second players so that two corresponding objects in the shared game space move complementarily.

- The system as recited in claim 29, wherein the designated computing device is configured to map the movements of the first player and second players in accordance with a choice made by the first and second players.

- The system as recited in claim 29, wherein the designated computing device is configured to map the movements of either one of the first player and second players towards a camera in a way that a corresponding object is displayed moving left or right on a display.

- The system as recited in claim 29, wherein the designated computing device is configured to map the movements of either one of the first player and second players in a way that a corresponding object moves to avoid a predefined virtual object.

- The system as recited in claim 29, wherein the designated computing device is configured to map the movements of either one of the first player and second players in a way that a 3D human-like figure moves substantially similar to either one of the first player and second players.

- The system as recited in claim 29, wherein the designated computing device is configured to scale the derived movements in a way that small translations in a real-world space correspond to large translations in the shared game space of the videogame, or vice versa.

- The system as recited in claim 29, wherein the designated computing device is configured to map the movements in accordance with one or more of game context of the videogame, the player, gaming history or experience of one or more of the first and second players with the videogame.

- A system for controlling movements of an object in a videogame, the system comprising: at least a video camera capturing various movements of a player of the videogame; a designated device receiving at least one video stream from the video camera to derive the movements of the player from the video data, and cause the object to respond to the movements of the player, the designated device being configured to further model a 3D representation of a play area in which the player is interacting with the videogame, wherein the videogame includes a virtual space including some or all of the 3D representation, virtual objects embedded by the designated device, and at least a representative object corresponding to the player, embed a virtual source of sound in the game space in such a way that the sound the player hears gets louder as the representative object corresponding to the player gets closer to the sound source, and vice versa.

- The system as recited in claim 48, wherein the designated device is configured to map the movements of the player to motions of the object in accordance with a predefined rule in the videogame.

- The system as recited in claim 48, wherein the motions are scaled so that small translations in a real-world space of the player correspond to large translations in a game space of the videogame, or vice versa.

- The system as recited in claim 48, wherein the motions are scaled non-linearly so that small movements of the player are mapped almost faithfully, but large movements of the player are damped.

- The system as recited in claim 48, wherein a predefined rule in the videogame depends on one or more of game context of the videogame, the player, gaming history or experience of the player with the videogame.

- The system as recited in claim 48, wherein the video camera is provided to monitor an effective play area in which the movements of the player are captured.

- The system as recited in claim 53, wherein the effective play area is defined by one or more of: a field of view of the camera, optical parameters of the camera and surrounding lighting conditions.

- The system as recited in claim 54, wherein the effective play area goes beyond the field of view of the camera, and the movements of the player falling into a portion of the effective play area beyond the field of view of the camera are tracked by inertial sensors in a controller being held by the player.

- The system as recited in claim 48, wherein the designated device is configured to embed gaming rules in the videogame to enable various interactions between the object and other virtual objects, wherein each of the interactions happens as the player manipulates at least a controller including inertial sensors.

- The system as recited in claim 56, wherein sensor data from the inertial sensors is used to derive relative translational and angular movements of the controller, and both the sensor data and the video data are combined to derive absolute translational and angular movements of the controller.
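
By way of illustration only, the scaling and sound limitations recited in the claims above (linear scaling of translations, non-linear damping of large movements, and a virtual sound source that gets louder as the representative object approaches it) might be sketched as follows in Python. The function names, the tanh damping curve, the inverse-square-style gain, and all parameter values are assumptions made for this sketch and are not taken from the specification.

```python
import math


def scale_translation(real_delta, factor=10.0):
    """Linear scaling: a small real-world translation becomes a large
    game-space translation (factor > 1), or vice versa (factor < 1).
    The default factor is an arbitrary, hypothetical value."""
    return factor * real_delta


def damp_translation(real_delta, max_game_delta=5.0):
    """Non-linear scaling (assumed tanh curve): small movements are mapped
    almost one-to-one, while large movements saturate at +/- max_game_delta."""
    return max_game_delta * math.tanh(real_delta / max_game_delta)


def sound_gain(listener_pos, source_pos, ref_distance=1.0):
    """Assumed attenuation model: gain is 1.0 at the source and falls off
    monotonically as the representative object moves away from it."""
    distance = math.dist(listener_pos, source_pos)
    return 1.0 / (1.0 + (distance / ref_distance) ** 2)


if __name__ == "__main__":
    # A 1 cm step is reproduced nearly exactly; a 100 m "step" is capped.
    print(damp_translation(0.01), damp_translation(100.0))
    # The sound is louder at 1 unit from the source than at 3 units.
    print(sound_gain((0, 0, 0), (1, 0, 0)), sound_gain((0, 0, 0), (3, 0, 0)))
```

Any monotonic saturating curve and any monotonically decreasing gain function would serve equally well here; the particular choices above were made only to keep the sketch short.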
