U.S. Pat. No. 9,597,588

ADVANCED GAMEPLAY SYSTEM

Assignee: Open Invention Network LLC

Issue Date: May 7, 2015

Illustrative Figure

Abstract

The present invention enhances the player's gameplay visual, feedback and other experiences by taking advantage of optical adapters, feedback mechanics, advancements in theatrical audio, frame rate throttle, meta-file object framework for storage and retrieval, calibration advancements, vocal command enhancements, voice object lookups, facial/body scan, color/clothing coordination, party or celebration capabilities, noise cancellation, interactive object placement, heart rate monitor, pan-tilt-zoom camera advances, cooperative gameplay advances and programming advancements.

Description


DETAILED DESCRIPTION OF THE INVENTION

Referring to FIG. 1, a projector 101 (used in a conventional system 100, not fully shown) sends a projected image having an upper bound 102 and a lower bound 104, described by a vertical angle of view 103, to a receiving screen 105. The arc of the angle of view 103 varies with the settings within the projector, limited by the candle output and lens construction. In one embodiment, the arc of the angle of view extends between 120 degrees and 180 degrees. This is an example of the limited height of the field of view presented using a projector in a modern system. Overhead-mounted projection allows the player to position themselves under the projection path without producing a shadow on the projection screen.

Referring now to FIG. 2, the projector 201 (used in a conventional system 200, not fully shown) sends a projected image having a left bound 202 and a right bound 204, described by a horizontal angle of view 203, to a receiving screen 205. The arc of the angle of view 203 varies with the settings within the projector, limited by the candle output and lens construction. This is an example of the limited width of the field of view presented using a projector in a modern system. Overhead-mounted projection allows the player to position themselves under the projection path without producing a shadow on the projection screen.

Referring now to FIG. 3, a player has the ability to view a much wider and higher angle of view than most projection systems output. A player 301 has the ability to view an upward vertical area 302, for example, without moving the head 301, seeing well beyond the border 303 of a regular display screen (used in a system 300 of the instant invention, not fully shown).

Referring now to FIG. 4, a player 402 has the ability to view an upward vertical area 401 and a corresponding lower area described by an angle 403 which is much greater than the height of a given screen 404 (used in a system 400 of the instant invention, not fully shown).

Referring now to FIG. 5, a player 501 has the ability to view a left horizontal area 502 and a right horizontal area 503 described by an angle 504 which is much greater than the width of the screen 505. This gives the player 501 the ability to have sight of, for example, opponents overhead, to the front and/or to the sides 506, which cannot be displayed on screen 505 (used in a system 500 of the instant invention, not fully shown).

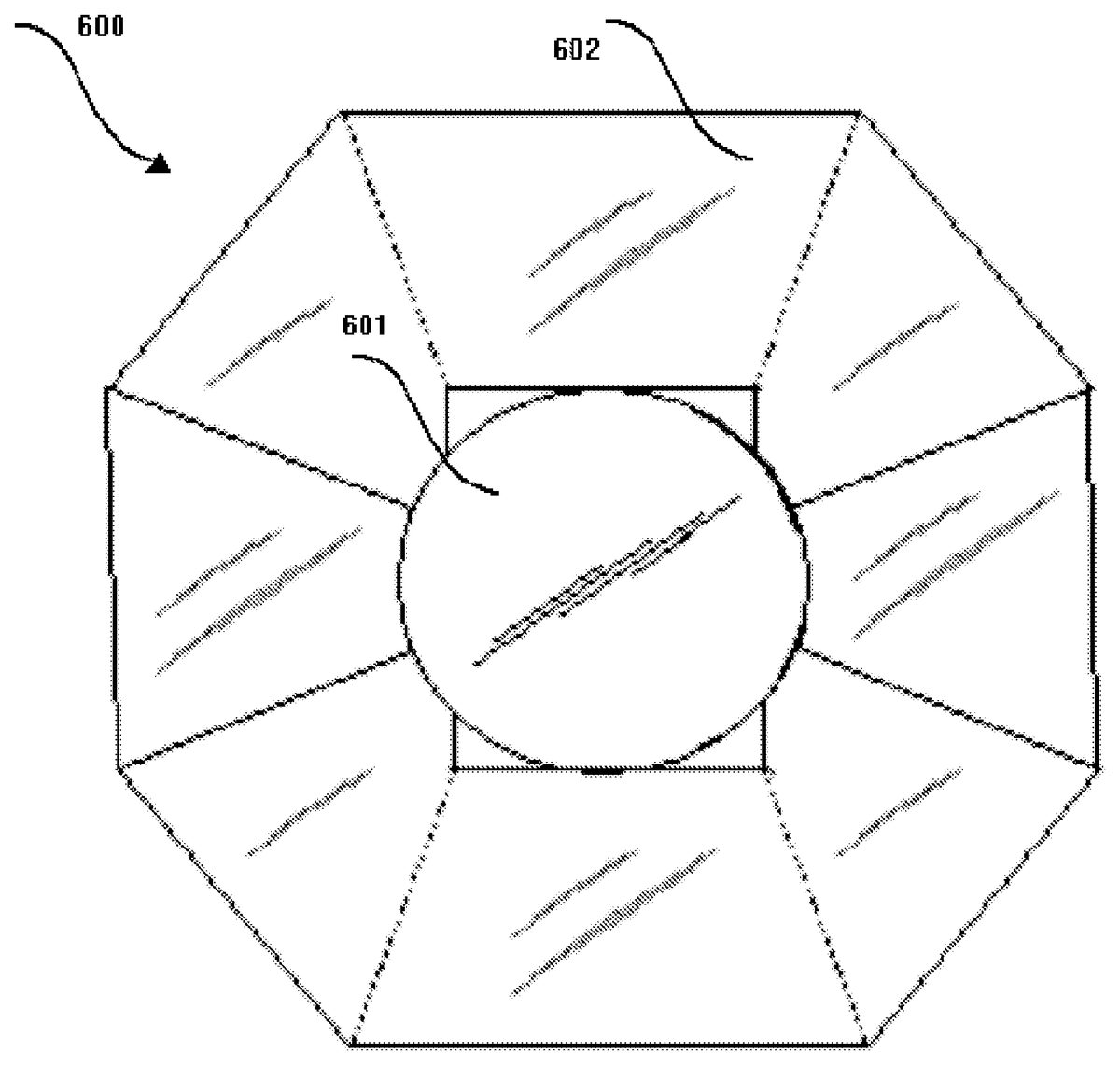

Referring now to FIG. 6, the sending component of the current invention is shown, in which light and image data for the purpose of projection are transmitted through a lens adapter 601 (which may be an optical adapter) and multiple facets 602. These facets 602, which can be constructed of glass, mirrors, plastic, metal, and the like, are responsible for projecting the video images onto a receiving screen at angles well beyond modern projection capabilities (used in a system 600 of the instant invention, not fully shown). In one embodiment, the plurality of facets comprises eight facets.

Referring now to FIG. 7, the outer body of the projector video adapter 700 is shown. The adapter 700 is composed of a lens connector and lens filter 701 as well as an upper facet set 702 and a lower facet set 703 of the lens adapter 601. The upper facet 702 and lower facet 703, as well as right and left side facets, emit a video signal using enhanced power adapters and increased lighting capability.

Referring now to FIG. 8, an alternate embodiment of the current invention shows a half-circular screen 801 with a player 804 standing inside of the screen 801. The walls of the screen 801 are around the player 804 so that the game surrounds the player 804 from all sides. Details of the pixels 802 which make up the screen 801 are shown as 803. The electrical and video connections between the screen 801 and an instant system (not fully shown) are shown in 805. In this embodiment, the lens adapter and projector may not be used (used in a system 800 of the instant invention, not fully shown).

FIG. 9 shows another embodiment of the current invention where a screen 902 has a top screen 901 which covers the player 903 overhead and provides additional game interactivity. The screen 902 is connected to the instant system (not fully shown) and electricity represented by 904 (used in a system 900 of the instant invention, not fully shown).

Referring to FIG. 10, the unit 1000 rests on a moving platter 1001 which is positioned by a vertical servo 1004 providing vertical motion 1007 and a horizontal servo 1003 providing horizontal motion 1008, used to track the movements of one or more players using a motion detector 1022 and camera 1010 tethered by a control wire 1011. The motion detector 1022 is held in a casing 1013 which is connected to a motor 1014, providing feedback to the servo motor 1005 through a control wire 1015. The servo motor 1005 sends and receives position signals to and from the vertical servo 1004 and horizontal servo 1003.
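As a rough illustration of how a tracked player position could be turned into position signals for the horizontal and vertical servos, the following minimal sketch converts a position into pan and tilt angles. The coordinate frame (x to the right, y up, z forward from the unit), the function name `pan_tilt_degrees` and the angle limits are assumptions made for this example and are not taken from the specification.

```python
import math


def pan_tilt_degrees(x, y, z, pan_limit=90.0, tilt_limit=45.0):
    """Return (pan, tilt) in degrees that point the unit at position (x, y, z).

    Assumed frame: x is to the right of the unit, y is up, z is straight ahead.
    The limits are hypothetical mechanical bounds of the two servos.
    """
    pan = math.degrees(math.atan2(x, z))                   # horizontal servo angle
    tilt = math.degrees(math.atan2(y, math.hypot(x, z)))   # vertical servo angle

    def clamp(value, limit):
        return max(-limit, min(limit, value))

    return clamp(pan, pan_limit), clamp(tilt, tilt_limit)
```

For instance, a player standing one unit to the right and one unit ahead of the device at the same height would yield a pan of 45 degrees and a tilt of 0 degrees.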

A replaceable scent cartridge, also 1014, is connected to a scent emitter 1012 which can spray a variety of scents into the room. For example, if the player is experiencing a wooded area, an evergreen scent can be delivered based on program settings within the game.

The air cannon 1012 can also be programmed to release varying levels of compressed air based on a game experience. The air cannon 1012 is connected to the air chamber 1002 which is controlled by a computer chip inside of 1005.

The units described are supported by a mounting bracket 1011 which also supports the microphone 1021 and speaker 1017 and speaker wire 1018.

The unit rests on a mounting platform 1006 which can be connected to a wall, a table, the floor or a speaker in the room. Based on the power levels set within the system, it is best to have the unit mounted to a table or on a wall.

The unit is further connected to a computer for receiving program settings by one or more wires 1020 that are attached to the instant system (not fully shown).

The current invention makes use of input devices such as one or more cameras and motion detection apparatuses to detect player movements which symbolize the addition or use of one or more devices within the context of a game.

Referring to FIG. 11, the player 1101 within a play area 1100 hoists and rests a pretend bazooka on his arm while holding the trigger 1102 and aiming the gun in a particular direction 1103. The bazooka has a handle at the base with a trigger and a handle at the nose used for aiming the unit at the given target.

Referring now to FIG. 12, the game 1200 shows the player's avatar 1201 holding a realistic bazooka by the trigger 1202 and pointing the gun in the same direction 1203 which is determined by the player. The bazooka shows the two handles which are imitating the player's use of the unit.

Combining FIG. 11 and FIG. 12 in a gameplay environment shows how the players' motions are interpreted on the screen as motion instructions for an avatar within the context of the given game in accordance with an embodiment of the instant application.

Referring now to FIG. 13, the example 1300 shows the operation of storing the gameplay file, transmitting the gameplay file, and representing it on the screen. The gameplay file encoder 1310 collects the interactive objects 1301, the binary codes 1302, the media objects 1303, the author objects 1304, the user objects 1305, timing objects 1306, licensing and security objects 1307, error concealment objects 1308 and prioritization and scalability objects 1309 into one file object 1311. This format provides the ability to store and retrieve the complex interactive media objects used for the game, the game attributes, and other components used by the one or more players, as well as the objects and actions used by the player and their effects within the game, in accordance with an embodiment of the instant application.

The sending service 1312 sends the deliverable container 1311 to the one or more storage objects 1315, while the one or more receiving services 1313 retrieve the deliverable content 1311 from the one or more storage objects 1315 and deliver it to one or more receiving players or interactive objects/consoles 1314. In this manner, the content is shown to the user or player.
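The encode, store and retrieve flow of FIG. 13 can be sketched as follows. This is only an illustrative sketch: the class name `GameplayFile`, the field names, the use of JSON as the on-disk representation and the in-memory dictionary standing in for the storage object are all assumptions, since the specification does not define a concrete file layout.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class GameplayFile:
    """Hypothetical container with one field per object category of FIG. 13."""
    interactive_objects: list = field(default_factory=list)
    binary_codes: list = field(default_factory=list)
    media_objects: list = field(default_factory=list)
    author_objects: list = field(default_factory=list)
    user_objects: list = field(default_factory=list)
    timing_objects: list = field(default_factory=list)
    licensing_security_objects: list = field(default_factory=list)
    error_concealment_objects: list = field(default_factory=list)
    prioritization_scalability_objects: list = field(default_factory=list)


def encode(gameplay_file: GameplayFile) -> bytes:
    """Collect every object category into a single byte payload (assumed JSON)."""
    return json.dumps(asdict(gameplay_file), sort_keys=True).encode("utf-8")


def decode(payload: bytes) -> GameplayFile:
    """Rebuild the container from a stored payload."""
    return GameplayFile(**json.loads(payload.decode("utf-8")))


# Stand-in for the one or more storage objects; a real system might use disk or a server.
storage = {}


def send(key: str, gameplay_file: GameplayFile) -> None:
    """Sending service: encode the container and place it in storage."""
    storage[key] = encode(gameplay_file)


def receive(key: str) -> GameplayFile:
    """Receiving service: fetch the payload from storage and decode it."""
    return decode(storage[key])
```

A round trip such as `send("level-1", f)` followed by `receive("level-1")` returns a container equal to `f`, which is the property the store-and-retrieve description relies on.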

Referring now to FIG. 14, 1400 represents the components of the current invention and their relationship to each other. Under the display section 1401 of the current invention, the perceptual angle of view model 1402, calibration advancements 1403, optical advancements 1404, projection advancements 1405, and other functionality 1401 related to the display (such as touch-screen elements or borderless screen elements, and the like 1406) are noted and collected together in the display category 1401. Several other topics are shown under the system 1407, user controls 1414, feedback 1420 and programming 1425 component headings.

Related to the display category is the system section 1407. The multi-player system aspects 1408, frame rate throttle 1409, body/facial scanning 1410, color coordination 1411 and heart rate monitoring 1412 elements of the system fall into this section. The system 1407 is a portion of the architecture of the game system and is located in the game console as both software and hardware. As another embodiment of the instant invention, even though the system 1407 may reside in the system console, the system may also reside as one or more components distributed across a network and may be accessed across that network by the system console.

Other functionality 1413 related to the system 1407 (such as upgrade service handlers, hardware connection modules, and the like 1413) are noted.

Related to the system section 1407 are the user control advancements 1414 of the instant invention. The voice recognition aspects 1415, object addition 1416, team coordination 1417, and action addition capabilities 1418 and other elements 1419, such as object and action editors, fall into this section.

Related to the user control category 1414 is the feedback section 1420. The noise cancellation 1421, system attachments 1422 which include the air cannon, microphone, etc., and the camera and motion control items 1423, as well as other elements such as global positioning systems, maps and alarm components 1424 of the feedback system fall into this section.

Finally, the programming aspects 1425 of the system architecture include the perceptual angle of view software logic 1426, the multi-player program elements 1427 as well as other elements 1428 related to each of the previous sections such as guidance systems, network modules and the like.

Referring now to FIG. 15, 1500 represents the processing steps taken for the game program based on the screen type in which the game is projected or displayed. The game software used to process the logic needed to display one or more visual objects on the one or more screens resides in the game console and could produce the one or more images to display on one or more screens by determining the physical arrangement of the attached one or more screens or optical adapters described in the one or more alternate embodiments of the instant invention. In addition to the embodiment of the multi-screen logic game software residing within the local game console, this software could be located on a network node connected to the game system either through a wired or wireless connection.

A particular embodiment of the current invention could receive an indicator from the one or more display units, screens or projector and/or optical adapters to determine the number, dimensions and arrangement of the screens or one or more walls which could be available to present the one or more images to the one or more players.

For example, if the screen is a multi-screen or multi-faceted screen, different logic paths are taken than for a simple flat screen. In addition to this, logic is required to scale the capabilities of the system back for a single screen so that the multi-screen capabilities are not used when the one or more receiving display units may not be capable of receiving the data.

Images and game logic within the software of the game console could receive one or more video settings 1502 which dictate how the game software manages the output to the one or more facets and how the hardware receives the one or more facets and produces the output to the one or more displays. The one or more video settings 1502 are received by the model generation component 1503 which produces the image frames and sprite animations necessary for the given output channel.

If the output type is a projection 1504 where the image is transformed in a projection attachment, the image from the projection 1504 is passed to either the single facet game process option 1510 or the multi-facet game process option 1506. If the output type is not a projection 1504, the image data is passed to either the multi-screen process option 1516 or the single screen processing option 1511. If the image data is displayed using a projected image against a multi-facet receiver 1501, the output is generated for each facet and repeated while the game play indicator 1508 shows that the game is not done. As each facet is received, the process is repeated from 1506 to 1508 until the game finished indicator 1508 is set. At the point when the game done indicator 1508 is set, the process is stopped 1509.

If the output type is a projection 1504 but is not a multi-facet receiver, or the output type is not a projection 1504 but a single screen output 1514, both output types are handled using the single screen processing receiver 1511. The output is generated using the single screen processing receiver 1511 and repeated while the game play indicator 1512 shows that the game is not done. As each image is received, the process is repeated from 1510 to 1512 until the game finished indicator 1512 is set. At the point when the game done indicator 1512 is set, the process is stopped 1513.

If the output type is an array of screens 1514 where the images are delivered as multiple facets 1516, each facet is delivered to an individual screen processor 1517 for that given facet. This is repeated for each facet 1516 where the game done setting 1518 is false. Once all of the facets for a single iteration have completed processing, the step is repeated from 1515 and then each facet in the iteration is processed from the facet counter 1516, using the single screen process receiver 1517, until the game play done indicator 1518 is set to true. Once the game play done indicator is set to true, the process is stopped 1519.
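The three branches of FIG. 15 can be summarized in a short sketch. The function names, the string labels for each process option and the `game_done` callback are hypothetical; the sketch only shows the selection between the projected multi-facet, multi-screen and single-screen paths and the repeat-until-done loop, not any actual rendering.

```python
def select_process(is_projection: bool, facet_count: int) -> str:
    """Pick the processing path from the output type and the number of facets."""
    if facet_count <= 1:
        # Projected or not, a single output is handled by the single screen path.
        return "single_screen"
    return "multi_facet_projection" if is_projection else "multi_screen"


def run_game(is_projection: bool, facet_count: int, game_done) -> list:
    """Generate output per facet, repeating until the game done indicator is set.

    `game_done` is an assumed callback taking the iteration number and returning
    True once gameplay has finished.
    """
    process = select_process(is_projection, facet_count)
    facets = max(facet_count, 1)
    rendered = []
    iteration = 0
    while not game_done(iteration):
        for facet in range(facets):
            # Placeholder for generating the image of one facet or screen.
            rendered.append((process, iteration, facet))
        iteration += 1
    return rendered
```

With an eight-facet projection adapter and a game that ends after two iterations, the loop produces sixteen facet outputs; with a single flat screen it produces two.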

Referring now to FIG. 16, an overhead representation of the advanced field of view projection system 1600 with cameras, speakers, feedback units and effective waveforms used to enhance the gameplay experience is depicted. The player 1601 is shown in the center, but the player can be located anywhere in the room and the system 1600 can support as many players as desired.

The example embodiment of the current invention makes use of several advancements in technology in the system. As described previously, the projector 1602 transmits one or more images to the screen or walls as shown in FIG. 8 by the curved wall 801 or FIG. 9 by the overhead 901 and curved wall 902, which surrounds the one or more players 1601. Speakers and feedback systems 1607-1614 are noted in multiple positions surrounding the one or more players 1601 and distributed in this example as a surround sound system where 1613, by example only, denotes the sub-woofer and corresponding feedback unit. Recall the feedback system is shown in FIG. 10 of the present invention. The waveform signals 1615 surrounding the one or more players denote the responding air blasts from the air cannons, shown in FIG. 10 as reference number 1009, or sounds from the speaker system 1607-1614. Waveform signals may also be emitted from the one or more players 1601 and picked up by the one or more microphones as part of the feedback systems 1607-1614. Cameras 1603-1606 are used to track the one or more player motions, receive motion commands, detect target ranges, help in calculating trajectory pressure, and scan player information into the system. The camera 1604, by example only, is located above and just behind the player 1601 looking down. All devices, wired or wireless, are connected to a game console.

FIG. 17 represents the high-level process logic used in utilizing many of the aspects of the current invention in a one or more player embodiment 1700. Gameplay begins 1701 where the option is made by the one or more players to either turn on the game learning mode or not 1702 by one or more motion instructions, or the mode is turned on automatically once the game starts. In the diagram, the one or more players are shown in the center of the play area, but the one or more players can be anywhere in the room, the room can hold as many players as desired, and the session can, again, be expanded by network connections to one or more other gameplay sessions.

The game software used to process the ability to add, remove and change certain aspects of the one or more motion or audio instructions as a portion of the learning mode of the game system may reside within the game console and could store and/or retrieve the one or more motion or audio instructions within the game console, but also could reside on a network node connected to the game system either through a wired or wireless connection.

If the game learning mode 1702 is turned on 1703, the game software detects the player's motion or audio messages 1704 by use of the motion detection, camera and audio recording equipment described in FIG. 10 (referred to as 1012, 1010 and the microphone 1021). The game software defines the specified motions as game commands by converting the one or more motions made by one or more players and comparing them with existing motion instructions in the system storage unit, which is connected to the game console or attached to the system over a network connection, as later described in FIG. 18 with reference to numbers 1804, 1808 and 1807, by way of example only.

In the manner described, a motion instruction is added to the game system's storage unit by first having the game learning mode turned on. This is performed by either a predetermined, pre-stored motion instruction made by the player or an audio command. For example, when the player waves their hand in front of the screen, the motion detection unit senses the motion, the camera captures the motion, and the game system receives the motion images, compares them to images stored in the system storage unit, and searches the data analyzed from the images for a match. In this example, the match is made to turning the learning mode on in the system. Once the match is made, the game learning mode is switched on and the player can begin adding, editing or removing audio and motion instructions.

Alternatively, an audio command can also be issued by the player. This audio command is received by the game system microphone, matched against a storage unit of pre-recorded audio commands, and, if a match is found, performs the command. In this case, the command is to start the system's learning mode. Once the match is found, the learning mode begins and the player can begin to add, remove or edit motion instructions or audio commands.

Once the learning mode is on, the system repeatedly learns the physical movements of a player or group of players and provides on-screen lists, for example, of items, tasks, or other actions the player may wish the game system to produce or perform. In the example described in FIG. 11 and FIG. 12, the player makes a motion to hoist a bazooka and, even though the player may not physically have a bazooka in their possession, the bazooka appears and is hoisted by the avatar on the player's screen. In this example, the player makes a motion to hoist a gun. The player may stagger, as an example, due to the weight of the imaginary gun. Once the game system captures the motion, compares the information it receives from the motion, and either finds one or more matches, or none at all, it presents either the player's avatar performing the intended one or more commands or, for example, a list of possible one or more commands the player may be interested in having the avatar or system perform.

In addition to a single avatar performing one or more commands, the motion or audio commands could set off a series of one or more commands or reactions within one or more avatars, other players and objects, including objects, players, avatars, etc. which are not yet appearing on the screen.

At the point where the player is presented with a list, for example, of choices for the related motion or audio instruction, the player can choose the resulting action, item, etc. from the list presented to them, or they can choose additional levels of detail which could provide them with many more choices, if desired. In this manner, a motion or audio instruction produces a motion result, where the motion result can be any of one or more actions, items or another motion/position change. For example, a single command or motion could be issued by one or more players to contact all teammates for a game session. The system, for example, could attempt to contact each teammate, by text, email, phone call, system notification, etc. to organize the gameplay and could satisfy the requirements for the game by substituting in virtual players until the real players joined.
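The substitution of virtual players for teammates who have not yet joined can be sketched as below. The function name `build_roster`, the `virtual-N` naming and the idea of a fixed `required` head count are assumptions for illustration; the notification step (text, email, phone call and so on) is outside the scope of this sketch.

```python
def build_roster(teammates, joined, required):
    """Fill a roster of `required` slots, using virtual players for missing teammates.

    `teammates` is the ordered list of invited players and `joined` is the set of
    those who have actually connected so far.
    """
    roster = [name for name in teammates if name in joined][:required]
    missing = required - len(roster)
    # Placeholder players hold the open slots until real players join.
    roster += [f"virtual-{i + 1}" for i in range(missing)]
    return roster
```

Calling the function again as more teammates connect would replace the placeholders with real players, which matches the described behavior of substituting virtual players until the real players join.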

Once the player makes a selection from the list, for example, the motion instruction may be reviewed so that the player can confirm the motion instruction and the motion instruction with the resulting motion result is stored in the system storage unit. This motion instruction can be made private, public or it can be stored in a collection or it can be sent to a server which can allow other players to purchase and/or download the associated motion instruction for their own game. The player also has the ability to make a motion instruction editable by another player or fixed so that players using the motion instruction could use the instruction for its original intent.

Motion instructions also have the ability to be modified by the one or more players and re-associated with other one or more motion results if the one or more players desire. To do this, the player could use a universal motion or audible instruction to open the motion instruction editor. They could then form the motion or audible command so that the system could retrieve the motion instruction and associated motion result from the storage unit, for example. At this point, the player could have the opportunity to either change the motion instruction and overwrite the existing one, delete it or copy it to another motion instruction. The player could also have the ability to modify, remove or change the motion result. Once the player has finished making their changes to the motion instruction, the motion instruction is stored in the game console storage unit.

In order to modify, add to or remove one or more existing motion or audible instructions, either in full or in part, the instruction must first be selected by the player, for example from a list. To do this, the player could review a list of instruction text or images on the screen and select one or more of them by using, for example, a series of one or more motions. This could be done by hand motion, audible command, finger motion, touch screen, using a pen, or any other method. Once the one or more instructions have been selected, the player may choose one or more actions that can be taken upon the one or more instructions. In this case, these could include the modification or removal of one or more full or partial portions of one or more instructions. For example, in the case of the bazooka scenario, the player may want to extend the motion of lifting a bazooka so that the bazooka is immediately fired at an opponent once it has been aimed and is then put away, because the player does not want to carry it around with them. In this case, the player would add the motion of aiming, firing and putting the bazooka away to the already existing instruction which shows the avatar lifting the bazooka when instructed to do so. In this manner, the player would have the ability to save over the existing instruction, create a new copy of one, as well as transmit it, for example, over the network, for other players to use.

The game system of the current invention also provides range flexibility, which allows the user to produce a motion instruction within a range of motions, motion speeds and directions; the system generalizes the motions it detects and associates them with the related motion result in the storage unit. In this manner, the player is not required to produce the motion instruction in the same position or orientation in which the motion instruction was originally created, so there is no reason to memorize the exact position, orientation, speed and range of motion of the original motion instruction. This range flexibility covers the player being, in the case of the football scenario, a left-handed or right-handed thrower, their head being back or cocked in a certain direction, as well as many other positions and motions, so that a large number of potential motions fall into the range flexibility window; however, these are not infinite. That being said, there are specific motions which could be as subtle as a glance or a turn, for example, by the player to “fake” a pass or a pass's direction, etc., and the player and system could be aware of these nuances so that the system can compensate for the differences required to retrieve the associated motion result from the game system's storage unit.
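One possible way to approximate this kind of range flexibility is to normalize a motion before comparing it with the stored instruction. The sketch below is an assumption-laden illustration rather than the patented method: motions are represented as equal-length lists of 2D points, position and size are normalized away, a mirrored copy stands in for left-handed versus right-handed execution, and the `tolerance` value is arbitrary.

```python
import math


def normalize(points):
    """Center the motion on its centroid and scale it to unit size."""
    cx = sum(p[0] for p in points) / len(points)
    cy = sum(p[1] for p in points) / len(points)
    centered = [(x - cx, y - cy) for x, y in points]
    scale = max(math.hypot(x, y) for x, y in centered) or 1.0
    return [(x / scale, y / scale) for x, y in centered]


def mean_distance(a, b):
    """Average point-to-point distance between two equal-length motions."""
    return sum(math.dist(p, q) for p, q in zip(a, b)) / len(a)


def matches(stored, observed, tolerance=0.2):
    """Return True if `observed` falls within the flexibility window of `stored`."""
    if len(stored) != len(observed):
        return False  # this sketch assumes both motions were sampled identically
    reference = normalize(stored)
    candidate = normalize(observed)
    mirrored = [(-x, y) for x, y in candidate]  # opposite-handed execution
    best = min(mean_distance(reference, candidate), mean_distance(reference, mirrored))
    return best <= tolerance
```

Under these assumptions, the same throwing motion performed elsewhere in the room, at a larger scale, or with the other hand still matches, while a clearly different motion does not.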

Restrictions, based on the game system, may also be in place where the player may not have what they want until a certain level has been achieved or a certain amount of virtual money is available to spend. This restriction is dictated by the game author and is tied to the array of potential items, actions, or motions available for the player to choose from. For example, a player may have just started a game and want a super-cannon they could use to overwhelm their opponents. However, the game author has locked this item until the player has achieved a particular level.
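An author-defined restriction of this kind can be sketched as a simple check. The `Item` fields and the rule that both the level and the price condition must hold are assumptions for the example; an actual game author could combine or omit these conditions differently.

```python
from dataclasses import dataclass


@dataclass
class Item:
    """Hypothetical item record with author-defined unlock conditions."""
    name: str
    required_level: int = 0
    price: int = 0


def can_acquire(item: Item, player_level: int, virtual_money: int) -> bool:
    """Return True if the player meets the level and virtual money requirements."""
    return player_level >= item.required_level and virtual_money >= item.price
```

For the super-cannon example, an item created as `Item("super-cannon", required_level=10)` would be refused to a player at level 1 and granted once level 10 is reached.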

The player has the opportunity to add, modify and remove these motion instructions stored or to be stored in the game console or remote server by associating the physical movements with the commands or actions in the game. These commands and motion sequences or audio messages 1704 are stored in the advanced meta-file object format 1705 in the system storage unit, shown in FIG. 13 as 1315, FIG. 18 as either 1807 or 1809, or FIG. 19 within the console 1907, the one or more servers 1907 or the console 1908. Further player motion sequences or audio messages 1704 are interpreted as the associated commands or actions by performing storage unit searches using signatures from the audio or command instructions and searching for comparative data which could result in a match against the given audio or command instruction in the game console or network storage unit.

When the player turns off the game learning mode 1706, either by an audio or motion command or when it automatically turns off, normal gameplay begins 1707.

If the game learning mode 1702 is not turned on 1708 by the one or more players, either by an audio or motion command, normal gameplay begins 1707. If audio or motion instructions are performed during gameplay and a match is not found in the system for the audio or motion command, then the motion or audio command is ignored by the system.

If the one or more audio or motion instructions are intended to have one or more responses by the system console, the game learning mode can be switched on by a given command and can be added to the game system storage unit at any time.

As gameplay progresses, the game software in the game console or received across the network to the game console, as an example only, either presents the one or more players with targets 1709 which can be fired upon or receives commands from the one or more players 1713. These targets are produced by the game software running on the game console. The one or more players can fire at the one or more targets by, as an example, moving their hands back and forth in a shooting motion. This shooting motion is interpreted by the game system as a motion instruction and, taking into consideration the angle and timings of the one or more motions, can hit or miss the targets and show the one or more results to the one or more players on the screen as they “fire” upon the one or more targets.

During the time that the game software presents the one or more targets to the one or more players 1709, the game software tracks the one or more players' positions 1710. If the one or more targets in this example have the ability to fire at the one or more players, the game software reads and analyzes the target type and its capabilities, and “fires” 1711 upon the one or more players using the one or more air cannons, for example, as depicted in FIG. 10 referring to number 1009, and/or other devices shown in FIG. 10, to simulate a gun or cannon, etc. firing on the one or more players in battle. The player, in turn, responds to the one or more target attacks 1712 by moving their hands at the targets in a shooting motion.

In the described example, the game software, stored in either a local or remote game console, either by disk, chip, drive, memory, etc., would be accessed to react to motion commands in a method similar to the following description. As a hypothetical, simplified example, five aircraft could be flying over the one or more players' heads. The aircraft may, for example, appear on the ceiling portion of the screen, as shown in FIG. 5 as 506 or FIG. 9 as 901. The aircraft could be shooting at the one or more players on the “ground” at, for example, 45 degree angles. Since the aircraft are moving at a certain velocity relative to the one or more players' velocities, the angles of each of the one or more players' devices, such as bazookas, aircraft, etc., must be considered in the mathematical calculation necessary to simulate a “hit” either by the approaching aircraft or the one or more players. In addition to the vertical and horizontal angles, the multiple velocities of the one or more players, the velocities and capabilities of the one or more “guns”, including their corresponding firepower and damage capabilities, and the damage to and around units in the area must be considered, for example. In this way, a “hit” made by the one or more players on the approaching aircraft would be achieved if the angle of the aircraft, the aircraft speed, the player speed, the angle of the projectile, and the speed of the one or more projectiles “meet”, for example, at a particular point.
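The idea of a projectile and an aircraft "meeting" at a point can be illustrated with a closest-approach calculation. This is a deliberately simplified sketch and not the calculation used by any actual game software: it works in two dimensions, assumes constant velocities with no gravity or drag, and treats any approach within an assumed `hit_radius` as a hit. All function and parameter names are hypothetical.

```python
import math


def closest_approach(p1, v1, p2, v2):
    """Return (time, distance) of closest approach of two constant-velocity bodies."""
    rx, ry = p2[0] - p1[0], p2[1] - p1[1]   # relative position at t = 0
    vx, vy = v2[0] - v1[0], v2[1] - v1[1]   # relative velocity
    speed_sq = vx * vx + vy * vy
    # Only future times count; identical velocities keep the initial separation.
    t = 0.0 if speed_sq == 0 else max(0.0, -(rx * vx + ry * vy) / speed_sq)
    return t, math.hypot(rx + vx * t, ry + vy * t)


def is_hit(player_pos, launch_angle_deg, projectile_speed,
           aircraft_pos, aircraft_vel, hit_radius=1.0, player_vel=(0.0, 0.0)):
    """Decide whether a projectile fired by the player meets the aircraft."""
    angle = math.radians(launch_angle_deg)
    # The projectile inherits the player's own velocity at launch.
    projectile_vel = (player_vel[0] + projectile_speed * math.cos(angle),
                      player_vel[1] + projectile_speed * math.sin(angle))
    _, distance = closest_approach(player_pos, projectile_vel, aircraft_pos, aircraft_vel)
    return distance <= hit_radius
```

As a usage example, a stationary player firing straight up at speed 10 hits an aircraft that starts 100 units away horizontally at altitude 100 and flies toward the player at speed 10, because both reach the point directly overhead at the same time; firing horizontally in the same situation misses.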

Regardless of how the game software receives the commands from the player 1713, whether by a wired or wireless controller 1714, touch screen commands 1715, audio messages 1716 or motion sequences 1717, the game software attempts to interpret the command(s) as stored or not 1718.

If the command is not stored, it is considered a player move or other response 1720 and the game software displays the results of the actions 1721. For example, if a player is walking and they turn a corner, the avatar on the screen can perform the same motion, but this is simply done by the gameplay system monitoring the player and mimicking the player on the screen. This is not handled in the same way in the system as a player drawing back to throw a virtual football to an open wide receiver. Even though the avatar could walk through a city, for example, and certain three-dimensional graphical models of the city could be generated by the gameplay system, along with the avatar appearing to move, this mimicry does not require the lookup in the gameplay storage unit that is used to determine the motion result of a motion which instigates a new gameplay result, for example, the first time the player, as a quarterback in a game, receives and throws the football. Subsequent plays made by the player in the context of the game are expected to be played as a quarterback until a new motion instruction is received by the system.

Other motions are ignored altogether. As an example, if the player has instructed the avatar to make a particular move which takes, for example, a few minutes, the player has the opportunity to get a drink of water. The motions made by the player to get a drink of water or to rest are not recorded in the system as a gameplay motion and these are, in essence, ignored by the system. In a scenario where a player is playing a football game, for example, the player could snap the ball and throw it to a receiver. Once the ball has been thrown by the quarterback, the players' motions could be ignored by the system as control is now given to the other players which include a potential receiver and potential tacklers. If the receiver catches the ball and gets tackled and the gameplay ends for the given down, the motion of the other players can be ignored. In addition, in this scenario, the receiving player, if real, can be virtually tackled by a player remotely located and connected to the game through a network connection. In the case when this takes place, the player playing as the receiver may still be standing in their room, but their avatar on the screen is lying on the ground with several tacklers on top of him. This picture is shown on the screens of all players (and/or observers of the gameplay).

There is also a point where the game software derives a random outcome and advances play in the direction of that outcome. At this point, all motion made by other real players is ignored by the system and picked up again when a motion instruction or other interactive play segment begins.

There could also be times during gameplay where a slight variation of a “known” move is made by one or more players. In this case, the system may ask the one or more players what they are intending to do and may present the ability for the one or more players to attach a motion result to the suspected motion instruction or to ignore the instruction. If they wish to add the move to the system as a new or appended motion instruction, they may have the ability to do this and it may be stored in the game system storage unit. If the one or more players notify the game system that the move was really an already existing motion instruction, for example, the one or more players may have the opportunity to connect the move to an already existing one or more motion instructions so that the game system may interpret both of the moves as a single motion instruction. In this manner, the system may have the ability to characterize particular motions and when a motion lies outside of the range of these characteristics, the player may have the opportunity to notify the game system of their intent.

For example, a player playing as a quarterback in a football game could be trying to fake a throw to a receiver by dropping the ball behind them before throwing it and then catching it with their other hand and tossing it to another player. Since the real player does not necessarily have a ball, the system could determine that their left hand, for example, is being placed behind their back in a very unusual position. At this point, the game system could determine that the motion is new by receiving the motion data from the camera and motion detector, comparing the motion data with the contents of the system's storage unit, and checking whether the motion data already exists in the system. If the system does not find this set of moves within the storage unit, it could prompt the one or more players on the screen, asking whether this is a special move. If it is, the player could perform many tasks or simply ignore the prompt. For example, the player could agree that this is a new motion instruction. In this case, the player would see the prompt on the screen, for example, and react to it by performing an audio or motion instruction. In this way, the system could, for example, receive the instruction and show a series of one or more menu items on the screen. The one or more menu items could be answered by the one or more players on the screen. In this case, this new move could be given a name, posted to the one or more players' storage units, shared over the network, and stored in a storage unit which could be accessed by other players or by the storage units of another one or more players, such as a team.

Again, in the described example, the motion instruction could be stored and shared by the one or more players publicly or privately among themselves for their team to utilize.

Beyond this, the subsequent motions, such as tossing the ball to the left or the right to another player could be captured and handled accordingly instead of throwing the ball as normal.
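As a non-limiting illustration, the handling of a suspected new motion described above (store it as a new motion instruction, connect it to an existing motion instruction, or ignore it) could be sketched as follows. The class `MotionStore`, the enumeration `Choice`, and the string signatures are hypothetical stand-ins for the game system storage unit and the player's menu answers.

```python
from enum import Enum
from typing import Dict, Optional


class Choice(Enum):
    NEW = "new"        # player declares a new motion instruction
    ALIAS = "alias"    # player says the move is a variation of an existing one
    IGNORE = "ignore"  # player dismisses the prompt


class MotionStore:
    """Toy stand-in for the game system storage unit (assumed structure)."""

    def __init__(self) -> None:
        self.results: Dict[str, str] = {}   # canonical signature -> motion result
        self.aliases: Dict[str, str] = {}   # variant signature -> canonical signature

    def resolve(self, signature: str) -> Optional[str]:
        """Look up a motion result, following an alias if one was recorded."""
        canonical = self.aliases.get(signature, signature)
        return self.results.get(canonical)

    def handle_unknown(self, signature: str, choice: Choice,
                       result: Optional[str] = None,
                       existing: Optional[str] = None) -> Optional[str]:
        """Apply the player's answer to the prompt for an unrecognised motion."""
        if choice is Choice.NEW:
            if result is None:
                raise ValueError("a new motion instruction needs a motion result")
            self.results[signature] = result
        elif choice is Choice.ALIAS:
            if existing not in self.results:
                raise KeyError(existing)
            self.aliases[signature] = existing
        # Choice.IGNORE stores nothing.
        return self.resolve(signature)


if __name__ == "__main__":
    store = MotionStore()
    store.results["overhand_throw"] = "pass_to_receiver"
    print(store.handle_unknown("sidearm_throw", Choice.ALIAS, existing="overhand_throw"))
    print(store.handle_unknown("behind_back_drop", Choice.NEW, result="fake_and_toss"))
```

Sharing a stored instruction with a team or over the network would be an additional step on top of this local store and is not shown.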

If the command is stored 1722 and found by the console software comparing the one or more player audio or motion command signatures to the one or more motion instruction records stored in the system storage unit, the game software retrieves the one or more items or enables the actions' capabilities associated with the stored command 1723 signature which is found in the database (storage unit). The result is presented to the player on the display unit 1721 by either presenting the item associated with the audio or motion instruction or by showing the avatar on the screen producing the movement associated with the audio or motion instruction found in the database record.

If the gameplay is finished 1724, the gameplay ends 1725; otherwise, it continues 1707.
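As a non-limiting illustration, the stored versus not-stored branch described above could be sketched as a simple lookup. The function `interpret`, the `Outcome` record, and the use of plain strings as command signatures are assumptions made for the sketch; the original description does not specify how signatures are represented.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Outcome:
    kind: str    # "stored_action" or "mirror_move"
    detail: str  # the stored action, or the raw movement to mirror


def interpret(signature: str, stored: Dict[str, str]) -> Outcome:
    """Classify a received command as stored (look up its action) or not stored."""
    action = stored.get(signature)
    if action is None:
        # Not stored: treated as a player move that the avatar simply mirrors.
        return Outcome("mirror_move", signature)
    # Stored: retrieve the item or action associated with the signature.
    return Outcome("stored_action", action)


if __name__ == "__main__":
    stored_commands = {"draw_back_arm": "throw_football"}
    print(interpret("draw_back_arm", stored_commands))
    print(interpret("turn_corner", stored_commands))
```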

The processes used to relate the motion instructions to the resulting items or commands in the game system are described in FIG. 18, where a motion instruction is captured by the camera system 1801, which transforms the motion into a motion sequence and sends the motion sequence to the motion detection unit 1802. The motion detection unit 1802 converts the motion sequence into one or more motion packets, transforms the motion packets into edge points, and sends the edge points to the motion logic component 1803. The motion logic component 1803 receives the edge points, forms a database query made up of the edge points, and sends the database query to the database logic component 1804, where the database query is run against the database 1807. The database logic component 1804 receives the query results from the database 1807 and sends the results to the response handler 1805. The response handler 1805, receiving the results of the database query from the database logic component 1804, prompts the player for more information and receives the one or more responses from the player, and/or sends the results of the motion instruction and/or the results of the player prompts to the significance learning module 1806. The significance learning module 1806, receiving the information from the response handler 1805, stores the motion instruction and result in the database 1807.

In addition, each of the nodes described in FIG. 18 could be located in a single node or in multiple nodes, each of these being located either locally within the game system or outside of the game system on a network device, disk, chip, etc. over a wired or wireless connection.
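As a non-limiting illustration, the flow of FIG. 18 could be sketched as a chain of small functions. The description does not state how motion packets or edge points are computed, so the sketch assumes a motion sequence is a list of frames of (x, y) points, a packet is a fixed-size group of frames, and the edge points of a packet are the corners of its bounding box. All function names and data shapes are hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

Point = Tuple[int, int]
Frame = List[Point]
EdgeKey = Tuple[Point, ...]


def to_packets(sequence: List[Frame], size: int = 2) -> List[List[Frame]]:
    """Motion detection unit 1802 (assumed): group frames into motion packets."""
    return [sequence[i:i + size] for i in range(0, len(sequence), size)]


def to_edge_points(packets: List[List[Frame]]) -> EdgeKey:
    """Motion detection unit 1802 (assumed): reduce each packet to two corner points."""
    edges: List[Point] = []
    for packet in packets:
        points = [p for frame in packet for p in frame]
        xs = [p[0] for p in points]
        ys = [p[1] for p in points]
        edges.append((min(xs), min(ys)))
        edges.append((max(xs), max(ys)))
    return tuple(edges)


def process_motion(sequence: List[Frame],
                   database: Dict[EdgeKey, str],
                   prompt_player: Callable[[EdgeKey], Optional[str]]) -> Optional[str]:
    """Run one captured motion sequence through the FIG. 18 stages."""
    query = to_edge_points(to_packets(sequence))   # motion logic 1803 forms the query
    result = database.get(query)                   # database logic 1804 against 1807
    if result is None:                             # response handler 1805
        result = prompt_player(query)
    if result is not None:                         # significance learning module 1806
        database[query] = result
    return result


if __name__ == "__main__":
    db: Dict[EdgeKey, str] = {}
    seq = [[(0, 0), (1, 2)], [(2, 1), (3, 3)], [(4, 4)]]
    print(process_motion(seq, db, lambda key: "throw_football"))
    print(db)
```

On a second pass with the same sequence the result is found in the database and the player is not prompted again.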

At any time, the player may ask the system to store or retrieve additional motion instructions and/or updates from a network 1808 which is connected to a remote database 1809 which may include connections from many other players having the same ability to store and retrieve motion instructions and/or updates. The system can include a set of motion or audio instructions and can be updated from a server based on the universal instruction lists as well as motion instructions for a particular game the player has purchased. These updates can be made by system developers and may also be made by other players. In this manner, motion or audible instructions are not limited to players or system developers. The associated audio or motion instructions could be provided, for example, by professional quarterbacks. These could be uploaded, as an example, to a remote server and made available to players. Motion instructions which may appear to override custom motion instructions that the player has already produced on their system may result in a prompt which the player can answer. These updates can happen when the player first starts the system or in the background so that gameplay is not interrupted. Players can also choose to have the system overwrite any potentially conflicting motion instructions with the updates if they wish.
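As a non-limiting illustration, applying downloaded updates while protecting the player's custom motion instructions could be sketched as below. The function `apply_updates`, its `overwrite` flag, and the `ask` callback standing in for the on-screen prompt are hypothetical names introduced for the sketch.

```python
from typing import Callable, Dict, Optional, Set


def apply_updates(local: Dict[str, str],
                  updates: Dict[str, str],
                  custom: Set[str],
                  overwrite: bool = False,
                  ask: Optional[Callable[[str, str, str], bool]] = None) -> Dict[str, str]:
    """Merge server updates into the local instruction set.

    An update conflicts when it would change an instruction the player
    created themselves. Conflicts are applied only if the player opted in
    to overwriting, or answers the prompt (ask) with True.
    """
    merged = dict(local)
    for signature, result in updates.items():
        conflicts = (signature in custom
                     and signature in merged
                     and merged[signature] != result)
        if conflicts and not overwrite:
            if ask is None or not ask(signature, merged[signature], result):
                continue  # keep the player's custom instruction
        merged[signature] = result
    return merged


if __name__ == "__main__":
    local = {"snap": "hand_off", "spiral": "long_pass"}
    updates = {"snap": "lateral", "hike": "start_play"}
    print(apply_updates(local, updates, custom={"snap"}))
    print(apply_updates(local, updates, custom={"snap"}, overwrite=True))
```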

Likewise, in the same way that motion instructions are continually updated, the models for allowable ranges of motion are continually updated. So as range models improve over use and time, the details of these models are also updated in the player systems to improve the player's experiences. For example, if an improvement is made in a lateral snap where the quarterback can hide the ball for a second and toss it to a receiver, the original models for this may be crude or missing from the system, so that the motion result is not available for the player to choose. An update to the system could then provide the system with the motion result the player wished to associate with the motion instruction in the first place.

Referring now to FIG. 19, gaming system 1900 includes multiple players, represented as the players 1901 and 1902, receiving and sending information to and from the input/output device 1903 connected to the console 1904, which is connected to a screen 1905 and a network 1906 where information can be shared, retrieved or stored at the server 1907.

In addition to the above, multiple players represented as player 1911 and player 1912 send and receive input from the input/output device 1910 connected to a console 1908 and a screen 1909 and a network 1906 where information can be shared, retrieved or stored at the server 1907, as well as interact with multiple players 1901 and 1902 across the network. Likewise, players 1901 and 1902 can interact through the input/output device 1903 with players 1911 and 1912 using their input/output device 1910.

In this scenario, if the players 1901, 1902, 1911 and 1912 are playing together in the same game, the screen interactions and avatars of the associated players, including the motion instructions for multiple players 1901 and 1902, could appear on the screen 1909 across the network for the multiple players 1911 and 1912 to view and interact with, and the avatars of the other players 1911 and 1912, as well as the motion instructions, could appear on the screen 1905 which is viewed by players 1901 and 1902.

Furthermore, in addition to the described scenarios resulting in a collection of one or more motion or audible instruction sets, the player could designate the collection as a playbook. The playbook could belong to a team, real or virtual, for example, and could have private or public characteristics associated with it. The playbook could be made up of motion instructions and audible instructions. The audible instructions could be configured by the player to be heard, for example, by teammates, but not by opponents.

For example, if the team is in a huddle and the quarterback is speaking to the players, the players on the side of the avatar speaking the commands could hear the information, while the opponents might not. The audio level of the quarterback also varies by their loudness level and the direction in which they are speaking. For example, the audio information from the quarterback in the huddle could not be heard by the opposing team members, but the commands screamed by the quarterback on the line of scrimmage could be heard by members of both teams, although they might be muffled by the crowd or because the quarterback is shouting in the opposite direction. The parameters required for the audible commands to be public or private, for example, could be stored with the audible commands so that, when the commands are retrieved, the system would know that only certain speakers for certain players, for example, would play the corresponding sound, or that the volume levels would be different on the corresponding speakers so that they would mimic the player's position, audio level, intent, etc.
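As a non-limiting illustration, storing public or private parameters with an audible command and deriving a per-listener volume could be sketched as follows. The `Audible` record, the cosine-based directional falloff, and the subtraction of a `crowd_noise` level are assumptions chosen for the sketch and are not taken from the original description.

```python
import math
from dataclasses import dataclass


@dataclass
class Audible:
    text: str
    team: str
    private: bool      # True for huddle talk, False for calls at the line
    loudness: float    # 0.0 (silent) to 1.0 (screaming)
    facing_deg: float  # direction in which the speaker is facing


def listener_gain(cmd: Audible, listener_team: str, bearing_deg: float,
                  crowd_noise: float = 0.0) -> float:
    """Volume (0.0 to 1.0) at which a listener's speakers should play cmd.

    bearing_deg is the direction from the speaker to the listener.
    """
    if cmd.private and listener_team != cmd.team:
        return 0.0  # private commands are never played for opponents
    # Smallest angle between where the speaker faces and where the listener is.
    diff = abs((bearing_deg - cmd.facing_deg + 180.0) % 360.0 - 180.0)
    # Assumed falloff: full volume when faced directly, silent directly behind.
    directional = 0.5 * (1.0 + math.cos(math.radians(diff)))
    return max(0.0, cmd.loudness * directional - crowd_noise)


if __name__ == "__main__":
    huddle = Audible("blue 42", team="home", private=True, loudness=0.4, facing_deg=0.0)
    shout = Audible("hut!", team="home", private=False, loudness=1.0, facing_deg=0.0)
    print(listener_gain(huddle, "away", 0.0))
    print(listener_gain(shout, "away", 0.0, crowd_noise=0.3))
```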

Likewise, the same could be stated pertaining to the coaches and their signals to their players, other coaches, etc. Quarterbacks, for example, could hear their own coaches and make out their hand signals, etc., but not those of the opposing coaches.

The present invention also lends itself to advertising around this technology as well as selling virtual seating, using virtual currency or otherwise, where fans could purchase a seat to get a particular angle on the game. The higher the price paid for the seat, the better the angle and audio quality presented to them by the system. Players could also open up private data such as playbooks and audio commands to particular observers if they desire.

Furthermore, the incentive to become an early adopter of the technology of the present invention is large, due to the ability to promote the particular motion information creator's name or brand, so that later adopters have the luxury of making use of the existing motion information that the early adopters created.

Referring now to FIG. 20 and FIG. 21, gaming systems 2000 and 2100 show an example of the multiple physical connections of the present invention, including the base unit 2001 which includes at least one processor 2002 and at least one memory 2003 having at least one learning module 2004 and at least one storage unit 2005, connected to at least one feedback system 2007 having at least one camera 2008, microphone 2009, and optionally one or more motion detectors 2010, speakers 2011 and location units 2012, and at least one display 2006 or, alternatively, a projector 2013 optionally having a lens adapter 2014 or multiple projectors 2013 without a lens adapter 2014. The gaming system 2000 is optionally connected by a connection 2015 to a wired or wireless network 2016 via connector A, which is connected to FIG. 21 using connector A′, which in turn is connected using a connection 2101 to at least one base system 2102 which includes at least one processor 2103 and at least one memory 2104 having at least one learning module 2105 and at least one storage unit 2106, connected to at least one feedback system 2110 having at least one camera 2111, microphone 2112, and optionally one or more motion detectors 2113, speakers 2114 and location units 2115, and at least one display 2107 or, alternatively, a projector 2108 optionally having a lens adapter 2109 or multiple projectors 2108 without a lens adapter 2109.

In one embodiment, a controller-less gaming system comprises a base system including at least one processor and memory, and a learning module, a feedback system including at least one: camera, microphone, and motion detector, wherein the feedback system is communicatively coupled to the base system, a display communicatively coupled to the base system, and a storage unit communicatively coupled to the base system, wherein the feedback system receives input from at least one of the camera, the microphone, and the motion detector, and sends the input to the base system, wherein the base system compares the input to other input in the storage unit and if the comparison produces a non-satisfactory result: the base system chooses a closest result to the input if the closest result is above or equal to a threshold and displays the closest result or the base system chooses a default result to the input if the closest result is below the threshold and displays the default result, and if the closest result or the default result is not an intended result, the base system receives an adjusted input from the feedback system and displays a result of the adjusted input on the display. The input and the other input include at least one of: angles of a body and body part, movement of the body and the body part, direction of the body and the body part, speed of the body and the body part, audio from the body or the body part, biometric information of the body or the body part, items attached to the body or the body part, or items supporting the body or the body part.
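As a non-limiting illustration, choosing between the closest result and the default result could be sketched as below. The embodiment does not define how closeness is scored, so the sketch assumes each input is a tuple of numeric features (for example, an angle and a speed) and scores closeness as 1 / (1 + Euclidean distance); the names `similarity`, `choose_result`, and the default label `"idle"` are hypothetical.

```python
import math
from typing import Dict, Sequence


def similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Assumed closeness score in (0, 1]; 1.0 means identical inputs."""
    return 1.0 / (1.0 + math.dist(a, b))


def choose_result(features: Sequence[float],
                  stored: Dict[str, Sequence[float]],
                  threshold: float = 0.5,
                  default: str = "idle") -> str:
    """Return the closest stored result if it meets the threshold, else the default."""
    best_name, best_score = default, -1.0
    for name, reference in stored.items():
        score = similarity(features, reference)
        if score > best_score:
            best_name, best_score = name, score
    return best_name if best_score >= threshold else default


if __name__ == "__main__":
    stored_inputs = {"throw": (90.0, 2.0), "kick": (10.0, 5.0)}
    print(choose_result((90.0, 2.5), stored_inputs))   # near "throw"
    print(choose_result((50.0, 50.0), stored_inputs))  # nothing close enough
```

If the displayed result is not the intended one, the adjusted input received from the feedback system would be passed through the same selection again.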

The base system stores the adjusted input result as another one of the closest result in the storage unit, displays the closest result when it receives another adjusted input result without an adjusted input from the feedback system, displays the closest result and the adjusted input result and provides an intended action associated with the closest result and an intended action associated with the adjusted input result. The base system is communicatively coupled to at least one of: a plurality of network connections, a remote storage unit, a projection system, a projection system using a lens adapter, a feedback system, a feedback system using one or a plurality of speakers, a feedback system having a location unit, a base system, a display, a curved display, a curved display with an overhead component, a wired display, a wireless display, a local display, a remote display, a wired connection, a wireless connection, an air cannon or a storage unit.

At least one person (which may be a player or a spectator or both at varying times during the game) is present locally with the base system or present remotely from the base system.

The current invention provides a number of solutions including: A controller-less gaming system, comprising: a base system including at least one processor and memory, and a learning module; a feedback system including at least one: camera, microphone, and motion detector, wherein the feedback system is communicatively coupled to the base system; a display communicatively coupled to the base system; and a storage unit communicatively coupled to the base system; wherein the feedback system receives input from at least one of the camera, the microphone, and the motion detector, and sends the input to the base system; wherein the base system compares the input to other input in the storage unit and if the comparison produces a non-satisfactory result: the base system chooses a closest result to the input if the closest result is above or equal to a threshold and displays the closest result; or the base system chooses a default result to the input if the closest result is below the threshold and displays the default result; and if the closest result or the default result is not an intended result, the base system receives an adjusted input from the feedback system and displays a result of the adjusted input on the display. 
The base system stores the adjusted input result as another one of the closest result in the storage unit, the base system displays the closest result when it receives another adjusted input result without an adjusted input from the feedback system, the base system displays the closest result and the adjusted input result and provides an intended action associated with the closest result and an intended action associated with the adjusted input result, the base system is communicatively coupled to at least one of: a plurality of network connections; a remote storage unit; a projection system; a projection system using a lens adapter; a feedback system; a feedback system using one or a plurality of speakers; a feedback system having a location unit; a base system; a display; a curved display; a curved display with an overhead component; a wired display; a wireless display; a local display; a remote display; a wired connection; a wireless connection; an air cannon; or a storage unit. At least one person is: present locally with the base system; or present remotely from the base system. The input and the other input include at least one of: angles of a body or a body part; movement of the body or the body part; direction of the body or the body part; speed of the body or the body part; audio from the body or the body part; biometric information of the body or the body part; items attached to the body or the body part; or items supporting the body or the body part.

Claims

- A method, comprising: receiving by a model generation component an indicator of a display type; selecting by the model generation component based on the indicator, a multi-facet game process option; generating image frames for a content data projection by the multi-facet game process option; passing the image frames to a projector; transmitting light and image data corresponding to the image frames through a lens adapter fitted onto the projector; and projecting the content data from a plurality of facets connected to the lens adapter onto a receiving screen at a plurality of angles to display content data.

- The method of claim 1, wherein transmitting the light and image data through the lens adapter comprises transmitting the light and image data through an optical lens.

- The method of claim 1, wherein transmitting the light and image data onto the plurality of facets comprises transmitting the light and image data onto eight facets.

- The method of claim 1, wherein transmitting the light and image data onto the plurality of facets comprises transmitting the light and image data onto facets constructed of at least one of glass, mirrors, plastic and metal.

- The method of claim 1, wherein the projecting the content data onto a receiving screen at a plurality of angles to display content data viewable by a user comprises projecting the content data onto a plurality of viewing display devices which together produce a viewing angle arc extending between 120 degrees and 180 degrees.

- The method of claim 1, wherein the projected display content comprises video game images displayed to a user actively playing a video game.

- The method of claim 6, further comprising: receiving feedback from the user via the user's game playing motions; and changing the display content data based on the feedback.

- An apparatus, comprising: a model generation component configured to: receive an indicator of a display type; select, based on the indicator, a multi-facet game process option; generate image frames for a content data projection by the multi-facet game process option; and pass the image frames to a projector configured to project a video signal; a projector video adapter lens connected to the projector comprising a lens connector fitted onto the projector; an upper facet set comprising a plurality of upper facets; and a lower facet set comprising a plurality of lower facets, wherein the upper facet and the lower facet are configured to emit the video signal; wherein the projector is configured to transmit light and image data corresponding to the image frames through the lens adapter, and transmit the light and image data onto at least one of the upper facet set and the lower facet set and thence onto a screen at a plurality of angles to display content data.

- The apparatus of claim 8, wherein the lens adapter comprises an optical lens.

- The apparatus of claim 8, wherein the lower facet set and the upper facet set together include eight facets.

- The apparatus of claim 8, wherein the lower facet set and the upper facet set are constructed of at least one of glass, mirrors, plastic and metal.

- The apparatus of claim 8, wherein the projector produces a view angle arc that extends between 120 degrees and 180 degrees.

- The apparatus of claim 8, wherein the projected display content comprises video game images displayed to a user that plays the video game.

- The apparatus of claim 8, further comprising a receiver configured to receive feedback from a user via the user's game play motions, and change the display content data based on the feedback.

- A non-transitory computer readable storage medium comprising instructions that when executed cause a processor to perform: receiving an indicator of a display type; selecting, based on the indicator, a multi-facet game process option; generating image frames for a content data projection by a multi-facet game process option; passing the image frames to a projector; transmitting light and image data corresponding to the image frames through a lens adapter fitted onto the projector; and projecting the content data from the plurality of facets connected to the lens adapter onto a receiving screen at a plurality of angles to display content data.

- The non-transitory computer readable storage medium of claim 15, wherein transmitting the light and image data through the lens adapter comprises transmitting the light and image data through an optical lens.

- The non-transitory computer readable storage medium of claim 15, wherein transmitting the light and image data onto the plurality of facets comprises transmitting the light and image data onto eight facets.

- The non-transitory computer readable storage medium of claim 15, wherein transmitting the light and image data onto the plurality of facets comprises transmitting the light and image data onto facets constructed of at least one of glass, mirrors, plastic and metal.

- The non-transitory computer readable storage medium of claim 15, wherein the projecting the content data onto a receiving screen at a plurality of angles to display content data viewable by a user comprises projecting the content data onto a plurality of viewing display devices which together produce a viewing angle arc extending between 120 degrees and 180 degrees.

- The non-transitory computer readable storage medium of claim 15, wherein the processor is further configured to perform: receiving feedback from a user via the user's game playing motions; and changing the display content data based on the feedback.
