U.S. Pat. No. 9,300,612

MANAGING INTERACTIONS IN A VIRTUAL WORLD ENVIRONMENT

Assignee: International Business Machines Corporation

Issue Date: January 15, 2009

Abstract

Methods and apparatus associate a computed trust level with avatars that interact with one another in a simulated environment. The avatars may represent legitimate users of the virtual world or spammers. System monitoring of each avatar provides the ability to recognize potential spammers and to create an alternative indication of which avatars are spammers. A user index may be used to store data describing attributes of each avatar for analysis by programs stored in memory.

Description

DETAILED DESCRIPTION OF THE PREFERRED EMBODIMENTS

As stated above, a virtual world is a simulated environment that users may inhabit and in which they interact with one another via avatars. The avatars may represent legitimate users of the virtual world or spammers. A “spammer” generally refers to an avatar controlled by a user (or computer program) in a manner that is disruptive to other users of the virtual environment. Most commonly, a spamming avatar is used to deliver unsolicited commercial messages to users of the virtual environment. In real life, people can judge one another based on actual attributes that often cannot be readily disguised, making it possible to evaluate trustworthiness with some level of confidence. Applying a trust level to avatars makes it possible to gauge trustworthiness in the virtual world as well.

The trust level predicts the probability that an avatar is a spammer and thereby enables a legitimate user to distinguish the avatar of another legitimate user from the avatar of a spammer. A ranking algorithm may assign the trust level based on the actions, conversations, and/or dialogue of the avatar being monitored. In practice, a user index may store data describing attributes of each avatar for analysis by programs that are stored in memory and that execute the ranking algorithm. Monitoring avatars in this way makes it possible to identify and police the actions of spammers within the virtual world.

In the following, reference is made to embodiments of the invention. However, it should be understood that the invention is not limited to specific described embodiments. Instead, any combination of the following features and elements, whether related to different embodiments or not, is contemplated to implement and practice the invention. Furthermore, in various embodiments the invention provides numerous advantages over the prior art. However, although embodiments of the invention may achieve advantages over other possible solutions and/or over the prior art, whether or not a particular advantage is achieved by a given embodiment is not limiting of the invention. Thus, the following aspects, features, embodiments and advantages are merely illustrative and are not considered elements or limitations of the appended claims except where explicitly recited in a claim(s). Likewise, reference to “the invention” shall not be construed as a generalization of any inventive subject matter disclosed herein and shall not be considered to be an element or limitation of the appended claims except where explicitly recited in a claim(s).

One embodiment of the invention is implemented as a program product for use with a computer system. The program(s) of the program product define the functions of the embodiments (including the methods described herein) and can be contained on a variety of computer-readable storage media. Illustrative computer-readable storage media include, but are not limited to: (i) non-writable storage media (e.g., read-only memory devices within a computer, such as CD-ROM disks readable by a CD-ROM drive) on which information is permanently stored; (ii) writable storage media (e.g., floppy disks within a diskette drive or hard-disk drive) on which alterable information is stored. Such computer-readable storage media, when carrying computer-readable instructions that direct the functions of the present invention, are embodiments of the present invention. Other media include communications media through which information is conveyed to a computer, such as through a computer or telephone network, including wireless communications networks. The latter embodiment specifically includes transmitting information to/from the Internet and other networks. Such communications media, when carrying computer-readable instructions that direct the functions of the present invention, are embodiments of the present invention. Broadly, computer-readable storage media and communications media may be referred to herein as computer-readable media.

In general, the routines executed to implement the embodiments of the invention may be part of an operating system or a specific application, component, program, module, object, or sequence of instructions. The computer program of the present invention typically comprises a multitude of instructions that will be translated by the native computer into a machine-readable format and hence executable instructions. Also, programs comprise variables and data structures that either reside locally to the program or are found in memory or on storage devices. In addition, various programs described hereinafter may be identified based upon the application for which they are implemented in a specific embodiment of the invention. However, it should be appreciated that any particular program nomenclature that follows is used merely for convenience, and thus the invention should not be limited to use solely in any specific application identified and/or implied by such nomenclature.

FIG. 1 shows a block diagram that illustrates a client-server view of computing environment 100, for one embodiment. As shown, the computing environment 100 includes client computers 110, network 115 and server system 120. In one embodiment, the computer systems illustrated in FIG. 1 are included to be representative of existing computer systems, e.g., desktop computers, server computers, laptop computers, and tablet computers. The computing environment 100 illustrated in FIG. 1, however, is merely an example of one computing environment. Embodiments of the invention may be implemented using other environments, regardless of whether the computer systems are complex multi-user computing systems, such as a cluster of individual computers connected by a high-speed network, single-user workstations, or network appliances lacking non-volatile storage. Further, the software applications illustrated in FIG. 1 and described herein may be implemented using computer software applications executing on existing computer systems, e.g., desktop computers, server computers, laptop computers, and tablet computers. However, the software applications described herein are not limited to any currently existing computing environment or programming language, and may be adapted to take advantage of new computing systems as they become available.

In one embodiment, the server system 120 includes a central processing unit (CPU) 122, which obtains instructions and data via a bus 121 from memory 126 and storage 124. The processor 122 could be any processor adapted to support the methods of the invention. The memory 126 is any memory sufficiently large to hold the necessary programs and data structures. The memory 126 can be one or a combination of memory devices, including Random Access Memory and nonvolatile or backup memory (e.g., programmable or Flash memories, read-only memories, etc.). In addition, the memory 126 and storage 124 may be considered to include memory physically located elsewhere in the server system 120, for example, on another computer coupled to the server system 120 via the bus 121. The server system 120 may be operably connected to the network 115, which generally represents any kind of data communications network. Accordingly, the network 115 may represent both local and wide area networks, including the Internet.

As shown, the memory 126 includes virtual world 130. In one embodiment, the virtual world 130 may be a software application that accepts connections from multiple clients, allowing users to explore and interact with an immersive virtual environment by controlling the actions of an avatar. Illustratively, the virtual world 130 includes virtual objects 132. The virtual objects 132 represent the content present within the environment provided by the virtual world 130, including both elements of the “world” itself and elements controlled by a given user. Illustratively, the storage 124 includes an object index 125, a user index 105, and interaction records 106. The object index 125 may store data describing characteristics of the virtual objects 132 included in the virtual world 130 and is accessed to perform searches of the virtual objects 132. In one embodiment, the user index 105 stores records describing the avatars, such as each avatar's trust ranking as determined from the interaction records 106, which hold data about interactions between the avatars. In one embodiment, the system assigns the trust ranking on an arbitrary scale based on the analysis described further herein.
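The storage layout described above can be pictured as a few plain data structures. This is a minimal Python sketch under stated assumptions: the class names `UserRecord`, `InteractionRecord` and `Storage`, and all of their fields, are hypothetical, since the description does not specify a schema for the user index 105 or the interaction records 106.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class InteractionRecord:
    """One monitored exchange between two avatars (hypothetical schema)."""
    speaker: str          # avatar that initiated the exchange
    listener: str         # avatar that was addressed
    speaker_words: int    # words sent by the speaker
    listener_words: int   # words sent back by the listener


@dataclass
class UserRecord:
    """One entry in the user index (hypothetical schema)."""
    avatar_id: str
    trust_rank: float     # position on the system's arbitrary trust scale
    active: bool = True   # False once the account has been disabled


@dataclass
class Storage:
    """Stand-in for storage 124: user index 105 plus interaction records 106."""
    user_index: Dict[str, UserRecord] = field(default_factory=dict)
    interaction_records: List[InteractionRecord] = field(default_factory=list)

    def register(self, avatar_id: str, initial_rank: float) -> None:
        """Create a user-index entry when an account is opened."""
        self.user_index[avatar_id] = UserRecord(avatar_id, initial_rank)

    def interactions_started_by(self, avatar_id: str) -> List[InteractionRecord]:
        """All recorded exchanges that the given avatar initiated."""
        return [r for r in self.interaction_records if r.speaker == avatar_id]
```

Keeping raw interactions separate from the index lets the ranking program recompute a rank from the records whenever its criteria change.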

As shown, each client computer 110 includes a CPU 102, which obtains instructions and data via a bus 111 from client memory 107 and client storage 104. The CPU 102 is a programmable logic device that performs all the instruction, logic, and mathematical processing in a computer. The client storage 104 stores application programs and data for use by the client computer 110. The client storage 104 includes, for example, hard-disk drives, flash memory devices, and optical media. The client computer 110 is operably connected to the network 115.

The client memory 107 includes an operating system (OS) 108 and a client application 109. The operating system 108 is the software used for managing the operation of the client computer 110. Examples of the OS 108 include UNIX, a version of the Microsoft Windows® operating system, and distributions of the Linux® operating system. (Note, Linux is a trademark of Linus Torvalds in the United States and other countries.)

In one embodiment, the client application 109 provides a software program that allows a user to connect to the virtual world 130 and, once connected, to explore and interact with the virtual world 130. Further, the client application 109 may be configured to generate and display a visual representation of the user within the immersive environment, generally referred to as the avatar. The avatar of the user is generally visible to other users in the virtual world, and the user may view avatars representing the other users. The client application 109 may also be configured to generate and display the immersive environment to the user and to transmit the user's desired actions to the virtual world 130 on the server 120. Such a display may include content from the virtual world determined from the user's line of sight at any given time. For the user, the display may include the avatar of that user or may be a camera eye where the user sees the virtual world through the eyes of the avatar representing this user.

For instance, using the example illustrated in FIG. 2, the virtual objects 132 may include a box 250, a store 220, and a library 210. More specifically, FIG. 2 illustrates a user display 200 presenting a user with a virtual world, according to one embodiment. In this example, the user is represented by a first avatar 260, and another user is represented by a second avatar 270. Within the virtual world 130, avatars can interact with other avatars. For example, the user with the first avatar 260 can click on the second avatar 270 to start an instant message conversation with the other user associated with the second avatar 270. The user may interact with elements displayed in the user display 200. For example, the user may interact with the box 250 by picking it up and opening it. The user may also interact with a kiosk 280 by operating controls built into the kiosk 280 and requesting information. The user may also interact with a billboard 240 by looking at it (i.e., by positioning the line of sight directly towards the billboard 240). Additionally, the user may interact with larger elements of the virtual world. For example, the user may be able to enter the store 220, the office 230, or the library 210 and explore the content available at these locations.

The user may view the virtual world using a display device 140, such as an LCD or CRT monitor display, and interact with the client application 109 using input devices 150 (e.g., a keyboard and a mouse). Further, in one embodiment, the user may interact with the client application 109 and the virtual world 130 using a variety of virtual reality interaction devices 160. For example, the user may don a set of virtual reality goggles that have a screen display for each lens. Further, the goggles can be equipped with motion sensors that cause the view of the virtual world presented to the user to move based on the head movements of the individual. As another example, the user can don a pair of gloves configured to translate motion and movement of the user's hands into avatar movements within the virtual reality environment. Of course, embodiments of the invention are not limited to these examples and one of ordinary skill in the art will readily recognize that the invention may be adapted for use with a variety of devices configured to present the virtual world to the user and to translate movement/motion or other actions of the user into actions performed by the avatar representing that user within the virtual world 130.

FIG. 3 illustrates a schematic application of the trust ranking, which, as previously mentioned, provides an indication of an otherwise unrecognizable characteristic of the first avatar 260, the second avatar 270, and a third avatar 300. In one embodiment, this characteristic may represent the degree of trust that the user of the first avatar should place in communications with the second avatar. Doing so may help to identify potential spammers. In operation, the system 120 stores account information for the first avatar 260, second avatar 270 and third avatar 300 in the user index 105 once each user opens an account corresponding to a respective one of the avatars 260, 270, 300. A trust ranking algorithm 306 executed by the system 120 takes data from the interaction records 106 that relate to interactions between the avatars 260, 270, 300 (and other avatars) correlated within the user index 105, and computes first, second and third trust ranks 360, 370, 301 applied respectively to the first, second and third avatars 260, 270, 300. That is, the trust ranking algorithm 306 evaluates interactions between the avatars, including the actions in which a given avatar participates, to assign a trust ranking to each avatar. In one embodiment, users may rely on the trust ranking assigned to an avatar they interact with to determine whether a reply is worthwhile or whether information being provided is accurate. Examples of criteria used by the trust ranking algorithm 306 include conversational content 308, interaction level 310, dialogue repetition 312 and peer feedback 314, as explained in greater detail below.
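The text names four criteria for the trust ranking algorithm 306 but does not state how they are combined. One plausible reading, sketched here in Python purely as an assumption, is a weighted sum of per-criterion scores, where a negative score points toward a spammer and a positive score toward a legitimate user. The weights in `CRITERIA_WEIGHTS` and the function `combine_criteria` are hypothetical.

```python
from typing import Dict

# Hypothetical weights for the four criteria named in FIG. 3; none are given in the text.
CRITERIA_WEIGHTS: Dict[str, float] = {
    "conversational_content": 1.0,  # criterion 308
    "interaction_level": 1.0,       # criterion 310
    "dialogue_repetition": 1.0,     # criterion 312
    "peer_feedback": 2.0,           # criterion 314, weighted higher here by assumption
}


def combine_criteria(scores: Dict[str, float]) -> float:
    """Combine per-criterion scores in [-1, 1] into one signed trust-rank change.

    Negative scores suggest spammer-like behavior; positive scores suggest a
    legitimate user. Criteria absent from `scores` contribute nothing.
    """
    unknown = set(scores) - set(CRITERIA_WEIGHTS)
    if unknown:
        raise KeyError(f"unknown criteria: {sorted(unknown)}")
    return sum(CRITERIA_WEIGHTS[name] * value for name, value in scores.items())
```

Rejecting unknown criterion names makes a misspelled key fail loudly instead of silently contributing zero.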

In one embodiment, the trust ranking algorithm 306 assigns an initial trust rank to each new user account registered with the system. Each user may be assigned (or may generate) an account to give that user a presence within the virtual world. Subsequently, as the user causes their avatar to interact with the virtual world, the trust rank may increase or decrease from the initial trust rank based on an iterative analysis of the criteria used by the trust ranking algorithm 306. In this example, an increase in the trust rank corresponds to higher trustworthiness for an avatar and a lower likelihood of that avatar being a spammer; the correlation could equally be reversed, and the values given are relative and for explanation purposes only. Illustratively, the first avatar 260 is shown with a first trust rank 360 of ninety. Assume that this value represents an established user with a prior history indicating that the user is legitimate. In contrast, the second avatar 270 lacks any data within the interaction records 106 and hence has a second trust rank 370 of, for example, twenty-five, unchanged from the initial trust rank. Further, the user of the third avatar 300 is a spammer, identified as such by a trust rank lowered from the initial trust rank (twenty-five) to the third trust rank 301 of five.
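The iterative adjustment from an initial rank can be illustrated with the example values above: an initial rank of twenty-five that may rise toward ninety for an established user or fall to five for a spammer. In this Python sketch the 0 to 100 bounds and the helper names `update_rank` and `replay` are assumptions; the text only calls the scale arbitrary.

```python
from typing import Iterable

INITIAL_TRUST_RANK = 25.0        # initial rank used in the FIG. 3 example
MIN_RANK, MAX_RANK = 0.0, 100.0  # hypothetical bounds of the "arbitrary scale"


def update_rank(rank: float, adjustment: float) -> float:
    """Apply one adjustment and clamp the result to the assumed scale."""
    return max(MIN_RANK, min(MAX_RANK, rank + adjustment))


def replay(adjustments: Iterable[float], start: float = INITIAL_TRUST_RANK) -> float:
    """Recompute a rank by applying each adjustment in order, starting from `start`."""
    rank = start
    for adjustment in adjustments:
        rank = update_rank(rank, adjustment)
    return rank
```

With no recorded interactions, as for the second avatar 270, `replay([])` simply returns the initial rank of twenty-five.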

For some embodiments, the system 120 may disable the account for the third avatar 300 upon the third trust rank 301 reaching a threshold value selected for suspected spammers. For example, assume the threshold value is set to ten. In that case, the accounts for the first and second avatars 260, 270, whose first and second trust ranks 360, 370 are above the threshold value, remain active in the user index 105, as denoted by check marks. In contrast, the “X” notation for the third avatar 300 in the user index 105 reflects that this account has been disabled, preventing the third avatar 300 from appearing in the user display 200 shown in FIG. 2. In one embodiment, the user of the third avatar 300 may need to follow a process to reactivate the account before re-entering the virtual world.
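The disabling rule can be sketched as follows, reproducing the FIG. 3 example with a threshold of ten. The `Account` class and the functions `enforce_threshold` and `visible_avatars` are hypothetical, and treating "reaching" the threshold as a rank at or below it is an assumption.

```python
from dataclasses import dataclass
from typing import List

SPAM_THRESHOLD = 10.0  # threshold value from the example above


@dataclass
class Account:
    avatar_id: str
    trust_rank: float
    active: bool = True  # the check mark in FIG. 3; False corresponds to the "X"


def enforce_threshold(accounts: List[Account], threshold: float = SPAM_THRESHOLD) -> None:
    """Disable every account whose rank has fallen to the threshold or below."""
    for account in accounts:
        if account.trust_rank <= threshold:
            account.active = False


def visible_avatars(accounts: List[Account]) -> List[str]:
    """Only avatars with active accounts are rendered in the user display."""
    return [a.avatar_id for a in accounts if a.active]
```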

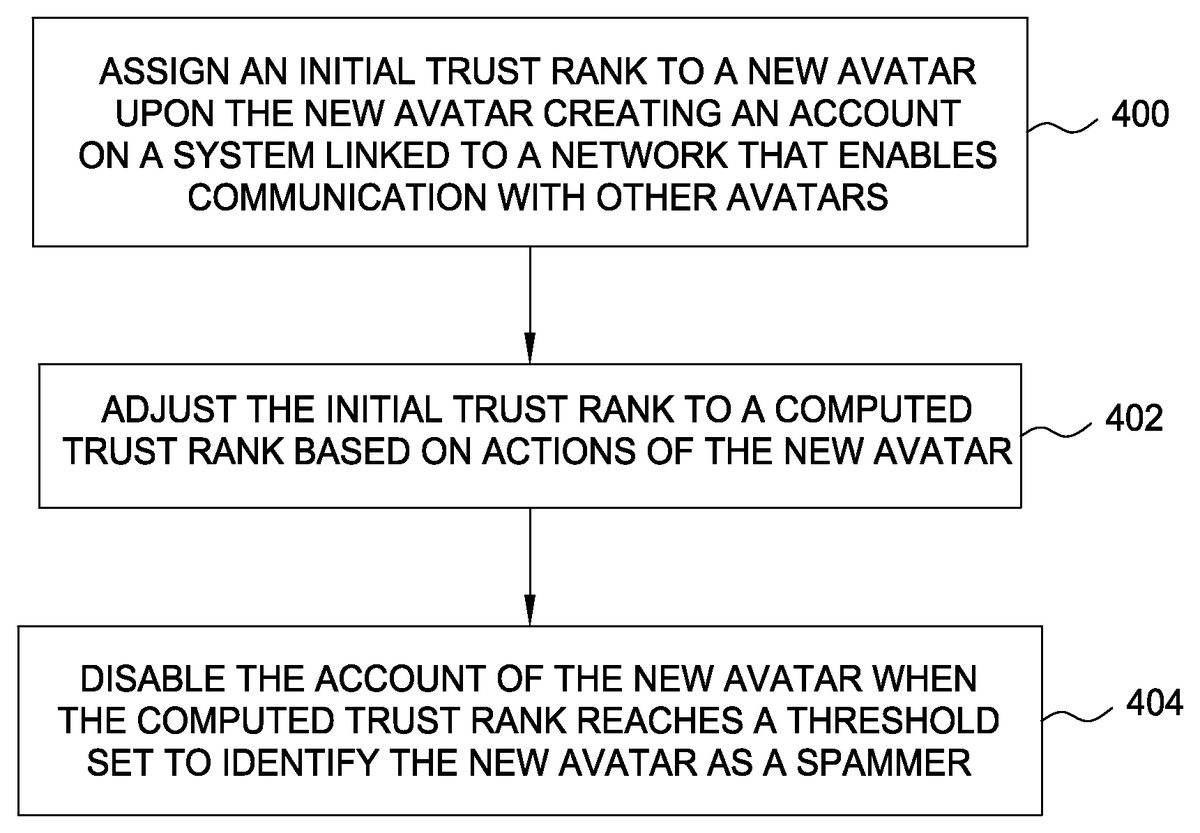

FIG. 4 shows a flow diagram illustrating a method for monitoring avatars within a virtual environment to identify spammers, according to one embodiment of the invention. At step 400, an initial trust rank may be assigned to a new avatar when a user creates an account on a system linked to a network that enables communication with other avatars (i.e., when a user creates a new account, or new avatar, used to establish a presence within a virtual world). Evaluation step 402 includes adjusting the initial trust rank to a computed trust rank based on actions of the new avatar. The adjusting may take place over an extended monitoring period and incorporate multiple actions, so that the computed trust rank is iteratively updated. At identification step 404, the account of the new avatar is disabled when the trust rank reaches a threshold set to identify the new avatar as a spammer. The adjusting is performed such that, if the new avatar created at step 400 represents a legitimate user, the trust rank should not reach the threshold in identification step 404.
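Steps 400 through 404 can be combined into a single monitor object. This is a Python sketch under assumptions: `TrustMonitor`, its methods, and the numeric defaults are hypothetical, and each observed action is assumed to have already been scored as a signed adjustment.

```python
from typing import Dict, Set


class TrustMonitor:
    """Hypothetical sketch of the FIG. 4 method (steps 400, 402 and 404)."""

    def __init__(self, initial_rank: float = 25.0, threshold: float = 10.0) -> None:
        self.initial_rank = initial_rank
        self.threshold = threshold
        self.ranks: Dict[str, float] = {}
        self.disabled: Set[str] = set()

    def create_account(self, avatar_id: str) -> None:
        # Step 400: a new account (new avatar) receives the initial trust rank.
        self.ranks[avatar_id] = self.initial_rank

    def observe(self, avatar_id: str, adjustment: float) -> None:
        # Step 402: adjust the rank for each action seen during monitoring.
        if avatar_id in self.disabled:
            return  # a disabled account is no longer adjusted
        self.ranks[avatar_id] += adjustment
        # Step 404: disable the account once the rank reaches the threshold.
        if self.ranks[avatar_id] <= self.threshold:
            self.disabled.add(avatar_id)
```

A legitimate avatar whose adjustments are mostly positive never reaches the threshold, which matches the intent stated for identification step 404.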

In some embodiments, users may set a threshold such that avatars with trust ranks outside the threshold cannot communicate with the avatars of the users who set it. This threshold can be a global setting or can vary based on the location of the avatars within the virtual world. Further, limitations may allow a new avatar to converse only with avatars having similar trust ranks, unless those avatars accept a request to chat or are in buddy lists. These limitations may ensure that new avatars cannot spam the avatars of more established users before being detected, while giving new avatars probationary abilities sufficient for the interactions that the trust ranking algorithm 306 needs to analyze in order to improve their trust rank if the new avatar is a legitimate user.
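The recipient-set threshold and the probationary limits for new avatars can be expressed as a single permission check. In this Python sketch, the `Avatar` fields, `PROBATION_RANK`, `SIMILAR_BAND` and `may_message` are all hypothetical names and values; the text does not say how "similar" trust ranks are measured.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

PROBATION_RANK = 30.0  # hypothetical: senders ranked below this are treated as new
SIMILAR_BAND = 15.0    # hypothetical: largest rank gap that still counts as "similar"


@dataclass
class Avatar:
    avatar_id: str
    trust_rank: float
    min_sender_rank: float = 0.0  # global threshold chosen by this avatar's user
    region_thresholds: Dict[str, float] = field(default_factory=dict)  # per-location overrides
    buddies: Set[str] = field(default_factory=set)


def may_message(sender: Avatar, recipient: Avatar, region: str,
                chat_accepted: bool = False) -> bool:
    """Return True if `sender` may start a conversation with `recipient` in `region`."""
    # Recipient's threshold for this location, falling back to the global setting.
    limit = recipient.region_thresholds.get(region, recipient.min_sender_rank)
    if sender.trust_rank < limit:
        return False
    # Probationary rule: new avatars reach only similarly ranked avatars,
    # unless the recipient accepted a chat request or lists the sender as a buddy.
    if sender.trust_rank < PROBATION_RANK:
        exempt = chat_accepted or sender.avatar_id in recipient.buddies
        if not exempt and abs(sender.trust_rank - recipient.trust_rank) > SIMILAR_BAND:
            return False
    return True
```

Checking the recipient's own threshold first means an accepted chat request cannot override a user who has opted out of low-ranked contacts; that ordering is a design choice of this sketch.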

For some embodiments, the trust ranking of avatars is displayed in the user display 200 shown in FIG. 2 next to the respective avatars, so that a user may know the trust ranking of the avatars when making decisions about them. If the user is the first avatar 260, then the first trust rank 360 need not be displayed. However, displaying the second trust rank 370 together with the second avatar 270 in the user display 200 may inform the user that the second avatar 270 is a new user who perhaps should not yet be trusted. The second trust rank 370 may always be visible or may become visible only upon viewing, clicking, or attempting to interact with the second avatar 270.

FIG. 5 illustrates an exemplary trust ranking assessment process that, with reference to FIG. 3, further explains the use of the conversational content 308, interaction level 310, dialogue repetition 312 and peer feedback 314 as criteria for the trust ranking algorithm 306. Setup step 500 includes providing a system linked to a network that enables communication between first and second avatars. In recording step 502, the actions and/or dialogue of the first avatar are monitored, leading to a first inquiry 511 that determines whether the first avatar is interacting with the second avatar. If the answer to the first inquiry 511 is no, monitoring continues until the first avatar interacts with the second avatar.

In one embodiment, second through sixth inquiries 512-516 parse the interaction detected by a yes answer to the first inquiry 511. As worded, a no answer to one of the second through sixth inquiries 512-516 results in assigning an adjustment value indicative of a legitimate user in favoring step 504, whereas a yes answer to one of the inquiries 512-516 results in assigning an adjustment value indicative of a spammer in disfavoring step 506. Inquiries other than the second through sixth inquiries 512-516 may also be suitable for assigning the adjustment values, which may also be assigned without using all of the inquiries 512-516. Since each of the second through sixth inquiries 512-516 may have an independent result, the favoring and disfavoring steps 504, 506 may both be present and represent a composite of the answers to the inquiries 512-516. For any of the inquiries 512-516, the answers, as explained further herein, may be graded yes or no responses rather than a binary yes or no, to facilitate assigning the adjustment values.
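Because each inquiry can return a graded answer, the favoring and disfavoring steps can both contribute to one composite adjustment. A minimal Python sketch, assuming each grade is the strength of a "yes" between 0 (a firm no) and 1 (a firm yes); the step sizes and the name `adjustment_from_grades` are hypothetical.

```python
from typing import Dict


def adjustment_from_grades(grades: Dict[str, float],
                           favor_step: float = 1.0,
                           disfavor_step: float = 5.0) -> float:
    """Composite of favoring step 504 and disfavoring step 506.

    `grades` maps an inquiry (e.g. "512") to the strength of its "yes" answer
    in [0, 1]. The no-part of each grade favors the avatar and the yes-part
    disfavors it, so a single graded answer can contribute to both steps.
    """
    for name, grade in grades.items():
        if not 0.0 <= grade <= 1.0:
            raise ValueError(f"grade for inquiry {name} out of range: {grade}")
    favoring = sum(favor_step * (1.0 - g) for g in grades.values())
    disfavoring = sum(disfavor_step * g for g in grades.values())
    return favoring - disfavoring
```

Making the disfavoring step larger than the favoring step encodes an assumption that one clear spam signal should outweigh several unremarkable answers.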

In evaluation step 508, the trust ranking assigned to the first avatar may change based on the adjustment values. The evaluation step 508 thereby computes the trust ranking to provide an indication of a quality of the first avatar that is not otherwise detectable, e.g., a measure of trustworthiness. The assessment process may return to the recording step 502 to enable continuous updating of the trust ranking in the evaluation step 508.
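The loop from recording step 502 through evaluation step 508 can be sketched as follows. Assumptions: each monitored event is either the avatar the first avatar interacted with or `None` when no interaction was seen, and each inquiry is a caller-supplied function returning a grade in [0, 1]; `assess`, `Inquiry` and `STEP` are hypothetical names.

```python
from typing import Callable, Iterable, List, Optional

Inquiry = Callable[[str, str], float]  # (first avatar, second avatar) -> "yes" strength in [0, 1]
STEP = 2.0                             # hypothetical size of a full favoring/disfavoring step


def assess(first: str, events: Iterable[Optional[str]],
           inquiries: List[Inquiry], rank: float) -> float:
    """Run the FIG. 5 loop over a stream of monitored events and return the new rank."""
    for other in events:           # recording step 502
        if other is None:          # first inquiry 511 answered "no": keep monitoring
            continue
        for inquiry in inquiries:  # inquiries 512-516
            grade = inquiry(first, other)
            # Evaluation step 508: grade 0 adds STEP, grade 1 subtracts STEP, 0.5 is neutral.
            rank += STEP * (1.0 - 2.0 * grade)
    return rank
```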

In one embodiment, regarding the interaction level 310, the second inquiry 512 determines whether a word ratio (defined as the number of words communicated by the first avatar versus the number of words communicated by the second avatar) is weighted toward the first avatar. If the first avatar does all the talking in a conversation and then the conversation ends, this creates a much stronger yes answer to the second inquiry 512 than, for example, when the first avatar has ten more words in a one-hundred-word conversation, or ten percent more words. The stronger yes results in a relatively greater adjustment value in the disfavoring step 506 than the latter situation, which may result in no adjustment. In other words, equal or nearly balanced interaction between the first avatar and the second avatar typically means that both parties are actively participating in a conversation. By contrast, short or one-word answers indicate less interest by the party that is answering. Largely one-sided conversations occur in spamming because the spammer reaches out often, randomly and in an unwanted manner, making replies short or non-existent. Spammers frequently receive no replies because of the nonsensical context of their messages. In addition, a history in which a majority, or some greater percentage, of conversations are one-sided may further increase the strength of the yes answer to the second inquiry 512.
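The second inquiry's graded answer could be computed from word counts alone. This Python sketch rescales the first avatar's share of the words so that a balanced conversation scores 0 and a monologue scores 1, and it treats small imbalances as no signal; the rescaling, the `DEAD_ZONE` value and the name `word_ratio_grade` are assumptions for illustration.

```python
DEAD_ZONE = 0.2  # hypothetical: imbalances this small are treated as a plain "no"


def word_ratio_grade(first_words: int, second_words: int) -> float:
    """Strength of a "yes" to the second inquiry 512, in [0, 1].

    0 means the conversation was balanced (or the first avatar said less);
    1 means the first avatar did all of the talking and received no reply.
    """
    total = first_words + second_words
    if total == 0:
        return 0.0  # nothing was said, so there is no imbalance to measure
    share = first_words / total    # fraction of words from the first avatar
    imbalance = 2.0 * share - 1.0  # 0.5 share -> 0.0, 1.0 share -> 1.0
    return imbalance if imbalance > DEAD_ZONE else 0.0
```

Under these assumptions the example in the text, ten more words in a one-hundred-word conversation (55 versus 45), yields no adjustment, while a one-sided exchange yields the strongest yes.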

The third inquiry 513 determines whether the second avatar has a lower trust ranking than the first avatar. If the first avatar interacts with a second avatar that has a higher rank and the second avatar responds, the interaction tends to indicate that the first avatar is legitimate. Further, the third inquiry 513 prevents the first avatar from gaining credentials without basis through interactions with other spammers, which would have poor trust rankings.
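A graded version of the third inquiry might look like this. The neutral value of 0.5 for a higher-ranked partner who does not reply, and the function name `rank_comparison_grade`, are assumptions; the text only describes the lower-rank case and the higher-rank-with-reply case.

```python
def rank_comparison_grade(first_rank: float, second_rank: float,
                          second_replied: bool) -> float:
    """Strength of a "yes" to the third inquiry 513, in [0, 1]."""
    if second_rank < first_rank:
        # Yes: contact with lower-ranked avatars (possibly other spammers)
        # must not let the first avatar build up trust.
        return 1.0
    if second_replied:
        # A higher- (or equally) ranked avatar engaged back: evidence of legitimacy.
        return 0.0
    return 0.5  # hypothetical neutral grade: higher-ranked partner, no reply
```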

Regarding the fourth inquiry 514, analyzing the pattern of whom the first avatar converses with offers useful information. A spamming avatar is likely to approach a large number of random avatars but have little or no repeat interaction with any of them. By contrast, a legitimate user is more likely to approach fewer random avatars and to have several close relationships, evidenced by repeat interactions with certain avatars. To capture these patterns, the fourth inquiry 514 resolves whether the first avatar is communicating with the second avatar for the first time or has communicated with it before. A repeat interaction with the second avatar results in a no answer to the fourth inquiry 514, whereas a yes answer (a first contact) leads to assigning the adjustment value indicative of a spammer in the disfavoring step 506. A historical imbalance of yes answers to the fourth inquiry 514 may provide a stronger yes and hence a relatively greater adjustment value in the disfavoring step 506.
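The fourth inquiry and its history-based strengthening could be graded as follows. Here `history` lists the avatars the first avatar contacted in earlier conversations; the 0.5 baseline for a first contact, the use of the share of one-off contacts as the strengthening factor, and the name `first_contact_grade` are all assumptions.

```python
from collections import Counter
from typing import List


def first_contact_grade(history: List[str], partner: str) -> float:
    """Strength of a "yes" to the fourth inquiry 514, in [0, 1].

    `history` holds one entry per earlier conversation, naming the avatar
    the first avatar spoke with.
    """
    if partner in history:
        return 0.0  # repeat interaction: the answer is "no"
    counts = Counter(history)
    if not counts:
        return 0.5  # hypothetical baseline: first contact with no history to compare
    # Share of past partners contacted only once; a high share means a
    # historical imbalance of first contacts, which strengthens the "yes".
    one_off_share = sum(1 for c in counts.values() if c == 1) / len(counts)
    return 0.5 + 0.5 * one_off_share
```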

With reference to the conversational content 308 and dialogue repetition 312 represented in FIG. 3, the fifth inquiry 515 ascertains whether the first avatar is using words repeated from previous interactions or words that are flagged, that is, whether the first avatar keeps repeating the same message (or a variation of it) to other avatars. For some embodiments, the conversational content of the second avatar may also be monitored and reflect on the first avatar. For example, words flagged as relating to emotions, interests and family in the second avatar's replies indicate that the first avatar is a legitimate user. Words on generic topics, such as the weather, suggest little interest and tend to be used by spammers, so such words may be among the flagged words that can result in a yes answer to the fifth inquiry 515. Moreover, reusing one set of words across communications is more typical of a spammer.
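A simple word-overlap measure can stand in for the fifth inquiry. In this Python sketch the flag lists `GENERIC_FLAGS` and `ENGAGED_FLAGS`, the halving of the grade when the reply is engaged, and the function names `tokens` and `repetition_grade` are hypothetical; the text does not publish word lists or a scoring rule.

```python
import re
from typing import Iterable, Set

GENERIC_FLAGS = {"weather", "sale", "discount", "cheap"}          # hypothetical flagged words
ENGAGED_FLAGS = {"love", "family", "happy", "sad", "interested"}  # hypothetical engaged-reply words


def tokens(text: str) -> Set[str]:
    """Lower-cased set of words in a message."""
    return set(re.findall(r"[a-z']+", text.lower()))


def repetition_grade(message: str, previous_messages: Iterable[str], reply: str = "") -> float:
    """Strength of a "yes" to the fifth inquiry 515, in [0, 1]."""
    words = tokens(message)
    if not words:
        return 0.0
    # Words the first avatar already used in earlier messages to other avatars.
    seen = set().union(*(tokens(m) for m in previous_messages))
    repeated_share = len(words & seen) / len(words)
    flagged = 1.0 if words & GENERIC_FLAGS else 0.0
    grade = max(repeated_share, flagged)
    # An engaged reply from the second avatar reflects well on the first avatar.
    if tokens(reply) & ENGAGED_FLAGS:
        grade *= 0.5
    return grade
```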

The sixth inquiry 516 corresponds to the peer feedback 314 represented in FIG. 3. The second avatar may attempt to converse with the first avatar and receive irrelevant replies or repeated sentences, leading the second avatar to conclude that the first avatar must be a spammer. Notification by the second avatar to the system that the first avatar is a potential spammer provides an affirmative response to the sixth inquiry 516, thereby assigning the adjustment value indicative of a spammer in the disfavoring step 506. In some embodiments, the affirmative response to the sixth inquiry 516 flags the first avatar and may trigger monitoring, or increased monitoring, of the first avatar.
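Peer reports can be tracked so that each report both grades the sixth inquiry and places the reported avatar under closer monitoring. The `PeerFeedback` class, its methods, and the choice of three distinct reporters for a full "yes" are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

FULL_YES_REPORTERS = 3  # hypothetical: distinct reporters needed for a full "yes"


@dataclass
class PeerFeedback:
    reports: Dict[str, Set[str]] = field(default_factory=dict)  # suspect -> reporters
    watched: Set[str] = field(default_factory=set)              # avatars under increased monitoring

    def report(self, reporter: str, suspect: str) -> None:
        """Record a spam report and flag the suspect for closer monitoring."""
        self.reports.setdefault(suspect, set()).add(reporter)
        self.watched.add(suspect)

    def grade(self, suspect: str) -> float:
        """Strength of a "yes" to the sixth inquiry 516, in [0, 1]."""
        distinct = len(self.reports.get(suspect, set()))
        return min(1.0, distinct / FULL_YES_REPORTERS)
```

Counting distinct reporters, rather than reports, keeps a single user from driving another avatar's rank down by filing the same complaint repeatedly.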

Any of the second through sixth inquiries 512-516 may be linked or associated with one another. For example, two yes answers may be particularly relevant together, such as when the first avatar repeats words, as determined by the fifth inquiry 515, while receiving short or no responses, as evidenced by the second inquiry 512. The adjustment indicative of a spammer that is assigned in the disfavoring step 506 may be greater for such combinations than the sum of the respective independent yes answers.
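The idea that linked answers outweigh their independent sum can be captured with an interaction term. In this sketch the `DISFAVOR_STEP` and `SYNERGY` values, the inquiry keys `"512"` and `"515"`, and the name `linked_disfavor` are assumptions.

```python
from typing import Dict

DISFAVOR_STEP = 5.0  # hypothetical adjustment for one full "yes"
SYNERGY = 2.0        # hypothetical multiplier for the linked pair


def linked_disfavor(grades: Dict[str, float]) -> float:
    """Disfavoring adjustment with a bonus when inquiries 512 and 515 agree.

    The product term is non-zero only when the first avatar both repeats
    words (515) and gets short or no replies (512), so the combined penalty
    exceeds the sum of the two independent penalties.
    """
    independent = DISFAVOR_STEP * sum(grades.values())
    linked = grades.get("512", 0.0) * grades.get("515", 0.0)
    return independent + SYNERGY * DISFAVOR_STEP * linked
```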

As an exemplary scenario in view of the aforementioned aspects, the second avatar 270 shown in FIG. 2 may represent a spammer. The second avatar 270 approaches the first avatar 260 and says “store 220 is overpriced.” The first avatar 260 hears the comment but does not respond. The second avatar 270 then goes to other avatars and makes another comment promoting a competitor of the store 220. This spamming hinders the virtual world and has a negative impact on the business of the store 220. The system 120 illustrated in FIGS. 1 and 3 captures and parses the text of the first and second avatars 260, 270 for content and length, as described herein, in order to assess that the second avatar 270 is spamming. In particular, the second avatar 270 talks disproportionately about “the store” (i.e., store 220), resulting in a repetition in word use that provides a basis for diminishing, over time, the second trust rank 370 of the second avatar 270. Similarly, the lack of response from the first avatar 260 and the lack of repeat interaction with the first avatar 260 among the second avatar's interactions may further diminish the second trust rank 370 of the second avatar 270.

While the foregoing is directed to embodiments of the present invention, other and further embodiments of the invention may be devised without departing from the basic scope thereof, and the scope thereof is determined by the claims that follow.

Claims

- A computer-implemented method of evaluating actions of a first avatar in a virtual environment, comprising: assigning, by operation of one or more computer processors, an initial trust rank to the first avatar upon the first avatar creating an account on a system linked to a network that enables communication with other avatars within the virtual environment; executing a spam detection module to programmatically monitor one or more interactions between the first avatar and at least a second avatar; adjusting, by the spam detection module, the initial trust rank to a computed adjusted trust rank based on the interactions that are monitored; and disabling, by the spam detection module, the account of the first avatar when the computed adjusted trust rank reaches a specified threshold, wherein upon disabling the account of the first avatar, the first avatar is not visible to any avatars in the virtual environment.

- The computer-implemented method of claim 1, wherein adjusting the initial trust rank is based on content and length of the monitored interactions between the first avatar and the second avatar.

- The computer-implemented method of claim 1, wherein adjusting the initial trust rank is based on comparing number of words used by the first avatar to number of words used by the second avatar in a conversation.

- The computer-implemented method of claim 1, wherein adjusting the initial trust rank is based on repetitions in the monitored interactions of the first avatar.

- The computer-implemented method of claim 1, further comprising: receiving feedback from the second avatar with respect to the interactions of the first avatar; and upon receiving feedback from the second avatar, adjusting, by the spam detection module, the initial trust rank to a computed trust rank based on the feedback received from the second avatar.

- The computer-implemented method of claim 1, further comprising displaying the computed trust rank on a user display, wherein the one or more interactions between the first avatar and at least the second avatar are monitored without user input.

- The computer-implemented method of claim 1, wherein programmatically monitoring one or more interactions between the first avatar and at least a second avatar includes identifying that the first avatar is communicating by repeating a set of one or more words to multiple avatars.

- The computer-implemented method of claim 1, further comprising limiting the interactions of the first avatar until the computed trust rank reaches a second specified threshold.

- The computer-implemented method of claim 1, further comprising blocking communication of the first avatar with at least one other avatar according to criteria for the computed trust rank as set by the at least one other avatar.

- A computer program product, comprising: a non-transitory computer-readable storage medium having computer-readable program code embodied therewith, the computer-readable program code executable by a processor to perform an operation to evaluate actions of a first avatar in a virtual environment, the operation comprising: assigning an initial trust rank to the first avatar upon the first avatar creating an account on a system linked to a network that enables communication with other avatars within the virtual environment; executing a spam detection module to programmatically monitor one or more interactions between the first avatar and at least a second avatar; adjusting, by the spam detection module, the initial trust rank to a computed adjusted trust rank based on the interactions that are monitored; and disabling, by the spam detection module, the account of the first avatar when the computed adjusted trust rank reaches a specified threshold, wherein upon disabling the account of the first avatar, the first avatar is not visible to any avatars in the virtual environment.

- The computer program product of claim 10, the operation further comprising displaying the computed adjusted trust rank on a user display, wherein the one or more interactions between the first avatar and at least the second avatar are monitored without user input.

- A system, comprising: one or more computer processors; and a memory containing a program, which when executed by the one or more computer processors is configured to evaluate actions of a first avatar in a virtual environment, the operation comprising: assigning an initial trust rank to the first avatar upon the first avatar creating an account on a system linked to a network that enables communication with other avatars within the virtual environment; monitoring, by a spam detection module, one or more interactions between the first avatar and at least a second avatar; adjusting, by the spam detection module, the initial trust rank to a computed adjusted trust rank based on the interactions that are monitored; and disabling, by the spam detection module, the account of the first avatar when the computed adjusted trust rank reaches a specified threshold, wherein upon disabling the account of the first avatar, the first avatar is not visible to any avatars in the virtual environment.

- The system of claim 12, wherein adjusting the initial trust rank is based on content and length of the monitored interactions between the first avatar and the second avatar.

- The system of claim 12, wherein adjusting the initial trust rank is based on repetitions in the monitored interactions of the first avatar.

- The system of claim 12, wherein adjusting the initial trust rank includes comparing number of words used by the first avatar to number of words used by the second avatar in a conversation.

- The system of claim 12, wherein monitoring the first avatar is triggered by feedback from a third avatar.

- The system of claim 12, the operation further comprising displaying the trust rank on a user display, wherein the one or more interactions between the first avatar and at least the second avatar are monitored without user input.

- The system of claim 12, wherein adjusting the trust rank occurs based on number of words used by the first avatar in the interaction being greater than number of words used by the second avatar in the interaction.

- The system of claim 12, the operation further comprising limiting communications by the first avatar, wherein the trust ranking is applied against a set criterion to determine which communications are limited.
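The claims recite a trust-rank lifecycle: an initial rank assigned on account creation (claim 1), adjustment from monitored interactions and from feedback by other avatars (claims 1 and 5), limited interaction relative to a second threshold (claim 8), recipient-defined blocking criteria (claim 9), and disabling the account, which makes the avatar invisible, once the rank reaches a specified threshold (claim 1). The Python below is a minimal sketch of that lifecycle under stated assumptions: lower ranks mean less trust, "reaches" is read as "falls to or below," the threshold values and feedback step are invented, and "limiting" is interpreted as preventing a low-ranked avatar from starting new conversations. The names `SpamDetectionModule`, `Account`, `adjust`, `record_feedback`, `is_limited`, `is_visible`, and `can_message` are hypothetical and do not come from the patent.

```python
from dataclasses import dataclass

# Hypothetical values; the claims only recite "specified" thresholds.
INITIAL_RANK = 50.0       # assigned when the account is created (claim 1)
LIMIT_THRESHOLD = 30.0    # below this, interactions are limited (claim 8)
DISABLE_THRESHOLD = 10.0  # at or below this, the account is disabled (claim 1)
FEEDBACK_STEP = 2.0       # rank change per piece of feedback (claim 5)


@dataclass
class Account:
    avatar_id: str
    trust_rank: float = INITIAL_RANK
    disabled: bool = False
    # Claim 9: each avatar sets the minimum rank it accepts messages from.
    min_sender_rank: float = 0.0


class SpamDetectionModule:
    """Tracks trust ranks and enforces thresholds (illustrative only)."""

    def __init__(self):
        self.accounts = {}

    def create_account(self, avatar_id, min_sender_rank=0.0):
        account = Account(avatar_id, min_sender_rank=min_sender_rank)
        self.accounts[avatar_id] = account
        return account

    def adjust(self, avatar_id, delta):
        """Apply a computed adjustment; disable the account at the threshold."""
        account = self.accounts[avatar_id]
        account.trust_rank += delta
        if account.trust_rank <= DISABLE_THRESHOLD:
            account.disabled = True
        return account.trust_rank

    def record_feedback(self, about_id, positive):
        """Feedback from another avatar nudges the rank up or down."""
        return self.adjust(about_id, FEEDBACK_STEP if positive else -FEEDBACK_STEP)

    def is_visible(self, avatar_id):
        # A disabled account is not shown to any avatar.
        return not self.accounts[avatar_id].disabled

    def is_limited(self, avatar_id):
        return self.accounts[avatar_id].trust_rank < LIMIT_THRESHOLD

    def can_message(self, sender_id, recipient_id, initiating=True):
        sender = self.accounts[sender_id]
        if sender.disabled:
            return False
        # Assumed form of limiting: limited avatars may reply but not initiate.
        if initiating and self.is_limited(sender_id):
            return False
        # Recipient-defined blocking criterion.
        return sender.trust_rank >= self.accounts[recipient_id].min_sender_rank


if __name__ == "__main__":
    module = SpamDetectionModule()
    module.create_account("260", min_sender_rank=40.0)
    module.create_account("270")
    module.adjust("270", -15.0)                # rank 35.0
    print(module.can_message("270", "260"))    # False: below 260's criterion
    module.adjust("270", -30.0)                # rank 5.0, account disabled
    print(module.is_visible("270"))            # False
```

Keeping the blocking criterion on the recipient's account mirrors claim 9, where each avatar sets its own criteria, while the disable and limit thresholds stay global to the module.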
