U.S. Pat. No. 8,342,963

METHODS AND SYSTEMS FOR ENABLING CONTROL OF ARTIFICIAL INTELLIGENCE GAME CHARACTERS

Assignee: Sony Computer Entertainment America Inc.

Issue Date: April 10, 2009

Illustrative Figure

Abstract

A method for controlling an artificial-intelligence (AI) character includes entering a command mode which enables control of the AI character, and occurs while substantially maintaining an existing display of the game, thereby preserving the immersive experience of the video game for the player. A plurality of locations are sequentially specified within a virtual space of the game, the plurality of locations defining a path for the AI character. The AI character is moved along the path to the plurality of locations in the order they were specified. The plurality of locations may be specified by maneuvering a reticle, and selecting each of the locations. A node can be displayed in the existing display of the game at each of the plurality of locations. A series of lines connecting the nodes can also be displayed in the existing display of the game.

Description

DETAILED DESCRIPTION

In the following description, numerous specific details are set forth in order to provide a thorough understanding of the present invention. It will be apparent, however, to one skilled in the art that the present invention may be practiced without some or all of these specific details. In other instances, well known process steps have not been described in detail in order not to obscure the present invention.

Broadly speaking, the invention defines systems and methods for enabling a player of a video game to control artificial-intelligence (AI) characters. A command mode is defined which enables the player to access controls for determining actions for the AI characters. In accordance with an aspect of the invention, the command mode is executed while preserving for the player the immersive experience of the normal gameplay of the video game. While in the command mode, the player may specify locations which are visually represented by nodes. The locations specified define one or more paths for the AI characters to traverse.

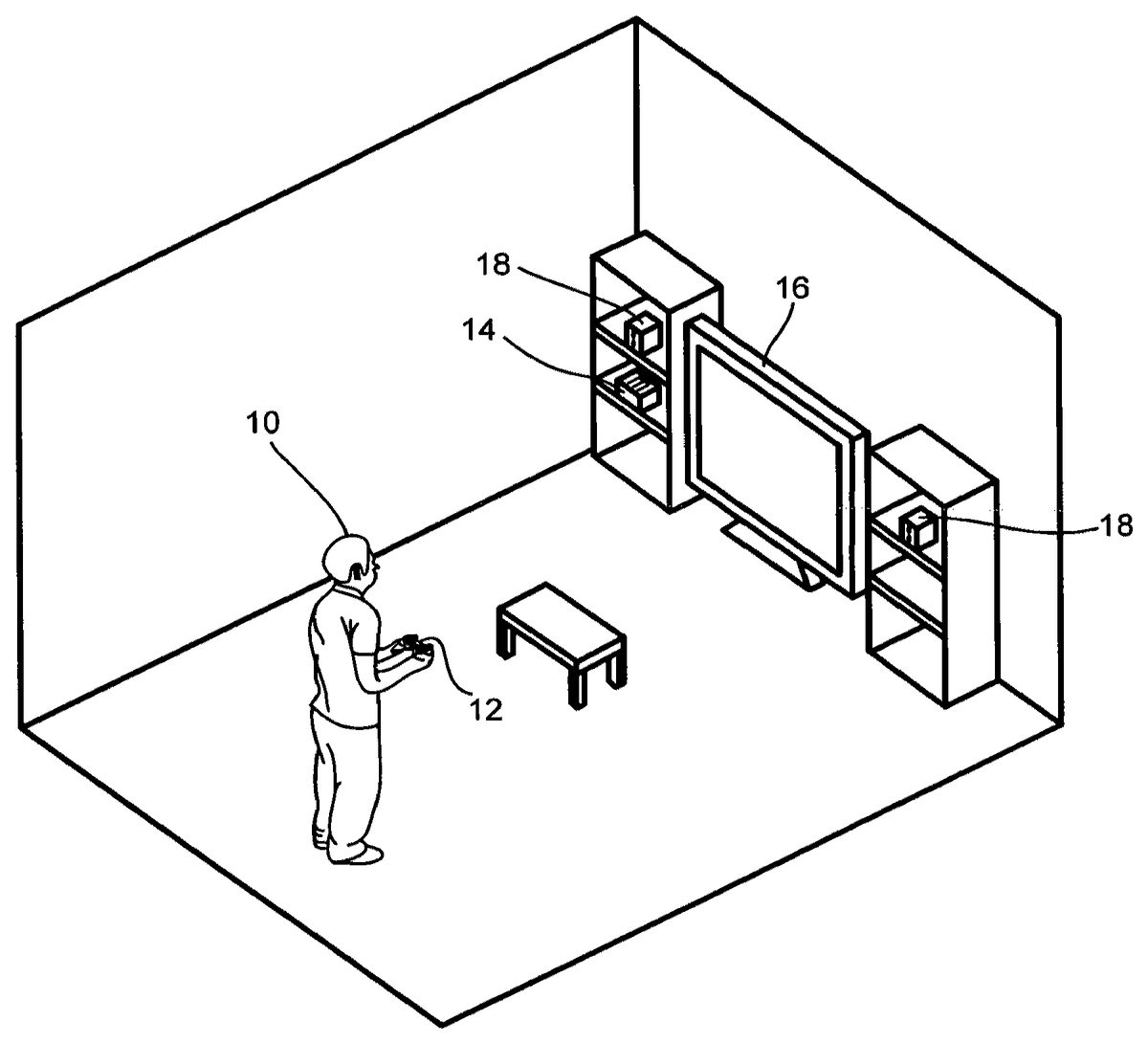

With reference to FIG. 1, a generic video game system is depicted, in which the methods of the present invention may be implemented. A player 10 provides inputs via an input device 12. The input device 12 may be any device or combination of devices as is known in the art for providing inputs for a video game. Examples of such devices include a joystick, keyboard, mouse, trackball, touch sensitive pad, and dedicated game controllers such as the DUALSHOCK® 3 Wireless Controller manufactured by Sony Computer Entertainment Inc. The input device 12 transmits inputs to a computer 14. The computer 14 may be any device as is known in the art which is suitable for executing a video game. Various examples of such suitable devices include personal computers, laptops, handheld computing devices, and dedicated gaming consoles such as the Sony Playstation® 3. Other examples of game consoles include those manufactured by Sony Computer Entertainment, Inc., Nintendo, and Microsoft. The computer 14 outputs video to a display 16, such as a monitor or television, and outputs audio to speakers 18, which may be loudspeakers (e.g. stand-alone or those built into a television or monitor) or headphones.

With reference to FIGS. 2A-2D, a method for controlling AI characters is shown. A view 100 represents a field of view of a three-dimensional virtual spatial field of a video game, as would be seen by a player of the video game. The view 100 as shown in FIGS. 2A-2D is thus typically projected onto a two-dimensional display such as a television or computer monitor display. In other embodiments, the view 100 may be projected onto a three-dimensional display, such as a holographic projection. The virtual spatial field of the video game is generally a three-dimensional space. But in other embodiments, the virtual spatial field of the video game shown by view 100 may be a two-dimensional space.

With reference to FIG. 2A, an AI character 102 is shown in the view 100, along with a player's first-person character 101. The player controls the first-person character 101 in real-time. That is, inputs provided by the player directly and immediately affect the actions of the first-person character during real-time gameplay. For example, a player may control the movements of the first-person character 101, or cause the first-person character 101 to perform certain actions, such as firing a weapon. The first-person character 101 generally does not take any action until the player provides a direct input which causes the first-person character to act. In contrast, the AI character 102 is generally under automatic control of the video game. As such, the AI character 102 behaves according to preset algorithms of the video game, these being generally designed so that the AI character acts in a manner beneficial to the first-person character 101, or otherwise beneficial to the player's interests within the context of the video game. Thus, for example, in a battlefield-style game, the AI character 102 may approximately follow the player's first-person character 101, and may engage and attack enemy characters. The AI character 102 may also carry out objectives which are beneficial to the player's interests, such as destroying enemy installations. However, these activities are controlled by preset algorithms of the video game, and may not reflect the actual desires or intent of the player. Therefore, it is beneficial to provide a method for a player to control the AI character 102.

With continued reference to FIGS. 2A-D, and in accordance with an embodiment of the invention, such a method for enabling a player to control the AI character 102 is herein described. As shown at FIG. 2A, a player is enabled to place a first node 108 within the view 100. The first node 108 represents a first location for the AI character 102. If the AI character is not located at node 108, then the AI character's first movement will be to move to the node 108.

With reference to FIG. 2B, a second node 110 is placed at a vehicle 104. The second node 110 represents a second location for the AI character 102 to travel to. The second node 110 is connected to the first node 108 by a line 109, which provides a visual indication of the approximate path that the AI character 102 will take when moving from node 108 to node 110.

With reference to FIG. 2C, a third node 112 is placed at an enemy character 106, the third node 112 indicating a third location for the AI character 102. The third node 112 is connected to the second node 110 by line 111, which provides a visual indication of the approximate path that the AI character 102 will take when moving from node 110 to node 112.

With reference to FIG. 2D, a fourth node 114 is placed in the field of view 100, the fourth node 114 indicating a fourth location for the AI character 102. The fourth node 114 is connected to the third node 112 by line 113, which provides a visual indication of the approximate path that the AI character 102 will take when moving from node 112 to node 114.
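The sequential node placement of FIGS. 2A-2D may be illustrated by a minimal sketch in Python. The names `Node`, `AIPath`, `place_node`, and `lines` are hypothetical and do not appear in the specification; the sketch assumes only that locations are stored in the order specified and that each connecting line joins a node to the one placed immediately before it.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Node:
    """A specified location in the virtual space (hypothetical type)."""
    x: float
    y: float
    z: float = 0.0


@dataclass
class AIPath:
    """Ordered list of nodes defining a path for an AI character."""
    nodes: list = field(default_factory=list)

    def place_node(self, node):
        """Append a node; return the line to the previous node, if any."""
        self.nodes.append(node)
        if len(self.nodes) >= 2:
            return (self.nodes[-2], self.nodes[-1])
        return None

    def lines(self):
        """Connecting lines between consecutive nodes, in placement order."""
        return list(zip(self.nodes, self.nodes[1:]))


# Four nodes placed in sequence, analogous to nodes 108, 110, 112, and 114.
path = AIPath()
for n in (Node(0, 0), Node(5, 2), Node(9, 7), Node(12, 3)):
    path.place_node(n)
```

Four nodes yield three connecting lines, analogous to lines 109, 111, and 113.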

In one embodiment, the view 100 as shown in FIGS. 2A-D as projected onto a display 16 represents an existing display of the game. In other words, the look and feel of the normal gameplay is substantially preserved, while simultaneously enabling a player to specify locations for the AI character 102 which are represented by nodes 108, 110, 112, and 114. Because a player is enabled to place the nodes 108, 110, 112, and 114 while substantially maintaining an existing display of the video game, a player is not removed from the immersive experience of the video game. The presently described method thus provides an intuitive method for controlling an AI character in a seamless manner in the context of the gameplay. For example, in a battlefield-style game utilizing the presently described method, a player would not be required to “leave” the battlefield arena in order to set up commands for an AI character. Rather, the player could specify the commands within the context of the battlefield by simply placing nodes on the battlefield which indicate locations for the AI character to traverse.

The plurality of locations represented by nodes 108, 110, 112, and 114 collectively define a path for the AI character 102. As shown in FIGS. 2A-D, the nodes are disc-shaped. However, in other embodiments, the nodes may be any of various other shapes, such as a polygon, star, asterisk, etc. In one embodiment, the shape may vary according to the location of the node. For example, if a node is placed at an enemy character, the node may have a different shape than if it were placed in a generic location, thereby indicating to the player the presence of the enemy character. In another embodiment, the color of the node may vary depending upon the location represented by the node. Additionally, the shape and color of the lines 109, 111, and 113 may vary, provided they indicate an approximate path for the AI character 102 when traveling between nodes.

In one embodiment, a player may have the option of providing additional commands for the AI character to perform during placement of the nodes. These commands may enable a player to affect how an AI character interacts with its environment when traversing the various nodes. For example, when node 110 is placed at the vehicle 104, the player may have the option of commanding the AI character to destroy the vehicle. This may be indicated at the node 110 by an emblem such as a grenade symbol. In another example, when node 112 is placed at enemy character 106, the player may have the option of commanding the AI character to attack the enemy character 106. This may be indicated at the node 112 by an emblem such as a skull-and-crossbones. In one alternative embodiment, actions for an AI character to perform are automatically determined based upon the placement of a node. For example, placement of a node in the near vicinity of an enemy character may automatically cause the AI character to attack the enemy character when the AI character traverses the nodes and approaches the enemy character.
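The alternative embodiment, in which an action is determined automatically from the placement of a node, may be sketched as follows. This is an illustrative assumption rather than a disclosed implementation: the specification does not define "near vicinity", so the threshold `ATTACK_RADIUS`, the function `action_for_node`, and the `EMBLEMS` table are hypothetical. Only the grenade and skull-and-crossbones emblems are taken from the description above.

```python
import math

# Assumed distance threshold standing in for "near vicinity".
ATTACK_RADIUS = 2.0

# Emblems the description associates with explicit commands.
EMBLEMS = {"destroy": "grenade", "attack": "skull-and-crossbones"}


def action_for_node(node_pos, enemy_positions, radius=ATTACK_RADIUS):
    """Return 'attack' if the node lies near any enemy, else 'move'."""
    for enemy in enemy_positions:
        if math.dist(node_pos, enemy) <= radius:
            return "attack"
    return "move"


# A node placed one unit from an enemy triggers an automatic attack action.
near = action_for_node((1.0, 1.0), [(2.0, 1.0)])
far = action_for_node((1.0, 1.0), [(10.0, 10.0)])
```

Here `near` evaluates to `"attack"` and `far` to `"move"`; an embodiment with player-selected commands would instead store the chosen command with the node and display the corresponding emblem.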

In accordance with an embodiment of the invention, the method illustrated in FIGS. 2A-2D as discussed above may comprise a separate command mode of the video game. The command mode may be triggered by providing an input from the controller, such as a button or sequence or combination of buttons. The command mode enables a player to provide command/control data to affect the movements and actions of AI characters, as described above. As mentioned above, the command mode of the video game is executed while maintaining an existing display of the game. This allows a player to continue to experience the immersive environment of the video game, and facilitates the specification of locations within the spatial field of the game in a natural and intuitive manner as described above.

A player is not required to navigate additional menus to place nodes for the AI characters, nor is the player presented with an additional interface display such as a schematic map. In accordance with the presently described methods, a player can intuitively reference the existing display of the game to specify locations which determine a path for an AI character. However, it may also be desirable to provide methods for distinguishing the command mode from a normal gameplay mode of the video game. To this end, in various embodiments, different methods of distinguishing the command mode from the normal gameplay mode while still substantially maintaining the existing display of the game are herein described.

In one embodiment, triggering the command mode causes a desaturation process to be performed on the existing display of the game. According to the desaturation process, points of interest within the spatial field of the game are identified. The points of interest may be objects such as persons, vehicles, weapons fire, explosions, or any other object that may be of interest to the player. Then the color saturation of the display of the game is desaturated while maintaining the color saturation of the points of interest. In this manner, all portions of the display that are not points of interest may appear in partial or full grayscale, while the points of interest appear highlighted to the player by virtue of their color.

In another embodiment, entering the command mode causes a time-slowing effect. In other words, the gameplay clock is slowed when in the command mode, such that the activity of the game proceeds at a slower rate than normal. This allows for the time required for a player to place nodes for an AI character, while still maintaining a sense of the continuity of the game. Thus, a player continues to experience the time-sensitive aspects of the game, but at a slowed rate that helps facilitate use of the presently described methods for controlling an AI character.

In a further embodiment, triggering the command mode causes an initial zoom-out of the field of view. By zooming out the field of view, a player is enabled to survey a larger portion of the spatial field of the game at once. This can be helpful in facilitating strategic placement of nodes for an AI character, as the player is thereby provided with more information regarding the state of the game. Enlarging the field of view conveys a greater sense of awareness of the geography, objects, characters, and events occurring within the spatial field of the game.

In various embodiments, the activity of the AI character 102 may be controlled in different ways relative to the execution of the command mode. In one embodiment, a player may halt the activity (such as movements) of the AI character 102 prior to or upon entering the command mode. While the AI character is halted, the player proceeds to specify a series of locations indicated by nodes, as described above, which define a path for the AI character 102. The AI character 102 remains in a halted configuration until released by the player. When released, the AI character begins to follow the path defined by the series of locations, in the order that the series of locations was specified.

In another embodiment, the AI character 102 is not halted prior to or upon entering the command mode. In such an embodiment, the AI character 102 continues its activity until a first location is specified, in accordance with the above-described methods. As soon as the first location is specified, the AI character immediately begins moving to the first location. Upon reaching the first location, the AI character will automatically proceed to additional locations, if they have been specified, without delay.
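The halted and non-halted embodiments differ only in when the AI character begins consuming the specified locations. A minimal sketch follows, with the hypothetical class `AICharacter` and a simplifying assumption that each call to `update` carries the character to its next location in one step.

```python
from collections import deque


class AICharacter:
    """Hypothetical AI character that visits locations in the order given."""

    def __init__(self, halted=False):
        self.halted = halted      # True while in the waiting state
        self.pending = deque()    # locations specified but not yet reached
        self.visited = []         # locations reached, in order

    def specify(self, location):
        """Record a location specified by the player."""
        self.pending.append(location)

    def release(self):
        """Release the character from the halted (waiting) state."""
        self.halted = False

    def update(self):
        """Move to the next pending location unless halted or idle."""
        if self.halted or not self.pending:
            return None
        location = self.pending.popleft()
        self.visited.append(location)
        return location


# Halted embodiment: no movement occurs until the player releases the character.
halted_ai = AICharacter(halted=True)
halted_ai.specify("A")
halted_ai.specify("B")
before_release = halted_ai.update()
halted_ai.release()
after_release = [halted_ai.update(), halted_ai.update()]

# Non-halted embodiment: movement begins as soon as a location is specified.
free_ai = AICharacter()
free_ai.specify("A")
first_move = free_ai.update()
```

In both cases the locations are visited in first-in, first-out order, matching the requirement that the path be followed in the order the locations were specified.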

It will be understood from the present specification that the presently-described methods for controlling an AI character may utilize various input devices and input methods and configurations, as described previously with reference to the video game system of FIG. 1. Actions such as triggering the command mode, specifying locations, and halting and/or releasing an AI character, all require that a player provide the necessary inputs. Thus, the inputs may result from any of various key strokes, buttons, button combinations, joystick movements, etc., all of which are suitable for providing inputs to a video game.

With reference to FIG. 3A, a command mode of a video game is illustrated in a view 100, in accordance with an embodiment of the invention. The first-person character 101 is directly controlled by the player during real-time gameplay. Additionally, AI characters 132 accompany the first-person character 101. The AI characters are organized into teams, such that AI characters 132 form a first team, and form a second team. A player may control a team of AI characters in a group-wise fashion, such that a single command is operative to control the entire team.

With continued reference to FIG. 3A, the command mode shown in the view 100 includes various features to aid the player in specifying locations and actions for the AI characters. For example, a command reticle 130 indicates to the player where a node indicating a location for an AI character might be placed. Thus, in order to place nodes, the player maneuvers the command reticle 130 (e.g. via a joystick) to various locations in the view, and selects those locations in order to place a node. In one embodiment, the view 100 is shifted in accordance with the maneuvering of the command reticle 130, such that the command reticle 130 remains in the central portion of the view 100. In other words, when the command reticle 130 is being maneuvered in the command mode, the video game assumes a first-person characteristic based on the perspective of the command reticle 130, such that the view 100 is shifted in accordance with the movement of the command reticle 130.

In another embodiment, the view 100 generally does not shift when the command reticle 130 is maneuvered within the boundaries of the current view 100. However, when an attempt is made to maneuver the command reticle beyond the boundaries of the view 100, the view 100 is adjusted accordingly (e.g. panning the field of view), so as to maintain the command reticle in the view 100.
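The two view behaviors described above (keeping the reticle centered, or panning only when the reticle reaches a boundary) may be contrasted with a simplified two-dimensional sketch. The functions `center_on_reticle` and `pan_to_contain` are hypothetical, and the sketch assumes a rectangular view identified by its top-left origin and its width and height; an actual three-dimensional camera would be more involved.

```python
def center_on_reticle(reticle, view_size):
    """Return the view origin that keeps the reticle at the view center."""
    rx, ry = reticle
    width, height = view_size
    return (rx - width / 2, ry - height / 2)


def pan_to_contain(view_origin, view_size, reticle):
    """Shift the view by the smallest amount that keeps the reticle inside.

    The view is left unchanged while the reticle stays within its bounds.
    """
    ox, oy = view_origin
    width, height = view_size
    rx, ry = reticle
    # The view spans [ox, ox + width]; clamp ox into [rx - width, rx].
    ox = min(max(ox, rx - width), rx)
    oy = min(max(oy, ry - height), ry)
    return (ox, oy)


# Reticle inside the view: no shift. Reticle past the right edge: pan right.
unchanged = pan_to_contain((0, 0), (100, 50), (50, 25))
panned = pan_to_contain((0, 0), (100, 50), (120, 25))
centered = center_on_reticle((50, 25), (100, 50))
```

With these inputs `unchanged` is `(0, 0)`, `panned` is `(20, 0)`, and `centered` is `(0.0, 0.0)`.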

With continued reference to FIG. 3A, icons 120, 121, 122, and 123 are shown in the upper left corner of the view 100; whereas icons 125, 126, 127, and 128 are shown in the upper right corner of the view 100. These icons represent game controller buttons, and are provided as an aid to the player, indicating the functionality of various buttons on a game controller. In one embodiment, the icons 120, 121, 122, and 123 correspond to the left, right, up, and down buttons of a directional pad, respectively. In one embodiment, the icon 120 indicates to the player that the left button of the directional pad is utilized to place a node for the first team (comprised of AI characters 132); whereas the icon 121 indicates to the player that the right button of the directional pad is utilized to place a node for the second team.

Such utility may be indicated in various ways, such as by providing descriptive words, or by providing a pattern, color, or symbol for each icon which corresponds to a pattern, color, or symbol provided for the AI characters of the relevant team. In one embodiment, the icon 122 indicates to the player that the up button of the directional pad is utilized to place a node for all of the AI characters 132 simultaneously. In one embodiment, the icon 123 indicates to the player that the down button of the directional pad is utilized to cancel a previously placed node.

The icons 125, 126, 127, and 128 may indicate additional functionality of buttons on a controller to the player. For example, in one embodiment, the icon 128 indicates to the player that the corresponding button having an “X”-shaped symbol is utilized to halt the activity of the AI characters and place them in a waiting state and/or release them from such a waiting state.

With continued reference to FIG. 3A, a series of nodes and connecting lines are shown, which collectively define paths for the AI characters 132. Nodes 153a and 144 represent specified locations for the first team. These specified locations define a path for the first team, which is indicated by lines 153b. In one embodiment, the nodes 153a and 144 and/or the lines 153b indicate their correspondence to the first team by having an associated color/pattern/symbol which corresponds to a color/pattern/symbol associated with the AI characters of the first team. Nodes 146 and 153c represent specified locations for the second team. These specified locations define a path for the second team, which is indicated by lines 153d.

In one embodiment, the nodes 146 and 153c and/or the lines 153d indicate their correspondence to the second team by having an associated color/pattern/symbol which corresponds to a color/pattern/symbol associated with the AI characters of the second team. Nodes 150 and 152 represent specified locations for all of the AI characters (both the first team and the second team). Thus, they define a path for all of the AI characters indicated by line 151. The paths of the first and second teams intersect at node 150.

Still with reference to FIG. 3A, a view 100 of a command mode of a video game is shown, in accordance with an embodiment of the invention. The view 100 is shown on a display 16, which is connected to a game computer 14, and viewed by a player 10. The player 10's first-person character 101 is displayed in the lower center portion of the view 100. As illustrated, the various identifying features of the command mode are overlaid on the existing display of the game in an intuitive manner to facilitate ease of use and understanding regarding their purpose and implementation. The player 10 provides command/control data for two teams of AI characters by maneuvering the command reticle 130 and specifying locations for each of the teams to traverse. Exemplary specified locations for each of the teams are shown at nodes 153a and 153c. Various nodes are connected by lines indicating a path for the AI characters to navigate. Exemplary connecting lines for each of the teams are shown at 153b and 153d.

With reference to FIG. 3B, a view 100 of a video game is shown, in accordance with an embodiment of the invention. The view 100 is shown on a display 16, which is connected to a game computer 14, and viewed by a player 10. The view 100 as shown is an overhead view of a scene of the video game, illustrating a command mode of the video game. AI characters 154a and 154b form a first team, while AI characters 154c and 154d form a second team. The AI characters of each team may be distinguished from each other by indicators such as associated shapes, markings and/or colors. The node 154e represents a first position for the first team, and is therefore highlighted by a segmented ring-shaped structure surrounding the node having the same color as the first team. The node 154f represents a first position for the second team, and is likewise therefore highlighted by a segmented ring-shaped structure. The node 154f also represents a second position for the first team. Because node 154f is a position for both the first and second teams, the node 154f is highlighted in a color different than that of the first or second teams, thus indicating its multi-team significance. Lines 154g and 154h connect the nodes of the first and second teams, respectively, and indicate the path defined by the nodes for each of the teams, respectively.

With reference to FIG. 3C, a view 100 of a video game illustrating a command mode of the video game is shown, in accordance with an embodiment of the invention. The view 100 is shown on a display 16, which is connected to a game computer 14, and viewed by a player 10. A player's first-person character 101 is shown, along with AI characters 155a, which the player controls. Nodes 155b have been specified by the player, and define a path for the AI characters to traverse, which is indicated by connecting lines 155c. The last line segment 155d of the path is terminated by an arrow indicating the continuing direction that the AI characters will travel after completing traversal of the nodes 155b. In accordance with the embodiment shown, a player may be enabled to specify a direction of travel for the AI characters. The direction of travel may be specified after specification of a location as indicated by a node, or the direction of travel may be specified independently.

With reference to FIG. 3D, a view 100 of a video game illustrating a command mode of the video game is shown, in accordance with an embodiment of the invention. The view 100 is shown on a display 16, which is connected to a game computer 14, and viewed by a player 10. A player's first-person character 101 is shown, along with AI character 156a, which the player may control. The nodes 156b, 156c, and 156d indicate specified locations for the AI character 156a, and are connected by lines 156e which indicate the path for the AI character 156a to navigate. Node 156c has been placed on a vehicle, and includes an icon of a grenade, indicating that when the AI character 156a reaches the location specified by node 156c, the AI character will detonate a grenade to destroy the vehicle.

With reference to FIG. 3E, a view 100 of a video game is shown, illustrating a command mode of the video game. The view 100 is shown on a display 16, which is connected to a game computer 14, and viewed by a player 10. The view 100 is a field of view of a spatial field of the video game. In the present instance, the command mode has enabled the player 10 to “call in” an airstrike, which is indicated by explosion 157c. The location of the airstrike may be specified in accordance with previously described methods, such as by maneuvering a command reticle to a desired location, and selecting the location to receive an airstrike.

With continued reference to FIG. 3E, in order to distinguish the command mode of the video game from regular gameplay, and to help the player 10 focus on salient aspects of the game, a color desaturation method is applied, in accordance with an aspect of the invention. When the command mode is entered, the color of the general areas of the view, as indicated at 157a, is desaturated. However, points of interest such as characters 157b and the explosion 157c are not desaturated, and are shown as normal. This causes the characters 157b and the explosion 157c to stand out against the desaturated remainder of the view 100.

With reference to FIG. 4, a method for controlling an AI character in a video game is shown, in accordance with an embodiment of the invention. At step 160, a command mode of the video game is entered. The command mode enables control of the AI character, and entering the command mode occurs while substantially maintaining an existing display of the game. By substantially maintaining an existing display of the game, the player of the video game is not significantly removed from the immersive environment of the game. At step 162, the AI character is paused so as to halt its activity and place the AI character in a waiting state.

At step 164, a command reticle is maneuvered to a desired location, the desired location being a location within the spatial field of the game to which the player wishes the AI character to travel. At step 166, a tactical node (“tac” node) is placed at the desired location, which indicates to the player the specification of the location. At step 168, a tactical line (“tac” line) is drawn connecting the just-placed tac node to a previously-placed tac node (if one exists). At step 170, it is determined whether the player is finished placing tac nodes. If not, then the method steps 164, 166, and 168 may be repeated until the player has completed placing tac nodes. When the player has finished, the AI character is released at step 172, at which time the AI character begins moving to each of the tac nodes in the order they were placed, generally following the path indicated by the tac lines. At step 174, the command mode is exited so as to resume normal gameplay control.

With reference to FIG. 5A, a method for distinguishing a command mode of a video game by selectively adjusting color saturation is shown, in accordance with an embodiment of the invention. At step 180, a player triggers a command mode of the video game, the command mode enabling the player to provide command/control data to determine the activity of AI characters. When the command mode is entered, the existing display of the video game is substantially maintained, so as to continue presenting the immersive environment of the video game to the player. At step 182, points of interest within the player's view of the video game are determined. The points of interest may comprise various objects and/or locations which may be of importance to the player, such as characters, explosions, weapons-fire, vehicles, buildings, assorted structures, supplies, key destination points, etc.

At step 184, the display of the video game is desaturated, while the color saturation of the determined points of interest is maintained. The amount of desaturation applied may vary in different embodiments; where desaturation is 100%, those portions of the display which are desaturated will appear in grayscale. In various embodiments, the degree to which the color saturation of the points of interest is maintained may vary depending on the nature of the points of interest. For example, in one embodiment, the color saturation of characters is completely maintained, while the color saturation of other objects is maintained to a lesser degree. At step 186, the command mode is exited, so as to return to normal gameplay control of the video game. At step 188, upon exiting the command mode, the display is resaturated, restoring the original color saturation of the display of the game.
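The selective desaturation of step 184 may be sketched on a per-pixel basis as follows. The specification does not give a formula, so this sketch makes two assumptions: gray level is computed with the Rec. 601 luma weights, and each pixel carries a hypothetical `keep` fraction (1.0 for fully maintained points of interest such as characters, lower values for objects maintained to a lesser degree, 0.0 for the general view). The function names are likewise illustrative.

```python
def desaturate(rgb, amount):
    """Blend an (r, g, b) color toward its gray level; amount is in [0, 1]."""
    r, g, b = rgb
    # Assumed gray conversion: Rec. 601 luma weights.
    gray = 0.299 * r + 0.587 * g + 0.114 * b
    return tuple(c + (gray - c) * amount for c in (r, g, b))


def apply_command_mode_desaturation(pixels, keep, amount=1.0):
    """Desaturate each pixel, scaled down by the saturation it should keep.

    pixels: list of (r, g, b) tuples.
    keep:   parallel list of fractions in [0, 1]; 1.0 keeps full color.
    amount: overall desaturation strength; 1.0 yields grayscale where keep is 0.
    """
    return [desaturate(p, amount * (1.0 - k)) for p, k in zip(pixels, keep)]


# One background pixel (keep 0.0) and one character pixel (keep 1.0).
frame = [(255, 0, 0), (255, 0, 0)]
result = apply_command_mode_desaturation(frame, keep=[0.0, 1.0])
```

In `result`, the background pixel has equal red, green, and blue components (grayscale), while the character pixel retains its original color. Resaturation at step 188 then amounts to displaying the unmodified frame again.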

With reference to FIG. 5B, a method for distinguishing a command mode of a video game by adjusting a rate of gameplay is shown, in accordance with an embodiment of the invention. At step 190, a player triggers a command mode of the video game, the command mode enabling the player to provide command/control data to determine the activity of AI characters. When the command mode is entered, the existing display of the video game is substantially maintained, so as to continue presenting the immersive environment of the video game to the player. At step 192, the rate of gameplay of the video game is slowed, but not stopped. Thus, actions and events within the game occur at a reduced rate which affords time for the player to strategically determine the actions of the AI characters. However, because the game is not completely stopped, the time-based progression of events which contributes to the feel of the game is preserved. Thus, the player continues to experience the time-sensitive aspect of the game, but at a reduced rate. At step 194, the player exits the command mode, which restores normal control of the video game. Upon exiting the command mode, the normal rate of gameplay is resumed at step 196, thus returning the player to the regular gameplay environment.
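The slowed-but-not-stopped rate of gameplay of steps 192 and 196 may be sketched as a scale factor applied to elapsed time. The class `GameClock` and the default factor of 0.25 are assumptions made for illustration; the specification does not state a particular rate.

```python
class GameClock:
    """Hypothetical game clock whose rate is reduced during command mode."""

    def __init__(self, slow_scale=0.25):
        # The scale must be positive so that the game is slowed, not stopped.
        if not 0.0 < slow_scale <= 1.0:
            raise ValueError("slow_scale must be in (0, 1]")
        self.slow_scale = slow_scale
        self.command_mode = False
        self.game_time = 0.0

    def tick(self, real_dt):
        """Advance game time by a real-time step; return the game-time step."""
        scale = self.slow_scale if self.command_mode else 1.0
        game_dt = real_dt * scale
        self.game_time += game_dt
        return game_dt


clock = GameClock(slow_scale=0.25)
normal_step = clock.tick(1.0)   # normal gameplay
clock.command_mode = True       # command mode entered: rate slowed
slowed_step = clock.tick(1.0)
clock.command_mode = False      # command mode exited: normal rate resumed
```

With these values, one second of real time advances the game by 1.0 second in normal gameplay and by 0.25 second in the command mode.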

With reference to FIG. 5C, a method for distinguishing a command mode of a video game by adjusting a game view is shown, in accordance with an embodiment of the invention. At step 198, a player triggers a command mode of the video game, the command mode enabling the player to provide command/control data to determine the activity of AI characters. When the command mode is entered, the existing display of the video game is substantially maintained, so as to continue presenting the immersive environment of the video game to the player. At step 200, the game view, which is the view that the player sees, is zoomed out. This enables the player to simultaneously view a larger area of the spatial field of the video game. Thus, the player is better able to strategically determine the actions of the AI characters in the context of the spatial field of the video game.

At step 202, the game view “follows” a command reticle which is maneuvered by the player to various locations in the video game. In other words, the command reticle essentially behaves as the player's first-person “character” so that the view of the game is based on the perspective of the command reticle and tracks the movement of the command reticle. At step 204, the player exits the command mode. Upon exiting the command mode, the game view is zoomed in to the normal perspective at step 206, thus restoring the player to the normal gameplay view.
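The zoom-out, reticle-following, and zoom-in behavior of FIG. 5C can be sketched as a small view object. This is a simplified two-dimensional illustration under assumptions: the class `GameView`, the `zoom_out` value of 0.5, the base view dimensions, and the choice to re-center on the player character upon exit are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class GameView:
    center: tuple = (0.0, 0.0)  # world-space point the view is centered on
    zoom: float = 1.0           # 1.0 = normal gameplay perspective

    def enter_command_mode(self, zoom_out=0.5):
        # zoom_out < 1.0 shows a larger area; 0.5 is a hypothetical value.
        self.zoom = zoom_out

    def follow(self, reticle_pos):
        # Keep the command reticle at the center of the view.
        self.center = reticle_pos

    def exit_command_mode(self, player_pos):
        # Restore the normal perspective; re-centering on the player
        # character is an assumption of this sketch.
        self.zoom = 1.0
        self.center = player_pos

    def visible_extent(self, base_width=100.0, base_height=60.0):
        """Width and height of the world area shown at the current zoom."""
        return (base_width / self.zoom, base_height / self.zoom)
```

Calling `follow` once per frame with the reticle's current position keeps the reticle in the central portion of the view while the player maneuvers it.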

With reference to FIG. 6, a system for enabling control of AI characters of a video game is shown, in accordance with an embodiment of the invention. A user 10 provides inputs via a controller 12 to a gaming console/computer 14. The inputs include: a direction control 210, which enables the user 10 to enter directional inputs; a mode control, for toggling on or off a command mode of the video game; an AI group selection, which enables the user 10 to selectively provide control inputs for different groups of AI characters; and an AI character control, which enables the user 10 to directly control AI characters by pausing them so as to place them in a waiting status, or releasing them to continue their activity, which may include command/control data specified during the command mode.
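The AI group selection and the pause/release behavior of the AI character control might be modeled as follows. This is a hedged sketch, not the described system: `AICharacter`, its `hold` and `release` methods, the `pending_path` attribute, and `characters_in_group` are hypothetical names chosen for illustration.

```python
class AICharacter:
    def __init__(self, name, group):
        self.name = name
        self.group = group
        self.waiting = False
        self.pending_path = []  # locations specified during command mode

    def hold(self):
        """Pause the character, placing it in a waiting status."""
        self.waiting = True

    def release(self):
        """Let the character continue, returning any path it should follow."""
        self.waiting = False
        path, self.pending_path = self.pending_path, []
        return path


def characters_in_group(characters, group):
    """AI group selection: pick the characters that inputs apply to."""
    return [c for c in characters if c.group == group]
```

In this sketch a held character accumulates locations while waiting and begins acting on them only when released.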

The inputs are received by an input data module 218, and processed by a command mode processing block 220. The command mode processing block 220 executes the command mode of the video game, the command mode enabling the player 10 to control AI characters. The command mode processing block 220 accesses multiple modules which effect the various aspects of the command mode, by affecting image frame data which is rendered by image frame data renderer 232 to a display 16, thereby forming a display of the game.

A gameplay rate control module 222 slows the rate of gameplay of the game during execution of the command mode. This allows the player more time to strategically plan and determine the activities of the AI characters, without unnaturally stopping the gameplay entirely. A saturation control module 224 adjusts the color saturation of the image frame data which defines a display of the game, so as to highlight points of interest within the game. When the command mode is triggered, the saturation control module 224 first determines the points of interest within the display of the game. Then the color saturation of the display of the game is reduced while maintaining the color saturation of the points of interest. In this manner, the points of interest appear highlighted to the player due to their color saturation. A game view control module 226 effects a zoom-out of the display of the game upon triggering the command mode, such that the player is able to view a larger area of the spatial field of the video game at once. This facilitates a greater scope of awareness to aid the player in strategically setting control of the AI characters.

A node placement module 228 enables a player to specify locations within the spatial field of the video game, which are visually represented by nodes, for AI characters to travel to. To place a node, a player utilizes the direction control 210 to maneuver a command reticle to a desired location, and then selects the location to place a node. In one embodiment, the AI characters may be organized in groups, and therefore the node placement module accommodates placement of nodes for a group of AI characters via the AI group selection 24. A series of specified locations represented by a series of nodes defines a path for the AI character(s) to traverse. This path is represented by lines connecting the nodes which are created by the line generation module 230.
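The relationship between placed nodes, the connecting lines, and the path an AI character traverses can be sketched with an ordered list of locations. The following is a minimal two-dimensional illustration under assumptions: the class `NodePath`, its method names, and the use of straight-line interpolation between nodes are hypothetical and are not taken from the description.

```python
import math


class NodePath:
    """Ordered nodes placed by the player, joined by line segments."""

    def __init__(self, start):
        self.nodes = [start]  # the AI character's initial location

    def place_node(self, location):
        # Nodes are kept in the order in which they were specified.
        self.nodes.append(location)

    def segments(self):
        """Line segments connecting consecutive nodes, for display."""
        return list(zip(self.nodes, self.nodes[1:]))

    def position_after(self, distance):
        """Point reached after travelling `distance` along the path."""
        for (x0, y0), (x1, y1) in self.segments():
            length = math.hypot(x1 - x0, y1 - y0)
            if length > 0 and distance <= length:
                t = distance / length
                return (x0 + (x1 - x0) * t, y0 + (y1 - y0) * t)
            distance -= length
        return self.nodes[-1]  # past the last node: stop there
```

Here `segments` plays the role of the lines drawn between nodes, and `position_after` moves a character through the nodes in the order they were placed.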

A microprocessor 234, memory 236 and an object library 238 are included within the console/computer 14, these being utilized to execute the aforementioned modules of the video game system. It will be understood by those skilled in the art that the illustrated configuration is merely one possible configuration utilizing such components, and that others may be practiced without departing from the scope of the present invention.

FIG. 7 schematically illustrates the overall system architecture of the Sony® Playstation 3® entertainment device, a computer system capable of utilizing dynamic three-dimensional object mapping to create user-defined controllers in accordance with one embodiment of the present invention. A system unit 1000 is provided, with various peripheral devices connectable to the system unit 1000. The system unit 1000 comprises: a Cell processor 1028; a Rambus® dynamic random access memory (XDRAM) unit 1026; a Reality Synthesizer graphics unit 1030 with a dedicated video random access memory (VRAM) unit 1032; and an I/O bridge 1034. The system unit 1000 also comprises a Blu Ray® Disk BD-ROM® optical disk reader 1040 for reading from a disk 1040a and a removable slot-in hard disk drive (HDD) 1036, accessible through the I/O bridge 1034. Optionally the system unit 1000 also comprises a memory card reader 1038 for reading compact flash memory cards, Memory Stick® memory cards and the like, which is similarly accessible through the I/O bridge 1034.

The I/O bridge 1034 also connects to six Universal Serial Bus (USB) 2.0 ports 1024; a gigabit Ethernet port 1022; an IEEE 802.11b/g wireless network (Wi-Fi) port 1020; and a Bluetooth® wireless link port 1018 capable of supporting up to seven Bluetooth connections.

In operation the I/O bridge 1034 handles all wireless, USB and Ethernet data, including data from one or more game controllers 1002. For example when a user is playing a game, the I/O bridge 1034 receives data from the game controller 1002 via a Bluetooth link and directs it to the Cell processor 1028, which updates the current state of the game accordingly.

The wireless, USB and Ethernet ports also provide connectivity for other peripheral devices in addition to game controllers 1002, such as: a remote control 1004; a keyboard 1006; a mouse 1008; a portable entertainment device 1010 such as a Sony Playstation Portable® entertainment device; a video camera such as an EyeToy® video camera 1012; and a microphone headset 1014. Such peripheral devices may therefore in principle be connected to the system unit 1000 wirelessly; for example the portable entertainment device 1010 may communicate via a Wi-Fi ad-hoc connection, whilst the microphone headset 1014 may communicate via a Bluetooth link.

The provision of these interfaces means that the Playstation 3 device is also potentially compatible with other peripheral devices such as digital video recorders (DVRs), set-top boxes, digital cameras, portable media players, Voice over IP telephones, mobile telephones, printers and scanners.

In addition, a legacy memory card reader 1016 may be connected to the system unit via a USB port 1024, enabling the reading of memory cards 1048 of the kind used by the Playstation® or Playstation 2® devices.

In the present embodiment, the game controller 1002 is operable to communicate wirelessly with the system unit 1000 via the Bluetooth link. However, the game controller 1002 can instead be connected to a USB port, thereby also providing power by which to charge the battery of the game controller 1002. In addition to one or more analog joysticks and conventional control buttons, the game controller is sensitive to motion in six degrees of freedom, corresponding to translation and rotation in each axis. Consequently gestures and movements by the user of the game controller may be translated as inputs to a game in addition to or instead of conventional button or joystick commands. Optionally, other wirelessly enabled peripheral devices such as the Playstation Portable device may be used as a controller. In the case of the Playstation Portable device, additional game or control information (for example, control instructions or number of lives) may be provided on the screen of the device. Other alternative or supplementary control devices may also be used, such as a dance mat (not shown), a light gun (not shown), a steering wheel and pedals (not shown) or bespoke controllers, such as a single or several large buttons for a rapid-response quiz game (also not shown).

The remote control 1004 is also operable to communicate wirelessly with the system unit 1000 via a Bluetooth link. The remote control 1004 comprises controls suitable for the operation of the Blu Ray Disk BD-ROM reader 1040 and for the navigation of disk content.

The Blu Ray Disk BD-ROM reader 1040 is operable to read CD-ROMs compatible with the Playstation and Playstation 2 devices, in addition to conventional pre-recorded and recordable CDs, and so-called Super Audio CDs. The reader 1040 is also operable to read DVD-ROMs compatible with the Playstation 2 and Playstation 3 devices, in addition to conventional pre-recorded and recordable DVDs. The reader 1040 is further operable to read BD-ROMs compatible with the Playstation 3 device, as well as conventional pre-recorded and recordable Blu-Ray Disks.

The system unit 1000 is operable to supply audio and video, either generated or decoded by the Playstation 3 device via the Reality Synthesizer graphics unit 1030, through audio and video connectors to a display and sound output device 1042 such as a monitor or television set having a display 1044 and one or more loudspeakers 1046. The audio connectors 1050 may include conventional analogue and digital outputs whilst the video connectors 1052 may variously include component video, S-video, composite video and one or more High Definition Multimedia Interface (HDMI) outputs. Consequently, video output may be in formats such as PAL or NTSC, or in 720p, 1080i or 1080p high definition.

Audio processing (generation, decoding and so on) is performed by the Cell processor 1028. The Playstation 3 device's operating system supports Dolby® 5.1 surround sound, Dolby® Theatre Surround (DTS), and the decoding of 7.1 surround sound from Blu-Ray® disks.

In the present embodiment, the video camera 1012 comprises a single charge coupled device (CCD), an LED indicator, and hardware-based real-time data compression and encoding apparatus so that compressed video data may be transmitted in an appropriate format such as an intra-image based MPEG (motion picture expert group) standard for decoding by the system unit 1000. The camera LED indicator is arranged to illuminate in response to appropriate control data from the system unit 1000, for example to signify adverse lighting conditions. Embodiments of the video camera 1012 may variously connect to the system unit 1000 via a USB, Bluetooth or Wi-Fi communication port. Embodiments of the video camera may include one or more associated microphones that are also capable of transmitting audio data. In embodiments of the video camera, the CCD may have a resolution suitable for high-definition video capture. In use, images captured by the video camera may for example be incorporated within a game or interpreted as game control inputs.

In general, in order for successful data communication to occur with a peripheral device such as a video camera or remote control via one of the communication ports of the system unit 1000, an appropriate piece of software such as a device driver should be provided. Device driver technology is well-known and will not be described in detail here, except to say that the skilled man will be aware that a device driver or similar software interface may be required in the present embodiment described.

Embodiments of the present invention also contemplate distributed image processing configurations. For example, the invention is not limited to the display image processing taking place in one or even two locations, such as in the CPU or in the CPU and one other element. For example, the input image processing can just as readily take place in an associated CPU, processor or device that can perform processing; essentially all of image processing can be distributed throughout the interconnected system. Thus, the present invention is not limited to any specific image processing hardware circuitry and/or software. The embodiments described herein are also not limited to any specific combination of general hardware circuitry and/or software, nor to any particular source for the instructions executed by processing components.

With the above embodiments in mind, it should be understood that the invention may employ various computer-implemented operations involving data stored in computer systems. These operations include operations requiring physical manipulation of physical quantities. Further, the manipulations performed are often referred to in terms, such as producing, identifying, determining, or comparing.

The above described invention may be practiced with other computer system configurations including hand-held devices, microprocessor systems, microprocessor-based or programmable consumer electronics, minicomputers, mainframe computers and the like. The invention may also be practiced in distributed computing environments where tasks are performed by remote processing devices that are linked through a communications network.

The invention can also be embodied as computer readable code on a computer readable medium. The computer readable medium is any data storage device that can store data which can be thereafter read by a computer system. Examples of the computer readable medium include hard drives, network attached storage (NAS), read-only memory, random-access memory, CD-ROMs, CD-Rs, CD-RWs, magnetic tapes, and other optical and non-optical data storage devices. The computer readable medium can also be distributed over a network coupled computer system so that the computer readable code is stored and executed in a distributed fashion.

Although the foregoing invention has been described in some detail for purposes of clarity of understanding, it will be apparent that certain changes and modifications may be practiced within the scope of the appended claims. Accordingly, the present embodiments are to be considered as illustrative and not restrictive, and the invention is not to be limited to the details given herein, but may be modified within the scope and equivalents of the appended claims.

Claims

- In a computer-implemented game, a method for controlling an artificial-intelligence (AI) character, the method comprising: entering a session of a command mode, the command mode enabling control of the AI character, the entering a command mode occurs while maintaining a view of a virtual space of the game; during the session of the command mode, receiving input sequentially specifying a plurality of user-defined locations within the virtual space of the game, an initial location and the plurality of user-defined locations defining a path for the AI character to traverse in the virtual space; moving the AI character along the path from the initial location to the plurality of user-defined locations in the order they were specified.

- The method of claim 1, wherein the specifying a plurality of locations further comprises: maneuvering a reticle to each of the locations; selecting each of the plurality of locations when the reticle is situated at each of the plurality of locations.

- The method of claim 2, wherein the specifying a plurality of locations further comprises: displaying a node in the virtual space of the game at each of the plurality of locations.

- The method of claim 3, wherein the specifying a plurality of locations further comprises: displaying a series of lines connecting the nodes in the virtual space of the game.

- The method of claim 2, wherein the view of the virtual space of the game is shifted in accordance with the maneuvering a reticle, so as to maintain a display of the reticle in a central portion of the view.

- The method of claim 1, wherein the entering a command mode further comprises: slowing a rate of gameplay of the game, wherein slowing the rate of gameplay is defined by a slowing of a gameplay clock so that activity of the game proceeds at a slower rate than a normal rate of activity.

- The method of claim 1, wherein the entering a command mode further comprises: determining points of interest within the virtual space of the game; reducing color saturation of the view of the virtual space of the game while maintaining color saturation of the points of interest.

- The method of claim 7, wherein the points of interest are selected from the group consisting of: characters, gun-fire, and explosions.

- The method of claim 1, wherein the entering a command mode further comprises: enlarging a field of view of the view of the virtual space of the game.

- The method of claim 1, further comprising specifying an action to be performed by the AI character at one or more of the plurality of locations.

- The method of claim 1, wherein the moving the AI character begins immediately upon specification of a first one of the plurality of locations.

- The method of claim 1, further comprising: prior to the specifying of the plurality of locations, halting any existing movement of the AI character; after the plurality of locations have been specified, then releasing the AI character to follow the path.

- A computer system for executing a game, the game including an artificial-intelligence (AI) character, the system comprising: a controller for receiving and relaying user input to said game; a display for displaying image frame data of the game; a command mode processor for executing a command mode of the game, the executing a command mode enabling control of the AI character and occurring while maintaining a view of a virtual space of the game, the command mode processor comprising a node placement module for enabling a user to specify a plurality of user-defined locations within the virtual space of the game, an initial location and the plurality of user-defined locations defining a path for the AI character to traverse in the virtual space.

- The computer system of claim 13, wherein the specification of the plurality of locations is facilitated by enabling the user to maneuver a command reticle to each of the plurality of the locations and selecting each of the plurality of locations when the reticle is situated at each of the plurality of locations.

- The computer system of claim 13, wherein the specification of the plurality of locations includes displaying a node in the existing display of the game at each of the plurality of locations.

- The method of claim 15, wherein the specification of the plurality of locations further includes displaying a series of lines connecting the nodes in the virtual space of the game.

- The computer system of claim 14, wherein the view of the virtual space of the game is shifted in accordance with the maneuvering a reticle, so as to maintain a display of the reticle in a central portion of the view.

- The computer system of claim 13, wherein the command mode processor further comprises a gameplay rate control module for slowing a rate of gameplay of the game during execution of the command mode, wherein slowing the rate of gameplay is defined by a slowing of a gameplay clock so that activity of the game proceeds at a slower rate than a normal rate of activity.

- The computer system of claim 13, wherein the command mode processor further comprises a saturation display control, the saturation display control for determining points of interest within the virtual space of the game and reducing color saturation of the view of the virtual space of the game while maintaining color saturation of the points of interest.

- The method of claim 19, wherein the points of interest are selected from the group consisting of: characters, gun-fire, and explosions.

- The method of claim 13, wherein the executing a command mode includes enlarging a field of view of the view of the virtual space of the game.

- The method of claim 13, wherein the node placement module further enables a user to specify an action to be performed by the AI character at one or more of the plurality of locations.

- The method of claim 1, wherein moving the AI character occurs after exiting the session of the command mode.
