U.S. Pat. No. 8,214,306

COMPUTER GAME WITH INTUITIVE LEARNING CAPABILITY

AssigneeIntuition Intelligence, Inc.

Issue DateDecember 5, 2008

Illustrative Figure

Abstract

A computer game and a method of providing learning capability thereto are provided. The computer game has an objective of matching a skill level of the computer game with a skill level of a game player. A move performed by the game player is identified, one of a plurality of game moves is selected based on a game move probability distribution comprising a plurality of probability values corresponding to the plurality of game moves, an outcome of the selected game move relative to the identified player move is determined, the game move probability distribution is updated based on the outcome, and one or more of the game move selection, the outcome determination, and the game move probability distribution update is modified based on the objective.

Description

DETAILED DESCRIPTION OF THE PREFERRED EMBODIMENTS Generalized Single-User Program (Single Processor Action-Single User Action) Referring toFIG. 1, a single-user learning program100developed in accordance with the present inventions can be generally implemented to provide intuitive learning capability to any variety of processing devices, e.g., computers, microprocessors, microcontrollers, embedded systems, network processors, and data processing systems. In this embodiment, a single user105interacts with the program100by receiving a processor action αifrom a processor action set α within the program100, selecting a user action λxfrom a user action set λ based on the received processor action αi, and transmitting the selected user action λxto the program100. It should be noted that in alternative embodiments, the user105need not receive the processor action αito select a user action λx, the selected user action λxneed not be based on the received processor action αi, and/or the processor action αimay be selected in response to the selected user action λx. The significance is that a processor action αiand a user action λxare selected. The program100is capable of learning based on the measured performance of the selected processor action αirelative to a selected user action λx, which, for the purposes of this specification, can be measured as an outcome value β. It should be noted that although an outcome value β is described as being mathematically determined or generated for purposes of understanding the operation of the equations set forth herein, an outcome value β need not actually be determined or generated for practical purposes. Rather, it is only important that the outcome of the processor action αirelative to the user action λxbe known. In alternative embodiments, the program100is capable of learning based on the measured performance of a selected processor action αiand/or selected user action λxrelative to other criteria. As will be described in further detail below, program100directs ...

DETAILED DESCRIPTION OF THE PREFERRED EMBODIMENTS

Generalized Single-User Program (Single Processor Action-Single User Action)

Referring toFIG. 1, a single-user learning program100developed in accordance with the present inventions can be generally implemented to provide intuitive learning capability to any variety of processing devices, e.g., computers, microprocessors, microcontrollers, embedded systems, network processors, and data processing systems. In this embodiment, a single user105interacts with the program100by receiving a processor action αifrom a processor action set α within the program100, selecting a user action λxfrom a user action set λ based on the received processor action αi, and transmitting the selected user action λxto the program100. It should be noted that in alternative embodiments, the user105need not receive the processor action αito select a user action λx, the selected user action λxneed not be based on the received processor action αi, and/or the processor action αimay be selected in response to the selected user action λx. The significance is that a processor action αiand a user action λxare selected.

The program100is capable of learning based on the measured performance of the selected processor action αirelative to a selected user action λx, which, for the purposes of this specification, can be measured as an outcome value β. It should be noted that although an outcome value β is described as being mathematically determined or generated for purposes of understanding the operation of the equations set forth herein, an outcome value β need not actually be determined or generated for practical purposes. Rather, it is only important that the outcome of the processor action αirelative to the user action λxbe known. In alternative embodiments, the program100is capable of learning based on the measured performance of a selected processor action αiand/or selected user action λxrelative to other criteria. As will be described in further detail below, program100directs its learning capability by dynamically modifying the model that it uses to learn based on a performance index φ to achieve one or more objectives.

To this end, the program100generally includes a probabilistic learning module110and an intuition module115. The probabilistic learning module110includes a probability update module120, an action selection module125, and an outcome evaluation module130. Briefly, the probability update module120uses learning automata theory as its learning mechanism with the probabilistic learning module110configured to generate and update an action probability distribution p based on the outcome value β. The action selection module125is configured to pseudo-randomly select the processor action αibased on the probability values contained within the action probability distribution p internally generated and updated in the probability update module120. The outcome evaluation module130is configured to determine and generate the outcome value β based on the relationship between the selected processor action αiand user action λx. The intuition module115modifies the probabilistic learning module110(e.g., selecting or modifying parameters of algorithms used in learning module110) based on one or more generated performance indexes φ to achieve one or more objectives. A performance index φ can be generated directly from the outcome value β or from something dependent on the outcome value β, e.g., the action probability distribution p, in which case the performance index φ may be a function of the action probability distribution p, or the action probability distribution p may be used as the performance index φ. A performance index φ can be cumulative (e.g., it can be tracked and updated over a series of outcome values β or instantaneous (e.g., a new performance index φ can be generated for each outcome value β).

Modification of the probabilistic learning module110can be accomplished by modifying the functionalities of (1) the probability update module120(e.g., by selecting from a plurality of algorithms used by the probability update module120, modifying one or more parameters within an algorithm used by the probability update module120, transforming, adding and subtracting probability values to and from, or otherwise modifying the action probability distribution p); (2) the action selection module125(e.g., limiting or expanding selection of the action α corresponding to a subset of probability values contained within the action probability distribution p); and/or (3) the outcome evaluation module130(e.g., modifying the nature of the outcome value β or otherwise the algorithms used to determine the outcome value β).

Having now briefly discussed the components of the program100, we will now describe the functionality of the program100in more detail. Beginning with the probability update module120, the action probability distribution p that it generates can be represented by the following equation:

p(k)=[p1(k),p2(k),p3(k) . . .pn(k)], [1]where piis the action probability value assigned to a specific processor action αi; n is the number of processor actions αiwithin the processor action set α, and k is the incremental time at which the action probability distribution was updated.

Preferably, the action probability distribution p at every time k should satisfy the following requirement:

∑i=1npi(k)=1,0≤pi(k)≤1.[2]

Thus, the internal sum of the action probability distribution p, i.e., the action probability values pifor all processor actions αiwithin the processor action set α is always equal “1,” as dictated by the definition of probability. It should be noted that the number n of processor actions αineed not be fixed, but can be dynamically increased or decreased during operation of the program100.

The probability update module120uses a stochastic learning automaton, which is an automaton that operates in a random environment and updates its action probabilities in accordance with inputs received from the environment so as to improve its performance in some specified sense. A learning automaton can be characterized in that any given state of the action probability distribution p determines the state of the next action probability distribution p. For example, the probability update module120operates on the action probability distribution p(k) to determine the next action probability distribution p(k+1), i.e., the next action probability distribution p(k+1) is a function of the current action probability distribution p(k). Advantageously, updating of the action probability distribution p using a learning automaton is based on a frequency of the processor actions αiand/or user actions λx, as well as the time ordering of these actions. This can be contrasted with purely operating on a frequency of processor actions αior user actions λx, and updating the action probability distribution p(k) based thereon. Although the present inventions, in their broadest aspects, should not be so limited, it has been found that the use of a learning automaton provides for a more dynamic, accurate, and flexible means of teaching the probabilistic learning module110.

In this scenario, the probability update module120uses a single learning automaton with a single input to a single-teacher environment (with the user105as the teacher), and thus, a single-input, single-output (SISO) model is assumed.

To this end, the probability update module120is configured to update the action probability distribution p based on the law of reinforcement, the basic idea of which is to reward a favorable action and/or to penalize an unfavorable action. A specific processor action αiis rewarded by increasing the corresponding current probability value pi(k) and decreasing all other current probability values pj(k), while a specific processor action αiis penalized by decreasing the corresponding current probability value pi(k) and increasing all other current probability values pj(k). Whether the selected processor action αiis rewarded or punished will be based on the outcome value β generated by the outcome evaluation module130. For the purposes of this specification, an action probability distribution p is updated by changing the probability values piwithin the action probability distribution p, and does not contemplate adding or subtracting probability values pi.

To this end, the probability update module120uses a learning methodology to update the action probability distribution p, which can mathematically be defined as:

p(k+1)=T[p(k),αi(k),β(k)], [3]where p(k+1) is the updated action probability distribution, T is the reinforcement scheme, p(k) is the current action probability distribution, αi(k) is the previous processor action, β(k) is latest outcome value, and k is the incremental time at which the action probability distribution was updated.

Alternatively, instead of using the immediately previous processor action αi(k), any set of previous processor action, e.g., α(k−1), α(k−2), αk−3), etc., can be used for lag learning, and/or a set of future processor action, e.g., α(k+1), α(k+2), α(k+3), etc., can be used for lead learning. In the case of lead learning, a future processor action is selected and used to determine the updated action probability distribution p(k+1).

The types of learning methodologies that can be utilized by the probability update module120are numerous, and depend on the particular application. For example, the nature of the outcome value β can be divided into three types: (1) P-type, wherein the outcome value β can be equal to “1” indicating success of the processor action αi, and “0” indicating failure of the processor action αi; (2) Q-type, wherein the outcome value β can be one of a finite number of values between “0” and “1” indicating a relative success or failure of the processor action αi; or (3) S-Type, wherein the outcome value β can be a continuous value in the interval [0,1] also indicating a relative success or failure of the processor action αi.

The outcome value β, can indicate other types of events besides successful and unsuccessful events. The time dependence of the reward and penalty probabilities of the actions α can also vary. For example, they can be stationary if the probability of success for a processor action αidoes not depend on the index k, and non-stationary if the probability of success for the processor action αidepends on the index k. Additionally, the equations used to update the action probability distribution p can be linear or non-linear. Also, a processor action αican be rewarded only, penalized only, or a combination thereof. The convergence of the learning methodology can be of any type, including ergodic, absolutely expedient, ε-optimal, or optimal. The learning methodology can also be a discretized, estimator, pursuit, hierarchical, pruning, growing or any combination thereof.

Of special importance is the estimator learning methodology, which can advantageously make use of estimator tables and algorithms should it be desired to reduce the processing otherwise requiring for updating the action probability distribution for every processor action αithat is received. For example, an estimator table may keep track of the number of successes and failures for each processor action αireceived, and then the action probability distribution p can then be periodically updated based on the estimator table by, e.g., performing transformations on the estimator table. Estimator tables are especially useful when multiple users are involved, as will be described with respect to the multi-user embodiments described later.

In the preferred embodiment, a reward function gjand a penalization function hjis used to accordingly update the current action probability distribution p(k). For example, a general updating scheme applicable to P-type, Q-type and S-type methodologies can be given by the following SISO equations:

pj(k+1)=pj(k)-β(k)gj(p(k))+(1-β(k))hj(p(k)),ifα(k)≠αi[4]pi(k+1)=pi(k)+β(k)∑j=1j≠ingj(p(k))-(1-β(k))∑j=1j≠inhj(p(k)),ifα(k)=αi[5]where i is an index for a processor action αi, selected to be rewarded or penalized, and j is an index for the remaining processor actions αi.

Assuming a P-type methodology, equations [4] and [5] can be broken down into the following equations:

pi(k+1)=pi(k)+∑j=1j≠ingj(p(k));and[6]pj(k+1)=pj(k)-gj(p(k)),whenβ(k)=1andαiisselected[7]pi(k+1)=pi(k)-∑j=1j≠inhj(p(k));and[8]

pj(k+1)=pj(k)+hj(p(k)), when β(k)=0 and αiis selected [9]

Preferably, the reward function gjand penalty function hjare continuous and nonnegative for purposes of mathematical convenience and to maintain the reward and penalty nature of the updating scheme. Also, the reward function gjand penalty function hjare preferably constrained by the following equations to ensure that all of the components of p(k+1) remain in the (0,1) interval when p(k) is in the (0,1) interval:

0<gi(p)<pj;0<∑j≠in(pj+hj(p))<1

for all pjε(0,1) and all j=1, 2, . . . n.

The updating scheme can be of the reward-penalty type, in which case, both gjand hjare non-zero. Thus, in the case of a P-type methodology, the first two updating equations [6] and [7] will be used to reward the processor action αi, e.g., when successful, and the last two updating equations [8] and [9] will be used to penalize processor action αi, e.g., when unsuccessful. Alternatively, the updating scheme is of the reward-inaction type, in which case, gjis nonzero and hjis zero. Thus, the first two general updating equations [6] and [7] will be used to reward the processor action αi, e.g., when successful, whereas the last two general updating equations [8] and [9] will not be used to penalize processor action αi, e.g., when unsuccessful. More alternatively, the updating scheme is of the penalty-inaction type, in which case, gjis zero and hjis nonzero. Thus, the first two general updating equations [6] and [7] will not be used to reward the processor action αi, e.g., when successful, whereas the last two general updating equations [8] and [9] will be used to penalize processor action αi, e.g., when unsuccessful. The updating scheme can even be of the reward-reward type (in which case, the processor action αiis rewarded more, e.g., when it is more successful than when it is not) or penalty-penalty type (in which case, the processor action αiis penalized more, e.g., when it is less successful than when it is).

It should be noted that with respect to the probability distribution p as a whole, any typical updating scheme will have both a reward aspect and a penalty aspect to the extent that a particular processor action αithat is rewarded will penalize the remaining processor actions αi, and any particular processor action αithat penalized will reward the remaining processor actions αi. This is because any increase in a probability value piwill relatively decrease the remaining probability values pi, and any decrease in a probability value piwill relatively increase the remaining probability values pi. For the purposes of this specification, however, a particular processor action αiis only rewarded if its corresponding probability value piis increased in response to an outcome value β associated with it, and a processor action αiis only penalized if its corresponding probability value piis decreased in response to an outcome value β associated with it.

The nature of the updating scheme is also based on the functions gjand hjthemselves. For example, the functions gjand hjcan be linear, in which case, e.g., they can be characterized by the following equations:

gj(p(k))=apj(k),0<a<1;and[10]hj(p(k))=bn-1-bpj(k),0<b<1[11]

where α is the reward parameter, and b is the penalty parameter.

The functions gjand hjcan alternatively be absolutely expedient, in which case, e.g., they can be characterized by the following equations:

g1(p)p1=g2(p)p2=…=gn(p)pn;[12]h1(p)p1=h2(p)p2=…=hn(p)pn[13]

The functions gjand hjcan alternatively be non-linear, in which case, e.g., they can be characterized by the following equations:

gj(p(k))=pj(k)-F(pj(k));[14]hj(p(k))=pi(k)-F(pi(k))n-1[15]

and F(x)=axm, m=2, 3, . . . .

It should be noted that equations [4] and [5] are not the only general equations that can be used to update the current action probability distribution p(k) using a reward function gjand a penalization function hj. For example, another general updating scheme applicable to P-type, Q-type and S-type methodologies can be given by the following SISO equations:

pj(k+1)=pj(k)−β(k)cjgi(p(k))+(1−β(k)djhi(p(k)), if α(k)≠αi[16]

pi(k+1)=pi(k)+β(k)gi(p(k))−(1−β(k))hi(p(k)), if α(k)=αi[17]where c and d are constant or variable distribution multipliers that adhere to the following constraints:

∑j=1i≠jncjgi(p(k))=gi(p(k));∑j=1i≠jndjhi(p(k))=hi(p(k))

In other words, the multipliers c and d are used to determine what proportions of the amount that is added to or subtracted from the probability value piis redistributed to the remaining probability values pj.

Assuming a P-type methodology, equations [16] and [17] can be broken down into the following equations:

pi(k+1)=pi(k)+gi(p(k)); and [18]

pi(k+1)=pj(k)−cjgi(p(k)), when β(k)=1 and αiis selected [19]

pi(k+1)=pi(k)−hi(p(k)); and [20]

pj(k+1)=pj(k)+djhi(p(k)), when β(k)=0and αiis selected [21]

It can be appreciated that equations [4]-[5] and [16]-[17] are fundamentally similar to the extent that the amount that is added to or subtracted from the probability value piis subtracted from or added to the remaining probability values pj. The fundamental difference is that, in equations [4]-[5], the amount that is added to or subtracted from the probability value piis based on the amounts that are subtracted from or added to the remaining probability values pj(i.e., the amounts added to or subtracted from the remaining probability values pjare calculated first), whereas in equations [16]-[17], the amounts that are added to or subtracted from the remaining probability values pjare based on the amount that is subtracted from or added to the probability value pi(i.e., the amount added to or subtracted from the probability value piis calculated first). It should also be noted that equations [4]-[5] and [16]-[17] can be combined to create new learning methodologies. For example, the reward portions of equations [4]-[5] can be used when an action αiis to be rewarded, and the penalty portions of equations [16]-[17] can be used when an action αiis to be penalized.

Previously, the reward and penalty functions gjand hiand multipliers cjand djhave been described as being one-dimensional with respect to the current action αithat is being rewarded or penalized. That is, the reward and penalty functions gjand hjand multipliers cjand djare the same given any action αi. It should be noted, however, that multi-dimensional reward and penalty functions gijand hijand multipliers cijand dijcan be used.

In this case, the single dimensional reward and penalty functions gjand hjof equations [6]-[9] can be replaced with the two-dimensional reward and penalty functions gijand hij, resulting in the following equations:

pi(k+1)=pi(k)+∑j=1j≠ingij(p(k));and[6a]pj(k+1)=pj(k)-gij(p(k)),whenβ(k)=1andαiisselected[7a]pi(k+1)=pi(k)-∑j=1j≠inhij(p(k));and[8a]pj(k+1)=pj(k)+hij(p(k)),whenβ(k)=0andαiisselected[9a]

The single dimensional multipliers cjand djof equations [19] and [21] can be replaced with the two-dimensional multipliers cijand dij, resulting in the following equations:

pj(k+1)=pj(k)−cijgi(p(k)), when β(k)=1 and αiis selected [19a]

pj(k+1)=pj(k)+dijhi(p(k)), when β(k)=0and αiis selected [21a]

Thus, it can be appreciated, that equations [19a] and [21a] can be expanded into many different learning methodologies based on the particular action αithat has been selected.

Further details on learning methodologies are disclosed in “Learning Automata An Introduction,” Chapter 4, Narendra, Kumpati, Prentice Hall (1989) and “Learning Algorithms-Theory and Applications in Signal Processing, Control and Communications,” Chapter 2, Mars, Phil, CRC Press (1996), which are both expressly incorporated herein by reference.

The intuition module115directs the learning of the program100towards one or more objectives by dynamically modifying the probabilistic learning module110. The intuition module115specifically accomplishes this by operating on one or more of the probability update module120, action selection module125, or outcome evaluation module130based on the performance index φ, which, as briefly stated, is a measure of how well the program100is performing in relation to the one or more objective to be achieved. The intuition module115may, e.g., take the form of any combination of a variety of devices, including an (1) evaluator, data miner, analyzer, feedback device, stabilizer; (2) decision maker; (3) expert or rule-based system; (4) artificial intelligence, fuzzy logic, neural network, or genetic methodology; (5) directed learning device; (6) statistical device, estimator, predictor, regressor, or optimizer. These devices may be deterministic, pseudo-deterministic, or probabilistic.

It is worth noting that absent modification by the intuition module115, the probabilistic learning module110would attempt to determine a single best action or a group of best actions for a given predetermined environment as per the objectives of basic learning automata theory. That is, if there is a unique action that is optimal, the unmodified probabilistic learning module110will substantially converge to it. If there is a set of actions that are optimal, the unmodified probabilistic learning module110will substantially converge to one of them, or oscillate (by pure happenstance) between them. In the case of a changing environment, however, the performance of an unmodified learning module110would ultimately diverge from the objectives to be achieved.FIGS. 2 and 3are illustrative of this point. Referring specifically toFIG. 2, a graph illustrating the action probability values piof three different actions α1, α2, and α3, as generated by a prior art learning automaton over time t, is shown. As can be seen, the action probability values pifor the three actions are equal at the beginning of the process, and meander about on the probability plane p, until they eventually converge to unity for a single action, in this case, α1. Thus, the prior art learning automaton assumes that there is always a single best action over time t and works to converge the selection to this best action. Referring specifically toFIG. 3, a graph illustrating the action probability values piof three different actions α1, α2, and α3, as generated by the program100over time t, is shown. Like with the prior art learning automaton, action probability values pifor the three action are equal at t=0. Unlike with the prior art learning automaton, however, the action probability values pifor the three actions meander about on the probability plane p without ever converging to a single action. Thus, the program100does not assume that there is a single best action over time t, but rather assumes that there is a dynamic best action that changes over time t. Because the action probability value for any best action will not be unity, selection of the best action at any given time t is not ensured, but will merely tend to occur, as dictated by its corresponding probability value. Thus, the program100ensures that the objective(s) to be met are achieved over time t.

Having now described the interrelationships between the components of the program100and the user105, we now generally describe the methodology of the program100. Referring toFIG. 4, the action probability distribution p is initialized (step150). Specifically, the probability update module120initially assigns equal probability values to all processor actions αi, in which case, the initial action probability distribution p(k) can be represented by

p1(0)=p2(0)=p2(0)=…pn(0)=1n.

Thus, each of the processor actions αihas an equal chance of being selected by the action selection module125. Alternatively, the probability update module120initially assigns unequal probability values to at least some of the processor actions αi, e.g., if the programmer desires to direct the learning of the program100towards one or more objectives quicker. For example, if the program100is a computer game and the objective is to match a novice game player's skill level, the easier processor action αi, and in this case game moves, may be assigned higher probability values, which as will be discussed below, will then have a higher probability of being selected. In contrast, if the objective is to match an expert game player's skill level, the more difficult game moves may be assigned higher probability values.

Once the action probability distribution p is initialized at step150, the action selection module125determines if a user action λxhas been selected from the user action set λ (step155). If not, the program100does not select a processor action αifrom the processor action set α (step160), or alternatively selects a processor action αi, e.g., randomly, notwithstanding that a user action λxhas not been selected (step165), and then returns to step155where it again determines if a user action λxhas been selected. If a user action λxhas been selected at step155, the action selection module125determines the nature of the selected user action λx, i.e., whether the selected user action λxis of the type that should be countered with a processor action αiand/or whether the performance index φ can be based, and thus whether the action probability distribution p should be updated. For example, again, if the program100is a game program, e.g., a shooting game, a selected user action λxthat merely represents a move may not be a sufficient measure of the performance index φ, but should be countered with a processor action αi, while a selected user action λxthat represents a shot may be a sufficient measure of the performance index φ.

Specifically, the action selection module125determines whether the selected user action λxis of the type that should be countered with a processor action αi(step170). If so, the action selection module125selects a processor action αifrom the processor action set α based on the action probability distribution p (step175). After the performance of step175or if the action selection module125determines that the selected user action λxis not of the type that should be countered with a processor action αi, the action selection module125determines if the selected user action λxis of the type that the performance index φ is based on (step180).

If so, the outcome evaluation module130quantifies the performance of the previously selected processor action αi(or a more previous selected processor action αiin the case of lag learning or a future selected processor action αiin the case of lead learning) relative to the currently selected user action λxby generating an outcome value β (step185). The intuition module115then updates the performance index φ based on the outcome value β, unless the performance index φ is an instantaneous performance index that is represented by the outcome value β itself (step190). The intuition module115then modifies the probabilistic learning module110by modifying the functionalities of the probability update module120, action selection module125, or outcome evaluation module130(step195). It should be noted that step190can be performed before the outcome value β is generated by the outcome evaluation module130at step180, e.g., if the intuition module115modifies the probabilistic learning module110by modifying the functionality of the outcome evaluation module130. The probability update module120then, using any of the updating techniques described herein, updates the action probability distribution p based on the generated outcome value β (step198).

The program100then returns to step155to determine again whether a user action λxhas been selected from the user action set λ. It should be noted that the order of the steps described inFIG. 4may vary depending on the specific application of the program100.

Single-Player Game Program (Single Game Move-Single Player Move)

Having now generally described the components and functionality of the learning program100, we now describe one of its various applications. Referring toFIG. 5, a single-player game program300(shown inFIG. 8) developed in accordance with the present inventions is described in the context of a duck hunting game200. The game200comprises a computer system205, which, e.g., takes the form of a personal desktop or laptop computer. The computer system205includes a computer screen210for displaying the visual elements of the game200to a player215, and specifically, a computer animated duck220and a gun225, which is represented by a mouse cursor. For the purposes of this specification, the duck220and gun225can be broadly considered to be computer and user-manipulated objects, respectively. The computer system205further comprises a computer console250, which includes memory230for storing the game program300, and a CPU235for executing the game program300. The computer system205further includes a computer mouse240with a mouse button245, which can be manipulated by the player215to control the operation of the gun225, as will be described immediately below. It should be noted that although the game200has been illustrated as being embodied in a standard computer, it can very well be implemented in other types of hardware environments, such as a video game console that receives video game cartridges and connects to a television screen, or a video game machine of the type typically found in video arcades.

Referring specifically to the computer screen210ofFIGS. 6 and 7, the rules and objective of the duck hunting game200will now be described. The objective of the player215is to shoot the duck220by moving the gun225towards the duck220, intersecting the duck220with the gun225, and then firing the gun225(FIG. 6). The player215accomplishes this by laterally moving the mouse240, which correspondingly moves the gun225in the direction of the mouse movement, and clicking the mouse button245, which fires the gun225. The objective of the duck220, on the other hand, is to avoid from being shot by the gun225. To this end, the duck220is surrounded by a gun detection region270, the breach of which by the gun225prompts the duck220to select and make one of seventeen moves255(eight outer moves255a, eight inner moves255b, and a non-move) after a preprogrammed delay (move3inFIG. 7). The length of the delay is selected, such that it is not so long or short as to make it too easy or too difficult to shoot the duck220. In general, the outer moves255amore easily evade the gun225than the inner moves255b, thus, making it more difficult for the player215to shot the duck220.

For purposes of this specification, the movement and/or shooting of the gun225can broadly be considered to be a player move, and the discrete moves of the duck220can broadly be considered to be computer or game moves, respectively. Optionally or alternatively, different delays for a single move can also be considered to be game moves. For example, a delay can have a low and high value, a set of discrete values, or a range of continuous values between two limits. The game200maintains respective scores260and265for the player215and duck220. To this end, if the player215shoots the duck220by clicking the mouse button245while the gun225coincides with the duck220, the player score260is increased. In contrast, if the player215fails to shoot the duck220by clicking the mouse button245while the gun225does not coincide with the duck220, the duck score265is increased. The increase in the score can be fixed, one of a multitude of discrete values, or a value within a continuous range of values.

As will be described in further detail below, the game200increases its skill level by learning the player's215strategy and selecting the duck's220moves based thereon, such that it becomes more difficult to shoot the duck220as the player215becomes more skillful. The game200seeks to sustain the player's215interest by challenging the player215. To this end, the game200continuously and dynamically matches its skill level with that of the player215by selecting the duck's220moves based on objective criteria, such as, e.g., the difference between the respective player and game scores260and265. In other words, the game200uses this score difference as a performance index φ in measuring its performance in relation to its objective of matching its skill level with that of the game player. In the regard, it can be said that the performance index φ is cumulative. Alternatively, the performance index φ can be a function of the game move probability distribution p.

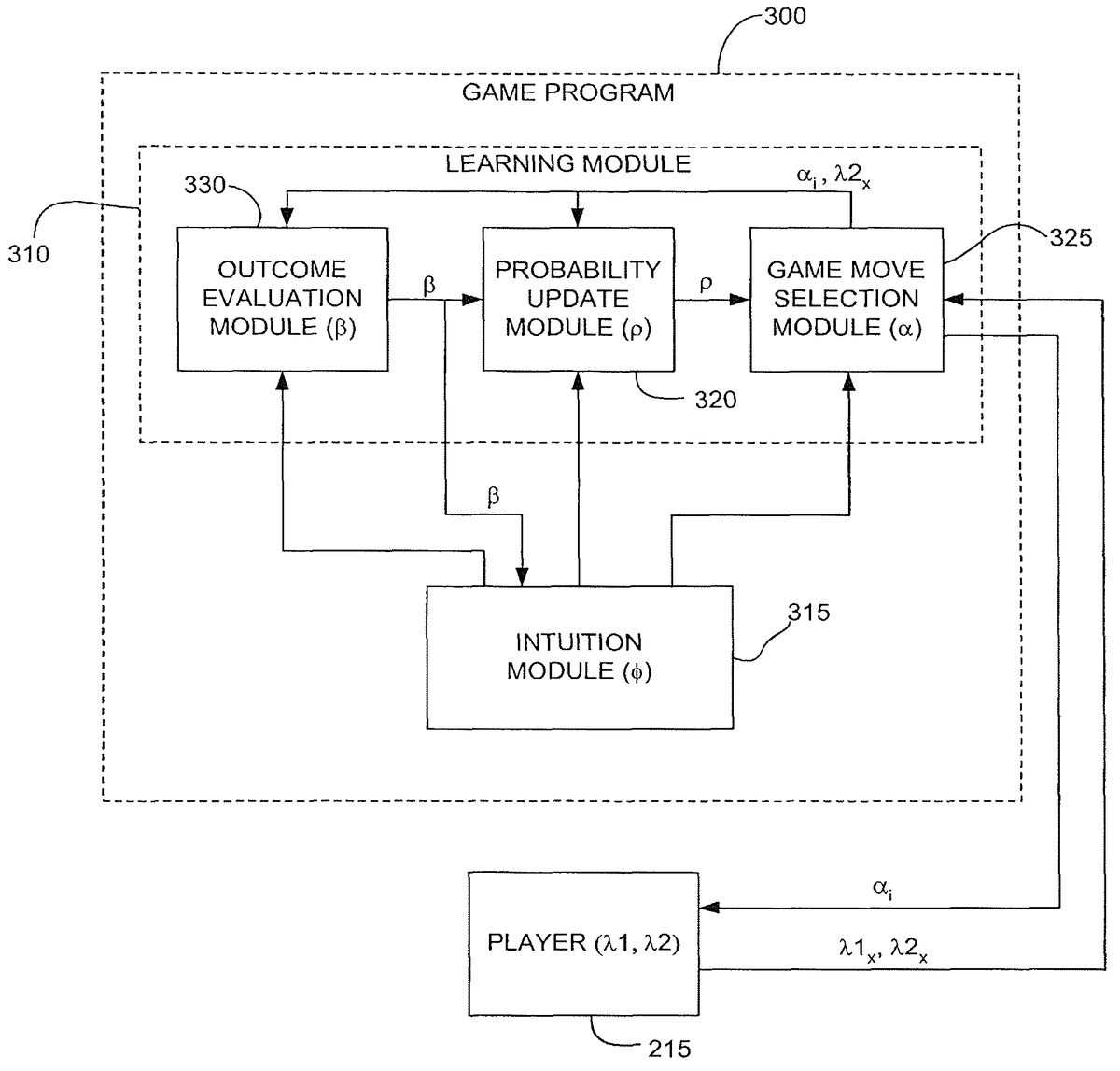

Referring further toFIG. 8, the game program300generally includes a probabilistic learning module310and an intuition module315, which are specifically tailored for the game200. The probabilistic learning module310comprises a probability update module320, a game move selection module325, and an outcome evaluation module330. Specifically, the probability update module320is mainly responsible for learning the player's215strategy and formulating a counterstrategy based thereon, with the outcome evaluation module330being responsible for evaluating moves performed by the game200relative to moves performed by the player215. The game move selection module325is mainly responsible for using the updated counterstrategy to move the duck220in response to moves by the gun225. The intuition module315is responsible for directing the learning of the game program300towards the objective, and specifically, dynamically and continuously matching the skill level of the game200with that of the player215. In this case, the intuition module315operates on the game move selection module325, and specifically selects the methodology that the game move selection module325will use to select a game move αifrom the game move set α as will be discussed in further detail below. In the preferred embodiment, the intuition module315can be considered deterministic in that it is purely rule-based. Alternatively, however, the intuition module315can take on a probabilistic nature, and can thus be quasi-deterministic or entirely probabilistic.

To this end, the game move selection module325is configured to receive a player move λ1xfrom the player215, which takes the form of a mouse240position, i.e., the position of the gun225, at any given time. In this embodiment, the player move λ1xcan be selected from a virtually infinite player move set λ1, i.e., the number of player moves λ1xare only limited by the resolution of the mouse240. Based on this, the game move selection module325detects whether the gun225is within the detection region270, and if so, selects a game move αifrom the game move set α, and specifically, one of the seventeen moves255that the duck220can make. The game move αimanifests itself to the player215as a visible duck movement.

The game move selection module325selects the game move αibased on the updated game strategy. To this end, the game move selection module325is further configured to receive the game move probability distribution p from the probability update module320, and pseudo-randomly selecting the game move αibased thereon. The game move probability distribution p is similar to equation [1] and can be represented by the following equation:

p(k)=[p1(k),p2(k),p3(k) . . .pn(k)], [1-1]where piis the game move probability value assigned to a specific game move αi; n is the number of game moves αiwithin the game move set α, and k is the incremental time at which the game move probability distribution was updated.

It is noted that pseudo-random selection of the game move αiallows selection and testing of any one of the game moves αi, with those game moves αicorresponding to the highest probability values being selected more often. Thus, without the modification, the game move selection module325will tend to more often select the game move αito which the highest probability value picorresponds, so that the game program300continuously improves its strategy, thereby continuously increasing its difficulty level.

Because the objective of the game200is sustainability, i.e., dynamically and continuously matching the respective skill levels of the game200and player215, the intuition module315is configured to modify the functionality of the game move selection module325based on the performance index φ, and in this case, the current skill level of the player215relative to the current skill level of the game200. In the preferred embodiment, the performance index φ is quantified in terms of the score difference value Δ between the player score260and the duck score265. The intuition module315is configured to modify the functionality of the game move selection module325by subdividing the game move set α into a plurality of game move subsets αs, one of which will be selected by the game move selection module325. In an alternative embodiment, the game move selection module325may also select the entire game move set α. In another alternative embodiment, the number and size of the game move subsets αscan be dynamically determined.

In the preferred embodiment, if the score difference value Δ is substantially positive (i.e., the player score260is substantially higher than the duck score265), the intuition module315will cause the game move selection module325to select a game move subset αs, the corresponding average probability value of which will be relatively high, e.g., higher than the median probability value of the game move probability distribution p. As a further example, a game move subset αscorresponding to the highest probability values within the game move probability distribution p can be selected. In this manner, the skill level of the game200will tend to quickly increase in order to match the player's215higher skill level.

If the score difference value Δ is substantially negative (i.e., the player score260is substantially lower than the duck score265), the intuition module315will cause the game move selection module325to select a game move subset αs, the corresponding average probability value of which will be relatively low, e.g., lower than the median probability value of the game move probability distribution p. As a further example, a game move subset αs, corresponding to the lowest probability values within the game move probability distribution p can be selected. In this manner, the skill level of the game200will tend to quickly decrease in order to match the player's215lower skill level.

If the score difference value Δ is substantially low, whether positive or negative (i.e., the player score260is substantially equal to the duck score265), the intuition module315will cause the game move selection module325to select a game move subset αs, the average probability value of which will be relatively medial, e.g., equal to the median probability value of the game move probability distribution p. In this manner, the skill level of the game200will tend to remain the same, thereby continuing to match the player's215skill level. The extent to which the score difference value Δ is considered to be losing or winning the game200may be provided by player feedback and the game designer.

Alternatively, rather than selecting a game move subset αs, based on a fixed reference probability value, such as the median probability value of the game move probability distribution p, selection of the game move set αscan be based on a dynamic reference probability value that moves relative to the score difference value Δ. To this end, the intuition module315increases and decreases the dynamic reference probability value as the score difference value Δ becomes more positive or negative, respectively. Thus, selecting a game move subset αs, the corresponding average probability value of which substantially coincides with the dynamic reference probability value, will tend to match the skill level of the game200with that of the player215. Without loss of generality, the dynamic reference probability value can also be learning using the learning principles disclosed herein.

In the illustrated embodiment, (1) if the score difference value Δ is substantially positive, the intuition module315will cause the game move selection module325to select a game move subset αscomposed of the top five corresponding probability values; (2) if the score difference value Δ is substantially negative, the intuition module315will cause the game move selection module325to select a game move subset αscomposed of the bottom five corresponding probability values; and (3) if the score difference value Δ is substantially low, the intuition module315will cause the game move selection module325to select a game move subset αscomposed of the middle seven corresponding probability values, or optionally a game move subset αscomposed of all seventeen corresponding probability values, which will reflect a normal game where all game moves are available for selection.

Whether the reference probability value is fixed or dynamic, hysteresis is preferably incorporated into the game move subset αsselection process by comparing the score difference value Δ to upper and lower score difference thresholds NS1and NS2, e.g., −1000 and 1000, respectively. Thus, the intuition module315will cause the game move selection module325to select the game move subset αsin accordance with the following criteria:If ΔNS2, then select game move subset αswith relatively high probability values; andIf NS1≦Δ≦NS2, then select game move subset αswith relatively medial probability values.

Alternatively, rather than quantify the relative skill level of the player215in terms of the score difference value Δ between the player score260and the duck score265, as just previously discussed, the relative skill level of the player215can be quantified from a series (e.g., ten) of previous determined outcome values β. For example, if a high percentage of the previous determined outcome values β is equal to “0,” indicating a high percentage of unfavorable game moves αi, the relative player skill level can be quantified as be relatively high. In contrast, if a low percentage of the previous determined outcome values β is equal to 0, indicating a low percentage of unfavorable game moves αi, the relative player skill level can be quantified as be relatively low. Thus, based on this information, a game move αican be pseudo-randomly selected, as hereinbefore described.

The game move selection module325is configured to pseudo-randomly select a single game move αifrom the game move subset αs, thereby minimizing a player detectable pattern of game move αiselections, and thus increasing interest in the game200. Such pseudo-random selection can be accomplished by first normalizing the game move subset αsand then summing, for each game move αiwithin the game move subset αs, the corresponding probability value with the preceding probability values (for the purposes of this specification, this is considered to be a progressive sum of the probability values). For example, the following Table 1 sets forth the unnormalized probability values, normalized probability values, and progressive sum of an exemplary subset of five game moves:

TABLE 1Progressive Sum of Probability Values For Five ExemplaryGame Moves in SISO FormatUnnormalizedNormalizedProgressiveGame MoveProbability ValueProbability ValueSumα10.050.090.09α20.050.090.18α30.100.180.36α40.150.270.63α50.200.371.00

The game move selection module325then selects a random number between “0” and “1,” and selects the game move αicorresponding to the next highest progressive sum value. For example, if the randomly selected number is 0.38, game move α4will be selected.

The game move selection module325is further configured to receive a player move λ2xfrom the player215in the form of a mouse button245click/mouse240position combination, which indicates the position of the gun225when it is fired. The outcome evaluation module330is configured to determine and output an outcome value β that indicates how favorable the game move αiis in comparison with the received player move λ2x.

To determine the extent of how favorable a game move αiis, the outcome evaluation module330employs a collision detection technique to determine whether the duck's220last move was successful in avoiding the gunshot. Specifically, if the gun225coincides with the duck220when fired, a collision is detected. On the contrary, if the gun225does not coincide with the duck220when fired, a collision is not detected. The outcome of the collision is represented by a numerical value, and specifically, the previously described outcome value β. In the illustrated embodiment, the outcome value β equals one of two predetermined values: “1” if a collision is not detected (i.e., the duck220is not shot), and “0” if a collision is detected (i.e., the duck220is shot). Of course, the outcome value β can equal “0” if a collision is not detected, and “1” if a collision is detected, or for that matter one of any two predetermined values other than a “0” or “1,” without straying from the principles of the invention. In any event, the extent to which a shot misses the duck220(e.g., whether it was a near miss) is not relevant, but rather that the duck220was or was not shot. Alternatively, the outcome value β can be one of a range of finite integers or real numbers, or one of a range of continuous values. In these cases, the extent to which a shot misses or hits the duck220is relevant. Thus, the closer the gun225comes to shooting the duck220, the less the outcome value β is, and thus, a near miss will result in a relatively low outcome value β, whereas a far miss will result in a relatively high outcome value β. Of course, alternatively, the closer the gun225comes to shooting the duck220, the greater the outcome value β is. What is significant is that the outcome value β correctly indicates the extent to which the shot misses the duck220. More alternatively, the extent to which a shot hits the duck220is relevant. Thus, the less damage the duck220incurs, the less the outcome value β is, and the more damage the duck220incurs, the greater the outcome value β is.

The probability update module320is configured to receive the outcome value β from the outcome evaluation module330and output an updated game strategy (represented by game move probability distribution p) that the duck220will use to counteract the player's215strategy in the future. In the preferred embodiment, the probability update module320utilizes a linear reward-penalty P-type update. As an example, given a selection of the seventeen different moves255, assume that the gun125fails to shoot the duck120after it takes game move α3, thus creating an outcome value β=1. In this case, general updating equations [6] and [7] can be expanded using equations [10] and [11], as follows:

p3(k+1)=p3(k)+∑j=1j≠317apj(k);p1(k+1)=p1(k)-ap1(k);p2(k+1)=p2(k)-ap2(k);p4(k+1)=p4(k)-ap4(k);⋮p17(k+1)=p17(k)-ap17(k)

Thus, since the game move α3resulted in a successful outcome, the corresponding probability value p3is increased, and the game move probability values picorresponding to the remaining game moves αiare decreased.

If, on the other hand, the gun125shoots the duck120after it takes game move α3, thus creating an outcome value β=0, general updating equations [8] and [9] can be expanded, using equations [10] and [11], as follows:

p3(k+1)=p3(k)-∑j=1j≠317(b16-bpj(k))p1(k+1)=p1(k)+b16-bp1(k);p2(k+1)=p2(k)+b16-bp2(k);p4(k+1)=p4(k)+b16-bp4(k);⋮p17(k+1)=p17(k)+b16-bp17(k)

It should be noted that in the case where the gun125shoots the duck120, thus creating an outcome value β=0, rather than using equations [8], [9], and [11], a value proportional to the penalty parameter b can simply be subtracted from the selection game move, and can then be equally distributed among the remaining game moves αj. It has been empirically found that this method ensures that no probability value piconverges to “1,” which would adversely result in the selection of a single game move αievery time. In this case, equations [8] and [9] can be modified to read:

pi(k+1)=pi(k)-bpi(k)[8b]pj(k+1)=pj(k)+1n-1bpi(k)[9b]

Assuming game move α3results in an outcome value β=0, equations [8b] and [9b] can be expanded as follows:

p3(k+1)=p3(k)-bp3(k)p1(k+1)=p1(k)+b16p1(k);p2(k+1)=p2(k)+b16p2(k);p4(k+1)=p4(k)+b16p4(k);⋮p17(k+1)=p17(k)+b16p17(k)

In any event, since the game move α3resulted in an unsuccessful outcome, the corresponding probability value p3is decreased, and the game move probability values picorresponding to the remaining game moves αjare increased. The values of a and b are selected based on the desired speed and accuracy that the learning module310learns, which may depend on the size of the game move set α. For example, if the game move set α is relatively small, the game200preferably must learn quickly, thus translating to relatively high a and b values. On the contrary, if the game move set α is relatively large, the game200preferably learns more accurately, thus translating to relatively low a and b values. In other words, the greater the values selected for a and b, the faster the game move probability distribution p changes, whereas the lesser the values selected for a and b, the slower the game move probability distribution p changes. In the preferred embodiment, the values of a and b have been chosen to be 0.1 and 0.5, respectively.

In the preferred embodiment, the reward-penalty update scheme allows the skill level of the game200to track that of the player215during gradual changes in the player's215skill level. Alternatively, a reward-inaction update scheme can be employed to constantly make the game200more difficult, e.g., if the game200has a training mode to train the player215to become progressively more skillful. More alternatively, a penalty-inaction update scheme can be employed, e.g., to quickly reduce the skill level of the game200if a different less skillful player215plays the game200. In any event, the intuition module315may operate on the probability update module320to dynamically select any one of these update schemes depending on the objective to be achieved.

It should be noted that rather than, or in addition to, modifying the functionality of the game move selection module325by subdividing the game move set α into a plurality of game move subsets αsthe respective skill levels of the game200and player215can be continuously and dynamically matched by modifying the functionality of the probability update module320by modifying or selecting the algorithms employed by it. For example, the respective reward and penalty parameters a and b may be dynamically modified.

For example, if the difference between the respective player and game scores260and265(i.e., the score difference value Δ) is substantially positive, the respective reward and penalty parameters a and b can be increased, so that the skill level of the game200more rapidly increases. That is, if the gun125shoots the duck120after it takes a particular game move αi, thus producing an unsuccessful outcome, an increase in the penalty parameter b will correspondingly decrease the chances that the particular game move αiis selected again relative to the chances that it would have been selected again if the penalty parameter b had not been modified. If the gun125fails to shoot the duck120after it takes a particular game move αi, thus producing a successful outcome, an increase in the reward parameter a will correspondingly increase the chances that the particular game move αiis selected again relative to the chances that it would have been selected again if the penalty parameter α had not been modified. Thus, in this scenario, the game200will learn at a quicker rate.

On the contrary, if the score difference value Δ is substantially negative, the respective reward and penalty parameters a and b can be decreased, so that the skill level of the game200less rapidly increases. That is, if the gun125shoots the duck120after it takes a particular game move αi, thus producing an unsuccessful outcome, a decrease in the penalty parameter b will correspondingly increase the chances that the particular game move αiis selected again relative to the chances that it would have been selected again if the penalty parameter b had not been modified. If the gun125fails to shoot the duck120after it takes a particular game move αi, thus producing a successful outcome, a decrease in the reward parameter a will correspondingly decrease the chances that the particular game move αiis selected again relative to the chances that it would have been selected again if the reward parameter a had not been modified. Thus, in this scenario, the game200will learn at a slower rate.

If the score difference value Δ is low, whether positive or negative, the respective reward and penalty parameters a and b can remain unchanged, so that the skill level of the game200will tend to remain the same. Thus, in this scenario, the game200will learn at the same rate.

It should be noted that an increase or decrease in the reward and penalty parameters a and b can be effected in various ways. For example, the values of the reward and penalty parameters a and b can be incrementally increased or decreased a fixed amount, e.g., 0.1. Or the reward and penalty parameters a and b can be expressed in the functional form y=f(x), with the performance index φ being one of the independent variables, and the penalty and reward parameters a and b being at least one of the dependent variables. In this manner, there is a smoother and continuous transition in the reward and penalty parameters a and b.

Optionally, to further ensure that the skill level of the game200rapidly decreases when the score difference value Δ substantially negative, the respective reward and penalty parameters a and b can be made negative. That is, if the gun125shoots the duck120after it takes a particular game move αi, thus producing an unsuccessful outcome, forcing the penalty parameter b to a negative number will increase the chances that the particular game move αiis selected again in the absolute sense. If the gun125fails to shoot the duck120after it takes a particular game move αi, thus producing a successful outcome, forcing the reward parameter a to a negative number will decrease the chances that the particular game move aαiis selected again in the absolute sense. Thus, in this scenario, rather than learn at a slower rate, the game200will actually unlearn. It should be noted in the case where negative probability values piresult, the probability distribution p is preferably normalized to keep the game move probability values piwithin the [0,1] range.

More optionally, to ensure that the skill level of the game200substantially decreases when the score difference value Δ is substantially negative, the respective reward and penalty equations can be switched. That is, the reward equations, in this case equations [6] and [7], can be used when there is an unsuccessful outcome (i.e., the gun125shoots the duck120). The penalty equations, in this case equations [8] and [9] (or [8b] and [9b]), can be used when there is a successful outcome (i.e., when the gun125misses the duck120). Thus, the probability update module320will treat the previously selected αias producing an unsuccessful outcome, when in fact, it has produced a successful outcome, and will treat the previously selected αias producing a successful outcome, when in fact, it has produced an unsuccessful outcome. In this case, when the score difference value Δ is substantially negative, the respective reward and penalty parameters a and b can be increased, so that the skill level of the game200more rapidly decreases.

Alternatively, rather than actually switching the penalty and reward equations, the functionality of the outcome evaluation module330can be modified with similar results. For example, the outcome evaluation module330may be modified to output an outcome value β=0 when the current game move α is successful, i.e., the gun125does not shoot the duck120, and to output an outcome value β=1 when the current game move αiis unsuccessful, i.e., the gun125shoots the duck120. Thus, the probability update module320will interpret the outcome value β as an indication of an unsuccessful outcome, when in fact, it is an indication of a successful outcome, and will interpret the outcome value β as an indication of a successful outcome, when in fact, it is an indication of an unsuccessful outcome. In this manner, the reward and penalty equations are effectively switched.

Rather than modifying or switching the algorithms used by the probability update module320, the game move probability distribution p can be transformed. For example, if the score difference value Δ is substantially positive, it is assumed that the game moves αicorresponding to a set of the highest probability values piare too easy, and the game moves αicorresponding to a set of the lowest probability values piare too hard. In this case, the game moves αicorresponding to the set of highest probability values pican be switched with the game moves corresponding to the set of lowest probability values pi, thereby increasing the chances that that the harder game moves αi(and decreasing the chances that the easier game moves αi) are selected relative to the chances that they would have been selected again if the game move probability distribution p had not been transformed. Thus, in this scenario, the game200will learn at a quicker rate. In contrast, if the score difference value Δ is substantially negative, it is assumed that the game moves αicorresponding to the set of highest probability values piare too hard, and the game moves αicorresponding to the set of lowest probability values piare too easy. In this case, the game moves αicorresponding to the set of highest probability values pican be switched with the game moves corresponding to the set of lowest probability values pi, thereby increasing the chances that that the easier game moves αi(and decreasing the chances that the harder game moves αi) are selected relative to the chances that they would have been selected again if the game move probability distribution p had not been transformed. Thus, in this scenario, the game200will learn at a slower rate. If the score difference value Δ is low, whether positive or negative, it is assumed that the game moves αicorresponding to the set of highest probability values piare not too hard, and the game moves αicorresponding to the set of lowest probability values piare not too easy, in which case, the game moves αicorresponding to the set of highest probability values piand set of lowest probability values piare not switched. Thus, in this scenario, the game200will learn at the same rate.

It should be noted that although the performance index φ has been described as being derived from the score difference value Δ, the performance index φ can also be derived from other sources, such as the game move probability distribution p. If it is known that the outer moves255aor more difficult than the inner moves255b, the performance index φ, and in this case, the skill level of the player215relative to the skill level the game200, may be found in the present state of the game move probability values piassigned to the moves255. For example, if the combined probability values picorresponding to the outer moves255ais above a particular threshold value, e.g., 0.7 (or alternatively, the combined probability values picorresponding to the inner moves255bis below a particular threshold value, e.g., 0.3), this may be an indication that the skill level of the player215is substantially greater than the skill level of the game200. In contrast, if the combined probability values picorresponding to the outer moves255ais below a particular threshold value, e.g., 0.4 (or alternatively, the combined probability values picorresponding to the inner moves255bis above a particular threshold value, e.g., 0.6), this may be an indication that the skill level of the player215is substantially less than the skill level of the game200. Similarly, if the combined probability values picorresponding to the outer moves255ais within a particular threshold range, e.g., 0.4-0.7 (or alternatively, the combined probability values picorresponding to the inner moves255bis within a particular threshold range, e.g., 0.3-0.6), this may be an indication that the skill level of the player215and skill level of the game200are substantially matched. In this case, any of the afore-described probabilistic learning module modification techniques can be used with this performance index φ.

Alternatively, the probabilities values picorresponding to one or more game moves αican be limited to match the respective skill levels of the player215and game200. For example, if a particular probability value piis too high, it is assumed that the corresponding game move αimay be too hard for the player215. In this case, one or more probabilities values pican be limited to a high value, e.g., 0.4, such that when a probability value pireaches this number, the chances that that the corresponding game move αiis selected again will decrease relative to the chances that it would be selected if the corresponding game move probability pihad not been limited. Similarly, one or more probabilities values pican be limited to a low value, e.g., 0.01, such that when a probability value pireaches this number, the chances that that the corresponding game move αiis selected again will increase relative to the chances that it would be selected if the corresponding game move probability pihad not been limited. It should be noted that the limits can be fixed, in which case, only the performance index φ that is a function of the game move probability distribution p is used to match the respective skill levels of the player215and game200, or the limits can vary, in which case, such variance may be based on a performance index φ external to the game move probability distribution p.

Having now described the structure of the game program300, the steps performed by the game program300will be described with reference toFIG. 9. First, the game move probability distribution p is initialized (step405). Specifically, the probability update module320initially assigns an equal probability value to each of the game moves αi, in which case, the initial game move probability distribution p(k) can be represented by

p1(0)=p2(0)=p2(0)=…pn(0)=1n.

Thus, all of the game moves αihave an equal chance of being selected by the game move selection module325. Alternatively, probability update module320initially assigns unequal probability values to at least some of the game moves αi. For example, the outer moves255amay be initially assigned a lower probability value than that of the inner moves255b, so that the selection of any of the outer moves255aas the next game move αiwill be decreased. In this case, the duck220will not be too difficult to shoot when the game200is started. In addition to the game move probability distribution p, the current game move αito be updated is also initialized by the probability update module320at step405.

Then, the game move selection module325determines whether a player move λ2xhas been performed, and specifically whether the gun225has been fired by clicking the mouse button245(step410). If a player move λ2xhas been performed, the outcome evaluation module330determines whether the last game move αiwas successful by performing a collision detection, and then generates the outcome value β in response thereto (step415). The intuition module315then updates the player score260and duck score265based on the outcome value β (step420). The probability update module320then, using any of the updating techniques described herein, updates the game move probability distribution p based on the generated outcome value β (step425).

After step425, or if a player move λ2xhas not been performed at step410, the game move selection module325determines if a player move λ1xhas been performed, i.e., gun225, has breached the gun detection region270(step430). If the gun225has not breached the gun detection region270, the game move selection module325does not select any game move αifrom the game move subset α and the duck220remains in the same location (step435). Alternatively, the game move αimay be randomly selected, allowing the duck220to dynamically wander. The game program300then returns to step410where it is again determined if a player move λ2xhas been performed. If the gun225has breached the gun detection region270at step430, the intuition module315modifies the functionality of the game move selection module325based on the performance index φ, and the game move selection module325selects a game move αifrom the game move set α.

Specifically, the intuition module315determines the relative player skill level by calculating the score difference value Δ between the player score260and duck score265(step440). The intuition module315then determines whether the score difference value Δ is greater than the upper score difference threshold NS2(step445). If Δ is greater than NS2, the intuition module315, using any of the game move subset selection techniques described herein, selects a game move subset αS, a corresponding average probability of which is relatively high (step450). If Δ is not greater than NS2, the intuition module315then determines whether the score difference value Δ is less than the lower score difference threshold NS1(step455). If Δ is less than NS1the intuition module315, using any of the game move subset selection techniques described herein, selects a game move subset αs, a corresponding average probability of which is relatively low (step460). If Δ is not less than NS1, it is assumed that the score difference value Δ is between NS1and NS2, in which case, the intuition module315, using any of the game move subset selection techniques described herein, selects a game move subset αs, a corresponding average probability of which is relatively medial (step465). In any event, the game move selection module325then pseudo-randomly selects a game move αifrom the selected game move subset αs, and accordingly moves the duck220in accordance with the selected game move αi(step470). The game program300then returns to step410, where it is determined again if a player move λ2xhas been performed.

It should be noted that, rather than use the game move subset selection technique, the other afore-described techniques used to dynamically and continuously match the skill level of the player215with the skill level of the game200can be alternatively or optionally be used as well. For example, and referring toFIG. 10, the probability update module320initializes the game move probability distribution p and current game move αisimilarly to that described in step405ofFIG. 9. The initialization of the game move probability distribution p and current game move αiis similar to that performed in step405ofFIG. 9. Then, the game move selection module325determines whether a player move λ2xhas been performed, and specifically whether the gun225has been fired by clicking the mouse button245(step510). If a player move λ2xhas been performed, the intuition module315modifies the functionality of the probability update module320based on the performance index φ.

Specifically, the intuition module315determines the relative player skill level by calculating the score difference value Δ between the player score260and duck score265(step515). The intuition module315then determines whether the score difference value Δ is greater than the upper score difference threshold NS2(step520). If Δ is greater than NS2, the intuition module315modifies the functionality of the probability update module320to increase the game's200rate of learning using any of the techniques described herein (step525). For example, the intuition module315may modify the parameters of the learning algorithms, and specifically, increase the reward and penalty parameters a and b.

If Δ is not greater than NS2, the intuition module315then determines whether the score difference value Δ is less than the lower score difference threshold NS1(step530). If Δ is less than NS1, the intuition module315modifies the functionality of the probability update module320to decrease the game's200rate of learning (or even make the game200unlearn) using any of the techniques described herein (step535). For example, the intuition module315may modify the parameters of the learning algorithms, and specifically, decrease the reward and penalty parameters a and b. Alternatively or optionally, the intuition module315may assign the reward and penalty parameters a and b negative numbers, switch the reward and penalty learning algorithms, or even modify the outcome evaluation module330to output an outcome value β=0 when the selected game move αis actually successful, and output an outcome value β=1 when the selected game move αiis actually unsuccessful.

If Δ is not less than NS2, it is assumed that the score difference value Δ is between NS1and NS2, in which case, the intuition module315does not modify the probability update module320(step540).

In any event, the outcome evaluation module330then determines whether the last game move αiwas successful by performing a collision detection, and then generates the outcome value β in response thereto (step545). Of course, if the intuition module315modifies the functionality of the outcome evaluation module330during any of the steps525and535, step545will preferably be performed during these steps. The intuition module315then updates the player score260and duck score265based on the outcome value β (step550). The probability update module320then, using any of the updating techniques described herein, updates the game move probability distribution p based on the generated outcome value β (step555).

After step555, or if a player move λ2xhas not been performed at step510, the game move selection module325determines if a player move λ1xhas been performed, i.e., gun225, has breached the gun detection region270(step560). If the gun225has not breached the gun detection region270, the game move selection module325does not select a game move αifrom the game move set α and the duck220remains in the same location (step565). Alternatively, the game move αimay be randomly selected, allowing the duck220to dynamically wander. The game program300then returns to step510where it is again determined if a player move λ2xhas been performed. If the gun225has breached the gun detection region270at step560, the game move selection module325pseudo-randomly selects a game move αifrom the game move set α and accordingly moves the duck220in accordance with the selected game move αi(step570). The game program300then returns to step510, where it is determined again if a player move λ2xhas been performed.

More specific details on the above-described operation of the duck game100can be found in the Computer Program Listing Appendix attached hereto and previously incorporated herein by reference. It is noted that each of the files “Intuition Intelligence-duckgame1.doc” and “Intuition Intelligence-duckgame2.doc” represents the game program300, with file “Intuition Intelligence-duckgame1.doc” utilizing the game move subset selection technique to continuously and dynamically match the respective skill levels of the game200and player215, and file “Intuition Intelligence-duckgame2.doc” utilizing the learning algorithm modification technique (specifically, modifying the respective reward and penalty parameters a and b when the score difference value Δ is too positive or too negative, and switching the respective reward and penalty equations when the score difference value Δ is too negative) to similarly continuously and dynamically match the respective skill levels of the game200and player215.

Single-Player Educational Program (Single Game Move-Single Player Move)

The learning program100can be applied to other applications besides game programs. A single-player educational program700(shown inFIG. 12) developed in accordance with the present inventions is described in the context of a child's learning toy600(shown inFIG. 11), and specifically, a doll600and associated articles of clothing and accessories610that are applied to the doll600by a child605(shown inFIG. 12). In the illustrated embodiment, the articles610include a (1) purse, calculator, and hairbrush, one of which can be applied to a hand615of the doll600; (2) shorts and pants, one of which can be applied to a waist620of the doll600; (3) shirt and tank top, one of which can be applied to a chest625of the doll600; and (4) dress and overalls, one of which can be applied to the chest625of the doll600. Notably, the dress and overalls cover the waist620, so that the shorts and pants cannot be applied to the doll600when the dress or overalls are applied. Depending on the measured skill level of the child605, the doll600will instruct the child605to apply either a single article, two articles, or three articles to the doll600. For example, the doll600may say “Simon says, give me my calculator, pants, and tank top.”

In accordance with the instructions given by the doll600, the child605will then attempt to apply the correct articles610to the doll600. For example, the child605may place the calculator in the hand615, the pants on the waist620, and the tank top on the chest625. To determine which articles610the child605has applied, the doll600comprises sensors630located on the hand615, waist620, and chest625. These sensors630sense the unique resistance values exhibited by the articles610, so that the doll600can determine which of the articles610are being applied.

As illustrated in Tables 2-4, there are 43 combinations of articles610that can be applied to the doll600. Specifically, actions α1-α9represent all of the single article combinations, actions α10-α31represent all of the double article combinations, and actions α32-α43represent all of the triple article combinations that can be possibly applied to the doll600.

TABLE 2Exemplary Single Article Combinations for DollAction (α)HandWaistChestα1Pursexxα2Calculatorxxα3Hairbrushxxα4xShortsxα5xPantsxα6xxShirtα7xxTanktopα8xxDressα9xxOveralls

TABLE 3Exemplary Double article combinations for DollAction (α)HandWaistChestα10PurseShortsxα11PursePantsxα12PursexShirtα13PursexTanktopα14PursexDressα15PursexOverallsα16CalculatorShortsxα17CalculatorPantsxα18CalculatorxShirtα19CalculatorxTanktopα20CalculatorxDressα21CalculatorxOverallsα22HairbrushShortsxα23HairbrushPantsxα24HairbrushxShirtα25HairbrushxTanktopα26HairbrushxDressα27HairbrushxOverallsα28xShortsShirtα29xShortsTanktopα30xPantsShirtα31xPantsTanktop

TABLE 4Exemplary Three Article Combinations for DollAction (α)HandWaistChestα32PurseShortsShirtα33PurseShortsTanktopα34PursePantsShirtα35PursePantsTanktopα36CalculatorShortsShirtα37CalculatorShortsTanktopα38CalculatorPantsShirtα39CalculatorPantsTanktopα40HairbrushShortsShirtα41HairbrushShortsTanktopα42HairbrushPantsShirtα43HairbrushPantsTanktop

In response to the selection of one of these actions αi, i.e., prompting the child605to apply one of the 43 article combinations to the doll600, the child605will attempt to apply the correct article combinations to the doll600, represented by corresponding child actions λ1-λ43. It can be appreciated an article combination λxwill be correct if it corresponds to the article combination αiprompted by the doll600(i.e., the child action λ corresponds with the doll action α), and will be incorrect if it corresponds to the article combination αiprompted by the doll600(i.e., the child action A does not correspond with the doll action α).