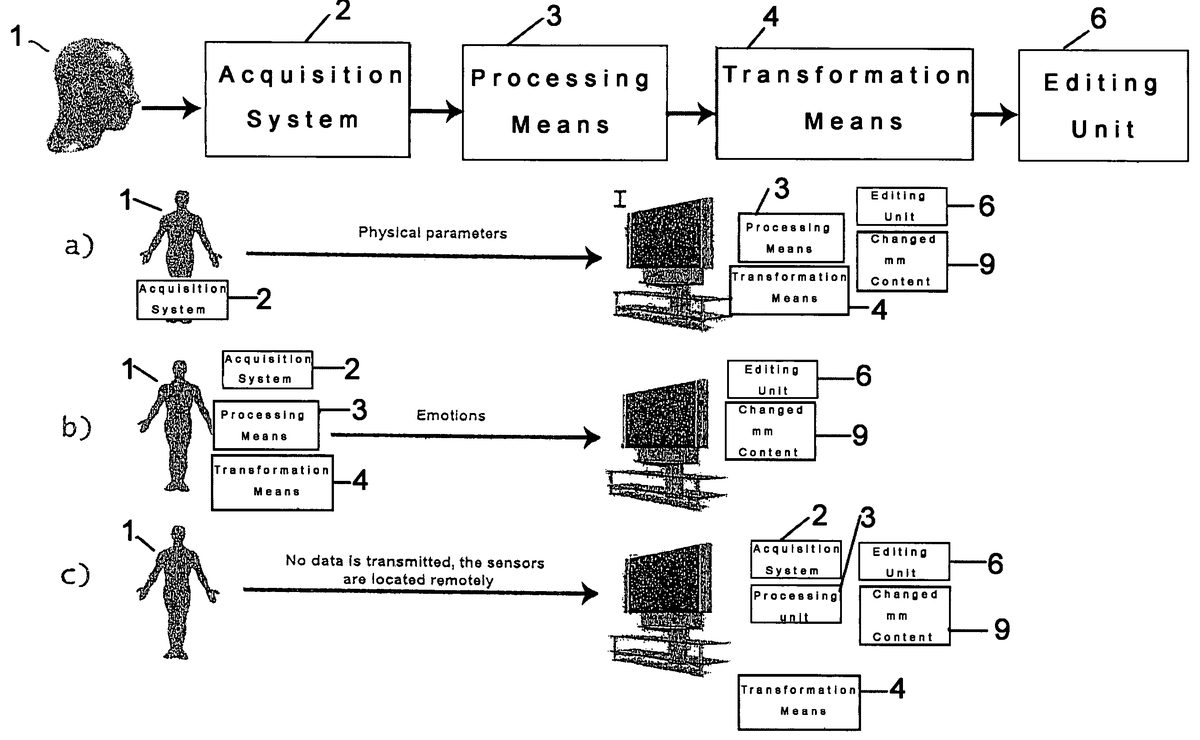

FIG. 1 shows the system according to the present invention.

A multimedia content 7 is presented by a multimedia system 8 to a user 1. Such multimedia content may be movies, music, web pages, films, or the like, and the multimedia system may be a TV, a computer, or a home environment comprising loudspeakers, a display, a HiFi system, lights or any other devices for presenting and emphasising multimedia content 7.

An emotion model means 5 serves for determining the emotions felt by the user 1 to whom a multimedia content 7 is presented by the multimedia system 8. The emotion model means 5 comprises an acquisition system 2, a processing means 3 and a transformation means 4. A person consuming multimedia content reacts instinctively to the shown content with emotions, e.g. anger, happiness, sadness, surprise or the like. Such mental activity is not constant, and it follows the personal attitude of the user. Additionally, such mental activities produce physiological reactions in the human body. The acquisition system 2 serves for detecting and measuring such physiological reactions of a user. Such reactions may be the heart rate, blood pressure, temperature, facial expression, voice, gesture or the like. These reactions can be measured by e.g. biosensors, infrared cameras, microphones and/or any other suitable sensing means. The measured reactions are then submitted to the processing means 3, which processes and evaluates the data submitted by the acquisition system 2. If, for example, the acquisition system 2 samples the heart beat rate, then the processing means 3 may calculate the average value. The evaluated data are then submitted to the transformation means 4 which, depending on the submitted biological reactions, detects the emotional state of the user. The transformation means 4 therefore comprises algorithms for effectuating such detection of the emotional state of the user 1.

Such an algorithm for detecting the emotional state of the user 1 on the basis of the data acquired by the acquisition means may be as follows: To every emotional state, e.g. anger, happiness, fear, sadness or the like, a certain value of each measured parameter or a certain interval of values of a measured parameter is assigned. To the emotional state of fear, for example, a high heart beat rate, a high blood pressure and the like may be assigned. The measured physical values are then compared with the values or intervals of values assigned to each emotional state, and in this way the actual emotional state of the user can be detected.
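The interval comparison described above can be illustrated with a minimal sketch. It assumes two measured parameters and inclusive intervals per emotional state. The names `EMOTION_INTERVALS`, `evaluate` and `detect_emotion`, as well as all numeric thresholds, are hypothetical and chosen only for illustration; they are not taken from the description.

```python
from statistics import mean

# Hypothetical inclusive intervals per emotional state (illustrative values only).
EMOTION_INTERVALS = {
    "fear": {"heart_rate": (100, 200), "systolic_bp": (140, 220)},
    "happiness": {"heart_rate": (75, 99), "systolic_bp": (110, 139)},
    "sadness": {"heart_rate": (40, 74), "systolic_bp": (90, 109)},
}


def evaluate(samples):
    """Processing step: reduce the raw samples of each parameter to their average."""
    return {name: mean(values) for name, values in samples.items()}


def detect_emotion(evaluated):
    """Transformation step: return the first state whose intervals contain
    every evaluated parameter, or None if no state matches."""
    for state, intervals in EMOTION_INTERVALS.items():
        if all(
            name in evaluated and low <= evaluated[name] <= high
            for name, (low, high) in intervals.items()
        ):
            return state
    return None


samples = {"heart_rate": [110, 120, 115], "systolic_bp": [150, 148, 152]}
state = detect_emotion(evaluate(samples))
```

With these assumed intervals, averaged readings that fall into no complete set of intervals yield `None`, which a real system would have to handle, e.g. by choosing the closest state.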

The emotion data determined by the emotion model means 5 are then submitted to an editing unit 6 in order to change the multimedia content 7 depending on the emotion data submitted by the emotion model means 5. The changed multimedia content 9 can then be presented again on the multimedia system 8 to the user 1.

The multimedia content may be changed before, during or after the presentation of the multimedia content 7 to a user 1. In a pre-processing, for example, the reactions and emotions of at least one user other than the end consumer to a multimedia content may be measured; the content is changed accordingly and afterwards presented to the final consumer. A second possibility is to change the multimedia content while the user 1 is watching it, e.g. by changing the quality, the sound, the volume or the like of the multimedia content depending on the reaction of the user. A third possibility is to store the changed multimedia content in order to show it to the user 1 at a later time.

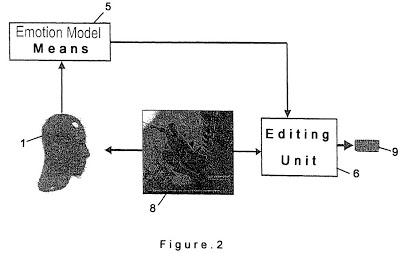

FIG. 2 shows a first embodiment of the present invention. Here, the multimedia system 8 presents multimedia content to the user 1, and the emotion model means 5 detects the emotional state of the user during the presentation of the multimedia content 7 and submits these emotional states to the editing unit 6. In the editing unit 6, suitable algorithms process the collected information to create e.g. digests of the viewed video contents. Depending on the algorithm, different kinds of digests can be produced for different purposes, e.g. e-commerce trailers, a summary of the multimedia contents, a very compact representation of digital pictures shown as a preview before selection of the pictures themselves, or the like.
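The description leaves the digest algorithm open. One possible approach, given here only as a sketch, is to keep the segments with the strongest recorded emotional reaction until a duration budget is used up. The segment fields `start`, `end` and `intensity`, the function name `build_digest` and the greedy selection itself are assumptions made for illustration.

```python
def build_digest(segments, max_duration):
    """Greedily pick the most intense segments that still fit into
    max_duration seconds, then return them in playback order."""
    chosen = []
    remaining = max_duration
    # Visit segments from strongest to weakest emotional reaction.
    for seg in sorted(segments, key=lambda s: s["intensity"], reverse=True):
        length = seg["end"] - seg["start"]
        if length <= remaining:
            chosen.append(seg)
            remaining -= length
    return sorted(chosen, key=lambda s: s["start"])


# Hypothetical per-segment emotion intensities in the range 0..1.
segments = [
    {"start": 0, "end": 30, "intensity": 0.2},
    {"start": 30, "end": 50, "intensity": 0.9},
    {"start": 50, "end": 90, "intensity": 0.5},
    {"start": 90, "end": 100, "intensity": 0.8},
]
digest = build_digest(segments, max_duration=35)
```

A short trailer and a longer summary could then be obtained from the same emotion data simply by varying `max_duration`.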

Such an automatic system can be applied to both personal and public usage. For personal usage, the video can e.g. fit the personal needs or attitude of a single person. For public usage, it is preferable to apply the method to a large audience in order to produce a statistical result before automatically addressing different classes of people, e.g. by age, interest, culture, gender etc.

The digest generation can be static or dynamic. Static means that the digest of a video is generated only once and cannot be changed later. A dynamic generation allows adapting the digest to the changing emotions of a person every time the video is watched by this person. That means the digest follows the emotions of a person throughout that person's lifetime.

As emotions are used to generate a video digest, such a digest is a very personal one. Therefore, the privacy problem has to be solved e.g. by protecting a digest by cryptographic mechanisms.

Another possibility is to use the detected emotions for classifying and analysing multimedia contents. Here, the emotional state is embedded in the multimedia content 7 by the editing means 6, that is, data revealing the emotional state, the grade of interest or the like of the user 1 are stored together with the multimedia content. Such information can then be used for post-processing analysis of the multimedia content or for activating intelligent devices in a room environment. This opens, for example, the possibility to search for and look up information inside a multimedia content by emotion parameters. The user can e.g. search for a scene where he was scared or where he was amused.
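A search by emotion parameters can be sketched as follows, assuming that the embedded emotion data are kept as a per-scene mapping from an emotion name to a strength between 0 and 1. The `Scene` class, the helper names and the threshold are hypothetical; the description does not prescribe a storage format.

```python
from dataclasses import dataclass, field


@dataclass
class Scene:
    title: str
    start_s: float
    # Emotion data stored together with the content, e.g. {"fear": 0.8}.
    emotions: dict = field(default_factory=dict)


def annotate(scene, emotion, strength):
    """Embed a detected emotional state into the scene metadata."""
    scene.emotions[emotion] = strength


def find_scenes(scenes, emotion, min_strength=0.5):
    """Return the scenes in which the user felt the given emotion strongly enough."""
    return [s for s in scenes if s.emotions.get(emotion, 0.0) >= min_strength]


scenes = [Scene("arrival", 0.0), Scene("cellar", 95.0), Scene("party", 260.0)]
annotate(scenes[1], "fear", 0.8)
annotate(scenes[2], "amusement", 0.7)
annotate(scenes[2], "fear", 0.1)

scary = find_scenes(scenes, "fear")
```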

FIG. 3 shows a second embodiment of the present invention, where the multimedia content 7 presented by the multimedia system 8 is changed in real time in a closed loop control. That means that the multimedia content is automatically and dynamically adapted to the user during playing time.

A first possibility is to detect the actual grade of interest and tension of the user and accordingly either change to another scene or programme or continue with the presentation of the multimedia content. The analysis of the user's emotion has to be done either at predefined points of the multimedia content, e.g. at the beginning of every new scene, or after a predefined time period, e.g. every two minutes or the like. By detecting the actual grade of interest of the user, the attention of the user can be analysed, and the presentation can proceed to the next scene if the user is not interested in the actual multimedia content. Instead of changing scenes, an automatic zapping mode of TV programmes or of a selected number of movies may also be implemented. Depending on the emotional state of the user and the emotional content associated with movies or TV programmes, an intelligent system might change automatically to a set of the user's video choices.
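The periodic check can be sketched as a simple loop, assuming that the grade of interest is available as a number between 0 and 1 at each check point. The function `run_closed_loop`, the callback `read_interest`, the threshold of 0.4 and the scene data are hypothetical; only the two-minute period comes from the example in the text.

```python
def run_closed_loop(scenes, read_interest, period_s=120, threshold=0.4):
    """Play scenes in order, sampling the interest at the start of each scene
    and then every period_s seconds. On low interest, skip to the next scene.
    Returns the number of seconds watched per scene."""
    watched = {}
    for name, duration_s in scenes:
        t = 0
        while t < duration_s:
            if read_interest(name, t) < threshold:
                break  # user not interested: proceed to the next scene
            t = min(t + period_s, duration_s)
        watched[name] = t
    return watched


def simulated_interest(name, t):
    # Stand-in for the emotion model means: interest drops two minutes into "intro".
    return 0.1 if name == "intro" and t >= 120 else 0.9


scenes = [("intro", 300), ("chase", 240)]
watched = run_closed_loop(scenes, simulated_interest)
```

In this simulation the first scene is abandoned at the two-minute check while the second scene is played to its end.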

A second possibility of application of the closed loop control is to adapt the quality or the bandwidth for the reproduction or the transmission of the multimedia content. Streaming audio or video contents can be sent with a very high or low quality depending on the level of attention of the user. That means, if a low level of interest by the user is detected, then the quality is lowered, and if the grade of interest of the user is high, then the quality is increased. This is particularly advantageous for multimedia devices with limited network bandwidth and power, such as portable phones, PDAs and the like.
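As a rough sketch of such a quality adaptation, the grade of interest can be mapped onto a ladder of stream bitrates. The ladder values, the name `select_bitrate` and the equal-width mapping are assumptions for illustration and are not specified in the description.

```python
# Hypothetical bitrate ladder in kbit/s, ordered from lowest to highest quality.
BITRATE_LADDER_KBPS = [300, 800, 2500]


def select_bitrate(interest, ladder=BITRATE_LADDER_KBPS):
    """Map an interest level in [0, 1] to a bitrate: low interest selects a
    low-quality stream, high interest a high-quality stream."""
    interest = min(max(interest, 0.0), 1.0)  # clamp out-of-range readings
    index = min(int(interest * len(ladder)), len(ladder) - 1)
    return ladder[index]


low = select_bitrate(0.1)
medium = select_bitrate(0.5)
high = select_bitrate(1.0)
```

A practical system would probably also smooth the interest signal over time so that the quality does not switch with every brief change in attention.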

A third possibility is to adapt the closed loop control to an interacting home entertainment environment. The multimedia system 8 may comprise not only a display and loudspeakers but also other environmental devices such as lights or the like. According to the user's emotional state, a home entertainment system might receive and process emotion information and adapt the environment to such stimuli. If, for example, the user is watching a scary movie and the physical changes related to fear, such as a high heart beat rate or a high blood pressure, are submitted to the emotion model means 5, and the emotional state of fear is afterwards submitted to the editing means 6, then the multimedia system 8 may adapt the environment by dimming down the light or increasing the sound in order to emphasise the emotion felt by the user.
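The fear example can be sketched as a small rule that maps a detected emotional state to new environment settings. The `Environment` class, the percentage scales and the step sizes are hypothetical and serve only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    light_pct: int   # room light level, 0..100
    volume_pct: int  # loudspeaker volume, 0..100


def adapt_environment(env, emotion):
    """Return environment settings that emphasise the detected emotion.
    Only the fear case from the description is covered here."""
    if emotion == "fear":
        return Environment(
            light_pct=max(0, env.light_pct - 30),     # dim down the light
            volume_pct=min(100, env.volume_pct + 20),  # increase the sound
        )
    return env  # other emotional states: leave the environment unchanged


current = Environment(light_pct=80, volume_pct=50)
adapted = adapt_environment(current, "fear")
```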

FIG. 4 is an overview of the different applications of the system of the present invention. Here, the emotion data and the multimedia contents are both fed to the editing unit 6 and then processed in order to obtain different applications. In the first possibility, embedding data is used to associate data with the digital content, and the obtained data is then processed to create a digital video digest, e.g. a short video digest, a preview video digest or a longer synthesis of the video. In a second possibility, emotion data and the digital stream are used to produce a different view on the fly. In a third possibility, the quality adaptive mechanism is implemented, and in this case the multimedia content is processed using the emotion information to produce a changing video stream.

FIG. 5 is an overview of the different implementations of the system, especially of the emotion model means 5. As already explained, the system mainly consists of the acquisition system 2, the processing means 3, the transformation means 4 and the editing unit 6. The different parts of the system according to the present invention can be located remotely from each other. In implementation a), the acquisition system 2 is placed directly at the user 1, and the processing means 3 and the transformation means 4 are placed remote from the acquisition means 2, which only submits physical parameters. In this case the acquisition system may be a simple bracelet which transmits the bio or haptic data to the processing means 3 and transformation means 4, which then further process these data to detect the emotional state of the user 1. In implementation b), the acquisition system 2, the processing means 3 and the transformation means 4 are all located near the user 1. Here, all these means may be implemented in a smart wearable bracelet or another wearable system transmitting the emotions already detected and processed to the editing unit 6 located remotely. In implementation c), no data are transmitted from the user 1; instead, the acquisition system 2 is located remotely from the user. In this case an acquisition system 2 such as cameras, microphones or the like may be used. A combination of the implementations a) to c) is also possible.