U.S. Patent No. 6,558,257: Imaging Processing Apparatus and Image Processing Method

Summary:

The ‘257 patent describes a system which can be used in sports games such as baseball where player movement can be tracked by a position indicator. Whenever a ball is hit off the bat the outfielder should react to the ball. If the player can move his character in position to catch the ball, then the invention enacts a sequence which allows the player to catch the ball. If the player misjudges the location of the ball, however, the character is more apt to drop the ball or miss the catch.

Abstract:

In order to realize the smooth display of a fielder’s ball-catching movement, determination of a collision between a batted ball and a fence by an easy method, and an accurate hidden-face treatment for polygons which are located very close to each other, virtual area producing means 31 is provided to produce a collision area for collision determination at a position away from the picture of a ball for a predetermined distance, and determining means 32 is provided to determine at which position in the collision area a fielder is located. When it is determined that the fielder is located in the collision area, picture changing means 33 gradually changes the posture of the fielder from a waiting state to a ball-catching state.

Illustrative Claim:

1. A picture processing device comprising: coordinate converting means for projecting a group of polygons represented in a three-dimensional coordinate system on a two-dimensional coordinate system; and hidden face treatment means for determining a display order of the polygon group relative to other polygons projected on the two-dimensional coordinate system, wherein the display order is determined based on dimensions of the depth-directional coordinate values of said three-dimensional coordinate system in a display screen and based on the depth-directional coordinate values of a representative polygon within the polygon group, and wherein the hidden face treatment means includes means for displaying polygons within the polygon group in accordance with a predetermined description order when the display order of the polygon group indicates that the polygon group is to be displayed.

Illustrative Figure

Description

BEST MODE FOR CARRYING OUT THE INVENTION

The present invention is hereinafter explained in more detail by referring to the attached drawings.

(First Embodiment)

I. Structure

FIG. 1 is an exterior view of a video game machine which utilizes the picture processing device of a first embodiment of this invention. In this figure, a main frame 1 of the video game machine has a substantial box shape, inside of which substrates and other elements for game processing are provided. Two connectors 2 a are provided at the front side of the main frame 1 of the video game machine, and PADs 2 b are connected to these connectors 2 a through cables 2 c . When two players play a baseball game or other game, two PADs 2 b are used.

At the top of the main frame 1 of the video game machine, a cartridge I/F 1a for connection to a ROM cartridge and a CD-ROM drive 1b for reading a CD-ROM are provided. At the back of the main frame 1, a video output terminal and an audio output terminal (not shown) are provided. The video output terminal is connected to a video input terminal of a TV picture receiver 5 through cable 4a, and the audio output terminal is connected to an audio input terminal of the TV picture receiver 5 through cable 4b. With this video game machine, a user can play a game by operating PAD 2b while watching a screen displayed on the TV picture receiver 5.

FIG. 2 is a block diagram showing the outline of the video game machine of this invention. This picture processing device is composed of a CPU block 10 for controlling the entire device, a video block 11 for controlling the display of a game screen, a sound block 12 for generating sound effects, etc., subsystem 13 for reading CD-ROM and other elements.

The CPU block 10 is composed of SCU (System Control Unit) 100, a main CPU 101, RAM 102, ROM 103, cartridge I/F 1a, sub-CPU 104, CPU bus 105 and other elements. The main CPU 101 controls the entire device. This main CPU 101 has a built-in arithmetic function similar to that of a DSP (Digital Signal Processor) and is capable of executing application software at high speed. RAM 102 is used as a work area for the main CPU 101. An initialization program and other programs are written in ROM 103. SCU 100 controls buses 105, 106 and 107 to perform smooth data input and output between the main CPU 101, VDPs 120 and 130, DSP 140, and CPU 141. SCU 100 contains a DMA controller and can thereby transfer sprite data in a game to VRAM in the video block 11. Accordingly, it is possible to execute application software such as a game at high speed. The cartridge I/F 1a is used to input application software which is supplied in the form of a ROM cartridge.

The sub-CPU 104 is the so-called SMPC (System Manager & Peripheral Control) and has functions, for example, to collect peripheral data from PAD 2 b through connector 2 a upon request from the main CPU 101 . The main CPU 101 performs processing, for example, to move a fielder in a game screen in accordance with the peripheral data received from sub-CPU 104 . Optional peripherals, including PAD, a joy stick and a keyboard, can be connected to connector 2 a . Sub-CPU 104 has functions to automatically recognize the type of a peripheral connected to connector 2 a (terminal at the main frame side) and to collect peripheral and other data in accordance with a communication method corresponding to the type of the peripheral.

The video block 11 comprises VDP (Video Display Processor) 120 for drawing characters consisting of polygon data for a video game, and VDP 130 for, for example, drawing a background screen, synthesizing polygon picture data and the background picture, and performing a clipping processing. VDP 120 is connected to VRAM 121 and frame buffers 122 and 123 . Polygon drawing data which represent characters of the video game machine are sent from the main CPU 101 to SCU 100 and then to VDP 120 . The polygon drawing data are then written in VRAM 121 . The drawing data written in VRAM 121 are used for drawing in a drawing frame buffer 122 or 123 , for example, in the 16 or 8 bit/pixel format. The data drawn in the frame buffer 122 or 123 are sent to VDP 130 . The main CPU 101 gives information for drawing control to VDP 120 through SCU 100 . VDP 120 then executes a drawing processing by following the directions.

VDP 130 is connected to VRAM 131 and it is constructed in a manner such that the picture data from VDP 130 are outputted to encoder 160 through memory 132 . Encoder 160 adds synchronization signals, etc. to the picture data, thereby generating picture signals which are then outputted to the TV picture receiver 5 . Accordingly, a baseball game screen is displayed on the TV picture receiver 5 .

The sound block 12 is composed of DSP 140 for synthesizing sound by a PCM method or FM method, and CPU 141 for controlling DSP 140 . Sound data generated by DSP 140 are converted into two-channel signals by a D/A converter 170 , which are then outputted to speaker 5 b.

The subsystem 13 is composed of a CD-ROM drive 1 b , CD I/F 180 , CPU 181 , MPEG AUDIO 182 , MPEG VIDEO 183 and other elements. This subsystem 13 has functions, for example, to read in an application software supplied in the form of a CD-ROM and to reproduce animation. The CD-ROM drive 1 b reads data from a CD-ROM. CPU 181 performs processing such as control of the CD-ROM drive 1 b and correction of errors in the read data. The data read from a CD-ROM are supplied to the main CPU 101 through CD I/F 180 , bus 106 and SCU 100 and are utilized as an application software. MPEG AUDIO 182 and MPEG VIDEO 183 are devices for restoring data which are compressed in MPEG (Motion Picture Expert Group) standards. Restoration of MPEG compressed data, which are written in a CD-ROM, by using these MPEG AUDIO 182 and MPEG VIDEO 183 makes it possible to reproduce animation.

The structure of the picture processing device of this embodiment is hereinafter explained. FIG. 3 is a functional block diagram of the picture processing device which is composed of the main CPU 101 , RAM 102 , ROM 103 and other elements. In this figure, virtual area producing means 31 has a function to generate a collision area (virtual area) at a position ahead of the moving direction of a ball (first picture). Position determining means 34 determines the speed and height (position) of the ball and gives the determination results to the virtual area producing means 31 . Determining means 32 determines a position relationship between the collision area and a fielder and gives the determination results to picture changing means 33 . The picture changing means 33 changes the posture of the fielder (second picture) on the basis of the determination results (the position relationship between the collision area and the fielder) of the determining means 32 . Namely, once the fielder enters the collision area, the fielder moves to catch the ball.

FIG. 4 shows an example of a baseball game screen to be displayed by the video game machine of this embodiment. This baseball game can be executed by one or two persons. Namely, when there are two game players, the players take turns to play the defending team or the team at bat. When there is only one game player, the player takes to the field and goes to bat in turn by setting the computer (video game machine) as his/her competitor team. A scene corresponding to the progress of a game is displayed on display 5 in three-dimensional graphics. When a pitcher throws a ball, a scene is displayed as shown from the back of a batter. Immediately after the batter hits the ball, a scene mainly focused on fielders is displayed as shown in FIG. 4 .

Fielders J and K can be moved by operating PAD 2 b . Namely, when the game player operates PAD 2 b, the main CPU 101 first moves fielder J, who is located in the infield, among fielders J and K who are located in a direction toward which the ball 42 flies. If fielder J fails to catch the ball, the main CPU 101 moves fielder K in the outfield in accordance with the operation of PAD 2 b . Accordingly, a plurality of fielders can be moved by easy operation.

At the same time as batter 41 hits a ball, the main CPU 101 calculates the speed and direction of ball 42 and then calculates an estimated drop point 44 where ball 42 may drop on the basis of the calculation results obtained above. This estimated drop point 44 is actually displayed on the screen. When fielder J or K is moved near the estimated drop point 44 before ball 42 drops, fielder J or K can catch a fly.
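The drop-point estimate described above can be illustrated with a short sketch. The patent states only that the drop point is calculated from the ball's speed and direction; the drag-free projectile model, the constant `GRAVITY`, the function name `estimated_drop_point` and its parameters are all assumptions made for illustration.

```python
import math

GRAVITY = 9.8  # assumed units (m/s^2); the patent gives no physical constants


def estimated_drop_point(start_xyz, speed, elevation_deg, heading_deg):
    """Return the (x, y) ground point where a drag-free ball would land.

    Hypothetical model: simple projectile motion without air resistance.
    """
    x0, y0, z0 = start_xyz
    elevation = math.radians(elevation_deg)
    heading = math.radians(heading_deg)
    v_up = speed * math.sin(elevation)       # vertical speed component
    v_ground = speed * math.cos(elevation)   # speed along the ground
    # Positive root of z0 + v_up*t - GRAVITY*t^2/2 = 0 (time until landing).
    t = (v_up + math.sqrt(v_up ** 2 + 2.0 * GRAVITY * z0)) / GRAVITY
    return (x0 + v_ground * t * math.cos(heading),
            y0 + v_ground * t * math.sin(heading))
```

A game would recompute or simply keep this point while the ball is in flight; the sketch only covers the initial estimate made when the ball is hit.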

A virtual collision area 43 is located on the ground (standard plane picture) in the direction toward which the ball 42 flies (forward direction). This collision area 43 is used for collision determination between ball 42 and a fielder and is not actually displayed. When fielder J or K moves into the collision area 43 , fielder J or K can catch ball 42 . On the other hand, while fielder J or K is located outside the collision area 43 , fielder J or K does not move to catch the ball.

The collision area is hereinafter explained in more detail by referring to FIGS. 5 through 9 . FIG. 5 describes a position relationship between the collision area, ball and fielders. As shown in this figure, the collision area 43 is located on the ground away from and ahead of ball 42 for a predetermined distance. Namely, the collision area 43 moves on the ground to come ahead of ball 42 as ball 42 flies. The distance between the collision area 43 and ball 42 corresponds to a distance in which ball 42 moves in a period of time corresponding to twelve interrupts.

In this embodiment, one interrupt is generated for every frame (vertical retrace line cycle: 1/60 sec × 2 ≈ 33.3 msec). Therefore, a period of time corresponding to twelve interrupts is approximately 0.4 sec. Since the posture of fielder J or K changes every interrupt (every frame), the fielder can make movements of twelve scenes in a period of time corresponding to twelve interrupts. For example, as shown in FIG. 6, fielder J can execute movements of twelve scenes, turning toward the ball, between the time he enters the collision area 43 and begins the ball-catching movement and the time he completes it. Therefore, it is possible to display the fielder's ball-catching movement smoothly.
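The timing and lead-distance arithmetic can be sketched as follows. This is a minimal illustration rather than the patent's implementation: the names `FRAME_SEC`, `LEAD_FRAMES`, `lead_time_sec` and `collision_area_center` are hypothetical, and the sketch assumes the ball's ground velocity stays constant over the lead time, which the patent does not state.

```python
FRAME_SEC = 1.0 / 30.0   # one interrupt per frame, about 33.3 msec (from the text)
LEAD_FRAMES = 12         # the collision area leads the ball by twelve interrupts


def lead_time_sec(frames=LEAD_FRAMES, frame_sec=FRAME_SEC):
    """Time available for the ball-catching movement, in seconds."""
    return frames * frame_sec


def collision_area_center(ball_xy, ground_velocity_xy,
                          frames=LEAD_FRAMES, frame_sec=FRAME_SEC):
    """Ground position the ball would reach after `frames` interrupts.

    Assumption: constant ground velocity during the lead time.
    """
    t = frames * frame_sec
    return (ball_xy[0] + ground_velocity_xy[0] * t,
            ball_xy[1] + ground_velocity_xy[1] * t)
```

With a ball moving at 30 units per second along the x axis, the collision area center sits about 12 units ahead of the ball, which is the distance covered in roughly 0.4 sec.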

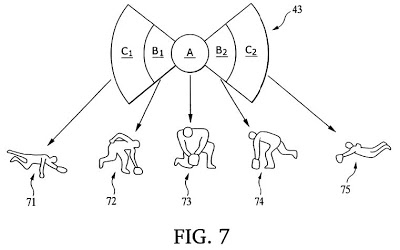

FIG. 7 describes the collision area 43 and the ball-catching postures. As shown in this figure, the collision area 43 is composed of areas A, B 1 , B 2 , C 1 and C 2 . The areas A, B 1 , B 2 , C 1 and C 2 respectively correspond to the fielder's ball-catching postures 71 - 75 . For example, when the fielder enters area A, the ball comes at the front of the fielder and, therefore, the fielder takes a ball-catching posture 73 . When the fielder enters area C 1 , the ball comes to the left side of the fielder, that is, the ball passes through area A and, therefore, the fielder takes a ball-catching posture 71 . The ball-catching postures 71 - 75 are examples of the postures when the height of the ball is low. An appropriate ball-catching posture is selected according to the height of the ball.

FIG. 8 is a top view of the collision area. As described above, the collision area 43 is composed of the areas A, B 1 , B 2 , C 1 and C 2 . The center area A is positioned along the ball flying path and is circular. Areas B 1 , B 2 , C 1 and C 2 respectively in a fan shape are provided in order outside of area A. Areas B 1 , B 2 , C 1 and C 2 successively disappear as the speed of the ball slows down. For example, when the ball bounds on the ground and the speed of the ball slows down, areas C 1 and C 2 first disappear.

As the speed of the ball further slows down, areas B 1 and B 2 disappear and only area A remains. In an actual baseball game, a fielder usually never jumps at a ball when the ball almost stops (see the ball-catching postures 71 and 75 in FIG. 7 ). Accordingly, it is possible to make the movement of the fielder in the screen closer to the movement of a real fielder by appropriately changing the size of the collision area 43 according to the speed of the ball.

An effective angle b of areas B 1 and B 2 and an effective angle c of areas C 1 and C 2 also change according to the speed of the ball and other factors. For example, when the speed of the ball is high, the fielder must be quickly moved to the position where the ball passes. If the area of the collision area 43 is small, it is very difficult to catch the ball. Therefore, in this case, the effective angles b and c are made wider and the collision area 43 is made larger, thereby reducing the difficulty in catching the ball which flies at a high speed.

FIG. 9 describes changes in the shape of the collision area 43 in accordance with the movement of the ball. After the ball is hit and until it stops, the collision area 43 passes through positions (a) through (d) in order. The position (a) indicates the position of the collision area immediately after the ball is hit. As described above, when the speed of the ball is high, the effective angles b and c are made wider in order to reduce the difficulty in catching a ball. On the other hand, when the speed of the ball slows down, the effective angles b and c are made narrower and the area of the collision area 43 is made smaller (position (b)).

As the ball slows down and reaches the position (c), areas C 1 and C 2 of the collision area 43 disappear. Immediately before the ball stops (position (d)), areas B 1 and B 2 of the collision area 43 disappear. Then, only the circular area A remains as the collision area 43 . Therefore, the fielder can catch the ball at his front. As described above, it is possible to reproduce realistic ball-catching movements of a fielder by changing the shape of the collision area 43 according to the speed of the ball.
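The way the collision area shrinks with ball speed, through positions (a) to (d), can be sketched as a simple lookup. The speed thresholds and the angle values below are purely hypothetical; the patent states only that the effective angles narrow and that areas C1 and C2, then B1 and B2, disappear as the ball slows down.

```python
def collision_area_shape(speed, fast=30.0, medium=15.0, slow=5.0):
    """Return the active areas and effective angles for a given ball speed.

    All thresholds and angle values are illustrative assumptions.
    """
    if speed >= fast:      # position (a): fast ball, widest effective angles
        return {"areas": ["A", "B1", "B2", "C1", "C2"],
                "angle_b": 60.0, "angle_c": 60.0}
    if speed >= medium:    # position (b): angles b and c are narrower
        return {"areas": ["A", "B1", "B2", "C1", "C2"],
                "angle_b": 30.0, "angle_c": 30.0}
    if speed >= slow:      # position (c): areas C1 and C2 disappear
        return {"areas": ["A", "B1", "B2"],
                "angle_b": 30.0, "angle_c": 0.0}
    # position (d): just before the ball stops, only circular area A remains
    return {"areas": ["A"], "angle_b": 0.0, "angle_c": 0.0}
```

Calling this once per interrupt with the current ball speed would reproduce the sequence of shapes described for FIG. 9.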

FIG. 10 shows the fielder's ball-catching postures according to the positions of the fielder in the collision area and the height of the ball. In this figure, the vertical axis indicates the height of the ball and the horizontal axis indicates the position of the fielder. The ball-catching postures 111-113 show the fielders jumping at the ball to catch it. The ball-catching posture 114 shows the fielder catching a fly. The ball-catching postures 115-119 show the fielders catching the ball at the height of their chest. The ball-catching postures 120-124 show the fielders taking a grounder. The ball-catching posture 125 shows the fielder jumping forward to catch the ball. Among these ball-catching postures, the ball-catching postures 115, 119, 120 and 124 show the fielder moving and catching the ball.

An appropriate ball-catching posture is selected in accordance with the position of the fielder in the collision area. For example, when the fielder is in area A and the ball is at a high position (a fly), the ball-catching posture 114 with a glove held upward is displayed. When the fielder is in area C 1 and the ball is at the fielder's chest height, the ball-catching posture 115 with the glove held toward the left side of the fielder is displayed. Accordingly, it is possible to provide a fully realistic baseball game by changing the fielder's ball-catching posture according to the position of the fielder in the collision area and the height of the ball.

II. Actions

Actions of the device for determining the picture position of this embodiment are hereinafter explained by referring to the flow-charts shown in FIGS. 11 and 12 .

FIG. 11 is a flow-chart showing actions of the video game machine which utilizes picture processing. This flow-chart is executed at every interrupt (every frame) on the condition that the ball is hit by a batter. First, the position determining means 34 determines the moving direction, angle and speed of the ball immediately after it is hit (at step S1). The virtual area producing means 31 then decides the shape (size and effective angles) of the collision area 43 according to the speed of the ball. For example, when the speed of the ball is high immediately after it is hit, the effective angle b of areas B1 and B2 and the effective angle c of areas C1 and C2 in the collision area 43 are made wider (FIGS. 8 and 9). The collision area 43 thereby determined is positioned on the ground away from and ahead of the ball for a predetermined distance. The distance between the collision area 43 and the ball corresponds to a distance in which ball 42 moves during a period of time corresponding to twelve interrupts. The collision area 43 is not actually displayed on the screen.

The virtual area producing means 31 matches the fielder's ball-catching posture with area A, B1, B2, C1 or C2 of the collision area 43 (at step S2). For example, as shown in FIG. 10, area A is matched with the ball-catching posture in which the fielder catches the ball at his front. Areas B1, B2, C1 and C2 are each matched with an appropriate ball-catching posture in which the fielder catches the ball on his side.

The determining means 32 selects one fielder (positioned near the ball) out of all fielders, who has a possibility to catch the ball, and calculates distance D between the fielder and the center position of the collision area 43 (at step S 3 ). In FIG. 1 , for example, if fielder J is selected, distance D between fielder J and the center position of the collision area 43 is calculated. If distance D is longer than a maximum radius of the collision area 43 , that is, if fielder J is positioned outside the collision area 43 (YES at S 4 ), the determining means 32 executes a processing of S 10 .

At step S 10 , the determining means 32 determines whether or not a fielder, other than fielder J, exists who has a possibility to catch the ball. If fielder K exists, other than fielder J, who has a possibility to catch the ball, the processing object is then turned to fielder K (at step S 9 ). Then the aforementioned processing of S 3 and S 4 is executed with regard to fielder K. If it is determined as a result of the above processing that distance D between fielder K and the center position of the collision area 43 is longer than the maximum size of the collision area 43 , processing S 10 is executed. At step S 10 , if the determining means 32 determines that no fielder exists, other than fielders J and K, who has a possibility to catch the ball (YES at S 10 ), the processing of this flow-chart terminates and returns to a main flow-chart which is not shown in this figure.

Subsequently, an interrupt is generated every frame and the above-described flow-chart of FIG. 11 is repeatedly executed. As time passes after the ball is hit, the ball moves and its speed, height, etc. change. The position determining means 34 determines the moving direction, angle and speed of the ball (at step S1), and the virtual area producing means 31 newly decides the shape (size and effective angles) of the collision area 43 according to the speed of the ball. For example, when the speed of the ball slows down, the effective angles b and c of the collision area 43 are made narrower.

Assuming that the game player operates PAD 2 b to cause fielder J to enter the collision area 43 , the determination result of step S 4 will become NO and the processing at S 5 and the following steps will be executed. The determining means 32 determines whether or not distance D is shorter than radius Ar of area A, that is, whether or not fielder J is in the area A (at step S 5 ). If the determination result is NO, the determining means 32 determines whether or not distance D is shorter than radius Br of area B 1 or B 2 (at step S 6 ). If the determination result is NO, it determines whether or not distance D is shorter than radius Cr of area C 1 or C 2 (at step S 7 ). Namely, the determining means 32 determines in which area of the collision area 43 fielder J is positioned at steps S 5 through S 7 .

For example, if the determining means 32 determines that fielder J is in area B 1 (YES at S 6 ), a subroutine of S 8 is executed.
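Steps S3 through S7 amount to comparing distance D against a series of radii. The sketch below is one possible reading, not the patent's code: the function name `classify_fielder` is hypothetical, and it assumes that radius Cr is the maximum radius of the collision area (which holds only while areas C1 and C2 are active). Distinguishing B1 from B2, or C1 from C2, needs the angle calculated later at step S81 and is not covered here.

```python
import math


def classify_fielder(fielder_xy, center_xy, radius_a, radius_b, radius_c):
    """Return "A", "B", "C", or None when the fielder is outside the area."""
    # S3: distance D between the fielder and the collision area center.
    d = math.hypot(fielder_xy[0] - center_xy[0],
                   fielder_xy[1] - center_xy[1])
    if d > radius_c:       # S4: D longer than the maximum radius -> outside
        return None
    if d < radius_a:       # S5: inside circular area A
        return "A"
    if d < radius_b:       # S6: inside area B1 or B2
        return "B"
    return "C"             # S7: inside area C1 or C2
```

For example, with radii 1, 2 and 3 around the origin, a fielder standing at (1.5, 0) falls in the B band, and one standing at (4, 0) is outside the collision area, so processing would move on to the next fielder.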

FIG. 12 shows the subroutine of S 8 . At step S 81 , the picture changing means 33 calculates an angle formed by the center point of the collision area 43 and the fielder J. The picture changing means 33 determines whether or not a ball-catching posture corresponding to the calculated angle is defined (at step S 82 ). If the ball-catching posture is not defined (NO at step S 82 ), the processing proceeds to the next fielder (at step S 86 ) and then returns to the main flow-chart shown in FIG. 11 . For example, if fielder J enters the left side (area B 1 ) of the collision area 43 , the ball-catching posture 115 shown in FIG. 10 is defined (YES at step S 82 ) and, therefore, the processing at step S 83 and the following steps are executed.

The picture changing means 33 decides an accurate ball-catching posture on the basis of information given by PAD (or stick) 2 b , the facing direction of the fielder, the height of the ball and other factors (at step S 83 ). If such determined ball-catching posture does not exist (NO at step S 84 ), the processing proceeds to the next fielder, for example, fielder K (at step S 86 ) and then returns to the main flow-chart shown in FIG. 11 . On the other hand, if the ball-catching posture determined at step S 83 exists (YES at step S 84 ), the posture of fielder J on the screen is changed to the determined ball-catching posture (at step S 85 ) and the processing then returns to the main flow-chart shown in FIG. 11 and terminates. After the fielder to catch the ball is determined in the above-described manner, the subroutine shown in FIG. 12 is not executed and a posture changing processing not shown in the drawings is executed at every interrupt. This posture changing processing gradually changes the posture of fielder J every frame. When twelve interrupts have passed after fielder J begins the ball-catching movement, the ball is caught by the glove of fielder J.

According to this embodiment, it is possible to make a fielder perform movements of twelve interrupts (twelve frames) after the fielder enters the collision area 43 , thereby making it possible to reproduce a realistic ball-catching movement. Moreover, it is possible to reproduce a fully realistic ball-catching movement by changing the ball-catching movement according to the position of the fielder in the collision area 43 .
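The gradual posture change over twelve interrupts could be realized in several ways. The sketch below uses linear blending of joint angles between the waiting posture and the chosen ball-catching posture. This is an assumption: the patent says only that the posture changes gradually every frame, and the pose representation (a dictionary of joint angles) and the name `posture_frames` are hypothetical.

```python
def posture_frames(waiting, catching, frames=12):
    """Return one pose per interrupt, ending exactly at `catching`.

    Poses are dicts mapping a joint name to an angle; both poses are
    assumed to contain the same joints.
    """
    poses = []
    for i in range(1, frames + 1):
        t = i / frames  # blend factor: 1/12 on the first frame, 1.0 on the last
        poses.append({joint: waiting[joint] + (catching[joint] - waiting[joint]) * t
                      for joint in waiting})
    return poses
```

Because the collision area leads the ball by twelve interrupts, the last pose in the list coincides with the frame in which the ball reaches the glove.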

(Second Embodiment)

A video game machine of a second embodiment has a function concerning the display of a player's number in addition to the functions of the video game machine of the first embodiment. This function is hereinafter explained by referring to FIGS. 13 and 14 .

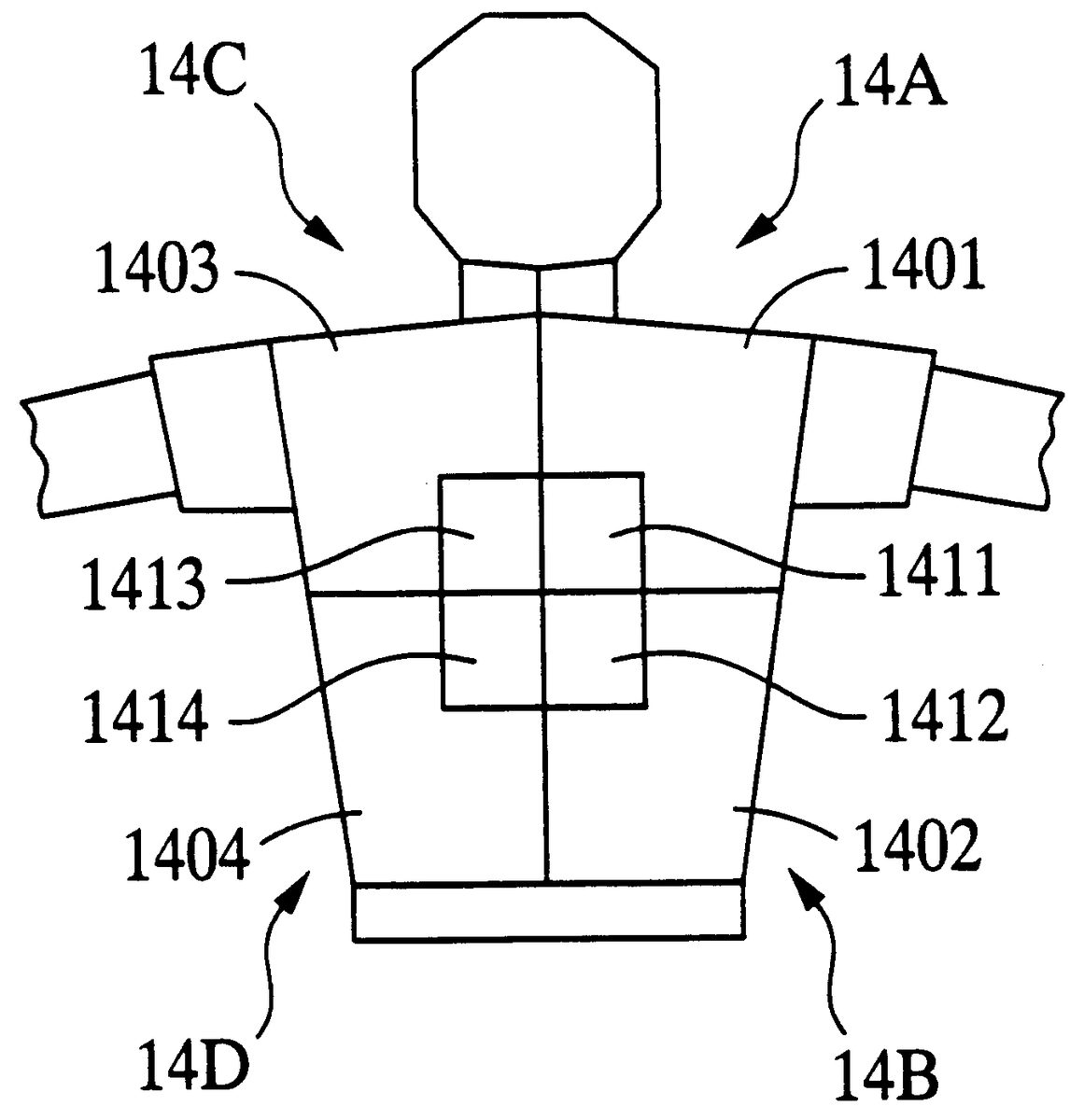

FIG. 14 describes a data structure of polygons representing the upper half of a player's body. In this figure, a uniform is composed of four polygon groups 14A, 14B, 14C and 14D. Each polygon group is composed of a polygon representing a part of the uniform and a polygon representing a part of the player's number. Namely, the polygon group 14A consists of polygon 1401 representing a quarter part of the uniform and polygon 1411 representing a quarter part of the player's number. Similarly, the polygon group 14B consists of polygons 1402 and 1412, the polygon group 14C consists of polygons 1403 and 1413, and the polygon group 14D consists of polygons 1404 and 1414.

The description order (priority order) of a polygon is set for each of the polygon groups 14 A, 14 B, 14 C and 14 D. For example, for the polygon group 14 A, the description order is decided in the order of the uniform polygon 1401 and then the player's number polygon 1411 . Also, a polygon having the highest description order in the respective polygon groups 14 A, 14 B, 14 C and 14 D is selected as the polygon representing each polygon group. Namely, the polygons 1401 , 1402 , 1403 and 1404 representing the uniform are respectively selected as the polygons representing the respective polygon groups 14 A, 14 B, 14 C and 14 D.

The display order of the polygon data having the above-described construction is hereinafter explained by referring to FIG. 13 . As shown in FIG. 13 (A), polygons 1401 - 1404 representing the uniform and polygons 1411 - 1414 representing the player's number are indicated with coordinates of a three-dimensional coordinate system. The main CPU 101 ( FIG. 2 ) performs the coordinate conversion of this three-dimensional coordinate system and generates a two-dimensional coordinate system shown in FIG. 13 (B). The coordinate conversion is conducted by projecting coordinates of each vertex of polygons 1401 - 1404 and 1411 - 1414 on the two-dimensional coordinate system.

The main CPU 101 determines the priority order of polygons 1401, 1402, 1403 and 1404 respectively representing the polygon groups 14A, 14B, 14C and 14D, as well as other polygons which represent the fielder's chest, arms, etc. For example, when the fielder faces forward, that is, when his chest faces forward, his back is positioned behind his chest. Namely, the Z-coordinate values of polygons 1401, 1402, 1403 and 1404 respectively representing the polygon groups 14A, 14B, 14C and 14D become larger than the Z-coordinate values of the polygons representing the player's chest. Accordingly, in this case, the entire polygon groups 14A, 14B, 14C and 14D are not displayed, that is, the player's back is hidden behind his chest.

On the other hand, if the fielder turns his back to the screen, the Z-coordinate values of polygons 1401, 1402, 1403 and 1404 respectively representing the polygon groups 14A, 14B, 14C and 14D become smaller than the Z-coordinate values of the polygons representing the player's chest. In this case, the polygon groups 14A, 14B, 14C and 14D are displayed with priority over the polygons representing the player's chest. For the respective polygon groups 14A, 14B, 14C and 14D, the polygons are displayed in the predetermined description order. For example, for the polygon group 14A, polygon 1411 representing the player's number is superimposed on polygon 1401 representing the uniform. In other words, the Z-coordinate values of the respective polygons in the same polygon group are not compared with each other (according to the Z-sorting method), but the polygons are displayed in the predetermined description order.

As mentioned above, the Z-coordinate values of the respective polygons in the same polygon group are not compared with each other, but the polygons are displayed in the predetermined description order. Therefore, even if two polygons, such as the uniform polygon and the player's number polygon, are positioned very close to each other, it is possible to perform an accurate hidden face treatment. For example, as shown in the invention described in claim 10 , the polygon representing the uniform and the polygon representing the player's number can be displayed accurately. Since the display order of the polygon group is decided on the basis of the Z-coordinate values of the polygon having the highest description order, it is possible to secure the compatibility between the hidden face treatment of this embodiment and the Z-sorting method.
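The grouped hidden face treatment can be sketched as a back-to-front sort of polygon groups by the Z value of each group's representative polygon, followed by drawing each group's polygons in their fixed description order. The data layout and the name `draw_order` are hypothetical, and the sketch assumes that a larger Z value means farther from the viewer, as the description implies.

```python
def draw_order(groups):
    """Return polygon ids in drawing order (farthest first).

    `groups` is a list of dicts with a "polygons" list of (polygon_id, z)
    tuples given in description order. The first polygon of each group is
    treated as its representative, as in the second embodiment.
    """
    # Sort groups by the representative polygon's Z only (Z-sorting).
    ordered = sorted(groups, key=lambda g: g["polygons"][0][1], reverse=True)
    # Inside a group, Z values are never compared: keep description order.
    return [pid for group in ordered for pid, _ in group["polygons"]]


chest = {"polygons": [("chest", 5.0)]}
# The number polygon 1411 has a marginally larger Z than uniform polygon 1401,
# which would hide the number under a plain per-polygon Z-sort.
group_14a = {"polygons": [("1401", 3.0), ("1411", 3.0001)]}
order = draw_order([group_14a, chest])
```

In this usage example the resulting order is chest, then 1401, then 1411, so the player's number is always drawn over the uniform even though its Z value is slightly larger.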

This embodiment is not limited to the display of the player's number on the uniform, but can be applied to, for example, a number on a racing car.

(Third Embodiment)

A video game machine of this embodiment has the function described below in addition to those of the aforementioned video game machine of the first embodiment. The video game machine of the third embodiment of the present invention is hereinafter explained by referring to FIG. 15.

FIG. 15 is an exterior view of a baseball field 1500 on the screen. A virtual center point 1502 is set behind second base, and a circular arc having a radius R from the center point 1502 and spanning the angle formed by two radius lines extending from the center point 1502 is displayed as an outfield fence 1501. In this figure, reference numeral 1503 indicates a ball hit by a batter. The main CPU 101 calculates the distance r between the center point 1502 and ball 1503 and also determines whether or not the angular position of the ball shown in the figure is within the angle of the arc. In addition to these two conditions, if the condition is satisfied that the height of ball 1503 is lower than the outfield fence 1501, the main CPU 101 determines that ball 1503 collides with the outfield fence 1501. Then, the main CPU 101 performs the processing of bouncing ball 1503 back off the outfield fence 1501, and the rebounding ball is shown on display 5.

According to this embodiment, a collision between the ball and the outfield fence can be determined simply by calculating the distance r, and it is not necessary to perform complicated processing for determining a collision between polygons. Therefore, the collision between the ball and the outfield fence can be determined easily.
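As a rough illustration of this distance-based test, the sketch below checks the ball against an arc-shaped fence. The function name `hits_fence` and all parameters are hypothetical. The sketch assumes angles in radians measured with `atan2`, an arc that does not wrap across ±π, and that the ball only strikes the fence when it is no higher than the fence top.

```python
import math


def hits_fence(ball_x: float, ball_y: float, ball_height: float,
               center_x: float, center_y: float, radius: float,
               arc_start: float, arc_end: float, fence_height: float) -> bool:
    """Return True when the ball is judged to have reached the fence arc."""
    dx = ball_x - center_x
    dy = ball_y - center_y
    r = math.hypot(dx, dy)      # distance r from the virtual center point
    angle = math.atan2(dy, dx)  # angular position of the ball around the center
    reached_radius = r >= radius
    within_arc = arc_start <= angle <= arc_end
    below_top = ball_height <= fence_height  # assumption: higher balls clear the fence
    return reached_radius and within_arc and below_top


# Fence: radius 100 around the origin, spanning 45 to 135 degrees, 3 units tall.
ARC = (math.pi / 4, 3 * math.pi / 4)
print(hits_fence(0.0, 100.0, 2.0, 0.0, 0.0, 100.0, ARC[0], ARC[1], 3.0))
print(hits_fence(0.0, 50.0, 2.0, 0.0, 0.0, 100.0, ARC[0], ARC[1], 3.0))
```

The whole check is one distance computation, one angle computation, and three comparisons, with no per-polygon intersection testing.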

(Other Embodiments)

The present invention is not limited to the above-described embodiments, but can be modified to the extent not departing from the intent of this invention. For example, this invention may be applied not only to a baseball game, but also to other games such as a soccer game or a tennis game.

Availability in the Technical Field

As described above, the following advantages can be obtained according to the present invention.

First, it is possible to display a smooth ball-catching movement. According to this invention, a collision area (virtual area) is generated at a position a predetermined distance away from a ball (first picture). Determining means determines whether or not a fielder (second picture) is located in the collision area. If it is determined that the fielder is in the collision area, picture changing means changes the posture (shape) of the fielder. For example, when the fielder enters the collision area, the fielder's posture gradually changes from the waiting state to the ball-catching state. Subsequently, when the ball reaches the fielder, the fielder's posture becomes the ball-catching state. Since the collision area for collision determination is located away from the ball according to this invention, it is possible to lengthen the time between the fielder entering the collision area and the ball reaching the fielder. Accordingly, it is possible to secure sufficient time between the start of the ball-catching movement and the completion of the catch, that is, the time required to change the fielder's posture. Therefore, it is possible to realize a smooth ball-catching movement.
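The timing argument above can be sketched in a few lines. This is only an illustration under stated assumptions: the collision area is modeled as a circle on the ground placed ahead of the ball along its direction of travel, and the posture change is modeled as a linear blend from 0.0 (waiting) to 1.0 (ball-catching). The names `collision_area_center`, `fielder_in_area`, and `catch_blend`, and the circular shape, are hypothetical.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]


def collision_area_center(ball_pos: Vec2, ball_dir: Vec2, lead_distance: float) -> Vec2:
    """Place the area a predetermined distance ahead of the ball."""
    norm = math.hypot(ball_dir[0], ball_dir[1])
    return (ball_pos[0] + ball_dir[0] / norm * lead_distance,
            ball_pos[1] + ball_dir[1] / norm * lead_distance)


def fielder_in_area(fielder_pos: Vec2, center: Vec2, area_radius: float) -> bool:
    """Assumed circular area; the actual area shape may differ."""
    return math.hypot(fielder_pos[0] - center[0],
                      fielder_pos[1] - center[1]) <= area_radius


def catch_blend(frames_since_entry: int, frames_available: int) -> float:
    """0.0 = waiting posture, 1.0 = ball-catching posture (linear blend)."""
    return min(1.0, frames_since_entry / frames_available)


center = collision_area_center((0.0, 0.0), (3.0, 4.0), 10.0)
if fielder_in_area((6.0, 9.0), center, 2.0):
    # The larger the lead distance, the more frames remain for the blend.
    for frame in (0, 10, 20):
        print(frame, catch_blend(frame, 20))
```

A larger `lead_distance` means the fielder enters the area earlier relative to the ball's arrival, which gives the blend more frames to complete.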

Moreover, the fielder's ball-catching posture is changed in accordance with the position of the fielder in the collision area. For example, when the fielder is in the center area of the collision area, the fielder facing the front to catch the ball is displayed. When the fielder is at the end of the collision area, the fielder turning right or left to catch the ball can be displayed. Accordingly, it is possible to display the fully realistic ball-catching movement.

Furthermore, a ball-catching movement very similar to real movement can be reproduced by changing the shape of the collision area according to the speed and position (height) of the ball. For example, when the ball is high above the ground (standard plane picture), the fielder catching a fly is displayed. On the other hand, when the ball is low, the fielder taking a grounder is displayed.
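One way to picture the posture selection described in the two paragraphs above is the following sketch. The function `select_catch_posture`, the threshold values, and the convention that a positive lateral offset means the ball passes to the fielder's right are all assumptions made for illustration; the source does not give concrete values.

```python
from typing import Tuple


def select_catch_posture(lateral_offset: float, half_width: float,
                         ball_height: float,
                         fly_height: float = 1.5,
                         center_fraction: float = 0.5) -> Tuple[str, str]:
    """Pick (catch type, facing) from the fielder's place in the collision area.

    lateral_offset: signed offset of the fielder from the area's center line.
    half_width: half the width of the collision area.
    fly_height, center_fraction: hypothetical tuning values.
    """
    # High ball: catch a fly. Low ball: take a grounder.
    kind = "fly" if ball_height >= fly_height else "grounder"

    # Center of the area: face front. Near either end: turn toward the ball.
    if abs(lateral_offset) <= half_width * center_fraction:
        facing = "front"
    elif lateral_offset > 0:
        facing = "right"
    else:
        facing = "left"
    return kind, facing


print(select_catch_posture(0.0, 2.0, 5.0))
print(select_catch_posture(1.8, 2.0, 0.2))
print(select_catch_posture(-1.8, 2.0, 0.2))
```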

Secondly, it is possible to determine a collision between a batted ball and a fence by means of a simple operation. According to this invention, a fence (curved-face picture) having a radius R from a center point is assumed and the distance r between the ball and the center point is calculated as appropriate. When distance r reaches distance R, it is determined that the ball collides with the fence, thereby easily enabling the collision determining processing.

Thirdly, it is possible to accurately perform the hidden face treatment of polygons which are positioned very close to each other. According to this invention, the Z-coordinate values of respective polygons in the same polygon group are not compared with each other, but the polygons are displayed in a predetermined description order. Accordingly, even if two polygons, such as a uniform polygon and a player's number polygon, are positioned very close to each other, the hidden face treatment can be performed accurately. Moreover, since the display order of a polygon group is decided based on an algorithm such as the Z-sorting method in the same manner as the display order of other polygons, it is possible to secure the compatibility between the hidden face treatment of this invention and the conventional hidden face treatment (for example, the Z-sorting method).

The aforementioned ROM 103 corresponds to the aforementioned memory medium. It may be mounted on the main frame of the game device, or it may be newly connected or applied to the main frame of the game device from outside the device.

Claims

1. A picture processing device comprising: coordinate converting means for projecting a group of polygons represented in a three-dimensional coordinate system on a two-dimensional coordinate system; and hidden face treatment means for determining a display order of the polygon group relative to other polygons projected on the two-dimensional coordinate system, wherein the display order is determined based on dimensions of the depth-directional coordinate values of said three-dimensional coordinate system in a display screen and based on the depth-directional coordinate values of a representative polygon within the polygon group, and wherein the hidden face treatment means includes means for displaying polygons within the polygon group in accordance with a predetermined description order when the display order of the polygon group indicates that the polygon group is to be displayed.

2. A picture processing device according to claim 1, further comprising means for selecting a polygon within the polygon group having the highest description order as the representative polygon.

3. A picture processing device according to claim 1, wherein said representative polygon within the polygon group represents a player's number and another polygon within the polygon group represents a uniform.

4. A picture processing method, comprising: projecting a group of polygons represented in a three-dimensional coordinate system on a two-dimensional coordinate system; determining a display order of the polygon group relative to other polygons projected on the two-dimensional coordinate system, wherein the display order is determined based on dimensions of the depth-directional coordinate values of said three-dimensional coordinate system in a display screen and based on the depth-directional coordinate values of a representative polygon within the polygon group; and displaying polygons within the polygon group in accordance with a predetermined description order when the display order of the polygon group indicates that the polygon group is to be displayed.

5. A memory medium for storing the order in which a processing device executes a method described in claim 4.

6. A computer readable medium having embodied thereon a computer program for processing by a computer, the computer program comprising: a first code segment to project a group of polygons represented in a three-dimensional coordinate system on a two-dimensional coordinate system; a second code segment to determine a display order of the polygon group relative to other polygons projected on the two-dimensional coordinate system based on dimensions of the depth-directional coordinate values of the three-dimensional coordinate system in a display screen and based on the depth-directional coordinate values of a representative polygon within the polygon group; and a third code segment to display polygons within the polygon group in accordance with a predetermined description order when the display order of the polygon group indicates that the polygon group is to be displayed.

7. The computer program of claim 6, further comprising a fourth code segment to select a polygon within the polygon group having the highest description order as the representative polygon within the polygon group.

8. The computer program of claim 6, wherein the representative polygon within the polygon group represents a player's number and another polygon within the polygon group represents a uniform.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.