U.S. Pat. No. 11,011,142

INFORMATION PROCESSING SYSTEM AND GOGGLE APPARATUS

Assignee: Nintendo Co., Ltd.

Issue Date: May 18, 2021

U.S. Patent No. 11,011,142: Information processing system and goggle apparatus

Issued May 18, 2021, to Nintendo Co Ltd

Filed: February 5, 2020 (claiming priority to February 27, 2019)

This Nintendo patent appears to relate to the Nintendo LABO virtual reality goggles kit.

Overview:

U.S. Patent No. 11,011,142 (the ’142 patent) relates to a goggle apparatus that connects with an information processing apparatus having a touch screen display to form virtual reality (VR) goggles. The ’142 patent describes a goggle apparatus to and from which the information processing apparatus is attachable and detachable. When the information processing apparatus is attached, a first lens lets the user's left eye view a first area of the touch screen, and a second lens does the same for the right eye with a second area. The goggle apparatus also has an opening portion, positioned at the user's nose, through which the user can touch a third area of the screen.

To achieve VR, a left-eye image is displayed in the first area of the touch screen, and a right-eye image having parallax with the left-eye image is displayed in the second area. If the third area is touched, the information processing apparatus executes a process. The goggles can therefore be used for VR while still accepting touch input through the nose opening, for example to let the user exit the VR display mode. The ’142 patent is likely one of several patents covering aspects of the Nintendo LABO, particularly the VR goggles that can be built with the kit.
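
The parallax between the two images can be made concrete with a small calculation. The sketch below is not taken from the patent: it assumes a simple pinhole model in which two virtual cameras are offset left and right by half an assumed eye separation, and the names `StereoParams`, `project_point`, and `disparity` are hypothetical. It shows that the horizontal offset between a point's left-eye and right-eye positions (the disparity) shrinks as the point moves farther away, which is the depth cue the two screen areas provide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StereoParams:
    """Hypothetical stereo settings (not specified in the patent)."""
    eye_separation: float  # distance between the two virtual cameras, in scene units
    focal_length: float    # projection scale, in pixels


def project_point(x: float, z: float, params: StereoParams) -> tuple[float, float]:
    """Return the horizontal image positions of a point for the left and right eye.

    The left camera sits at -eye_separation / 2 and the right camera at
    +eye_separation / 2; each applies a pinhole projection u = f * x_cam / z.
    """
    if z <= 0:
        raise ValueError("the point must lie in front of the cameras (z > 0)")
    half = params.eye_separation / 2
    u_left = params.focal_length * (x + half) / z   # x measured from the left camera
    u_right = params.focal_length * (x - half) / z  # x measured from the right camera
    return u_left, u_right


def disparity(z: float, params: StereoParams) -> float:
    """Left-minus-right offset, equal to f * e / z: larger for nearer points."""
    u_left, u_right = project_point(0.0, z, params)
    return u_left - u_right


if __name__ == "__main__":
    params = StereoParams(eye_separation=0.064, focal_length=500.0)
    for depth in (0.5, 1.0, 4.0):
        print(f"depth {depth}: disparity {disparity(depth, params):.2f} px")
```

Rendering the left-eye image into the first area and the right-eye image into the second area with this kind of offset is what lets the lenses fuse them into one stereoscopic view.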

Abstract:

In a goggle apparatus, an opening portion is formed, and in a state where an information processing apparatus is attached to the goggle apparatus, a touch operation can be performed on a third area different from a first area and a second area of a touch screen. Then, a left-eye image is displayed in the first area of the touch screen, and a right-eye image having parallax with the left-eye image is at least displayed in the second area of the touch screen. If a touch operation is performed on a position in the third area of the touch screen, a process is executed.

Illustrative Claim:

- An information processing system including an information processing apparatus having a touch screen configured to display an image, and a goggle apparatus to and from which the information processing apparatus is attachable and detachable, the goggle apparatus comprising: a first lens configured to, if the information processing apparatus is attached to the goggle apparatus, cause a left eye of a user wearing the goggle apparatus to visually confirm a first area of the touch screen; a second lens configured to, if the information processing apparatus is attached to the goggle apparatus, cause a right eye of the user wearing the goggle apparatus to visually confirm a second area different from the first area of the touch screen; and an opening portion configured to, in a state where the information processing apparatus is attached to the goggle apparatus, enable the user to perform a touch operation on a third area different from the first area and the second area of the touch screen, wherein the opening portion is formed at a position of a nose of the user in a state where the user wears the goggle apparatus, and the information processing apparatus comprising a computer configured to: display a left-eye image in the first area of the touch screen and at least display a right-eye image having parallax with the left-eye image in the second area of the touch screen; and if a touch operation is performed on a position in the third area of the touch screen, execute a process.
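
The division of the touch screen recited in the claim can be sketched as a hit test. This is a minimal illustration, not Nintendo's implementation: the 1280 x 720 resolution, the rectangle coordinates, and the names `Rect`, `Area`, `classify_touch`, and `handle_touch` are all assumptions chosen for the example. It places the third area as a strip at the bottom centre, roughly where a nose opening would sit, and calls a caller-supplied function only when a touch lands there.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional

SCREEN_W, SCREEN_H = 1280, 720  # assumed panel resolution in pixels


@dataclass(frozen=True)
class Rect:
    x: int
    y: int
    w: int
    h: int

    def contains(self, px: int, py: int) -> bool:
        return self.x <= px < self.x + self.w and self.y <= py < self.y + self.h


class Area(Enum):
    FIRST = "left-eye area"
    SECOND = "right-eye area"
    THIRD = "nose-opening area"


# Hypothetical layout (y grows downward): the third area is a bottom-centre
# strip, and the two eye areas fill the upper part of each half of the screen.
THIRD_RECT = Rect(540, 600, 200, SCREEN_H - 600)
FIRST_RECT = Rect(0, 0, SCREEN_W // 2, 600)
SECOND_RECT = Rect(SCREEN_W // 2, 0, SCREEN_W // 2, 600)


def classify_touch(px: int, py: int) -> Optional[Area]:
    """Map a touch position to one of the three areas, or None if outside all."""
    for area, rect in ((Area.THIRD, THIRD_RECT), (Area.FIRST, FIRST_RECT), (Area.SECOND, SECOND_RECT)):
        if rect.contains(px, py):
            return area
    return None


def handle_touch(px: int, py: int, on_third_area: Callable[[], None]) -> Optional[Area]:
    """Run the process for the third area only; touches elsewhere are ignored."""
    area = classify_touch(px, py)
    if area is Area.THIRD:
        on_third_area()
    return area
```

Checking the third area first keeps its bounds authoritative even if an eye rectangle were later enlarged to overlap it.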

Illustrative Figure

Description

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

An image display system according to an exemplary embodiment is described below. An example of the image display system according to the exemplary embodiment includes a game system 1 (as a minimum configuration, a main body apparatus 2 included in the game system 1) and a goggle apparatus 150. An example of the game system 1 includes a main body apparatus (an information processing apparatus; which functions as a game apparatus main body in the exemplary embodiment) 2, a left controller 3, and a right controller 4. Each of the left controller 3 and the right controller 4 is attachable to and detachable from the main body apparatus 2. That is, the game system 1 can be used as a unified apparatus obtained by attaching each of the left controller 3 and the right controller 4 to the main body apparatus 2. Further, in the game system 1, the main body apparatus 2, the left controller 3, and the right controller 4 can also be used as separate bodies (see FIG. 2). Hereinafter, first, the hardware configuration of the game system 1 according to the exemplary embodiment is described, and then, the control of the game system 1 according to the exemplary embodiment is described.

FIG. 1 is a diagram showing an example of the state where the left controller 3 and the right controller 4 are attached to the main body apparatus 2. As shown in FIG. 1, each of the left controller 3 and the right controller 4 is attached to and unified with the main body apparatus 2. The main body apparatus 2 is an apparatus for performing various processes (e.g., game processing) in the game system 1. The main body apparatus 2 includes a display 12. Each of the left controller 3 and the right controller 4 is an apparatus including operation sections with which a user provides inputs.

FIG. 2 is a diagram showing an example of the state where each of the left controller 3 and the right controller 4 is detached from the main body apparatus 2. As shown in FIGS. 1 and 2, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. It should be noted that hereinafter, the left controller 3 and the right controller 4 will occasionally be referred to collectively as a “controller”.

FIG. 3 shows six orthogonal views of an example of the main body apparatus 2. As shown in FIG. 3, the main body apparatus 2 includes an approximately plate-shaped housing 11. In the exemplary embodiment, a main surface (in other words, a surface on a front side, i.e., a surface on which the display 12 is provided) of the housing 11 has a generally rectangular shape.

It should be noted that the shape and the size of the housing 11 are optional. As an example, the housing 11 may be of a portable size. Further, the main body apparatus 2 alone or the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 may function as a mobile apparatus. The main body apparatus 2 or the unified apparatus may function as a handheld apparatus or a portable apparatus.

As shown in FIG. 3, the main body apparatus 2 includes the display 12, which is provided on the main surface of the housing 11. The display 12 displays an image generated by the main body apparatus 2. In the exemplary embodiment, the display 12 is a liquid crystal display device (LCD). The display 12, however, may be a display device of any type.

Further, the main body apparatus 2 includes a touch panel 13 on a screen of the display 12. In the exemplary embodiment, the touch panel 13 is of a type that allows a multi-touch input (e.g., a capacitive type). The touch panel 13, however, may be of any type. For example, the touch panel 13 may be of a type that allows a single-touch input (e.g., a resistive type). It should be noted that in the exemplary embodiment, the display 12 and the touch panel 13 provided on the screen of the display 12 are used as an example of a touch screen.

The main body apparatus 2 includes speakers (i.e., speakers 88 shown in FIG. 6) within the housing 11. As shown in FIG. 3, speaker holes 11a and 11b are formed on the main surface of the housing 11. Sounds from the speakers 88 are output through the speaker holes 11a and 11b.

Further, the main body apparatus 2 includes a left terminal 17, which is a terminal for the main body apparatus 2 to perform wired communication with the left controller 3, and a right terminal 21, which is a terminal for the main body apparatus 2 to perform wired communication with the right controller 4.

As shown in FIG. 3, the main body apparatus 2 includes a slot 23. The slot 23 is provided on an upper side surface of the housing 11. The slot 23 is so shaped as to allow a predetermined type of storage medium to be attached to the slot 23. The predetermined type of storage medium is, for example, a dedicated storage medium (e.g., a dedicated memory card) for the game system 1 and an information processing apparatus of the same type as the game system 1. The predetermined type of storage medium is used to store, for example, data (e.g., saved data of an application or the like) used by the main body apparatus 2 and/or a program (e.g., a program for an application or the like) executed by the main body apparatus 2. Further, the main body apparatus 2 includes a power button 28.

The main body apparatus 2 includes a lower terminal 27. The lower terminal 27 is a terminal for the main body apparatus 2 to communicate with a cradle. In the exemplary embodiment, the lower terminal 27 is a USB connector (more specifically, a female connector). Further, when the unified apparatus or the main body apparatus 2 alone is mounted on the cradle, the game system 1 can display on a stationary monitor an image generated by and output from the main body apparatus 2. Further, in the exemplary embodiment, the cradle has the function of charging the unified apparatus or the main body apparatus 2 alone mounted on the cradle. Further, the cradle has the function of a hub device (specifically, a USB hub).

The main body apparatus 2 includes an illuminance sensor 29. In the exemplary embodiment, the illuminance sensor 29 is provided in a lower portion of the main surface of the housing 11 and detects the illuminance (brightness) of light incident on the main surface side of the housing 11. It should be noted that an image can be displayed by setting the display 12 to an appropriate brightness in accordance with the illuminance of the light detected by the illuminance sensor 29. In the exemplary embodiment, based on the detected illuminance, it is determined whether or not the main body apparatus 2 is attached to the goggle apparatus 150 described below.
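
The patent states only that attachment to the goggle apparatus 150 is determined from the detected illuminance. One plausible way to do this, sketched here purely as an assumption, is to treat a sustained drop in brightness (the goggle covering the sensor) as attachment, using separate attach and detach thresholds plus a required run of samples so that momentary shadows do not toggle the state. The class name `GoggleAttachDetector` and all threshold values are hypothetical.

```python
class GoggleAttachDetector:
    """Decide goggle attachment from illuminance samples using hysteresis.

    Hypothetical sketch: thresholds and sample counts are illustrative only.
    """

    def __init__(self, attach_below_lux: float = 5.0, detach_above_lux: float = 20.0,
                 required_samples: int = 3) -> None:
        if attach_below_lux >= detach_above_lux:
            raise ValueError("attach threshold must be below detach threshold")
        if required_samples < 1:
            raise ValueError("required_samples must be at least 1")
        self.attach_below = attach_below_lux
        self.detach_above = detach_above_lux
        self.required = required_samples
        self.attached = False
        self._streak = 0  # consecutive samples that argue for a state change

    def update(self, lux: float) -> bool:
        """Feed one illuminance sample and return the (possibly updated) state."""
        if self.attached:
            wants_change = lux > self.detach_above  # goggle removed: light returns
        else:
            wants_change = lux < self.attach_below  # goggle covers the sensor: dark
        self._streak = self._streak + 1 if wants_change else 0
        if self._streak >= self.required:
            self.attached = not self.attached
            self._streak = 0
        return self.attached
```

The gap between the two thresholds is the design choice that matters here: readings that fall between them leave the current state unchanged.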

FIG. 4 shows six orthogonal views of an example of the left controller 3. As shown in FIG. 4, the left controller 3 includes a housing 31. In the exemplary embodiment, the housing 31 has a vertically long shape, i.e., is shaped to be long in an up-down direction (i.e., a y-axis direction shown in FIGS. 1 and 4). In the state where the left controller 3 is detached from the main body apparatus 2, the left controller 3 can also be held in the orientation in which the left controller 3 is vertically long. The housing 31 has such a shape and a size that when held in the orientation in which the housing 31 is vertically long, the housing 31 can be held with one hand, particularly the left hand. Further, the left controller 3 can also be held in the orientation in which the left controller 3 is horizontally long. When held in the orientation in which the left controller 3 is horizontally long, the left controller 3 may be held with both hands.

The left controller 3 includes an analog stick 32. As shown in FIG. 4, the analog stick 32 is provided on a main surface of the housing 31. The analog stick 32 can be used as a direction input section with which a direction can be input. The user tilts the analog stick 32 and thereby can input a direction corresponding to the direction of the tilt (and input a magnitude corresponding to the angle of the tilt). It should be noted that the left controller 3 may include a directional pad, a slide stick that allows a slide input, or the like as the direction input section, instead of the analog stick. Further, in the exemplary embodiment, it is possible to provide an input by pressing the analog stick 32.

The left controller 3 includes various operation buttons. The left controller 3 includes four operation buttons 33 to 36 (specifically, a right direction button 33, a down direction button 34, an up direction button 35, and a left direction button 36) on the main surface of the housing 31. Further, the left controller 3 includes a record button 37 and a “−” (minus) button 47. The left controller 3 includes a first L-button 38 and a ZL-button 39 in an upper left portion of a side surface of the housing 31. Further, the left controller 3 includes a second L-button 43 and a second R-button 44 on the side surface of the housing 31 on which the left controller 3 is attached to the main body apparatus 2. These operation buttons are used to give instructions depending on various programs (e.g., an OS program and an application program) executed by the main body apparatus 2.

Further, the left controller 3 includes a terminal 42 for the left controller 3 to perform wired communication with the main body apparatus 2.

FIG. 5 shows six orthogonal views of an example of the right controller 4. As shown in FIG. 5, the right controller 4 includes a housing 51. In the exemplary embodiment, the housing 51 has a vertically long shape, i.e., is shaped to be long in the up-down direction. In the state where the right controller 4 is detached from the main body apparatus 2, the right controller 4 can also be held in the orientation in which the right controller 4 is vertically long. The housing 51 has such a shape and a size that when held in the orientation in which the housing 51 is vertically long, the housing 51 can be held with one hand, particularly the right hand. Further, the right controller 4 can also be held in the orientation in which the right controller 4 is horizontally long. When held in the orientation in which the right controller 4 is horizontally long, the right controller 4 may be held with both hands.

Similarly to the left controller 3, the right controller 4 includes an analog stick 52 as a direction input section. In the exemplary embodiment, the analog stick 52 has the same configuration as that of the analog stick 32 of the left controller 3. Further, the right controller 4 may include a directional pad, a slide stick that allows a slide input, or the like, instead of the analog stick. Further, similarly to the left controller 3, the right controller 4 includes four operation buttons 53 to 56 (specifically, an A-button 53, a B-button 54, an X-button 55, and a Y-button 56) on a main surface of the housing 51. Further, the right controller 4 includes a “+” (plus) button 57 and a home button 58. Further, the right controller 4 includes a first R-button 60 and a ZR-button 61 in an upper right portion of a side surface of the housing 51. Further, similarly to the left controller 3, the right controller 4 includes a second L-button 65 and a second R-button 66.

Further, the right controller 4 includes a terminal 64 for the right controller 4 to perform wired communication with the main body apparatus 2.

FIG. 6 is a block diagram showing an example of the internal configuration of the main body apparatus 2. The main body apparatus 2 includes components 81 to 91, 97, and 98 shown in FIG. 6 in addition to the components shown in FIG. 3. Some of the components 81 to 91, 97, and 98 may be mounted as electronic components on an electronic circuit board and accommodated in the housing 11.

The main body apparatus 2 includes a processor 81. The processor 81 is an information processing section for executing various types of information processing to be executed by the main body apparatus 2. For example, the processor 81 may be composed only of a CPU (Central Processing Unit), or may be composed of a SoC (System-on-a-chip) having a plurality of functions such as a CPU function and a GPU (Graphics Processing Unit) function. The processor 81 executes an information processing program (e.g., a game program) stored in a storage section (specifically, an internal storage medium such as a flash memory 84, an external storage medium attached to the slot 23, or the like), thereby performing the various types of information processing.

The main body apparatus 2 includes a flash memory 84 and a DRAM (Dynamic Random Access Memory) 85 as examples of internal storage media built into the main body apparatus 2. The flash memory 84 and the DRAM 85 are connected to the processor 81. The flash memory 84 is a memory mainly used to store various data (or programs) to be saved in the main body apparatus 2. The DRAM 85 is a memory used to temporarily store various data used for information processing.

The main body apparatus 2 includes a slot interface (hereinafter abbreviated as “I/F”) 91. The slot I/F 91 is connected to the processor 81. The slot I/F 91 is connected to the slot 23, and in accordance with an instruction from the processor 81, reads and writes data from and to the predetermined type of storage medium (e.g., a dedicated memory card) attached to the slot 23.

The processor 81 appropriately reads and writes data from and to the flash memory 84, the DRAM 85, and each of the above storage media, thereby performing the above information processing.

The main body apparatus 2 includes a network communication section 82. The network communication section 82 is connected to the processor 81. The network communication section 82 communicates (specifically, through wireless communication) with an external apparatus via a network. In the exemplary embodiment, as a first communication form, the network communication section 82 connects to a wireless LAN and communicates with an external apparatus, using a method compliant with the Wi-Fi standard. Further, as a second communication form, the network communication section 82 wirelessly communicates with another main body apparatus 2 of the same type, using a predetermined communication method (e.g., communication based on a unique protocol or infrared light communication). It should be noted that the wireless communication in the above second communication form achieves the function of enabling so-called “local communication” in which the main body apparatus 2 can wirelessly communicate with another main body apparatus 2 placed in a closed local network area, and the plurality of main body apparatuses 2 directly communicate with each other to transmit and receive data.

The main body apparatus 2 includes a controller communication section 83. The controller communication section 83 is connected to the processor 81. The controller communication section 83 wirelessly communicates with the left controller 3 and/or the right controller 4. The communication method between the main body apparatus 2 and the left controller 3 and the right controller 4 is optional. In the exemplary embodiment, the controller communication section 83 performs communication compliant with the Bluetooth (registered trademark) standard with the left controller 3 and with the right controller 4.

The processor 81 is connected to the left terminal 17, the right terminal 21, and the lower terminal 27. When performing wired communication with the left controller 3, the processor 81 transmits data to the left controller 3 via the left terminal 17 and also receives operation data from the left controller 3 via the left terminal 17. Further, when performing wired communication with the right controller 4, the processor 81 transmits data to the right controller 4 via the right terminal 21 and also receives operation data from the right controller 4 via the right terminal 21. Further, when communicating with the cradle, the processor 81 transmits data to the cradle via the lower terminal 27. As described above, in the exemplary embodiment, the main body apparatus 2 can perform both wired communication and wireless communication with each of the left controller 3 and the right controller 4. Further, when the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 or the main body apparatus 2 alone is attached to the cradle, the main body apparatus 2 can output data (e.g., image data or sound data) to the stationary monitor or the like via the cradle.

Here, the main body apparatus 2 can communicate with a plurality of left controllers 3 simultaneously (in other words, in parallel). Further, the main body apparatus 2 can communicate with a plurality of right controllers 4 simultaneously (in other words, in parallel). Thus, a plurality of users can simultaneously provide inputs to the main body apparatus 2, each using a set of the left controller 3 and the right controller 4. As an example, a first user can provide an input to the main body apparatus 2 using a first set of the left controller 3 and the right controller 4, and simultaneously, a second user can provide an input to the main body apparatus 2 using a second set of the left controller 3 and the right controller 4.
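
As a rough illustration of several users each providing input with their own set of controllers, the sketch below pairs controller IDs into per-user sets and routes incoming button events to the owning user. The identifiers, the `ControllerRegistry` class, and the `(controller_id, button)` event format are hypothetical; the patent does not describe how sets are assigned or how inputs are routed.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class ControllerSet:
    user: int
    left_id: str
    right_id: str


class ControllerRegistry:
    """Hypothetical bookkeeping for several left/right controller sets."""

    def __init__(self) -> None:
        self._sets: List[ControllerSet] = []
        self._owner: Dict[str, int] = {}

    def register_set(self, left_id: str, right_id: str) -> int:
        """Assign a new user number to a left/right pair and return it."""
        if left_id in self._owner or right_id in self._owner:
            raise ValueError("controller is already assigned to a user")
        user = len(self._sets) + 1
        self._sets.append(ControllerSet(user, left_id, right_id))
        self._owner[left_id] = user
        self._owner[right_id] = user
        return user

    def user_for(self, controller_id: str) -> Optional[int]:
        return self._owner.get(controller_id)

    def route(self, events: Iterable[Tuple[str, str]]) -> Dict[int, List[str]]:
        """Group (controller_id, button) events by user, keeping arrival order."""
        by_user: Dict[int, List[str]] = {}
        for controller_id, button in events:
            user = self.user_for(controller_id)
            if user is None:
                continue  # events from unregistered controllers are dropped
            by_user.setdefault(user, []).append(button)
        return by_user
```

For example, after registering `("L1", "R1")` and `("L2", "R2")`, events arriving interleaved from both sets are separated into user 1 and user 2 inputs.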

The main body apparatus 2 includes a touch panel controller 86, which is a circuit for controlling the touch panel 13. The touch panel controller 86 is connected between the touch panel 13 and the processor 81. Based on a signal from the touch panel 13, the touch panel controller 86 generates, for example, data indicating the position where a touch input is provided. Then, the touch panel controller 86 outputs the data to the processor 81.

Further, the display 12 is connected to the processor 81. The processor 81 displays a generated image (e.g., an image generated by executing the above information processing) and/or an externally acquired image on the display 12.

The main body apparatus 2 includes a codec circuit 87 and speakers (specifically, a left speaker and a right speaker) 88. The codec circuit 87 is connected to the speakers 88 and a sound input/output terminal 25 and also connected to the processor 81. The codec circuit 87 is a circuit for controlling the input and output of sound data to and from the speakers 88 and the sound input/output terminal 25.

Further, the main body apparatus 2 includes an acceleration sensor 89. In the exemplary embodiment, the acceleration sensor 89 detects the magnitudes of accelerations along three predetermined axial directions (e.g., the xyz axes shown in FIG. 1). It should be noted that the acceleration sensor 89 may detect an acceleration along one axial direction or accelerations along two axial directions.

Further, the main body apparatus 2 includes an angular velocity sensor 90. In the exemplary embodiment, the angular velocity sensor 90 detects angular velocities about three predetermined axes (e.g., the xyz axes shown in FIG. 1). It should be noted that the angular velocity sensor 90 may detect an angular velocity about one axis or angular velocities about two axes.

The acceleration sensor 89 and the angular velocity sensor 90 are connected to the processor 81, and the detection results of the acceleration sensor 89 and the angular velocity sensor 90 are output to the processor 81. Based on the detection results of the acceleration sensor 89 and the angular velocity sensor 90, the processor 81 can calculate information regarding the motion and/or the orientation of the main body apparatus 2.
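
The patent does not say how orientation is computed from the two sensors. A common approach, swapped in here purely for illustration, is a complementary filter: integrate the angular velocity for short-term accuracy and blend in the tilt implied by gravity, as measured by the acceleration sensor, to cancel long-term gyro drift. The sketch tracks a single tilt angle; the axis convention (tilt about x, estimated from the y and z accelerations), the `alpha` weight, and the class name are assumptions.

```python
import math


def accel_tilt(ay: float, az: float) -> float:
    """Tilt angle (radians) about the x axis implied by the gravity vector."""
    return math.atan2(ay, az)


class ComplementaryFilter:
    """Single-axis orientation estimate blending gyro and accelerometer data."""

    def __init__(self, alpha: float = 0.98) -> None:
        if not 0.0 <= alpha <= 1.0:
            raise ValueError("alpha must be between 0 and 1")
        self.alpha = alpha  # weight given to the integrated gyro estimate
        self.angle = 0.0    # current tilt estimate, in radians

    def update(self, gyro_rate: float, ay: float, az: float, dt: float) -> float:
        """Advance the estimate by dt seconds and return the new angle."""
        gyro_angle = self.angle + gyro_rate * dt  # short-term: integrate angular velocity
        self.angle = self.alpha * gyro_angle + (1.0 - self.alpha) * accel_tilt(ay, az)
        return self.angle
```

With the device held still at a fixed tilt, the estimate converges to the accelerometer's angle; during fast motion the gyro term dominates, which is why `alpha` is usually close to 1.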

The illuminance sensor 29 is connected to the processor 81, and the detection result of the illuminance sensor 29 is output to the processor 81. Based on the detection result of the illuminance sensor 29, the processor 81 can calculate information regarding the brightness of the periphery of the main body apparatus 2.

The main body apparatus 2 includes a power control section 97 and a battery 98. The power control section 97 is connected to the battery 98 and the processor 81. Further, although not shown in FIG. 6, the power control section 97 is connected to components of the main body apparatus 2 (specifically, components that receive power supplied from the battery 98, the left terminal 17, and the right terminal 21). Based on a command from the processor 81, the power control section 97 controls the supply of power from the battery 98 to the above components.

Further, the battery 98 is connected to the lower terminal 27. When an external charging device (e.g., the cradle) is connected to the lower terminal 27, and power is supplied to the main body apparatus 2 via the lower terminal 27, the battery 98 is charged with the supplied power.

FIG. 7 is a block diagram showing examples of the internal configurations of the main body apparatus 2, the left controller 3, and the right controller 4. It should be noted that the details of the internal configuration of the main body apparatus 2 are shown in FIG. 6 and therefore are omitted in FIG. 7.

The left controller 3 includes a communication control section 101, which communicates with the main body apparatus 2. As shown in FIG. 7, the communication control section 101 is connected to components including the terminal 42. In the exemplary embodiment, the communication control section 101 can communicate with the main body apparatus 2 through both wired communication via the terminal 42 and wireless communication not via the terminal 42. The communication control section 101 controls the method for communication performed by the left controller 3 with the main body apparatus 2. That is, when the left controller 3 is attached to the main body apparatus 2, the communication control section 101 communicates with the main body apparatus 2 via the terminal 42. Further, when the left controller 3 is detached from the main body apparatus 2, the communication control section 101 wirelessly communicates with the main body apparatus 2 (specifically, the controller communication section 83). The wireless communication between the communication control section 101 and the controller communication section 83 is performed in accordance with the Bluetooth (registered trademark) standard, for example.
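
The attach-dependent choice of link can be expressed as a small dispatcher. In this minimal sketch the wired and wireless transports are passed in as plain callables, standing in for the terminal 42 and the Bluetooth link; the class name `CommunicationControl`, the `Link` enum, and the callable-based design are illustrative assumptions, not the controller's actual firmware interface.

```python
from enum import Enum
from typing import Callable


class Link(Enum):
    WIRED = "wired via terminal"
    WIRELESS = "wireless (e.g. Bluetooth)"


class CommunicationControl:
    """Hypothetical model of how a controller picks its link to the main body."""

    def __init__(self, wired_send: Callable[[bytes], None],
                 wireless_send: Callable[[bytes], None]) -> None:
        self._wired_send = wired_send
        self._wireless_send = wireless_send
        self.attached = False  # True while the controller is attached to the main body

    def set_attached(self, attached: bool) -> None:
        self.attached = attached

    def current_link(self) -> Link:
        # Attached: use the terminal. Detached: fall back to the wireless link.
        return Link.WIRED if self.attached else Link.WIRELESS

    def send(self, payload: bytes) -> Link:
        """Send payload over whichever link the attachment state selects."""
        link = self.current_link()
        sender = self._wired_send if link is Link.WIRED else self._wireless_send
        sender(payload)
        return link
```

Keeping the transports injectable means the same selection logic can be exercised with in-memory lists in place of real hardware.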

Further, the left controller 3 includes a memory 102 such as a flash memory. The communication control section 101 includes, for example, a microcomputer (or a microprocessor) and executes firmware stored in the memory 102, thereby performing various processes.

The left controller 3 includes buttons 103 (specifically, the buttons 33 to 39, 43, 44, and 47). Further, the left controller 3 includes the analog stick (“stick” in FIG. 7) 32. Each of the buttons 103 and the analog stick 32 outputs information regarding an operation performed on itself to the communication control section 101 repeatedly at appropriate timing.

The communication control section 101 acquires information regarding an input (specifically, information regarding an operation or the detection result of the sensor) from each of the input sections (specifically, the buttons 103, the analog stick 32, and the sensors 104 and 105). The communication control section 101 transmits operation data including the acquired information (or information obtained by performing predetermined processing on the acquired information) to the main body apparatus 2. It should be noted that the operation data is transmitted repeatedly, once every predetermined time. The interval at which the information regarding an input is transmitted from each of the input sections to the main body apparatus 2 may or may not be the same for every input section.

The above operation data is transmitted to the main body apparatus 2, whereby the main body apparatus 2 can obtain inputs provided to the left controller 3. That is, the main body apparatus 2 can determine operations on the buttons 103 and the analog stick 32 based on the operation data. Further, the main body apparatus 2 can calculate information regarding the motion and/or the orientation of the left controller 3 based on the operation data (specifically, the detection results of the acceleration sensor 104 and the angular velocity sensor 105).
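
To make the operation-data round trip concrete, the sketch below packs the left controller's pressed buttons and stick position into a small fixed-size packet, then decodes it again as the main body apparatus would. The bit assignments and the 6-byte little-endian layout are invented for the example (the patent does not disclose a format), and the readings of the acceleration sensor 104 and the angular velocity sensor 105 are omitted for brevity.

```python
import struct
from typing import Iterable, Set, Tuple

# Hypothetical bit positions for the left controller's buttons 103.
LEFT_BUTTON_BITS = {
    "right": 0, "down": 1, "up": 2, "left": 3,
    "record": 4, "minus": 5, "L": 6, "ZL": 7,
    "second_L": 8, "second_R": 9, "stick_press": 10,
}

# Hypothetical layout: uint16 button mask, int16 stick x, int16 stick y.
PACKET = struct.Struct("<Hhh")


def encode_operation_data(pressed: Iterable[str], stick_x: int, stick_y: int) -> bytes:
    """Build one operation-data packet (controller side)."""
    mask = 0
    for name in pressed:
        mask |= 1 << LEFT_BUTTON_BITS[name]  # KeyError for unknown button names
    return PACKET.pack(mask, stick_x, stick_y)


def decode_operation_data(packet: bytes) -> Tuple[Set[str], Tuple[int, int]]:
    """Recover pressed buttons and stick position (main body side)."""
    mask, x, y = PACKET.unpack(packet)
    pressed = {name for name, bit in LEFT_BUTTON_BITS.items() if mask & (1 << bit)}
    return pressed, (x, y)
```

Because every packet has the same size, a receiver that gets one packet per predetermined interval can decode each one independently.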

The left controller 3 includes a power supply section 108. In the exemplary embodiment, the power supply section 108 includes a battery and a power control circuit. Although not shown in FIG. 7, the power control circuit is connected to the battery and also connected to components of the left controller 3 (specifically, components that receive power supplied from the battery).

As shown in FIG. 7, the right controller 4 includes a communication control section 111, which communicates with the main body apparatus 2. Further, the right controller 4 includes a memory 112, which is connected to the communication control section 111. The communication control section 111 is connected to components including the terminal 64. The communication control section 111 and the memory 112 have functions similar to those of the communication control section 101 and the memory 102, respectively, of the left controller 3. Thus, the communication control section 111 can communicate with the main body apparatus 2 through both wired communication via the terminal 64 and wireless communication not via the terminal 64 (specifically, communication compliant with the Bluetooth (registered trademark) standard). The communication control section 111 controls the method for communication performed by the right controller 4 with the main body apparatus 2.

The right controller 4 includes input sections similar to the input sections of the left controller 3. Specifically, the right controller 4 includes buttons 113 and the analog stick 52. These input sections have functions similar to those of the input sections of the left controller 3 and operate similarly to the input sections of the left controller 3.

The right controller 4 includes a power supply section 118. The power supply section 118 has a function similar to that of the power supply section 108 of the left controller 3 and operates similarly to the power supply section 108.

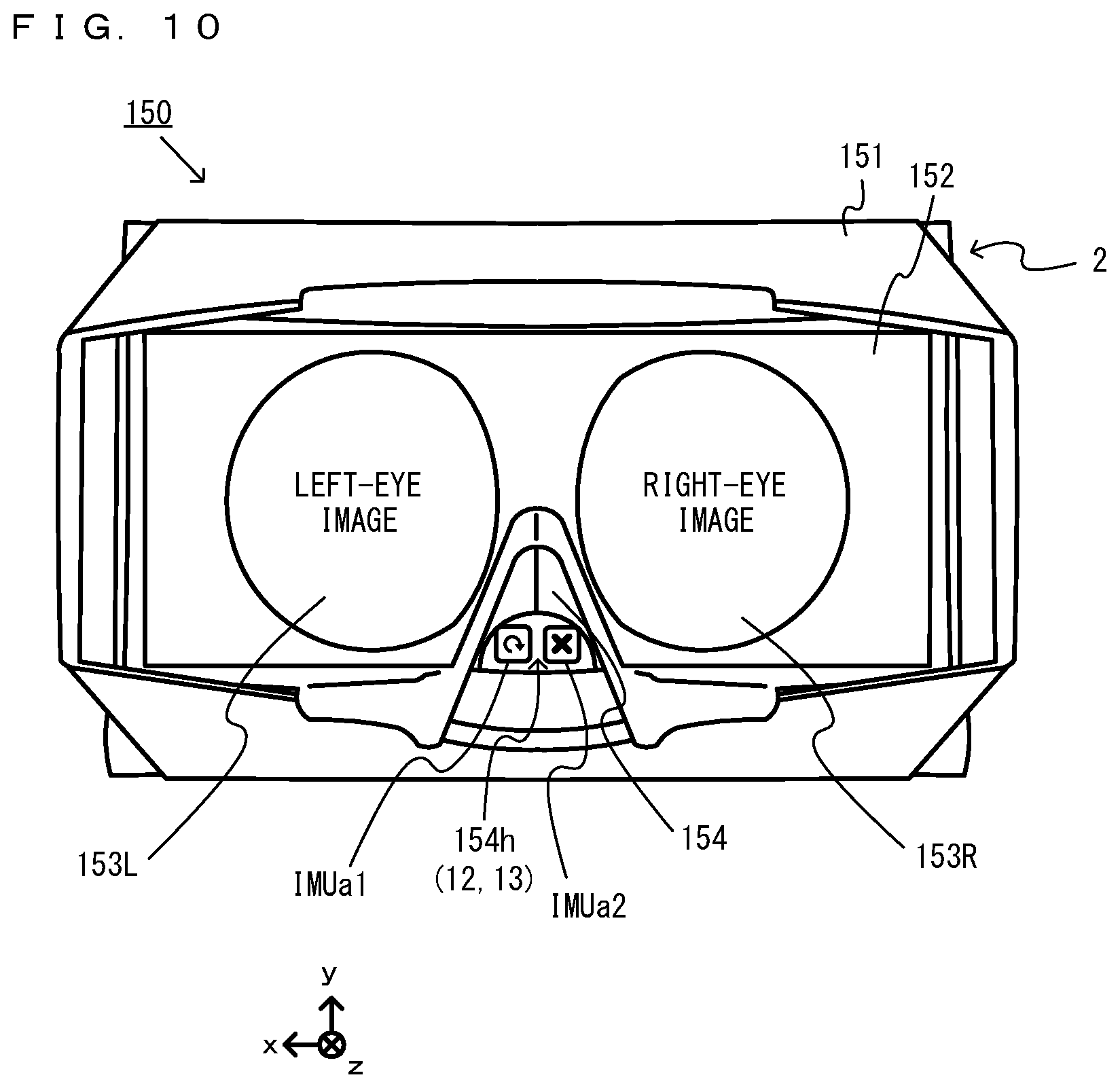

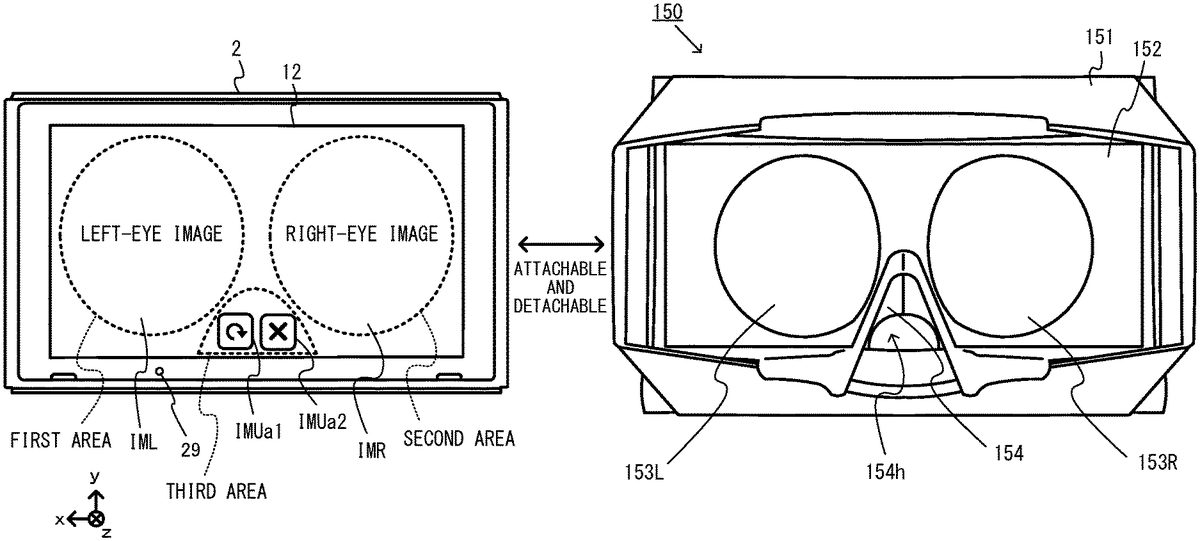

Next, with reference to FIGS. 8 to 14, a description is given of the goggle apparatus 150. The goggle apparatus 150 is an example of an apparatus that forms the image display system when the game system 1 (specifically, the main body apparatus 2) is attached to it. FIG. 8 is a perspective view showing an example of the external appearance of the goggle apparatus 150. FIG. 9 is a front view showing an example of the state where the main body apparatus 2 is attached to the goggle apparatus 150. FIG. 10 is a front view showing an example of the state of the main body apparatus 2 attached to the goggle apparatus 150. FIG. 11 is a diagram showing an example of the internal structure of the goggle apparatus 150. FIG. 12 is a side view showing an example of the state of the main body apparatus 2 attached to the goggle apparatus 150. FIG. 13 is a diagram showing an example of the state of a user viewing an image displayed by the image display system. FIG. 14 is a diagram showing an example of the state of the user holding the image display system.

In FIGS. 8 to 12, the goggle apparatus 150 includes a main body 151, a lens frame member 152, a lens 153, and a plate-like member 154. The goggle apparatus is an example of the apparatus included in the image display system. It is not limited to the configuration described below, so long as it meets three conditions: it is worn fitted to the user's face so as to cover the user's left and right eyes, it blocks at least part of the external light, and it supports stereoscopic viewing through a pair of lenses. For example, the goggle apparatus may be of a type used in any of various states:

- a goggle apparatus fitted to the user's face by the user holding it (a handheld goggle);
- a goggle apparatus fitted to the user's face by being fixed to the user's head;
- a goggle apparatus that the user looks into while it is set down.

Further, the goggle apparatus may function as a so-called head-mounted display by being worn on the user's head with the main body apparatus 2 attached. It may also have a helmet-like shape rather than a goggle-like shape. In the following description, the goggle apparatus 150 is a handheld goggle-type apparatus that the user wears fitted to the face by holding it.

The main body 151 includes an attachment portion to which the main body apparatus 2 is detachably fixed. The attachment portion holds the main body apparatus 2 by contacting its front surface, back surface, upper surface, and lower surface, and includes four abutment portions:

- a front surface abutment portion in surface contact with part of the front surface of the main body apparatus 2 (the surface on which the display 12 is provided);
- a back surface abutment portion in surface contact with the back surface of the main body apparatus 2;
- an upper surface abutment portion in surface contact with the upper surface of the main body apparatus 2;
- a lower surface abutment portion in surface contact with the lower surface of the main body apparatus 2.

The attachment portion is formed into an angular tube that encloses a gap surrounded by these four abutment portions. Both its left and right side surfaces are open: the side surface further in the positive x-axis direction shown in FIG. 8 and the side surface further in the negative x-axis direction shown in FIG. 8. The main body apparatus 2 can therefore be inserted from either its left side surface side or its right side surface side.

As shown in FIG. 9, the main body apparatus 2 may be attached to the goggle apparatus 150 from the opening on the right side surface side of the main body apparatus 2. In that case, each of the four abutment portions contacts the corresponding surface of the main body apparatus 2 (front, back, upper, and lower). In the front surface abutment portion of the main body 151, an opening portion is formed. With the main body apparatus 2 attached, this opening portion leaves at least the field of view for the display images on the display 12 (a left-eye image and a right-eye image) unobstructed.

As shown in FIGS. 9 and 12, the main body apparatus 2 is attached to the goggle apparatus 150 by sliding it into the gap of the attachment portion of the main body 151. It can be inserted from its left side surface side or its right side surface side and slides along the front surface, back surface, upper surface, and lower surface abutment portions of the attachment portion. Conversely, the attached main body apparatus 2 can be detached from the goggle apparatus 150 by sliding it to the left or right along the same abutment portions. In this way, the main body apparatus 2 can be detachably attached to the goggle apparatus 150.

The lens frame member 152 is fixed on the side of the opening portion formed in the front surface portion of the main body 151. It includes a pair of lens frames that are open so as not to obstruct the field of view for the display images on the display 12 of the main body apparatus 2 attached to the main body 151 (a left-eye image IML and a right-eye image IMR). Further, joint surfaces to be joined to the main body apparatus 2 are formed on the outer edges in the upper, lower, left, and right portions of the lens frame member 152. In the central portion of the lower outer edge, a V-shaped recessed portion is formed that comes into contact with the nose of the user wearing the goggle apparatus 150.

The lens 153 is an example of a first lens and a second lens included in the image display system. It includes a pair consisting of a left-eye lens 153L and a right-eye lens 153R, for example a pair of Fresnel lenses. The left-eye lens 153L and the right-eye lens 153R are fitted into the lens frames of the lens frame member 152.

Specifically, the left-eye lens 153L is fitted into the lens frame that leaves the field of view for the left-eye image IML unobstructed when the main body apparatus 2 is attached to the main body 151. When the user looks into the left-eye lens 153L with the left eye, the user can view the left-eye image IML. Likewise, the right-eye lens 153R is fitted into the other lens frame, which leaves the field of view for the right-eye image IMR unobstructed. When the user looks into the right-eye lens 153R with the right eye, the user can view the right-eye image IMR.

Typically, the left-eye lens 153L and the right-eye lens 153R are circular or elliptical magnifying lenses, and they may distort the images the user views. For example, the left-eye image IML (described below) may be displayed pre-distorted into a circular or elliptical shape. The left-eye lens 153L then distorts it in the opposite direction before the user sees it. The right-eye lens 153R may do the same for the right-eye image IMR (described below). Because the two distortions offset each other, the user can view the images stereoscopically. Further, the left-eye lens 153L and the right-eye lens 153R may be formed integrally.
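The paragraph describes images drawn pre-distorted so that the lens's own distortion offsets them. This pre-distortion is often modeled with a radial polynomial, and the sketch below applies one to a single point. The patent does not specify any distortion model. The coefficients `k1` and `k2`, the normalized lens-centered coordinates, and the function name are all hypothetical.

```python
from typing import Tuple

def predistort(x: float, y: float, k1: float = -0.22, k2: float = 0.02) -> Tuple[float, float]:
    """Map a normalized, lens-centered image point to where it should be drawn on the panel.

    Applies the radial polynomial r_d = r * (1 + k1*r^2 + k2*r^4). A negative k1 yields a
    barrel-shaped pre-distortion, intended to offset the pincushion distortion added by a
    magnifying lens. k1 and k2 are hypothetical, not measured lens data.
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2   # scale depends only on distance from the lens center
    return x * scale, y * scale            # direction from the center is preserved
```

Points farther from the lens center are pulled inward more strongly. This matches the idea of drawing the image distorted into a rounded shape for the lens to undo.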

The main body 151 includes an abutment portion that protrudes outward from the front surface side of the main body 151 and surrounds the outer edges of the lens frame member 152 in an angular tube shape. Viewed from outside the goggle apparatus 150, the protruding end surface of this abutment portion lies on the near side of the lens 153. With the main body apparatus 2 attached, this end surface is the part of the goggle apparatus 150 furthest toward the near side (furthest in the negative z-axis direction). It is shaped to fit the user's face (typically, the area around both eyes) when the user looks into the goggle apparatus 150 with the main body apparatus 2 attached. By abutting the user's face, the end surface fixes the positional relationship between the user's eyes and the lens 153.

Further, when the user views a three-dimensional image on the display 12 through the image display system, the abutment portion can block external light from reaching the left-eye lens 153L and the right-eye lens 153R. This improves the user's sense of immersion. The abutment portion does not need to block external light completely. For example, as shown in FIG. 14, a recess may be formed in part of the tubular abutment portion. The recess exemplified in FIG. 14 is formed below the midpoint between the left-eye lens 153L and the right-eye lens 153R, where the nose of the viewing user rests. The recess thus keeps the abutment portion from pressing hard against the user's nose. Even if the light-blocking effect deteriorates somewhat, the discomfort of contact between the abutment portion and the nose is reduced.

As shown in FIG. 10, the plate-like member 154 is fixed inside the main body 151. With the main body apparatus 2 attached to the attachment portion of the main body 151, it sits between the lens frame member 152 and the display 12. For example, part of the plate-like member 154 follows the shape of the V-shaped recessed portion of the lens frame member 152. This part forms a wall (hereinafter referred to as a “first wall portion”) connecting the recessed portion to the display 12 of the attached main body apparatus 2. The space surrounded by the first wall portion is an opening portion 154h. It exposes part of the display 12 of the attached main body apparatus 2 to the outside and serves as an operation window through which the user can perform touch operations on that part. Part of the first wall portion of the plate-like member 154 may be open, as shown in FIG. 11.

Further, as shown in FIG. 11, as an example, the plate-like member 154 stands vertically between the left-eye lens 153L and the right-eye lens 153R. It forms a wall (hereinafter referred to as a “second wall portion”) connecting the recessed portion to the display 12 of the main body apparatus 2 attached to the main body 151. With the main body apparatus 2 attached, the second wall portion lies between the left-eye image IML and the right-eye image IMR on the display 12 and acts as a division wall between them. The plate-like member 154 is formed by extending the first wall portion into the second wall portion, so the two wall portions are a single integrated member. This reduces the production cost of the plate-like member 154.

In FIGS. 10, 12, 13, and 14, the image display system is formed by attaching the main body apparatus 2 to the goggle apparatus 150. In the exemplary embodiment, the main body apparatus 2 is attached such that the goggle apparatus 150 covers it entirely. With the main body apparatus 2 attached, the user sees through the left-eye lens 153L only the left-eye image IML, displayed in the left area of the display 12. Through the right-eye lens 153R, the user sees only the right-eye image IMR, displayed in the right area. Thus, by looking through the left-eye lens 153L with the left eye and the right-eye lens 153R with the right eye, the user can visually confirm both images. Accordingly, if the left-eye image IML and the right-eye image IMR on the display 12 have parallax with each other, the user perceives a three-dimensional image with a stereoscopic effect.

As shown in FIGS. 13 and 14, the user can hold the image display system (the goggle apparatus 150 with the main body apparatus 2 attached) while viewing the three-dimensional image on the display 12. The user holds the left side portion of the goggle apparatus 150 with the left hand and the right side portion with the right hand. Holding both side portions in this way keeps the main body apparatus 2 stably attached.

Further, even with the main body apparatus 2 attached to the goggle apparatus 150, the user can touch part of the touch panel 13 provided on the screen of the display 12. This part is a third area of the display 12 (described below), reached through the opening portion 154h surrounded by the first wall portion of the plate-like member 154.

In addition, the main body apparatus 2 includes the acceleration sensor 89 and the angular velocity sensor 90. From the detection results of either or both, the image display system can calculate the motion and/or orientation of the main body apparatus 2, i.e., of the whole image display system including the goggle apparatus 150. The system can therefore:

- calculate the orientation, relative to the direction of gravity, of the head of the user looking into the goggle apparatus 150;
- calculate the direction or angle of any change in the orientation or direction of the user's head;
- detect vibration when the user hits the image display system.

Thus, while viewing the three-dimensional image through the left-eye lens 153L and the right-eye lens 153R, the user can combine four kinds of operation: touch operations through the opening portion 154h, operations based on the system's orientation relative to gravity, operations that change that orientation, and operations that vibrate the system.
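Two of the sensor-based operations above are measuring how far the user has turned and noticing when the user hits the unit. They can be sketched as follows. This minimal illustration assumes angular velocity in rad/s about a vertical axis and acceleration in m/s². The 1.5 g threshold and the function names are hypothetical.

```python
import math
from typing import Iterable, Sequence, Tuple

G = 9.81  # standard gravity, m/s^2

def heading_change_deg(yaw_rates: Iterable[float], dt: float) -> float:
    """Net change in heading (degrees) from angular-velocity samples in rad/s (rectangle-rule integration)."""
    return math.degrees(sum(rate * dt for rate in yaw_rates))

def detect_hit(samples: Sequence[Tuple[float, float, float]], threshold_g: float = 1.5) -> bool:
    """True if any acceleration sample deviates from 1 g by more than threshold_g (a tap or knock)."""
    for ax, ay, az in samples:
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        if abs(magnitude - G) > threshold_g * G:
            return True
    return False
```

The hit test compares the acceleration magnitude against 1 g rather than against zero. A unit at rest still reads gravity, so only the extra spike indicates a knock.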

When the image display system according to the exemplary embodiment is used, operations may also be performed with the left controller 3 and/or the right controller 4 detached from the main body apparatus 2. For example, the user may operate the system with the left controller 3. The user then holds the goggle apparatus 150 (with the main body apparatus 2 attached) in the right hand while viewing the three-dimensional image on the display 12. The detached left controller 3 is held alone in the left hand. Operation information for the detached controllers is sent to the main body apparatus 2 over wireless communication:

- For the left controller 3, it is transmitted from the communication control section 101 and received by the controller communication section 83 of the main body apparatus 2.
- For the right controller 4, it is transmitted from the communication control section 111 and received by the same controller communication section 83.

As described above, in the exemplary embodiment, attaching the main body apparatus 2 to the goggle apparatus 150 forms a portable image display system that the user holds while viewing a three-dimensional image. Further, the user views the image on the display 12 with the face against the goggle apparatus 150. The positional relationship between the user's ears and the stereo speakers of the main body apparatus 2 (the left speaker 88L and the right speaker 88R) is therefore also fixed, with each speaker near the corresponding ear. The main body apparatus 2 can thus output sounds based on the positional relationship between a sound output apparatus and the viewer's ears. The viewer does not need to use earphones or separate speakers. For example, the main body apparatus 2 can use so-called 3D audio effect technology to control a sound source based on that positional relationship.
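The patent mentions 3D audio without giving details. As a much simpler stand-in, the sketch below shows constant-power stereo panning. It only illustrates how a known, fixed left/right speaker layout lets per-channel gains be derived from a sound source's direction. It is not HRTF-based 3D audio, and the azimuth convention is an assumption.

```python
import math
from typing import Tuple

def constant_power_pan(azimuth_deg: float) -> Tuple[float, float]:
    """Left/right gains for a source at azimuth_deg (-90 = hard left, 0 = center, +90 = hard right)."""
    az = max(-90.0, min(90.0, azimuth_deg))       # clamp to the frontal half-plane
    theta = (az + 90.0) / 180.0 * (math.pi / 2)   # map [-90, 90] degrees onto [0, pi/2]
    return math.cos(theta), math.sin(theta)       # cos^2 + sin^2 = 1: total power stays constant
```

Keeping the sum of squared gains at 1 keeps loudness steady as a source moves across the stereo field. This works here because the speaker-to-ear geometry does not change while the goggle is held to the face.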

Next, with reference to FIGS. 9, 10, and 15, a description is given of images displayed on the main body apparatus 2. FIG. 15 is a diagram showing examples of images displayed on the main body apparatus 2 in a stereoscopic display mode and a non-stereoscopic display mode.

The image display system according to the exemplary embodiment is set to one of two modes:

- a stereoscopic display mode, used with the main body apparatus 2 attached to the goggle apparatus 150, in which the image on the display 12 is viewed stereoscopically;
- a non-stereoscopic display mode, used with the main body apparatus 2 detached from the goggle apparatus 150, in which the image on the display 12 is viewed directly and therefore non-stereoscopically.

The image display system displays the image corresponding to the set mode on the display 12 of the main body apparatus 2. Here, a stereoscopic image is viewed by looking at a right-eye image and a left-eye image that have parallax with each other, one with each eye. A non-stereoscopic image is any image other than such a two-image (stereoscopic) display. Typically, the user views a single image with both eyes.

In the stereoscopic display mode, the image display system forms the content image to be displayed (e.g., an image showing part of a virtual space or real space) from the left-eye image IML and the right-eye image IMR, which have parallax with each other. It displays the left-eye image IML in the left area of the display 12 and the right-eye image IMR in the right area. Specifically, as shown in FIG. 9, the left-eye image IML is displayed in a first area. This is an approximately elliptical part of the left area of the display 12 that is visible through the left-eye lens 153L when the main body apparatus 2 is attached to the goggle apparatus 150. Likewise, the right-eye image IMR is displayed in a second area, an approximately elliptical part of the right area of the display 12 visible through the right-eye lens 153R.
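To make the first and second areas concrete, the sketch below models each as an ellipse and classifies a panel pixel. The patent gives no dimensions. The 1280x720 resolution, the ellipse centers and radii, and all names are assumptions, chosen only so the two areas do not overlap.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Ellipse:
    cx: float
    cy: float
    rx: float
    ry: float

    def contains(self, x: float, y: float) -> bool:
        # Inside when ((x - cx) / rx)^2 + ((y - cy) / ry)^2 <= 1
        return ((x - self.cx) / self.rx) ** 2 + ((y - self.cy) / self.ry) ** 2 <= 1.0

W, H = 1280, 720  # assumed panel size in pixels, origin at top-left
FIRST_AREA = Ellipse(cx=W * 0.25, cy=H * 0.45, rx=280, ry=300)   # hypothetical: seen through the left-eye lens
SECOND_AREA = Ellipse(cx=W * 0.75, cy=H * 0.45, rx=280, ry=300)  # hypothetical: seen through the right-eye lens

def area_of(x: float, y: float) -> Optional[str]:
    """Which lens area a panel pixel belongs to, or None if neither lens shows it."""
    if FIRST_AREA.contains(x, y):
        return "first"
    if SECOND_AREA.contains(x, y):
        return "second"
    return None
```

A renderer built this way would draw IML only where `area_of` returns `"first"` and IMR only where it returns `"second"`. The pixels between the two ellipses would then fall behind the division wall.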

As described above, with the main body apparatus 2 attached to the goggle apparatus 150, the second wall portion of the plate-like member 154 lies between the left-eye image IML in the first area of the display 12 and the right-eye image IMR in the second area. The second wall portion therefore divides the two images as a division wall. This prevents the right-eye image IMR from being seen through the left-eye lens 153L and the left-eye image IML from being seen through the right-eye lens 153R.

As an example, the left-eye image IML and the right-eye image IMR are images of a virtual space viewed from a pair of virtual cameras (a left virtual camera and a right virtual camera). The two cameras are placed in the virtual space with parallax between them. The pair is positioned according to the orientation of the main body apparatus 2 relative to the direction of gravity in real space. When that orientation changes in real space, the pair of virtual cameras changes its orientation in the virtual space accordingly. The line of sight of the virtual cameras thus follows the orientation of the main body apparatus 2. Consequently, the user wearing the image display system can look around by changing the orientation of the main body apparatus 2 (the image display system). Doing so changes the displayed range of the stereoscopically viewed virtual space and lets the user survey it, as if actually standing at the location of the virtual cameras.

In the exemplary embodiment, the main body apparatus 2 applies two matches:

- the direction of gravity acting on the virtual cameras in the virtual space matches the direction of the gravitational acceleration acting on the main body apparatus 2;
- the change in the virtual cameras' line of sight matches, in amount, the change in the orientation of the main body apparatus 2.

This makes looking around the stereoscopically viewed virtual space by reorienting the main body apparatus 2 feel more realistic.
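A minimal sketch of placing the pair of virtual cameras from the device orientation follows. It assumes a y-up, left-handed coordinate frame, yaw and pitch in radians, and a hypothetical 64 mm eye separation (`ipd`). Roll is ignored for brevity, so this simplifies the full orientation tracking described above.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]

def camera_axes(yaw: float, pitch: float) -> Tuple[Vec3, Vec3]:
    """Forward and right unit vectors in a y-up, left-handed frame (z forward, x right).

    Positive yaw turns right, positive pitch looks up. Roll is ignored, so the right
    vector stays horizontal.
    """
    forward = (math.cos(pitch) * math.sin(yaw), math.sin(pitch), math.cos(pitch) * math.cos(yaw))
    right = (math.cos(yaw), 0.0, -math.sin(yaw))
    return forward, right

def stereo_cameras(head: Vec3, device_yaw: float, device_pitch: float, ipd: float = 0.064):
    """Left/right virtual camera poses sharing one line of sight, offset by half the eye separation."""
    # 1:1 mapping: the cameras rotate by exactly the angle the device rotated.
    forward, right = camera_axes(device_yaw, device_pitch)
    half = ipd / 2.0
    left_pos = tuple(h - half * r for h, r in zip(head, right))
    right_pos = tuple(h + half * r for h, r in zip(head, right))
    return (left_pos, forward), (right_pos, forward)
```

The device angles feed the camera axes directly, with no gain factor. This reflects the "same amount of change" matching described above.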

The image display system also displays on the display 12 a user interface image IMU for receiving touch operations on the touch panel 13 of the main body apparatus 2. For example, in the stereoscopic display mode, a user interface image IMUa is placed in the area of the display 12 that can be touched through the opening portion 154h of the goggle apparatus 150. As described above, the opening portion 154h is surrounded by the first wall portion of the plate-like member 154. It gives access, through the V-shaped recessed portion of the lens frame member 152 that rests on the user's nose, to part of the display 12 of the attached main body apparatus 2. Specifically, this is an area near the center of the lower portion of the display 12. As an example, as shown in FIG. 9, the opening portion 154h allows touch operations on a third area even with the main body apparatus 2 attached to the goggle apparatus 150. The third area is set in the lower portion of the display 12, between the first area and the second area.

For example, in the stereoscopic display mode, two user interface images IMUa1 and IMUa2 are displayed in the third area of the display 12. Each is an operation icon that issues an instruction when its display position is touched via the touch panel 13:

- touching IMUa1 instructs the game to retry from the beginning;
- touching IMUa2 instructs the game to end.

The two images IMUa1 and IMUa2 are displayed side by side in the third area, sized to fit its shape. Consequently, even with the main body apparatus 2 attached to the goggle apparatus 150, the user can give different operation instructions by touching either image through the opening portion 154h. IMUa1 and IMUa2 may also be displayed near, rather than strictly within, the third area, where part of the display 12 is exposed for touch operations. That is, parts of IMUa1 and/or IMUa2 may lie outside the third area.
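Routing a touch in the third area to one of the two icons is a rectangle hit test. The sketch below assumes a hypothetical third-area rectangle and splits it evenly between IMUa1 (retry) and IMUa2 (end). The coordinates and names are illustrative only.

```python
from dataclasses import dataclass
from typing import Dict, Optional

@dataclass(frozen=True)
class Rect:
    x: int
    y: int
    w: int
    h: int

    def contains(self, px: float, py: float) -> bool:
        return self.x <= px < self.x + self.w and self.y <= py < self.y + self.h

# Hypothetical third area: lower center of a 1280x720 panel, between the two lens areas.
THIRD_AREA = Rect(x=560, y=600, w=160, h=120)

def layout_icons(area: Rect) -> Dict[str, Rect]:
    """Split the third area into two side-by-side touch targets."""
    half = area.w // 2
    return {
        "retry": Rect(area.x, area.y, half, area.h),                 # IMUa1
        "end": Rect(area.x + half, area.y, area.w - half, area.h),   # IMUa2
    }

def handle_touch(px: float, py: float, area: Rect = THIRD_AREA) -> Optional[str]:
    """Return the instruction for a touch, or None if it misses both icons."""
    for action, rect in layout_icons(area).items():
        if rect.contains(px, py):
            return action
    return None
```

The icon sizes are derived from the area rectangle, so the two targets always fill the third area. This follows the text's point that the icons are sized to match its shape.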

In the stereoscopic display mode, the two user interface images IMUa1 and IMUa2 appear in the third area of the display 12 of the attached main body apparatus 2. The third area lies outside both the first area (viewed with the user's left eye) and the second area (viewed with the right eye). IMUa1 and IMUa2 are therefore outside the stereoscopic field of view of a user looking through the goggle apparatus 150. They are not made up of two images with parallax, so they are displayed as non-stereoscopic images. Further, because these touch targets sit outside the first and second areas that display the stereoscopic image, those two areas are less likely to be touched. This keeps the first and second areas from being smudged by touch operations. It also keeps the user's finger out of the field of view while the stereoscopic image is being viewed.

In another exemplary embodiment, the user interface images IMUa1 and IMUa2 may be displayed on the display 12 as a stereoscopic image in the stereoscopic display mode. In this case, each is made up of two images with parallax between them. Typically, one image of each pair is displayed in part of the first area and the other in part of the second area.

As shown in FIG. 15, in the non-stereoscopic display mode, the image display system forms the above content image from a single image IMS, a non-stereoscopic image. As an example, it displays the single image IMS over the entire display area of the display 12.

As an example, the single image IMS is an image of the virtual space viewed from a single virtual camera. The camera is positioned according to the orientation of the main body apparatus 2 relative to the direction of gravity in real space. When that orientation changes in real space, the single virtual camera changes its orientation in the virtual space accordingly. Its line of sight thus follows the orientation of the main body apparatus 2. Consequently, a user holding the main body apparatus 2 detached from the goggle apparatus 150 can look around by changing its orientation. Doing so changes the range of the virtual space shown on the display 12 and lets the user survey the space, as if actually standing at the location of the virtual camera.

In the exemplary embodiment, the non-stereoscopic display mode applies the same two matches:

- the direction of gravity acting on the virtual camera in the virtual space matches the direction of the gravitational acceleration acting on the main body apparatus 2;
- the change in the virtual camera's line of sight matches, in amount, the change in the orientation of the main body apparatus 2.

This likewise makes looking around the non-stereoscopically viewed virtual space by reorienting the main body apparatus 2 feel more realistic. In the exemplary embodiment, the single image IMS is used as an example of a non-stereoscopic image.

Further, in the non-stereoscopic display mode, a user interface image IMUb is displayed on the display 12, superimposed on, for example, the content image (the single image IMS). For example, as shown in FIG. 15, two user interface images IMUb1 and IMUb2 are displayed on the display 12 in this mode as well:

- IMUb1 corresponds to IMUa1 and is an operation icon that, when its display position is touched via the touch panel 13, instructs the game to retry from the beginning;
- IMUb2 corresponds to IMUa2 and, when touched, instructs the game to end.

Here, “corresponding” means that the image may differ from the user interface image IMUa in design and/or size but has the same function (e.g., touching it gives the same operation instruction). The user interface image IMUb displayed in the non-stereoscopic display mode may also share the design and size of the user interface image IMUa, not only its function. That is, IMUb may be identical to IMUa.

The two user interface images IMUb1 and IMUb2 are displayed in corner areas of the display 12 other than the third area (e.g., the upper left and upper right corner areas). In this mode, touch operations on IMUb1 and IMUb2 are not restricted to a particular area. These images can therefore be displayed larger than the user interface image IMUa of the stereoscopic display mode, in sizes and shapes that are easy to touch. They can also be placed where touching them is unlikely to impair the visibility of the content image (the single image IMS).

Based on the result of detecting whether or not the main body apparatus 2 is in an attached state where it is attached to the goggle apparatus 150, the image display system according to the exemplary embodiment can automatically switch between the stereoscopic display mode and the non-stereoscopic display mode. For example, the main body apparatus 2 is provided with the illuminance sensor 29, which detects the illuminance (brightness) of light incident on the main surface side of the housing 11. Based on the illuminance detected by the illuminance sensor 29, the main body apparatus 2 can detect whether or not it is in the attached state. Specifically, when the main body apparatus 2 is attached to the goggle apparatus 150, the illuminance detected by the illuminance sensor 29 decreases. Thus, a threshold that allows the decreased illuminance to be detected is set, and the attached state of the main body apparatus 2 is detected by determining whether or not the illuminance is greater than or equal to the threshold. Here, the attached state detected by the main body apparatus 2 based on the illuminance may technically be a halfway attached state, i.e., a stage before the main body apparatus 2 is completely attached to the goggle apparatus 150; the term "attached state" also covers such a halfway attached state.
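The threshold test described above can be sketched as follows. This is an illustrative sketch, not the patent's implementation: the threshold value `ATTACH_THRESHOLD_LUX` and the helper names `is_attached` and `select_display_mode` are hypothetical, since the patent specifies neither a value nor a unit. A reading below the threshold is treated as the (possibly halfway) attached state, and the stereoscopic mode is chosen only in that state.

```python
# Hypothetical threshold; the patent gives no concrete value or unit.
ATTACH_THRESHOLD_LUX = 5.0


def is_attached(illuminance_lux: float, threshold: float = ATTACH_THRESHOLD_LUX) -> bool:
    """Treat illuminance below the threshold as the attached state.

    Fitting the apparatus into the goggles shades the sensor, so a low reading
    is taken to mean "attached" (this also covers a halfway attached state).
    """
    return illuminance_lux < threshold


def select_display_mode(illuminance_lux: float) -> str:
    """Pick the display mode from a single illuminance reading."""
    return "stereoscopic" if is_attached(illuminance_lux) else "non_stereoscopic"


print(select_display_mode(0.8))    # shaded by the goggles -> stereoscopic
print(select_display_mode(250.0))  # ordinary room lighting -> non_stereoscopic
```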

When it is determined that the main body apparatus 2 is not attached to the goggle apparatus 150, the image display system according to the exemplary embodiment sets the display mode of the main body apparatus 2 to the non-stereoscopic display mode. On the other hand, when the main body apparatus 2 is set to the non-stereoscopic display mode and it is determined that the main body apparatus 2 has changed from a non-attached state, where it is not attached to the goggle apparatus 150, to the attached state, the main body apparatus 2 changes the displayed content image from a non-stereoscopic image to a stereoscopic image, thereby displaying the same content image on the display 12. As an example, when a single virtual camera is set in the virtual space to generate the single image IMS in the non-stereoscopic display mode, the main body apparatus 2 replaces the single virtual camera with the pair of virtual cameras (the left virtual camera and the right virtual camera) having parallax with each other, without changing the position or the direction of the line of sight of the single virtual camera, and uses the pair to display the left-eye image IML and the right-eye image IMR. The main body apparatus 2 thereby switches to generating a virtual space image in the stereoscopic display mode. 
Further, when the main body apparatus 2 is set to the stereoscopic display mode and it is determined that the main body apparatus 2 has changed from the attached state to the non-attached state, the main body apparatus 2 changes the displayed content image from a stereoscopic image to a non-stereoscopic image, thereby displaying the same content image as a non-stereoscopic image on the display 12. As an example, when the pair of virtual cameras is set in the virtual space to generate the left-eye image IML and the right-eye image IMR in the stereoscopic display mode, the main body apparatus 2 replaces the pair of virtual cameras with the single virtual camera, without changing the position or the direction of the line of sight of the pair, and uses it to display the single image IMS. The main body apparatus 2 thereby switches to generating a virtual space image in the non-stereoscopic display mode. In either direction of switching, a non-stereoscopic content image (virtual space image) "corresponding to" a stereoscopic content image, or a stereoscopic content image "corresponding to" a non-stereoscopic content image, means that the two differ only in whether they are stereoscopic. However, corresponding stereoscopic and non-stereoscopic content images may differ in display range; typically, the stereoscopic content image has a smaller display range than the non-stereoscopic content image.
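The camera swap described above can be illustrated with a minimal sketch. It assumes that "without changing the position and the direction of the line of sight" means the midpoint of the left and right cameras coincides with the single camera and all three share one viewing direction. The `Camera` type, the helpers `to_stereo_pair` and `to_single`, and the separation value `EYE_SEPARATION` are hypothetical illustrations, not names from the patent.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]

# Hypothetical eye separation in virtual-space units; not specified in the patent.
EYE_SEPARATION = 0.064


@dataclass(frozen=True)
class Camera:
    position: Vec3
    forward: Vec3  # direction of the line of sight
    right: Vec3    # unit vector pointing to the camera's right


def _shift(p: Vec3, d: Vec3, s: float) -> Vec3:
    """Return p + s * d."""
    return (p[0] + s * d[0], p[1] + s * d[1], p[2] + s * d[2])


def to_stereo_pair(single: Camera, separation: float = EYE_SEPARATION) -> tuple[Camera, Camera]:
    """Split one camera into a left/right pair centred on the original position.

    Both cameras keep the original line of sight; only their positions are
    offset sideways, which produces the parallax between IML and IMR.
    """
    half = separation / 2.0
    left = Camera(_shift(single.position, single.right, -half), single.forward, single.right)
    right = Camera(_shift(single.position, single.right, half), single.forward, single.right)
    return left, right


def to_single(left: Camera, right: Camera) -> Camera:
    """Merge a stereo pair back into one camera at the pair's midpoint."""
    mid = tuple((a + b) / 2.0 for a, b in zip(left.position, right.position))
    return Camera(mid, left.forward, left.right)
```

Because the pair is centred on the original camera, switching modes back and forth leaves the viewpoint unchanged, which keeps the same content image on screen across the switch.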

Further, when the display mode is switched, the image display system according to the exemplary embodiment changes the size, the shape, and the position of the user interface image IMU displayed on the display 12. For example, when the main body apparatus 2 is set to the non-stereoscopic display mode and it is determined that it has changed from the non-attached state to the attached state, the main body apparatus 2 changes the shapes of the user interface images IMUb1 and IMUb2, displayed in a superimposed manner on the content image in the corner areas of the display 12, into the user interface images IMUa1 and IMUa2, and moves their display positions to the third area of the display 12. A user interface image IMU having the same function is thus displayed. Conversely, when the main body apparatus 2 is set to the stereoscopic display mode and it is determined that it has changed from the attached state to the non-attached state, the main body apparatus 2 changes the shapes of the user interface images IMUa1 and IMUa2 displayed in the third area of the display 12 into the user interface images IMUb1 and IMUb2, and moves their display positions so that they are displayed in a superimposed manner on the content image in the corner areas of the display 12. Again, a user interface image IMU having the same function is displayed.
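One way to picture the relayout above is a per-mode table keyed by function, so that switching modes swaps icon geometry while keeping the same set of functions. This is a sketch under assumed geometry: the 1280x720 coordinates, `THIRD_AREA`, `UI_LAYOUTS`, and `relayout` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    x: int
    y: int
    w: int
    h: int

    def contains_rect(self, other: "Rect") -> bool:
        """True if `other` lies entirely inside this rectangle."""
        return (self.x <= other.x and self.y <= other.y
                and other.x + other.w <= self.x + self.w
                and other.y + other.h <= self.y + self.h)


# Hypothetical 1280x720 screen geometry; all coordinates are illustrative.
THIRD_AREA = Rect(560, 600, 160, 120)  # bottom centre, between the two lens areas

UI_LAYOUTS = {
    # IMUb1 / IMUb2: large icons in the upper corners, over the single image IMS
    "non_stereoscopic": {"retry": Rect(0, 0, 160, 120), "end": Rect(1120, 0, 160, 120)},
    # IMUa1 / IMUa2: compact icons packed into the third area
    "stereoscopic": {"retry": Rect(570, 630, 60, 60), "end": Rect(650, 630, 60, 60)},
}


def relayout(new_mode: str) -> dict[str, Rect]:
    """Return where each UI function is drawn after switching to new_mode.

    The set of functions (retry, end) is the same in both modes; only the
    size, shape and position of each icon change.
    """
    return dict(UI_LAYOUTS[new_mode])
```

Keying the layouts by function rather than by image makes the "same function" property easy to check: both modes must expose the same keys.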

It should be noted that the above exemplary embodiment uses an example where whether or not the main body apparatus 2 is attached to the goggle apparatus 150 is detected based on the illuminance detected by the illuminance sensor 29. Alternatively, the attached state may be detected based on another detection result. As an example, the attached state may be detected when a connection terminal provided in the main body apparatus 2 and a connection terminal provided in the goggle apparatus 150 become electrically connected as the main body apparatus 2 enters the attached state, or when a predetermined switch mechanism provided in the main body apparatus 2 is turned on or off as the main body apparatus 2 enters the attached state. As another example, the attached state may be detected by determining, from the image capturing result of image capturing means (an image sensor) provided in the main body apparatus 2, whether or not a predetermined image is captured or whether or not the captured luminance is greater than or equal to a threshold. As yet another example, when the main body apparatus 2 enters the attached state, or when the display mode is to be switched, the user may be prompted to perform a predetermined operation. 
Then, based on the predetermined operation having been performed, it may be determined whether or not the main body apparatus 2 is attached to the goggle apparatus 150, or which display mode has been selected.

Further, in the above exemplary embodiment, the third area is set in a lower portion of the display 12, below the center of the display 12 and between the first area and the second area, so that a touch operation can be performed on the third area. Alternatively, the third area may be set in another area of the display 12. As a first example, the third area may be set in an upper portion of the display 12, above the center of the display 12 and between the first area and the second area. As a second example, the third area may be set in an upper portion (i.e., the upper left corner area of the display 12) or a lower portion (i.e., the lower left corner area of the display 12) between the first area and the left end of the display 12. As a third example, the third area may be set in an upper portion (i.e., the upper right corner area of the display 12) or a lower portion (i.e., the lower right corner area of the display 12) between the second area and the right end of the display 12. Whichever area the third area is set in, the user interface image IMUa matching the shape of the third area is displayed in the third area, and the opening portion 154h that enables a touch operation on the third area is formed in the goggle apparatus 150, thereby enabling an operation similar to that described above. It should be noted that when the third area is set between the first area and the second area, it may be shifted to the left or the right of the midpoint between the first area and the second area.

Further, the above exemplary embodiment uses an example where a user interface image is displayed in the third area. Alternatively, no user interface image need be displayed in the third area. Even then, in the stereoscopic display mode, a predetermined process (e.g., the process of retrying a game from the beginning or the process of ending the game) is executed in accordance with a touch operation, made through the opening portion 154h, on a predetermined position in the third area or on any position in the entire third area. This provides effects similar to those obtained when the user interface image is displayed in the third area.
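The touch handling described above amounts to a hit test against the third area, with or without an icon drawn there. The sketch below shows both variants: the whole area triggers one process, or predetermined positions inside it trigger different processes. `THIRD_AREA`, the region lists, the process names, and `dispatch_touch` are hypothetical. Relocating the third area to one of the alternative positions in the preceding paragraph would only change the `THIRD_AREA` rectangle.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rect:
    x: int
    y: int
    w: int
    h: int

    def contains(self, px: int, py: int) -> bool:
        return self.x <= px < self.x + self.w and self.y <= py < self.y + self.h


# Hypothetical third area at the bottom centre of a 1280x720 screen.
THIRD_AREA = Rect(560, 600, 160, 120)

# Variant A: any touch in the third area triggers one process; no icon is drawn.
WHOLE_AREA = [(THIRD_AREA, "end_game")]
# Variant B: predetermined positions inside the third area trigger different processes.
SPLIT_AREA = [(Rect(560, 600, 80, 120), "retry_game"), (Rect(640, 600, 80, 120), "end_game")]


def dispatch_touch(px: int, py: int, regions: list[tuple[Rect, str]]) -> Optional[str]:
    """Return the process to run for a touch in stereoscopic mode, or None.

    With the goggles attached, the touch screen is reachable only through the
    opening portion, so touches outside the third area are ignored here.
    """
    if not THIRD_AREA.contains(px, py):
        return None
    for rect, process in regions:
        if rect.contains(px, py):
            return process
    return None
```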

Further, in the above exemplary embodiment, a user interface image is displayed in the third area, enabling a touch operation on it. Alternatively, a user interface image related to this user interface image may additionally be displayed in the first area and the second area. Here, a related user interface image is one having a function similar to that of the user interface image displayed in the third area, although at least one of its shape, size, and design may differ. The user interface image displayed in the first area and the second area is viewed stereoscopically through the goggle apparatus 150. For example, the user interface image can be set as the operation target when it is placed at the center of the stereoscopically viewed display area or overlaps a set indicator. Then, while the stereoscopically viewed user interface image is set as the operation target, if a vibration having a magnitude greater than or equal to a predetermined magnitude is imparted to the goggle apparatus 150 to which the main body apparatus 2 is attached, a process corresponding to that user interface image is executed. For example, a gravitational acceleration component is removed from the accelerations in the xyz-axis directions of the main body apparatus 2 detected by the acceleration sensor 89, and when the remaining accelerations indicate that a vibration having a magnitude greater than or equal to the predetermined magnitude has been applied to the main body apparatus 2, the process corresponding to the user interface image set as the operation target is executed. 
It should be noted that any method may be used to extract the gravitational acceleration. For example, the acceleration component generated on average in the main body apparatus 2 may be calculated and extracted as the gravitational acceleration.
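The gravity-removal step above can be sketched as a small detector. The patent leaves the averaging method open; this sketch assumes an exponential moving average as the "average" acceleration. The class name `VibrationDetector`, the smoothing factor `alpha`, and the threshold of 1.5 g are hypothetical choices, not values from the patent. Each raw xyz sample has the current gravity estimate subtracted, and a vibration is reported when the remaining magnitude reaches the threshold.

```python
import math


class VibrationDetector:
    """Detect a tap or shake on the goggles from 3-axis acceleration samples."""

    def __init__(self, threshold_g: float = 1.5, alpha: float = 0.1) -> None:
        self.threshold_g = threshold_g  # hypothetical threshold, in units of g
        self.alpha = alpha              # smoothing factor of the gravity estimate
        self.gravity = None             # current gravity estimate (x, y, z)

    def update(self, ax: float, ay: float, az: float) -> bool:
        """Feed one sample; return True if it counts as a vibration."""
        sample = (ax, ay, az)
        if self.gravity is None:
            self.gravity = sample       # seed the estimate with the first sample
            return False
        # Subtract gravity before updating the estimate, so a sudden spike is
        # not partly absorbed into the gravity estimate first.
        residual = [s - g for s, g in zip(sample, self.gravity)]
        magnitude = math.sqrt(sum(r * r for r in residual))
        # Exponential moving average: move the estimate a fraction toward the sample.
        self.gravity = tuple(g + self.alpha * (s - g) for s, g in zip(sample, self.gravity))
        return magnitude >= self.threshold_g
```

In use, each xyz reading from the acceleration sensor would be passed to `update`, and when it returns True while a user interface image is the operation target, the process for that image would be executed.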

Further, the left-eye image IML and the right-eye image IMR may also be displayed outside the display area of the display 12 that can be viewed through the left-eye lens 153L and the right-eye lens 153R (typically, outside the first area and/or outside the second area), and parts of the left-eye image IML and the right-eye image IMR may also be displayed in the third area, where a touch operation can be performed. Further, the left-eye image IML and the right-eye image IMR may be displayed in a range smaller than the display area of the display 12 that can be viewed through the left-eye lens 153L and the right-eye lens 153R (typically, the first area and/or the second area).

Next, with reference to FIGS. 16 and 17, a description is given of an example of a specific process executed by the game system 1 in the exemplary embodiment. FIG. 16 is a diagram showing an example of a data area set in the DRAM 85 of the main body apparatus 2 in the exemplary embodiment. It should be noted that in the DRAM 85, in addition to the data shown in FIG. 16, data used in other processes is also stored, but is not described in detail here.