U.S. Pat. No. 10,905,962

MACHINE-LEARNED TRUST SCORING FOR PLAYER MATCHMAKING

Assignee: Valve Corp

Issue Date: September 7, 2018

Abstract

A trained machine learning model(s) is used to determine scores (e.g., trust scores) for user accounts registered with a video game service, and the scores are used to match players together in multiplayer video game settings. In an example process, a computing system may access data associated with registered user accounts, provide the data as input to the trained machine learning model(s), and the trained machine learning model(s) generates the scores as output, which relate to probabilities of players behaving, or not behaving, in accordance with a particular behavior while playing a video game in multiplayer mode. Thereafter, subsets of logged-in user accounts executing a video game can be assigned to different matches based at least in part on the scores determined for those logged-in user accounts, and the video game is executed in the assigned match for each logged-in user account.

Description

DETAILED DESCRIPTION

Described herein are, among other things, techniques, devices, and systems for generating trust scores using a machine learning approach, and thereafter using the machine-learned trust scores to match players together in multiplayer video game settings. The disclosed techniques may be implemented, at least in part, by a remote computing system that distributes video games (and content thereof) to client machines of a user community as part of a video game service. These client machines may individually install a client application that is configured to execute video games received (e.g., downloaded, streamed, etc.) from the remote computing system. This video game platform enables registered users of the community to play video games as “players.” For example, a user can load the client application, log in with a registered user account, select a desired video game, and execute the video game on his/her client machine via the client application.

Whenever the above-mentioned users access and use this video game platform, data may be collected by the remote computing system, and this data can be maintained by the remote computing system in association with registered user accounts. Over time, one can appreciate that a large collection of historical data tied to registered user accounts may be available to the remote computing system. The remote computing system can then train one or more machine learning models using a portion of the historical data as training data. For instance, a portion of the historical data associated with a sampled set of user accounts can be represented by a set of features and labeled to indicate players who have behaved in a particular way in the past while playing a video game(s). A machine learning model(s) trained on this data is able to predict player behavior by outputting machine-learned scores (e.g., trust scores) for user accounts that are registered with the video game service. These machine-learned scores are usable for player matchmaking so that players who are likely to behave, or not behave, in accordance with a particular behavior can be grouped together in a multiplayer video game setting.

In an example process, a computing system may determine scores (e.g., trust scores) for a plurality of user accounts registered with a video game service. An individual score may be determined by accessing data associated with an individual user account, providing the data as input to a trained machine learning model(s), and generating, as output from the trained machine learning model, a score associated with the individual user account. The score relates to a probability of a player associated with the individual user account behaving, or not behaving, in accordance with a particular behavior while playing one or more video games in a multiplayer mode. Thereafter, the computing system may receive information from a plurality of client machines, the information indicating logged-in user accounts that are logged into a client application executing a video game, and the computing system may define multiple matches into which players are to be grouped for playing the video game in the multiplayer mode. These multiple matches may comprise at least a first match and a second match. The computing system may assign a first subset of the logged-in user accounts to the first match and a second subset of the logged-in user accounts to the second match based at least in part on the scores determined for the logged-in user accounts, and may cause a first subset of the client machines associated with the first subset of the logged-in user accounts to execute the video game in the first match, while causing a second subset of the client machines associated with the second subset of the logged-in user accounts to execute the video game in the second match.

The techniques and systems described herein may provide an improved gaming experience for users who desire to play a video game in multiplayer mode in the manner it was meant to be played. This is because the techniques and systems described herein are able to match together players who are likely to behave badly (e.g., cheat), and to isolate those players from other trusted players who are likely to play the video game legitimately. For example, the trained machine learning model(s) can learn to predict which players are likely to cheat, and which players are unlikely to cheat, by attributing corresponding trust scores to the user accounts that are indicative of each player's propensity to cheat (or not cheat). In this manner, players with low (e.g., below threshold) trust scores may be matched together, and may be isolated from other players whose user accounts were attributed high (e.g., above threshold) trust scores, leaving the trusted players to play in a match without any players who are likely to cheat. Although the use of a threshold score is described as one example way of providing match assignments, other techniques are contemplated, such as clustering algorithms, or other statistical approaches that use the trust scores to preferentially match user accounts (players) with “similar” trust scores together (e.g., based on a similarity metric, such as a distance metric, a variance metric, etc.).
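As one non-limiting illustration of the similarity-based alternative to a threshold, the following Python sketch sorts user accounts by trust score and cuts the sorted list into consecutive matches, so that each match holds accounts with neighboring scores. The function name, the account identifiers, and the fixed match size are hypothetical and are not drawn from the disclosure; a clustering algorithm or another distance metric could be substituted.

```python
from typing import Dict, List


def group_by_similar_score(scores: Dict[str, float], match_size: int) -> List[List[str]]:
    """Sort accounts by trust score and slice the sorted list into matches.

    Assumption: adjacency in the sorted order is used as the similarity
    metric (a one-dimensional distance on the score).
    """
    if match_size <= 0:
        raise ValueError("match_size must be positive")
    # Ties are broken by account ID so the result is deterministic.
    ordered = sorted(scores, key=lambda account: (scores[account], account))
    return [ordered[i:i + match_size] for i in range(0, len(ordered), match_size)]


# Hypothetical trust scores for six logged-in accounts.
scores = {"a": 0.91, "b": 0.12, "c": 0.88, "d": 0.20, "e": 0.95, "f": 0.15}
matches = group_by_similar_score(scores, match_size=3)
```

With these illustrative values, the three lowest-scored accounts land in one match and the three highest-scored accounts in the other.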

The techniques and systems described herein also improve upon existing matchmaking technology, which uses static rules to determine the trust levels of users. A machine-learning model(s), however, can learn to identify complex relationships of player behaviors to better predict player behavior, which is not possible with static rules-based approaches. Thus, the techniques and systems described herein allow for generating trust scores that more accurately predict player behavior, as compared to existing trust systems, leading to lower false positive rates and fewer instances of players being attributed an inaccurate trust score. The techniques and systems described herein are also more adaptive to changing dynamics of player behavior than existing systems because a machine learning model(s) is/are retrainable with new data in order to adapt the machine learning model(s)' understanding of player behavior over time, as player behavior changes. The techniques and systems described herein may further allow one or more devices to conserve resources with respect to processing resources, memory resources, networking resources, etc., in the various ways described herein.

It is to be appreciated that, although many of the examples described herein reference “cheating” as a targeted behavior by which players can be scored and grouped for matchmaking purposes, the techniques and systems described herein may be configured to identify any type of behavior (good or bad) using a machine-learned scoring approach, and to predict the likelihood of players engaging in that behavior for purposes of player matchmaking. Thus, the techniques and systems may extend beyond the notion of “trust” scoring in the context of bad behavior, like cheating, and may more broadly attribute scores to user accounts that are indicative of a compatibility or an affinity between players.

FIG. 1 is a diagram illustrating an example environment 100 that includes a remote computing system configured to train and use a machine learning model(s) to determine trust scores relating to the likely behavior of a player(s), and to match players together based on the machine-learned trust scores. A community of users 102 may be associated with one or more client machines 104. The client machines 104(1)-(N) shown in FIG. 1 represent computing devices that can be utilized by users 102 to execute programs, such as video games, thereon. Because of this, the users 102 shown in FIG. 1 are sometimes referred to as “players” 102, and these names can be used interchangeably herein to refer to human operators of the client machines 104. The client machines 104 can be implemented as any suitable type of computing device configured to execute video games and to render graphics on an associated display, including, without limitation, a personal computer (PC), a desktop computer, a laptop computer, a mobile phone (e.g., a smart phone), a tablet computer, a portable digital assistant (PDA), a wearable computer (e.g., virtual reality (VR) headset, augmented reality (AR) headset, smart glasses, etc.), an in-vehicle (e.g., in-car) computer, a television (smart television), a set-top-box (STB), a game console, and/or any similar computing device. Furthermore, the client machines 104 may vary in terms of their respective platforms (e.g., hardware and software). For example, the plurality of client machines 104 shown in FIG. 1 may represent different types of client machines 104 with varying capabilities in terms of processing capabilities (e.g., central processing unit (CPU) models, graphics processing unit (GPU) models, etc.), graphics driver versions, and the like.

The client machines 104 may communicate with a remote computing system 106 (sometimes shortened herein to “computing system 106,” or “remote system 106”) over a computer network 108. The computer network 108 may represent and/or include, without limitation, the Internet, other types of data and/or voice networks, a wired infrastructure (e.g., coaxial cable, fiber optic cable, etc.), a wireless infrastructure (e.g., radio frequencies (RF), cellular, satellite, etc.), and/or other connection technologies. The computing system 106 may, in some instances, be part of a network-accessible computing platform that is maintained and accessible via the computer network 108. Network-accessible computing platforms such as this may be referred to using terms such as “on-demand computing”, “software as a service (SaaS)”, “platform computing”, “network-accessible platform”, “cloud services”, “data centers”, and so forth.

In some embodiments, the computing system 106 acts as, or has access to, a video game platform that implements a video game service to distribute (e.g., download, stream, etc.) video games 110 (and content thereof) to the client machines 104. In an example, the client machines 104 may each install a client application thereon. The installed client application may be a video game client (e.g., gaming software to play video games 110). A client machine 104 with an installed client application may be configured to download, stream, or otherwise receive programs (e.g., video games 110, and content thereof) from the computing system 106 over the computer network 108. Any type of content-distribution model can be utilized for this purpose, such as a direct purchase model where programs (e.g., video games 110) are individually purchasable for download and execution on a client machine 104, a subscription-based model, a content-distribution model where programs are rented or leased for a period of time, streamed, or otherwise made available to the client machines 104. Accordingly, an individual client machine 104 may include one or more installed video games 110 that are executable by loading the client application.

As shown by reference numeral 112 of FIG. 1, the client machines 104 may be used to register with, and thereafter log in to, a video game service. A user 102 may create a user account for this purpose and specify/set credentials (e.g., passwords, PINs, biometric IDs, etc.) tied to the registered user account. As a plurality of users 102 interact with the video game platform (e.g., by accessing their user/player profiles with a registered user account, playing video games 110 on their respective client machines 104, etc.), the client machines 104 send data 114 to the remote computing system 106. The data 114 sent to the remote computing system 106, for a given client machine 104, may include, without limitation, user input data, video game data (e.g., game performance statistics uploaded to the remote system), social networking messages and related activity, identifiers (IDs) of the video games 110 played on the client machine 104, and so on. This data 114 can be streamed in real-time (or substantially real-time), sent to the remote system 106 at defined intervals, and/or uploaded in response to events (e.g., exiting a video game).

FIG. 1 shows that the computing system 106 may store the data 114 it collects from the client machines 104 in a datastore 116, which may represent a data repository maintained by, and accessible to, the remote computing system 106. The data 114 may be organized within the datastore 116 in any suitable manner to associate user accounts with relevant portions of the data 114 relating to those user accounts. Over time, given a large community of users 102 that frequently interact with the video game platform, sometimes for long periods of time during a given session, a large amount of data 114 can be collected and maintained in the datastore 116.

At Step 1 in FIG. 1, the computing system 106 may train a machine learning model(s) using historical data 114 sampled from the datastore 116. For example, the computing system 106 may access a portion of the historical data 114 associated with a sampled set of user accounts registered with the video game service, and use the sampled data 114 to train the machine learning model(s). In some embodiments, the portion of the data 114 used as training data is represented by a set of features, and each user account of the sampled set is labeled with a label that indicates whether the user account is associated with a player who has behaved in accordance with the particular behavior while playing at least one video game in the past. For example, if a player with a particular user account has been banned by the video game service in the past for cheating, this “ban” can be used as one of multiple class labels for the particular user account. In this manner, a supervised learning approach can be taken to train the machine learning model(s) to predict players who are likely to cheat in the future.
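By way of illustration only, the supervised approach of Step 1 might be sketched as follows, using a small logistic regression trained by batch gradient descent on accounts labeled 1 (previously banned) or 0 (not banned). Logistic regression is only one of the many model types contemplated herein; the two features (a scaled cheat-report rate and a scaled account age), the toy data, and the hyperparameters are assumptions chosen for the example.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(features: Sequence[Sequence[float]], labels: Sequence[int],
                   lr: float = 0.5, epochs: int = 2000) -> Tuple[List[float], float]:
    """Fit weights and a bias by batch gradient descent on the log loss."""
    n = len(features)
    weights = [0.0] * len(features[0])
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(features, labels):
            err = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x))) - y
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict_ban_probability(weights: Sequence[float], bias: float,
                            x: Sequence[float]) -> float:
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Hypothetical training rows: [scaled cheat-report rate, scaled account age].
X = [[0.9, 0.1], [0.8, 0.2], [0.7, 0.1], [0.1, 0.9], [0.2, 0.8], [0.1, 0.7]]
y = [1, 1, 1, 0, 0, 0]  # 1 = account was banned for cheating (class label)
weights, bias = train_logistic(X, y)
```

A production system would hold out data for evaluation and would likely use an established library; the sketch only shows how a “ban” label can drive supervised training.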

At Step 2, the computing system 106 may score a plurality of registered user accounts using the trained machine learning model(s). For example, the computing system 106 may access, from the datastore 116, data 114 associated with a plurality of registered user accounts, provide the data 114 as input to the trained machine learning model(s), and generate, as output from the trained machine learning model(s), scores associated with the plurality of user accounts. These scores (sometimes referred to herein as “trust scores,” or “trust factors”) relate to the probabilities of players associated with the plurality of user accounts behaving, or not behaving, in accordance with the particular behavior while playing one or more video games in a multiplayer mode. In the case of “bad” behavior, such as cheating, the trust score may relate to the probability of a player not cheating. In this case, a high trust score indicates a trusted user account, whereas a low trust score indicates an untrusted user account, which may be used as an indicator of a player who is likely to exhibit the bad behavior, such as cheating. In some embodiments, the score is a variable that is normalized in the range of [0,1]. This trust score may have a monotonic relationship with a probability of a player behaving (or not behaving, as the case may be) in accordance with the particular behavior while playing a video game 110. The relationship between the score and the actual probability associated with the particular behavior, while monotonic, may or may not be a linear relationship. Of course, the scoring can be implemented in any suitable manner to predict whether a player tied to the user account will, or will not, behave in a particular way. FIG. 1 illustrates a plurality of user accounts 120 that have been scored according to the techniques described herein. For example, a first score 118(1) (score=X) is attributed to a first user account 120(1), a second score 118(2) (score=Y) is attributed to a second user account 120(2), and so on and so forth, for any suitable number of registered user accounts 120.
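The relationship between a predicted probability and a normalized trust score can be illustrated with a short Python sketch. Here the score is derived from a predicted cheating probability so that it stays in [0,1] and decreases monotonically as that probability rises; the function name and the `sharpness` exponent are hypothetical, and the exponent simply shows that the mapping need not be linear.

```python
def trust_score(p_cheat: float, sharpness: float = 1.0) -> float:
    """Map a cheating probability to a trust score in [0, 1].

    Assumption: score = (1 - p_cheat) ** sharpness. With sharpness == 1 the
    mapping is linear; any other positive value keeps it monotonic but
    nonlinear.
    """
    if not 0.0 <= p_cheat <= 1.0:
        raise ValueError("p_cheat must lie in [0, 1]")
    if sharpness <= 0.0:
        raise ValueError("sharpness must be positive")
    return (1.0 - p_cheat) ** sharpness


linear = trust_score(0.5)            # linear mapping
nonlinear = trust_score(0.5, 2.0)    # same probability, nonlinear mapping
```

Because the mapping is monotonic, ordering accounts by score is equivalent to ordering them by the underlying probability of not cheating.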

With the machine-learned scores 118 determined for a plurality of registered user accounts 120, the computing system 106 may be configured to match players together in multiplayer video game settings based at least in part on the machine-learned scores 118. For instance, FIG. 1 shows that the computing system 106 may receive information 122 from a plurality of the client machines 104 that have started execution of a video game 110. This information 122 may indicate, to the computing system 106, that a set of logged-in user accounts 120 are currently executing a video game 110 on each client machine 104 via the installed client application. In other words, as players 102 log in with their user accounts 120 and start to execute a particular video game 110, requesting to play in multiplayer mode, their respective client machines 104 may provide information 122 to the computing system 106 indicating as much.

At Step 3, the computing system 106, in response to receiving this information 122 from the client machines 104, may match players 102 together by defining multiple matches into which players 102 are to be grouped for playing the video game 110 in the multiplayer mode, and by providing match assignments 124 to the client machines 104 in order to assign subsets of the logged-in user accounts 120 to different ones of the multiple matches based at least in part on the machine-learned scores 118 that were determined for the logged-in user accounts 120. In this manner, if the trained machine learning model(s) assigned low (e.g., below threshold) scores 118 to a first subset of the logged-in user accounts 120 and high (e.g., above threshold) scores 118 to a second subset of the logged-in user accounts 120, the first subset of the logged-in user accounts 120 may be assigned to a first match of the multiple matches, and the second subset of the logged-in user accounts 120 may be assigned to a second, different match of the multiple matches. As a result, a subset of players 102 with similar scores 118 may be grouped together and may remain isolated from other players with scores 118 that are dissimilar from the subset of players' 102 scores 118.
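The threshold-based assignment of Step 3 can be sketched in Python as follows. The function name, the match labels, the account identifiers, and the threshold of 0.5 are hypothetical; the sketch also assumes that every logged-in account already has a score.

```python
from typing import Dict, Iterable, List


def assign_by_threshold(logged_in: Iterable[str], scores: Dict[str, float],
                        threshold: float) -> Dict[str, List[str]]:
    """Place below-threshold accounts in one match and the rest in another."""
    assignments: Dict[str, List[str]] = {"first_match": [], "second_match": []}
    for account in logged_in:
        # Assumption: a score exactly at the threshold counts as "high".
        key = "first_match" if scores[account] < threshold else "second_match"
        assignments[key].append(account)
    return assignments


# Scores exist for all registered accounts; only some are logged in.
scores = {"acct1": 0.15, "acct2": 0.92, "acct3": 0.40, "acct4": 0.77, "acct5": 0.50}
logged_in = ["acct1", "acct2", "acct3", "acct4"]
match_assignments = assign_by_threshold(logged_in, scores, threshold=0.5)
```

In this sketch only logged-in accounts receive an assignment, mirroring the use of the information 122 to decide which scored accounts currently need a match.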

At Step 4, the client application on each client machine 104 executing the video game 110 may execute the video game 110 in the match assigned to the logged-in user account 120 in question. For example, a first subset of client machines 104 may be associated with a first subset of the logged-in user accounts 120 that were assigned to the first match, and this first subset of client machines 104 may execute the video game 110 in the first match, while a second subset of client machines 104, associated with the second subset of the logged-in user accounts 120 assigned to the second match, may execute the video game 110 in the second match. With players grouped into matches based at least in part on the machine-learned scores 118, the in-game experience may be improved for at least some of the groups of players 102 because the system may group players predicted to behave badly (e.g., by cheating) together in the same match, and by doing so, may keep the bad-behaving players isolated from other players who want to play the video game 110 legitimately.

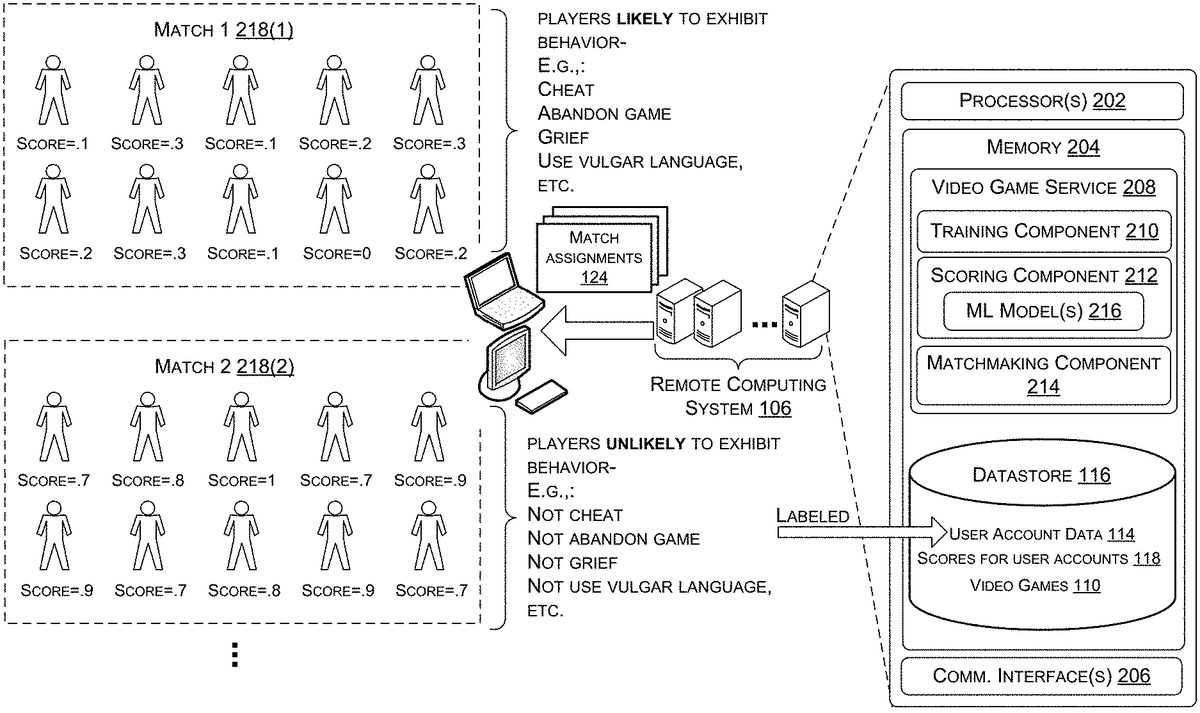

FIG. 2 shows a block diagram illustrating example components of the remote computing system 106 of FIG. 1, as well as a diagram illustrating how machine-learned trust scoring can be used for player matchmaking. In the illustrated implementation, the computing system 106 includes, among other components, one or more processors 202 (e.g., a central processing unit(s) (CPU(s))), memory 204 (or non-transitory computer-readable media 204), and a communications interface(s) 206. The memory 204 (or non-transitory computer-readable media 204) may include volatile and nonvolatile memory, removable and non-removable media implemented in any method or technology for storage of information, such as computer-readable instructions, data structures, program modules, or other data. Such memory includes, but is not limited to, RAM, ROM, EEPROM, flash memory or other memory technology, CD-ROM, digital versatile disks (DVD) or other optical storage, magnetic cassettes, magnetic tape, magnetic disk storage or other magnetic storage devices, RAID storage systems, or any other medium which can be used to store the desired information and which can be accessed by a computing device. The computer-readable media 204 may be implemented as computer-readable storage media (“CRSM”), which may be any available physical media accessible by the processor(s) 202 to execute instructions stored on the memory 204. In one basic implementation, CRSM may include random access memory (“RAM”) and Flash memory. In other implementations, CRSM may include, but is not limited to, read-only memory (“ROM”), electrically erasable programmable read-only memory (“EEPROM”), or any other tangible medium which can be used to store the desired information and which can be accessed by the processor(s) 202. A video game service 208 may represent instructions stored in the memory 204 that, when executed by the processor(s) 202, cause the computing system 106 to perform the techniques and operations described herein.

For example, the video game service 208 may include a training component 210, a scoring component 212, and a matchmaking component 214, among other possible components. The training component 210 may be configured to train a machine learning model(s) using a portion of the data 114 in the datastore 116 that is associated with a sampled set of user accounts 120 as training data to obtain a trained machine learning model(s) 216. The trained machine learning model(s) 216 is usable by the scoring component 212 to determine scores 118 (e.g., trust scores 118) for a plurality of registered user accounts 120. The matchmaking component 214 provides match assignments 124 based at least in part on the machine-learned scores 118 so that players are grouped into different ones of multiple matches 218 (e.g., a first match 218(1), a second match 218(2), etc.) for playing a video game 110 in the multiplayer mode. FIG. 2 shows how a first group of players 102 (e.g., ten players 102) associated with a first subset of logged-in user accounts 120 may be assigned to a first match 218(1), and a second group of players 102 (e.g., another ten players 102) associated with a second subset of logged-in user accounts 120 are assigned to a second match 218(2). Of course, any number of matches 218 may be defined, which may depend on the number of logged-in user accounts 120 that request to execute the video game 110 in multiplayer mode, a capacity limit of network traffic that the computing system 106 can handle, and/or other factors. In some embodiments, other factors (e.g., skill level, geographic region, etc.) are considered in the matchmaking process, which may cause further breakdowns and/or subdivisions of players into a fewer or greater number of matches 218.
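To illustrate how additional factors can subdivide players into more matches, the following Python sketch first pools players by geographic region and trust bucket, then splits each pool into matches of at most a given capacity. The `Player` type, the bucket names, the threshold, and the capacity are assumptions made for the example; skill level or other factors could be added to the pooling key in the same way.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Sequence, Tuple


class Player(NamedTuple):
    account: str
    score: float   # machine-learned trust score in [0, 1]
    region: str    # hypothetical secondary matchmaking factor


def build_matches(players: Sequence[Player], threshold: float,
                  capacity: int) -> List[Tuple[Tuple[str, str], List[str]]]:
    """Pool by (region, trust bucket), then split each pool by capacity."""
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    pools: Dict[Tuple[str, str], List[str]] = defaultdict(list)
    for p in players:
        bucket = "low" if p.score < threshold else "high"
        pools[(p.region, bucket)].append(p.account)
    matches = []
    for key in sorted(pools):  # deterministic ordering of pools
        accounts = pools[key]
        for i in range(0, len(accounts), capacity):
            matches.append((key, accounts[i:i + capacity]))
    return matches


players = [
    Player("p1", 0.90, "eu"), Player("p2", 0.10, "eu"), Player("p3", 0.80, "eu"),
    Player("p4", 0.95, "eu"), Player("p5", 0.70, "na"),
]
matches = build_matches(players, threshold=0.5, capacity=2)
```

Adding a factor to the pooling key increases the number of pools, which is one way the number of matches 218 can grow or shrink.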

As mentioned, the scores 118 determined by the scoring component 212 (e.g., output by the trained machine learning model(s) 216) are machine-learned scores 118. Machine learning generally involves processing a set of examples (called “training data”) in order to train a machine learning model(s). A machine learning model(s) 216, once trained, is a learned mechanism that can receive new data as input and estimate or predict a result as output. For example, a trained machine learning model 216 can comprise a classifier that is tasked with classifying unknown input (e.g., an unknown image) as one of multiple class labels (e.g., labeling the image as a cat or a dog). In some cases, a trained machine learning model 216 is configured to implement a multi-label classification task (e.g., labeling images as “cat,” “dog,” “duck,” “penguin,” and so on). Additionally, or alternatively, a trained machine learning model 216 can be trained to infer a probability, or a set of probabilities, for a classification task based on unknown data received as input. In the context of the present disclosure, the unknown input may be data 114 that is associated with an individual user account 120 registered with the video game service, and the trained machine learning model(s) 216 may be tasked with outputting a score 118 (e.g., a trust score 118) that indicates, or otherwise relates to, a probability of the individual user account 120 being in one of multiple classes. For instance, the score 118 may relate to a probability of a player 102 associated with the individual user account 120 behaving (or not behaving, as the case may be) in accordance with a particular behavior while playing a video game 110 in a multiplayer mode. In some embodiments, the score 118 is a variable that is normalized in the range of [0,1]. This trust score 118 may have a monotonic relationship with a probability of a player 102 behaving (or not behaving, as the case may be) in accordance with the particular behavior while playing a video game 110. The relationship between the score 118 and the actual probability associated with the particular behavior, while monotonic, may or may not be a linear relationship. In some embodiments, the trained machine learning model(s) 216 may output a set of probabilities (e.g., two probabilities), or scores relating thereto, where one probability (or score) relates to the probability of the player 102 behaving in accordance with the particular behavior, and the other probability (or score) relates to the probability of the player 102 not behaving in accordance with the particular behavior. The score 118 that is output by the trained machine learning model(s) 216 can relate to either of these probabilities in order to guide the matchmaking processes. In an illustrative example, the particular behavior may be cheating. In this example, the score 118 that is output by the trained machine learning model(s) 216 relates to a likelihood that the player 102 associated with the individual user account 120 will, or will not, go on to cheat during the course of playing a video game 110 in multiplayer mode. Thus, the score 118 can, in some embodiments, indicate a level of trustworthiness of the player 102 associated with the individual user account 120, and this is why the score 118 described herein is sometimes referred to as a “trust score” 118.
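The case in which the model outputs two probabilities can be illustrated with a two-class softmax, sketched below in Python. The use of a softmax over two raw model outputs (logits) is an assumption made for the example, as are the function and variable names; the disclosure does not prescribe how the two probabilities are produced.

```python
import math
from typing import Tuple


def two_class_probabilities(logit_cheat: float, logit_clean: float) -> Tuple[float, float]:
    """Return (P(cheat), P(not cheat)) from two raw model outputs."""
    # Subtracting the maximum keeps the exponentials numerically stable.
    m = max(logit_cheat, logit_clean)
    e_cheat = math.exp(logit_cheat - m)
    e_clean = math.exp(logit_clean - m)
    total = e_cheat + e_clean
    return e_cheat / total, e_clean / total


p_cheat, p_clean = two_class_probabilities(-1.0, 2.0)
# Either value can guide matchmaking; here the "not cheating" probability
# is taken as the trust score, so a higher value means a more trusted account.
score = p_clean
```

The two outputs sum to one, so choosing one or the other as the score 118 only reverses the direction in which the score is read.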

The trained machine learning model(s) 216 may represent a single model or an ensemble of base-level machine learning models, and may be implemented as any type of machine learning model 216. For example, suitable machine learning models 216 for use with the techniques and systems described herein include, without limitation, neural networks, tree-based models, support vector machines (SVMs), kernel methods, random forests, splines (e.g., multivariate adaptive regression splines), hidden Markov models (HMMs), Kalman filters (or enhanced Kalman filters), Bayesian networks (or Bayesian belief networks), expectation maximization, genetic algorithms, linear regression algorithms, nonlinear regression algorithms, logistic regression-based classification models, or an ensemble thereof. An “ensemble” can comprise a collection of machine learning models 216 whose outputs (predictions) are combined, such as by using weighted averaging or voting. The individual machine learning models of an ensemble can differ in their expertise, and the ensemble can operate as a committee of individual machine learning models that is collectively “smarter” than any individual machine learning model of the ensemble.
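The two ways of combining ensemble outputs named above, weighted averaging and voting, can be sketched in Python as follows. The function names, the example predictions, the weights, and the 0.5 voting cutoff are hypothetical; each prediction is assumed to be one base model's estimate that an account is trustworthy.

```python
from typing import Sequence


def weighted_average(predictions: Sequence[float], weights: Sequence[float]) -> float:
    """Combine base-model predictions using per-model weights."""
    if not predictions or len(predictions) != len(weights):
        raise ValueError("predictions and weights must be non-empty and aligned")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(p * w for p, w in zip(predictions, weights)) / total


def majority_vote(predictions: Sequence[float], cutoff: float = 0.5) -> bool:
    """Return True when more than half of the base models vote 'trusted'."""
    votes = sum(1 for p in predictions if p >= cutoff)
    return votes * 2 > len(predictions)


base_predictions = [0.2, 0.4, 0.9]  # outputs of three hypothetical base models
combined = weighted_average(base_predictions, weights=[1.0, 1.0, 2.0])
trusted = majority_vote(base_predictions)
```

The example shows that the two rules can disagree: the weighted average favors the heavily weighted third model, while the vote follows the two models below the cutoff.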

The training data that is used to train the machine learning model216may include various types of data114. In general, training data for machine learning can include two components: features and labels. However, the training data used to train the machine learning model(s)216may be unlabeled, in some embodiments. Accordingly, the machine learning model(s)216may be trainable using any suitable learning technique, such as supervised learning, unsupervised learning, semi-supervised learning, reinforcement learning, and so on. The features included in the training data can be represented by a set of features, such as in the form of an n-dimensional feature vector of quantifiable information about an attribute of the training data. The following is a list of example features that can be included in the training data for training the machine learning model(s)216described herein. However, it is to be appreciated that the following list of features is non-exhaustive, and features used in training may include additional features not described herein, and, in some cases, some, but not all, of the features listed herein. 
Example features included in the training data may include, without limitation: an amount of time a player spent playing video games 110 in general; an amount of time a player spent playing a particular video game 110; times of the day the player was logged in and playing video games 110; match history data for a player (e.g., total score (per match, per round, etc.), headshot percentage, kill count, death count, assist count, player rank, etc.); a number and/or frequency of reports of a player cheating; a number and/or frequency of cheating acquittals for a player; a number and/or frequency of cheating convictions for a player; confidence values (scores) output by a machine learning model that detected a player cheating during a video game; a number of user accounts 120 associated with a single player (which may be deduced from a common address, phone number, payment instrument, etc. tied to multiple user accounts 120); how long a user account 120 has been registered with the video game service; a number of previously-banned user accounts 120 tied to a player; a number and/or frequency of a player's monetary transactions on the video game platform; a dollar amount per transaction; a number of digital items of monetary value associated with a player's user account 120; a number of times a user account 120 has changed hands (e.g., been transferred between different owners/players); a frequency at which a user account 120 is transferred between players; geographic locations from which a player has logged in to the video game service; a number of different payment instruments, phone numbers, mailing addresses, etc. that have been associated with a user account 120 and/or how often these items have been changed; and/or any other suitable features that may be relevant in computing a trust score 118 that is indicative of a player's propensity to engage in a particular behavior.
As part of the training process, the training component 210 may set weights for machine learning. These weights may apply to a set of features included in the training data, as derived from the historical data 114 in the datastore 116. In some embodiments, the weights that are set during the training process may apply to parameters that are internal to the machine learning model(s) (e.g., weights for neurons in a hidden-layer of a neural network). These internal parameters of the machine learning model(s) may or may not map one-to-one with individual input features of the set of features. The weights can indicate the influence that any given feature or parameter has on the score 118 that is output by the trained machine learning model 216.
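
As a rough illustration of how per-feature weights can influence an output score, the following Python sketch builds a feature vector from account data and passes a weighted sum through a logistic function. The feature names, the weight values, and the choice of a logistic function are all assumptions made for illustration; the description above does not tie the model(s) 216 to any particular model family.

```python
import math

# Hypothetical feature names, loosely based on the example features above.
FEATURE_NAMES = ["hours_played", "cheat_reports", "account_age_days", "prior_bans"]


def to_feature_vector(account: dict) -> list[float]:
    """Turn account data into a fixed-order numeric vector (missing values become 0)."""
    return [float(account.get(name, 0.0)) for name in FEATURE_NAMES]


def weighted_score(features: list[float], weights: list[float], bias: float = 0.0) -> float:
    """Combine features with one weight each and squash the result into (0, 1)."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))
```

With a negative weight on `cheat_reports`, an account with more reports receives a lower score, which is the sense in which a weight indicates the influence of a feature on the output.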

With regard to cheating in particular, which is an illustrative example of a type of behavior that can be used as a basis for matching players, there may be behaviors associated with a user account 120 of a player who is planning on cheating in a video game 110 that are unlike behaviors associated with a user account 120 of a non-cheater. Thus, the machine learning model 216 may learn to identify those behavioral patterns from the training data so that players who are likely to cheat can be identified with high confidence and scored appropriately. It is to be appreciated that there may be outliers in the ecosystem that the system can be configured to protect based on some known information about the outliers. For example, professional players may exhibit different behavior than average players exhibit, and these professional players may be at risk of being scored incorrectly. As another example, employees of the service provider of the video game service may log in with user accounts for investigation purposes or quality control purposes, and may behave in ways that are unlike the average player's behavior. These types of players/users 102 can be treated as outliers and proactively assigned a score 118, outside of the machine learning context, that attributes a high trust to those players/users 102. In this manner, well-known professional players, employees of the service provider, and the like, can be assigned an authoritative score 118 that is not modifiable by the scoring component 212 to avoid having those players/users 102 matched with bad-behaving players.
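
The outlier handling described above can be sketched as a simple override that is checked before any model output is used. The `Account` type, its `authoritative_score` field, and the `resolve_score` helper are hypothetical names introduced only for this example.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Account:
    account_id: str
    # Hypothetical field: set outside the machine learning context for known
    # outliers (e.g., professional players, service-provider employees).
    authoritative_score: Optional[float] = None


def resolve_score(account: Account, model_score: Callable[[Account], float]) -> float:
    """Return the authoritative score if one exists; otherwise use the model."""
    if account.authoritative_score is not None:
        return account.authoritative_score
    return model_score(account)
```

In this sketch the model is never consulted for an account that carries an authoritative score, so that score cannot be altered by the scoring step.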

The training data may also be labeled for a supervised learning approach. Again, using cheating as an example type of behavior that can be used to match players together, the labels in this example may indicate whether a user account 120 was banned from playing a video game 110 via the video game service. The data 114 in the datastore 116 may include some data 114 associated with players who have been banned for cheating, and some data 114 associated with players who have not been banned for cheating. An example of this type of ban is a Valve Anti-Cheat (VAC) ban utilized by Valve Corporation of Bellevue, Wash. For instance, the computing system 106, and/or authorized users of the computing system 106, may be able to detect when unauthorized third party software has been used to cheat. In these cases, after going through a rigorous verification process to make sure that the determination is correct, the cheating user account 120 may be banned by flagging it as banned in the datastore 116. Thus, the status of a user account 120 in terms of whether it has been banned, or not banned, can be used as positive, and negative, training examples.

It is to be appreciated that past player behavior, such as past cheating, can be indicated in other ways. For example, a mechanism can be provided for users 102, or even separate machine learning models, to detect and report players for suspected cheating. These reported players may be put before a jury of their peers who review the game playback of the reported player and render a verdict (e.g., cheating or no cheating). If enough other players decide that the reported player's behavior amounts to cheating, a high confidence threshold may be reached and the reported player is convicted of cheating and receives a ban on their user account 120.
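
One simple way to picture the peer-jury verdict is as a vote ratio compared against a confidence threshold. The sketch below is only an assumption about how such a rule might look; the `conviction_ratio` and `min_jurors` parameters and their default values are hypothetical and are not taken from the description.

```python
def jury_convicts(votes: list[bool], conviction_ratio: float = 0.8, min_jurors: int = 5) -> bool:
    """Return True when enough jurors (True = "cheating") agree on a conviction."""
    if len(votes) < min_jurors:
        # Too few verdicts to reach a high-confidence decision.
        return False
    return sum(votes) / len(votes) >= conviction_ratio
```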

FIG. 2 illustrates examples of other behaviors, besides cheating, which can be used as a basis for player matchmaking. For example, the trained machine learning model(s) 216 may be configured to output a trust score 118 that relates to the probability of a player behaving, or not behaving, in accordance with a game-abandonment behavior (e.g., by abandoning (or exiting) the video game in the middle of a match). Abandoning a game is a behavior that tends to ruin the gameplay experience for non-abandoning players, much like cheating. As another example, the trained machine learning model(s) 216 may be configured to output a trust score 118 that relates to the probability of a player behaving, or not behaving, in accordance with a griefing behavior. A “griefer” is a player in a multiplayer video game who deliberately irritates and harasses other players within the video game 110, which can ruin the gameplay experience for non-griefing players. As another example, the trained machine learning model(s) 216 may be configured to output a trust score 118 that relates to the probability of a player behaving, or not behaving, in accordance with a vulgar language behavior. Oftentimes, multiplayer video games allow for players to engage in chat sessions or other social networking communications that are visible to the other players in the video game 110, and when a player uses vulgar language (e.g., curse words, offensive language, etc.), it can ruin the gameplay experience for players who do not use vulgar language. As yet another example, the trained machine learning model(s) 216 may be configured to output a trust score 118 that relates to a probability of a player behaving, or not behaving, in accordance with a “high-skill” behavior. In this manner, the scoring can be used to identify highly-skilled players, or novice players, from a set of players. This may be useful to prevent situations where experienced gamers create new user accounts pretending to be a player of a novice skill level just so that they can play with amateur players. Accordingly, the players matched together in the first match 218(1) may be those who are likely (as determined from the machine-learned scores 118) to behave in accordance with a particular “bad” behavior, while the players matched together in other matches, such as the second match 218(2), may be those who are unlikely to behave in accordance with the particular “bad” behavior.

It may be the case that the distribution of trust scores 118 output for a plurality of players (user accounts 120) is largely bimodal. For example, one peak of the statistical distribution of scores 118 may be associated with players likely to behave in accordance with a particular bad behavior, while the other peak of the statistical distribution of scores 118 may be associated with players unlikely to behave in accordance with that bad behavior. In other words, the populations of bad-behaving and good-behaving players may be separated by a clear margin in the statistical distribution. In this sense, if a new user account is registered with the video game service and is assigned a trust score that is between the two peaks in the statistical distribution, that user account will quickly be driven one way or another as the player interacts with the video game platform. Due to this tendency, the matchmaking parameters used by the matchmaking component 214 can be tuned to treat players with trust scores 118 that are not within the bottom peak of the statistical distribution similarly, and the matchmaking component 214 may be primarily concerned with separating/isolating the bad-behaving players with trust scores 118 that are within the bottom peak of the statistical distribution. Although the use of a threshold score is described herein as one example way of providing match assignments, other techniques are contemplated, such as clustering algorithms, or other statistical approaches that use the trust scores to preferentially match user accounts (players) with “similar” trust scores together (e.g., based on a similarity metric, such as a distance metric, a variance metric, etc.).
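
A minimal sketch of similarity-based grouping, assuming a one-dimensional distance on the trust score: accounts are sorted by score and then cut into consecutive groups, so each group contains accounts whose scores are close to one another. The function name and the fixed `match_size` parameter are hypothetical, and this is only one of many ways a distance metric could be applied.

```python
def group_by_similarity(scores: dict[str, float], match_size: int) -> list[list[str]]:
    """Group account ids so that accounts with nearby trust scores share a match."""
    if match_size < 1:
        raise ValueError("match_size must be at least 1")
    # Sorting places accounts with similar scores next to each other.
    ordered = sorted(scores, key=lambda account_id: scores[account_id])
    return [ordered[i:i + match_size] for i in range(0, len(ordered), match_size)]
```

When the score distribution is bimodal, the low-score accounts fall into the first groups and the high-score accounts into the later ones, which gives a result similar to a threshold split.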

Furthermore, the matchmaking component 214 may not use player matchmaking for new user accounts 120 that have recently registered with the video game service. Rather, a rule may be implemented that a player is to accrue a certain level of experience/performance in an individual play mode before being scored and matched with other players in a multiplayer mode of a video game 110. It is to be appreciated that the path of a player from the time of launching a video game 110 for the first time to having access to the player matchmaking can be very different for different players. It may take some players a long time to gain access to a multiplayer mode with matchmaking, while other users breeze through the qualification process quickly. With this qualification process in place, a user account that is to be scored for matchmaking purposes will have played video games and provided enough data 114 to score 118 the user account 120 accurately.
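
The qualification rule can be pictured as a filter applied before scoring. In the sketch below, the notion of a single numeric experience value per account and the `required_experience` parameter are assumptions for illustration; the description does not state how experience is measured.

```python
def eligible_accounts(experience_by_account: dict[str, int], required_experience: int) -> list[str]:
    """Return the accounts that have accrued enough individual-play experience."""
    return [
        account_id
        for account_id, experience in experience_by_account.items()
        if experience >= required_experience
    ]
```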

Again, as mentioned, although many of the examples described herein reference “bad” behavior, such as cheating, game-abandonment, griefing, vulgar language, etc. as the targeted behavior by which players can be scored and grouped for matchmaking purposes, the techniques and systems described herein may be configured to identify any type of behavior using a machine-learned scoring approach, and to predict the likelihood of players engaging in that behavior for purposes of player matchmaking.

The processes described herein are illustrated as a collection of blocks in a logical flow graph, which represent a sequence of operations that can be implemented in hardware, software, or a combination thereof. In the context of software, the blocks represent computer-executable instructions that, when executed by one or more processors, perform the recited operations. Generally, computer-executable instructions include routines, programs, objects, components, data structures, and the like that perform particular functions or implement particular abstract data types. The order in which the operations are described is not intended to be construed as a limitation, and any number of the described blocks can be combined in any order and/or in parallel to implement the processes.

FIG. 3 is a flow diagram of an example process 300 for training a machine learning model(s) to predict a probability of a player behaving, or not behaving, in accordance with a particular behavior. For discussion purposes, the process 300 is described with reference to the previous figures.

At 302, a computing system 106 may provide users 102 with access to a video game service. For example, the computing system 106 may allow users to access and browse a catalogue of video games 110, modify user profiles, conduct transactions, engage in social media activity, and other similar actions. The computing system 106 may distribute video games 110 (and content thereof) to client machines 104 as part of the video game service. In an illustrative example, a user 102 with access to the video game service can load an installed client application, log in with a registered user account, select a desired video game 110, and execute the video game 110 on his/her client machine 104 via the client application.

At 304, the computing system 106 may collect and store data 114 associated with user accounts 120 that are registered with the video game service. This data 114 may be collected at block 304 whenever users 102 access the video game service with their registered user accounts 120 and use this video game platform, such as to play video games 110 thereon. Over time, one can appreciate that a large collection of data 114 tied to registered user accounts may be available to the computing system 106.

At 306, the computing system 106, via the training component 210, may access (historical) data 114 associated with a sampled set of user accounts 120 registered with the video game service. At least some of the (historical) data 114 may have been generated as a result of players playing one or multiple video games 110 on the video game platform provided by the video game service. For example, the (historical) data 114 accessed at 306 may represent match history data of the players who have played one or multiple video games 110 (e.g., in the multiplayer mode by participating in matches). In some embodiments, the (historical) data 114 may indicate whether the sampled set of user accounts 120 have been transferred between players, or other types of user activities with respect to user accounts 120.

At 308, the computing system 106, via the training component 210, may label each user account 120 of the sampled set of user accounts 120 with a label that indicates whether the user account is associated with a player who has behaved in accordance with the particular behavior while playing at least one video game 110 in the past. Examples of labels are described herein, such as whether a user account 120 has been banned in the past for cheating, which may be used as a label in the context of scoring players on their propensity to cheat, or not cheat, as the case may be. However, the labels may correspond to other types of behavior, such as a label that indicates whether the user account is associated with a player who has abandoned a game in the past, griefed during a game in the past, used vulgar language during a game in the past, etc.

At 310, the computing system 106, via the training component 210, may train a machine learning model(s) using the (historical) data 114 as training data to obtain the trained machine learning model(s) 216. As shown by sub-block 312, the training of the machine learning model(s) at block 310 may include setting weights for machine learning. These weights may apply to a set of features derived from the historical data 114. Example features are described herein, such as those described above with reference to FIG. 2. In some embodiments, the weights set at block 312 may apply to parameters that are internal to the machine learning model(s) (e.g., weights for neurons in a hidden-layer of a neural network). These internal parameters of the machine learning model(s) may or may not map one-to-one with individual input features of the set of features. As shown by the arrow from block 310 to block 304, the machine learning model(s) 216 can be retrained using updated (historical) data 114 to obtain a newly trained machine learning model(s) 216 that is adapted to recent player behaviors. This allows the machine learning model(s) 216 to adapt, over time, to changing player behaviors.
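
Blocks 308 through 312 can be illustrated with a small, self-contained sketch that labels accounts from a ban flag and fits weights by gradient descent. Logistic regression is used here only as a stand-in, since the process 300 does not prescribe a model type. The record layout (`"features"`, `"banned"`), the label convention (1 = never banned, so the output reads as trust), and the hyperparameters are all assumptions made for this example.

```python
import math


def label_accounts(history: list[dict]) -> list[tuple[list[float], int]]:
    """Pair each account's features with a label: 0 if banned, 1 otherwise."""
    return [(record["features"], 0 if record["banned"] else 1) for record in history]


def predict(weights: list[float], bias: float, features: list[float]) -> float:
    """Score in (0, 1); higher means the account looks more trustworthy."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


def train(examples: list[tuple[list[float], int]], epochs: int = 500, lr: float = 0.1):
    """Set one weight per feature (plus a bias) with stochastic gradient descent."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = predict(weights, bias, features) - label
            weights = [w - lr * error * x for w, x in zip(weights, features)]
            bias -= lr * error
    return weights, bias


# Toy historical data; features are [scaled cheat reports, scaled account age].
history = [
    {"features": [0.9, 0.1], "banned": True},
    {"features": [0.8, 0.2], "banned": True},
    {"features": [0.1, 0.9], "banned": False},
    {"features": [0.0, 0.7], "banned": False},
]
weights, bias = train(label_accounts(history))
```

Retraining, as indicated by the arrow from block 310 to block 304, would amount to calling `train` again on a refreshed `history` in this sketch.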

FIG. 4 is a flow diagram of an example process 400 for utilizing a trained machine learning model(s) 216 to determine trust scores 118 for user accounts 120, the trust scores 118 relating to (or indicative of) probabilities of players behaving, or not behaving, in accordance with a particular behavior. For discussion purposes, the process 400 is described with reference to the previous figures. Furthermore, as indicated by the off-page reference “A” in FIGS. 3 and 4, the process 400 may continue from block 310 of the process 300.

At 402, the computing system 106, via the scoring component 212, may access data 114 associated with a plurality of user accounts 120 registered with a video game service. This data 114 may include any information (e.g., quantifiable information) in the set of features, as described herein, the features having been used to train the machine learning model(s) 216. This data 114 constitutes the unknown input that is to be provided to the trained machine learning model(s) 216.

At 404, the computing system 106, via the scoring component 212, may provide the data 114 accessed at block 402 as input to the trained machine learning model(s) 216.

At 406, the computing system 106, via the scoring component 212, may generate, as output from the trained machine learning model(s) 216, trust scores 118 associated with the plurality of user accounts 120. On an individual basis, a score 118 is associated with an individual user account 120, of the plurality of user accounts 120, and the score 118 relates to a probability of a player 102 associated with the individual user account 120 behaving, or not behaving, in accordance with a particular behavior while playing one or more video games 110 in a multiplayer mode. The particular behavior, in this context, may be any suitable behavior that is exhibited in the data 114 so that the machine learning model(s) can be trained to predict players with a propensity to engage in that behavior. Examples include, without limitation, a cheating behavior, a game-abandonment behavior, a griefing behavior, or a vulgar language behavior. In some embodiments, the score 118 is a variable that is normalized in the range of [0,1]. This trust score 118 may have a monotonic relationship with a probability of a player behaving (or not behaving, as the case may be) in accordance with the particular behavior while playing a video game 110. The relationship between the score 118 and the actual probability associated with the particular behavior, while monotonic, may or may not be a linear relationship.

Thus, the process 400 represents a machine-learned scoring approach, where scores 118 (e.g., trust scores 118) are determined for user accounts 120, the scores indicating the probability of a player using that user account 120 engaging in a particular behavior in the future. Use of a machine-learning model(s) in this scoring process allows for identifying complex relationships of player behaviors to better predict player behavior, as compared to existing approaches that attempt to predict the same. This leads to a more accurate prediction of player behavior with a more adaptive and versatile system that can adjust to changing dynamics of player behavior without human intervention.

FIG. 5 is a flow diagram of an example process 500 for assigning user accounts 120 to different matches of a multiplayer video game based on machine-learned trust scores 118 that relate to likely player behavior. For discussion purposes, the process 500 is described with reference to the previous figures. Furthermore, as indicated by the off-page reference “B” in FIGS. 4 and 5, the process 500 may continue from block 406 of the process 400.

At 502, the computing system 106 may receive information 122 from a plurality of client machines 104, the information 122 indicating logged-in user accounts 120 that are logged into a client application executing a video game 110 on each client machine 104. For example, a plurality of players 102 may have started execution of a particular video game 110 (e.g., a first-person shooter game), wanting to play the video game 110 in multiplayer mode. The information 122 received at block 502 may indicate at least the logged-in user accounts 120 for those players.

At 504, the computing system 106, via the matchmaking component 214, may define multiple matches into which players 102 are to be grouped for playing the video game 110 in the multiplayer mode. Any number of matches can be defined, depending on various factors, including demand, capacity, and other factors that play into the matchmaking process. In an example, the multiple defined matches at block 504 may include at least a first match 218(1) and a second match 218(2).

At 506, the computing system 106, via the matchmaking component 214, may assign a first subset of the logged-in user accounts 120 to the first match 218(1), and a second subset of the logged-in user accounts 120 to the second match 218(2) based at least in part on the scores 118 determined for the logged-in user accounts 120. As mentioned, any number of matches can be defined such that further breakdowns and additional matches can be assigned to user accounts at block 506.

As shown by sub-block 508, the assignment of user accounts 120 to different matches may be based on a threshold score. For instance, the matchmaking component 214 may determine that the scores 118 associated with the first subset of the logged-in user accounts 120 are less than a threshold score and may assign the first subset of the logged-in user accounts 120 to the first match based on those scores being less than the threshold score. Similarly, the matchmaking component 214 may determine that the scores 118 associated with the second subset of the logged-in user accounts 120 are equal to or greater than the threshold score and may assign the second subset of the logged-in user accounts 120 to the second match based on those scores being equal to or greater than the threshold score. This is merely one example way of providing match assignments 124, however, and other techniques are contemplated. For instance, clustering algorithms, or other statistical approaches may be used in addition, or as an alternative, to the use of a threshold score. In an example, the trust scores 118 may be used to preferentially match user accounts (players) with “similar” trust scores together. Given a natural tendency of the distribution of trust scores 118 to be largely bimodal across a plurality of user accounts 120, grouping trust scores 118 together based on a similarity metric (e.g., a distance metric, a variance metric, etc.) may provide similar results to that of using a threshold score. However, the use of a similarity metric to match user accounts together in groups for matchmaking purposes may be useful in instances where the matchmaking pool is small (e.g., players in a small geographic region who want to play less popular game modes) because it can provide a more fine-grained approach that allows for progressive tuning of the relative importance of the trust score versus other matchmaking factors, like skill level. In some embodiments, multiple thresholds may be used to “bucketize” user accounts 120 into multiple different matches.
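
The threshold comparison at sub-block 508 and the multi-threshold “bucketize” variant can be sketched as follows. The function names and the sample threshold values are hypothetical; only the comparison rule (less than the threshold versus equal to or greater than the threshold) comes from the description above.

```python
from bisect import bisect_right


def assign_by_threshold(scores: dict[str, float], threshold: float) -> tuple[list[str], list[str]]:
    """Split accounts into (first match, second match) around a single threshold."""
    first = [account for account, score in scores.items() if score < threshold]
    second = [account for account, score in scores.items() if score >= threshold]
    return first, second


def bucketize(scores: dict[str, float], thresholds: list[float]) -> list[list[str]]:
    """Place accounts into len(thresholds) + 1 buckets, ordered from low to high score."""
    cuts = sorted(thresholds)
    buckets: list[list[str]] = [[] for _ in range(len(cuts) + 1)]
    for account, score in scores.items():
        # bisect_right sends a score equal to a cut into the higher bucket,
        # matching the "equal to or greater than" rule used for one threshold.
        buckets[bisect_right(cuts, score)].append(account)
    return buckets
```

For example, with scores of 0.1, 0.5, and 0.9 and a single threshold of 0.5, the first account would go to the first match and the other two to the second match.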

As shown by sub-block 510, other factors besides the trust score 118 can be considered in the matchmaking assignments at block 506. For example, the match assignments 124 determined at block 506 may be further based on skill levels of players associated with the logged-in user accounts, amounts of time the logged-in user accounts have been waiting to be placed into one of the multiple matches, geographic regions associated with the logged-in user accounts, and/or other factors.
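
One way to picture the combination of factors is a pairwise cost in which the trust score is one weighted term among several. The `Candidate` type, the cost formula, and every weight below are assumptions made for illustration; the description does not specify how the factors are combined.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    account_id: str
    trust: float         # trust score in [0, 1]
    skill: float         # hypothetical normalized skill level
    region: str
    wait_seconds: float  # time spent waiting for a match


def match_cost(a: Candidate, b: Candidate, trust_weight: float = 1.0,
               skill_weight: float = 0.5, region_penalty: float = 1.0,
               wait_relief: float = 0.001) -> float:
    """Lower cost means the two candidates are a better fit for the same match."""
    cost = trust_weight * abs(a.trust - b.trust) + skill_weight * abs(a.skill - b.skill)
    if a.region != b.region:
        cost += region_penalty
    # The longer both candidates have waited, the more the criteria are relaxed.
    cost -= wait_relief * min(a.wait_seconds, b.wait_seconds)
    return cost
```

Raising or lowering `trust_weight` relative to `skill_weight` is a simple way to tune the relative importance of the trust score against other matchmaking factors in this sketch.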

At 512, the computing system 106 may cause (e.g., by providing control instructions) the client application executing the video game 110 on each client machine 104 that sent the information 122 to initiate one of the defined matches (e.g., one of the first match 218(1) or the second match 218(2)) based at least in part on the logged-in user account 120 that is associated with that client machine 104. For example, with the first match 218(1) and the second match 218(2) defined, the computing system 106 may cause a first subset of the plurality of client machines 104 associated with the first subset of the logged-in user accounts 120 to execute the video game 110 in the first match 218(1), and may cause a second subset of the plurality of client machines 104 associated with the second subset of the logged-in user accounts 120 to execute the video game 110 in the second match 218(2).

Because machine-learned trust scores 118 are used as a factor in the matchmaking process, an improved gaming experience may be provided to users who desire to play a video game in multiplayer mode in the manner it was meant to be played. This is because the techniques and systems described herein can be used to match together players who are likely to behave badly (e.g., cheat), and to isolate those players from other trusted players who are likely to play the video game legitimately.

Although the subject matter has been described in language specific to structural features, it is to be understood that the subject matter defined in the appended claims is not necessarily limited to the specific features described. Rather, the specific features are disclosed as illustrative forms of implementing the claims.

Claims

- A method, comprising: training a machine learning model using training data to obtain a trained machine learning model; accessing, by a computing system, data associated with a plurality of user accounts registered with a video game service; determining trust scores associated with the plurality of user accounts by: identifying a first user account of the plurality of user accounts that is associated with at least one of a professional player or an employee of a service provider of the video game service; proactively assigning a predetermined trust score to the first user account without using the trained machine learning model; providing the data associated with the plurality of user accounts other than the first user account as input to the trained machine learning model; and generating, as output from the trained machine learning model, additional trust scores associated with the plurality of user accounts other than the first user account, the additional trust scores relating to probabilities of players associated with the plurality of user accounts other than the first user account cheating, or not cheating, while playing one or more video games in a multiplayer mode; receiving, from a plurality of client machines, information indicating logged-in user accounts, of the plurality of user accounts, that are currently logged into a client application that is executing a video game on each client machine of the plurality of client machines; defining, by the computing system, multiple matches into which players associated with the logged-in user accounts are to be grouped for playing the video game in the multiplayer mode, the multiple matches comprising at least a first match and a second match; assigning, by the computing system, a first subset of the logged-in user accounts to the first match based at least in part on the trust scores associated with the first subset; assigning, by the computing system, a second subset of the logged-in user accounts to the second match based at least in part on the trust scores associated with the second subset; and causing, by the computing system, the client application executing the video game on each client machine to initiate one of the first match or the second match based at least in part on a user account, of the logged-in user accounts, that is associated with the client machine.

- The method of claim 1, wherein the training data includes labels for each user account of a sampled set of user accounts indicating whether the user account is associated with a player who has cheated while playing at least one video game.

- The method of claim 1, further comprising retraining the machine learning model using updated training data to obtain a newly trained machine learning model that is adapted to recent player behaviors.

- A method, comprising: training a machine learning model using training data to obtain a trained machine learning model; determining, by a computing system, scores for a plurality of user accounts registered with a video game service, wherein the scores are determined by: identifying a first user account of the plurality of user accounts that is associated with at least one of a professional player or an employee of a service provider of the video game service; proactively assigning a predetermined score to the first user account without using the trained machine learning model; accessing data associated with an individual user account of the plurality of user accounts other than the first user account; providing the data as input to the trained machine learning model; and generating, as output from the trained machine learning model, a score associated with the individual user account, the score indicative of a probability of a player associated with the individual user account cheating, or not cheating, while playing one or more video games in a multiplayer mode; receiving, by the computing system, information from a plurality of client machines, the information indicating logged-in user accounts, of the plurality of user accounts, that are logged into a client application executing a video game; defining, by the computing system, multiple matches into which players associated with the logged-in user accounts are to be grouped for playing the video game in the multiplayer mode, the multiple matches comprising at least a first match and a second match; assigning, by the computing system, a first subset of the logged-in user accounts to the first match and a second subset of the logged-in user accounts to the second match based at least in part on the scores determined for the logged-in user accounts; causing, by the computing system, a first subset of the plurality of client machines associated with the first subset of the logged-in user accounts to execute the video game in the first match; and causing, by the computing system, a second subset of the plurality of client machines associated with the second subset of the logged-in user accounts to execute the video game in the second match.

- The method of claim 4, wherein the training of the machine learning model comprises: accessing, by the computing system, the training data, the training data associated with a sampled set of user accounts registered with the video game service; and labeling each user account of the sampled set of user accounts with a label that indicates whether the user account is associated with a player who has cheated while playing at least one video game.

- The method of claim 4, wherein the training of the machine learning model comprises setting weights for at least one of a set of features derived from the training data or parameters internal to the machine learning model.

- The method of claim 4, wherein at least some of the training data was generated as a result of players playing one or multiple video games on a platform provided by the video game service.

- The method of claim 7, wherein the at least some of the training data represents match history data of the players who have played the one or multiple video games in the multiplayer mode by participating in matches.

- The method of claim 4, further comprising retraining the machine learning model using updated training data to obtain a newly trained machine learning model that is adapted to recent player behaviors.

- The method of claim 5, wherein: the label indicates, for each user account, whether the user account is associated with a player who has been banned from playing, via the video game service, the at least one video game as a consequence of having been determined to have cheated while playing the at least one video game.

- The method of claim 4, wherein the assigning of the first subset of the logged-in user accounts to the first match and the second subset of the logged-in user accounts to the second match comprises: determining that the scores associated with the first subset of the logged-in user accounts are less than a threshold score; and determining that the scores associated with the second subset of the logged-in user accounts are equal to or greater than the threshold score.

- The method of claim 4, wherein the assigning of the first subset of the logged-in user accounts to the first match and the second subset of the logged-in user accounts to the second match is further based on at least one of: skill levels of players associated with the logged-in user accounts; amounts of time the logged-in user accounts have been waiting to be placed into one of the multiple matches; or geographic regions associated with the logged-in user accounts.

- A system, comprising: one or more processors; and memory storing computer-executable instructions that, when executed by the one or more processors, cause the system to: train a machine learning model using training data to obtain a trained machine learning model; determine trust scores for a plurality of user accounts registered with a video game service, wherein the trust scores are determined by: identifying a first user account of the plurality of user accounts that is associated with at least one of a professional player or an employee of a service provider of the video game service; proactively assigning a predetermined score to the first user account without using the trained machine learning model; accessing data associated with an individual user account of the plurality of user accounts other than the first user account; providing the data as input to the trained machine learning model; and generating, as output from the trained machine learning model, a trust score associated with the individual user account, the trust score relating to a probability of a player associated with the individual user account cheating, or not cheating, while playing one or more video games in a multiplayer mode; receive information from a plurality of client machines, the information indicating logged-in user accounts, of the plurality of user accounts, that are logged into a client application executing a video game; define multiple matches into which players associated with the logged-in user accounts are to be grouped for playing the video game in the multiplayer mode, the multiple matches comprising at least a first match and a second match; assign a first subset of the logged-in user accounts to the first match and a second subset of the logged-in user accounts to the second match based at least in part on the trust scores determined for the logged-in user accounts; cause a first subset of the plurality of client machines associated with the first subset of the logged-in user accounts to execute the video game in the first match; and cause a second subset of the plurality of client machines associated with the second subset of the logged-in user accounts to execute the video game in the second match.

- The system of claim 13, wherein the computer-executable instructions, when executed by the one or more processors, further cause the system to, prior to determining the trust scores: access the training data, the training data associated with a sampled set of user accounts; and label each user account of the sampled set of user accounts with a label that indicates whether the user account is associated with a player who has cheated while playing at least one video game.

- The system of claim 14, wherein: the label indicates, for each user account, whether the user account is associated with a player who has been banned from playing, via the video game service, the at least one video game as a consequence of having been determined to have cheated while playing the at least one video game.

- The system of claim 13, wherein at least some of the training data was generated as a result of players playing one or multiple video games on a platform provided by the video game service.

- The system of claim 13, wherein the computer-executable instructions, when executed by the one or more processors, further cause the system to, prior to determining the trust scores, determine that players associated with the plurality of user accounts have accrued a level of experience in an individual play mode.

- The method of claim 2, wherein each of the labels indicates whether the user account is associated with a player who has been banned from playing, via the video game service, the at least one video game as a consequence of having been determined to have cheated while playing the at least one video game.

- The method of claim 4, further comprising, prior to the determining of the scores, determining that players associated with the plurality of user accounts have accrued a level of experience in an individual play mode.

- The method of claim 1, further comprising, prior to the determining of the trust scores, determining that players associated with the plurality of user accounts have accrued a level of experience in an individual play mode.
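
For illustration only, the flow recited in the system claim above (ban-based labels for training data, a predetermined score for professional-player or employee accounts, model-generated scores for all other accounts, and assignment of logged-in accounts to a first or second match) might be sketched in Python as follows. Every name and value here is a hypothetical assumption and not part of the claims: the `Account` fields, `PREDETERMINED_SCORE`, `TRUST_THRESHOLD`, the single-threshold split, and `toy_model`, which merely stands in for a trained machine learning model. The sketch also assumes a higher score means a lower probability of cheating.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Assumed values; the claims do not specify either number.
PREDETERMINED_SCORE = 1.0
TRUST_THRESHOLD = 0.5


@dataclass
class Account:
    account_id: str
    features: List[float]               # data accessed for the account
    is_pro_or_employee: bool = False    # professional player or service employee
    banned_for_cheating: bool = False   # used only when labeling training data


def label_accounts(sampled: List[Account]) -> List[int]:
    """Label each sampled account: 1 if banned for cheating, else 0."""
    return [1 if a.banned_for_cheating else 0 for a in sampled]


def determine_trust_scores(
    accounts: List[Account],
    model: Callable[[List[float]], float],
) -> Dict[str, float]:
    """Score every account; pro/employee accounts bypass the model."""
    scores: Dict[str, float] = {}
    for a in accounts:
        if a.is_pro_or_employee:
            # Predetermined score assigned without using the model.
            scores[a.account_id] = PREDETERMINED_SCORE
        else:
            scores[a.account_id] = model(a.features)
    return scores


def assign_matches(
    logged_in_ids: List[str],
    scores: Dict[str, float],
    threshold: float = TRUST_THRESHOLD,
) -> Tuple[List[str], List[str]]:
    """Split logged-in accounts into a first and a second match by score."""
    first = [i for i in logged_in_ids if scores[i] >= threshold]
    second = [i for i in logged_in_ids if scores[i] < threshold]
    return first, second


def toy_model(features: List[float]) -> float:
    """Hypothetical stand-in for a trained model.

    Treats features[0] as an assumed cheat-likelihood signal in [0, 1]
    and returns its complement as the trust score.
    """
    return 1.0 - min(1.0, max(0.0, features[0]))


accounts = [
    Account("pro", [0.9], is_pro_or_employee=True),
    Account("a", [0.2]),
    Account("b", [0.7], banned_for_cheating=True),
]
scores = determine_trust_scores(accounts, toy_model)
first_match, second_match = assign_matches(["pro", "a", "b"], scores)
```

In this sketch the "pro" account lands in the first match regardless of its features because its score never passes through the model. A real matchmaker would likely use more than two matches and a richer grouping rule than a single threshold.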

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.