U.S. Pat. No. 9,968,850

System for providing virtual spaces for access by users

Assignee: Disney Enterprises Inc

Issue Date: September 17, 2007

Abstract

A system configured to provide one or more virtual spaces that are accessible to users. The system may implement a markup language to communicate information between various components. The markup language may enable the communication of information between components of the system via markup elements. A markup element may include a discrete unit of information that includes both content and attributes associated with the content. The implementation of the markup language may enable the instantiation of virtual spaces, and the conveyance to users of views of an instantiated virtual space via a distributed architecture in which the components (e.g., a server capable of instantiating virtual spaces, and a client capable of providing an interface between a user and a virtual space) are capable of providing virtual spaces with a broader range of characteristics than components in conventional systems capable of providing virtual spaces that are accessible to users. This may enable users to access virtual spaces from a broader range of platforms, provide access to a broader range of virtual spaces without requiring the installation of proprietary or specialized client applications, facilitate the creation and/or customization of virtual spaces, and/or provide other enhancements.

Description

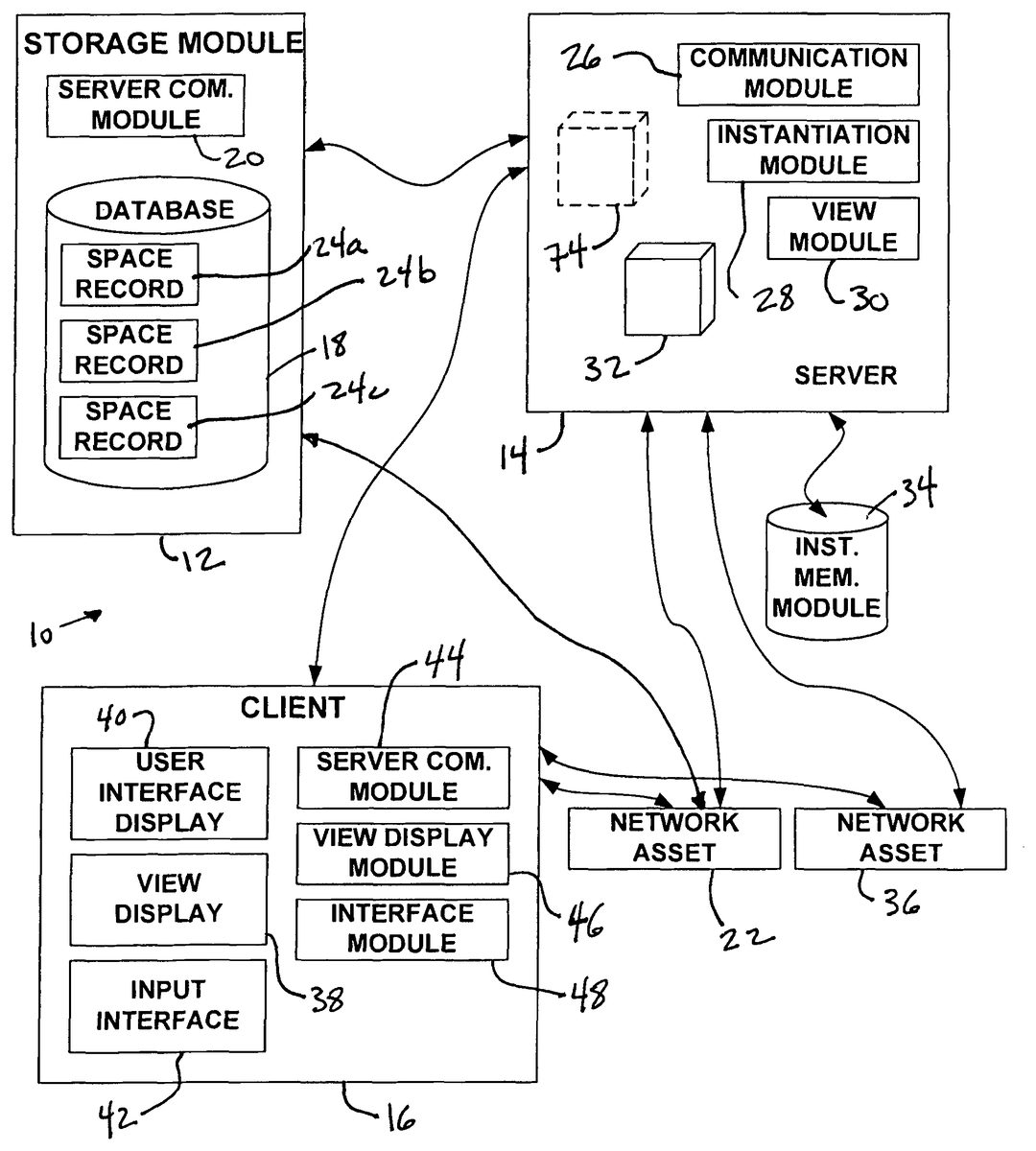

DETAILED DESCRIPTION

FIG. 1 illustrates a system 10 configured to provide one or more virtual spaces that may be accessible to users. In some embodiments, system 10 may include a storage module 12, a server 14, a client 16, and/or other components. Storage module 12 and client 16 may be in operative communication with server 14. System 10 is configured such that information related to a given virtual space may be transmitted from storage module 12 to server 14, which may then instantiate the virtual space being run on server 14. Views of the virtual space may be generated by server 14 from the instance of the virtual space. Information related to the views may be transmitted from server 14 to client 16 to enable client 16 to format the views for display to a user. System 10 may implement a markup language for communication between components (e.g., storage module 12, server 14, client 16, etc.). Information may be communicated between components via markup elements of the markup language. By virtue of communication between the components of system 10 in the markup language, various enhancements may be achieved. For example, information that configures server 14 to instantiate the virtual space may be provided from storage module 12 to server 14 via the markup language at or near the time of instantiation. Similarly, information transmitted from server 14 to client 16 may enable client 16 to generate views of the virtual space by merely assembling the information indicated in markup elements communicated thereto. The implementation of the markup language may facilitate creation of a new virtual space by the user of client 16, and/or the customization/refinement of existing virtual spaces.

As used herein, a virtual space may comprise a simulated space (e.g., a physical space) instanced on a server (e.g., server 14) that is accessible by a client (e.g., client 16), located remotely from the server, to format a view of the virtual space for display to a user of the client. The simulated space may have a topography, express real-time interaction by the user, and/or include one or more objects positioned within the topography that are capable of locomotion within the topography. In some instances, the topography may be a 2-dimensional topography. In other instances, the topography may be a 3-dimensional topography. In some instances, the topography may be a single node. The topography may include dimensions of the virtual space, and/or surface features of a surface or objects that are “native” to the virtual space. In some instances, the topography may describe a surface (e.g., a ground surface) that runs through at least a substantial portion of the virtual space. In some instances, the topography may describe a volume with one or more bodies positioned therein (e.g., a simulation of gravity-deprived space with one or more celestial bodies positioned therein). A virtual space may include a virtual world, but this is not necessarily the case. For example, a virtual space may include a game space that does not include one or more of the aspects generally associated with a virtual world (e.g., gravity, a landscape, etc.). By way of illustration, the well-known game Tetris may be formed as a two-dimensional topography in which bodies (e.g., the falling tetrominoes) move in accordance with predetermined parameters (e.g., falling at a predetermined speed, and shifting horizontally and/or rotating based on user interaction).

As used herein, the term “markup language” may include a language used to communicate information between components via markup elements. Generally, a markup element is a discrete unit of information that includes both content and attributes associated with the content. The markup language may include a plurality of different types of elements that denote the type of content and the nature of the attributes to be included in the element. For example, in some embodiments, the markup elements in the markup language may be of the form [O_HERE]|objectId|artIndex|x|y|z|name|templateId. This may represent a markup element for identifying a new object in a virtual space. The parameters of this markup element include an object ID assigned to the object for future reference, an art index telling the client what art to draw for the object, the relative x, y, and z position of the object, the name of the object, and a template ID designating the template from which data associated with the object is obtained. As another non-limiting example, a markup element may be of the form [O_GONE]|objId. This markup element may represent an object going away from the perspective of a view of the virtual space. As yet another example, a markup element may be of the form [O_MOVE]|objectId|x|y|z. This markup element may represent an object that has teleported to a new location in the virtual space. As still another example, a markup element may be of the form [O_SLIDE]|objectId|x|y|z|time. This markup element may represent an object that is gradually moving from one location in the virtual space to a new location over a fixed period of time. It should be appreciated that these examples are not intended to be limiting, but only to illustrate a few different forms of the markup elements.
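By way of non-limiting illustration, the pipe-delimited element forms above could be parsed along the following lines. This is a minimal sketch only: the function name `parse_element`, the treatment of x, y, z, and time as numeric values, and the assumption that field values never contain the `|` character are illustrative assumptions and are not specified by this description.

```python
from __future__ import annotations

# Field layouts copied from the four example element forms in the text.
ELEMENT_FIELDS = {
    "O_HERE": ("objectId", "artIndex", "x", "y", "z", "name", "templateId"),
    "O_GONE": ("objId",),
    "O_MOVE": ("objectId", "x", "y", "z"),
    "O_SLIDE": ("objectId", "x", "y", "z", "time"),
}
# Assumption: coordinates and durations are numeric; all other fields stay text.
NUMERIC_FIELDS = {"x", "y", "z", "time"}


def parse_element(line: str) -> tuple[str, dict]:
    """Split one pipe-delimited markup element into (type, attributes)."""
    tag, *values = line.strip().split("|")
    if not (tag.startswith("[") and tag.endswith("]")):
        raise ValueError(f"missing [TYPE] tag: {line!r}")
    kind = tag[1:-1]
    if kind not in ELEMENT_FIELDS:
        raise ValueError(f"unknown element type: {kind}")
    names = ELEMENT_FIELDS[kind]
    if len(values) != len(names):
        raise ValueError(f"{kind} expects {len(names)} fields, got {len(values)}")
    attrs = {
        name: float(value) if name in NUMERIC_FIELDS else value
        for name, value in zip(names, values)
    }
    return kind, attrs
```

For instance, `parse_element("[O_GONE]|7")` yields the element type `O_GONE` together with a single attribute, the object identifier.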

Storage module 12 may include information storage 18, a server communication module 20, and/or other components. Generally, storage module 12 may store information related to one or more virtual spaces. The information stored by storage module 12 that is related to a given virtual space may include topographical information related to the topography of the given virtual space, manifestation information related to the manifestation of one or more objects positioned within the topography and/or unseen forces experienced by the one or more objects in the virtual space, interface information related to an interface provided to the user that enables the user to interact with the virtual space, space parameter information related to parameters of the virtual space, and/or other information related to the given virtual space.

The manifestation of the one or more objects may include the locomotion characteristics of the one or more objects, the size of the one or more objects, the identity and/or nature of the one or more objects, interaction characteristics of the one or more objects, and/or other aspects of the manifestation of the one or more objects. The interaction characteristics of the one or more objects described by the manifestation information may include information related to the manner in which individual objects interact with and/or are influenced by other objects, the manner in which individual objects interact with and/or are influenced by the topography (e.g., features of the topography), the manner in which individual objects interact with and/or are influenced by unseen forces within the virtual space, and/or other characteristics of the interaction between individual objects and other forces and/or objects within the virtual space. The interaction characteristics of the one or more objects described by the manifestation information may include scriptable behaviors and, as such, the manifestation information stored within storage module 12 may include one or both of a script and a trigger associated with a given scriptable behavior of a given object (or objects) within the virtual space. The unseen forces present within the virtual space may include one or more of gravity, a wind current, a water current, an unseen force emanating from one of the objects (e.g., as a “power” of the object), and/or other unseen forces (e.g., unseen influences associated with the environment of the virtual space such as temperature and/or air quality).

In some embodiments, the manifestation information may include information related to the sonic characteristics of the one or more objects positioned in the virtual space. The sonic characteristics may include the emission characteristics of individual objects (e.g., controlling the emission of sound from the objects), the acoustic characteristics of individual objects, the influence of sound on individual objects, and/or other characteristics of the one or more objects. In such embodiments, the topographical information may include information related to the sonic characteristics of the topography of the virtual space. The sonic characteristics of the topography of the virtual space may include acoustic characteristics of the topography, and/or other sonic characteristics of the topography.

According to various embodiments, content included within the virtual space (e.g., visual content formed on portions of the topography or objects present in the virtual space, objects themselves, etc.) may be identified within the information stored in storage module 12 by reference only. For example, rather than storing a structure and/or a texture associated with the structure, storage module 12 may instead store an access location at which visual content to be implemented as the structure (or a portion of the structure) or texture can be accessed. In some implementations, the access location may include a URL that points to a network location. The network location identified by the access location may be associated with a network asset 22. Network asset 22 may be located remotely from each of storage module 12, server 14, and client 16. For example, the access location may include a network URL address (e.g., an internet URL address, etc.) at which network asset 22 may be accessed.

It should be appreciated that solid structures are not the only content within the virtual space that may be identified by reference only in the information stored in storage module 12. For example, visual effects that represent unseen forces or influences may be stored by reference as described above. Further, information stored by reference may not be limited to visual content. For example, audio content expressed within the virtual space may be stored within storage module 12 by reference, as an access location at which the audio content can be accessed. Other types of information (e.g., interface information, space parameter information, etc.) may be stored by reference within storage module 12.
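As a non-limiting sketch of storage by reference, a stored record might hold only an access location, with the content itself fetched when needed. The names `ContentRef` and `ContentResolver`, the caching behavior, and the injected `fetch` callable are hypothetical and are introduced here for illustration only.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class ContentRef:
    """A stored pointer to content, not the content itself (hypothetical type)."""
    kind: str             # e.g. "texture" or "audio"
    access_location: str  # URL at which the content can be accessed


class ContentResolver:
    """Fetches referenced content on demand and caches it by access location."""

    def __init__(self, fetch: Callable[[str], bytes]) -> None:
        self._fetch = fetch  # injected so this sketch needs no network access
        self._cache: Dict[str, bytes] = {}

    def resolve(self, ref: ContentRef) -> bytes:
        # Only contact the access location the first time a reference is used.
        if ref.access_location not in self._cache:
            self._cache[ref.access_location] = self._fetch(ref.access_location)
        return self._cache[ref.access_location]
```

Injecting the fetch function keeps the sketch independent of any particular network library. Whether a real implementation would cache at all is a design choice this description leaves open.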

The interface information stored within storage module 12 may include information related to an interface provided to the user that enables the user to interact with the virtual space. More particularly, in some implementations, the interface information may include a mapping of an input device provided at client 16 to commands that can be input by the user to system 10. For example, the interface information may include a key map that maps keys in a keyboard (and/or keypad) provided to the user at client 16 to commands that can be input by the user to system 10. As another example, the interface information may include a map that maps the inputs of a mouse (or joystick, or trackball, etc.) to commands that can be input by the user to system 10. In some implementations, the interface information may include information related to a configuration of a user interface display provided to the user at client 16 that enables the user to input information to system 10. For example, the user interface may enable the user to input communication to other users interacting with the virtual space, input actions to be performed by one or more objects within the virtual space, request a different point of view for the view, request a more (or less) sophisticated view (e.g., a 2-dimensional view, a 3-dimensional view, etc.), request one or more additional types of data for display in the user interface display, and/or input other information.
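For illustration only, a key map of the kind described above might be represented as a simple lookup table. The key bindings and command names below are hypothetical. This description does not enumerate any particular commands.

```python
from typing import Dict, Optional

# Hypothetical key map, as it might be delivered in the interface information.
KEY_MAP: Dict[str, str] = {
    "w": "MOVE_FORWARD",
    "s": "MOVE_BACK",
    "a": "TURN_LEFT",
    "d": "TURN_RIGHT",
    "t": "OPEN_CHAT",
}


def command_for(key: str, key_map: Dict[str, str] = KEY_MAP) -> Optional[str]:
    """Return the command bound to a key, or None if the key is unmapped."""
    return key_map.get(key.lower())
```

Because the map is plain data, a different virtual space could supply a different table without any change to the client code that consults it.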

The user interface display may be configured (e.g., by the interface information stored in storage module 12) to provide information to the user about conditions in the virtual space that may not be apparent simply from viewing the space. For example, such conditions may include the passage of time, ambient environmental conditions, and/or other conditions. The user interface display may be configured (e.g., by the interface information stored in storage module 12) to provide information to the user about one or more objects within the space. For instance, information may be provided to the user about objects associated with the topography of the virtual space (e.g., coordinate, elevation, size, identification, age, status, etc.). In some instances, information may be provided to the user about objects that represent animate characters (e.g., wealth, health, fatigue, age, experience, etc.). For example, such information may be displayed to the user that is related to an object that represents an incarnation associated with client 16 in the virtual space (e.g., an avatar, a character being controlled by the user, etc.).

The space parameter information may include information related to one or more parameters of the virtual space. Parameters of the virtual space may include, for example, the rate at which time passes, dimensionality of objects within the virtual space (e.g., 2-dimensional vs. 3-dimensional), permissible views of the virtual space (e.g., first person views, bird's eye views, 2-dimensional views, 3-dimensional views, fixed views, dynamic views, selectable views, etc.), and/or other parameters of the virtual space. In some instances, the space parameter information includes information related to the game parameters of a game provided within the virtual space. For instance, the game parameters may include information related to a maximum number of players, a minimum number of players, the game flow (e.g., turn based, real-time, etc.), scoring, spectators, and/or other game parameters of a game.

The information related to the plurality of virtual spaces may be stored in an organized manner within information storage 18. For example, the information may be organized into a plurality of space records 24 (illustrated as space record 24a, space record 24b, and space record 24c). Individual ones of space records 24 may correspond to individual ones of the plurality of virtual spaces. A given space record 24 may include information related to the corresponding virtual space. In some embodiments, the space records 24 may be stored together in a single hierarchical structure (e.g., a database, a file system of separate files, etc.). In some embodiments, space records 24 may include a plurality of different “sets” of space records 24, wherein each set of space records includes one or more of space records 24 that is stored separately and discretely from the other space records 24.

Although information storage 18 is illustrated in FIG. 1 as a single entity, this is for illustrative purposes only. In some embodiments, information storage 18 includes a plurality of informational structures that facilitate management and storage of the information related to the plurality of virtual spaces. Information storage 18 may include not only the physical storage elements for storing the information related to the virtual spaces but may include the information processing and storage assets that enable information storage 18 to manage, organize, and maintain the stored information. Information storage 18 may include a relational database, an object oriented database, a hierarchical database, a post-relational database, flat text files (which may be served locally or via a network), XML files (which may be served locally or via a network), and/or other information structures.

In some embodiments, in which information storage 18 includes a plurality of informational structures that are separate and discrete from each other, information storage 18 may further include a central information catalog that includes information related to the location of the space records included therein (e.g., network and/or file system addresses of individual space records). The central information catalog may include information related to the location of instances of virtual spaces (e.g., network addresses of servers instancing the virtual spaces). In some embodiments, the central information catalog may form a clearing house of information that enables users to initiate instances and/or access instances of a chosen virtual space. Accordingly, access to the information stored within the central information catalog may be provided to users based on privileges (e.g., earned via monetary payment, administrative privileges, earned via previous game-play, earned via membership in a community, etc.).
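One possible shape for such a central information catalog is sketched below, purely by way of example. The class names `CatalogEntry` and `SpaceCatalog`, the single-privilege access check, and the `"public"` privilege label are assumptions made for this sketch. The description does not prescribe a data model or an access-control scheme.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class CatalogEntry:
    record_location: str  # network or file system address of the space record
    instance_servers: List[str] = field(default_factory=list)  # running instances
    required_privilege: str = "public"  # hypothetical privilege label


class SpaceCatalog:
    """Maps a virtual space identifier to its record and instance locations."""

    def __init__(self) -> None:
        self._entries: Dict[str, CatalogEntry] = {}

    def register(self, space_id: str, entry: CatalogEntry) -> None:
        self._entries[space_id] = entry

    def add_instance(self, space_id: str, server_address: str) -> None:
        self._entries[space_id].instance_servers.append(server_address)

    def lookup(self, space_id: str, privileges: Set[str]) -> CatalogEntry:
        entry = self._entries[space_id]
        # Every user implicitly holds the "public" privilege in this sketch.
        if entry.required_privilege not in privileges | {"public"}:
            raise PermissionError(f"no access to space {space_id!r}")
        return entry
```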

Server communication module 20 may facilitate communication between information storage 18 and server 14. In some embodiments, server communication module 20 enables this communication by formatting communication between information storage 18 and server 14. This may include, for communication transmitted from information storage 18 to server 14, generating markup elements (e.g., “tags”) that convey the information stored in information storage 18, and transmitting the generated markup elements to server 14. For communication transmitted from server 14 to information storage 18, server communication module 20 may receive markup elements transmitted from server 14 to storage module 12 and may reformat the information for storage in information storage 18.
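The outbound formatting step described above might, as one non-limiting example, resemble the following sketch, which turns stored object records into elements of the [O_HERE] form given earlier in this description. The record layout (a mapping keyed by field name) and the function names are assumptions. The check for embedded `|` characters reflects a limitation of this sketch rather than a rule stated in the description.

```python
from typing import Iterable, List, Mapping

# Field order for the O_HERE element, taken from the example form in the text.
O_HERE_FIELDS = ("objectId", "artIndex", "x", "y", "z", "name", "templateId")


def to_markup(kind: str, fields: Iterable[str], record: Mapping[str, object]) -> str:
    """Format one stored record as a pipe-delimited markup element."""
    values = [str(record[name]) for name in fields]
    if any("|" in value for value in values):
        # Assumption: this sketch has no escaping scheme for the delimiter.
        raise ValueError("field values must not contain the '|' delimiter")
    return "|".join([f"[{kind}]", *values])


def records_to_markup(records: Iterable[Mapping[str, object]]) -> List[str]:
    """Generate one O_HERE element per stored object record."""
    return [to_markup("O_HERE", O_HERE_FIELDS, record) for record in records]
```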

Server 14 may be provided remotely from storage module 12. Communication between server 14 and storage module 12 may be accomplished via one or more communication media. For example, server 14 and storage module 12 may communicate via a wireless medium, via a hard-wired medium, via a network (e.g., wireless or wired), and/or via other communication media. In some embodiments, server 14 may include a communication module 26, an instantiation module 28, a view module 30, and/or other modules. Modules 26, 28, and 30 may be implemented in software; hardware; firmware; some combination of software, hardware, and/or firmware; and/or otherwise implemented. It should be appreciated that although modules 26, 28, and/or 30 are illustrated in FIG. 1 as being co-located within a single unit (server 14), in some implementations, server 14 may include multiple units and modules 26, 28, and/or 30 may be located remotely from the other modules.

Communication module 26 may be configured to communicate with storage module 12 and/or client 16. Communicating with storage module 12 and/or client 16 may include transmitting and/or receiving markup elements of the markup language. The markup elements received by communication module 26 may be implemented by other modules of server 14, or may be passed between storage module 12 and client 16 via server 14 (as server 14 serves as an intermediary therebetween). The markup elements transmitted by communication module 26 to storage module 12 or client 16 may include markup elements being communicated from storage module 12 to client 16 (or vice versa), or the markup elements may include markup elements generated by the other modules of server 14.

Instantiation module 28 may be configured to instantiate a virtual space, which would result in an instance 32 of the virtual space present on server 14. Instantiation module 28 may instantiate the virtual space according to information received in markup element form from storage module 12. Instantiation module 28 may comprise an application that is configured to instantiate virtual spaces based on information conveyed thereto in markup element form. The application may be capable of instantiating a virtual space without accessing a local source of information that describes various aspects of the configuration of the virtual space (e.g., manifestation information, space parameter information, etc.), or without making assumptions about such aspects of the configuration of the virtual space. Instead, such information may be obtained by instantiation module 28 from the markup elements communicated to server 14 from storage module 12. This may provide one or more enhancements over systems in which an application executed on a server instantiates a virtual space (e.g., in “World of Warcraft”). For example, the application included in instantiation module 28 may be capable of instantiating a wider variety of “types” of virtual spaces (e.g., virtual worlds, games, 3-D spaces, 2-D spaces, spaces with different views, first person spaces, birds-eye spaces, real-time spaces, turn based spaces, etc.).

Instance 32 may be characterized as a simulation of the virtual space that is being executed on server 14 by instantiation module 28. The simulation may include determining in real-time the positions, structure, and manifestation of objects, unseen forces, and topography within the virtual space according to the topography, manifestation, and space parameter information that corresponds to the virtual space. As has been discussed above, various portions of the content that make up the virtual space embodied in instance 32 may be identified in the markup elements received from storage module 12 by reference. In such cases, instantiation module 28 may be configured to access the content at the access location identified (e.g., at network asset 22, as described above) in order to account for the nature of the content in instance 32.

As instance 32 is maintained by instantiation module 28 on server 14, and the position, structure, and manifestation of objects, unseen forces, and topography within the virtual space vary, instantiation module 28 may implement an instance memory module 34 to store information related to the present state of instance 32. Instance memory module 34 may be provided locally to server 14 (e.g., integrally with server 14, locally connected with server 14, etc.), or instance memory module 34 may be located remotely from server 14 and an operative communication link may be formed therebetween.

View module 30 may be configured to implement instance 32 to determine a view of the virtual space. The view of the virtual space may be from a fixed location or may be dynamic (e.g., may track an object). In some implementations, an incarnation associated with client 16 (e.g., an avatar) may be included within instance 32. In these implementations, the location of the incarnation may influence the view determined by view module 30 (e.g., track with the position of the incarnation, be taken from the perspective of the incarnation, etc.). The view may be determined from a variety of different perspectives (e.g., a bird's eye view, an elevation view, a first person view, etc.). The view may be a 2-dimensional view or a 3-dimensional view. These and/or other aspects of the view may be determined based on information provided from storage module 12 via markup elements (e.g., as space parameter information). Determining the view may include determining the identity, shading, size (e.g., due to perspective), motion, and/or position of objects, effects, and/or portions of the topography that would be present in a rendering of the view.

View module 30 may generate a plurality of markup elements that describe the view based on the determination of the view. The plurality of markup elements may describe identity, shading, size (e.g., due to perspective), and/or position of the objects, effects, and/or portions of the topography that should be present in a rendering of the view. The markup elements may describe the view “completely” such that the view can be formatted for viewing by the user by simply assembling the content identified in the markup elements according to the attributes of the content provided in the markup elements. In such implementations, assembly alone may be sufficient to achieve a display of the view of the virtual space, without further processing of the content (e.g., to determine motion paths, decision-making, scheduling, triggering, etc.).
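To illustrate what assembly alone might look like on the client side, the following sketch applies a stream of elements (using the [O_HERE], [O_MOVE], and [O_GONE] forms given earlier in this description) to a simple scene table, with no simulation logic of its own. The `Scene` structure and the function names are hypothetical, and a real client would additionally draw the art identified by each entry.

```python
from typing import Dict, Iterable

# Hypothetical client-side scene: object ID -> attributes needed for drawing.
Scene = Dict[str, dict]


def apply_element(scene: Scene, line: str) -> None:
    """Update the scene exactly as one markup element dictates."""
    tag, *values = line.split("|")
    if tag == "[O_HERE]":
        object_id, art, x, y, z, name, template_id = values
        scene[object_id] = {
            "art": art,
            "pos": (float(x), float(y), float(z)),
            "name": name,
            "templateId": template_id,
        }
    elif tag == "[O_MOVE]":
        object_id, x, y, z = values
        scene[object_id]["pos"] = (float(x), float(y), float(z))
    elif tag == "[O_GONE]":
        scene.pop(values[0], None)  # object leaves the view
    else:
        raise ValueError(f"unsupported element in this sketch: {tag}")


def assemble(lines: Iterable[str]) -> Scene:
    """Build the scene by applying each element in the order received."""
    scene: Scene = {}
    for line in lines:
        apply_element(scene, line)
    return scene
```

Note that the client in this sketch makes no decisions about where objects go or when they disappear. It only records what each element states.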

In some implementations, view module 30 may generate the markup elements to describe a series of “snapshots” of the view at a series of moments in time. The information describing a given “snapshot” may include one or both of dynamic information that is to be changed or maintained and static information included in a previous markup element that will be implemented to format the view until it is changed by another markup element generated by view module 30. It should be appreciated that the use of the words “dynamic” and “static” in this context does not necessarily refer to motion (e.g., because motion in a single direction may be considered static information), but instead to the source and/or content of the information.

In some instances, information about a given object described in a “snapshot” of the view will include motion information that describes one or more aspects of the motion of the given object. Motion information may include a direction of motion, a rate of motion for the object, and/or other aspects of the motion of the given object, and may pertain to linear and/or rotational motion of the object. The motion information included in the markup elements will enable client 16 to determine instantaneous motion of the given object, and any changes in the motion of the given object within the view may be controlled by the motion information included in the markup elements such that independent determinations by client 16 of the motion of the given object may not be performed. The differences in the “snapshots” of the view account for dynamic motion of content within the view and/or of the view itself. The dynamic motion controlled by the motion information included in the markup elements generated by view module 30 may describe not only motion of objects in the view relative to the frame of the view and/or the topography, but may also describe relative motion between a plurality of objects. The description of this relative motion may be used to provide more sophisticated animation of objects within the view. For example, a single object may be described as a compound object made up of constituent objects. One such instance may include portrayal of a person (the compound object), which may be described as a plurality of body parts that move relative to each other as the person walks, talks, emotes, and/or otherwise moves in the view (e.g., the head, lips, eyebrows, eyes, arms, legs, feet, etc.). The manifestation information provided by storage module 12 to server 14 related to the person (e.g., at startup of instance 32) may dictate the coordination of motion for the constituent objects that make up the person as the person performs predetermined tasks and/or movements (e.g., the manner in which the upper and lower legs and the rest of the person move as the person walks). View module 30 may refer to the manifestation information associated with the person that dictates the relative motion of the constituent objects of the person as the person performs a predetermined action. Based on this information, view module 30 may determine motion information for the constituent objects of the person that will account for relative motion of the constituent objects that make up the person (the compound object) in a manner that conveys the appropriate relative motion of the constituent parts, thereby animating the movement of the person in a relatively sophisticated manner.
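As a simplified, non-limiting illustration of the motion information discussed above, a client might derive an instantaneous position from a direction and a rate, and place a constituent object relative to its compound object. Both functions are sketches under stated assumptions: motion is linear, `direction` is taken to be a unit vector, and a constituent object is positioned by a fixed offset from its parent. None of these details is specified in this description.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def position_at(start: Vec3, direction: Vec3, rate: float, elapsed: float) -> Vec3:
    """Linear motion: start + direction * rate * elapsed (direction assumed unit length)."""
    return tuple(s + d * rate * elapsed for s, d in zip(start, direction))


def world_position(parent: Vec3, offset: Vec3) -> Vec3:
    """Place a constituent object at a fixed offset from its compound object."""
    return tuple(p + o for p, o in zip(parent, offset))


# A person walking along the x axis, with the head carried 1.7 units above.
body = position_at((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), rate=2.0, elapsed=1.5)
head = world_position(body, (0.0, 1.7, 0.0))
```

In this sketch the client only evaluates the motion it was given. Any change of direction or rate would arrive in a later markup element rather than being decided by the client.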

In some embodiments, the markup elements generated by view module 30 that describe the view identify content (e.g., visual content, audio content, etc.) to be included in the view by reference only. For example, as was the case with markup elements transmitted from storage module 12 to server 14, the markup elements generated by view module 30 may identify content by a reference to an access location. The access location may include a URL that points to a network location. The network location identified by the access location may be associated with a network asset (e.g., network asset 22). For instance, the access location may include a network URL address (e.g., an internet URL address, etc.) at which network asset 22 may be accessed.

According to various embodiments, in generating the view, view module 30 may manage various aspects of content included in views determined by view module 30, but stored remotely from server 14 (e.g., content referenced in markup elements generated by view module 30). Such management may include re-formatting content stored remotely from server 14 to enable client 16 to convey the content (e.g., via display, etc.) to the user. For example, in some instances, client 16 may be executed on a relatively limited platform (e.g., a portable electronic device with limited processing, storage, and/or display capabilities). Server 14 may be informed of the limited capabilities of the platform (e.g., via communication from client 16 to server 14) and, in response, view module 30 may access the content stored remotely in network asset 22 to re-format the content to a form that can be conveyed to the user by the platform executing client 16 (e.g., simplifying visual content, removing some visual content, re-formatting from 3-dimensional to 2-dimensional, etc.). In such instances, the re-formatted content may be stored at network asset 22 by over-writing the previous version of the content, stored at network asset 22 separately from the previous version of the content, stored at a network asset 36 that is separate from network asset 22, and/or otherwise stored. In cases in which the re-formatted content is stored separately from the previous version of the content (e.g., stored separately at network asset 22, stored at network asset 36, cached locally by server 14, etc.), the markup elements generated by view module 30 for client 16 reflect the access location of the re-formatted content.

As was mentioned above, in some embodiments, view module 30 may adjust one or more aspects of a view of instance 32 based on communication from client 16 indicating that the capabilities of client 16 may be limited in some manner (e.g., limitations in screen size, limitations of screen resolution, limitations of audio capabilities, limitations in information communication speeds, limitations in processing capabilities, etc.). In such embodiments, view module 30 may generate markup elements for transmission that reduce (or increase) the complexity of the view based on the capabilities (and/or lack thereof) communicated by client 16 to server 14. For example, view module 30 may remove audio content from the markup elements, view module 30 may generate the markup elements to provide a two dimensional (rather than a three dimensional) view of instance 32, view module 30 may reduce, minimize, or remove information dictating motion of one or more objects in the view, view module 30 may change the point of view of the view (e.g., from a perspective view to a bird's eye view), and/or otherwise generate the markup elements to accommodate client 16. In some instances, these types of accommodations for client 16 may be made by server 14 in response to commands input by a user on client 16 as well as or instead of based on communication of client capabilities by client 16. For example, the user may input commands to reduce the load to client 16 posed by displaying the view to improve the quality of the performance of client 16 in displaying the view, to free up processing and/or communication capabilities on client 16 for other functions, and/or for other reasons. From the description above it should be apparent that as view module 30 “customizes” the markup elements that describe the view for client 16, a plurality of different versions of the same view may be described in markup elements that are sent to different clients with different capabilities, settings, and/or requirements input by a user. This customization by view module 30 may enhance the ability of system 10 to be implemented with a wider variety of clients and/or provide other enhancements.
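By way of non-limiting illustration, the customization of markup elements to communicated client capabilities might be sketched in Python as follows. The `MarkupElement` class, its fields, the element kinds, and the capability keys (`audio`, `motion`, `3d`) are hypothetical names chosen for this sketch and are not defined by the description.

```python
from dataclasses import dataclass, field, replace


@dataclass(frozen=True)
class MarkupElement:
    """Hypothetical markup element: a content reference plus attributes."""
    kind: str                      # e.g. "visual", "audio", "motion"
    content_ref: str               # access location of the content
    attributes: dict = field(default_factory=dict)


def customize_view(elements, capabilities):
    """Return a version of the view adapted to the reported capabilities.

    A capability that is not reported is assumed to be available.
    """
    adapted = []
    for element in elements:
        if element.kind == "audio" and not capabilities.get("audio", True):
            continue  # remove audio content for clients without audio
        if element.kind == "motion" and not capabilities.get("motion", True):
            continue  # remove information dictating motion
        if element.kind == "visual" and not capabilities.get("3d", True):
            # Describe a two dimensional rather than three dimensional view.
            attrs = dict(element.attributes, dimensions=2)
            element = replace(element, attributes=attrs)
        adapted.append(element)
    return adapted
```

Calling `customize_view` with the same elements and different capability dictionaries yields different versions of the same view, one per client, without altering the original elements.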

In some embodiments, client 16 provides an interface to the user that includes a view display 38, a user interface display 40, an input interface 42, and/or other interfaces that enable interaction of the user with the virtual space. Client 16 may include a server communication module 44, a view display module 46, an interface module 48, and/or other modules. Client 16 may be executed on a computing platform that includes a processor that executes modules 44, 46, and 48 and a display device that conveys displays 38 and 40 to the user, and that provides input interface 42 to the user to enable the user to input information to system 10 (e.g., via a keyboard, a keypad, a switch, a knob, a lever, a touchpad, a touchscreen, a button, a joystick, a mouse, a trackball, etc.). The platform may include a desktop computing system, a gaming system, or more portable systems (e.g., a mobile phone, a personal digital assistant, a hand-held computer, a laptop computer, etc.). In some embodiments, client 16 may be formed in a distributed manner (e.g., as a web service). In some embodiments, client 16 may be formed in a server. In these embodiments, a given virtual space implemented on server 14 may include one or more objects that present another virtual space (of which server 14 becomes the client in determining the views of the first given virtual space).

Server communication module 44 may be configured to receive information related to the execution of instance 32 on server 14 from server 14. For example, server communication module 44 may receive markup elements generated by storage module 12 (e.g., via server 14), view module 30, and/or other components or modules of system 10. The information included in the markup elements may include, for example, view information that describes a view of instance 32 of the virtual space, interface information that describes various aspects of the interface provided by client 16 to the user, and/or other information. Server communication module 44 may communicate with server 14 via one or more protocols such as, for example, WAP, TCP, UDP, and/or other protocols. The protocol implemented by server communication module 44 may be negotiated between server communication module 44 and server 14.

View display module 46 may be configured to format the view described by the markup elements received from server 14 for display on view display 38. Formatting the view described by the markup elements may include assembling the view information included in the markup elements. This may include providing the content indicated in the markup elements according to the attributes indicated in the markup elements, without further processing (e.g., to determine motion paths, decision-making, scheduling, triggering, etc.). As was discussed above, in some instances, the content indicated in the markup elements may be indicated by reference only. In such cases, view display module 46 may access the content at the access locations provided in the markup elements (e.g., the access locations that reference network assets 22 and/or 36, or objects cached locally to server 14). In some of these cases, view display module 46 may cause one or more of the content accessed to be cached locally to client 16, in order to enhance the speed with which future views may be assembled. The view that is formatted by assembling the view information provided in the markup elements may then be conveyed to the user via view display 38.
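By way of non-limiting illustration, assembling a view from referenced content with local caching on the client might be sketched in Python as follows. The `ContentCache` class, the `assemble_view` function, and the representation of each markup element as an (access location, attributes) pair are assumptions made for this sketch; the `fetch` callable stands in for whatever retrieval mechanism a given client uses.

```python
class ContentCache:
    """Hypothetical client-side cache keyed by access location."""

    def __init__(self, fetch):
        self._fetch = fetch   # callable that retrieves content from an access location
        self._store = {}

    def get(self, access_location):
        # Retrieve the content only the first time a location is referenced.
        if access_location not in self._store:
            self._store[access_location] = self._fetch(access_location)
        return self._store[access_location]


def assemble_view(elements, cache):
    """Pair each referenced content item with its attributes, with no further processing."""
    return [(cache.get(location), attributes) for location, attributes in elements]


requests = []

def fake_fetch(location):
    """Stand-in for a network request; records each retrieval."""
    requests.append(location)
    return "content of " + location

cache = ContentCache(fake_fetch)
elements = [("http://assets.example.com/tree", {"x": 1}),
            ("http://assets.example.com/tree", {"x": 5})]
view = assemble_view(elements, cache)
print(len(view), len(requests))
```

In this sketch the second reference to the same access location is served from the cache, so later views that reuse the content can be assembled without another retrieval.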

As has been mentioned above, in some instances, the capabilities of client 16 may be relatively limited. In some such instances, client 16 may communicate these limitations to server 14, and the markup elements received by client 16 may have been generated by server 14 to accommodate the communicated limitations. However, in some such instances, client 16 may not communicate some or all of the limitations that prohibit conveying to the user all of the content included in the markup elements received from server 14. Similarly, server 14 may not accommodate all of the limitations communicated by client 16 as server 14 generates the markup elements for transmission to client 16. In these instances, view display module 46 may be configured to exclude or alter content contained in the markup elements in formatting the view. For example, view display module 46 may disregard audio content if client 16 does not include capabilities for providing audio content to the user. As another example, if client 16 does not have the processing and/or display resources to convey movement of objects in the view, view display module 46 may restrict and/or disregard motion dictated by motion information included in the markup elements.

Interface module 48 may be configured to configure various aspects of the interface provided to the user by client 16. For example, interface module 48 may configure user interface display 40 and/or input interface 42 according to the interface information provided in the markup elements. User interface display 40 may enable display of the user interface to the user. In some implementations, user interface display 40 may be provided to the user on the same display device (e.g., the same screen) as view display 38. As was discussed above, the user interface configured on user interface display 40 by interface module 48 may enable the user to input communication to other users interacting with the virtual space, input actions to be performed by one or more objects within the virtual space, provide information to the user about conditions in the virtual space that may not be apparent simply from viewing the space, provide information to the user about one or more objects within the space, and/or provide for other interactive features for the user. In some implementations, the markup elements that dictate aspects of the user interface may include markup elements generated at storage module 12 (e.g., at startup of instance 32) and/or markup elements generated by server 14 (e.g., by view module 30) based on the information conveyed from storage module 12 to server 14 via markup elements.

In some instances, interface module 48 may configure input interface 42 according to information received from server 14 via markup elements. For example, interface module 48 may map the manipulation of input interface 42 by the user into commands to be input to system 10 based on a predetermined mapping that is conveyed to client 16 from server 14 via markup elements. The predetermined mapping may include, for example, a key map and/or other types of interface mappings (e.g., a mapping of inputs to a mouse, a joystick, a trackball, and/or other input devices). If input interface 42 is manipulated by the user, interface module 48 may implement the mapping to determine an appropriate command (or commands) that correspond to the manipulation of input interface 42 by the user. Similarly, information input by the user to user interface display 40 (e.g., via a command line prompt) may be formatted into an appropriate command for system 10 by interface module 48. In some instances, the availability of certain commands, and/or the mapping of such commands may be provided based on privileges associated with a user manipulating client 16 (e.g., as determined from a login). For example, a user with administrative privileges, premium privileges (e.g., earned via monetary payment), advanced privileges (e.g., earned via previous game-play), and/or other privileges may be enabled to access an enhanced set of commands. These commands formatted by interface module 48 may be communicated to server 14 by server communication module 44.
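By way of non-limiting illustration, the mapping of input manipulations to commands, gated by user privileges, might be sketched in Python as follows. The function name, the example key names and command names, and the representation of privileges as a set of strings are assumptions made for this sketch.

```python
def resolve_command(input_event, mapping, required_privilege, user_privileges):
    """Map a manipulation of the input interface to a command, if permitted.

    mapping:            predetermined mapping from input events to command names
    required_privilege: command name -> privilege needed (absent means unrestricted)
    user_privileges:    set of privileges associated with the user
    """
    command = mapping.get(input_event)
    if command is None:
        return None  # the manipulation is not mapped to any command
    needed = required_privilege.get(command)
    if needed is not None and needed not in user_privileges:
        return None  # command exists but is not available to this user
    return command


# Hypothetical key map, as it might be conveyed from the server.
key_map = {"w": "move_forward", "F9": "reset_space"}
restricted = {"reset_space": "administrative"}

print(resolve_command("w", key_map, restricted, set()))
print(resolve_command("F9", key_map, restricted, {"administrative"}))
```

Keeping the mapping as data rather than client logic is what would allow the same client to serve different virtual spaces with different key maps.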

Upon receipt of commands from client 16 that include commands input by the user (e.g., via communication module 26), server 14 may enqueue for execution (and/or execute) the received commands. The received commands may include commands related to the execution of instance 32 of the virtual space. For example, the commands may include display commands (e.g., pan, zoom, etc.), object manipulation commands (e.g., to move one or more objects in a predetermined manner), incarnation action commands (e.g., for the incarnation associated with client 16 to perform a predetermined action), communication commands (e.g., to communicate with other users interacting with the virtual space), and/or other commands. Instantiation module 28 may execute the commands in the virtual space by manipulating instance 32 of the virtual space. The manipulation of instance 32 in response to the received commands may be reflected in the view generated by view module 30 of instance 32, which may then be provided back to client 16 for viewing. Thus, commands input by the user at client 16 enable the user to interact with the virtual space without requiring execution or processing of the commands on client 16 itself.
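By way of non-limiting illustration, enqueuing received commands on the server and executing them against an instance might be sketched in Python as follows. The `SpaceInstance` class, the tuple layout of a command, and the single `move` command are assumptions made for this sketch.

```python
from collections import deque


class SpaceInstance:
    """Hypothetical server-side instance that tracks object positions."""

    def __init__(self):
        self.positions = {}      # object id -> (x, y)
        self._pending = deque()  # commands received but not yet executed

    def enqueue(self, command):
        """Queue a command received from a client for later execution."""
        self._pending.append(command)

    def execute_pending(self):
        """Execute queued commands in arrival order by manipulating the instance."""
        while self._pending:
            name, object_id, dx, dy = self._pending.popleft()
            if name == "move":
                x, y = self.positions.get(object_id, (0, 0))
                self.positions[object_id] = (x + dx, y + dy)

    def describe_view(self):
        """Return a simple description of the view reflecting executed commands."""
        return [(oid, x, y) for oid, (x, y) in sorted(self.positions.items())]


instance = SpaceInstance()
instance.enqueue(("move", "avatar-1", 2, 3))
instance.enqueue(("move", "avatar-1", -1, 0))
instance.execute_pending()
print(instance.describe_view())
```

All state changes happen on the instance; the client only sends commands and later receives the updated view description.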

It should be appreciated that system 10 as illustrated in FIG. 1 is not intended to be limiting in the numbers of the various components and/or the number of virtual spaces being instanced. For example, FIG. 2 illustrates a system 50, similar to system 10, including a storage module 52, a plurality of servers 54, 56, and 58, and a plurality of clients 60, 62, and 64. Storage module 52 may perform substantially the same function as storage module 12 (shown in FIG. 1 and described above). Servers 54, 56, and 58 may perform substantially the same function as server 14 (shown in FIG. 1 and described above). Clients 60, 62, and 64 may perform substantially the same function as client 16 (shown in FIG. 1 and described above).

Storage module 52 may store information related to a plurality of virtual spaces, and may communicate the stored information to servers 54, 56, and/or 58 via markup elements of the markup language, as was discussed above. Servers 54, 56, and/or 58 may implement the information received from storage module 52 to execute instances 66, 68, 70, and/or 72 of virtual spaces. As can be seen in FIG. 2, a given server, for example, server 58, may be implemented to execute instances of a plurality of virtual spaces (e.g., instances 70 and 72). Clients 60, 62, and 64 may receive information from servers 54, 56, and/or 58 that enables clients 60, 62, and/or 64 to provide an interface for users thereof to one or more virtual spaces being instanced on servers 54, 56, and/or 58. The information received from servers 54, 56, and/or 58 may be provided as markup elements of the markup language, as discussed above.

Due at least in part to the implementation of the markup language to communicate information between the components of system 50, it should be appreciated from the foregoing description that any of servers 54, 56, and/or 58 may instance any of the virtual spaces stored on storage module 52. The ability of servers 54, 56, and/or 58 to instance a given virtual space may be independent, for example, from the topography of the given virtual space, the manner in which objects and/or forces are manifest in the given virtual space, and/or the space parameters of the given virtual space. This flexibility may provide an enhancement over conventional systems for instancing virtual spaces, which may only be capable of instancing certain “types” of virtual spaces. Similarly, clients 60, 62, and/or 64 may interface with any of the instances 66, 68, 70, and/or 72. Such interface may be provided without regard for specifics of the virtual space (e.g., topography, manifestations, parameters, etc.) that may limit the number of “types” of virtual spaces that can be provided for with a single client in conventional systems. In conventional systems, these limitations may arise as a product of the limitations of platforms executing client 16, limitations of client 16 itself, and/or other limitations.

Returning to FIG. 1, in some embodiments, system 10 may enable the user to create a virtual space. In such embodiments, the user may select a set of characteristics of the virtual space on client 16 (e.g., via user interface display 40 and/or input interface 42). The characteristics selected by the user may include characteristics of one or more of a topography of the virtual space, the manifestation in the virtual space of one or more objects and/or unseen forces, an interface provided to users to enable the users to interact with the new virtual space, space parameters associated with the new virtual space, and/or other characteristics of the new virtual space.

The characteristics selected by the user on client 16 may be transmitted to server 14. Server 14 may communicate the selected characteristics to storage module 12. Prior to communication of the selected characteristics, server 14 may store the selected characteristics. In some embodiments, rather than communicating through server 14, client 16 may enable direct communication with storage module 12 to communicate selected characteristics directly thereto. For example, client 16 may be formed as a webpage that enables direct communication (via selections of characteristics) with storage module 12. In response to selections of characteristics by the user for a new virtual space, storage module 12 may create a new space record in information storage 18 that corresponds to the new virtual space. The new space record may indicate the selection of the characteristics made by the user on client 16. For example, the new space record may include topographical information, manifestation information, space parameter information, and/or interface information that corresponds to the characteristics selected by the user on client 16.

Content may be added to the new virtual space by the user in a variety of manners. For instance, content may be created within the context of client 16 or content may be accessed (e.g., on a file system local to client 16) and uploaded to storage module 12. In some instances, content added to the new virtual space may include content from another virtual space, content from a webpage, or other content stored remotely from client 16. In these instances, an access location associated with the new content may be provided to storage module 12 (e.g., a network address, a file system address, etc.) so that the content can be accessed upon instantiation to provide views of the new virtual space (e.g., by view module 30 and/or view display module 46 as discussed above). This may enable the user to identify content for inclusion in the new virtual space (or an existing virtual space via substantially the same mechanism) from virtually any electronically available source of content without requiring the content selected by the user to be uploaded for storage on storage module 12, or to server 14 during instantiation (e.g., except for temporary caching in some cases), or to client 16 during display (e.g., except for temporary caching in some cases).

In some embodiments, information storage 18 of storage module 12 includes a plurality of space records that correspond to a plurality of default virtual spaces. Each of the default virtual spaces may correspond to a default set of characteristics. In such embodiments, selection by the user of the characteristics of the new virtual space may include selection of one of the default virtual spaces. For example, one default virtual space may correspond to a turn-based role-playing game space, while another default virtual space may correspond to a first-person shooter game space, still another may correspond to a chat space, and still another may correspond to a real-time strategy game. Upon selection of a default virtual space, the user may then refine the characteristics that correspond to the default virtual space to customize the new virtual space. Such customization may be reflected in the new space record created in information storage 18.
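By way of non-limiting illustration, creating a new space record from a selected default virtual space and user refinements might be sketched in Python as follows. The `DEFAULT_SPACES` dictionary, its keys and values, and the `based_on` field are assumptions made for this sketch and do not reflect any record layout specified in the description.

```python
import copy

# Hypothetical space records for two default virtual spaces.
DEFAULT_SPACES = {
    "chat": {
        "topography": "flat room",
        "point_of_view": "third-person",
        "turn_based": False,
    },
    "turn_based_rpg": {
        "topography": "tiled map",
        "point_of_view": "bird's eye",
        "turn_based": True,
    },
}


def create_space_record(default_name, refinements=None):
    """Create a new space record from a default space plus user refinements."""
    # Copy the default so that refinements never alter the stored default record.
    record = copy.deepcopy(DEFAULT_SPACES[default_name])
    record.update(refinements or {})
    record["based_on"] = default_name
    return record


new_record = create_space_record("chat", {"topography": "island"})
print(new_record["topography"], new_record["turn_based"])
```

Characteristics that the user does not refine are inherited from the selected default, while the default record itself stays available for other users.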

In some embodiments, the user may be enabled to select individual ones of characteristics from the virtual spaces (e.g., point of view, one or more game parameters, an aspect of topography, content, etc.) for inclusion in the new virtual space, rather than an acceptance of all of the characteristics of the selected default virtual space. In some instances, the default virtual spaces may include actual virtual spaces that may be instanced by server 14 (e.g., created previously by the user and/or another user). Access to previously created virtual spaces may be provided based on privileges associated with the creating user. For example, monetary payments, previous game-playing, acceptance by the creating user of the selected virtual space, inclusion within a community, and/or other criteria may be implemented to determine whether the creating user should be given access to the previously created virtual space.

In some implementations, once the user has selected the characteristics of the new virtual space, instantiation module 28 may execute an instance 74 of the new virtual space according to the selected characteristics. View module 30 may generate markup elements for communication to client 16 that describe a view of instance 74 to be provided to the user via view display 38 on client 16 (e.g., in the manner described above). In such implementations, interface module 48 may configure user interface display 40 and/or input interface 42 such that the user may input commands to system 10 that dictate changes to the characteristics of the new virtual space. For example, the commands may dictate changes to the topography, the manifestation of objects and/or unseen forces, a user interface to be provided to a user interacting with the new virtual space, one or more space parameters, and/or other characteristics of the new virtual space. These commands may be provided to server 14. Based on these commands, instantiation module 28 may implement the dictated changes to the new virtual space, which may then be reflected in the view described by the markup elements generated by view module 30. Further, the changes to the characteristics of the new virtual space may be saved to the new space record in information storage 18 that corresponds to the new virtual space. This mode of operation may enable the user to customize the appearance, content, and/or parameters of the new virtual space while viewing the new virtual space as a future user would while interacting with the new virtual space once its characteristics are finalized.

Although the invention has been described in detail for the purpose of illustration based on what is currently considered to be the most practical and preferred embodiments, it is to be understood that such detail is solely for that purpose and that the invention is not limited to the disclosed embodiments, but, on the contrary, is intended to cover modifications and equivalent arrangements that are within the spirit and scope of the appended claims. For example, it should be understood that the present invention contemplates that, to the extent possible, one or more features of any embodiment can be combined with one or more features of any other embodiment.

Claims

- A client computing device configured to enable a user to create a virtual space, wherein the virtual space is a simulated physical space that has a topography, expresses real-time interaction by the user, and includes one or more objects positioned within the topography that are configured to experience locomotion within the topography, the client computing device comprising: a graphic user interface configured to receive selections from the user of a set of characteristics of a virtual space, wherein selection of the set of characteristics dictate (i) aspects of a topography of the new virtual space, and (ii) manifestation of one or more objects positioned within the topography and/or unseen forces experienced by the one or more objects in the new virtual space; one or more processors configured to receive, from a server, a plurality of virtual world templates having default selections for the aspects of topographies of virtual spaces and manifestations of objects positioned within the topography and/or unseen forces experienced by the objects in virtual spaces, and to selectively present the templates in the graphic user interface; and wherein the graphic user interface is further configured to receive selections from the user of refinements to one or more of the templates.

- The device of claim 1, wherein the one or more processors are further configured to transmit information conveying selections of the user input to the graphic user interface for storage in electronic storage configured to store, for a plurality of virtual spaces, topographical information and manifestation information.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.