U.S. Pat. No. 9,919,206

METHOD AND APPARATUS FOR PREVENTING A COLLISION BETWEEN USERS OF AN INTERACTIVE COMPUTER GAME

Assignee: Samsung Electronics Co., Ltd.

Issue Date: August 27, 2015

Abstract

An apparatus for executing a computer game is provided. The apparatus includes an output unit configured to transmit a first image generated based on a form of a first user participating in the computer game and a second image generated based on a form of a second user participating in the computer game to a display apparatus, and a control unit configured to predict a possibility of a collision between the first user and the second user, and to control transmitting warning information indicating the possibility of the collision to the display apparatus via the output unit, according to a result of the predicting.

Description

Throughout the drawings, it should be noted that like reference numbers are used to depict the same or similar elements, features, and structures.

DETAILED DESCRIPTION

The following description with reference to the accompanying drawings is provided to assist in a comprehensive understanding of various embodiments of the present disclosure as defined by the claims and their equivalents. It includes various specific details to assist in that understanding but these are to be regarded as merely exemplary. Accordingly, those of ordinary skill in the art will recognize that various changes and modifications of the various embodiments described herein can be made without departing from the scope and spirit of the present disclosure. In addition, descriptions of well-known functions and constructions may be omitted for clarity and conciseness.

The terms and words used in the following description and claims are not limited to the bibliographical meanings, but are merely used by the inventor to enable a clear and consistent understanding of the present disclosure. Accordingly, it should be apparent to those skilled in the art that the following description of various embodiments of the present disclosure is provided for illustration purposes only and not for the purpose of limiting the present disclosure as defined by the appended claims and their equivalents.

It is to be understood that the singular forms “a,” “an,” and “the” include plural referents unless the context clearly dictates otherwise. Thus, for example, reference to “a component surface” includes reference to one or more of such surfaces.

Terms used herein will be briefly described, and the inventive concept will be described in greater detail below.

General and widely-used terms have been employed herein, in consideration of functions provided in the inventive concept, and may vary according to an intention of one of ordinary skill in the art, a precedent, or emergence of new technologies. Additionally, in some cases, an applicant may arbitrarily select specific terms. Then, the applicant will provide the meaning of the terms in the description of the inventive concept. Accordingly, it will be understood that the terms, used herein, should be interpreted as having a meaning that is consistent with their meaning in the context of the relevant art and will not be interpreted in an idealized or overly formal sense unless expressly so defined herein.

It will be further understood that the terms “comprises”, “comprising”, “includes”, and/or “including”, when used herein, specify the presence of components, but do not preclude the presence or addition of one or more other components, unless otherwise specified. Additionally, terms used herein, such as ‘unit’ or ‘module’, mean entities for processing at least one function or operation. These entities may be implemented by hardware, software, or a combination of hardware and software.

A “device” used herein refers to an element that is included in a certain apparatus and accomplishes a certain objective. In greater detail, a certain apparatus that includes a screen which may perform displaying and an interface for receiving information input by a user, receives a user input, and thus accomplishes a certain objective may be included in an embodiment of the inventive concept without limitation.

The inventive concept will now be described more fully with reference to the accompanying drawings, in which various embodiments of the inventive concept are shown. The inventive concept may, however, be embodied in many different forms and should not be construed as being limited to the embodiments set forth herein. In the description of the inventive concept, certain detailed explanations of the related art are omitted when it is deemed that they may unnecessarily obscure the essence of the inventive concept. Like numbers refer to like elements throughout the description of the figures.

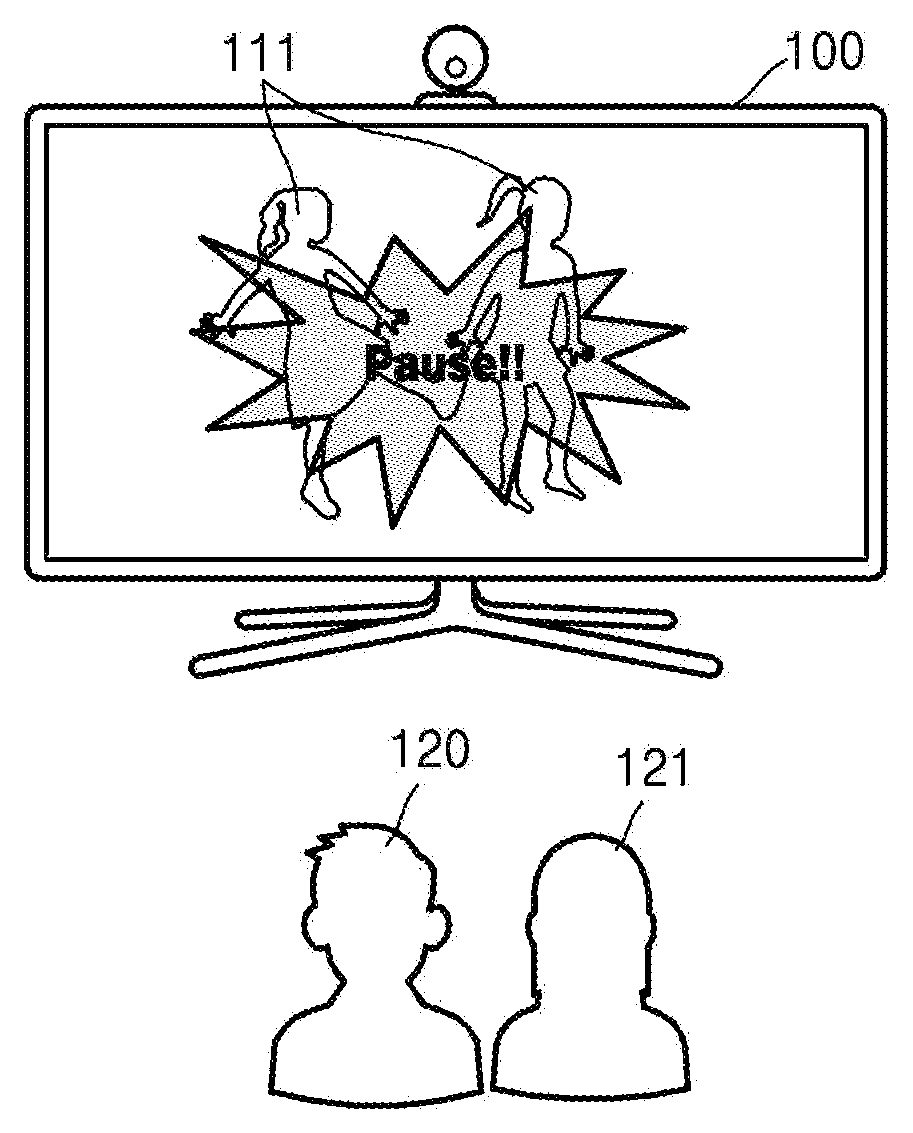

FIGS. 1A and 1B illustrate conceptual diagrams showing an example of a method of preventing a collision between a plurality of subjects according to an embodiment of the present disclosure.

Referring to FIGS. 1A and 1B, persons or things 120 and 121 that are located in front of a device 100, and objects 110 and 111 are shown, wherein the objects 110 and 111 are obtained when the persons or things 120 and 121 are photographed by using a camera included in the device 100 and output to a screen of the device 100. Hereinafter, the objects 110 and 111 respectively refer to images 110 and 111 that show the persons or things 120 and 121 output to the screen of the device 100. Additionally, hereinafter, a subject 120 or 121 refers to a person or a thing 120 or 121. In other words, the camera photographs the subjects 120 or 121, and the objects 110 or 111 which are images of the subject 120 or 121 are output to the screen of the device 100. For convenience of description, in FIGS. 1A and 1B, it is described that the objects 110 and 111 are images of users of content executed by the device 100, but the objects 110 and 111 are not limited thereto.

A subject may be a user who participates in the content, or a person or a thing that does not participate in the content. The object 110 may be an image obtained by photographing a person who uses the content, or an image obtained by photographing a person who does not use the content. Additionally, the object 110 may be an image obtained by photographing a thing owned by a person or a thing placed in a space where a person is located. Here, the thing may correspond to an animal, a plant, or furniture disposed in a space. The content refers to a program that may be controlled to recognize a motion of a user. For example, the content may be a computer game executed when the user takes a certain motion, such as a dancing game, a sport game, etc., or a program that outputs a motion of the user to a screen of the device 100.

If it is assumed that a computer game is executed, both the objects 110 and 111 may be images of users participating in the computer game. Alternatively, one of the objects 110 and 111 may be an image of a user participating in the computer game, and the other may be an image of a person that does not participate in the computer game or may be an image of a thing. As an example, if it is assumed that a dancing game is being executed, the objects 110 and 111 may be respective images of users who enjoy the dancing game together. As another example, one of the object 110 and the object 111 may be an image of a user who enjoys the dancing game, and the other may be an image of a person who is near the user and watches the dancing game played by the user. As another example, one of the objects 110 and 111 may be an image of a user, and the other may be an image of a person or an animal passing by the user or an image of a thing placed near the user. For example, while a dancing game is being executed, some of a plurality of persons may be set as persons participating in the dancing game (that is, users), and the others may be set as persons not participating in the dancing game (that is, non-users).

Hereinafter, an image of a person or an animal is referred to as a dynamic object, and an image of a thing or a plant that is unable to autonomously move or travel is referred to as a static object.
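The distinction between dynamic and static objects can be illustrated with a minimal sketch. The names `ObjectKind`, `classify`, and the subject-type strings are hypothetical and are not part of the disclosure; the sketch only assumes that the type of the subject (person, animal, plant, thing) is already known.

```python
from enum import Enum


class ObjectKind(Enum):
    DYNAMIC = "dynamic"  # image of a person or an animal
    STATIC = "static"    # image of a thing or a plant that cannot move by itself


# Hypothetical labels for subject types that are able to move autonomously.
_DYNAMIC_SUBJECTS = {"person", "animal"}


def classify(subject_type: str) -> ObjectKind:
    """Return the kind of object for a given (assumed, already known) subject type."""
    if subject_type in _DYNAMIC_SUBJECTS:
        return ObjectKind.DYNAMIC
    return ObjectKind.STATIC
```

For example, `classify("animal")` yields `ObjectKind.DYNAMIC`, while `classify("furniture")` yields `ObjectKind.STATIC`.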

As an example, displayed objects may include an image of a first user and an image of a second user who are participating in the computer game. As another example, objects may include an image of a user participating in the computer game and an image of a person not participating in the computer game. As another example, objects may include an image of a user participating in the computer game, and an image of an animal placed near the user. As another example, objects may include an image of a user participating in the computer game, and an image of a thing placed near the user, for example, furniture.

An example in which the objects 110 and 111 include an image of the first user and an image of the second user who participate in the computer game is described later with reference to FIG. 11. An example in which the objects 110 and 111 include an image of a user participating in the computer game and an image of a person not participating in the computer game is described later with reference to FIG. 14. Additionally, an example in which the objects 110 and 111 respectively include an image of a user participating in the computer game and an image of an animal placed near the user is described later with reference to FIG. 15. An example in which the objects 110 and 111 respectively include an image of a user participating in the computer game and an image of a thing placed near the user is described later with reference to FIG. 16.

Additionally, the object may be a virtual character set by a user. For example, the user may generate a virtual character which does not actually exist as an object, by setting the content.

Referring to FIG. 1A, the users 120 and 121 use the content when they are separated from each other by a predetermined distance or more. For example, if it is assumed that the content is a dancing game, since the users 120 and 121 are separate from each other so that they do not collide with each other, the users 120 and 121 may safely take a certain motion.

Referring to FIG. 1B, since the users 120 and 121 are close to each other (i.e., within a predetermined distance), if at least one of the users 120 and 121 takes a certain motion, that user may collide with the other user.

A collision described herein refers to a physical contact between the users 120 and 121. Alternatively, a collision refers to a contact of the user 120 with another person, an animal, a plant, or furniture which is located near the user 120. In other words, a collision refers to a contact of a part of the user 120 with a part of a thing 121. As an example, if a part of a user such as his/her head, arm, trunk, or leg contacts a part of another user, it is understood that the two users collide with each other. As another example, if a part of a user, such as his/her head, arm, trunk, or leg contacts a table, it is understood that the user and the table collide with each other.

If subjects collide with each other, a person or an animal corresponding to one of the subjects may get injured or a thing corresponding to the subjects may be broken or break down. Accordingly, the device 100 may predict a possibility of a collision between the subjects. If it is determined that the possibility of a collision between the subjects is high, the device 100 may output certain warning information. The warning information may be light, a color, or a certain image output from the screen of the device 100, or sound output from a speaker included in the device 100. Additionally, if the device 100 is executing the content, the device 100 may stop or pause execution of content as an example of the warning information.

According to the warning information output by the device100, a person or an animal corresponding to objects may stop a motion, and thus, a collision between the objects may be prevented.
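The relationship between the predetermined distance and the warning information can be sketched as follows. This is a simplified illustration only: the function name `warning_for`, the representation of each subject as a single 3D point in meters, and the warning string are assumptions, and the disclosure does not limit the prediction to a single distance comparison.

```python
import math
from typing import Optional, Sequence


def warning_for(
    pos_a: Sequence[float],
    pos_b: Sequence[float],
    threshold_m: float,
) -> Optional[str]:
    """Return warning text if two subjects are closer than the threshold.

    pos_a and pos_b are hypothetical (x, y, z) positions in meters;
    threshold_m stands in for the predetermined distance.
    """
    if math.dist(pos_a, pos_b) < threshold_m:
        return "WARNING: possible collision"
    # Subjects are separated by the predetermined distance or more.
    return None
```

A caller could map the returned text to light, a color, an image, or sound, or could pause the content when the result is not `None`.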

In FIGS. 1A and 1B, it is shown that the device 100 executes the content, for example, a computer game, and outputs an image and/or a sound, but the executing of the content and the outputting of the image and sound are not limited thereto. Additionally, a camera may be an apparatus separate from the device 100, or may be included in the device 100. Additionally, an apparatus for executing content and an apparatus for outputting an image and sound may be present separately from each other.

FIG. 1C illustrates a configuration map illustrating an example in which an apparatus for executing content and an apparatus for outputting an image and sound are present independently from each other according to an embodiment of the present disclosure.

Referring to FIG. 1C, a system 1 includes an apparatus 101 for executing content, a display apparatus 102, and a camera 103. If it is assumed that the content is a computer game, the apparatus 101 for executing content refers to a game console.

The camera 103 captures an image of a user participating in the computer game or at least one object placed near the user, and transmits the captured image to the apparatus 101 for executing the content. The captured image refers to an image that shows a form of the user or the at least one object.

The apparatus 101 for executing the content transmits the image, transmitted from the camera 103, to the display apparatus 102. Additionally, if it is determined that there is a possibility of a collision between subjects, the apparatus 101 for executing the content generates warning information indicating that there is the possibility of the collision. Additionally, the apparatus 101 for executing the content transmits the warning information to the display apparatus 102. As an example, objects 112 may include an image of the first user 120 and an image of the second user 121. As another example, the objects 112 may include an image of the user 120 participating in the computer game and the person 121 not participating in the computer game. As another example, the objects 112 may include an image of the user 120 participating in the computer game and an image of the animal 121 placed near the user 120. As another example, the objects 112 may include the user 120 participating in the computer game and the thing 121 placed near the user 120, for example, furniture.

The display apparatus 102 outputs the image or the warning information transmitted from the apparatus 101 for executing the content. The warning information may be light, a color, a certain image output from the screen of the display apparatus 102, or sound output from a speaker included in the display apparatus 102, etc.

As described above with reference to FIGS. 1A through 1C, content is executed by the device 100 or the apparatus 101 for executing content. However, the execution of content is not limited thereto. In other words, content may be executed by a server, and the device 100 or the display apparatus 102 may output an execution screen of the content.

FIG. 1D illustrates a diagram for explaining an example of executing content, the executing being performed by a server, according to an embodiment of the present disclosure.

Referring to FIG. 1D, the server 130 may be connected to a device 104 via a network. A user 122 requests the server 130 to execute content. For example, the user 122 may log on to the server 130 via the device 104 and select content stored in the server 130 so as to execute the content.

When the content is executed, the server 130 transmits an image to be output to the device 104. For example, if the content is a computer game, the server 130 may transmit an initial setting screen or an execution screen of the computer game to the device 104.

The device 104 or a camera 105 transmits an image captured by the camera 105 to the server 130. The image captured by the camera 105 (that is, the image that includes objects 113 and 114) is output to a screen of the device 104. The device 104 may combine and output the execution screen of the content with the image captured by the camera 105. For example, if the content is a dancing game, the device 104 may output an image showing a motion required for the user 122 together with an image obtained by photographing the user 122.

A subject 123 shown in FIG. 1D may be a person, an animal, a plant, or a thing, for example, furniture. In other words, the subject 123 may be another user who enjoys the content together with the user 122 or a person who is near the user 122. Alternatively, the subject 123 may be an animal, a plant, or a thing which is placed near the user 122.

While the content is being executed, if it is predicted that the subjects 122 and 123 are to collide with each other, the server 130 or the device 104 may generate a warning signal.

As an example, a possibility of a collision between the subjects 122 and 123 may be predicted by the server 130. If the subjects 122 and 123 are predicted to collide with each other, the server 130 may notify the device 104 that there is a possibility of a collision between the subjects 122 and 123, and the device 104 may output a warning signal.

As another example, a possibility of a collision between the subjects 122 and 123 may be predicted by the device 104. In other words, the server 130 may perform only execution of content, and the predicting of a possibility of a collision between the subjects 122 and 123 and the outputting of the warning signal may be performed by the device 104.

Hereinafter, with reference to FIGS. 2 through 27, an example of preventing a collision between subjects, which is performed by a device (e.g., device 100, device 104, etc.), is described.

FIG. 2 illustrates a flowchart showing an example of a method of preventing a collision between a plurality of subjects according to an embodiment of the present disclosure.

Referring to FIG. 2, the method of preventing a collision between the plurality of subjects includes operations, which are processed in time series by the device 100 as shown in FIG. 29 or the apparatus 101 for executing content as shown in FIG. 31. Accordingly, it will be understood that descriptions to be provided with regard to the device 100 shown in FIG. 29 or the apparatus 101 for executing content shown in FIG. 31 may also be applied to the method described with reference to FIG. 2, even if the descriptions are not provided again.

In operation 210, the device 100 obtains a first object representing a form of a first subject and a second object representing a form of a second subject. A form described herein is an outer shape of a subject, and includes a length and a volume of the subject as well as the shape of the subject. As an example, if it is assumed that an object is an image of a person, the object includes all information indicating an outer shape of the person such as a whole shape from a head to feet, a height, a length of legs, a thickness of a trunk, a thickness of arms, a thickness of legs of the person, and the like. As another example, if it is assumed that an object is an image of a chair, the object includes all information indicating an outer shape of the chair such as a shape, a height, a thickness of legs of the chair, and the like.

As an example, if it is assumed the device 100 executes the content, the first object refers to an image of a user who uses the content, and the second object refers to an image of another user who uses the content, or an image of a subject who does not use the content. If the second object is an image of a subject that does not use content, the second object refers to either a dynamic object or a static object. An image of a person or an animal is referred to as a dynamic object, and an image of a thing or a plant that may not autonomously move or travel is referred to as a static object. The content described herein refers to a program which requires a motion of a user. For example, a game executed based on a motion of the user may correspond to the content.

As another example, if it is assumed that content is not executed, the first object and the second object respectively refer to either a dynamic object or a static object. A meaning of the static object and the dynamic object is described above. For example, if it is assumed that the device 100 is installed at a location near a crosswalk, an image of a pedestrian walking across the crosswalk or a vehicle driving on a driveway may correspond to a dynamic object, and an image of an obstacle located near the sidewalk may correspond to a static object.

Hereinafter, it is described that the first object and the second object are respectively an image obtained by photographing a single subject (that is, a person, an animal, a plant, or a thing), but the first object and the second object are not limited thereto. In other words, the first object or the second object may be an image obtained by photographing a plurality of subjects all together.

The device 100 may obtain a first image and a second image through an image captured by a camera. The device 100 may obtain not only information about an actual form of a subject (that is, a person, an animal, a plant, or a thing) corresponding to an object (hereinafter, referred to as form information), but also information about a distance between the subject and the camera and information about a distance between a plurality of subjects, based on the first image and the second image. Additionally, the device 100 may obtain information about colors of the subject and a background, according to a type of the camera.

For example, the camera may be a depth camera. The depth camera refers to a camera for generating an image that includes not only a form of a target to be photographed, but also three-dimensional (3D) information about a space (in other words, information about a distance between the target to be photographed and the camera or information about a distance between targets to be photographed). As an example, the depth camera may refer to a stereoscopic camera for generating an image that includes 3D information of a space by using images captured by two cameras that are present in locations that are different from each other. As another example, the depth camera may refer to a camera for generating an image that includes 3D information of a space by using a pattern of light which is emitted toward the space and reflected back to the camera by things within the space. As another example, the depth camera may be a camera for generating an image that includes 3D information of a space based on an amount of electric charges corresponding to light which is emitted toward the space including an object and reflected back to the camera by things that are present within the space. However, the camera is not limited thereto, and may correspond to any camera that may capture an image that includes information about a form of an object and a space without limitation.
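For the stereoscopic case, distance information is commonly recovered from the shift (disparity) of a point between the two camera images. The following sketch uses the standard rectified pinhole-stereo relation, depth = focal length x baseline / disparity, which is a general computer-vision relation and is not stated in the present disclosure; the function and parameter names are hypothetical.

```python
def depth_from_disparity(
    focal_length_px: float,
    baseline_m: float,
    disparity_px: float,
) -> float:
    """Estimate the distance (in meters) to a point seen by two rectified cameras.

    focal_length_px: focal length expressed in pixels (assumed known).
    baseline_m: distance between the two camera centers, in meters.
    disparity_px: horizontal shift of the point between the two images.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px
```

Under these assumptions, a larger disparity corresponds to a target closer to the cameras, and a smaller disparity to a target farther away.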

Additionally, the device 100 may obtain form information of a subject corresponding to an object, based on data stored in a storage unit (e.g., storage unit 2940 shown in FIG. 29). In other words, form information of a subject may be obtained in advance and stored in a storage unit. In that case, the device 100 may read the form information stored in the storage unit.

Descriptions to be provided with reference to FIGS. 3 through 16 may be performed before content is executed. For example, if it is assumed that the content is a computer game, the descriptions provided hereinafter with reference to FIGS. 3 through 16 may correspond to operations that are to be performed before the computer game is started.

Hereinafter, with reference to FIG. 3, an example of obtaining form information, which is performed by a device (e.g., device 100), is described.

FIG. 3 illustrates a diagram for explaining an example of obtaining form information, the obtaining being performed by a device, according to an embodiment of the present disclosure.

Referring to FIG. 3, a user 310, the device 100, and a camera 320 are illustrated. Hereinafter, for convenience of description, it is described that the device 100 includes a screen for displaying an image, and the camera 320 and the device 100 are devices separate from each other. However, the camera 320 and the device 100 are not limited thereto. In other words, the camera 320 may be included in the device 100. Additionally, it is described that the camera 320 is a camera for generating an image by using light which is emitted toward a space including an object and reflected back to the camera by both the object and things within the space. However, as described with reference to FIG. 2, the camera 320 is not limited thereto.

If a screen of the device 100 and a touch pad form a layered structure to constitute a touchscreen, the screen may be also used as an input unit as well as an output unit. The screen may include at least one of a liquid crystal display (LCD), a thin-film transistor-liquid crystal display (TFT-LCD), an organic light-emitting diode (OLED), a flexible display, a 3D display, and an electrophoretic display. According to an implementation type of the screen, the device 100 may include two or more screens. The two or more screens may be disposed to face each other by using a hinge.

The camera 320 emits light toward a space that includes the user 310, and obtains light reflected by the user 310. Then, the camera 320 generates data regarding a form of the user 310 by using the obtained light.

The camera 320 transmits the data regarding the form of the user 310 to the device 100, and the device 100 obtains form information of the user 310 by using the transmitted data. Then, the device 100 outputs an object that includes the form information of the user 310 to the screen of the device 100. Thus, the form information of the user 310 may be output to the screen of the device 100. Additionally, the device 100 may also obtain information about a distance between the camera 320 and the user 310 by using the data transmitted from the camera 320.

Hereinafter, with reference to FIG. 4, an example of obtaining the form information of the user 310 by using the data transmitted from the camera 320, which is performed by the device 100, is described.

FIGS. 4A and 4B illustrate diagrams for explaining an example of obtaining form information of a user, the obtaining being performed by a device, according to an embodiment of the present disclosure.

Referring to FIGS. 4A and 4B, an example of data that is extracted from data transmitted from the camera 320 and an example of a form 410 of a user which is estimated by using the extracted data are respectively illustrated. In an embodiment, the estimating is performed by the device 100.

The device 100 extracts a predetermined range of area from the data transmitted from the camera 320. The predetermined range of area refers to an area in which a user is present. In other words, the camera 320 emits light toward a space, and then, if the emitted light is reflected from things (including a user) which are present in the space and returns to the camera 320, the camera 320 calculates a depth value corresponding to each pixel, by using the light that is reflected back to the camera 320. The calculated depth value may be expressed as a degree of brightness of a point corresponding to a pixel. In other words, if light emitted by the camera 320 is reflected from a location near the camera 320 and returns to the camera 320, a dark spot corresponding to the location may be displayed. If light emitted by the camera 320 is reflected from a location far away from the camera 320 and returns to the camera 320, a bright spot corresponding to the location may be displayed. Accordingly, the device 100 may determine a form of a thing (including the user) located in the space toward which the light is emitted and a distance between the thing and the camera 320, by using data transmitted from the camera 320 (for example, a point corresponding to each pixel).
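The mapping from depth values to brightness, and the extraction of the area in which the user is present, can be sketched as follows. This is an illustration under stated assumptions: depth values are given in millimeters as a nested list, brightness is an 8-bit value, the user's area is approximated by a simple depth range, and the names `depth_to_brightness` and `user_mask` are hypothetical.

```python
from typing import List


def depth_to_brightness(
    depth_mm: List[List[float]], near_mm: float, far_mm: float
) -> List[List[int]]:
    """Map each depth value to 0..255 so that near points are dark and far points are bright."""
    span = far_mm - near_mm
    image = []
    for row in depth_mm:
        out_row = []
        for d in row:
            clamped = min(max(d, near_mm), far_mm)  # keep values inside the range
            out_row.append(round(255 * (clamped - near_mm) / span))
        image.append(out_row)
    return image


def user_mask(
    depth_mm: List[List[float]], lo_mm: float, hi_mm: float
) -> List[List[bool]]:
    """Mark pixels whose depth falls inside the (assumed) range occupied by the user."""
    return [[lo_mm <= d <= hi_mm for d in row] for row in depth_mm]
```

For instance, with a range of 500 mm to 4500 mm, a pixel at 500 mm maps to brightness 0 (dark) and a pixel at 4500 mm maps to brightness 255 (bright).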

The device 100 may extract data corresponding to an area, in which the user is present, from the data transmitted from the camera 320, and obtain information about a form of the user by removing noise from the extracted data. Additionally, the device 100 may estimate a skeleton representing the form of the user, by comparing the data obtained by removing the noise from the extracted data to various poses of a person which are stored in the storage unit 2940. Additionally, the device 100 may estimate the form 410 of the user by using the estimated skeleton and obtain form information of the user by using the estimated form 410 of the user.
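One simple way to compare observed data to stored poses is a nearest-match search. The sketch below is an assumption for illustration only: the disclosure does not specify how the comparison is carried out, and the representation of a pose as a list of 2D joint positions, the pose names, and the function `closest_pose` are hypothetical.

```python
from typing import Dict, List, Tuple

Joint = Tuple[float, float]


def closest_pose(observed: List[Joint], stored_poses: Dict[str, List[Joint]]) -> str:
    """Return the name of the stored pose whose joints are closest to the observed joints.

    Closeness is measured as the sum of squared distances between
    corresponding joints (an assumed, simplistic similarity measure).
    """

    def cost(candidate: List[Joint]) -> float:
        return sum(
            (ox - cx) ** 2 + (oy - cy) ** 2
            for (ox, oy), (cx, cy) in zip(observed, candidate)
        )

    return min(stored_poses, key=lambda name: cost(stored_poses[name]))
```

The matched pose could then serve as a starting point for estimating a skeleton, with the joint positions refined against the noise-filtered data.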

Hereinafter, an example of outputting form information of a user obtained by the device 100 is described with reference to FIG. 5.

FIG. 5 illustrates a diagram showing an example of outputting form information of a user on a screen of a device according to an embodiment of the present disclosure.

Referring to FIG. 5, the form information of a user may be output to a screen 510 of the device 100. For example, a height 520, an arm length 530, and a leg length 540 of the user may be output to the screen 510. Additionally, information about a gender 550 of the user may be output to the screen 510. The gender 550 of the user may be determined by the device 100 by analyzing data transmitted from the camera 320 or may be input directly by the user.

Additionally, an object 560 corresponding to the user may be output to the screen 510. The object 560 may be a form corresponding to data obtained by the camera 320 or may be a virtual form generated by form information of the user. For example, the object 560 may be a form photographed by the camera 320, or may be a virtual form generated by combining the height 520, the arm length 530, the leg length 540, and the gender 550. Additionally, a form of the object 560 may be determined based on information directly input by the user. For example, the object 560 may be a game character generated so that form information of the user is reflected in the object 560.

An icon 570 for asking the user whether or not the form information of the user may be stored may be displayed on the screen 510. If at least one of the height 520, the arm length 530, the leg length 540, the gender 550, and the object 560 should not be stored (e.g., needs to be modified), the user selects an icon indicating “No”. Then, the device 100 may obtain the form information of the user again, and the camera 320 may be re-operated. If the user selects an icon indicating “Yes”, the form information of the user is stored in the storage unit 2940 included in the device 100.

As described above with reference toFIGS. 3 through 5, the device100may identify a user by using data transmitted from the camera, and obtain form information of the identified user. The device100may also add another person or thing or delete a subject captured by the camera320, based on information input by the user. In other words, the user may add a virtual subject or a subject that is not included in the data transmitted by the camera320. Additionally, the user may delete an object that is photographed by the camera320and displayed on the screen of the device100. Hereinafter, an example of additionally displaying an object on a screen or deleting a displayed object, which is performed by the device100, is described with reference toFIGS. 6A through 7B.

FIGS. 6A and 6Billustrate diagrams for explaining an example of adding an object, the adding being performed by a device, according to an embodiment of the present disclosure.

Referring toFIG. 6A, an object610representing a user is shown on a screen of the device100. It is assumed that the object610, shown inFIG. 6A, is an image of a user of content.

Data transmitted by the camera 320 may not include all information about the subjects in a photographing space. In other words, depending on factors such as the performance or the surrounding environment of the camera 320, the camera 320 may not generate data that includes information about all persons, animals, plants, and things located in the photographing space. The user may arbitrarily set a virtual object (that is, an image representing a virtual subject), and the device 100 may obtain form information about the set virtual object.

As an example, even though a dog is actually present in a location near a user, data generated by the camera320may not include information about a form of the dog. Then, the user may input a form620of the dog via an input unit (e.g., input unit2910) included in the device100, and the device100may output an object representing the dog based on the input form620of the dog. In this case, the device100may estimate form information of the dog (for example, a size or a length of legs of the dog), by using a ratio between an object620representing the dog and the object610representing the user.

As another example, even though a chair is not actually present in a location near the user, the user may input a form630of the chair via the input unit2910included in the device100. Additionally, the device100may output an object representing the chair to the screen based on the input form630of the chair. The device100may estimate form information of the chair (for example, a shape or a size of the chair), by using a ratio between the object630representing the chair and the object610representing the user. Alternatively, the device may output a simple object, such as a box, representing the chair as illustrated inFIG. 6A.
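As a non-limiting illustration, the ratio-based estimation described above may be sketched as follows. The function name, the pixel units, and the choice of the user's height as the reference length are assumptions made for this sketch only and are not taken from the disclosure.

```python
def estimate_real_length(drawn_len_px, user_obj_len_px, user_height_cm):
    """Estimate a real-world length for a user-drawn object.

    Assumption: the object representing the user and the drawn object share
    one on-screen scale, so the ratio of their on-screen lengths can be
    applied to the user's known height.
    """
    if user_obj_len_px <= 0:
        raise ValueError("user object length must be positive")
    ratio = drawn_len_px / user_obj_len_px
    return ratio * user_height_cm


# Hypothetical values: the dog is drawn 100 px tall next to a 400 px tall
# object representing a user whose height is 176 cm.
dog_height_cm = estimate_real_length(100, 400, 176.0)
```

With these hypothetical inputs, the drawn dog is one quarter as tall as the object representing the user, so the estimate is one quarter of the user's height.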

Referring toFIG. 6B, the object610representing the user and objects621and631which are added by the user are output to the screen of the device100. The device100may output the objects621and631, added based on information input by the user, to the screen.

FIGS. 7A and 7Billustrate diagrams for explaining an example of deleting an object, the deleting being performed by a device, according to an embodiment of the present disclosure.

Referring toFIG. 7A, objects710,720, and730are shown on a screen of the device100. It is assumed that the object710, shown inFIG. 7A, is an object representing a user of content.

From among objects output to the screen, an object that is not necessary for the user to use content may be present. For example, if it is assumed that the content is a dancing game, from among the objects output to the screen, an object representing a subject having a low possibility of a collision with the user when the user is taking a motion may be present. Then, the user may delete the object representing the subject having a low possibility of a collision with the user.

For example, even though a table and a chair are present near the user, since a distance between the chair and the user is long, a possibility of a collision between the user and the chair may be very low even when the user takes a certain motion. In this case, the user may delete the object730representing the chair, via the input unit2910.

FIG. 7Bshows the object710representing the user and the object720which is not deleted by the user. The device100may not output the object730, which is deleted based on information input by the user, to the screen.

Referring back toFIG. 2, in operation220, the device100determines a first area, which includes points reachable by at least a part of the first subject, by using form information of the first subject. Additionally, in operation230, the device100determines a second area, which includes points reachable by at least a part of the second subject, by using form information of the second subject.

For example, if it is assumed that an object is an image of a person, a part of a subject refers to a part of a body of a user such as a head, a trunk, an arm, or a leg of the user. Hereinafter, for convenience of description, an area, which includes the points reachable by at least a part of a subject, is defined as a ‘range of motion’. For example, an area including all points that a person may reach by stretching his/her arms or legs may be referred to as a ‘range of motion’.

As an example, a range of motion of a subject may be an area which includes points that a part of a user may reach while the user remains still in a designated area. As another example, a range of motion of a subject may be an area which includes points that a part of a user may reach while the user is moving along a certain path. As another example, a range of motion of a subject may be an area which includes points that a part of a user may reach as the user moves in a designated location.

An example in which a combination of points, which a part of a user may reach while the user remains still in a designated area, constitutes a range of motion of a subject is described later with reference toFIGS. 8 through 9BandFIGS. 11 through 16B.

Additionally, an example in which a combination of points, which a part of a user may reach as the user moves in a certain location, constitutes a range of motion of a subject is described later with reference toFIGS. 10A and 10B. Additionally, an example in which a combination of points, which a part of a user may reach while the user is moving along a certain path, constitutes a range of motion of the subject is described later with reference toFIGS. 17 and 18.

FIG. 8 illustrates a diagram showing an example of outputting a range of motion of a subject to a screen of a device according to an embodiment of the present disclosure.

Referring to FIG. 8, the device 100 determines a range of motion of a subject by using form information of the subject. The device 100 determines points reachable by a part of a user when the user remains still in a place, in consideration of values of lengths included in the form information of the subject (for example, if the subject is a person, a height, an arm length, a leg length, or the like), and determines a range of motion of the user by combining the determined points with each other.

As an example, the device100may determine a range of motion of a subject based on a mapping table stored in a storage unit (e.g., storage unit2940). The mapping table refers to a table that shows a ratio between a range of motion and a size of the subject according to a type of the subject represented by the object (for example, if the subject is a person, a height, an arm length, or a leg length of the subject). For example, the mapping table may include information indicating that the radius of a range of motion of the person is equal to three-quarters of the length of an arm of the person or four-fifths of the length of a leg of the person. Additionally, the mapping table may include information about motions that may be taken by a user according to a type of content. For example, motions that may be taken by the user in a soccer game may be different from motions that may be taken by the user in a dancing game. Accordingly, a range of motion that may be determined when the user participates in the soccer game may be different from a range of motion that may be determined when the user participates in the dancing game. The mapping table may include information about motions that may be taken by the user according to a type of content, and store a size of a range of motion in which body sizes of the user are reflected, with respect to each motion. Accordingly, the device100may determine a range of motion variously according to a type of the content.
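As a non-limiting illustration, a lookup in such a mapping table may be sketched as follows. The table layout, the key names, and the rule of taking the larger candidate when both an arm length and a leg length are available are assumptions made for this sketch; the disclosure states only the example ratios of three-quarters and four-fifths.

```python
# Hypothetical mapping table: ratio of the range-of-motion radius to a body
# length, keyed by subject type and by the measured length.
MAPPING_TABLE = {
    "person": {"arm_length": 0.75, "leg_length": 0.8},
}


def radius_from_mapping_table(subject_type, form_info, table=MAPPING_TABLE):
    """Return a range-of-motion radius in the same unit as form_info."""
    ratios = table[subject_type]
    candidates = [
        ratio * form_info[name]
        for name, ratio in ratios.items()
        if name in form_info
    ]
    if not candidates:
        raise ValueError("form information has no length known to the table")
    # Assumption: when several lengths are available, keep the largest radius
    # so that the range of motion errs on the safe side.
    return max(candidates)


radius_cm = radius_from_mapping_table(
    "person", {"arm_length": 80.0, "leg_length": 100.0}
)
```

A table of this kind could be extended with one entry per type of content or per motion, as the paragraph above describes, without changing the lookup.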

As another example, the device100may determine a range of motion based on a sum of values of lengths of parts of a subject. If it is assumed that an object is an image of a person, the device100may determine a length, corresponding to twice a length of an arm of the person, as a diameter of a range of motion.

The device100may output the range of motion determined by the device100to the screen810. The range of motion output to the screen810may correspond to a diameter of a circle if the range of motion constitutes the circle, or a length of a side constituting a rectangle if the range of motion constitutes the rectangle. In other words, a user may determine the range of motion based on information820that is output to the screen810. If an object830representing the user is output to the screen810, the range of motion may be displayed as an image840near the object.

For example, the device100calculates a ratio between a range of motion and a length of the object830. For example, if it is assumed that a height of a person corresponding to the object830is 175.2 cm and a range of motion of the person is 1.71 m, the device100calculates a ratio of the range of motion to the height of the person as 171/175.2=0.976. Additionally, the device100calculates a length of an image840that is to be displayed near the object830, by using the calculated ratio and a value of a length of the object830which is displayed on the screen810. For example, if it is assumed that the length of the object830displayed on the screen810is 5 cm, the device100calculates the length of the image840that is to be displayed near the object830as 0.976*5 cm=4.88 cm. The image840corresponding to the length calculated by the device100is displayed on the screen810. For example, the shape of the image840may be circular with a diameter having a value of the length calculated by the device100.
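As a non-limiting illustration, the scaling arithmetic in the preceding example may be sketched as follows. The function and parameter names are assumptions made for this sketch.

```python
def image_length_on_screen(range_of_motion_m, subject_height_cm, object_len_cm):
    """Scale a real range of motion to the size of the displayed object.

    The ratio of the range of motion to the subject's height is applied to
    the on-screen length of the object representing the subject.
    """
    if subject_height_cm <= 0:
        raise ValueError("subject height must be positive")
    ratio = (range_of_motion_m * 100.0) / subject_height_cm
    return ratio, ratio * object_len_cm


# Values from the example above: height 175.2 cm, range of motion 1.71 m,
# object displayed with a length of 5 cm.
ratio, image_len_cm = image_length_on_screen(1.71, 175.2, 5.0)
```

The sketch keeps the unrounded ratio, so the resulting length differs from the 4.88 cm in the text only by the rounding of the ratio to 0.976.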

As described with reference toFIG. 8, the device100determines a range of motion of a subject (for example, a user) based on form information of the subject. The device100may determine a range of motion of the subject based on setting information input by the user. In this case, the form information obtained by the device100may not be taken into account in the determining of the range of motion of the subject.

FIGS. 9A through 9Cillustrate diagrams for explaining an example of determining a range of motion of a subject based on setting information input by a user, the determining being performed by a device, according to an embodiment of the present disclosure.

Referring toFIG. 9A, an object920is output to a screen910of the device100. A user may transmit setting information for setting a range of motion to the device100via an input unit (e.g., an input unit2910).

For example, the user may set a certain area930according to the object920, which is output to the screen910, via the input unit (e.g., an input unit2910). The area930set by the user may be shown in the shape of a circle, a polygon, or a straight line with the object920at the center thereof. The device100may output the area930set by the user to the screen910.

Referring toFIG. 9B, the device100may determine a range of motion of the subject based on the area930set by the user. For example, if it is assumed that the area930set by the user is circular, the device100may determine an area having a shape of a circular cylinder as a range of motion, wherein the circular cylinder has a bottom side having a shape of a circle set by the user and a length corresponding to a value obtained by multiplying a height of the user by a certain rate. The rate by which the height of the user is multiplied may be stored in a storage unit (e.g., a storage unit2940) included in the device100.
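As a non-limiting illustration, a cylindrical range of motion built from a user-set circle may be sketched as follows. The class name, the coordinate convention, and the default rate of 1.2 are assumptions made for this sketch; the disclosure states only that the rate may be stored in a storage unit.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class CylinderRange:
    cx: float      # x coordinate of the center of the bottom circle
    cy: float      # y coordinate of the center of the bottom circle
    radius: float  # radius of the circle set by the user
    height: float  # user height multiplied by the stored rate

    def contains(self, x, y, z):
        """Return True if the point (x, y, z) lies inside the cylinder."""
        inside_circle = math.hypot(x - self.cx, y - self.cy) <= self.radius
        return inside_circle and 0.0 <= z <= self.height


def cylinder_from_user_area(cx, cy, radius, user_height, height_rate=1.2):
    """Build a cylinder from a user-set circle and the user's height.

    height_rate is a hypothetical value standing in for the stored rate.
    """
    if radius <= 0 or user_height <= 0 or height_rate <= 0:
        raise ValueError("radius, height, and rate must be positive")
    return CylinderRange(cx, cy, radius, user_height * height_rate)


rng = cylinder_from_user_area(0.0, 0.0, 1.0, 1.75)
```

Under these assumptions, a point is treated as reachable when it lies within the circle in plan view and no higher than the scaled height.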

The device100may output the range of motion determined by the device to the screen910. If the range of motion has a shape of a circle, a diameter of the circle may be output to the screen910as the range of motion. If the range of motion has a shape of a rectangle, a length of a side of the rectangle may be output to the screen910as the range of motion. In other words, information940through which the user may recognize a size of the range of motion may be output to the screen910. If the object920is output to the screen910, the range of motion may be displayed as an image950to include the object920.

Referring toFIG. 9C, the device100may determine a range of motion of a user960so that a gesture of the user960is reflected in the range of motion. For example, when an object971representing the user960is output, the device100may request the user960to perform a certain gesture using a direction or instructions980displayed on a screen of the device100.

The device100may output a first gesture972that is to be performed by the user960and output in real time an appearance973of the user960that is photographed by the camera320to the screen. Accordingly, the user960may check in real time whether the current shape of the user960is identical to the first gesture972.

When the first gesture 972 of the user 960 is photographed, the device 100 calculates a range of motion of the user 960 in consideration of both form information of the user 960 (for example, a height, a length of an arm, a length of a leg of the user 960, or the like) and the photographed gesture 973 of the user 960. For example, if it is assumed that a length of one arm of the user 960 is 1.5 m and a width of a chest of the user 960 is 0.72 m, the device 100 may calculate a range of motion of the user 960 corresponding to the first gesture 972 as 3.72 m (that is, 2 × 1.5 m + 0.72 m), wherein the first gesture 972 is performed when the user 960 spreads his/her arms widely.

The device 100 may output a value 991 of the calculated range of motion to the screen, and output the range of motion of the user 960 as an image 974 that includes the object 971.
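As a non-limiting illustration, the quoted value of 3.72 m matches twice the 1.5 m limb length plus the 0.72 m chest width, and the following sketch assumes that this is how the value is formed for a gesture in which both limbs are extended sideways. The function and parameter names are assumptions made for this sketch.

```python
def spread_gesture_range(limb_length_m, chest_width_m):
    """Width swept when both limbs are extended sideways from the trunk.

    Assumption: the range for this gesture is one limb length on each side
    of the body plus the width of the chest between them.
    """
    if limb_length_m < 0 or chest_width_m < 0:
        raise ValueError("lengths must be non-negative")
    return 2.0 * limb_length_m + chest_width_m


# Values from the example above.
range_m = spread_gesture_range(1.5, 0.72)
```

Other gestures would call for other combinations of lengths, for example a single limb length for a one-sided reach.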

Here, a plurality of gestures that are to be performed by the user960may be selected according to details of content. For example, if the content is a dancing game, the device100may calculate a range of motion in advance with respect to each of the plurality of gestures that are to be performed while the user960enjoys the dancing game.

In other words, if a first range of motion 991 of the user 960 according to the first gesture 972 is determined, the device 100 outputs a second gesture 975 to the screen, and outputs in real time an appearance 977 of the user 960 which is photographed by the camera 320. Then, the device 100 calculates a range of motion 992 of the user 960 according to the second gesture 975. Then, the device 100 may output the range of motion 992 of the user 960 near the object as an image 976.

FIGS. 10A and 10Billustrate diagrams for explaining an example of determining a range of motion of a subject, the determining being performed by a device, according to an embodiment of the present disclosure.

Referring to FIG. 10A, an example is shown in which a left side of a range of motion 1020 is symmetrical to a right side of the range of motion 1020 with respect to a center of an object 1010. If it is assumed that the subject is a person, a range in which the person stretches his/her arm or leg while standing in a certain location may be a range of motion of the person. Accordingly, the device 100 may determine a circular cylinder, having a center at a trunk of the person, as the range of motion 1020.

Referring toFIG. 10B, an example is shown in which a left side of a range of motion1040is not symmetrical to a right side of the range of motion1040with respect to the center of an object1030. If it is assumed that the subject is a person, movement of the person may not be symmetrical. For example, as shown inFIG. 10B, if the person moves one leg forward while the other leg remains in an original location, the left side of the person's body may not be symmetrical to the right side of the person's body with respect to the center of the person's body.

Accordingly, the device100may determine a range of motion of the subject based on a combination of the farthest points reachable by parts of the subject as the subject moves in a certain area.
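As a non-limiting illustration, an asymmetric range of motion may be sketched by recording, for each direction, the farthest point reached by any part of the subject. The 2D coordinate convention, the direction names, and the dictionary layout are assumptions made for this sketch.

```python
def asymmetric_extents(center, reach_points):
    """Return how far the subject reaches in each direction from its center.

    reach_points is a list of (x, y) positions reached by parts of the
    subject. Assumption: x grows to the right and y grows to the front.
    """
    if not reach_points:
        raise ValueError("at least one reachable point is required")
    cx, cy = center
    xs = [x for x, _ in reach_points]
    ys = [y for _, y in reach_points]
    return {
        "left": max(0.0, cx - min(xs)),
        "right": max(0.0, max(xs) - cx),
        "back": max(0.0, cy - min(ys)),
        "front": max(0.0, max(ys) - cy),
    }


# Hypothetical samples: one arm to the left, one arm to the right, and one
# leg stepping forward.
extents = asymmetric_extents(
    (0.0, 0.0), [(-0.5, 0.0), (0.75, 0.25), (0.25, 1.0)]
)
```

Here the forward extent exceeds the backward extent because only one leg has moved away from the original location, which is the situation described with reference to FIG. 10B.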

As described with reference toFIGS. 8 through 10B, the device100may obtain form information of a subject and determine a range of motion of the subject by using the form information. Additionally, the device100may determine a range of motion of a subject based on a setting by a user. The device100may obtain form information of each of a plurality of subjects, and determine a range of motion with respect to each of the plurality of subjects.

Hereinafter, with reference toFIGS. 11 through 16B, examples of determining a range of motion with respect to each of a plurality of subjects, which is performed by a device, are described.

FIG. 11illustrates a diagram for explaining an example of obtaining form information of a plurality of subjects, the obtaining being performed by a device, according to an embodiment of the present disclosure.

Referring toFIG. 11, an example of a plurality of users1110and1120is illustrated. For convenience of description, a total of two users1110and1120are shown inFIG. 11, but the plurality of users1110and1120are not limited thereto.

The device 100 obtains form information of each of the plurality of users 1110 and 1120. An example of obtaining form information of each of the plurality of users 1110 and 1120, which is performed by the device 100, is described with reference to FIGS. 3 through 4B. For example, the device 100 may obtain form information of each of the plurality of users 1110 and 1120 by using data corresponding to an image captured by the camera 320. The camera 320 may capture an image so that the image includes all of the plurality of users 1110 and 1120. The camera 320 may also capture a first image that includes a first user 1110, and then capture a second image that includes a second user 1120.

FIG. 12illustrates a diagram showing an example of outputting form information and a range of motion of each of a plurality of users to a screen of a device100according to an embodiment of the present disclosure.

Referring to FIG. 12, form information and a range of motion 1230 of a second user as well as form information and a range of motion 1220 of a first user may be output to the screen 1210. An example of determining a range of motion of a first user and a range of motion of a second user, which is performed by the device 100, is described with reference to FIGS. 8 through 10B. In FIG. 12, it is assumed that a total of two users are present. However, as described above, the number of users is not limited. Accordingly, the form information and ranges of motion output to the screen 1210 may be increased or decreased in correspondence with the number of users.

Additionally,FIG. 12shows that the form information and the range of motion1220of the first user and the form information and the range of motion1230of the second user are output at the same time, but the outputting is not limited thereto. For example, the form information and the range of motion1220of the first user and the form information and the range of motion1230of the second user may be alternately output according to an elapse of time.

FIG. 13Aillustrates a diagram showing an example of outputting a plurality of objects on a screen of a device according to an embodiment of the present disclosure.

Referring toFIG. 13A, the device100may output a plurality of objects1320and1330to a screen1310. Accordingly, a point at which subjects respectively corresponding to each of the objects1320and1330are located currently may be checked in real time.

The device100may display ranges of motion1340and1350of each of the subjects together with the objects1320and1330. Accordingly, it may be checked in real time whether or not ranges of motion of the subjects overlap with each other, based on a current location of the subjects.

If the ranges of motion1340and1350of the subjects overlap with each other, the device100may not execute content. For example, if the content is a computer game, the device100may not execute the computer game. Hereinafter, this is described in more detail with reference toFIG. 13B.

FIG. 13Billustrates a diagram showing an example in which a device does not execute content according to an embodiment of the present disclosure.

Referring to FIG. 13B, it is assumed that the objects 1320 and 1330 are images of users participating in a computer game. If the range of motion 1340 of a first user 1320 overlaps with the range of motion 1350 of a second user 1330, the device 100 may not execute the computer game.

For example, the device100may display an image1360or output a sound indicating that the range of motion1340overlaps with the range of motion1350on the screen1310, and then, may not execute the computer game. As the first user1320or the second user1330moves, if the range of motion1340and the range of motion1350do not overlap with each other, the device100may execute the computer game thereafter.
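As a non-limiting illustration, the check that withholds execution while two ranges of motion overlap may be sketched as follows. Modeling each range of motion as a circle on the floor, and treating circles that merely touch as not overlapping, are assumptions made for this sketch.

```python
import math


def ranges_overlap(center_a, radius_a, center_b, radius_b):
    """Return True if two circular ranges of motion share any area."""
    return math.dist(center_a, center_b) < radius_a + radius_b


def may_execute_content(center_a, radius_a, center_b, radius_b):
    """Content is executed only while the ranges of motion do not overlap."""
    return not ranges_overlap(center_a, radius_a, center_b, radius_b)


# Hypothetical positions in meters: the users first stand 1.5 m apart,
# then one of them moves so that they stand 3 m apart.
blocked = not may_execute_content((0.0, 0.0), 1.0, (1.5, 0.0), 1.0)
allowed = may_execute_content((0.0, 0.0), 1.0, (3.0, 0.0), 1.0)
```

Re-evaluating the check whenever a new location is obtained allows the content to be executed once the users have moved apart.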

Hereinafter, with reference toFIGS. 14A through 16B, examples of determining a range of motion of a user of content and a range of motion of a person, an animal, or a thing that does not use the content are described.

FIGS. 14A and 14Billustrate diagrams for explaining an example of obtaining form information of a plurality of subjects and determining a range of motion of the plurality of subjects, the obtaining and the determining being performed by a device, according to an embodiment of the present disclosure.

Referring toFIGS. 14A and 14B, the plurality of subjects1410and1420, shown inFIG. 14A, respectively represent a user1410who uses the content and a non-user1420who does not use the content. The non-user1420may be present in an area near the user1410. For example, if it is assumed that the content is a computer game, the user1410refers to a person who participates in the computer game, and the non-user1420refers to a person who does not participate in the computer game.

The device100obtains form information of the user1410and form information of the non-user1420, and determines each range of motion of the user1410and the non-user1420. As described above, the device100may obtain each form information of the user1410and the non-user1420via data transmitted from the camera320.

FIG. 14Bshows each form of the user1410and the non-user1420output to a screen1430of the device100. The device100may display the respective ranges of motion1440and1450of the user1410and the non-user1420together with an object representing the user1410and an object representing the non-user1420. Accordingly, it may be checked in real time whether ranges of motion of the user1410and the non-user1420overlap with each other, based on a current location of the user1410and the non-user1420.

FIGS. 15A and 15Billustrate diagrams for explaining another example of obtaining form information of a plurality of subjects and determining a range of motion of the plurality of subjects, the obtaining and the determining being performed by a device, according to an embodiment of the present disclosure.

Referring toFIGS. 15A and 15B, a plurality of objects1510and1520respectively represent a user1510of content and an animal1520.

The device100obtains respective form information of the user1510and the animal1520, and calculates a range of motion. As described above, the device100may obtain respective form information of the user1510and the animal1520by using the camera320.

FIG. 15Bshows each form of the user1510and the animal1520output to a screen1530of the device100. The device100may display respective ranges of motion1540and1550of the user1510and the animal1520together with an object representing the user1510and an object representing the animal1520.

FIGS. 16A and 16B illustrate diagrams for explaining an example of obtaining form information of a plurality of subjects and determining a range of motion of the plurality of subjects, the obtaining and the determining being performed by a device, according to an embodiment of the present disclosure.

Referring toFIGS. 16A and 16B, the plurality of subjects1610,1620and1630respectively refer to a user1610of content and things1620and1630. InFIG. 16A, the things1620and1630are illustrated as an obstacle which is present in an area near the user1610, such as furniture.

The device100obtains respective form information of the user1610and the obstacle1620or1630, and calculates a range of motion. As described above, the device100may obtain respective form information of the user1610and the obstacle1620or1630by using the camera320.

FIG. 16B shows each form of the user 1610 and the obstacle 1620 or 1630 output to a screen 1640 of the device 100. The device 100 may display a range of motion 1650 of the user 1610, from among the user 1610 and the obstacle 1620 or 1630, together with an object representing the user 1610 and an object representing the obstacle 1620 or 1630.

FIG. 17illustrates a flowchart illustrating an example of obtaining form information of a subject and determining a range of motion of the subject, the obtaining and the determining being performed by a device, according to an embodiment of the present disclosure.

Referring to FIG. 17, operations are processed in time series by the device 100 or the apparatus 101 for executing content as shown in FIG. 29 or 31. Accordingly, it will be understood that the descriptions provided with reference to FIGS. 1 through 16B may also be applied to the operations described with reference to FIG. 17, even if the descriptions are not provided here again.

Additionally, operation 1710 described with reference to FIG. 17 is substantially identical to operation 210 described with reference to FIG. 2. Accordingly, a detailed description with regard to operation 1710 is not provided here.

In operation1720, the device100predicts a moving path of a first subject and a moving path of a second subject.

The first subject and the second subject may be users of content, and the content may be a game that requires a motion and moving of a user. For example, if it is assumed that the content is a dancing game or a fight game, there may be cases when the user may have to move at a same place or to another place according to an instruction made by details of the content.

The device 100 analyzes the details of content, and predicts the moving path of the first subject and the moving path of the second subject based on the analyzed details of the content. For example, the device 100 may analyze the details of the content by reading the details of the content stored in a storage unit (e.g., storage unit 2940). Accordingly, the device 100 may prevent a collision between the subjects regardless of a type of content used by the user.

If the first subject is a user of the content, and the second subject is a non-user of the content, the device100predicts only a moving path of the user. In other words, the device100does not predict a moving path of the non-user. The first subject may be set as a user, and the second subject may be set as a non-user in advance before the content is executed. Accordingly, the device100may determine which subject is a user, from among the first and second subjects.

In operation1730, the device100determines a first area based on form information and the moving path of the first subject. In other words, the device100determines a range of motion of the first subject, based on form information and the moving path of the first subject.

In operation 1740, the device 100 determines a second area based on form information and a moving path of the second subject. In other words, the device 100 determines a range of motion of the second subject, based on form information and the moving path of the second subject. If the second subject is a non-user of the content, the device 100 may determine a range of motion of the second subject by using only the form information of the second subject.

Hereinafter, with reference toFIG. 18, an example of determining a range of motion of a subject based on form information and a moving path of the subject, which is performed by a device, is described.

FIG. 18illustrates a diagram for explaining an example of determining a range of motion of a subject based on form information and a moving path of the subject, the determining being performed by a device, according to an embodiment of the present disclosure.

Referring to FIG. 18, a first user 1810 moving from left to right and a second user 1820 moving from right to left are shown.

The device 100 may determine a range of motion 1831 of the first user 1810 in an initial location of the first user 1810, based on form information of the first user 1810. In other words, the device 100 may determine the range of motion 1831 of the first user 1810 when the first user 1810 remains still in the initial location.

There may be cases when a user may have to move in a particular direction according to details of content executed by the device100. There may also be cases when a user may have to take a particular motion while the user is moving, according to the details of the content. If it is assumed that the first user1810has to take a particular motion while moving from left to right, the device100determines ranges of motions1832and1833in each location of the first user1810which is included in a path in which the first user1810moves.

The device100may determine a final range of motion1830of the first user1810, by combining all the determined ranges of motion1831through1833.

The device100may determine a range of motion of the second user1820by using a same method as the method of determining a range of motion of the first user1810. In other words, the device100determines a range of motion1841in an initial location of the second user1820, and determines ranges of motion1842through1844in each location of the second user1820which is included in the moving path of the second user1820. Additionally, the device100may determine a final range of motion1840of the second user1820, by combining the determined ranges of motion1841through1844.

The device100may determine the ranges of motion1831through1833and the ranges of motion1841through1844, in consideration of motions that are to be taken by the users1810and1820while the users1810and1820are moving. For example, the device100may calculate a range of motion by using a mapping table stored in a storage unit (e.g., storage unit2940). The mapping table includes information about a range of motion that is necessary in addition to a range of motion determined by using form information of the users1810and1820, according to a type of motion required by content. For example, if a motion required by the content is a motion in which a user stretches an arm while taking a step with one foot, the mapping table may include information indicating that a range of motion amounting to 1.7 times the range of motion, which is determined by using the form information of the users1810and1820, is additionally required.
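As a non-limiting illustration, combining the ranges of motion determined at each location along a moving path may be sketched as follows. The circular model, the motion names, and the reading of the 1.7 figure as a multiplier applied to the base radius are assumptions made for this sketch; the disclosure may intend a different combination.

```python
import math

# Hypothetical mapping table: factor applied to the form-based radius for
# each type of motion required by the content.
MOTION_FACTORS = {"stand_still": 1.0, "step_and_stretch": 1.7}


def ranges_along_path(path, base_radius, motions):
    """Return one (center, radius) circle per location on the moving path."""
    if len(path) != len(motions):
        raise ValueError("one motion is required per location")
    return [
        (location, base_radius * MOTION_FACTORS[motion])
        for location, motion in zip(path, motions)
    ]


def in_final_range(point, circles):
    """The final range of motion is the union of the per-location circles."""
    return any(math.dist(point, center) <= radius for center, radius in circles)


# Hypothetical path from left to right, in meters.
circles = ranges_along_path(
    [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)],
    0.5,
    ["stand_still", "step_and_stretch", "step_and_stretch"],
)
```

A point belongs to the final range of motion when it falls inside any of the per-location circles, so the final range grows along the direction of travel.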

In FIG. 18, an example in which the users 1810 and 1820 move in a two-dimensional (2D) space is described, but a space in which a user moves is not limited thereto. In other words, there may be a case when the users 1810 and 1820 may have to move in a three-dimensional (3D) space according to details of content. Accordingly, even when the users 1810 and 1820 are to move in a 3D space, the device 100 may determine a range of motion of each of the users 1810 and 1820 according to the method described with reference to FIG. 18.

Referring back to FIG. 2, in operation 240, the device 100 predicts whether the first subject and the second subject are to collide with each other, based on whether the first range and the second range overlap with each other. In other words, the device 100 predicts whether the first and second subjects are to collide with each other, based on whether a range of motion of the first subject overlaps with a range of motion of the second subject. The predicting of whether the first and second subjects are to collide with each other refers to predicting a possibility of a collision between the first and second subjects while the first and second subjects have not yet collided with each other. For example, if a distance between a range of motion of the first subject and a range of motion of the second subject has a value less than a certain value, the device 100 may determine that the first subject and the second subject are to collide with each other.

Hereinafter, with reference toFIG. 19, an example of predicting whether a first subject is to collide with a second subject, which is performed by a device, is described.

FIG. 19illustrates a flowchart for explaining an example of predicting whether a first subject and a second subject are to collide with each other, the predicting being performed by a device100, according to an embodiment of the present disclosure.

Referring to FIG. 19, operations are processed in time series by the device 100 or the apparatus 101 for executing content as shown in FIG. 29 or 31. Accordingly, it will be understood that the description provided with reference to FIG. 1 may also be applied to the operations described with reference to FIG. 19, even if the description is not provided here again.

In operation 1910, the device 100 calculates a shortest distance between the first subject and the second subject. The shortest distance is calculated in consideration of a range of motion of the first subject and a range of motion of the second subject. In more detail, the device 100 selects a first point that is nearest the second subject, from among points included in the range of motion of the first subject. Additionally, the device 100 selects a second point that is nearest the first subject, from among points included in the range of motion of the second subject. The device 100 then calculates a distance between the first point and the second point, and determines the calculated distance as the shortest distance between the first subject and the second subject.
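Operation 1910 may be sketched as follows, under the assumption that each range of motion is a circle given as a center and a radius. For two circles that neither overlap nor touch, the first point and the second point lie on the line joining the centers. The circular model and the names `nearest_points` and `shortest_distance` are hypothetical and serve only to illustrate the selection of the two points.

```python
import math
from typing import Optional, Tuple

Circle = Tuple[float, float, float]  # (center_x, center_y, radius); assumed model
Point = Tuple[float, float]


def nearest_points(a: Circle, b: Circle) -> Optional[Tuple[Point, Point]]:
    """Return (first point, second point), or None if the ranges overlap or touch."""
    ax, ay, ar = a
    bx, by, br = b
    d = math.hypot(bx - ax, by - ay)
    if d <= ar + br:
        return None  # overlapping or in contact: no separated pair of points
    ux, uy = (bx - ax) / d, (by - ay) / d  # unit vector from a toward b
    first = (ax + ux * ar, ay + uy * ar)   # point of a nearest to b
    second = (bx - ux * br, by - uy * br)  # point of b nearest to a
    return first, second


def shortest_distance(a: Circle, b: Circle) -> float:
    """Distance between the first and second points; 0 on overlap or contact."""
    points = nearest_points(a, b)
    if points is None:
        return 0.0
    (x1, y1), (x2, y2) = points
    return math.hypot(x2 - x1, y2 - y1)


if __name__ == "__main__":
    print(shortest_distance((0.0, 0.0, 1.0), (5.0, 0.0, 1.0)))
```

Returning 0 for both overlap and single-point contact mirrors the treatment of those two cases as a shortest distance of 0.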

In operation 1920, the device 100 determines whether the shortest distance is greater than a predetermined distance value. The predetermined distance value may be a value pre-stored in a storage unit (e.g., storage unit 2940) or a value input by a user.

Hereinafter, with reference toFIGS. 20A through 20C, an example of comparing a shortest distance to a predetermined distance value, which is performed by a device, is described.

FIGS. 20A through 20Cillustrate diagrams for explaining an example of comparing a shortest distance between subjects to a predetermined distance value, the comparing being performed by a device, according to an embodiment of the present disclosure.

Referring to FIG. 20A, an example in which a range of motion 2010 of a first user and a range of motion 2020 of a second user overlap with each other is illustrated. In other words, the range of motion 2010 of the first user includes at least a portion of the range of motion 2020 of the second user.

In this case, a shortest distance between the first user and the second user, which is calculated by the device100, has a value of 0. In other words, the case when the shortest distance has a value of 0 includes a case when the range of motion2010of the first user overlaps with the range of motion2020of the second user, as well as a case when the range of motion2010of the first user contacts the range of motion2020of the second user at one point.

Accordingly, if the shortest distance between the first user and the second user has a value of 0, the device100determines that a value of the shortest distance is less than the predetermined distance value.

Referring to FIG. 20B, a case in which a shortest distance between users has a value of m is illustrated. Here, a predetermined distance value k is assumed to be a value greater than m.

A range of motion2030of a first user and a range of motion2040of a second user do not overlap with each other, nor contact each other at one point. The device100selects a first point that is nearest the second user, from among points included in the range of motion2030of the first user, and a second point that is nearest the first user, from among points included in the range of motion2040of the second user. Then, the device100determines a distance from the first point to the second point as a shortest distance m between the first user and the second user.

Since the shortest distance m is less than the predetermined distance value k, the device100performs operation1930shown inFIG. 19.

Referring toFIG. 20C, a diagram showing a case when a shortest distance between users has a value of n is illustrated. Here, a predetermined distance value k is assumed to be a value less than n.

A range of motion2050of a first user and a range of motion2060of a second user do not overlap with each other, nor contact each other at one point. The device100selects a first point that is nearest the second user, from among points included in the range of motion2050of the first user, and a second point that is nearest the first user, from among points included in the range of motion2060of the second user. Then, the device100determines a distance from the first point to the second point as a shortest distance n between the first user and the second user.

Since the shortest distance n is greater than the predetermined distance value k, the device100performs operation1940shown inFIG. 19.

Referring back to FIG. 19, if the shortest distance is greater than the predetermined distance value k, the device 100 determines in operation 1940 that the first subject and the second subject are not to collide with each other. Here, a situation in which the first subject and the second subject are not to collide with each other includes a situation in which there is no possibility of a collision even if the first subject or the second subject takes a motion different from a current motion. If the shortest distance is less than the predetermined distance value k, the device 100 determines in operation 1930 that the first subject and the second subject are to collide with each other. In this case, a situation in which the first subject and the second subject are to collide with each other includes a situation in which a collision remains possible even if the first subject or the second subject takes a motion different from a current motion.
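The branch between operations 1930 and 1940 may be sketched as a single comparison. The description does not state which branch is taken when the shortest distance equals k; the sketch below treats equality as a predicted collision, which is an assumption. The names `Prediction` and `predict` are hypothetical.

```python
from enum import Enum


class Prediction(Enum):
    """Outcome labels; the values echo the operation numbers of FIG. 19."""
    COLLISION = 1930
    NO_COLLISION = 1940


def predict(shortest: float, k: float) -> Prediction:
    """Compare the shortest distance to the predetermined distance value k.

    Equality is treated as a predicted collision (an assumption; the
    description covers only the 'greater than' and 'less than' cases).
    """
    if shortest < 0:
        raise ValueError("a distance cannot be negative")
    if shortest > k:
        return Prediction.NO_COLLISION
    return Prediction.COLLISION


if __name__ == "__main__":
    k = 1.0
    # Three cases in the spirit of FIGS. 20A through 20C: 0, m < k, and n > k.
    for distance in (0.0, 0.5, 2.0):
        print(distance, predict(distance, k).name)
```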

FIGS. 21A through 21Cillustrate diagrams showing an example of an image output to a screen of a device, if the device determines that subjects are to collide with each other according to an embodiment of the present disclosure.

FIGS. 21A and 21B show an example of outputting dynamic objects (for example, images representing users) to a screen 2110. FIG. 21C shows an example of outputting a dynamic object (for example, an image representing a user) and static objects (for example, images representing furniture) to a screen.

Referring toFIGS. 21A through 21C, if it is predicted that subjects are to collide with each other, the device100may output warning information indicating that the subjects are to collide with each other. The warning information may be light, a color, or a certain image output from a screen of the device100, or sound output from a speaker included in the device100. Additionally, if the device100is executing content, the device100may pause the executing of the content as an example of the warning information.

For example, the device100may output images2120and2130indicating the warning information to the screen2110. As an example, referring toFIG. 21A, the device100may request a user to move to a place that is far away from another user, by outputting the image2120indicating a high possibility of a collision between users. Even though a range of motion2140of a first user and a range of motion2150of a second user do not overlap with each other, if a shortest distance between the range of motion2140and the range of motion2150has a value less than a predetermined distance value k, the device100may output the image2120indicating the high possibility of the collision between the first and second users.

As another example, referring to FIG. 21B, the device 100 may pause execution of content that is currently executed, while at the same time outputting the image 2130 indicating a very high possibility of a collision between users. If the range of motion 2140 of the first user and the range of motion 2150 of the second user overlap with each other, the device 100 may pause execution of the content that is currently executed, while outputting the image 2130 at the same time.
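The two warning levels of FIGS. 21A and 21B may be sketched as a selection based on the shortest distance between the ranges of motion. The type `WarningAction`, the function `select_warning`, and the image labels are hypothetical names introduced for illustration; an overlap is represented here by a shortest distance of 0.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class WarningAction:
    """What the device does for a given prediction (illustrative only)."""
    image: Optional[str]   # label of the warning image to output, if any
    pause_content: bool    # whether execution of the content is paused


def select_warning(shortest: float, k: float) -> WarningAction:
    """Pick a warning from the shortest distance and the predetermined value k."""
    if shortest == 0:
        # Ranges overlap or touch: very high possibility, pause the content.
        return WarningAction("very_high_possibility", True)
    if shortest < k:
        # Ranges are separate but closer than k: high possibility, warn only.
        return WarningAction("high_possibility", False)
    return WarningAction(None, False)


if __name__ == "__main__":
    for distance in (0.0, 0.4, 3.0):
        print(distance, select_warning(distance, 1.0))
```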

Referring toFIG. 21C, if a chair2180is located in a range of motion2170of a user, the device100may output an image2160requesting to move the chair2180out of the range of motion2170of the user.

After the executing of the content is paused, if ranges of motion of subjects become far away from each other so that a value of a distance therebetween is greater than a predetermined value, the device100re-executes the content. Hereinafter, with reference toFIG. 21D, an example of resuming execution of content after execution of the content is paused, the resuming being performed by a device, is described.

FIG. 21Dillustrates a diagram showing an example of resuming execution of content after execution of the content is paused, the resuming being performed by a device, according to an embodiment of the present disclosure.

Referring toFIG. 21D, when content is executed, if it is predicted that a first user2191and a second user2192are to collide with each other, the device100may pause the execution of the content, and output an image2195indicating that the first user2191and the second user2192are to collide with each other. Even when the execution of the content is paused, the camera320continuously photographs the first user2191and the second user2192. Accordingly, the device100may check whether a distance between the first user2191and the second user2192is increased or decreased after the execution of the content is paused.

After the execution of the content is paused, if the first user 2191 and/or the second user 2192 moves from a current position, a distance therebetween may be increased. In other words, the first user 2191 may move in a direction away from the second user 2192, or the second user 2192 may move in a direction away from the first user 2191. As at least one of the first and second users 2191 and 2192 moves, if a value of a distance between a range of motion 2193 of the first user 2191 and a range of motion 2194 of the second user 2192 becomes greater than a predetermined value, the device 100 may resume the execution of the content. In other words, as at least one of the first and second users 2191 and 2192 moves, if it is determined that there is no possibility that the users 2191 and 2192 are to collide with each other, the device 100 may resume the execution of the content. In this case, the device 100 may output, to the screen, an image 2196 indicating that the execution of the content is resumed.
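The pause and resume behavior of FIG. 21D may be sketched as a small state machine driven by the distance measured in each camera frame. The function name `playback_states`, the use of a single threshold k for both pausing and resuming, and the per-frame list of distances are assumptions made for illustration.

```python
from typing import Iterable, List


def playback_states(distances: Iterable[float], k: float) -> List[str]:
    """Return 'running' or 'paused' for each per-frame distance.

    Content is paused when the distance between the ranges of motion falls
    below k and resumed once the distance becomes greater than k.
    """
    paused = False
    states: List[str] = []
    for d in distances:
        if not paused and d < k:
            paused = True    # collision predicted: pause the content
        elif paused and d > k:
            paused = False   # users moved apart: resume the content
        states.append("paused" if paused else "running")
    return states


if __name__ == "__main__":
    # Distances observed in successive frames while the users move apart.
    print(playback_states([3.0, 0.5, 0.8, 1.5, 2.0], 1.0))
```

Because the camera keeps photographing the users while the content is paused, the same loop continues to receive distances in the paused state, which is what allows the resume condition to be detected.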

As described above, the device100may determine a range of motion based on form information of respective subjects, and predict whether the subjects are to collide with each other. Accordingly, the device100may prevent a collision between the subjects in advance.

FIG. 22illustrates a diagram for explaining an example of comparing a shortest distance between subjects to a predetermined distance value, the comparing being performed by a device100, according to an embodiment of the present disclosure.

Referring toFIG. 22, it is shown that both a first subject2210and a second subject2220are users of content. However, the first subject2210and the second subject2220are not limited thereto. In other words, the second subject2220may be a non-user of the content, or may correspond to a thing such as an animal, a plant, or furniture.

As described above with reference to FIG. 18, the device 100 may determine ranges of motion 2230 and 2240 of the users 2210 and 2220, based on at least one of a moving path of the first user 2210 and the second user 2220 and a motion that is to be taken by the first user 2210 and the second user 2220. The device 100 calculates a shortest distance k between the first subject 2210 and the second subject 2220 based on the ranges of motion 2230 and 2240 of the users 2210 and 2220, and predicts a possibility of a collision between the first user 2210 and the second user 2220 based on the shortest distance k. A method of predicting a possibility of a collision between users, which is performed by the device 100, is described above with reference to FIGS. 19 through 20C.

FIGS. 23A through 23Cillustrate diagrams showing an example of an image output to a screen of a device, if the device determines that users are to collide with each other, according to an embodiment of the present disclosure.

Referring to FIGS. 23A through 23C, if it is predicted that the users are to collide with each other, the device 100 may output, to a screen 2310, an image 2320 indicating that the users are to collide with each other. As an example, as shown in FIG. 23A, the device 100 may output, to the screen 2310, the image 2320 notifying the users of a possibility of a collision therebetween. As another example, as shown in FIG. 23B, the device 100 may pause execution of content, while at the same time outputting, to the screen 2310, the image 2330 notifying the users of a possibility of a collision therebetween. After the images 2320 and 2330 are output to the screen 2310, if the users readjust their locations, the device 100 re-predicts a possibility of a collision between the users based on the readjusted locations. As shown in FIG. 23C, if it is determined that a collision between the users is impossible, the device 100 may continue executing the content instead of outputting the images 2320 and 2330 to the screen 2310.