U.S. Pat. No. 9,700,787

SYSTEM AND METHOD FOR FACILITATING INTERACTION WITH A VIRTUAL SPACE VIA A TOUCH SENSITIVE SURFACE

Assignee: DeNA Co., Ltd.

Issue Date: May 19, 2014

Abstract

This disclosure relates to a system and method for facilitating interaction with a virtual space via a touch sensitive surface. The user may interact with the virtual space via a touch sensitive surface in a wireless client device. The user may interact with the virtual space by providing control inputs to the touch sensitive surface. The control inputs may be reflected in one or more views of the virtual space. In some implementations, the system may be configured such that the one or more views of the virtual space include one or more views of a shooter game. The system may be configured such that the user may interact with the game primarily with fingers from one hand via the touch sensitive surface. The user may enter various command inputs into the touch sensitive surface that correspond to actions in the virtual space presented to the user.

Description

DETAILED DESCRIPTION

FIG. 1 is a schematic illustration of a system 10 configured to facilitate interaction of a user with a virtual space. The user may interact with the virtual space via a touch sensitive surface in a wireless client device 12. The user may interact with the virtual space by providing control inputs to the touch sensitive surface. The control inputs may be reflected in one or more views of the virtual space. In some implementations, system 10 may be configured such that the one or more views of the virtual space include one or more views of a shooter game (e.g., a first person shooter, a third person shooter, and/or other shooter mechanics). The game may not be entirely a shooter-style game, but may be a game that has other components and/or mechanics besides a shooter mechanic. System 10 may be configured such that the user may interact with the game primarily with fingers from one hand via the touch sensitive surface. The user may enter various command inputs into the touch sensitive surface that correspond to actions in the virtual space presented to the user.

The description herein of the use of system 10 in conjunction with a shooter game is not intended to limit the scope of the disclosure. Rather, it will be appreciated that the principles and system described herein may be applied to virtual space and/or other electronic applications wherein one handed control is advantageous. In some implementations, system 10 may comprise the wireless client device 12, a game server 14, and/or other components.

Wireless client device 12 may be a mobile device configured to provide an interface for the user to interact with the virtual space, the game and/or other applications. Wireless client device 12 may include, for example, a smartphone, a tablet computing platform, and/or other mobile devices. Wireless client device 12 may be configured to communicate with game server 14, other wireless clients, and/or other devices in a client/server configuration. Such communications may be accomplished at least in part via one or more wireless communication media. Such communication may be transmitted through a network such as the Internet and/or other networks. Wireless client device 12 may include a user interface 16, one or more processors 18, electronic storage 20, and/or other components.

User interface 16 may be configured to provide an interface between wireless client 12 and the user through which the user may provide information to and receive information from system 10. User interface 16 may comprise a touch sensitive surface 22, a display 24, and/or other components.

Processor 18 may be configured to execute one or more computer program modules. The one or more computer program modules may comprise one or more of a game module 30, a gesture recognition module 32, a control module 34, and/or other modules.

Game module 30 may be configured to execute an instance of the virtual space. Game module 30 may be configured to facilitate interaction of the user with the virtual space by assembling one or more views of the virtual space for presentation to the user on wireless client 12. The user may interact with the virtual space by providing control inputs to touch sensitive surface 22 that are reflected in the view(s) of the virtual space.

To assemble the view(s) of the virtual space, game module 30 may execute an instance of the virtual space, and may use the instance of the virtual space to determine view information that defines the view. Alternatively, to assemble the view(s) of the virtual space, game module 30 may obtain view information from game server 14, which may execute an instance of the virtual space to determine the view information. The view information may include one or more of virtual space state information, map information, object or entity location information, manifestation information (defining how objects or entities are manifested in the virtual space), and/or other information related to the view(s) and/or the virtual space.

The virtual space may comprise a simulated space that is accessible by users via clients (e.g., wireless client device 12) that present the views of the virtual space to a user. The simulated space may have a topography, express ongoing real-time interaction by one or more users, and/or include one or more objects positioned within the topography that are capable of locomotion within the topography. In some instances, the topography may be a 2-dimensional topography. In other instances, the topography may be a 3-dimensional topography. The topography may include dimensions of the space, and/or surface features of a surface or objects that are “native” to the space. In some instances, the topography may describe a surface (e.g., a ground surface) that runs through at least a substantial portion of the space. In some instances, the topography may describe a volume with one or more bodies positioned therein (e.g., a simulation of gravity-deprived space with one or more celestial bodies positioned therein).

Within the instance(s) of the virtual space executed by game module 30, the user may control one or more entities to interact with the virtual space and/or each other. The entities may include one or more of characters, objects, simulated physical phenomena (e.g., wind, rain, earthquakes, and/or other phenomena), and/or other elements within the virtual space. The user characters may include avatars. As used herein, an entity may refer to an object (or group of objects) present in the virtual space that represents an individual user. The entity may be controlled by the user with which it is associated. The user controlled element(s) may move through and interact with the virtual space (e.g., non-user characters in the virtual space, other objects in the virtual space). The user controlled elements controlled by and/or associated with a given user may be created and/or customized by the given user. The user may have an “inventory” of virtual goods and/or currency that the user can use (e.g., by manipulation of a user character or other user controlled element, and/or other items) within the virtual space.

In some implementations, the users may interact with each other through communications exchanged within the virtual space. Such communications may include one or more of textual chat, instant messages, private messages, voice communications, and/or other communications. Communications may be received and entered by the users via their respective client devices (e.g., wireless client device 12). Communications may be routed to and from the appropriate users through game server 14.

In some implementations, game module 30 may be configured such that the user may participate in a game in the virtual space. The game may include a shooter game mechanic. A shooter game mechanic may involve aiming and/or releasing a projectile toward a target in a field of view presented to the user. The projectile may be released from a virtual weapon in the virtual space (e.g., carried or operated by a user controlled character or other entity). A shooter game mechanic may include controlling a position of a character (e.g., a user character or other entity) within the virtual space, in addition to the aiming and/or releasing of the projectile. In some implementations, the shooter game mechanic may involve sighting the target through a simulated sighting tool on the weapon, changing weapons, reloading the weapon, and/or other aspects of the shooter game mechanic. In some implementations, the game may include various tasks, levels, quests, and/or other challenges or activities in which one or more users may participate. The game may include activities in which users (or their entities) are adversaries, and/or activities in which users (or their entities) are allies. The game may include activities in which users (or their entities) are adversaries of non-player characters, and/or activities in which users (or their entities) are allies of non-player characters. In the game, entities controlled by the user may obtain points, virtual currency or other virtual items, experience points, levels, and/or other demarcations indicating experience and/or success. The game may be implemented in the virtual space, or may be implemented without the virtual space. The game (and/or a virtual space in which it may be implemented) may be synchronous, asynchronous, and/or semi-synchronous.

Gesture recognition module 32 may be configured to identify individual ones of a set of gestures made by the user on touch sensitive surface 22. Gesture recognition module 32 may be configured to identify the individual ones of the set of gestures based on output signals from touch sensitive surface 22. A gesture may be defined by one or more gesture parameters. The one or more gesture parameters may include one or more directions of motion during a contact, a shape of a motion made during a contact, one or more contact locations, a specific sequence of one or more motions, a specific arrangement of one or more contact locations, relative positioning between multiple contacts, a relative timing of multiple contacts, a hold time of one or more contacts, and/or other parameters. A gesture definition of an individual gesture may specify parameter values for one or more gesture parameters. Gesture recognition module 32 may have access to a plurality of stored gesture definitions (e.g., stored locally on wireless client device 12).

Identification of a user gesture may be made based on analysis of the information conveyed by the output signals from touch sensitive surface 22. The analysis may include a comparison between the gesture definitions and the information conveyed by the output signals. Gesture recognition module 32 may be configured to determine the parameter values for gesture parameters of a current or previously performed interaction of the user with touch sensitive surface 22. The determined parameter values may then be compared with the parameter values specified by the gesture definitions to determine whether the current or previously performed interaction matches one of the gesture definitions. Responsive to a match between a gesture definition and the interaction performed by the user, gesture recognition module 32 may be configured to identify the user's interaction as the gesture corresponding to the matched gesture definition.
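The comparison between determined parameter values and stored gesture definitions may be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation of the disclosure: the `GestureDefinition` class, the parameter names (`contact_count`, `hold_time_s`), the threshold values, and the choice to express each parameter value as an inclusive (minimum, maximum) range are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

# Assumption: each gesture parameter is constrained to an inclusive
# (minimum, maximum) range. The disclosure does not prescribe this form.
Range = Tuple[float, float]


@dataclass(frozen=True)
class GestureDefinition:
    name: str
    parameter_ranges: Dict[str, Range]


def matches(definition: GestureDefinition, measured: Dict[str, float]) -> bool:
    """Return True if every parameter named in the definition was measured
    and its value falls inside the specified range."""
    for param, (low, high) in definition.parameter_ranges.items():
        value = measured.get(param)
        if value is None or not (low <= value <= high):
            return False
    return True


def identify_gesture(definitions: List[GestureDefinition],
                     measured: Dict[str, float]) -> Optional[str]:
    """Return the name of the first stored definition matched by the
    measured parameter values, or None if no definition matches."""
    for definition in definitions:
        if matches(definition, measured):
            return definition.name
    return None


# Hypothetical stored definitions with illustrative thresholds.
STORED_DEFINITIONS = [
    GestureDefinition("two_fingered_tap",
                      {"contact_count": (2, 2), "hold_time_s": (0.0, 0.3)}),
    GestureDefinition("move",
                      {"contact_count": (1, 1), "hold_time_s": (0.0, 0.5)}),
]
```

In this sketch an interaction that matches no stored definition is simply not identified as a gesture, which mirrors the "responsive to a match" wording above.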

The individual ones of the set of gestures may include a two fingered tap gesture, a reverse pinch gesture, a move gesture, a look gesture, a turn gesture, a weapon change gesture, a reload gesture, and/or other gestures.

Control module 34 may be configured to determine control inputs from the user. Control module 34 may be configured to determine the control inputs based on the identified gestures. Control module 34 may be configured such that responsive to the gesture recognition module identifying individual ones of the set of gestures, control module 34 may determine control inputs corresponding to the identified gestures. Game module 30 may be configured such that reception of the control inputs may cause actions in the virtual space, and/or in the first person shooter game, for example. In some implementations, the control inputs may be configured to cause one or more actions including aiming and/or releasing a projectile (e.g., responsive to the two fingered tap gesture), sighting one or more objects through a sighting tool (e.g., responsive to the reverse pinch gesture), moving a user character within the virtual space (e.g., responsive to the move gesture), looking in a specific direction (e.g., responsive to the look gesture), turning around (e.g., responsive to the turn gesture), changing a weapon (e.g., responsive to the weapon change gesture), reloading a weapon (e.g., responsive to the reload gesture), and/or other actions.
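The correspondence between identified gestures and control inputs may be sketched as a lookup table. The gesture-to-action pairs follow the examples given above; the `Gesture` enumeration, the string labels for the control inputs, and the function name are hypothetical and chosen only for illustration.

```python
from enum import Enum
from typing import Optional


class Gesture(Enum):
    TWO_FINGERED_TAP = "two_fingered_tap"
    REVERSE_PINCH = "reverse_pinch"
    MOVE = "move"
    LOOK = "look"
    TURN = "turn"
    WEAPON_CHANGE = "weapon_change"
    RELOAD = "reload"


# Pairs follow the examples in the text; the action labels are assumptions.
CONTROL_INPUTS = {
    Gesture.TWO_FINGERED_TAP: "release_projectile",
    Gesture.REVERSE_PINCH: "enter_sighting_view",
    Gesture.MOVE: "move_character",
    Gesture.LOOK: "look_in_direction",
    Gesture.TURN: "turn_around",
    Gesture.WEAPON_CHANGE: "change_weapon",
    Gesture.RELOAD: "reload_weapon",
}


def determine_control_input(gesture: Optional[Gesture]) -> Optional[str]:
    """Return the control input for an identified gesture, or None when
    no gesture was identified."""
    if gesture is None:
        return None
    return CONTROL_INPUTS[gesture]
```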

Touch sensitive surface 22 may be configured to generate output signals responsive to contact by a user. The output signals may be configured to convey information related to one or more locations where the touch sensitive surface is contacted. In some implementations, touch sensitive surface 22 may include display 24. Display 24 may be configured to present visual information to the user. The visual information may include one or more views of the virtual space and/or one or more other views. In some embodiments, the one or more contact locations on touch sensitive surface 22 may correspond to one or more locations on display 24.

In some implementations, touch sensitive surface 22 may comprise a touchscreen. The touchscreen may be configured to provide the interface to wireless client 12 through which the user may input information to and/or receive information from wireless client 12. Through an electronic display capability of the touchscreen, views of the virtual space, views of the first person shooter game, graphics, text, and/or other visual content may be presented to the user. Superimposed over some and/or all of the electronic display of the touchscreen, the touchscreen may include one or more sensors configured to generate output signals that indicate a position of one or more objects that are in contact with and/or proximate to the surface of the touchscreen. The sensor(s) of the touchscreen may include one or more of a resistive, a capacitive, a surface acoustic wave, or other sensors. In some implementations, the touchscreen may comprise one or more of a glass panel, a conductive layer, a resistive layer, a scratch resistant layer, a layer that stores electrical charge, a transducer, a reflector, or other components.

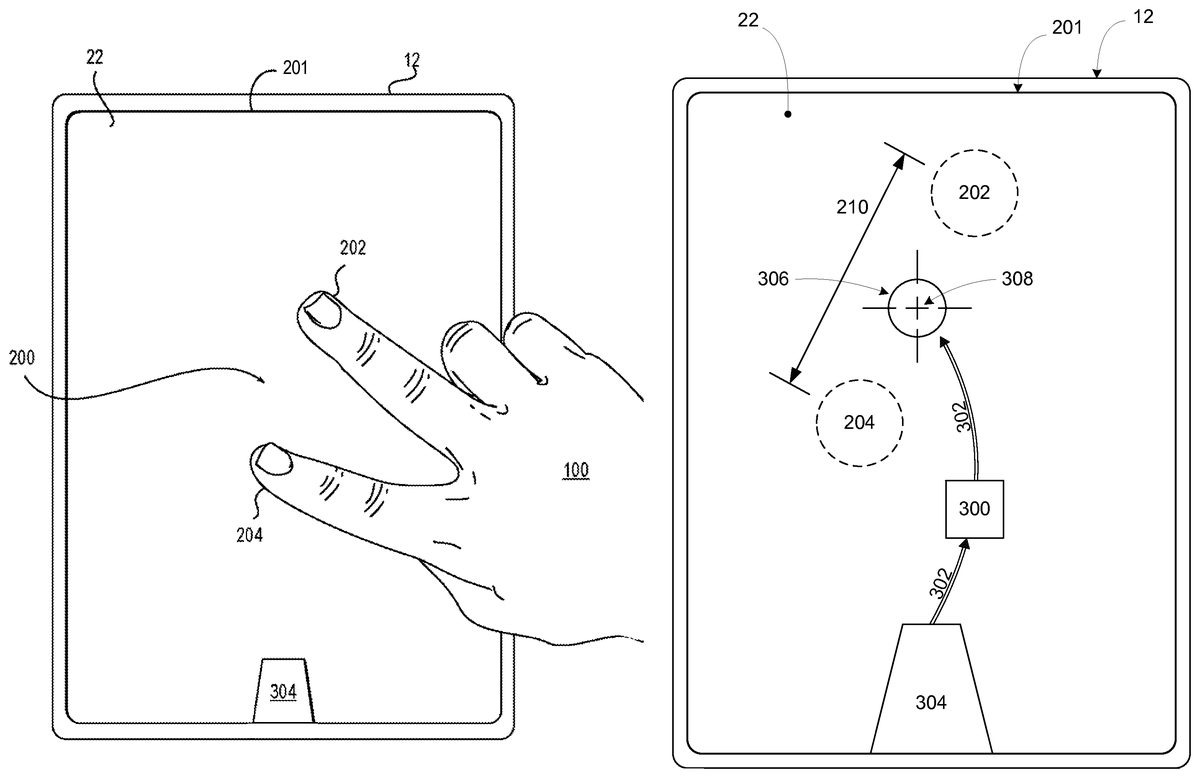

FIG. 2 and FIG. 3 illustrate a first user interaction 200 (FIG. 2) made by a user 100 on touch sensitive surface 22 (illustrated as a touchscreen in FIG. 2 and FIG. 3). First user interaction 200 may be a two fingered tap gesture. First user interaction 200 may effect release 302 (FIG. 3) of a corresponding projectile 300 from a weapon 304, for example, in a first view 201 of the virtual space. User 100 may use interaction 200 to release and/or aim the release of projectile 300 in the virtual space. As shown in FIG. 2, interaction 200 may comprise tapping touch sensitive surface 22 at two locations 202, 204 substantially simultaneously. The tapping interactions at locations 202 and 204 may be determined to be substantially simultaneous if both tapping interactions at locations 202 and 204 are made within a predetermined period of time. The predetermined period of time may begin when touch sensitive surface 22 receives the first one of contact at location 204, and/or contact at location 202, for example. The predetermined period of time may be determined at manufacture, set by a user via a user interface of a wireless client (e.g., user interface 16 of wireless client 12), and/or determined by another method. A gesture recognition module (e.g., gesture recognition module 32 shown in FIG. 1 and described herein) may be configured to identify user interaction 200 as the two fingered tap gesture made by user 100 on touch sensitive surface 22. The gesture recognition module may be configured to determine the parameter values for the gesture parameters of interaction 200. The determined parameter values may then be compared with the parameter values specified by the definition of the two fingered tap gesture to determine whether interaction 200 matches the gesture definition of the two fingered tap gesture. Responsive to a match between the parameter values of the two fingered tap gesture definition and the parameter values of interaction 200 performed by the user, the gesture recognition module may be configured to identify interaction 200 as the two fingered tap gesture. The gesture definition used to determine whether interaction 200 is a two fingered tap gesture may include parameter values and/or parameter value thresholds defining one or more of a distance 210 between contact locations 202, 204, a minimum contact time and/or a maximum contact time, a maximum motion distance for contact locations 202 and/or 204, a maximum period of time between contact at location 202 and contact at location 204, and/or other gesture parameters.
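A check against the two fingered tap thresholds listed above may be sketched as follows. The `Contact` and `TwoFingeredTapDefinition` types and every numeric threshold are assumptions chosen for illustration; the disclosure leaves the actual values to the manufacturer, the user, or another method.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Contact:
    x: float          # contact location on the surface, in pixels
    y: float
    down_time: float  # time at which the contact began, in seconds
    up_time: float    # time at which the contact ended, in seconds
    travel: float     # distance the contact moved while held, in pixels


@dataclass(frozen=True)
class TwoFingeredTapDefinition:
    # All threshold values below are illustrative assumptions.
    max_separation: float = 300.0   # maximum distance between the contacts
    max_contact_time: float = 0.25  # maximum duration of each contact
    max_travel: float = 10.0        # maximum motion distance per contact
    max_start_gap: float = 0.10     # "substantially simultaneous" window


def is_two_fingered_tap(
    a: Contact,
    b: Contact,
    d: TwoFingeredTapDefinition = TwoFingeredTapDefinition(),
) -> bool:
    """Return True if contacts a and b satisfy every threshold in d."""
    separation = math.hypot(a.x - b.x, a.y - b.y)
    # The window opens at whichever contact is received first, so only the
    # absolute gap between the two start times matters.
    start_gap = abs(a.down_time - b.down_time)
    return (
        separation <= d.max_separation
        and start_gap <= d.max_start_gap
        and all(c.up_time - c.down_time <= d.max_contact_time for c in (a, b))
        and all(c.travel <= d.max_travel for c in (a, b))
    )
```

Two contacts that begin more than `max_start_gap` apart, or a contact that drags farther than `max_travel`, would fail the check and could instead be tested against other gesture definitions.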

A control module (e.g., control module 34 shown in FIG. 1 and described herein) may be configured such that responsive to the gesture recognition module identifying the two fingered tap gesture (FIG. 2), the control module may determine a first control input from user 100 that causes a game module (e.g., game module 30 shown in FIG. 1 and described herein) to cause the release 302 of projectile 300 in the virtual space (FIG. 3). The first control input may specify a target location 306 in the virtual space toward which the projectile is directed. In some implementations, target location 306 may correspond to a control location 308 on touch sensitive surface 22 that corresponds to one or both of the locations at which user 100 contacted the touch sensitive surface in making the two fingered tap gesture. In some implementations (e.g., when touch sensitive surface 22 comprises a touchscreen), control location 308 may be the location on the touchscreen at which target location 306 is displayed. In some implementations, control location 308 may be the center point between the two locations 202, 204 at which user 100 contacted touch sensitive surface 22 in making the two fingered tap gesture.
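For the implementation in which the control location is the center point between the two contact locations, the computation may be sketched as a simple midpoint. The function name and the use of (x, y) pixel tuples are assumptions made for this example.

```python
from typing import Tuple

Point = Tuple[float, float]  # (x, y) on the touch sensitive surface


def control_location(first: Point, second: Point) -> Point:
    """Return the center point between two contact locations."""
    return ((first[0] + second[0]) / 2.0, (first[1] + second[1]) / 2.0)
```

For example, contacts at (100, 200) and (180, 240) would give a control location of (140, 220), and the projectile would be directed toward whatever target location is displayed at that point.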

FIG. 4-FIG. 7 illustrate a second user interaction 400 (FIG. 4) on touch sensitive surface 22 (illustrated as a touchscreen in FIG. 4-FIG. 7). Second user interaction 400 may be a reverse pinch gesture. System 10 may be configured to present one or more views simulating sighting through a sighting tool (sighting view 700 shown in FIG. 7) of a firearm responsive to an entry of second user interaction 400 on touch sensitive surface 22. As shown in FIG. 4, second user interaction 400 may comprise contacting touch sensitive surface 22 at two locations 402, 404 substantially simultaneously, then moving 406 the two contact locations 402, 404 farther apart while remaining in contact with touch sensitive surface 22. FIG. 5 illustrates locations 402 and 404 farther apart at new locations 502 and 504. A gesture recognition module (e.g., gesture recognition module 32 shown in FIG. 1 and described herein) may be configured to identify user interaction 400 as the reverse pinch gesture made by user 100 on touch sensitive surface 22. The gesture recognition module may be configured to determine the parameter values for the gesture parameters of interaction 400. The determined parameter values may then be compared with the parameter values specified by the definition of the reverse pinch gesture to determine whether interaction 400 matches the gesture definition of the reverse pinch gesture. Responsive to a match between the parameter values of the reverse pinch gesture definition and the parameter values of interaction 400 performed by user 100, the gesture recognition module may be configured to identify interaction 400 as the reverse pinch gesture. The gesture definitions used to determine whether interaction 400 is a reverse pinch gesture may include parameter values and/or parameter value thresholds defining one or more of a minimum and/or a maximum distance 511 (FIG. 5) between contact locations 402 and 404, a minimum and/or a maximum distance 514 between contact locations 502 and 504, a minimum motion distance 510, 512 for contact locations 402 and/or 404, and/or other values.
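Distinguishing a reverse pinch from other two-contact motions may be sketched by comparing the separation of the contacts before and after the motion. The function name, the default thresholds, and the rule that at least one contact must travel `min_motion` are assumptions for illustration only.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # (x, y) on the touch sensitive surface


def _distance(p: Point, q: Point) -> float:
    return math.hypot(p[0] - q[0], p[1] - q[1])


def classify_pinch(start_a: Point, start_b: Point,
                   end_a: Point, end_b: Point,
                   min_motion: float = 20.0,
                   min_spread_change: float = 40.0) -> str:
    """Classify a two-contact drag as 'reverse_pinch', 'pinch', or 'none'.

    Assumption: at least one contact must move min_motion, and the distance
    between the contacts must change by at least min_spread_change.
    """
    moved = max(_distance(start_a, end_a), _distance(start_b, end_b))
    if moved < min_motion:
        return "none"
    change = _distance(end_a, end_b) - _distance(start_a, start_b)
    if change >= min_spread_change:
        return "reverse_pinch"  # contacts ended farther apart
    if change <= -min_spread_change:
        return "pinch"          # contacts ended closer together
    return "none"
```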

A control module (e.g., control module 34 shown in FIG. 1 and described herein) may be configured such that responsive to the gesture recognition module identifying the reverse pinch gesture, the control module may determine a second control input from user 100. The second control input may cause a game module (e.g., game module 30 shown in FIG. 1 and described herein) to simulate sighting through a sighting tool in a second view 401 of the virtual space. As shown in FIG. 6, simulation of sighting through a sighting tool may cause the zoom level of second view 401 of the virtual space presented to user 100 to change from a first zoom level 600 to a second zoom level 602. Second zoom level 602 may be increased relative to first zoom level 600. As shown in FIG. 7, in some implementations, second zoom level 602 may be configured to simulate a zoom level of a sighting view 700 through a sight of a firearm. In some implementations, the second control input may cause a portion of second view 401 (e.g., the portion viewed through the simulated firearm sighting view) to increase to second zoom level 602 while the rest of second view 401 remains at first zoom level 600.

In some implementations, the game module may be configured such that at least some of the virtual space and/or game functionality normally available to a user during game play is reduced responsive to the second control input causing the game module to cause second view 401 to simulate sighting through a sighting tool. For example, while playing the first person shooter game, a user may be able to change weapons when viewing the game at first zoom level 600 but may not be able to change weapons when viewing the game through simulated sighting view 700 at second zoom level 602.

In some implementations, system 10 may be configured such that sighting view 700 is discontinued responsive to a third user interaction on touch sensitive surface 22. The third user interaction may be a pinch gesture. The third user interaction may comprise contacting touch sensitive surface 22 at two locations substantially simultaneously, then moving the two contact locations closer together while remaining in contact with touch sensitive surface 22 (e.g., substantially opposite the motion depicted in FIG. 5 and FIG. 4). The gesture recognition module may be configured to identify the third user interaction as a pinch gesture made by user 100 on touch sensitive surface 22. The gesture recognition module may be configured to determine the parameter values for the gesture parameters of the third interaction. The determined parameter values may then be compared with the parameter values specified by the definition of the pinch gesture to determine whether the third interaction matches the gesture definition of the pinch gesture. Responsive to a match between the parameter values of the pinch gesture definition and the parameter values of the third interaction performed by the user, the gesture recognition module may be configured to identify the third interaction as the pinch gesture. A control module (e.g., control module 34 shown in FIG. 1 and described herein) may be configured such that responsive to the gesture recognition module identifying the third user interaction as a pinch gesture, the control module may determine a third control input from user 100. The third control input may cause the game module to cause sighting view 700 to be discontinued.
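Entering and discontinuing the sighting view, together with the reduced functionality while sighting, may be sketched as a small state holder. The `ViewState` class, the control input labels, and the zoom values 1.0 and 4.0 are hypothetical; the weapon-change restriction is the example given in the text.

```python
class ViewState:
    """Tracks the zoom level and which control inputs are accepted."""

    def __init__(self, first_zoom: float = 1.0, second_zoom: float = 4.0):
        self.first_zoom = first_zoom    # assumed normal zoom level
        self.second_zoom = second_zoom  # assumed sighting zoom level
        self.sighting = False

    @property
    def zoom(self) -> float:
        return self.second_zoom if self.sighting else self.first_zoom

    def apply(self, control_input: str) -> bool:
        """Apply a control input and return True if it was accepted."""
        if control_input == "enter_sighting_view":   # reverse pinch
            self.sighting = True
            return True
        if control_input == "exit_sighting_view":    # pinch
            self.sighting = False
            return True
        if control_input == "change_weapon":
            # Example restriction from the text: weapon changes are not
            # available while the sighting view is presented.
            return not self.sighting
        return False
```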

FIG. 8-FIG. 10 illustrate a fourth user interaction 800 (FIG. 8) made by user 100 on touch sensitive surface 22 (illustrated as a touchscreen in FIG. 8-FIG. 10) and a corresponding change from a third view 801 (FIG. 8) of the virtual space to a fourth view 1001 (FIG. 10) of the virtual space. In some implementations, a game module (e.g., game module 30 shown in FIG. 1 and described herein) may be configured such that the one or more views of the virtual space for presentation to user 100 include third view 801 (FIG. 8) and fourth view 1001 (FIG. 10). As shown in FIG. 8-FIG. 10, third view 801 may correspond to a current location in the virtual space, and fourth view 1001 may correspond to a move location 804 (FIG. 8 and FIG. 9) in the virtual space.

Fourth interaction 800 may be a move gesture. Fourth interaction 800 may comprise contacting touch sensitive surface 22 at a location that corresponds to move location 804 in the virtual space. A gesture recognition module (e.g., gesture recognition module 32 shown in FIG. 1 and described herein) may be configured to identify user interaction 800 as the move gesture made by user 100 on touch sensitive surface 22. The gesture recognition module may be configured to determine the parameter values for the gesture parameters of interaction 800. The determined parameter values may then be compared with the parameter values specified by the definition of the move gesture to determine whether interaction 800 matches the gesture definition of the move gesture. Responsive to a match between the parameter values of the move gesture definition and the parameter values of interaction 800 performed by the user, the gesture recognition module may be configured to identify interaction 800 as the move gesture. The gesture definitions used to determine whether interaction 800 is a move gesture may include parameter values and/or parameter value thresholds defining one or more of a minimum and/or a maximum area 820 (FIG. 9) on touch sensitive surface 22 that defines move location 804 in the virtual space, a minimum and a maximum contact time for the contact made by the user on touch sensitive surface 22 at location 1502, and/or other gesture parameters.

A control module (e.g., control module 34 shown in FIG. 1 and described herein) may be configured such that responsive to the gesture recognition module identifying the move gesture, the control module may determine a fourth control input that causes the game module to change the view presented to user 100 from third view 801 (FIG. 8, FIG. 9) to fourth view 1001 (FIG. 10). In some implementations (e.g., wherein the touch sensitive surface is a touchscreen), move location 804 may be the location on the touchscreen at which user 100 contacts the touchscreen with move gesture 800. In some implementations, changing from third view 801 to fourth view 1001 may comprise simulated movement through the virtual space and/or through the first person shooter game. In some implementations, simulated movement through the virtual space may comprise automatically (e.g., without user 100 making any additional contact with touch sensitive surface 22 during the simulated movement) path finding around objects in the instance of the virtual space displayed to user 100.
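Automatic path finding around objects may be sketched with a breadth-first search over a grid. The disclosure does not name a path finding algorithm, so breadth-first search, the grid representation of the virtual space, and the use of `#` to mark an obstacle are all assumptions made for this example.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]  # (row, column) in a hypothetical grid map


def find_path(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Breadth-first search over a grid in which '#' marks an obstacle.

    Returns a shortest 4-connected path from start to goal (inclusive),
    or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the predecessor links back to the start.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] != "#" and nxt not in came_from):
                came_from[nxt] = cell
                queue.append(nxt)
    return None
```

In this sketch the start cell would correspond to the current location and the goal cell to the move location, and the character would be advanced along the returned cells without further contact from the user.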

FIG. 11 and FIG. 12 illustrate a fifth user interaction 1100 (FIG. 11) made by user 100 on touch sensitive surface 22 (illustrated as a touchscreen in FIG. 11 and FIG. 12) in a fifth view 1101 of the virtual space. Fifth user interaction 1100 may be a look gesture. A user may use interaction 1100 to “look” in one or more directions in the virtual space. In some implementations, looking may comprise a game module (e.g., game module 30 shown in FIG. 1 and described herein) causing a view of the virtual space to change from fifth view 1101 to a sixth view 1103 (FIG. 12) that is representative of the virtual space in the direction indicated by user 100 via interaction 1100.

As shown in FIG. 11 and FIG. 12, interaction 1100 may comprise a directional swipe 1102 on touch sensitive surface 22. Directional swipe 1102 may comprise contacting touch sensitive surface 22 at a single location 1104, then moving contact location 1104 in one or more of a first direction 1106, a second direction 1108, a third direction 1110, a fourth direction 1112, and/or other directions, while remaining in contact with touch sensitive surface 22. A gesture recognition module (e.g., gesture recognition module 32 shown in FIG. 1 and described herein) may be configured to identify user interaction 1100 as the look gesture made by user 100 on touch sensitive surface 22. The gesture recognition module may be configured to determine the parameter values for the gesture parameters of interaction 1100. The determined parameter values may then be compared with the parameter values specified by the definition of the look gesture to determine whether interaction 1100 matches the gesture definition of the look gesture. Responsive to a match between the parameter values of the look gesture definition and the parameter values of interaction 1100 performed by the user, the gesture recognition module may be configured to identify interaction 1100 as the look gesture. The gesture definitions used to determine whether interaction 1100 is a look gesture may include parameter values and/or parameter value thresholds defining one or more of a two dimensional direction on touch sensitive surface 22, a minimum distance 1210 of the swipe made by the user on touch sensitive surface 22, and/or other gesture parameters.

A control module (e.g., control module 34) may be configured such that responsive to the gesture recognition module identifying the look gesture (FIG. 11), the control module may determine a fifth control input from user 100 that causes the game module to change fifth view 1101 to sixth view 1103 that is representative of the virtual space in the direction indicated by user 100 via interaction 1100.

In some implementations, responsive to directional swipe 1102 in first direction 1106, the control module may determine the fifth control input such that the game module changes fifth view 1101 to sixth view 1103 that is representative of looking down in the virtual space. In some implementations, responsive to directional swipe 1102 in second direction 1108, the control module may determine the fifth control input such that the game module changes fifth view 1101 to sixth view 1103 that is representative of looking up in the virtual space. In some implementations, responsive to directional swipe 1102 in third direction 1110, the control module may determine the fifth control input such that the game module changes fifth view 1101 to sixth view 1103 that is representative of looking to the left in the virtual space. In some implementations, responsive to directional swipe 1102 in fourth direction 1112, the control module may determine the fifth control input such that the game module changes fifth view 1101 to sixth view 1103 that is representative of looking to the right in the virtual space. The four directions described herein are not intended to be limiting. System 10 may be configured such that a user may use similar swiping gestures in one or more other directions to “look” in any direction in the virtual space.
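Resolving a directional swipe into a look direction may be sketched by comparing the horizontal and vertical components of the swipe. The text does not state which on-screen direction each of the four numbered directions denotes, so the mapping below (a swipe toward the top of the screen looks up, and so on), the minimum distance of 30 pixels, and the function name are assumptions.

```python
import math
from typing import Optional, Tuple

Point = Tuple[float, float]  # (x, y) in pixels, with y increasing downward


def classify_look(start: Point, end: Point,
                  min_distance: float = 30.0) -> Optional[str]:
    """Return 'up', 'down', 'left', or 'right' for a swipe, or None when
    the swipe is shorter than min_distance."""
    dx, dy = end[0] - start[0], end[1] - start[1]
    if math.hypot(dx, dy) < min_distance:
        return None
    # The dominant axis decides the direction (assumed mapping).
    if abs(dx) >= abs(dy):
        return "right" if dx > 0 else "left"
    return "down" if dy > 0 else "up"
```

A system that supports looking in any direction, as the text allows, might instead pass the raw `(dx, dy)` components through as a continuous look vector rather than reducing them to four cases.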

FIG. 13 illustrates a sixth user interaction 1300 on touch sensitive surface 22 (illustrated as a touchscreen in FIG. 13) in a seventh view 1301 of the virtual space. Sixth user interaction 1300 may be a turn gesture. User interaction 1300 may comprise contacting touch sensitive surface 22 at a single location 1302 toward an edge 1304 of touch sensitive surface 22 in a turn gesture area 1306. In some implementations, user interaction 1300 may comprise contacting turn gesture area 1306 at and/or near the center of turn gesture area 1306. A gesture recognition module (e.g., gesture recognition module 32) may be configured to identify user interaction 1300 as the turn gesture made by user 100 on touch sensitive surface 22. The gesture recognition module may be configured to determine the parameter values for the gesture parameters of interaction 1300. The determined parameter values may then be compared with the parameter values specified by the definition of the turn gesture to determine whether interaction 1300 matches the gesture definition of the turn gesture. Responsive to a match between the parameter values of the turn gesture definition and the parameter values of interaction 1300 performed by the user, the gesture recognition module may be configured to identify interaction 1300 as the turn gesture. The gesture definitions used to determine whether interaction 1300 is a turn gesture may include parameter values and/or parameter value thresholds defining one or more of a minimum and/or a maximum area 1310 on touch sensitive surface 22 that defines turn gesture area 1306, a contact time parameter, and/or other parameters.

A control module (e.g., control module 34 shown in FIG. 1) may be configured such that responsive to the gesture recognition module identifying the turn gesture, the control module may determine a sixth control input from user 100 that causes a game module (e.g., game module 30) to change seventh view 1301 to an eighth view that is representative of the virtual space in the direction substantially behind (e.g., a 180° turn from seventh view 1301) user 100. In some implementations, system 10 may be configured such that turn gesture area 1306 may be visible to a user via lines enclosing turn gesture area 1306, for example, and/or other visible markings that show turn gesture area 1306 relative to other areas of seventh view 1301 displayed to user 100. In some implementations, system 10 may be configured such that turn gesture area 1306 is invisible to user 100.
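As a minimal sketch of the turn gesture, assuming a rectangular turn gesture area and a heading expressed in degrees, the area test and the resulting 180° change of view may look like the following. The names `TurnGestureDefinition`, `is_turn_gesture`, and `apply_turn`, the rectangle representation, and the use of a maximum contact time are hypothetical; the disclosure only states that an area and a contact time parameter may be part of the definition.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class TurnGestureDefinition:
    # Hypothetical rectangle (left, top, right, bottom) for the turn gesture area.
    area: Tuple[float, float, float, float]
    # Hypothetical upper bound on contact time, in seconds.
    max_contact_time: float


def is_turn_gesture(x: float, y: float, contact_time: float,
                    definition: TurnGestureDefinition) -> bool:
    """Match a single contact against the turn gesture definition."""
    left, top, right, bottom = definition.area
    inside_area = left <= x <= right and top <= y <= bottom
    return inside_area and contact_time <= definition.max_contact_time


def apply_turn(heading_degrees: float) -> float:
    """Return the heading substantially behind the current heading."""
    return (heading_degrees + 180.0) % 360.0
```

Whether the area is drawn for the user or left invisible does not affect this test, since only the contact location and time are compared with the definition.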

FIG. 14 illustrates a seventh user interaction 1400 on touch sensitive surface 22 (illustrated as a touchscreen in FIG. 14) in a ninth view 1401 of the virtual space. Seventh user interaction 1400 may be a weapon change gesture. A game module (e.g., game module 30) may be configured to display weapon 304 to user 100 in ninth view 1401. Seventh user interaction 1400 may comprise contacting touch sensitive surface 22 at a single location 1402 that corresponds to a location where weapon 304 is displayed in the virtual space. In some implementations, user interaction 1400 may further comprise contacting touch sensitive surface 22 at the location that corresponds to weapon 304 and directionally swiping 1102 location 1402 on touch sensitive surface 22 in second direction 1108 toward edge 1304 of touch sensitive surface 22. A gesture recognition module (e.g., gesture recognition module 32) may be configured to identify user interaction 1400 as the weapon change gesture made by user 100 on touch sensitive surface 22. The gesture recognition module may be configured to determine the parameter values for the gesture parameters of interaction 1400. The determined parameter values may then be compared with the parameter values specified by the definition of the weapon change gesture to determine whether interaction 1400 matches the gesture definition of the weapon change gesture. Responsive to a match between the parameter values of the weapon change gesture definition and the parameter values of interaction 1400 performed by user 100, the gesture recognition module may be configured to identify interaction 1400 as the weapon change gesture. The gesture definitions used to determine whether interaction 1400 is a weapon change gesture may include parameter values and/or parameter value thresholds defining one or more of a minimum and/or a maximum area 1410 on touch sensitive surface 22 that defines location 1402 where weapon 304 is displayed, a direction on touch sensitive surface 22, a minimum distance 1412 of the swipe made by the user on touch sensitive surface 22, and/or other gesture parameters.

A control module (e.g., control module 34) may be configured such that responsive to the gesture recognition module identifying the weapon change gesture, the control module may determine a seventh control input from user 100 that causes the game module (e.g., game module 30) to change weapon 304 to another weapon. The swipe direction and edge description related to interaction 1400 described herein is not intended to be limiting. Interaction 1400 may include swiping on weapon 304 in any direction toward any edge of touch sensitive surface 22.

FIG. 15 and FIG. 16 illustrate an eighth user interaction 1500 made by user 100 on touch sensitive surface 22 (illustrated as a touchscreen in FIG. 15 and FIG. 16) in a tenth view 1501 of the virtual space. Eighth user interaction 1500 may be a reload gesture. A game module (e.g., game module 30) may be configured to display weapon 304 to user 100 in view 1501. User interaction 1500 may comprise a single tap on touch sensitive surface 22 at a location 1502 on touch sensitive surface 22 that corresponds to a location where weapon 304 is displayed in the virtual space. A gesture recognition module (e.g., gesture recognition module 32) may be configured to identify user interaction 1500 as the reload gesture made by user 100 on touch sensitive surface 22. The gesture recognition module may be configured to determine the parameter values for the gesture parameters of interaction 1500. The determined parameter values may then be compared with the parameter values specified by the definition of the reload gesture to determine whether interaction 1500 matches the gesture definition of the reload gesture. Responsive to a match between the parameter values of the reload gesture definition and the parameter values of interaction 1500 performed by the user, the gesture recognition module may be configured to identify interaction 1500 as the reload gesture. The gesture definitions used to determine whether interaction 1500 is a reload gesture may include parameter values and/or parameter value thresholds defining one or more of a minimum and/or a maximum area 1610 (FIG. 16) on touch sensitive surface 22 that defines location 1502 where weapon 304 is displayed, a minimum and a maximum contact time for the contact made by the user on touch sensitive surface 22 at location 1502, and/or other gesture parameters.

A control module (e.g., control module 34) may be configured such that responsive to the gesture recognition module identifying the reload gesture, the control module may determine an eighth control input from the user that causes the game module to reload weapon 304.
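Because the weapon change gesture and the reload gesture may both begin at the location where the weapon is displayed, one way to tell them apart is to compare swipe distance and contact time against their respective definitions. The following is a minimal sketch under that assumption; `WeaponGestureDefinitions`, `classify_weapon_contact`, the rectangular weapon area, and the `tap_max_travel` tolerance are hypothetical names and parameters introduced only for illustration.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

Point = Tuple[float, float]


@dataclass(frozen=True)
class WeaponGestureDefinitions:
    # Hypothetical rectangle (left, top, right, bottom) where the weapon is displayed.
    weapon_area: Tuple[float, float, float, float]
    tap_min_time: float        # minimum contact time for a reload tap, seconds
    tap_max_time: float        # maximum contact time for a reload tap, seconds
    tap_max_travel: float      # assumption: movement still tolerated as a tap
    swipe_min_distance: float  # minimum swipe distance for a weapon change


def classify_weapon_contact(start: Point, end: Point, contact_time: float,
                            d: WeaponGestureDefinitions) -> Optional[str]:
    """Classify a single contact that may be a reload or a weapon change gesture."""
    left, top, right, bottom = d.weapon_area
    # Both gestures must begin on the displayed weapon.
    if not (left <= start[0] <= right and top <= start[1] <= bottom):
        return None
    travel = math.hypot(end[0] - start[0], end[1] - start[1])
    # A sufficiently long swipe in any direction is treated as a weapon change.
    if travel >= d.swipe_min_distance:
        return "weapon_change"
    # A nearly stationary contact within the time window is treated as a reload.
    if travel <= d.tap_max_travel and d.tap_min_time <= contact_time <= d.tap_max_time:
        return "reload"
    return None
```

The swipe direction is deliberately not checked here, consistent with the statement that the swipe may be in any direction toward any edge of the touch sensitive surface.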

Returning to FIG. 1, in some implementations, user interface 16 may be configured to provide an interface between system 10 and the user through which the user may provide information to system 10, and receive information from system 10. This enables additional data, cues, results, and/or instructions and any other communicable items, collectively referred to as “information,” to be communicated between the user and system 10. Examples of additional interface devices suitable for inclusion in user interface 16 comprise touch sensitive surface 22, display 24, a keypad, buttons, switches, a keyboard, knobs, levers, speakers, a microphone, an indicator light, an audible alarm, a printer, and/or other interface devices. In one implementation, user interface 16 comprises a plurality of separate interfaces. In one implementation, user interface 16 comprises at least one interface that is provided integrally with wireless client 12.

It is to be understood that other communication techniques, either hard-wired or wireless, are also contemplated by the present disclosure as user interface 16. For example, the present disclosure contemplates that at least a portion of user interface 16 may be integrated with a removable storage interface provided in wireless client 12. In this example, information may be loaded into wireless client 12 from removable storage (e.g., a smart card, a flash drive, a removable disk, etc.) that enables the user to customize the implementation of wireless client 12. Other exemplary input devices and techniques adapted for use with wireless client 12 as at least a portion of user interface 16 comprise, but are not limited to, an RS-232 port, an RF link, an IR link, and a modem (telephone, cable, or other). In short, any additional technique for communicating information with wireless client 12 is contemplated by the present disclosure as user interface 16.

Electronic storage 20 may comprise electronic storage media that electronically stores information. The electronic storage media of electronic storage 20 may include one or both of system storage that is provided integrally (i.e., substantially non-removable) within wireless client 12 and/or removable storage that is removably connectable to wireless client 12 via, for example, a port (e.g., a USB port, a firewire port, etc.) or a drive (e.g., a disk drive, etc.). Electronic storage 20 may include one or more of optically readable storage media (e.g., optical disks, etc.), magnetically readable storage media (e.g., magnetic tape, magnetic hard drive, floppy drive, etc.), electrical charge-based storage media (e.g., EEPROM, RAM, etc.), solid-state storage media (e.g., flash drive, etc.), and/or other electronically readable storage media. Electronic storage 20 may include one or more virtual storage resources (e.g., cloud storage, a virtual private network, and/or other virtual storage resources). Electronic storage 20 may store software algorithms, information determined by processor 18, information received from game server 14, and/or other information that enables wireless client 12 to function as described herein. By way of a non-limiting example, the definitions of the set of gestures recognized by gesture recognition module 32 may be stored in electronic storage 20.

Game server 14 may be configured to host the virtual space, the first person shooter game, and/or other applications, in a networked manner. Game server 14 may include one or more processors 40, electronic storage 42, and/or other components. Processor 40 may be configured to execute a game server module 44. Game server module 44 may be configured to communicate virtual space and/or game information with a game module (e.g., game module 30) being executed on wireless client device 12 and/or one or more other wireless client devices in order to provide an online multi-player experience to the users of the wireless client devices. This may include executing an instance of the virtual space and providing virtual space information, including view information, virtual space state information, and/or other virtual space information, to wireless client device 12 and/or the one or more other wireless client devices to facilitate participation of the users of the wireless client devices in a shared virtual space experience. Game server module 44 may be configured to facilitate communication between the users, as well as gameplay.

Processors 40 may be implemented in one or more of the manners described with respect to processor 18 (shown in FIG. 1 and described above). This includes implementations in which processor 40 includes a plurality of separate processing units, and/or implementations in which processor 40 is virtualized in the cloud. Electronic storage 42 may be implemented in one or more of the manners described with respect to electronic storage 20 (shown in FIG. 1 and described above).

FIG. 17 illustrates a method 1700 for facilitating interaction with a virtual space. The operations of method 1700 presented below are intended to be illustrative. In some implementations, method 1700 may be accomplished with one or more additional operations not described, and/or without one or more of the operations discussed. Additionally, the order in which the operations of method 1700 are illustrated in FIG. 17 and described herein is not intended to be limiting.

In some implementations, method 1700 may be implemented in one or more processing devices (e.g., a digital processor, an analog processor, a digital circuit designed to process information, an analog circuit designed to process information, a state machine, and/or other mechanisms for electronically processing information). The one or more processing devices may include one or more devices executing some or all of the operations of method 1700 in response to instructions stored electronically on an electronic storage medium. The one or more processing devices may include one or more devices configured through hardware, firmware, and/or software to be specifically designed for execution of one or more of the operations of method 1700. In some implementations, at least some of the operations described below may be implemented by a server wherein the system described herein communicates with the server in a client/server relationship over a network.

At an operation 1702, a view of a virtual space may be assembled for presentation to a user. In some implementations, operation 1702 may be performed by a game module similar to, and/or the same as game module 30 (shown in FIG. 1, and described herein).

At an operation 1704, control inputs to a touch sensitive surface may be received. The touch sensitive surface may be configured to generate output signals responsive to contact by the user. The output signals may be configured to convey information related to one or more locations where the touch sensitive surface is contacted. The control inputs may be reflected in the view of the virtual space. In some implementations, operation 1704 may be performed by a touch sensitive surface similar to and/or the same as touch sensitive surface 22 (shown in FIG. 1, and described herein).

At an operation 1706, individual ones of a set of gestures may be identified. The individual ones of the set of gestures may be made by the user on the touch sensitive surface. The individual ones of the set of gestures may be identified based on the output signals from the touch sensitive surface. The individual ones of the set of gestures may include a two fingered tap gesture. The two fingered tap gesture may comprise tapping the touch sensitive surface at two locations substantially simultaneously. In some implementations, operation 1706 may be performed by a gesture recognition module similar to, and/or the same as gesture recognition module 32 (shown in FIG. 1 and described herein).

At an operation 1708, control inputs from the user may be determined. Determining the control inputs from the user may be based on the identified gestures. Responsive to identifying the two fingered tap gesture, a first control input from the user may be determined. In some implementations, operation 1708 may be performed by a control module similar to, and/or the same as control module 34 (shown in FIG. 1 and described herein).

At an operation 1710, a projectile may be caused to be released in the virtual space. The first control input may cause the release of the projectile in the virtual space. Responsive to identifying the two fingered tap gesture, a target location in the virtual space may be specified toward which the projectile is directed. The target location may correspond to a control location on the touch sensitive surface that corresponds to one or both of the locations at which the user contacted the touch sensitive surface in making the two fingered tap gesture. In some implementations, operation 1710 may be performed by a game module similar to, and/or the same as game module 30 (shown in FIG. 1 and described herein).
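Operations 1706 through 1710 may be sketched, purely for illustration, as a small pipeline that identifies a two fingered tap from contact records and derives a control location at the center point between the two contacts. The `Contact` and `FireInput` types, the function names, and the timing thresholds `max_start_gap` and `max_contact_time` are hypothetical; the disclosure does not give numeric values for "substantially simultaneously". Mapping the control location to a target location in the virtual space depends on the view and is omitted.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Contact:
    x: float
    y: float
    down_time: float  # seconds at which the contact began
    up_time: float    # seconds at which the contact ended


@dataclass(frozen=True)
class FireInput:
    # Control location on the touch sensitive surface for the first control input.
    control_x: float
    control_y: float


def identify_two_fingered_tap(contacts: Sequence[Contact],
                              max_start_gap: float = 0.05,
                              max_contact_time: float = 0.3) -> bool:
    """Operation 1706 (sketch): two brief contacts that begin close together in time."""
    if len(contacts) != 2:
        return False
    a, b = contacts
    simultaneous = abs(a.down_time - b.down_time) <= max_start_gap
    brief = all(c.up_time - c.down_time <= max_contact_time for c in contacts)
    return simultaneous and brief


def determine_control_input(contacts: Sequence[Contact]) -> Optional[FireInput]:
    """Operation 1708 (sketch): derive the first control input from the gesture."""
    if not identify_two_fingered_tap(contacts):
        return None
    a, b = contacts
    # Assumption: use the center point between the two contact locations
    # as the control location.
    return FireInput((a.x + b.x) / 2, (a.y + b.y) / 2)
```

A game module would then release the projectile toward the target location displayed at the returned control location, which corresponds to operation 1710.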

Although the system(s) and/or method(s) of this disclosure have been described in detail for the purpose of illustration based on what is currently considered to be the most practical and preferred implementations, it is to be understood that such detail is solely for that purpose and that the disclosure is not limited to the disclosed implementations, but, on the contrary, is intended to cover modifications and equivalent arrangements that are within the spirit and scope of the appended claims. For example, it is to be understood that the present disclosure contemplates that, to the extent possible, one or more features of any implementation can be combined with one or more features of any other implementation.

Claims

- A system for facilitating interaction with a virtual space, the system comprising: a touch sensitive surface configured to generate output signals responsive to contact by a user, the output signals configured to convey information related to one or more locations where the touch sensitive surface is contacted; and one or more processors configured to execute computer program modules, the computer program modules comprising: a game module configured to facilitate interaction of the user with a virtual space by assembling a view of the virtual space for presentation to the user, wherein the user interacts with the virtual space by providing control inputs that are reflected in the view of the virtual space, wherein the game module is configured such that the view of the virtual space for presentation to the user includes a first view and a second view, the first view corresponding to a current location in the virtual space, and the second view corresponding to a move location in the virtual space; a gesture recognition module configured to identify individual ones of a set of gestures made by the user on the touch sensitive surface based on the output signals from the touch sensitive surface, and wherein the individual ones of the set of gestures include a two fingered tap gesture, the two fingered tap gesture comprising tapping the touch sensitive surface at two locations substantially simultaneously, wherein the gesture recognition module is configured such that the individual ones of the set of gestures include a move gesture, the move gesture comprising contacting the touch sensitive surface at a location that corresponds to the move location in the virtual space; a control module configured to determine control inputs from the user based on the identified gestures, wherein the control module is configured such that responsive to the gesture recognition module identifying the two fingered tap gesture, the control module determines a first control input from the user that causes a release of a projectile in the virtual space; and wherein the control module is configured such that responsive to the gesture recognition module identifying the two fingered tap gesture, the first control input specifies a target location in the virtual space toward which the projectile is directed, the target location corresponding to a control location on the touch sensitive surface that corresponds to one or both of the locations at which the user contacted the touch sensitive surface in making the two fingered tap gesture; and wherein the control module is configured such that responsive to the gesture recognition module identifying the move gesture, the control module determines a third control input that causes the game module to change the view presented to the user from the first view to the second view.

- The system of claim 1, wherein the target location corresponds to the control location on the touch sensitive surface that corresponds to both of the locations at which the user contacted the touch sensitive surface in making the two fingered tap gesture.

- The system of claim 2, wherein the touch sensitive surface is a touchscreen configured to present the view of the virtual space, and wherein the control location is the location on the touchscreen at which the target location is displayed.

- The system of claim 2, wherein the control location is the center point between the two locations at which the user contacted the touch sensitive surface in making the two fingered tap gesture.

- The system of claim 1, wherein the gesture recognition module is configured such that the individual ones of the set of gestures include a reverse pinch gesture, the reverse pinch gesture comprising contacting the touch sensitive surface at two locations substantially simultaneously, then moving the two contact locations farther apart while remaining in contact with the touch sensitive surface, and wherein the control module is configured such that responsive to the gesture recognition module identifying the reverse pinch gesture, the control module determines a second control input from the user that causes a zoom level of the view of the virtual space presented to the user to change from a first zoom level to a second zoom level, the second zoom level increased relative to the first zoom level.

- The system of claim 5, wherein the second zoom level is configured to simulate a zoom level of a sighting view through a sighting tool of a firearm.

- The system of claim 1, wherein the touch sensitive surface is a touchscreen configured to present the first view and the second view of the virtual space, and wherein the move location is the location on the touchscreen at which the user contacts the touchscreen with the move gesture.

- The system of claim 1, wherein changing from the first view to the second view comprises simulated movement through the virtual space.

- The system of claim 1, wherein the game module facilitates interaction with the virtual space by causing the virtual space to express an instance of a first person shooter game for presentation to the user, wherein the user interacts with the first person shooter game by providing the control inputs via the touch sensitive surface.

- A method implemented on a computing device having one or more processors, memory, and a touch sensitive surface for facilitating interaction with a virtual space, the method comprising: assembling a view of the virtual space for presentation to a user; receiving control inputs to the touch sensitive surface from the user, the touch sensitive surface configured to generate output signals responsive to contact by the user, the output signals configured to convey information related to one or more locations where the touch sensitive surface is contacted, the control inputs reflected in the view of the virtual space, wherein the view of the virtual space for presentation to the user includes a first view and a second view, the first view corresponding to a current location in the virtual space, and the second view corresponding to a move location in the virtual space; identifying individual ones of a set of gestures made by the user on the touch sensitive surface based on the output signals from the touch sensitive surface, wherein the individual ones of the set of gestures include a two fingered tap gesture, the two fingered tap gesture comprising tapping the touch sensitive surface at two locations substantially simultaneously, wherein the individual ones of the set of gestures include a move gesture, the move gesture comprising contacting the touch sensitive surface at a location that corresponds to the move location in the virtual space; and determining the control inputs from the user based on the identified gestures, wherein, responsive to identifying the two fingered tap gesture, a first control input from the user is determined, the first control input causing a release of a projectile in the virtual space, and wherein, responsive to identifying the move gesture, a third control input from the user is determined, the third control input causing a change in the view presented to the user from the first view to the second view.

- The method of claim 10, further comprising, responsive to identifying the two fingered tap gesture, specifying a target location in the virtual space toward which the projectile is directed, the target location corresponding to a control location on the touch sensitive surface that corresponds to one or both of the locations at which the user contacted the touch sensitive surface in making the two fingered tap gesture.

- The method of claim 11, wherein the touch sensitive surface is a touchscreen configured to present the view of the virtual space, and wherein the control location is the location on the touchscreen at which the target location is displayed.

- The method of claim 11, wherein the control location is the center point between the two locations at which the user contacted the touch sensitive surface in making the two fingered tap gesture.

- A system for facilitating interaction with a virtual space, the system comprising: a touch sensitive surface configured to generate output signals responsive to contact by a user, the output signals configured to convey information related to one or more locations where the touch sensitive surface is contacted; and one or more processors configured to execute computer program modules, the computer program modules comprising: a game module configured to facilitate interaction of the user with a virtual space by assembling a view of the virtual space for presentation to the user, wherein the user interacts with the virtual space by providing control inputs that are reflected in the view of the virtual space, wherein the game module is configured such that the view of the virtual space for presentation to the user includes a first view and a second view, the first view corresponding to a current location in the virtual space, and the second view corresponding to a move location in the virtual space; a gesture recognition module configured to identify individual ones of a set of gestures made by the user on the touch sensitive surface based on the output signals from the touch sensitive surface, and wherein the individual ones of the set of gestures include a two fingered tap gesture, the two fingered tap gesture comprising tapping the touch sensitive surface at two locations substantially simultaneously; and a control module configured to determine control inputs from the user based on the identified gestures, wherein the control module is configured such that responsive to the gesture recognition module identifying the two fingered tap gesture, the control module determines a first control input from the user that causes a release of a projectile in the virtual space, wherein the gesture recognition module is further configured such that the individual ones of the set of gestures include a move gesture, the move gesture comprising contacting the touch sensitive surface at a location that corresponds to the move location in the virtual space, and wherein the control module is configured such that responsive to the gesture recognition module identifying the move gesture, the control module determines a second control input that causes the game module to change the view presented to the user from the first view to the second view.
