U.S. Pat. No. 9,604,141

STORAGE MEDIUM HAVING GAME PROGRAM STORED THEREON, GAME APPARATUS, GAME SYSTEM, AND GAME PROCESSING METHOD

Assignee: NINTENDO CO., LTD.; TOHOKU UNIVERSITY

Issue Date: May 17, 2010

Abstract

Operation input obtaining means obtains an operation input performed by a player with respect to an input device. Designated position setting means sets a designated position with respect to a virtual game world in accordance with the operation input. Biological signal obtaining means obtains a biological signal from the player. Designated position change means changes the designated position in accordance with the biological signal obtained by the biological signal obtaining means. Game processing means performs a predetermined game process on the basis of the designated position.

Description

DESCRIPTION OF THE PREFERRED EMBODIMENTS

With reference to FIG. 1, an apparatus for executing a game program according to one embodiment will be described. Hereinafter, in order to give a specific explanation, a description will be given using a game system including a stationary game apparatus body 5 that is an example of the above apparatus. FIG. 1 is an external view showing an example of a game system 1 including a stationary game apparatus 3. FIG. 2 is a block diagram showing an example of the game apparatus body 5. The game system 1 will be described below.

As shown in FIG. 1, the game system 1 includes: a home-use television receiver 2 (hereinafter referred to as a monitor 2) that is an example of display means; and the stationary game apparatus 3 that is connected to the monitor 2 via a connection cord. The monitor 2 has loudspeakers 2a for outputting, in the form of sound, an audio signal outputted from the game apparatus 3. The game apparatus 3 includes: an optical disc 4 having the game program stored thereon; the game apparatus body 5 having a computer for executing the game program of the optical disc 4 to output and display a game screen on the monitor 2; and a controller 7 for providing the game apparatus body 5 with necessary operation information for a game in which a character or the like displayed in the game screen is controlled.

The game apparatus body 5 includes a wireless controller module 19 therein (see FIG. 2). The wireless controller module 19 receives data wirelessly transmitted from the controller 7, and transmits data from the game apparatus body 5 to the controller 7. In this manner, the controller 7 and the game apparatus body 5 are connected to each other by wireless communication. Further, the optical disc 4 as an example of an exchangeable information storage medium is detachably mounted on the game apparatus body 5.

On the game apparatus body5, a flash memory17(seeFIG. 2) is mounted. The flash memory17acts as a backup memory for fixedly storing such data as save data. The game apparatus body5executes the game program or the like stored in the optical disc4, and displays a result thereof as a game image on the monitor2. The game program to be executed may be prestored not only in the optical disc4, but also in the flash memory17. The game apparatus body5may reproduce a state of the game played in the past, by using the save data stored in the flash memory17, and display an image of the reproduced game state on the monitor2. A player of the game apparatus3can enjoy advancing in the game by operating the controller7while watching the game image displayed on the monitor2.

By using the technology of, for example, Bluetooth (registered trademark), the controller7wirelessly transmits transmission data, such as operation information and biological information, to the game apparatus body5having the wireless controller module19therein. The controller7includes a core unit70and a vital sensor76. The core unit70and the vital sensor76are connected to each other via a flexible connection cable79. The core unit70is operation means mainly for controlling an object or the like displayed on a display screen of the monitor2. The vital sensor76is attached to a player's body (e.g., to a player's finger). The vital sensor76obtains biological signals from the player, and sends biological information to the core unit70via the connection cable79. The core unit70includes: a housing that is small enough to be held by one hand; and a plurality of operation buttons (including a cross key, a stick, or the like) that are exposed at a surface of the housing. As described later in detail, the core unit70includes an imaging information calculation section74for taking an image of a view viewed from the core unit70. As an example of imaging targets of the imaging information calculation section74, two LED modules8L and8R (hereinafter referred to as “markers8L and8R”) are provided in the vicinity of the display screen of the monitor2. These markers8L and8R each output, for example, infrared light forward from the monitor2. The controller7(e.g., the core unit70) is capable of receiving, via a communication section75, transmission data wirelessly transmitted from the wireless controller module19of the game apparatus body5, and generating a sound or vibration based on the transmission data.

Note that, in this embodiment, the core unit 70 and the vital sensor 76 are connected to each other via the flexible connection cable 79. However, the connection cable 79 can be eliminated by mounting a wireless unit on the vital sensor 76. For example, by mounting a Bluetooth (registered trademark) unit on the vital sensor 76 as a wireless unit, transmission of biological information from the vital sensor 76 to the core unit 70 or to the game apparatus body 5 is enabled. Further, the core unit 70 and the vital sensor 76 may be integrated, by fixedly providing the vital sensor 76 on the core unit 70. In this case, the player can use the vital sensor 76 integrated with the core unit 70.

Next, an internal configuration of the game apparatus body5will be described with reference toFIG. 2.FIG. 2is a block diagram showing the internal configuration of the game apparatus body5. The game apparatus body5has a CPU (Central Processing Unit)10, a system LSI (Large Scale Integration)11, an external main memory12, a ROM/RTC (Read Only Memory/Real Time Clock)13, a disc drive14, an AV-IC (Audio Video-Integrated Circuit)15, and the like.

The CPU10performs game processing by executing the game program stored in the optical disc4, and acts as a game processor. The CPU10is connected to the system LSI11. In addition to the CPU10, the external main memory12, the ROM/RTC13, the disc drive14, and the AV-IC15are connected to the system LSI11. The system LSI11performs processing such as: controlling data transfer among components connected to the system LSI11; generating an image to be displayed; obtaining data from external devices; and the like. An internal configuration of the system LSI11will be described later. The external main memory12that is a volatile memory stores a program, for example, the game program loaded from the optical disc4, or a game program loaded from the flash memory17, and also stores various data. The external main memory12is used as a work area or buffer area of the CPU10. The ROM/RTC13has a ROM in which a boot program for the game apparatus body5is incorporated (so-called a boot ROM), and has a clock circuit (RTC) that counts the time. The disc drive14reads program data, texture data, and the like from the optical disc4, and writes the read data into a later-described internal main memory35or into the external main memory12.

On the system LSI11, an input/output processor31, a GPU (Graphic Processor Unit)32, a DSP (Digital Signal Processor)33, a VRAM (Video RAM)34, and the internal main memory35are provided. Although not shown, these components31to35are connected to each other via an internal bus.

The GPU32is a part of rendering means, and generates an image in accordance with a graphics command from the CPU10. The VRAM34stores necessary data for the GPU32to execute the graphics command (data such as polygon data, texture data and the like). At the time of generating the image, the GPU32uses the data stored in the VRAM34, thereby generating image data.

The DSP33acts as an audio processor, and generates audio data by using sound data and sound waveform (tone) data stored in the internal main memory35and in the external main memory12.

The image data and the audio data generated in the above manner are read by the AV-IC15. The AV-IC15outputs the read image data to the monitor2via the AV connector16, and outputs the read audio data to the loudspeakers2aembedded in the monitor2. As a result, an image is displayed on the monitor2and a sound is outputted from the loudspeakers2a.

The input/output processor (I/O Processor)31performs, for example, data transmission/reception to/from components connected thereto, and data downloading from external devices. The input/output processor31is connected to the flash memory17, a wireless communication module18, the wireless controller module19, an expansion connector20, and an external memory card connector21. An antenna22is connected to the wireless communication module18, and an antenna23is connected to the wireless controller module19.

The input/output processor31is connected to a network via the wireless communication module18and the antenna22so as to be able to communicate with other game apparatuses and various servers connected to the network. The input/output processor31regularly accesses the flash memory17to detect presence or absence of data that is required to be transmitted to the network. If such data is present, the data is transmitted to the network via the wireless communication module18and the antenna22. Also, the input/output processor31receives, via the network, the antenna22and the wireless communication module18, data transmitted from other game apparatuses or data downloaded from a download server, and stores the received data in the flash memory17. By executing the game program, the CPU10reads the data stored in the flash memory17, and the game program uses the read data. In addition to the data transmitted and received between the game apparatus body5and other game apparatuses or various servers, the flash memory17may store save data of a game that is played using the game apparatus body5(such as result data or progress data of the game).

Further, the input/output processor31receives, via the antenna23and the wireless controller module19, operation data or the like transmitted from the controller7, and stores (temporarily) the operation data or the like in a buffer area of the internal main memory35or of the external main memory12. Note that, similarly to the external main memory12, the internal main memory35may store a program, for example, the game program loaded from the optical disc4or a game program loaded from the flash memory17, and also store various data. The internal main memory35may be used as a work area or buffer area of the CPU10.

In addition, the expansion connector20and the external memory card connector21are connected to the input/output processor31. The expansion connector20is a connector for such interface as USB, SCSI or the like. The expansion connector20, instead of the wireless communication module18, is able to perform communication with a network by being connected to such a medium as an external storage medium, to such a peripheral device as another controller, or to a connector for wired communication. The external memory card connector21is a connector to be connected to an external storage medium such as a memory card. For example, the input/output processor31is able to access the external storage medium via the expansion connector20or the external memory card connector21to store or read data from the external storage medium.

On the game apparatus body5(e.g., on a front main surface thereof), a power button24of the game apparatus body5, a reset button25for resetting game processing, an insertion slot for mounting the optical disc4in a detachable manner, an eject button26for ejecting the optical disc4from the insertion slot of the game apparatus body5, and the like, are provided. The power button24and the reset button25are connected to the system LSI11. When the power button24is turned on, each component of the game apparatus body5is supplied with power via an AC adaptor (not shown). When the reset button25is pressed, the system LSI11re-executes the boot program of the game apparatus body5. The eject button26is connected to the disc drive14. When the eject button26is pressed, the optical disc4is ejected from the disc drive14.

With reference toFIGS. 3 and 4, the core unit70will be described.FIG. 3is a perspective view of the core unit70viewed from a top rear side thereof.FIG. 4is a perspective view of the core unit70viewed from a bottom front side thereof.

As shown inFIGS. 3 and 4, the core unit70includes a housing71formed by plastic molding or the like. The housing71has a plurality of operation sections72provided thereon. The housing71has an approximately parallelepiped shape extending in a longitudinal direction from front to rear. The overall size of the housing71is small enough to be held by one hand of an adult or even a child.

At the center of a front part of a top surface of the housing71, a cross key72ais provided. The cross key72ais a cross-shaped four-direction push switch. The cross key72aincludes operation portions corresponding to four directions (front, rear, right, and left), which are respectively located on cross-shaped projecting portions arranged at intervals of 90 degrees. The player selects one of the front, rear, right and left directions by pressing one of the operation portions of the cross key72a. Through an operation of the cross key72a, the player can, for example, designate a direction in which a player character or the like appearing in a virtual game world is to move, or give an instruction to select one of multiple options.

The cross key72ais an operation section for outputting an operation signal in accordance with the aforementioned direction input operation performed by the player. Such an operation section may be provided in a different form. For example, an operation section that has four push switches arranged in a cross formation and that is capable of outputting an operation signal in accordance with pressing of one of the push switches by the player, may be provided. Alternatively, an operation section that has a composite switch having, in addition to the above four push switches, a center switch provided at an intersection point of the above cross formation, may be provided. Still alternatively, the cross key72amay be replaced with an operation section that includes an inclinable stick (so-called a joy stick) projecting from the top surface of the housing71and that outputs an operation signal in accordance with an inclining direction of the stick. Still alternatively, the cross key72amay be replaced with an operation section that includes a horizontally-slidable disc-shaped member and that outputs an operation signal in accordance with a sliding direction of the disc-shaped member. Still alternatively, the cross key72amay be replaced with a touch pad.

Behind the cross key72aon the top surface of the housing71, a plurality of operation buttons72bto72gare provided. The operation buttons72bto72gare each an operation section for, when the player presses a head thereof, outputting a corresponding operation signal. For example, functions as a 1st button, a 2nd button, and an A button are assigned to the operation buttons72bto72d. Also, functions as a minus button, a home button, and a plus button are assigned to the operation buttons72eto72g, for example. Operation functions are assigned to the respective operation buttons72ato72gin accordance with the game program executed by the game apparatus body5. In the exemplary arrangement shown inFIG. 3, the operation buttons72bto72dare arranged in a line at the center on the top surface of the housing71in a front-rear direction. The operation buttons72eto72gare arranged on the top surface of the housing71in a line in a left-right direction between the operation buttons72band72d. The operation button72fhas a top surface thereof buried in the top surface of the housing71, so as not to be inadvertently pressed by the player.

In front of the cross key72aon the top surface of the housing71, an operation button72his provided. The operation button72his a power switch for turning on and off the game apparatus body5by remote control. The operation button72halso has a top surface thereof buried in the top surface of the housing71, so as not to be inadvertently pressed by the player.

Behind the operation button72con the top surface of the housing71, a plurality of LEDs702are provided. Here, a controller type (a number) is assigned to the core unit70such that the core unit70is distinguishable from other controllers. The LEDs702are used for, e.g., informing the player of the controller type currently set for the core unit70. Specifically, a signal is transmitted from the wireless controller module19to the core unit70such that one of the plurality of LEDs702, which corresponds to the controller type of the core unit70, is lit up.

On the top surface of the housing71, sound holes for outputting sounds from a later-described speaker (a speaker706shown inFIG. 5) to the external space are formed between the operation button72band the operation buttons72eto72g.

On the bottom surface of the housing71, a recessed portion is formed. The recessed portion on the bottom surface of the housing71is formed in a position in which an index finger or middle finger of the player is located when the player holds the core unit70with one hand so as to point a front surface thereof to the markers8L and8R. On a slope surface of the recessed portion, an operation button72iis provided. The operation button72iis an operation section acting as, for example, a B button.

On the front surface of the housing 71, an image pickup element 743 that is a part of the imaging information calculation section 74 is provided. The imaging information calculation section 74 is a system for: analyzing image data of an image taken by the core unit 70; identifying an area having a high brightness in the image; and detecting the position of the center of gravity, the size, and the like of the area. The imaging information calculation section 74 has, for example, a maximum sampling rate of approximately 200 frames/sec, and therefore can trace and analyze even a relatively fast motion of the core unit 70. A configuration of the imaging information calculation section 74 will be described later in detail. On the rear surface of the housing 71, a connector 73 is provided. The connector 73 is, for example, an edge connector, and is used for engaging and connecting the core unit 70 with a connection cable, for example.

Next, an internal structure of the core unit70will be described with reference toFIGS. 5 and 6.FIG. 5is a perspective view, viewed from a rear surface side of the core unit70, of an example of the core unit70in a state where an upper casing thereof (a part of the housing71) is removed.FIG. 6is a perspective view, viewed from a front surface side of the core unit70, of an example of the core unit70in a state where a lower casing thereof (a part of the housing71) is removed. Here,FIG. 6is a perspective view showing a reverse side of a substrate700shown inFIG. 5.

As shown inFIG. 5, the substrate700is fixedly provided inside the housing71. On a top main surface of the substrate700, the operation buttons72ato72h, an acceleration sensor701, the LEDs702, an antenna754, and the like, are provided. These elements are connected to, for example, a microcomputer751(seeFIGS. 6 and 7) via wiring (not shown) formed on the substrate700and the like. A wireless module753(seeFIG. 7) and the antenna754allow the core unit70to act as a wireless controller. Inside the housing71, a quartz oscillator (not shown) is provided, and the quartz oscillator generates a reference clock of the later-described microcomputer751. Further, the speaker706and an amplifier708are provided on the top main surface of the substrate700. The acceleration sensor701is provided, on the substrate700, to the left side of the operation button72d(i.e., provided not on a central part but on a peripheral part of the substrate700). For this reason, in response to the core unit70having rotated around an axis of the longitudinal direction of the core unit70, the acceleration sensor701is able to detect, in addition to a change in a direction of the gravitational acceleration, acceleration containing a centrifugal component, and the game apparatus body5or the like is able to determine, on the basis of detected acceleration data, a motion of the core unit70by predetermined calculation with favorable sensitivity.

As shown inFIG. 6, at a front edge of the bottom main surface of the substrate700, the imaging information calculation section74is provided. The imaging information calculation section74includes an infrared filter741, a lens742, the image pickup element743, and an image processing circuit744, which are located in said order from the front surface of the core unit70. These elements are attached to the bottom main surface of the substrate700. At a rear edge of the bottom main surface of the substrate700, the connector73is mounted. Further, a sound IC707and the microcomputer751are provided on the bottom main surface of the substrate700. The sound IC707is connected to the microcomputer751and the amplifier708via wiring formed on the substrate700and the like, and outputs an audio signal via the amplifier708to the speaker706in response to sound data transmitted from the game apparatus body5.

On the bottom main surface of the substrate700, a vibrator704is attached. The vibrator704may be, for example, a vibration motor or a solenoid. The vibrator704is connected to the microcomputer751via wiring formed on the substrate700and the like, and is activated or deactivated in accordance with vibration data transmitted from the game apparatus body5. The core unit70is vibrated by actuation of the vibrator704, and the vibration is conveyed to the player's hand holding the core unit70. Thus, a so-called vibration-feedback game is realized. Since the vibrator704is provided at a relatively forward position in the housing71, the housing71held by the player significantly vibrates, and allows the player to easily feel the vibration.

Next, an internal configuration of the controller7will be described with reference toFIG. 7.FIG. 7is a block diagram showing an example of the internal configuration of the controller7.

As shown inFIG. 7, the core unit70includes the communication section75in addition to the above-described operation sections72, the imaging information calculation section74, the acceleration sensor701, the vibrator704, the speaker706, the sound IC707, and the amplifier708. The vital sensor76is connected to the microcomputer751via the connection cable79and connectors791and73.

The imaging information calculation section74includes the infrared filter741, the lens742, the image pickup element743, and the image processing circuit744. The infrared filter741allows, among light incident thereon through the front surface of the core unit70, only infrared light to pass therethrough. The lens742condenses the infrared light having passed through the infrared filter741, and outputs the condensed infrared light to the image pickup element743. The image pickup element743is a solid-state image pickup element such as a CMOS sensor, CCD or the like. The image pickup element743takes an image of the infrared light condensed by the lens742. In other words, the image pickup element743takes an image of only the infrared light having passed through the infrared filter741. Then, the image pickup element743generates image data of the image. The image data generated by the image pickup element743is processed by the image processing circuit744. Specifically, the image processing circuit744processes the image data obtained from the image pickup element743, and detects a high brightness area of the image, and outputs, to the communication section75, process result data indicative of results of detecting, for example, position coordinates, a square measure, and the like of the high brightness area. The imaging information calculation section74is fixed to the housing71of the core unit70. An imaging direction of the imaging information calculation section74can be changed by changing a facing direction of the housing71.
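As an illustration only, the detection of a high brightness area described above can be sketched in Python as follows. This is not the circuit of the embodiment: the function name detect_bright_area, the threshold value of 200, and the representation of the image as a list of rows of brightness values are all assumptions made for this sketch. For simplicity it treats every pixel at or above the threshold as belonging to a single area, whereas an actual circuit would need to separate the areas produced by different light sources.

```python
from typing import List, Optional, Tuple


def detect_bright_area(
    image: List[List[int]], threshold: int = 200
) -> Optional[Tuple[float, float, int]]:
    """Return (centroid_x, centroid_y, area) of all pixels whose brightness
    is at or above `threshold`, or None if no pixel qualifies.

    `image` is a list of rows; each row is a list of brightness values.
    The threshold of 200 is a hypothetical value chosen for illustration.
    """
    sum_x = 0
    sum_y = 0
    area = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                sum_x += x
                sum_y += y
                area += 1
    if area == 0:
        return None
    # The center of gravity is the mean position of the bright pixels.
    return (sum_x / area, sum_y / area, area)


if __name__ == "__main__":
    frame = [
        [0, 0, 0, 0],
        [0, 255, 255, 0],
        [0, 255, 255, 0],
        [0, 0, 0, 0],
    ]
    print(detect_bright_area(frame))
```

The returned tuple corresponds loosely to the position coordinates and the square measure mentioned in the description.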

The process result data outputted from the imaging information calculation section 74 can be used as operation data indicative of: a position designated by using the core unit 70; and the like. For example, the player holds the core unit 70 such that the front surface of the core unit 70 (a side having a light opening through which light is incident on the imaging information calculation section 74 taking an image of the light) faces the monitor 2. On the other hand, the two markers 8L and 8R are provided in the vicinity of the display screen of the monitor 2. The markers 8L and 8R each emit infrared light forward from the monitor 2, and become imaging targets of the imaging information calculation section 74. Then, the game apparatus body 5 calculates a position designated by the core unit 70, by using position data regarding high brightness points based on the two markers 8L and 8R.

For example, when the player holds the core unit70such that its front surface faces the monitor2, the infrared lights outputted from the two markers8L and8R are incident on the imaging information calculation section74. The image pickup element743takes images of the incident infrared lights via the infrared filter741and the lens742, and the image processing circuit744processes the taken images. In the imaging information calculation section74, components of the infrared lights outputted from the markers8L and8R are detected, whereby positional information (positions of target images) and the like of the markers8L and8R on the taken image are obtained. Specifically, the image processing circuit744analyzes the image data taken by the image pickup element743, eliminates, from area information of the taken image, images that are not generated by the infrared lights outputted from the markers8L and8R, and then determines the high brightness points as the positions of the markers8L and8R. The imaging information calculation section74obtains the positional information such as positions of the centers of gravity of the determined high brightness points, and outputs the positional information as the process result data. The positional information, which is the process result data, may be outputted as coordinate values whose origin point is set to a predetermined reference point on a taken image (e.g., the center or the left top corner of the taken image). Alternatively, with the position of the center of gravity at a predetermined timing being set as a reference point, the difference between the reference point and a current position of the center of gravity may be outputted as a vector. 
That is, in the case where a predetermined reference point is set on the taken image taken by the image pickup element743, the positional information on the target images is used as parameters representing differences between the positions of the target images and the reference point position. The positional information is transmitted to the game apparatus body5, whereby, on the basis of the differences between the reference point and the positional information, the game apparatus body5is capable of obtaining variations in a signal that corresponds to a movement, an attitude, a position, and the like of the imaging information calculation section74, i.e., the core unit70, with respect to the markers8L and8R. Specifically, when the core unit70is moved, the positions of the centers of gravity of the high brightness points in the image transmitted from the communication section75change. Therefore, a direction and a coordinate point are inputted in accordance with the change in the positions of the centers of gravity of the high brightness points, whereby the position designated by the core unit70may be regarded as an operation input, and a direction and a coordinate point may be inputted in accordance with a direction in which the core unit70moves.
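The use of a reference point on the taken image can be illustrated with a short Python sketch. It assumes, purely for illustration, that the positions of the two target images are combined by taking their midpoint and that the output is the difference between that midpoint and the reference point; the function name marker_offset and the coordinate values in the usage example are hypothetical and are not taken from the embodiment.

```python
from typing import Tuple

Point = Tuple[float, float]


def marker_offset(left: Point, right: Point, reference: Point) -> Point:
    """Return the vector from `reference` to the midpoint of the two
    target-image positions `left` and `right` (all in image coordinates).

    Using the midpoint of the two markers is an assumption of this sketch.
    """
    mid_x = (left[0] + right[0]) / 2
    mid_y = (left[1] + right[1]) / 2
    return (mid_x - reference[0], mid_y - reference[1])


if __name__ == "__main__":
    # Hypothetical positions of the two target images and a reference
    # point placed at the center of a hypothetical 1024 x 768 image.
    print(marker_offset((300.0, 380.0), (500.0, 388.0), (512.0, 384.0)))
```

When the core unit is moved, the midpoint shifts on the taken image, so the returned vector changes accordingly and could be treated as a direction and coordinate input.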

In this manner, the imaging information calculation section74of the controller7takes the images of the markers (the infrared lights from the markers8L and8R in this embodiment) that are located fixedly, whereby data outputted from the controller7is processed in the process on the game apparatus body5, and an operation can be performed in accordance with the movement, the attitude, the position, and the like of the core unit70. Further, it becomes possible to perform an intuitive operation input that is different from an input performed by pressing an operation button or an operation key. Since the above markers are located in the vicinity of the display screen of the monitor2, a position of the core unit70with respect to the markers can be easily converted to the movement, the attitude, position and the like of the core unit70with respect to the display screen of the monitor2. That is, the process result data based on the movement, the attitude, the position, and the like of the core unit70is used as an operation input directly reflected on the display screen of the monitor2(e.g., an input of the position designated by the core unit70), and thus the core unit70can be caused to serve as a pointing device with respect to the display screen.

Preferably, the core unit70includes a triaxial acceleration sensor701. The triaxial acceleration sensor701detects linear acceleration in three directions, i.e., the up-down direction, the left-right direction, and the front-rear direction. Alternatively, an accelerometer capable of detecting linear acceleration along at least one axis direction may be used. As a non-limiting example, the acceleration sensor701may be of the type available from Analog Devices, Inc. or STMicroelectronics N.V. Preferably, the acceleration sensor701is an electrostatic capacitance or capacitance-coupling type that is based on silicon micro-machined MEMS (microelectromechanical systems) technology. However, any other suitable accelerometer technology (e.g., piezoelectric type or piezoresistance type) now existing or later developed may be used to provide the acceleration sensor701.

Accelerometers, as used in the acceleration sensor701, are only capable of detecting acceleration along a straight line (linear acceleration) corresponding to each axis of the acceleration sensor701. In other words, the direct output of the acceleration sensor701is limited to signals indicative of linear acceleration (static or dynamic) along each of the three axes thereof. As a result, the acceleration sensor701cannot directly detect movement along a non-linear (e.g., arcuate) path, rotation, rotational movement, angular displacement, inclination, position, orientation or any other physical characteristic. However, through processing by a computer such as a processor of the game apparatus (e.g., the CPU10) or a processor of the controller (e.g., the microcomputer751) based on the acceleration signals outputted from the acceleration sensor701, additional information relating to the core unit70can be inferred or calculated (determined), as one skilled in the art will readily understand from the description herein.
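
As a non-limiting illustration of such inference, when the core unit is held still, the only linear acceleration acting on the acceleration sensor is gravity, so an inclination can be estimated from the three axis outputs. The following Python sketch is not taken from this description. The function name, the axis convention (Z pointing up when the unit lies flat), and the use of units of g are all assumptions made for illustration.

```python
import math


def tilt_from_gravity(ax, ay, az):
    """Estimate (pitch, roll) in radians from one static 3-axis reading.

    Assumptions (hypothetical, not from the description): readings are in
    units of g, and a unit lying flat and still reports (0, 0, 1).
    """
    # Pitch: rotation that moves gravity onto the X axis.
    pitch = math.atan2(-ax, math.hypot(ay, az))
    # Roll: rotation that moves gravity between the Y and Z axes.
    roll = math.atan2(ay, az)
    return pitch, roll
```

The estimate is meaningful only while the unit is nearly static. During a swing, dynamic acceleration is superimposed on gravity and the result no longer represents inclination.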

The communication section75includes the microcomputer751, a memory752, the wireless module753, and the antenna754. The microcomputer751controls the wireless module753that wirelessly transmits transmission data, while using the memory752as a storage area during processing. The microcomputer751also controls operations of the sound IC707and the vibrator704(not shown) in accordance with data which the wireless module753has received from the game apparatus body5via the antenna754. The sound IC707processes sound data or the like that is transmitted from the game apparatus body5via the communication section75. Further, the microcomputer751activates the vibrator704in accordance with vibration data or the like (e.g., a signal for causing the vibrator704to be ON or OFF) that is transmitted from the game apparatus body5via the communication section75.

Operation signals from the operation sections72provided on the core unit70(key data), acceleration signals from the acceleration sensor701with respect to the three axial directions (X-, Y- and Z-axis direction acceleration data), and the process result data from the imaging information calculation section74, are outputted to the microcomputer751. Also, biological signals (biological information data) provided from the vital sensor76are outputted to the microcomputer751via the connection cable79. The microcomputer751temporarily stores inputted data (the key data, the X-, Y- and Z-axis direction acceleration data, the process result data, and the biological information data) in the memory752as transmission data to be transmitted to the wireless controller module19. Here, wireless transmission from the communication section75to the wireless controller module19is performed at predetermined time intervals. Since game processing is generally performed at a cycle of 1/60 sec, the wireless transmission needs to be performed at a shorter cycle. Specifically, game processing is performed at a cycle of 16.7 ms ( 1/60 sec), and a transmission interval of the communication section75configured using the Bluetooth (registered trademark) technology is 5 ms. At a timing of performing transmission to the wireless controller module19, the microcomputer751outputs, to the wireless module753, the transmission data stored in the memory752as a series of pieces of operation information. The wireless module753uses, for example, the Bluetooth (registered trademark) technology to radiate, using a carrier wave having a predetermined frequency, a radio signal from the antenna754, the radio signal indicative of the series of pieces of operation information. 
Thus, the key data from the operation sections72provided on the core unit70, the X-, Y- and Z-axis direction acceleration data from the acceleration sensor701, the process result data from the imaging information calculation section74, and the biological information data from the vital sensor76, are transmitted from the core unit70. The wireless controller module19of the game apparatus body5receives the radio signal, and the game apparatus body5demodulates or decodes the radio signal to obtain the series of pieces of operation information (the key data, the X-, Y- and Z-axis direction acceleration data, the process result data, and the biological information data). In accordance with the series of pieces of obtained operation information and the game program, the CPU10of the game apparatus body5performs game processing. In the case where the communication section75is configured using the Bluetooth (registered trademark) technology, the communication section75can have a function of receiving transmission data wirelessly transmitted from other devices.

Next, with reference toFIGS. 8 and 9, the vital sensor76will be described.FIG. 8is a block diagram showing an example of a configuration of the vital sensor76.FIG. 9is a diagram showing pulse wave information that is an example of biological information outputted from the vital sensor76.

InFIG. 8, the vital sensor76includes a control unit761, a light source762, and a photodetector763.

The light source762and the photodetector763constitute a transmission-type digital-plethysmography sensor, which is an example of a sensor that obtains a biological signal of the player. The light source762includes, for example, an infrared LED that emits infrared light having a predetermined wavelength (e.g., 940 nm) toward the photodetector763. On the other hand, the photodetector763, which includes, for example, an infrared photoresistor, senses the light emitted by the light source762, depending on the wavelength of the emitted light. The light source762and the photodetector763are arranged so as to face each other with a predetermined gap (hollow space) being interposed therebetween.

Here, hemoglobin that exists in human blood absorbs infrared light. For example, a part (e.g., a fingertip) of the player's body is inserted in the gap between the light source762and the photodetector763. In this case, the infrared light emitted from the light source762is partially absorbed by hemoglobin existing in the inserted fingertip before being sensed by the photodetector763. Arteries in the human body pulsate, and therefore, the thickness (blood flow rate) of the arteries varies depending on the pulsation. Therefore, similar pulsation occurs in arteries in the inserted fingertip, and the blood flow rate varies depending on the pulsation, so that the amount of infrared light absorption also varies depending on the blood flow rate. Specifically, as the blood flow rate in the inserted fingertip increases, the amount of light absorbed by hemoglobin also increases and therefore the amount of infrared light sensed by the photodetector763relatively decreases. Conversely, as the blood flow rate in the inserted fingertip decreases, the amount of light absorbed by hemoglobin also decreases and therefore the amount of infrared light sensed by the photodetector763relatively increases. The light source762and the photodetector763utilize such an operating principle, i.e., convert the amount of infrared light sensed by the photodetector763into a photoelectric signal to detect pulsation (hereinafter referred to as a pulse wave) of the human body. For example, as shown inFIG. 9, when the blood flow rate in the inserted fingertip increases, the detected value of the photodetector763increases, and when the blood flow rate in the inserted fingertip decreases, the detected value of the photodetector763decreases. Thus, a pulse wave portion in which the detected value of the photodetector763rises and falls is generated as a pulse wave signal. 
Note that, in some circuit configurations of the photodetector763, a pulse wave signal may be generated in which, when the blood flow rate in the inserted fingertip increases, the detected value of the photodetector763decreases, and when the blood flow rate in the inserted fingertip decreases, the detected value of the photodetector763increases.

The control unit761includes, for example, a MicroController Unit (MCU). The control unit761controls the amount of infrared light emitted from the light source762. The control unit761also performs A/D conversion on a photoelectric signal (pulse wave signal) outputted from the photodetector763, to generate pulse wave data (biological information data). Thereafter, the control unit761outputs the pulse wave data (biological information data) via the connection cable79to the core unit70.

In the game apparatus body5, the pulse wave data obtained from the vital sensor76is analyzed, whereby various biological information on the player using the vital sensor76can be detected/calculated. As a first example, in the game apparatus body5, in accordance with peaks and dips of the pulse wave indicated by the pulse wave data obtained from the vital sensor76, it is possible to detect a pulse timing of the player (a timing at which the heart contracts; more exactly, a timing at which the blood vessels in a player's body part wearing the vital sensor76contract or expand). Specifically, in the game apparatus body5, it is possible to detect, as a pulse timing of the player, for example, a timing at which the pulse wave indicated by the pulse wave data obtained from the vital sensor76represents a local minimum value, a timing at which the pulse wave represents a local maximum value, a timing at which a blood vessel contraction rate reaches its maximum value, a timing at which a blood vessel expansion rate reaches its maximum value, a timing at which the acceleration rate of the blood vessel expansion rate reaches its maximum value, a timing at which the deceleration rate of the blood vessel expansion rate reaches its maximum value, or the like. Note that, in the case of detecting, as a pulse timing of the player, a timing at which the acceleration rate of the blood vessel expansion rate reaches its maximum value, or a timing at which the deceleration rate of the blood vessel expansion rate reaches its maximum value, a parameter obtained by differentiating the blood vessel contraction rate or the blood vessel expansion rate, namely, a timing at which the acceleration of the blood vessel expansion or contraction reaches its maximum value, may be detected as the pulse timing of the player.
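
One of the options listed above, detecting a pulse timing at a local minimum value of the pulse wave, can be sketched in Python as follows. This is only a minimal illustration: the function name is hypothetical, the samples are assumed to be an already digitized and noise-free sequence, and a practical detector would likely add smoothing and a minimum spacing between detections.

```python
def local_minima(samples):
    """Return the indices at which a sample is strictly lower than both
    of its neighbors (candidate pulse timings).

    Hypothetical helper: assumes a clean, evenly sampled pulse wave.
    """
    return [
        i
        for i in range(1, len(samples) - 1)
        if samples[i - 1] > samples[i] < samples[i + 1]
    ]
```

For example, `local_minima([3, 1, 2, 4, 0, 5])` reports the samples at indices 1 and 4. Replacing the comparison with `samples[i - 1] < samples[i] > samples[i + 1]` would detect local maximum values instead.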

As a second example, it is possible to calculate a heart rate HR by using the pulse timing of the player detected from the pulse wave indicated by the pulse wave data. For example, a value obtained by dividing 60 seconds by the interval of pulse timings is calculated as the heart rate HR of the player using the vital sensor76. Specifically, when the timing at which the pulse wave represents the local minimum value is set as the pulse timing, 60 seconds is divided by the interval of heartbeats between adjoining two local minimum values (an R-R interval shown inFIG. 9), whereby the heart rate HR is calculated.
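
The division described above can be written as a short Python sketch. The function name and the representation of pulse timings as timestamps in seconds are assumptions made for illustration.

```python
def heart_rate_bpm(pulse_times_sec):
    """Heart rate HR from the two most recent pulse timings.

    pulse_times_sec: ascending timestamps (seconds) of detected pulse
    timings. Returns None when fewer than two timings are available or
    the interval is not positive (hypothetical handling).
    """
    if len(pulse_times_sec) < 2:
        return None
    interval = pulse_times_sec[-1] - pulse_times_sec[-2]
    if interval <= 0:
        return None
    # 60 seconds divided by the interval of heartbeats.
    return 60.0 / interval
```

With pulse timings one second apart, for instance, the function yields a heart rate HR of 60.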

As a third example, it is possible to calculate a respiratory cycle of the player by using a rise-fall cycle of the heart rate HR. Specifically, when the heart rate HR calculated in this embodiment is rising, it is determined that the player is breathing in, and when the heart rate HR is falling, it is determined that the player is breathing out. That is, by calculating the rise-fall cycle (fluctuation cycle) of the heart rate HR, it is possible to calculate the cycle of breathing (respiratory cycle) of the player.
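
The rise-fall determination described above can be illustrated with the following Python sketch. The function name and the labels are hypothetical. In particular, the "hold" label for two equal consecutive values is an assumption, since the description does not state how an unchanged heart rate HR is treated.

```python
def breathing_phases(heart_rates):
    """Label each step between consecutive heart rate values.

    'in'   : HR is rising  (player determined to be breathing in)
    'out'  : HR is falling (player determined to be breathing out)
    'hold' : HR unchanged  (assumed label, not from the description)
    """
    phases = []
    for prev, cur in zip(heart_rates, heart_rates[1:]):
        if cur > prev:
            phases.append("in")
        elif cur < prev:
            phases.append("out")
        else:
            phases.append("hold")
    return phases
```

The time from one transition into "in" to the next such transition would then approximate one respiratory cycle.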

As a fourth example, it is possible to determine the degree of ease or difficulty felt by the player by using an amplitude PA of the pulse wave indicated by the pulse wave data obtained from the vital sensor76(e.g., the difference in the height between a local maximum value of the pulse wave and the succeeding local minimum value; seeFIG. 9). Specifically, when the amplitude PA of the pulse wave is decreased, it can be determined that the player is in a difficult state.

As a fifth example, it is possible to obtain a blood flow rate of the player by dividing a pulse wave area PWA (seeFIG. 9) obtained from the pulse wave signal by the heart rate HR.

As a sixth example, it is possible to calculate a coefficient of variance of the heartbeat of the player (coefficient of variance of R-R interval: CVRR) by using the interval of the pulse timings of the player (the interval of heartbeats; e.g., an R-R interval shown inFIG. 9) detected from the pulse wave indicated by the pulse wave data. For example, the coefficient of variance of the heartbeat is calculated by using the interval of heartbeats based on the past 100 beats indicated by the pulse wave obtained from the vital sensor76. Specifically, the following equation is applied for calculation.

Coefficient of variance of heartbeat={(standard deviation of the interval of 100 heartbeats)/(average value of the interval of 100 heartbeats)}×100

With the use of the coefficient of variance of the heartbeat, it is possible to calculate the state of the autonomic nerve of the player (e.g., the activity of the parasympathetic nerve).
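
The equation above can be sketched in Python as follows. The function name and the `window` parameter are hypothetical. The description does not state whether the standard deviation is the population or the sample form, so the population form (`statistics.pstdev`) is assumed here.

```python
import statistics


def cvrr_percent(rr_intervals, window=100):
    """Coefficient of variance of the heartbeat (CVRR), in percent.

    rr_intervals: heartbeat intervals in seconds, oldest first.
    window: number of most recent intervals used (100 in the example
    given in the description).
    Assumption: population standard deviation is used.
    """
    recent = list(rr_intervals)[-window:]
    if not recent:
        return None
    mean = statistics.fmean(recent)
    return statistics.pstdev(recent) / mean * 100.0
```

A perfectly regular heartbeat gives a value of 0, and larger values indicate greater beat-to-beat variation.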

Next, an overview of game processing performed on the game apparatus body5will be described with reference toFIGS. 10 to 13Dbefore a specific description of processes performed by the game apparatus body5is given.FIGS. 10 to 12are diagrams each showing an example of a game image displayed on the monitor2.FIGS. 13A to 13Dare diagrams each showing an example of a movement of a shooting aim S displayed on the monitor2.

InFIG. 10, the monitor2represents a virtual game world in which a player character PC and a target object T are arranged. In the example ofFIG. 10, a game is used in which an event (e.g., archery and Kyudo (the Japanese art of archery)) in which the player character PC shoots an arrow (arrow object A) is performed in the virtual game world. In the virtual game world, a target of archery is provided as the target object T. The player character PC holds a bow with the set arrow object A being drawn, and the arrow object A is released from the bow in accordance with an operation of the player.

In a state before the player character PC releases the arrow object A, the shooting aim S is displayed as a rough indication for a position and a direction toward and in which the arrow object A is discharged when being released. For example, the player can move the position of the shooting aim S in an up, down, right, or left direction in the display screen of the monitor2by operating the operation section72(e.g., the cross key72a) provided in the core unit70. Thus, the player moves a position (direction) to which the player character PC shoots the arrow object A, to a desired position (direction) by operating the operation section72. The positional relation between the shooting aim S and the virtual game world is a relative relation. Thus, the shooting aim S may be displayed in a fixed manner with respect to the display screen, and the virtual game world may be moved and displayed on the display screen in accordance with an operation of the operation section72. Alternatively, in accordance with an operation of the operation section72, the displayed position of the shooting aim S with respect to the display screen may be moved and the virtual game world may be also moved and displayed on the display screen. The following will describe an example in which the displayed position of the shooting aim S is moved in an up, down, right, or left direction in the display screen of the monitor2by operating the operation section72.

As shown inFIG. 11, the arrow object A is released in accordance with an operation of the player for releasing the arrow object A (e.g., an operation of pressing the A button72dor the B button72i; hereinafter may be described as a discharge operation), and moves (flies) in the virtual game world with a destination position set in the virtual game world by the shooting aim S, being a destination. As shown inFIG. 12, a score corresponding to a position at which the arrow object A finally reaches is given to the player character PC.

The position of the shooting aim S is changed in accordance with not only an operation of the operation section72performed by the player but also biological information (a biological signal) obtained from the player. For example, as shown inFIGS. 13A to 13D, the position of the shooting aim S is changed so as to wobble about a position set by an operation of the operation section72, in accordance with the biological signal obtained from the player. Specifically, in accordance with a pulse timing of the player, a wobbling direction, a wobbling range, a wobbling time, and the like are set for the shooting aim S. Then, wobbling of the shooting aim S is started on the basis of the set conditions (a state inFIG. 13B). Thus, the shooting aim S is displayed on the monitor2so as to wobble in the set wobbling direction (e.g., a direction D in the drawing) and in the set wobbling range (e.g., a wobbling range of reciprocation between a shooting aim position Sa and a shooting aim position Sb).

Then, the wobbling range of the shooting aim S is reduced in accordance with the set wobbling time (a state inFIG. 13C), and the wobbling of the shooting aim S stops at the time when the wobbling time elapses (a state inFIG. 13D). Then, at the next pulse timing of the player, the shooting aim S starts wobbling similarly as in the above. In this manner, the shooting aim S is displayed so as to intermittently wobble in accordance with pulse timings of the player.
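
The behavior described above (reciprocation along a set direction, a range that shrinks over the wobbling time, and a stop when the wobbling time elapses) can be illustrated with the following Python sketch. It is not the calculation used in this embodiment: the function name, the linear reduction of the range, the sinusoidal reciprocation, and the `period` parameter are all assumptions chosen only to reproduce the described behavior.

```python
import math


def wobble_offset(t, t_max, x_max, direction_rad, period=0.25):
    """Offset (dx, dy) of the shooting aim at elapsed time t.

    t             : time elapsed since the pulse timing (seconds)
    t_max         : wobbling time; wobbling stops once t reaches it
    x_max         : wobbling range set at the pulse timing
    direction_rad : wobbling direction on the screen, in radians
    period        : reciprocation period (hypothetical parameter)
    """
    if t_max <= 0 or t < 0 or t >= t_max:
        return (0.0, 0.0)  # not wobbling
    # Assumed: the range shrinks linearly to zero over the wobbling time.
    current_range = x_max * (1.0 - t / t_max)
    # Assumed: sinusoidal reciprocation between the two end positions.
    displacement = current_range * math.sin(2.0 * math.pi * t / period)
    return (
        displacement * math.cos(direction_rad),
        displacement * math.sin(direction_rad),
    )
```

Adding the returned offset to the position set by the operation section would give the displayed position of the shooting aim for that frame. Calling the function again with `t` reset to zero at the next pulse timing reproduces the intermittent wobble.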

When the shooting aim S is displayed so as to wobble in this manner, it is difficult for the player to take aim at a position to be shot by the arrow object A. As described above, when the arrow object A is released, the arrow object A moves (flies) in the virtual game world with a destination position set in the virtual game world by the shooting aim S, being a destination. Thus, in order to obtain a high score, it is necessary to perform a discharge operation with the shooting aim S being set at a position that gives a high score when being shot. Therefore, when the shooting aim S is displayed so as to wobble, it is difficult to set the shooting aim S at a position that gives a high score, and a highly entertaining operation whose result cannot be easily anticipated by the player is possible. As described above, since the positional relation between the shooting aim S and the virtual game world is a relative relation, the shooting aim S may be displayed in a fixed manner with respect to the display screen, and the virtual game world may be displayed on the display screen so as to wobble. Alternatively, the shooting aim S may be displayed so as to wobble with respect to the display screen, and the virtual game world may be also displayed on the display screen so as to wobble. In the following description, an example will be used in which the displayed position of the shooting aim S is changed in accordance with the biological signal obtained from the player.

As described later in detail, a wobbling range and a wobbling time of the shooting aim S are calculated on the basis of the heart rate HR calculated from the biological signal of the player, and a wobbling direction in which the shooting aim S wobbles is randomly set. Thus, the wobbling direction, the wobbling range, and the wobbling time of the shooting aim S cannot be easily anticipated by the player, and hence a more highly entertaining operation is possible.

The following will describe in detail the game processing performed on the game system1. With reference toFIG. 14, main data used in the game processing will be described.FIG. 14is a diagram showing an example of main data and programs stored in the external main memory12and/or the internal main memory35(hereinafter, the two main memories are collectively referred to as a main memory) of the game apparatus body5.

As shown inFIG. 14, a data storage area of the main memory stores therein operation information data Da, heart rate data Db, average heart rate data Dc, heart rate upper limit data Dd, heart rate lower limit data De, wobbling flag data Df, wobbling range data Dg, wobbling time data Dh, wobbling direction data Di, elapsed time data Dj, designated position data Dk, current first wobbling range data Dl, current second wobbling range data Dm, first offset value data Dn, second offset value data Do, aim position data Dp, arrow object position data Dq, image data Dr, and the like. Note that, in addition to the data shown inFIG. 14, the main memory stores therein data required for the game processing, such as data (position data and the like) on other objects appearing in the game, data on the virtual game world (background data and the like). A program storage area of the main memory stores therein various programs Pa configuring the game program.

The operation information data Da includes key data Da1, pulse wave data Da2, and the like. The key data Da1indicates that the plurality of operation sections72in the core unit70have been operated, and is included in the series of pieces of operation information transmitted as transmission data from the core unit70. Note that the wireless controller module19included in the game apparatus body5receives key data included in the operation information transmitted from the core unit70in predetermined cycles (e.g., every 1/200 sec) and stores the received data into a buffer (not shown) included in the wireless controller module19. Thereafter, the key data stored in the buffer is read every one-frame period (e.g., every 1/60 sec.), which corresponds to a game processing cycle, and thereby the key data Da1in the main memory is updated.

In this case, the cycle of the reception of the operation information is different from the processing cycle, and therefore, a plurality of pieces of operation information received at a plurality of timings are stored in the buffer. In a description of the processing below, only the latest one of a plurality of pieces of operation information received at a plurality of timings is invariably used to perform a process at each step described below, and the processing proceeds to the next step.
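
Because reception (e.g., every 1/200 sec) is faster than the game processing cycle (e.g., every 1/60 sec), roughly three pieces of operation information can accumulate per frame. The following Python sketch illustrates using only the latest one. The class and method names are hypothetical, and discarding the older entries after each read is an assumption, since the description only states that the latest piece is used.

```python
from collections import deque


class ReceiveBuffer:
    """Hypothetical stand-in for the buffer of the wireless controller module."""

    def __init__(self):
        self._packets = deque()

    def push(self, packet):
        # Called on each reception (e.g., every 1/200 sec).
        self._packets.append(packet)

    def take_latest(self):
        # Called once per frame (e.g., every 1/60 sec).
        # Returns the newest packet, or None if nothing arrived.
        if not self._packets:
            return None
        latest = self._packets[-1]
        self._packets.clear()  # assumed: older packets are discarded
        return latest
```

In a frame loop, the result of `take_latest()` would be used to update the key data Da1, and a return value of `None` would leave the previous key data unchanged.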

In addition, a process flow will be described below by using an example in which the key data Da1is updated every one-frame period, which corresponds to the game processing cycle. However, the key data Da1may be updated in other processing cycles. For example, the key data Da1may be updated in transmission cycles of the core unit70, and the updated key data Da1may be used in game processing cycles. In this case, the cycle in which the key data Da1is updated is different from the game processing cycle.

The pulse wave data Da2indicates a pulse wave signal of a required time length obtained from the vital sensor76, and is included in the series of pieces of operation information transmitted as transmission data from the core unit70. A history of a pulse wave signal of a time length required in the processing described below is stored as pulse wave data into the pulse wave data Da2, and is updated as appropriate in response to reception of operation information.

The heart rate data Db indicates a history of heart rates HR (each of which is, for example, a value obtained by dividing 60 seconds by the interval of heartbeats (e.g., R-R interval)) of the player for a predetermined time period.

The average heart rate data Dc indicates the average value of the heart rate HR of the player. The heart rate upper limit data Dd and the heart rate lower limit data De respectively indicate an upper limit and a lower limit of the heart rate HR that are set on the basis of the average value of the heart rate HR.

The wobbling flag data Df indicates whether or not the shooting aim S is wobbling, and indicates a wobbling flag that is set to be ON when the shooting aim S is wobbling. As an example, the wobbling flag is set to be ON in accordance with a pulse timing of the player. Then, the wobbling flag is set to be OFF when a wobbling time during which the shooting aim S is displayed in a wobbling manner elapses, or in accordance with the player performing a discharge operation while the shooting aim S is wobbling. The wobbling range data Dg indicates a wobbling range Xmax of the shooting aim S that is set in accordance with a pulse timing of the player. The wobbling time data Dh indicates a wobbling time Tmax of the shooting aim S that is set in accordance with a pulse timing of the player. The wobbling direction data Di indicates a wobbling direction D of the shooting aim S that is set in accordance with a pulse timing of the player. The elapsed time data Dj indicates an elapsed time T that passes after the shooting aim S starts wobbling. As described later in detail, in this embodiment, the shooting aim S is moved so as to wobble by combining two wobbling movements, and the wobbling range data Dg, the wobbling time data Dh, the wobbling direction data Di, and the elapsed time data Dj indicate set values for each wobbling movement.

The designated position data Dk indicates a designated position of the player in the virtual game world displayed on the display screen, which designated position is set in accordance with an operation of the operation section72performed by the player. The current first wobbling range data Dl indicates a wobbling range X1of a first wobbling movement at the current moment. The current second wobbling range data Dm indicates a wobbling range X2of a second wobbling movement at the current moment. The first offset value data Dn indicates a first offset value of1for offsetting the position of the shooting aim S in the first wobbling movement at the current moment. The second offset value data Do indicates a second offset value of2that is obtained by adding a value for offsetting the position of the shooting aim S in the second wobbling movement at the current moment, to the offset value for the first wobbling movement at the current moment (the first offset value of1).

The aim position data Dp indicates the position of the shooting aim S in the virtual game world. The arrow object position data Dq indicates the position of the arrow object A in the virtual game world.

The image data Dr includes player character image data Dr1, arrow object image data Dr2, target object image data Dr3, aim image data Dr4, and the like. The player character image data Dr1is data for arranging the player character PC in the virtual game world thereby to generate a game image. The arrow object image data Dr2is data for arranging the arrow object A in the virtual game world thereby to generate a game image. The target object image data Dr3is data for arranging the target object T in the virtual game world thereby to generate a game image. The aim image data Dr4is data for arranging the shooting aim S in the virtual game world thereby to generate a game image.

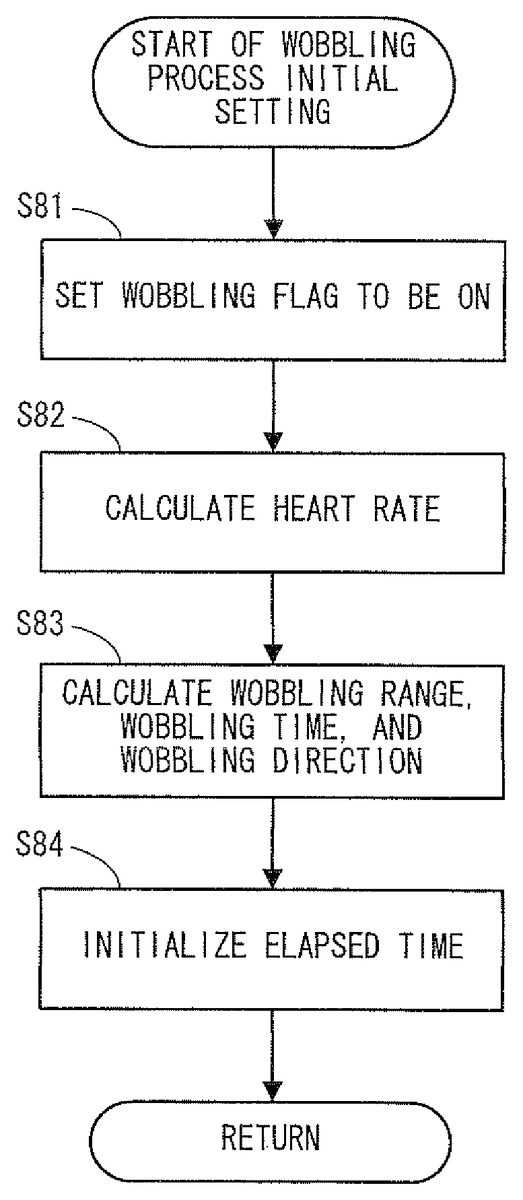

Next, the game processing performed on the game apparatus body5will be described in detail with reference toFIGS. 15 to 18.FIG. 15is a flowchart showing an example of the game processing performed on the game apparatus body5.FIG. 16is a subroutine flowchart showing an example of detailed processing of initial setting shown at step42inFIG. 15.FIG. 17is a subroutine flowchart showing an example of detailed processing of wobbling process initial setting shown at step49inFIG. 15.FIG. 18is a subroutine flowchart showing an example of detailed processing of a wobbling process shown at step50inFIG. 15. In the flowcharts shown inFIGS. 15 to 18, of the game processing, processes using the biological information from the vital sensor76and the key data from the core unit70will be mainly described, and other game processes that do not directly relate to the present invention will not be described in detail. InFIGS. 15 to 18, each step executed by the CPU10is abbreviated to “S”.

When the game apparatus body5is powered on, the CPU10of the game apparatus body5executes the boot program stored in the ROM/RTC13, thereby initializing each unit such as the main memory. Thereafter, the game program stored in the optical disc4is loaded into the main memory, and the CPU10starts execution of the game program. The flowchart shown inFIG. 15indicates game processing that is performed after completion of the aforementioned process.

InFIG. 15, the CPU10performs initial setting (step42), and proceeds the processing to the next step. With reference toFIG. 16, the following will describe the initial setting performed at step42.

InFIG. 16, the CPU10performs setting for obtaining biological information from the player (step61), and proceeds the processing to the next step. For example, the CPU10initializes each parameter used in the subsequent processing. Then, the CPU10instructs the player to wear the vital sensor76, which is an input device for obtaining biological information from the player, via the monitor2. Note that the instruction to the player to wear the vital sensor76may be performed, not by display on the monitor2, but by other means that can be sensed by the player, such as a voice, or by a combination thereof. Then, after the optical system of the vital sensor76recognizes that the player wears the vital sensor76, the vital sensor76starts obtaining a biological signal from the body of the player, and transmits data indicative of the biological signal, to the game apparatus body5.

Next, the CPU10obtains data indicative of operation information, from the core unit70(step62), and proceeds the processing to the next step. For example, the CPU10obtains operation information received from the core unit70, and updates the key data Da1with details of operations performed on the operation section72which details are indicated by the latest key data included in the operation information. Further, the CPU10updates the pulse wave data Da2with a pulse wave signal indicated by the latest biological information data that is included in the operation information received from the core unit70.

Next, the CPU10determines whether or not the current moment is a pulse timing (step63). When the current moment is a pulse timing, the CPU10proceeds the processing to the next step64. On the other hand, when the current moment is not a pulse timing, the CPU10proceeds the processing to the next step66. For example, at step63, the CPU10refers to the pulse wave signal indicated by the pulse wave data Da2and detects a predetermined shape characteristic point in a pulse wave. If the current moment corresponds to the shape characteristic point, the CPU10determines that the current moment is a pulse timing. For example, as the shape characteristic point, any one point is selected from among: a point at which the pulse wave represents a local minimum value; a point at which the pulse wave represents a local maximum value; a point at which the contraction rate of the blood vessels represents a maximum value; a point at which the expansion rate of the blood vessels represents a maximum value; a point at which the acceleration rate of the blood vessel expansion rate represents a maximum value; a point at which the deceleration rate of the blood vessel expansion rate represents a maximum value; and the like. Any of these points may be used as a shape characteristic point for determination of a pulse timing.

At step64, the CPU10calculates the heart rate HR of the player, updates the heart rate data Db, and proceeds the processing to the next step. For example, the CPU10refers to the pulse wave signal based on the pulse wave data Da2, and calculates, as the interval of heartbeats at the current moment, a time interval between a pulse timing currently detected at step63and the pulse timing detected in the immediately preceding processing (e.g., the R-R interval; seeFIG. 9). Then, the CPU10calculates the heart rate HR by dividing 60 seconds by the interval of heartbeats, and updates the heart rate data Db with the newly calculated heart rate HR. Note that, when the pulse timing is detected for the first time in the current processing, the CPU10updates the heart rate data Db using the heart rate HR as a predetermined constant (e.g., 0), for example. Specifically, the CPU10performs update by: shifting forward, in time-series order, the heart rates HR in the history for the predetermined time period which history is stored in the heart rate data Db; and adding, as the latest heart rate HR, the newly calculated heart rate HR to the heart rate data Db. By so doing, a latest history of the heart rates HR for the predetermined time period is stored in the heart rate data Db.

Next, the CPU 10 calculates the average value of the heart rate HR of the player and updates the average heart rate data Dc (step 65), and proceeds the processing to the next step 66. For example, the CPU 10 calculates the average value of the heart rate HR using the history of the heart rates HR for the predetermined time period, which history is stored in the heart rate data Db. Then, the CPU 10 updates the average heart rate data Dc with the calculated average value.
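The calculations at steps 64 and 65 may be sketched as follows. This is a minimal illustration under stated assumptions: the class name `HeartRateTracker`, the use of timestamps in seconds, and the representation of the "predetermined time period" as a fixed number of entries (`history_size`) are not taken from the description.

```python
from collections import deque
from typing import Deque, Optional


class HeartRateTracker:
    """Hypothetical stand-in for the heart rate data Db and the
    average heart rate data Dc."""

    def __init__(self, history_size: int = 8) -> None:
        # Assumption: the history holds a fixed number of recent values.
        self.history: Deque[float] = deque(maxlen=history_size)
        self._last_pulse_time: Optional[float] = None

    def on_pulse_timing(self, now_seconds: float) -> float:
        """Step 64: compute HR from the interval between pulse timings."""
        if self._last_pulse_time is None:
            heart_rate = 0.0  # first detection: predetermined constant
        else:
            interval = now_seconds - self._last_pulse_time
            if interval <= 0.0:
                raise ValueError("pulse timings must be strictly increasing")
            heart_rate = 60.0 / interval  # 60 seconds / beat interval
        self._last_pulse_time = now_seconds
        # Appending to a bounded deque drops the oldest entry, which
        # corresponds to shifting the history forward.
        self.history.append(heart_rate)
        return heart_rate

    def average(self) -> float:
        """Step 65: average of the stored history (0.0 if empty)."""
        if not self.history:
            return 0.0
        return sum(self.history) / len(self.history)


if __name__ == "__main__":
    tracker = HeartRateTracker(history_size=3)
    for t in (0.0, 1.0, 1.5, 2.5):
        print(t, tracker.on_pulse_timing(t), tracker.average())
```

In this sketch the constant stored at the first detection remains in the history, and therefore lowers the average, until it is shifted out.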

At step66, the CPU10determines whether or not to start the game. The game is started, for example, when the player performs an operation for starting the game. Then, when starting the game, the CPU10proceeds the processing to the next step67. On the other hand, when not starting the game, the CPU10returns to step62to repeat the processing.

At step67, the CPU10performs initial setting of the game processing, and proceeds the processing to the next step. For example, in the initial setting of the game processing at step67, the CPU10performs setting of the virtual game world and initial setting of the player character PC and the like. Further, in the initial setting of the game processing at step67, the CPU10initializes each parameter (except the operation information data Da, the heart rate data Db, and the average heart rate data Dc) used in the subsequent game processing.

Next, the CPU 10 calculates an upper limit and a lower limit of the heart rate HR using the average value of the heart rate HR of the player (step 68), and ends the processing of this subroutine. For example, the CPU 10 calculates the upper limit and the lower limit of the heart rate HR on the basis of the average value of the heart rate HR indicated by the average heart rate data Dc, and updates the heart rate upper limit data Dd and the heart rate lower limit data De with the calculated upper limit and the calculated lower limit. As one example, the CPU 10 calculates the upper limit of the heart rate HR by adding a predetermined value (e.g., 20) to the average value of the heart rate HR. Further, the CPU 10 calculates the lower limit of the heart rate HR by subtracting a predetermined value (e.g., 20) from the average value of the heart rate HR. Note that the calculated upper limit and the calculated lower limit may be limited. For example, a maximum limit value is previously provided for the upper limit of the heart rate HR, and, when the calculated upper limit exceeds the maximum limit value, the heart rate upper limit data Dd is updated with the maximum limit value. Further, a minimum limit value is previously provided for the lower limit of the heart rate HR, and, when the calculated lower limit is less than the minimum limit value, the heart rate lower limit data De is updated with the minimum limit value.
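The calculation at step 68 may be sketched as follows. The margin of 20 follows the example above; the function name `heart_rate_limits` and the default maximum and minimum limit values (200 and 40) are hypothetical, since the description does not give concrete limit values.

```python
from typing import Tuple


def heart_rate_limits(
    average_hr: float,
    margin: float = 20.0,      # predetermined value from the example
    max_upper: float = 200.0,  # hypothetical maximum limit value
    min_lower: float = 40.0,   # hypothetical minimum limit value
) -> Tuple[float, float]:
    """Return (upper limit, lower limit) for the heart rate HR."""
    upper = min(average_hr + margin, max_upper)  # cap the upper limit
    lower = max(average_hr - margin, min_lower)  # floor the lower limit
    return upper, lower


if __name__ == "__main__":
    print(heart_rate_limits(70.0))   # ordinary case
    print(heart_rate_limits(190.0))  # upper limit is capped
    print(heart_rate_limits(50.0))   # lower limit is floored
```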

Referring back toFIG. 15, after the initial setting at step42, the CPU10obtains data indicative of operation information, from the core unit70(step43), and proceeds the processing to the next step. For example, the CPU10obtains operation information received from the core unit70, and updates the key data Da1with details of operations performed on the operation section72which details are indicated by the latest key data included in the operation information. Further, the CPU10updates the pulse wave data Da2with a pulse wave signal indicated by the latest biological information data that is included in the operation information received from the core unit70.

Next, the CPU10determines whether or not the current moment is a pulse timing (step44). When the current moment is not a pulse timing, the CPU10proceeds the processing to the next step45. On the other hand, when the current moment is a pulse timing, the CPU10proceeds the processing to the next step49. The process for the determination of a pulse timing is the same as that at the above step63, and thus the detailed description thereof is omitted.

At step45, the CPU10refers to the wobbling flag data Df and determines whether or not the wobbling flag is set to be ON. When the wobbling flag is OFF, the CPU10proceeds the processing to the next step46. On the other hand, when the wobbling flag is ON, the CPU10proceeds the processing to the next step50.

At step46, the CPU10determines whether or not the current moment is during a discharge operation. For example, when the operation information obtained at step43indicates a discharge operation (e.g., an operation of pressing the A button72dor the B button72i), or when it is during a period from a time when the arrow object A is released from the bow to a time when the next arrow object A is set on the bow again, the CPU10determines that the current moment is during the discharge operation. When the current moment is not during the discharge operation, the CPU10proceeds the processing to the next step47. On the other hand, when the current moment is during the discharge operation, the CPU10proceeds the processing to the next step51.
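The branching at steps 44 to 46 may be summarized by the following sketch, which returns the number of the step to which the processing proceeds. The function name `next_step` and the three Boolean inputs are illustrative assumptions; in the embodiment these conditions are derived from the pulse wave data Da2, the wobbling flag data Df, and the operation information, respectively.

```python
def next_step(is_pulse_timing: bool,
              wobbling_flag_on: bool,
              is_discharging: bool) -> int:
    """Return the step reached after the determinations at steps 44-46."""
    if is_pulse_timing:    # step 44: a pulse timing starts a new wobble
        return 49
    if wobbling_flag_on:   # step 45: a wobble is already in progress
        return 50
    if is_discharging:     # step 46: during a discharge operation
        return 51
    return 47              # otherwise, calculate the designated position


if __name__ == "__main__":
    print(next_step(False, False, False))
    print(next_step(True, True, True))
```

The order of the tests matters: a pulse timing takes precedence over an ongoing wobble, which in turn takes precedence over a discharge operation.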

At step47, the CPU10calculates a designated position corresponding to an operation of the player, and proceeds the processing to the next step. Here, the designated position is a position designated by the player with respect to the display screen of the monitor2or the virtual game world displayed on the display screen. For example, the designated position is indicated by coordinate data based on a coordinate system that is set for the display screen or a coordinate system that is set for the virtual game world. At step47, the CPU10changes the designated position indicated by the designated position data Dk, for example, in accordance with an operation state of the operation section72(e.g., the cross key72a) which is indicated by the operation information obtained at step43. For example, the CPU10changes the designated position indicated by the designated position data Dk, such that the designated position moves by a predetermined distance in a direction corresponding to the pressed portion of the cross key72a, and updates the designated position data Dk with the designated position after the change. When the player does not perform an operation for moving the designated position, the CPU10keeps the designated position indicated by the designated position data Dk, at the current position, and proceeds the processing to the next step.
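The update of the designated position at step 47 may be sketched as follows. The direction names, the screen coordinate system with the y axis pointing downward, and the step distance of 2.0 are assumptions for illustration; the description states only that the designated position moves by a predetermined distance in the direction corresponding to the pressed portion of the cross key 72a.

```python
from typing import Dict, Optional, Tuple

Position = Tuple[float, float]

# Hypothetical mapping from the pressed portion of the cross key to a
# unit direction in screen coordinates (y increases downward).
DIRECTIONS: Dict[str, Position] = {
    "up": (0.0, -1.0),
    "down": (0.0, 1.0),
    "left": (-1.0, 0.0),
    "right": (1.0, 0.0),
}


def update_designated_position(position: Position,
                               pressed: Optional[str],
                               distance: float = 2.0) -> Position:
    """Move the designated position, or keep it if nothing is pressed."""
    if pressed is None:
        return position  # no move operation: keep the current position
    dx, dy = DIRECTIONS[pressed]
    return (position[0] + dx * distance, position[1] + dy * distance)


if __name__ == "__main__":
    pos = (10.0, 10.0)
    pos = update_designated_position(pos, "right")
    pos = update_designated_position(pos, None)
    print(pos)
```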

Next, the CPU10calculates an aim position corresponding to the designated position and displays the shooting aim S at a position corresponding to the designated position (e.g., at the designated position or the aim position) (step48), and proceeds the processing to the next step52. For example, the CPU10calculates the aim position corresponding to the designated position indicated by the designated position data Dk, and updates the aim position data Dp with the calculated aim position. As one example, when the designated position is indicated as a position based on the coordinate system that is set for the display screen, the CPU10calculates, as an aim position, a position obtained by performing projective transformation (perspective transformation) of the designated position into the virtual game world, and updates the aim position data Dp. As another example, when the designated position is indicated as a position based on the coordinate system that is set for the virtual game world, the CPU10uses, as an aim position, the designated position indicated by the designated position data Dk, and updates the aim position data Dp with the used aim position. Then, the CPU10arranges the shooting aim S at the designated position based on the coordinate system that is set for the display screen or at the aim position based on the coordinate system that is set for the virtual game world, and displays the shooting aim S together with the virtual game world on the monitor2.

On the other hand, when it is determined at step44that the current moment is a pulse timing, the CPU10performs a wobbling process initial setting (step49) and proceeds the processing to the next step. With reference toFIG. 17, the following will describe the wobbling process initial setting performed at step49.

InFIG. 17, the CPU10sets the wobbling flag to be ON and updates the wobbling flag data Df (step81), and proceeds the processing to the next step.

Next, the CPU10calculates the heart rate HR of the player and updates the heart rate data Db (step82), and proceeds the processing to the next step. The method of calculating the heart rate HR and the method of updating the heart rate data Db are the same as those at the above step64, and thus the detailed description thereof is omitted.

Next, the CPU 10 calculates the wobbling range Xmax, the wobbling time Tmax, and the wobbling direction D (step 83), and proceeds the processing to the next step. In this embodiment, the shooting aim S is moved so as to wobble by combining two wobbling movements (the first wobbling movement and the second wobbling movement). Thus, at step 83, the CPU 10 calculates wobbling ranges Xmax1 and Xmax2, wobbling times Tmax1 and Tmax2, and wobbling directions D1 and D2 for both wobbling movements. Then, the CPU 10 updates the wobbling range data Dg, the wobbling time data Dh, and the wobbling direction data Di with the calculated wobbling ranges Xmax1 and Xmax2, the calculated wobbling times Tmax1 and Tmax2, and the calculated wobbling directions D1 and D2, respectively. The following will describe an example of calculation of the wobbling range, the wobbling time, and the wobbling direction for each wobbling movement.

The first wobbling movement is a wobbling movement such that, when the wobbling of the shooting aim S stops, the shooting aim S returns to the original position before the start of the wobbling. The wobbling range Xmax1 and the wobbling time Tmax1 for the first wobbling movement are calculated in accordance with the current heart rate HR of the player. For example, a value (e.g., 4.0) in the case where the heart rate HR of the player represents the upper limit, and a value (e.g., 1.0) in the case where the heart rate HR of the player represents the lower limit, are previously provided for the wobbling range Xmax1 for the first wobbling movement, and the wobbling range Xmax1 is calculated by performing linear interpolation between these values in accordance with the current heart rate HR. Further, a value (e.g., 1.0) in the case where the heart rate HR of the player represents the upper limit, and a value (e.g., 0.7) in the case where the heart rate HR of the player represents the lower limit, are previously provided also for the wobbling time Tmax1 for the first wobbling movement, and the wobbling time Tmax1 is calculated by performing linear interpolation between these values in accordance with the current heart rate HR. Here, the values set at step 68 (i.e., the upper limit and the lower limit indicated by the heart rate upper limit data Dd and the heart rate lower limit data De, respectively) are used as the upper limit and the lower limit, and the latest heart rate HR stored in the heart rate data Db is used as the current heart rate HR. For each of the wobbling range Xmax1 and the wobbling time Tmax1, the value corresponding to the upper limit is set when the current heart rate HR exceeds the upper limit, and the value corresponding to the lower limit is set when the current heart rate HR is less than the lower limit. Further, as the wobbling direction D1 for the first wobbling movement, a value is randomly selected from the range between 0 and 2π (π is the circular constant, and this is the same in the following description).
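The calculation for the first wobbling movement may be sketched as follows, using the example values given above (4.0 and 1.0 for the wobbling range, 1.0 and 0.7 for the wobbling time). The function names and the optional random number generator argument are assumptions for illustration and do not appear in the description.

```python
import math
import random
from typing import Optional, Tuple


def interpolate_by_heart_rate(hr: float, hr_lower: float, hr_upper: float,
                              value_at_lower: float,
                              value_at_upper: float) -> float:
    """Linear interpolation over [hr_lower, hr_upper], clamped at both ends."""
    if hr <= hr_lower:
        return value_at_lower  # at or below the lower limit
    if hr >= hr_upper:
        return value_at_upper  # at or above the upper limit
    ratio = (hr - hr_lower) / (hr_upper - hr_lower)
    return value_at_lower + ratio * (value_at_upper - value_at_lower)


def first_wobble_parameters(
    hr: float, hr_lower: float, hr_upper: float,
    rng: Optional[random.Random] = None,
) -> Tuple[float, float, float]:
    """Return (Xmax1, Tmax1, D1) for the first wobbling movement."""
    rng = rng or random.Random()
    xmax1 = interpolate_by_heart_rate(hr, hr_lower, hr_upper, 1.0, 4.0)
    tmax1 = interpolate_by_heart_rate(hr, hr_lower, hr_upper, 0.7, 1.0)
    d1 = rng.uniform(0.0, 2.0 * math.pi)  # random direction in radians
    return xmax1, tmax1, d1


if __name__ == "__main__":
    # With limits of 50 and 90, a heart rate of 70 lies halfway between.
    print(first_wobble_parameters(70.0, 50.0, 90.0, random.Random(0)))
```

With these example values, a higher heart rate yields both a wider and a longer wobble, up to the values assigned to the upper limit.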

The second wobbling movement is a wobbling movement such that, when the wobbling of the shooting aim S stops, the shooting aim S returns to a position that is different from the original position before the start of the wobbling. The wobbling time Tmax2for the second wobbling movement is calculated in accordance with the current heart rate HR of the player. For example, a value (e.g., 1.0) in the case where the heart rate HR of the player represents the upper limit, and a value (e.g., 0.7) in the case where the heart rate HR of the player represents the lower limit, are previously provided also for the wobbling time Tmax2for the second wobbling movement, and the wobbling time Tmax2is calculated by performing linear interpolation of the current heart rate HR. The values set at step68(i.e., the upper limit and the lower limit indicated by the heart rate upper limit data Dd and the heart rate lower limit data De, respectively) are used as the upper limit and the lower limit, and the latest heart rate HR stored in the heart rate data Db is used as the current heart rate HR. For the wobbling time Tmax2, the value corresponding to the upper limit is set when the current heart rate HR exceeds the upper limit, and the value corresponding to the lower limit is set when the current heart rate HR is less than the lower limit. Thus, the wobbling time Tmax2for the second wobbling movement and the wobbling time Tmax1for the first wobbling movement are typically the same, but may be different from each other. As the wobbling range Xmax2for the second wobbling movement, a value is randomly selected from the range between 0 and a predetermined value (e.g., 3.0). Further, as the wobbling direction D2for the second wobbling movement, a value is randomly selected from the range between 0 and 2π.

Next, the CPU10initializes the elapsed time T and updates the elapsed time data Dj (step84), and ends the processing of this subroutine. As described above, in this embodiment, the shooting aim S is moved so as to wobble by combining the first wobbling movement and the second wobbling movement. Thus, at step84, elapsed times T1and T2corresponding to the first and second wobbling movements, respectively, are initialized (e.g., both are initialized to be 0). Here, when the wobbling time Tmax1for the first wobbling movement and the wobbling time Tmax2for the second wobbling movement are always set so as to be the same, only one elapsed time T may be handled at step84and in processing described below.

Referring back toFIG. 15, after the wobbling process initial setting at step49, the CPU10performs a wobbling process (step50) and proceeds the processing to the next step52. With reference toFIG. 18, the following will describe the wobbling process performed at step50.