U.S. Pat. No. 9,539,511

COMPUTER-READABLE STORAGE MEDIUM, INFORMATION PROCESSING SYSTEM, AND INFORMATION PROCESSING METHOD FOR OPERATING OBJECTS IN A VIRTUAL WORLD BASED ON ORIENTATION DATA RELATED TO AN ORIENTATION OF A DEVICE

Assignee: Nintendo Co Ltd

Issue Date: October 27, 2011

Illustrative Figure

Abstract

It is determined, based on attitude data, whether or not a direction of a predetermined axis set in a portable display device is within a first range. When the result of the determination is that the direction of the predetermined axis is within the first range, an action of a first object arranged in a virtual world is controlled based on the direction of the predetermined axis. When it is determined that the direction of the predetermined axis is outside the first range, at least an action of a second object arranged in the virtual world is controlled based on the direction of the predetermined axis. Then, a first image showing the virtual world including at least the first object and the second object is displayed on the portable display device.
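
The determination described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the claimed implementation: the function names, the representation of the first range as a maximum angle from a reference direction, and the 30-degree value used in the usage note are all hypothetical.

```python
import math

def angle_between(a, b):
    """Angle in radians between two non-zero 3D vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / (norm_a * norm_b))))

def select_controlled_object(axis_dir, reference_dir, first_range_rad):
    """Pick the object whose action the axis direction controls.

    Assumption: the "first range" is modeled as a cone of half-angle
    first_range_rad around reference_dir.
    """
    if angle_between(axis_dir, reference_dir) <= first_range_rad:
        return "first_object"
    return "second_object"
```

For example, with an assumed first range of 30 degrees, an axis pointing along the reference direction selects the first object, while an axis perpendicular to it selects the second object.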

Description

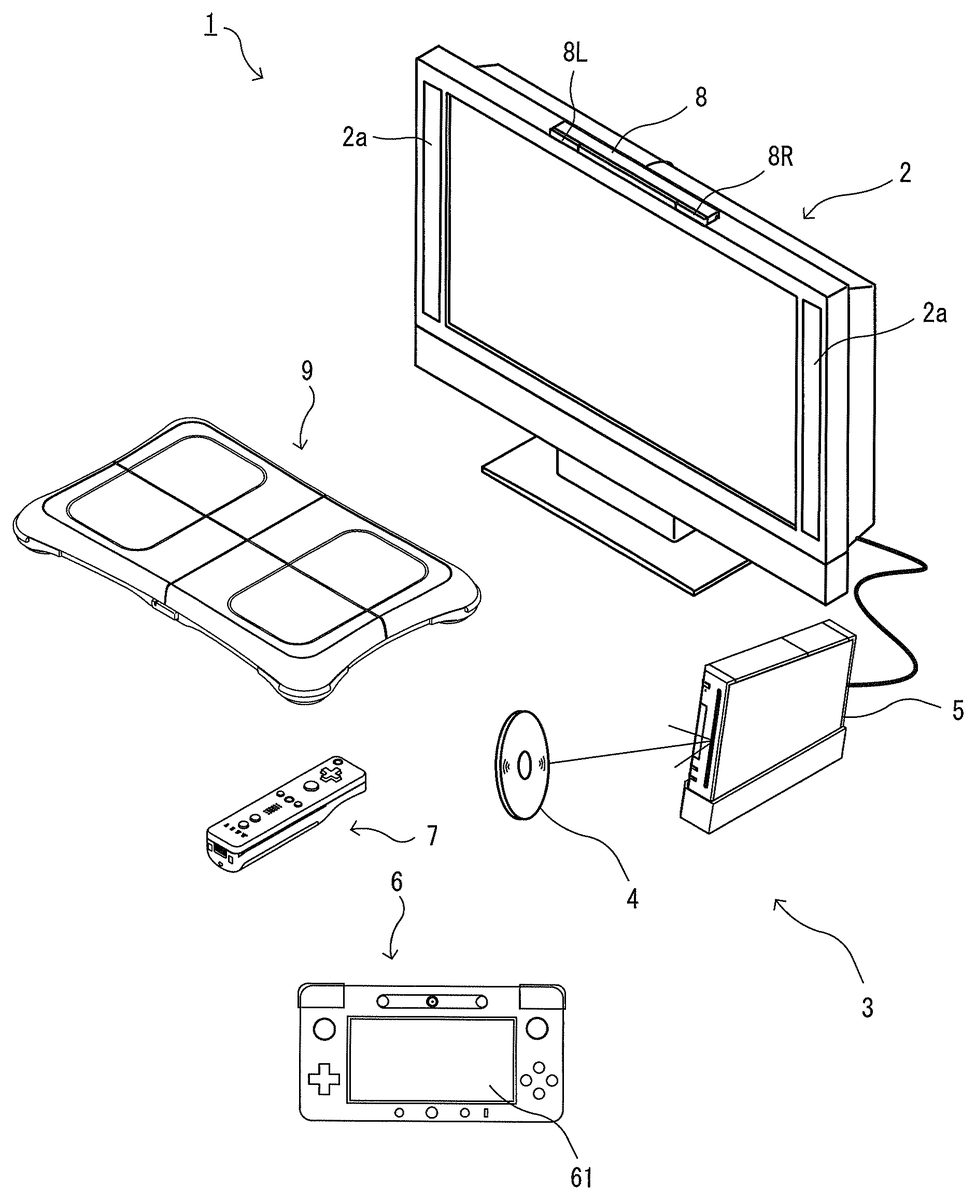


DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

First Embodiment

With reference to FIG. 1, an information processing apparatus for executing an information processing program according to a first embodiment and an information processing system including the information processing apparatus will be described. Hereinafter, in order to provide a specific description, a stationary game apparatus body 5 is used as an example of the information processing apparatus, and a game system including the game apparatus body 5 will be described. FIG. 1 is an external view illustrating an example of the game system 1 including the stationary game apparatus body 5. FIG. 2 is a block diagram illustrating an example of the game apparatus body 5. Hereinafter, the game system 1 will be described.

As shown in FIG. 1, the game system 1 includes a household television receiver (hereinafter, referred to as a monitor) 2 which is an example of display means, and the stationary game apparatus 3 connected to the monitor 2 via a connection cord. The monitor 2 includes loudspeakers 2a for outputting, in the form of sound, a sound signal outputted from the game apparatus 3. Further, the game apparatus 3 includes: an optical disc 4 having stored therein a program (e.g., a game program); the game apparatus body 5 having a computer for executing the program stored in the optical disc 4 to display a game screen on the monitor 2; a terminal device 6; a controller 7 for providing the game apparatus body 5 with operation information required for operating, for example, objects displayed on the display screen; and a board type controller 9. The game system 1 executes a game process on the game apparatus body 5 in accordance with a game operation using at least one of the terminal device 6, the controller 7, and the board type controller 9, and displays a game image obtained by the game process on the monitor 2 and/or the terminal device 6. The game apparatus body 5 is wirelessly connected to the terminal device 6, the controller 7, and the board type controller 9 so as to enable wireless communication therebetween. For example, the wireless communication is executed according to the Bluetooth (registered trademark) standard or the IEEE 802.11n standard. However, the wireless communication may be executed in accordance with other standards such as standards for infrared communication.

The optical disc 4, typifying an information storage medium used for the game apparatus body 5 in an exchangeable manner, is detachably inserted in the game apparatus body 5. The optical disc 4 has stored therein an information processing program (typically, a game program) to be executed by the game apparatus body 5. The game apparatus body 5 has, on a front surface thereof, an insertion opening for the optical disc 4. The game apparatus body 5 reads and executes the information processing program stored in the optical disc 4 inserted into the insertion opening to execute the game process.

The monitor 2 is connected to the game apparatus body 5 via a connection cord. The monitor 2 displays a game image obtained by the game process executed by the game apparatus body 5. The monitor 2 includes the loudspeakers 2a. The loudspeakers 2a each output a game sound obtained as a result of the game process. In another embodiment, the game apparatus body 5 and a stationary display unit may be integrated with each other. The communication between the game apparatus body 5 and the monitor 2 may be wireless communication.

The game apparatus body 5 has mounted thereto a flash memory 17 (see FIG. 2) which functions as a backup memory for fixedly storing data such as saved data. The game apparatus body 5 executes the game program or the like stored in the optical disc 4, and displays a result thereof as a game image on the monitor 2 and/or the terminal device 6. The game program or the like to be executed may be previously stored in the flash memory 17 as well as in the optical disc 4. Further, the game apparatus body 5 may reproduce a state of a game played in the past, by using the saved data stored in the flash memory 17, and display an image of the game state on the monitor 2 and/or the terminal device 6. A user of the game apparatus 3 can enjoy the game progress by operating at least one of the terminal device 6, the controller 7, and the board type controller 9 while viewing the game image displayed on the monitor 2 and/or the terminal device 6.

The controller 7 and the board type controller 9 each wirelessly transmit transmission data such as operation information, by using, for example, the Bluetooth technology, to the game apparatus body 5 having a controller communication module 19. The controller 7 is operation means for performing, for example, selection of options displayed on the display screen of the monitor 2. The controller 7 includes a housing which is small enough to be held by one hand, and a plurality of operation buttons (including a cross key and the like) which are exposed at the surface of the housing. In addition, as is described later, the controller 7 includes an imaging information calculation section for taking an image viewed from the controller 7. As exemplary imaging targets of the imaging information calculation section, two LED modules (hereinafter, referred to as "markers") 8L and 8R are provided in the vicinity of the display screen of the monitor 2 (above the screen in FIG. 1). Although details will be described later, a user (player) is allowed to perform a game operation while moving the controller 7, and the game apparatus body 5 uses a marker 8 to calculate the movement, position, attitude and the like of the controller 7. The marker 8 has two markers 8L and 8R at both ends thereof. Specifically, the marker 8L (as well as the marker 8R) includes one or more infrared LEDs (Light Emitting Diodes), and emits infrared light forward from the monitor 2. The marker 8 is connected to the game apparatus body 5, so that the game apparatus body 5 can control the infrared LEDs included in the marker 8 to be turned on or off. The marker 8 is a portable unit, so that the user is allowed to place the marker 8 in any position. Although FIG. 1 shows a case where the marker 8 is placed on the monitor 2, the location and direction of the marker 8 may be optionally selected.
Further, the controller 7 is capable of receiving, at a communication section 75, transmission data wirelessly transmitted from the controller communication module 19 of the game apparatus body 5, to generate a sound or vibration based on the transmission data.

In another embodiment, the controller 7 and/or the board type controller 9 may be connected to the game apparatus body 5 via a cable. Further, in the exemplary embodiment, the game system 1 includes a controller 7 and a board type controller 9. However, the game apparatus body 5 is communicable with a plurality of controllers 7 and a plurality of board type controllers 9. Therefore, a plurality of players can play a game by using a predetermined number of controllers 7 and board type controllers 9 simultaneously.

The controller 7 includes a housing which is formed by, for example, plastic molding, and has a plurality of operation sections in the housing. The controller 7 transmits, to the game apparatus body 5, operation data representing an input state to any of the operation sections (i.e., whether or not any of the operation buttons is pressed).

The controller 7 includes an imaging information calculation section for analyzing image data of an image taken by image pickup means to identify an area having a high luminance in the image, and calculates the position of the center of gravity, the size, and the like of the area. For example, the imaging information calculation section includes image pickup means fixed to the housing of the controller 7. A marker emitting infrared light, such as a marker section 65 of the terminal device 6 and/or the marker 8, is a target to be imaged by the image pickup means. The imaging information calculation section calculates the position of the imaging target in the image taken by the image pickup means, and transmits, to the game apparatus body 5, marker coordinate data representing the calculated position. Since the marker coordinate data varies depending on the direction (angle of tilt) or the position of the controller 7, the game apparatus body 5 can calculate the direction and the position of the controller 7 by using the marker coordinate data.
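
The high-luminance analysis described above can be illustrated with a short sketch. It is a simplified stand-in and not the section's actual processing: the function name, the fixed luminance threshold, and the treatment of all bright pixels as a single area (rather than separating the two markers) are assumptions made for illustration.

```python
def bright_area_centroid(image, threshold):
    """Find the center of gravity and size of the bright area.

    image is a list of rows of luminance values. Every pixel at or above
    threshold is counted as part of one area (an assumption; a real
    implementation would separate distinct markers).
    Returns ((cx, cy), size), or None when no pixel is bright enough.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(image):
        for x, luminance in enumerate(row):
            if luminance >= threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count), count
```

The returned coordinates play the role of the marker coordinate data: they shift within the image as the controller is tilted or moved.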

The controller 7 includes an acceleration sensor and/or a gyro sensor. The acceleration sensor detects an acceleration (including gravitational acceleration) of the controller 7, and transmits acceleration data representing the detected acceleration to the game apparatus body 5. Since the acceleration detected by the acceleration sensor varies depending on the direction (angle of tilt) and the movement of the controller 7, the game apparatus body 5 can calculate the direction and the movement of the controller 7 by using the obtained acceleration data. The gyro sensor detects angular velocities around three axes which are set in the controller 7, and transmits angular velocity data representing the detected angular velocities to the game apparatus body 5. Since the angular velocities detected by the gyro sensor vary depending on the direction (angle of tilt) and the movement of the controller 7, the game apparatus body 5 can calculate the direction and the movement of the controller 7 by using the obtained angular velocity data. In this way, the user is allowed to perform a game operation by pressing the operation sections provided on the controller 7, or by moving the controller 7 to change the position and the attitude (angle of tilt) of the controller 7.
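
As a rough illustration of how tilt might be derived from such sensor data, the sketch below estimates pitch and roll from a static acceleration reading and accumulates angular velocities over a time step. The axis conventions, function names, and the simple per-axis integration (reasonable only for small rotations) are assumptions and are not taken from the embodiment.

```python
import math

def tilt_from_gravity(ax, ay, az):
    """Estimate (pitch, roll) in radians from a static acceleration reading.

    Assumes the only acceleration present is gravity and that the z axis
    reads positive when the device lies flat (hypothetical convention).
    """
    pitch = math.atan2(-ax, math.sqrt(ay * ay + az * az))
    roll = math.atan2(ay, az)
    return pitch, roll

def integrate_gyro(angles, rates, dt):
    """Advance three angles by angular velocities (rad/s) over dt seconds.

    Plain per-axis accumulation: a small-angle simplification, not a
    full attitude integration.
    """
    return tuple(angle + rate * dt for angle, rate in zip(angles, rates))
```

In practice the two estimates are often combined, since the accelerometer is noisy during movement and the integrated gyro output drifts over time.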

The controller 7 is provided with a loudspeaker and a vibrator. The controller 7 processes sound data transmitted from the game apparatus body 5, and causes the loudspeaker to output a sound based on the sound data. Further, the controller 7 processes vibration data transmitted from the game apparatus body 5, and causes the vibrator to vibrate in accordance with the vibration data. In the exemplary embodiment described later, a user is allowed to play a game without using the controller 7. The board type controller 9 will be described in detail later.

The terminal device 6 is a portable device that is small enough to be held by a user, and the user is allowed to move the terminal device 6 with hands, or place the terminal device 6 at any location. Although a specific structure of the terminal device 6 will be described later, the terminal device 6 includes an LCD (Liquid Crystal Display) 61 as display means, and input means (a touch panel 62, a gyro sensor 604, and the like described later). The terminal device 6 and the game apparatus body 5 (a terminal communication module 28 (see FIG. 2)) are communicable with each other wirelessly or via a cable. The terminal device 6 receives, from the game apparatus body 5, data of an image (e.g., a game image) generated in the game apparatus body 5, and displays the image represented by the data on the LCD 61. Although in the exemplary embodiment the LCD 61 is used as a display device, the terminal device 6 may include any other display device, such as a display device utilizing EL (Electro Luminescence), for example. Further, the terminal device 6 transmits, to the game apparatus body 5 having the terminal communication module 28, operation data representing the content of an operation performed on the terminal device 6.

Next, with reference to FIG. 2, an internal structure of the game apparatus body 5 will be described. FIG. 2 is a block diagram illustrating an example of an internal structure of the game apparatus body 5. The game apparatus body 5 includes a CPU (Central Processing Unit) 10, a system LSI (Large Scale Integration) 11, an external main memory 12, a ROM/RTC (Read Only Memory/Real Time Clock) 13, a disc drive 14, an AV-IC (Audio Video-Integrated Circuit) 15 and the like.

The CPU 10, serving as a game processor, executes a program stored in the optical disc 4 to perform a process. The CPU 10 is connected to the system LSI 11. In addition to the CPU 10, the external main memory 12, the ROM/RTC 13, the disc drive 14, and the AV-IC 15 are connected to the system LSI 11. The system LSI 11 performs processes such as control of data transmission between the respective components connected thereto, generation of an image to be displayed, and acquisition of data from an external apparatus. An internal structure of the system LSI 11 will be described later. The external main memory 12, which is a volatile memory, stores programs loaded from the optical disc 4 or the flash memory 17, and stores various data. The external main memory 12 is used as a work area and a buffer area for the CPU 10. The ROM/RTC 13 includes a ROM (so-called boot ROM) incorporating a program for booting the game apparatus body 5, and a clock circuit (RTC) for counting time. The disc drive 14 reads, from the optical disc 4, program data, texture data and the like, and writes the read data into an internal main memory 35 described below or the external main memory 12.

The system LSI 11 includes an input/output processor (I/O processor) 31, a GPU (Graphics Processor Unit) 32, a DSP (Digital Signal Processor) 33, a VRAM (Video RAM) 34, and the internal main memory 35. These components 31 to 35 are connected to each other via an internal bus (not shown).

The GPU 32, which is a part of rendering means, generates an image in accordance with a graphics command (draw command) supplied from the CPU 10. The VRAM 34 stores data (such as polygon data and texture data) required for the GPU 32 to execute the graphics command. When an image is generated, the GPU 32 generates image data by using the data stored in the VRAM 34. In the exemplary embodiment, the game apparatus body 5 may generate both a game image to be displayed on the monitor 2 and a game image to be displayed on the terminal device 6. Hereinafter, the game image to be displayed on the monitor 2 may be referred to as a "monitor game image", and the game image to be displayed on the terminal device 6 may be referred to as a "terminal game image".

The DSP 33, serving as an audio processor, generates sound data by using sound data and sound waveform (tone quality) data stored in the internal main memory 35 and the external main memory 12. In the exemplary embodiment, similarly to the game images, both a game sound to be output from the loudspeakers 2a of the monitor 2 and a game sound to be output from the loudspeakers of the terminal device 6 may be generated. Hereinafter, the game sound to be output from the monitor 2 may be referred to as a "monitor game sound", and the game sound to be output from the terminal device 6 may be referred to as a "terminal game sound".

Among the image data and sound data generated by the game apparatus body 5, the image data and sound data to be output to the monitor 2 are read by the AV-IC 15. The AV-IC 15 outputs the read image data to the monitor 2 via an AV connector 16, and outputs the read sound data to the loudspeakers 2a included in the monitor 2. Thereby, an image is displayed on the monitor 2, and a sound is output from the loudspeakers 2a.

Further, among the image data and sound data generated by the game apparatus body 5, the image data and sound data to be output to the terminal device 6 are transmitted to the terminal device 6 by the I/O processor 31 or the like. Data transmission to the terminal device 6 by the I/O processor 31 or the like will be described later.

The I/O processor 31 executes data reception and transmission with the components connected thereto, and download of data from an external apparatus. The I/O processor 31 is connected to the flash memory 17, the network communication module 18, the controller communication module 19, an extension connector 20, a memory card connector 21, and a codec LSI 27. An antenna 23 is connected to the controller communication module 19. The codec LSI 27 is connected to the terminal communication module 28, and an antenna 29 is connected to the terminal communication module 28.

The game apparatus body 5 is connected to a network such as the Internet so as to communicate with external information processing apparatuses (for example, other game apparatuses or various servers). That is, the I/O processor 31 is connected to a network via the network communication module 18 and the antenna 22 so as to communicate with external information processing apparatuses connected to the network. The I/O processor 31 accesses the flash memory 17 at regular intervals to detect whether there is data to be transmitted to the network. When data to be transmitted is detected, the data is transmitted to the network via the network communication module 18 and the antenna 22. Further, the I/O processor 31 receives, via the network, the antenna 22 and the network communication module 18, data transmitted from the external information processing apparatuses or data downloaded from a download server, and stores the received data in the flash memory 17. The CPU 10 executes a program, and reads the data stored in the flash memory 17 to use the data for execution of the program. The flash memory 17 may store not only the data transmitted and received between the game apparatus body 5 and the external information processing apparatuses, but also saved data (result data or progress data of the process) of the game played with the game apparatus body 5. Further, the flash memory 17 may store programs such as a game program.

The game apparatus body 5 can receive operation data from the controller 7 and/or the board type controller 9. That is, the I/O processor 31 receives, via the antenna 23 and the controller communication module 19, operation data or the like transmitted from the controller 7 and/or the board type controller 9, and stores (temporarily) the data in a buffer region of the internal main memory 35 or the external main memory 12. Similarly to the external main memory 12, the internal main memory 35 may store a program loaded from the optical disc 4 or a program loaded from the flash memory 17, and various data. The internal main memory 35 may be used as a work region or buffer region of the CPU 10.

The game apparatus body 5 is capable of transmitting/receiving image data, sound data and the like to/from the terminal device 6. When transmitting a game image (terminal game image) to the terminal device 6, the I/O processor 31 outputs data of a game image generated by the GPU 32 to the codec LSI 27. The codec LSI 27 performs a predetermined compression process on the image data supplied from the I/O processor 31. The terminal communication module 28 performs wireless communication with the terminal device 6. Accordingly, the image data compressed by the codec LSI 27 is transmitted by the terminal communication module 28 to the terminal device 6 via the antenna 29. In the exemplary embodiment, the codec LSI 27 compresses the image data by using a highly efficient compression technique, for example, the H.264 standard. The codec LSI 27 may adopt other compression techniques. When the communication rate is sufficiently high, uncompressed image data may be transmitted. The terminal communication module 28 is, for example, a Wi-Fi certified communication module. The terminal communication module 28 may perform wireless communication with the terminal device 6 at a high speed by using, for example, the technique of MIMO (Multiple Input Multiple Output) adopted in the IEEE 802.11n standard, or may use other communication techniques.

The game apparatus body 5 transmits, to the terminal device 6, sound data as well as the image data. That is, the I/O processor 31 outputs sound data generated by the DSP 33 to the terminal communication module 28 via the codec LSI 27. The codec LSI 27 performs a compression process on the sound data in a similar manner to that for the image data. Any compression technique may be adopted for the sound data. In another embodiment, uncompressed sound data may be transmitted. The terminal communication module 28 transmits the compressed image data and sound data to the terminal device 6 via the antenna 29.

The game apparatus body 5 transmits, in addition to the image data and sound data, various control data to the terminal device 6, according to need. The control data represent control instructions for the components included in the terminal device 6, such as an instruction to control on/off of a marker section (a marker section 65 shown in FIG. 5), and an instruction to control image taking of a camera (a camera 66 shown in FIG. 5). The I/O processor 31 transmits the control data to the terminal device 6 in response to an instruction from the CPU 10. In the exemplary embodiment, the codec LSI 27 does not perform a data compression process on the control data. In another embodiment, however, the codec LSI 27 may perform a compression process on the control data. The above-described data transmitted from the game apparatus body 5 to the terminal device 6 may be encrypted according to need, or may not be encrypted.

The game apparatus body 5 can receive various data from the terminal device 6. Although details will be described later, in the exemplary embodiment, the terminal device 6 transmits operation data, image data, and sound data. The respective data transmitted from the terminal device 6 are received by the terminal communication module 28 via the antenna 29. The image data and sound data transmitted from the terminal device 6 have been subjected to a similar compression process to that for the image data and sound data transmitted from the game apparatus body 5 to the terminal device 6. Accordingly, these image data and sound data are transmitted from the terminal communication module 28 to the codec LSI 27, and subjected to a decompression process by the codec LSI 27. The decompressed data are output to the I/O processor 31. On the other hand, since the operation data transmitted from the terminal device 6 is smaller in amount than the image data and sound data, the operation data need not be compressed. The operation data may be encrypted according to need, or may not be encrypted. Accordingly, the operation data, which has been received by the terminal communication module 28, is output to the I/O processor 31 via the codec LSI 27. The I/O processor 31 stores (temporarily) the data received from the terminal device 6 in the buffer region of the internal main memory 35 or the external main memory 12.
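
The handling of the different data types on this path can be sketched as below. This is only an illustration of the routing rule (image and sound data are compressed and decompressed, operation data passes through unchanged). The function names and type labels are hypothetical, and `zlib` is used purely as a stand-in so that the example runs; it is not the H.264 technique mentioned for the codec LSI 27.

```python
import zlib

# Data types that go through the compression process in this sketch.
COMPRESSED_KINDS = {"image", "sound"}

def pack(kind, payload):
    """Sender side: compress image/sound data, pass other data through."""
    if kind in COMPRESSED_KINDS:
        return kind, zlib.compress(payload)  # stand-in codec, not H.264
    return kind, payload

def unpack(kind, data):
    """Receiver side: decompress image/sound data, pass other data through."""
    if kind in COMPRESSED_KINDS:
        return zlib.decompress(data)
    return data
```

Keeping the small operation data uncompressed avoids codec latency on the input path, which matches the reasoning given above that its amount is small compared with image and sound data.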

The game apparatus body 5 is connectable to other devices and external storage media. That is, an extension connector 20 and a memory card connector 21 are connected to the I/O processor 31. The extension connector 20 is an interface connector as typified by USB and SCSI, and is capable of performing communication with the network, instead of the network communication module 18, by connecting thereto a medium such as an external storage medium, a peripheral device such as another controller, or a wired communication connector. The memory card connector 21 is a connector for connecting thereto an external storage medium such as a memory card. For example, the I/O processor 31 accesses the external storage medium via the extension connector 20 or the memory card connector 21 to save or read data.

The game apparatus body 5 includes (on the front main surface thereof, for example) a power button 24, a reset button 25, an insertion slot in which the optical disc 4 is inserted, an eject button 26 for ejecting the optical disc 4 from the insertion slot of the game apparatus body 5, and the like. The power button 24 and the reset button 25 are connected to the system LSI 11. When the power button 24 is turned on, the respective components of the game apparatus body 5 are supplied with power. When the reset button 25 is pressed, the system LSI 11 re-executes the boot program of the game apparatus body 5. The eject button 26 is connected to the disc drive 14. When the eject button 26 is pressed, the optical disc 4 is ejected from the disc drive 14.

In another embodiment, some of the components of the game apparatus body 5 may be constituted as an extension device separated from the game apparatus body 5. At this time, the extension device may be connected to the game apparatus body 5 via the extension connector 20. Specifically, the extension device may include, for example, the codec LSI 27, the terminal communication module 28, and the antenna 29, and may be detachably connected to the extension connector 20. Thus, by connecting the extension device to a game apparatus body which does not have the above-mentioned components, that game apparatus body can be made communicable with the terminal device 6.

Next, a structure of the terminal device 6 will be described with reference to FIGS. 3 to 5. FIG. 3 is a diagram illustrating an example of an external structure of the terminal device 6. More specifically, (a) of FIG. 3 is a front view of the terminal device 6, (b) of FIG. 3 is a top view, (c) of FIG. 3 is a right side view, and (d) of FIG. 3 is a bottom view. FIG. 4 shows an example of a state in which a user holds the terminal device 6 with both hands.

As shown in FIG. 3, the terminal device 6 includes a housing 60 which generally has a horizontally long plate-like rectangular shape. The housing 60 is small enough to be held by the user. Therefore, the user is allowed to move the terminal device 6 with hands, and change the location of the terminal device 6.

The terminal device 6 includes an LCD 61 on a front surface of the housing 60. The LCD 61 is provided near the center of the front surface of the housing 60. Therefore, as shown in FIG. 4, the user, holding the housing 60 at portions to the right and left of the LCD 61, is allowed to move the terminal device 6 while viewing a screen of the LCD 61. FIG. 4 shows an example in which the user holds the terminal device 6 horizontally (i.e., with the longer sides of the terminal device 6 being oriented horizontally) by holding the housing 60 at portions to the right and left of the LCD 61. However, the user may hold the terminal device 6 vertically (i.e., with the longer sides of the terminal device 6 being oriented vertically).

As shown in (a) of FIG. 3, the terminal device 6 includes, as operation means, a touch panel 62 on the screen of the LCD 61. In the exemplary embodiment, the touch panel 62 is a resistive film type touch panel. However, the touch panel 62 is not limited thereto, and a touch panel of any type, such as electrostatic capacitance type, may be used. The touch panel 62 may be of single touch type or multiple touch type. In the exemplary embodiment, the touch panel 62 has the same resolution (detection accuracy) as that of the LCD 61. However, the resolution of the touch panel 62 and the resolution of the LCD 61 need not be the same. Although an input onto the touch panel 62 is usually performed by using a touch pen, a finger of the user may also be used for performing an input onto the touch panel 62. The housing 60 may have an opening for accommodating the touch pen used for performing an operation to the touch panel 62. Since the terminal device 6 has the touch panel 62, the user is allowed to operate the touch panel 62 while moving the terminal device 6. That is, the user is allowed to directly (by using the touch panel 62) perform an input onto the screen of the LCD 61 while moving the LCD 61.

As shown in FIG. 3, the terminal device 6 has, as operation means, two analog sticks 63A and 63B, and a plurality of operation buttons 64A to 64L. The analog sticks 63A and 63B are each a device for designating a direction. The analog sticks 63A and 63B are each configured such that a stick part thereof to be operated by a finger of the user is slidable or tiltable in any direction (at any angle in any direction such as the upward, the downward, the rightward, the leftward, or the diagonal direction) with respect to the front surface of the housing 60. The left analog stick 63A is provided to the left of the screen of the LCD 61, and the right analog stick 63B is provided to the right of the screen of the LCD 61. Therefore, the user is allowed to perform an input for designating a direction by using the analog stick 63A or 63B with either the left or right hand. Further, as shown in FIG. 4, the analog sticks 63A and 63B are positioned so as to be operated by the user holding the right and left portions of the terminal device 6. Therefore, the user is allowed to easily operate the analog sticks 63A and 63B when the user holds and moves the terminal device 6.

The operation buttons 64A to 64L are each operation means for performing a predetermined input. As described below, the operation buttons 64A to 64L are positioned so as to be operated by the user holding the right and left portions of the terminal device 6 (see FIG. 4). Accordingly, the user is allowed to easily operate the operation means when the user holds and moves the terminal device 6.

As shown in (a) of FIG. 3, among the operation buttons 64A to 64L, the cross button (direction input button) 64A and the operation buttons 64B to 64H are provided on the front surface of the housing 60. The operation buttons 64A to 64H are positioned so as to be operated by a thumb of the user (see FIG. 4).

The cross button64A is provided to the left of the LCD61and beneath the left analog stick63A. That is, the cross button64A is positioned so as to be operated by the left hand of the user. The cross button64A is cross-shaped, and is capable of indicating an upward, a downward, a leftward, or a rightward direction. The operation buttons64B to64D are provided beneath the LCD61. The three operation buttons64B to64D are positioned so as to be operated by the right and left hands of the user. The four operation buttons64E to64H are provided to the right of the LCD61and beneath the right analog stick63B. That is, the four operation buttons64E to64H are positioned so as to be operated by the right hand of the user. Further, the four operation buttons64E,64H,64F, and64G are positioned upward, downward, leftward, and rightward, respectively, with respect to a center position of the four operation buttons. Accordingly, the terminal device6may cause the four operation buttons64E to64H to function as buttons which allow the user to designate an upward, a downward, a leftward, or a rightward direction.
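The directional use of the four operation buttons64E to64H described above can be sketched as follows. This is a minimal illustration only: the string identifiers, the `BUTTON_DIRECTIONS` table, and the `designated_direction` helper are hypothetical names that do not appear in the embodiment, and the sign convention (positive y meaning upward) is an assumption.

```python
# Hypothetical mapping of the four right-hand buttons to unit direction
# vectors, following the layout described above:
# 64E up, 64H down, 64F left, 64G right. Positive y is assumed to mean "up".
BUTTON_DIRECTIONS = {
    "64E": (0, 1),
    "64H": (0, -1),
    "64F": (-1, 0),
    "64G": (1, 0),
}


def designated_direction(pressed):
    """Return the (x, y) direction designated by the set of pressed buttons."""
    vectors = [BUTTON_DIRECTIONS[b] for b in pressed if b in BUTTON_DIRECTIONS]
    dx = sum(v[0] for v in vectors)
    dy = sum(v[1] for v in vectors)
    return dx, dy
```

In this sketch, opposite buttons pressed together cancel out, and buttons outside the group of four are ignored.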

As shown in (a), (b), and (c) ofFIG. 3, a first L button64I and a first R button64J are provided on diagonal upper portions (an upper left portion and an upper right portion) of the housing60. Specifically, the first L button64I is provided on the left end of the upper side surface of the plate-shaped housing60so as to protrude from the upper and left side surfaces. The first R button64J is provided on the right end of the upper side surface of the housing60so as to protrude from the upper and right side surfaces. In this way, the first L button64I is positioned so as to be operated by the index finger of the left hand of the user, and the first R button64J is positioned so as to be operated by the index finger of the right hand of the user (seeFIG. 4).

As shown in (b) and (c) ofFIG. 3, leg parts68A and68B are provided so as to protrude from a rear surface (i.e., a surface reverse of the front surface on which the LCD61is provided) of the plate-shaped housing60, and a second L button64K and a second R button64L are provided so as to protrude from the leg parts68A and68B, respectively. Specifically, the second L button64K is provided at a slightly upper position on the left side (the left side as viewed from the front surface side) of the rear surface of the housing60, and the second R button64L is provided at a slightly upper position on the right side (the right side as viewed from the front-surface side) of the rear surface of the housing60. In other words, the second L button64K is provided at a position substantially opposite to the left analog stick63A provided on the front surface, and the second R button64L is provided at a position substantially opposite to the right analog stick63B provided on the front surface. The second L button64K is positioned so as to be operated by the middle finger of the left hand of the user, and the second R button64L is positioned so as to be operated by the middle finger of the right hand of the user (seeFIG. 4). Further, as shown in (c) ofFIG. 3, the leg parts68A and68B each have a surface facing obliquely upward, and the second L button64K and the second R button64L are provided on the oblique surfaces of the leg parts68A and68B, respectively. Thus, the second L button64K and the second R button64L have button surfaces facing obliquely upward. Since it is supposed that the middle finger of the user moves vertically when the user holds the terminal device6, the upward facing button surfaces allow the user to easily press the second L button64K and the second R button64L. Further, the leg parts68A and68B provided on the rear surface of the housing60allow the user to easily hold the housing60. Moreover, the operation buttons provided on the leg parts68A and68B allow the user to easily perform operation while holding the housing60.

In the terminal device6shown inFIG. 3, the second L button64K and the second R button64L are provided on the rear surface of the housing60. Therefore, if the terminal device6is placed with the screen of the LCD61(the front surface of the housing60) facing upward, the screen of the LCD61may not be perfectly horizontal. Accordingly, in another embodiment, three or more leg parts may be provided on the rear surface of the housing60. In this case, if the terminal device6is placed on a floor with the screen of the LCD61facing upward, the three or more leg parts contact the floor. Thus, the terminal device6can be placed with the screen of the LCD61being horizontal. Such a horizontal placement of the terminal device6may be achieved by providing detachable leg parts on the rear surface of the housing60.

The respective operation buttons64A to64L are assigned functions, according to need, in accordance with a game program. For example, the cross button64A may be used for direction designation operation, selection operation, and the like, and the operation buttons64E to64H may be used for determination operation, cancellation operation, and the like.

The terminal device6includes a power button (not shown) for turning on/off the power of the terminal device6. The terminal device6may include an operation button for turning on/off screen display of the LCD61, an operation button for performing connection setting (pairing) with the game apparatus body5, and an operation button for adjusting the volume of loudspeakers (loudspeakers607shown inFIG. 5).

As shown in (a) ofFIG. 3, the terminal device6includes a marker section (a marker section65shown inFIG. 5) including a marker65A and a marker65B, on the front surface of the housing60. For example, the marker section65is provided above the LCD61. The markers65A and65B are each constituted by one or more infrared LEDs, like the markers8L and8R of the marker8. The marker section65is used, like the marker8, for causing the game apparatus body5to calculate a movement or the like of the controller7with respect to the marker section65. The game apparatus body5is capable of controlling the infrared LEDs of the marker section65to be on or off.

The terminal device6includes a camera66as imaging means. The camera66includes an image pickup element (e.g., a CCD image sensor or a CMOS image sensor) having a predetermined resolution, and a lens. For example, the camera66is provided on the front surface of the housing60. Accordingly, the camera66is capable of taking an image of the face of the user holding the terminal device6. For example, the camera66is capable of taking an image of the user playing a game while viewing the LCD61.

The terminal device6has a microphone (a microphone609shown inFIG. 5) as sound input means. A microphone hole60bis provided in the front surface of the housing60. The microphone609is embedded in the housing60at a position inside the microphone hole60b. The microphone609detects a sound, such as the user's voice, around the terminal device6.

The terminal device6has loudspeakers (loudspeakers607shown inFIG. 5) as sound output means. As shown in (d) ofFIG. 3, speaker holes60aare provided in the lower side surface of the housing60. A sound is output through the speaker holes60afrom the loudspeakers607. In the exemplary embodiment, the terminal device6has two loudspeakers, and the speaker holes60aare provided at positions corresponding to a left loudspeaker and a right loudspeaker, respectively.

The terminal device6includes an extension connector67for connecting another device to the terminal device6. In the exemplary embodiment, as shown in (d) ofFIG. 3, the extension connector67is provided in the lower side surface of the housing60. Any device may be connected to the extension connector67. For example, a controller (a gun-shaped controller or the like) used for a specific game or an input device such as a keyboard may be connected to the extension connector67. If another device need not be connected, the extension connector67need not be provided.

In the terminal device6shown inFIG. 3, the shapes of the operation buttons and the housing60, the number of the respective components, and the positions in which the components are provided are merely examples. The shapes, numbers, and positions may be different from those described above.

Next, an internal structure of the terminal device6will be described with reference toFIG. 5.FIG. 5is a block diagram illustrating an example of an internal structure of the terminal device6. As shown inFIG. 5, the terminal device6includes, in addition to the components shown inFIG. 3, a touch panel controller601, a magnetic sensor602, an acceleration sensor603, a gyro sensor604, a user interface controller (UI controller)605, a codec LSI606, loudspeakers607, a sound IC608, a microphone609, a wireless module610, an antenna611, an infrared communication module612, a flash memory613, a power supply IC614, a battery615, and a vibrator619. These electronic components are mounted on an electronic circuit board and accommodated in the housing60.

The UI controller605is a circuit for controlling data input to various input/output sections and data output from various input/output sections. The UI controller605is connected to the touch panel controller601, the analog stick63(the analog sticks63A and63B), the operation button64(the operation buttons64A to64L), the marker section65, the magnetic sensor602, the acceleration sensor603, the gyro sensor604, and the vibrator619. Further, the UI controller605is connected to the codec LSI606and the extension connector67. The power supply IC614is connected to the UI controller605, so that power is supplied to the respective components through the UI controller605. The internal battery615is connected to the power supply IC614, so that power is supplied from the battery615. Further, a battery charger616or a cable, which is supplied with power from an external power supply, may be connected to the power supply IC614via a connector or the like. In this case, the terminal device6can be supplied with power and charged from the external power supply by using the battery charger616or the cable. Charging of the terminal device6may be performed by setting the terminal device6on a cradle (not shown) having a charging function.

The touch panel controller601is a circuit which is connected to the touch panel62and controls the touch panel62. The touch panel controller601generates a predetermined form of touch position data, based on a signal from the touch panel62, and outputs the touch position data to the UI controller605. The touch position data represents coordinates of a position at which an input is performed on an input surface of the touch panel62. The touch panel controller601reads a signal from the touch panel62and generates touch position data every predetermined period of time. Further, various control instructions on the touch panel62are output from the UI controller605to the touch panel controller601.

The analog stick63outputs, to the UI controller605, stick data representing a direction in which the stick part operated by a finger of the user slides (or tilts), and an amount of the sliding (tilting). The operation button64outputs, to the UI controller605, operation button data representing an input state of each of the operation buttons64A to64L (whether or not the operation button is pressed).
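As a rough illustration of stick data that represents a direction and an amount, the following sketch converts a two-axis displacement into an angle and a magnitude. The function name `stick_data`, the normalized input range of -1 to 1, and the `dead_zone` threshold are assumptions made for illustration; the embodiment does not specify the data format.

```python
import math


def stick_data(x, y, dead_zone=0.1):
    """Return (direction_degrees, amount) for a stick displacement.

    x and y are assumed to be normalized to the range -1..1.
    dead_zone is a hypothetical threshold below which the stick is
    treated as not operated; in that case the direction is None.
    """
    amount = min(1.0, math.hypot(x, y))
    if amount < dead_zone:
        return None, 0.0
    # 0 degrees is assumed to be rightward, 90 degrees upward.
    direction = math.degrees(math.atan2(y, x)) % 360.0
    return direction, amount
```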

The magnetic sensor602detects the magnitude and direction of a magnetic field to detect an orientation. Orientation data representing the detected orientation is output to the UI controller605. The UI controller605outputs, to the magnetic sensor602, a control instruction for the magnetic sensor602. Examples of the magnetic sensor602include: an MI (Magnetic Impedance) sensor, a fluxgate sensor, a Hall sensor, a GMR (Giant Magneto Resistance) sensor, a TMR (Tunneling Magneto Resistance) sensor, and an AMR (Anisotropic Magneto Resistance) sensor. However, any sensor may be adopted as long as the sensor can detect an orientation. Strictly speaking, in a place where a magnetic field other than geomagnetism is present, the obtained orientation data does not represent the true orientation. Even in such a case, it is possible to calculate a change in the attitude of the terminal device6because the orientation data changes when the terminal device6moves.
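The point that a change in attitude can be obtained even when the absolute orientation is unreliable can be sketched as follows. The helpers `heading_deg` and `heading_change_deg` are hypothetical, and the sketch assumes the device is held with its screen horizontal so that the x and y field components lie in the horizontal plane; the embodiment does not prescribe this calculation.

```python
import math


def heading_deg(mx, my):
    """Heading angle in degrees (0..360) from horizontal field components.

    Assumes the device is level; the zero direction and the sense of
    rotation are illustrative conventions only.
    """
    return math.degrees(math.atan2(my, mx)) % 360.0


def heading_change_deg(previous, current):
    """Signed change between two headings, wrapped to the range -180..180."""
    return (current - previous + 180.0) % 360.0 - 180.0
```

Because only the difference between successive samples is used, a constant offset caused by a nearby magnetic field cancels out in `heading_change_deg`.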

The acceleration sensor603is provided inside the housing60. The acceleration sensor603detects the magnitudes of linear accelerations along three axial directions (x-axis, y-axis, and z-axis directions shown in (a) ofFIG. 3), respectively. Specifically, in the acceleration sensor603, the long side direction of the housing60is defined as the x-axial direction, the short side direction of the housing60is defined as the y-axial direction, and the direction orthogonal to the front surface of the housing60is defined as the z-axial direction, thereby detecting the magnitudes of the linear accelerations in the respective axial directions. Acceleration data representing the detected accelerations is output to the UI controller605. The UI controller605outputs, to the acceleration sensor603, a control instruction for the acceleration sensor603. In the exemplary embodiment, the acceleration sensor603is, for example, an electrostatic capacitance type MEMS acceleration sensor. However, in another embodiment, another type of acceleration sensor may be used. Further, the acceleration sensor603may be an acceleration sensor for detecting the magnitude of acceleration in one axial direction or two axial directions.
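One common use of three-axis acceleration data is to estimate tilt from the direction of gravity while the device is nearly at rest. The sketch below is offered under that assumption and is not taken from the embodiment; the function name, the sign conventions, and the choice of pitch and roll angles are all illustrative.

```python
import math


def tilt_from_acceleration(ax, ay, az):
    """Estimate (pitch, roll) in degrees from one static acceleration sample.

    ax, ay, az follow the axes described above (x: long side, y: short side,
    z: orthogonal to the front surface). The estimate is only meaningful
    when the device is not being accelerated by the user.
    """
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    roll = math.degrees(math.atan2(ay, az))
    return pitch, roll
```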

The gyro sensor604is provided inside the housing60. The gyro sensor604detects the angular velocities around the three axes (the above-described x, y, and z axes), respectively. Angular velocity data representing the detected angular velocities is output to the UI controller605. The UI controller605outputs, to the gyro sensor604, a control instruction for the gyro sensor604. Any number and any combination of gyro sensors may be used as long as the angular velocities around three axes are detected. The gyro sensor604may be constituted by a two-axis gyro sensor and a one-axis gyro sensor, like the gyro sensor703. Alternatively, the gyro sensor604may be a gyro sensor for detecting the angular velocity around one axis or two axes.
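Angular velocity data is typically turned into a rotation estimate by accumulating it over time. The following is a minimal sketch of that idea using a simple per-axis Euler step; the function name and the units (degrees and seconds) are assumptions, and the sketch ignores the coupling between axes that a full attitude calculation would handle.

```python
def integrate_angular_velocity(angles_deg, rates_deg_per_s, dt_s):
    """Advance per-axis rotation angles by one sample (simple Euler step).

    angles_deg and rates_deg_per_s are (x, y, z) tuples; dt_s is the
    sampling period in seconds.
    """
    return tuple(a + w * dt_s for a, w in zip(angles_deg, rates_deg_per_s))
```

Called once per sample, the function accumulates the rotation around each axis; drift from sensor bias would accumulate as well, which is one reason other sensors may be used alongside the gyro sensor.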

The vibrator619is, for example, a vibration motor or a solenoid. The vibrator619is connected to the UI controller605. The terminal device6is vibrated by actuating the vibrator619in accordance with a control instruction outputted from the UI controller605to the vibrator619. The vibration of the terminal device6is transmitted to the user's hand holding the terminal device6. Thus, a so-called vibration-feedback game is realized.

The UI controller605outputs, to the codec LSI606, the operation data including the touch position data, the stick data, the operation button data, the orientation data, the acceleration data, and the angular velocity data, which have been received from the respective components. If another device is connected to the terminal device6through the extension connector67, data representing operation to the other device may be included in the operation data.
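The contents of the operation data listed above can be pictured as a single record. The sketch below is one hypothetical layout: the class name, field names, field types, and the use of a 12-bit mask for the operation buttons64A to64L are assumptions, since the embodiment does not define a data format.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

BUTTON_LETTERS = "ABCDEFGHIJKL"  # operation buttons 64A to 64L


@dataclass
class TerminalOperationData:
    touch_position: Optional[Tuple[int, int]]  # None when nothing is touched
    left_stick: Tuple[float, float]            # stick data for 63A
    right_stick: Tuple[float, float]           # stick data for 63B
    buttons: int                               # assumed bitmask, bit 0 = 64A
    orientation: Tuple[float, float, float]    # from the magnetic sensor
    acceleration: Tuple[float, float, float]   # from the acceleration sensor
    angular_velocity: Tuple[float, float, float]  # from the gyro sensor
    extension_data: Optional[bytes] = None     # data from a connected device


def is_pressed(data, letter):
    """Return True if operation button 64<letter> is pressed in this record."""
    index = BUTTON_LETTERS.index(letter)
    return bool((data.buttons >> index) & 1)
```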

The codec LSI606is a circuit for performing a compression process on data to be transmitted to the game apparatus body5, and a decompression process on data transmitted from the game apparatus body5. The LCD61, the camera66, the sound IC608, the wireless module610, the flash memory613, and the infrared communication module612are connected to the codec LSI606. The codec LSI606includes a CPU617and an internal memory618. Although the terminal device6is configured not to perform a game process, the terminal device6may execute a program for managing the terminal device6or a program for communication. For example, a program stored in the flash memory613is loaded into the internal memory618and executed by the CPU617when the terminal device6is powered on, thereby starting up the terminal device6. A part of the area of the internal memory618is used as a VRAM for the LCD61.

The camera66takes an image in accordance with an instruction from the game apparatus body5, and outputs data of the taken image to the codec LSI606. The codec LSI606outputs, to the camera66, a control instruction for the camera66, such as an instruction to take an image. The camera66is also capable of taking a moving picture. That is, the camera66is capable of repeatedly performing image taking, and repeatedly outputting image data to the codec LSI606.

The sound IC608is connected to the loudspeakers607and the microphone609. The sound IC608is a circuit for controlling input of sound data from the microphone609to the codec LSI606and output of sound data from the codec LSI606to the loudspeakers607. Specifically, when the sound IC608receives sound data from the codec LSI606, the sound IC608performs D/A conversion on the sound data, and outputs a resultant sound signal to the loudspeakers607to cause the loudspeakers607to output a sound. The microphone609detects sound (such as user's voice) propagated to the terminal device6, and outputs a sound signal representing the sound to the sound IC608. The sound IC608performs A/D conversion on the sound signal from the microphone609, and outputs a predetermined form of sound data to the codec LSI606.

The codec LSI606transmits the image data from the camera66, the sound data from the microphone609, and the operation data from the UI controller605(terminal operation data), to the game apparatus body5through the wireless module610. In the exemplary embodiment, the codec LSI606subjects the image data and the sound data to a compression process similar to that performed by the codec LSI27. The compressed image data and sound data, and the terminal operation data are output to the wireless module610as transmission data. The antenna611is connected to the wireless module610, and the wireless module610transmits the transmission data to the game apparatus body5through the antenna611. The wireless module610has the same function as the terminal communication module28of the game apparatus body5. That is, the wireless module610has a function of connecting to a wireless LAN by a method based on, for example, the IEEE802.11n standard. The data transmitted from the wireless module610may be encrypted according to need, or may not be encrypted.

As described above, the transmission data transmitted from the terminal device6to the game apparatus body5includes the operation data (terminal operation data), the image data, and the sound data. If another device is connected to the terminal device6through the extension connector67, data received from the other device may be included in the transmission data. The infrared communication module612performs, with another device, infrared communication based on, for example, the IrDA standard. The codec LSI606may include, in the transmission data, data received by the infrared communication, and transmit the transmission data to the game apparatus body5, according to need.
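The assembly of the transmission data can be sketched as follows: the image data and the sound data are compressed, while the terminal operation data is passed through without compression. This is an illustration only. The function name, the dictionary layout, and the JSON encoding are hypothetical, and `zlib` is used merely as a stand-in because the compression scheme is not specified here.

```python
import json
import zlib


def build_transmission_data(image, sound, operation, extra=None):
    """Assemble one transmission packet from raw image/sound bytes.

    image and sound are bytes and are compressed (zlib is a stand-in for
    the unspecified codec). operation is a JSON-serializable dict and is
    left uncompressed. extra optionally carries data received from a
    device on the extension connector or by infrared communication.
    """
    packet = {
        "image": zlib.compress(image),
        "sound": zlib.compress(sound),
        "operation": json.dumps(operation).encode("utf-8"),
    }
    if extra is not None:
        packet["extra"] = extra
    return packet
```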

As described above, the compressed image data and sound data are transmitted from the game apparatus body5to the terminal device6. These data are received by the codec LSI606through the antenna611and the wireless module610. The codec LSI606decompresses the received image data and sound data. The decompressed image data is output to the LCD61, and an image according to the image data is displayed on the LCD61. On the other hand, the decompressed sound data is output to the sound IC608, and a sound based on the sound data is output from the loudspeakers607.

When control data is included in the data received from the game apparatus body5, the codec LSI606and the UI controller605make control instructions for the respective components, according to the control data. As described above, the control data represents control instructions for the respective components (in the exemplary embodiment, the camera66, the touch panel controller601, the marker section65, the sensors602to604, the vibrator619, and the infrared communication module612) included in the terminal device6. In the exemplary embodiment, the control instructions represented by the control data are considered to be instructions to start and halt (stop) the operations of the above-mentioned components. That is, some components which are not used for a game may be halted to reduce power consumption. In this case, data from the halted components are not included in the transmission data transmitted from the terminal device6to the game apparatus body5. Since the marker section65is constituted by infrared LEDs, the marker section65is controlled by simply turning on/off the supply of power thereto.
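The start and halt control described above, together with the omission of data from halted components, can be sketched as follows. The component names, the `ComponentState` class, and the dictionary form of the control data are hypothetical and chosen only for illustration.

```python
# Components that the control data may start or halt (names are illustrative).
CONTROLLABLE = frozenset({
    "camera", "touch_panel_controller", "marker_section",
    "magnetic_sensor", "acceleration_sensor", "gyro_sensor",
    "vibrator", "infrared_module",
})


class ComponentState:
    def __init__(self):
        # All controllable components are assumed to start in the active state.
        self.active = set(CONTROLLABLE)

    def apply_control_data(self, control_data):
        """control_data maps a component name to True (start) or False (halt)."""
        for name, start in control_data.items():
            if name not in CONTROLLABLE:
                raise KeyError(f"not a controllable component: {name}")
            if start:
                self.active.add(name)
            else:
                self.active.discard(name)

    def filter_transmission(self, samples):
        """Drop samples that come from halted components; keep everything else."""
        return {name: value for name, value in samples.items()
                if name not in CONTROLLABLE or name in self.active}
```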

As described above, the terminal device6includes the operation means such as the touch panel62, the analog sticks63, and the operation buttons64. In another embodiment, however, the terminal device6may include other operation means instead of or in addition to these operation means.

The terminal device6includes the magnetic sensor602, the acceleration sensor603, and the gyro sensor604as sensors for calculating the movement (including the position and the attitude, or a change in the position or the attitude) of the terminal device6. In another embodiment, however, the terminal device6may include one or two of these sensors. In still another embodiment, the terminal device6may include other sensors instead of or in addition to these sensors.

The terminal device6includes the camera66and the microphone609. In another embodiment, however, the terminal device6may not include the camera66and the microphone609, or may include either of the camera66and the microphone609.

The terminal device6includes the marker section65as a component for calculating the positional relation between the terminal device6and the controller7(such as the position and/or the attitude of the terminal device6as viewed from the controller7). In another embodiment, however, the terminal device6may not include the marker section65. In still another embodiment, the terminal device6may include other means as a component for calculating the above-mentioned positional relation. For example, the controller7may include a marker section, and the terminal device6may include an image pickup element. In this case, the marker8may include an image pickup element instead of an infrared LED.

Next, a structure of the board type controller9will be described with reference toFIGS. 6 to 8.FIG. 6is a perspective view illustrating an example of an external appearance of the board type controller9shown inFIG. 1. As shown inFIG. 6, the board type controller9includes a platform9aon which a user stands (on which the user puts his/her feet), and at least four load sensors94ato94dfor detecting a load applied to the platform9a. Each of the load sensors94ato94dis embedded in the platform9a(seeFIG. 7), and the positions where the load sensors94ato94dare provided are indicated by dotted lines inFIG. 6. In the following description, the four load sensors94ato94dmay be collectively referred to as a load sensor94.

The platform9ais formed in the shape of substantially a rectangular parallelepiped, and is in the shape of substantially a rectangle as viewed from the top. For example, the short side of the rectangular shape of the platform9ais approximately 30 cm, and the long side thereof is approximately 50 cm. The upper surface of the platform9ais flat, and has a pair of planes on which the user stands with the bottoms of his/her feet contacting thereto. Specifically, the upper surface of the platform9ahas a plane (a back-left region enclosed with a double line inFIG. 6) on which the user's left foot is put, and a plane (a front-right region enclosed with a double line inFIG. 6) on which the user's right foot is put. The platform9ahas, at four corners thereof, side surfaces each partially projecting outward in a cylindrical shape.

In the platform9a, the four load sensors94ato94dare arranged at predetermined intervals. In the exemplary embodiment, the four load sensors94ato94dare arranged on the periphery of the platform9a, more specifically, at the four corners of the platform9a, respectively. The intervals of the load sensors94ato94dare appropriately set such that the load sensors94ato94dcan accurately detect the intention of a game operation which is expressed by a manner of applying a load onto the platform9aby the user.

FIG. 7shows an example of a cross-sectional view of the board type controller9, taken along a line A-A inFIG. 6, and an example of an enlarged view of a corner part where a load sensor94is arranged. InFIG. 7, the platform9aincludes a support plate90on which the user stands, and legs92. The load sensors94ato94dare provided in positions where the legs92are provided. In the exemplary embodiment, since the four legs92are provided at the four corners, the four load sensors94ato94dare also provided at the corresponding four corners. Each leg92is formed by plastic molding in the shape of substantially a cylinder with a base. Each load sensor94is located on a spherical part92aprovided on the base of the corresponding leg92. The support plate90is supported by the legs92via the load sensors94.

The support plate90includes an upper plate90aforming an upper surface and an upper side surface portion, a lower plate90bforming a lower surface and a lower side surface portion, and an intermediate plate90cprovided between the upper plate90aand the lower plate90b. The upper plate90aand the lower plate90bare formed by, for example, plastic molding, and are integrated using an adhesive or the like. The intermediate plate90cis, for example, formed of a single metal plate by press forming. The intermediate plate90cis fixed onto the four load sensors94ato94d. The upper plate90ahas, on a lower surface thereof, a grid-patterned rib (not shown), and is supported by the intermediate plate90cvia the rib. Therefore, when the user stands on the platform9a, the load is transferred to the four legs92via the support plate90and the load sensors94ato94d. As indicated by arrows inFIG. 7, a reaction from a floor, which is generated by the input load, is transferred from the legs92through the spherical parts92a, the load sensors94ato94dand the intermediate plate90cto the upper plate90a.

Each load sensor94is, for example, a strain gauge (strain sensor) load cell, which is a load converter for converting an input load to an electrical signal. In the load sensor94, a strain-generating body95is deformed according to an input load, resulting in a strain. The strain is converted into a change of electrical resistance and then converted into a change of voltage by a strain sensor96attached to the strain-generating body95. Therefore, the load sensor94outputs, from an output terminal thereof, a voltage signal indicating the input load.
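How a voltage signal from such a load cell might be interpreted as a load can be sketched with a linear calibration. The function names and the calibration constants (`zero_voltage` and `kg_per_volt`) are hypothetical values chosen for illustration; actual load cells are calibrated individually, and the embodiment gives no figures.

```python
def load_from_voltage(voltage, zero_voltage=0.5, kg_per_volt=100.0):
    """Convert one load cell output voltage to a load in kilograms.

    zero_voltage is the assumed output with no load applied, and
    kg_per_volt is the assumed sensitivity of the cell.
    """
    return (voltage - zero_voltage) * kg_per_volt


def total_load(voltages, zero_voltage=0.5, kg_per_volt=100.0):
    """Sum the loads indicated by several load cells (e.g. the four corners)."""
    return sum(load_from_voltage(v, zero_voltage, kg_per_volt) for v in voltages)
```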

The load sensor94may be of other types, such as a tuning fork type, a string vibration type, an electrostatic capacitance type, a piezoelectric type, a magnetostrictive type, and a gyroscopic type.

Referring back toFIG. 6, the board type controller9further includes a power button9c. When the power button9cis operated (e.g., when the power button9cis pressed) in the state where the board type controller9is not activated, power is supplied to each of the circuit components (seeFIG. 8) of the board type controller9. However, there are cases in which the board type controller9is powered on in accordance with an instruction from the game apparatus body5and thereby supply of power to the circuit components is started. The board type controller9may be automatically powered off when a state where the user does not stand thereon continues for a predetermined period of time (e.g., 30 sec) or more. Further, when the power button9cis operated again while the board type controller9is in the active state, the board type controller9may be powered off to stop supply of power to the circuit components.
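The automatic power-off behavior can be sketched as an idle timer. The class name, the `update` method, and the way the caller decides whether a user is standing on the board are assumptions; only the 30-second example value comes from the description above.

```python
class AutoPowerOff:
    def __init__(self, timeout_s=30.0):
        self.timeout_s = timeout_s
        self.powered = True
        self._idle_since = None  # time at which the board became unoccupied

    def update(self, now_s, user_on_board):
        """Update the timer with the current time; return the power state."""
        if not self.powered:
            return False
        if user_on_board:
            self._idle_since = None
        elif self._idle_since is None:
            self._idle_since = now_s
        elif now_s - self._idle_since >= self.timeout_s:
            self.powered = False
        return self.powered
```

In this sketch, any detection of the user resets the timer, so power is cut only after the unoccupied state has continued for the whole timeout.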

FIG. 8is a block diagram illustrating an example of an electrical configuration of the board type controller9. InFIG. 8, flows of signals and data are indicated by solid arrows, and supply of power is indicated by dotted arrows.

As shown inFIG. 8, the board type controller9includes a microcomputer100for controlling the operation thereof. The microcomputer100includes a CPU, a ROM, a RAM, and the like, which are not shown. The CPU controls the operation of the board type controller9in accordance with a program stored in the ROM.

The power button9c, an AD converter102, a DC-DC converter104, and a wireless module106are connected to the microcomputer100. An antenna106ais connected to the wireless module106. The four load sensors94ato94dare connected to the AD converter102via amplifiers108, respectively.

Further, the board type controller9includes a battery110for supplying power to the circuit components. In another embodiment, an AC adapter may be connected to the board type controller9instead of the battery110so that commercial power is supplied to the circuit components. In this case, instead of the DC-DC converter104, a power circuit, which converts alternating current into direct current and lowers and rectifies a direct-current voltage, may be provided in the board type controller9. In the exemplary embodiment, power is supplied directly from the battery110to the microcomputer100and the wireless module106. In other words, power is constantly supplied from the battery110to the wireless module106and some components (such as the CPU) in the microcomputer100to detect whether or not the power button9cis turned on and whether or not a command that instructs power-on is transmitted from the game apparatus body5. On the other hand, power is supplied from the battery110through the DC-DC converter104to the load sensors94ato94d, the AD converter102, and the amplifiers108. The DC-DC converter104converts a voltage value of direct current supplied from the battery110into a different voltage value, and supplies the resultant direct current to the load sensors94ato94d, the AD converter102, and the amplifiers108.

Supply of power to the load sensors94ato94d, the A/D converter102and the amplifiers108may be performed according to need by the microcomputer100that controls the DC-DC converter104. Specifically, when the microcomputer100determines that it is necessary to operate the load sensors94ato94dto detect a load, the microcomputer100may control the DC-DC converter104to supply power to the load sensors94ato94d, the A/D converter102and the amplifiers108.

When power is supplied to the load sensors94ato94d, the load sensors94ato94deach output a signal indicating a load inputted thereto. These signals are amplified by the respective amplifiers108, and converted from analog signals into digital data by the A/D converter102. The digital data is input to the microcomputer100. The detected values of the load sensors94ato94dare given identification information of the load sensors94ato94d, so that the load sensors94ato94dcan be identified from the corresponding detected values. Thus, the microcomputer100can obtain the data representing the detected load values of the four load sensors94ato94dat the same time.

On the other hand, when the microcomputer100determines that it is not necessary to operate the load sensors94ato94d, i.e., when it is not the time for load detection, the microcomputer100controls the DC-DC converter104to stop supply of power to the load sensors94ato94d, the A/D converter102, and the amplifiers108. Thus, the board type controller9can operate the load sensors94ato94dto detect a load or a distance only when it is required, resulting in a reduction in power consumption for load detection.

Load detection is typically required when the game apparatus body5(FIG. 1) needs to obtain load data. For example, when the game apparatus body5requires load information, the game apparatus body5transmits an information acquisition command to the board type controller9. When the microcomputer100receives the information acquisition command from the game apparatus body5, the microcomputer100controls the DC-DC converter104to supply power to the load sensors94ato94dand the like, thereby detecting a load. On the other hand, when the microcomputer100does not receive the information acquisition command from the game apparatus body5, the microcomputer100controls the DC-DC converter104to stop supply of power to the load sensors94ato94dand the like.
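The command-driven power control described above can be sketched as follows. The class `BoardPowerControl`, the `ACQUIRE_COMMAND` constant, and the two callbacks are hypothetical; the sketch powers the sensor side only for the duration of one detection, which is one possible reading of the behavior rather than the method of the embodiment.

```python
ACQUIRE_COMMAND = "ACQUIRE"  # hypothetical name for the information acquisition command


class BoardPowerControl:
    def __init__(self, set_dcdc_output, read_loads):
        # set_dcdc_output(True/False) enables or disables the DC-DC converter
        # output feeding the load sensors, the A/D converter, and the amplifiers.
        self._set_dcdc_output = set_dcdc_output
        # read_loads() returns the detected values of the load sensors.
        self._read_loads = read_loads

    def handle(self, command):
        """Detect a load only when the acquisition command has been received."""
        if command != ACQUIRE_COMMAND:
            self._set_dcdc_output(False)
            return None
        self._set_dcdc_output(True)
        try:
            return self._read_loads()
        finally:
            # Stop the supply of power again once detection has finished.
            self._set_dcdc_output(False)
```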

Alternatively, the microcomputer 100 may control the DC-DC converter 104 based on a determination that load detection timing occurs at predetermined intervals. When such periodic load detection is performed, information regarding the predetermined interval may be supplied from the game apparatus body 5 to the microcomputer 100 of the board type controller 9 and stored therein when the game is started, or it may be preinstalled in the microcomputer 100.

The data representing the detected values from the load sensors 94a to 94d is transmitted as board operation data (input data) for the board type controller 9 from the microcomputer 100 via the wireless module 106 and an antenna 106b to the game apparatus body 5. For example, when the microcomputer 100 has performed load detection according to a command from the game apparatus body 5, the microcomputer 100 transmits the detected value data of the load sensors 94a to 94d to the game apparatus body 5 on receipt of the detected value data from the A/D converter 102. Alternatively, the microcomputer 100 may transmit the detected value data to the game apparatus body 5 at predetermined intervals. If the interval of the data transmission is longer than the interval of the load detection, data containing the load values detected at the plurality of detection timings up to the next transmission timing may be transmitted.
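The case in which the transmission interval is longer than the detection interval can be illustrated with a short sketch. The function name `group_for_transmission` and the millisecond parameters are assumptions made for this example; the description does not specify a packet format or concrete interval values.

```python
def group_for_transmission(detections, detect_interval_ms, transmit_interval_ms):
    """Group consecutive detection results into per-transmission batches.

    Each batch holds the load values detected at the detection timings that
    fall within one transmission interval (hypothetical packet layout).
    """
    if detect_interval_ms <= 0 or transmit_interval_ms <= 0:
        raise ValueError("intervals must be positive")
    # Number of detection timings covered by one transmission (at least one).
    per_packet = max(1, transmit_interval_ms // detect_interval_ms)
    return [
        detections[i:i + per_packet]
        for i in range(0, len(detections), per_packet)
    ]
```

For instance, with detection every 5 ms and transmission every 10 ms, each transmitted batch would carry two detection results.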

The wireless module 106 is set so as to perform communication according to the same wireless standard (Bluetooth, wireless LAN, or the like) as that for the controller communication module 19 of the game apparatus body 5. Accordingly, the CPU 10 of the game apparatus body 5 can transmit an information acquisition command to the board type controller 9 through the controller communication module 19 and the like. Thus, the board type controller 9 can receive the command from the game apparatus body 5 through the wireless module 106 and the antenna 106a. Further, the board type controller 9 can transmit the board operation data including the load detection values (or load calculation values) of the load sensors 94a to 94d to the game apparatus body 5.

For example, in a game which is executed based on a simple sum of the four load values detected by the four load sensors 94a to 94d, the user may stand at any position with respect to the four load sensors 94a to 94d of the board type controller 9. That is, the user may stand on the platform 9a at any position and in any direction to play the game. In some kinds of games, however, the direction, as viewed from the user, of the load value detected by each of the four load sensors 94 needs to be identified. That is, the positional relation between the four load sensors 94 of the board type controller 9 and the user needs to be recognized. In this case, for example, the positional relation between the four load sensors 94 and the user may be defined in advance, and the user may be expected to stand on the platform 9a in a manner that satisfies the predefined positional relation. Typically, a positional relation is defined in which two of the load sensors 94a to 94d are present in each of the directions in front of, behind, to the right of, and to the left of the user standing in the center of the platform 9a, i.e., a positional relation in which the user stands in the center of the platform 9a of the board type controller 9. In this case, the platform 9a of the board type controller 9 is rectangular in shape as viewed from the top, and the power button 9c is provided at one side (a long side) of the rectangle. Therefore, it is determined in advance that the user, using the power button 9c as a guide, stands on the platform 9a such that the long side at which the power button 9c is provided is located in a predetermined direction (front, rear, left, or right). In this case, each of the load values detected by the load sensors 94a to 94d is a load value of a predetermined direction (front right, front left, rear right, or rear left) as viewed from the user.
Therefore, the board type controller 9 and the game apparatus body 5 can find out the direction to which each detected load value corresponds as viewed from the user, based on the identification information of the load sensors 94 contained in the detected load value data and arrangement data representing previously set (stored) positions or directions of the load sensors 94 with respect to the user. As a result, it is possible to recognize what is intended by a game operation performed by the user, such as an operation direction of forward, backward, rightward, or leftward, or which foot of the user is lifted.
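The use of identification information together with arrangement data can be sketched as below. The particular assignment of sensors to directions in `ARRANGEMENT`, the function names, and the 20% threshold used to guess a lifted foot are all assumptions for illustration; the description states only that such arrangement data is previously set.

```python
# Hypothetical arrangement data: which direction each sensor occupies
# as viewed from the user. The actual assignment is not given in the text.
ARRANGEMENT = {
    "94a": "front right",
    "94b": "front left",
    "94c": "rear right",
    "94d": "rear left",
}


def loads_by_direction(detected):
    """Relabel {sensor id: load} as {direction as viewed from the user: load}."""
    return {ARRANGEMENT[sensor_id]: load for sensor_id, load in detected.items()}


def lifted_foot(by_direction, threshold=0.2):
    """Guess which foot is lifted from the left/right share of the total load.

    Returns "left", "right", or None. The threshold is an assumed heuristic.
    """
    left = by_direction["front left"] + by_direction["rear left"]
    right = by_direction["front right"] + by_direction["rear right"]
    total = left + right
    if total <= 0:
        return None  # nobody on the platform
    if left / total < threshold:
        return "left"
    if right / total < threshold:
        return "right"
    return None
```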

Next, an outline of information processing performed on the game apparatus body5will be described with reference toFIGS. 9, 10A, and 10Bbefore a specific description of processes performed by the game apparatus body5is given. In the following description of the outline of information processing, a first exemplary game is used as an example of information processing.FIG. 9is a diagram illustrating an example of a user who plays the first exemplary game by using the terminal device6and the board type controller9.FIG. 10Ais a diagram illustrating an example of an image of the first exemplary game, which is displayed on the LCD61of the terminal device6.FIG. 10Bis a diagram illustrating an example of an image of the first exemplary game, which is displayed on the monitor2.

As shown inFIG. 9, in the first exemplary game, the user performs an operation by using the terminal device6and the board type controller9. Specifically, the user stands on the board type controller9with the terminal device6in his/her hands, and performs an operation. Then, the user performs an action (e.g., stepping or knee bending) on the board type controller9and simultaneously performs an operation to move the terminal device6, while viewing the image displayed on the monitor2and the image displayed on the LCD61of the terminal device6, thereby playing the game. On each of the LCD61of the terminal device6and the monitor2, a game image is displayed, in which a player object Po performs an action (e.g., an action to change its attitude or an action to change its movement direction) in a virtual world in accordance with the direction and the attitude of the terminal device6held by the user as well as the user's action on the board type controller9, and the position and the attitude of a virtual camera set in the virtual world are changed in accordance with the position and the attitude of the player object Po.

As shown in FIG. 10A, the player object Po moving in the virtual world is displayed on the LCD 61 of the terminal device 6. In the example shown in FIG. 10A, a virtual camera is located near the back of the player object Po swimming in the sea, and a state of the virtual world viewed from the virtual camera is displayed together with the player object Po. Displaying, on the LCD 61, the virtual world viewed at close range from behind the player object Po allows the user holding the terminal device 6 to experience a sense of presence in the virtual world, and allows the user to intuitively know the movement direction and the movement speed of the player object Po. Further, the attitude and the movement direction of the player object Po are changed when the attitude of the terminal device 6 is changed or the terminal device 6 is rotated to change its direction, and the position and the attitude of the virtual camera are changed in accordance with the attitude change of the terminal device 6. For example, by associating the attitude and the direction of the terminal device 6 with the attitude and the direction of the virtual camera, the user is allowed to experience a sense of looking into the virtual world through the LCD 61 of the terminal device 6.

Further, as shown in FIG. 10B, the same virtual world as that displayed on the LCD 61 is displayed on the monitor 2. In the example shown in FIG. 10B, a state of the virtual world in which the player object Po swimming in the sea is viewed from a distance in a bird's-eye view is displayed together with the player object Po. Further, in the example shown in FIG. 10B, a movement trajectory Lp of the player object Po in the sea is displayed in the virtual world. Displaying, on the monitor 2, the virtual world in which the player object Po is viewed from a distance in a bird's-eye view allows the user to easily know the surroundings of the player object Po, and allows another person watching the user play the first exemplary game to enjoy a view in which the player object Po moves in the virtual world.

For example, when the user performs stepping on the board type controller 9, the player object Po swims in the sea with flutter kicks at a speed based on the user's stepping action. When the user performs knee bending on the board type controller 9, the player object Po swims in the sea with dolphin kicks at a speed based on the user's knee bending action. In this way, the user is allowed to change the swimming style or the movement speed of the player object Po in accordance with his/her action on the board type controller 9.

For example, as described above, detected load values based on the user's action on the board type controller9are output from the board type controller9. Using the detected load values allows calculation of a total load applied to the board type controller9, and a center-of-gravity position of the load applied to the board type controller9. Further, using a change in the total load or a change in the center-of-gravity position allows estimation of the action of the user on the board type controller9. Based on the estimated user's action on the board type controller9, the swimming style and the movement speed of the player object Po are set.
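The calculation of the total load and the center-of-gravity position from four detected load values can be sketched as follows. The coordinate convention (x positive toward the user's right, y positive toward the front, both normalized to the range -1 to 1) and the function name are assumptions for this example; the description does not fix a coordinate system at this point.

```python
def total_and_center_of_gravity(front_right, front_left, rear_right, rear_left):
    """Return (total load, (x, y)) for four corner load values.

    x > 0 means the load is biased to the right, y > 0 to the front
    (assumed convention). With no load, the center is reported as (0, 0).
    """
    total = front_right + front_left + rear_right + rear_left
    if total <= 0:
        return 0.0, (0.0, 0.0)
    # Right-minus-left and front-minus-rear shares of the total load.
    x = ((front_right + rear_right) - (front_left + rear_left)) / total
    y = ((front_right + front_left) - (rear_right + rear_left)) / total
    return total, (x, y)
```

Under this convention, a periodic left/right swing of x could suggest stepping, while a periodic change of the total load with x near zero could suggest knee bending; the actual estimation criteria are not specified here.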

Further, the attitude and the movement direction of the player object Po swimming in the sea change in accordance with the direction and the attitude of the terminal device6held by the user. For example, when the user turns the terminal device6to the upward, downward, leftward, or rightward direction, the attitude of the player object Po swimming in the sea (the direction to which the player object Po faces in the virtual world) changes in accordance with the direction change of the terminal device6, and the direction in which the player object Po swims also changes. Specifically, when the user changes the direction of the terminal device6such that the back surface of the terminal device6faces upward, the attitude of the player object Po changes such that the player object Po faces the sea surface, and the swimming direction of the player object Po changes such that the player object Po swims upward to the sea surface. When the user changes the direction of the terminal device6such that the back surface of the terminal device6faces leftward, the attitude of the player object Po changes to the left as viewed from the player object Po, and the swimming direction of the player object Po changes to the left. In this way, the user is allowed to change the attitude and the movement direction of the player object Po in accordance with the direction and the attitude of the terminal device6held by the user. For example, by associating the attitude and the direction of the terminal device6with the attitude and the movement direction of the player object Po, the user is allowed to perform, by using the terminal device6, a realistic operation as if he/she becomes the player object Po. Further, since the virtual camera is located near the back of the player object Po swimming in the sea, when the attitude and the movement direction of the player object Po change, the attitude and the position of the virtual camera also change. 
For example, by associating the attitude and the direction of the terminal device6with the attitude and the direction of the virtual camera, the user is allowed to experience a sense of looking into the virtual world through the LCD61of the terminal device6.
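One way to picture the association between the direction of the terminal device, the movement direction of the player object Po, and the virtual camera is the sketch below. The yaw/pitch parameterization, the axis convention (y up, yaw 0 pointing along +z), and the function names are assumptions introduced for illustration only.

```python
import math


def forward_vector(yaw_deg, pitch_deg):
    """Unit direction vector for a yaw (about the vertical) and pitch (elevation).

    Assumed convention: y is up, yaw 0 and pitch 0 point along +z.
    """
    yaw = math.radians(yaw_deg)
    pitch = math.radians(pitch_deg)
    return (
        math.sin(yaw) * math.cos(pitch),
        math.sin(pitch),
        math.cos(yaw) * math.cos(pitch),
    )


def camera_behind(player_pos, forward, distance):
    """Place the camera `distance` behind the player along its facing direction."""
    return tuple(p - distance * f for p, f in zip(player_pos, forward))
```

With this sketch, pitching the device upward raises the forward vector toward the sea surface, and the camera position follows because it is derived from the same vector.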

For example, acceleration data or angular velocity data based on a change in the attitude of the terminal device 6 is output from the terminal device 6. Since the direction of the gravitational acceleration applied to the terminal device 6 can be calculated by using the acceleration represented by the acceleration data, it is possible to estimate the attitude of the terminal device 6 with respect to the vertical direction in the real space. Further, since the angular velocity and/or the dynamic acceleration applied to the terminal device 6 are known by using the angular velocity represented by the angular velocity data and/or the acceleration represented by the acceleration data, it is possible to estimate a change in the attitude (a change in the direction) of the terminal device 6 from its initial attitude in the real space by using the angular velocity and/or the dynamic acceleration. Based on the estimated attitude change (direction change) of the terminal device 6, the attitude and the movement direction of the player object Po as well as the attitude and the position of the virtual camera are set.
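The two estimates described above, tilt with respect to the vertical from the gravitational acceleration and direction change from the angular velocity, can be sketched as follows. The axis convention (z reads +1 g when the device lies flat and at rest), the simple Euler integration, and the function names are assumptions for this example; a practical system would typically also correct for sensor drift.

```python
import math


def tilt_from_gravity(ax, ay, az):
    """Return (pitch, roll) in degrees from an at-rest acceleration reading.

    Assumes the reading is dominated by gravity and that az is positive
    when the device lies flat (hypothetical axis convention).
    """
    roll = math.degrees(math.atan2(ay, az))
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    return pitch, roll


def integrate_yaw(initial_yaw_deg, yaw_rates_deg_per_s, dt_s):
    """Accumulate yaw from angular velocity samples taken every dt_s seconds."""
    yaw = initial_yaw_deg
    for rate in yaw_rates_deg_per_s:
        yaw += rate * dt_s  # simple Euler step from the initial attitude
    return yaw
```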

Next, a process of the first embodiment, which is performed in the game system1, will be described in detail. First, main data used in the process will be described with reference toFIG. 11.FIG. 11is a diagram illustrating an example of main data and programs stored in the external main memory12and/or the internal main memory35(hereinafter, these two main memories are collectively referred to as a main memory) of the game apparatus body5of the first embodiment.

As shown inFIG. 11, in a data storage area of the main memory, board operation data Daa, terminal operation data Dab, load value data Dac, center-of-gravity position data Dad, terminal device direction/attitude data Dae, operation direction data Daf, action/attitude data Dag, movement vector data Dah, position data Dai, virtual camera data Daj, movement trajectory data Dak, action mode flag data Dam, image data Dan, and the like are stored. In addition to the data shown inFIG. 11, data required for a game process, such as image data of various objects displayed on the monitor2and the LCD61, and sound data used for a game, are optionally stored in the main memory. In a program storage area of the main memory, various programs Pa constituting an information processing program of the first embodiment are stored.

As the board operation data Daa, a series of operation information (board operation data) transmitted as transmission data from the board type controller9is stored, and updated to the latest board operation data. For example, the board operation data Daa includes load data Daa1and the like. The load data Daa1is data representing load values detected by the load sensors94ato94dof the board type controller9.