U.S. Pat. No. 9,539,506

System and Method for Playsets Using Tracked Objects and Corresponding Virtual Worlds

Assignee: Disney Enterprises, Inc.

Issue Date: April 11, 2016


Abstract

There is provided a system and method for playsets using tracked objects and corresponding virtual worlds. There is provided an object for use with a physical environment and connectable to a virtual world corresponding to the physical environment, the object including a processor and a plurality of sensors including a position sensor. The processor is configured to establish a first connection with the virtual world, wherein the virtual world contains a virtual object corresponding to the object, determine a position of the object using the position sensor, and send the position of the object using the first connection. There is also provided a physical environment having similar capabilities as the object.

Description


DETAILED DESCRIPTION OF THE INVENTION

The present application is directed to a system and method for playsets using tracked objects and corresponding virtual worlds. The following description contains specific information pertaining to the implementation of the present invention. One skilled in the art will recognize that the present invention may be implemented in a manner different from that specifically discussed in the present application. Moreover, some of the specific details of the invention are not discussed in order not to obscure the invention. The specific details not described in the present application are within the knowledge of a person of ordinary skill in the art. The drawings in the present application and their accompanying detailed description are directed to merely exemplary embodiments of the invention. To maintain brevity, other embodiments of the invention, which use the principles of the present invention, are not specifically described in the present application and are not specifically illustrated by the present drawings.

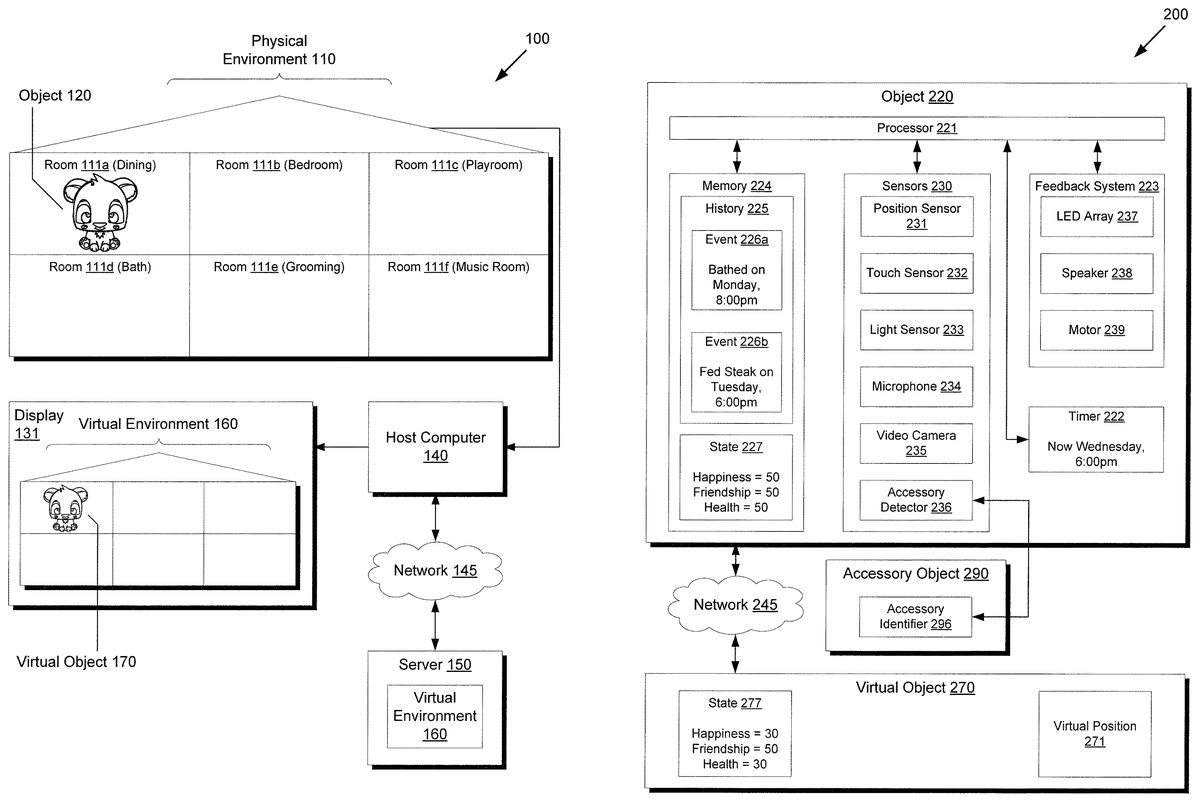

FIG. 1 presents a system for providing a physical playset environment using tracked objects and corresponding virtual worlds, according to one embodiment of the present invention. Diagram 100 of FIG. 1 includes physical environment 110, object 120, display 131, host computer 140, network 145, server 150, virtual environment 160, and virtual object 170. Physical environment 110 includes rooms 111a-111f, with object 120 positioned in room 111a. Server 150 includes virtual environment 160. Virtual environment 160 also includes virtual object 170 in a position corresponding to room 111a.

FIG. 1 presents a broad overview of how figural toys can be used and tracked by playset environments and interfaced with virtual worlds. As shown by object 120, the figural toy in FIG. 1 is represented by a pet figure or plush toy, but could comprise other forms such as battle and action figures, dress up and fashion dolls, posing figures, game pieces for board games, robots, and others. Object 120 is placed in room 111a of physical environment 110, which may comprise a dining area of a playset or dollhouse. Although physical environment 110 is presented as a house or living space, physical environment 110 may also comprise other forms such as a game board.

By using some form of position sensor, physical environment 110 can be made aware of the three-dimensional coordinates of object 120, allowing object 120 to be located within a particular room from rooms 111a-111f. Position tracking can be implemented using any method known in the art, such as infrared tracking, radio frequency identification (RFID) tags, electromagnetic contacts, electrical fields, optical tracking, and others. Additionally, an orientation in space might also be detected to provide even more precise position tracking. This might be used to detect whether object 120 is facing a particular feature in physical environment 110. For example, if object 120 is facing a mirror within room 111e, a speaker embedded in object 120 might make a comment about how well groomed his mane is, whereas if object 120 has his back to the mirror, the speaker would remain silent.
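One way to turn the three-dimensional coordinates reported by a position sensor into a room assignment, and to use a detected orientation for the mirror example, is sketched below in Python. It assumes, hypothetically, that each of rooms 111a-111f is an axis-aligned box in a playset coordinate frame and that the sensor reports a heading in degrees; `Room`, `locate`, `is_facing`, and the 30-degree tolerance are illustrative names and values, not details from the patent.

```python
import math
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class Room:
    """Hypothetical axis-aligned room volume in playset coordinates."""
    name: str
    lo: Tuple[float, float, float]  # (x, y, z) minimum corner, inclusive
    hi: Tuple[float, float, float]  # (x, y, z) maximum corner, exclusive

    def contains(self, p: Tuple[float, float, float]) -> bool:
        return all(low <= coord < high for low, coord, high in zip(self.lo, p, self.hi))


def locate(rooms: Iterable[Room], p: Tuple[float, float, float]) -> Optional[str]:
    """Return the name of the first room containing point p, or None."""
    for room in rooms:
        if room.contains(p):
            return room.name
    return None


def is_facing(pos: Tuple[float, float], heading_deg: float,
              target: Tuple[float, float], tolerance_deg: float = 30.0) -> bool:
    """True if a heading (0 deg = +x axis, counterclockwise) points at target."""
    bearing = math.degrees(math.atan2(target[1] - pos[1], target[0] - pos[0]))
    # Wrap the angular difference into [-180, 180) before comparing.
    diff = (bearing - heading_deg + 180.0) % 360.0 - 180.0
    return abs(diff) <= tolerance_deg
```

For instance, with room 111a spanning x from 0 to 30 and room 111b from 30 to 60, a reading of (10, 5, 2) resolves to "111a", and `is_facing` can gate whether the embedded speaker comments on the mirror.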

Besides providing standalone functionality as a playset for use with object 120, physical environment 110 can also interface with virtual environment 160, a virtual world representation of physical environment 110. As shown in FIG. 1, physical environment 110 can communicate with virtual environment 160 by using host computer 140 to communicate with server 150 over network 145. A connection from physical environment 110 to host computer 140 might be supported through a Universal Serial Bus (USB) connection using a client daemon or middleware program service running on host computer 140, and network 145 might comprise a public network such as the Internet. The middleware program might, for example, be provided to the consumer on disc media or as a download when object 120 is purchased at retail. The middleware program may scan USB devices for the presence of physical environment 110 and act as a communications intermediary between physical environment 110, object 120, host computer 140, and server 150. Alternatively, physical environment 110 or object 120 may include embedded WiFi, Bluetooth, 3G mobile communications, WiMax, or other wireless communications technologies to communicate over network 145 directly instead of using the middleware program on host computer 140 as an intermediary to access network 145.

For example, a client application or web browser on host computer 140 might access a virtual world or website on server 150 providing access to virtual environment 160. This client application may interface with the middleware program previously described to communicate with physical environment 110 and object 120, or communicate directly over network 145 if direct wireless communication is supported. The client application or web browser of host computer 140 may then present a visual depiction of virtual environment 160 on display 131, including virtual object 170 corresponding to object 120. Since server 150 can also connect to other host computers connected to network 145, virtual environment 160 can also provide interactivity with other virtual environments and provide features such as online chat, item trading, collection showcases, downloading or trading of supplemental or user generated content, synchronization of online and offline object states, and other features.

Additionally, the position of virtual object 170 in relation to virtual environment 160 can be made to correspond to the position of object 120 in relation to physical environment 110. For example, if the consumer or user moves object 120 right to the adjacent room 111b, then virtual object 170 may similarly move right to the corresponding adjacent room in virtual environment 160. In another example, if a furniture accessory object is placed in room 111b against the left wall and facing towards the right, a corresponding virtual furniture accessory object may be instantiated and also placed in a corresponding room of virtual environment 160 with the same position against the left wall and orientation facing towards the right. In this manner, a consumer can easily add a virtual version of a real object without having to enter a tedious unlocking code or complete another bothersome registration process. If the real object is removed from physical environment 110, then the virtual object may also be removed, allowing the real object to provide the proof of ownership. Alternatively, inserting the real object into physical environment 110 may provide permanent ownership of the virtual object counterpart, which may then be moved to a virtual inventory of the consumer. Further, the correspondence of real and virtual positions may be done in a continuous fashion such that positioning and orientation of objects within physical environment 110 are continuously reflected in virtual environment 160, providing the appearance of real-time updates for the consumer.
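The add/move/remove correspondence described above can be pictured as a diff between the objects the playset currently detects and the objects present in the virtual scene. The sketch below assumes, hypothetically, that each detected object is reported as an identifier with a (room, heading) pose; `sync_virtual` and the identifiers are illustrative, not an interface from the patent.

```python
from typing import Dict, List, Tuple

# Hypothetical pose: (room identifier, heading in degrees).
Pose = Tuple[str, float]


def sync_virtual(virtual: Dict[str, Pose],
                 detected: Dict[str, Pose]) -> Tuple[List[str], List[str], List[str]]:
    """Mirror physically detected objects into the virtual scene in place.

    Returns (added, moved, removed) object ids so a client could animate
    the changes. Dropping undetected objects models the "proof of
    ownership" variant; the permanent-ownership variant would instead
    move them to the consumer's inventory.
    """
    added = sorted(set(detected) - set(virtual))
    removed = sorted(set(virtual) - set(detected))
    moved = sorted(k for k in set(detected) & set(virtual) if detected[k] != virtual[k])
    virtual.clear()
    virtual.update(detected)
    return added, moved, removed
```

Calling this on every sensor poll would give the continuous, apparently real-time mirroring mentioned above.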

A similar functionality in the reverse direction might also be supported, where movement of virtual object 170 causes a corresponding movement of object 120 through the use of motors, magnets, wheels, or other methods. Moreover, each object may have an embedded identifier to allow physical environment 110 to track several different objects or multiple objects simultaneously. This may allow, for example, special interactions if particular combinations of objects are present in specific locations. In this manner, various types of virtual online and tangible or physical interactions can be mixed together to provide new experiences.
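Because each object carries an embedded identifier, the playset can report an object-to-room map, and combination-specific interactions then reduce to subset checks against that map. The combo names, object identifiers, and rule table below are hypothetical examples for illustration only.

```python
from typing import Dict, FrozenSet, List, Mapping, Tuple

# Hypothetical combination rules: every (object id, room) pair must be present.
COMBOS: Dict[str, FrozenSet[Tuple[str, str]]] = {
    "tea party": frozenset({("object-120", "111a"), ("doll-2", "111a")}),
    "bedtime story": frozenset({("object-120", "111b"), ("book-7", "111b")}),
}


def active_combos(placements: Mapping[str, str]) -> List[str]:
    """Return names of combos satisfied by the current object -> room map."""
    present = set(placements.items())
    return sorted(name for name, required in COMBOS.items() if required <= present)
```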

One example of mixing virtual online and tangible interactions might be virtual triggers affecting the tangible environment, also referred to as traps or hotspots. If object 120 is moved to room 111c, the playroom, then a trigger within the corresponding area in virtual environment 160 might be initiated. As a result of the trigger, corresponding virtual object 170 might then enact a short drama story on a stage with other virtual objects, for example. Interactive elements of physical environment 110 such as switches, levers, doors, cranks, and other mechanisms might also trigger events or other happenings within virtual environment 160. Similarly, in the other direction, elements of virtual environment 160 might also affect physical environment 110. For example, if an online friend selects a piece of music to play in virtual environment 160, the music might also be streamed and output to a speaker in room 111f, the music room. Decorative wallpaper might be selected to decorate virtual environment 160, causing corresponding wallpaper to be displayed in physical environment 110 using, for example, a scrolling paper roll with preprinted wallpaper, LCD screens, electronic ink, or other methods.
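Triggers in both directions, such as entering the playroom starting a virtual drama or a virtual music selection driving the speaker in room 111f, can be modeled as named events dispatched to registered handlers. This is a minimal sketch assuming a simple in-process publish/subscribe pattern; the key names and handler strings are illustrative only.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

Handler = Callable[[str], str]


class TriggerRouter:
    """Routes named triggers from either world to registered handlers.

    Keys such as "physical:enter:111c" or "virtual:music" are a
    hypothetical naming convention for this sketch.
    """

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def on(self, key: str, handler: Handler) -> None:
        self._handlers[key].append(handler)

    def fire(self, key: str, payload: str = "") -> List[str]:
        """Invoke every handler for key; unknown keys are ignored."""
        return [handler(payload) for handler in self._handlers.get(key, [])]
```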

Moving to FIG. 2, FIG. 2 presents a toy object interacting with an accessory object and a virtual object corresponding to the toy object, according to one embodiment of the present invention. Diagram 200 of FIG. 2 includes object 220, network 245, virtual object 270, and accessory object 290. Object 220 includes processor 221, timer 222, feedback system 223, memory 224, and sensors 230. Feedback system 223 includes light emitting diode (LED) array 237, speaker 238, and motor 239. Memory 224 includes history 225 and state 227. History 225 includes event 226a and event 226b. Sensors 230 include position sensor 231, touch sensor 232, light sensor 233, microphone 234, video camera 235, and accessory detector 236. Virtual object 270 includes virtual position 271 and state 277. Accessory object 290 includes accessory identifier 296. With regard to FIG. 2, it should be noted that object 220 corresponds to object 120 from FIG. 1, that network 245 corresponds to network 145, and that virtual object 270 corresponds to virtual object 170.

FIG. 2 presents a more detailed view of components that may comprise a toy object. Although not shown in FIG. 2, a rechargeable battery or another power source may provide the power for the components of object 220. Processor 221 may comprise any processor or controller for carrying out the logic of object 220. In particular, an embedded low-power microcontroller may be suitable for minimizing power consumption and extending battery life. Timer 222 may comprise, for example, a real-time clock (RTC) for keeping track of the present date and time. A separate battery might provide power specifically for timer 222 so that accurate time can be maintained even if the primary battery is drained. Feedback system 223 includes various parts for providing feedback to a consumer, such as emitting lights from LED array 237, emitting sound through speaker 238, and moving object 220 using motor 239.

Object 220 also includes sensors 230 for detecting and determining inputs and other environmental factors, including position sensor 231 for determining a position of object 220, touch sensor 232 for detecting a touch interaction with object 220, light sensor 233 for detecting an ambient light level, microphone 234 for recording sound, video camera 235 for recording video, and accessory detector 236 for detecting a presence of an accessory object. These sensors may be used to initiate actions that affect the emotional and well-being parameters of object 220, represented within state 227.

For example, touch sensor 232 may be used to detect petting of object 220, which may cause the happiness parameter of state 227 to increase or decrease depending on how often a consumer pets object 220. Light sensor 233 may detect the amount of ambient light, which may be used to infer how much time object 220 is given to sleep based on how long object 220 is placed in a dark environment. Sufficient sleep might cause the health parameter of state 227 to rise, whereas insufficient sleep may cause the health parameter to fall. Microphone 234 might be used to determine whether the consumer talks to object 220 or plays music for object 220, with more frequent conversations and music positively affecting the friendship parameter. Similarly, video camera 235 might record the expression of the consumer, where friendly and smiling expressions also positively affect the friendship parameter. Of course, the particular configuration of sensors, feedback systems, and state parameters shown in object 220 is merely exemplary, and other embodiments may use different configurations depending on the desired application and available budget. For example, an application with fighting action figures might focus more on combat skills and battle training rather than nurturing and well-being parameters.
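The sensor-to-parameter rules above could be expressed as small update functions over state 227. This sketch assumes a 0-100 scale for each parameter, an eight-hour sleep requirement, a preferred petting range, and fixed step sizes; all of these numbers and names are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class PetState:
    """Subset of the parameters held in state 227; a 0-100 scale is assumed."""
    happiness: int = 50
    health: int = 50
    friendship: int = 50


def _clamp(value: int, lo: int = 0, hi: int = 100) -> int:
    return max(lo, min(hi, value))


def apply_sleep(state: PetState, dark_hours: float, needed_hours: float = 8.0) -> None:
    """Dark time from the light sensor counts as sleep; +/-10 health is hypothetical."""
    delta = 10 if dark_hours >= needed_hours else -10
    state.health = _clamp(state.health + delta)


def apply_petting(state: PetState, pets_today: int, low: int = 3, high: int = 10) -> None:
    """Petting within a preferred range raises happiness; too little or too much lowers it."""
    delta = 5 if low <= pets_today <= high else -5
    state.happiness = _clamp(state.happiness + delta)
```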

Moving to accessory detector 236, any of several different tracking technologies may be used to detect the presence and proximity of accessory objects, similar to position sensor 231. For example, accessory detector 236 might comprise an RFID reader, and accessory identifier 296 of accessory object 290 may comprise an RFID tag. Once accessory object 290 is brought into close proximity to object 220, accessory detector 236 can read accessory identifier 296, which indicates the presence of accessory object 290. This can be used to simulate various actions with object 220. For example, accessory object 290 might comprise a plastic food toy that triggers a feeding action with object 220. Other examples might include a brush for grooming, soap for bathing, trinkets for gifts, and other objects.

Additionally or alternatively, simply placing object 220 within a particular positional context could trigger a corresponding action. For example, referring to FIG. 1, placing object 220 within room 111a might trigger feeding, within room 111b sleep, within room 111c play activities, within room 111d a bath, within room 111e grooming, and within room 111f listening to music. These activities may then affect the parameters in state 227 as previously discussed.
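Both trigger paths, an accessory identifier read by accessory detector 236 or the room in which object 220 is placed, can feed a single action lookup. The tag identifiers and the rule that an accessory read takes precedence over room context are assumptions of this sketch; the room-to-action table follows the FIG. 1 example above.

```python
from typing import Optional

# Hypothetical tag identifiers; the room mapping follows the FIG. 1 example.
ACCESSORY_ACTIONS = {"tag-steak": "feed", "tag-brush": "groom",
                     "tag-soap": "bathe", "tag-trinket": "gift"}
ROOM_ACTIONS = {"111a": "feed", "111b": "sleep", "111c": "play",
                "111d": "bathe", "111e": "groom", "111f": "listen to music"}


def action_for(accessory_tag: Optional[str] = None,
               room: Optional[str] = None) -> Optional[str]:
    """Resolve an action; a recognized accessory read overrides room context."""
    if accessory_tag in ACCESSORY_ACTIONS:
        return ACCESSORY_ACTIONS[accessory_tag]
    return ROOM_ACTIONS.get(room)
```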

Besides storing the state of object 220 as state 227, memory 224 also contains a record of past interactions with object 220 as history 225. As shown in history 225, two events 226a-226b are already recorded. Event 226a describes that object 220 was bathed, with a timestamp of Monday at 8:00 pm. This event might be registered, for example, if the consumer moves object 220 to room 111d of FIG. 1, or if accessory object 290 representing a piece of soap is brought within close proximity to accessory detector 236 of object 220. Timer 222 may have been used to determine that the action occurred on Monday at 8:00 pm. Similarly, event 226b, describing that object 220 was fed a steak on Tuesday at 6:00 pm, may have been registered by moving object 220 to room 111a of FIG. 1 or by bringing accessory object 290 representing a steak within close proximity to accessory detector 236. These events might also be evaluated to influence the parameters in state 227, as previously described.
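History 225 can be thought of as an append-only log of timestamped events. The following sketch models events 226a-226b with Python dataclasses; the field names are hypothetical, and the concrete dates used in testing are arbitrary ones chosen only because they fall on a Monday and a Tuesday.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


@dataclass(frozen=True)
class Event:
    action: str            # e.g. "bathe", "feed"
    detail: Optional[str]  # e.g. "steak"
    timestamp: datetime    # as read from the real-time clock (timer 222)


@dataclass
class History:
    events: List[Event] = field(default_factory=list)

    def record(self, action: str, detail: Optional[str] = None,
               now: Optional[datetime] = None) -> Event:
        """Append an event stamped with `now` (defaults to the current time)."""
        event = Event(action, detail, now if now is not None else datetime.now())
        self.events.append(event)
        return event

    def last(self, action: str) -> Optional[Event]:
        """Most recently recorded event of the given kind, if any."""
        for event in reversed(self.events):
            if event.action == action:
                return event
        return None
```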

Of course, it should be noted that timer 222 is constantly updated to reflect the current time, which may also be used to determine the effect of any events on state 227. For example, object 220 may be preprogrammed with a particular optimal schedule, or how often it prefers to be bathed, fed, petted, and so forth. If, for example, timer 222 progresses to the point where the last feeding event was a week ago, this information might be used by processor 221 to decrease the happiness parameter of state 227, as the pet may prefer to be fed at least once a day. On the other hand, repeatedly feeding the pet at closely spaced time intervals may decrease the health parameter, as overfeeding the pet is not optimal either. In this manner, time contextual interactivity can be provided to the consumer, enabling richer and more realistic experiences.
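The schedule-dependent rules above (underfeeding lowers happiness, closely spaced feedings lower health) can be evaluated from feeding timestamps and the current time reported by timer 222. The one-day and four-hour thresholds and the penalty sizes in this sketch are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def feeding_effects(feed_times: List[datetime], now: datetime,
                    max_gap: timedelta = timedelta(days=1),
                    min_gap: timedelta = timedelta(hours=4)) -> Tuple[int, int]:
    """Return (happiness_delta, health_delta) from feeding timestamps.

    Going longer than max_gap without food costs happiness; consecutive
    feedings closer than min_gap count as overfeeding and cost health.
    """
    happiness_delta = health_delta = 0
    times = sorted(feed_times)
    if not times or now - times[-1] > max_gap:
        happiness_delta -= 10
    for earlier, later in zip(times, times[1:]):
        if later - earlier < min_gap:
            health_delta -= 5
    return happiness_delta, health_delta
```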

While object 220 may interface with virtual object 270, object 220 may not always have a continuous connection to the virtual world containing virtual object 270: the consumer might turn off the host computer providing the connection to network 245, network 245 might have connectivity issues, wireless signals might suffer strong interference, or other circumstances may end the availability of network communications over network 245. In this case, it may be desirable for the consumer to still interact with object 220 and any associated tangible environment, and still be credited for those interactions when network communications are restored. For this reason, history 225 may contain a record of events that occur offline, so that once network 245 is available again, state 277 of virtual object 270 can be synchronized with state 227 of object 220.

For example, as shown in FIG. 2, state 277 of virtual object 270 corresponding to object 220 is out of date, as the happiness and health parameters are only 30, whereas in object 220 the happiness and health parameters have increased by 20 to 50. This may be attributed to events 226a-226b occurring offline, where event 226a, bathing, raised the health parameter by 20 points, and where event 226b, feeding steak, raised the happiness parameter by 20 points. After object 220 reestablishes a connection to virtual object 270 via network 245, which was previously down but is now available, state 277 might also be updated to synchronize with state 227, also adding 20 points to the health and happiness parameters. Additionally, virtual position 271, representing the position of virtual object 270 in a corresponding virtual world, may also be updated to correspond to a position based on input readings from position sensor 231 of object 220, as previously described.
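The arithmetic of the FIG. 2 example can be checked directly: replaying the two offline events against the stale state 277 brings both parameters from 30 to 50. The effect table encodes the values stated above; the event names and helper functions are illustrative.

```python
from typing import Dict, Iterable

# Effects taken from the FIG. 2 example: bathing +20 health, steak +20 happiness.
EVENT_EFFECTS: Dict[str, Dict[str, int]] = {
    "bathe": {"health": 20},
    "feed steak": {"happiness": 20},
}


def net_effect(events: Iterable[str]) -> Dict[str, int]:
    """Sum the parameter changes caused by a sequence of offline events."""
    total: Dict[str, int] = {}
    for event in events:
        for param, delta in EVENT_EFFECTS.get(event, {}).items():
            total[param] = total.get(param, 0) + delta
    return total


def apply_effect(state: Dict[str, int], effect: Dict[str, int]) -> Dict[str, int]:
    """Return a new state with the effect added to each existing parameter."""
    return {param: value + effect.get(param, 0) for param, value in state.items()}
```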

This synchronization could also occur in the other direction. For example, an online friend might send a virtual gift to virtual object 270, causing the happiness parameter of state 277 to rise by 10 points. When object 220 reestablishes a connection to virtual object 270 over network 245, which was previously down but is now available, those additional 10 points of happiness might also be added to state 227 of object 220. In this manner, both online and offline play are supported, and actions within an offline or online context can be credited to the other context, thereby increasing consumer satisfaction and encouraging further play.
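Combining both directions, each side can keep the changes it accumulated since the last synchronization and apply only the other side's changes on reconnect. The sketch below uses the numbers from the two examples above (offline bath and steak, online gift of 10 happiness) and assumes, hypothetically, that both sides started from a synchronized 30/30 baseline.

```python
from typing import Dict

Params = Dict[str, int]


def add(a: Params, b: Params) -> Params:
    """Parameter-wise sum; missing parameters count as zero."""
    return {k: a.get(k, 0) + b.get(k, 0) for k in set(a) | set(b)}


baseline = {"happiness": 30, "health": 30}        # last synchronized values
physical_delta = {"health": 20, "happiness": 20}  # offline bath + steak on object 220
virtual_delta = {"happiness": 10}                 # online gift to virtual object 270

physical = add(baseline, physical_delta)  # state 227 before reconnecting
virtual = add(baseline, virtual_delta)    # state 277 before reconnecting

# On reconnect, each side applies only the other side's delta.
physical = add(physical, virtual_delta)
virtual = add(virtual, physical_delta)
```

Because additive deltas commute, both sides converge to the same values regardless of which update arrives first; parameters clamped to a range would need the clamp applied after merging to keep that property.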

Although FIG. 2 focuses on toy objects, the elements contained within object 220, including processor 221, timer 222, feedback system 223, memory 224, and sensors 230, might also be embedded in a corresponding environment, or tangible playset. In this manner, the playset, rather than the object, may gather sensor data and record events for exchanging with a virtual environment containing virtual object 270. Alternatively, the environment and the object may share and divide particular duties with regard to communications with a virtual environment. However, for simplicity, an embodiment where functions are primarily carried out by the toy object is presented in FIG. 2.

Moving to FIG. 3, FIG. 3 presents a diagram demonstrating a toy object providing a time contextual interaction, according to one embodiment of the present invention. Diagram 300 of FIG. 3 includes objects 320a-320b and accessory object 390. Object 320a includes timer 322, history 325a, state 327a, articulating part 329a, and LED 337a. History 325a includes event 326a. Object 320b includes history 325b, state 327b, articulating part 329b, and LED 337b. History 325b includes event 326b. With regard to FIG. 3, it should be noted that objects 320a-320b correspond to object 220 from FIG. 2, and that accessory object 390 corresponds to accessory object 290.

FIG. 3 presents an example time contextual interaction that a toy object may provide. Object 320a represents an initial state of the toy object, which is further explained through the information conveyed in timer 322, history 325a, and state 327a. As shown in history 325a, the last time the pet was fed was on Monday at 1:00 pm, whereas timer 322 indicates that the current time is Friday, 6:00 pm, or more than four days past the last time the pet was fed. This is also reflected in the low happiness parameter of 10 shown in state 327a, which may have been calculated using predetermined rules regarding preferred feeding frequency. Several visual indicators corresponding to elements of feedback system 223 of FIG. 2 also reflect the low happiness parameter, such as LED 337a glowing a depressed blue color on the cheeks, and articulating part 329a presenting droopy ears. While not shown in FIG. 3, a speaker embedded in object 320a might also, for example, play the sound of a grumbling stomach, or make a verbal request for food.

Responding to the indicators, the consumer might bring accessory object 390, representing a steak, in close proximity to object 320a to simulate feeding the pet. As previously mentioned, various methods of detecting accessory object 390 such as RFID tags may be utilized. Once accessory object 390 is identified and processed by object 320a, object 320a may transition to the state shown by object 320b. As shown by state 327b, the happiness parameter has increased drastically from 10 to 80, the action of feeding is noted in history 325b as event 326b, and the visual indicators change to indicate the state of happiness, with LED 337b glowing a bright red and articulating part 329b presenting perked up ears. Although history 325b shows that event 326b replaces event 326a from history 325a, alternative embodiments may preserve both events, which may be used, for example, to detect whether overfeeding has occurred by analyzing the frequency of feeding events over time. In this manner, a toy object can provide various time contextual interactions, providing deeper game play mechanics than objects focusing on canned and immediate responses.
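The FIG. 3 interaction can be summarized as an elapsed-time check plus a mapping from the happiness parameter to the feedback indicators. The threshold of 50 separating the two indicator sets is a hypothetical value; the colors, ear poses, and the Monday-to-Friday gap come from the example above, placed on arbitrary calendar dates in the test.

```python
from datetime import datetime
from typing import Tuple


def days_since(last: datetime, now: datetime) -> float:
    """Elapsed time in days between a history timestamp and the timer reading."""
    return (now - last).total_seconds() / 86400.0


def indicators(happiness: int, threshold: int = 50) -> Tuple[str, str]:
    """Map happiness to (cheek LED color, ear pose); the threshold is hypothetical."""
    if happiness >= threshold:
        return ("bright red", "perked up")
    return ("blue", "droopy")
```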

Moving to FIG. 4, FIG. 4 shows a flowchart describing the steps, according to one embodiment of the present invention, by which a toy object can be used with an environment and connected to a virtual world corresponding to the environment. Certain details and features have been left out of flowchart 400 that are apparent to a person of ordinary skill in the art. For example, a step may comprise one or more substeps or may involve specialized equipment or materials, as known in the art. While steps 410 through 440 indicated in flowchart 400 are sufficient to describe one embodiment of the present invention, other embodiments of the invention may utilize steps different from those shown in flowchart 400.

Referring to step 410 of flowchart 400 in FIG. 4 and diagram 100 of FIG. 1, step 410 of flowchart 400 comprises object 120 establishing a first connection with virtual environment 160 hosted on server 150 via host computer 140 and network 145, wherein virtual environment 160 contains virtual object 170 corresponding to object 120. For example, physical environment 110 may include a USB connector connecting directly to host computer 140, which in turn connects to a broadband modem to access network 145, which may comprise the public Internet. As previously described, FIG. 1 only shows one particular method of connectivity, and other methods, such as wirelessly connecting to network 145, might also be utilized.

Referring to step 420 of flowchart 400 in FIG. 4, diagram 100 of FIG. 1, and diagram 200 of FIG. 2, step 420 of flowchart 400 comprises object 220, corresponding to object 120, updating state 277 of virtual object 270, corresponding to virtual object 170, using the first connection established in step 410 and history 225. In other words, timer 222 is compared to the timestamps of events 226a-226b recorded in history 225 to determine a net effect to apply to state 227, which also applies to state 277 of virtual object 270. Of course, there may also be events that are evaluated the same regardless of timer 222, in which case timer 222 may not need to be consulted. The net effect can then be sent over network 245 using the first connection established previously in step 410, so that state 277 of virtual object 270 can be correspondingly updated.
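Step 420 might be realized by summing the effects of history entries whose timestamps fall between the last successful synchronization and the current time from timer 222, then sending that sum as the net effect. Tracking a last-synchronization time is an assumption of this sketch rather than something the flowchart specifies, as are the names and effect values.

```python
from datetime import datetime
from typing import Dict, List, Tuple

# Hypothetical effect table; a history entry is (action, timestamp).
EFFECTS: Dict[str, Dict[str, int]] = {"bathe": {"health": 20}, "feed": {"happiness": 20}}


def net_effect_since(history: List[Tuple[str, datetime]],
                     last_sync: datetime, now: datetime) -> Dict[str, int]:
    """Sum effects of events stamped after last_sync and no later than now.

    Comparing timestamps against the timer keeps events that were already
    sent from being credited twice.
    """
    total: Dict[str, int] = {}
    for action, stamp in history:
        if last_sync < stamp <= now:
            for param, delta in EFFECTS.get(action, {}).items():
                total[param] = total.get(param, 0) + delta
    return total
```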

Referring to step 430 of flowchart 400 in FIG. 4, diagram 100 of FIG. 1, and diagram 200 of FIG. 2, step 430 of flowchart 400 comprises object 120 determining a position of object 120 using position sensor 231. As previously discussed, position sensor 231 may use a variety of technologies to implement three-dimensional position and orientation detection. After step 430, object 120 may determine that it is positioned in room 111a of physical environment 110 and oriented facing outwards towards the consumer.

Referring to step 440 of flowchart 400 in FIG. 4, diagram 100 of FIG. 1, and diagram 200 of FIG. 2, step 440 of flowchart 400 comprises object 120 sending the position determined in step 430 using the first connection established in step 410 to cause a position of virtual object 170 in virtual environment 160 to correspond to the position of object 120 in physical environment 110. As previously described, this could also occur on a continuous basis so that the movements of object 120 and virtual object 170 appear to be synchronized in real-time to the consumer. In this manner, position tracked toy objects providing time contextual interactivity can be used in both offline and online contexts.
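For steps 430 and 440, a continuous update loop can avoid flooding the connection by sending the position only when it changes. In this sketch, `send` stands in for the first connection from step 410, headings are rounded to whole degrees, and the JSON field names form a hypothetical message schema.

```python
import json
from typing import Callable, Optional, Tuple


class PositionReporter:
    """Sends a position update over a connection only when the pose changes."""

    def __init__(self, object_id: str, send: Callable[[str], None]) -> None:
        self.object_id = object_id
        self.send = send
        self._last: Optional[Tuple[str, int]] = None

    def report(self, room: str, heading_deg: float) -> bool:
        """Send the pose if it differs from the last one sent; return True if sent."""
        pose = (room, round(heading_deg))
        if pose == self._last:
            return False
        self._last = pose
        self.send(json.dumps({"object": self.object_id, "room": room, "heading": pose[1]}))
        return True
```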

For example, to extend the example flowchart 400 shown in FIG. 4, additional steps might be added to emphasize the tracking of offline activity and subsequent credit given online. The first connection might be explicitly terminated, an input might be detected from the variety of sensors contained in sensors 230 of FIG. 2, history 225 may be correspondingly updated with new events, and a new second connection may be established with virtual object 270 over network 245. State 277 may then be updated using this second connection to reflect the newly added events in history 225. Additionally, as previously discussed, this process may also proceed in the other direction, where activity occurring to the virtual object in the online context can be credited to the tangible object when connected online.
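The extended sequence (terminate the first connection, record sensor input offline, open a second connection, update state 277) can be simulated with two small classes. `Connection` and `ToyObject` are hypothetical stand-ins; the point illustrated is that pending events are cleared only after the virtual side accepts them, so offline play is neither lost nor credited twice.

```python
from typing import Dict, List, Tuple


class Connection:
    """Hypothetical stand-in for a link to the virtual world's state 277."""

    def __init__(self, server_state: Dict[str, int]) -> None:
        self.server_state = server_state
        self.is_open = True

    def update_state(self, delta: Dict[str, int]) -> None:
        if not self.is_open:
            raise ConnectionError("connection is closed")
        for param, change in delta.items():
            self.server_state[param] = self.server_state.get(param, 0) + change

    def close(self) -> None:
        self.is_open = False


class ToyObject:
    """Keeps events recorded while offline until a connection accepts them."""

    def __init__(self) -> None:
        self.pending: List[Tuple[str, int]] = []

    def on_sensor_input(self, param: str, change: int) -> None:
        self.pending.append((param, change))

    def sync(self, conn: Connection) -> None:
        delta: Dict[str, int] = {}
        for param, change in self.pending:
            delta[param] = delta.get(param, 0) + change
        conn.update_state(delta)  # raises before clearing if the link is down
        self.pending.clear()
```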

From the above description of the invention it is manifest that various techniques can be used for implementing the concepts of the present invention without departing from its scope. Moreover, while the invention has been described with specific reference to certain embodiments, a person of ordinary skill in the art would recognize that changes can be made in form and detail without departing from the spirit and the scope of the invention. As such, the described embodiments are to be considered in all respects as illustrative and not restrictive. It should also be understood that the invention is not limited to the particular embodiments described herein, but is capable of many rearrangements, modifications, and substitutions without departing from the scope of the invention.

Claims

- A virtual gaming system configured to interact with a virtual environment accessible by a host system, the virtual gaming system comprising: a physical environment; and a physical object having an appearance that resembles an appearance of a virtual object in the virtual environment, wherein the physical object includes a physical object identifier; wherein the physical environment is configured to: wirelessly sense a position of the physical object in relation to the physical environment by identifying the physical object identifier using at least one of infrared, radio frequency identification (RFID), electromagnetics, electrical fields and optics; and communicate a first information regarding the physical object, the first information including the position of the physical object in relation to the physical environment, to the host system; and wirelessly store a second information to a memory within the physical object.

- The virtual gaming system of claim 1, wherein the physical object further comprises an interface for a sensor to indicate the position of the physical object in relation to the physical environment.

- The virtual gaming system of claim 2, wherein the interface comprises a radio frequency identification (RFID) interface, and wherein the physical environment contains an RFID sensor to read the first information from the RFID interface.

- The virtual gaming system of claim 3, wherein the memory within the physical object is configured to store the first information regarding the physical object.

- The virtual gaming system of claim 4, wherein the RFID sensor is configured to read the first information from the memory.

- The virtual gaming system of claim 1, wherein the virtual object is a character in the virtual environment.

- The virtual gaming system of claim 1, wherein the physical environment is configured to wirelessly read the first information from the memory of the physical object and transmit the first information to the host system.

- The virtual gaming system of claim 7, wherein the virtual object is a character in the virtual environment, and wherein the first information read from the memory and transmitted to the host system updates a state of the character in the virtual environment.

- The virtual gaming system of claim 1, further comprising a physical accessory of the physical object, wherein the physical accessory includes a physical accessory identifier.

- The virtual gaming system of claim 9, wherein the physical environment is further configured to wirelessly sense the physical accessory using the physical accessory identifier.

- The virtual gaming system of claim 10, wherein the physical environment is configured to wirelessly sense the physical accessory identifier using at least one of infrared, radio frequency identification (RFID), electromagnetics, electrical fields and optics.

- The virtual gaming system of claim 10, wherein the physical object includes an accessory detector configured to detect the physical accessory.

- The virtual gaming system of claim 12, wherein the physical environment is further configured to: wirelessly obtain a third information from the physical object relating to detecting the physical accessory; and communicate the third information relating to detecting the physical accessory to the host system.

- The virtual gaming system of claim 13, wherein a virtual accessory object corresponding to the physical accessory is displayed in the virtual environment.

- The virtual gaming system of claim 14, wherein the physical accessory is a physical furniture in the physical environment with a corresponding virtual furniture in the virtual environment.

- The virtual gaming system of claim 10, wherein the physical environment is configured to communicate a third information regarding the physical accessory to the host system.

- The virtual gaming system of claim 1, wherein the physical environment is configured to wirelessly receive the second information from the host system and write the second information to the memory of the physical object.

- The virtual gaming system of claim 17, wherein the virtual object is a character in the virtual environment, and wherein the second information written to the memory comprises event information corresponding to activities of the character in the virtual environment.

- The virtual gaming system of claim 1, wherein the physical environment is configured to be operably connected to the host system through one of a universal serial bus (USB) connection and a wireless connection.

- The virtual gaming system of claim 1, wherein the host system is a computer.

- The virtual gaming system of claim 1, wherein the host system is a console.

- The virtual gaming system of claim 1, further comprising a computer-readable medium configured to operate with the host system to access the virtual environment.

- The virtual gaming system of claim 1, wherein the position of the physical object in relation to the physical environment corresponds to an area in the virtual environment.

- A physical object for use in a virtual gaming system comprising a physical environment operably connectable to a host system, and a virtual environment rendered by the host system corresponding to the physical environment, the physical object having an appearance that resembles an appearance of a virtual object in the virtual environment, the physical object comprising: a physical object identifier configured to wirelessly indicate a position of the physical object in relation to the physical environment using at least one of infrared, radio frequency identification (RFID), electromagnetics, electrical fields and optics; and a memory; wherein the physical object is configured to provide a first information regarding the physical object, the first information including the position of the physical object in relation to the physical environment, to the host system by way of the physical environment; and wherein the memory is configured to wirelessly store a second information received from the host system by way of the physical environment.

- The physical object of claim 24, further comprising an interface for a sensor to indicate the position of the physical object in relation to the physical environment.

- The physical object of claim 25, wherein the interface comprises a radio frequency identification (RFID) interface, and wherein the physical environment contains an RFID sensor to read the first information from the RFID interface.

- The physical object of claim 26, wherein the memory is configured to store the first information regarding the physical object.

- The physical object of claim 27, wherein the RFID sensor is configured to read the first information from the memory.

- The physical object of claim 24, wherein the virtual object is a character in the virtual environment.

- The physical object of claim 29, wherein the first information is read from the memory and transmitted to the host system to update a state of the character in the virtual environment.

- The physical object of claim 24, further comprising a physical accessory including a physical accessory identifier configured to wirelessly indicate a presence of the physical accessory using at least one of infrared, radio frequency identification (RFID), electromagnetics, electrical fields and optics.

- The physical object of claim 31, wherein the physical object includes an accessory detector configured to detect the physical accessory.

- The physical object of claim 24, wherein the second information written to the memory comprises event information corresponding to activities of the character in the virtual environment.

- The physical object of claim 24, wherein the position of the physical object in relation to the physical environment corresponds to an area in the virtual environment.

- A virtual gaming system configured to interact with a virtual environment accessible by a host system, the virtual gaming system comprising: a physical environment; and a physical object having an appearance that resembles an appearance of a virtual object in the virtual environment, wherein the physical object includes a physical object identifier; wherein the physical environment is configured to: wirelessly sense a position of the physical object in relation to the physical environment by identifying the physical object identifier using at least one of infrared, radio frequency identification (RFID), electromagnetics, electrical fields and optics; and communicate a first information regarding the physical object, the first information including the position of the physical object in relation to the physical environment, to the host system.

- The virtual gaming system of claim 35, wherein the physical object further comprises an interface for a sensor to indicate the position of the physical object in relation to the physical environment.

- The virtual gaming system of claim 36, wherein the interface comprises a radio frequency identification (RFID) interface, and wherein the physical environment contains an RFID sensor to read the first information from the RFID interface.

- The virtual gaming system of claim 35, wherein the physical environment is configured to wirelessly receive a second information from the host system and write the second information to the memory of the physical object.

- The virtual gaming system of claim 38, wherein the virtual object is a character in the virtual environment, and wherein the second information written to the memory comprises event information corresponding to activities of the character in the virtual environment.
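The round trip recited in claim 1 (the playset senses which room a toy's identifier is in, sends that "first information" to the host, and the host's reply is stored as "second information" in the toy's memory) can be illustrated with a minimal Python sketch. This is not the patented implementation: the class and method names (`PhysicalObject`, `PhysicalEnvironment`, `HostSystem`, `place`, `sense_position`, `sync`) are hypothetical, the wireless RFID sensing and writing are simulated with in-memory sets and dictionaries, and the room labels `111a`/`111b` and object label `figure-120` merely borrow reference numerals from FIG. 1.

```python
from dataclasses import dataclass, field


@dataclass
class PhysicalObject:
    """Hypothetical model of a tracked toy: an identifier plus a small writable memory."""
    object_id: str
    memory: dict = field(default_factory=dict)


@dataclass
class HostSystem:
    """Hypothetical stand-in for the host that renders the virtual environment."""
    # Maps each physical room to the corresponding area of the virtual environment.
    room_to_area: dict
    virtual_positions: dict = field(default_factory=dict)

    def receive(self, first_info: dict) -> dict:
        # Move the matching virtual object to the area corresponding to the sensed room.
        area = self.room_to_area[first_info["position"]]
        self.virtual_positions[first_info["object_id"]] = area
        # Reply with "second information" (here, a simple event record) for the toy's memory.
        return {"last_event": f"entered {area}"}


class PhysicalEnvironment:
    """Hypothetical playset with one simulated reader per room."""

    def __init__(self, rooms):
        # Each reader holds the set of identifiers it currently detects.
        self.readers = {room: set() for room in rooms}
        self.objects = {}

    def place(self, obj: PhysicalObject, room: str) -> None:
        # Simulates the user moving the toy: it leaves every other reader's range.
        for tags in self.readers.values():
            tags.discard(obj.object_id)
        self.readers[room].add(obj.object_id)
        self.objects[obj.object_id] = obj

    def sense_position(self, object_id: str):
        # The position is the room whose reader detects the identifier, or None.
        for room, tags in self.readers.items():
            if object_id in tags:
                return room
        return None

    def sync(self, object_id: str, host: HostSystem) -> None:
        room = self.sense_position(object_id)
        if room is None:
            return
        first_info = {"object_id": object_id, "position": room}
        second_info = host.receive(first_info)
        # Simulated wireless write of the host's reply into the toy's memory.
        self.objects[object_id].memory.update(second_info)


if __name__ == "__main__":
    toy = PhysicalObject("figure-120")
    playset = PhysicalEnvironment(["111a", "111b"])
    host = HostSystem(room_to_area={"111a": "virtual room A", "111b": "virtual room B"})
    playset.place(toy, "111a")
    playset.sync("figure-120", host)
    print(host.virtual_positions)  # {'figure-120': 'virtual room A'}
    print(toy.memory)              # {'last_event': 'entered virtual room A'}
```

Keeping the room-to-area mapping on the host side mirrors claim 23, where a sensed physical position corresponds to an area in the virtual environment; the playset only reports which reader saw the identifier.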

Disclaimer: Data is collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.