U.S. Pat. No. 9,492,748

VIDEO GAME APPARATUS, VIDEO GAME CONTROLLING PROGRAM, AND VIDEO GAME CONTROLLING METHOD

Assignee: KABUSHIKI KAISHA SEGA

Issue Date: November 29, 2012

Abstract

A video game apparatus includes a depth sensor configured to capture an area where a player exists and acquire depth information for each pixel of the image; and a gesture recognition unit configured to divide the image into a plurality of sections, to calculate statistics information of the depth information for each of the plurality of sections, and to recognize a gesture of the player based on the statistics information.

Description

BEST MODE FOR CARRYING OUT THE INVENTION

In order to avoid instability in the bone structure tracking described in the above patent document 1, it is possible to add a dead zone to the calculation. However, with a dead zone, a slight change in the posture, a weight shift, or the like may be missed. In this case, the bone structure tracking is not applicable to a video game requiring delicate control. For example, in a video game operable by gestures of a player to control a surfboard, a skateboard, or the like, the face of the player is turned right or left by 90 degrees relative to the camera or the depth sensor and the body of the player faces sideways while playing the game. In such a video game operated by gestures of the player, there may be a case where a slight change in the posture, a weight shift, or the like is missed when measuring a body member positioned on the side of the player opposite to the camera or the sensor, because such a slight change is difficult to detect there.

Therefore, it is important to accurately recognize the gestures of the player irrespective of the direction of the player relative to the depth sensor.

Hereinafter, embodiments of the present invention are described in detail.

FIG. 1 illustrates a structure example of a video game apparatus of a first embodiment of the present invention.

Referring to FIG. 1, the video game apparatus 1 includes a control unit 101, a memory 108, a camera 111, a depth sensor 112, a microphone 113, an image output unit 114, a sound output unit 115, a communication unit 116, a monitor 117, and a speaker 118. The camera 111, the depth sensor 112, the monitor 117, and the speaker 118 are illustrated so as to be included in the video game apparatus 1. However, the camera 111, the depth sensor 112, the monitor 117, and the speaker 118 may be provided outside the video game apparatus 1 and connected to the video game apparatus 1 by a cable or the like.

The control unit 101 is formed by a central processing unit (CPU) or the like. The control unit 101 performs a main control operation in conformity with a computer program.

The memory 108 stores data as a work area for the control unit 101.

The camera 111 captures an image of a space where a player exists to acquire image information. The data structure is described later.

The depth sensor 112 emits infrared light or the like into the space where the player exists and captures an image of the space. Then, the depth sensor 112 acquires depth information (distance information) for each pixel of the captured image (e.g., FIGS. 5A, 5B, and 5C) from the time of flight between transmission of the infrared light and receipt of the reflected infrared light. The data structure is described later.
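The time-of-flight relation mentioned above can be sketched as follows. This is a minimal illustration that assumes the measured time is a round trip and that the light travels at the vacuum speed of light; the function name is hypothetical, and the text does not say how the depth sensor 112 performs the conversion internally.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value, used here as an approximation for air


def depth_from_time_of_flight(round_trip_seconds: float) -> float:
    """Return the distance in metres to the reflecting surface.

    The light travels to the object and back, so the one-way distance
    is half of the total path length.
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0
```

Under these assumptions, a round trip of 20 nanoseconds corresponds to a surface about 3 metres from the sensor.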

The microphone 113 receives sound from a space where the player ordinarily exists.

The image output unit 114 generates an image signal to be output to the monitor 117.

The sound output unit 115 generates a sound signal to be output to the speaker 118.

The communication unit 116 performs wired or wireless data transmission with another apparatus or a network.

Meanwhile, the control unit 101 includes, as functional units, a camera and depth sensor control unit 102, a player tracking unit 103, a bone structure tracking unit 104, a gesture recognition unit 105, a sound recognition unit 106, and a game progression control unit 107.

The camera and depth sensor control unit 102 controls the camera 111 and the depth sensor 112, and stores the image information 109 captured by the camera 111 and the depth information 110 acquired from the depth sensor 112 in the memory 108. As illustrated in FIG. 2A, the image information 109 is image data of Red, Green, and Blue (RGB) for each pixel. As illustrated in FIG. 2B, the depth information 110 and a flag indicative of the player corresponding to the depth data are stored in the memory 108. The depth information 110 indicates the distance between the depth sensor 112 and the surface of an object to be measured. The flag is, for example, “1” for player #1, “2” for player #2, and “3” for another object. The depth information 110 and the flag are stored in correspondence with each pixel of the captured image. The flag of the depth information is set by the player tracking unit 103. The flag has no value when the depth data are first acquired from the depth sensor 112. The resolution of the image information 109 need not be the same as the resolution of the depth information 110.
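The per-pixel layout described for FIG. 2B can be sketched as below. This is only an illustrative data structure: the class and function names are hypothetical, the depth unit is not stated in the text, and an empty flag is represented by `None` as an assumption.

```python
from dataclasses import dataclass
from typing import List, Optional

# Flag values taken from the example in the text.
PLAYER_1 = 1
PLAYER_2 = 2
OTHER_OBJECT = 3


@dataclass
class DepthPixel:
    depth: int                  # distance from the sensor to the measured surface
    flag: Optional[int] = None  # empty until the player tracking step fills it in


def make_depth_frame(depths: List[List[int]]) -> List[List[DepthPixel]]:
    """Build a frame as it arrives from the sensor: depths only, no flags."""
    return [[DepthPixel(d) for d in row] for row in depths]


def set_flags(frame: List[List[DepthPixel]], flags: List[List[int]]) -> None:
    """Attach a flag to every pixel, as the player tracking unit would."""
    for row_pixels, row_flags in zip(frame, flags):
        for pixel, flag in zip(row_pixels, row_flags):
            pixel.flag = flag
```

Keeping the flag next to the depth value mirrors the statement that both are stored in correspondence with each pixel.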

Referring back to FIG. 1, the player tracking unit 103 tracks the depth information 110 stored in the memory 108 (when necessary, the image information 109 may be used), and sets the flag for identifying the players. For example, after recognizing that the player is a specific player (player #1 or player #2) using the gestures, a silhouette (a portion recognized as a foreground rather than a background) of the specific player is tracked. Then, the flag indicative of the specific player is set in the depth information 110 which is assumed to be included in the silhouette of that specific player. If the depth information 110 is not assumed to belong to the silhouette of a player, the flag is set so as to indicate this. The player tracking unit 103 may be included in the bone structure tracking unit 104.

The bone structure tracking unit 104 recognizes the characteristic ends (e.g., the head, an arm, the body, a leg, or the like) of the player based on the depth information 110 (when necessary, the image information 109 may be used instead) stored in the memory 108. The bone structure tracking unit 104 presumes the positions of bones and joints inside the body of the player and traces movements of the positions of the bones (ossis) and the joints (arthro).

The gesture recognition unit 105 recognizes the gestures based on statistics information of the depth information 110 stored in the memory 108 (when necessary, the image information 109 may be used instead). At this time, the gesture recognition unit 105 uses the result of the tracking by the bone structure tracking unit 104, and the image is divided in advance into plural sections (e.g., four sections). This is described in detail later.

The sound recognition unit 106 recognizes sound using sound information received by the microphone 113.

The game progression control unit 107 controls a game progression based on results obtained by the bone structure tracking unit 104, the gesture recognition unit 105, and the sound recognition unit 106.

FIG. 3 is a flow chart illustrating exemplary processes of gesture recognition and game control. When there are plural players, the gesture recognition and the game control are performed for each player. The process illustrated in FIG. 3 is performed for each frame (e.g., a unit of updating screens for displaying game images).

Referring to FIG. 3, after the process is started in step S101, the gesture recognition unit 105 acquires the depth information 110 from the memory 108 in step S102.

Next, the gesture recognition unit 105 calculates dividing positions of the image of the depth information 110 in step S103.

FIG. 4 is a flow chart illustrating an exemplary process of calculating the dividing positions (step S103 illustrated in FIG. 3). Here, one coordinate is acquired as the dividing position for dividing the image into four sections. If the image of the depth information is divided into more sections, plural coordinates are acquired as dividing positions. For example, in a case of 2 (the lateral direction) by 4 (the longitudinal direction) sections, the sections divided at the above dividing position are each further divided into two subsections in the longitudinal direction at new dividing positions.

In this case, for example, the vertically divided sections may be simply divided into two subsections. Instead, weighted centers of the sections may be determined based on area centers acquired from depth information of the sections, and the subsections are further divided at the weighted center on the ordinate. The upper section may be divided at the position of the neck on the upper body side, and at the position of the knees on the lower body side.

Referring to FIG. 4, after the process is started in step S201, the depth information of the player is specified using the flags of data of the depth information 110 in step S202.

Next, the area center (the weighted center) of the depth information of the silhouette of the player is calculated in step S203. For example, provided that the abscissa of the image is represented by a value x and the ordinate of the image is represented by a value y, the values x and y of the pieces of the depth information 110 corresponding to the player indicated by the flag are picked up. The sum of the values x is divided by the number of those pieces of the depth information 110 to acquire the value on the abscissa (the x axis). The sum of the values y is divided by the number of those pieces of the depth information 110 to acquire the value on the ordinate (the y axis).
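The area center calculation of step S203 can be sketched as follows, assuming the divisor is the number of pixels flagged as the player. The function name and the grid-of-flags representation are hypothetical.

```python
from typing import List, Optional, Tuple


def area_center(flags: List[List[int]], player_flag: int) -> Optional[Tuple[float, float]]:
    """Return the (x, y) area center of all pixels carrying player_flag.

    flags[y][x] holds the flag of the pixel at column x, row y.
    Returns None when no pixel belongs to the player.
    """
    sum_x = 0
    sum_y = 0
    count = 0
    for y, row in enumerate(flags):
        for x, flag in enumerate(row):
            if flag == player_flag:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count)
```

For example, a silhouette occupying columns 1 and 2 of a two-row image has its area center midway between those columns and midway between the rows.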

Then, the depth value at the calculated area center is acquired in step S204. Said differently, the depth data of the depth information 110 corresponding to the coordinate of the area center are referred to.

Next, the depth information 110 of the player residing within predetermined plus and minus value ranges from the acquired depth value is selected by filtering in step S205. The depth data are referred to in order to filter the depth information 110 corresponding to the player indicated by the flag, removing the pieces of the depth information 110 outside the predetermined value ranges. The filtering is provided to remove noise caused by an obstacle existing in front of or behind the player.

Next, the area center of the depth information 110 after the filtering is calculated to acquire the dividing positions in step S206. Thereafter, the process is completed in step S207.
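Steps S202 to S206 can be put together in a short sketch. Several details here are assumptions rather than statements from the text: the area center is rounded to the nearest pixel to look up its depth, a single symmetric `tolerance` stands in for the "predetermined plus and minus value ranges", and all names are hypothetical.

```python
from typing import List, Optional, Tuple

Point = Tuple[int, int]


def centroid(points: List[Point]) -> Tuple[float, float]:
    """Mean x and mean y of a non-empty list of pixel coordinates."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def dividing_position(depth: List[List[int]], flags: List[List[int]],
                      player_flag: int, tolerance: int) -> Optional[Tuple[float, float]]:
    # S202: pick out the pixels flagged as this player.
    player = [(x, y) for y, row in enumerate(flags)
              for x, flag in enumerate(row) if flag == player_flag]
    if not player:
        return None
    # S203: area center of the unfiltered silhouette.
    cx, cy = centroid(player)
    # S204: depth at the area center (nearest pixel, an assumption).
    center_depth = depth[int(cy + 0.5)][int(cx + 0.5)]
    # S205: keep only pixels whose depth is close to the center depth.
    kept = [(x, y) for x, y in player
            if abs(depth[y][x] - center_depth) <= tolerance]
    if not kept:
        return None
    # S206: the area center after filtering is the dividing position.
    return centroid(kept)
```

In a frame where one column of flagged pixels is much nearer to the sensor than the rest, that column is dropped in S205 and the dividing position shifts toward the remaining silhouette.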

Referring back to FIG. 3, the gesture recognition unit 105 performs the area divisions of the image at the acquired dividing positions in step S104. Specifically, the acquired dividing positions are used as the coordinate values for the area divisions.

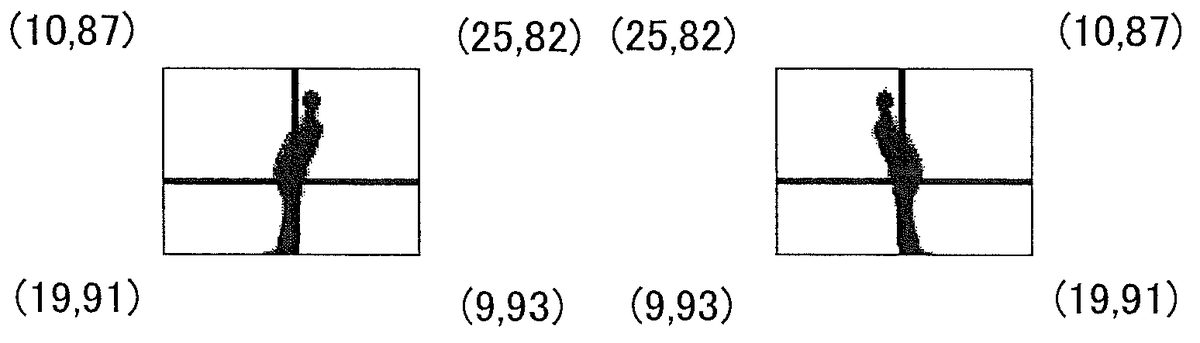

Next, the gesture recognition unit 105 calculates statistics information of the depth information 110 after the filtering for each divided section in step S105. FIGS. 5A and 5B illustrate examples of calculated statistics information. The statistics information for different postures is illustrated in FIGS. 5A and 5B. Referring to FIGS. 5A and 5B, two numbers enclosed in parentheses are shown at each of the four corners. The first number represents the area value (the number of pieces of the depth information 110 of the silhouette of the player in the corresponding section). The second number represents the average depth value (the average value of the depth values of the depth information 110) of the silhouette of the player in the corresponding section. The statistics information is not limited to this example.
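The per-section statistics of step S105 can be sketched for the four-section case. The sketch assumes image coordinates with y increasing downward, treats pixels exactly on a dividing line as belonging to the lower or right section, and uses a boolean mask for the filtered silhouette; these choices and all names are illustrative only.

```python
from typing import Dict, List, Optional, Tuple

SECTIONS = ("upper_left", "upper_right", "lower_left", "lower_right")


def section_statistics(depth: List[List[int]], mask: List[List[bool]],
                       cx: float, cy: float) -> Dict[str, Tuple[int, Optional[float]]]:
    """Return {section: (area value, average depth)} around the point (cx, cy).

    The area value is the number of silhouette pixels in the section.
    The average depth is None for a section containing no silhouette pixel.
    """
    totals = {name: [0, 0] for name in SECTIONS}  # [pixel count, depth sum]
    for y, row in enumerate(mask):
        for x, inside in enumerate(row):
            if not inside:
                continue
            name = ("upper" if y < cy else "lower") + "_" + ("left" if x < cx else "right")
            totals[name][0] += 1
            totals[name][1] += depth[y][x]
    return {name: (count, total / count if count else None)
            for name, (count, total) in totals.items()}
```

Each returned pair corresponds to one of the parenthesized number pairs shown at the corners of FIGS. 5A and 5B.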

Referring back to FIG. 3, the gesture recognition unit 105 stores the calculated statistics information inside the video game apparatus 1 in step S106.

Next, the gesture recognition unit 105 calculates differences between the previously stored statistics information and the currently stored statistics information for each divided section in step S107. FIG. 5C illustrates exemplary differences between the values of the previously stored statistics information at the four corners in FIG. 5A and the values of the currently stored statistics information at the four corners in FIG. 5B.

Referring back to FIG. 3, the gesture recognition unit 105 calculates the result of the gesture recognition based on the previously stored statistics information and the currently stored statistics information for each divided section and outputs the result to the game progression control unit 107 in step S108. Thereafter, the process returns to acquiring the depth information in step S102. In the example illustrated in FIG. 5C, the area value of the upper left section increases, the area value of the upper right section decreases, and the increments of the area values of the lower left and the lower right sections are small. Therefore, it is possible to recognize that the player bends his or her body in the left direction on FIG. 5C (the player bows). The amount of the bowing is proportional to the increment of the area value of the upper left section. The amount may further include the decrement (the sign inversion of the increment) of the area value of the upper right section. The difference of the average value of the depth values may be included in calculating the result of the gesture recognition.
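The difference calculation of step S107 and the bowing amount described above can be sketched as follows. The proportionality constant `gain` and the function names are hypothetical, and the sample values in the usage note are invented for illustration, not taken from the figures.

```python
from typing import Dict, Tuple

Stats = Dict[str, Tuple[float, float]]  # section -> (area value, average depth)


def statistics_difference(previous: Stats, current: Stats) -> Stats:
    """Per-section increment of the area value and of the average depth."""
    return {name: (current[name][0] - previous[name][0],
                   current[name][1] - previous[name][1])
            for name in current}


def bow_amount(diff: Stats, include_upper_right: bool = True, gain: float = 1.0) -> float:
    """Bowing amount, proportional to the upper-left area increment.

    Optionally adds the decrement (sign-inverted increment) of the
    upper-right area value, as the text allows.
    """
    amount = diff["upper_left"][0]
    if include_upper_right:
        amount += -diff["upper_right"][0]
    return gain * amount
```

With a previous frame of (20, 89), (18, 90), (11, 96), (10, 95) and an invented current frame of (26, 85), (12, 92), (12, 96), (10, 95), the upper-left area grows by 6 and the upper-right area shrinks by 6, giving a bowing amount of 12 with the default settings.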

Referring back to FIG. 3, after the game progression control unit 107 acquires the result of the gesture recognition, the game progression control unit 107 corrects the result in conformity with a correction parameter previously acquired for each type of gesture in step S109. For example, when bowing and rolling back are compared, the range of the rolling back is ordinarily smaller than the range of the bowing for many players. If similar degrees of operation are required for the bowing and the rolling back, as in a surfboard game, the operations by the bowing and the operations by the rolling back do not match if the raw result of the gesture recognition is used. Therefore, a correction parameter may be calculated using the results of the gesture recognition in the bowing and the rolling back. By multiplying the result of the gesture recognition by the correction parameter, the result of the gesture recognition in the rolling back can be corrected. Thus, the operations corresponding to the bowing and the rolling back can be made even. When the correction parameter for the bowing and the rolling back is calculated and the correction is applied, it is necessary to know the facing direction (left or right) of the player in order to determine whether the player bows or rolls back. In order to determine the direction of the player, it is possible to use information output by the bone structure tracking unit 104. The bone structure tracking unit 104 continuously tracks the three-dimensional positions of the bones and joints of the player. Therefore, it is possible to determine the facing direction (right or left) of the player relative to the direction of the depth sensor. Detailed calculation of the correction parameter is described later.
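The multiplication described for step S109 can be sketched as below. The gesture labels and the function name are hypothetical, and the sketch assumes that only the rolling-back result is scaled while the bowing result passes through unchanged.

```python
def corrected_result(raw_amount: float, gesture: str, correction_parameter: float) -> float:
    """Scale a rolling-back result so that it is comparable to a bowing result.

    gesture is "bow" or "roll_back" (illustrative labels). Deciding which of
    the two applies requires the facing direction of the player, which this
    sketch takes as already resolved.
    """
    if gesture == "roll_back":
        return raw_amount * correction_parameter
    return raw_amount
```

With a correction parameter of 2.0, a raw rolling-back amount of 5.0 is treated as 10.0, while a bowing amount of 5.0 stays at 5.0.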

Next, the game progression control unit 107 controls game progression based on the result of the gesture recognition (if a correction is done, the result of the gesture recognition after the correction) in step S110. FIGS. 6A and 6B illustrate exemplary game control. Referring to FIGS. 6A and 6B, a character 21 riding on a surfboard 22 moves in association with movement of a player. If the posture of the player illustrated in FIG. 5A corresponds to a game screen illustrated in FIG. 6A, when the player bows as illustrated in FIG. 5B, the character 21 bows as illustrated in FIG. 6B. Then, the surfboard 22 can be turned rightward.

FIG. 7 is a flowchart illustrating an exemplary process of calculating the correction parameter used in correcting the game control (step S109 in FIG. 3). The process can be done while loading a program, before starting the game, or the like.

Referring to FIG. 7, the process is started in step S301. A specific screen is displayed to prompt a player to face forward and be erect in step S302. FIG. 8A is an exemplary screen in which a character faces forward and is erect. It is possible to simultaneously display a message such as “please face forward and be erect for correction”. Measurement of the player while the player faces forward and is erect serves to acquire data of a reference position and is not used to calculate the correction parameter. Therefore, it is possible to omit this measurement. The same omission applies to step S303.

Next, the process moves back to FIG. 7. In step S303 (steps S304 to S307), depth information is acquired, a dividing position is calculated, an area is divided, and statistics information of the depth information is calculated and/or stored for each divided section. Here, acquiring the depth information (step S304) is a process similar to acquiring the depth information in step S102. The calculation of the dividing position (step S305) is similar to the calculation of the dividing position in step S103. Details of the calculation are as illustrated in FIG. 4. The area division in step S306 is similar to the area division (step S104) illustrated in FIG. 3. Calculating and storing the statistics information of the depth information for each divided section in step S307 is a process similar to calculating the statistics information of the depth information for each divided section in step S105 and storing the statistics information in step S106. An example of the statistics information to be acquired is illustrated in FIG. 8B.

As an example of the statistics information, number strings (20,89), (18,90), (11,96), and (10,95) are illustrated at the four corners in FIG. 8B. These number strings indicate area values and average depth values of the four divided sections as in FIGS. 5A, 5B, and 5C. Number strings in FIGS. 8E, 8F, 9D, and 9H also indicate area values and average depth values of four divided sections.

Referring back to FIG. 7, a screen is displayed for prompting the player to face sideways and be erect (to face sideways in an erect posture) in step S308. FIGS. 8C and 8D illustrate exemplary screens where the character faces sideways and is erect. In FIG. 8C, the player is in a regular stance (the left foot is forward). In FIG. 8D, the player is in a goofy stance (the right foot is forward). The screen of FIG. 8C or FIG. 8D may be selected. Instead, a single screen may be configured to be used for both the regular stance and the goofy stance. In addition, a message such as “please face sideways and be erect (face sideways in an erect posture)” can be displayed.

Next, referring back to FIG. 7, the depth information is acquired, the dividing position is calculated, the area is divided, and the statistics information of the depth information is calculated and/or stored for each divided section in step S309. Step S309 is a process similar to step S303 (steps S304 to S307). An example of the statistics information to be acquired is illustrated in FIGS. 8E and 8F. To deal with a case where the player does not face the prompted direction, information of the player's direction may be simultaneously acquired from information output by the bone structure tracking unit 104 or the like and/or stored.

Referring back to FIG. 7 again, a screen is displayed for prompting the player to bow in step S310. FIGS. 9A and 9B are exemplary screens in which the character bows. FIG. 9A illustrates the player in the regular stance. FIG. 9B illustrates the player in the goofy stance. In addition, a message such as “please roll forward (bow) maximally” can be displayed.

Next, referring back to FIG. 7, the depth information is acquired, the dividing position is calculated, the area is divided, and the statistics information of the depth information is calculated and/or stored for each divided section in step S311. Step S311 is a process similar to step S303 (steps S304 to S307). An example of the statistics information to be acquired is illustrated in FIGS. 9C and 9D.

Next, referring back to FIG. 7, a screen is displayed for prompting the player to roll back in step S312. FIGS. 9E and 9F are exemplary screens in which the character rolls back. FIG. 9E illustrates the player in the regular stance. FIG. 9F illustrates the player in the goofy stance. In addition, a message such as “please roll back maximally” can be displayed.

Next, referring back to FIG. 7, the depth information is acquired, the dividing position is calculated, the area is divided, and the statistics information of the depth information is calculated and/or stored for each divided section in step S313. Step S313 is a process similar to step S303 (steps S304 to S307). An example of the statistics information to be acquired is illustrated in FIGS. 9G and 9H. To deal with a case where the player does not face the prompted direction, information of the player's direction may be simultaneously acquired from information output by the bone structure tracking unit 104 or the like and/or stored.

Next, referring back to FIG. 7, a difference between the statistics information acquired while the player faces sideways and is erect and the statistics information acquired while the player bows is calculated, as is a difference between the statistics information acquired while the player faces sideways and is erect and the statistics information acquired while the player rolls back. Thereafter, a correction parameter is calculated in step S314. Whether a measurement is treated as the bowing or the rolling back may depend on the contents prompted at the time of acquiring the statistics information, or may be determined based on those contents together with the acquired and/or stored information of the direction of the player.

For example, in a case where the statistics information illustrated in FIGS. 8E, 9C, and 9G is acquired, the correction parameter is acquired as follows. The correction parameter may be obtained by dividing the increment of the area value in the upper left section (FIGS. 8E and 9G) by the increment of the area value in the upper right section (FIGS. 8E and 9C). To the increment of the area value in the upper right section in FIGS. 8E and 9G, the decrement (the sign inversion of the increment) of the area value in the upper right section may be added. To the increment of the area value in the upper left section in FIGS. 8E and 9C, the decrement (the sign inversion of the increment) of the area value in the upper left section may be added.
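The ratio described above can be sketched as follows. Which section grows for which gesture depends on the facing direction of the player, so the sketch takes the section names as parameters instead of fixing them; the default section names, the fallback value of 1.0, and the function names are all assumptions. It computes the bowing range divided by the rolling-back range, so that multiplying a rolling-back result by the parameter brings it up to the scale of a bowing result.

```python
from typing import Dict, Tuple

Stats = Dict[str, Tuple[float, float]]  # section -> (area value, average depth)


def area_increment(before: Stats, after: Stats, section: str) -> float:
    """Increase of the area value of one section between two measurements."""
    return after[section][0] - before[section][0]


def correction_parameter(erect: Stats, bowed: Stats, rolled_back: Stats,
                         bow_section: str = "upper_left",
                         roll_back_section: str = "upper_right") -> float:
    """Ratio of the maximal bowing range to the maximal rolling-back range."""
    bow_range = area_increment(erect, bowed, bow_section)
    roll_back_range = area_increment(erect, rolled_back, roll_back_section)
    if roll_back_range == 0:
        return 1.0  # assumption: apply no correction when no range was measured
    return bow_range / roll_back_range
```

For instance, if bowing raises one section's area value by 10 and rolling back raises the other section's area value by only 5, the parameter is 2.0.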

Referring back to FIG. 7, thereafter, the process is completed in step S315.

Although the correction parameters for the bowing and the rolling back are described above, the correction is not limited to the above examples. For example, a correction parameter for the reach of a punch or a correction parameter for the height of a kick may be calculated.

According to the embodiments, regardless of the direction of the player, the gesture can be accurately recognized and the correction required by characteristics of areas of body can be appropriately carried out.

As described above, the present invention has been described using preferred embodiments. Although the invention has been described with respect to specific embodiments, various modifications and changes may be made to the embodiments within the broad spirit and scope of the present invention defined in the claims. Said differently, the present invention should not be limited to the details of the embodiments and the appended drawings.

This international application is based on Japanese Priority Patent Application No. 2010-135609 filed on Jun. 14, 2010, the entire contents of Japanese Priority Patent Application No. 2010-135609 are hereby incorporated herein by reference.

EXPLANATION OF REFERENCE SYMBOLS

1: video game apparatus; 101: control unit; 102: camera and depth sensor control unit; 103: player tracking unit; 104: bone structure tracking unit; 105: gesture recognition unit; 106: sound recognition unit; 107: game progression control unit; 108: memory; 109: image information; 110: depth information; 111: camera; 112: depth sensor; 113: microphone; 114: image output unit; 115: sound output unit; 116: communication unit; 117: monitor; and 118: speaker.

Claims

- A video game apparatus comprising: a depth sensor configured to capture an area where a player exists and acquire depth information for each pixel of an image including a silhouette of the player; and a gesture recognition unit configured to divide the image so as to divide the silhouette of the player into a plurality of sections of the image, to calculate statistics information for each of the plurality of sections, and to recognize a gesture of the player based on the statistics information, the statistics information including an area value, which is a ratio of an area occupied by a divided part of the silhouette of the player in the corresponding section relative to an area of the corresponding section, and depth information of the divided part of the silhouette of the player in the corresponding section.

- The video game apparatus according to claim 1, wherein the gesture recognition unit calculates an area center of the silhouette of the player in the image and divides the image into the plurality of sections.

- A non-transitory video game controlling program causing a computer to execute the steps of: capturing, by a depth sensor of a video game apparatus, an area where a player exists and acquiring, by the depth sensor, depth information for each pixel of an image including a silhouette of the player; and dividing, by a gesture recognition unit of the video game apparatus, the image so as to divide the silhouette of the player into a plurality of sections of the image, calculating, by the gesture recognition unit, statistics information for each of the plurality of sections, and recognizing, by the gesture recognition unit, a gesture of the player based on the statistics information, the statistics information including an area value, which is a ratio of an area occupied by a divided part of the silhouette of the player in the corresponding section relative to an area of the corresponding section, and depth information of the divided part of the silhouette of the player in the corresponding section.

- A video game controlling method comprising: capturing, by a depth sensor of a video game apparatus, an area where a player exists and acquiring, by the depth sensor, depth information for each pixel of an image including a silhouette of the player; and dividing, by a gesture recognition unit of the video game apparatus, the image so as to divide the silhouette of the player into a plurality of sections of the image, calculating, by the gesture recognition unit, statistics information for each of the plurality of sections, and recognizing, by the gesture recognition unit, a gesture of the player based on the statistics information, the statistics information including an area value, which is a ratio of an area occupied by a divided part of the silhouette of the player in the corresponding section relative to an area of the corresponding section, and depth information of the divided part of the silhouette of the player in the corresponding section.

- The video game apparatus according to claim 1, wherein the gesture recognition unit prompts the player to take a plurality of postures and calculates a correction parameter for individual postures.
