U.S. Pat. No. 9,327,199

MULTI-TENANCY FOR CLOUD GAMING SERVERS

Assignee: Microsoft Technology Licensing, LLC

Issue Date: March 7, 2014

Illustrative Figure

Abstract

Some implementations may include one or more servers to host multiple game instances of game modules. The one or more servers may determine whether a difference between a total rendering time to render output data for the multiple game instances and a rendering capacity of the one or more processors is less than a predetermined rendering threshold. In response to determining that the difference between the total rendering time and the rendering capacity of the one or more processors is less than the predetermined rendering threshold, the one or more servers may adjust a rendering complexity associated with one or more of the plurality of game instances.

Description

DETAILED DESCRIPTION

As discussed above, with cloud multi-tenancy gaming, enabling datacenter servers to host multiple players while maintaining high quality graphics may be challenging. For example, over-provisioning datacenter servers may result in datacenter resources being under-utilized while under-provisioning datacenter servers may result in video quality that is unacceptable to some players, causing them to stop playing. Servers hosting games may use the systems and techniques described herein to create gaming data (e.g., profiles) that enable the servers to host a large number of gaming sessions while dynamically adjusting rendering settings of one or more gaming sessions based on a total rendering time and a total estimated perceived quality. For example, if the total amount of graphics processing for the gaming sessions is determined to approach the graphics processing capacity of the servers, the rendering settings of one or more of the gaming sessions may be adjusted to reduce rendering complexity (e.g., by reducing or eliminating lighting effects, shadows, environmental details, etc.). This reduction in rendering complexity may be perceived by users as a reduction in quality. The rendering complexity may be reduced for one or more of the gaming sessions in such a way as to minimize the drop in estimated perceived quality to the users of the particular devices, thereby maximizing the aggregate estimated perceived quality of all the users. For example, the complexity level of gaming sessions that take place in relatively dark environments (e.g., nighttime or interior scenes with little lighting) may be reduced with little or no drop in estimated perceived quality. At a later point in time, the complexity level of gaming sessions that were previously reduced in complexity may be dynamically increased if the servers have sufficient capacity. 
For example, if a gaming session whose complexity was previously reduced encounters a situation that would benefit from a higher complexity rendering (e.g., due to complex game play, well-lit environment, multiple moving objects, explosions, or the like) the rendering complexity of the gaming session may be increased if the servers have sufficient graphics processing capacity at that point in time. In some cases, the complexity of a second gaming session may be reduced to enable the complexity of a first gaming session to be increased. To illustrate, initially, the first gaming session may take place in a poorly-lit environment with relatively few moving objects and relatively few effects, while the second gaming session may take place in a well-lit environment with multiple moving objects and various complex effects. If the total rendering time of the gaming sessions approaches the rendering capacity of the servers, the rendering settings of the first gaming session may be adjusted, e.g., to lower the rendering complexity (e.g., thereby providing a lower quality output), because the drop in estimated perceived quality may be acceptable (e.g., imperceptible or barely perceptible) to the user. At a later point in time, the first gaming session may take place in a well-lit environment with multiple moving objects and various complex effects while the second gaming session may take place in a poorly-lit environment with relatively few moving objects and relatively few effects. At the later point in time, the rendering settings of the first gaming session may be adjusted to a higher complexity setting (e.g., resulting in higher quality rendering) while the rendering settings of the second gaming session may be adjusted to a lower complexity setting (e.g., resulting in a lower quality rendering). 
As used herein, the terms “HC” and “LC” refer to the rendering complexity of the output frames for a gaming session, whereas “estimated perceived quality” (also referred to herein as “perceived quality”) is a measure that estimates how the output frames are perceived by a user of the gaming session. Thus, HC refers to a higher complexity rendering (e.g., with a corresponding higher quality rendering) while LC refers to a lower complexity rendering (e.g., with a corresponding lower quality rendering).

Determining when to adjust (e.g., reduce or increase) the complexity of rendering settings and identifying particular gaming sessions whose complexity may be adjusted may be performed by first gathering data for each game and then using the data to selectively adjust complexity levels of gaming sessions. The data may be gathered during off-peak times when the gaming servers are at less than full capacity and/or in a controlled environment (e.g., gaming servers that are not accessible to the general public or are accessible to select individuals). For example, the controlled environment may use simulated device input to perform regression testing to identify situations in each game where complexity can be reduced without incurring a proportional or significantly perceptible drop in the estimated perceived quality.

While the examples provided herein describe multiple game instances providing output frames to multiple computing devices, the system and techniques described herein may be used to provide multiple streams of multimedia content to multiple computing devices. For example, the system and techniques described herein may be used for a server system that transcodes multiple live video streams to provide multiple streams of multimedia content, such as movies, television shows, sporting events, news programs, and other types of multimedia content to multiple computing devices.

Thus, data may be gathered to identify situations in a game where the complexity provided to the user may be reduced without a proportional reduction in the estimated perceived quality. For example, low-light environments and environments with relatively simple game play may be identified as situations where the complexity and/or quality of the output frames may be reduced without significantly impacting the estimated perceived quality. The gathered data may be used when a gaming server is hosting multiple gaming sessions to select particular gaming sessions and to dynamically adjust (e.g., increase or decrease) the complexity and/or quality levels of the selected gaming sessions such that the available graphics processing capacity of the gaming server is not exceeded while providing a high level of aggregate estimated perceived quality to the multiple gaming sessions.

Illustrative Architectures

FIG. 1 is an illustrative architecture 100 to gather gaming data according to some implementations. The architecture 100 includes multiple computing devices, such as a first computing device 102 to an Lth computing device 104 (where L>1), coupled to one or more servers 106 via a network 108. The network 108 may include one or more networks, such as a wireless local area network (e.g., WiFi®, Bluetooth™, or other type of near-field communication (NFC) network), a wireless wide area network (e.g., a code division multiple access (CDMA) network, a global system for mobile (GSM) network, or a long term evolution (LTE) network), a wired network (e.g., Ethernet, data over cable service interface specification (DOCSIS), Fiber Optic System (FiOS), Digital Subscriber Line (DSL) or the like), other type of network, or any combination thereof.

Each of the computing devices 102 to 104 may be a desktop computing device, a laptop computing device, a tablet computing device, a wireless phone, a media playback device, a media device, a set-top-box device, a gaming device, another type of computing device, or any combination thereof. The computing devices 102 to 104 may include one or more processors and one or more computer readable media. The computer readable media may store instructions that are organized into modules and that are executable by the one or more processors to perform various functions. The computing devices 102 to 104 may include a communications interface that enables the computing devices 102 to 104 to communicate with the server(s) 106 using the network 108 by sending input data 110 and receiving output data 112. The output data 112 may include multiple streams of multimedia content (e.g., audio and video content), with each stream sent to a particular device of the computing devices 102 to 104. Additionally or alternatively, the computing devices 102 to 104 may include one or more input devices (e.g., numeric keypad, QWERTY-based keyboard, mouse, trackball, joystick, microphone, motion sensor (e.g., an accelerometer, an inertial measurement unit, a compass, or the like), touch-sensitive surface, or the like) to generate the input data 110. The computing devices 102 to 104 may additionally or alternatively include one or more output devices (e.g., display screen, audio transducer such as a speaker or headphones, tactile generator for generating vibration or resistance, etc.) to output the output data 112.

The server 106 may include one or more processors 114 and one or more computer readable media 116. The processors 114 may include one or more central processing units (CPUs), graphics processing units (GPUs), arithmetic processing units (APUs), other types of processing units, and/or any combination thereof.

The computer readable media 116 may be used to store various types of data as well as instructions that are executable by the processors 114. The instructions may be organized into various modules, such as a first game module 118 to an Mth game module 120 (where M>1). The game modules 118 to 120 may provide the same video game or different video games. For example, the first game module 118 may provide a first game or type of video game (e.g., first person shooter) while the Mth game module 120 may provide a second game or type of video game (e.g., flight simulator). Each of the game modules 118 to 120 may be based on a game engine. The game engine may provide a software framework for developers to create and develop video games. For example, the game engine may include a rendering engine for 2D or 3D graphics, a physics engine, collision detection (and collision response), sound, scripting, animation, artificial intelligence, networking, streaming, memory management, threading, etc. In FIG. 1, the first game module 118 is based on a first game engine 122 and the Mth game module 120 is based on an Mth game engine 124. In some implementations, the game engines 122 to 124 may be the same game engine while in other implementations, at least two of the game engines 122 to 124 may be different from each other. For example, in some implementations, each of the game engines 122 to 124 may be different to enable the server 106 to provide a wide variety of games.

When one of the computing devices 102 to 104 initiates (or resumes) playing a particular game provided by one of the game modules 118 to 120, a gaming instance of the particular game may begin executing. A gaming instance may also be referred to as a gaming session. The server 106 may host N game instances, such as a first game instance 126 to an Nth game instance 128 (where N>1 and N not necessarily equal to M). Each of the game instances 126 to 128 may be created based on one of the game modules 118 to 120. Each of the game instances 126 to 128 may have an associated set of rendering settings (abbreviated as “render. set.” in FIG. 1), such as first rendering settings 130 associated with the first game instance 126 to Nth rendering settings 132 associated with the Nth game instance 128. Each of the rendering settings 130 to 132 may specify various rendering-related settings. For example, the rendering settings 130 may include one or more of a complexity setting associated with one of the game instances 126 to 128, a screen resolution (e.g., 1920×1080 pixels, 1920×1600 pixels, and the like) of one of the computing devices 102 to 104, an available (e.g., or a maximum, a minimum, or an average) bandwidth for receiving data by one of the computing devices 102 to 104, another rendering-related setting, or any combination thereof. For illustration purposes, two complexity levels, e.g., high complexity (HC) and low complexity (LC), are used in many examples herein, where HC is a higher complexity rendering setting as compared to LC. However, it should be understood that additional complexity levels may be used in addition to HC and LC, such as a medium complexity (MC) that is in-between HC and LC. For example, X number of complexity settings (where X>1) may be provided and adjusted. Other rendering settings may include an anti-aliasing level (e.g., none, 2 times, 4 times, etc.), lighting effects, tessellation level, texture quality level, fog depth, motion blur, and the like. The number of complexity levels may include a combination chosen from the various rendering settings described herein.

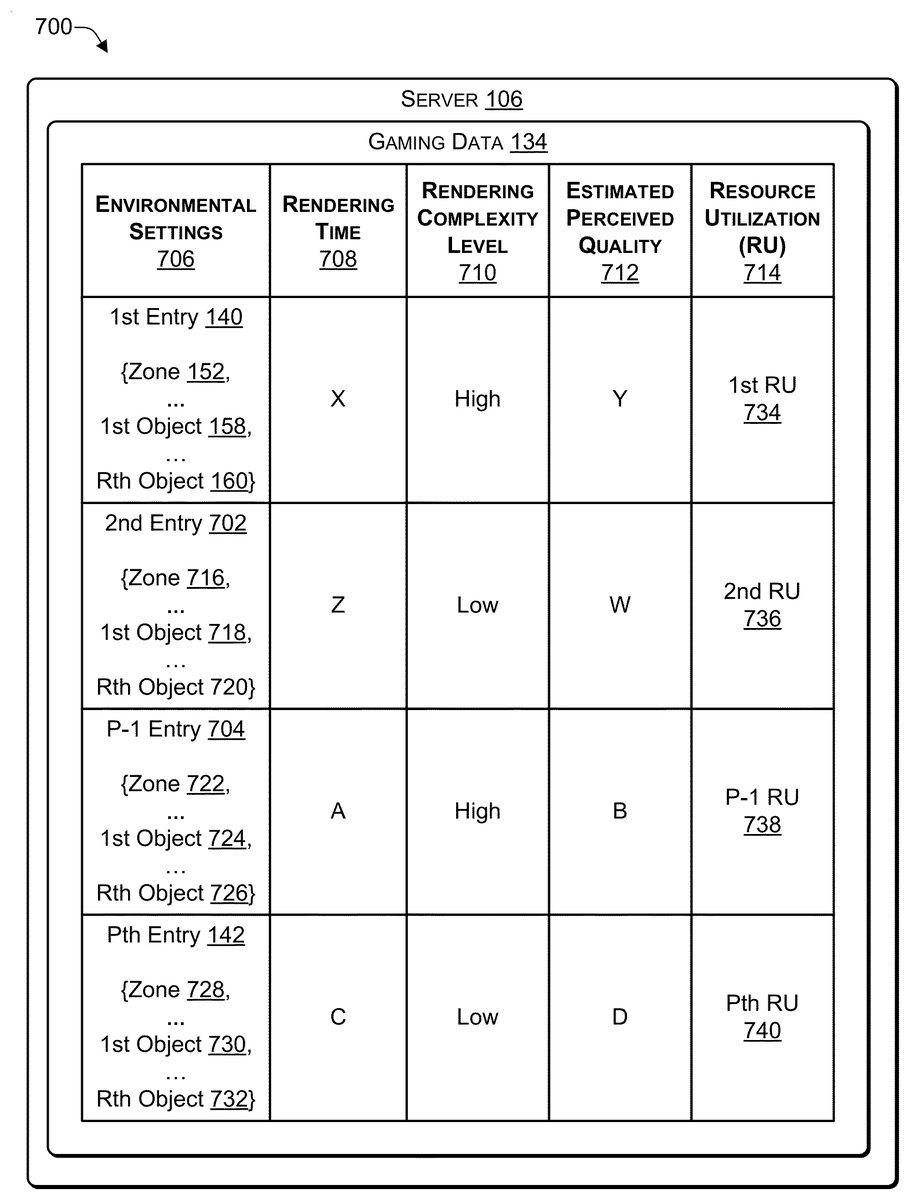

The server 106 may gather gaming data 134 to enable the server 106 to identify game instances whose rendering settings may be dynamically adjusted. The gaming data 134 may be stored in a data structure, such as a table or other data structure. For example, if the server 106 determines that generating the output data 112 for the game instances 126 to 128, at the corresponding rendering settings 130 to 132, would approach (or exceed) the computing capacity or threshold of the server 106, the server 106 may identify a subset of the game instances 126 to 128 and reduce a rendering complexity setting from the corresponding rendering settings 130 to 132 of the subset without exceeding the computing capacity of the server 106. One or more of the rendering settings 130 to 132 may be adjusted in a way that the total estimated perceived quality is maximized, e.g., the drop in total estimated perceived quality of the game instances 126 to 128 is less than proportional to the drop in total rendering time to render the game instances 126 to 128. For example, the drop in estimated perceived quality may be barely perceptible or imperceptible to the users.

While the systems, techniques, and examples herein are described with reference to the game modules 118 to 120, the systems, techniques, and examples described herein may also be applied to the game engines 122 to 124 instead of (or in addition to) the game modules 118 to 120. For example, the gaming data 134 may be gathered by shadowing or performing regression on game engines rather than game modules. The gaming data 134 may be used to adjust the rendering settings associated with game instances of games based on the game engines for which the gaming data 134 was gathered.

In addition, the systems, techniques, and examples described herein may be used to dynamically adjust the complexity level (or output quality level) of multiple multimedia streams being sent to the computing devices 102 to 104. Each of the multiple multimedia streams may be generated by each of multiple multimedia instances, similar to the game instances 126 to 128. If the total time to render the multiple multimedia streams approaches the processing capacity of the server 106 (e.g., total processing time for the multiple streams minus the processing capacity of the server 106 is less than a threshold), then the server 106 may adjust the complexity level of one or more of the instances that are generating the multiple multimedia streams such that the total processing time for the multiple multimedia streams does not exceed the processing capacity of the server 106 while maximizing the aggregate perceived quality of the multiple multimedia streams.
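The capacity check described above can be illustrated with a minimal Python sketch. The function name, the millisecond units, and the sign convention (headroom taken as capacity minus total time) are assumptions made for illustration and are not specified in the description.

```python
def near_capacity(render_times_ms, capacity_ms, threshold_ms):
    """Return True when the remaining headroom falls below the threshold.

    Assumption: headroom is measured as capacity minus total rendering time,
    all in milliseconds per frame interval.
    """
    total_ms = sum(render_times_ms)
    headroom_ms = capacity_ms - total_ms
    return headroom_ms < threshold_ms


# Three instances that together need 15 ms of a 16 ms budget.
print(near_capacity([5.0, 6.0, 4.0], capacity_ms=16.0, threshold_ms=2.0))
```

A True result would be the trigger for selecting instances whose complexity may be reduced.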

Collecting Gaming Data Using Shadowing

One way in which the server 106 may gather data is to create one or more shadow instances of the game instances 126 to 128 when the server 106 is relatively lightly loaded (e.g., N is relatively small). For example, the server 106 may create shadow instance 136 that is a synchronized copy of one of the game instances 126 to 128. The game instances 126 to 128 that are being shadowed may be associated with one of the computing devices 102 to 104. For example, the shadow instance 136 may mirror the game play occurring in the game instance that is being shadowed. The corresponding rendering settings, e.g., shadow rendering settings 138, of the shadow instance 136, may be different as compared to the rendering settings of the original game instance. For example, the shadow instance 136 may copy the game play of the Nth game instance 128 while the shadow rendering settings 138 may differ from the Nth rendering settings 132. To illustrate, the shadow rendering settings 138 may be at a lower or a higher rendering complexity level as compared to the Nth rendering settings 132. For example, the shadow rendering settings 138 may include a high complexity (HC) rendering setting and the Nth rendering settings 132 may include a low complexity (LC) rendering setting. As another example, the shadow rendering settings 138 may include an LC rendering setting and the Nth rendering settings 132 may include an HC rendering setting.

The server 106 may gather and store multiple entries in the gaming data 134, such as a first entry 140 to a Pth entry 142 (where P>1 and where L, M, N, and P may be different from each other). The server 106 may periodically (e.g., at a predetermined interval) compare an output frame F of the Nth game instance 128 with an output frame SF of the shadow instance 136. For example, the server 106 may compare each (or every other) output frame F of the Nth game instance 128 with each (or every other) output frame SF of the shadow instance 136. The server 106 may measure an amount of rendering (e.g., processing) time T to produce the output frame F and measure an amount of rendering time ST to produce the output frame SF. The server 106 may measure a difference in the estimated perceived quality (PQ) between output frame F and output frame SF. For example, the server 106 may determine a perceived quality difference (PQD) 144 between output frame F and output frame SF as follows: PQD = PQ(F) − PQ(SF). The estimated perceived quality (e.g., PQ(F) and PQ(SF)) may be measured using a structural similarity index measure (SSIM), perceptual evaluation of video quality (PEVQ), peak signal-to-noise ratio (PSNR), or another type of measurement of perceived quality.
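As a rough illustration of PQD = PQ(F) − PQ(SF), the following Python sketch uses PSNR as the quality measure. PSNR is a full-reference metric, so the sketch assumes a separate reference rendering of the same scene against which both frames are scored; that reference, the flat lists of 8-bit pixel values, and the function names are assumptions for illustration only.

```python
import math


def psnr(reference, test, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length pixel lists."""
    if not reference or len(reference) != len(test):
        raise ValueError("frames must be non-empty and the same size")
    mse = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)


def perceived_quality_difference(frame, shadow_frame, reference):
    """PQD = PQ(F) - PQ(SF), with PQ taken as PSNR against the assumed reference."""
    return psnr(reference, frame) - psnr(reference, shadow_frame)


reference = [100, 100, 100, 100]   # hypothetical reference rendering
frame = [100, 100, 100, 110]       # output frame F
shadow_frame = [90, 100, 100, 110] # shadow output frame SF
pqd = perceived_quality_difference(frame, shadow_frame, reference)
print(round(pqd, 2))
```

If both frames match the reference exactly, both PSNR values are infinite and the difference is undefined, so a practical implementation would need to handle that case separately.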

The server 106 may store the estimated perceived quality difference 144, the shadow rendering settings 138, and a rendering time 146 associated with rendering an output frame of the shadow instance 136 in an entry (e.g., the first entry 140) of the gaming data 134. The server 106 may also record one or more environmental settings 150. The environmental settings 150 may be implemented using a data structure, such as a vector. The environmental settings 150 may include settings associated with the environment being rendered in the Nth game instance 128 and the shadow instance 136. The environmental settings 150 may be the same for both the Nth game instance 128 and the shadow instance 136 because the shadow instance 136 is shadowing the game play of the Nth game instance 128. The environmental settings 150 may be specific to a particular game module (e.g., gaming application). The environmental settings 150 may include a zone 152, weather/season 154, game time of day 156, one or more visible objects, such as a first object 158 to an Rth object 160, and other settings 162. For example, the zone 152 may identify a particular region or area, such as the interior of a building or an outdoor area, where the game play is taking place. For an outdoor area, the weather/season 154 may indicate an amount of sunshine, clouds, rain, snow, lightning, or other weather or season related rendering information. The game time of day 156 may indicate a time of day associated with the game play, e.g., whether the game play is taking place during the day, at night, at sunrise, at sunset, etc. The objects 158 to 160 may identify objects, such as monsters or aliens, spaceships, etc. that are visible in the output frame. For example, the first object 158 may identify a number of a first type of object that is visible or potentially visible in the output frames while the Rth object 160 may identify a number of an Rth type of object that is visible or potentially visible in the output frames.
Potentially visible means that the object may currently be obscured by another object in a frame or the object may be nearby, e.g., just outside a field of view of a current frame. For example, in a first person shooter, an object may be obscured in a current frame by another object, such as a monster. If the monster is shot and killed and falls down (or is vaporized) or if the monster makes an evasive maneuver, at least a portion of the object that was previously obscured may be displayed in a subsequent frame. As another example, an object just outside the view of a user in a current frame may become visible in a subsequent frame if the object is moving or if the user's perspective changes (e.g., the user's character in the game moves, resulting in a different field of view in the subsequent frame). Nearby objects may be objects just outside a field of view in a current frame, or may be objects in the same game zone (regardless of line-of-sight visibility). Zones may be used to segment a game's map into smaller regions. Games and game engines may maintain a list or table of potentially visible objects in each zone. The other settings 162 may include other environmental factors of the output frame that may be taken into consideration when determining whether to adjust the rendering settings of a game instance.
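One possible in-memory layout for an entry of the gaming data and its environmental settings is sketched below in Python. The class names, field names, field types, and sample values are assumptions chosen for illustration; the description only states that a table, vector, or other data structure may be used.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class EnvironmentalSettings:
    zone: str                       # region or area where game play takes place
    weather_season: str             # e.g., "rain", "snow"
    game_time_of_day: str           # e.g., "day", "night", "sunrise"
    object_counts: Tuple[int, ...]  # visible or potentially visible count per object type
    other: Tuple[str, ...] = ()     # any other environmental factors


@dataclass(frozen=True)
class GamingDataEntry:
    perceived_quality_difference: float
    shadow_rendering_settings: str  # e.g., "LC"
    rendering_time_ms: float        # time to render the shadow output frame
    environment: EnvironmentalSettings


# Hypothetical values for a poorly lit zone with one object of the first type.
entry = GamingDataEntry(
    perceived_quality_difference=0.02,
    shadow_rendering_settings="LC",
    rendering_time_ms=4.0,
    environment=EnvironmentalSettings("cave", "n/a", "night", (1, 0)),
)
print(entry.environment.zone, entry.perceived_quality_difference)
```

Frozen dataclasses are used here so that the environmental settings can serve as a lookup key when the server later searches the gathered data.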

The output data 112 may include output frames 164. For example, the output frames 164 may include a first output frame for the first computing device 102 and an Lth output frame for the Lth computing device 104.

The gaming data 134 may be used to identify environments where the estimated perceived quality difference 144 is relatively small and to adjust the rendering settings of the corresponding game instances accordingly. For example, when the server 106 determines that the game instances 126 to 128 are approaching the rendering capacity of the server 106, the server 106 may identify particular game instances from the game instances 126 to 128 whose rendering complexity may be reduced based on the gaming data 134. To illustrate, the game play of the Nth game instance 128 may take place in a poorly lit environment (e.g., overcast sky, indoors with little light, or at night) while the game play of the first game instance 126 may take place in a brightly lit environment with multiple monsters approaching. The environmental settings 150 of the Nth game instance 128 may indicate that the estimated perceived quality difference 144 between HC and LC may be relatively small compared to the environmental settings 150 of the first game instance 126. In this example, when the server 106 determines that the game instances 126 to 128 are approaching the rendering capacity of the server 106, the server 106 may adjust the Nth rendering settings 132 from HC to LC because the perceived quality difference for adjusting the Nth game instance 128 from HC to LC is less than the perceived quality difference for adjusting the first game instance 126 from HC to LC. At a later point in time, the game play of the Nth game instance 128 may take place in a brightly lit environment while the game play of the first game instance 126 may take place in a poorly lit environment. In this situation, the server 106 may adjust the Nth rendering settings 132 from LC to HC and adjust the first rendering settings 130 from HC to LC.

Collecting Gaming Data Using Regression

A disadvantage with shadowing one or more of the game instances 126 to 128 is that all possible environmental settings for the game modules 118 to 120 that are instantiated (e.g., as the game instances 126 to 128) may not be encountered because the shadow instance 136 is shadowing a game instance associated with a user (e.g., using one of the computing devices 102 to 104). One approach to systematically collecting the gaming data 134 is to use regression to build entries in the gaming data 134 for each element of the environmental settings 150.

The regression may be performed by executing two or more game instances of each of the game modules 118 to 120, with each game instance having a different rendering setting. The server 106 may hold one setting of the environmental settings 150 invariant and add entries to the gaming data 134 while varying the other settings of the environmental settings 150. For example, for each zone 152 that is encountered in a game provided by one of the game modules 118 to 120, the server 106 may store entries for various types of weather/season 154, various game time of day 156, various numbers of the objects 158 to 160, and so on. Each entry that is added may include the perceived quality difference 144, the shadow rendering settings 138 (e.g., rendering settings associated with the LC rendering settings), and the rendering time 146. For example, for an indoor scene in a first building, the server 106 may add entries to the gaming data 134 based on a number of the objects 158 to 160.
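The sweep over environmental settings for a fixed zone might look like the following Python sketch. The `measure` callback, which would render the scene at two rendering settings and return the perceived quality difference and the lower-complexity rendering time, is hypothetical, as are the dictionary keys and the stand-in measurement used in the example.

```python
from itertools import product


def sweep_zone(zone, weathers, times_of_day, object_counts, measure):
    """Hold the zone fixed and record one entry per combination of the other settings."""
    entries = []
    for weather, time_of_day, count in product(weathers, times_of_day, object_counts):
        env = {
            "zone": zone,
            "weather_season": weather,
            "game_time_of_day": time_of_day,
            "objects": count,
        }
        pqd, rendering_time_ms = measure(env)  # hypothetical measurement hook
        entries.append({"env": env, "pqd": pqd, "rendering_time_ms": rendering_time_ms})
    return entries


def fake_measure(env):
    # Stand-in for rendering both instances; values are made up for the example.
    return 0.01 * env["objects"], 4.0


entries = sweep_zone("first building", ["clear", "rain"], ["day", "night"], [1, 2, 3], fake_measure)
print(len(entries))
```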

The regression may be performed when the server 106 is lightly loaded (e.g., N is relatively small). In some cases, after the gaming data 134 has been gathered (e.g., using shadow instances or regression), the gaming data 134 may be analyzed and entries that are identified as redundant may be removed. For example, for a particular environmental setting, varying the other environmental settings may have little or no impact on the perceived quality difference 144. To illustrate, in a particular zone that has a poorly-lit environment (e.g., a cave), varying the weather or season, the game time of day, or the number of objects, may not result in a change to the perceived quality difference. The first entry 140 may specify that when zone 152 identifies the particular zone, the other environmental settings may be ignored because the perceived quality difference 144 is the same regardless of the other environmental settings. As another illustration, when the zone 152 specifies an outdoor zone and the game time of day specifies night time, the perceived quality difference 144 does not change regardless of how many of the objects 158 to 160 are in the output frame. Thus, the gaming data 134 may be thinned out by removing entries where changes to the environmental settings 150 do not result in a change to the perceived quality difference 144.
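The thinning step can be sketched in Python for the zone-only case. The tuple layout, the use of `None` as a "any value" wildcard, and the tolerance parameter are assumptions for illustration; the description does not prescribe how redundant entries are detected or represented.

```python
from collections import defaultdict


def thin_by_zone(entries, tol=1e-6):
    """Collapse a zone to one wildcard entry when its PQD never varies.

    entries: list of (zone, weather, time_of_day, n_objects, pqd) tuples.
    """
    by_zone = defaultdict(list)
    for entry in entries:
        by_zone[entry[0]].append(entry)

    thinned = []
    for zone, group in by_zone.items():
        pqds = [entry[4] for entry in group]
        if max(pqds) - min(pqds) <= tol:
            # Other settings do not matter in this zone; keep a single entry.
            thinned.append((zone, None, None, None, pqds[0]))
        else:
            thinned.extend(group)
    return thinned


entries = [
    ("cave", "rain", "day", 1, 0.01),
    ("cave", "clear", "night", 5, 0.01),
    ("field", "clear", "day", 1, 0.30),
    ("field", "clear", "night", 1, 0.05),
]
thinned = thin_by_zone(entries)
print(thinned)
```

The same grouping idea could be applied to other combinations, such as zone plus game time of day, to cover the outdoor night-time illustration.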

Thus, the server 106 may gather gaming data 134 using techniques such as shadow game instances or regression. The gaming data 134 may enable the server 106 to identify which of the rendering settings 130 to 132 (corresponding to the game instances 126 to 128) may be adjusted (e.g., from HC to LC or from LC to HC). The server 106 may dynamically adjust the rendering settings 130 to 132 such that the total amount of processing time (e.g., rendering time) to render the output frames for the game instances 126 to 128 does not exceed the processing capacity of the server 106. For example, if the server 106 determines that the total amount of processing time to render the output frames for the game instances 126 to 128 would exceed the processing capacity of the server 106, the server 106 may adjust one or more of the rendering settings 130 to 132 (e.g., from HC to LC) based on which game instances 126 to 128 would incur the smallest drop in estimated perceived quality. Thus, one or more of the rendering settings 130 to 132 may be adjusted (e.g., from HC to LC) in a way that maximizes the aggregate estimated perceived quality of the game instances 126 to 128 without exceeding the processing capacity of the server 106.
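One simple way to pick which instances to move from HC to LC is a greedy pass, sketched below in Python. The greedy ordering by quality lost per millisecond saved is an assumption chosen for illustration and is not stated in the description, which only requires that the instances with the smallest drop in estimated perceived quality be preferred. The class, field names, and sample numbers are likewise hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    name: str
    hc_time_ms: float  # rendering time at high complexity
    lc_time_ms: float  # rendering time at low complexity
    pqd: float         # estimated quality lost when moving from HC to LC
    level: str = "HC"


def current_time(inst):
    return inst.hc_time_ms if inst.level == "HC" else inst.lc_time_ms


def downgrade_until_fits(instances, capacity_ms):
    """Switch HC instances to LC, cheapest quality loss first, until the total fits."""
    total = sum(current_time(i) for i in instances)
    candidates = sorted(
        (i for i in instances if i.level == "HC" and i.hc_time_ms > i.lc_time_ms),
        key=lambda i: i.pqd / (i.hc_time_ms - i.lc_time_ms),
    )
    changed = []
    for inst in candidates:
        if total <= capacity_ms:
            break
        total -= inst.hc_time_ms - inst.lc_time_ms
        inst.level = "LC"
        changed.append(inst.name)
    return changed, total


instances = [
    Instance("bright", 8.0, 4.0, 0.20),
    Instance("dark", 8.0, 4.0, 0.02),
    Instance("mid", 6.0, 3.0, 0.09),
]
changed, total = downgrade_until_fits(instances, capacity_ms=18.0)
print(changed, total)
```

In this example only the instance in the dark scene is downgraded, because doing so already brings the total within capacity.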

When the server 106 determines that one or more of the game instances 126 to 128 can be re-adjusted (e.g., from a lower complexity to a higher complexity) without exceeding the processing capacity, the server 106 may selectively re-adjust one or more of the game instances 126 to 128 based on which game instances 126 to 128 would incur the greatest increase in estimated perceived quality. Thus, one or more of the rendering settings 130 to 132 may be adjusted (e.g., from a lower complexity to a higher complexity) in a way that maximizes the aggregate estimated perceived quality of the game instances 126 to 128 without exceeding the processing capacity of the server 106.
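The re-adjustment toward higher complexity can be sketched the same way in Python. The dictionary keys (`extra_ms` for the added rendering time and `gain` for the estimated quality increase), the greedy order, and the numbers are assumptions for illustration only.

```python
def upgrade_while_fits(instances, total_ms, capacity_ms):
    """Raise complexity for the largest estimated quality gains that still fit.

    instances: dicts with 'name', 'extra_ms' (HC cost minus LC cost), and 'gain'.
    """
    upgraded = []
    for inst in sorted(instances, key=lambda i: i["gain"], reverse=True):
        if total_ms + inst["extra_ms"] <= capacity_ms:
            total_ms += inst["extra_ms"]
            upgraded.append(inst["name"])
    return upgraded, total_ms


lowered = [
    {"name": "a", "extra_ms": 4.0, "gain": 0.20},
    {"name": "b", "extra_ms": 3.0, "gain": 0.05},
    {"name": "c", "extra_ms": 2.0, "gain": 0.10},
]
upgraded, total_ms = upgrade_while_fits(lowered, total_ms=10.0, capacity_ms=16.0)
print(upgraded, total_ms)
```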

FIG. 2 is an illustrative architecture 200 to determine differences in perceived quality according to some implementations. The architecture 200 illustrates how the server 106 may determine the difference in perceived quality between two or more game instances. While FIG. 2 illustrates determining the difference in perceived quality between two game instances, e.g., the Nth game instance 128 and the shadow instance 136, the techniques described herein may be used to determine the difference in perceived quality between more than two game instances.

The Nth game instance 128 may have a corresponding Nth set of rendering settings 132. The shadow instance 136 may have a corresponding set of shadow rendering settings 138. The Nth set of rendering settings 132 are different from the shadow rendering settings 138. For example, the Nth set of rendering settings 132 may have a higher complexity rendering setting as compared to the shadow rendering settings 138. To illustrate, the Nth set of rendering settings 132 may be set to HC and the shadow rendering settings 138 may be set to LC.

The Nth game instance 128 may output (e.g., as the output data 112 of FIG. 1) multiple output frames, such as a first frame 202 to a Qth frame 204 (where Q>1). The shadow instance 136 may output multiple output frames, such as a first shadow frame 206 to a Qth shadow frame 208. The server 106 may determine an amount of rendering time (e.g., processing time) to generate each output frame. For example, the server 106 may determine a first rendering time 210 to generate the first frame 202, a Qth rendering time 212 to generate the Qth frame 204, a first shadow rendering time 214 to generate the first shadow frame 206, and a Qth shadow rendering time 216 to generate the Qth shadow frame 208.

The game play of the shadow instance 136 may shadow the game play of the Nth game instance 128. Thus, each frame generated by the Nth game instance 128 may depict the same scene as the corresponding shadow frame, but based on a different rendering setting, e.g., the output frames of the Nth game instance 128 may be based on the Nth rendering settings 132 and the output frames of the shadow instance 136 may be based on the shadow rendering settings 138. For example, the output frames of the Nth game instance 128 may depict a scene in HC while the output frames of the shadow instance 136 may depict the same scene in LC.

The server 106 may determine a difference in perceived quality (PQ) between one or more of the frames 202 to 204 (of the Nth game instance 128) and the corresponding frames 206 to 208 (of the shadow instance 136). For example, the server 106 may determine a difference in perceived quality 218 between the first frame 202 and the first shadow frame 206 and determine a difference in perceived quality 220 between the Qth frame 204 and the Qth shadow frame 208. Depending on the implementation, the server 106 may determine a difference in perceived quality for every pair of corresponding frames, or for every Zth pair (where Z>1). For example, in some implementations, the server 106 may determine a difference in perceived quality for every other frame (Z=2), every fifteenth frame (Z=15), every thirtieth frame (Z=30), etc.
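As a rough illustration of sampling every Zth pair of frames, the sketch below compares corresponding frames from a game instance and its shadow instance. The function names and the `mean_abs_difference` metric are hypothetical stand-ins: the description does not specify how the difference in perceived quality is computed, so any metric could be passed in instead.

```python
from typing import Callable, List, Sequence, Tuple

Frame = Sequence[float]  # assumption: a frame flattened to pixel intensities


def mean_abs_difference(frame: Frame, shadow_frame: Frame) -> float:
    """Placeholder metric (an assumption, not the PQ measure of the description)."""
    return sum(abs(a - b) for a, b in zip(frame, shadow_frame)) / len(frame)


def sampled_pq_differences(
    frames: Sequence[Frame],
    shadow_frames: Sequence[Frame],
    z: int,
    metric: Callable[[Frame, Frame], float] = mean_abs_difference,
) -> List[Tuple[int, float]]:
    """Return (frame index, PQ difference) for every z-th pair of frames."""
    pairs = zip(frames, shadow_frames)
    return [(i, metric(f, s)) for i, (f, s) in enumerate(pairs) if i % z == 0]


# Hypothetical two-pixel frames; only indices 0 and 2 are compared when z=2.
frames = [[1.0, 1.0], [0.5, 0.5], [0.0, 1.0], [1.0, 0.0]]
shadow = [[0.5, 0.5], [0.5, 0.5], [0.0, 0.0], [1.0, 0.0]]
samples = sampled_pq_differences(frames, shadow, z=2)
```

A larger Z lowers the cost of the comparison at the price of coarser gaming data.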

The server 106 may determine environmental settings associated with each frame. Because the shadow instance 136 is shadowing the Nth game instance 128, the environmental settings are the same for the corresponding frames. For example, first environmental settings 222 may correspond to the first frame 202 and the first shadow frame 206 and Qth environmental settings 224 may correspond to the Qth frame 204 and the Qth shadow frame 208. The server 106 may store (e.g., in the gaming data 134 of FIG. 1) the rendering times 210 to 212, the shadow rendering times 214 to 216, the difference in perceived quality 218 to 220, and the environmental settings 222 to 224. The environmental settings 222 to 224 may identify the environments corresponding to the frames 202 to 204 (and the frames 206 to 208). For example, the first environmental settings 222 may indicate that the first frame 202 and the first shadow frame 206 depict a well-lit interior of a building with three objects (e.g., monsters) approaching. As another example, the Qth environmental settings 224 may indicate that the Qth frame 204 and the Qth shadow frame 208 depict a forest with many trees at night with one object approaching.

Thus, the server 106 may repeatedly compare output frames from two (or more) game instances of the same game, with each game instance having different rendering settings, to determine the difference in perceived quality between the frames. The server 106 may determine the amount of rendering time (e.g., processing time) to render the output frames. The server 106 may determine the environmental settings for the output frames, e.g., the type of zone or region in which the game play is taking place, the number of moving objects, the amount of lighting, etc. The server 106 may use the rendering time and the difference in perceived quality to perform a cost-benefit analysis (e.g., where rendering time is the cost and the difference in perceived quality is the benefit) to adjust the rendering settings of select game instances to maximize the benefits (estimated perceived quality) for the game instances while adjusting the costs such that the costs do not exceed the available processing capacity.

Dynamically Adjusting Rendering Settings

FIG. 3 is an illustrative architecture 300 to adjust rendering settings based on gaming data according to some implementations. The architecture 300 may be used when the server 106 begins to approach 100% utilization of a capacity 302 (e.g., a rendering capacity or a processing capacity) of the server 106. For example, the techniques described herein may be used when utilization of the capacity 302 of the server 106 is greater than a threshold amount, such as 80% of the capacity 302, 90% of the capacity 302, or the like. The threshold amount may be set by a system administrator. As another example, the techniques described herein may be used when a difference between the processing capacity 302 and the total amount of rendering time to render the game instances 126 to 128 is less than a predetermined rendering threshold.
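The two trigger conditions described above can be sketched as simple predicates. This is a minimal illustration: the function names are hypothetical, and the capacity and rendering times are assumed to be expressed in the same unit (for example, milliseconds of rendering per frame interval).

```python
from typing import Iterable


def near_capacity(rendering_times: Iterable[float], capacity: float,
                  rendering_threshold: float) -> bool:
    """True when (capacity - total rendering time) < rendering threshold."""
    headroom = capacity - sum(rendering_times)
    return headroom < rendering_threshold


def over_utilization(rendering_times: Iterable[float], capacity: float,
                     fraction: float = 0.8) -> bool:
    """True when total rendering time exceeds a fraction (e.g., 80%) of capacity."""
    return sum(rendering_times) > fraction * capacity
```

Either predicate could be evaluated periodically to decide whether the adjustment logic needs to run at all.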

The server 106 may host the game instances 126 to 128 with the corresponding rendering settings 130 to 132. Each of the rendering settings 130 to 132 may identify various settings associated with the rendered output frames, such as a screen resolution associated with the computing device that is displaying the output frames, a communications reception bandwidth (e.g., the rate at which data can be received) associated with the computing device that is displaying the output frames, a complexity setting of the rendered output frames, other rendering-related settings, or any combination thereof. In FIG. 3, the first rendering settings 130 include a first (rendering) complexity setting 304 and the Nth rendering settings 132 include an Nth (rendering) complexity setting 306. For example, if the first game instance 126 is associated with the first computing device 102, the first rendering settings 130 may indicate that the first computing device 102 has a screen resolution of 1280×720 pixels, a communications reception bandwidth of 10 megabits per second (Mbps), and a high complexity rendering setting. The communications bandwidth may include a minimum bandwidth, a maximum bandwidth, an average bandwidth, or any combination thereof.

Each of the game instances 126 to 128 may have the corresponding environmental settings 222 to 224. The environmental settings 222 to 224 may change as players progress through the game play of the game instances 126 to 128. The environmental settings 222 to 224 may identify a zone or region where the game play is taking place, whether game play is taking place at night or during the day, weather conditions associated with the game play, seasonal conditions (e.g., spring, summer, fall, winter, monsoon, etc.) associated with the game play, a number of objects (e.g., moving objects, such as monsters) visible in the game play, and other environmental details associated with the game play of each of the game instances 126 to 128.

The server 106 may determine an estimated perceived quality 308 to 310 for each of the game instances 126 to 128 based on the corresponding rendering settings 130 to 132 and the environmental settings 222 to 224. Each of the estimated perceived quality 308 to 310 may be determined such that the resultant estimated perceived quality is a decimal value between −1 and 1 or between 0 and 1. A lower value (e.g., 0.4) may indicate a lower estimated perceived quality as compared to a higher value (e.g., 0.8).

The server 106 may determine a first rendering time 312 to render an output frame of the first game instance 126 and an Nth rendering time 314 to render an output frame of the Nth game instance 128. The server 106 may determine a total rendering time to render the output data 112 by summing the N rendering times 312 to 314. Thus, the server 106 may repeatedly adjust the rendering settings of the game instances 126 to 128 such that the total of the rendering times 312 to 314 is less than the capacity 302 while maximizing a sum of the estimated perceived quality of the game instances. For example, in equation form, the problem the server 106 repeatedly solves may be expressed as:

(SUM(rendering times 312 to 314) < capacity 302) while maximizing SUM(estimated perceived quality 308 to 310)

[…] > Y. In response to this determination, the server 106 may adjust the first rendering settings 130, e.g., by lowering the complexity setting 304 from a higher complexity setting to a lower complexity setting. While this example illustrates the server 106 adjusting one set of rendering settings, in a typical implementation, the server 106 may adjust one or more of the rendering settings 130 to 132.

The server 106 may determine which of the rendering settings 130 to 132 to adjust based on the gaming data 134. The gaming data 134 may include a first entry 140 to the Pth entry 142. The first entry 140 may include the first rendering settings 138, the first perceived quality (PQ) difference 144, the first rendering time 146, and the first environmental settings 150. The Pth entry 142 may include a Pth PQ difference 316, Pth rendering settings 318, Pth environmental settings 320, and a Pth rendering time 322. The server 106 may compare the environmental settings 222 to 224 of the game instances 126 to 128 with the environmental settings 150, 320. For example, when one of the environmental settings 222 to 224 of the game instances 126 to 128 matches one of the environmental settings 150, 320 of the entries 140 to 142, the server 106 may identify the perceived quality difference (e.g., one of the PQ differences 144, 316) and the rendering time (e.g., one of the rendering times 146, 322) associated with lower complexity rendering settings (e.g., the rendering settings 138, 318). The server 106 may select one or more of the rendering settings 138, 318 that results in the total rendering time for the game instances 126 to 128 being less than the capacity 302 and results in the least drop in the sum of the estimated perceived quality 308 to 310.

In some implementations, the server may maximize the sum of the estimated perceived quality308to310by minimizing a drop in the sum of the estimated perceived quality from pre-adjustment to post-adjustment. For example, the server106may determine the sum of the estimated perceived quality308to310before adjusting the rendering settings130to132(e.g., pre-adjustment sum). The server106may identify one or more of the rendering settings130to132that can be adjusted such that the difference between the post-adjustment sum of the estimated perceived quality308to310and the pre-adjustment sum of the estimated perceived quality308to310is less than a predetermined estimated perceived quality threshold. For example, the server106may identify one or more of the rendering settings130to132that can be adjusted such that the post-adjustment sum of the estimated perceived quality308to310is less than a five percent drop from the pre-adjustment sum of the estimated perceived quality308to310.
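The five percent example can be written as a small check on the pre-adjustment and post-adjustment sums. This is only a sketch: the function name is hypothetical, and it assumes the pre-adjustment sum is positive (e.g., estimated perceived quality values between 0 and 1), since a relative drop is not meaningful otherwise.

```python
def within_quality_threshold(pre_sum: float, post_sum: float,
                             max_drop_fraction: float = 0.05) -> bool:
    """True when the relative drop in summed estimated PQ is below the threshold."""
    if pre_sum <= 0:
        # Assumption: quality values lie in (0, 1], so the sum is positive.
        raise ValueError("pre-adjustment sum must be positive")
    return (pre_sum - post_sum) / pre_sum < max_drop_fraction
```

An absolute threshold (post-adjustment sum within a fixed amount of the pre-adjustment sum) would work the same way with the division removed.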

The server106may use various techniques to avoid repeatedly adjusting the same rendering settings (e.g., one or more of the rendering settings130to132) in a short period of time. For example, users engaged in a particular game instance may not enjoy the experience of the corresponding complexity setting being repeatedly adjusted, e.g., adjusted from HC to LC and then back to HC (or adjusted from LC to HC and then back to LC) within a short period of time. To avoid such situations, when the server106adjusts one or more of the rendering settings130to132, the server106may add a timestamp to the adjusted rendering settings to identify when the rendering settings were last adjusted. When the difference between the current time and the timestamp of the adjusted rendering settings is less than a predetermined time interval (e.g., one minute, five minutes, ten minutes, twenty minutes, or the like), the server106may not adjust one or more of the rendering settings130to132.
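A minimal sketch of the timestamp check follows. The `RenderingSettings` class and `try_adjust` function are hypothetical names, and the current time is passed in explicitly (in seconds) so the behavior is easy to reason about; a real server would presumably read a clock.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RenderingSettings:
    complexity: str                        # e.g., "HC" or "LC"
    last_adjusted: Optional[float] = None  # seconds; None if never adjusted


def try_adjust(settings: RenderingSettings, new_complexity: str,
               now: float, min_interval: float = 300.0) -> bool:
    """Apply the adjustment unless the settings were adjusted too recently."""
    if (settings.last_adjusted is not None
            and now - settings.last_adjusted < min_interval):
        return False  # still inside the predetermined time interval
    settings.complexity = new_complexity
    settings.last_adjusted = now  # timestamp of the latest adjustment
    return True
```

With a five-minute interval, an adjustment at time 0 blocks another adjustment at 120 seconds but allows one at 300 seconds.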

In an implementation where there are more than two complexity settings, the server106may use the timestamp identifying the last time the rendering settings were adjusted to avoid repeatedly adjusting the same rendering settings (e.g., one or more of the rendering settings130to132) within a short period of time. For example, in an implementation that includes a high complexity setting, a medium complexity setting, and a low complexity setting, when a particular complexity setting (e.g., of the complexity settings304to306) is adjusted from high complexity to medium complexity, the server106may not adjust the particular complexity setting from medium complexity to low complexity within a predetermined time period (e.g., one minute, five minutes, ten minutes, twenty minutes, or the like). As another example, when a particular complexity setting (e.g., of the complexity settings304to306) is adjusted from high complexity to medium complexity, the server106may not further drop the particular complexity setting from medium complexity to low complexity until the remainder of the complexity settings304to306have been adjusted to medium complexity.
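The second example above (no instance drops to low complexity until the remaining instances have reached medium) can be expressed as a rule that an instance may step down only if no other instance is currently at a higher level. The names below are hypothetical, and this is one possible reading of the rule rather than a definitive implementation.

```python
from typing import Sequence

LEVELS = ("low", "medium", "high")  # ordered from lowest to highest complexity


def may_step_down(index: int, complexities: Sequence[str]) -> bool:
    """Allow a one-level drop only if no instance sits above this one."""
    rank = LEVELS.index(complexities[index])
    if rank == 0:
        return False  # already at the lowest complexity
    return all(LEVELS.index(c) <= rank for c in complexities)
```

For example, with complexities `["medium", "high", "medium"]`, instance 0 may not drop to low while instance 1 remains at high, but instance 1 may drop to medium.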

Thus, after gathering the gaming data134, the server106may periodically adjust the rendering settings corresponding to one or more game instances. For example, if a difference between the processing capacity of the server106and the total processing power to render the multiple game instances126to128is less than a rendering threshold, the server106may identify and adjust one or more rendering settings. The server106may identify and adjust rendering settings that provide the most “bang for the buck”, e.g., those rendering settings whose adjustment results in a relatively small drop in estimated perceived quality but a relatively large drop in rendering time. Expressed mathematically, the server106may identify and adjust rendering settings to reduce the total rendering time to render the multiple game instances (e.g., such that total rendering time<rendering capacity) while maximizing the total estimated perceived quality. In some cases, the total estimated perceived quality may be maximized by minimizing the difference between the pre-adjustment total estimated perceived quality of the multiple game instances and the post-adjustment total estimated perceived quality of the multiple game instances or adjusting one or more rendering settings such that a difference between the pre-adjustment total estimated perceived quality and the post-adjustment total estimated perceived quality is less than an estimated perceived quality threshold.
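One way to picture the "bang for the buck" selection is a greedy pass that ranks candidate adjustments by quality drop per unit of rendering time saved. The greedy heuristic, the `Candidate` type, and the field names are assumptions for illustration; the description states the objective but does not prescribe a particular search procedure.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class Candidate:
    instance_id: str
    time_saved: float    # reduction in rendering time; assumed > 0
    quality_drop: float  # drop in estimated perceived quality


def choose_downgrades(total_time: float, capacity: float,
                      candidates: Sequence[Candidate]) -> Tuple[List[str], float]:
    """Greedily lower complexity until total rendering time < capacity."""
    chosen: List[str] = []
    # Cheapest quality loss per unit of time saved goes first.
    ranked = sorted(candidates, key=lambda c: c.quality_drop / c.time_saved)
    for c in ranked:
        if total_time < capacity:
            break
        chosen.append(c.instance_id)
        total_time -= c.time_saved
    return chosen, total_time


# Hypothetical numbers: 18 units of rendering against a capacity of 16.
result = choose_downgrades(18.0, 16.0, [
    Candidate("a", 3.0, 0.30),
    Candidate("b", 2.0, 0.05),
    Candidate("c", 1.0, 0.01),
])
```

A greedy ranking is not guaranteed to find the optimal set; an exhaustive or dynamic-programming search could be substituted when the number of instances is small.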

When the server106determines that the server106has additional rendering capacity and one or more game instances are at less than the highest complexity rendering setting, the server106may adjust at least one of the one or more game instances to a higher complexity rendering setting. For example, if a difference between the processing capacity of the server106and the total processing power to render the multiple game instances126to128is greater than a second rendering threshold, the server106may identify and adjust one or more rendering settings. The server106may identify rendering settings that have a complexity setting that is less than the highest available complexity setting and adjust the rendering settings to a higher complexity setting to maximize the total estimated perceived quality of the multiple game instances such that the total time to render the multiple game instances does not exceed the capacity of the server106. For example, the server106may identify and adjust rendering settings to maximize the total estimated perceived quality such that total rendering time is less than the rendering capacity of the server106.
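The reverse adjustment can be sketched the same way: consider instances below the highest complexity setting, prefer those with the greatest increase in estimated perceived quality, and raise a setting only if the total rendering time stays below capacity. The tuple layout and function name are hypothetical, and the greedy order is an illustrative assumption.

```python
from typing import List, Sequence, Tuple

# (instance id, extra rendering time, gain in estimated perceived quality)
Upgrade = Tuple[str, float, float]


def choose_upgrades(total_time: float, capacity: float,
                    candidates: Sequence[Upgrade]) -> Tuple[List[str], float]:
    """Raise complexity for the largest quality gains that still fit."""
    chosen: List[str] = []
    for instance_id, extra_time, _gain in sorted(
            candidates, key=lambda c: c[2], reverse=True):
        if total_time + extra_time < capacity:
            chosen.append(instance_id)
            total_time += extra_time
    return chosen, total_time


# Hypothetical numbers: 10 units in use against a capacity of 16.
upgraded = choose_upgrades(10.0, 16.0, [
    ("a", 4.0, 0.30),
    ("b", 3.0, 0.20),
    ("c", 1.0, 0.25),
])
```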

Example Processes

In the flow diagrams ofFIGS. 4-6, each block represents one or more operations that can be implemented in hardware, software, or a combination thereof. In the context of software, the blocks represent computer-executable instructions that, when executed by one or more processors, cause the processors to perform the recited operations. Generally, computer-executable instructions include routines, programs, objects, modules, components, data structures, and the like that perform particular functions or implement particular abstract data types. The order in which the blocks are described is not intended to be construed as a limitation, and any number of the described operations can be combined in any order and/or in parallel to implement the processes. For discussion purposes, the processes400,500, and600are described with reference to the architectures100,200, and300as described above, although other models, frameworks, systems and environments may be used to implement these processes.

FIG. 4is a flow diagram of an example process400that includes executing one or more shadow instances according to some implementations. For example, the process400may be performed by one or more gaming servers, such as the server106ofFIGS. 1, 2, and 3.

At402, multiple game instances (e.g., based on one or more game modules or game engines) may be executed. Each game instance of the multiple game instances may have corresponding rendering settings. For example, inFIG. 1, the server106may execute the game instances126to128with the corresponding rendering settings130to132.

At 404, one or more shadow instances may be executed. Each shadow instance may shadow one of the multiple game instances. A shadow instance that is shadowing a game instance may have a different rendering setting compared to the game instance. For example, in FIG. 1, the server 106 may execute the shadow instance 136 that shadows one of the game instances 126 to 128. The shadow instance 136 may have the corresponding rendering settings 138. When the shadow instance 136 is shadowing the Nth game instance 128, the shadow rendering settings 138 may be different from the Nth rendering settings 132.

At 406, a rendering time to render (1) an output frame of each game instance that is being shadowed and (2) a shadow frame of each shadow instance may be determined. At 408, a difference in perceived quality between (1) the output frame and (2) the shadow frame may be determined. For example, in FIG. 2, the server 106 may determine (1) the first rendering time 210 to render the first frame 202 of the Nth game instance 128 and (2) the first shadow rendering time 214 to render the first shadow frame 206 of the shadow instance 136. The server 106 may determine the first difference in perceived quality 218 between the first frame 202 and the first shadow frame 206.

At410, for each shadow instance, the server may store (1) the rendering settings of the shadow instance, (2) the rendering time to render the shadow frame, (3) the difference in the perceived quality between the output frame and the shadow frame, and (4) the environmental settings (e.g., associated with a game instance and the shadow instance that is shadowing the game instance). For example, inFIG. 1, the server106may store in the gaming data134(1) the shadow rendering settings138, (2) the rendering time146, (3) the perceived quality difference144, and (4) the environmental settings150associated with the Nth game instance128and the shadow instance136that is shadowing the Nth game instance128.

At 412, the server may render a next output frame for each game instance being shadowed and a next shadow frame for each shadow instance, and the process may proceed to 406, where the rendering time of the next output frame and the next shadow frame may be determined. For example, in FIG. 2, the server 106 may render the Qth frame 204 and the Qth shadow frame 208. The server 106 may determine the Qth rendering time 212, the Qth shadow rendering time 216, and the Qth difference in perceived quality 220. In some cases, 406 to 412 may be repeated for a predetermined period of time or until a predetermined quantity of gaming data has been gathered.

Thus, when executing multiple game instances, one or more shadow instances may be used to shadow the game instances. A shadow instance that is shadowing a game instance may have different shadow rendering settings (e.g., including a lower complexity setting) as compared to the rendering settings of the game instance that is being shadowed. The time to render output frames of the game instance and shadow frames of the shadow instance may be determined. A difference in perceived quality between the shadow frames and the output frames may be determined. The server may periodically store various information, such as the shadow rendering settings, the rendering time to render the shadow frames, the difference in perceived quality, and environmental settings associated with both the game instances and the shadow instances.
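The gathering loop of the process 400 might look roughly like the following. The `Entry` fields mirror the four stored items, while the `render`, `render_shadow`, and `pq_difference` callables are hypothetical placeholders supplied by the caller; timing with `time.perf_counter` is an assumption about how rendering time could be measured.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Dict, Iterable, List


@dataclass
class Entry:
    rendering_settings: str               # settings of the shadow instance
    rendering_time: float                 # seconds to render the shadow frame
    pq_difference: float                  # output frame vs. shadow frame
    environmental_settings: Dict[str, Any]


def gather(render: Callable[[Dict[str, Any]], Any],
           render_shadow: Callable[[Dict[str, Any]], Any],
           pq_difference: Callable[[Any, Any], float],
           environments: Iterable[Dict[str, Any]],
           shadow_settings: str = "LC") -> List[Entry]:
    """Render each scene twice and record one gaming-data entry per scene."""
    entries: List[Entry] = []
    for env in environments:
        frame = render(env)
        start = time.perf_counter()
        shadow_frame = render_shadow(env)
        shadow_time = time.perf_counter() - start
        entries.append(Entry(shadow_settings, shadow_time,
                             pq_difference(frame, shadow_frame), env))
    return entries
```

In this sketch, the loop ends when the supplied environments run out, which stands in for "a predetermined quantity of gaming data".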

In some cases, the information gathered using the shadow instances may be analyzed. For example, in FIG. 1, the gaming data 134 may be analyzed and some of the entries 140 to 142 deleted and/or modified to reduce the number of entries. For example, the zone 152 may identify a poorly-lit environment and multiple entries may be associated with the poorly-lit environment. Analyzing the multiple entries may determine that the perceived quality difference 144 is not affected by the number of objects 158 to 160 in the poorly-lit environment. In this example, the multiple entries may be deleted and replaced with a single entry indicating that the number of objects in the output does not need to be considered. For example, a wild-card character (e.g., “*”) may be used to indicate that a particular environmental setting, such as a number of the objects 158 to 160, does not affect the perceived quality difference 144. As another example, the poorly-lit environment may be the inside of a building with no windows or light, such that the environment is poorly-lit regardless of the game time of day 156, e.g., regardless of whether the game play takes place during the day or at night. In this example, the perceived quality difference 144 may not change when the game time of day 156 changes. A wild-card character or other notation may be used to indicate that a particular environmental setting, e.g., the game time of day 156, does not affect the perceived quality difference 144.
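Wild-card entries of this kind could be matched against a game instance's current environmental settings as sketched below. The dictionary keys (`zone`, `time_of_day`, `objects`) and the sample values are hypothetical; only the use of "*" as a wild-card comes from the description.

```python
from typing import Any, Dict, List, Optional

WILDCARD = "*"


def env_matches(entry_env: Dict[str, Any], instance_env: Dict[str, Any]) -> bool:
    """An entry matches when every non-wild-card setting agrees."""
    return all(value == WILDCARD or instance_env.get(key) == value
               for key, value in entry_env.items())


def find_entry(entries: List[Dict[str, Any]],
               instance_env: Dict[str, Any]) -> Optional[Dict[str, Any]]:
    """Return the first stored entry whose environment matches, if any."""
    for entry in entries:
        if env_matches(entry["environment"], instance_env):
            return entry
    return None


# Hypothetical gaming data: one consolidated entry and one specific entry.
entries = [
    {"environment": {"zone": "cellar", "time_of_day": "*", "objects": "*"},
     "pq_difference": 0.02},
    {"environment": {"zone": "forest", "time_of_day": "night", "objects": 1},
     "pq_difference": 0.10},
]
```

Here a single "cellar" entry covers any time of day and any number of objects, which is the consolidation the paragraph describes.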

The stored information may be used to identify environments where the rendering complexity can be adjusted from a higher complexity to a lower complexity to reduce the rendering time without a significant (e.g., perceptible) drop in estimated perceived quality. For example, environments with a relatively low amount of lighting or with relatively few moving objects may be identified as environments where the rendering settings may be adjusted to a lower complexity level with a tolerable drop in the estimated perceived quality.

FIG. 5is a flow diagram of an example process500that includes performing regression over environmental settings according to some implementations. For example, the process500may be performed by one or more gaming servers, such as the server106ofFIGS. 1, 2, and 3.

At502, multiple game instances (e.g., based on one or more game modules or game engines) may be executed. Each game instance of the multiple game instances may have corresponding rendering settings. For example, inFIG. 1, the server106may execute the game instances126to128with the corresponding rendering settings130to132.

At504, environmental settings associated with each game instance may be determined. For example, inFIG. 3, the server106may determine the environmental settings222to224corresponding to the game instances126to128.

At506, regression may be performed over one or more of the environmental settings. For example, one environmental setting may be varied while a remainder of the environmental settings are held invariant (e.g., constant).

At508, the server may determine a rendering time to render each output frame of each game instance. For example, inFIG. 2, the server106may determine the rendering times210to212to render the frames202to204.

At510, the server may determine an estimated perceived quality of each output frame. For example, inFIG. 3, the server106may determine the estimated perceived quality308to310associated with an output frame of the game instances126to128.

At512, for each game instance, the server may store (1) the rendering settings, (2) the rendering time to render the output frame, (3) the estimated perceived quality of the output frame, and (4) the environmental settings (e.g., associated with a game instance). For example, inFIG. 1, the server106may store in the gaming data134(1) one of the rendering settings130to132, (2) the rendering time146, (3) the perceived quality difference144, and (4) the environmental settings150.

At514, the server may render a next output frame for each game instance, and the process may proceed to508, where the rendering time to render the next output frame may be determined. For example, inFIG. 2, the server106may render the Qth frame204and determine the Qth rendering time212. In some cases,508to514may be repeated for a predetermined period of time or until a predetermined quantity of gaming data has been gathered.
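The regression at 506 is described only in outline, so the following is one simple interpretation: hold all environmental settings constant except one (here, a hypothetical object count), record the rendering time at each value, and fit a least-squares line. The function name and the sample data are assumptions for illustration.

```python
from typing import Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)  # requires at least two distinct xs
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical measurements: rendering time (ms) as the object count varies
# while zone, lighting, and time of day are held constant.
object_counts = [1, 2, 3, 4]
rendering_ms = [3.0, 5.0, 7.0, 9.0]
slope, intercept = fit_line(object_counts, rendering_ms)
```

A slope near zero would suggest that the varied setting has little effect on rendering time in that environment; the same fit could be applied to estimated perceived quality.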

Thus, regression over one or more environmental variables may be performed to identify environmental settings where the rendering settings may be adjusted from a higher complexity level setting to a lower complexity level setting to reduce the rendering time with an imperceptible or barely perceptible drop in estimated perceived quality. For example, when multiple game instances are executing on a server and the total time to render the game instances begins to approach the rendering capacity of the server (e.g., capacity − total rendering time < […] > capacity, e.g., that X plus A is greater than a processing capacity of the server 106 (e.g., the capacity 302 of FIG. 3), then the server 106 may determine whether X+C < capacity, Z+A < capacity, and/or Z+C < capacity. For example, if the server 106 determines that X+C < capacity, Z+A < capacity, and Z+C < […] > (W+B) > (W+D), then the server 106 may adjust the rendering complexity of the second game instance to low, with a rendering time of C (e.g., total rendering time X+C) and an estimated perceived quality of D. If (W+B) > (Y+D) > (W+D), then the server 106 may adjust the rendering complexity of the first game instance to low, with a rendering time of Z (e.g., total rendering time Z+A) and an estimated perceived quality of W. If (W+D) > (Y+D) > (W+B), then the server 106 may adjust the rendering complexity of the first game instance to low, with a rendering time of Z and an estimated perceived quality of W, and adjust the rendering complexity of the second game instance to low, with a rendering time of C and an estimated perceived quality of D.
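For a small number of game instances, the comparison of candidate combinations can be illustrated with an exhaustive search over complexity settings. In this sketch each instance lists a (rendering time, estimated perceived quality) pair per setting; the numbers are hypothetical, the function name is an assumption, and exhaustive enumeration is an illustrative technique rather than one the description prescribes.

```python
from itertools import product
from typing import Dict, List, Optional, Sequence, Tuple

# setting name -> (rendering time, estimated perceived quality)
Options = Dict[str, Tuple[float, float]]


def best_assignment(options: Sequence[Options], capacity: float
                    ) -> Optional[Tuple[List[str], float, float]]:
    """Pick one setting per instance to maximize total PQ with time < capacity."""
    best = None
    for combo in product(*(opts.items() for opts in options)):
        total_time = sum(t for _, (t, _) in combo)
        total_pq = sum(q for _, (_, q) in combo)
        if total_time < capacity and (best is None or total_pq > best[1]):
            best = ([name for name, _ in combo], total_pq, total_time)
    return best


# Hypothetical two-instance case: both at high would need 17 > capacity 16.
choice = best_assignment(
    [{"high": (9.0, 0.9), "low": (5.0, 0.8)},
     {"high": (8.0, 0.9), "low": (6.0, 0.5)}],
    capacity=16.0,
)
```

With these numbers, lowering only the first instance keeps the most total quality while fitting under the capacity. The search grows exponentially with the number of instances, so a heuristic would be needed at scale.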

Thus, the server106may use the gaming data134to identify gaming instances whose rendering settings can be adjusted while keeping the aggregate estimated perceived quality as high as possible. The gaming data134may be gathered using one or more shadow instances or using regression analysis.

Example Computing Device and Environment

FIG. 8illustrates an example configuration of a computing device800and environment that can be used to implement the modules and functions described herein. For example, the server106may include an architecture that is similar to or based on the computing device800.

The computing device800may include one or more processors802, a memory804, communication interfaces806, a display device808, other input/output (I/O) devices810, and one or more mass storage devices812, able to communicate with each other, such as via a system bus814or other suitable connection.

The processor802may be a single processing unit or a number of processing units, all of which may include single or multiple computing units or multiple cores. The processor802may be implemented as one or more microprocessors, microcomputers, microcontrollers, digital signal processors, central processing units, state machines, logic circuitries, and/or any device(s) that manipulate signals based on operational instructions. Among other capabilities, the processor802may be configured to fetch and execute computer-readable instructions stored in the memory804, mass storage devices812, or other computer-readable media.

Memory804and mass storage devices812are examples of computer storage media for storing instructions, which are executed by the processor802to perform the various functions described above. For example, memory804may generally include both volatile memory and non-volatile memory (e.g., RAM, ROM, or the like). Further, mass storage devices812may generally include hard disk drives, solid-state drives, removable media, including external and removable drives, memory cards, flash memory, floppy disks, optical disks (e.g., CD, DVD), a storage array, a network attached storage, a storage area network, or the like. Both memory804and mass storage devices812may be collectively referred to as memory or computer storage media herein, and may be capable of storing computer-readable, processor-executable program instructions as computer program code that can be executed by the processor802as a particular machine configured for carrying out the operations and functions described in the implementations herein.

Computer storage media includes non-volatile, removable and non-removable media implemented in any method or technology for storage of information, such as computer readable instructions, data structures, program modules, or other data. Computer storage media includes, but is not limited to, RAM, ROM, EEPROM, flash memory or other memory technology, CD-ROM, digital versatile disks (DVD) or other optical storage, magnetic cassettes, magnetic tape, magnetic disk storage or other magnetic storage devices, or any other medium that can be used to store information for access by a computing device.

In contrast, communication media may embody computer readable instructions, data structures, program modules, or other data in a modulated data signal, such as a carrier wave. As defined herein, computer storage media does not include communication media.

The computing device800may also include one or more communication interfaces806for exchanging data with other devices, such as via a network, direct connection, or the like, as discussed above. The communication interfaces806can facilitate communications within a wide variety of networks and protocol types, including wired networks (e.g., LAN, cable, etc.) and wireless networks (e.g., WLAN, cellular, satellite, etc.), the Internet and the like. Communication interfaces806can also provide communication with external storage (not shown), such as in a storage array, network attached storage, storage area network, or the like.

A display device 808, such as a monitor, may be included in some implementations for displaying information and images to users. Other I/O devices 810 may be devices that receive various inputs from a user and provide various outputs to the user, and may include a keyboard, a remote controller, a mouse, a printer, audio input/output devices, voice input, and so forth.

Memory804may include modules and components for hosting game instances and adjusting rendering settings according to the implementations described herein. The memory804may include multiple modules (e.g., the modules118to120) to perform various functions. The memory804may also include thresholds816, other modules816that implement other features, and other data818that includes intermediate calculations and the like. The threshold816may include various predetermined thresholds that may be set by a system administrator or other user, such as a rendering threshold822(e.g., used to determine when to adjust one or more complexity settings to a lower complexity setting), a second rendering threshold824(e.g., used to determine when to adjust at least one complexity setting to a higher complexity setting), and a perceived quality threshold826(e.g., one or more rendering settings may be adjusted when a difference between a post-adjustment sum of the estimated perceived quality and a pre-adjustment sum of the estimated perceived quality is less than the perceived quality threshold). The other modules816may include various software, such as an operating system, drivers, communication software, or the like.

Adjusting Rendering Settings when Providing Multimedia Content

FIG. 9 is an illustrative architecture 900 to adjust rendering settings of applications providing multimedia content according to some implementations. The architecture 900 illustrates how the techniques described herein may be used to adjust the rendering complexity of applications that provide multimedia content (e.g., streaming media content, such as streaming audio or video). For example, the techniques described herein may be used when utilization of the capacity 302 of the server 106 is greater than a threshold amount, such as 80% of the capacity 302, 90% of the capacity 302, or the like. As another example, the techniques described herein may be used when a difference between the processing capacity 302 and the total amount of rendering time to render the application instances 126 to 128 is less than a predetermined rendering threshold.

The server 106 may host the application instances 126 to 128 with the corresponding rendering settings 130 to 132. The application instances 126 to 128 may provide multimedia content to the computing devices 102 to 104. For example, the multimedia content may include gaming output, television shows, movies, live events (e.g., sporting events), music videos, or other types of multimedia content, including interactive multimedia content. Each of the rendering settings 130 to 132 may identify various settings associated with the rendered output frames, such as a screen resolution associated with the computing device that is displaying the output frames, a communications reception bandwidth (e.g., the rate at which data can be received) associated with the computing device that is displaying the output frames, a complexity setting of the rendered output frames, other rendering-related settings, or any combination thereof. In FIG. 9, the first rendering settings 130 include the first (rendering) complexity setting 304 and the Nth rendering settings 132 include the Nth (rendering) complexity setting 306. For example, if the first application instance 126 is associated with the first computing device 102, the first rendering settings 130 may indicate that the first computing device 102 has a screen resolution of 1280×720 pixels, a communications reception bandwidth of 10 megabits per second (Mbps), and a high complexity rendering setting. The communications bandwidth may include a minimum bandwidth, a maximum bandwidth, an average bandwidth, or any combination thereof.

The server 106 may determine an estimated perceived quality 308 to 310 for each of the application instances 126 to 128 based on the corresponding rendering settings 130 to 132. The server 106 may determine a first rendering time 312 to render an output frame of the first application instance 126 and an Nth rendering time 314 to render an output frame of the Nth application instance 128. The server 106 may determine a total rendering time to render the output data 112 by summing the N rendering times 312 to 314. Thus, the server 106 may repeatedly adjust the rendering settings of the application instances 126 to 128 such that the total of the rendering times 312 to 314 is less than the capacity 302 while maximizing a sum of the estimated perceived quality of the application instances.
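The trigger condition described above can be sketched in Python as follows. This is a minimal illustration, not the described implementation: the function names, the parameter names, and the choice of milliseconds per frame as the unit are all assumptions made for the example.

```python
from typing import Sequence


def total_rendering_time(rendering_times_ms: Sequence[float]) -> float:
    """Sum the per-instance rendering times (assumed unit: ms per output frame)."""
    return sum(rendering_times_ms)


def needs_adjustment(
    rendering_times_ms: Sequence[float],
    capacity_ms: float,
    rendering_threshold_ms: float,
) -> bool:
    """Return True when the remaining headroom falls below the rendering threshold.

    Headroom is the difference between the (hypothetical) capacity value and
    the total rendering time of all hosted application instances.
    """
    headroom = capacity_ms - total_rendering_time(rendering_times_ms)
    return headroom < rendering_threshold_ms
```

With three instances taking 4, 5, and 6 ms against a capacity of 16 ms and a threshold of 2 ms, the headroom is 1 ms, so an adjustment would be considered.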

As the total amount of rendering time of the application instances 126 to 128 approaches the capacity 302 (e.g., processing capacity or rendering capacity), the server 106 may identify which of the rendering settings 130 to 132 to adjust such that the total amount of the estimated perceived quality 308 to 310 is maximized (e.g., by keeping the total amount relatively high) based on the application data 134. The server 106 may determine, based on the application data 134, that adjusting the first rendering settings 130 (e.g., by lowering the complexity setting 304 from HC to LC) may result in the total of the estimated perceived quality 308 to 310 being higher as compared to adjusting the Nth rendering settings 132. The server 106 may adjust one or more of the rendering settings 130 to 132.

The server 106 may determine which of the rendering settings 130 to 132 to adjust based on the application data 134. The server 106 may select one or more of the rendering settings 138, 306 that results in the total rendering time for the application instances 126 to 128 being less than the capacity 302 and results in the least drop in the sum of the estimated perceived quality 308 to 310.
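One way to picture the selection described above is an exhaustive search over which instances to lower from high complexity (HC) to low complexity (LC). The sketch below is only an assumption about how such a selection could be made: the `Instance` fields, the function name, and the brute-force strategy are hypothetical, and the stored times and quality values stand in for what the application data might provide.

```python
from dataclasses import dataclass
from itertools import combinations
from typing import List, Optional, Sequence, Tuple


@dataclass
class Instance:
    name: str
    time_hc: float     # rendering time at high complexity (hypothetical units)
    time_lc: float     # rendering time at low complexity
    quality_hc: float  # estimated perceived quality at high complexity
    quality_lc: float  # estimated perceived quality at low complexity


def select_instances_to_lower(
    instances: Sequence[Instance], capacity: float
) -> Optional[Tuple[float, List[str]]]:
    """Return (quality_drop, names) for the feasible subset with the least drop.

    A subset is feasible when lowering exactly those instances brings the
    total rendering time below the capacity. Returns None if no subset works.
    """
    best: Optional[Tuple[float, List[str]]] = None
    n = len(instances)
    for k in range(n + 1):
        for subset in combinations(range(n), k):
            chosen = set(subset)
            total_time = sum(
                inst.time_lc if i in chosen else inst.time_hc
                for i, inst in enumerate(instances)
            )
            if total_time >= capacity:
                continue  # still at or over capacity, so not feasible
            drop = sum(
                instances[i].quality_hc - instances[i].quality_lc for i in chosen
            )
            if best is None or drop < best[0]:
                best = (drop, [instances[i].name for i in subset])
    return best
```

The search is exponential in the number of instances, so it only suits small examples; a server hosting many instances would presumably need a heuristic or an optimization routine instead.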

The server 106 may maximize the sum of the estimated perceived quality 308 to 310 by minimizing a drop in the sum of the estimated perceived quality from pre-adjustment to post-adjustment. For example, the server 106 may determine the sum of the estimated perceived quality 308 to 310 before adjusting the rendering settings 130 to 132 (e.g., pre-adjustment sum). The server 106 may identify one or more of the rendering settings 130 to 132 that can be adjusted such that the difference between the post-adjustment sum of the estimated perceived quality 308 to 310 and the pre-adjustment sum of the estimated perceived quality 308 to 310 is less than a predetermined estimated perceived quality threshold. For example, the server 106 may identify one or more of the rendering settings 130 to 132 that can be adjusted such that the post-adjustment sum of the estimated perceived quality 308 to 310 represents less than a five percent drop from the pre-adjustment sum of the estimated perceived quality 308 to 310.
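The five percent example above amounts to a simple relative-drop check. The following sketch assumes the threshold is expressed as a fraction of the pre-adjustment sum; the function and parameter names are hypothetical.

```python
def within_quality_threshold(
    pre_sum: float, post_sum: float, max_drop_fraction: float = 0.05
) -> bool:
    """Return True when the relative drop in summed quality is below the threshold.

    pre_sum and post_sum are the sums of estimated perceived quality before
    and after a candidate adjustment (assumed to use the same scale).
    """
    if pre_sum <= 0:
        raise ValueError("pre_sum must be positive")
    drop_fraction = (pre_sum - post_sum) / pre_sum
    return drop_fraction < max_drop_fraction
```

For instance, a pre-adjustment sum of 200 and a post-adjustment sum of 192 is a four percent drop, which would pass a five percent threshold.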

The server 106 may use various techniques to avoid repeatedly adjusting the same rendering settings (e.g., one or more of the rendering settings 130 to 132) in a short period of time. For example, users engaged in a particular application instance may not enjoy the experience of the corresponding complexity setting being repeatedly adjusted, e.g., adjusted from HC to LC and then back to HC (or adjusted from LC to HC and then back to LC) within a short period of time. To avoid such situations, when the server 106 adjusts one or more of the rendering settings 130 to 132, the server 106 may add a timestamp to the adjusted rendering settings to identify when the rendering settings were last adjusted. When the difference between the current time and the timestamp of the adjusted rendering settings is less than a predetermined time interval (e.g., one minute, five minutes, ten minutes, twenty minutes, or the like), the server 106 may not adjust one or more of the rendering settings 130 to 132.
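The timestamp technique described above can be sketched as a cooldown check. The class name, field names, and the five-minute default are assumptions for illustration; the `now` parameter exists only so the example can be exercised with fixed times.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class RenderingSettings:
    complexity: str = "HC"
    last_adjusted: Optional[float] = None  # seconds; None means never adjusted


def try_adjust(
    settings: RenderingSettings,
    new_complexity: str,
    min_interval_s: float = 300.0,
    now: Optional[float] = None,
) -> bool:
    """Adjust the complexity unless it was adjusted within the last min_interval_s.

    Returns True if the adjustment was applied, False if it was suppressed.
    """
    now = time.monotonic() if now is None else now
    if (
        settings.last_adjusted is not None
        and now - settings.last_adjusted < min_interval_s
    ):
        return False  # too soon since the last adjustment
    settings.complexity = new_complexity
    settings.last_adjusted = now  # record the timestamp of this adjustment
    return True
```

A caller would attempt the adjustment and fall back to a different application instance when `try_adjust` returns False.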

In an implementation where there are more than two complexity settings, the server 106 may use the timestamp identifying the last time the rendering settings were adjusted to avoid repeatedly adjusting the same rendering settings (e.g., one or more of the rendering settings 130 to 132) within a short period of time. For example, in an implementation that includes a high complexity setting, a medium complexity setting, and a low complexity setting, when a particular complexity setting (e.g., of the complexity settings 304 to 306) is adjusted from high complexity to medium complexity, the server 106 may not adjust the particular complexity setting from medium complexity to low complexity within a predetermined time period (e.g., one minute, five minutes, ten minutes, twenty minutes, or the like). As another example, when a particular complexity setting (e.g., of the complexity settings 304 to 306) is adjusted from high complexity to medium complexity, the server 106 may not further drop the particular complexity setting from medium complexity to low complexity until the remainder of the complexity settings 304 to 306 have been adjusted to medium complexity.

Thus, after gathering the application data 134, the server 106 may periodically adjust the rendering settings corresponding to one or more application instances hosted by one or more servers. For example, if a difference between the processing capacity of the server 106 and the total processing power to render the multiple application instances 126 to 128 is less than a rendering threshold, the server 106 may identify and adjust one or more rendering settings. The server 106 may identify and adjust those rendering settings whose adjustment results in a relatively small drop in estimated perceived quality but a relatively large drop in rendering time. Expressed mathematically, the server 106 may identify and adjust rendering settings to reduce the total rendering time to render the multiple application instances (e.g., such that total rendering time < rendering capacity) while maximizing the total estimated perceived quality. In some cases, the total estimated perceived quality may be maximized by minimizing the difference between the pre-adjustment total estimated perceived quality of the multiple application instances and the post-adjustment total estimated perceived quality of the multiple application instances or adjusting one or more rendering settings such that a difference between the pre-adjustment total estimated perceived quality and the post-adjustment total estimated perceived quality is less than an estimated perceived quality threshold.

When the server 106 determines that the server 106 has additional rendering capacity and one or more application instances are at less than the highest complexity rendering setting, the server 106 may adjust at least one of the one or more application instances to a higher complexity rendering setting. For example, the server 106 may identify and adjust rendering settings to maximize the total estimated perceived quality such that total rendering time is less than the rendering capacity of the server 106.
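The upward adjustment described above could be approximated with a greedy pass over the instances that are currently below the highest complexity setting. This is a heuristic sketch under stated assumptions, not a guaranteed optimum: the `LoweredInstance` fields and the ranking by quality gain per unit of extra rendering time are hypothetical choices, and `extra_time` is assumed to be positive.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class LoweredInstance:
    name: str
    extra_time: float    # added rendering time if raised (assumed > 0)
    quality_gain: float  # added estimated perceived quality if raised


def select_instances_to_raise(
    lowered: Sequence[LoweredInstance], total_time: float, capacity: float
) -> List[str]:
    """Greedily pick instances to raise while total time stays below capacity."""
    raised: List[str] = []
    # Consider the best quality gain per unit of extra rendering time first.
    ranked = sorted(
        lowered, key=lambda inst: inst.quality_gain / inst.extra_time, reverse=True
    )
    for inst in ranked:
        if total_time + inst.extra_time < capacity:
            total_time += inst.extra_time
            raised.append(inst.name)
    return raised
```

In this sketch, an instance whose upgrade would push the total rendering time to or past the capacity is simply skipped and remains at its current setting.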

FIG. 10 is an illustrative architecture 1000 that includes multiple computing devices communicating with one or more servers according to some implementations. The architecture 1000 illustrates how a variety of computing devices may be used to communicate with applications, such as games, hosted by the server 106.

For example, the server 106 may host various applications, including gaming applications and applications to stream multimedia content. The applications hosted by the server 106 may provide multimedia content, including audio, video, graphics, animation, and the like, to the computing devices 102 to 104. The computing devices 102 to 104 may include one or more of a personal computer, a tablet computing device, a gaming console, a wireless phone (or other wireless mobile computing device), a laptop computer, a television set, a set-top box device, a portable gaming console, or other type of computing device.

The example systems and computing devices described herein are merely examples suitable for some implementations and are not intended to suggest any limitation as to the scope of use or functionality of the environments, architectures and frameworks that can implement the processes, components and features described herein. Thus, implementations herein are operational with numerous environments or architectures, and may be implemented in general purpose and special-purpose computing systems, or other devices having processing capability. Generally, any of the functions described with reference to the figures can be implemented using software, hardware (e.g., fixed logic circuitry) or a combination of these implementations. The term “module,” “mechanism” or “component” as used herein generally represents software, hardware, or a combination of software and hardware that can be configured to implement prescribed functions. For instance, in the case of a software implementation, the term “module,” “mechanism” or “component” can represent program code (and/or declarative-type instructions) that performs specified tasks or operations when executed on a processing device or devices (e.g., CPUs or processors). The program code can be stored in one or more computer-readable memory devices or other computer storage devices. Thus, the processes, components and modules described herein may be implemented by a computer program product.

Furthermore, this disclosure provides various example implementations, as described and as illustrated in the drawings. However, this disclosure is not limited to the implementations described and illustrated herein, but can extend to other implementations, as would be known or as would become known to those skilled in the art. Reference in the specification to “one implementation,” “this implementation,” “these implementations” or “some implementations” means that a particular feature, structure, or characteristic described is included in at least one implementation, and the appearances of these phrases in various places in the specification are not necessarily all referring to the same implementation.

CONCLUSION

Although the subject matter has been described in language specific to structural features and/or methodological acts, the subject matter defined in the appended claims is not limited to the specific features or acts described above. Rather, the specific features and acts described above are disclosed as example forms of implementing the claims. This disclosure is intended to cover any and all adaptations or variations of the disclosed implementations, and the following claims should not be construed to be limited to the specific implementations disclosed in the specification. Instead, the scope of this document is to be determined entirely by the following claims, along with the full range of equivalents to which such claims are entitled.

Claims

- A method performed by one or more processors executing instructions to perform acts comprising: determining a total rendering time to render output data for a plurality of game instances; determining whether a difference between the total rendering time and a rendering capacity of the one or more processors is less than a predetermined rendering threshold; and in response to determining that the difference between the total rendering time and the rendering capacity of the one or more processors is less than the predetermined rendering threshold, adjusting a rendering complexity of one or more game instances of the plurality of game instances such that a difference between a first sum of an estimated perceived quality of each of the plurality of game instances and a second sum of the estimated perceived quality of each of the plurality of game instances after lowering the rendering complexity of the one or more game instances is less than a predetermined perceived quality threshold.

- The method as recited in claim 1, wherein adjusting the rendering complexity of the one or more game instances of the plurality of game instances comprises: lowering the rendering complexity of the one or more game instances such that the difference between the total rendering time of the plurality of game instances and the rendering capacity of the one or more processors is greater than or equal to the predetermined rendering threshold.

- The method as recited in claim 1, wherein adjusting the rendering complexity of the one or more game instances comprises: comparing game environmental settings of each of the plurality of game instances with stored environmental settings to identify matching environmental settings; determining a stored rendering time and a stored perceived quality associated with the matching environmental settings; and identifying the one or more game instances based on the stored rendering time and the stored perceived quality.