U.S. Pat. No. 9,278,280

VIDEO GAME USING DUAL MOTION SENSING CONTROLLERS

Assignee: NINTENDO CO., LTD.

Issue Date: May 9, 2011

Abstract

An inclination of a first unit is detected based on an output from a first acceleration sensor provided in a first unit of a controller, and an inclination of a second unit is detected based on an output from a second acceleration sensor provided in a second unit separate from the first unit. A difference between the inclinations of the first unit and the second unit is detected, and game control is performed using the detected difference. Thus, with a game apparatus using a plurality of acceleration sensors or a plurality of sensors capable of detecting a motion or a posture, a dynamic play is made possible with a high degree of freedom of motion and an intuitive motion input is realized.

Description

DESCRIPTION OF THE PREFERRED EMBODIMENTS

With reference to FIG. 1, a game system 1 according to one embodiment will be described. FIG. 1 is an external view illustrating the game system 1. In the following example, the game system 1 includes an installation type game apparatus 3.

As shown in FIG. 1, the game system 1 includes a display (hereinafter, referred to as a “monitor”) 2 such as a home-use TV receiver or the like, which includes speakers 2a, the installation type game apparatus (hereinafter, referred to simply as a “game apparatus”) 3 connected to the monitor 2 via a connection cord, and a controller 7 for providing the game apparatus 3 with operation information. The game apparatus 3 is connected to a receiving unit 6 via a connection terminal. The receiving unit 6 receives transmission data which is wirelessly transmitted from the controller 7. The controller 7 and the game apparatus 3 are connected to each other via wireless communication. On the game apparatus 3, an optical disc 4 as an exemplary exchangeable information storage medium is detachably mounted. On a main top surface of the game apparatus 3, a power ON/OFF switch for the game apparatus 3, a reset switch for game processing, and an OPEN switch for opening a top lid of the game apparatus 3 are provided. When the player presses the OPEN switch, the lid is opened to allow the optical disc 4 to be mounted or dismounted.

Also on the game apparatus 3, an external memory card 5 is detachably mounted when necessary. The external memory card 5 includes a backup memory or the like for fixedly storing saved data or the like. The game apparatus 3 executes a game program or the like stored on the optical disc 4 and displays the result on the monitor 2 as a game image. The game apparatus 3 can also reproduce a state of a game played in the past using saved data stored on the external memory card 5 and display a game image on the monitor 2. The player using the game apparatus 3 can enjoy the game by operating the controller 7 while watching the game image displayed on the monitor 2.

The controller 7 wirelessly transmits transmission data to the game apparatus 3 connected to the receiving unit 6 from a communication section 75 (see FIG. 6) included in the controller 7 using, for example, the Bluetooth (registered trademark) technology. The controller 7 includes two control units (a core unit 70 and a sub unit 76) connected to each other via a bendable connection cable 79. The controller 7 is control means mainly for operating a player object appearing in a game space displayed on the monitor 2. The core unit 70 and the sub unit 76 each have operation sections such as a plurality of operation buttons, keys, a stick and the like. As described later, the core unit 70 includes an imaging information calculation section 74 (see FIG. 6) for taking an image seen from the core unit 70. As an example of an imaging target of the imaging information calculation section 74, two LED modules 8L and 8R are provided in the vicinity of the display screen of the monitor 2. The LED modules 8L and 8R output infrared light forward from the side of the monitor 2. In this example, the core unit 70 and the sub unit 76 are connected to each other via the bendable connection cable 79, but the sub unit 76 may include a wireless unit. In this case, the connection cable 79 is not necessary. When, for example, a Bluetooth (registered trademark) unit is mounted on the sub unit 76 as a wireless unit, operation data can be transmitted from the sub unit 76 to the core unit 70.

Next, with reference to FIG. 2, a structure of the game apparatus 3 will be described. FIG. 2 is a functional block diagram of the game apparatus 3.

As shown in FIG. 2, the game apparatus 3 includes a CPU (central processing unit) 30 (for example, a RISC CPU) for executing various programs. The CPU 30 executes a start program stored on a boot ROM (not shown) to initialize memories including a main memory 33, and then executes a game program stored on the optical disc 4 to perform game processing or the like in accordance with the game program. The CPU 30 is connected to a GPU (Graphics Processing Unit) 32, the main memory 33, a DSP (Digital Signal Processor) 34, and an ARAM (Audio RAM) 35 via a memory controller 31. The memory controller 31 is connected to a controller I/F (interface) 36, a video I/F 37, an external memory I/F 38, an audio I/F 39, and a disc I/F 41 via a predetermined bus. The controller I/F 36, the video I/F 37, the external memory I/F 38, the audio I/F 39 and the disc I/F 41 are respectively connected to the receiving unit 6, the monitor 2, the external memory card 5, the speaker 2a and a disc drive 40.

The GPU 32 performs image processing based on an instruction from the CPU 30. The GPU 32 includes, for example, a semiconductor chip for performing calculation processing necessary for displaying 3D graphics. The GPU 32 performs the image processing using a memory dedicated for image processing (not shown) or a part of the storage area of the main memory 33. The GPU 32 generates game image data or a movie to be displayed on the monitor 2 using such memories, and outputs the generated data or movie to the monitor 2 via the memory controller 31 and the video I/F 37 as necessary.

The main memory 33 is a storage area used by the CPU 30, and stores a game program or the like necessary for processing performed by the CPU 30 as necessary. For example, the main memory 33 stores a game program, various types of data or the like read from the optical disc 4 by the CPU 30. The game program, the various types of data or the like stored on the main memory 33 are executed by the CPU 30.

The DSP 34 processes sound data or the like generated by the CPU 30 during the execution of the game program. The DSP 34 is connected to the ARAM 35 for storing the sound data or the like. The ARAM 35 is used when the DSP 34 performs predetermined processing (e.g., storage of the game program or sound data already read). The DSP 34 reads the sound data stored on the ARAM 35 and outputs the sound data to the speaker 2a included in the monitor 2 via the memory controller 31 and the audio I/F 39.

The memory controller 31 comprehensively controls data transfer, and is connected to the various I/Fs described above. The controller I/F 36 includes, for example, four controller I/Fs, each of which communicably connects an external device engageable with a connector thereof and the game apparatus 3 to each other. For example, the receiving unit 6 is engaged with such a connector and is connected to the game apparatus 3 via the controller I/F 36. The receiving unit 6 receives the transmission data from the controller 7 as described above, and outputs the transmission data to the CPU 30 via the controller I/F 36. The video I/F 37 is connected to the monitor 2. The external memory I/F 38 is connected to the external memory card 5, and is accessible to the backup memory or the like included in the external memory card 5. The audio I/F 39 is connected to the speaker 2a built in the monitor 2, such that the sound data read by the DSP 34 from the ARAM 35 or sound data directly output from the disc drive 40 is output through the speaker 2a. The disc I/F 41 is connected to the disc drive 40. The disc drive 40 reads data stored at a predetermined reading position of the optical disc 4 and outputs the data to a bus of the game apparatus 3 or the audio I/F 39.

With reference to FIG. 3, the controller 7 will be described. FIG. 3 is an isometric view showing an external appearance of the controller 7.

As shown in FIG. 3, the controller 7 includes the core unit 70 and the sub unit 76 which are connected to each other via the connection cable 79. The core unit 70 has a housing 71, which includes a plurality of operation sections 72. The sub unit 76 has a housing 77, which includes a plurality of operation sections 78. The core unit 70 and the sub unit 76 are connected to each other via the connection cable 79.

One of two ends of the connection cable 79 is provided with a connector 791 which is detachable with a connector 73 (see FIG. 4) of the core unit 70. The other end of the connection cable 79 is fixedly connected with the sub unit 76. The connector 791 of the connection cable 79 is engaged with the connector 73 provided on a bottom surface of the core unit 70, and thus the core unit 70 and the sub unit 76 are connected to each other via the connection cable 79.

The housing 71 of the core unit 70 is formed by plastic molding or the like. The housing 71 has a generally parallelepiped shape, and the overall size of the housing 71 is small enough to be held by one hand of an adult or even a child.

At a center of a front surface of the housing 71, a cross key 72a is provided as direction instruction means. The cross key 72a is a cross-shaped four-direction push switch. The cross key 72a includes projecting operation portions corresponding to the four directions (top, bottom, right and left) and arranged at an interval of 90 degrees. The player selects one of the top, bottom, right and left directions by pressing one of the operation portions of the cross key 72a. Through an operation on the cross key 72a, the player can, for example, instruct a direction in which a player character or the like appearing in a virtual game world, or a cursor, is to move. Instead of the cross key 72a, a joystick capable of instructing any direction in 360 degrees may be provided.

Downward with respect to the cross key 72a on the front surface of the housing 71, a plurality of operation buttons 72b through 72g are provided. The operation buttons 72b through 72g are each an operation section for outputting a respective operation signal when the player presses a head thereof. For example, the operation buttons 72b through 72d are assigned functions of a first button, a second button, and an A button. The operation buttons 72e through 72g are assigned functions of a minus button, a home button and a plus button, for example. The operation buttons 72b through 72g are assigned various functions in accordance with the game program executed by the game apparatus 3.

Upward with respect to the cross key 72a on the front surface of the housing 71, an operation button 72h is provided. The operation button 72h is a power switch for remote-controlling the power of the game apparatus 3 to be on or off.

Downward with respect to the operation button 72c on the front surface of the housing 71, a plurality of LEDs 702 are provided. The controller 7 is assigned a controller type (number) so as to be distinguishable from the other controllers 7. For example, the LEDs 702 are used for informing the player of the controller type which is currently set to the controller 7 that he/she is using. Specifically, when the core unit 70 transmits transmission data to the receiving unit 6, one of the plurality of LEDs corresponding to the controller type is lit up.

On the front surface of the housing 71, sound holes for outputting a sound from a speaker 706 (see FIG. 4) described later are provided between the operation button 72b and the operation buttons 72e through 72g.

On a rear surface of the housing 71, an operation button (not shown) is provided at a position at which an index finger or middle finger of the player is located when the player holds the core unit 70. The operation button acts as, for example, a B button, and is used as, for example, a trigger switch in a shooting game.

On a top surface of the housing 71, an imaging element 743 (see FIG. 6) included in the imaging information calculation section 74 (see FIG. 6) is provided. The imaging information calculation section 74 is a system for analyzing image data which is taken by the core unit 70, and detecting the position of the center of gravity, the size and the like of an area having a high brightness in the image data. The imaging information calculation section 74 has, for example, a maximum sampling rate of about 200 frames/sec., and therefore can trace and analyze even a relatively fast motion of the core unit 70. The structure of the imaging information calculation section 74 will be described later in detail. On a bottom surface of the housing 71, the connector 73 (see FIG. 4) is provided. The connector 73 is, for example, a 32-pin edge connector, and is used for engaging and connecting the connector 791 of the connection cable 79.

Now, with reference to FIG. 4, an internal structure of the core unit 70 will be described. FIG. 4 is an isometric view of the core unit 70, illustrating a state where an upper housing (a part of the housing 71) of the core unit 70 is removed.

As shown in FIG. 4, a substrate 700 is fixed inside the housing 71. On a front main surface of the substrate 700, the operation buttons 72a through 72h, an acceleration sensor 701, the LEDs 702, the speaker 706, an antenna 754 and the like are provided. These elements are connected to a microcomputer 751 (see FIG. 6) or the like via lines (not shown) formed on the substrate 700 or the like. The acceleration sensor 701 is provided in a peripheral area of the substrate 700, not in a central area. Owing to such an arrangement, as the core unit 70 rotates around a longitudinal direction thereof as an axis, the acceleration sensor 701 detects an acceleration including a centrifugal force component in addition to a component of direction change of gravitational acceleration. As a result, the rotation of the core unit 70 can be determined at a high sensitivity based on the detected acceleration data through a predetermined calculation.

On a rear main surface of the substrate 700, the imaging information calculation section 74 and the connector 73 are provided.

With reference to FIG. 3 and FIG. 5, the sub unit 76 will be described.

FIG. 5 is an isometric view of the sub unit 76, illustrating a state where an upper housing (a part of the housing 77) of the sub unit 76 is removed.

As shown in FIG. 3, the housing 77 of the sub unit 76 is formed by plastic molding or the like. The overall size of the housing 77 is small enough to be held by one hand of an adult or even a child.

On a front surface of the housing 77, a stick 78a is provided as direction instruction means. The stick 78a is an inclinable operation section protruding from the front surface of the housing 77. When being inclined, the stick 78a outputs a signal in accordance with the inclination direction. The player can instruct, for example, any direction or position by directing the tip of the stick 78a in any direction in 360 degrees. Thus, the player can instruct a direction in which a player character or the like appearing in the virtual game world, or a cursor, is to move. Instead of the stick 78a, a cross key may be provided.

On a top surface of the sub unit 76, a plurality of operation buttons 78d and 78e (see FIG. 5) are provided. The operation buttons 78d and 78e are each an operation section for outputting a respective operation signal when the player presses a head thereof. For example, the operation buttons 78d and 78e are assigned functions of an X button and a Y button. The operation buttons 78d and 78e are assigned various functions in accordance with the game program executed by the game apparatus 3.

As shown in FIG. 5, a substrate is fixed inside the housing 77. On the front main surface of the substrate, the stick 78a, an acceleration sensor 761 and the like are provided. These elements are connected to the connection cable 79 via lines (not shown) formed on the substrate or the like.

With reference to FIG. 6, an internal structure of the controller 7 will be described. FIG. 6 is a block diagram showing a structure of the controller 7.

As shown in FIG. 6, the core unit 70 includes the communication section 75 therein in addition to the operation sections 72, the imaging information calculation section 74, the acceleration sensor 701, the speaker 706, the sound IC 707 and the amplifier 708 described above. The sub unit 76 includes the operation sections 78 and the acceleration sensor 761 described above, and is connected to the microcomputer 751 via the connection cable 79, the connector 791 and the connector 73.

The imaging information calculation section 74 includes an infrared filter 741, a lens 742, the imaging element 743 and an image processing circuit 744. The infrared filter 741 allows only infrared light to pass therethrough, among light incident on the top surface of the core unit 70. The lens 742 collects the infrared light which has passed through the infrared filter 741 and outputs the infrared light to the imaging element 743. The imaging element 743 is a solid-state imaging device such as, for example, a CMOS sensor or a CCD, and takes an image of the infrared light collected by the lens 742. Accordingly, the imaging element 743 takes an image of only the infrared light which has passed through the infrared filter 741 for generating image data. The image data generated by the imaging element 743 is processed by the image processing circuit 744. Specifically, the image processing circuit 744 processes the image data obtained from the imaging element 743, senses an area thereof having a high brightness, and outputs the processing result data representing the detected position coordinate and size of the area to the communication section 75. The imaging information calculation section 74 is fixed to the housing 71 of the core unit 70. The imaging direction of the imaging information calculation section 74 can be changed by changing the direction of the housing 71. The connection cable 79 which connects the housing 71 and the sub unit 76 is bendable. Therefore, even when the direction or position of the sub unit 76 is changed, the imaging direction of the imaging information calculation section 74 is not changed. Based on the processing result data which is output from the imaging information calculation section 74, a signal in accordance with the position or motion of the core unit 70 can be obtained.
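The detection of a high-brightness area and the output of its position coordinate and size can be illustrated with a minimal Python sketch. The nested-list image representation, the threshold value, and the function name `bright_area` are assumptions made only for illustration; the specification does not state how the image processing circuit 744 is implemented, and a real circuit would likely separate the two LED modules into distinct areas rather than treat all bright pixels as one.

```python
from typing import List, Optional, Tuple


def bright_area(
    image: List[List[int]], threshold: int = 200
) -> Optional[Tuple[float, float, int]]:
    """Return (center_x, center_y, size) of all pixels at or above threshold.

    `image` is a row-major grid of brightness values (hypothetical format).
    Returns None when no pixel reaches the threshold (assumed value).
    """
    sum_x = 0
    sum_y = 0
    count = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    # Center of gravity of the bright pixels, plus the pixel count as "size".
    return (sum_x / count, sum_y / count, count)
```

As the core unit is pointed in a different direction, the returned center moves within the image, which is one way the processing result data could reflect the position or motion of the core unit.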

In this example, the core unit 70 includes the acceleration sensor 701. The acceleration sensor 701 included in the core unit 70 is preferably a three-axial (X, Y and Z axes in FIG. 4) acceleration sensor. The acceleration sensor 761 included in the sub unit 76 is preferably a three-axial (X, Y and Z axes in FIG. 5) acceleration sensor. The three-axial acceleration sensors 701 and 761 each detect a linear acceleration in each of three directions, i.e., an X direction (left side surface toward right side surface), a Y direction (top surface toward bottom surface), and a Z direction (front surface toward rear surface). In other embodiments, two-axial acceleration detection means for detecting a linear acceleration in each of only the X direction and the Y direction (or directions along another pair of axes) may be used depending on the type of control signals used for game processing. Alternatively, one-axial acceleration detection means for detecting a linear acceleration in only the X direction (or other directions) may be used. For example, such three-axial, two-axial or one-axial acceleration sensors 701 and 761 may be available from Analog Devices, Inc. or STMicroelectronics N.V. The acceleration sensors 701 and 761 may be of a static capacitance coupling system based on the technology of MEMS (Micro Electro Mechanical Systems) provided by silicon precision processing. Alternatively, the three-axial, two-axial or one-axial acceleration sensors 701 and 761 may be based on an existing acceleration detection technology (e.g., piezoelectric system or piezoelectric resistance system) or any other appropriate technology developed in the future.

As apparent to those skilled in the art, the acceleration detection means used for the acceleration sensors 701 and 761 can detect only an acceleration along a straight line corresponding to each of the axes of the acceleration sensors 701 and 761 (linear acceleration sensors). Namely, a direct output from each of the acceleration sensors 701 and 761 is a signal indicating the linear acceleration (static or dynamic) along each of the axes thereof. Hence, the acceleration sensors 701 and 761 cannot directly detect a physical property such as, for example, a motion along a nonlinear path (e.g., an arc path), rotation, revolution, angular displacement, inclination, position or posture.

Nonetheless, those skilled in the art would easily understand from the description of this specification that further information on the core unit 70 or the sub unit 76 can be estimated or calculated by executing additional processing on an acceleration signal which is output from the acceleration sensor 701 or 761. For example, when a static acceleration (gravitational acceleration) is detected, an inclination of the object (core unit 70 or the sub unit 76) with respect to the gravitational vector can be estimated by performing calculations based on the inclination angle and the detected acceleration, using the output from the acceleration sensor 701 or 761. By combining the acceleration sensor 701 or 761 with the microcomputer 751 (or another processor) in this manner, the inclination, posture or position of the core unit 70 or the sub unit 76 can be determined. Similarly, when the core unit 70 including the acceleration sensor 701 or the sub unit 76 including the acceleration sensor 761 is dynamically accelerated by a hand of the player or the like as described herein, various motions and/or positions of the core unit 70 or the sub unit 76 can be calculated or estimated by processing an acceleration signal generated by the acceleration sensor 701 or 761. In other embodiments, the acceleration sensor 701 or 761 may include a built-in signal processing device, or another type of dedicated processing device, for executing desired processing on an acceleration signal which is output from the built-in acceleration detection means, before the signal is output to the microcomputer 751. For example, when the acceleration sensor 701 or 761 is for detecting a static acceleration (e.g., a gravitational acceleration), the built-in or dedicated processing device may convert the detected acceleration signal to a corresponding inclination angle. The data indicating the acceleration detected by the acceleration sensor 701 or 761 is output to the communication section 75.
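One possible way to convert a static acceleration into an inclination angle is sketched below in Python. It assumes, purely for illustration, that the unit is nearly at rest, that readings are expressed in units of g, that the sensor reports gravity as +1 g along its Y axis in the reference posture, and that tilting the unit shifts part of that reading onto the Z axis. The function names, the sign convention and the tolerance value are hypothetical and are not taken from the specification.

```python
import math


def is_nearly_static(ax: float, ay: float, az: float, tolerance: float = 0.15) -> bool:
    """True when the measured magnitude is close to 1 g (tolerance is an assumed value)."""
    magnitude = math.sqrt(ax * ax + ay * ay + az * az)
    return abs(magnitude - 1.0) <= tolerance


def inclination_deg(ay: float, az: float) -> float:
    """Tilt about the X axis in degrees; 0 when gravity lies wholly along +Y.

    Uses atan2 so the result stays well defined as ay approaches zero.
    """
    return math.degrees(math.atan2(az, ay))
```

The static check matters because a swing of the hand adds dynamic acceleration, in which case the angle computed this way no longer reflects the inclination alone.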

The communication section 75 includes the microcomputer 751, a memory 752, a wireless module 753, and the antenna 754. The microcomputer 751 controls the wireless module 753 for wirelessly transmitting the transmission data, while using the memory 752 as a storage area during processing. The microcomputer 751 also controls the operation of the sound IC 707 in accordance with the data transmitted from the game apparatus 3 to the wireless module 753 via the antenna 754. The sound IC 707 processes sound data or the like transmitted from the game apparatus 3 via the communication section 75.

Data from the core unit 70 including an operation signal from the operation section 72 (core key data), acceleration signals from the acceleration sensor 701 (core acceleration data), and the processing result data from the imaging information calculation section 74 are output to the microcomputer 751. Data transmitted from the sub unit 76 via the connection cable 79, including an operation signal from the operation section 78 (sub key data) and acceleration signals from the acceleration sensor 761 (sub acceleration data) are output to the microcomputer 751. The microcomputer 751 temporarily stores the input data (core key data, sub key data, core acceleration data, sub acceleration data, and the processing result data) in the memory 752 as transmission data which is to be transmitted to the receiving unit 6. The wireless transmission from the communication section 75 to the receiving unit 6 is performed at a predetermined time interval. Since game processing is generally performed at a cycle of 1/60 sec., the data collection and the wireless transmission need to be performed at a cycle of a shorter time period. Specifically, the game processing unit is 16.7 ms (1/60 sec.), and the transmission interval of the communication section 75 structured using the Bluetooth (registered trademark) technology is, for example, 5 ms. At the transmission timing to the receiving unit 6, the microcomputer 751 outputs the transmission data stored on the memory 752 as a series of operation information to the wireless module 753. Based on the Bluetooth (registered trademark) technology or the like, the wireless module 753 modulates a carrier wave of a predetermined frequency with the operation information and radiates the resultant very weak radio signal from the antenna 754. Namely, the core key data from the operation sections 72 in the core unit 70, the sub key data from the operation sections 78 in the sub unit 76, the core acceleration data from the acceleration sensor 701 in the core unit 70, the sub acceleration data from the acceleration sensor 761 in the sub unit 76, and the processing result data from the imaging information calculation section 74 are converted into a very weak radio signal by the wireless module 753 and radiated from the core unit 70. The receiving unit 6 of the game apparatus 3 receives the very weak radio signal, and the game apparatus 3 demodulates or decodes the very weak radio signal to obtain the series of operation information (the core key data, the sub key data, the core acceleration data, the sub acceleration data, and the processing result data). Based on the obtained operation information and the game program, the CPU 30 of the game apparatus 3 performs the game processing. In the case where the communication section 75 is structured using the Bluetooth (registered trademark) technology or the like, the communication section 75 can have a function of receiving transmission data which is wirelessly transmitted from other devices.
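The timing relationship and the grouping of the five kinds of data into one series of operation information can be sketched in Python as follows. The arithmetic uses only the 1/60 sec. and 5 ms figures given above. The byte layout, field widths, and function names are hypothetical, chosen only to show how such data might be bundled and recovered; the specification does not define a packet format.

```python
import struct

GAME_FRAME_MS = 1000 / 60        # game processing cycle, about 16.7 ms
TRANSMIT_INTERVAL_MS = 5         # example transmission interval
PACKETS_PER_FRAME = GAME_FRAME_MS / TRANSMIT_INTERVAL_MS  # about 3.3

# Hypothetical little-endian layout (22 bytes):
#   uint16 core keys, uint16 sub keys,
#   3 x int16 core acceleration, 3 x int16 sub acceleration,
#   uint16 x, uint16 y, uint16 size of the high-brightness area.
PACKET_FORMAT = "<HH3h3hHHH"


def pack_operation_info(core_keys, sub_keys, core_accel, sub_accel, bright_area):
    """Bundle the five kinds of data into one block of bytes."""
    return struct.pack(
        PACKET_FORMAT, core_keys, sub_keys, *core_accel, *sub_accel, *bright_area
    )


def unpack_operation_info(data):
    """Recover the fields on the receiving side."""
    fields = struct.unpack(PACKET_FORMAT, data)
    return {
        "core_keys": fields[0],
        "sub_keys": fields[1],
        "core_accel": fields[2:5],
        "sub_accel": fields[5:8],
        "bright_area": fields[8:11],
    }
```

With these figures, roughly three transmissions fall within each game processing cycle, so the game processing can always work from data collected within the current cycle.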

As shown in FIG. 7, in order to play the game using the controller 7 of the game system 1, the player holds the core unit 70 with one hand (for example, right hand) and holds the sub unit 76 with the other hand (for example, left hand).

As described above, the inclination, posture, position or motion (movement or swing) of the core unit 70 can be determined using the output from the acceleration sensor 701 of the core unit 70 (core acceleration data). More specifically, when the player moves his/her hand holding the core unit 70, for example, up, down, right or left, the core unit 70 acts as operation input means for making an input in accordance with the motion or direction of the player's hand. Also as described above, the inclination, posture, position or motion (movement or swing) of the sub unit 76 can be determined using the output from the acceleration sensor 761 of the sub unit 76 (sub acceleration data). More specifically, when the player moves his/her hand holding the sub unit 76, for example, up, down, right or left, the sub unit 76 acts as operation input means for making an input in accordance with the motion or direction of the player's hand. Owing to this arrangement, the player holding different units with his/her right hand and left hand can make inputs by moving both of his/her hands. The core unit 70 and the sub unit 76, which are obtained by dividing a conventional game controller, allow the player to move both of his/her hands freely and to make new operations which are not possible with the conventional game controller. Since the degree of freedom of operations which can be made on the controller 7 is also significantly improved, realistic game operations can be realized.

In the above example, the controller 7 and the game apparatus 3 are connected with each other by wireless communication. Alternatively, the controller 7 and the game apparatus 3 may be electrically connected with each other via a cable. In this case, a cable connected to the core unit 70 is connected to a connection terminal of the game apparatus 3.

In the above example, the communication section 75 is provided in the core unit 70, but not in the sub unit 76 included in the controller 7. Alternatively, the sub unit 76 may include a communication section for transmitting transmission data to the receiving unit 6 wirelessly or in a wired manner. Still alternatively, the core unit 70 and the sub unit 76 may both include a communication section. For example, the communication section included in each of the core unit 70 and the sub unit 76 may wirelessly transmit transmission data to the receiving unit 6. The communication section in the sub unit 76 may wirelessly transmit transmission data to the core unit 70, and upon receiving the transmission data, the communication section 75 in the core unit 70 may wirelessly transmit transmission data of the core unit 70 and the transmission data from the sub unit 76 to the receiving unit 6. In these cases, the connection cable 79 is not necessary for electrically connecting the core unit 70 and the sub unit 76 with each other.

In the above example, the receiving unit 6 is connected to the connection terminal of the game apparatus 3 as receiving means for receiving transmission data wirelessly transmitted from the controller 7. The receiving means may be a receiving module provided in the game apparatus 3. In this case, the transmission data received by the receiving module is output to the CPU 30 via a predetermined bus.

Hereinafter, various embodiments which are realized by the game system 1 will be described. For easier understanding, the core unit 70 will be referred to as a “first unit”, the sub unit 76 will be referred to as a “second unit”, the acceleration sensor 701 included in the core unit 70 will be referred to as a “first acceleration sensor”, and the acceleration sensor 761 included in the sub unit 76 will be referred to as a “second acceleration sensor”.

(First Embodiment)

FIG. 8 shows an exemplary image displayed in a first embodiment. On the screen of the monitor 2, a three-dimensional virtual game world including a character operated by the player (game object) is displayed. In this embodiment, the character is riding on a battle tank. The player can control the motion of the character by inclining the first unit or the second unit (i.e., rotating the first unit or the second unit around a horizontal axis thereof). The following description will be given with the premise that a positive X axis direction of the acceleration sensor is a horizontal direction and the rightward direction with respect to the player, a positive Y axis direction is the vertical downward direction, and a positive Z axis direction is a horizontal direction and the forward direction with respect to the player. The relationship between the axial directions regarding the acceleration sensor and the directions in the real world is not limited to such a premise.

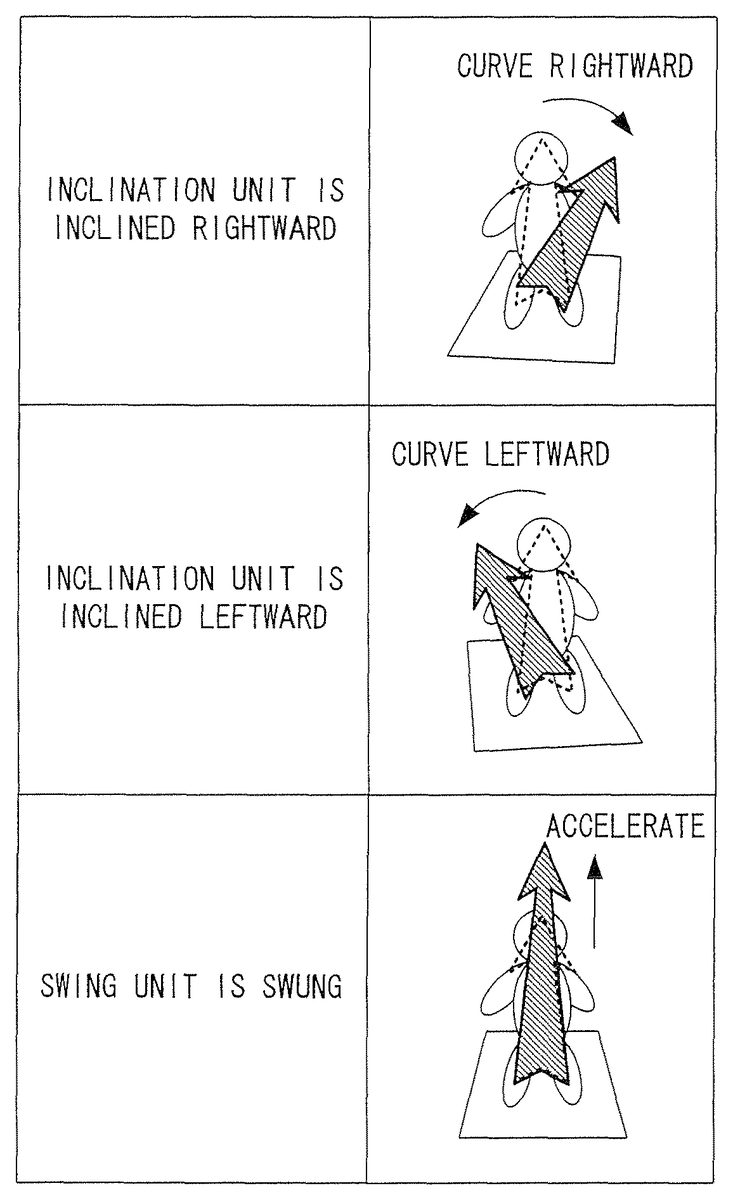

FIG. 9 shows an exemplary correspondence between the operation performed by the player and the motion of the character in the first embodiment. When the first unit is inclined farther from the player (see FIG. 16) than the second unit, the character curves leftward; whereas when the second unit is inclined farther from the player than the first unit, the character curves rightward. When the first unit and the second unit are inclined farther from the player on average (i.e., the average of the inclinations of the first unit and the second unit is farther from the player) with respect to the reference posture (for example, the posture vertical to the ground), the character advances; whereas when the first unit and the second unit are inclined closer to the player (see FIG. 16) on average with respect to the reference posture, the character retracts. In FIG. 9, the direction from the eye of the observer of the drawing toward the sheet of the paper is the advancing direction (forward direction) of the character, and the opposite direction is the retracting direction (rearward direction) of the character.

FIG. 10 shows an exemplary memory map of the main memory 33 in the first embodiment. The main memory 33 stores a game program 100, game image data 102, character control data 104, a first inclination value 106, a second inclination value 108, a first reference value 110, and a second reference value 112. The game program 100 and the game image data 102 are stored on the optical disc 4, and are copied onto the main memory 33 for use when necessary. The first reference value 110 and the second reference value 112 may also be stored on the optical disc 4, and may be copied onto the main memory 33 for use when necessary.

The game image data 102 is data for generating a game image (polygon data, texture data, etc.) and includes data for generating a character image and data for generating a background image.

The character control data 104 is data for controlling a character, and includes current position data representing the current position of the character in the game world (three-dimensional virtual space), velocity data representing the magnitude of the moving speed of the character, and a directional vector representing the advancing direction of the character. The current position data is represented by a three-dimensional coordinate value, the velocity data is represented by a scalar value, and the directional vector is represented by a three-dimensional unit vector. Instead of the velocity data, a velocity vector may be used.

The first inclination value 106 represents an inclination of the first unit detected based on an output value from the first acceleration sensor. The second inclination value 108 represents an inclination of the second unit detected based on an output value from the second acceleration sensor. The first reference value 110 is a reference value for the inclination of the first unit. The second reference value 112 is a reference value for the inclination of the second unit.

With reference to the flowcharts in FIG. 11 through FIG. 14, a flow of processing executed by the CPU 30 based on the game program 100 will be described.

Referring to FIG. 11, when the execution of the game program 100 is started, the CPU 30 first executes neutral position setting processing in step S100. The neutral position setting processing is for determining the reference value for the inclination of the first unit (first reference value 110) and the reference value for the inclination of the second unit (second reference value 112). Hereinafter, with reference to FIG. 12, the neutral position setting processing will be described in detail.

Referring to FIG. 12, in step S134, the CPU 30 determines whether or not the player has pressed a setting button (a button for allowing the player to set the neutral position) based on the operation information transmitted from the controller 7. The setting button may be provided only in the first unit, only in the second unit, or in both the first unit and the second unit. The neutral position may be set by the player speaking into a microphone instead of pressing the setting button. In this embodiment, the setting button is provided in the first unit. When it is detected that the player has pressed the setting button, the processing is advanced to step S136. When it is not detected that the player has pressed the setting button, the processing in step S134 is repeated (i.e., the CPU 30 waits until the player presses the setting button).

In step S136, the CPU 30 waits for a certain time period (for example, 10 frames). The reason for this is that immediately after the player releases his/her fingers from the setting button, the operation unit including the setting button (in this embodiment, the first unit) may possibly swing, in which case the first reference value is not correctly set.

In step S138, an output value (output vector) from the first acceleration sensor is obtained. In this embodiment, the output value in the X axis direction from the first acceleration sensor is Ax1, the output value in the Y axis direction from the first acceleration sensor is Ay1, and the output value in the Z axis direction from the first acceleration sensor is Az1. The output value to be used may alternatively be chosen as follows. Output values from the acceleration sensor for a predetermined time period (e.g., about 3 seconds) are continuously stored. When the player presses the setting button, the output value which was output a predetermined time period before may be used, or an average of the output values for a certain time period before or after the player presses the setting button may be used (this is also applicable to the second acceleration sensor).

In step S140, it is determined whether or not the magnitude of the output vector (Ax1, Ay1, Az1) from the first acceleration sensor obtained in step S138 (i.e., √(Ax1² + Ay1² + Az1²)) is within the range of 0.8 to 1.2, namely, whether or not the first unit is in a still state. The output vector from each of the first acceleration sensor and the second acceleration sensor is set to have a magnitude of 1.0 in a still state (i.e., in a state of being influenced only by the gravitational acceleration). Therefore, when the magnitude of the output vector from the first acceleration sensor is 1.0 or close thereto, the first unit can be determined to be substantially still. By contrast, when the magnitude of the output vector from the first acceleration sensor is far from 1.0, the first unit can be determined to be moving. When the magnitude of the output vector from the first acceleration sensor is within the range of 0.8 to 1.2, the processing is advanced to step S142. When the magnitude of the output vector from the first acceleration sensor is not within the range of 0.8 to 1.2, the processing is returned to step S134. The reason is that when the first unit is moving, the first reference value cannot be correctly set. The range of 0.8 to 1.2 is exemplary; the determination in step S140 is whether or not the magnitude of the output vector is substantially close to 1.0. In this embodiment, the X direction component of the output from the acceleration sensor (Ax) is not used, so the player basically plays without inclining the controller 7 in the X direction. Therefore, when the X direction component of the output from the first acceleration sensor obtained in step S138 is larger than a certain value, the processing may be returned to step S134 for the reason that the neutral position is not appropriate (this is also applicable to the second acceleration sensor).
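For illustration only, the still-state determination of step S140 may be sketched in Python as follows. The function name `is_still` and its parameter names are hypothetical and do not appear in the embodiment; the bounds 0.8 and 1.2 are the exemplary range given above, and the output vector is assumed to be normalized so that gravity alone yields a magnitude of 1.0.

```python
import math


def is_still(ax, ay, az, low=0.8, high=1.2):
    """Return True when the output vector magnitude is close to 1.0.

    The default bounds are the exemplary range from the description;
    the function name and signature are hypothetical.
    """
    magnitude = math.sqrt(ax * ax + ay * ay + az * az)
    return low <= magnitude <= high
```

The same check applies unchanged to the output vector (Ax2, Ay2, Az2) from the second acceleration sensor.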

In step S142, an output value (output vector) from the second acceleration sensor is obtained. In this embodiment, the output value in the X axis direction from the second acceleration sensor is Ax2, the output value in the Y axis direction from the second acceleration sensor is Ay2, and the output value in the Z axis direction from the second acceleration sensor is Az2.

In step S144, it is determined whether or not the magnitude of the output vector (Ax2, Ay2, Az2) from the second acceleration sensor obtained in step S142 (i.e., √(Ax2² + Ay2² + Az2²)) is within the range of 0.8 to 1.2, i.e., whether or not the second unit is in a still state. When the magnitude of the output vector from the second acceleration sensor is within the range of 0.8 to 1.2, the processing is advanced to step S146. When the magnitude of the output vector from the second acceleration sensor is not within the range of 0.8 to 1.2, the processing is returned to step S134. The reason is that when the second unit is moving, the second reference value cannot be correctly set.

In step S146, arctan (Az1/Ay1), which represents the inclination of the first unit around the X axis (horizontal axis) (such an inclination is represented by angle θ in FIG. 16), is calculated, and the calculated value is set as the first reference value 110. Similarly, arctan (Az2/Ay2), which represents the inclination of the second unit around the X axis (such an inclination is represented by angle θ in FIG. 16), is calculated, and the calculated value is set as the second reference value 112. Alternatively, the vector (Ay1, Az1) itself may be set as a reference value instead of the angle.
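As a minimal sketch, the inclination around the X axis may be computed in Python as shown below. The function name `inclination` is hypothetical. The use of `math.atan2` is an assumption made here rather than something stated in the embodiment: for Ay > 0 it gives the same value as arctan (Az/Ay), and it avoids a division by zero when Ay is 0.

```python
import math


def inclination(ay, az):
    """Inclination around the X axis, in radians.

    Equals arctan(az / ay) whenever ay > 0. atan2 is an assumption of
    this sketch, chosen so that ay == 0 does not raise an error.
    """
    return math.atan2(az, ay)
```

With this sketch, a unit held in the vertical reference posture (Ay = 1, Az = 0) yields an inclination of 0.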

In this embodiment, the first reference value 110 is set only based on the output value from the first acceleration sensor obtained in step S138. Alternatively, output values from the first acceleration sensor may be obtained at a plurality of different times, and the first reference value 110 may be set based on an average thereof. Owing to such an arrangement, even if the first unit is swinging when the neutral position is set, the influence of such a swing can be suppressed. This is also applicable to the second reference value 112.
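The averaging alternative may be sketched as follows. The function name `average_vector` and the representation of each sample as an (Ax, Ay, Az) tuple are assumptions for illustration; the description does not specify how many samples are taken or how they are stored.

```python
def average_vector(samples):
    """Component-wise average of (ax, ay, az) samples taken at different times.

    The name and the tuple layout are hypothetical.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    count = len(samples)
    return tuple(sum(sample[i] for sample in samples) / count for i in range(3))
```

The averaged vector can then be used in place of the single output vector when the reference value is calculated.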

In step S148, it is determined whether or not the difference between the first reference value 110 and the second reference value 112 is within a predetermined value. When the difference between the first reference value 110 and the second reference value 112 is within the predetermined value, the neutral position setting processing is terminated, and the processing is returned to step S102 in FIG. 11. When the difference between the first reference value 110 and the second reference value 112 exceeds the predetermined value, the processing is returned to step S134 to re-set the first reference value 110 and the second reference value 112.
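The determination in step S148 may be sketched as follows. The function name `accept_references` and the parameter `max_difference` (standing in for the "predetermined value", whose magnitude is not given in the description) are hypothetical.

```python
def accept_references(first_reference, second_reference, max_difference):
    """Return True when the two reference values are close enough to keep.

    A False result corresponds to returning to step S134 so that both
    reference values are set again. max_difference is a hypothetical
    stand-in for the predetermined value.
    """
    return abs(first_reference - second_reference) <= max_difference
```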

The reason why the first reference value 110 and the second reference value 112 are re-set when the difference therebetween exceeds the predetermined value in step S148 is that when the first reference value 110 and the second reference value 112 having such values are used for the game, a high operability is not expected to be obtained. More specifically, in this embodiment, as shown in FIG. 9, when the first unit is inclined farther from the player than the second unit, the character curves leftward; whereas when the second unit is inclined farther from the player than the first unit, the character curves rightward. When the first reference value 110 and the second reference value 112 are largely different from each other, even when the first unit and the second unit are inclined parallel to each other, the character may curve leftward or rightward. This feels unnatural to the player.

In the case of a game in which a large difference between the first reference value 110 and the second reference value 112 does not present any serious problem, the determination in step S148 may be omitted.

In this embodiment, when the player presses the setting button provided in the first unit, the first reference value 110 and the second reference value 112 are both set. The present invention is not limited to this. For example, the following arrangement is possible in the case where the first unit and the second unit each have a setting button. When the player presses the setting button provided in the first unit, the first reference value 110 is set; and then when the player presses the setting button provided in the second unit, the second reference value 112 is set. In this case, however, the first reference value 110 and the second reference value 112 are likely to be largely different from each other. Therefore, it is preferable that the first reference value 110 and the second reference value 112 are set substantially at the same time as in this embodiment.

In this embodiment, the first reference value 110 and the second reference value 112 are separately set. In order to avoid the unnaturalness described above, a common value may be set as the first reference value 110 and the second reference value 112. For example, an average of arctan (Az1/Ay1) and arctan (Az2/Ay2) may be commonly set as the first reference value 110 and the second reference value 112 in step S146. Alternatively, either arctan (Az1/Ay1) or arctan (Az2/Ay2) may be calculated, and such a calculation result may be commonly set as the first reference value 110 and the second reference value 112. In this case, in order to avoid the influence of the swing of the operation unit when the player presses the setting button, it is preferable to commonly set the first reference value 110 and the second reference value 112 based on the output value from the acceleration sensor in the operation unit which does not include the setting button pressed by the player.

Returning to FIG. 11, when the neutral position setting processing is terminated, in step S102, the CPU 30 initializes various data used for the game processing (character control data 104, first inclination value 106, etc.), and generates and displays a game image including the character on the screen of the monitor 2.

In step S104, an inclination of the first unit is detected. Hereinafter, the detection of the inclination will be described in detail with reference to FIG. 14.

Referring to FIG. 14, in step S154, an output value (output vector) from the acceleration sensor (here, the first acceleration sensor) is obtained. In this embodiment, the X direction component of the output value from the acceleration sensor is Ax1, the Y direction component is Ay1, and the Z direction component is Az1.

In step S156, it is determined whether or not the magnitude of the output vector (Ax1, Ay1, Az1) from the first acceleration sensor obtained in step S154 (i.e., √(Ax1² + Ay1² + Az1²)) is within the range of 0.8 to 1.2, namely, whether or not the first unit is in a still state. When the magnitude of the output vector from the first acceleration sensor is within the range of 0.8 to 1.2, the processing is advanced to step S158. When the magnitude of the output vector from the first acceleration sensor is not within the range of 0.8 to 1.2, the processing is advanced to step S160.

In step S158, arctan (Az1/Ay1), which represents the inclination of the first unit around the X axis (such an inclination is represented by angle θ in FIG. 16), is calculated, and the calculated value is returned as a return value for the detection of the inclination. The return value is stored on the main memory 33 as the first inclination value 106. Then, the processing is advanced to step S106 in FIG. 11.

In step S160, an error is returned as the detection result of the inclination for the reason that when the first unit is moving, the inclination of the first unit cannot be correctly detected. Then, the detection of the inclination is terminated. The processing is advanced to step S106 in FIG. 11.
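Steps S154 through S160 may be summarized by the following sketch. The function name `detect_inclination` is hypothetical, the error result is represented here by `None` as an assumption, and `math.atan2` is used in place of arctan (Az/Ay) as an assumption to avoid dividing by zero (the two agree for Ay > 0).

```python
import math


def detect_inclination(ax, ay, az, low=0.8, high=1.2):
    """Return the inclination around the X axis, or None as the error result.

    None corresponds to step S160 (the unit is judged to be moving).
    Names and the None convention are hypothetical.
    """
    magnitude = math.sqrt(ax * ax + ay * ay + az * az)
    if not (low <= magnitude <= high):
        return None  # step S160: inclination cannot be detected correctly
    return math.atan2(az, ay)  # step S158
```

A caller corresponding to steps S106 and S110 would skip the character control for the current frame whenever `None` is returned.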

In step S106, it is determined whether or not the detection result of the inclination in step S104 is an error. When the result is an error, the processing is advanced to step S150 in FIG. 13. When the result is not an error, the processing is advanced to step S108.

In step S108, the detection of the inclination is performed regarding the second unit similarly to step S104. Specifically, when the magnitude of the output vector from the second acceleration sensor (Ax2, Ay2, Az2) is within the range of 0.8 to 1.2, the value of arctan (Az2/Ay2), which represents the inclination of the second unit around the X axis (such an inclination is represented by angle θ in FIG. 16), is stored on the main memory 33 as the second inclination value 108.

In step S110, it is determined whether or not the detection result of the inclination in step S108 is an error. When the result is an error, the processing is advanced to step S150 in FIG. 13. When the result is not an error, the processing is advanced to step S112.

In step S112, the first inclination value 106 is corrected based on the first reference value 110. Specifically, the difference between the first inclination value 106 and the first reference value 110 is calculated, and the calculation result is stored on the main memory 33 to update the first inclination value 106.

In step S114, the second inclination value 108 is corrected based on the second reference value 112. Specifically, the difference between the second inclination value 108 and the second reference value 112 is calculated, and the calculation result is stored on the main memory 33 to update the second inclination value 108.

In step S116, it is determined whether or not the value obtained by subtracting the second inclination value 108 from the first inclination value 106 is larger than S1 (positive threshold value). When the value obtained by subtracting the second inclination value 108 from the first inclination value 106 is larger than S1 (i.e., when the first unit is inclined farther from the player than the second unit), the processing is advanced to step S118. Otherwise, the processing is advanced to step S120.

In step S118, the directional vector is changed so as to cause the character to curve leftward. The directional vector can be changed by various methods. In this embodiment, for example, the method shown in FIG. 15 is used. A leftward curve vector, which is perpendicular both to the normal vector to the ground and to the current directional vector at the current position of the character and has a predetermined magnitude, is obtained. The leftward curve vector and the current directional vector are synthesized to obtain a synthesized vector. A unit vector having the same direction as the synthesized vector is determined as a new directional vector.
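The vector synthesis described for step S118 may be sketched as follows. The function names `cross` and `curve_left` and the parameter `curve_magnitude` are hypothetical. The sketch assumes a right-handed coordinate system, in which the cross product of the ground normal and the directional vector points to the character's left; the description does not state the handedness, and in a left-handed system the operand order would be swapped.

```python
import math


def cross(a, b):
    """Cross product of two 3-component vectors."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def curve_left(direction, normal, curve_magnitude):
    """Return a new unit directional vector turned slightly leftward.

    direction is the current unit directional vector, normal is the
    ground normal at the character's position. Assumes a right-handed
    coordinate system (an assumption of this sketch).
    """
    side = cross(normal, direction)
    side_length = math.sqrt(sum(c * c for c in side))
    if side_length == 0.0:
        return direction  # direction is parallel to the normal; leave it unchanged
    left = tuple(curve_magnitude * c / side_length for c in side)
    combined = tuple(d + l for d, l in zip(direction, left))
    length = math.sqrt(sum(c * c for c in combined))
    return tuple(c / length for c in combined)
```

A rightward curve (step S122) can be obtained in the same way by passing a negative `curve_magnitude`.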

In step S120, it is determined whether or not the value obtained by subtracting the second inclination value 108 from the first inclination value 106 is smaller than −S1. When the value obtained by subtracting the second inclination value 108 from the first inclination value 106 is smaller than −S1 (i.e., when the second unit is inclined farther from the player than the first unit), the processing is advanced to step S122. Otherwise, the processing is advanced to step S124.

In step S122, the directional vector is changed so as to cause the character to curve rightward.

In step S124, it is determined whether or not the average value of the first inclination value 106 and the second inclination value 108 is larger than S2 (positive threshold value). When the average value of the first inclination value 106 and the second inclination value 108 is larger than S2 (i.e., when the first unit and the second unit are inclined farther from the player on average with respect to the reference posture), the processing is advanced to step S126. Otherwise, the processing is advanced to step S128.

In step S126, positive velocity data is set in accordance with the average value of the first inclination value 106 and the second inclination value 108. For example, positive velocity data having an absolute value in proportion to the average value is set. Then, the processing is advanced to step S150 in FIG. 13.

In step S128, it is determined whether or not the average value of the first inclination value 106 and the second inclination value 108 is smaller than −S2. When the average value of the first inclination value 106 and the second inclination value 108 is smaller than −S2 (i.e., when the first unit and the second unit are inclined closer to the player on average with respect to the reference posture), the processing is advanced to step S130. Otherwise, the processing is advanced to step S132.

In step S130, negative velocity data is set in accordance with the average value of the first inclination value 106 and the second inclination value 108. For example, negative velocity data having an absolute value in proportion to the average value is set. Then, the processing is advanced to step S150 in FIG. 13.

In step S132, the velocity data is set to 0, and the processing is advanced to step S150 in FIG. 13.
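Steps S112 through S132 may be condensed into the following sketch. The function name `steer`, the string results `"left"` and `"right"`, and the proportionality constant `gain` are hypothetical. The sketch also assumes that the velocity determination of step S124 onward is performed after a curve has been applied in step S118 or S122, which the text above does not state explicitly.

```python
def steer(first_inclination, second_inclination,
          first_reference, second_reference, s1, s2, gain=1.0):
    """Return (turn, velocity) from the two detected inclinations.

    turn is "left", "right", or None. velocity is proportional to the
    average corrected inclination, or 0.0 inside the dead zone.
    Names, return convention, and gain are hypothetical.
    """
    # Steps S112 and S114: correct each inclination by its reference value.
    first = first_inclination - first_reference
    second = second_inclination - second_reference

    # Steps S116 to S122: compare the inclination difference with S1.
    difference = first - second
    if difference > s1:
        turn = "left"
    elif difference < -s1:
        turn = "right"
    else:
        turn = None

    # Steps S124 to S132: compare the average inclination with S2.
    average = (first + second) / 2.0
    if average > s2 or average < -s2:
        velocity = gain * average  # positive to advance, negative to retract
    else:
        velocity = 0.0
    return turn, velocity
```

The thresholds S1 and S2 act as dead zones, so that small unintended inclinations neither curve nor move the character.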

Referring to FIG. 13, in step S150, the current position data is updated based on the velocity data and the directional vector. As a result, the character in the game world moves by the distance represented by the velocity data in the direction represented by the directional vector.
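The position update of step S150 may be sketched as follows, assuming the velocity data is a scalar distance per frame and the directional vector is a three-dimensional unit vector, as stated for the character control data. The function name `update_position` is hypothetical.

```python
def update_position(position, direction, velocity):
    """Move position by `velocity` along the unit vector `direction`.

    The function name is hypothetical; velocity is treated as the
    distance moved in one frame.
    """
    return tuple(p + velocity * d for p, d in zip(position, direction))
```

Negative velocity data moves the character opposite to the directional vector, which corresponds to the character retracting.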

In step S152, the game image displayed on the monitor 2 is updated based on the current position data, and the processing is returned to step S104 in FIG. 11. The above-described processing is repeated, so that the game image is changed when necessary in accordance with the operation performed by the player.

As described above, according to this embodiment, the player can freely move both of his/her hands. Owing to a high degree of freedom of motion realized by such an arrangement, a dynamic play is made possible. In addition, the character can be controlled by the inclination difference between two operation units. Therefore, the player can play intuitively and thus obtain a high operability.

In this embodiment, three-axial acceleration sensors are used. Even when one-axial acceleration sensors are used, the inclinations of the operation units can be detected (for example, the inclinations of the operation units can be detected by referring to only the output value in the Z axis direction in FIG. 16). Thus, substantially the same effects as those of this embodiment are provided.

In this embodiment, the inclination difference between the first unit and the second unit, and the average inclination value of the first unit and the second unit, are used to control the motion of the character. It is possible to use only the difference without using the average value. Hereinafter, a modification to the first embodiment in which only the inclination difference between the first unit and the second unit is used will be described.

FIG. 17 shows an exemplary correspondence between the operation performed by the player and the motion of the character in the modification to the first embodiment. When the first unit is inclined farther from the player than the second unit, the character curves leftward; whereas when the second unit is inclined farther from the player than the first unit, the character curves rightward. When an acceleration button is pressed, the character's motion is accelerated in the advancing direction at that time.

With reference to FIG. 18, a flow of processing executed by the CPU 30 in the modification to the first embodiment will be described. In FIG. 18, processing which is substantially the same as that in FIG. 11 bears the same reference numerals, and the descriptions thereof will be omitted.

Before step S162, the character curves leftward or rightward in accordance with the inclination difference between the first unit and the second unit.

In step S162, the CPU 30 determines whether or not the player has pressed the acceleration button. The acceleration button may be provided only in the first unit, only in the second unit, or in both the first unit and the second unit. When the player has pressed the acceleration button, the processing is advanced to step S164. When the player has not pressed the acceleration button, the processing is advanced to step S166.

In step S164, the velocity data is increased by a predetermined amount.

In step S166, the velocity data is decreased by a predetermined amount.
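Steps S162 through S166 may be sketched as follows. The function name `update_velocity` and the parameter `step` (standing in for the "predetermined amount") are hypothetical. Clamping the velocity at a lower bound of 0 is an assumption of this sketch; the description does not say what happens once the velocity data has decreased to 0.

```python
def update_velocity(velocity, button_pressed, step=0.1, minimum=0.0):
    """Increase velocity while the acceleration button is pressed, else decrease it.

    step is a hypothetical stand-in for the predetermined amount. The
    lower bound `minimum` is an assumption, not part of the description.
    """
    if button_pressed:
        return velocity + step  # step S164
    return max(minimum, velocity - step)  # step S166, clamped (assumption)
```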

After step S164 or S166, substantially the same processing as that in FIG. 13 is executed.

As described above, according to this modification, the game can be played only using the inclination difference between the first unit and the second unit, without using any absolute value of the inclination of the first unit or the second unit. Therefore, the player can play the game with no problem even while lying on the floor. Thus, the degree of freedom of posture during the game is increased.

(Second Embodiment)

FIG. 19 shows an exemplary image displayed in a second embodiment. On the screen of the monitor 2, a three-dimensional virtual game world including a character operated by the player (game object) is displayed. In this embodiment, the character is riding on a sleigh, which is pulled by five dinosaurs (dinosaurs A through E). The player can control the motion of the character by swinging the first unit or the second unit. The following description will be given with the premise that the player holds the first unit with his/her left hand and holds the second unit with his/her right hand (the player may hold the first unit with his/her right hand and hold the second unit with his/her left hand).

FIG. 20 shows an exemplary correspondence between the operation performed by the player and the motion of the character in the second embodiment. When only the first unit is swung, the character curves rightward (the advancing direction is changed rightward); whereas when only the second unit is swung, the character curves leftward (the advancing direction is changed leftward). When the first unit and the second unit are swung simultaneously, the character's motion is accelerated in the advancing direction at that time.

The game may be set such that when only the first unit is swung, the character advances leftward; when only the second unit is swung, the character advances rightward; and when the first unit and the second unit are swung at the same time, the character advances forward.

FIG. 21 shows an exemplary memory map of the main memory 33 in the second embodiment. The main memory 33 stores a game program 200, game image data 202, character control data 204, a first swinging strength value 206, a second swinging strength value 208, a first input flag 210, a second input flag 212, a simultaneous input flag 214, a first timer 216, a second timer 218, and a simultaneous input timer 220.

The game image data 202 and the character control data 204 are substantially the same as those in the first embodiment and will not be described here.

The first swinging strength value 206 represents a swinging strength of the first unit which is detected based on the output value from the first acceleration sensor. The second swinging strength value 208 represents a swinging strength of the second unit which is detected based on the output value from the second acceleration sensor.

The first input flag 210 is a flag representing that the first unit has been swung, and is turned on when the first unit is detected to have been swung. The second input flag 212 is a flag representing that the second unit has been swung, and is turned on when the second unit is detected to have been swung. The simultaneous input flag 214 is a flag representing that the first unit and the second unit have been swung simultaneously, and is turned on when the first unit and the second unit are detected to have been swung simultaneously.

The first timer 216 is a value representing the time period (the number of frames) which has passed since the first unit was detected to have been swung. The second timer 218 is a value representing the time period (the number of frames) which has passed since the second unit was detected to have been swung. The simultaneous input timer 220 is a value representing the time period (the number of frames) which has passed since the first unit and the second unit were detected to have been swung simultaneously.

With reference to the flowcharts in FIG. 22 through FIG. 25, a flow of processing executed by the CPU 30 based on the game program 200 will be described. The processing in steps S202 through S266 is repeated frame by frame.

Referring to FIG. 22, when the execution of the game program 200 is started, in step S200, the CPU 30 first initializes various data used for the game processing (character control data 204, first swinging strength value 206, first input flag 210, first timer 216, etc.), and generates and displays a game image including the character on the screen of the monitor 2.

In step S202, it is determined whether or not the simultaneous input flag 214 is on. When the simultaneous input flag 214 is on, the processing is advanced to step S204. When the simultaneous input flag 214 is not on, the processing is advanced to step S210.

In step S204, “1” is added to the simultaneous input timer 220.

In step S206, it is determined whether or not the simultaneous input timer 220 is equal to or greater than 20. When the simultaneous input timer 220 is equal to or greater than 20, the processing is advanced to step S208. Otherwise, the processing is advanced to step S262 in FIG. 24.

In step S208, the simultaneous input flag 214 is turned off, and the processing is advanced to step S262 in FIG. 24.

As described above, after the simultaneous input flag 214 is turned on (i.e., after the first unit and the second unit are detected to have been swung simultaneously) until a 20 frame time period passes, neither the detection of the swinging strength of the first unit (step S236 described later) nor the detection of the swinging strength of the second unit (step S250 described later) is performed. Namely, neither the swing operation on the first unit nor the swing operation on the second unit by the player is accepted. Owing to such an arrangement, one swing operation performed by the player is prevented from being detected continuously over a period of a plurality of frames.
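The per-frame handling of the simultaneous input flag in steps S202 through S208 may be sketched as follows. The function name `tick_simultaneous_lockout`, the constant name `LOCKOUT_FRAMES`, and the returned "accepting" value are hypothetical; the value 20 is the frame count given above.

```python
LOCKOUT_FRAMES = 20  # frame count from the description; the name is hypothetical


def tick_simultaneous_lockout(flag_on, timer):
    """Advance the simultaneous-input lockout by one frame.

    Returns (flag_on, timer, accepting), where accepting tells whether
    swing detection may run in this frame. Names are hypothetical.
    """
    if not flag_on:
        return flag_on, timer, True  # step S202 "no": continue to step S210
    timer += 1  # step S204
    if timer >= LOCKOUT_FRAMES:  # step S206
        flag_on = False  # step S208
    return flag_on, timer, False  # detection is skipped in this frame
```

Calling this function once per frame after a simultaneous swing rejects swing input for 20 frames and accepts it again from the 21st frame.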

In step S210, it is determined whether or not the first input flag 210 is on. When the first input flag 210 is on, the processing is advanced to step S212. When the first input flag 210 is not on, the processing is advanced to step S222.

In step S212, “1” is added to the first timer 216.

In step S214, it is determined whether or not the first timer 216 is 5. When the first timer 216 is 5 (i.e., when a 5 frame time period has passed after the first unit is detected to have been swung, without any swing of the second unit being detected; namely, when only the first unit was swung), the processing is advanced to step S216. When the first timer 216 is not 5, the processing is advanced to step S218.

In step S216, the directional vector is changed so as to cause the character to curve rightward. The directional vector can be changed in substantially the same manner as in the first embodiment.

In step S218, it is determined whether or not the first timer 216 is larger than 10. When the first timer 216 is larger than 10, the processing is advanced to step S220. When the first timer 216 is not larger than 10, the processing is advanced to step S222.

In step S220, the first input flag 210 is turned off.

As described above, after the first input flag 210 is turned on (i.e., after the first unit is detected to have been swung) until a 10 frame time period passes, the detection of the swinging strength of the first unit (step S236 described later) is not performed. Namely, the swing operation on the first unit by the player is not accepted. Owing to such an arrangement, one swing operation performed by the player is prevented from being detected continuously over a period of a plurality of frames.
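Steps S210 through S220 may be sketched as follows. The function name `tick_first_input` and the returned `curve_right` value are hypothetical; the frame counts 5 and 10 are those given above. The sketch covers only the first unit and does not model the transition to the simultaneous input flag.

```python
def tick_first_input(flag_on, timer):
    """Advance the first-unit swing state by one frame.

    Returns (flag_on, timer, curve_right). curve_right is True only in
    the frame where the first timer reaches 5. Names are hypothetical.
    """
    curve_right = False
    if flag_on:  # step S210
        timer += 1  # step S212
        if timer == 5:  # step S214
            curve_right = True  # step S216
        elif timer > 10:  # step S218
            flag_on = False  # step S220
    return flag_on, timer, curve_right
```

The same structure applies to the second unit in steps S222 through S232, with the curve direction reversed.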

As described in more detail later, when the second unit is detected to have been swung before a 5 frame time period passes after the first input flag210is turned on, the simultaneous input flag214is turned on at that time. Therefore, until a 20 frame time period passes after that, the swing operation on the first unit performed by the player is not accepted. When the simultaneous input flag214is turned off, the detection of the swinging strength of the first unit (step S236described later) and the detection of the swinging strength of the second unit (step S250described later) are resumed simultaneously. Therefore, the timing at which the acceptance of the swing operation on the first unit is resumed, and the timing at which the acceptance of the swing operation on the second unit is resumed, match each other.

In step S222, it is determined whether or not the second input flag212is on. When the second input flag212is on, the processing is advanced to step S224. When the second input flag212is not on, the processing is advanced to step S234inFIG. 23.

In step S224, “1” is added to the second input timer218.

In step S226, it is determined whether or not the second timer218is 5. When the second timer218is 5 (i.e., when only the second unit was swung), the processing is advanced to step S228. When the second timer218is not 5, the processing is advanced to step S230.

In step S228, the directional vector is changed so as to cause the character to curve leftward.

In step S230, it is determined whether or not the second timer218is larger than 10. When the second timer218is larger than 10, the processing is advanced to step S232. When the second timer218is not larger than 10, the processing is advanced to step S234inFIG. 23.

In step S232, the second input flag212is turned off.

As described above, after the second input flag212is turned on (i.e., after the second unit is detected to have been swung) until a 10 frame time period passes, the detection of the swinging strength of the second unit (step S250described later) is not performed. Namely, the swing operation on the second unit by the player is not accepted. Owing to such an arrangement, one swing operation performed by the player is prevented from being detected continuously over a period of a plurality of frames.

As described in more detail later, when the first unit is detected to have been swung before a 5 frame time period passes after the second input flag 212 is turned on, the simultaneous input flag 214 is turned on at that time. Therefore, until a 20 frame time period passes after that, the swing operation on the second unit performed by the player is not accepted. When the simultaneous input flag 214 is turned off, the detection of the swinging strength of the first unit (step S236 described later) and the detection of the swinging strength of the second unit (step S250 described later) are resumed simultaneously. Therefore, the timing at which the acceptance of the swing operation on the first unit is resumed, and the timing at which the acceptance of the swing operation on the second unit is resumed, match each other.

In other words, in this embodiment, even when the timing at which the first unit is detected to have been swung is slightly offset with respect to the timing at which the second unit is detected to have been swung, it is recognized that the first unit and the second unit were swung simultaneously. Even in this case, the two resumption timings match each other. Therefore, when the acceptance of the swing operations is resumed and then another simultaneous swing operation is performed, the problem that only the first unit or only the second unit is detected to have been swung the second time is avoided.

The time period in which the swing is not accepted (swing acceptance prohibition time period) after the simultaneous input flag 214 is turned on may be a 10 frame time period (same as the swing acceptance prohibition time period after only the first unit or only the second unit is swung).
In this embodiment, such a period starts when a later swing operation is detected, among the swing operation on the first unit and the swing operation on the second unit. Alternatively, a period of, for example, 20 frames may start when an earlier swing operation is detected. Still alternatively, such a period may start at an intermediate timing (a timing between the timing at which an earlier swing operation is detected and the timing at which a later swing operation is detected; for example, exactly the middle timing N).
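The three alternatives for when the swing acceptance prohibition time period starts can be compared with a small sketch. The function names, the `mode` strings, and the use of absolute frame numbers are assumptions made for illustration only.

```python
def lockout_start(earlier_frame, later_frame, mode="later"):
    """Return the frame at which the prohibition period starts (hypothetical helper).

    mode "later":   starts when the later swing is detected (this embodiment)
    mode "earlier": starts when the earlier swing is detected
    mode "middle":  starts exactly midway between the two detections
    """
    if mode == "later":
        return later_frame
    if mode == "earlier":
        return earlier_frame
    if mode == "middle":
        return (earlier_frame + later_frame) / 2
    raise ValueError(f"unknown mode: {mode}")


def lockout_end(start_frame, duration=20):
    """Frame at which swing acceptance resumes, for an assumed duration in frames."""
    return start_frame + duration
```

For example, with swings detected at frames 100 and 103, the period would start at frame 103, 100, or 101.5 depending on the chosen alternative.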

Referring toFIG. 23, in step S234, it is determined whether or not the first input flag210is on. When the first input flag210is on, the processing is advanced to step S248. When the first input flag210is not on, the processing is advanced to step S236.

In step S236, the swinging strength of the first unit is detected. Hereinafter, the detection of the swinging strength of the first unit will be described in detail with reference toFIG. 25.

Referring toFIG. 25, in step S268, an output value (output vector) from an acceleration sensor (here, the first acceleration sensor) is obtained. In this embodiment, the output value in the X axis direction from the first acceleration sensor is Ax, the output value in the Y axis direction from the first acceleration sensor is Ay, and the output value in the Z axis direction from the first acceleration sensor is Az.

In step S270, it is determined whether or not the magnitude of the output vector (Ax, Ay, Az) from the first acceleration sensor obtained in step S268 (i.e., √(Ax² + Ay² + Az²)) is larger than K (K is a predetermined value). When the magnitude of the output vector from the first acceleration sensor is larger than K (it is determined that a swing operation has been performed), the processing is advanced to step S272. When the magnitude of the output vector from the first acceleration sensor is not larger than K (it is determined that a swing operation has not been performed), the processing is advanced to step S274.

In step S272, the magnitude of the output vector from the first acceleration sensor is returned as a return value X for the detection of the swinging strength. Usually, as the first unit is swung more strongly, the magnitude of the output vector from the first acceleration sensor is larger. Therefore, the return value X reflects the swinging strength of the first unit. Then, the processing is advanced to step S238inFIG. 23. In this embodiment, when the magnitude of the output vector exceeds K, the magnitude of the output vector at that time is immediately returned as the return value X. In a modification, the following processing may be executed. When the magnitude of the output vector exceeds K, a state flag indicating such a state is stored; and the output vector value when the magnitude of the output vector reaches the maximum value (the point at which the magnitude of the output vector starts decreasing after being maximized) may be returned.
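The threshold test of steps S268 through S274 and the peak-value modification described above can be sketched as follows. This is a minimal illustration under stated assumptions: the function and class names are hypothetical, `None` stands in for the value representing “no swing operation”, and the peak detector is fed one magnitude per frame.

```python
import math


def detect_swing_strength(ax, ay, az, k):
    """Return the output-vector magnitude if it is larger than k, else None."""
    magnitude = math.sqrt(ax * ax + ay * ay + az * az)   # S270
    return magnitude if magnitude > k else None          # S272 / S274


class PeakSwingDetector:
    """Modification: report the maximum magnitude instead of the first value above k."""

    def __init__(self, k):
        self.k = k
        self.above = False   # state flag: the magnitude has exceeded k
        self.peak = 0.0

    def feed(self, magnitude):
        """Feed one magnitude per frame; returns the peak once it starts decreasing."""
        if not self.above:
            if magnitude > self.k:
                self.above = True
                self.peak = magnitude
            return None
        if magnitude >= self.peak:       # still rising
            self.peak = magnitude
            return None
        peak = self.peak                 # magnitude started decreasing after the maximum
        self.above = False
        self.peak = 0.0
        return peak
```

The peak detector re-arms as soon as it reports a value, so it relies on the input flags and timers described above to keep the falling part of the same swing from being detected again.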

In this embodiment, the determination on the swing operation is made based on the magnitude of the output vector being larger than a predetermined value. The determination may be performed more precisely. When a swing operation is made, the output from the acceleration sensor usually changes as follows: (a) 0 → (b) output is increased → (c) maximum → (d) output is decreased → (e) 0 → (f) output is increased in the opposite direction → (g) maximum in the opposite direction → (h) output is decreased in the opposite direction → (i) 0.

The history of output values for a predetermined time period from the current point may be always stored, so that it can be detected whether or not the history shows such a change. More simply, it may be detected that the history matches a part of such a change. In this case, which part of the change from (a) through (i) is to be used may be determined arbitrarily (any point other than (a) through (i), for example, a point when the output reaches a predetermined value while being increased, may be used).
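Matching a stored history against part of the change (a) through (i) can be sketched as follows. The sketch assumes a single signed output component sampled once per frame and checks only for a strong output in one direction followed by a strong output in the opposite direction, roughly parts (c) through (g); the class name, history length, and threshold are hypothetical.

```python
from collections import deque


class SwingHistory:
    """Keeps the most recent output values and tests them against a swing pattern."""

    def __init__(self, length=30, threshold=1.0):
        self.samples = deque(maxlen=length)   # oldest values are dropped automatically
        self.threshold = threshold

    def push(self, value):
        """Store the output value of the current frame."""
        self.samples.append(value)

    def matches_swing(self):
        """True if a value above +threshold is followed later by one below -threshold."""
        seen_positive = False
        for value in self.samples:
            if value > self.threshold:
                seen_positive = True
            elif seen_positive and value < -self.threshold:
                return True
        return False
```

A fuller matcher could also check the zero crossings or the ordering of the rise and fall, at the cost of being less tolerant of noisy sensor output.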

Instead of the swing operation, a predetermined motion (an operation for providing a motion of a predetermined pattern) may be detected. Such an operation is, for example, an operation for moving the character in a predetermined direction. In this case also, the history of output values is stored, so that it can be detected whether or not the history matches the predetermined pattern.

The above-described modification is also applicable to embodiments other than the second embodiment.

In step S274, a value representing “no swing operation” is returned as the detection result of the swing operation. Then, the processing is advanced to step S238inFIG. 23.

In step S238, it is determined whether or not the first unit was swung based on the detection result of the swing operation in step S236. When the first unit was swung, the processing is advanced to step S240. When the first unit was not swung, the processing is advanced to step S248.

In step S240, it is determined whether or not the second input flag 212 is on and also whether or not the second timer 218 is equal to or smaller than 4. When the second input flag 212 is on and also the second timer 218 is equal to or smaller than 4 (i.e., when the first unit and the second unit were swung substantially simultaneously), the processing is advanced to step S242. When the second input flag 212 is not on, or the second timer 218 is larger than 4, the processing is advanced to step S246. When the first unit is swung within a 4 frame time period after the second unit is swung, it is determined that “the first unit and the second unit were swung simultaneously” for the following reason. Even if the player intended to swing the first unit and the second unit simultaneously, such swing operations may not necessarily be performed exactly simultaneously. Even when the timing at which the first unit is detected to have been swung is offset by several frames with respect to the timing at which the second unit is detected to have been swung, it is determined that “the first unit and the second unit were swung simultaneously”. Thus, a better operability is obtained.

In step S242, the velocity data of the character is increased in accordance with the return value X for the detection of the swinging strength of the first unit in step S236 (value reflecting the swinging strength of the first unit) and the second swinging strength value 208 which is set in step S260 described later (value reflecting the swinging strength of the second unit). For example, the current velocity data may be multiplied by a numerical value in proportion to the return value X and by a numerical value in proportion to the second swinging strength value 208 so as to determine new velocity data. Alternatively, a numerical value obtained by multiplying the return value X by a first coefficient, and a numerical value obtained by multiplying the second swinging strength value 208 by a second coefficient, may be added to the current velocity data (the first coefficient may be the same as, or different from, the second coefficient). Still alternatively, an average of the return value X and the second swinging strength value 208 may be multiplied by a predetermined coefficient, and the resultant value may be added to the current velocity data.
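The three ways of updating the velocity data in step S242 can be written side by side. This is a sketch only: the velocity is treated as a scalar, and the function names, gains, and coefficient defaults are assumptions rather than values from the embodiment.

```python
def velocity_multiplicative(velocity, x, second_strength, gain=0.1):
    """Multiply the velocity by factors proportional to each swinging strength."""
    return velocity * (gain * x) * (gain * second_strength)


def velocity_additive(velocity, x, second_strength, first_coeff=0.5, second_coeff=0.5):
    """Add each swinging strength, scaled by its own coefficient, to the velocity."""
    return velocity + first_coeff * x + second_coeff * second_strength


def velocity_average(velocity, x, second_strength, coeff=1.0):
    """Add the average of the two swinging strengths, scaled by one coefficient."""
    return velocity + coeff * (x + second_strength) / 2
```

Note that the multiplicative variant only increases the velocity when the product of the two factors exceeds 1, and leaves a velocity of 0 unchanged, so the gain would have to be chosen with the sensor's output range in mind.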

As the return value for the detection of the swinging strength, the magnitude of only the component in a predetermined direction may be used among the output values from the acceleration sensor.

In step S244, the simultaneous input flag 214 is turned on. The simultaneous input timer 220 is reset to 0 and restarted. The second input flag 212 is turned off.

In step S246, the first input flag 210 is turned on. The first input timer 216 is reset to 0 and restarted. The return value X for the detection of the swinging strength of the first unit is set as the first swinging strength value 206.
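The branch taken when the first unit is detected to have been swung (steps S240 through S246) can be sketched as follows. The `GameState` class and its field names are hypothetical, the velocity is a scalar, and the additive velocity update with a single coefficient is just one of the alternatives described for step S242.

```python
from dataclasses import dataclass

SIMULTANEOUS_WINDOW = 4   # largest timer value still treated as simultaneous (S240)


@dataclass
class GameState:
    first_input_flag: bool = False       # first input flag 210
    first_timer: int = 0                 # first timer 216
    first_strength: float = 0.0          # first swinging strength value 206
    second_input_flag: bool = False      # second input flag 212
    second_timer: int = 0                # second timer 218
    second_strength: float = 0.0         # second swinging strength value 208
    simultaneous_flag: bool = False      # simultaneous input flag 214
    simultaneous_timer: int = 0          # simultaneous input timer 220
    velocity: float = 0.0


def on_first_unit_swung(state, x, coeff=0.5):
    """Handle a detected swing of the first unit with strength x (hypothetical helper)."""
    if state.second_input_flag and state.second_timer <= SIMULTANEOUS_WINDOW:  # S240
        state.velocity += coeff * (x + state.second_strength)   # S242, additive variant
        state.simultaneous_flag = True                           # S244
        state.simultaneous_timer = 0
        state.second_input_flag = False
    else:
        state.first_input_flag = True                            # S246
        state.first_timer = 0
        state.first_strength = x
```

The handling of a swing of the second unit would mirror this function, with the roles of the first and second flags, timers, and strength values exchanged.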

In step S248, it is determined whether or not the second input flag 212 is on. When the second input flag 212 is on, the processing is advanced to step S262 in FIG. 24. When the second input flag 212 is not on, the processing is advanced to step S250.

In step S250, the swinging strength of the second unit is detected in substantially the same manner as in step S236. Namely, when the magnitude of the output vector from the second acceleration sensor is larger than K, the magnitude of the output vector from the second acceleration sensor (value reflecting the swinging strength of the second unit) is returned as a return value X for the detection of the swinging strength.

In step S252, it is determined whether or not the second unit was swung based on the detection result of the swing operation in step S250. When the second unit was swung, the processing is advanced to step S254. When the second unit was not swung, the processing is advanced to step S262inFIG. 24.

In step S254, it is determined whether or not the first input flag 210 is on and also whether or not the first timer 216 is equal to or smaller than 4. When the first input flag 210 is on and also the first timer 216 is equal to or smaller than 4 (i.e., when the first unit and the second unit were swung substantially simultaneously), the processing is advanced to step S256. When the first input flag 210 is not on, or the first timer 216 is larger than 4, the processing is advanced to step S260.

In step S256, the velocity data is increased in accordance with the return value X for the detection of the swinging strength of the second unit in step S250 (value reflecting the swinging strength of the second unit) and the first swinging strength value 206 which was set in step S246 above (value reflecting the swinging strength of the first unit). For example, the current velocity data may be multiplied by a numerical value in proportion to the return value X and by a numerical value in proportion to the first swinging strength value 206 so as to determine new velocity data.

In step S258, the simultaneous input flag 214 is turned on. The simultaneous input timer 220 is reset to 0 and restarted. The first input flag 210 is turned off.

In step S260, the second input flag 212 is turned on. The second input timer 218 is reset to 0 and restarted. The return value X for the detection of the swinging strength of the second unit is set as the second swinging strength value 208.

In step S262inFIG. 24, the current position data is updated based on the velocity data and the directional vector. As a result, the character in the game world moves by the distance represented by the velocity data in the direction represented by the directional vector.
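The position update of step S262 amounts to advancing the character along the directional vector by the distance given by the velocity data. A minimal sketch follows, assuming a two-dimensional game world, a unit-length directional vector, and a scalar velocity expressed in distance per frame; the function name is hypothetical.

```python
def update_position(position, direction, velocity):
    """Move the position along the (unit-length) direction by the velocity."""
    px, py = position
    dx, dy = direction
    return (px + dx * velocity, py + dy * velocity)
```

For example, `update_position((1.0, 2.0), (0.0, 1.0), 3.0)` moves the character three units along the second axis.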