U.S. Pat. No. 9,251,603

INTEGRATING PANORAMIC VIDEO FROM A HISTORIC EVENT WITH A VIDEO GAME

Issue Date: November 27, 2013

Abstract

A panoramic video of a real world event can be received. The video can include perspective data linked with a video timeline. A perspective view associated with a graphics of a video game linked with a game timeline at a first time index can be determined. The perspective data of the panoramic video can be processed to obtain a video sequence matching the perspective view associated with the graphics at a second time index. The video timeline and the game timeline can be synchronized based on a common time index of each of the timelines. The graphics and the video sequence can be integrated into an interactive content, responsive to the synchronizing.

Description

DETAILED DESCRIPTION

The present disclosure is a solution for integrating video from real-world cameras into a video game simulation environment. In the solution, cameras within a real-world environment can capture one or more videos of the environment and/or environmental elements. For example, a video camera mounted on a racing car can capture panoramic video of the car as the car races around a racing track during a racing event. In one embodiment, the captured video can be processed and integrated with a video game. In the embodiment, a composite environment can be created utilizing captured video to simulate event occurrences and/or event environment. For example, the disclosure can be utilized within a companion device as a second screen application (e.g., custom content) to enhance a live event viewing (e.g., in a stadium, at home in front of a television) by an audience (e.g., spectator). It should be appreciated that the video and game can be synchronized (e.g., to each other, to an external event, etc.) enabling a cohesive user experience.

As will be appreciated by one skilled in the art, aspects of the present invention may be embodied as a system, method or computer program product. Accordingly, aspects of the present invention may take the form of an entirely hardware embodiment, an entirely software embodiment (including firmware, resident software, micro-code, etc.) or an embodiment combining software and hardware aspects that may all generally be referred to herein as a “circuit,” “module” or “system.” Furthermore, aspects of the present invention may take the form of a computer program product embodied in one or more computer readable medium(s) having computer readable program code embodied thereon.

Any combination of one or more computer readable medium(s) may be utilized. The computer readable medium may be a computer readable signal medium or a computer readable storage medium. A computer readable storage medium may be, for example, but not limited to, an electronic, magnetic, optical, electromagnetic, infrared, or semiconductor system, apparatus, or device, or any suitable combination of the foregoing. More specific examples (a non-exhaustive list) of the computer readable storage medium would include the following: an electrical connection having one or more wires, a portable computer diskette, a hard disk, a random access memory (RAM), a read-only memory (ROM), an erasable programmable read-only memory (EPROM or Flash memory), an optical fiber, a portable compact disc read-only memory (CD-ROM), an optical storage device, a magnetic storage device, or any suitable combination of the foregoing. In the context of this document, a computer readable storage medium may be any tangible medium that can contain, or store a program for use by or in connection with an instruction execution system, apparatus, or device.

A computer readable signal medium may include a propagated data signal with computer readable program code embodied therein, for example, in baseband or as part of a carrier wave. Such a propagated signal may take any of a variety of forms, including, but not limited to, electro-magnetic, optical, or any suitable combination thereof. A computer readable signal medium may be any computer readable medium that is not a computer readable storage medium and that can communicate, propagate, or transport a program for use by or in connection with an instruction execution system, apparatus, or device.

Program code embodied on a computer readable medium may be transmitted using any appropriate medium, including but not limited to wireless, wireline, optical fiber cable, RF, etc., or any suitable combination of the foregoing. Computer program code for carrying out operations for aspects of the present invention may be written in any combination of one or more programming languages, including an object oriented programming language such as Java, Smalltalk, C++ or the like and conventional procedural programming languages, such as the “C” programming language or similar programming languages. The program code may execute entirely on the user's computer, partly on the user's computer, as a stand-alone software package, partly on the user's computer and partly on a remote computer or entirely on the remote computer or server. In the latter scenario, the remote computer may be connected to the user's computer through any type of network, including a local area network (LAN) or a wide area network (WAN), or the connection may be made to an external computer (for example, through the Internet using an Internet Service Provider).

Aspects of the present invention are described below with reference to flowchart illustrations and/or block diagrams of methods, apparatus (systems) and computer program products according to embodiments of the invention. It will be understood that each block of the flowchart illustrations and/or block diagrams, and combinations of blocks in the flowchart illustrations and/or block diagrams, can be implemented by computer program instructions.

These computer program instructions may be provided to a processor of a general purpose computer, special purpose computer, or other programmable data processing apparatus to produce a machine, such that the instructions, which execute via the processor of the computer or other programmable data processing apparatus, create means for implementing the functions/acts specified in the flowchart and/or block diagram block or blocks.

These computer program instructions may also be stored in a computer readable medium that can direct a computer, other programmable data processing apparatus, or other devices to function in a particular manner, such that the instructions stored in the computer readable medium produce an article of manufacture including instructions which implement the function/act specified in the flowchart and/or block diagram block or blocks.

The computer program instructions may also be loaded onto a computer, other programmable data processing apparatus, or other devices to cause a series of operational steps to be performed on the computer, other programmable apparatus or other devices to produce a computer implemented process such that the instructions which execute on the computer or other programmable apparatus provide processes for implementing the functions/acts specified in the flowchart and/or block diagram block or blocks.

FIG. 1A is a schematic diagram illustrating a set of scenarios 110, 130 for integrating video from real-world cameras into a video game simulation environment in accordance with an embodiment of the inventive arrangements disclosed herein. Scenarios 110, 130 can be performed in the order presented herein or can be performed out of order. In scenario 110, sequence 114 can be presented within interface 129 to enhance a user 128 interaction 127 with content 124. In scenario 130, a video sequence 114 can be extracted from panoramic video 112 of an event 140.

Content 124 can be a user interactive media which can enhance a user 128 experience of an event 140. Content 124 can include, but is not limited to, a video sequence 114, a game element 122 (e.g., interactive graphics object), and the like. Content 124 can be associated with a point of view which can change during the course of user interaction 127 and/or viewing. The point of view can include a first person point of view, a third person point of view, and the like. It should be appreciated that the point of view can be a perspective based view (e.g., first person perspective).

In one instance, content 124 can be presented within interface 129 after event 140. In another instance, content 124 can be presented during event 140 with an appreciable delay (e.g., broadcasting delay, network latency). That is, user 128 can experience customized interactive content which can enhance the viewing of event 140. It should be appreciated that the disclosure is not limited to viewing event 140, and can include other embodiments in which user 128 can utilize content 124 to experience a realistic simulation of an event 140 with user interactive characteristics. In one embodiment, content 124 can include an automotive racing content, a racing simulation (e.g., marine racing), and the like. For example, content 124 can replicate a NASCAR racing championship event (e.g., event 140) in which the user can perform limited actions which can affect the content 124.

In scenario 110, a video archive server 111 can be communicatively linked to a data store 113. Data store 113 can store sequence 114 which can include one or more portions of panoramic video 112. In one instance, sequence 114 can include footage of real world objects traversing and/or acting within an event 140. For example, sequence 114 can be a spectator reaction to an accident during event 140. In one embodiment, sequence 114 can be presented at an appropriate time during user interaction 127 with content 124. For example, when the user 128 is operating a game element 122 (e.g., car) and crashes into a computer controlled car, a video sequence 114 of an audience reaction can be presented within a picture-in-picture (PiP) window of interface 129.

Video game environment 120 can include one or more game elements 122 which can be extracted and presented within content 124. For example, element 122 can be superimposed upon a video sequence of a car drifting around a street corner. It should be appreciated that environment and/or element can be associated with one or more perspective points of view 121. For example, environment 120 can be a racing simulation with a third person view and a first person point of view depending on a user selection (e.g., FIG. 1B). In one embodiment, sequence 114 can be overlayed within environment 120, permitting dynamic video content (e.g., sequence 114) to enhance gameplay of environment 120. In one instance, element 122 can be associated with a timeline of the game environment 120. That is, element 122 is synchronized with game environment 120.

In one instance, sequence 114 and element 122 can be synchronized based on timing data (e.g., 119), an event timeline (e.g., environment 120 timeline, event 140), and the like. In one embodiment, sequence 114 can be utilized to recreate real world limitations. For example, sequence 114 can be utilized to replicate a pit stop in which a driver is unable to race during the pit stop. In one instance, sequence 114 can interrupt user interaction 127 when a specific user action and/or time marker is reached. In one configuration of the instance, sequence 114 can be utilized as a cut away to simulate an occurrence within event 140 in which user 128 is unable to perform actions. In another configuration of the instance, user interaction 127 can be suppressed. The interaction 127 can resume when the sequence 114 has ended.
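The suppression of user interaction during a cut away can be sketched as follows. This is a minimal illustration, assuming cut away sequences are described as start and end times in seconds on a shared timeline; the function name `interaction_allowed` and the interval representation are hypothetical and are not taken from the disclosure.

```python
from typing import List, Tuple


def interaction_allowed(master_t: float, cutaways: List[Tuple[float, float]]) -> bool:
    """Return False while master_t falls inside any cut away interval [start, end)."""
    return not any(start <= master_t < end for start, end in cutaways)


# Hypothetical pit stop sequence occupying seconds 120 to 135 of the timeline.
pit_stop_cutaways = [(120.0, 135.0)]

print(interaction_allowed(100.0, pit_stop_cutaways))  # True: before the pit stop
print(interaction_allowed(125.0, pit_stop_cutaways))  # False: input is suppressed
print(interaction_allowed(135.0, pit_stop_cutaways))  # True: sequence has ended
```

The half-open interval means interaction resumes at the exact time index at which the sequence ends.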

It should be appreciated that the content 124 can include a master timeline to which element 122 and/or sequence 114 can be synchronized. In one embodiment, user 128 can adjust playback of video sequence 114 utilizing the master timeline which can appropriately affect element 122.
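One possible way to relate the game timeline and the video timeline through a master timeline is sketched below. It assumes, purely for illustration, that every timeline is measured in seconds and differs from the master timeline by a constant offset; the `Timeline` class and its methods are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Timeline:
    """A timeline whose local zero sits `offset` seconds into the master timeline (assumption)."""
    offset: float

    def to_master(self, local_t: float) -> float:
        return local_t + self.offset

    def from_master(self, master_t: float) -> float:
        return master_t - self.offset


def video_index_for_game_index(game: Timeline, video: Timeline, game_t: float) -> float:
    """Map a game time index to the video time index that shares the same master time."""
    return video.from_master(game.to_master(game_t))


game_timeline = Timeline(offset=10.0)   # game begins 10 s into the master timeline
video_timeline = Timeline(offset=4.0)   # video begins 4 s into the master timeline

print(video_index_for_game_index(game_timeline, video_timeline, 2.5))  # 8.5
```

Under this assumption, adjusting playback on the master timeline moves both the game element and the video sequence by the same amount.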

In scenario 130, event 140 can be recorded utilizing panoramic camera 131. In one instance, camera 131 can be a three hundred and sixty degree camera with fixed directional lenses and a stitching lens mounted on the roof of a vehicle 135 during event 140. For example, during event 140, a hood mounted panoramic camera 131 can capture panoramic video 112 of an event 140 from the point of view of the vehicle 135. It should be appreciated that the disclosure can utilize multiple cameras 131 to obtain panoramic video 112.

In one embodiment, metadata 134 within video 112 can be utilized to determine an appropriate point of view 136 for usage within content 124. In the instance, the disclosure can appropriately match a point of view of content 124 with a video sequence having a similar or identical point of view, and vice versa. For example, when content is of a driver's perspective (e.g., element 122), a video sequence 114 of a first person perspective (e.g., from the point of view of a driver in the same approximate view) can be presented to show a realistic view from the point of view of a car (e.g., element 122). That is, based on the point of view of content 124, an appropriate sequence 114 can be obtained and utilized (e.g., FIG. 1B). In essence, the disclosure can approximately match a camera angle of video 112 with a virtual camera angle of a game environment 120 and vice versa.
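The matching of a virtual camera angle to a region of the panoramic video can be sketched as follows. The sketch assumes an equirectangular frame spanning 360 degrees horizontally, with column 0 at a yaw of 0 degrees and yaw increasing to the right; this layout and the function name `columns_for_view` are illustrative assumptions rather than details from the disclosure.

```python
from typing import List, Tuple


def columns_for_view(yaw_deg: float, fov_deg: float, frame_width: int) -> List[Tuple[int, int]]:
    """Return half-open pixel column ranges covering the requested horizontal view.

    Two ranges are returned when the view wraps past the right edge of the frame.
    """
    if not 0 < fov_deg <= 360:
        raise ValueError("fov_deg must be in (0, 360]")
    px_per_deg = frame_width / 360.0
    left_deg = (yaw_deg - fov_deg / 2.0) % 360.0          # left edge of the view
    start = int(round(left_deg * px_per_deg)) % frame_width
    end = start + int(round(fov_deg * px_per_deg))
    if end <= frame_width:
        return [(start, end)]
    return [(start, frame_width), (0, end - frame_width)]  # wrap-around case


# A virtual camera looking 90 degrees to the right with a 60 degree field of view.
print(columns_for_view(90.0, 60.0, 3600))   # [(600, 1200)]
# A forward-facing camera whose view straddles the frame seam.
print(columns_for_view(0.0, 90.0, 3600))    # [(3150, 3600), (0, 450)]
```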

Panoramic video 112 can be a digital media with an elongated field of view. Video 112 can be created from one or more cameras, one or more lenses, and/or one or more videos. In one embodiment, video 112 can be an immersive video. In the embodiment, immersive video can be a video recording of a real world scene/environment, where the view in every direction is recorded at the same time. During playback the viewer can control the viewing direction (e.g., up, down, sideways, zoom).

Video 112 can include one or more frames 115, metadata 134, and the like. Video 112 can undergo video processing 132 which can prepare video for usage within content 124. For example, processing can adjust the aspect ratio of frames 115 to produce adjusted frames 117 which are compatible with the aspect ratio of content 124 or element 122. Processing 132 can include, but is not limited to, distortion correction, color correction, object removal, fidelity filtering, object detection, motion tracking, semantic processing, photogrammetry, and the like. Distortion correction can include projection translations which permit the mapping of a video 112 geometry to any coordinate system, perspective, and the like. Color correction can include specialized color balancing algorithms, true color algorithms, and the like. Object removal can utilize traditional (e.g., texture synthesis, multiple images) and/or proprietary technology to remove portions of background and/or foreground objects within video 112. Fidelity filtering can be leveraged to control the aberration in video sequences (e.g., high noise, low light). Object detection can be utilized to track objects within video to select appropriate points of view for an object, determine other objects obstructing the view of a tracked object, and the like.
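The aspect ratio adjustment mentioned above can be illustrated with a centered crop. This is only one possible approach (letterboxing or rescaling would be alternatives), and the function name `center_crop_box` is hypothetical.

```python
from typing import Tuple


def center_crop_box(frame_w: int, frame_h: int, target_w: int, target_h: int) -> Tuple[int, int, int, int]:
    """Return (left, top, right, bottom) of the largest centered crop with the target aspect ratio."""
    # Cross-multiply to compare aspect ratios without floating point rounding.
    if frame_w * target_h > frame_h * target_w:
        # Frame is wider than the target: keep full height, trim the sides.
        crop_h = frame_h
        crop_w = frame_h * target_w // target_h
    else:
        # Frame is taller than (or equal to) the target: keep full width, trim top and bottom.
        crop_w = frame_w
        crop_h = frame_w * target_h // target_w
    left = (frame_w - crop_w) // 2
    top = (frame_h - crop_h) // 2
    return (left, top, left + crop_w, top + crop_h)


# Cropping a wide 4096x1024 panoramic frame to a 16:9 region.
print(center_crop_box(4096, 1024, 16, 9))   # (1138, 0, 2958, 1024)
```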

In one embodiment, processing 132 can include semantic processing which can be utilized to determine the content of video 112. In the embodiment, video 112 content metadata (e.g., 134) can be utilized to match game element 122 with video 112, 114 to produce a meaningful content 124.

In one embodiment, processing 132 can include the creation of a three dimensional virtualized scene using stitching software such as MICROSOFT PHOTOSYNTH. In the embodiment, multiple perspectives can be utilized to create a content 124 which can permit user 128 to view different points of view of interest.

It should be appreciated that the disclosure can utilize one or more regions of the panoramic video 112. In one instance, video 112 can be cropped to focus video sequence 114 on relevant portions. For example, video 112 can be cropped to produce a five second video sequence (e.g., 114) of a lead car racing around a track which can be integrated into content 124 in a realistic manner. It should be understood that the disclosure can utilize individual frames, short sequences, long sequences, special effects, and the like.

As used herein, a video game can be an electronic game which involves human interaction with a user interface to generate visual feedback on a computing device within an environment 120. It should be appreciated that environment 120 can be executed within a computing device (e.g., device 126). Device 126 can include, but is not limited to, a video game console, handheld device, tablet computing device, a mobile phone, and the like. Environment 120 can include one or more user interactive elements 122. Elements can include, but are not limited to, playable characters, non-playable characters, environmental elements, and the like. Environment 120 can conform to any genre including, but not limited to, a simulation genre, a strategy genre, a role-playing genre, and the like.

In one instance, content 124 can be a secondary content which can permit a user 128 to interact within an environment which resembles event 140. In the instance, overlays can be utilized to “skin” the appearance of environment to appear similar to event 140. In one embodiment, user 128 can interact with event 140 specific elements appearing within the content 124. For example, a user 128 can select a racing car within content 124 (e.g., which can be visually similar to vehicle 135) to experience a simulation of driving the vehicle 135 during event 140.

In one embodiment, the disclosure can extract car shell “templates” from video 112 which can enable the templates to be applied within content 124. In the instance, the exterior appearance of a vehicle 135 within event 140 can be extracted and applied appropriately to an element 122 within content 124. For example, a truck shell template of trucks competing in a Camping World Truck Series event can be extracted from video 112 to enable a car (e.g., 122) within a Sprint Cup Series content 124 to appear as a truck from the Camping World Truck Series.

As used herein, event 140 can be a real world occurrence within a real world environment. For example, event 140 can be a racing event such as a National Association for Stock Car Auto Racing (NASCAR) racing championship event. Event 140 can include, but is not limited to, real world participants (e.g., human spectators) and/or objects (e.g., vehicle 135). For example, event 140 can include racing cars travelling around a race track while spectators observe the race. Video 112 can be collected before, during, and/or after event 140 occurrence.

In one embodiment, camera 131 can convey video 112 wirelessly to an event server, archive server 111, broadcast server, and the like. It should be appreciated that video 112 can include data from multiple events 140, from one or more segments of an event 140, and the like. For example, video 112 can include video footage from multiple car races or multiple interval segments of a motorcycle race.

In one embodiment, the disclosure can be a game mode, a game modification (e.g., game mod), and the like. In one instance, content 124 can be a downloadable content such as a patch, a content expansion pack, and the like. For example, the content 124 can be accessible once a user 128 reaches a game checkpoint or completes a game achievement.

It should be appreciated that the disclosure can utilize traditional and/or proprietary mechanisms to blend sequence 114 and element 122 within content 124. Mechanisms can include special effects and/or post production mechanisms including, but not limited to, compositing (e.g., chroma keying), layering, and the like. It should be appreciated that content 124 can include additional content and is not limited to game element 122 and/or video sequence 114.

Drawings presented herein are for illustrative purposes only and should not be construed to limit the invention in any regard. It should be appreciated that the disclosure can shift pixels of sequence 114 to appear appropriately within content 124. It should be appreciated that the disclosure is not limited to automotive racing and can be utilized in the context of team sports, athletics, extreme sports, and the like.

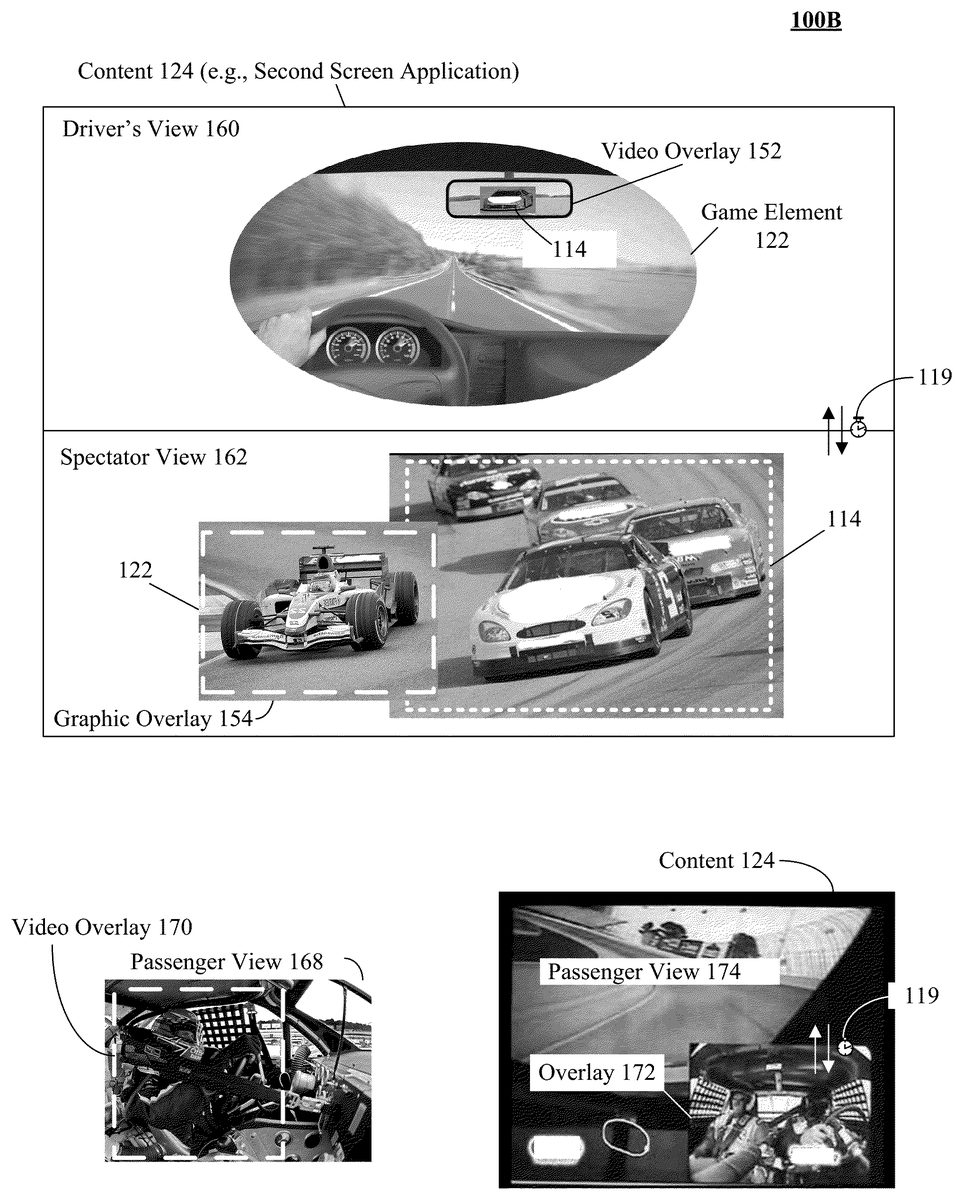

FIG. 1B shows multiple views 160, 162, 168, 174 which can be presented within interface 129. Views 160, 162, 168, 174 can include one or more game elements, video overlays (e.g., object 114), and the like. Views 160, 162, 168, 174 can be computer perspective based views, player character perspective based views, and the like.

In one instance, content 124 can include two views, a driver's view 160 and a spectator view 162 of a racing event. Each view 160, 162 can be a perspective view of a content 124 which can include interactive and non-interactive portions. In one embodiment, content 124 can be a video game application which can permit a user to select one or more computer based perspective views, where the computer based perspective views (e.g., 160, 162) can be generated utilizing video 112, 114 obtained from a past event. It should be appreciated that content 124 is not limited to simultaneous views and can support an arbitrary number of perspective views.

In driver's view 160, a game element 122 and a video overlay 152 can be presented. For example, game element 122 can be an interior of a car controlled by a user and overlay 152 can show an approaching vehicle in the rear view mirror. In one instance, video overlay 152 and element 122 can be synchronized to permit a realistic experience. For example, as the car moves further away from the approaching vehicle, the overlay 152 can be scaled appropriately, resulting in the appearance of speeding away from the vehicle. That is, driver view 160 can utilize a video sequence overlayed on a graphic element (e.g., game element 122) to create a simulated perspective view within content 124.
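The distance-dependent scaling of the overlay can be sketched with a simple perspective approximation in which apparent size is inversely proportional to distance. The disclosure does not specify a scaling rule, so this model, the clamping bounds, and the name `overlay_scale` are all assumptions made for illustration.

```python
def overlay_scale(reference_distance: float, current_distance: float,
                  min_scale: float = 0.05, max_scale: float = 4.0) -> float:
    """Scale factor for an overlay captured at reference_distance and shown at current_distance.

    Apparent size is modeled as inversely proportional to distance, then clamped
    so the overlay neither vanishes nor fills the whole view.
    """
    if reference_distance <= 0 or current_distance <= 0:
        raise ValueError("distances must be positive")
    scale = reference_distance / current_distance
    return max(min_scale, min(max_scale, scale))


# The approaching vehicle was filmed 10 m behind; the user's car is now 40 m ahead of it.
print(overlay_scale(10.0, 40.0))   # 0.25
```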

In spectator view 162, a graphic overlay 154 can be presented simultaneously with a video sequence 114 in a third person perspective view. For example, view 162 can show a user controlled car (e.g., 122) positioned alongside other competing cars (e.g., 114), where the competing cars are a portion of a video sequence 114 of a previously finished race. That is, spectator view 162 can utilize a graphic overlayed on a video sequence to create a perspective view within content 124.

In one embodiment, timing data 119 can be utilized to keep views 160, 162 in synchronicity.

Passenger view 168 can be a perspective view of a game content. In one instance, a video sequence from a passenger mounted camera can be utilized to enhance a computer based perspective view. In the instance, a video sequence 114 can be overlayed within the computer based perspective view. For example, a video of a real world driver can be used to obscure a computer avatar within overlay 170 to enhance the realism of the computer based perspective view.

In passenger view 174, a picture-in-picture (PiP) feature of a game can be utilized to present a video overlay 172. In one instance, overlay 172 can be synchronized 119 to the movement of a user interaction 127. In the instance, overlay 172 can utilize appropriate video sequences to improve the realism of a game experience. For example, a video sequence of a driver and passenger leaning left can be presented within the PiP window when a user steers the car around a left hand corner quickly.

In one embodiment, game data from a video game can be utilized to create a customized content. In the embodiment, session data including, but not limited to, game scoring, lap times, routes, and the like can be leveraged by the disclosure. For example, a user can select saved games with a best lap time of a NASCAR track to visually compare performance against historic video of professional drivers racing on an identical track. It should be appreciated that collision detection between video sequences and game elements can be resolved utilizing traditional (e.g., non-colliding geometry, bounding boxes) and/or proprietary techniques, and the like. For example, when a video sequence is detected to obscure a game element, one or more opaque layers can be utilized to block the game element from being viewed in an appropriate manner.
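The bounding box technique named above can be sketched as an axis-aligned overlap test that identifies which game elements a video sequence obscures. The screen-space rectangle representation and the names `Box`, `overlaps`, and `elements_to_mask` are hypothetical.

```python
from typing import Dict, List, NamedTuple


class Box(NamedTuple):
    """Axis-aligned screen-space rectangle in pixels (assumed representation)."""
    left: float
    top: float
    right: float
    bottom: float


def overlaps(a: Box, b: Box) -> bool:
    """True when the two rectangles share a region of non-zero area."""
    return a.left < b.right and b.left < a.right and a.top < b.bottom and b.top < a.bottom


def elements_to_mask(video_box: Box, elements: Dict[str, Box]) -> List[str]:
    """Names of game elements that the video sequence overlaps and may need an opaque layer."""
    return sorted(name for name, box in elements.items() if overlaps(video_box, box))


video_box = Box(100, 100, 300, 200)
elements = {
    "player_car": Box(250, 150, 350, 250),  # partially covered by the video sequence
    "hud": Box(0, 0, 50, 50),               # well clear of the video sequence
}
print(elements_to_mask(video_box, elements))  # ['player_car']
```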

FIG. 2 is a schematic diagram illustrating a method 200 for integrating panoramic video from a historic event with a video game in accordance with an embodiment of the inventive arrangements disclosed herein. In method 200, a video footage from an event can be dynamically integrated into an interactive content.

In step 205, an interactive content can be established within an interface of a computing session. The computing session can be executed within a companion device. In step 210, if a video sequence is available for the content, the method can continue to step 220, else proceed to step 255. In step 220, game element data can be determined. Data can include, but is not limited to, point of view data, semantic data, element geometry, element texture, and the like. In step 225, a sequence can be selected based on perspective information obtained from game element data. In step 230, the sequence and elements can be cohesively merged into the interactive content. In step 235, if errors are detected in content, the method can continue to step 240, else proceed to step 245.

In step 245, a user input can be received. In step 250, if the input exceeds previously established constraints, the method can return to step 245, else continue to step 255. Constraints can be determined based on element data restrictions, video sequence limitations, user preferences, system settings, and the like. In step 255, the content can be updated appropriately based on input. The content can be updated utilizing traditional and/or proprietary video/graphic algorithms. In step 260, user specific views can be optionally generated. User specific views can include, but is not limited to, a first person perspective view (e.g., player character view), third person perspective view (e.g., observer view), and the like. In step 265, the views can be optionally presented within the interface. In step 270, if the session is terminated the method can continue to step 275, else return to step 210. In step 275, the method can end.
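The input handling of steps 245 through 255 can be sketched as a loop that discards inputs exceeding a constraint and applies the rest. The steering-angle constraint, the heading state, and the name `process_inputs` are hypothetical examples chosen for illustration; the disclosure leaves the form of the constraints open.

```python
from typing import Iterable


def process_inputs(steering_inputs: Iterable[float], max_abs_steer: float) -> float:
    """Apply each steering input (degrees) to a heading unless it exceeds the constraint."""
    heading = 0.0
    for steer in steering_inputs:          # step 245: receive a user input
        if abs(steer) > max_abs_steer:     # step 250: constraint exceeded, await the next input
            continue
        heading += steer                   # step 255: update the content based on the input
    return heading


# The 50 degree input exceeds the 30 degree limit and is ignored.
print(process_inputs([5.0, 50.0, -10.0], max_abs_steer=30.0))  # -5.0
```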

Drawings presented herein are for illustrative purposes only and should not be construed to limit the invention in any regard. It should be appreciated that method 200 can be performed in real-time or near real-time. Further, method 200 can be performed in serial and/or in parallel. Steps 210-270 can be performed continuously during the computing session to enable a video sequence and game element to be seamlessly integrated as a point of view of interactive content changes. It should be appreciated that method 200 can include additional steps which can permit the acquisition of additional event data (e.g., footage, semantic data) during the session.

FIG. 3 is a schematic diagram illustrating a system 300 for integrating panoramic video from a historic event with a video game in accordance with an embodiment of the inventive arrangements disclosed herein. In system 300, a content server 310 can enable the creation of interactive content 314 which can be conveyed to device 360. In one embodiment, server 310 can be a component of a content delivery network.

Content server 310 can be a hardware/software entity for enabling interactive content 314. Server 310 can include, but is not limited to, compositing engine 320, interactive content 314, data store 330, and the like. Server 310 functionality can include, but is not limited to, file serving, encryption capabilities, and the like. In one instance, server 310 can perform functionality to enable communication with game server 370, server 390, and the like. In one embodiment, server 310 can be a functionality of a pay-per-view subscription service.

Compositing engine 320 can be a hardware/software element for producing content 314. Engine 320 functionality can include, but is not limited to, image processing, video editing, and the like. In one embodiment, engine 320 can be a functionality of a graphics framework. In one instance, engine 320 can determine one or more relevant portions (e.g., dynamic content) of content 314 to be conveyed to device 360. In the instance, relevant portions can be conveyed 314 while non-relevant portions (e.g., static content) can be omitted. That is, engine 320 can compensate for real world limitations including, but not limited to, network latency, computing resource availability, and the like. It should be appreciated that engine 320 can perform caching functionality to enable real-time or near real-time content 314 delivery and/or presentation.
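Conveying only the relevant (dynamic) portions of the content can be sketched as a comparison between the previously delivered state and the current state. The dictionary representation of content portions and the name `changed_portions` are assumptions for illustration, not the engine's actual mechanism.

```python
from typing import Any, Dict


def changed_portions(previous: Dict[str, Any], current: Dict[str, Any]) -> Dict[str, Any]:
    """Return only the portions that are new or differ from what was previously conveyed."""
    return {key: value for key, value in current.items()
            if key not in previous or previous[key] != value}


previous_state = {"track_background": "v1", "car_position": (10, 4), "overlay_frame": 120}
current_state = {"track_background": "v1", "car_position": (12, 4), "overlay_frame": 121}

# The static background is omitted; only the dynamic portions would be conveyed.
print(changed_portions(previous_state, current_state))
```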

It should be appreciated that engine 320 can allow for manual oversight and management of the functionality described herein. For example, engine 320 can permit an administrator to approve or reject a video and/or a game element prior to use within content 314.

Video processor 322 can be a hardware/software entity for processing panoramic video 312. Processor 322 functionality can include traditional and/or proprietary functionality. In one embodiment, processor 322 can include pre-processing functionality including, but not limited to, metadata 313 analysis, video acquisition and/or filtering, and the like.

Compositing handler 324 can be a hardware/software element for merging video 312 and element 374 into an interactive content 314. Handler 324 can utilize traditional and/or proprietary functionality to cohesively integrate video 312 and element 374 into an interactive content 314.

View renderer 326 can be a hardware/software entity for presenting a perspective view of interactive content 314. Renderer 326 functionality can include, but is not limited to, environment 372 analysis, element 374 analysis, environment mapping analysis, and the like.

Settings 328 can be one or more rules for establishing the behavior of system 300, server 310, and/or engine 320. Settings 328 can include, but is not limited to, video processor 322 options, compositing handler 324 settings, view renderer 326 options, and the like. In one instance, settings 328 can be manually and/or automatically established. In the embodiment, settings 328 can be heuristically established based on historic settings. Settings 328 can be persisted within data store 330, computing device 360, and the like.

Interactive content 314 can be one or more digital media which can permit user interaction to affect content 314 state. Content 314 can conform to traditional and/or proprietary formats including, but not limited to, an ADOBE FLASH format, a JAVA format, and the like. That is, content 314 can be a Web based application. It should be appreciated that content 314 is not limited to Web based platforms and can include desktop application platforms, video game console platforms, and the like. In one embodiment, content 314 can be access restricted (e.g., pay-per-view, age restricted) based on one or more provider settings (e.g., 328), licensing restrictions, and the like. In one instance, content 314 can be executed within a sandbox which can address potential security pitfalls without markedly decreasing performance of the content 314. It should be appreciated that content 314 can include single player functionality, multiplayer functionality and the like.

Data store 330 can be a hardware/software component able to persist content 314, element 374, video 312, and the like. Data store 330 can be a Storage Area Network (SAN), Network Attached Storage (NAS), and the like. Data store 330 can conform to a relational database management system (RDBMS), object oriented database management system (OODBMS), and the like. Data store 330 can be communicatively linked to server 310 in one or more traditional and/or proprietary mechanisms. In one instance, data store 330 can be a component of a Structured Query Language (SQL) compliant database.

Computing device 360 can be a software/hardware element for collecting user input and/or presenting content 314. Device 360 can include, but is not limited to, input components 362 (e.g., keyboard, camera), output components 363 (e.g., display), interface 364, and the like. In one instance, interface 364 can be a Web based interface (e.g., rich internet application media player). Device 360 hardware can include, but is not limited to, a processor, a non-volatile memory, a volatile memory, a bus, and the like. Computing device 360 can include, but is not limited to, a desktop computer, a laptop computer, a mobile phone, a mobile computing device, a portable media player, a Personal Digital Assistant (PDA), a video game console, an electronic entertainment device, and the like.

Game server 370 can be a hardware/software entity for executing game environment 372. Server 370 can include, but is not limited to, a virtual game world server, a gateway server, a game content server, an e-commerce server, and the like. In one embodiment, server 370 can communicate with server 310 to convey relevant game elements 374 as requested by server 310. In one embodiment, game server 370 can be utilized to convey perspective data to server 310 and/or engine 320. In one instance, game server 370 can be utilized to support multiplayer interaction with content 314.

Game environment 372 can be one or more virtual environments associated with a video game. Environment 372 can include, but is not limited to, game maps, elements 374, and the like. Environment 372 can include two dimensional environments, three dimensional environments, and the like. Environment 372 can include static elements, dynamic elements, and the like.

Video archive server 390 can be a hardware/software entity for persisting and/or conveying video 312. Server 390 can execute a digital asset management software which can index video 312 based on one or more criteria including, but not limited to, metadata 313, user input (e.g., keywords), and the like.
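A keyword based index of the kind described above could be sketched as an inverted index that maps each keyword to the videos tagged with it. The Python example below is illustrative only; the function names, the video identifiers, and the keywords are hypothetical and do not come from the disclosure.

```python
from collections import defaultdict


def build_index(videos):
    """Build an inverted index from keyword to the set of video ids.

    videos maps a video id to an iterable of keywords, which might come
    from metadata or from user input. Keywords are lowercased.
    """
    index = defaultdict(set)
    for video_id, keywords in videos.items():
        for keyword in keywords:
            index[keyword.lower()].add(video_id)
    return index


def search(index, *keywords):
    """Return the ids of videos tagged with every given keyword."""
    matches = [index.get(k.lower(), set()) for k in keywords]
    return set.intersection(*matches) if matches else set()


# Hypothetical archive entries.
archive = {
    "vid-001": ["race", "in-car", "lap 12"],
    "vid-002": ["race", "pit lane"],
}
index = build_index(archive)
in_car_race = search(index, "Race", "in-car")
```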

Network 380 can be an electrical and/or computer network connecting one or more system 300 components. Network 380 can include, but is not limited to, twisted pair cabling, optical fiber, coaxial cable, and the like. Network 380 can include any combination of wired and/or wireless components. Network 380 topologies can include, but are not limited to, bus, star, mesh, and the like. Network 380 types can include, but are not limited to, Local Area Network (LAN), Wide Area Network (WAN), Virtual Private Network (VPN) and the like.

Drawings presented herein are for illustrative purposes only and should not be construed to limit the invention in any regard. It should be appreciated that one or more components within system 300 can be optional components provided that the disclosure functionality is retained. It should be understood that engine 320 components can be optional components provided that engine 320 functionality is maintained. It should be appreciated that one or more components of engine 320 can be combined and/or separated based on functionality, usage, and the like. System 300 can conform to a Service Oriented Architecture (SOA), Representational State Transfer (REST) architecture, and the like.

The flowchart and block diagrams in the FIGS. 1A-3 illustrate the architecture, functionality, and operation of possible implementations of systems, methods and computer program products according to various embodiments of the present invention. In this regard, each block in the flowchart or block diagrams may represent a module, segment, or portion of code, which comprises one or more executable instructions for implementing the specified logical function(s). It should also be noted that, in some alternative implementations, the functions noted in the block may occur out of the order noted in the figures. For example, two blocks shown in succession may, in fact, be executed substantially concurrently, or the blocks may sometimes be executed in the reverse order, depending upon the functionality involved. It will also be noted that each block of the block diagrams and/or flowchart illustration, and combinations of blocks in the block diagrams and/or flowchart illustration, can be implemented by special purpose hardware-based systems that perform the specified functions or acts, or combinations of special purpose hardware and computer instructions.

Claims

- A method for integrating panoramic video with a video game comprising: receiving, via a computing device comprising hardware and software, a panoramic video captured by a camera of a moving vehicle of a real world event, wherein the video comprises of perspective data linked with a video timeline; determining, via the computing device, a perspective view associated with a game vehicle of a graphics of a video game linked with a game timeline at a first time index, wherein the graphics includes an interactive graphics object for the game vehicle controlled by a user of the video game in which the game vehicle moves in a game environment per user input, wherein the graphics interactively shows the game vehicle in a third person perspective; processing, via the computing device, the perspective data of the panoramic video to obtain a video sequence, from the panoramic video matching the perspective view associated with the graphics at a second time index, wherein the video sequence shows an interior of the moving vehicle in addition to showing at least one occupant of the moving vehicle; synchronizing the video timeline and the game timeline based on a common time index of each of the timelines; and integrating the graphics and the video sequence into an interactive content, responsive to the synchronizing, such that motion of the moving vehicle from the video sequence is synchronized to match motion of the game vehicle as controlled by the user, wherein the graphics showing the game vehicle in third person perspective and the video segment are concurrently included for presentation within a user interface of the video game responsive to the integrating step.

- The method of claim 1, further comprising: presenting, via the computing device, the graphics and the video segment within the user interface.

- The method of claim 1, wherein changes of playback of the video segment during the video game using affects a portion of the gaming vehicle as shown in the user interface.

- The method of claim 1, further comprising: embedding, via the computer, the perspective data within multiple frames of the video sequence.

- The method of claim 1, further comprising: simultaneously presenting, via the computer, the interactive content on a computing device during a mass communication broadcast of the event.

- The method of claim 1, further comprising: detecting, via the computing device, a change in the perspective view within the interactive content from a previous perspective to a subsequent perspective view; and updating, via the computing device, the interactive content with a graphics and a video sequence matching the subsequent perspective view to maintain continuity of the content.

- The method of claim 1, further comprising: overlaying, via the computing device, the video sequence within the interactive content, wherein the sequence obscures at least a portion of a computer based view.

- The method of claim 1, further comprising: overlaying, via the computing device, the graphics within the interactive content, wherein the graphics obscures at least a portion of a video sequence.

- The method of claim 1, wherein the interactive content is a downloadable content associated with the video game, wherein the downloadable content is accessible responsive to a video game achievement.

- A system for integrating panoramic video with a video game comprising: a compositing engine configured to generate an interactive content comprising of a video game graphics and a video sequence, wherein the video game graphic includes an interactive graphics object for a game vehicle of a video game environment shown in a first viewing perspective, wherein the video sequence is captured by a camera of a moving vehicle of a real world event, wherein video sequence shows an interior of the moving vehicle from a second viewing perspective, which is different from the first viewing perspective, wherein movement of game vehicle is interactively controlled by user input, wherein the compositing engine time synchronizes the video sequence and the game vehicle such that motion of the moving vehicle from the video sequence is synchronized to match motion of the game vehicle as controlled by the user, wherein the video sequence is a portion of a panoramic video; and a data store able to persist at least one of the interactive content and a panoramic video metadata.

- The system of claim 10, further comprising: a computing device receiving the panoramic video, wherein the video segment comprises of perspective data linked with a video timeline; the computing device determining a perspective view associated with a graphics of a video game linked with a game timeline at a first time index; the computing device processing the perspective data of the panoramic video to obtain a video sequence matching the perspective view associated with the graphics at a second time index; the computing device synchronizing the video timeline and the game timeline based on a common time index of each of the timelines; and the computing device integrating the graphics and the video sequence into the interactive content, responsive to the synchronizing.

- The system of claim 10, further comprising: a computing device presenting the interactive content within an user interface.

- The system of claim 10, wherein the video is obtained from a camera comprising of multiple different fixed directional lenses and a stitching lens.

- The system of claim 10, wherein a computing device embedding the perspective data within multiple frames of the video sequence.

- The system of claim 10, further comprising: a companion device simultaneously presenting the interactive content on a computing device during a mass communication broadcast of the event.

- The system of claim 10, further comprising: a companion device detecting a change in the perspective view within the interactive content from a previous perspective to a subsequent perspective view; and the companion device updating the interactive content with a graphics and a video sequence matching the subsequent perspective view to maintain continuity of the content.

- The system of claim 10, further comprising: the companion device overlaying the video sequence within the interactive content, wherein the sequence obscures at least a portion of a computer based view.

- The system of claim 10, further comprising: the companion device overlaying the graphics within the interactive content, wherein the graphics obscures at least a portion of a video sequence.

- The system of claim 10, wherein the interactive content is a downloadable content associated with the video game, wherein the downloadable content is accessible responsive to a video game achievement.

- A non-transitory computer readable storage medium having computer usable program code embodied therewith, the computer usable program code comprising: computer usable program code stored in a non-transitory storage medium, if said computer usable program code is executed by a processor it is operable to receive a panoramic video captured by a camera of a moving vehicle of a real world event, wherein the video comprises of perspective data linked with a video timeline; computer usable program code stored in a non-transitory storage medium, if said computer usable program code is executed by a processor it is operable to determine a perspective view associated with a game vehicle of a graphics of a video game linked with a game timeline at a first time index, wherein the graphics includes an interactive graphics object for the game vehicle controlled by a user of the video game in which the game vehicle moves in a game environment per user input, wherein the graphics interactively shows the game vehicle in a third person perspective; computer usable program code stored in a non-transitory storage medium, if said computer usable program code is executed by a processor it is operable to process the perspective data of the panoramic video to obtain a video sequence, from the panoramic video matching the perspective view associated with the graphics at a second time index, wherein the video sequence shows an interior of the moving vehicle in addition to showing at least one occupant of the moving vehicle; computer usable program code stored in a non-transitory storage medium, if said computer usable program code is executed by a processor it is operable to synchronize the video timeline and the game timeline based on a common time index of each of the timelines such that motion of the moving vehicle from the video sequence is synchronized to match motion of the game vehicle as controlled by the user; and computer usable program code stored in a non-transitory storage medium, if said computer usable program code is executed by a processor it is operable to integrate the graphics and the video sequence into an interactive content, responsive to the synchronizing.
