U.S. Pat. No. 9,205,333

MASSIVELY MULTIPLAYER GAMING

Assignee: Ubisoft Entertainment

Issue Date: June 7, 2013

Abstract

A computer program, method, and system for participating in interactive events, such as a massive multiplayer game, includes a personal input executing an event application, a client device coupled to a display and executing a display application, and a server executing a server application. The display application is configured to receive a request from a player to participate in an event, receive the event from the server application, show the event on the display, and determine an event start time corresponding to a time at which the start of the event is shown on the display. The event application is configured to receive sensor data from a sensor, form a plurality of packets, and transmit the packets. The server application is configured to transmit the event to the display application, receive the sensor data from the event application, and compare the sensor data to reference data to generate a score.

Description

The drawing figures do not limit the current invention to the specific embodiments disclosed and described herein. The drawings are not necessarily to scale, emphasis instead being placed upon clearly illustrating the principles of the invention.

DETAILED DESCRIPTION

The following detailed description of embodiments of the present invention references the accompanying drawings that illustrate specific embodiments in which the invention can be practiced. The embodiments are intended to describe aspects of the invention in sufficient detail to enable those skilled in the art to practice the invention. Other embodiments can be utilized and changes can be made without departing from the scope of the current invention. The following detailed description is, therefore, not to be taken in a limiting sense. The scope of the current invention is defined only by the appended claims, along with the full scope of equivalents to which such claims are entitled.

In this description, references to “one embodiment”, “an embodiment”, or “embodiments” mean that the feature or features being referred to are included in at least one embodiment of the technology. Separate references to “one embodiment”, “an embodiment”, or “embodiments” in this description do not necessarily refer to the same embodiment and are also not mutually exclusive unless so stated and/or except as will be readily apparent to those skilled in the art from the description. For example, a feature, structure, act, etc. described in one embodiment may also be included in other embodiments, but is not necessarily included. Thus, the current technology can include a variety of combinations and/or integrations of the embodiments described herein.

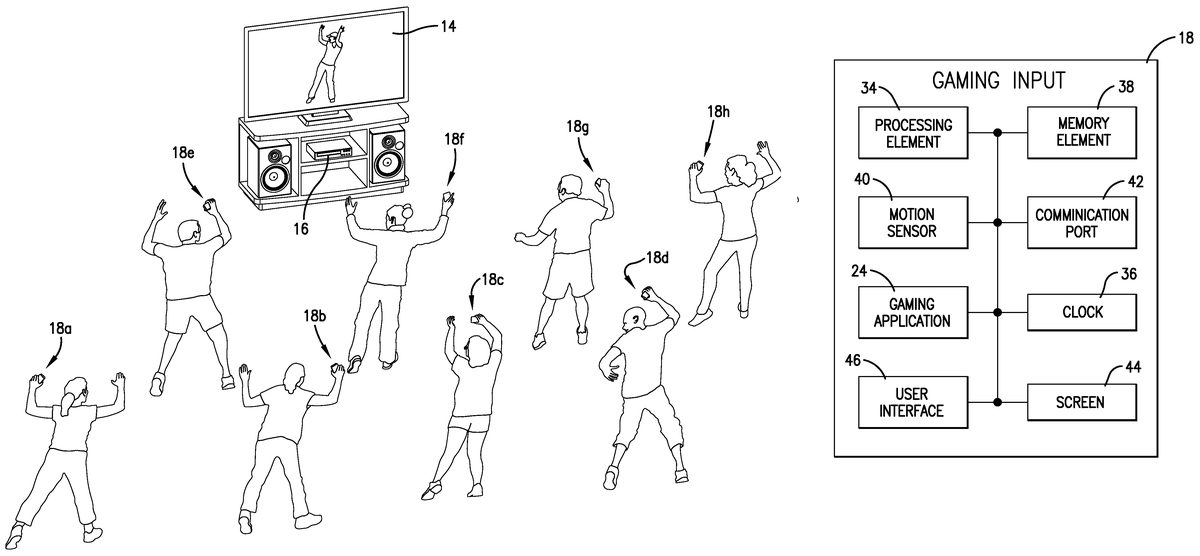

A system 10 that may be used for participating in an interactive event, constructed in accordance with various embodiments of the current invention, is shown in FIG. 1. The event could be, or may include, playing electronic or computer-based games on a communication network 12. The electronic games, hereinafter “game” or “games”, typically include interactive video games in which the player responds to images on a display 14. The player may engage in actions or motions when prompted by proceedings shown on the display 14. For example, the player may dance by mimicking or following the motions of a dance leader shown on the display 14. Or, the player may simulate participating in a sport, such as throwing a baseball, hitting a tennis ball, or dribbling a basketball. In addition, the player may engage in simulated combat activity such as boxing. Alternatively, the player may respond to proceedings on the display 14 by singing. Thus, the games may include dancing games, sporting games, such as basketball, baseball, tennis, golf, bowling, and the like, combat games, role-playing games, adventure games, and so on. The system 10 may broadly comprise a client device 16, a personal input 18, a server 20, a display application 22, an event application 24, and a server application 26. By way of example to demonstrate the features of the current invention, but not intended to be limiting, an electronic game and in particular, a dancing game, will be the interactive event discussed in this application and shown in the figures. Furthermore, embodiments of the current invention may be implemented in hardware, software, firmware, or combinations thereof.

The communication network 12 generally allows communication between the personal inputs 18 and the server 20 as well as the server 20 and the client devices 16. The communication network 12 may include local area networks, metro area networks, wide area networks, cloud networks, the Internet, intranets, and the like, or combinations thereof. The communication network 12 may also include or connect to voice and data communication systems such as cellular networks, such as 2G, 3G, or 4G, and public ordinary telephone systems. The communication network 12 may be wired, wireless, or combinations thereof and may include components such as switches, routers, hubs, access points, and the like. Furthermore, the communication network 12 may include components or devices that are capable of transmitting and receiving radio frequency (RF) communication using wireless standards such as Wi-Fi, WiMAX, or other Institute of Electrical and Electronics Engineers (IEEE) 802.11 and 802.16 protocols.

The display 14, as seen in FIGS. 1-6, generally shows or displays the actions and proceedings of the event or game along with scores or information or data related to the game. The display 14 may include video devices of the following types: plasma, light-emitting diode (LED), organic LED (OLED), Light Emitting Polymer (LEP) or Polymer LED (PLED), liquid crystal display (LCD), thin film transistor (TFT) LCD, LED side-lit or back-lit LCD, heads-up displays (HUDs), projection, combinations thereof, or the like. The display 14 may possess a square or a rectangular aspect ratio and may be viewed in either a landscape or a portrait mode. Examples of the display 14 include monitors or screens associated with tablets or notebook computers, laptop computers, desktop computers, as well as televisions, smart televisions, wall projectors, theater projectors, or similar video displays.

The client device 16, as seen in FIGS. 1-7, generally receives aspects of the game from the server 20 and communicates the video content of the game to the display 14. In various embodiments, the client device 16 may include or have access to an audio system that receives the audio content of the game. The client device 16 may be capable of running or executing web browsers, web viewers, or Internet browsers, which may be used to access the server application 26. Examples of the client device 16 include tablet computers, notebook computers, laptop computers, desktop computers, and the like, as well as smart video devices such as Blu-Ray players or other video streaming devices that are capable of running web browsers or video-based applications. In further embodiments, the client device 16 may be a stand-alone gaming console. In various embodiments, the client device 16 may be incorporated with, integrated with, or housed within the display 14, as shown in FIGS. 2a-2b. Furthermore, the client device 16 may execute or run the display application 22.

The client device 16 may include a processing element 28, a clock 30, and a memory element 32. The processing element 28 may include processors, microprocessors, microcontrollers, digital signal processors (DSPs), field-programmable gate arrays (FPGAs), analog and/or digital application-specific integrated circuits (ASICs), and the like, or combinations thereof. The processing element 28 may generally execute, process, or run instructions, code, software, firmware, programs, applications, apps, processes, services, daemons, or the like, or may step through states of a finite-state machine.

The clock 30 may include circuitry such as oscillators, multivibrators, phase-locked loops, counters, or combinations thereof, and may generate or measure timing data such as the time period that elapses between two actions. Given communication with an external time of day source, the clock circuitry may also generate time of day data. The clock circuitry may be able to generate timing or time of day data with a resolution ranging from approximately 1 millisecond (ms) to approximately 10 ms. The clock 30 may be in communication with the processing element 28 and the memory element 32, and may be accessed by the display application 22.

The memory element 32 may include data storage components such as read-only memory (ROM), programmable ROM, erasable programmable ROM, random-access memory (RAM), hard disks, floppy disks, optical disks, flash memory, thumb drives, universal serial bus (USB) drives, and the like, or combinations thereof. The memory element 32 may include, or may constitute, a non-transitory “computer-readable storage medium”. The memory element 32 may store the instructions, code, software, firmware, programs, applications, apps, services, daemons, or the like that are executed by the processing element 28. The memory element 32 may also store settings, data, documents, sound files, photographs, movies, images, databases, and the like. The processing element 28 may be in communication with the memory element 32 through address busses, data busses, control lines, and the like.

The personal input 18, as seen in FIGS. 1-6 and 8, may generate sensor data based on the motions of the player in response to the game and may transmit the sensor data to the server 20. The personal input 18 is generally held in the player's hand. In embodiments of the present invention, the player is instructed to hold or otherwise attach the personal input 18 to the player's right hand/wrist. Alternatively, the player is questioned as to which hand is his dominant hand (i.e., the hand in which the player prefers to hold the personal input 18), and the generated sensor data accounts for whether the player holds the personal input 18 in his left or right hand. In yet further embodiments of the present invention, the personal input 18 may be strapped, or otherwise coupled, to the player's wrist, arm, or otherwise strapped to the player's body. Generally, the personal input 18 may be held in either hand or strapped to either wrist. Examples of the personal input 18 include smart phones, cell phones, mobile phones, personal digital assistants (PDAs), smart watches, smart bracelets, Wi-Fi-enabled watches, Wi-Fi-enabled bracelets, and the like. The personal input 18 may include a processing element 34, a clock 36, a memory element 38, a sensor 40, and a communication port 42. Furthermore, the personal input 18 may include a screen 44 and a user interface 46 with inputs such as buttons, pushbuttons, keypads, keyboards, or combinations thereof. The user interface 46 may also include a touch screen occupying the entire screen 44 or a portion thereof so that the screen 44 functions as part of the user interface 46. In some embodiments, the personal input 18 may further include a camera or other video capture device. The personal input 18 may also include a microphone or other audio capture device; a retina scanner for determining eye movement; a heart rate or other physiological sensor; a brainwave sensor; or a force sensor for determining a load applied to an object.

The processing element 34 may be substantially the same as the processing element 28 in structure and function. The clock 36 may be substantially the same as the clock 30 in structure and function and may be accessed by the event application 24. The memory element 38 may be substantially the same as the memory element 32 in structure and function and may include or may constitute a computer-readable storage medium.

The sensor 40 generally senses or detects movements or actions of the participant. In some embodiments, the sensor 40 may sense motion and may broadly include accelerometers, tilt sensors, inclinometers, gyroscopes, combinations thereof, or other devices including piezoelectric, piezoresistive, capacitive sensing, or micro electromechanical systems (MEMS) components. The sensor 40 may sense motion along one axis of motion or multiple axes of motion. Sensors 40 that sense motion along three orthogonal axes, such as X, Y, and Z, are often used. In various embodiments, the sensor 40 may measure the acceleration, such as the gravitation (G) force, of the personal input 18 and may output the measured data in a digital binary format. An exemplary range of acceleration data may be from approximately 4, at low acceleration, to approximately 30, at high acceleration.

In other embodiments, the sensor 40 may sense acts or activities of the participant, such as singing. Thus, the sensor 40 may include a microphone or other transducing components that capture sound and produce audio data. In yet other embodiments, the sensor 40 may include optical sensors such as still cameras, motion video cameras, photodetectors, and the like that produce video data. In still other embodiments, the sensor 40 may measure pressure data, brainwave activity, eye motion, heart rate activity, and other human physiological quantities.

The sensor 40 may sample data at a frequency that may range from approximately 10 hertz (Hz) to approximately 100 Hz. The sensor 40 may be in communication with the processing element 34 and the memory element 38. It is to be understood that other sensing technologies may also be used.

The communication port 42 generally allows the personal input 18 to communicate with the server 20 through the communication network 12. The communication port 42 may be wireless and may include antennas, signal or data receiving circuits, and signal or data transmitting circuits. The communication port 42 may transmit and receive radio frequency (RF) signals and/or data and may operate utilizing communication standards such as cellular 2G, 3G, or 4G, IEEE 802.11 or 802.16 standards, Bluetooth™, or combinations thereof. Alternatively, or in addition, the communication port 42 may be wired and may include connectors or couplers to receive metal conductor cables or connectors or optical fiber cables. The communication port 42 may be in communication with the processing element 34 and the memory element 38.

The server 20, as seen in FIGS. 1 and 9, generally runs or executes the server application 26. The server 20 may include application servers, gaming servers, web servers, or the like, or combinations thereof. The server 20 may further include a processing element 48, a clock 50, and a memory element 52. The processing element 48 may be substantially the same as the processing element 28 in structure and function and may be accessed by the server application 26. The clock 50 may be substantially the same as the clock 30 in structure and function. The memory element 52 may be substantially the same as the memory element 32 in structure and function and may include or may constitute a computer-readable storage medium.

The display application 22 generally manages the displaying or showing of the game on the display 14. The display application 22 may also coordinate with an audio system to play the audio portion of the game. The display application 22 may include instructions, code, software, firmware, programs, applications, apps, services, daemons, or the like. In some embodiments, the display application 22 may be executed as markup language or scripting code that is executed from within a web browser, web viewer, or Internet browser. In other embodiments, the display application 22 may be executed as a standalone program or application on the client device 16. In still other embodiments, the display application 22 may be accessed through third-party social networking web sites. For example, a player may click on a link on a third-party social networking site that transfers the player to the display application 22.

The display application 22 is generally executed by a player wishing to start a game or participate in an event. Once executed, the display application 22 may include a synchronization process with the server 20, discussed in more detail below. The synchronization process may determine a client device clock offset, which is a time difference between the clock 30 of the client device 16 and the clock 50 of the server 20. The client device clock offset may also be thought of as a clock offset for the display 14 as well. As a result of the synchronization process, it may be determined that the client device clock offset is, for example, 1.5 seconds (s), indicating that the client device clock 30 is 1.5 s ahead of the server clock 50. In some instances, the client device clock offset may be negative, indicating that the client device clock 30 is behind the server clock 50. However, the (positive or negative) sign of the client device clock offset is arbitrary and could mean the opposite of what is mentioned above. Once the client device clock offset is determined, it may be recorded by the server application 26.

Either before or after the synchronization process is executed, the display application 22 may receive from the server application 26 a list of types of interactive events (e.g., games) that can be played with the personal input 18. The player may select the type of game in which he is interested and then may be presented with the option of starting a new game. In exemplary embodiments, the game may correspond to a dance room. When the player selects a game, the server application 26 may transmit a code to the display application 22 to be shown on the display 14. As an example, the code may correspond to a dance room number. The code may be in the form of an alphanumeric code or a quick response (QR) code that the player can either type into the personal input 18 or scan into the personal input 18.
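As a minimal sketch of how a server might issue an alphanumeric event code, assuming a six-character code and an alphabet that omits look-alike characters (neither of which is specified in the description), the following Python helper draws random codes until it finds one not already in use. The names `ALPHABET` and `new_event_code` are hypothetical.

```python
import secrets
import string

# Hypothetical alphabet: uppercase letters and digits without look-alikes (O/0, I/1).
ALPHABET = "".join(
    c for c in string.ascii_uppercase + string.digits if c not in "O0I1"
)


def new_event_code(active_codes, length=6):
    """Return a code not present in active_codes and register it there."""
    while True:
        code = "".join(secrets.choice(ALPHABET) for _ in range(length))
        if code not in active_codes:
            active_codes.add(code)
            return code


if __name__ == "__main__":
    rooms = set()
    print(new_event_code(rooms))  # e.g. a code the player types into the personal input
```

A short code of this kind is easy to type on a phone; a QR code could carry the same string for scanning.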

Once the player has chosen a game to start, the display application 22 may request the game from the server application 26 running on the server 20. The display application 22 may then receive the game from the server 20 and may show the game on the display 14. In some embodiments, at least a portion of the game may be buffered on the client device 16, such as with the memory element 32, before it is shown on the display 14. The display application 22 may record an event start time (e.g., the time of day) at which the start of the game was displayed and may transmit the event start time to the server application 26. Furthermore, the display application 22 may monitor the progress of the game being shown on the display 14 to determine if the game is shown smoothly and completely. For example, if there are delays in the game being transmitted from the server 20, there may be a delay in the game being shown on the display 14. In such a situation, the display application 22 may send a message to the server application 26 that includes the time elapsed in the game and the amount of time by which the game has been delayed.

The display application 22 may also receive player scores from the server application 26. In various embodiments, the display application 22 may receive the top eight player scores that are updated on a regular basis. The display application 22 may show the scores along with the associated player names in an area of the display 14 that does not interfere with the showing of the game. After the game has completed, the display application 22 may show the name of the winner of the game along with his score.
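The selection of the top eight scores can be illustrated with a short Python sketch. It assumes scores are held in a dictionary keyed by player name, which is a hypothetical data layout rather than one taken from the description; `top_scores` is likewise an illustrative name.

```python
import heapq


def top_scores(scores, n=8):
    """Return the n highest (name, score) pairs, best first."""
    return heapq.nlargest(n, scores.items(), key=lambda item: item[1])


if __name__ == "__main__":
    scores = {f"player{i}": i * 10 for i in range(1, 11)}
    for name, score in top_scores(scores):
        print(name, score)
```

The first entry of the returned list is also the winner once the game has completed.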

The event application 24 generally synchronizes with the server 20 and manages the transfer of the sensor data from the personal input 18 to the server 20. The event application 24 may include instructions, code, software, firmware, programs, applications, apps, services, daemons, or the like. The event application 24 may be executed on the personal input 18 by the player in order to play the game. Once executed, the event application 24 may include a synchronization process with the server 20, discussed in more detail below. The synchronization process may determine a personal input clock offset that is the time difference between the clock 36 of the personal input 18 and the server clock 50. Once the personal input clock offset is determined, it may be recorded by the server application 26.

Either before or after the synchronization process, the event application 24 may prompt the player to enter the code that identifies a particular game. The code may be shown on the display 14 and may be scanned from the display 14 using a camera on the personal input 18 or may be entered using the user interface 46 on the personal input 18. In some embodiments, the code may be scanned from another player's personal input 18. The event application 24 may further initialize and handshake with the server 20 by retrieving data from the sensor 40 and any user interface 46 inputs that are active and sending the data to the server 20. The server 20 may send data back to the personal input 18 that acknowledges the player's participation in the game, such as showing statistics of the game, showing a leader board, sending a command or code to vibrate the personal input 18, and so forth.

Once the game begins, the event application 24 may receive data from the sensor 40 at regular intervals set by the sensor data capture rate. Different personal inputs 18 may capture sensor data at different rates. In order for the server application 26 to handle the sensor data from all of the different personal inputs 18 with different sensor data capture rates in a uniform fashion, the event application 24 may modify the sensor data to adapt to a single sensor data capture rate standard. Although other standard capture rates may be utilized, a standard capture rate of 20 Hz, or a 50 millisecond (ms) period, generally provides sufficient sensor data resolution without overloading the communication network 12 and the server 20 with too much data. Thus, the event application 24 may ignore or delete some of the sensor data if the data capture rate of the sensor 40 is greater than 20 Hz. Likewise, the event application 24 may perform a linear interpolation, or similar mathematical operation, on the sensor data if the data capture rate of the sensor 40 is less than 20 Hz. In addition, to further reduce the amount of data that is transmitted through the communication network 12 and handled by the server 20, the event application 24 may record just a single value for each sensor data capture. While the sensor 40 may supply three data components (for three-axis motion sensing), the event application 24 may calculate the vector magnitude of the three components to be recorded as the sensor data value.
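The rate normalization and magnitude reduction described above might look like the following Python sketch. It is an illustration under stated assumptions, not the patented implementation: the function names `magnitude` and `resample` are hypothetical, timestamps are assumed to be in seconds and strictly increasing, and a single linear-interpolation routine is used for both cases. When the source rate is a multiple of 20 Hz, the output grid coincides with existing samples, so the intermediate samples are simply ignored.

```python
import math
from bisect import bisect_right

STANDARD_RATE_HZ = 20.0  # 50 ms period, the standard rate named in the description


def magnitude(x, y, z):
    """Collapse a three-axis reading into a single vector magnitude."""
    return math.sqrt(x * x + y * y + z * z)


def resample(times, values, rate_hz=STANDARD_RATE_HZ):
    """Linearly interpolate (times, values) onto a uniform grid at rate_hz.

    The grid starts at times[0] and never extends past times[-1].
    """
    period = 1.0 / rate_hz
    count = int((times[-1] - times[0]) * rate_hz + 1e-9) + 1
    out = []
    for k in range(count):
        t = times[0] + k * period
        i = bisect_right(times, t) - 1  # last source sample at or before t
        if i >= len(times) - 1:  # at the final source sample
            out.append(values[-1])
            continue
        frac = (t - times[i]) / (times[i + 1] - times[i])
        out.append(values[i] + frac * (values[i + 1] - values[i]))
    return out


if __name__ == "__main__":
    # A 10 Hz sensor upsampled to 20 Hz.
    print(resample([0.0, 0.1, 0.2], [0.0, 1.0, 2.0]))
    print(magnitude(3.0, 4.0, 12.0))
```

Reducing each capture to one magnitude value cuts the payload to a third of the raw three-axis data, at the cost of discarding direction.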

At certain intervals, the event application 24 may create a packet that includes a plurality of sensor data capture values. The intervals may follow a schedule that is determined for each game. The schedule may correspond to actions of the game. In exemplary embodiments, the schedule may correspond to the performing of dance moves and may be variable. For example, one dance move may require 1 s to perform while another dance move may require 5 s to perform, and other dance moves may require times in between. Thus, a schedule may be created with a series of times relative to the beginning of the game that correspond to the dance moves of the game. In order for the event application 24 to create the packets at the correct time, the server application 26 may transmit a personal input adjusted event start time, as discussed in more detail below, to the event application 24. As an example, the schedule may include the following times relative to the personal input adjusted event start time: 2.5 s, 4 s, 8 s, 9 s, 11.5 s, and so forth, indicating dance moves completing at each of those times. Accordingly, the event application 24 may create a packet for each of the listed time periods. A first packet may include sensor data captured between 0 s and 2.5 s. A second packet may include sensor data captured between 2.5 s and 4 s. The remainder of the packets may be created in the same fashion. Each packet may include the schedule time along with a block of sensor data that includes all of the sensor data capture values for the corresponding dance move. The packet may also include a timestamp from the personal input clock 36 and/or other relevant data. In various embodiments, the event application 24 may send and receive data using known packet protocols, such as transmission control protocol/internet protocol (TCP/IP).
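The schedule-driven packetization can be sketched in Python as follows. The sketch assumes the sensor values have already been normalized to the 20 Hz standard rate, so that sample `i` was captured `i × 50 ms` after the personal input adjusted event start time. The packet layout (a dictionary with `schedule_time` and `data` keys) and the choice to assign a sample falling exactly on a boundary to the following packet are assumptions made for illustration.

```python
def build_packets(samples, schedule, period_s=0.05):
    """Split uniformly sampled values into one packet per scheduled move.

    samples[i] is the value captured i * period_s after the adjusted start.
    schedule is an increasing list of move end times in seconds.
    """
    packets = []
    start = 0.0
    for end in schedule:
        lo = int(round(start / period_s))
        hi = int(round(end / period_s))
        packets.append({"schedule_time": end, "data": samples[lo:hi]})
        start = end
    return packets


if __name__ == "__main__":
    samples = list(range(230))  # 11.5 s of placeholder values at 20 Hz
    for packet in build_packets(samples, [2.5, 4, 8, 9, 11.5]):
        print(packet["schedule_time"], len(packet["data"]))
```

With the example schedule from the text, the first packet holds the 50 values captured between 0 s and 2.5 s and the second holds the 30 values captured between 2.5 s and 4 s.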

The server application 26 generally supplies the game content and tracks the score of each player. The server application 26 may include instructions, code, software, firmware, programs, applications, apps, services, daemons, or the like. The server application 26 may be accessed when a player executes either the display application 22 or the event application 24. When initially accessed by the display application 22, the server application 26 may transmit to the client device 16 (to be shown on the display 14) a list of types of games that can be played with the personal input 18. The player may select the type of game in which he is interested and then may be presented with options such as starting a new game, joining a game that is about to begin, and so forth. The server application 26 may also present information such as high scores, player statistics, previous scores for the player, a profile for the player, and the like. The player generally chooses an option and the server application 26 transmits an event code to be shown on the display 14. At least the first player enters the event code on his personal input 18. Other players may enter the code as well.

At some point, the server 20 may determine the client device clock offset with a synchronization process that includes the following steps. The steps may include or adapt at least a portion of the network time protocol (NTP) clock synchronization algorithm, where the roles of server and client are inverted. The server 20 may send a first packet transmission to the client device 16 at time t0, which may refer to the local time of the server 20 and may be included as part of the first packet. The client device 16 may receive the first packet at time t1, which may refer to the local time of the client device 16. Subsequently, the client device 16 may send a second packet transmission back to the server 20 at time t2, which may refer to the local time of the client device 16 and may be included as part of the second packet. The second packet may also include the times t0 and t1. The server 20 may receive the second packet at time t3, the local time of the server 20. The round trip delay time (tRTD) is computed as [(t3−t0)−(t2−t1)]. The offset time (tOff) is computed as [(t1−t0)+(t2−t3)]/2, so that a positive value indicates that the client device clock 30 is ahead of the server clock 50. Each calculation of the round trip delay time and the offset time is referred to as a “sample”, and the sending and receiving of the packets is “sampling”. While the sampling is occurring, the server application 26 may also be calculating the standard deviation of the tRTD, the average (mean) of the tRTD, and the standard deviation of the tOff. The sampling may continue until a minimum number of samples have been taken. The sampling may stop when a maximum number of samples have been taken. An exemplary maximum number of samples may be 75. The sampling may also stop when the standard deviation of the tRTD is below a first threshold value, which is an indication that the round trip delay times are consistent. The sampling may further stop when the standard deviation of the tOff is below a second threshold value, which is an indication that the offset times are consistent. In addition, the sampling may stop when the average of the tRTD is less than the maximum value of the tRTD multiplied by a first factor, indicating that the round trip delay times are small. When the sampling has stopped, then a weighted tOff is calculated using the weight of the tRTD, thereby giving lower latency tOff time samples more weight than higher latency tOff time samples.
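The sampling procedure can be sketched in Python under several stated assumptions. The offset is written in the standard NTP form, [(t1 − t0) + (t2 − t3)]/2, which is positive when the client clock is ahead of the server clock. The minimum sample count, both standard-deviation thresholds, and the factor are hypothetical values; only the maximum of 75 samples comes from the description. The weighting by 1/tRTD is one plausible way to favor low-latency samples and is not confirmed by the source.

```python
import statistics


def sample(t0, t1, t2, t3):
    """Return (round-trip delay, offset) for one exchange.

    t0 and t3 are read from the server clock, t1 and t2 from the client clock.
    """
    rtd = (t3 - t0) - (t2 - t1)
    offset = ((t1 - t0) + (t2 - t3)) / 2.0
    return rtd, offset


def should_stop(rtds, offsets, min_samples=10, max_samples=75,
                rtd_sd_max=0.005, off_sd_max=0.005, factor=0.5):
    """Apply the stop conditions; all thresholds except max_samples are assumed."""
    n = len(rtds)
    if n < min_samples:
        return False
    if n >= max_samples:
        return True
    if statistics.pstdev(rtds) < rtd_sd_max:
        return True
    if statistics.pstdev(offsets) < off_sd_max:
        return True
    return statistics.mean(rtds) < max(rtds) * factor


def weighted_offset(rtds, offsets):
    """Average the offsets, weighting each by 1/rtd (assumed weighting)."""
    weights = [1.0 / max(r, 1e-6) for r in rtds]
    return sum(w * o for w, o in zip(weights, offsets)) / sum(weights)


if __name__ == "__main__":
    # Client clock 1.5 s ahead, 20 ms one-way delay, 10 ms client turnaround.
    print(sample(100.0, 101.52, 101.53, 100.05))
```

Because the offset estimate assumes symmetric network paths, samples with a short round trip are the least likely to be skewed, which is why they receive more weight.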

The weighted tOff represents the weighted average clock offset between the server 20 and the client device 16. A positive client device clock offset indicates that the client device clock 30 is ahead of the server clock 50, whereas a negative result indicates that the client device clock 30 is behind the server clock 50. Periodically, the server application 26 may repeat the synchronization process in order to account for clock drift or other factors that may change the offset. In various embodiments, the server application 26 may repeat the synchronization process every 1, 5, or 10 minutes.

When the server application 26 is accessed by the event application 24, the server application 26 may receive the event code for a particular game that is to be played by the player. The server application 26 may associate the event code with an address or other electronic data identifier that corresponds to the particular personal input 18. The event code may also identify the client device 16 that is receiving the game that the player is playing. In addition, the server application 26 may also determine the personal input clock offset with the synchronization process discussed above, with the exception that the sampling occurs between the server 20 and the personal input 18. The server application 26 may also send other initializing commands or codes to the personal input 18 to alert the player that he has joined a game.

After the server application 26 has verified all of the players for a particular game, the server application 26 may transmit or stream the content of the game to the client device 16 to be shown on the display 14 for each client device 16 that requested to join the game. The server application 26 may continue transmitting the game to each client device 16 until the game has ended.

Once each client device 16 begins showing the game on the display 14, the display application 22 running on each client device 16 may transmit the event start time to the server application 26. The server application 26 may adjust the event start time to include the client device clock offset for each client device 16, thereby creating a client device adjusted event start time. The server application 26 may record each client device adjusted event start time.

The server application 26 may have utilized the event code to previously associate each player's personal input 18 with a client device 16 and accompanying display 14 on which the player's game is being shown. For each personal input 18, the server application 26 may further adjust the client device adjusted event start time of the associated client device 16 to include the personal input clock offset of the personal input 18, thereby creating the personal input adjusted event start time. The server application 26 may then transmit the personal input adjusted event start time to the event application 24 running on each personal input 18.
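
The two-stage adjustment described above can be illustrated with a minimal sketch. The description does not state the arithmetic, so the sign convention here is an assumption derived from the offset definition (a positive offset means the device clock is ahead of the server clock): a device timestamp is converted to server time by subtracting that device's offset, and a server timestamp is converted to a device's clock by adding its offset. The function names are hypothetical.

```python
def to_client_adjusted_start(event_start_ms: float, client_offset_ms: float) -> float:
    """Convert the start time reported by the display application (client
    device clock) to server time.

    Assumption: client_offset_ms = client device clock - server clock.
    """
    return event_start_ms - client_offset_ms


def to_personal_input_adjusted_start(client_adjusted_start_ms: float,
                                     personal_input_offset_ms: float) -> float:
    """Convert a server-time start to the personal input's own clock.

    Assumption: personal_input_offset_ms = personal input clock - server clock.
    """
    return client_adjusted_start_ms + personal_input_offset_ms


# Client device clock is 120 ms ahead of the server;
# the personal input clock is 80 ms behind the server.
server_start = to_client_adjusted_start(10_000.0, 120.0)
device_start = to_personal_input_adjusted_start(server_start, -80.0)
```

With these illustrative numbers, the event application would treat 9,800 ms on its own clock as the moment the game started on the display.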

The game may be presented as a sequence of actions that previously have been determined to occur. In exemplary embodiments, the actions are previously-determined dance moves that are performed to a song. Before the game is released to the public, a reference data file may be created that includes the optimal responses to each event. Each game (or song) may have its own reference data file. The reference data file may be created by having an expert player, who is retaining a personal input 18, play the game while capturing and recording the sensor data from the sensor 40. In exemplary embodiments, the reference data file is created by having a professional dancer dance to the song while holding or wearing a personal input 18. In addition, the sensor data may be measured at the standard data capture rate of 20 Hz, or one measurement every 50 ms. Accordingly, the reference data file may include an entry of measured data for every 50 ms of the song. Thus, for example, a three-minute song may have a reference data file with 3,600 entries (20 entries/second × 180 seconds) of measured data timestamped at 50 ms intervals. As with the player's sensor data being transmitted from the personal inputs 18, each sensor data value of the reference data file is the vector magnitude of the measured sensor data. In some embodiments, the server application 26 may utilize more than one data file for the same game (or song) and average the data files to obtain the reference data file. For example, in embodiments of the present invention, three data files may be obtained and then averaged (or combined using another mathematical technique) to calculate the reference data file. The server application 26 may include, or have access to, the reference data file. In alternative embodiments of the present invention, the reference data file may be generated, including automatically generated, from a standardized or ideal model of the measured sensor data, as determined by a computer software program.
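
As a rough illustration of how such a reference data file might be assembled, the sketch below reduces each three-axis sample to its vector magnitude and averages several recordings entry by entry. The three-axis sample layout, the requirement that recordings have equal length, and all function names are assumptions made for the example; the description only specifies the 20 Hz rate, the vector magnitude, and the averaging.

```python
import math

CAPTURE_RATE_HZ = 20                         # standard data capture rate
SAMPLE_PERIOD_MS = 1000 // CAPTURE_RATE_HZ   # one measurement every 50 ms


def vector_magnitude(sample):
    """Magnitude of one (x, y, z) sensor sample."""
    x, y, z = sample
    return math.sqrt(x * x + y * y + z * z)


def build_reference(recordings):
    """Average several equally long recordings into one reference file.

    Each recording is a list of (x, y, z) samples captured at 20 Hz.
    Returns a list of (timestamp_ms, mean_magnitude) entries.
    """
    length = len(recordings[0])
    if any(len(r) != length for r in recordings):
        raise ValueError("recordings must have the same number of samples")
    reference = []
    for i in range(length):
        mean = sum(vector_magnitude(r[i]) for r in recordings) / len(recordings)
        reference.append((i * SAMPLE_PERIOD_MS, mean))
    return reference


def expected_entries(song_seconds):
    """Number of reference entries for a song of the given length."""
    return CAPTURE_RATE_HZ * song_seconds
```

For the three-minute song in the example above, `expected_entries(180)` gives the 3,600 entries mentioned in the text.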

While the game is being played, each personal input 18 is transmitting packets of sensor data, captured by the sensor 40, through the communication network 12 to the server 20. The score for each player is determined by his respective sensor data and how closely the sensor data matches the reference data. Accordingly, the server application 26 may compare the sensor data to the reference data. Each packet of sensor data includes a schedule time, relative to the beginning of the game (or song), and a block of sensor data. The server application 26 may retrieve data from the reference data file that corresponds to the data of the packet from the personal input 18. Following the example set forth above in discussing the event application 24, the server application 26 may retrieve reference data from 0 s to 2.5 s to compare to the first packet from the personal input 18. The server application 26 may retrieve reference data from 2.5 s to 4 s to compare to the second packet from the personal input 18, and so forth.
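
The lookup of the matching reference block can be sketched as a simple slice, assuming the reference data is held as a list with one magnitude per 50 ms starting at the beginning of the song. The function name and the list representation are hypothetical.

```python
SAMPLE_PERIOD_MS = 50  # 20 Hz standard data capture rate


def reference_block(reference, schedule_time_ms, sample_count):
    """Return the reference values that line up with one packet.

    reference: list of magnitudes, one per 50 ms, index 0 at song start.
    schedule_time_ms: packet start time relative to the start of the song.
    sample_count: number of samples in the packet's block of sensor data.
    """
    start = int(schedule_time_ms) // SAMPLE_PERIOD_MS
    return reference[start:start + sample_count]
```

Under these assumptions, a first packet covering 0 s to 2.5 s maps to reference entries 0 through 49, and a second packet starting at 2.5 s begins at entry 50.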

To calculate how close the sensor data is to the reference data, the server application 26 may perform a correlation, such as a Spearman rank correlation, between a player's sensor data and the reference data. Specifically, the server application 26 may perform a correlation between the player's sensor data and the reference data on a packet-by-packet basis, correlating a packet of sensor data to a corresponding block of reference data. Each packet of sensor data generally corresponds to a dance move. Thus, each dance move is scored separately. Typically, the greater the correlation between the player's sensor data and the reference data, the greater the score for the player. In addition, in some embodiments, the server application 26 may compare the player's sensor data with three reference data files and average the comparison results or use the median result to determine the player's score.
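
A Spearman rank correlation can be computed as the Pearson correlation of the ranks of the two sequences. The following is a minimal standard-library sketch of that calculation for one packet. The mapping from correlation to points in `move_score`, including the 100-point scale and the choice to give zero points for negative correlations, is a hypothetical choice for illustration and is not taken from the description.

```python
def average_ranks(values):
    """Rank values from 1 to n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equally long sequences of at least 2 values")
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        return 0.0  # constant block: treated as uncorrelated (an assumption)
    return cov / (var_x * var_y) ** 0.5


def move_score(player_block, reference_block, max_points=100):
    """Hypothetical scoring rule: higher correlation earns more points."""
    return max(0.0, spearman(player_block, reference_block)) * max_points
```

Because only ranks are compared, a player whose motion rises and falls at the same moments as the reference scores well even if the absolute magnitudes differ.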

The server application 26 performs the same comparison calculation for each player's sensor data and determines a score for each player. The server application 26 may identify a player by addresses or other alphanumeric identifiers contained within the packet data, such as within a header, that is transmitted from the personal inputs 18 to the server 20. The total score may be updated after each packet of sensor capture data, or corresponding dance move, is scored. The server application 26 may transmit the top eight, or other predetermined number of, scores to all of the client devices 16 so that the scores can be shown on the displays 14. After the game is over, the server application 26 may transmit the top scores to each client device 16 to be shown on the display 14. In addition, the server application 26 may transmit each player's score to his or her personal input 18. Furthermore, the server application 26 may transmit the commands or codes to the personal input 18 of the winner so that the personal input 18 lights up, makes a sound, vibrates, or combinations thereof.
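
The running totals and the top-eight list could be kept as in the sketch below. The `Scoreboard` class and its method names are hypothetical; only the per-move accumulation and the predetermined number of top scores come from the description.

```python
import heapq
from collections import defaultdict


class Scoreboard:
    """Accumulates per-move scores and reports the current leaders."""

    def __init__(self, top_n=8):
        self.top_n = top_n
        self.totals = defaultdict(float)  # player identifier -> total score

    def add_move_score(self, player_id, score):
        """Update a player's total after one packet (dance move) is scored."""
        self.totals[player_id] += score

    def top_scores(self):
        """Return up to top_n (player_id, total) pairs, highest total first."""
        return heapq.nlargest(self.top_n, self.totals.items(),
                              key=lambda item: item[1])
```

The list returned by `top_scores()` is what would be sent to every client device for display, while each individual total could be sent to the matching personal input.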

The system 10 may operate as follows. A plurality of participants may be interested in participating in an interactive event. For example, a plurality of players may be interested in playing a game, typically as contestants in the same game. In some situations, the players may be geographically separated with each player having his own client device 16, display 14, and personal input 18, as seen in FIGS. 2-5. In other situations, a plurality of players may share the same location such as a large room, a hall, an auditorium, or the like, wherein each player possesses his own personal input 18a-18f, but all the players view the same display 14 (with one or more screens) connected to a client device 16, as shown in FIG. 6. In any case, one or more players may use the display application 22 being executed on a client device 16. In certain situations, the players may first access a third-party social networking program on the client device 16 that has a link to the display application 22. The players may then select the link to access the display application 22. In such situations, the name of the player, as registered with the third-party social networking program, may be passed on to the display application 22 and used to identify the player when displaying scores and so forth.

Once the players choose a game to play, the server application 26 may transmit an event code to each client device 16 that requested the game. The event code may be shown on the display 14. The event code may identify the particular game as well as the particular client device 16 that is receiving the game. The players may then enter the code on their personal inputs 18, which subsequently transmit the code to the server 20. The server application 26 may then engage in the synchronization process with both the display application 22 and the event application 24, as discussed above. These processes may determine the client device clock 30 offset and the personal input clock 30 offset.

After the initialization and synchronization are complete, the server application 26 may transmit, or stream, the game to all of the client devices 16 that requested it. Each display application 22 may transmit the event start time to the server application 26. The server application 26 may adjust each event start time to form the client device adjusted event start time associated with each client device 16. For each personal input 18, the server application 26 retrieves the associated client device adjusted event start time and further adjusts it with the personal input clock offset to form the personal input adjusted event start time. The server application 26 may then transmit the appropriate personal input adjusted event start time to each personal input 18.

As the game is shown on the displays 14, the players may respond by dancing and mimicking the motions of the dancer on the display 14. Typically, a player may hold the personal input 18 in his or her hand while dancing, or the personal input 18 may be coupled to the player's arm or wrist. The sensor 40 may record sensor data as the player moves while dancing, and the event application 24 may convert the sensor data to the standard data capture rate, if necessary. Furthermore, the event application 24 may create packets of sensor data that correspond to certain actions of the game, such as dance moves. Each packet may include a time that is relative to the personal input adjusted event start time and a block of sensor data that includes the sensor data captured for the period of time during which the event (dance move) occurred. The time may be one of a plurality of times that is part of a predetermined schedule that has recorded the start time of each event (dance move). The packets are communicated from the event application 24 of each personal input 18 to the server application 26.
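
The packet formation performed by the event application might look like the following sketch. It assumes the samples have already been converted to the 50 ms capture rate and are indexed from the personal input adjusted event start time, and that the predetermined schedule is a list of move start times in milliseconds. The `Packet` fields and the `build_packets` helper are hypothetical names.

```python
from dataclasses import dataclass
from typing import List

SAMPLE_PERIOD_MS = 50  # standard data capture rate of 20 Hz


@dataclass
class Packet:
    player_id: str           # identifier the server uses to find the player
    schedule_time_ms: int    # move start, relative to the adjusted start time
    samples: List[float]     # block of sensor data for this move


def build_packets(player_id, samples, schedule_ms, song_end_ms):
    """Split a stream of samples into one packet per scheduled move.

    samples[i] is the magnitude captured i * 50 ms after the personal input
    adjusted event start time; schedule_ms lists each move's start time.
    """
    packets = []
    bounds = list(schedule_ms) + [song_end_ms]
    for start, end in zip(bounds, bounds[1:]):
        block = samples[start // SAMPLE_PERIOD_MS:end // SAMPLE_PERIOD_MS]
        packets.append(Packet(player_id, start, block))
    return packets
```

For a schedule of `[0, 2500]` and a song end of 4,000 ms, this produces a 50-sample packet followed by a 30-sample packet, matching the 0 s to 2.5 s and 2.5 s to 4 s blocks used in the earlier example.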

The server application 26 receives the sensor data packets from each personal input 18 and may identify the source of each packet by an address or other electronic data identifier that may be parsed from the packet. The address may be associated with a particular player, and thus the sensor data may be associated with the player as well. Each packet includes the starting time of the block of sensor data. The server application 26 may retrieve the reference data from the reference file with the same starting time to compare with the player's sensor data. The server application 26 may perform a correlation on the player's sensor data and the reference data to determine a score for the block of data and thus the event (dance move) of the game. Generally, a higher correlation results in a higher score. The server application 26 may perform the same calculations and accumulate the score for each player as the game continues.

As the game is being shown on the display 14, the display application 22 may monitor its progress. If there is a delay in receiving the game from the server 20 that results in an interruption of the game being shown on the display 14, the display application 22 may send a message to the server application 26, noting the amount of the delay. The server application 26 may in turn communicate a message to the event applications 24 running on the personal inputs 18 of the players watching the interrupted display 14. The event application 24 may adjust the personal input adjusted event start time by the amount of the delay so that the sensor data capture packets will include the correct sensor data. In some embodiments, the event application 24 may discard the sensor data that was captured during the delay of the game on the display 14.
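
One way to picture the delay handling is sketched below. It assumes that the adjustment moves the start time later by the reported delay and that the event application knows when, on its own clock, the interruption began; neither detail is spelled out in the description, and both function names are hypothetical.

```python
def apply_display_delay(adjusted_start_ms, delay_ms):
    """Shift the personal input adjusted event start time by the delay.

    Assumption: an interruption pushes the remaining moves later, so the
    delay is added to the start time.
    """
    return adjusted_start_ms + delay_ms


def drop_samples_during_delay(samples, delay_start_ms, delay_ms):
    """Discard samples captured while the display was interrupted.

    samples: list of (timestamp_ms, value) pairs on the personal input clock.
    delay_start_ms: assumed time at which the interruption began.
    """
    delay_end_ms = delay_start_ms + delay_ms
    return [(t, v) for t, v in samples
            if not (delay_start_ms <= t < delay_end_ms)]
```

After the shift, schedule times computed relative to the new start time line up again with what the player actually sees on the display.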

After the game is over, the highest scores may be shown on each display 14. Furthermore, the server application 26 may transmit the commands or codes to the personal input 18 of the winner so that the personal input 18 lights up, makes a sound, vibrates, or combinations thereof.

Although the invention has been described with reference to the embodiments illustrated in the attached drawing figures, it is noted that equivalents may be employed and substitutions made herein without departing from the scope of the invention as recited in the claims. For example, the present invention has been described with reference to a predetermined set of actions, such as a computerized avatar performing a set of dance movements. Embodiments of the present invention comprise determining the reference data file and comparing a measured sensor data file to the reference data file so as to score a player that is mimicking the avatar's dance movements. However, in other types of games, such as a first-person shooter (“FPS”) type game or reaction-based games (e.g., a quiz game) that do not involve a predetermined set of time-based actions, only a subset of the features of embodiments of the present invention may need to be performed. For example, determining the clock offset of each player's personal input and display could be applied to most games where many players share the same screen and each player's personal input has an individually variable latency. Furthermore, it is to be understood that the invention does not require a pre-recorded choreography; any player may dance in front of the other players and act as the reference dance. Determining the score would be different as there would be no standard reference data to which each player's sensor data would be compared. The score would then be determined by comparing one player's sensor data with another player's sensor data directly. For example, in a quiz game, the sensor data would correspond to the player's actuating a response button, and the clock offset would determine the offset of the personal input's clock.
In even further embodiments of the present invention, the interactive event may be participating in karaoke, with the personal input having a sensor for receiving audio, such as the microphone as discussed above. Yet further types of interactive events may be a fitness event that instructs the user on performing a fitness routine and monitors, via the sensors associated with the personal input, the user's accuracy in performing the routine or the user's capabilities in performing the routine, such as exerting a particular force as monitored by the force/load sensor.

Claims

- A non-transitory computer readable storage medium with an executable program stored thereon for participating in an interactive event, wherein the program instructs a processing element to perform the following steps: transmitting, via a network, an indication of the interactive event to a display device; receiving a request from at least one player, via a personal telecommunications device, for participating in the interactive event; determining a display device clock offset of the display device with respect to a server clock; determining a personal telecommunications device clock offset of the personal telecommunications device with respect to the server clock; transmitting the event to the at least one display device to be shown on a display; receiving a plurality of packets from the player via the personal telecommunications device, each packet including sensor data from an accelerometer in the personal telecommunications device and corresponding to the motion of the personal telecommunications device for a predetermined period of time; and comparing the sensor data to reference data to generate a score.

- The non-transitory computer readable storage medium of claim 1, wherein the program further instructs the processing element to perform the step of receiving an event start time corresponding to a time at which the start of the event is shown on the display from the at least one display device and adjusting the event start time to form a display device adjusted event start time which includes the display device clock offset.

- The non-transitory computer readable storage medium of claim 2, wherein the program further instructs the processing element to perform the steps of: adjusting the display device adjusted event start time to form a player event start time which includes the personal telecommunications device clock offset and transmitting the player event start time to the personal telecommunications device.

- The non-transitory computer readable storage medium of claim 3, wherein each packet further includes a time relative to the player event start time.

- The non-transitory computer readable storage medium of claim 1, wherein comparing the sensor data to reference data includes correlating the sensor data from the packet with reference data from a corresponding period of time.

- The non-transitory computer readable storage medium of claim 1, wherein the program further instructs the processing element to perform the step of transmitting computer-executable instructions to be executed by the processing element of the personal telecommunications device during the interactive event.

- The non-transitory computer readable storage medium of claim 1, wherein the request includes the indication of the event.

- The non-transitory computer readable storage medium of claim 1, wherein the indication of the event is a quick response code.

- The non-transitory computer readable storage medium of claim 1, wherein each packet further includes audio data for the predetermined period of time.

- A system for interactive events, comprising: a display device operable to: receive, over a network, an indication of an interactive event; display the indication; receive, over a network, display data for the interactive event; and display the display data for the interactive event; a server operable to: transmit the indication of the interactive event to the display device; transmit the display data for the interactive event to the display device; receive, from a personal telecommunications device of a player, the indication of the event; receive a plurality of packets from the personal telecommunications device of the player, each packet including sensor data from an accelerometer in the personal telecommunications device of the player and corresponding to the motion of the personal telecommunications device of the player for a predetermined period of time; determine a clock offset of the personal telecommunications device with respect to a server clock; and comparing the sensor data, as adjusted by the clock offset of the personal telecommunications device of the player, to reference data to generate a score for the player; and a plurality of personal telecommunications devices, each personal telecommunications device of the plurality of the telecommunications devices operable to: receive input of the indication of the interactive event; transmit the indication of the interactive event to the server; and generate and transmit, to the server, a plurality of packets, each packet including sensor data from an accelerometer in the personal telecommunications device and corresponding to the motion of the personal telecommunications device for a predetermined period of time.

- The system of claim 10, wherein the sensor data is further adjusted by a display device clock offset before being compared to the reference data to calculate the score for the player.

- The system of claim 10, wherein the server is further operable to transmit computer-executable instructions to the plurality of personal telecommunications devices for execution during the interactive event.

- The system of claim 10, wherein the indication of the interactive event is a quick-response code.

- The system of claim 10, wherein the indication of the interactive event is a numeric code.

- The system of claim 10, wherein at least a portion of the plurality of packets generated and transmitted by each of the personal telecommunications devices further includes audio data.

- One or more non-transitory computer-readable media storing computer-executable instructions for an interactive event, comprising: a first set of computer-executable instructions which, when executed by a first processor, cause a display device to: receive an indication of an interactive event; display the indication; receive display data for the interactive event; and display the display data for the interactive event; a second set of computer-executable instructions which, when executed by a second processor, cause a server to: transmit the indication of the interactive event to the display device; transmit the display data for the interactive event to the display device; receive, over a network and from a personal telecommunications device of a player, the indication of the event; receive, over the network, a plurality of packets from the personal telecommunications device of the player, each packet including sensor data from an accelerometer in the personal telecommunications device of the player and corresponding to the motion of the personal telecommunications device of the player for a predetermined period of time; determine a clock offset of the personal telecommunications device with respect to a server clock; and comparing the sensor data, as adjusted by the clock offset of the personal telecommunications device of the player, to reference data to generate a score for the player; and a third set of computer-executable instructions which, when executed by a third processor, cause a personal telecommunications device to: receive input of the indication of the interactive event; transmit the indication of the interactive event to the server; and generate and transmit, to the server, a plurality of packets, each packet including sensor data from an accelerometer in the personal telecommunications device and corresponding to the motion of the personal telecommunications device for a predetermined period of time.

- The computer-readable media of claim 16, wherein the second set of computer-executable instructions further causes the second processor to further adjust the sensor data by a display device clock offset before being compared to the reference data to calculate the score for the player.

- The computer-readable media of claim 16, wherein the second set of computer-executable instructions further causes the second processor to transmit a fourth set of computer-executable instructions to the plurality of personal telecommunications devices for execution during the interactive event.

- The computer-readable media of claim 16, wherein the indication of the interactive event is a quick-response code.

- The computer-readable media of claim 16, wherein at least a portion of the plurality of packets generated and transmitted by the third set of computer-executable instructions further includes audio data.

- The computer-readable media of claim 16, wherein the first processor, the second processor and the third processor are all distinct.
