U.S. Pat. No. 9,165,404

METHOD, APPARATUS, AND SYSTEM FOR PROCESSING VIRTUAL WORLD

Assignee: Samsung Electronics Co Ltd

Issue Date: July 12, 2012

Abstract

An apparatus and method for processing a virtual world. The apparatus for controlling a facial expression of an avatar in a virtual world using a facial expression of a user in a real world may include a receiving unit to receive sensed information that is sensed by an intelligent camera, the sensed information relating to a facial expression basis of the user, and a processing unit to generate facial expression data of the user for controlling the facial expression of the avatar, using initialization information representing a parameter for initializing the facial expression basis of the user, a 2-dimensional/3-dimensional (2D/3D) model defining a coding method for a face object of the avatar, and the sensed information.

Description

DETAILED DESCRIPTION

Reference will now be made in detail to example embodiments, examples of which are illustrated in the accompanying drawings, wherein like reference numerals refer to like elements throughout. Example embodiments are described below to explain the present disclosure by referring to the figures.

FIG. 1 illustrates a system enabling interaction between a real world and a virtual world, according to example embodiments.

Referring to FIG. 1, the system may include an intelligent camera 111, a generic 2-dimensional/3-dimensional (2D/3D) model 100, an adaptation engine 120, and a digital content provider 130.

The intelligent camera 111 represents a sensor that detects a facial expression of a user in a real world. Depending on embodiments, a plurality of intelligent cameras 111 and 112 may be provided in the system to detect the facial expression of the user. The intelligent camera 111 may transmit the sensed information that relates to a facial expression basis to a virtual world processing apparatus. The facial expression basis is described further below, at least with respect to FIG. 3.

Table 1 shows an extensible markup language (XML) representation of syntax regarding types of the intelligent camera, according to the example embodiments.

TABLE 1

Table 2 shows a binary representation of syntax regarding types of the intelligent camera, according to the example embodiments.

TABLE 2

| FacialExpressionSensorType { | (Number of bits) | (Mnemonic) |
|---|---|---|
| FacialExpressionBasisFlag | | |
| IntelligentCamera | | IntelligentCameraType |
| if(FacialExpressionBasisFlag) { | | |
| NumOfFacialExpressionBasis | 7 | uimsbf |
| for(k=0; k<NumOfFacialExpressionBasis; k++) { | | |
| FacialExpressionBasis[k] | | FacialExpressionBasisType |
| } | | |
| } | | |
| } | | |
| FacialExpressionBasisType { | | |
| facialExpressionBasisIDFlag | 1 | bslbf |
| facialExpressionBasisValueFlag | 1 | bslbf |
| facialExpressionBasisUnitFlag | 1 | bslbf |
| if(facialExpressionBasisIDFlag) { | | |
| facialExpressionBasisID | | FacialExpressionBasisIDCSType |
| } | | |
| if(facialExpressionBasisValueFlag) { | | |
| facialExpressionBasisValue | 32 | fsbf |
| } | | |
| if(facialExpressionBasisUnitFlag) { | | |
| facialExpressionBasisUnit | | unitType |
| } | | |
| } | | |

Table 3 shows descriptor components of semantics regarding the types of the intelligent camera, according to the example embodiments.

TABLE 3

| Name | Definition |
|---|---|
| FacialExpressionSensorType | Tool for describing a facial expression sensor. |
| FacialExpressionBasisFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| NumOfFacialExpressionBasis | This field, which is only present in the binary representation, indicates the number of facial expression basis in this sensed information. |
| FacialExpressionBasis | Describes each facial expression basis detected by the camera. |
| FacialExpressionBasisType | Tool for describing each facial expression basis. |
| facialExpressionBasisIDFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| facialExpressionBasisValueFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| facialExpressionBasisUnitFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| facialExpressionBasisID | Describes the identification of the associated facial expression basis based as a reference to the classification scheme term provided by FacialExpressionBasisIDCS defined in A.X of ISO/IEC 23005-X. |
| facialExpressionBasisValue | Describes the value of the associated facial expression basis. |
| facialExpressionBasisUnit | Describes the unit of each facial expression basis. The default unit is a percent. Note 1: the unit of each facial expression basis can be relatively obtained by the range provided by the FacialExpressionCharacteristicsSensorType. The minimum value shall be 0% and the maximum value shall be 100%. Note 2: the unit of each facial expression basis can also use the unit defined in Annex C of ISO/IEC 14496-2. |
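Note 1 of Table 3 ties the default percent unit to the range reported by the FacialExpressionCharacteristicsSensorType, with the minimum at 0% and the maximum at 100%. The following is a minimal sketch of one way to read that note, assuming a linear rescaling with clamping; the function name `basis_value_to_percent` and the clamping behavior are illustrative assumptions and are not specified in the description.

```python
def basis_value_to_percent(value, min_value, max_value):
    """Rescale a raw basis value so that min_value maps to 0.0 and max_value to 100.0.

    Assumption: the mapping is linear and values outside the range are clamped.
    """
    if max_value <= min_value:
        raise ValueError("max_value must be greater than min_value")
    # Clamp so that a reading outside the calibrated range stays within 0..100.
    clamped = min(max(value, min_value), max_value)
    return 100.0 * (clamped - min_value) / (max_value - min_value)


# Example: a value halfway through the calibrated range.
print(basis_value_to_percent(5.0, 0.0, 10.0))
```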

Table 4 shows a facial expression basis integrated data coding system (IDCS) regarding the types of the intelligent camera, according to the example embodiments.

TABLE 4

| Facial expression basis |
|---|
| open jaw |
| lower top middle inner lip |
| raise bottom middle inner lip |
| stretch left inner lip corner |
| stretch right inner lip corner |
| lower midpoint between left corner and middle of top inner lip |
| lower midpoint between right corner and middle of top inner lip |
| raise midpoint between left corner and middle of bottom inner lip |
| raise midpoint between right corner and middle of bottom inner lip |
| raise left inner lip corner |
| raise right inner lip corner |
| thrust jaw |
| shift jaw |
| push bottom middle lip |
| push top middle lip |
| depress chin |
| close top left eyelid |
| close top right eyelid |
| close bottom left eyelid |
| close bottom right eyelid |
| yaw left eyeball |
| yaw right eyeball |
| pitch left eyeball |
| pitch right eyeball |
| thrust left eyeball |
| thrust right eyeball |
| dilate left pupil |
| dilate right pupil |
| raise left inner eyebrow |
| raise right inner eyebrow |
| raise left middle eyebrow |
| raise right middle eyebrow |
| raise left outer eyebrow |
| raise right outer eyebrow |
| squeeze left eyebrow |
| squeeze right eyebrow |
| puff left cheek |
| puff right cheek |
| lift left cheek |
| lift right cheek |
| shift tongue tip |
| raise tongue tip |
| thrust tongue tip |
| raise tongue |
| tongue roll |
| head pitch |
| head yaw |
| head roll |
| lower top middle outer lip |
| raise bottom middle outer lip |
| stretch left outer lip corner |
| stretch right outer lip corner |
| lower midpoint between left corner and middle of top outer lip |
| lower midpoint between right corner and middle of top outer lip |
| raise midpoint between left corner and middle of bottom outer lip |
| raise midpoint between right corner and middle of bottom outer lip |
| raise left outer lip corner |
| raise right outer lip corner |
| stretch left side of nose |
| stretch right side of nose |
| raise nose tip |
| bend nose tip |
| raise left ear |
| raise right ear |
| pull left ear |
| pull right ear |

The 2D/3D model 100 denotes a model that defines a coding method for a face object of an avatar in the virtual world. The 2D/3D model 100, according to example embodiments, may include a face description parameter (FDP), which defines a face, and a facial animation parameter (FAP), which defines motions. The 2D/3D model 100 may be used to control a facial expression of the avatar in the virtual world processing apparatus. That is, the virtual world processing apparatus may generate facial expression data of the user for controlling the facial expression of the avatar, using the 2D/3D model 100 and the sensed information received from the intelligent camera 111. In addition, based on the generated facial expression data, the virtual world processing apparatus may control a parameter value of the facial expression of the avatar.

The adaptation engine 120 denotes a format conversion engine for exchanging contents of the virtual world with the intelligent camera of the real world. FIG. 1 illustrates an example embodiment in which the system includes N adaptation engines; however, the present disclosure is not limited thereto.

The digital content provider 130 denotes a provider of various digital contents including an online virtual world, a simulation environment, a multi-user game, broadcasting multimedia production, peer-to-peer multimedia production, “package type” contents such as a digital versatile disc (DVD) or a game, and the like, in real time or non-real time. The digital content provider 130 may be a server operated by the provider.

Under the aforementioned system structure, the virtual world processing apparatus may control the facial expression of the avatar using the facial expression of the user. Hereinafter, the virtual world processing apparatus will be described in detail with reference to FIG. 2.

FIG. 2 illustrates a structure of a virtual world processing apparatus 200 according to example embodiments.

Referring to FIG. 2, the virtual world processing apparatus 200 may include a receiving unit 210 and a processing unit 220 and, in another embodiment, may further include an output unit 230.

The receiving unit 210 may receive sensed information from an intelligent camera 111, that is, the sensed information sensed by the intelligent camera 111 with respect to a facial expression basis of a user.

The sensed information may include information on an intelligent camera facial expression basis type which defines the facial expression basis of the user, sensed by the intelligent camera 111. The intelligent camera facial expression basis type may include the facial expression basis. The facial expression basis may include at least one attribute selected from a facial expression basis identifier (ID) for identification of the facial expression basis, a parameter value of the facial expression basis, and a parameter unit of the facial expression basis.

Table 5 shows an XML representation of syntax regarding the intelligent camera facial expression basis type, according to the example embodiments.

TABLE 5

The processing unit 220 may generate facial expression data of the user, for controlling the facial expression of the avatar, using (1) initialization information denoting a parameter for initializing the facial expression basis of the user, (2) a 2D/3D model defining a coding method for a face object of the avatar, and (3) the sensed information.

The initialization information may denote a parameter for initializing the facial expression of the user. Depending on embodiments, for example, when the avatar represents an animal, a facial shape of the user in the real world may be different from a facial shape of the avatar in the virtual world. Accordingly, normalization of the facial expression basis with respect to the face of the user is required. Therefore, the processing unit 220 may normalize the facial expression basis using the initialization information.

The initialization information may be received from either the intelligent camera 111 or the adaptation engine 120. That is, when the user has not yet photographed his or her face using the intelligent camera 111, a sensing operation is necessary, in which the user senses the facial expression basis of his or her face using the intelligent camera 111. However, since such initialization takes dedicated time, the intelligent camera 111 may be designed to store the initialization information in a database (DB) of the adaptation engine or a server once the initialization is completed, so that the initialization information can be reused. For example, when control of the facial expression of the avatar is required again with respect to the same user, or when an intelligent camera different from the one used in the initialization operation is to be used, the virtual world processing apparatus 200 may reuse the previous initialization information, without requiring the user to perform the initialization operation again.
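The reuse of stored initialization information can be pictured as a per-user cache in front of the camera-based calibration. The sketch below is only an illustration under stated assumptions: an in-memory dictionary stands in for the DB of the adaptation engine or server, and the names `InitializationInfo`, `InitializationStore`, and `get_or_calibrate` are hypothetical and do not appear in the description.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class InitializationInfo:
    """Neutral-face measurements of one user (field names are illustrative)."""
    iris_d0: float  # eyelid distance in the neutral expression
    es0: float      # eye separation
    ens0: float     # eye-nose separation
    mns0: float     # mouth-nose separation
    mw0: float      # mouth width


class InitializationStore:
    """In-memory stand-in for the DB that keeps initialization information."""

    def __init__(self) -> None:
        self._by_user: Dict[str, InitializationInfo] = {}

    def get_or_calibrate(
        self, user_id: str, calibrate: Callable[[], InitializationInfo]
    ) -> InitializationInfo:
        info = self._by_user.get(user_id)
        if info is None:
            # No stored record: run the (slow) camera-based initialization once.
            info = calibrate()
            self._by_user[user_id] = info
        return info
```

With this arrangement, a second request for the same user, even from a different camera, returns the stored record without invoking the calibration callback again.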

When the initialization information is sensed by the intelligent camera 111, the initialization information may include information on an initialization intelligent camera facial expression basis type that defines a parameter for initializing the facial expression basis of the user, sensed by the intelligent camera 111.

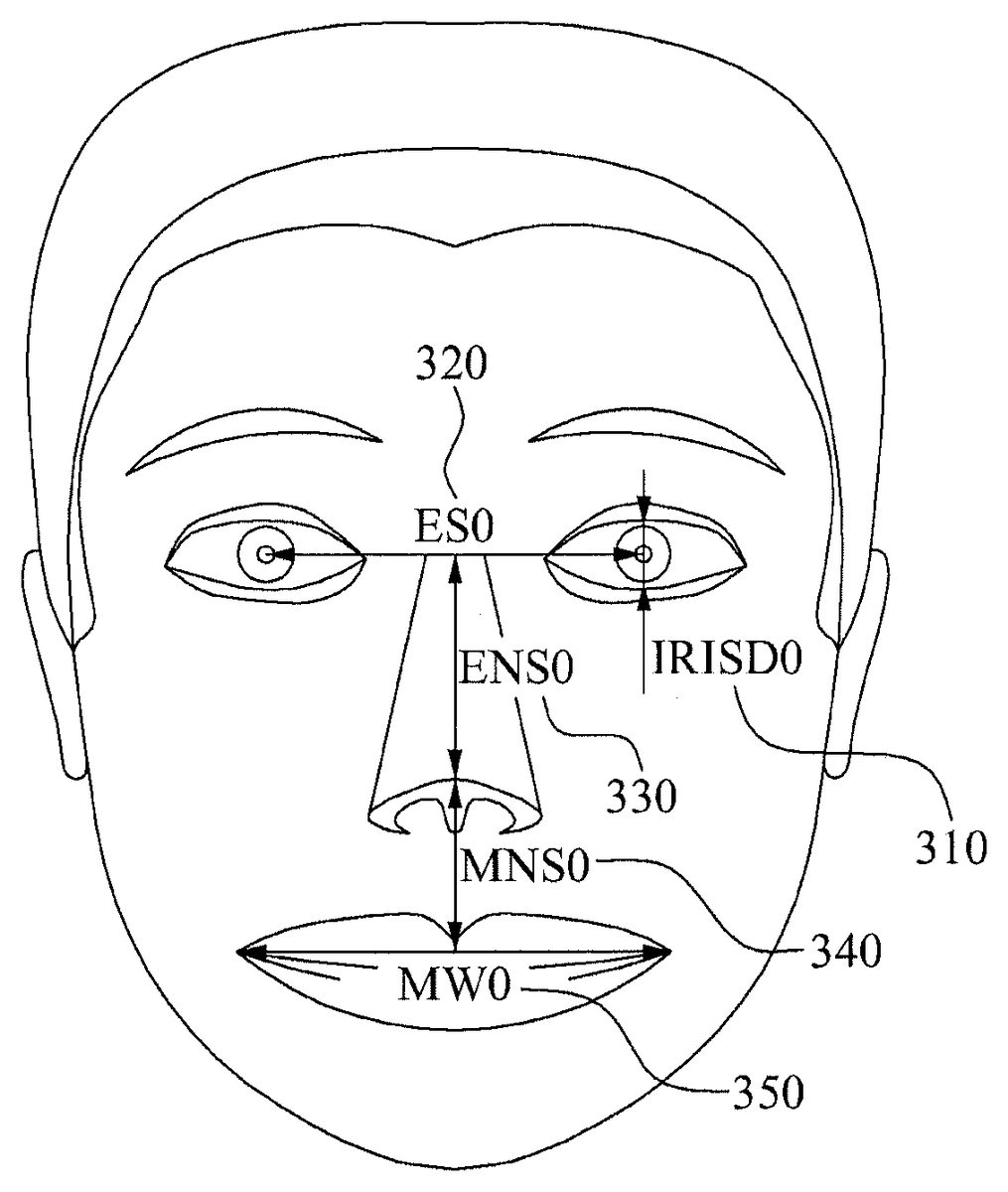

In addition, referring to FIG. 3, the initialization intelligent camera facial expression basis type may include at least one element selected from (1) a distance (IRISD0) 310 between an upper eyelid and a lower eyelid while the user has a neutral facial expression, (2) a distance (ES0) 320 between two eyes while the user has the neutral facial expression, (3) a distance (ENS0) 330 between the two eyes and a nose while the user has the neutral facial expression, (4) a distance (MNS0) 340 between the nose and a mouth while the user has the neutral facial expression, (5) a width (MW0) 350 of the mouth while the user has the neutral facial expression, and (6) a facial expression basis range with respect to a parameter value of the facial expression basis of the user.

Elements of the facial expression basis range may include at least one attribute selected from an ID (FacialExpressionBasisID) for identification of the facial expression basis, a maximum value (MaxFacialExpressionBasisParameter) of the parameter value of the facial expression basis, a minimum value (MinFacialExpressionBasisParameter) of the parameter value of the facial expression basis, a neutral value (NeutralFacialExpressionBasisParameter) denoting the parameter value of the facial expression basis in the neutral facial expression, and a parameter unit (FacialExpressionBasisParameterUnit) of the facial expression basis.

Table 6 shows an XML representation of syntax regarding the initialization intelligent camera facial expression basis type, according to the example embodiments.

TABLE 6

When the initialization information stored in the DB of the adaptation engine or the server is called, the initialization information according to the example embodiments may include information on an initialization intelligent camera device command that defines a parameter for initializing the facial expression basis of the user, stored in the DB.

In addition, referring to FIG. 3, the initialization intelligent camera device command may include at least one element selected from (1) the distance (IRISD0) 310 between the upper eyelid and the lower eyelid while the user has the neutral facial expression, (2) the distance (ES0) 320 between two eyes while the user has the neutral facial expression, (3) the distance (ENS0) 330 between the two eyes and the nose while the user has the neutral facial expression, (4) the distance (MNS0) 340 between the nose and the mouth while the user has the neutral facial expression, (5) the width (MW0) 350 of the mouth while the user has the neutral facial expression, and (6) the facial expression basis range with respect to the parameter value of the facial expression basis of the user.

Also, the elements of the facial expression basis range may include at least one attribute selected from the ID (FacialExpressionBasisID) for identification of the facial expression basis, the maximum value (MaxFacialExpressionBasisParameter) of the parameter value of the facial expression basis, the minimum value (MinFacialExpressionBasisParameter) of the parameter value of the facial expression basis, the neutral value (NeutralFacialExpressionBasisParameter) denoting the parameter value of the facial expression basis in the neutral facial expression, and the parameter unit (FacialExpressionBasisParameterUnit) of the facial expression basis.

Table 7 shows an XML representation of syntax regarding the initialization intelligent camera device command, according to the example embodiments.

TABLE 7

Table 8 shows an XML representation of syntax regarding a facial morphology sensor type, according to example embodiments.

TABLE 8

Table 9 shows a binary representation of syntax regarding the facial morphology sensor type, according to the example embodiments.

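TABLE 9

| FacialMorphologySensorType { | (Number of bits) | (Mnemonic) |
|---|---|---|
| IrisDiameterFlag | 1 | bslbf |
| EyeSeparationFlag | 1 | bslbf |
| EyeNoseSeparationFlag | 1 | bslbf |
| MouseNoseSeparationFlag | 1 | bslbf |
| MouseWidthFlag | 1 | bslbf |
| unitFlag | 1 | bslbf |
| SensedInfoBase | | SensedInfoBaseType |
| if(IrisDiameterFlag) { | | |
| IrisDiameter | 32 | fsbf |
| } | | |
| if(EyeSeparationFlag) { | | |
| EyeSeparation | 32 | fsbf |
| } | | |
| if(EyeNoseSeparationFlag) { | | |
| EyeNoseSeparation | 32 | fsbf |
| } | | |
| if(MouseNoseSeparationFlag) { | | |
| MouseNoseSeparation | 32 | fsbf |
| } | | |
| if(MouseWidthFlag) { | | |
| MouseWidth | 32 | fsbf |
| } | | |
| if(unitFlag) { | | |
| unitFlag | | unitType |
| } | | |
| } | | |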
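The flag-then-payload layout of Table 9 can be illustrated with a small round-trip sketch. It rests on several assumptions that the description does not confirm: the SensedInfoBase element is treated as empty, the unit is always omitted (unitFlag written as 0), each 32-bit fsbf value is taken to be a big-endian IEEE 754 single-precision float, and the stream is zero-padded to a whole number of bytes. The function names are hypothetical.

```python
import struct

# Field order follows Table 9 (identifiers kept exactly as they appear there).
FLOAT_FIELDS = [
    "IrisDiameter",
    "EyeSeparation",
    "EyeNoseSeparation",
    "MouseNoseSeparation",
    "MouseWidth",
]


def encode_facial_morphology(values):
    """Pack the presence flags, then each present field as 32 bits."""
    bits = ["1" if name in values else "0" for name in FLOAT_FIELDS]
    bits.append("0")  # unitFlag: the unit is omitted in this sketch
    # SensedInfoBase would be written here; it is assumed empty in this sketch.
    for name in FLOAT_FIELDS:
        if name in values:
            (as_int,) = struct.unpack(">I", struct.pack(">f", values[name]))
            bits.append(format(as_int, "032b"))
    bitstring = "".join(bits)
    bitstring += "0" * (-len(bitstring) % 8)  # pad to a byte boundary
    return int(bitstring, 2).to_bytes(len(bitstring) // 8, "big")


def decode_facial_morphology(payload):
    """Inverse of encode_facial_morphology under the same assumptions."""
    bitstring = "".join(format(byte, "08b") for byte in payload)
    flags = bitstring[:6]  # five field flags followed by unitFlag
    position = 6
    values = {}
    for name, flag in zip(FLOAT_FIELDS, flags):
        if flag == "1":
            as_int = int(bitstring[position:position + 32], 2)
            (values[name],) = struct.unpack(">f", struct.pack(">I", as_int))
            position += 32
    return values
```

Because the six flags occupy less than one byte, the float fields are not byte-aligned, which is why the sketch works on a bit string rather than on whole bytes.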

Table 10 shows descriptor components of semantics regarding the facial morphology sensor type, according to the example embodiments.

TABLE 10

| Name | Definition |
|---|---|
| FacialMorphologySensorType | Tool for describing a facial morphology sensor sensed information. |
| IrisDiameterFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| EyeSeparationFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| EyeNoseSeparationFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| MouseNoseSeparationFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| MouseWidthFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| unitFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| IrisDiameter | Describes IRIS Diameter (by definition it is equal to the distance between upper and lower eyelid) in neutral face. |
| EyeSeparation | Describes eye separation. |
| EyeNoseSeparation | Describes eye-nose separation. |
| MouseNoseSeparation | Describes mouth-nose separation. |
| MouthWidth | Describes mouth-width separation. |
| Unit | Specifies the unit of the sensed value, if a unit other than the default unit is used, as a reference to a classification scheme term that shall be using the mpeg7:termReferenceType defined in 7.6 of ISO/IEC 15938-5:2003. The CS that may be used for this purpose is the UnitTypeCS defined in A.2.1 of ISO/IEC 23005-6. |

In addition, Table 11 shows an XML representation of syntax regarding a facial expression characteristics sensor type, according to example embodiments.

TABLE 11

Table 12 shows a binary representation of syntax regarding the facial expression characteristics sensor type, according to the example embodiments.

TABLE 12

| FacialExpressionCharacteristicsSensorType { | (Number of bits) | (Mnemonic) |
|---|---|---|
| FacialExpressionBasisRangeFlag | 1 | bslbf |
| SensedInfoBase | | SensedInfoBaseType |
| if(FacialExpressionBasisRangeFlag) { | | |
| NumOfFacialExpressionBasisRange | 7 | uimsbf |
| for(k=0; k<NumOfFacialExpressionBasisRange; k++) { | | |
| FacialExpressionBasisRange[k] | | FacialExpressionBasisRangeType |
| } | | |
| } | | |
| } | | |
| FacialExpressionBasisRangeType { | | |
| facialExpressionBasisIDFlag | 1 | bslbf |
| maxValueFacialExpressionBasisFlag | 1 | bslbf |
| minValueFacialExpressionBasisFlag | 1 | bslbf |
| neutralValueFacialExpressionBasisFlag | 1 | bslbf |
| facialExpressionBasisUnitFlag | 1 | bslbf |
| if(facialExpressionBasisIDFlag) { | | |
| facialExpressionBasisID | | FacialExpressionBasisIDCSType |
| } | | |
| if(maxValueFacialExpressionBasisFlag) { | | |
| maxValueFacialExpressionBasis | 32 | fsbf |
| } | | |
| if(minValueFacialExpressionBasisFlag) { | | |
| minValueFacialExpressionBasis | 32 | fsbf |
| } | | |
| if(neutralValueFacialExpressionBasisFlag) { | | |
| neutralValueFacialExpressionBasis | 32 | fsbf |
| } | | |
| if(facialExpressionBasisUnitFlag) { | | |
| facialExpressionBasisUnit | | unitType |
| } | | |
| } | | |

Table 13 shows descriptor components of semantics regarding the facial expression characteristics sensor type, according to the example embodiments.

TABLE 13

| Name | Definition |
|---|---|
| FacialExpressionCharacteristicsSensorType | Tool for describing a facial expression characteristics sensor sensed information. |
| FacialExpressionBasisRangeFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| NumOfFacialExpressionBasisRange | This field, which is only present in the binary representation, indicates the number of facial expression basis range in this sensed information. |
| FacialExpressionBasisRange | Describes the range of each of facial expression basis parameters. |
| FacialExpressionBasisRangeType | Tool for describing a facial expression basis range. |
| facialExpressionBasisIDFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| maxValueFacialExpressionBasisFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| minValueFacialExpressionBasisFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| neutralValueFacialExpressionBasisFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| facialExpressionBasisUnitFlag | This field, which is only present in the binary representation, signals the presence of the activation attribute. A value of “1” means the attribute shall be used and “0” means the attribute shall not be used. |
| facialExpressionBasisID | Describes the identification of associated facial expression basis based as a reference to a classification scheme term provided by FacialExpressionBasisIDCS defined in A.X of ISO/IEC 23005-X. |
| maxValueFacialExpressionBasis | Describes the maximum value of facial expression basis parameter. |
| minValueFacialExpressionBasis | Describes the minimum value of facial expression basis parameter. |
| neutralValueFacialExpressionBasis | Describes the value of facial expression basis parameter in neutral face. |
| facialExpressionBasisUnit | Describes the corresponding measurement units of the displacement amount described by a facial expression basis parameter. |

Table 14 shows unit types, that is, units of length or distance used specifically to measure the displacement of each facial expression basis, regarding the facial expression characteristics type, according to the example embodiments.

TABLE 14

IRIS Diameter

A unit of length or distance equal to one IRISD0/1024, specific to measure a displacement of each facial expression basis for an individual user.

Eye Separation

A unit of length or distance equal to one ES0/1024, which is specific to measure a displacement of each facial expression basis for an individual user.

Eye-Nose Separation

A unit of length or distance equal to one ENS0/1024, which is specific to measure a displacement of each facial expression basis for an individual user.

Mouth-Nose Separation

A unit of length or distance equal to one MNS0/1024, which is specific to measure a displacement of each facial expression basis for an individual user.

Mouth-Width Separation

A unit of length or distance equal to one MW0/1024, which is specific to measure a displacement of each facial expression basis for an individual user.

Angular Unit

A unit of plane angle equal to 1/100000 radian.
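Each length unit in Table 14 is one 1024th of a neutral-face distance of the individual user, so the same physical displacement yields a different unit count for different users. The following is a minimal sketch of the conversion in both directions; the function names are hypothetical, and the displacement is assumed to be given in the same length unit (for example, pixels) as the neutral distance.

```python
UNIT_DIVISOR = 1024  # each unit is (neutral distance) / 1024, per Table 14


def to_face_units(displacement, neutral_distance):
    """Express a displacement in units of neutral_distance / 1024."""
    if neutral_distance <= 0:
        raise ValueError("neutral_distance must be positive")
    return displacement * UNIT_DIVISOR / neutral_distance


def from_face_units(units, neutral_distance):
    """Convert a unit count back to the length unit of neutral_distance."""
    if neutral_distance <= 0:
        raise ValueError("neutral_distance must be positive")
    return units * neutral_distance / UNIT_DIVISOR


# Example: a 16-pixel displacement for a user whose eye separation ES0 is 64 pixels.
print(to_face_units(16.0, 64.0))
```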

According to an aspect of the present disclosure, the virtual world processing apparatus 200 may further include an output unit 230.

The output unit 230 may output the generated facial expression data to the adaptation engine 120 to control the facial expression of the avatar in the virtual world. In addition, the output unit 230 may output the generated facial expression data to the server and store the facial expression data in the DB. The facial expression data stored in the DB may be used as the initialization intelligent camera device command.

The processing unit 220 may update, in real time, the facial expression basis range of the initialization information based on the sensed information received from the intelligent camera. That is, the processing unit 220 may update, in real time, at least one of the maximum value and the minimum value of the parameter value of the facial expression basis, based on the sensed information.

Depending on different embodiments, the processing unit 220 may obtain, from the sensed information, (1) a face image of the user, (2) a first matrix representing translation and rotation of a face of the user, and (3) a second matrix extracted from components of the face of the user. The processing unit 220 may calculate the parameter value of the facial expression basis based on the obtained information, that is, the face image, the first matrix, and the second matrix. In addition, the processing unit 220 may update at least one of the maximum value and the minimum value, in real time, by comparing the calculated parameter value of the facial expression basis and a previous parameter value of the facial expression basis. For example, when the calculated parameter value is greater than the previous parameter value, the processing unit 220 may update the maximum value to the calculated parameter value.

The foregoing operation of the processing unit 220 may be expressed by Equation 1 as follows.

The foregoing operation of the processing unit220may be expressed by Equation 1 as follows.

$$\tau = \underset{V=\{\tau\}}{\arg\min}\ \left\lVert R^{-1}T - \tau \cdot E \right\rVert \qquad \text{[Equation 1]}$$

Here, $T$ denotes the face image of the user, $R^{-1}$ denotes the inverse of the first matrix representing translation and rotation of the face of the user, and $E$ denotes the second matrix extracted from the components of the face of the user. The components of the face of the user may include a feature point or a facial animation unit, which is a basic element representing a facial expression. In addition, $\tau$ denotes a matrix indicating the sizes of the respective components.

For example, presuming that the neutral facial expression of the user, for example a blank face, is denoted $T_0$, the processing unit 220 may extract the $\tau$ satisfying Equation 1 by a global optimization algorithm or a local optimization method, for example, gradient descent. Here, the extracted value may be expressed as $\tau_0$. The processing unit 220 may store the extracted value as a value constituting the neutral expression of the user.
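The gradient-descent option mentioned for Equation 1 can be sketched as follows. This is a minimal illustration under stated assumptions, not the disclosed implementation: the product of the inverse first matrix and the face image is assumed to be already computed and flattened into the vector `target`, the second matrix is represented as a list of component vectors `basis`, and the squared norm is minimized (which has the same minimizer as the norm). The function name, learning rate, and step count are illustrative.

```python
def fit_basis_weights(target, basis, learning_rate=0.1, steps=500):
    """Find weights minimizing || target - sum_k weights[k] * basis[k] ||^2.

    target: flattened, pose-normalized face observation (assumed precomputed).
    basis:  list of component vectors, each the same length as target.
    """
    weights = [0.0] * len(basis)
    for _ in range(steps):
        # Residual of the current reconstruction.
        residual = list(target)
        for weight, component in zip(weights, basis):
            for j, value in enumerate(component):
                residual[j] -= weight * value
        # Gradient of the squared norm w.r.t. weights[k] is -2 * <residual, basis[k]>.
        for k, component in enumerate(basis):
            gradient = -2.0 * sum(r * c for r, c in zip(residual, component))
            weights[k] -= learning_rate * gradient
    return weights


# Example with two orthonormal components; the weights approach 0.5 and -0.25.
print(fit_basis_weights([0.5, -0.25, 0.0], [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]))
```

Running the same fit on a neutral-face observation would give the value that the description calls the neutral parameter; a fixed step size is used here only for brevity and would need tuning for components that are not normalized.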

In addition, presuming that any of the various facial expressions of the user is denoted $T$, the processing unit 220 may extract the $\tau$ satisfying Equation 1 by a global optimization algorithm or a local optimization method such as gradient descent. Here, the processing unit 220 may use the extracted value in determining a minimum value and a maximum value of the facial expression basis range, that is, the range of facial expressions that can be made by the user.

In this case, the processing unit 220 may determine the minimum value and the maximum value of the facial expression basis range using Equation 2.

$$\tau_{t,\max} = \max\{\tau_{t-1,\max},\ \tau_t\}$$

$$\tau_{t,\min} = \min\{\tau_{t-1,\min},\ \tau_t\} \qquad \text{[Equation 2]}$$
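Equation 2 is a running update of the extrema: each new estimate either widens the range or leaves it unchanged. The sketch below assumes scalar parameter values and seeds the range with the first observed value, which is an assumption not stated in the description; the function names are hypothetical.

```python
def update_range(previous_min, previous_max, value):
    """One step of Equation 2: widen the range if the new value falls outside it."""
    return min(previous_min, value), max(previous_max, value)


def track_range(values):
    """Apply update_range over a non-empty sequence, seeding with the first value."""
    iterator = iter(values)
    first = next(iterator)
    low = high = first
    for value in iterator:
        low, high = update_range(low, high, value)
    return low, high


print(track_range([0.2, 0.5, -0.1, 0.3]))
```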

Since an error may be generated during image estimation, the processing unit 220 may compensate for outliers when estimating $\tau_{t,\max}$ and $\tau_{t,\min}$ of Equation 2. Here, the processing unit 220 may compensate for the outliers by applying smoothing or median filtering to the input value $\tau_t$ of Equation 2. According to other example embodiments, the processing unit 220 may perform the compensation through outlier handling.
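Median filtering of the input value can be sketched with a sliding window, so that a single mis-estimated frame does not widen the range permanently. The window length and the class name `MedianSmoother` are illustrative assumptions; the description does not specify a window size.

```python
from collections import deque
from statistics import median


class MedianSmoother:
    """Sliding-window median over the most recent parameter estimates."""

    def __init__(self, window=5):
        if window < 1:
            raise ValueError("window must be at least 1")
        self._recent = deque(maxlen=window)

    def push(self, value):
        """Add a new estimate and return the median of the current window."""
        self._recent.append(value)
        return median(self._recent)


# A single spike (9.0) among steady readings does not survive the filter.
smoother = MedianSmoother(window=5)
filtered = [smoother.push(v) for v in [0.1, 0.1, 9.0, 0.1, 0.1]]
print(max(filtered))
```

The filtered values, rather than the raw estimates, would then be fed into the running minimum and maximum.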

When a value estimated by the foregoing process does not change for a predetermined time, the processing unit 220 may determine the facial expression basis range using the maximum value and the minimum value determined by Equation 2.
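The stopping condition can be sketched as a counter of consecutive updates during which the estimated range stays unchanged. This sketch measures the predetermined time in update counts rather than seconds and allows an optional tolerance; both choices, and the class name `RangeStabilityDetector`, are assumptions made for illustration.

```python
class RangeStabilityDetector:
    """Reports when (min, max) has stayed unchanged for enough consecutive updates."""

    def __init__(self, required_stable_updates, tolerance=0.0):
        self._required = required_stable_updates
        self._tolerance = tolerance
        self._last = None
        self._stable = 0

    def update(self, low, high):
        """Record the latest range; return True once it is considered settled."""
        unchanged = (
            self._last is not None
            and abs(low - self._last[0]) <= self._tolerance
            and abs(high - self._last[1]) <= self._tolerance
        )
        # Any change in either bound restarts the count.
        self._stable = self._stable + 1 if unchanged else 0
        self._last = (low, high)
        return self._stable >= self._required
```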

The three values $\tau_0$, $\tau_{\max}$, and $\tau_{\min}$ estimated by the processing unit 220 may be used as initial parameters for generating the facial expression made by the user. For example, with regard to lips, the processing unit 220 may use a left end point of an upper lip to obtain the neutral value, the maximum value, and the minimum value of a parameter of the facial expression basis that determines a lip expression.

The processing unit 220 may control the parameter value of the facial expression basis of the avatar using a mapping function and sensed information. That is, the processing unit 220 may control the facial expression of the avatar using the mapping function.

The mapping function defines mapping relations between a range of the facial expression basis with respect to the user and a range of the facial expression with respect to the avatar. The mapping function may be generated by the processing unit 220.

When $\tau_0$ is input as the parameter value with respect to the facial expression basis of the user, the mapping function may output $\tau'_0$ as the parameter value with respect to the facial expression basis of the avatar. When $\tau_{\max}$ is input as the parameter value with respect to the facial expression basis of the user, the mapping function may output $\tau'_{\max}$ as the parameter value with respect to the facial expression basis of the avatar. Also, when $\tau_{\min}$ is input as the parameter value with respect to the facial expression basis of the user, the mapping function may output $\tau'_{\min}$ as the parameter value with respect to the facial expression basis of the avatar.

When a value between $\tau_{\max}$ and $\tau_{\min}$ is input, the mapping function may output an interpolated value using Equation 3.

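$$\tau'_t = \begin{cases} \dfrac{\tau'_{\max} - \tau'_0}{\tau_{\max} - \tau_0} \times (\tau_t - \tau_0) + \tau'_0, & \text{if } \tau_{\max} \ge \tau_t \ge \tau_0 \\[2ex] \dfrac{\tau'_0 - \tau'_{\min}}{\tau_0 - \tau_{\min}} \times (\tau_t - \tau_0) + \tau'_0, & \text{if } \tau_0 > \tau_t \ge \tau_{\min} \end{cases} \qquad \text{[Equation 3]}$$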
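The piecewise-linear mapping of Equation 3 can be sketched in a few lines. The function name `map_to_avatar`, the (minimum, neutral, maximum) tuple layout, and the error raised for out-of-range input are illustrative assumptions. The sketch also assumes τmin < τ0 < τmax, so that neither denominator is zero.

```python
from typing import Tuple

Range = Tuple[float, float, float]  # (minimum, neutral, maximum), assumed layout


def map_to_avatar(tau_t: float, user: Range, avatar: Range) -> float:
    """Map a user basis parameter onto the avatar's range (Equation 3).

    Assumes user minimum < neutral < maximum so the slopes are defined.
    """
    u_min, u_0, u_max = user
    a_min, a_0, a_max = avatar
    if not u_min <= tau_t <= u_max:
        # Equation 3 is only defined inside the user's range.
        raise ValueError("tau_t lies outside the user's basis range")
    if tau_t >= u_0:
        # Upper branch: between the neutral value and the maximum.
        return (a_max - a_0) / (u_max - u_0) * (tau_t - u_0) + a_0
    # Lower branch: between the minimum and the neutral value.
    return (a_0 - a_min) / (u_0 - u_min) * (tau_t - u_0) + a_0


user_range = (-0.5, 0.25, 1.0)       # hypothetical user values
avatar_range = (-50.0, 0.0, 100.0)   # hypothetical avatar values
mapped = map_to_avatar(0.625, user_range, avatar_range)
```

The two branches use different slopes, so a user whose range is asymmetric about the neutral value still reaches both extremes of the avatar's range.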

FIG. 4 illustrates a virtual world processing method, according to example embodiments.

Referring to FIG. 4, in operation 410, the virtual world processing method may receive sensed information from an intelligent camera, the sensed information sensed by the intelligent camera with respect to a facial expression basis of a user.

The sensed information may include information on an intelligent camera facial expression basis type which defines the facial expression basis of the user, sensed by the intelligent camera. The intelligent camera facial expression basis type may include the facial expression basis. The facial expression basis may include at least one attribute selected from a facial expression basis ID for identification of the facial expression basis, a parameter value of the facial expression basis, and a parameter unit of the facial expression basis.

In operation 420, the virtual world processing method may generate facial expression data of the user, for controlling the facial expression of the avatar, using (1) initialization information denoting a parameter for initializing the facial expression basis of the user, (2) a 2D/3D model defining a coding method for a face object of the avatar, and (3) the sensed information.

The initialization information may denote a parameter for initializing the facial expression of the user. Depending on embodiments, for example, when the avatar represents an animal, a facial shape of the user in the real world may be different from a facial shape of the avatar in the virtual world. Accordingly, normalization of the facial expression basis with respect to the face of the user is required. Therefore, the virtual world processing method may normalize the facial expression basis using the initialization information.

The initialization information may be received from any one of the intelligent camera and an adaptation engine.

When the initialization information is sensed by the intelligent camera, the initialization information may include information on an initialization intelligent camera facial expression basis type that defines a parameter for initializing the facial expression basis of the user, sensed by the intelligent camera.

In addition, the initialization intelligent camera facial expression basis type may include at least one element selected from (1) a distance (IRISD0) between an upper eyelid and a lower eyelid in a neutral facial expression of the user, (2) a distance (ES0) between two eyes in the neutral facial expression, (3) a distance (ENS0) between the two eyes and a nose in the neutral facial expression, (4) a distance (MNS0) between the nose and a mouth in the neutral facial expression, (5) a width (MW0) of the mouth in the neutral facial expression, and (6) a facial expression basis range with respect to a parameter value of the facial expression basis of the user.

Elements of the facial expression basis range may include at least one attribute selected from an ID (FacialExpressionBasisID) for identification of the facial expression basis, a maximum value (MaxFacialExpressionBasisParameter) of the parameter value of the facial expression basis, a minimum value (MinFacialExpressionBasisParameter) of the parameter value of the facial expression basis, a neutral value (NeutralFacialExpressionBasisParameter) denoting the parameter value of the facial expression basis in the neutral facial expression, and a parameter unit (FacialExpressionBasisParameterUnit) of the facial expression basis.
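As a rough illustration, the initialization elements and range attributes listed above could be held in a structure such as the following. The Python class and field names are hypothetical renderings of the identifiers in the text (IRISD0, ES0, ENS0, MNS0, MW0, FacialExpressionBasisID, and so on). The check that minimum ≤ neutral ≤ maximum is an assumption suggested by the conditions of Equation 3, not a stated requirement.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class FacialExpressionBasisRange:
    """One basis range; field names mirror the attributes in the text."""

    facial_expression_basis_id: str            # FacialExpressionBasisID
    max_parameter: float                       # MaxFacialExpressionBasisParameter
    min_parameter: float                       # MinFacialExpressionBasisParameter
    neutral_parameter: float                   # NeutralFacialExpressionBasisParameter
    parameter_unit: Optional[str] = None       # FacialExpressionBasisParameterUnit

    def __post_init__(self) -> None:
        # Assumed ordering, not stated in the text: min <= neutral <= max.
        if not self.min_parameter <= self.neutral_parameter <= self.max_parameter:
            raise ValueError("expected min <= neutral <= max")


@dataclass
class InitializationInfo:
    """Neutral-face measurements; every element is optional ("at least one")."""

    irisd0: Optional[float] = None  # upper-to-lower eyelid distance
    es0: Optional[float] = None     # distance between the two eyes
    ens0: Optional[float] = None    # eyes-to-nose distance
    mns0: Optional[float] = None    # nose-to-mouth distance
    mw0: Optional[float] = None     # mouth width
    basis_ranges: List[FacialExpressionBasisRange] = field(default_factory=list)


info = InitializationInfo(
    mw0=52.0,  # hypothetical measurement
    basis_ranges=[FacialExpressionBasisRange("lip_corner", 1.0, -0.5, 0.25)],
)
```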

When the initialization information stored in the DB of the adaptation engine or the server is called, the initialization information according to the example embodiments may include information on an initialization intelligent camera device command that defines a parameter for initializing the facial expression basis of the user, stored in the DB.

In addition, the initialization intelligent camera device command may include at least one element selected from (1) the distance (IRISD0) between the upper eyelid and the lower eyelid in the neutral facial expression of the user, (2) the distance (ES0) between two eyes in the neutral facial expression, (3) the distance (ENS0) between the two eyes and the nose in the neutral facial expression, (4) the distance (MNS0) between the nose and the mouth in the neutral facial expression, (5) the width (MW0) of the mouth in the neutral facial expression, and (6) the facial expression basis range with respect to the parameter value of the facial expression basis of the user.

Also, the elements of the facial expression basis range may include at least one attribute selected from the ID (FacialExpressionBasisID) for identification of the facial expression basis, the maximum value (MaxFacialExpressionBasisParameter) of the parameter value of the facial expression basis, the minimum value (MinFacialExpressionBasisParameter) of the parameter value of the facial expression basis, the neutral value (NeutralFacialExpressionBasisParameter) denoting the parameter value of the facial expression basis in the neutral facial expression, and the parameter unit (FacialExpressionBasisParameterUnit) of the facial expression basis.

According to an aspect of the present invention, the virtual world processing method may output the generated facial expression data to the adaptation engine to control the facial expression of the avatar in the virtual world. In addition, the virtual world processing method may output the generated facial expression data to a server and store the facial expression data in a DB. The facial expression data stored in the DB may be used as an initialization intelligent camera device command.

The virtual world processing method may update, in real time, the facial expression basis range of the initialization information based on the sensed information received from the intelligent camera. That is, the virtual world processing method may update, in real time, at least one of the maximum value and the minimum value of the parameter value of the facial expression basis, based on the sensed information.

Depending on embodiments, to update the facial expression basis range, the virtual world processing method may obtain, from the sensed information, (1) a face image of the user, (2) a first matrix representing translation and rotation of a face of the user, and (3) a second matrix extracted from components of the face of the user. The virtual world processing method may calculate the parameter value of the facial expression basis based on the obtained information, that is, the face image, the first matrix, and the second matrix. In addition, the virtual world processing method may update at least one of the maximum value and the minimum value, in real time, by comparing the calculated parameter value of the facial expression basis and a previous parameter value of the facial expression basis. For example, when the calculated parameter value is greater than the previous parameter value, the virtual world processing method may update the maximum value to the calculated parameter value.

The virtual world processing method described above may be expressed by Equation 1-2.

$$\tau = \underset{V=\{\tau\}}{\arg\min} \left\| R^{-1}T - \tau \cdot E \right\| \qquad \text{[Equation 1-2]}$$

Here, T denotes the face image of the user, R⁻¹ denotes an inverse of the first matrix representing translation and rotation of the face of the user, and E denotes the second matrix extracted from the components of the face of the user. The components of the face of the user may include a feature point or a facial animation unit which is a basic element representing a facial expression. In addition, τ denotes a matrix indicating sizes of respective components.

For example, presuming that the neutral facial expression of the user, for example a blank face, is a neutral expression T0, the virtual world processing method may extract τ meeting Equation 1-2 by a global optimization algorithm or a local optimization method such as gradient descent. Here, the extracted value may be expressed as τ0. The virtual world processing method may store the extracted value as a value constituting the neutral expression of the user.
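A minimal sketch of the local-optimization route, using plain gradient descent, is shown below. It rests on several assumptions not stated in the text: the objective is taken to be the squared Euclidean norm of the residual, the aligned observation R⁻¹T is supplied already computed and flattened to a vector, and each row of E is one flattened face component. The function name, learning rate, and step count are illustrative.

```python
from typing import List, Sequence


def estimate_tau(
    aligned: Sequence[float],
    basis: Sequence[Sequence[float]],
    learning_rate: float = 0.1,
    steps: int = 500,
) -> List[float]:
    """Minimize ||aligned - tau . E||^2 over tau by gradient descent.

    `aligned` plays the role of R^-1 T (assumed precomputed and flattened);
    `basis` plays the role of E, one row per face component.
    """
    tau = [0.0] * len(basis)
    for _ in range(steps):
        # Residual between the observation and the current reconstruction.
        residual = [
            a - sum(t * row[j] for t, row in zip(tau, basis))
            for j, a in enumerate(aligned)
        ]
        # Gradient of the squared norm with respect to each coefficient.
        grad = [-2.0 * sum(r * e for r, e in zip(residual, row)) for row in basis]
        tau = [t - learning_rate * g for t, g in zip(tau, grad)]
    return tau


# Hypothetical two-component basis and an observation built from it.
basis = [[1.0, 1.0, 0.0], [0.0, 1.0, 1.0]]
observation = [2.0, 1.0, -1.0]  # equals 2 * basis[0] - 1 * basis[1]
tau_0 = estimate_tau(observation, basis)
```

The fixed learning rate only converges when it is small relative to the scale of E. A production solver would more likely use a closed-form least-squares solution or a line search.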

In addition, presuming that each of the various facial expressions of the user is a facial expression T, the processing unit 220 may extract τ meeting Equation 1 by a global optimization algorithm or a local optimization method such as gradient descent. Here, the processing unit 220 may use the extracted value in determining a minimum value and a maximum value of the facial expression basis range, that is, a range of the facial expressions that can be made by the user.

In this case, the virtual world processing method may determine the maximum value and the minimum value of the facial expression basis range using Equation 2-2.

$$\tau_{t,\max} = \max\{\tau_{t-1,\max},\ \tau_t\}$$

$$\tau_{t,\min} = \min\{\tau_{t-1,\min},\ \tau_t\} \qquad \text{[Equation 2-2]}$$

Since an error may be generated during image estimation, the virtual world processing method may compensate for an outlier when estimating τt,max and τt,min of Equation 2-2. Here, the virtual world processing method may compensate for the outlier by applying smoothing or median filtering to the input value τt of Equation 2-2. According to other example embodiments, the virtual world processing method may perform the compensation through outlier handling.
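One way to picture the median-filtering option is a trailing-window median applied to the stream of τt estimates before they reach the max/min update of Equation 2-2. The function name `trailing_median` and the window length of 3 are assumptions chosen for illustration. The text does not specify a window size or whether the filter is causal.

```python
from statistics import median
from typing import List, Sequence


def trailing_median(values: Sequence[float], window: int = 3) -> List[float]:
    """Median over the current sample and up to `window - 1` earlier ones.

    A trailing (causal) window is used so the filter could run in real time;
    the window is shorter for the first few samples.
    """
    return [
        median(values[max(0, i - window + 1): i + 1])
        for i in range(len(values))
    ]


raw = [0.1, 0.2, 5.0, 0.3, 0.2]        # 5.0 is a hypothetical estimation glitch
filtered = trailing_median(raw)
tau_max = max(filtered)                # range update on the filtered stream
tau_min = min(filtered)
```

Without filtering, the single spike would permanently inflate the maximum, because the update of Equation 2-2 never shrinks the range.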

When a value estimated by the foregoing process does not change for a predetermined time, the virtual world processing method may determine the facial expression basis range using the maximum value and the minimum value determined by Equation 2-2.

The three values τ0, τmax, and τmin estimated by the virtual world processing method may be used as initial parameters for generating the facial expression made by the user. For example, in regard to lips, the virtual world processing method may use a left end point of an upper lip as a neutral value, the maximum value, and the minimum value of a parameter of the facial expression basis determining a lip expression.

The virtual world processing method may control the parameter value of the facial expression basis of the avatar using a mapping function and sensed information. That is, the virtual world processing method may control the facial expression of the avatar using the mapping function.

The mapping function defines mapping relations between a range of the facial expression basis with respect to the user and a range of the facial expression with respect to the avatar. The mapping function may be generated by the virtual world processing method.

For example, when τ0 is input as the parameter value with respect to the facial expression basis of the user, the mapping function may output τ′0 as the parameter value with respect to the facial expression basis of the avatar. When τmax is input as the parameter value with respect to the facial expression basis of the user, the mapping function may output τ′max as the parameter value with respect to the facial expression basis of the avatar. Also, when τmin is input as the parameter value with respect to the facial expression basis of the user, the mapping function may output τ′min as the parameter value with respect to the facial expression basis of the avatar.

When a value between τmax and τmin is input, the mapping function may output an interpolated value using Equation 3-2.

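$$\tau'_t = \begin{cases} \dfrac{\tau'_{\max} - \tau'_0}{\tau_{\max} - \tau_0} \times (\tau_t - \tau_0) + \tau'_0, & \text{if } \tau_{\max} \ge \tau_t \ge \tau_0 \\[2ex] \dfrac{\tau'_0 - \tau'_{\min}}{\tau_0 - \tau_{\min}} \times (\tau_t - \tau_0) + \tau'_0, & \text{if } \tau_0 > \tau_t \ge \tau_{\min} \end{cases} \qquad \text{[Equation 3-2]}$$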
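As a worked illustration of Equation 3-2, suppose the user's range is τmin = −0.5, τ0 = 0.25, τmax = 1.0 and the avatar's range is τ′min = −50, τ′0 = 0, τ′max = 100. These numbers are hypothetical and chosen only for easy arithmetic. An input above the neutral value uses the first branch and an input below it uses the second:

$$\tau_t = 0.625:\quad \tau'_t = \frac{100 - 0}{1.0 - 0.25} \times (0.625 - 0.25) + 0 = \frac{100}{0.75} \times 0.375 = 50$$

$$\tau_t = -0.125:\quad \tau'_t = \frac{0 - (-50)}{0.25 - (-0.5)} \times (-0.125 - 0.25) + 0 = \frac{50}{0.75} \times (-0.375) = -25$$

In both cases the input sits halfway between the neutral value and the corresponding extreme of the user's range, and the output sits halfway between the neutral value and the corresponding extreme of the avatar's range.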

The methods according to the above-described example embodiments may be recorded in non-transitory computer-readable media including program instructions to implement various operations embodied by a computer. The embodiments may also be implemented in computing hardware (computing apparatus) and/or software, such as (in a non-limiting example) any computer that can store, retrieve, process and/or output data and/or communicate with other computers. The results produced can be displayed on a display of the computing hardware. A program/software implementing the embodiments may be recorded on non-transitory computer-readable media comprising computer-readable recording media. The media may also include, alone or in combination with the program instructions, data files, data structures, and the like. The program instructions recorded on the media may be those specially designed and constructed for the purposes of the example embodiments, or they may be of the kind well-known and available to those having skill in the computer software arts. Examples of non-transitory computer-readable media include magnetic media such as hard disks, floppy disks, and magnetic tape; optical media such as CD ROM discs and DVDs; magneto-optical media such as optical discs; and hardware devices that are specially configured to store and perform program instructions, such as read-only memory (ROM), random access memory (RAM), flash memory, and the like. Examples of the magnetic recording apparatus include a hard disk device (HDD), a flexible disk (FD), and a magnetic tape (MT). Examples of the optical disk include a DVD (Digital Versatile Disc), a DVD-RAM, a CD-ROM (Compact Disc-Read Only Memory), and a CD-R (Recordable)/RW. The media may be transfer media such as optical lines, metal lines, or waveguides including a carrier wave for transmitting a signal designating the program command and the data construction. 
Examples of program instructions include both machine code, such as produced by a compiler, and files containing higher level code that may be executed by the computer using an interpreter. The described hardware devices may be configured to act as one or more software modules in order to perform the operations of the above-described example embodiments, or vice versa.

Further, according to an aspect of the embodiments, any combinations of the described features, functions and/or operations can be provided.

Moreover, the virtual world processing apparatus, as shown in at least FIG. 2, may include at least one processor to execute at least one of the above-described units and methods.

Although example embodiments have been shown and described, it would be appreciated by those skilled in the art that changes may be made in these example embodiments without departing from the principles and spirit of the disclosure, the scope of which is defined in the claims and their equivalents.

Claims

- A virtual world processing apparatus for controlling a facial expression of an avatar in a virtual world, by using a facial expression of a user in a real world, the apparatus comprising: a receiving unit configured to receive sensed information sensed by an intelligent camera, the sensed information relating to a facial expression basis of the user; and a computer processor configured to: generate facial expression data of the user, for controlling the facial expression of the avatar, by using: initialization information representing a parameter used for initializing the facial expression basis of the user, a 2-dimensional/3-dimensional (2D/3D) model defining a coding method for a face object of the avatar, and the received sensed information, obtain a face image of the user, a first matrix representing translation and rotation of a face of the user, and a second matrix extracted from components of the face of the user, calculate the parameter value of the facial expression basis, based on: the face image, the first matrix, and the second matrix, and update at least one of the maximum value and the minimum value of the facial expression basis, in real time, by comparing the calculated parameter value of the facial expression basis and a previous parameter value of the facial expression basis.

- The virtual world processing apparatus of claim 1, further comprising: an output unit configured to output the facial expression data to an adaptation engine.

- The virtual world processing apparatus of claim 2, wherein the output unit is configured to output the generated facial expression data to a server, and to store the generated facial expression data in a database.

- The virtual world processing apparatus of claim 3, wherein the generated facial expression data stored in the database is used as an initialization intelligent camera device command that defines a parameter used for initializing the facial expression basis of the user.

- The virtual world processing apparatus of claim 1, wherein the initialization information is received from any of: the intelligent camera and an adaptation engine.

- The virtual world processing apparatus of claim 1, wherein the sensed information comprises at least one attribute selected from: an identifier (ID) used for identification of the facial expression basis; a parameter value of the facial expression basis; and a parameter unit of the facial expression basis.

- The virtual world processing apparatus of claim 1, wherein the initialization information comprises an initial intelligent camera facial expression basis type that defines a parameter used for initializing the facial expression of the user, and wherein the initial intelligent camera facial expression basis type comprises at least one element selected from: a distance between an upper eyelid and a lower eyelid of the user while the user has a neutral facial expression; a distance between two eyes of the user while the user has the neutral facial expression; a distance between the two eyes and a nose of the user while the user has the neutral facial expression; a distance between the nose and a mouth of the user while the user has the neutral facial expression; a width of the mouth of the user while the user has the neutral facial expression; and a facial expression basis range with respect to a parameter value of the facial expression basis of the user.

- The virtual world processing apparatus of claim 7, wherein the facial expression basis range comprises at least one attribute selected from: an identification (ID) of the facial expression basis; a maximum value of the parameter value of the facial expression basis; a minimum value of the parameter value of the facial expression basis; a neutral value, denoting the parameter value of the facial expression basis while the user has the neutral facial expression; and a parameter unit of the facial expression basis.

- The virtual world processing apparatus of claim 8, wherein the computer processor is configured to update at least one of the maximum value and the minimum value, in real time, based on the sensed information.

- The virtual world processing apparatus of claim 7, wherein the computer processor is configured to control the parameter value of the facial expression basis, by using the sensed information and a mapping function that defines relations between: the facial expression basis range with respect to the user, and a facial expression basis range with respect to the avatar.

- The virtual world processing apparatus of claim 1, wherein the computer processor is configured to normalize the facial expression basis by using the initialization information, when a facial shape of the user in the real world is different from a facial shape of the avatar in the virtual world.

- The virtual world processing apparatus of claim 1, wherein the 2D/3D model comprises: a face description parameter (FDP) which defines a face of the user, and a facial animation parameter (FAP) which defines motions of the face of the user.

- The system for enabling interaction between a real world and a virtual world of claim 1, wherein the maximum value is updated when the calculated parameter value of the facial expression basis is greater than the previous parameter value of the facial expression basis.

- A virtual world processing apparatus for controlling a facial expression of an avatar in a virtual world, by using a facial expression of a user in a real world, the apparatus comprising: a receiving unit configured to receive sensed information sensed by an intelligent camera, wherein the sensed information relates to a facial expression basis of the user; and a computer processor configured to generate facial expression data of the user, for controlling the facial expression of the avatar, by using: initialization information representing a parameter used for initializing the facial expression basis of the user, a 2-dimensional/3-dimensional (2D/3D) model defining a coding method for a face object of the avatar, and the sensed information received from the intelligent camera; wherein the initialization information comprises an initial intelligent camera facial expression basis type that defines a parameter used for initializing the facial expression of the user, and wherein the initial intelligent camera facial expression basis type comprises at least one element selected from: a distance between an upper eyelid and a lower eyelid of the user while the user has a neutral facial expression, a distance between two eyes of the user while the user has the neutral facial expression, a distance between the two eyes and a nose of the user while the user has the neutral facial expression, a distance between the nose and a mouth of the user while the user has the neutral facial expression, a width of the mouth of the user while the user has the neutral facial expression, and a facial expression basis range with respect to a parameter value of the facial expression basis of the user; wherein the facial expression basis range comprises at least one attribute selected from: an identification (ID) of the facial expression basis, a maximum value of the parameter value of the facial expression basis, a minimum value of the parameter value of the facial expression basis, a neutral value, denoting the parameter value of the facial expression basis while the user has the neutral facial expression, and a parameter unit of the facial expression basis; wherein the computer processor is configured to update, in real time, at least one of the maximum value and the minimum value, based on the sensed information; wherein the computer processor is configured to obtain a face image of the user, a first matrix representing translation and rotation of a face of the user, and a second matrix extracted from components of the face of the user; wherein the computer processor is configured to calculate the parameter value of the facial expression basis, based on: the face image, the first matrix, and the second matrix; and wherein the computer processor is configured to update at least one of the maximum value and the minimum value, in real time, by comparing the calculated parameter value of the facial expression basis and a previous parameter value of the facial expression basis.

- The virtual world processing apparatus of claim 14, wherein the maximum value is updated when the calculated parameter value of the facial expression basis is greater than the previous parameter value of the facial expression basis.

- The virtual world processing apparatus of claim 14, wherein the computer processor is configured to compensate an outlier, with respect to the calculated parameter value of the facial expression, and wherein the computer processor is configured to update at least one of the maximum value and the minimum value, in real time, by comparing the outlier-compensated parameter value of the facial expression basis and the previous parameter value of the facial expression basis.

- A virtual world processing method for controlling a facial expression of an avatar in a virtual world, by using a facial expression of a user in a real world, the method comprising: receiving sensed information sensed by an intelligent camera, the sensed information relating to a facial expression basis of the user; and generating facial expression data of the user, for controlling the facial expression of the avatar, by using initialization information representing a parameter used for initializing the facial expression basis of the user, a 2-dimensional/3-dimensional (2D/3D) model defining a coding method for a face object of the avatar, and the sensed information received from the intelligent camera; obtaining a face image of the user, a first matrix representing translation and rotation of a face of the user, and a second matrix extracted from components of the face of the user; calculating the parameter value of the facial expression basis, based on: the face image, the first matrix, and the second matrix; and updating, in real time, at least one of the maximum value and the minimum value of the facial expression basis, by comparing the calculated parameter value of the facial expression basis and a previous parameter value of the facial expression basis.

- A non-transitory computer readable recording medium storing a program to cause a computer to implement the method of claim 17.

- A virtual world processing method, the method comprising: receiving sensed information sensed by camera sensor, the sensed information relating to a facial expression basis of a user in a real world; and controlling, by use of a computer processor, a facial expression of an avatar in a virtual world, by using facial expression data generated based on the sensed information received from the camera sensor; obtaining a face image of the user, a first matrix representing translation and rotation of a face of the user, and a second matrix extracted from components of the face of the user; and updating, in real time, at least one of the maximum value and the minimum value of the facial expression basis, by comparing the calculated parameter value of the facial expression basis and a previous parameter value of the facial expression basis; wherein the parameter value of the facial expression basis is based on: the face image, the first matrix, and the second matrix.

- The virtual world processing method of claim 19, wherein the controlling the facial expression of the avatar is further based on: initialization information representing a parameter used for initializing a facial expression basis of the user, and a 2-dimensional/3-dimensional (2D/3D) model defining a coding method for a face object of the avatar.
