U.S. Pat. No. 9,039,182

VIDEO GAME TO MONITOR RETINAL DISEASES

Assignee: iCheck Health Connection, Inc.

Issue Date: November 21, 2012


Abstract

Systems and methods for providing a video game to map macular visual acuity comprising a multiple choice test where the fixation point is ensured by brief simultaneous presentation of both central and pericentral targets. The game may be implemented on a hardware platform including a video display, a user input device, and a video camera. The camera is used to monitor ambient light level and the distance between the device and the eyes of the test subject. The game serves as a macular acuity perimeter that produces a map of the acuity of an eye that may be compared with normative data. The type of acuity tested is preferably Vernier acuity, but resolution acuity can also be tested. The test results are transmitted to a health care professional by telecommunications means to facilitate the diagnosis or monitoring of age-related macular degeneration or other relevant eye diseases.

Description


DETAILED DESCRIPTION OF THE INVENTION

Overview

Embodiments of the present invention are useful for the detection and monitoring of retinal diseases affecting primarily the macula. There are many such diseases, but the most common ones are age-related macular degeneration (AMD) and diabetic retinopathy.

Generally, embodiments of the present invention include a video game or program configured to map macular visual acuity comprising a multiple choice test wherein a fixation point is ensured by brief, simultaneous presentation of both central and pericentral targets. The game is implemented on a hardware platform comprising a video display, a user input device, and an image or video camera. The camera is used to monitor ambient light level, and to monitor the distance between the device and the eyes of the test subject. The game serves as a macular acuity perimeter that produces a map of the acuity of an eye that may be compared with normative data. The type of acuity tested is preferably Vernier acuity (also called “hyperacuity”), but resolution acuity or other types can also be tested.

The test is suitable to be self-administered by the user (also referred to as the player or the subject herein) with or without professional supervision. The results may be transmitted (e.g., wirelessly) to a health care professional by telecommunications means to facilitate the diagnosis or monitoring of age-related macular degeneration or other relevant eye diseases. Embodiments of the present invention are sometimes referred to herein as the macular acuity perimetry (MAP) test.

The Apparatus

Embodiments of the present invention include a computer with a video monitor, a video camera, and a human-user input device. One example of an integrated apparatus serving these functions is the iPad 2® (Apple Inc., Cupertino, Calif.). Other computers or computer systems with similar functionalities may also be used. Referring to FIG. 1, a device 100 is shown that has a video camera 110 configured to monitor the distance between the device and a test subject's eyes. The device 100 also comprises a touch screen display 120 that is divided into a main game play area 121 and an ancillary area 122. The play area 121 is used to display the visual action of a game. The play area 121 is preferably approximately square, but other shapes may also be used. The ancillary area 122 is used as an ancillary human-user input and score display, as discussed below.

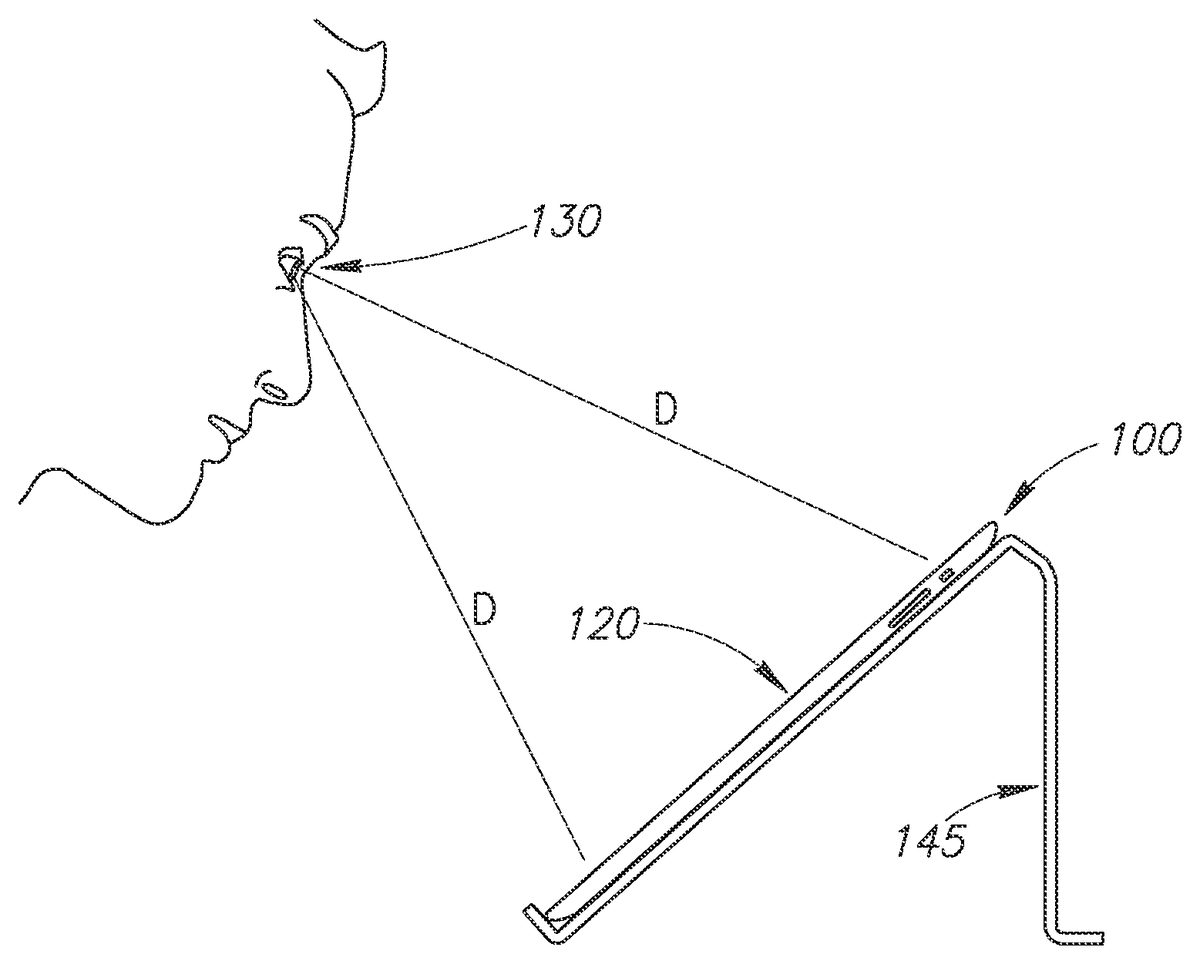

Referring to FIG. 2, the device 100 may be positioned on a stand 145 such that the user's eye 130 is approximately the same distance (D) from the top and the bottom of the device's display 120. The camera 110 on the front of the device 100 is used to monitor ambient light. The test is preferably performed in dim room lighting (low scotopic). The brightness of the screen 120 may be automatically adjusted according to the ambient light level within an acceptable range. Outside of the acceptable range, a warning message may be provided on the screen 120 to instruct the user to increase or decrease the room lighting appropriately.

Referring to FIGS. 3A and 3B, an occluder 160 is shown that may be used to occlude vision in one eye so the other eye can be tested using the video game of the present invention. The occluder 160 could be mounted on spectacles 150 or could be fixed on the user's head using straps. The occluder 160 has a visible feature 165 of known dimensions which is captured by the video camera 110 and can be analyzed by a computer (see FIG. 4) of the device 100 to monitor the distance between the subject's eyes and the device. As shown, the visible feature 165 could include, for example, a horizontal bar 165A with well-defined termination points (e.g., vertical bars 165B and 165C) so that the length of the horizontal bar may be easily determined by computerized automatic image processing. Other shapes or patterns, such as a circle or rectangle, could also be used. Based on the video analysis, the device 100 may display an instruction 140 on the screen 120 (and/or by sound) so the user can position his or her head within the optimal range of distance from the device.
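As a rough illustration of how the known dimensions of the visible feature could yield a distance, the following Python sketch applies a simple pinhole-camera relation. The pinhole model, the function names, the prompt strings, and the camera focal length expressed in pixels are assumptions made for illustration and are not specified in this description; the 17 to 19 inch default range is the example target range given in the setup discussion.

```python
def estimate_distance_inches(feature_length_in, feature_length_px, focal_length_px):
    """Pinhole-camera estimate of camera-to-feature distance.

    distance = focal length (pixels) * real length / imaged length (pixels).
    focal_length_px is a hypothetical calibration constant for the camera.
    """
    if feature_length_px <= 0:
        raise ValueError("calibration feature not detected in the frame")
    return focal_length_px * feature_length_in / feature_length_px


def distance_instruction(distance_in, low_in=17.0, high_in=19.0):
    """Return a hypothetical prompt that steers the user into the target range."""
    if distance_in < low_in:
        return "move farther"
    if distance_in > high_in:
        return "move closer"
    return "hold position"


# Example with made-up numbers: a 2 inch bar imaged at 100 px, focal length 900 px.
d = estimate_distance_inches(2.0, 100.0, 900.0)
prompt = distance_instruction(d)
```

In this sketch the imaged length of the bar shrinks in proportion to distance, so a single calibration constant is enough to convert a measured pixel length into a viewing distance.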

An alternative method, shown in FIG. 3C, of obtaining the desired viewing distance D asks the user to adjust the viewing distance until the real-time video display of the occluder 160 has the correct size. In the example shown, the user compares the video display of the calibration feature 165 against a regularly spaced vertical line overlay 141. The user moves his/her head and/or the device 100 back and forth until the length of the feature 165 (e.g., between vertical bars 165B and 165C) spans two interval spacings between the vertical lines 141.

Another alternative method for the device 100 to monitor viewing distance is to analyze the size of the subject's eye (e.g., corneal width from limbus to limbus) being tested or other features on the subject's face. For this alternative to work, a video frame may first be taken when the user's face is at a known distance from the camera 110. As an example, the distance could initially be established using a measuring tape or ruler with a known length.

Referring now to FIG. 4, an input device 123 and an output device 120 are shown connected to a computer 166 of the device 100. The term computer used in this instance refers to processors, memory, data/control bus, etc., as opposed to the peripheral input and output devices. The input and output functions can both be performed on the same touch screen, as depicted in FIG. 1. The video camera 110 produces image frames that are processed by the computer 166 to monitor the distance between the subject's eyes and the device 100. The subject produces action in the video game with the input device 123 and the game background and actions are displayed on the video display or output device 120. The game sounds are output on a speaker 125.

The test results may be transmitted or uploaded (e.g., wirelessly) to a server 168 over a network 167 (e.g., the Internet, a mobile communications network, etc.). This feature allows for the storage, tracking, review, and analysis of the test results over time to detect patterns, such as the deterioration of a patient's vision. The patient, his or her healthcare professionals, or others may access the data stored on the server 168 through a web browser or via a link to an electronic health record system of a healthcare facility. The test results data may be processed and presented in a manner that is useful for the patient and/or healthcare provider to analyze the results.

The server 168 may also be configured to provide notifications or alerts to the patient or their healthcare provider for any changes in vision that may require further attention or treatment. These alerts may be sent to a patient's and/or healthcare provider's electronic devices (e.g., the mobile phone 169) via email, SMS messages, voice messages, or any other suitable messaging system. For example, if an analysis of the uploaded test results reveals that a patient's vision is deteriorating, the server 168 may automatically send a message to the patient and/or a healthcare provider to alert them of the change in condition. Thus, appropriate action or treatment may be provided.

Initial Setup

The user is instructed to perform the setup steps by the device 100 without the need of human professional instruction and supervision, though a human supervisor could be helpful to assure proper use.

The first time the subject takes the test, the subject's identifying information (e.g., name, age, etc.) may be entered into the computer 166 using the user input interface 123. An acuity map of a normal population may be used as the initial estimate of the subject's acuity map. For subsequent tests, the initial estimate may be the average of the subject's recent tests.

Since a game is used to perform the MAP test, the terms “game” and “test” are used interchangeably herein. Further, the user of the device 100 is the subject of the MAP test and the game player. Therefore, the terms “user,” “subject,” and “player” are also used interchangeably.

Before and/or during each game, the brightness of the screen 120 may be adjusted to the desired range by the use of the camera 110 (see FIG. 1) as described above. If the ambient light detected by the camera 110 is too high or low to be compensated for by adjusting the brightness, a message may be displayed on the display area 120 so the user can adjust the light level in the room. The test should generally be administered with the light level in the low scotopic range.

The test is administered at a viewing distance that is sufficient to provide useful AMD diagnostic information. For example, the iPad 2® used in some embodiments has a screen that is 5.8 inches wide. Referring back to FIG. 1, the display area 120 uses this full width of the screen. This provides a maximum perimetry testing area of 18 degrees full width at a viewing distance of 18 inches, using the methods of the current invention. As discussed above, the device 100 monitors the viewing distance D by taking images of the user's face (see FIG. 3) using the camera 110. The computer 166 (see FIG. 4) analyzes the visible feature 165 on the occluder 160 to compute the distance between the camera 110 and the occluder 160, which is approximately the same as the viewing distance. At the setup of each game, the device 100 instructs the user to move his or her head into position so the image of their face (in particular, the occluder 160) can be captured by the camera 110 and displayed in display area 120. The device 100 then instructs the user to move closer to or further from the display area 120 to bring the user's eyes into the target range of viewing distance. The initial target range may be 17 to 19 inches, for example.
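The relationship between screen width, viewing distance, and testable field quoted above can be checked with a short calculation. The sketch below is illustrative only; the function name is hypothetical, and it assumes a flat screen viewed on-axis with the eye centered in front of it.

```python
import math


def full_visual_angle_deg(width_in, distance_in):
    """Full angle (degrees) subtended by a flat screen of the given width,
    viewed from a point centered in front of it."""
    return 2.0 * math.degrees(math.atan((width_in / 2.0) / distance_in))


# 5.8 inch wide display at 18 inches: a little over 18 degrees full width.
angle_center = full_visual_angle_deg(5.8, 18.0)

# The same display at the ends of the 17 to 19 inch example target range.
angle_near = full_visual_angle_deg(5.8, 17.0)
angle_far = full_visual_angle_deg(5.8, 19.0)
```

Across the 17 to 19 inch range the subtended angle changes by roughly two degrees, which suggests why the viewing distance is monitored rather than assumed.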

Generally, the user should be wearing spectacle correction for their best vision within the operating range of the viewing distance. For an emmetrope, a pair of reading glasses with power of +2.25 D would be optimal for the viewing distance of 18 inches. If spectacles are used, the occluder 160 should be mounted over the spectacle lens over the eye not being tested. If no spectacles are needed or if the subject is using contact lenses, the occluder 160 could be mounted over plano glasses or strapped on as an eye patch.
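As a worked check of the lens power quoted above, and assuming for illustration that the spectacle lens supplies all of the focusing demand at the viewing distance, the required power is the reciprocal of the viewing distance $d$ expressed in meters:

$$P = \frac{1}{d} = \frac{1}{18 \times 0.0254\ \text{m}} = \frac{1}{0.4572\ \text{m}} \approx 2.19\ \text{D}$$

Rounded to the nearest 0.25 D step, this gives approximately +2.25 D.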

Game Playing and Perimetry Test Cycle

Many game scenarios could be devised based on the principles of the current invention. For the purpose of demonstration, an exemplary flash card multiple-choice game illustrated in FIGS. 5-20 is described.

The initial rounds of the game are used to establish central visual acuity. This is done using several rounds of “open card” games. Referring to FIG. 5, the display area 120 has a uniform background (e.g., a green background) with a number of open cards 201-204 thereon displaying visual acuity targets over a range of sizes bracketing around the user's estimated central acuity. Vernier acuity targets are preferably used, though normal acuity targets can also be employed. Vernier acuity targets test relative displacements such as relative shifts between two groups of line segments, as shown by the cards 201-204 in FIG. 5. A single line can also be used instead of multiple lines. Other types of unevenness or distortions in a straight line, curve, or circle can also be used. In an opening round, the subject selects the smallest card 201-204 on which he (or she) can perceive the shift between line segments by tapping on the touch screen 120 with a finger 210, as shown in FIG. 6. The visual angle subtended by the spatial shift defines the Vernier acuity.

The selected acuity level is then confirmed and refined using a multiple-choice test. Referring to FIG. 7, four open cards 221-224 each showing the same size target are displayed. The player is tasked to select the one card of the cards 221-224 that is different from the other cards. In this example, the card 221 has a lateral shift between the line segments, while the other three cards 222-224 have no shift (aligned). For the sake of brevity, cards with shifted line segments are referred to as “shifted” cards and cards with aligned line segments are referred to as “aligned” cards. Because the subject only needs to pick out the one card that differs from the others, the test could involve one shifted card and several aligned cards, or one aligned card and several shifted cards. A greater or smaller number of cards (for example, 2, 3, or 9 cards) could also be used.

Referring to FIG. 8, the subject chooses the one of the cards 221-224 that he believes is different from the other cards by tapping on the card on the touch screen 120 with a finger 225. In this example, the choice was wrong and therefore the device 100 displays the correct choice with a message 226 and displays a score 227 saying “0 correct out of 1 test.” If the subject cannot see the patterns on the card, then the subject should tap on a “cannot see” button 228 rather than tap on the wrong choice. This should be explained in the game instructions so the test may proceed faster. After a brief delay, a new round of the game is started with a display similar to that shown in FIG. 7, but with a new set of cards where the location and type (shifted versus aligned lines) of the correct choice is different (e.g., randomly selected, etc.). In some embodiments, the central acuity level is established when the player chooses a sufficient number of correct cards at a certain error level (e.g., a 5% error level, meaning that the probability of achieving an equal or greater number of correct choices by random chance is less than 5%).

Given a choice of four cards each round and allowing for zero selection error, the subject needs to make the correct choice in three rounds of the game to establish that he was able to perceive the correct choice at the acuity level being displayed. If this occurs, then the acuity level is raised (i.e., the lateral shift is made smaller) and more rounds of games are played until the user's perception is established or refuted. If the player clicks the “cannot see” button 228 (see FIG. 8) or makes the correct choice in only one of three rounds, then perception is refuted. If he makes the correct choice in two of three rounds (a borderline case), then one additional round is played for a total of four rounds. If the player makes the correct choice in three of four rounds, then perception is established. If he makes the correct choice in only two of four rounds, then perception is refuted. Thus for a choice of four cards per round, three rounds are played if the player makes no error and four rounds are played if the player makes one error. For a choice of fewer cards, a larger number of rounds is needed. The numbers of test rounds needed are tabulated in Table 1 shown below.

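TABLE 1. The number of test rounds needed to establish perception at <5% error level.

| # of choices | 0 wrong choices allowed | 1 wrong choice allowed |
|---|---|---|
| 2 | 5 | 8 |
| 3 | 3 | 5 |
| 4 | 3 | 4 |
| 9 | 2 | 3 |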

Table 1 is calculated based on the following equations on the condition that $P_{y \le Y} < 5\%$.

$$P_c(x) = \frac{n!\,\left(\frac{1}{c}\right)^{x}\left(1-\frac{1}{c}\right)^{n-x}}{x!\,(n-x)!} \qquad \text{(Equation 1)}$$

$$P_{y \le Y} = \sum_{x=n-Y}^{n} P_c(x) \qquad \text{(Equation 2)}$$

where $P_c(x)$ is the probability of the number of correct choices being arrived at by random chance, $x$ is the number of rounds in which the correct card was picked, $n$ is the total number of rounds played, $c$ is the number of cards to choose from in each round, $P_{y \le Y}$ is the probability that $y \le Y$ by random chance, $y$ is the number of rounds in which the wrong card was picked, and $Y$ is the number of wrong choices allowed.
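Equations 1 and 2 can be evaluated directly. The Python sketch below is an illustration rather than the computation actually used to prepare Table 1; the function names and the strict "less than 5%" cutoff are assumptions.

```python
from math import comb


def chance_probability(n, c, max_wrong):
    """Equation 2: probability of at most max_wrong wrong picks in n rounds
    when each pick is a pure guess among c cards (Equation 1 summed over x)."""
    p = 1.0 / c
    return sum(
        comb(n, x) * p**x * (1.0 - p) ** (n - x)
        for x in range(n - max_wrong, n + 1)
    )


def rounds_needed(c, max_wrong, alpha=0.05):
    """Smallest n for which the guessing probability falls strictly below alpha."""
    n = max_wrong + 1
    while chance_probability(n, c, max_wrong) >= alpha:
        n += 1
    return n


two_choice = (rounds_needed(2, 0), rounds_needed(2, 1))
nine_choice = (rounds_needed(9, 0), rounds_needed(9, 1))
four_choice_one_error_at_4 = chance_probability(4, 4, 1)  # equals 13/256
```

With a strict 5% cutoff, this sketch reproduces the Table 1 entries for two, three, and nine choices and for four choices with no error allowed. For four choices with one error allowed, four rounds give a guessing probability of 13/256, or about 5.1%, so the table entry of 4 appears to treat that value as approximately 5%; the strict cutoff in this sketch would return 5 instead.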

Once the central acuity is established in the initial rounds, the game proceeds to map parafoveal and perifoveal acuity. Anatomically, the fovea refers to the region approximately 1 mm in diameter, the parafovea refers to the surrounding region 2.5 mm (8 degrees) in diameter, and the perifovea the surrounding region 5.5 mm (18 degrees) in diameter. Again, Vernier acuity targets are preferred, but standard acuity targets can also be used. Preferably, a “flash card” game is used.

An example of parafoveal acuity perimetry is first described. Referring to FIG. 9, three cards 231-233 are dealt face down with their line patterns covered. A game score 234 is shown in the ancillary display area 122 to inform the player of the number of correct choices made and the number of test rounds played. The player activates a “flash reveal” (i.e., a brief display of the face of the cards) of the cards by a finger tap 235 in the display area 122. The player is asked to fixate on the central card 232 at this stage of the game. The “back” of this card may include a “fixation location indicator” (e.g., “look here”) to instruct the user to fixate on the central card 232. Referring to FIG. 10, the shifted or aligned line patterns associated with cards 231-233 are revealed in a brief flash (e.g., 0.2 seconds, 0.5 seconds, etc.). The patterns on the faces of the cards 231-233 should be revealed for only a short period of time so the player does not have a chance to shift their gaze from the central card 232 to a side card 231 or 233. After a fraction of a second, the side cards 231 and 233 are once again covered to conceal the line patterns (see FIG. 11). The player is then asked to identify which of the concealed cards 231 and 233 has the same pattern as the central card 232. In this example, the player's finger tap 235 on card 233 is correct. Referring now to FIG. 12, the correct choice of the card 233 is rewarded by a smiley face icon 238 or other visual and/or sound effects. The score 234 is also updated to reflect the increase in the number of correct choices made and the number of rounds played.

FIGS. 13-16 illustrate one game round to test perifoveal vision. Referring to FIG. 13, five cards 241-245 are dealt face down. A game score 246 is shown in the display area 122 to inform the player of the number of correct choices made and the number of test rounds played. All of the cards 241-245 are dealt with the line patterns covered (i.e., face down). The player activates the flash reveal of the cards by a finger tap 247 in the area 122. The player is asked to fixate on the central card 243 at this stage of the game.

Referring to FIG. 14, the shifted or aligned line patterns associated with cards 241-245 are revealed in a brief flash. After a fraction of a second, the edge or side cards 241, 242, 244, and 245 are again covered to conceal the line patterns (see FIG. 15). The player is then asked to identify which of the concealed cards has the same pattern as the central card 243. In this example, the player's finger tap 247 on the card 245 chooses the incorrect card. Referring now to FIG. 16, the correct card 244 is then revealed and marked as correct by an indicator 226. The score 246 is also updated to reflect the number of correct choices made and the number of rounds played. Referring back to FIG. 15, the player can make the testing go much quicker by clicking the “Cannot See” button 248 when he is not able to see the patterns on the side cards 241, 242, 244, and 245.

FIGS. 17-20 illustrate a method of testing perifoveal vision on a small screen such as on a mobile phone. For example, on a typical mobile phone with a 2 inch screen width, the visual angle subtended by the screen is only 8 degrees, even at a closer viewing distance of 14 inches. If the fixation point is placed at the center of the screen, then the screen is only large enough to test parafoveal vision (8 degrees diameter), but not perifoveal vision (18 degrees diameter). However, the testable visual field can be doubled if the fixation point is placed at the peripheral edge of the display screen. Referring to FIG. 17, the fixation point or “fixation location indicator” is a card 253 located in a corner of the display area 120, with two other cards 251 and 252 positioned at the top-left edge and bottom-right edge, respectively, of the display area 120. As before, the player uses the finger tap 247 to briefly reveal the faces of the cards 251-253. Referring to FIG. 18, while fixating on the pattern on the face of the card 253, the player notes the patterns on the cards 251 and 252 in the perifoveal visual field. Referring to FIG. 19, the player chooses between cards 251 and 252 based on the memory of which card held the same pattern as the fixation card 253. And, as with other embodiments, the player also has the choice of a “cannot see” button 258. In this example, the player chose the correct card 252 and is rewarded with a visual symbol 259 (FIG. 20) and the game score 256 is incremented.
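The effect of moving the fixation point to the edge of a small screen can be illustrated numerically. The sketch below is a simplified geometric check, not part of the described method; the function name is hypothetical, and it assumes that the eye sits directly in front of the fixation point and that eccentricity is measured along the screen width.

```python
import math


def max_eccentricity_deg(screen_width_in, distance_in, fixation_at_edge):
    """Largest angular offset from the fixation point that still lands on the screen."""
    reach_in = screen_width_in if fixation_at_edge else screen_width_in / 2.0
    return math.degrees(math.atan(reach_in / distance_in))


# 2 inch wide phone screen viewed at 14 inches.
center_fixation = max_eccentricity_deg(2.0, 14.0, fixation_at_edge=False)
edge_fixation = max_eccentricity_deg(2.0, 14.0, fixation_at_edge=True)
```

Under these assumptions the reachable eccentricity is about 4 degrees with central fixation and about 8 degrees with edge fixation, which is consistent with the statement that the testable field is roughly doubled.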

Each round of the flash card game provides one data point on one location in the parafovea or perifovea. The game round can be described by a flow chart (see FIG. 21) for acuity perimetry testing. First, the presentation is set up at 300 by dealing the cards face down. Then, the acuity targets (shifted and aligned line patterns) are presented for a brief moment when the player gives a signal (e.g., a finger tap) to do so at 301. The parafoveal or perifoveal location being tested is the side card with the same line pattern as the central card. The player must choose (e.g., by finger tap) the correct location (or card) at 302. If the choice is correct (decision 303 equals Yes), then the score is incremented at 304. If the choice is incorrect (decision 303 equals No), then the score is not incremented at 305. The next round of the game is then played.

Mapping of Stimulus Perception Threshold

One output of the flash game is an acuity perimetry map. The dimension of the map is preferably approximately 16 degrees, which can be easily accommodated by tablet computers currently on the market. For example, the iPad 2® has a display area that is 5.8 inches wide. This provides a maximum visual field width of approximately 18 degrees (+/−9 degrees about a central fixation point) at a viewing distance of 18 inches. With the use of off-center fixation, 16 degrees of testing can be accomplished even on a smart phone screen.

Referring to FIG. 22, an acuity perimetry map 310 is presented as a polar grid of acuity values on the logarithm of minimum angle of resolution (LogMAR) scale. On the LogMAR scale, normal acuity (for the location) has a value of zero. Each ten-fold increase in the size of target needed for threshold perception increases LogMAR by 1.0. A LogMAR of 0.3 indicates that approximately a two-fold increase in target size is needed for threshold perception. Acuity is preferably determined to a precision of 0.1 LogMAR units. For the purpose of AMD screening and monitoring, targets of high contrast are used. The polar grid is divided into a central region 312 spanning the central 3 degrees diameter, parafoveal region 313 spanning the annulus from 3 degrees to 8 degrees diameter, and perifoveal region 314 spanning the annulus from 8 degrees to 16 degrees diameter. The parafoveal and perifoveal annuli are each subdivided into eight sectors. FIG. 22 represents one implementation. More or fewer sectors or annuli could be employed. Rectangular grids could also be employed instead of a polar grid. The map division shown in FIG. 22 may be preferred because the number of test locations (i.e., 17 test locations) is reasonable and the sampling density is appropriately weighted with denser central sampling.
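The LogMAR arithmetic and the count of test locations described above can be sketched as follows. This is an illustrative sketch only; the function names and the representation of each location as a (region, sector angle) pair are assumptions and do not appear in the description.

```python
import math


def size_factor(logmar):
    """Multiple of the normal threshold target size implied by a LogMAR value."""
    return 10.0 ** logmar


def logmar_from_factor(factor):
    """LogMAR value implied by a multiple of the normal threshold target size."""
    return math.log10(factor)


def test_locations(sectors=8):
    """One central location plus `sectors` locations in each of the two annuli."""
    locations = [("central", None)]
    for region in ("parafoveal", "perifoveal"):
        for s in range(sectors):
            locations.append((region, s * 360.0 / sectors))
    return locations


doubling = size_factor(0.3)        # close to 2
grid = test_locations()            # 1 + 8 + 8 locations
```

Here a LogMAR of 0.3 corresponds to a factor of about 1.995, and the default grid contains the 17 test locations mentioned above.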

The map 310 is measured over many rounds of the game. The central acuity is tested in the initial rounds of the open card game as described above. The central acuity limits the smallest acuity target that could be used to test parafoveal and perifoveal vision. Then, a series of flash card games are played. The distribution of target locations depends on the test location and the number of choices given. For example, a two-choice (two choices of side cards plus one central card=three total cards) game is shown in FIG. 10, where card 233 is the location where acuity is being tested and card 231 is placed in the location opposite the test location, also within the parafoveal annulus. An example of a four-choice game is shown in FIG. 14, where card 244 is the location of acuity testing and three other cards (241, 242, and 245) are distributed evenly in the perifoveal annulus.

At the beginning of the game, the number of tests at each location can be found in column 2 of Table 1 shown above. For example, for a two-choice game, five tests are needed at each location. Since there are 16 parafoveal and perifoveal test locations (see FIG. 22), a total of 80 flash card rounds are needed. These rounds are randomly ordered into a game sequence and played. At each round, the central target line pattern is randomly assigned to be either aligned or shifted. After the primary flash card rounds, the acuity target at a location is considered to be perceived if it is correctly chosen in all tests (e.g., five out of five times for the two-choice game), and considered not perceived if two or more incorrect choices are made. The test results at some locations could be equivocal (e.g., one error out of five choices), and three more tests are needed at these locations. These secondary flash card rounds are also randomly sequenced and played. After the secondary flash card rounds at each location, the target is considered perceived if no more than one incorrect choice is made in total, and is considered not perceived if two or more incorrect choices are made. Depending on perception, the target size at each location is then incremented or decremented for the next series of flash card rounds. The game series are played until enough information is accumulated to determine the LogMAR acuity map.
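The primary and secondary round logic for the two-choice game can be sketched in Python as below. The function names, the string labels, and the use of integer location indices are assumptions made for illustration; the thresholds follow the two-choice example in the text (five primary tests, three secondary tests).

```python
import random


def build_sequence(n_locations=16, tests_per_location=5, rng=None):
    """Randomly ordered list of (location index, central pattern) rounds."""
    rng = rng or random.Random()
    rounds = [
        (location, rng.choice(["aligned", "shifted"]))
        for location in range(n_locations)
        for _ in range(tests_per_location)
    ]
    rng.shuffle(rounds)
    return rounds


def classify(wrong_primary, wrong_secondary=None):
    """Classify one location from its count of wrong choices.

    wrong_secondary is None until the secondary rounds have been played.
    """
    if wrong_primary == 0:
        return "perceived"
    if wrong_primary >= 2:
        return "not perceived"
    if wrong_secondary is None:
        return "equivocal"  # exactly one error: three more tests are needed
    total_wrong = wrong_primary + wrong_secondary
    return "perceived" if total_wrong <= 1 else "not perceived"


primary_rounds = build_sequence()
```

With the default arguments the sequence contains 16 x 5 = 80 rounds, matching the count given above.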

The series of testing needed to determine LogMAR is determined by an iterative bracketing algorithm. Referring to FIG. 23, the upper and lower bounds of LogMAR acuity at a parafoveal or perifoveal location are initially set to the largest and smallest possible targets at 340. The initial size of the acuity target is set according to a best guess. If the game has been played before (decision 341 equals Yes), then the best guess may be the average of recent games at 342. For example, the results of the most recent three games in the past month could be averaged. If no game has been played before (decision 341 equals No), the best guess may be the average LogMAR acuity level at the same test location for a normal reference population.

The target is then presented in a series of flash card games as described above at 344. If the target is perceived (decision 345 equals Yes), then the LogMAR upper bound is set to the target size and the target size for the next series of flash card tests is set one increment smaller at 346. The increment of target sizing is preferably 0.1 LogMAR units. If the target is not perceived (decision 345 equals No), then the LogMAR lower bound is set to the target size and the target size for the next series of testing is made one increment larger. If the upper and lower bounds are more than 0.1 LogMAR unit apart (decision 348 equals No), then additional series of game testing are done at the test location at 344 using the new target size. If the upper and lower bounds are only 0.1 LogMAR unit apart or less (decision 348 equals Yes), then no more testing is necessary at the location and the acuity output at the location is set to the upper bound (smallest target shown to be perceived) at 349. Other methods for approaching and determining the threshold value may be used. For example, rather than incrementing or decrementing the target size by one increment each interval, the target size may be set halfway between the upper bound and lower bound at each interval.

The number of choices in each flash card round should be determined by the ability of the player to rapidly process visual information. This ability will increase as more games are played. Thus, preferably, a two-choice flash card game is played initially, and then the player is given the opportunity to advance to a three-choice game if the score is high. Following this, the player is again given the opportunity to advance to a four-choice game if the score is high. A higher number of choices in each round means fewer rounds are needed (see Table 1 above). This leads to a shorter and more challenging game. However, because the primary purpose of the game is to test retinal function rather than visual processing, the number of choices per round is preferably kept relatively low (e.g., between two and four choices) so mistakes due to inattention are infrequent.

Alternative Test Targets

The tests illustrated so far utilize Vernier acuity targets, but it should be appreciated that alternative targets could be used to test different aspects of vision. For example, referring to FIG. 24, the targets could test line orientation 410 (targets 411, 412, and 413), Landolt C orientation 420 (targets 421, 422, and 423), color or gray level 430 (targets 431, 432, and 433 having differing gray levels or color levels), or color value or saturation (not shown). Commonly used Snellen letter or tumbling E acuity targets (not shown) or other targets could also be used.

Advantages

Embodiments of the present invention comprise a video game-based acuity perimetry test that has some or all of the following advantages as well as other advantages:

1) Embodiments of the present invention can be implemented on common consumer-owned hardware platforms such as a smart phone, laptop computer or a tablet computer (e.g., an iPad 2®). This allows more frequent testing by users.
2) The central fixation point is established by forcing the player to know the pattern of the central card. Thus, there is less chance of error due to the player cheating or otherwise shifting central gaze to a peripheral target.
3) For smaller screens, the fixation point is placed off-center to allow testing of perifoveal vision.
4) The video game uses interesting visual stimuli, visual action, and background scenery to help hold a user's attention.
5) The video game uses background music and action-generated sounds to help hold the user's attention.
6) The video game keeps a score related to a user's performance towards goals to help hold the user's attention and motivate repeated playing of the game.
7) The pace of the game is controlled by the player.
8) The distance between the eye being tested and the display screen is established by video imaging of the occluder, obviating the use of a chin rest or other devices to fix the head position relative to the display screen.
9) The ambient light level is monitored by the video camera included in the apparatus of the current invention.

Thus, the present invention provides a “home test” that can be self-administered by subjects who have AMD or are at risk for AMD, so that the test can be performed frequently (e.g., daily or weekly, etc.). The test may be in the form of a game that can maintain player interest. And the resulting macular acuity map may be automatically analyzed by a computer and transmitted electronically to a physician or healthcare provider who monitors the patient's eye health.
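As a non-limiting sketch of the automatic analysis and electronic transmission described above, the following Python example compares a measured acuity map with normative values and packages the result as JSON for sending to a provider. The location labels, the convention that a larger threshold value means worse acuity, the `margin` parameter, and the report fields are all hypothetical; the actual transport (e.g., a network request) is omitted.

```python
import json


def flag_locations(acuity_map, normative_map, margin=0.3):
    """Return map locations whose threshold is worse than normal by more than margin.

    Both maps are dicts of location label -> threshold, where a larger
    threshold is assumed to mean worse acuity. Locations lacking a
    normative value are skipped.
    """
    flagged = []
    for location, measured in acuity_map.items():
        normal = normative_map.get(location)
        if normal is not None and measured - normal > margin:
            flagged.append(location)
    return sorted(flagged)


def build_report(user_id, eye, acuity_map, normative_map, margin=0.3):
    """Serialize the map and any flagged locations as a JSON string."""
    report = {
        "user_id": user_id,
        "eye": eye,
        "acuity_map": acuity_map,
        "flagged": flag_locations(acuity_map, normative_map, margin),
    }
    return json.dumps(report, sort_keys=True)
```

A report built this way could be stored locally and transmitted whenever a network connection is available, so that frequent home testing does not depend on continuous connectivity.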

Example Hardware Environment

FIG. 25 is a diagram of hardware and an operating environment in conjunction with which implementations of the device 100 may be practiced. The description of FIG. 25 is intended to provide a brief, general description of suitable computer hardware and a suitable computing environment in which implementations may be practiced. Although not required, implementations are described in the general context of computer-executable instructions, such as program modules, being executed by a computer, such as a personal computer. Generally, program modules include routines, programs, objects, components, data structures, etc., that perform particular tasks or implement particular abstract data types.

Moreover, those skilled in the art will appreciate that implementations may be practiced with other computer system configurations, including hand-held devices, multiprocessor systems, microprocessor-based or programmable consumer electronics, network PCs, minicomputers, mainframe computers, tablet computers, smartphones, and the like. Implementations may also be practiced in distributed computing environments where tasks are performed by remote processing devices that are linked through a communications network. In a distributed computing environment, program modules may be located in both local and remote memory storage devices.

The exemplary hardware and operating environment of FIG. 25 includes a general-purpose computing device in the form of a computing device 12. The device 100 may be implemented using one or more computing devices like the computing device 12.

The computing device 12 includes a system memory 22, a processing unit 21, and a system bus 23 that operatively couples various system components, including the system memory 22, to the processing unit 21. There may be only one or there may be more than one processing unit 21, such that the processor of computing device 12 includes a single central-processing unit (“CPU”), or a plurality of processing units, commonly referred to as a parallel processing environment. When multiple processing units are used, the processing units may be heterogeneous. By way of a non-limiting example, such a heterogeneous processing environment may include a conventional CPU, a conventional graphics processing unit (“GPU”), a floating-point unit (“FPU”), combinations thereof, and the like. The computing device 12 may be a tablet computer, a smart phone, a conventional computer, a distributed computer, or any other type of computer.

The system bus 23 may be any of several types of bus structures including a memory bus or memory controller, a peripheral bus, and a local bus using any of a variety of bus architectures. The system memory 22 may also be referred to as simply the memory, and includes read only memory (ROM) 24 and random access memory (RAM) 25. A basic input/output system (BIOS) 26, containing the basic routines that help to transfer information between elements within the computing device 12, such as during start-up, is stored in ROM 24. The computing device 12 further includes a flash memory 27, a magnetic disk drive 28 for reading from or writing to a removable magnetic disk 29, and an optical disk drive 30 for reading from or writing to a removable optical disk 31 such as a CD ROM, DVD, or other optical media.

The flash memory 27, magnetic disk drive 28, and optical disk drive 30 are connected to the system bus 23 by a flash memory interface 32, a magnetic disk drive interface 33, and an optical disk drive interface 34, respectively. The drives and their associated computer-readable media provide nonvolatile storage of computer-readable instructions, data structures, program modules, and other data for the computing device 12. It should be appreciated by those skilled in the art that any type of computer-readable media which can store data that is accessible by a computer, such as magnetic cassettes, hard disk drives, solid state memory devices (“SSD”), USB drives, digital video disks, Bernoulli cartridges, random access memories (RAMs), read only memories (ROMs), and the like, may be used in the exemplary operating environment. As is apparent to those of ordinary skill in the art, the flash memory 27 and other forms of computer-readable media (e.g., the removable magnetic disk 29, the removable optical disk 31, flash memory cards, hard disk drives, SSD, USB drives, and the like) accessible by the processing unit 21 may be considered components of the system memory 22.

A number of program modules may be stored on the flash memory 27, magnetic disk 29, optical disk 31, ROM 24, or RAM 25, including an operating system 35, one or more application programs 36, other program modules 37, and program data 38. A user may enter commands and information into the computing device 12 through input devices such as a keyboard 40 and input device 42. The input device 42 may include touch sensitive devices (e.g., a stylus, touch pad, touch screen, or the like), a microphone, joystick, game pad, satellite dish, scanner, video camera, depth camera, or the like. In a preferred embodiment, the user enters information into the computing device using an input device 42 that comprises a touch screen, such as touch screens commonly found on tablet computers (e.g., an iPad 2®). These and other input devices are often connected to the processing unit 21 through an input/output (I/O) interface 46 that is coupled to the system bus 23, but may be connected by other types of interfaces, including a serial port, parallel port, game port, a universal serial bus (USB), or a wireless interface (e.g., a Bluetooth interface). A monitor 47 or other type of display device is also connected to the system bus 23 via an interface, such as a video adapter 48. In addition to the monitor, computers typically include other peripheral output devices (not shown), such as speakers, printers, and haptic devices that provide tactile and/or other types of physical feedback (e.g., a force feedback game controller).

The computing device 12 may operate in a networked environment using logical connections (wired and/or wireless) to one or more remote computers, such as remote computer 49. These logical connections are achieved by a communication device coupled to or a part of the computing device 12 (as the local computer). Implementations are not limited to a particular type of communications device or interface.

The remote computer 49 may be another computer, a server, a router, a network PC, a client, a memory storage device, a peer device or other common network node or device, and typically includes some or all of the elements described above relative to the computing device 12. The remote computer 49 may be connected to a memory storage device 50. The logical connections depicted in FIG. 25 include a local-area network (LAN) 51 (wired or wireless) and a wide-area network (WAN) 52. Such networking environments are commonplace in offices, enterprise-wide computer networks, intranets and the Internet.

Those of ordinary skill in the art will appreciate that a LAN may be connected to a WAN via a modem using a carrier signal over a telephone network, cable network, cellular network (e.g., a mobile communications network such as 3G, 4G, etc.), or power lines. Such a modem may be connected to the computing device 12 by a network interface (e.g., a serial or other type of port). Further, many laptop or tablet computers may connect to a network via a cellular data modem.

When used in a LAN-networking environment, the computing device 12 may be connected to the local area network 51 through a network interface or adapter 53 (wired or wireless), which is one type of communications device. When used in a WAN networking environment, the computing device 12 typically includes a modem 54, a type of communications device, or any other type of communications device for establishing communications over the wide area network 52 (e.g., the Internet), such as one or more devices for implementing wireless radio technologies (e.g., GSM, etc.).

The modem 54, which may be internal or external, is connected to the system bus 23 via the I/O interface 46. The modem 54 may be configured to implement a wireless communications technology (e.g., mobile telecommunications system, etc.). In a networked environment, program modules depicted relative to the personal computing device 12, or portions thereof, may be stored in the remote computer 49 and/or the remote memory storage device 50. It is appreciated that the network connections shown are exemplary and that other means of, and communications devices or interfaces for, establishing a communications link between the computers may be used.

The computing device 12 and related components have been presented herein by way of particular example and also by abstraction in order to facilitate a high-level view of the concepts disclosed. The actual technical design and implementation may vary based on particular implementation while maintaining the overall nature of the concepts disclosed.

The foregoing described embodiments depict different components contained within, or connected with, different other components. It is to be understood that such depicted architectures are merely exemplary, and that in fact many other architectures can be implemented which achieve the same functionality. In a conceptual sense, any arrangement of components to achieve the same functionality is effectively “associated” such that the desired functionality is achieved. Likewise, any two components so associated can also be viewed as being “operably connected”, or “operably coupled”, to each other to achieve the desired functionality.

While particular embodiments of the present invention have been shown and described, it will be obvious to those skilled in the art that, based upon the teachings herein, changes and modifications may be made without departing from this invention and its broader aspects and, therefore, the appended claims are to encompass within their scope all such changes and modifications as are within the true spirit and scope of this invention. Furthermore, it is to be understood that the invention is solely defined by the appended claims. It will be understood by those within the art that, in general, terms used herein, and especially in the appended claims (e.g., bodies of the appended claims) are generally intended as “open” terms (e.g., the term “including” should be interpreted as “including but not limited to,” the term “having” should be interpreted as “having at least,” the term “includes” should be interpreted as “includes but is not limited to,” etc.).

It will be further understood by those within the art that if a specific number of an introduced claim recitation is intended, such an intent will be explicitly recited in the claim, and in the absence of such recitation no such intent is present. For example, as an aid to understanding, the following appended claims may contain usage of the introductory phrases “at least one” and “one or more” to introduce claim recitations. However, the use of such phrases should not be construed to imply that the introduction of a claim recitation by the indefinite articles “a” or “an” limits any particular claim containing such introduced claim recitation to inventions containing only one such recitation, even when the same claim includes the introductory phrases “one or more” or “at least one” and indefinite articles such as “a” or “an” (e.g., “a” and/or “an” should typically be interpreted to mean “at least one” or “one or more”); the same holds true for the use of definite articles used to introduce claim recitations. In addition, even if a specific number of an introduced claim recitation is explicitly recited, those skilled in the art will recognize that such recitation should typically be interpreted to mean at least the recited number (e.g., the bare recitation of “two recitations,” without other modifiers, typically means at least two recitations, or two or more recitations).

Accordingly, the invention is not limited except as by the appended claims.

Claims

- A computer-implemented method for testing macular visual acuity, comprising: displaying a first set of objects on a display of a computing device, the first set of objects including at least three objects, exactly one object in the first set objects being different from the other of the objects in the first set, wherein recognition of the difference between the one different object and the other of the objects is indicative of a user's visual acuity; receiving input from the user via a user input device of the computing device indicating a selection of one of the objects in the first set of objects; determining whether the user correctly selected the one different object from the first set of objects; and assessing the user's visual acuity based on the selections of the user.

- The computer-implemented method of claim 1, further comprising: capturing an image of the user using an image capture device of the computing device; determining the distance between the display of the computing device and the user based on the captured image; and providing an instruction to the user to either increase or decrease his or her distance from the display based on the determined distance.

- The computer-implemented method of claim 1, further comprising: subsequent to displaying the first set of objects on the display, displaying a second set of objects on the display of the computing device, the second set of objects including at least three objects, exactly one object in the second set objects being different from the other of the objects in the second set, wherein recognition of the difference between the one different object in the second set and the other of the objects in the second set is indicative of a user's visual acuity; receiving input from the user via the user input device of the computing device indicating a selection of one of the objects in the second set of objects; and determining whether the user correctly selected the one different object from the second set of objects.

- The computer-implemented method of claim 3, wherein a characteristic of one or more of the objects in the second set of objects displayed on the display is dependent on whether the user correctly selected the one different object from the first set of objects.

- The computer-implemented method of claim 1, wherein the computing device comprises a tablet computer and the user input device comprises a touch screen of the tablet computer.

- The computer-implemented method of claim 1, further comprising: measuring ambient light level; and adjusting a brightness level of the display dependent on the measured ambient light level.

- The computer-implemented method of claim 1, further comprising: measuring ambient light level using the computing device; and providing a notification instructing the user to adjust the ambient light level.

- The computer-implemented method of claim 1, further comprising: transmitting data relating to the user's visual acuity to an external computing device.

- The computer-implemented method of claim 8 , further comprising storing the data on the external computing device, and analyzing the data to detect presence of an eye condition.

- The computer-implemented method of claim 9 , further comprising sending a notification from the external computing device to a computing device over a network indicative of the detected eye condition.

- The computer-implemented method of claim 1 , wherein the objects in the first set of objects comprise Vernier acuity targets.

- The computer-implemented method of claim 1 , further comprising, prior to displaying the first set of objects, displaying a set of differently-sized objects on the display, and receiving a selection of an object in the set of differently-sized objects via the user input interface indicating the smallest object in the set of differently-sized objects that is perceptible by the user, wherein the size of the objects in the first set of objects corresponds with the size of the selected object in the set of differently-sized objects.

- The computer-implemented method of claim 1 , further comprising displaying a score on the display based on the number of correct selections by the user.

- The computer-implemented method of claim 1 , wherein the one object in the first set objects is different in size from the other of the objects in the first set.

- A computer-implemented method for testing macular visual acuity, comprising: displaying a fixation location indicator on a display of a computing device configured for fixation thereon by a user; simultaneously and briefly displaying on the display a fixation pattern at the location of the fixation location indicator and at least two pericentral patterns spaced apart from the fixation pattern, wherein one of the pericentral patterns is a correct choice pericentral pattern that is different from the other of the pericentral patterns; receiving input from the user via a user input device indicating a selection of one of the pericentral patterns; determining whether the user selected the correct choice pericentral pattern; and recording whether the user selected the correct choice pericentral pattern in a data storage.

- The computer-implemented method of claim 15, wherein the fixation pattern is positioned on the display near a periphery thereof.

- The computer-implemented method of claim 15, further comprising: capturing an image of the user using an image capture device of the computing device; determining the distance between the display of the computing device and the user based on the captured image; and providing an instruction to the user to either increase or decrease his or her distance from the display based on the determined distance.

- The computer-implemented method of claim 15, wherein the computing device comprises a tablet computer and the user input device comprises a touch screen of the tablet computer.

- The computer-implemented method of claim 15, further comprising: measuring ambient light level; and adjusting a brightness level of the display dependent on the measured ambient light level.

- The computer-implemented method of claim 15, further comprising: measuring ambient light level using the computing device; and providing a notification instructing the user to adjust the ambient light level.

- The computer-implemented method of claim 15, further comprising: transmitting data relating to the user's visual acuity to an external computing device.

- The computer-implemented method of claim 21 , further comprising storing the data on the external computing device, and analyzing the data to detect presence of an eye condition.

- The computer-implemented method of claim 22 , further comprising sending a notification from the external computing device to a computing device over a network indicative of the detected eye condition.

- The computer-implemented method of claim 15 , wherein the fixation pattern and the at least two pericentral patterns comprise Vernier acuity targets.

- The computer-implemented method of claim 15 , wherein the correct choice pericentral pattern comprises the same pattern as the fixation pattern, and the other of the pericentral patterns comprises a different pattern than the fixation pattern.

- The computer-implemented method of claim 15 , wherein the correct choice pericentral pattern comprises a different pattern than the fixation pattern, and the other of the pericentral patterns comprises the same pattern as the fixation pattern.

- A system for testing macular visual acuity, comprising: a display; a user input device; and a computer coupled to the display and the user input device, and configured to: simultaneously and briefly display on the display a fixation pattern and at least two pericentral patterns, wherein one of the pericentral patterns is a correct choice pericentral pattern that is different from the other of the pericentral patterns; receive input from the user via the user input device indicating a selection of one of the pericentral patterns; and determine whether the user selected the correct choice pericentral pattern.

- The system of claim 27 , wherein the correct choice pericentral pattern comprises the same pattern as the fixation pattern, and the other of the pericentral patterns comprises a different pattern than the fixation pattern.

- The system of claim 27 , wherein the correct choice pericentral pattern comprises a different pattern than the fixation pattern, and the other of the pericentral patterns comprises the same pattern as the fixation pattern.

- The system of claim 27 , further comprising: a camera operatively coupled to the computer configured to monitor the ambient light level of the environment of the system, wherein the computer is configured to adjust the brightness of the display dependent on the monitored ambient light level.

- The system of claim 27 , further comprising: a camera operatively coupled to the computer configured to monitor the ambient light level of the environment of the system, wherein the computer is configured to display a message on the display providing an instruction to the user to adjust the ambient light level of the environment.

- The system of claim 27 , further comprising: a communications interface coupled to the computer and configured to communicate with an external computer system using wired or wireless communication.

- The system of claim 27 , further comprising: a camera operatively coupled to the computer configured to capture an image of the user, wherein the computer is configured to determine the distance between the display and the user based on the captured image, and to provide an instruction to the user to either increase or decrease his or her distance from the display based on the determined distance.

- The system of claim 27 , wherein the system comprises a tablet computer and the user input device comprises a touch screen of the tablet computer.

- The system of claim 27 , wherein the fixation pattern and the pericentral patterns comprise Vernier acuity targets.

- The system of claim 27 , wherein the computer is further configured to, prior to displaying the fixation pattern and the pericentral patterns, display a set of differently-sized patterns on the display, and receive a selection of one of the differently-sized patterns via the user input interface indicating the smallest patterns in the set of differently-sized patterns that is perceptible by the user, wherein the size of the fixation pattern and the pericentral patterns corresponds with the size of the selected pattern in the set of differently-sized patterns.

- The system of claim 27 , wherein the fixation pattern is positioned on the display near a periphery thereof.

- A non-transitory computer-readable medium encoded with computer executable instructions, which when executed, performs a method comprising: displaying a first set of objects on a display of a computing device, the first set of objects including at least three objects, exactly one object in the first set objects being different from the other of the objects in the first set, wherein recognition of the difference between the one different object and the other of the objects is indicative of a user's visual acuity; receiving input from the user via a user input device of the computing device indicating a selection of one of the objects in the first set of objects; determining whether the user correctly selected the one different object from the first set of objects; and assessing the user's visual acuity based on the selections of the user.

- The non-transitory computer-readable medium of claim 38, wherein the method further comprises: capturing an image of the user using an image capture device of the computing device; determining the distance between the display of the computing device and the user based on the captured image; and providing an instruction to the user to either increase or decrease his or her distance from the display based on the determined distance.

- The non-transitory computer-readable medium of claim 38, wherein the method further comprises: subsequent to displaying the first set of objects on the display, displaying a second set of objects on the display of the computing device, the second set of objects including at least three objects, exactly one object in the second set objects being different from the other of the objects in the second set, wherein recognition of the difference between the one different object in the second set and the other of the objects in the second set is indicative of a user's visual acuity; receiving input from the user via the user input device of the computing device indicating a selection of one of the objects in the second set of objects; and determining whether the user correctly selected the one different object from the second set of objects.

- The non-transitory computer-readable medium of claim 40, wherein a characteristic of one or more of the objects in the second set of objects displayed is dependent on whether the user correctly selected the one different object from the first set of objects.

- The non-transitory computer-readable medium of claim 38, wherein the computing device comprises a tablet computer and the user input device comprises a touch screen of the tablet computer.

- The non-transitory computer-readable medium of claim 38, wherein the method further comprises: measuring ambient light level; and adjusting a brightness level of the display dependent on the measured ambient light level.

- The non-transitory computer-readable medium of claim 38, wherein the method further comprises: measuring ambient light level using the computing device; and providing a notification instructing the user to adjust the ambient light level.

- The non-transitory computer-readable medium of claim 38, wherein the method further comprises: transmitting data relating to the user's visual acuity to an external computing device.

- The non-transitory computer-readable medium of claim 45 , wherein the method further comprises storing the data on the external computing device, and analyzing the data to detect presence of an eye condition.

- The non-transitory computer-readable medium of claim 46 , wherein the method further comprises sending a notification from the external computing device to a computing device over a network indicative of the detected eye condition.

- The non-transitory computer-readable medium of claim 38 , wherein the objects in the first set of objects comprise Vernier acuity targets.

- The non-transitory computer-readable medium of claim 38 , wherein the method further comprises, prior to displaying the first set of objects, displaying a set of differently-sized objects on the display, and receiving a selection of an object via the user input interface indicating the smallest object in the set of differently-sized objects that is perceptible by the user, wherein the size of the objects in the first set of objects corresponds with the size of the selected object in the set of differently-sized objects.

- The non-transitory computer-readable medium of claim 38 , wherein the method further comprises displaying a score on the display based on the number of correct selections by the user.

- The non-transitory computer-readable medium of claim 38 , wherein the one different object in the first set objects differs from the other of the objects in the first set of objects in one of color and gray level.

- A computer-implemented method for providing a video game for testing macular visual acuity, the method comprising: testing central visual acuity by executing a plurality of central visual acuity rounds of the video game, each central visual acuity round comprising: displaying at least three central acuity targets on a display of a computing device, one of the at least three central acuity targets being different from the other of the central acuity targets, wherein recognition of the difference between the one different central acuity target and the other central acuity targets is indicative of a user's central visual acuity; receiving a selection of one of the central acuity targets from the user via a user input device of the computing device; determining whether the user correctly selected the one different central acuity target from the central acuity targets; and assessing the user's central visual acuity based on the selection of the user; testing pericentral visual acuity by executing a plurality of pericentral visual acuity rounds of the video game, each pericentral visual acuity round comprising: displaying a fixation location indicator on the display of the computing device configured for fixation thereon by a user; simultaneously and briefly displaying on the display a fixation target at the location of the fixation location indicator and at least two pericentral acuity targets spaced apart from the fixation target, wherein one of the pericentral acuity targets is a correct choice pericentral acuity target that is different from the other of the pericentral acuity targets, the correct choice pericentral acuity target being positioned relative to the fixation target such that perception of the correct choice pericentral acuity target is indicative of the user's visual acuity at a location on an acuity map; receiving a selection of one of the pericentral acuity targets from the user via the user input device; determining whether the user selected the correct choice pericentral acuity target; and recording whether the user selected the correct choice pericentral acuity target in a data storage; and generating a visual acuity map based on the user's selections during the plurality of central acuity and pericentral acuity rounds, wherein in the visual acuity map is divided into a plurality of locations, and each of the plurality of locations is tested by at least one of the central acuity or pericentral acuity rounds of the video game.

- The computer-implemented method of claim 52 , wherein, during each of the central acuity rounds, a characteristic of the central acuity targets displayed on the display is dependent on the user's selection of a central acuity target in a previous central acuity round.

- The computer-implemented method of claim 52 , wherein, during each of the pericentral acuity rounds, a characteristic of the fixation target and the pericentral acuity targets displayed on the display is dependent on the user's selection of a pericentral acuity target in a previous pericentral acuity round.

- The computer-implemented method of claim 52 , wherein a characteristic of the central acuity targets and the pericentral acuity targets is initially selected based on the user's previous visual acuity test results.

- The computer-implemented method of claim 52 , wherein a characteristic of the central acuity targets and the pericentral acuity targets is initially selected based on visual acuity information of a normal population.

- The computer-implemented method of claim 52, further comprising: capturing an image of the user using an image capture device of the computing device; determining the distance between the display of the computing device and the user based on the captured image; and providing an instruction to the user to either increase or decrease his or her distance from the display based on the determined distance.

- The computer-implemented method of claim 52 , wherein the computing device comprises a tablet computer and the user input device comprises a touch screen of the tablet computer.

- The computer-implemented method of claim 52 , wherein the central acuity targets and the pericentral acuity targets comprise Vernier acuity targets.
