U.S. Pat. No. 8,944,914

CONTROL OF TRANSLATIONAL MOVEMENT AND FIELD OF VIEW OF A CHARACTER WITHIN A VIRTUAL WORLD AS RENDERED ON A DISPLAY

Assignee: PNI SENSOR CORP

Issue Date: December 11, 2012

Illustrative Figure

Abstract

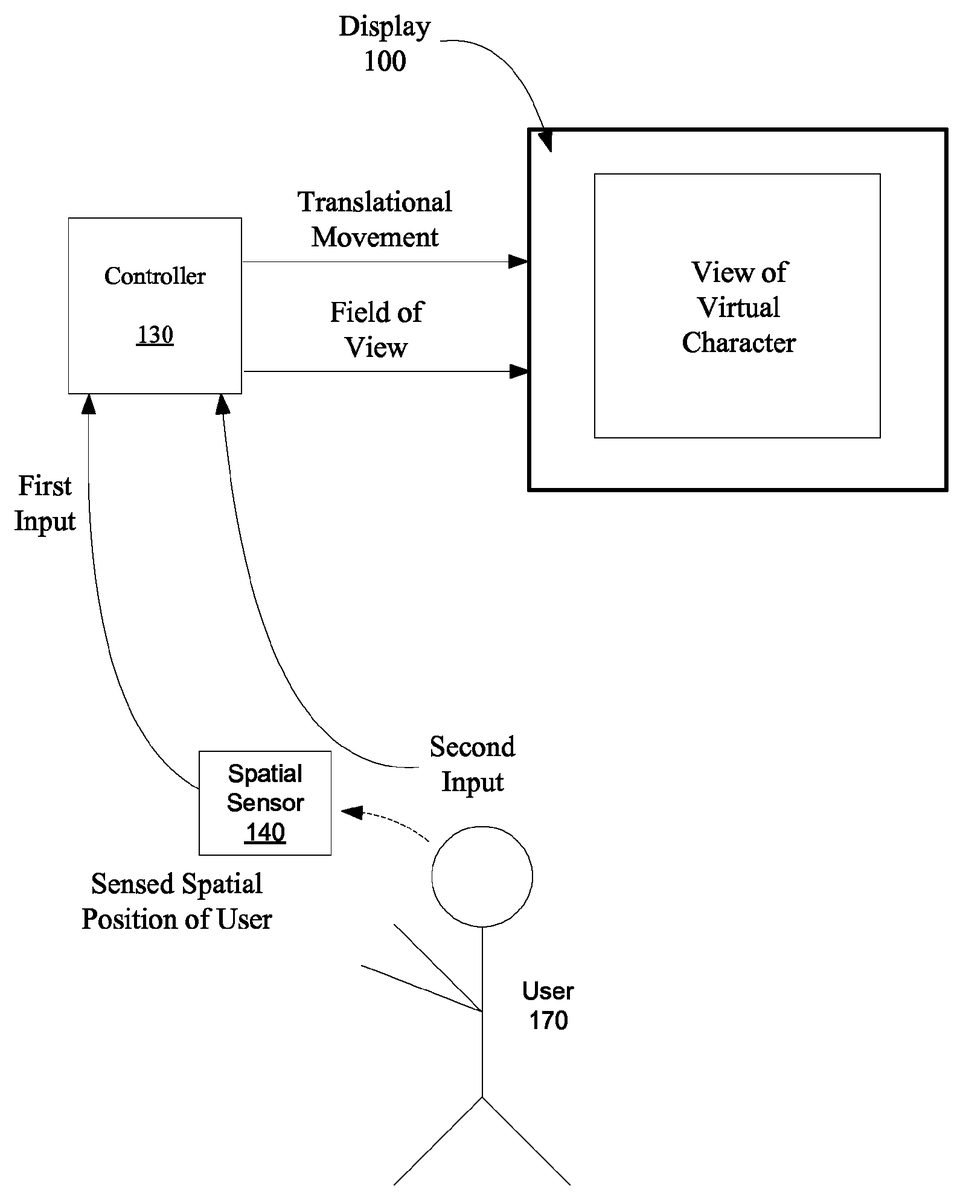

Apparatuses, methods and systems for controlling a view of a virtual world character on a display are disclosed. One apparatus includes a controller, wherein the controller is operative to control translational movement and field of view of a character within a virtual world as rendered on a display based upon a first input and second input. The first input controls the translational movement of the character within the virtual world, and the second input controls the field of view as seen by the character within the virtual world. The video game apparatus further includes a sensor for sensing a spatial position of a user, wherein the sensor provides the first input based on the sensed spatial position of the user.

Description

DETAILED DESCRIPTION

The described embodiments provide for apparatuses, methods, and systems for controlling a view of a character within a virtual world as rendered on a display based on the sensed spatial position of a user. At least two inputs are generated for controlling the view of the character within the virtual world on the display. At least one of the inputs is generated based on sensing spatial position of a user, and/or of a device associated with the user. The described embodiments provide a video game platform that provides a more realistic experience for, as an example, a video game user controlling and shooting a gaming weapon.

FIG. 1 shows a video game apparatus, according to an embodiment. The video game apparatus includes a controller 130 operative to control translational movement and field of view of a character within a virtual world as rendered on a display 100 based upon a first input and second input. For an embodiment, the first input controls the translational movement of the character within the virtual world, and the second input controls the field of view as seen by the character within the virtual world. The video game apparatus further includes a sensor 140 for sensing a spatial position of a user, wherein the sensor 140 provides the first input (translational movement control) based upon the sensed spatial position of the user.

As will be described, the spatial position of the user 170 can be sensed in many different ways. For an embodiment, the spatial position of the user 170 can be sensed using one or more cameras located proximate to the display. The one or more cameras sense the spatial position of the user, for example, when the user 170 is playing a First Person Shooter (FPS) video game. This embodiment provides a more realistic video game experience as the body motion of the user 170 affects the view of the character within the virtual world of the game in real time, and provides a user experience in which the user 170 is more “a part of the game” than present FPS video games allow.

While at least one of the described embodiments includes one or more cameras sensing the spatial position of the user, other embodiments include additional or alternate sensing techniques for sensing the spatial position of the user. For example, the spatial position of the user 170 can also be sensed in three-dimensional real space by using active magnetic distance sensing wherein an AC magnetic source is generated either on the user 170 or from a fixed reference position and an opposing magnetic detector is appropriately configured. In another embodiment, passive DC magnetic sources, such as permanent magnets or electro-magnetic coils, and DC magnetic sensors could be used in a similar configuration.

At least some embodiments further include detecting distance and position from a user 170 to a reference point in space. At least some of these embodiments include ultrasonic transducers, radar, inertial positioning, radio signal triangulation or even mechanical mechanisms such as rods and encoders. Other possible but less practical embodiments include, by way of example, capacitive sensors spaced around the user's body to measure changes in capacitance as the user 170 moves closer or further away from capacitive sensors, or load sensors spaced in an array configuration around the user's feet to detect where the user's weight is at any given time with respect to a reference and by inference the user's position. It should be evident that there are many techniques known to one with ordinary skill in the art of spatial position and distance detecting and that the foregoing detection techniques were presented by way of example and not meant to be limiting.

Further, specific body parts of the user, and/or a device (such as a gaming weapon) can be sensed. For at least some embodiments, specific (rather than several or all) body parts of the user 170 are sensed. Specific examples include sensing the spatial position of the user's head, shoulders or torso.

FIG. 2 shows an example of a character within a virtual world for providing an illustration of translational motion of the character and field of view as seen by the character within a virtual world that are controlled by the first input and the second input. This illustration includes, for example, a cart or wagon 201 serving as a fully translational camera platform in the X, Y and Z coordinates of the virtual world, which is moveable up, down, left, right, forward and backward directions absent rotations about any of its axes. Residing on the cart 201 is the virtual world character 205, wherein the virtual world character 205 is looking through a camera's viewfinder, wherein the camera 240 is mounted on a tripod with a fully rotational and articulating, but non-translational, ball and socket joint, wherein the tripod is mounted atop the cart 201. As shown, the display 100 is connected to the output of the camera, and therefore, provides a view of the virtual world as perceived by the character 205 through the camera's viewfinder, and thus, the user is able to share and experience the same exact view of the virtual world as seen and experienced by the character within it.

It is to be understood that the character 205 of FIG. 2 within the virtual world is a hypothetical character that is depicted to illustrate the visual display being provided to the user on the display 100 while the user is playing a video game (or other application) using the described embodiments. Further, the first input and the second input provided by the user control the translational movement of and field of view as seen by the character within the virtual world, which are represented in FIG. 2.

Translational Movement Control

As shown in FIG. 2, the translational motion of the character 205 within the virtual world occurs, for example, by the character 205 moving the camera 240 up, down, right, left, forwards or backwards, absent rotations, within the virtual world. Specifically, the first input controls the translational movement, absent rotations, of the cart or wagon 201 within the virtual world. The user sees the results of this translational movement rendered on the display. This translational motion is provided by sensing and tracking the user's position in 3 dimensional space.

As related to FIG. 1, for an embodiment, the translational movement of the character includes forwards, backwards, strafe left and strafe right. For an embodiment, the translational movement of the character of the first input control is strictly relative to and along the direction of a line of motion when moving forwards and backwards, or exactly orthogonal to it when strafing left, strafing right, jumping up or crouching down. This line of motion is always fixed to be perpendicular to the camera's viewfinder, and thus display, and is centered within the current field of view unless the character is ascending or descending non-level terrain, wherein the direction of translational movement can occur at an oblique angle to the perpendicular angle formed with the center of the current field of view.
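The relationship between the line of motion and the translational directions can be illustrated with a minimal Python sketch. It is not taken from the patent: the function name `translate_character`, the Y-up coordinate convention, and the representation of the line of motion as a heading angle about the vertical axis are all assumptions made for illustration, and the non-level terrain case is not modeled.

```python
import math

def translate_character(position, heading_deg, first_input):
    """Translate the character without rotating it (hypothetical helper).

    position    -- (x, y, z) of the character in the virtual world, Y up (assumed)
    heading_deg -- direction of the line of motion, in degrees about the Y axis
    first_input -- (strafe, vertical, forward) displacement derived from the
                   sensed spatial position of the user
    """
    heading = math.radians(heading_deg)
    # Forward/backward motion runs along the line of motion.
    forward = (math.sin(heading), 0.0, math.cos(heading))
    # Strafing is orthogonal to the line of motion, in the horizontal plane.
    right = (math.cos(heading), 0.0, -math.sin(heading))
    # Jumping and crouching are orthogonal to it as well, along the vertical.
    up = (0.0, 1.0, 0.0)

    strafe, vertical, advance = first_input
    return tuple(
        p + strafe * r + vertical * u + advance * f
        for p, r, u, f in zip(position, right, up, forward)
    )
```

With a heading of 0 degrees the forward component moves the character along +Z and the strafe component along +X; changing the heading rotates both directions together, so strafing stays orthogonal to forward motion.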

Field of View

As shown in FIG. 2, control of the field of view as seen by the character 205 within the virtual world occurs, for example, by moving the articulating camera 240 atop the tripod in its ball and socket joint in any configuration of possible, but purely rotational, movements as enabled by a fully articulating ball and socket joint, including pan right, pan left, pan up, and pan down rotations, the result of which is then rendered to the display 100.

As related to FIG. 1, for an embodiment, the field of view as seen by the character within the virtual world includes pan up, pan down, pan left and pan right. For an embodiment, the field of view as seen by the character within the virtual world of the second input control redirects movement within a scene that the first input controls.
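The pan rotations can be sketched in Python as updates to a pair of view angles. This is an illustrative assumption rather than the patent's implementation: the names `pan_view` and `view_direction`, the yaw/pitch parameterization, and the pitch limit are all hypothetical, and the pitch clamp is a common game convention that the fully articulating joint described above does not require.

```python
import math

def pan_view(yaw_deg, pitch_deg, pan_right_deg, pan_up_deg, pitch_limit=89.0):
    """Apply a purely rotational second input to the view angles.

    Positive pan_right_deg pans right, negative pans left; positive
    pan_up_deg pans up, negative pans down. The character's position
    is never touched here.
    """
    yaw = (yaw_deg + pan_right_deg) % 360.0
    # Assumed clamp: keeps the view from flipping over the vertical.
    pitch = max(-pitch_limit, min(pitch_limit, pitch_deg + pan_up_deg))
    return yaw, pitch

def view_direction(yaw_deg, pitch_deg):
    """Unit vector at the center of the field of view (Y up, assumed)."""
    yaw, pitch = math.radians(yaw_deg), math.radians(pitch_deg)
    return (
        math.cos(pitch) * math.sin(yaw),
        math.sin(pitch),
        math.cos(pitch) * math.cos(yaw),
    )
```

Because the horizontal part of `view_direction` follows the yaw angle, a pan changes the direction along which later forward and strafe movement is applied, which is one way to read "redirects movement within a scene that the first input controls".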

For an embodiment, the user controls the translational movement of and the field of view as seen by a character within a virtual world that is displayed on the display, wherein the character is associated with a first person shooter game. For an embodiment, the translational movement allows the user to adjust the aiming point of crosshairs of the weapon controlled by the character within a particular scene. For an embodiment, the particular scene remains static while the crosshairs of the weapon are controllably adjusted.

Sensed Spatial Position of the User

Various embodiments exist for sensing the spatial position of the user. For an embodiment, the spatial position of the user or device (such as a gaming weapon or other device associated with the user) is sensed and tracked. For at least some embodiments, the device (weapon or gun) is tracked in 3 dimensional real space for the purposes of geometric correction necessary to accurately render the positioning of crosshairs on the display. At least some embodiments further utilize the 3 dimensional real space tracking of the device for tracking the position of the user as well.

For at least some embodiments, as previously described, the user's position is determined in three dimensional real space using active magnetic distance sensing wherein an AC magnetic source is generated either on the user or from a fixed reference position and an opposing magnetic detector is appropriately configured. In another embodiment, passive DC magnetic sources, such as permanent magnets or electro-magnetic coils, and DC magnetic sensors could be used in a similar configuration. Further embodiments to detect distance and position from a user to a reference point in space include ultrasonic transducers, radar, inertial positioning, radio signal triangulation or even mechanical mechanisms such as rods and encoders. Other possible embodiments include, by way of example, capacitive sensors spaced around the user's body to measure changes in capacitance as the user moves closer or further away from capacitive sensors, or load sensors spaced in an array configuration around the user's feet to detect where the user's weight is at any given time with respect to a reference and by inference the user's position.
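As one illustration of the load sensor variant, the location of the user's weight can be estimated as a load-weighted average of the sensor locations. The Python sketch below is an assumption about how such an array might be read out, not a method stated in the patent; the function name `center_of_load` and the input layout are hypothetical.

```python
def center_of_load(readings):
    """Estimate where the user's weight rests on a load sensor array.

    readings -- iterable of ((x, y), load) pairs, where (x, y) is a sensor's
                location relative to the reference and load is its reading
    Returns the load-weighted centroid (x, y), or None if no load is sensed.
    """
    readings = list(readings)
    total = sum(load for _, load in readings)
    if total <= 0:
        return None  # nobody is standing on the array
    cx = sum(x * load for (x, _), load in readings) / total
    cy = sum(y * load for (_, y), load in readings) / total
    return (cx, cy)
```

A shift of this centroid away from the reference would then be interpreted, by inference, as a shift in the user's position.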

For at least some embodiments, improvements in the spatial sensing can be used to enhance a position sensor's performance in more accurately detecting the user's position. For example, an embodiment includes one or more reflectors placed on the user's body or the device that the user is holding for use with the camera, radar or ultrasonic techniques. For at least some embodiments, objects that can emit an active signal (such as an LED for the optical system) are placed on the user's body or the device that the user is holding. For at least some embodiments, an object of a given color (green for instance), geometry (circle, sphere, triangle, square, star, etc.) or a combination of both (a green sphere) is placed on the user's body or the device that the user is holding.

At least some embodiments include additional sensors for enhancing a user's game play. For example, for an embodiment, an orientation sensor is included within the device (gaming weapon) that the user is holding. Based upon the system's determination of the user's position, the weapon's position (supplied by the position sensor) and the weapon's orientation (provided by its internal orientation sensor), the aiming point of the weapon can be accurately controlled for display relative to the character and the user's point of view within the virtual world. For another embodiment, the user position sensor (optical, ultrasound, radar, etc.) is used to not only determine the position of the user and the device, but also to determine the device's orientation.
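One way to picture how the weapon's position and orientation combine into an aiming point is a ray and plane intersection: a ray leaves the weapon along its pointing direction and the crosshairs are placed where it meets the display. The Python sketch below assumes this model, which the patent does not spell out. The function name `aim_point_on_display` is hypothetical, and the display is assumed to lie in the plane z = 0 with the user on the positive-z side.

```python
def aim_point_on_display(weapon_pos, aim_dir):
    """Find where the weapon's pointing ray meets the display plane z = 0.

    weapon_pos -- (x, y, z) of the weapon in real space, z > 0 in front of
                  the display (assumed coordinate frame)
    aim_dir    -- (dx, dy, dz) pointing direction from the orientation sensor
    Returns (x, y) on the display plane, or None if the weapon is not
    pointed toward the display.
    """
    x, y, z = weapon_pos
    dx, dy, dz = aim_dir
    if z <= 0 or dz >= 0:
        return None  # behind the display plane, or pointing away from it
    t = -z / dz      # ray parameter at which the z coordinate reaches 0
    return (x + t * dx, y + t * dy)
```

The result depends on both the weapon's position and its orientation, which is why a purely orientation-based pointer would need the geometric correction mentioned earlier.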

FIG. 3 shows another video game apparatus, according to another embodiment. For this embodiment, the sensor includes a camera. For an embodiment, the camera is located proximate to the display 100 and senses spatial position of the user 370.

FIG. 3 shows multiple cameras 362, 364 sensing the spatial position of the user 370. That is, the spatial position sensor includes a plurality of cameras 362, 364, and senses changes in a depth position of the user 370 relative to the display. Outputs of the cameras 362, 364 are received by a camera sensing controller 380 which generates a signal representing the sensed spatial position of the user 370, which provides the first input control 322.
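The patent does not state how the camera sensing controller derives depth from the two cameras. A common approach for a calibrated, rectified camera pair is stereo triangulation, in which depth equals focal length times baseline divided by disparity. The Python sketch below assumes that approach; the function name and all parameters are hypothetical.

```python
def depth_from_disparity(focal_px, baseline_m, x_left_px, x_right_px):
    """Estimate depth of a tracked feature from a rectified stereo pair.

    focal_px   -- focal length of both cameras, in pixels (assumed equal)
    baseline_m -- distance between the two camera centers, in meters
    x_left_px  -- horizontal image coordinate of the feature in the left camera
    x_right_px -- horizontal image coordinate of the feature in the right camera
    Returns the depth in meters along the cameras' viewing axis.
    """
    disparity = x_left_px - x_right_px
    if disparity <= 0:
        raise ValueError("feature must have positive disparity")
    return focal_px * baseline_m / disparity
```

Comparing the depth computed in successive frames would give the change in the user's depth position relative to the display, which could feed the forward and backward part of the first input.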

As shown in FIG. 3, for an embodiment, the second input control 321 is provided by a joystick that is located, for example, on the gaming weapon 360 that the user 370 is using as part of a game.

As previously described, translational movement of and field of view as seen by the character 110 within the virtual world are displayed on the display 100 as controlled by the first input control 322 and the second input control 321. As shown, the first input control 322 and the second input control 321 are received by a display driver 130 which processes the inputs, generating the translational movement of and field of view controls for the display 100.

The gaming and game weapon system provided by the video game apparatus of FIG. 3 provides an alternative character and weapon control technology that allows the user 370 to arbitrarily and realistically aim the gaming weapon anywhere within a character's field of view. This embodiment has the potential of making FPS games more realistic by allowing the player to actually aim a real “weapon” at any location within a scene that is being shown on the display. This embodiment allows for great extension of the realism of FPS game play.

FIG. 4 shows steps of a method of controlling a view of a character within a virtual world on a display, according to an embodiment. A first step 410 includes controlling translational movement of the character within the virtual world based on a first input. A second step 420 includes controlling a field of view as seen by the character within the virtual world based on a second input. A third step 430 includes sensing a spatial position of a user, wherein the sensed spatial position provides the first input based upon the sensed spatial position of the user.
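The three steps can be tied together in a single per-frame update, sketched below in Python. Everything here is a simplifying assumption rather than the patented method: the `CharacterView` class, the `update_view` function, the use of the user's offset from a reference position as the first input, the `gain` factor, and the application of translation along fixed world axes are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class CharacterView:
    x: float = 0.0      # position in the virtual world
    y: float = 0.0
    z: float = 0.0
    yaw: float = 0.0    # field-of-view angles, in degrees
    pitch: float = 0.0

def update_view(view, sense_user_position, reference, read_second_input, gain=1.0):
    """Run one update of the view from the two independent inputs."""
    # Step 430: sense the user's spatial position; its offset from an
    # assumed reference position serves as the first input.
    ux, uy, uz = sense_user_position()
    first_input = (ux - reference[0], uy - reference[1], uz - reference[2])

    # Step 410: the first input drives translational movement only.
    view.x += gain * first_input[0]
    view.y += gain * first_input[1]
    view.z += gain * first_input[2]

    # Step 420: the second input (for example a joystick) drives the
    # field of view only.
    d_yaw, d_pitch = read_second_input()
    view.yaw += d_yaw
    view.pitch += d_pitch
    return view
```

The point of the sketch is the separation of concerns: the sensed position never changes the view angles, and the second input never changes the character's position.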

As previously described, for an embodiment, the movement of the character includes forwards, backwards, strafe left, strafe right, up and down. For an embodiment, the movement of the character of the first input control is strictly relative to a center of a current field of view displayed on the display.

As previously described, for an embodiment, the field of view as seen by the character within the virtual world includes pan up, pan down, pan left and pan right. For an embodiment, the field of view as seen by the character within the virtual world of the second input control redirects movement within a scene that the first input controls.

As previously described, for an embodiment, the sensed spatial position of the user comprises a sensed spatial position of the user's head.

Although specific embodiments have been described and illustrated, the described embodiments are not to be limited to the specific forms or arrangements of parts so described and illustrated. The embodiments are limited only by the appended claims.

Claims

- A video game apparatus, comprising: a controller operative to control translational movement and field of a view of a character within a virtual world as rendered on a display based upon a first input and second input, wherein the first input controls the translational movement of the view of the character within the virtual world, wherein the translational movement includes X, Y, and Z coordinates of the virtual world, and the second input controls the field of view of the character within the virtual, wherein the field of view includes rotational movement within the virtual world; and a sensor, for sensing a spatial position of a user in three dimensions, wherein the sensor provides the first input based on the sensed spatial position of the user.

- The apparatus of claim 1, wherein the translational movement of the view of the character within the virtual world includes forwards, backwards, strafe left and strafe right.

- The apparatus of claim 1, wherein the translational movement of the view of the character within the virtual world of the first input control is strictly relative to and along a direction of a line of motion when moving forwards and backwards, or exactly orthogonal to the direction of the line when strafing left, strafing right, jumping up or crouching down.

- The apparatus of claim 1, wherein the translational movement of the view of the character within the virtual world of the first input control is strictly relative to a center of a current field of view displayed on the display.

- The apparatus of claim 1, wherein the field of view of the character within the virtual world includes pan up, pan down, pan left and pan right.

- The apparatus of claim 1, wherein the field of view of the character within the virtual world of the second input control redirects movement within a scene that the first input controls.

- The apparatus of claim 1, wherein the sensed spatial position of the user comprises a sensed spatial position of the user's head.

- The apparatus of claim 1, wherein the user controls the translational movement and the field of view as seen by the character within the virtual world as rendered on the display, and wherein the character within the virtual world is associated with a first person shooter game.

- The apparatus of claim 1, wherein the translational movement allows the user to adjust an aiming of cross-hairs of a gaming weapon controlled by the character within a particular scene.

- The apparatus of claim 9, wherein the particular scene remains static while the cross-hairs of the gaming weapon are controllably adjusted.

- The apparatus of claim 1, further comprising a joy-stick for providing the second input.

- The apparatus of claim 1, wherein the sensor comprises a camera.

- The apparatus of claim 12, wherein the camera is located proximate to the display and senses spatial position of a body part of the user.

- The apparatus of claim 1, wherein the sensor comprises a plurality of cameras, and senses changes in a depth position of the user relative to the display.

- A method of controlling a view of a character within a virtual world rendered on a display, comprising: controlling, by a controller, translational movement of the character within the virtual world based on a first input, wherein the translational movement includes X, Y and Z coordinates of the virtual world; controlling, by the controller, a field of view of the character within the virtual world based on a second input, wherein the field of view includes rotational movement within the virtual world; and generating, by a sensor, the first input based upon sensing a spatial position of a user in three dimensions.

- The method of claim 15, wherein the translational movement of the view of the character within the virtual world includes forwards, backwards, strafe left and strafe right.

- The method of claim 15, wherein the translational movement of the view of the character within the virtual world of the first input control is strictly relative to and along a direction of a line of motion when moving forwards and backwards, or exactly orthogonal to the direction of the line when strafing left, strafing right, jumping up or crouching down.

- The method of claim 14, wherein the translational movement of the view of the character within the virtual world of the first input control is strictly relative to a center of a current field of view displayed on the display.

- The method of claim 15, wherein the field of view of the character within the virtual world includes pan up, pan down, pan left and pan right.

- The method of claim 15, wherein the field of view of the character within the virtual world of the second input control redirects movement within a scene that the first input controls.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.