U.S. Pat. No. 8,882,592

GAME SYSTEM, GAME APPARATUS, COMPUTER-READABLE STORAGE MEDIUM HAVING STORED THEREIN GAME PROGRAM, AND GAME PROCESSING METHOD

Assignee: Nintendo Co., Ltd.

Issue Date: October 25, 2011

Abstract

An example game apparatus calculates the attitude of a terminal device on the basis of a value of a gyro sensor of the terminal device. The game apparatus sets the position of an aim in a game image on the basis of the calculated attitude of the terminal device, and also sets the attitude of a virtual camera. The game apparatus sets the firing direction of an arrow on the basis of the position of the aim, and causes the arrow to be fired in the firing direction in accordance with the cessation of a touch operation on a touch panel of the terminal device.

Description

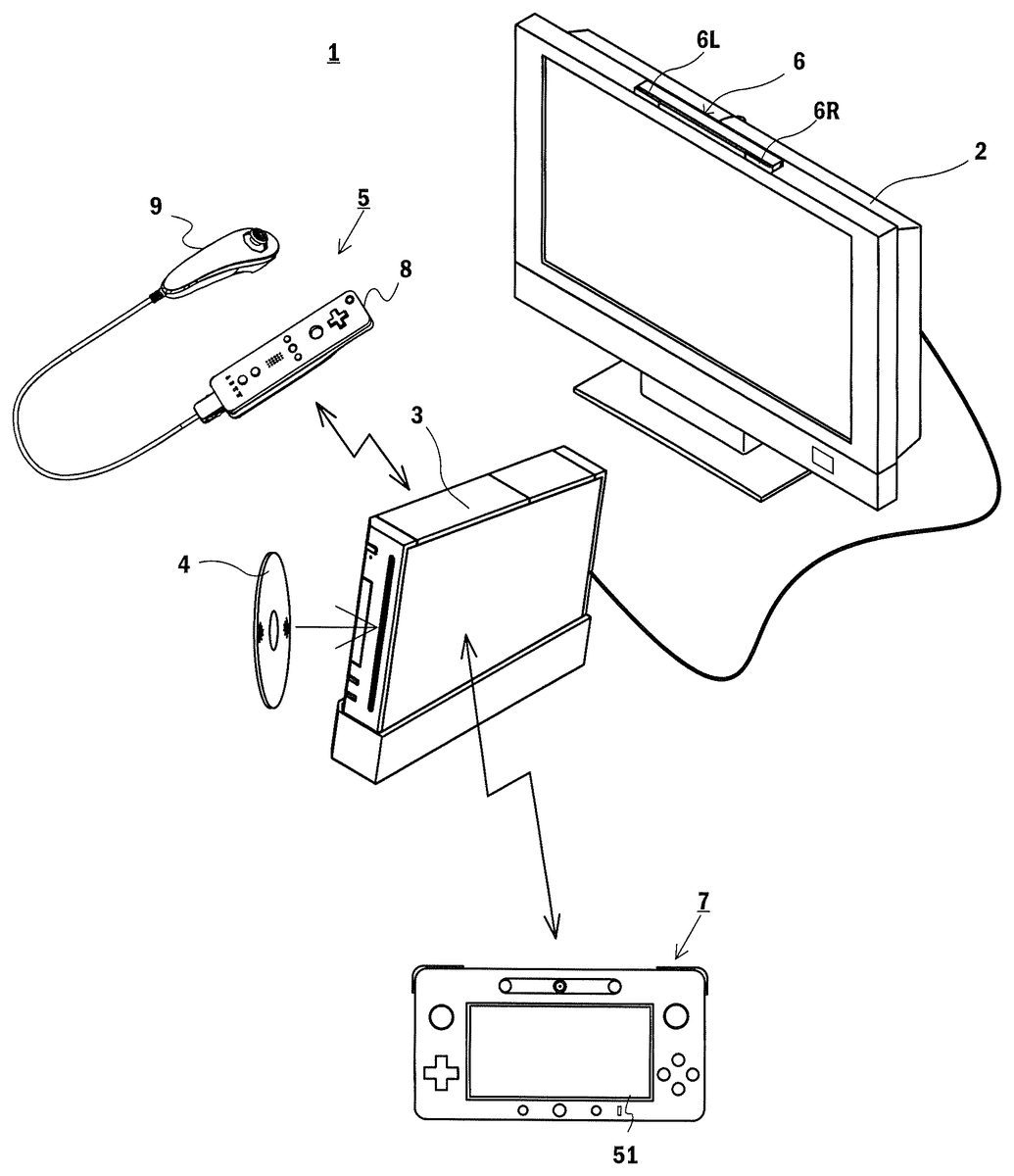

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

[1. Overall Configuration of Game System]

With reference to the drawings, a description is given of a game system 1 according to an exemplary embodiment. FIG. 1 is an external view showing a non-limiting example of the game system 1. Referring to FIG. 1, the game system 1 includes a stationary display device (hereinafter referred to as a “television”) 2 typified by, for example, a television receiver, a stationary game apparatus 3, an optical disk 4, a controller 5, a marker device 6, and a terminal device 7. In the game system 1, the game apparatus 3 performs game processing on the basis of a game operation performed using the controller 5, and a game image obtained by the game processing is displayed on the television 2 and/or the terminal device 7.

The optical disk 4 is detachably inserted into the game apparatus 3, the optical disk 4 being an example of an information storage medium exchangeably used for the game apparatus 3. The optical disk 4 has stored therein an information processing program (typically, a game program) to be executed by the game apparatus 3. On the front surface of the game apparatus 3, an insertion opening for the optical disk 4 is provided. The game apparatus 3 reads and executes the information processing program stored in the optical disk 4 inserted in the insertion opening, and thereby performs the game processing.

The game apparatus 3 is connected to the television 2 via a connection cord. The television 2 displays the game image obtained by the game processing performed by the game apparatus 3. The television 2 has a loudspeaker 2a (FIG. 2). The loudspeaker 2a outputs a game sound obtained as a result of the game processing. It should be noted that in another embodiment, the game apparatus 3 and the stationary display device may be integrated together. Further, the communication between the game apparatus 3 and the television 2 may be wireless communication.

In the periphery of the screen of the television 2 (above the screen in FIG. 1), the marker device 6 is installed. Although described in detail later, a user (player) can perform a game operation of moving the controller 5. The marker device 6 is used by the game apparatus 3 to calculate the motion, the position, the attitude, and the like of the controller 5. The marker device 6 includes two markers 6R and 6L at its two ends. The marker 6R (the same applies to the marker 6L) is composed of one or more infrared LEDs (Light Emitting Diodes), and outputs infrared light forward from the television 2. The marker device 6 is connected to the game apparatus 3 in a wireless (or wired) manner. This enables the game apparatus 3 to turn each of the infrared LEDs included in the marker device 6 on or off. It should be noted that the marker device 6 is portable, which allows the user to install the marker device 6 at any desired position. FIG. 1 shows the form where the marker device 6 is installed on the television 2. The installation position and the facing direction of the marker device 6, however, may be any position and any direction.

The controller 5 provides the game apparatus 3 with operation data based on the operation performed on the controller 5 itself. In the present embodiment, the controller 5 has a main controller 8 and a sub-controller 9, and the sub-controller 9 is detachably attached to the main controller 8. The controller 5 and the game apparatus 3 are capable of communicating with each other by wireless communication. In the present embodiment, the wireless communication between the controller 5 and the game apparatus 3 uses, for example, the Bluetooth (registered trademark) technology. It should be noted that in another embodiment, the controller 5 and the game apparatus 3 may be connected together in a wired manner. Further, in FIG. 1, the game system 1 includes one controller 5; however, the game system 1 may include a plurality of controllers 5. That is, the game apparatus 3 is capable of communicating with a plurality of controllers, and therefore, the simultaneous use of a predetermined number of controllers allows a plurality of people to play a game. A detailed configuration of the controller 5 will be described later.

The terminal device 7 is small enough to be held by a user. This allows the user to use the terminal device 7 by moving the terminal device 7 while holding it, or by placing the terminal device 7 at any desired position. Although a detailed configuration will be described later, the terminal device 7 includes an LCD (Liquid Crystal Display) 51, which serves as display means, and input means (a touch panel 52, a gyro sensor 64, and the like described later). The terminal device 7 and the game apparatus 3 are capable of communicating with each other in a wireless (or wired) manner. The terminal device 7 receives, from the game apparatus 3, data of an image (e.g., a game image) generated by the game apparatus 3, and displays the image on the LCD 51. It should be noted that in the present embodiment, an LCD is employed as a display device. Alternatively, the terminal device 7 may have any other display device, for example, a display device using EL (electroluminescence). Further, the terminal device 7 transmits, to the game apparatus 3, operation data based on the operation performed on the terminal device 7 itself.

[2. Internal Configuration of Game Apparatus 3]

Next, with reference to FIG. 2, the internal configuration of the game apparatus 3 is described. FIG. 2 is a block diagram showing the internal configuration of a non-limiting example of the game apparatus 3. The game apparatus 3 includes a CPU (Central Processing Unit) 10, a system LSI 11, an external main memory 12, a ROM/RTC 13, a disk drive 14, an AV-IC 15, and the like.

The CPU 10 performs the game processing by executing the game program stored in the optical disk 4, and functions as a game processor. The CPU 10 is connected to the system LSI 11. In addition to the CPU 10, the system LSI 11 is connected to the external main memory 12, the ROM/RTC 13, the disk drive 14, and the AV-IC 15. The system LSI 11, for example, controls data transfer between the components connected thereto, generates images to be displayed, and obtains data from external devices. It should be noted that the internal configuration of the system LSI 11 will be described later. The volatile-type external main memory 12 stores a program, such as the game program read from the optical disk 4 or the game program read from a flash memory 17, and various other data. The external main memory 12 is used as a work area or a buffer area of the CPU 10. The ROM/RTC 13 has a ROM (a so-called boot ROM) having incorporated therein a program for starting up the game apparatus 3, and also has a clock circuit (RTC: Real Time Clock) for counting time. The disk drive 14 reads program data, texture data, and the like from the optical disk 4, and writes the read data into an internal main memory 11e described later or the external main memory 12.

The system LSI 11 includes an input/output processor (I/O processor) 11a, a GPU (Graphics Processing Unit) 11b, a DSP (Digital Signal Processor) 11c, a VRAM (Video RAM) 11d, and an internal main memory 11e. Although not shown in the figures, the components 11a through 11e are connected together via an internal bus.

The GPU 11b forms a part of drawing means, and generates an image in accordance with a graphics command (a command to draw an image) from the CPU 10. The VRAM 11d stores data (such as polygon data and texture data) that is necessary for the GPU 11b to execute the graphics command. When the image is generated, the GPU 11b uses the data stored in the VRAM 11d to generate image data. It should be noted that in the present embodiment, the game apparatus 3 generates both a game image to be displayed on the television 2 and a game image to be displayed on the terminal device 7. Hereinafter, occasionally, the game image to be displayed on the television 2 is referred to as a “television game image”, and the game image to be displayed on the terminal device 7 is referred to as a “terminal game image”.

The DSP 11c functions as an audio processor, and generates audio data using sound data and acoustic waveform (timbre) data that are stored in the internal main memory 11e or the external main memory 12. It should be noted that in the present embodiment, a game sound is generated in a similar manner to a game image, that is, both a game sound to be output from the loudspeaker of the television 2 and a game sound to be output from the loudspeakers of the terminal device 7 are generated. Hereinafter, occasionally, the game sound to be output from the television 2 is referred to as a “television game sound”, and the game sound to be output from the terminal device 7 is referred to as a “terminal game sound”.

Among the images and sounds generated by the game apparatus 3 as described above, the data of the image and the sound to be output from the television 2 is read by the AV-IC 15. The AV-IC 15 outputs the read data of the image to the television 2 through an AV connector 16, and also outputs the read data of the sound to the loudspeaker 2a built into the television 2. This causes the image to be displayed on the television 2, and also causes the sound to be output from the loudspeaker 2a.

In addition, among the images and sounds generated by the game apparatus 3, the data of the image and the sound to be output from the terminal device 7 is transmitted to the terminal device 7 by the input/output processor 11a or the like. The transmission of the data to the terminal device 7 by the input/output processor 11a or the like will be described later.

The input/output processor 11a transmits and receives data to and from the components connected thereto, or downloads data from external devices. The input/output processor 11a is connected to the flash memory 17, a network communication module 18, a controller communication module 19, an extension connector 20, a memory card connector 21, and a codec LSI 27. The network communication module 18 is connected to an antenna 22. The controller communication module 19 is connected to an antenna 23. The codec LSI 27 is connected to a terminal communication module 28. The terminal communication module 28 is connected to an antenna 29.

The game apparatus 3 is connected to a network such as the Internet, and is thereby capable of communicating with external information processing apparatuses (e.g., other game apparatuses, various servers, and various information processing apparatuses). That is, the input/output processor 11a is connected to a network such as the Internet via the network communication module 18 and the antenna 22, and is thereby capable of communicating with external information processing apparatuses also connected to the network. The input/output processor 11a periodically accesses the flash memory 17, and detects the presence or absence of data that needs to be transmitted to the network. When such data is present, the input/output processor 11a transmits the data to the network through the network communication module 18 and the antenna 22. The input/output processor 11a also receives data transmitted from an external information processing apparatus or data downloaded from a download server, through the network, the antenna 22, and the network communication module 18, and stores the received data in the flash memory 17. The CPU 10 executes the game program to thereby read the data stored in the flash memory 17 and use the read data for the game program. The flash memory 17 may have stored therein data (data stored after or during the game) saved as a result of playing the game using the game apparatus 3, as well as data to be transmitted to, or data received from, an external information processing apparatus. Further, the flash memory 17 may have stored therein the game program.
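The periodic check of the flash memory described above can be pictured with a minimal Python sketch. It is only an illustration under stated assumptions: the classes `FlashMemory` and `Network`, the `outbox`/`inbox` split, and the function `poll_once` are hypothetical names introduced here and do not appear in the specification, which does not say how pending data is organized.

```python
from collections import deque


class FlashMemory:
    """Hypothetical stand-in for the flash memory 17."""

    def __init__(self):
        self.outbox = deque()  # data waiting to be transmitted to the network
        self.inbox = []        # data received from the network


class Network:
    """Hypothetical stand-in for the network communication module 18 and antenna 22."""

    def __init__(self):
        self.sent = []
        self.incoming = deque()

    def send(self, data):
        self.sent.append(data)

    def receive(self):
        # Return the next received item, or None when nothing is waiting.
        return self.incoming.popleft() if self.incoming else None


def poll_once(flash, network):
    """One periodic pass: transmit any pending data, then store any received data."""
    sent = 0
    while flash.outbox:  # "detects the presence or absence of data"
        network.send(flash.outbox.popleft())
        sent += 1
    received = 0
    while (data := network.receive()) is not None:
        flash.inbox.append(data)
        received += 1
    return sent, received
```

Calling `poll_once` on a timer would approximate the periodic access; the game program would then read `flash.inbox` when it needs the downloaded data.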

In addition, the game apparatus 3 can receive operation data from the controller 5. That is, the input/output processor 11a receives operation data transmitted from the controller 5 through the antenna 23 and the controller communication module 19, and stores (temporarily stores) the operation data in a buffer area of the internal main memory 11e or the external main memory 12.

In addition, the game apparatus 3 can transmit and receive data of an image, a sound, and the like to and from the terminal device 7. When transmitting a game image (terminal game image) to the terminal device 7, the input/output processor 11a outputs data of the game image generated by the GPU 11b to the codec LSI 27. The codec LSI 27 performs a predetermined compression process on the image data from the input/output processor 11a. The terminal communication module 28 wirelessly communicates with the terminal device 7. Accordingly, the image data compressed by the codec LSI 27 is transmitted from the terminal communication module 28 to the terminal device 7 through the antenna 29. It should be noted that in the present embodiment, the image data transmitted from the game apparatus 3 to the terminal device 7 is used in the game. Therefore, in the game, a delay in the display of the image adversely affects the operability of the game. Thus, it is preferable that a delay in the transmission of the image data from the game apparatus 3 to the terminal device 7 should be prevented as far as possible. Accordingly, in the present embodiment, the codec LSI 27 compresses the image data using a highly efficient compression technique such as the H.264 standard. It should be noted that another compression technique may be used, or the image data may be transmitted without being compressed if the communication speed is fast enough. Further, the terminal communication module 28 may be, for example, a Wi-Fi-certified communication module and may wirelessly communicate with the terminal device 7 at a high speed, using, for example, MIMO (Multiple Input Multiple Output) technology employed based on the IEEE 802.11n standard, or may use another communication method.

In addition, the game apparatus 3 transmits audio data, as well as the image data, to the terminal device 7. That is, the input/output processor 11a outputs audio data generated by the DSP 11c to the terminal communication module 28 through the codec LSI 27. The codec LSI 27 performs a compression process on the audio data in a similar manner to that performed on the image data. Any method of compression may be performed on the audio data. It is, however, preferable that the method should have a high compression ratio, and should not cause a significant deterioration of the sound. In another embodiment, the audio data may be transmitted without being compressed. The terminal communication module 28 transmits the compressed image data and audio data to the terminal device 7 through the antenna 29.

In addition, the game apparatus 3 transmits various control data, as well as the image data and the audio data described above, to the terminal device 7 where necessary. The control data is data representing a control instruction to be given to a component included in the terminal device 7. The control data represents, for example, an instruction to control a marker section (a marker section 55 shown in FIG. 11), and an instruction to control a camera (a camera 56 shown in FIG. 11) to capture an image. The input/output processor 11a transmits the control data to the terminal device 7 in accordance with an instruction from the CPU 10. It should be noted that in the present embodiment, the codec LSI 27 does not perform a compression process on the control data. Alternatively, in another embodiment, the codec LSI 27 may perform a compression process on the control data. It should be noted that the above data transmitted from the game apparatus 3 to the terminal device 7 may be encrypted where necessary, or may not be encrypted.

In addition, the game apparatus 3 can receive various data from the terminal device 7. Although described in detail later, in the present embodiment, the terminal device 7 transmits operation data, image data, and audio data. The data transmitted from the terminal device 7 is received by the terminal communication module 28 through the antenna 29. Here, the image data and the audio data from the terminal device 7 are subjected to compression processes similarly to those performed on the image data and the audio data, respectively, from the game apparatus 3 to the terminal device 7. Accordingly, the image data and the audio data are transmitted from the terminal communication module 28 to the codec LSI 27, are subjected to decompression processes by the codec LSI 27, and are output to the input/output processor 11a. On the other hand, the operation data from the terminal device 7 may not be subjected to a compression process because the operation data is smaller in amount than the image data and the audio data. Further, the operation data may be encrypted where necessary, or may not be encrypted. Thus, the operation data is received by the terminal communication module 28, and is subsequently output to the input/output processor 11a through the codec LSI 27. The input/output processor 11a stores (temporarily stores) the data received from the terminal device 7 in a buffer area of the internal main memory 11e or the external main memory 12.
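The asymmetric handling of received data (image and audio data are decompressed, operation data passes through unchanged) can be sketched as follows. This is a hedged illustration only: `zlib` is used purely as a stand-in for the codec (the specification names H.264 as one example for images and leaves the audio method open), and the names `COMPRESSED_KINDS`, `terminal_encode`, and `codec_receive` are hypothetical.

```python
import zlib

# Assumption for illustration: each packet is tagged with a kind string.
# Per the description, image and audio data are compressed; operation data is not.
COMPRESSED_KINDS = {"image", "audio"}


def terminal_encode(kind, payload):
    """Terminal side: compress image/audio data, pass operation data through."""
    body = zlib.compress(payload) if kind in COMPRESSED_KINDS else payload
    return kind, body


def codec_receive(packet, buffer):
    """Game-apparatus side: decompress where needed, then store in the buffer area."""
    kind, body = packet
    payload = zlib.decompress(body) if kind in COMPRESSED_KINDS else body
    buffer.append((kind, payload))  # temporary storage, as in the main memory buffer
    return payload
```

Skipping compression for the small operation data avoids codec work on the most latency-sensitive data, which is consistent with the rationale the description gives.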

In addition, the game apparatus 3 can be connected to another device and an external storage medium. That is, the input/output processor 11a is connected to the extension connector 20 and the memory card connector 21. The extension connector 20 is a connector for an interface such as USB or SCSI. The extension connector 20 can be connected to a medium such as an external storage medium, or can be connected to a peripheral device such as another controller, or can be connected to a wired communication connector and thereby communicate with a network instead of the network communication module 18. The memory card connector 21 is a connector for connecting an external storage medium such as a memory card. For example, the input/output processor 11a can access an external storage medium through the extension connector 20 or the memory card connector 21, and thereby can store data in, or read data from, the external storage medium.

The game apparatus 3 includes a power button 24, a reset button 25, and an eject button 26. The power button 24 and the reset button 25 are connected to the system LSI 11. When the power button 24 has been turned on, power is supplied to each component of the game apparatus 3 from an external power supply through an AC adaptor not shown in the figures. When the reset button 25 has been pressed, the system LSI 11 restarts a start-up program for the game apparatus 3. The eject button 26 is connected to the disk drive 14. When the eject button 26 has been pressed, the optical disk 4 is ejected from the disk drive 14.

It should be noted that in another embodiment, some of the components of the game apparatus 3 may be configured as an extension device separate from the game apparatus 3. In this case, the extension device may be connected to the game apparatus 3 via, for example, the extension connector 20 described above. Specifically, the extension device may include components such as the codec LSI 27, the terminal communication module 28, and the antenna 29, and may be attachable to and detachable from the extension connector 20. Connecting the extension device to a game apparatus that does not include all the components described above thus enables that game apparatus to communicate with the terminal device 7.

[3. Configuration of Controller 5]

Next, with reference to FIGS. 3 through 7, the controller 5 is described. As described above, the controller 5 includes the main controller 8 and the sub-controller 9. FIGS. 3 and 4 are perspective views showing the external configuration of a non-limiting example of the main controller 8. FIG. 3 is a perspective view of the main controller 8 from the top rear thereof. FIG. 4 is a perspective view of the main controller 8 from the bottom front thereof.

Referring to FIGS. 3 and 4, the main controller 8 includes a housing 31 formed by, for example, plastic molding. The housing 31 has a generally parallelepiped shape extending in its longitudinal direction from front to rear (the Z1-axis direction shown in FIG. 3). The entire housing 31 can be held with one hand by an adult or even a child. A user can perform a game operation by pressing buttons provided on the main controller 8, and by moving the main controller 8 itself to change its position and attitude (tilt).

The housing 31 includes a plurality of operation buttons. As shown in FIG. 3, on the top surface of the housing 31, the following are provided: a cross button 32a; a 1-button 32b; a 2-button 32c; an A-button 32d; a minus button 32e; a home button 32f; a plus button 32g; and a power button 32h. In the present specification, the top surface of the housing 31, on which the buttons 32a through 32h are provided, is occasionally referred to as a “button surface”. On the other hand, as shown in FIG. 4, on the bottom surface of the housing 31, a recessed portion is formed. On the slope surface of the recessed portion on the rear surface side, a B-button 32i is provided. The operation buttons (switches) 32a through 32i are each appropriately assigned a function in accordance with the information processing program to be executed by the game apparatus 3. Further, the power button 32h is used to remotely turn on/off the power to the game apparatus 3. The top surfaces of the home button 32f and the power button 32h are buried in the top surface of the housing 31. This makes it possible to prevent the user from inadvertently pressing the home button 32f or the power button 32h.

On the rear surface of the housing 31, a connector 33 is provided. The connector 33 is used to connect the main controller 8 to another device (e.g., the sub-controller 9 or another sensor unit). Further, on the rear surface of the housing 31, latch holes 33a are provided on the respective sides of the connector 33 in order to prevent the other device from easily separating from the housing 31.

In the rear portion of the top surface of the housing 31, a plurality of (four in FIG. 3) LEDs 34a through 34d are provided. Here, the controller 5 (the main controller 8) is appropriately assigned a controller type (number) in order to distinguish the controller 5 from other controllers 5. The LEDs 34a through 34d are used to, for example, notify the user of the controller type currently set for the controller 5 that they are using, or to notify the user of the remaining battery charge. Specifically, when a game operation is performed using the controller 5, one of the plurality of LEDs 34a through 34d is lit in accordance with the corresponding controller type.
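As a small illustration of lighting one LED per controller type, the following Python sketch maps a controller number to the on/off state of four LEDs. The ordering (number 1 lights the first LED, and so on) and the function name `led_states` are assumptions made for this sketch; the description only states that one of the LEDs 34a through 34d is lit according to the controller type.

```python
def led_states(controller_number, led_count=4):
    """Return on/off states for LEDs 34a..34d (assumed order) for a controller number.

    Assumption: controller numbers run from 1 to led_count and number n lights
    the n-th LED. The specification does not fix this mapping.
    """
    if not 1 <= controller_number <= led_count:
        raise ValueError("controller number out of range")
    return [index == controller_number - 1 for index in range(led_count)]
```

For example, `led_states(2)` yields a list in which only the second entry is `True`.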

In addition, the controller 5 includes an imaging information calculation section 35 (FIG. 6). As shown in FIG. 4, on the front surface of the housing 31, a light incident surface 35a of the imaging information calculation section 35 is provided. The light incident surface 35a is formed of a material that allows the infrared light from the markers 6R and 6L to at least pass therethrough.

Between the 1-button 32b and the home button 32f on the top surface of the housing 31, sound holes 31a are formed so as to emit a sound from a loudspeaker 47 (FIG. 5) built into the main controller 8 to the outside.

Next, with reference to FIGS. 5 and 6, the internal structure of the main controller 8 is described. FIGS. 5 and 6 are diagrams showing the internal structure of a non-limiting example of the main controller 8. It should be noted that FIG. 5 is a perspective view showing the state where an upper casing (a part of the housing 31) of the main controller 8 is removed. FIG. 6 is a perspective view showing the state where a lower casing (a part of the housing 31) of the main controller 8 is removed, and shows the reverse side of the substrate 30 shown in FIG. 5.

Referring to FIG. 5, a substrate 30 is fixed within the housing 31. On the top main surface of the substrate 30, the following are provided: the operation buttons 32a through 32h; the LEDs 34a through 34d; an acceleration sensor 37; an antenna 45; a loudspeaker 47; and the like. These components are connected to a microcomputer 42 (see FIG. 6) via wiring (not shown) formed on the substrate 30 and the like. In the present embodiment, the acceleration sensor 37 is located off the center of the main controller 8 along an X1-axis direction. This facilitates the calculation of the motion of the main controller 8 when the main controller 8 is rotated about a Z1-axis. Further, the acceleration sensor 37 is also located anterior to the center of the main controller 8 along its longitudinal direction (the Z1-axis direction). A wireless module 44 (FIG. 6) and the antenna 45 allow the controller 5 (the main controller 8) to function as a wireless controller.

On the other hand, referring to FIG. 6, at the front edge of the bottom main surface of the substrate 30, the imaging information calculation section 35 is provided. The imaging information calculation section 35 includes an infrared filter 38, a lens 39, an image pickup element 40, and an image processing circuit 41 that are placed in order starting from the front of the controller 5. The members 38 through 41 are each attached to the bottom main surface of the substrate 30.

In addition, on the bottom main surface of the substrate 30, a vibrator 46 is attached. The vibrator 46 is, for example, a vibration motor or a solenoid, and is connected to the microcomputer 42 via wiring formed on the substrate 30 and the like. The main controller 8 is vibrated by the actuation of the vibrator 46 on the basis of an instruction from the microcomputer 42. This makes it possible to achieve a so-called vibration-feedback game where the vibration is conveyed to the player's hand holding the main controller 8. In the present embodiment, the vibrator 46 is located slightly anterior to the center of the housing 31. The location of the vibrator 46 closer to the front end than the center of the main controller 8 makes it possible to vibrate the entire main controller 8 significantly by the vibration of the vibrator 46. Further, the connector 33 is attached to the rear edge of the bottom main surface of the substrate 30. It should be noted that the main controller 8 includes, as well as the components shown in FIGS. 5 and 6, a quartz oscillator that generates a reference clock of the microcomputer 42, an amplifier that outputs an audio signal to the loudspeaker 47, and the like.

FIG. 7 is a perspective view showing the external configuration of a non-limiting example of the sub-controller 9. The sub-controller 9 includes a housing 80 formed by, for example, plastic molding. The entire housing 80 can be held with one hand by an adult or even a child. The use of the sub-controller 9 also allows a player to perform a game operation by operating buttons and a stick, and by changing the position and the facing direction of the controller itself.

As shown in FIG. 7, on the front end side (a Z2-axis positive side) of the top surface (the surface on a Y2-axis negative direction side) of the housing 80, an analog joystick 81 is provided. Further, although not shown in the figures, at the front end of the housing 80, a front end surface slightly inclined backward is provided. On the front end surface, a C-button and a Z-button are provided so as to be arranged in the up-down direction (the Y2-axis direction shown in FIG. 7). The analog joystick 81 and the buttons (the C-button and the Z-button) are each appropriately assigned a function in accordance with the game program to be executed by the game apparatus 3. It should be noted that the analog joystick 81 and the buttons are occasionally collectively referred to as an “operation section 82” (see FIG. 8).

In addition, although not shown in FIG. 7, the sub-controller 9 has an acceleration sensor (an acceleration sensor 83 shown in FIG. 8) within the housing 80. In the present embodiment, the acceleration sensor 83 is similar to the acceleration sensor 37 of the main controller 8. The acceleration sensor 83 may, however, be different from the acceleration sensor 37, and may be one that detects the acceleration in one predetermined axis, or the accelerations in two predetermined axes.

In addition, as shown in FIG. 7, one end of a cable is connected to the rear end of the housing 80. Although not shown in FIG. 7, a connector (a connector 84 shown in FIG. 8) is connected to the other end of the cable. The connector can be connected to the connector 33 of the main controller 8. That is, the connection between the connector 33 and the connector 84 causes the main controller 8 and the sub-controller 9 to be connected together.

It should be noted that in FIGS. 3 through 7, the shapes of the main controller 8 and the sub-controller 9, the shapes of the operation buttons, the numbers and the installation positions of the acceleration sensor and the vibrator, and the like are merely illustrative, and may be other shapes, numbers, and installation positions. In the present embodiment, the capturing direction of capturing means of the main controller 8 is the Z1-axis positive direction, but the capturing direction may be any direction. That is, the position of the imaging information calculation section 35 (the light incident surface 35a of the imaging information calculation section 35) of the controller 5 is not necessarily on the front surface of the housing 31, and may be on another surface so long as light can be obtained from outside the housing 31.

FIG. 8is a block diagram showing the configuration of a non-limiting example of the controller5. As shown inFIG. 8, the main controller8includes an operation section32(the operation buttons32athrough32i), the imaging information calculation section35, a communication section36, the acceleration sensor37, and a gyro sensor48. Further, the sub-controller9includes the operation section82and the acceleration sensor83. The controller5transmits data representing the particulars of the operation performed on the controller5itself, to the game apparatus3as operation data. It should be noted that, hereinafter, occasionally, the operation data to be transmitted from the controller5is referred to as “controller operation data”, and the operation data to be transmitted from the terminal device7is referred to as “terminal operation data”.

The operation section32includes the operation buttons32athrough32idescribed above, and outputs data representing the input state of each of the operation buttons32athrough32i(whether or not each of the operation buttons32athrough32ihas been pressed), to the microcomputer42of the communication section36.

The imaging information calculation section35is a system for analyzing image data of an image captured by the capturing means, determining an area having a high brightness in the image data, and calculating the center of gravity, the size, and the like of the area. The imaging information calculation section35has, for example, a maximum sampling rate of about 200 frames/second, and therefore can trace and analyze even a relatively fast motion of the controller5.
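
The analysis described above can be illustrated by a minimal sketch. The function name, the brightness threshold, and the representation of the image as a list of rows are assumptions made for illustration only, and all bright pixels are treated as a single area; the actual processing of the imaging information calculation section35is not limited to this form.

```python
def bright_area_stats(image, threshold=200):
    """Return (center_x, center_y, size) of the pixels at or above threshold.

    image is a list of rows of brightness values (hypothetical format).
    Returns None when no pixel reaches the threshold.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    # Center of gravity is the mean position; size is the pixel count.
    return (sum_x / count, sum_y / count, count)


image = [
    [0, 0, 0, 0],
    [0, 255, 255, 0],
    [0, 255, 255, 0],
    [0, 0, 0, 0],
]
print(bright_area_stats(image))  # (1.5, 1.5, 4)
```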

The imaging information calculation section35includes the infrared filter38, the lens39, the image pickup element40, and the image processing circuit41. The infrared filter38allows only infrared light, among the light incident on the front surface of the controller5, to pass therethrough. The lens39collects the infrared light having passed through the infrared filter38, and makes the infrared light incident on the image pickup element40. The image pickup element40is a solid-state image pickup element such as a CMOS sensor or a CCD sensor. The image pickup element40receives the infrared light collected by the lens39, and outputs an image signal. Here, capturing targets, namely the marker section55of the terminal device7and the marker device6, each include markers that output infrared light. The provision of the infrared filter38allows the image pickup element40to receive only the infrared light having passed through the infrared filter38, and generate image data. This makes it possible to accurately capture the capturing targets (the marker section55and/or the marker device6). Hereinafter, an image captured by the image pickup element40is referred to as a “captured image”. The image data generated by the image pickup element40is processed by the image processing circuit41. The image processing circuit41calculates the positions of the capturing targets in the captured image. The image processing circuit41outputs coordinates representing the calculated positions to the microcomputer42of the communication section36. Data of the coordinates is transmitted from the microcomputer42to the game apparatus3as operation data. Hereinafter, the coordinates described above are referred to as “marker coordinates”. The marker coordinates change in accordance with the facing direction (tilt angle) and the position of the controller5per se. This enables the game apparatus3to calculate the facing direction and the position of the controller5using the marker coordinates.

It should be noted that in another embodiment, the controller5may not include the image processing circuit41, and the captured image per se may be transmitted from the controller5to the game apparatus3. In this case, the game apparatus3may have a circuit or a program that has functions similar to those of the image processing circuit41, and may calculate the marker coordinates described above.

The acceleration sensor37detects the acceleration (including the gravitational acceleration) of the controller5. That is, the acceleration sensor37detects the force (including the force of gravity) applied to the controller5. The acceleration sensor37detects the values of, among the accelerations applied to a detection section of the acceleration sensor37, the accelerations in linear directions along sensing axes (linear accelerations). For example, in the case of using a multi-axis (at least two-axis) acceleration sensor, the component of the acceleration in each axis is detected as the acceleration applied to the detection section of the acceleration sensor. It should be noted that the acceleration sensor37is, for example, an electrostatic capacitance type MEMS (Micro Electro Mechanical System) acceleration sensor, but may be another type of acceleration sensor.

In the present embodiment, the acceleration sensor37detects the linear accelerations in three axial directions, namely the up-down direction (the Y1-axis direction shown inFIG. 3), the left-right direction (the X1-axis direction shown inFIG. 3), and the front-rear direction (the Z1-axis direction shown inFIG. 3) based on the controller5. The acceleration sensor37detects the acceleration in the linear direction along each axis, and therefore, the output from the acceleration sensor37represents the value of the linear acceleration in each of the three axes. That is, the detected accelerations are represented as a three-dimensional vector in an X1-Y1-Z1coordinate system (a controller coordinate system) set on the basis of the controller5.

Data (acceleration data) representing the accelerations detected by the acceleration sensor37is output to the communication section36. It should be noted that the accelerations detected by the acceleration sensor37change in accordance with the facing direction (tilt angle) and the motion of the controller5per se. This enables the game apparatus3to calculate the facing direction and the motion of the controller5using the acquired acceleration data. In the present embodiment, the game apparatus3calculates the attitude, the tilt angle, and the like of the controller5on the basis of the acquired acceleration data.

It should be noted that those skilled in the art will readily understand from the description herein that a computer such as a processor (e.g., the CPU10) of the game apparatus3or a processor (e.g., the microcomputer42) of the controller5may perform processing on the basis of signals of the accelerations output from the acceleration sensor37(the same applies to an acceleration sensor63described later), whereby it is possible to estimate or calculate (determine) further information about the controller5. For example, the case is considered where the computer performs processing on the assumption that the controller5having the acceleration sensor37is in a static state (i.e., on the assumption that the acceleration detected by the acceleration sensor37is limited to the gravitational acceleration). If the controller5is actually in a static state, it is possible to determine, on the basis of the detected acceleration, whether or not the controller5is tilted relative to the direction of gravity, and also determine the degree of the tilt of the controller5. Specifically, based on the state where the detection axis of the acceleration sensor37is directed vertically downward, it is possible to determine, on the basis of only whether or not 1G (a gravitational acceleration) is applied to the acceleration sensor37, whether or not the controller5is tilted. Further, it is also possible to determine the degree of the tilt of the controller5relative to the reference, on the basis of the magnitude of the gravitational acceleration. Alternatively, in the case of using a multi-axis acceleration sensor37, the computer may perform processing on the acceleration signal of each axis, whereby it is possible to determine the degree of the tilt of the controller5in more detail. 
In this case, a processor may calculate the tilt angle of the controller5on the basis of the output from the acceleration sensor37, or may calculate the tilt direction of the controller5without calculating the tilt angle. Thus, the use of the acceleration sensor37in combination with a processor makes it possible to determine the tilt angle or the attitude of the controller5.
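
As a non-limiting sketch of the static-state case, the tilt can be obtained as the angle between the detected acceleration vector and the vector detected in a reference attitude. The function name and the choice of reference vector are assumptions for illustration, and the sketch assumes that the detected acceleration contains only the gravitational acceleration.

```python
import math


def tilt_angle_deg(measured, reference):
    """Angle in degrees between a measured 3-axis acceleration and a reference.

    Both arguments are (x, y, z) tuples in the controller coordinate system.
    Assumes a static state, i.e. the measurement is gravity only.
    """
    dot = sum(m * r for m, r in zip(measured, reference))
    norm = math.sqrt(sum(m * m for m in measured)) * math.sqrt(
        sum(r * r for r in reference)
    )
    if norm == 0.0:
        raise ValueError("zero-length acceleration vector")
    # Clamp to guard against rounding slightly outside [-1, 1].
    cosine = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cosine))


# Reference: 1G detected along the negative Y1 axis (an assumed convention).
reference = (0.0, -1.0, 0.0)
print(tilt_angle_deg((0.0, -1.0, 0.0), reference))  # not tilted
print(tilt_angle_deg((1.0, 0.0, 0.0), reference))   # tilted by a right angle
```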

On the other hand, when it is assumed that the controller5having the acceleration sensor37is in a dynamic state (the state where the controller5is being moved), the acceleration sensor37detects the accelerations corresponding to the motion of the controller5in addition to the gravitational acceleration. This makes it possible to determine the motion direction of the controller5by removing the component of the gravitational acceleration from the detected accelerations by a predetermined process. Further, even when it is assumed that the acceleration sensor37is in a dynamic state, it is possible to determine the tilt of the controller5relative to the direction of gravity by removing the component of the acceleration corresponding to the motion of the acceleration sensor37from the detected accelerations by a predetermined process. It should be noted that in another embodiment, the acceleration sensor37may include an embedded processing apparatus or another type of dedicated processing apparatus for performing a predetermined process on acceleration signals, detected by built-in acceleration detection means, before outputting the acceleration signals to the microcomputer42. For example, when the acceleration sensor37is used to detect a static acceleration (e.g., the gravitational acceleration), the embedded or dedicated processor may convert the acceleration signal into a tilt angle (or another preferable parameter).
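
The present description does not specify the "predetermined process". One common possibility, shown here purely as an assumption, is a first-order low-pass filter that tracks the slowly changing gravitational component, with the remainder treated as the component corresponding to motion. The function name and the smoothing factor alpha are hypothetical.

```python
def split_gravity_and_motion(samples, alpha=0.9):
    """Split 3-axis acceleration samples into gravity and motion estimates.

    samples is a sequence of (x, y, z) tuples. A low-pass filter (an assumed
    technique, not one named in the description) estimates gravity; the
    difference from each sample is the motion estimate.
    """
    if not samples:
        return []
    gravity = list(samples[0])
    results = []
    for sample in samples:
        gravity = [alpha * g + (1.0 - alpha) * s for g, s in zip(gravity, sample)]
        motion = [s - g for s, g in zip(sample, gravity)]
        results.append((tuple(gravity), tuple(motion)))
    return results


# A controller at rest: the motion estimate stays near zero.
at_rest = [(0.0, -1.0, 0.0)] * 5
for gravity, motion in split_gravity_and_motion(at_rest):
    print(gravity, motion)
```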

The gyro sensor48detects the angular velocities about three axes (the X1, Y1, and Z1axes in the present embodiment). In the present specification, on the basis of the capturing direction of the controller5(the Z1-axis positive direction), the direction of rotation about the X1-axis is referred to as a “pitch direction”; the direction of rotation about the Y1-axis is referred to as a “yaw direction”; and the direction of rotation about the Z1-axis is referred to as a “roll direction”. Any number and any combination of gyro sensors may be used so long as the gyro sensor48can detect the angular velocities about the three axes. For example, the gyro sensor48may be a three-axis gyro sensor, or may be one that detects the angular velocities about the three axes by combining a two-axis gyro sensor and a one-axis gyro sensor. Data representing the angular velocities detected by the gyro sensor48is output to the communication section36. Alternatively, the gyro sensor48may be one that detects the angular velocity about one axis, or the angular velocities about two axes.
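
As a simplified illustration of how angular velocity data can be used, the angular velocities about the three axes may be accumulated over time to estimate rotation in the pitch, yaw, and roll directions. The function name, the units (degrees per second), and the sampling interval are assumptions. Independent per-axis accumulation is only an approximation that holds for small or single-axis rotations; it is not presented as the attitude calculation of the embodiment.

```python
def integrate_angles(angular_velocities, dt, initial=(0.0, 0.0, 0.0)):
    """Accumulate (pitch, yaw, roll) in degrees from angular velocity samples.

    angular_velocities is a sequence of (about_x1, about_y1, about_z1) values
    in degrees per second; dt is the assumed sampling interval in seconds.
    """
    pitch, yaw, roll = initial
    for wx, wy, wz in angular_velocities:
        pitch += wx * dt  # rotation about the X1-axis
        yaw += wy * dt    # rotation about the Y1-axis
        roll += wz * dt   # rotation about the Z1-axis
    return (pitch, yaw, roll)


# One second of rotation in the yaw direction at 90 degrees per second,
# sampled at an assumed interval of 1/200 seconds.
samples = [(0.0, 90.0, 0.0)] * 200
print(integrate_angles(samples, dt=1.0 / 200.0))
```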

In addition, the operation section82of the sub-controller9includes the analog joystick81, the C-button, and the Z-button that are described above. The operation section82outputs, to the main controller8through the connector84, stick data (referred to as “sub-stick data”) representing the direction of tilt and the amount of tilt of the analog joystick81, and operation button data (referred to as “sub-operation button data”) representing the input state of each button (whether or not the button has been pressed).

In addition, the acceleration sensor83of the sub-controller9is one similar to the acceleration sensor37of the main controller8, and detects the acceleration (including the gravitational acceleration) of the sub-controller9. That is, the acceleration sensor83detects the force (including the force of gravity) applied to the sub-controller9. The acceleration sensor83detects the values of, among the accelerations applied to a detection section of the acceleration sensor83, the accelerations in linear directions along predetermined three-axial directions (linear accelerations). Data (referred to as “sub-acceleration data”) representing the detected accelerations is output to the main controller8through the connector84.

As described above, the sub-controller9outputs to the main controller8the sub-controller data including the sub-stick data, the sub-operation button data, and the sub-acceleration data.

The communication section36of the main controller8includes the microcomputer42, a memory43, the wireless module44, and the antenna45. Using the memory43as a storage area while performing processing, the microcomputer42controls the wireless module44that wirelessly transmits the data acquired by the microcomputer42to the game apparatus3.

The sub-controller data from the sub-controller9is input to the microcomputer42, and is temporarily stored in the memory43. Further, data (referred to as “main controller data”) output to the microcomputer42from the operation section32, the imaging information calculation section35, the acceleration sensor37, and the gyro sensor48is also temporarily stored in the memory43. The main controller data and the sub-controller data are transmitted as the operation data (controller operation data) to the game apparatus3. That is, when the time for transmission to the controller communication module19has arrived, the microcomputer42outputs the operation data stored in the memory43to the wireless module44. The wireless module44modulates a carrier wave of a predetermined frequency by the operation data, and radiates the resulting weak radio signal from the antenna45, using, for example, the Bluetooth (registered trademark) technology. That is, the operation data is modulated into a weak radio signal by the wireless module44, and is transmitted from the controller5. The weak radio signal is received by the controller communication module19on the game apparatus3side. This enables the game apparatus3to obtain the operation data by demodulating or decoding the received weak radio signal. The CPU10of the game apparatus3performs the game processing using the operation data obtained from the controller5. It should be noted that the wireless communication from the communication section36to the controller communication module19is sequentially performed every predetermined cycle. Generally, the game processing is performed in a cycle of 1/60 seconds (as one frame time), and therefore, it is preferable that the wireless transmission should be performed in a shorter cycle than this cycle. The communication section36of the controller5outputs the operation data to the controller communication module19of the game apparatus3every 1/200 seconds, for example.
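
The relationship between the two example cycles can be checked with a short calculation. With a frame time of 1/60 seconds and a transmission every 1/200 seconds, each frame contains three or four transmissions. The function name is hypothetical, and the sketch assumes that the first transmission coincides with the start of frame 0.

```python
import math
from fractions import Fraction

FRAME_TIME = Fraction(1, 60)          # one frame time, in seconds
TRANSMIT_INTERVAL = Fraction(1, 200)  # example transmission cycle, in seconds


def transmissions_in_frame(frame_index):
    """Count transmissions at times k/200 s falling within the given frame."""
    per_frame = FRAME_TIME / TRANSMIT_INTERVAL  # 10/3 transmissions per frame
    first = math.ceil(frame_index * per_frame)
    next_first = math.ceil((frame_index + 1) * per_frame)
    return next_first - first


print([transmissions_in_frame(n) for n in range(3)])  # [4, 3, 3]
```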

As described above, the main controller8can transmit marker coordinate data, the acceleration data, the angular velocity data, and the operation button data, as the operation data representing the operation performed on the main controller8itself. The sub-controller9can transmit the acceleration data, the stick data, and the operation button data, as the operation data representing the operation performed on the sub-controller9itself. Further, the game apparatus3performs the game processing using the operation data as a game input. Accordingly, the use of the controller5allows the user to perform an operation of moving the controller5per se, in addition to a conventional general game operation of pressing the operation buttons. For example, it is possible to perform: an operation of tilting the main controller8and/or the sub-controller9to a given attitude; an operation of indicating a given position on the screen with the main controller8; an operation of moving the main controller8and/or the sub-controller9per se; and the like.

In addition, in the present embodiment, the controller5does not have display means for displaying a game image. Alternatively, the controller5may have display means for displaying, for example, an image representing the remaining battery charge.

[4. Configuration of Terminal Device7]

Next, with reference toFIGS. 9 through 11, the configuration of the terminal device7is described.FIG. 9is a diagram showing the external configuration of a non-limiting example of the terminal device7. InFIG. 9: (a) is a front view of the terminal device7; (b) is a top view; (c) is a right side view; and (d) is a bottom view. Further,FIG. 10is a diagram showing a non-limiting example of the state where a user holds the terminal device7.

As shown inFIG. 9, the terminal device7includes a housing50that generally has a horizontally long plate-like rectangular shape. The housing50is small enough to be held by a user. This allows the user to move the terminal device7while holding it, and to change the location of the terminal device7.

The terminal device7has an LCD51on the front surface of the housing50. The LCD51is provided near the center of the front surface of the housing50. Accordingly, as shown inFIG. 10, the user can hold and move the terminal device7while viewing a screen of the LCD51, by holding the housing50at portions to the right and left of the LCD51. It should be noted thatFIG. 10shows an example where the user holds the terminal device7horizontally (i.e., such that the terminal device7is oriented horizontally) by holding the housing50at portions to the right and left of the LCD51. The user, however, may hold the terminal device7vertically (i.e., such that the terminal device7is oriented vertically).

As shown in (a) ofFIG. 9, the terminal device7includes a touch panel52on the screen of the LCD51, as operation means. In the present embodiment, the touch panel52is, but is not limited to, a resistive film type touch panel. The touch panel may be of a given type such as an electrostatic capacitance type. The touch panel52may be of a single touch type or a multiple touch type. In the present embodiment, the touch panel52has the same resolution (detection accuracy) as that of the LCD51. The resolution of the touch panel52and the resolution of the LCD51, however, may not necessarily be the same. Generally, an input to the touch panel52is provided using a touch pen; however, an input may be provided to the touch panel52not only by a touch pen but also by a finger of the user. It should be noted that the housing50may include an insertion opening for accommodating a touch pen used to perform an operation on the touch panel52. The terminal device7thus includes the touch panel52. This allows the user to operate the touch panel52while moving the terminal device7. That is, the user can directly (through the touch panel52) provide an input to the screen of the LCD51while moving the LCD51.

As shown inFIG. 9, the terminal device7includes two analog sticks53A and53B and a plurality of buttons54A through54L, as operation means. The analog sticks53A and53B are each a device for indicating a direction. The analog sticks53A and53B are each configured such that a stick part thereof to be operated by a finger of the user is slidable or tiltable in a given direction (at a given angle in any of the upward, downward, rightward, leftward, and diagonal directions). The left analog stick53A is provided to the left of the screen of the LCD51, and the right analog stick53B is provided to the right of the screen of the LCD51. This allows the user to provide an input for indicating a direction using an analog stick with either the right or left hand. Further, as shown inFIG. 10, the analog sticks53A and53B are placed so as to be operated by the user holding the right and left portions of the terminal device7. This allows the user to easily operate the analog sticks53A and53B when the user holds and moves the terminal device7.

The buttons54A through54L are each operation means for providing a predetermined input. As described below, the buttons54A through54L are placed so as to be operated by the user holding the right and left portions of the terminal device7(seeFIG. 10). This allows the user to easily operate the operation means even when the user holds and moves the terminal device7.

As shown in (a) ofFIG. 9, among the operation buttons54A through54L, the cross button (direction input button)54A and the buttons54B through54H are provided on the front surface of the housing50. That is, the buttons54A through54H are placed so as to be operated by a thumb of the user (seeFIG. 10).

The cross button54A is provided to the left of the LCD51and below the left analog stick53A. That is, the cross button54A is placed so as to be operated by the left hand of the user. The cross button54A is cross-shaped, and is capable of indicating an upward, a downward, a leftward, or a rightward direction. Further, the buttons54B through54D are provided below the LCD51. The three buttons54B through54D are placed so as to be operated by the right and left hands of the user. Furthermore, the four buttons54E through54H are provided to the right of the LCD51and below the right analog stick53B. That is, the four buttons54E through54H are placed so as to be operated by the right hand of the user. In addition, the four buttons54E through54H are placed above, below, to the left, and to the right (relative to the center position of the four buttons54E through54H). This enables the terminal device7to cause the four buttons54E through54H to function as buttons that allow the user to indicate an upward, a downward, a leftward, or a rightward direction.

In addition, as shown in (a), (b), and (c) ofFIG. 9, the first L button54I and the first R button54J are provided on upper diagonal portions (an upper left portion and an upper right portion) of the housing50. Specifically, the first L button54I is provided at the left end of the upper side surface of the plate-shaped housing50so as to be exposed through the upper and left side surfaces. The first R button54J is provided at the right end of the upper side surface of the housing50so as to be exposed through the upper and right side surfaces. As described above, the first L button54I is placed so as to be operated by the index finger of the left hand of the user, and the first R button54J is placed so as to be operated by the index finger of the right hand of the user (seeFIG. 10).

In addition, as shown in (b) and (c) ofFIG. 9, the second L button54K and the second R button54L are provided on leg parts59A and59B, respectively, the leg parts59A and59B provided so as to protrude from the rear surface (i.e., the surface opposite to the front surface on which the LCD51is provided) of the plate-shaped housing50. Specifically, the second L button54K is provided in a slightly upper portion of the left side (the left side as viewed from the front surface side) of the rear surface of the housing50, and the second R button54L is provided in a slightly upper portion of the right side (the right side as viewed from the front surface side) of the rear surface of the housing50. In other words, the second L button54K is provided at a position substantially opposite to the left analog stick53A provided on the front surface, and the second R button54L is provided at a position substantially opposite to the right analog stick53B provided on the front surface. As described above, the second L button54K is placed so as to be operated by the middle finger of the left hand of the user, and the second R button54L is placed so as to be operated by the middle finger of the right hand of the user (seeFIG. 10). Further, as shown in (c) ofFIG. 9, the second L button54K and the second R button54L are provided on the surfaces of the leg parts59A and59B, respectively, that face obliquely upward. Thus, the second L button54K and the second R button54L have button surfaces facing obliquely upward. It is considered that the middle fingers of the user move vertically when the user holds the terminal device7. Accordingly, the upward-facing button surfaces allow the user to easily press the second L button54K and the second R button54L by directing the button surfaces upward. Further, the provision of the leg parts on the rear surface of the housing50allows the user to easily hold the housing50. 
Furthermore, the provision of the operation buttons on the leg parts allows the user to easily operate the housing50while holding it.

It should be noted that in the terminal device7shown inFIG. 9, the second L button54K and the second R button54L are provided on the rear surface of the housing50. Accordingly, if the terminal device7is placed with the screen of the LCD51(the front surface of the housing50) facing upward, the screen of the LCD51may not be completely horizontal. Thus, in another embodiment, three or more leg parts may be provided on the rear surface of the housing50. In this case, in the state where the screen of the LCD51faces upward, the terminal device7can be placed on a floor (or another horizontal surface) such that the leg parts are in contact with the floor. This makes it possible to place the terminal device7such that the screen of the LCD51is horizontal. Such a horizontal placement of the terminal device7may be achieved by adding attachable and detachable leg parts.

The buttons54A through54L are each appropriately assigned a function in accordance with the game program. For example, the cross button54A and the buttons54E through54H may be used for a direction indication operation, a selection operation, and the like, and the buttons54B through54E may be used for a determination operation, a cancellation operation, and the like.

It should be noted that although not shown in the figures, the terminal device7includes a power button for turning on/off the power to the terminal device7. The terminal device7may include a button for turning on/off screen display of the LCD51, a button for performing a connection setting (pairing) with the game apparatus3, and a button for adjusting the volume of loudspeakers (loudspeakers67shown inFIG. 11).

As shown in (a) ofFIG. 9, the terminal device7includes a marker section (the marker section55shown inFIG. 11) including markers55A and55B on the front surface of the housing50. The marker section55may be provided at any position, but is provided above the LCD51here. Similarly to the markers6L and6R of the marker device6, the markers55A and55B are each composed of one or more infrared LEDs. Similarly to the marker device6described above, the marker section55is used to cause the game apparatus3to calculate the motion of the controller5(the main controller8) and the like. The game apparatus3is capable of controlling the infrared LEDs of the marker section55to be turned on or off.

The terminal device7includes a camera56as capturing means. The camera56includes an image pickup element (e.g., a CCD image sensor or a CMOS image sensor) having a predetermined resolution, and a lens. As shown inFIG. 9, in the present embodiment, the camera56is provided on the front surface of the housing50. This enables the camera56to capture the face of the user holding the terminal device7, and therefore to capture, for example, the user playing the game while viewing the LCD51. It should be noted that in another embodiment, one or more cameras may be provided in the terminal device7.

It should be noted that the terminal device7includes a microphone (a microphone69shown inFIG. 11) as audio input means. A microphone hole60is provided on the front surface of the housing50. The microphone69is provided within the housing50at the back of the microphone hole60. The microphone69detects a sound surrounding the terminal device7, such as the user's voice. It should be noted that in another embodiment, one or more microphones may be provided in the terminal device7.

The terminal device7has loudspeakers (loudspeakers67shown inFIG. 11) as audio output means. As shown in (d) ofFIG. 9, loudspeaker holes57are provided on the lower side surface of the housing50. A sound from the loudspeakers67is output through the loudspeaker holes57. In the present embodiment, the terminal device7includes two loudspeakers, and the loudspeaker holes57are provided at positions corresponding to a left loudspeaker and a right loudspeaker. It should be noted that any number of loudspeakers may be included in the terminal device7. For example, an additional loudspeaker may be provided in the terminal device7in addition to the two loudspeakers described above.

In addition, the terminal device7includes an extension connector58for connecting another device to the terminal device7. In the present embodiment, as shown in (d) ofFIG. 9, the extension connector58is provided on the lower side surface of the housing50. It should be noted that any device may be connected to the extension connector58. For example, a controller (e.g., a gun-shaped controller) used in a specific game, or an input device such as a keyboard may be connected to the extension connector58. If it is not necessary to connect another device, the extension connector58need not be provided.

It should be noted that in the terminal device7shown inFIG. 9, the shapes of the operation buttons and the housing50, and the numbers and the installation positions of the components, are merely illustrative, and may be other shapes, numbers, and installation positions.

Next, with reference toFIG. 11, the internal configuration of the terminal device7is described.FIG. 11is a block diagram showing the internal configuration of a non-limiting example of the terminal device7. As shown inFIG. 11, the terminal device7includes, as well as the components shown inFIG. 9, a touch panel controller61, a magnetic sensor62, an acceleration sensor63, a gyro sensor64, a user interface controller (UI controller)65, a codec LSI66, the loudspeakers67, a sound IC68, the microphone69, a wireless module70, an antenna71, an infrared communication module72, a flash memory73, a power supply IC74, a battery75, and a vibrator79. These electronic components are mounted on an electronic circuit board and accommodated in the housing50.

The UI controller65is a circuit for controlling the input of data to various input sections and the output of data from various output sections. The UI controller65is connected to the touch panel controller61, the analog stick53(the analog sticks53A and53B), the operation buttons54(the operation buttons54A through54L), the marker section55, the magnetic sensor62, the acceleration sensor63, the gyro sensor64, and the vibrator79. Further, the UI controller65is connected to the codec LSI66and the extension connector58. The power supply IC74is connected to the UI controller65, so that power is supplied to each component through the UI controller65. The built-in battery75is connected to the power supply IC74, so that power is supplied from the battery75. Furthermore, the power supply IC74can be connected, via a connector or the like, to a battery charger76or a cable through which power can be acquired from an external power supply. This enables the terminal device7to be supplied with power and charged from the external power supply, using the battery charger76or the cable. It should be noted that the terminal device7may be charged by attaching the terminal device7to a cradle, not shown in the figures, that has a charging function.

The touch panel controller61is a circuit that is connected to the touch panel52and controls the touch panel52. The touch panel controller61generates touch position data in a predetermined form on the basis of a signal from the touch panel52, and outputs the touch position data to the UI controller65. The touch position data represents the coordinates of the position (or a plurality of positions, in the case where the touch panel52is of a multiple touch type) where an input has been provided on an input surface of the touch panel52. The touch panel controller61reads a signal from the touch panel52, and generates touch position data every predetermined time. Further, various control instructions to be given to the touch panel52are output from the UI controller65to the touch panel controller61.

The analog stick 53 outputs, to the UI controller 65, stick data representing the direction in which the stick part operated by a finger of the user has slid (or tilted), and the amount of the sliding (tilting). Further, the operation buttons 54 output, to the UI controller 65, operation button data representing the input state of each of the operation buttons 54A through 54L (whether or not the operation button has been pressed).

The magnetic sensor 62 detects an orientation by sensing the magnitude and the direction of a magnetic field. Orientation data representing the detected orientation is output to the UI controller 65. Further, the UI controller 65 outputs to the magnetic sensor 62 a control instruction to be given to the magnetic sensor 62. Examples of the magnetic sensor 62 include MI (Magnetic Impedance) sensors, fluxgate sensors, Hall sensors, GMR (Giant Magneto Resistance) sensors, TMR (Tunneling Magneto Resistance) sensors, and AMR (Anisotropic Magneto Resistance) sensors. Any sensor, however, may be used so long as the sensor can detect an orientation. It should be noted that, strictly speaking, the obtained orientation data does not indicate an orientation at the place where a magnetic field other than geomagnetism is produced. Even in such a case, however, it is possible to calculate a change in the attitude of the terminal device 7 because the orientation data changes when the terminal device 7 has moved.
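As a rough illustration of the last point, the following sketch derives a heading angle from two horizontal components of a detected magnetic field and computes the change between two successive readings. The function names, the choice of axes, and the assumption that the device is held level are hypothetical and are not taken from the present embodiment.

```python
import math


def heading_degrees(mx: float, my: float) -> float:
    """Angle of the horizontal field vector (mx, my), in degrees in [0, 360).

    Assumption: the device is held level, so only two components are used.
    """
    return math.degrees(math.atan2(my, mx)) % 360.0


def heading_change(prev_deg: float, curr_deg: float) -> float:
    """Signed smallest difference between two headings, in [-180, 180)."""
    return (curr_deg - prev_deg + 180.0) % 360.0 - 180.0
```

Even where the absolute heading is distorted by a nearby magnetic field, the value returned by `heading_change` still varies when the device is rotated, which is the property relied on above.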

The acceleration sensor 63 is provided within the housing 50. The acceleration sensor 63 detects the magnitudes of the linear accelerations in three axial directions (the X, Y, and Z axes shown in (a) of FIG. 9). Specifically, in the acceleration sensor 63, the long side direction of the housing 50 is defined as an X-axis direction; the short side direction of the housing 50 is defined as a Y-axis direction; and the direction orthogonal to the front surface of the housing 50 is defined as a Z-axis direction. Thus, the acceleration sensor 63 detects the magnitudes of the linear accelerations in the respective axes. Acceleration data representing the detected accelerations is output to the UI controller 65. Further, the UI controller 65 outputs to the acceleration sensor 63 a control instruction to be given to the acceleration sensor 63. In the present embodiment, the acceleration sensor 63 is, for example, an electrostatic capacitance type MEMS acceleration sensor, but, in another embodiment, may be another type of acceleration sensor. Further, the acceleration sensor 63 may be an acceleration sensor for detecting the magnitude of the acceleration in one axial direction, or the magnitudes of the accelerations in two axial directions.

The gyro sensor 64 is provided within the housing 50. The gyro sensor 64 detects the angular velocities about three axes, namely the X, Y, and Z axes described above. Angular velocity data representing the detected angular velocities is output to the UI controller 65. The UI controller 65 outputs to the gyro sensor 64 a control instruction to be given to the gyro sensor 64. It should be noted that any number and any combination of gyro sensors may be used to detect the angular velocities about the three axes. Similarly to the gyro sensor 48, the gyro sensor 64 may be constituted of a two-axis gyro sensor and a one-axis gyro sensor. Alternatively, the gyro sensor 64 may be one that detects the angular velocity about one axis, or the angular velocities about two axes.
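By way of a non-limiting sketch, angular velocity data of this kind may be accumulated over time to estimate a change in attitude. The example below simply integrates each axis independently, which is adequate only for small rotations; the type name, the units (degrees per second), and the sample period are assumptions introduced for illustration, and an actual implementation might instead use rotation matrices or quaternions.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class AngularVelocity:
    """One gyro sample about the X, Y, and Z axes (assumed unit: deg/s)."""
    x: float
    y: float
    z: float


def integrate(angles: Tuple[float, float, float],
              sample: AngularVelocity,
              dt: float) -> Tuple[float, float, float]:
    """Advance per-axis angles (degrees) by one sample of duration dt seconds."""
    rates = (sample.x, sample.y, sample.z)
    return tuple(a + w * dt for a, w in zip(angles, rates))


# Usage: one second of rotation about the X axis at 90 deg/s,
# sampled at a hypothetical 200 Hz.
angles = (0.0, 0.0, 0.0)
for _ in range(200):
    angles = integrate(angles, AngularVelocity(90.0, 0.0, 0.0), 1.0 / 200.0)
```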

The vibrator 79 is, for example, a vibration motor or a solenoid, and is connected to the UI controller 65. The terminal device 7 is vibrated by the actuation of the vibrator 79 on the basis of an instruction from the UI controller 65. This makes it possible to achieve a so-called vibration-feedback game where the vibration is conveyed to the user's hand holding the terminal device 7.

The UI controller 65 outputs to the codec LSI 66 the operation data (terminal operation data) including the touch position data, the stick data, the operation button data, the orientation data, the acceleration data, and the angular velocity data that have been received from each component described above. It should be noted that if another device is connected to the terminal device 7 via the extension connector 58, data representing the operation performed on said another device may be further included in the operation data.
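The contents of the terminal operation data listed above may be pictured as a single record. The following is a minimal sketch under assumed names and encodings: the field names, the use of a bit mask for the twelve operation buttons 54A through 54L, and the tuple layouts are all hypothetical, since the description specifies only which kinds of data are included.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

BUTTON_NAMES = "ABCDEFGHIJKL"  # stands for operation buttons 54A through 54L

Vec3 = Tuple[float, float, float]


@dataclass
class TerminalOperationData:
    touch_positions: List[Tuple[int, int]]   # one entry per touched position
    stick_data: Tuple[Tuple[float, float], Tuple[float, float]]  # 53A, 53B
    button_bits: int                         # bit i set = button i pressed
    orientation: Vec3                        # from the magnetic sensor
    acceleration: Vec3                       # from the acceleration sensor
    angular_velocity: Vec3                   # from the gyro sensor
    extension_data: Optional[bytes] = None   # only if another device is attached

    def is_pressed(self, name: str) -> bool:
        """Return True if the named button (e.g. "A" for 54A) is pressed."""
        return bool((self.button_bits >> BUTTON_NAMES.index(name)) & 1)


sample = TerminalOperationData(
    touch_positions=[(120, 80)],
    stick_data=((0.0, 0.0), (0.5, -0.5)),
    button_bits=0b101,  # 54A and 54C pressed
    orientation=(0.0, 0.0, 0.0),
    acceleration=(0.0, -1.0, 0.0),
    angular_velocity=(0.0, 0.0, 0.0),
)
```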

The codec LSI 66 is a circuit for performing a compression process on data to be transmitted to the game apparatus 3, and a decompression process on data transmitted from the game apparatus 3. The codec LSI 66 is connected to the LCD 51, the camera 56, the sound IC 68, the wireless module 70, the flash memory 73, and the infrared communication module 72. Further, the codec LSI 66 includes a CPU 77 and an internal memory 78. Although the terminal device 7 is configured not to perform game processing per se, the terminal device 7 needs to execute a minimum program for the management and the communication of the terminal device 7. A program stored in the flash memory 73 is loaded into the internal memory 78 and executed by the CPU 77 when the terminal device 7 has been powered on, whereby the terminal device 7 is started up. Further, a part of the area of the internal memory 78 is used as a VRAM for the LCD 51.

The camera 56 captures an image in accordance with an instruction from the game apparatus 3, and outputs data of the captured image to the codec LSI 66. Further, the codec LSI 66 outputs to the camera 56 a control instruction to be given to the camera 56, such as an instruction to capture an image. It should be noted that the camera 56 is also capable of capturing a moving image. That is, the camera 56 is also capable of repeatedly capturing images, and repeatedly outputting image data to the codec LSI 66.

The sound IC 68 is connected to the loudspeakers 67 and the microphone 69. The sound IC 68 is a circuit for controlling the input of audio data from the microphone 69 to the codec LSI 66 and the output of audio data from the codec LSI 66 to the loudspeakers 67. That is, when the sound IC 68 has received audio data from the codec LSI 66, the sound IC 68 outputs to the loudspeakers 67 an audio signal obtained by performing D/A conversion on the audio data, and causes a sound to be output from the loudspeakers 67. Further, the microphone 69 detects a sound conveyed to the terminal device 7 (e.g., the user's voice), and outputs an audio signal representing the sound to the sound IC 68. The sound IC 68 performs A/D conversion on the audio signal from the microphone 69, and outputs audio data in a predetermined form to the codec LSI 66.

The codec LSI 66 transmits the image data from the camera 56, the audio data from the microphone 69, and the operation data from the UI controller 65 (the terminal operation data) to the game apparatus 3 through the wireless module 70. In the present embodiment, the codec LSI 66 performs a compression process, similar to that performed by the codec LSI 27, on the image data and the audio data. The terminal operation data and the compressed image data and audio data are output to the wireless module 70 as transmission data. The wireless module 70 is connected to the antenna 71, and the wireless module 70 transmits the transmission data to the game apparatus 3 through the antenna 71. The wireless module 70 has the same functions as those of the terminal communication module 28 of the game apparatus 3. That is, the wireless module 70 has the function of establishing connection with a wireless LAN by a method based on, for example, the IEEE 802.11n standard. The transmitted data may be encrypted where necessary, or may not be encrypted.

As described above, the transmission data transmitted from the terminal device 7 to the game apparatus 3 includes the operation data (terminal operation data), the image data, and the audio data. If another device is connected to the terminal device 7 via the extension connector 58, data received from said another device may be further included in the transmission data. Further, the infrared communication module 72 performs infrared communication based on, for example, the IrDA standard with another device. The codec LSI 66 may include, in the transmission data, data received by the infrared communication, and transmit the resulting transmission data to the game apparatus 3, where necessary.
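The assembly of the transmission data described above can be sketched as follows. This is an illustrative example only: the function name and dictionary keys are hypothetical, and `zlib` is used merely as a stand-in for the compression process, since the actual compression scheme of the codec LSI 66 is not specified here.

```python
import zlib
from typing import Dict, Optional


def build_transmission_data(operation_data: bytes,
                            image_data: bytes,
                            audio_data: bytes,
                            extension_data: Optional[bytes] = None,
                            infrared_data: Optional[bytes] = None) -> Dict[str, bytes]:
    """Bundle terminal-side data for transmission to the game apparatus.

    Image and audio data are compressed; operation data is passed through
    as-is. Extension and infrared data are included only where present.
    """
    packet = {
        "operation": operation_data,
        "image": zlib.compress(image_data),   # stand-in for the codec
        "audio": zlib.compress(audio_data),   # stand-in for the codec
    }
    if extension_data is not None:
        packet["extension"] = extension_data
    if infrared_data is not None:
        packet["infrared"] = infrared_data
    return packet


packet = build_transmission_data(b"ops", b"\x00" * 1000, b"\x01" * 1000)
```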

In addition, as described above, the compressed image data and audio data are transmitted from the game apparatus 3 to the terminal device 7. The compressed image data and audio data are received by the codec LSI 66 through the antenna 71 and the wireless module 70. The codec LSI 66 decompresses the received image data and audio data. The decompressed image data is output to the LCD 51, and an image is displayed on the LCD 51. Meanwhile, the decompressed audio data is output to the sound IC 68, and the sound IC 68 causes a sound to be output from the loudspeakers 67.

In addition, when the control data is included in the data received from the game apparatus 3, the codec LSI 66 and the UI controller 65 give control instructions to each component in accordance with the control data. As described above, the control data represents control instructions to be given to each component (the camera 56, the touch panel controller 61, the marker section 55, the sensors 62 through 64, the infrared communication module 72, and the vibrator 79 in the present embodiment) included in the terminal device 7. In the present embodiment, possible control instructions represented by the control data may be instructions to start and to halt (stop) the operation of each component described above. That is, the components that are not used in the game may be halted in order to reduce power consumption. In this case, data from the halted components is not included in the transmission data transmitted from the terminal device 7 to the game apparatus 3. It should be noted that the marker section 55 is composed of infrared LEDs, and therefore may be controlled by simply turning on/off the supply of power thereto.
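The start/halt behavior described above may be modeled, purely for illustration, as a set of active components that is updated by control instructions and then used to filter the outgoing data. The component names, the instruction format, and the function names below are assumptions and do not reflect an actual control data format.

```python
from typing import Dict, Iterable, Set, Tuple

# Hypothetical names for the controllable components listed above.
COMPONENTS = {
    "camera", "touch_panel_controller", "marker_section",
    "magnetic_sensor", "acceleration_sensor", "gyro_sensor",
    "infrared_module", "vibrator",
}


def apply_control_data(active: Set[str],
                       instructions: Iterable[Tuple[str, str]]) -> Set[str]:
    """Return the new set of active components after start/halt instructions."""
    result = set(active)
    for component, action in instructions:
        if component not in COMPONENTS:
            raise ValueError(f"unknown component: {component}")
        if action == "start":
            result.add(component)
        elif action == "halt":
            result.discard(component)
        else:
            raise ValueError(f"unknown action: {action}")
    return result


def filter_transmission_data(samples: Dict[str, object],
                             active: Set[str]) -> Dict[str, object]:
    """Drop data originating from halted components."""
    return {name: value for name, value in samples.items() if name in active}


# Usage: halt the camera and magnetic sensor for a game that does not use them.
active = apply_control_data(set(COMPONENTS),
                            [("camera", "halt"), ("magnetic_sensor", "halt")])
outgoing = filter_transmission_data(
    {"camera": b"img", "gyro_sensor": (0.0, 0.0, 0.0), "magnetic_sensor": (1.0, 0.0, 0.0)},
    active,
)
```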

As described above, the terminal device 7 includes the operation means, namely the touch panel 52, the analog stick 53, and the operation buttons 54. Alternatively, in another embodiment, the terminal device 7 may include another operation means instead of, or in addition to, the above operation means.

In addition, the terminal device 7 includes the magnetic sensor 62, the acceleration sensor 63, and the gyro sensor 64 as sensors for calculating the motion (including the position and the attitude, or changes in the position and the attitude) of the terminal device 7. Alternatively, in another embodiment, the terminal device 7 may include only one or two of these sensors. Alternatively, in yet another embodiment, the terminal device 7 may include another sensor instead of, or in addition to, these sensors.

In addition, the terminal device 7 includes the camera 56 and the microphone 69. Alternatively, in another embodiment, the terminal device 7 may not include the camera 56 and the microphone 69, or may include only either one of the camera 56 and the microphone 69.

In addition, the terminal device 7 includes the marker section 55 as a component for calculating the positional relationship between the terminal device 7 and the main controller 8 (e.g., the position and/or the attitude of the terminal device 7 as viewed from the main controller 8). Alternatively, in another embodiment, the terminal device 7 may not include the marker section 55. In yet another embodiment, the terminal device 7 may include another means as a component for calculating the positional relationship described above. In yet another embodiment, for example, the main controller 8 may include a marker section, and the terminal device 7 may include an image pickup element. Further, in this case, the marker device 6 may include an image pickup element instead of the infrared LEDs.

[5. Overview of Game Processing]

Next, a description is given of an overview of the game processing performed in the game system 1 according to the present embodiment. A game according to the present embodiment is a game performed by a plurality of players. In the present embodiment, the game apparatus 3 is connected to one terminal device 7 and a plurality of main controllers 8 by wireless communication. It should be noted that in the game according to the present embodiment, sub-controllers 9 are not used for a game operation, and therefore do not need to be connected to the main controllers 8. It is, however, possible to perform the game in the state where the main controllers 8 and the sub-controllers 9 are connected together. Further, in the game according to the present embodiment, the number of main controllers 8 that can be connected to the game apparatus 3 is up to three.
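The connection constraints of the present embodiment (one terminal device and up to three main controllers) can be expressed as a simple check. The class and method names below are hypothetical and are given only as a sketch of how such a limit might be enforced.

```python
MAX_MAIN_CONTROLLERS = 3  # upper limit stated for the present embodiment


class GameSession:
    """Tracks which devices are connected to the game apparatus (illustrative)."""

    def __init__(self) -> None:
        self.terminal_connected = False
        self.main_controllers = []

    def connect_terminal(self) -> None:
        if self.terminal_connected:
            raise RuntimeError("a terminal device is already connected")
        self.terminal_connected = True

    def connect_main_controller(self, controller_id: str) -> None:
        if len(self.main_controllers) >= MAX_MAIN_CONTROLLERS:
            raise RuntimeError("no more main controllers can be connected")
        self.main_controllers.append(controller_id)


# Usage: one first player on the terminal device, two second players.
session = GameSession()
session.connect_terminal()
session.connect_main_controller("second player A")
session.connect_main_controller("second player B")
```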

In the present embodiment, one first player operates the terminal device 7, while a plurality of second players operate the main controllers 8. A description is given below of the case where the number of second players is two (a second player A and a second player B). Further, in the present embodiment, a television game image is displayed on the television 2, and a terminal game image is displayed on the terminal device 7.

FIG. 12 is a diagram showing a non-limiting example of the television game image displayed on the television 2. FIG. 13 is a diagram showing a non-limiting example of the terminal game image displayed on the terminal device 7.

As shown in FIG. 12, the following are displayed on the television 2: a first character 97; a second character 98a; a second character 98b; a bow object 91; an arrow object 92; a rock object 93; a tree object 94; a sword object 96a; a sword object 96b; and an enemy character 99.

The first character 97 is a virtual character located in a game space (virtual space), and is operated by the first player. The first character 97 holds the bow object 91 and the arrow object 92, and makes an attack on the enemy character 99 by firing the arrow object 92 into the game space. Further, the second character 98a is a virtual character located in the game space, and is operated by the second player A. The second character 98a holds the sword object 96a, and makes an attack on the enemy character 99, using the sword object 96a. Furthermore, the second character 98b is a virtual character located in the game space, and is operated by the second player B. The second character 98b holds the sword object 96b, and makes an attack on the enemy character 99, using the sword object 96b. The enemy character 99 is a virtual character controlled by the game apparatus 3. The game according to the present embodiment is a game whose object is for the first player, the second player A, and the second player B to cooperate to defeat the enemy character 99.