U.S. Pat. No. 8,795,077

GAME CONTROLLERS WITH FULL CONTROLLING MECHANISMS

Assignee: AiLive Inc.

Issue Date: October 24, 2010

Abstract

A personal control mechanism is disclosed. The personal control mechanism includes at least one controller with full functionalities in a sense that the controller does not need any attachment and is operable with one hand to fully control an interactive environment being shown. According to one embodiment of the controller, the controller has a top surface including a number of buttons and a joystick operable by a finger of one hand, and a bottom surface including a trigger operable by another finger of the hand. A housing of the controller is sized comfortably to fit into either hand of a user and has a holding portion designed in a way that the trigger, the buttons and the joystick are readily operated when the holding portion is being held in either hand.

Description

DETAILED DESCRIPTION OF THE INVENTION

The detailed description of the invention is presented largely in terms of procedures, steps, logic blocks, processing, and other symbolic representations that directly or indirectly resemble the operations of data processing devices coupled to networks. These process descriptions and representations are typically used by those skilled in the art to most effectively convey the substance of their work to others skilled in the art.

Reference herein to “one embodiment” or “an embodiment” means that a particular feature, structure, or characteristic described in connection with the embodiment can be included in at least one embodiment of the invention. The appearances of the phrase “in one embodiment” in various places in the specification are not necessarily all referring to the same embodiment, nor are separate or alternative embodiments mutually exclusive of other embodiments. Further, the order of blocks in process flowcharts or diagrams representing one or more embodiments of the invention does not inherently indicate any particular order nor imply any limitations in the invention.

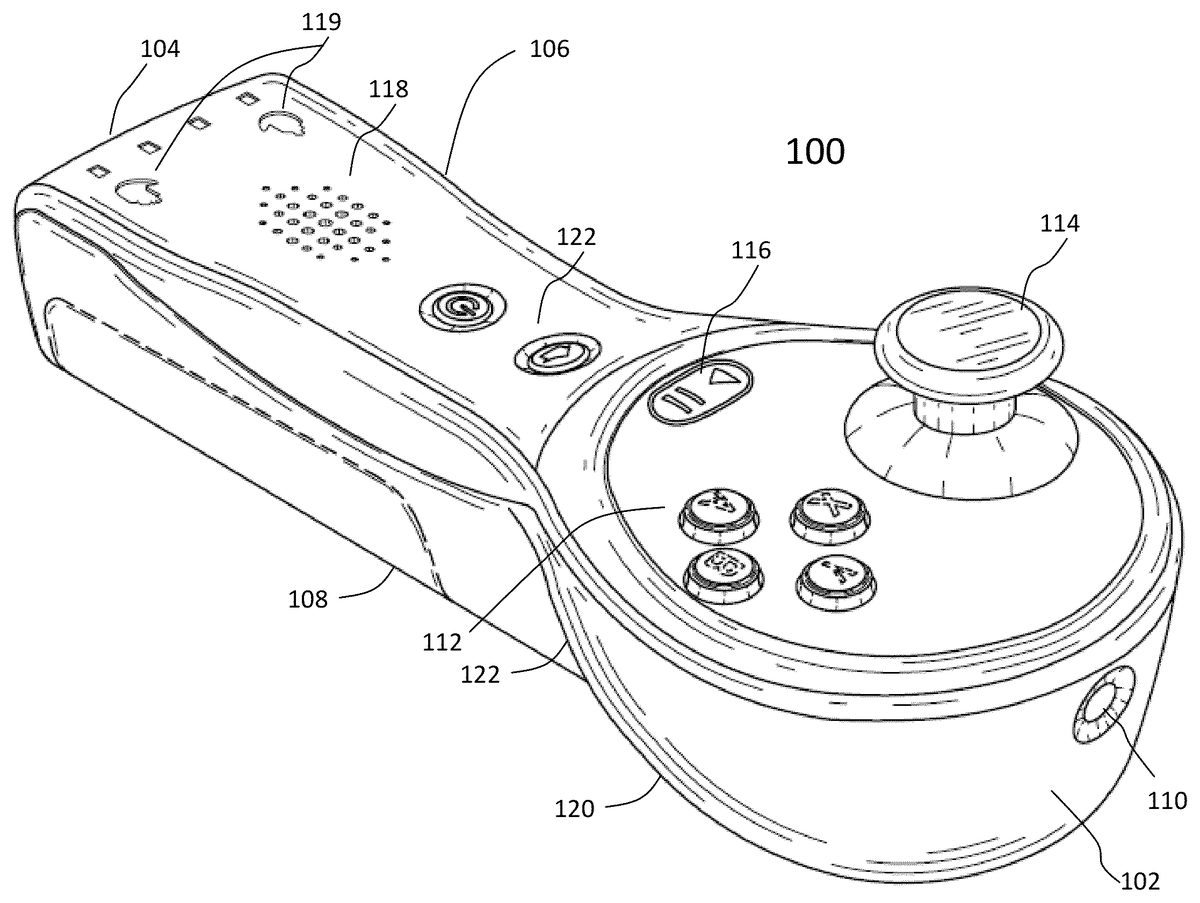

Referring now to the drawings, in which like numerals refer to like parts throughout the several views. FIG. 1A shows an exemplary configuration of a controller 100 according to one embodiment of the current invention and FIG. 1B shows a side view of the controller 100. The controller 100 resembles a handheld device operable by a single hand. The controller 100 includes a housing made of a light material (e.g., plastic or the like). The housing has a generally parallelepiped shape extending in a longitudinal direction from front to rear. The overall size of the housing is small enough to be held by one hand of an adult or a child.

The housing includes at least four sides, a forward end 102, a rearward end 104 (not visible), a top surface 106 and a bottom surface 108 (not visible). The forward end 102 includes at least one emitter 110 designed to emit one or more signals. According to one embodiment, the emitter 110 includes an infrared LED emitting infrared light that may be received by an image sensor, providing information that constrains where the controller 100 is pointing.

Depending on implementation, besides a mechanism for a wrist strap attached thereto, the rearward end 104 may include a socket to allow the controller 100 to be charged when a power charger is plugged into the socket. The socket may also be designed to communicate with another device. For example, the socket may be in the form of a USB socket, so that the controller 100 can communicate with a computing device through a USB cable to get updates or exchange data with a device that is Internet enabled. Optionally, the housing provides an opening to accommodate a readable medium (e.g., a flash memory card or microSD) to transport data into or out of the controller 100.

Near the front part of the top surface 106, there are a number of operation or action buttons 112, a joystick (e.g., analog stick, or analog joystick) 114, a status button 116, a speaker 118 and two hand indicators 119. Each of the action buttons 112 is configured per a game to be played. The joystick 114 is a control affordance comprising a stick that pivots on a base and reports its direction (or angle) and force. Depending on implementation, the joystick 114 may be implemented in different forms, but primarily to be operated by a finger (e.g., a thumb) of a hand holding the controller 100. In one application, through an operation on the joystick 114, a user of the controller 100 can instruct a direction in which a character or the like appearing in a virtual game world is to move or a direction in which a cursor is to move.

According to one embodiment, the housing of the controller 100 is sized comfortably to fit into one hand and designed to have a holding portion in a way that the buttons 112 and the analog stick (or joystick) 114 are readily operated by a thumb when the holding portion is being held in the hand and another finger is on a trigger 120. Depending on a game being played, the trigger 120 may be used as a gun trigger in a shooting game, or a gas pedal in a driving game, or a means to indicate grabbing. To facilitate a child's ability to comfortably reach the buttons 112, analog stick 114 and trigger 120, the neck of the forward part of the housing is made as thin as possible, and the undersurface 122 of the housing under the joystick and buttons is deeply grooved to provide easy access for a finger. In one embodiment, the buttons 112 are small, in a diamond formation, and placed as close to the joystick 114 as is feasible while still allowing a large-handed adult to access all of them accurately. The grooves on the undersurface 122 are symmetric along the longitudinal axis of the controller so that both the left and right hands have easy access to the trigger 120. The centerline of the joystick 114 is lined up with the buttons 112 so that both left and right hands of a person have equal access to the stick 114 and the buttons 112.

The start button 116 is provided to assist the user to start games, to pause them, and for other common interactions with electronic video games.

The speaker 118 is provided to generate sounds (e.g., the sound of a ball hitting a tennis racquet). The two hand indicators 119 are provided, but only one will be turned on, to indicate whether the controller 100 is being held by a left or right hand. There are other buttons 122 each of which has a top surface thereof buried in the top surface 106 so as not to be inadvertently pressed by the user.

One of the important features, advantages or benefits provided by the controller 100 is that it supports all classic control mechanisms that one would expect when playing a non-motion-sensitive computer video game, where the player holds one of these controllers in each hand, and has access to the dual analog stick, dual trigger, and multiple button control mechanics that were popularized by games like Halo. This includes control modalities that are comfortably operable by one hand of the person holding the controller.

Additionally, because the controllers are independently operable in either hand, and each controller 100 is capable of generating inertial sensor signals sufficient to derive relative orientations and positions in six degrees of freedom, the player may engage in free-form motion control with both hands physically moving and generating motion-sensitive control signals independently. Unless specifically stated, a 6D location or locations of a controller includes 3 translational data and 3 angular data. The terms position, positions, location and locations are used interchangeably herein. Optionally, with an external imaging device, an optical signal emitted from each controller is captured and analyzed to help determine drift-free orientations and positions in six degrees of freedom relative to the external imaging device, in conjunction with the inertial sensor signals.

FIG. 1C shows a function block diagram of a controller 150 that may correspond to the controller 100 of FIG. 1A. The controller 150 includes a plurality of inertia sensors 152 such as linear sensors (e.g., accelerometers), rotation sensors (e.g., gyroscopes), magnetometers, and other sensors whose output can be transformed and combined to continuously calculate via dead reckoning the position, orientation, and velocity (direction and speed of movement) of a moving controller without the need for external references. Typically such estimations are good for short periods of time before drifting, due in part to sensor noise and errors, non-linearities and analog-to-digital conversion bucket size issues.
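The drift behavior described above can be illustrated with a minimal sketch of dead reckoning along each axis. It assumes the accelerations have already been rotated into a fixed frame and had gravity removed; the function name `dead_reckon` and its parameters are hypothetical and not part of the specification.

```python
def dead_reckon(samples, dt, position=(0.0, 0.0, 0.0), velocity=(0.0, 0.0, 0.0)):
    """Integrate accelerations (m/s^2) twice to estimate velocity and position.

    Assumption: each sample is already expressed in a fixed world frame with
    gravity subtracted. A real tracker would also integrate gyroscope data
    to obtain orientation.
    """
    pos = list(position)
    vel = list(velocity)
    for accel in samples:
        for axis in range(3):
            vel[axis] += accel[axis] * dt   # first integration: velocity
            pos[axis] += vel[axis] * dt     # second integration: position
    return tuple(pos), tuple(vel)


# A constant sensor bias of 0.01 m/s^2 on a controller that is actually at rest:
# after 10 seconds of integration the position estimate has drifted about half a meter.
drift_pos, drift_vel = dead_reckon([(0.01, 0.0, 0.0)] * 1000, dt=0.01)
```

Because the error grows with the square of elapsed time, even a small bias makes a purely inertial estimate usable only over short periods.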

According to one embodiment, there are at least three linear sensors and three rotation sensors, each producing a signal when the controller 150 is caused to move from one position to another position. The signals from the inertia sensors 152 are coupled to a processor 162 via interfaces 158 to send the signals to a base unit (not shown) via one of the communication ports 160. Depending on implementation, the communication port for transmitting the inertia sensor signals to the base unit may be based on Bluetooth or wireless data communication (e.g., WiFi).

In operation, an action signal is generated when one of the buttons 154 and the joystick 156 is manipulated. The action signal is also coupled to the processor 162 via the interfaces 158. According to one embodiment, the processor 162 is configured by a module stored in memory 164 to package the action signal together with the inertia sensor signals for transmission to the base unit.
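As an illustration of packaging an action signal together with inertial sensor signals, the following sketch serializes both into one fixed-size report. The wire layout (`PACKET_FORMAT`), field names, and value ranges are assumptions made for this example; the specification does not define a packet format.

```python
import struct

# Hypothetical layout, little-endian: button bitmask (uint16), joystick x and y
# (int8 each), then three accelerometer and three gyroscope readings (float32).
PACKET_FORMAT = "<Hbb6f"


def pack_report(buttons, stick_x, stick_y, accel, gyro):
    """Bundle the action signal and the inertial signals into one payload."""
    return struct.pack(PACKET_FORMAT, buttons, stick_x, stick_y, *accel, *gyro)


def unpack_report(payload):
    """Recover the fields on the receiving side (e.g., a base unit)."""
    buttons, stick_x, stick_y, *sensors = struct.unpack(PACKET_FORMAT, payload)
    return {
        "buttons": buttons,
        "stick": (stick_x, stick_y),
        "accel": tuple(sensors[:3]),
        "gyro": tuple(sensors[3:]),
    }


payload = pack_report(0b0101, -20, 64, (0.0, 0.5, -1.0), (0.25, 0.0, 2.0))
report = unpack_report(payload)
```

Sending both kinds of signal in one report keeps button presses aligned in time with the motion samples they accompany.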

In addition, the processor 162 is configured to drive one or more outputs 168 depending on the action signal(s) and/or the inertia sensor signals in conjunction with the virtual environment being displayed at the moment. Different from any controllers in the prior art, and critical to the viability of a full featured control system including independently operable motion sensing controllers, the processor 162 is configured to indicate which hand is holding the controller by turning on one of the two indicators 119 on the top surface 106 of the controller 100 in FIG. 1A.

FIG. 1D shows a configuration 180 in which a number of controllers 182 are used to interact with a virtual environment being displayed on a display screen 184 (e.g., a television) via a base unit 186. The base unit 186 is caused to execute an application and drive the display 184 to show the virtual environment. When one of the controllers 182 transmits signals, the base unit 186 is configured to receive the signals and derive orientations and positions of the controller from which the signals are received. The base unit 186 is also configured to receive signals from the camera 188 which may receive infrared signals from the IR LEDs on the controllers 182, and use this information to help eliminate any drift in positions and orientations of the controllers. Likewise the base unit 186 may send one or more signals to drive one or more outputs on the controller. In particular, an appropriate one of the indicators 119 on the top surface 106 of the controller 100 in FIG. 1A is turned on by a signal from the base unit 186 in conjunction with the application being executed therein.

Unless specifically stated, the base unit 186 as used herein may mean any one of a dedicated videogame console, a generic computer or a portable device. In reality, the base unit 186 does not have to be in the vicinity of the display 184 and may communicate with the display 184 via a wired or wireless network. For example, the base unit 186 may be a virtual console running on a computing device communicating with the display 184 wirelessly using a protocol such as wireless HDMI. According to one embodiment, the base unit 186 is a network-capable box that receives various data (e.g., image and sensor data) and transports the data to a server. In return, the base unit 186 receives constantly updated display data from the server that is configured to integrate the data and create/update a game space for a network game being played by a plurality of other participating base units.

FIG. 2A shows a configuration 200 in which two exemplary controllers 202 and 204 are being imaged within a field of view by a camera 206. These two controllers 202 and 204 may be held respectively by two players or in two hands of one player. The camera 206 is preferably disposed in front of the player(s), for example, on top of a display to capture movements of the two controllers 202 and 204 within the field of view of the camera 206. In one embodiment, when either one of the two controllers 202 and 204 happens to go beyond the field of view of the camera 206, signals from the inertial sensors, magnetometers or other tracking-related sensors in the controller may be used to facilitate the tracking of the movement.

In one embodiment, the LED emitters 110 emit in the infrared spectrum so as to be largely invisible to human eyes, and have a wide half power angle to facilitate easy detection over gross player movement; and the camera 188 is equipped to filter out wavelengths that are not matched to the LED emitters.

In another embodiment, the LED signals from the emitters 110 are detected with sub-pixel accuracy and tracked from frame to frame on an image map that is generated by the camera 188. The per-frame LED locations on the image map are used to eliminate the drift and other errors stemming from pure inertial sensor-based tracking of the absolute orientations and positions of the controllers.

FIG. 2B shows a flowchart or process 210 describing movement-based controller disambiguation as applied to detecting which controller is in which hand of a player. The flowchart 210 may be implemented in software, hardware or in a combination of both, and may be understood in conjunction with FIGS. 1A-1D. It is assumed that there are two controllers, each designed according to one embodiment of the present invention as shown in FIG. 1A. The controllers are in sleeping mode or turned off when they are not used.

In 212, a player picks up both controllers and turns them on. Once the controllers are in working mode, the process 210 proceeds.

In 214, an initial dynamic calibration is automatically performed. The calibration includes zeroing the initial location from which position tracking of a controller will be computed, until the world frame of reference relative to the display 184 is computed. Often an initial rough correspondence is achieved “behind the scenes” by requiring the player to point and select certain boxes being displayed on the display. The camera 188 is in an approximately known position, for example, above or below the display, and is positioned to be able to capture such pointing events. The pointing location, including the rough orientations of the controller at the time of pointing as determined by the force that gravity exerts on the inertial sensors, has enough information to form many constraints on the possible positions of the player with respect to the display.

Once initial calibration is complete in 214, two threads are initiated: one has to do with driving the inertial sensors 152 in the controllers and computing the 6D positions and orientations. The second thread has to do with driving the camera to track the LED locations of the controllers as they show up in the recorded image of the camera (e.g. an X,Y location in the image plane). In 216, the processing unit 186 receives inertial sensor data from each of the two controllers. In one embodiment, the inertial sensors include a three-axis accelerometer and a three-axis gyroscope. In another embodiment the inertial sensing is provided by a three-axis accelerometer combined with a three-axis magnetometer whose output can be transformed to provide angular velocity. The main requirement is to have enough inertial information to support computation of the 6D orientation and position of each of the controllers in real time.

In 218, the new positions and orientations of each controller are computed based on the last known positions and orientations, the inertial forces recorded in 216, and possibly other state internal to the inertial tracker.

In 220, the 6D relative position and orientation for each controller as determined in 218 is updated and used in conjunction with the last estimated relative depth for each controller, and the last estimated X,Y pointing location of each controller on the camera's image plane, to compute a new estimated INERTIAL-XY from the pointing and relative depth estimate for each controller. INERTIAL-XY refers to the X and Y component of a part (e.g., a tip) of the controller on an image plane, as determined in part in 218 from the inertial estimates of the positions and orientations of the controller, and the relative depth with respect to the display. The INERTIAL-XY values are stored in 219 for subsequent updating by 220 and use by 222. Initially the pointing and relative depth estimates with respect to the display may be quite poor, especially on the first pass where these estimates are initialized in 214. The estimates improve significantly after the first full pass through this process 210. Once the new estimates are computed, the control passes back to 216 to wait for the next set of inputs from the controllers. Typically inertial sensors can be read, communicated and processed at a much higher rate than the image data.

In one embodiment, the inertial sensor-based tracking solution described in 216, 218 and 220, whose INERTIAL-XY data is stored in 219, is updated continuously, and will typically update many times before a single image frame is processed and control in one thread passes to 222. In one embodiment, every controller has its own thread and its inertial sensors are processed asynchronously with respect to the other controllers and the camera.

In 221, each camera image frame is processed asynchronously to detect and track all the LEDs that are in the viewing frustum of the camera 188. The output is the X, Y pixel location in the pixel map (i.e. image frame) representing the center of the excitation area for each LED signal that was detected. Not all controllers may be represented in an image frame, since not all controllers may be pointing toward the display and the camera at every moment. In one embodiment, the image is scanned periodically to detect new LEDs, which are then tracked in subsequent frames by scanning the pixels local to the last known X,Y coordinates of the center of the area of LED excitation. Depending on implementation, various measures may be taken to minimize signal disturbance to the excited pixels representing the LEDs. For example, a filter may be used to enhance the wavelengths of the LEDs with respect to others from ambient lighting sources so that it is easy to process an image frame to locate the areas containing the LED. In one embodiment a classifier is built based on several features and the classifier is used to differentiate real LED signatures from false locations resulting from other lighting. In another embodiment the LED location is tracked to sub-pixel accuracy by using a modified center of mass calculation for the LED patterns, which, when the controllers are being moved, take on a comet-like appearance.
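To show how a center of mass calculation can yield sub-pixel accuracy, the following sketch computes a plain intensity-weighted centroid over pixels at or above a threshold. This is the unmodified textbook form, not the modified calculation mentioned above, whose details are not given; `led_centroid` and `threshold` are hypothetical names.

```python
def led_centroid(image, threshold):
    """Return the intensity-weighted (x, y) centroid of bright pixels.

    `image` is a list of rows of pixel intensities. Pixels below `threshold`
    are ignored. Returns None when no pixel qualifies (no LED in this region).
    """
    total = 0.0
    sum_x = 0.0
    sum_y = 0.0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                total += value
                sum_x += x * value
                sum_y += y * value
    if total == 0.0:
        return None
    return (sum_x / total, sum_y / total)


# Two equally bright neighboring pixels: the centroid falls halfway between them.
frame = [
    [0, 0, 0, 0],
    [0, 100, 100, 0],
    [0, 0, 0, 0],
]
center = led_centroid(frame, threshold=50)
```

In practice the scan would likely be restricted to a small window around the last known LED location, as the paragraph above describes.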

The process 210 passes from 221 once the LED tracking scan is completed for a given image frame. In 222, a collection of error estimates is computed for each possible pairing of the LED-XY positions computed in 221 to INERTIAL-XY positions stored and continuously updated in 219. There are several possible error estimates of merit. One is a simple output error estimate, the root-mean-squared error between the two XY positions (i.e. sqrt((x1−x2)^2+(y1−y2)^2)). Another one is analogous to a minimum edit error: the smallest change in the estimated controller position needed to move the INERTIAL-XY pointing location from the current position to the LED-XY position. For example, imagine the INERTIAL-XY as the splash of light on a wall created by a candle fixed to the end of a stick, and the LED-XY as the target point; the minimum edit error is a measure of how much the stick must be moved to match the splash of light with the target point. It can be useful to consider the component estimates (change position only or orientation only) of the minimum edit error separately as well. Still another one is a vector difference between a vector composed of the last few frames of LED-XY data and the corresponding time frame vector of INERTIAL-XY data (derivative error). Yet another one is the INERTIAL-XY difference with the controller that was matched with the current LED-XY in the last frame (stability error).
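Two of these error estimates can be sketched as follows. The output error is the distance formula given in the text (the Euclidean distance between the two points). The derivative error shown here is one possible reading of the description, comparing frame-to-frame motion over equally long tracks; both function names are hypothetical.

```python
import math


def output_error(inertial_xy, led_xy):
    """Distance between an INERTIAL-XY estimate and an LED-XY observation."""
    return math.hypot(inertial_xy[0] - led_xy[0], inertial_xy[1] - led_xy[1])


def derivative_error(inertial_track, led_track):
    """Mean difference in frame-to-frame motion between two XY tracks.

    Assumption: both tracks cover the same frames and have equal length.
    A constant offset between the tracks yields zero, so this estimate
    rewards matching movement rather than matching location.
    """
    steps = len(led_track) - 1
    if steps < 1:
        return 0.0
    total = 0.0
    for i in range(1, len(led_track)):
        d_inertial = (inertial_track[i][0] - inertial_track[i - 1][0],
                      inertial_track[i][1] - inertial_track[i - 1][1])
        d_led = (led_track[i][0] - led_track[i - 1][0],
                 led_track[i][1] - led_track[i - 1][1])
        total += math.hypot(d_inertial[0] - d_led[0], d_inertial[1] - d_led[1])
    return total / steps
```

A pairing whose inertial estimate has drifted away from the LED but moves in step with it scores poorly on output error and well on derivative error, which is why the two are worth considering together.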

In one embodiment a predictor is constructed and uses a regression estimate of some or all of the above errors to compute a final error. In another embodiment an estimate of the probability of the pairing is created and computed as a Dirichlet probability over a fixed number of passes through the 221-228 loop to determine if this pairing is a bad match (i.e., is incorrect). In another embodiment the averaged normalized estimate of all of the estimates that agree is returned. There are many possible combinations of error estimates; it should be clear to those skilled in the art that exactly how the estimate is chosen is not a matter of significant concern as long as it is rational and data driven.

In 221 and 222, the movement-based disambiguation process is effectively providing a second, independent estimate of the 6D orientation and position of each controller. It is not necessary for this independent estimate to provide 2 degrees of freedom (i.e. the XY location on an image frame); the approach extends to an independent estimate of anywhere from 1 to 6 degrees of information. Viable alternatives to this optically-driven (LED and camera) approach include a sonar-based emitter/receiver pair, and magnetometer based approaches where enough is known about the ambient field.

In 224, the error estimates of each possible pairing between controller-based INERTIAL-XY and camera-based LED-XY are received from 222 and a new assignment is made that maps each controller to 0 or 1 LED-XY points. In one embodiment, this decision is not strictly based on the current minimal error, but rather on a function of the current error estimates, the overall stability of recent assignments, and the degree of clarity that a previous assignment needs to be modified.
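A minimal sketch of such an assignment is shown below, assuming a table of per-pairing errors is available. It searches all pairings exhaustively and lets a controller stay unmatched at a fixed cost, so each controller maps to 0 or 1 LED-XY points. Unlike the embodiment described above, it considers only the current errors and ignores assignment history; `assign_leds` and `max_error` are hypothetical names.

```python
from itertools import permutations


def assign_leds(errors, max_error):
    """Map each controller to an LED index, or None if left unmatched.

    errors[c][l] is the error for pairing controller c with LED l.
    Leaving a controller unmatched costs `max_error`, so any pairing with
    a larger error is never preferred over no pairing at all.
    """
    n_controllers = len(errors)
    n_leds = len(errors[0]) if errors else 0
    # One None slot per controller lets every controller remain unmatched.
    slots = list(range(n_leds)) + [None] * n_controllers
    best_choice, best_cost = None, float("inf")
    for choice in permutations(slots, n_controllers):
        cost = sum(
            max_error if led is None else errors[c][led]
            for c, led in enumerate(choice)
        )
        if cost < best_cost:
            best_choice, best_cost = choice, cost
    return list(best_choice)
```

For example, `assign_leds([[1.0, 9.0], [8.0, 2.0]], max_error=5.0)` pairs controller 0 with LED 0 and controller 1 with LED 1. Exhaustive search is adequate for a handful of controllers; a larger setup would call for a proper assignment algorithm.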

In 226, the controller to LED-XY assignments from 224 are received, and used to provide a mapping to the left or the right hand of the player. In one embodiment the significant assumption is made that players will face the display without crossing their hands, and so the hand assignment is rather trivial given the relative LED-XY positions in the image plane of each controller. In one embodiment, a face tracker is used to verify that a human face is detected. An important feature and benefit of this invention is that players never need to think about “which controller am I supposed to hold in which hand?”, eliminating a significant barrier to this form of fully functional free form motion control.
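Under the uncrossed-hands assumption, the hand mapping reduces to comparing the X coordinates of the two LEDs, as in the sketch below. It further assumes a camera that faces the player and an image whose X axis increases to the right, so the player's right hand appears on the left side of an unmirrored image. The `mirrored` flag and the function name are hypothetical.

```python
def assign_hands(led_xy_by_controller, mirrored=False):
    """Label two controllers 'left' or 'right' from their LED-XY positions.

    `led_xy_by_controller` maps a controller id to its (x, y) image position
    and must contain exactly two entries. Assumes the player faces the camera
    with hands uncrossed.
    """
    (image_left, _), (image_right, _) = sorted(
        led_xy_by_controller.items(), key=lambda item: item[1][0]
    )
    if mirrored:
        # A horizontally flipped image shows each hand on its own side.
        return {image_left: "left", image_right: "right"}
    # In an unmirrored image from a camera facing the player, sides are swapped.
    return {image_left: "right", image_right: "left"}


hands = assign_hands({"controller_a": (100, 50), "controller_b": (400, 60)})
```

The result could then drive the hand indicators on each controller.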

In another embodiment, additional camera-independent inputs are used in this decision, one of which is an inertial-only recognizer which is analyzing the motion stream and returning a series of probabilities that each stream is sourced from a controller which is in one of four situations: in the right hand of a right-handed person, in the right hand of a left-handed person, in the left hand of a right-handed person, or in the left hand of a left-handed person. On average, at rest, over a longer period of time, humans tend to hold controllers in such a way that they are inner-pointing—this and other tendencies can be picked up by a classifier trained on features related to the inertial signals received from one controller compared to the other controller.

In 228, the internal state of the inertial tracker for each controller used in 218 and 220 is updated. This inertial state includes the most recent estimated orientation of the controller, an absolute depth from the display (and so from the camera), a relative location, pointing XY on the image plane, velocity vector and various error estimates. The inertial state is corrected to adjust the estimated inertial position and orientation so that the pointing INERTIAL-XY matches the paired LED-XY. In one embodiment, this entire adjustment is made during every visit to 228. In another embodiment, the adjustment is smoothed out and collected over several passes through 228 to avoid the appearance of jerkiness in the virtual interactive environment being shown on the display.
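The difference between a full and a smoothed adjustment can be sketched with a simple proportional correction applied to the pointing location only. A real tracker would correct position and orientation as well; the `gain` parameter and function name are assumptions for illustration.

```python
def smooth_correction(inertial_xy, led_xy, gain=0.25):
    """Move the INERTIAL-XY estimate a fraction of the way toward LED-XY.

    gain=1.0 applies the entire adjustment in one pass; smaller values
    spread it over several passes to avoid visible jumps.
    """
    if not 0.0 < gain <= 1.0:
        raise ValueError("gain must be in (0, 1]")
    return tuple(i + gain * (l - i) for i, l in zip(inertial_xy, led_xy))


# Full correction in a single pass.
snapped = smooth_correction((0.0, 0.0), (8.0, 4.0), gain=1.0)

# Gradual correction: the estimate converges over repeated passes.
estimate = (0.0, 0.0)
for _ in range(20):
    estimate = smooth_correction(estimate, (8.0, 4.0), gain=0.25)
```

A lower gain gives smoother on-screen motion at the cost of tolerating the tracking error for longer.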

An important feature and benefit of this process is the ability to map from a virtual environment being displayed on the display to the world frame in reference to the display and the player. The ability to detect and report a hand assignment to the player is just one useful benefit, and there are many more forms of player interaction with the physical and virtual world that are enabled by having access to the relative depth of both controllers and to their relationship with the player, especially as this methodology is generalized to multiple players and multiple controllers.

It shall be noted that the process 210 in FIG. 2B is not limited to being applied to two controllers and one player. Those skilled in the art shall understand from the description that the process 210 is applicable to any number of controllers and players. In one embodiment, the process 210 facilitates disambiguating a number of controllers, in which at least one player holds two controllers, one in each of his/her hands, and/or at least one player holds one controller in one hand. Additionally, the controller disambiguation in FIG. 2B maps results to the world frame of reference by assigning a left or a right hand to the player that has picked up the controllers. One of the important features and advantages is that the image plane provides a representation of the world frame of reference, and the mapping from controller to LED track in 224 provides a mapping from controllers to world frame of reference that can be reflected accurately and in more detail to players in many useful and interesting ways, for example, as a key enabling technology for augmented reality applications.

FIGS. 3A, 3B and 3C show three exemplary control paradigms in which the fully functional freeform motion controller can be used to interact with a virtual environment being displayed. FIG. 3A shows two controllers being held in the left and right hands of a user, and being used as a classic controller, i.e., not a motion sensitive controller and so not capable of registering the physical motion of the player. The dashed lines connecting the controllers are shown simply to help visualize how the controllers are comfortable for players to use, and how they support classic controller-controlled games. Basically, if one were to connect the controllers as shown, the form factor is similar to a classic controller, and the sum total of all the control affordances (e.g., analog sticks, buttons, triggers) presents a complete, fully functional set for motion-insensitive gaming.

FIG. 3B shows one moment of FIG. 3A in which the two handed freeform motion control aspect of this solution is being used by a single player. Many important features and benefits of a fully functional freeform motion controller may be appreciated, and may be in part described in the form of an example of a First Person Shooter (FPS) control scheme comparison between what is enabled by the controller in this invention and what is typically available in the current state of the art.

In a FPS game, a virtual in-game camera is roughly positioned behind the shoulder of the player or the main protagonist in the game, so the virtual interactive environment is showing virtual hands of an avatar and the view in front of it on the display. For a classic Xbox-like controller, the control paradigm is to map Left-Analog-Stick to movement in the environment, and Right-Analog-Stick to both looking with the head, and to aiming the gun. For PS3 Move- or Wii Remote-like controllers, the control paradigm is to map Left-Analog-Stick to movement in the environment, and to map Right-Pointing to both looking with the head, and to aiming the gun. In both these cases, overloading one control affordance with two major activities (looking, and aiming) causes many difficulties. For example, how can one tell if the player wants to aim at a bad guy on the right side of the screen, or just wants to look there?

With a fully functional freeform motion controller, an entirely new control paradigm is possible that is much more natural and does not have the common overloaded control affordance issues. The new mapping is Left-Analog-Stick for movement in the environment, Right-Analog-Stick for looking with the head, and Right-Pointing to aim. The three major functions are disconnected and can be controlled separately. Thus, there are significant benefits and advantages to adding new control paradigms to the video gaming industry. After all, good control mechanisms are of central importance to good games.

FIG. 3C is an exemplary diagram that makes it clear that a third major mode of operation is to support two players with one controller each. This is possible since the controllers are independently operable and fully functional. This mode additionally enables a super-set of the single-handed motion-controlled games that are enabled with current state of the art controllers, since the analog stick creates many possibilities.

Backwards Compatibility

According to one embodiment, a fixed application programming interface (API) (e.g., a standard device-independent motion data API) is provided for applications. The API abstracts away from details of the controllers (e.g., the controllers 182 of FIG. 1D) that are providing the motion signals, and provides a registration interface with which the manufacturer or distributor or user of a new device can inform the system of the sufficient statistics of the device. This is an essential element for application developers: the less device fragmentation there is, the broader the abstract platform for a given motion sensitive application. An end user is exposed only indirectly to the benefits of the API in that they can now use a broader range of input devices when interacting with their motion sensitive applications. Additionally, the same techniques used for device independence can be applied to ensure the backwards compatibility of the controllers in the present invention with most previous motion sensing controllers on the market before it. There are many benefits and advantages in providing personal motion control solutions that will work with applications that were designed originally for other motion control devices.

As known to those skilled in the art, there are many different inertial sensing devices with a wide range of specifications, error characteristics and capabilities. Operating a device with inertial sensors in one location based on the operation (e.g., algorithm, code and settings) for a different device with sensors in a different location can pose serious barriers in many cases.

FIG. 4 is a flowchart or process 400 of providing compatibility of a controller per the current invention with a prior-art controller. The process 400 may be implemented in hardware, software, or a combination of both. According to one embodiment, a driver implementing part of the process 400 is executed in the controller. According to another embodiment, a software module is executed in a base unit to accommodate the controller, where the base unit was designed for an old controller. The software module may be installed into the base unit by different means (e.g., the internet or a readable medium). The process 400 proceeds when a transforming module (e.g., the driver or the software module) is available.

In 404, the inertial sensor data coming from a controller is received. In 406, a transformation of the sensor data is carried out to masquerade as though the inertial sensor data were coming from a set of inertial sensors in an old controller. For example, a controller designed per one embodiment of the present invention is being used to replace a prior-art controller. Depending on implementation, the transformation may be carried out either in a driver for the controller or a software module running on a processing unit for the old controller, which helps ensure the backwards compatible nature of the controller.

For example, for a wide range of accelerometers, if the maximum sensitivities and ranges are known, and the respective locations of the sensor within the rigid controller body are known, the output of two different devices can be mapped to each other without enough information loss to affect motion recognition. In one embodiment, if the other device or controller is not equipped with a gyroscope or other means of providing angular velocity, the gyroscope output data for the controllers in the present invention can simply be ignored.

In another embodiment, the controllers have an SD-card-like interface (e.g., disposed underneath the battery cover) that allows an end user to swap out an older generation of inertial sensors placed on a chip that fits into that interface, with a newer generation of inertial sensors. In this embodiment, the associated driver for the updated controllers is provided and allows the newer sensors to be backwards compatible with the older sensors, by masquerading as the older sensors for applications that require it. In some cases this means artificially cutting off the sensitivity ranges of the new sensors to match the older ones.
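As a minimal sketch of cutting off the sensitivity range of a newer sensor, the following C function clamps a reading to the range of the older sensor. The function name masqueradeAccel and its parameters are hypothetical, and the additional step of rounding to the resolution of the older sensor is an assumption of this sketch rather than something the description requires.

```c
#include <assert.h>

/* Maps one acceleration reading (in g) from a newer sensor onto the range
   and resolution of an older sensor. oldRangeG and oldCountsPerG describe
   the older sensor; both names are illustrative assumptions. */
float masqueradeAccel(float accelG, float oldRangeG, float oldCountsPerG)
{
    assert(oldRangeG > 0.0f && oldCountsPerG > 0.0f);

    /* Cut off the range of the newer sensor to match the older one. */
    if (accelG > oldRangeG)
        accelG = oldRangeG;
    if (accelG < -oldRangeG)
        accelG = -oldRangeG;

    /* Assumed extra step: round to the nearest count of the older sensor. */
    float scaled = accelG * oldCountsPerG;
    long counts = (long)(scaled + (scaled >= 0.0f ? 0.5f : -0.5f));
    return (float)counts / oldCountsPerG;
}
```

For example, with an older sensor limited to +/-3 g, a 5 g reading from a newer sensor would be reported as 3 g.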

Device independence and backwards compatibility must also apply to tracking in a general motion control environment. One exemplary task is to track the positions and orientations of some visible part of the device, in part so that the tracking results can be used as an input to recognition. When tracking a known position on a known device with known sensor locations, a standard approach is to track the location of the sensors over time, then at the end when reporting the results to the user, report the known visible point on the rigid body of the controller instead of reporting the actual sensor position. For example, if the sensors are in the center of mass of the controller, the position and orientation of the center of mass may be first tracked, then the location of the visible point is computed as: Pos−orientation*vecAcc, where Pos is the tracked location of the inertial sensors in world frame, orientation is the orientation of the controller, and vecAcc is the location of the inertial sensors relative to the visible point that is being located.
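The computation Pos−orientation*vecAcc may be sketched in C as shown below. The description does not state how orientation is represented; this sketch assumes a row-major 3x3 rotation matrix taking body-frame vectors to the world frame. The types Vec3 and Mat3 and the functions rotate and visiblePoint are hypothetical names.

```c
/* Illustrative types; names and layout are assumptions of this sketch. */
typedef struct { float x, y, z; } Vec3;
typedef struct { float m[3][3]; } Mat3;   /* row-major, body frame to world frame */

/* Applies the rotation r to the vector v. */
Vec3 rotate(const Mat3 *r, Vec3 v)
{
    Vec3 out;
    out.x = r->m[0][0] * v.x + r->m[0][1] * v.y + r->m[0][2] * v.z;
    out.y = r->m[1][0] * v.x + r->m[1][1] * v.y + r->m[1][2] * v.z;
    out.z = r->m[2][0] * v.x + r->m[2][1] * v.y + r->m[2][2] * v.z;
    return out;
}

/* Computes Pos - orientation * vecAcc, where pos is the tracked sensor
   location in the world frame and vecAcc is the sensor location relative
   to the visible point, expressed in the body frame. */
Vec3 visiblePoint(Vec3 pos, const Mat3 *orientation, Vec3 vecAcc)
{
    Vec3 w = rotate(orientation, vecAcc);
    Vec3 out = { pos.x - w.x, pos.y - w.y, pos.z - w.z };
    return out;
}
```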

A more beneficial but challenging problem is to continue using a set of motion recognizers of the old controllers when the device characteristics of the old controllers differ from a new device being recognized. In other words, data from inertial sensors in a new controller and located in a specific position is transformed to act as though the data is being generated from the old sensors residing in a different location in the old controller. The naive approach to transforming the data fails in practice because inertial sensor noise is too strong.

According to one embodiment, in 408, the sensor noise is accounted for to allow device independent recognition of the controller using the motion recognizers provided for the old controller. The following methods of accounting for sensor noise allow device independent recognition through a standard motion data API. To facilitate the understanding of the approach, the following pseudo-code cutout shows the steps involved in correcting inertial readings from a sensor not located at the center of mass. No corrections are needed for the angular velocity data if the object is a rigid body, and the angular velocity data is used to estimate the readings that would have been measured at the center of mass, as follows.

LX = accX;
LY = accY;
LZ = accZ;

// Subtract out tangential effects of rotation of accelerometers around center of mass
LZ -= aaX*yOffset;
LY += aaX*zOffset;
LX -= aaY*zOffset;
LZ += aaY*xOffset;
LY -= aaZ*xOffset;
LX += aaZ*yOffset;

// Centripetal acceleration, move back to acceleration at center of mass
LX += xOffset*(avY*avY + avZ*avZ);
LY += yOffset*(avX*avX + avZ*avZ);
LZ += zOffset*(avX*avX + avY*avY);

// Compensate for gyroscopic effects
LX -= avX*(avY*yOffset + avZ*zOffset);
LY -= avY*(avX*xOffset + avZ*zOffset);
LZ -= avZ*(avX*xOffset + avY*yOffset);

Keys: accX, accY, accZ—linear accelerations measured along each axis at sensor position

avX, avY, avZ—angular velocities measured around each axis

aaX, aaY, aaZ—angular accelerations calculated around each axis

xOffset, yOffset, zOffset—physical separation between accelerometers and center of mass

LX, LY, LZ—calculated linear accelerations for center of mass
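For illustration, the pseudo-code above may be collected into a single C function. The type Vec3 and the function name accelAtCenterOfMass are hypothetical; the arithmetic follows the pseudo-code term by term, and it agrees with the rigid-body relation in which the acceleration at the sensor equals the acceleration at the center of mass plus the tangential and centripetal contributions of rotation.

```c
/* Illustrative vector type; the name is an assumption of this sketch. */
typedef struct { float x, y, z; } Vec3;

/* Estimates linear acceleration at the center of mass from a reading taken
   at a sensor displaced by off from the center of mass.
     acc: linear acceleration measured at the sensor position
     av:  angular velocity around each axis
     aa:  angular acceleration around each axis
     off: physical separation between accelerometers and center of mass */
Vec3 accelAtCenterOfMass(Vec3 acc, Vec3 av, Vec3 aa, Vec3 off)
{
    Vec3 L = acc;

    /* Subtract out tangential effects of rotation around the center of mass. */
    L.z -= aa.x * off.y;
    L.y += aa.x * off.z;
    L.x -= aa.y * off.z;
    L.z += aa.y * off.x;
    L.y -= aa.z * off.x;
    L.x += aa.z * off.y;

    /* Centripetal acceleration, move back to acceleration at center of mass. */
    L.x += off.x * (av.y * av.y + av.z * av.z);
    L.y += off.y * (av.x * av.x + av.z * av.z);
    L.z += off.z * (av.x * av.x + av.y * av.y);

    /* Compensate for gyroscopic (cross-axis) effects. */
    L.x -= av.x * (av.y * off.y + av.z * off.z);
    L.y -= av.y * (av.x * off.x + av.z * off.z);
    L.z -= av.z * (av.x * off.x + av.y * off.y);

    return L;
}
```

As a check under these assumptions, a controller spinning steadily about its Z axis at 2 rad/sec with the sensor 0.1 m from the center of mass along X measures about −0.4 m/sec^2 along X at the sensor, and the function returns approximately zero for the center of mass.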

Improvements to account for sensor noise:

1) In practice, it is noted that measuring angular acceleration over multiple periods of sensor data gave smoothed estimates that helped reduce the effect of noise on the calculated linear accelerations. The number of readings used varied according to the sampling rate and noise characteristics of the particular gyroscopes.

dt = history[endIndex].time - history[startIndex].time;
aaX = (history[endIndex].avX - history[startIndex].avX)/dt;
aaY = (history[endIndex].avY - history[startIndex].avY)/dt;
aaZ = (history[endIndex].avZ - history[startIndex].avZ)/dt;
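A self-contained C sketch of this smoothing step is given below. The types GyroSample and AngAccel, the function name estimateAngularAcceleration, and the guard against a non-positive time span are assumptions of this sketch; how many readings lie between startIndex and endIndex is left to the caller, since it depends on the sampling rate and noise characteristics of the gyroscopes.

```c
#include <stddef.h>

/* Illustrative sample record; field names mirror the pseudo-code above. */
typedef struct {
    double time;            /* timestamp in seconds           */
    float  avX, avY, avZ;   /* angular velocity around each axis */
} GyroSample;

typedef struct { float x, y, z; } AngAccel;

/* Estimates angular acceleration as the change in angular velocity between
   two history entries divided by the elapsed time. Returns 1 on success,
   or 0 if the time span is not positive (out is then left untouched). */
int estimateAngularAcceleration(const GyroSample *history,
                                size_t startIndex, size_t endIndex,
                                AngAccel *out)
{
    float dt = (float)(history[endIndex].time - history[startIndex].time);
    if (dt <= 0.0f)
        return 0;
    out->x = (history[endIndex].avX - history[startIndex].avX) / dt;
    out->y = (history[endIndex].avY - history[startIndex].avY) / dt;
    out->z = (history[endIndex].avZ - history[startIndex].avZ) / dt;
    return 1;
}
```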

2) Angular acceleration was reduced when the corresponding angular velocity was small. (Most acceleration was found to be a result of noise in this case)

//If angular velocity is small, angular accelerations may be due primarily to the

//gyro readings jumping between values, yielding jumps of up to about 5 rad/sec^2

if (reduceAA)
{
    real const aaReduction = 5.0f; // Reduce aa this much at zero angular velocity (rad/sec/sec)
    real const smallAngularVelocity = 0.5f; // Don't adjust accelerations if angular velocity above this value (rad/sec)
    moveTowardsZero(aaX, aaReduction*(smallAngularVelocity - fabsf(avX))/smallAngularVelocity);
    moveTowardsZero(aaY, aaReduction*(smallAngularVelocity - fabsf(avY))/smallAngularVelocity);
    moveTowardsZero(aaZ, aaReduction*(smallAngularVelocity - fabsf(avZ))/smallAngularVelocity);
}
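The helper moveTowardsZero is not defined in the description. One plausible reading, given here only as an assumption, is that it shrinks the magnitude of a value by a given amount without crossing zero and does nothing when the amount is not positive, which is what leaves the angular acceleration unadjusted above the small angular velocity threshold. The C sketch below passes the value by pointer, and the wrapper reduceAngularAcceleration is a hypothetical name.

```c
#include <math.h>

typedef float real;

/* Assumed behavior: reduce the magnitude of *value by amount, stopping at
   zero. A non-positive amount leaves *value unchanged. */
void moveTowardsZero(real *value, real amount)
{
    if (amount <= 0.0f)
        return;
    if (*value > amount)
        *value -= amount;
    else if (*value < -amount)
        *value += amount;
    else
        *value = 0.0f;
}

/* Applies the reduction to one axis: the full aaReduction at zero angular
   velocity, tapering linearly to none at smallAngularVelocity. */
void reduceAngularAcceleration(real *aa, real av)
{
    real const aaReduction = 5.0f;           /* rad/sec/sec */
    real const smallAngularVelocity = 0.5f;  /* rad/sec     */
    moveTowardsZero(aa, aaReduction * (smallAngularVelocity - fabsf(av))
                            / smallAngularVelocity);
}
```

Under this reading, an angular acceleration of 8 rad/sec^2 at an angular velocity of 0.25 rad/sec is reduced by 2.5 to 5.5 rad/sec^2, while the same value at 1.0 rad/sec is left unchanged.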

Different embodiments of the present invention have been described above. One skilled in the art will recognize that elements of the present invention may be implemented in software, but can also be implemented in hardware or a combination of hardware and software. The invention can also be embodied as computer-readable code on a computer-readable medium. The computer-readable medium can be any data-storage device that can store data which can thereafter be read by a computer system. Examples of the computer-readable medium may include, but not be limited to, read-only memory, random-access memory, CD-ROMs, DVDs, magnetic tape, hard disks, optical data-storage devices, or carrier waves. The computer-readable media can also be distributed over network-coupled computer systems so that the computer-readable code is stored and executed in a distributed fashion.

The present invention has been described in sufficient detail with a certain degree of particularity. It is understood by those skilled in the art that the present disclosure of embodiments has been made by way of examples only and that numerous changes in the arrangement and combination of parts may be resorted to without departing from the spirit and scope of the invention as claimed. While the embodiments discussed herein may appear to include some limitations as to the presentation of the information units, in terms of the format and arrangement, the invention has applicability well beyond such embodiments, which can be appreciated by those skilled in the art. Accordingly, the scope of the present invention is defined by the appended claims rather than the foregoing description of embodiments.

Claims

- A personal control mechanism comprising: a pair of identical controllers, each of the controllers including: a housing having at least a forward end, a top surface and a bottom surface, the forward end including an emitter emitting a light to be captured by a receiver remotely located, the top surface including a number of buttons and a joystick all operable by a finger of one hand, the bottom surface including a trigger operable by another finger of the hand, the housing having a holding portion designed in a way that the trigger, buttons and the joystick are operated by respective fingers of the hand when the holding portion is being held in the hand; a plurality of inertial sensors producing sensing signals that are sufficient to derive relative positions and orientation in six degrees of freedom by a processing unit; and an indicator indicating the each of the controllers is being held in a left hand or a right hand when both of the controllers are being held by one user and indicating the each of the controllers is being held by which user when the two controllers are respectively being held by two users, wherein the indicator is turned on automatically by the processing unit configured to receive independently and process the sensing signals from both of the controllers in conjunction of determined respective coordinates of illuminations from the controllers in an image from a camera provided in vicinity, wherein the sensing signals of each of the controllers are received in the processing unit configured to generate a virtual interactive environment in which at least one of objects is responsive to the sensing signals of either one of the controllers.

- The personal control mechanism as recited in claim 1, wherein the indicator is disposed on the top surface of each of the controllers to provide an illuminating indication to the user or users without interfering the camera.

- The personal control mechanism as recited in claim 1, wherein the indicator includes a graphic left-hand sign and a graphic right-hand sign, only one of the two signs is turned on at a time.

- The personal control mechanism as recited in claim 3, wherein each of the controllers further comprises a wireless interface to receive an indication signal from the processing unit, a corresponding one of the left-hand sign and the right-hand sign is caused to be turned on by the indication signal.

- The personal control mechanism as recited in claim 4, wherein the processing unit is configured to determine the indication signal for each of the controllers from the sensing signals of each of the controllers.

- The personal control mechanism as recited in claim 1, wherein each of the controllers further includes a light emitter emitting a light invisible to human eyes but facilitates a camera to track respective movements of the controllers.

- The personal control mechanism as recited in claim 1, wherein the two controllers are not physically attached.

- A personal control mechanism for a user, comprising: a first controller fully operable by a left hand of the user; a second controller fully operable by a right hand of the user, the first and second controllers, not being physically attached, operating independently, each of the first and second controllers containing inertial sensors, and having a top surface including buttons and a joystick all operable by a finger or a thumb of the left hand or the right hand, wherein the user manipulates either one of the joysticks on the first and second controllers to interact with a virtual environment being displayed; and wherein each of the first and second controllers has an indicator, the indicator of the first controller indicating that the first controller is being held in the left hand and the indicator of the second controller indicating that the second controller is being held in the right hand when both of the first and second controllers are picked up by the user, wherein the indicator is turned on automatically by a processing unit configured to receive independently and process sensing signals from both of the first and second controllers in conjunction of determined respective coordinates of illuminations from the first and second controllers in an image from a camera provided in vicinity.

- The personal control mechanism as recited in claim 8, wherein each of the first and second controllers further comprises: a bottom surface including a trigger operable by another finger of either one of the two hands, a housing for each of the first and second controllers is sized to fit into either one of the two hands and has a holding portion designed in a way that the trigger, the buttons and the joystick are readily operated by respective fingers when the holding portion is being held in either one of the two hands.

- The personal control mechanism as recited in claim 9, wherein the indicator is disposed on the top surface of each of the first and second controllers to indicate to the user which hand is holding which of the two controllers, without interfering a camera provided in vicinity to monitor respective movements of the controllers.

- The personal control mechanism as recited in claim 10, wherein the indicator includes a left-hand sign and a right-hand sign, only one of the two signs is turned at a time.

- The personal control mechanism as recited in claim 11, wherein each of the controllers further comprises a wireless interface to receive the indication signal from the processing unit, a corresponding one of the left-hand sign and the right-hand sign is caused to be turned on by the indication signal.

- The personal control mechanism as recited in claim 11, wherein the processing unit is configured to determine the indication signal for each of the controllers from the sensing signals from the controllers.

- The personal control mechanism as recited in claim 8, wherein the two controllers are functioning to provide a classic control by one user, one or two handed freeform motion control by one user, or a one-handed freeform motion control by two users.

- A personal control mechanism comprising: a housing having at least a forward end, a rearward end, a top surface and a bottom surface, the forward end including an emitter emitting a light beam to be captured by a receiver remotely located, the top surface including a number of buttons and a joystick all operable by a finger of the hand, the bottom surface including a trigger operable by another finger of the hand, the housing sized to fit into the hand and having a holding portion designed in a way that the buttons and the joystick are operated by the finger when the holding portion is being held in the hand; a plurality of inertial sensors producing sensing signals that are sufficiently to derive relative positions and orientation in six degrees of freedom; an indicator automatically indicating that the personal control mechanism is being held by a left hand or a right hand when the personal control mechanism is picked up by a user, wherein the indicator is turned on automatically by a processing unit configured to receive independently and process the sensing signals from the personal control mechanism in conjunction of determined respective coordinates of illuminations from the emitter in an image from the receiver; and a wireless module coupled to the processor to transmit the sensing signals and the action signal to a base unit remotely located, the base unit configured to derive the positions and orientation in six degrees of freedom from the processed sensing signals and producing a virtual environment in which at least one object being controlled by the handheld controller moves according to the relative positions and orientation and reacts according to the action signal.

- The handheld controller as recited in claim 15, wherein the top surface further includes an indicator indicating that the handheld controller is being held by a left hand or a right hand.

- The handheld controller as recited in claim 15, wherein the handheld controller receives an indication from a processing unit, receiving wirelessly the sensing signals, configured to compute in real time the relative positions and orientations of the handheld controller; and determine which hand of the user is holding the handheld controller.

- The handheld controller as recited in claim 17, wherein the processing unit further receives a data sequence from a sensor external to the first handheld controller to determine which hand of the user is holding the handheld controller in accordance with the relative positions and orientations of the handheld controller.

- The handheld controller as recited in claim 18, wherein the indicator includes two signs, one implying a left hand and the other implying for a right hand, and only one of the signs is illuminated at a time.

- The handheld controller as recited in claim 15, wherein the handheld controller is being held by a right hand, and another such a handheld controller is being held by a left hand, one of the two signs on each of the handheld controllers is turned on automatically and accordingly.

- The handheld controller as recited in claim 20, wherein the sensing signals of the controller and the another such a handheld controller are received in a processing unit configured to generate a virtual interactive environment in which at least one of objects responsive to the sensing signals, and wherein the sensing signals are processed in such a way that the two controllers are functioning to provide one or two handed freeform motion control by one user, or a one-handed freeform motion control by two users.

- The handheld controller as recited in claim 21, wherein a recessed portion is formed near the bottom surface at a position the trigger is readily reachable by an index finger or middle finger of a hand when the hand holds the holding portion of the handheld controller.

- The handheld controller as recited in claim 15, wherein the receiver is an imaging capturing device provided to capture an image of the light beam, the base unit is configured to derive from the image and the received sensing signals absolute positions and orientation in six degrees of freedom.
