U.S. Pat. No. 8,784,202

APPARATUS AND METHOD FOR REPOSITIONING A VIRTUAL CAMERA BASED ON A CHANGED GAME STATE

Assignee: Nintendo Co., Ltd.

Issue Date: July 22, 2014

U.S. Patent No. 8,784,202: Apparatus and method for repositioning a virtual camera based on a changed game state

Issued July 22, 2014, to Nintendo Co., Ltd.

Priority Date June 3, 2011

Summary:

U.S. Patent No. 8,784,202 (the '202 Patent) describes a method for automatically shifting a virtual camera's position based on a character's movement speed. The '202 Patent relates to third-person games, since the player generally controls the camera in first-person games. A game utilizing the '202 Patent starts the camera in a high-angle position while the character is walking or standing. As the character begins to run, the camera shifts down, which lets the player see farther ahead and better judge the character's footing. When the character slows down, the camera moves back to the original high-angle position.
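The behavior summarized above can be illustrated with a minimal sketch. This is not the patented implementation: the names `CameraPose`, `WALK_POSE`, `RUN_POSE`, `RUN_SPEED`, and `BLEND_RATE`, along with every numeric value, are hypothetical placeholders chosen only to show a camera easing between a high-angle pose and a lowered pose as the character's speed crosses a threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraPose:
    height: float     # camera height above the character (hypothetical units)
    pitch_deg: float  # downward tilt of the camera in degrees (hypothetical)


# Placeholder poses: the source gives no concrete numbers.
WALK_POSE = CameraPose(height=8.0, pitch_deg=60.0)  # high-angle view
RUN_POSE = CameraPose(height=4.0, pitch_deg=25.0)   # lowered view
RUN_SPEED = 5.0    # assumed speed at which the character counts as running
BLEND_RATE = 2.0   # assumed blend change per second


def step_blend(blend: float, speed: float, dt: float) -> float:
    """Move the blend factor toward 1 while running and toward 0 otherwise."""
    target = 1.0 if speed >= RUN_SPEED else 0.0
    max_step = BLEND_RATE * dt
    # Clamp the change so the camera eases instead of snapping.
    return blend + max(-max_step, min(max_step, target - blend))


def pose_for(blend: float) -> CameraPose:
    """Linearly interpolate between the walking pose and the running pose."""
    def lerp(a: float, b: float) -> float:
        return a + (b - a) * blend

    return CameraPose(
        height=lerp(WALK_POSE.height, RUN_POSE.height),
        pitch_deg=lerp(WALK_POSE.pitch_deg, RUN_POSE.pitch_deg),
    )
```

Calling `step_blend` once per frame and passing the result to `pose_for` yields a camera that lowers gradually when the character starts running and returns to the high-angle pose when the character slows.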

Abstract:

An apparatus and method is provided for controlling a virtual camera. The virtual camera is positioned in a first position and a first direction while an object is in a first state and the virtual camera is moved to a second position and second direction when the object transitions from a first state to a second state. The virtual camera is held in the second position and second direction while the object is in the second state and then moves back to the first position and first direction when the object transitions from the second state back to the first state. While the virtual camera is in the second position and second direction, the lowest part of the object is made more easily viewable.

Illustrative Claim:

1. An information processing system, comprising: a processing system having at least one processor, the processing system configured to: position a virtual camera in an initial orientation while an object is moving at a first velocity, and change downwardly the angle of orientation of the virtual camera while moving the virtual camera above the object from the initial orientation when the object transitions from moving at the first velocity to moving at a second velocity greater than the first velocity.

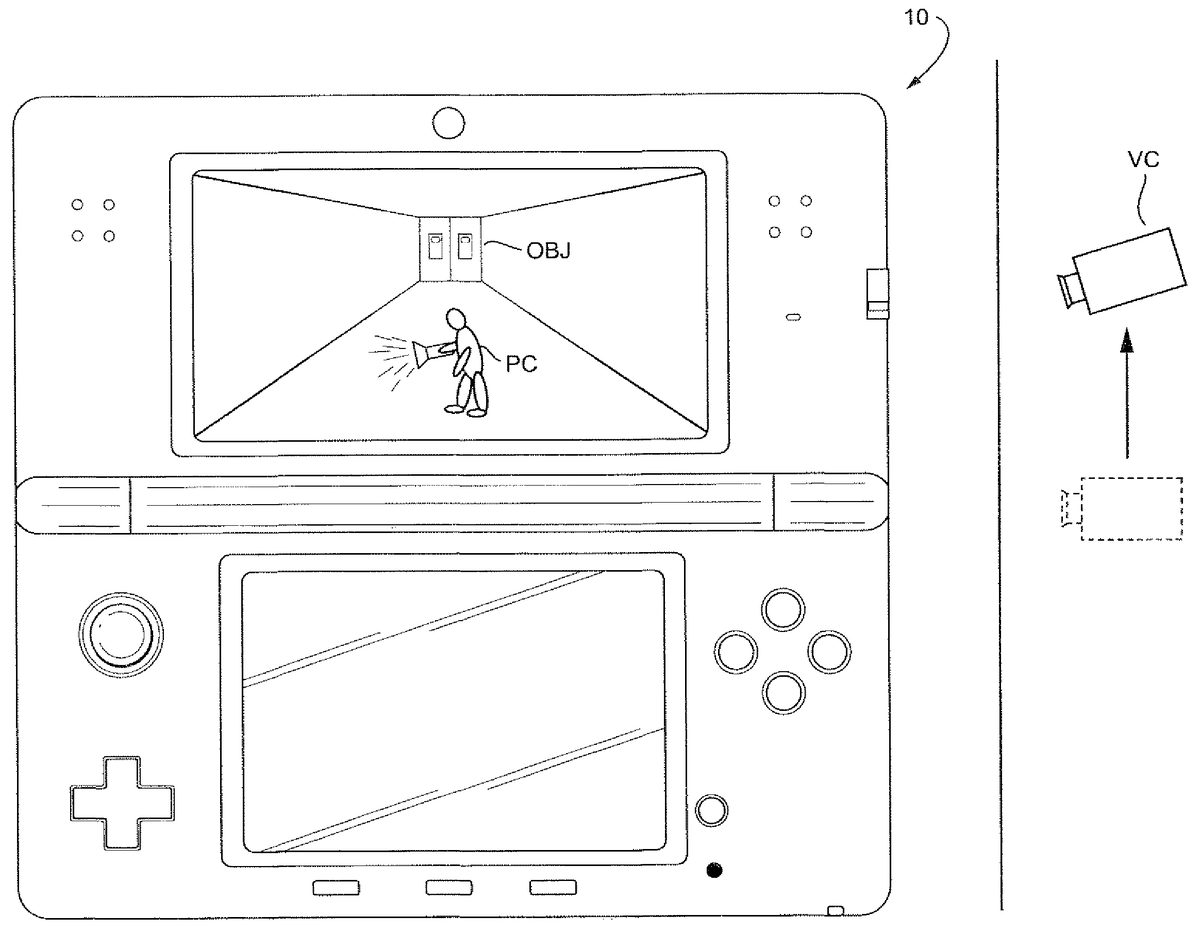

Illustrative Figure

Description

DETAILED DESCRIPTION OF THE DRAWINGS

A description is given of a specific example of an image processing apparatus that executes an image processing program according to an embodiment of the present system. The following embodiment, however, is merely illustrative, and the present system is not limited to the configuration of the following embodiment.

It should be noted that in the following embodiment, data processed by a computer is illustrated using graphs and natural language. More specifically, however, the data is specified by computer-recognizable pseudo-language, commands, parameters, machine language, arrays, and the like. The present embodiment does not limit the method of representing the data.

First, with reference to the drawings, a description is given of a hand-held game apparatus 10 as an example of the image processing apparatus that executes the image processing program according to the present embodiment. The image processing apparatus according to the present system, however, is not limited to a game apparatus. The image processing apparatus according to the present system may be a given computer system, such as a general-purpose computer. The image processing apparatus is also not limited to a portable electronic gaming device and may be implemented on a home entertainment gaming device, such as the Nintendo Wii (including, e.g., a Wii MotionPlus attachment having gyroscope sensors). A description of an example home entertainment gaming device can be found in U.S. application Ser. No. 12/222,873 (U.S. Patent Publication No. 2009/0181736), which is hereby incorporated by reference. The home entertainment gaming device can be played, for example, on a standard television, on a 3-D television, or even on a holographic television.

It should be noted that the image processing program according to the present embodiment is a game program. The image processing program according to the present system, however, is not limited to a game program. The image processing program according to the present system can be applied by being executed by a given computer system. Further, the processes of the present embodiment may be subjected to distributed processing by a plurality of networked devices, or may be performed by a network system where, after main processes are performed by a server, the process results are distributed to terminals, or may be performed by a so-called cloud network.

FIGS. 1, 2, 3A, 3B, 3C, and 3D are each a plan view showing an example of the appearance of the game apparatus 10. The game apparatus 10 shown in FIGS. 1 through 3D includes a capturing section (camera), and therefore is capable of capturing an image with the capturing section, displaying the captured image on a screen, and storing data of the captured image. Further, the game apparatus 10 is capable of executing a game program stored in an exchangeable memory card, or a game program received from a server or another game apparatus via a network. The game apparatus 10 is also capable of displaying on the screen an image generated by computer graphics processing, such as an image captured by a virtual camera set in a virtual space. It should be noted that in the present specification, the act of obtaining image data with the camera is described as “capturing”, and the act of storing the image data of the captured image is described as “photographing”.

The game apparatus 10 shown in FIGS. 1 through 3D includes a lower housing 11 and an upper housing 21. The lower housing 11 and the upper housing 21 are joined together by a hinge structure so as to be openable and closable in a folding manner (foldable). That is, the upper housing 21 is attached to the lower housing 11 so as to be rotatable (pivotable) relative to the lower housing 11. Thus, the game apparatus 10 has the following two forms: a closed state where the upper housing 21 is in firm contact with the lower housing 11, as seen for example in FIGS. 3A and 3C; and a state where the upper housing 21 has rotated relative to the lower housing 11 such that the state of firm contact is released (an open state). The rotation of the upper housing 21 is allowed to the position where, as shown in FIG. 2, the upper housing 21 and the lower housing 11 are approximately parallel to each other in the open state.

FIG. 1 is a front view showing an example of the game apparatus 10 being open (in the open state). A planar shape of each of the lower housing 11 and the upper housing 21 is a wider-than-high rectangular plate-like shape having a longitudinal direction (horizontal direction (left-right direction): an x-direction in FIG. 1) and a transverse direction ((up-down direction): a y-direction in FIG. 1). The lower housing 11 and the upper housing 21 are joined together at the longitudinal upper outer edge of the lower housing 11 and the longitudinal lower outer edge of the upper housing 21 by a hinge structure so as to be rotatable relative to each other. Normally, a user uses the game apparatus 10 in the open state. The user stores away the game apparatus 10 in the closed state. Further, the upper housing 21 can maintain the state of being stationary at a desired angle formed between the lower housing 11 and the upper housing 21 due, for example, to a frictional force generated at the connecting part between the lower housing 11 and the upper housing 21. That is, the game apparatus 10 can maintain the upper housing 21 stationary at a desired angle with respect to the lower housing 11. Generally, in view of the visibility of a screen provided in the upper housing 21, the upper housing 21 is open at a right angle or an obtuse angle with the lower housing 11. Hereinafter, in the closed state of the game apparatus 10, the respective opposing surfaces of the upper housing 21 and the lower housing 11 are referred to as “inner surfaces” or “main surfaces.” Further, the surfaces opposite to the respective inner surfaces (main surfaces) of the upper housing 21 and the lower housing 11 are referred to as “outer surfaces”.

Projections 11A are provided at the upper long side portion of the lower housing 11, each projection 11A projecting perpendicularly (in a z-direction in FIG. 1) to an inner surface (main surface) 11B of the lower housing 11. A projection (bearing) 21A is provided at the lower long side portion of the upper housing 21, the projection 21A projecting perpendicularly to the lower side surface of the upper housing 21 from the lower side surface of the upper housing 21. Within the projections 11A and 21A, for example, a rotating shaft (not shown) is accommodated so as to extend in the x-direction from one of the projections 11A through the projection 21A to the other projection 11A. The upper housing 21 is freely rotatable about the rotating shaft, relative to the lower housing 11. Thus, the lower housing 11 and the upper housing 21 are connected together in a foldable manner.

The inner surface 11B of the lower housing 11 shown in FIG. 1 includes a lower liquid crystal display (LCD) 12, a touch panel 13, operation buttons 14A through 14L, an analog stick 15, a first LED 16A, a fourth LED 16D, and a microphone hole 18.

The lower LCD 12 is accommodated in the lower housing 11. A planar shape of the lower LCD 12 is a wider-than-high rectangle, and is placed such that the long side direction of the lower LCD 12 coincides with the longitudinal direction of the lower housing 11 (the x-direction in FIG. 1). The lower LCD 12 is provided in the center of the inner surface (main surface) of the lower housing 11. The screen of the lower LCD 12 is exposed through an opening of the inner surface of the lower housing 11. The game apparatus 10 is in the closed state when not used, so that the screen of the lower LCD 12 is prevented from being soiled or damaged. As an example, the number of pixels of the lower LCD 12 is 320 dots × 240 dots (horizontal × vertical). Unlike an upper LCD 22 described later, the lower LCD 12 is a display device that displays an image in a planar manner (not in a stereoscopically visible manner). It should be noted that although an LCD is used as a display device in the first embodiment, any other display device may be used, such as a display device using electroluminescence (EL). Further, a display device having a desired resolution may be used as the lower LCD 12.

The touch panel 13 is one of the input devices of the game apparatus 10. The touch panel 13 is mounted so as to cover the screen of the lower LCD 12. In the first embodiment, the touch panel 13 may be, but is not limited to, a resistive touch panel. The touch panel may also be a touch panel of any pressure type, such as an electrostatic capacitance type. In the first embodiment, the touch panel 13 has the same resolution (detection accuracy) as that of the lower LCD 12. The resolutions of the touch panel 13 and the lower LCD 12, however, need not be the same.

The operation buttons 14A through 14L are each an input device for providing a predetermined input. Among the operation buttons 14A through 14L, the cross button 14A (direction input button 14A), the button 14B, the button 14C, the button 14D, the button 14E, the power button 14F, the select button 14J, the home button 14K, and the start button 14L are provided on the inner surface (main surface) of the lower housing 11.

The cross button 14A is cross-shaped, and includes buttons for indicating at least up, down, left, and right directions, respectively. The cross button 14A is provided in a lower area of the area to the left of the lower LCD 12. The cross button 14A is placed so as to be operated by the thumb of a left hand holding the lower housing 11.

The button 14B, the button 14C, the button 14D, and the button 14E are placed in a cross formation in an upper portion of the area to the right of the lower LCD 12. The button 14B, the button 14C, the button 14D, and the button 14E are placed where the thumb of a right hand holding the lower housing 11 is naturally placed. The power button 14F is placed in a lower portion of the area to the right of the lower LCD 12.

The select button 14J, the home button 14K, and the start button 14L are provided in a lower area of the lower LCD 12. The buttons 14A through 14E, the select button 14J, the home button 14K, and the start button 14L are appropriately assigned functions, respectively, in accordance with the program executed by the game apparatus 10. The cross button 14A is used, for example, for a selection operation and a moving operation of a character during a game. The operation buttons 14B through 14E are used, for example, for a determination operation or a cancellation operation. The power button 14F can be used to power on/off the game apparatus 10. In another embodiment, the power button 14F can be used to indicate to the game apparatus 10 that it should enter a “sleep mode” for power saving purposes.

The analog stick 15 is a device for indicating a direction. The analog stick 15 is provided to an upper portion of the area to the left of the lower LCD 12 of the inner surface (main surface) of the lower housing 11. That is, the analog stick 15 is provided above the cross button 14A. The analog stick 15 is placed so as to be operated by the thumb of a left hand holding the lower housing 11. The provision of the analog stick 15 in the upper area places the analog stick 15 at the position where the thumb of the left hand of the user holding the lower housing 11 is naturally placed. The cross button 14A is placed at the position where the thumb of the left hand holding the lower housing 11 is moved slightly downward. This enables the user to operate the analog stick 15 and the cross button 14A by moving up and down the thumb of the left hand holding the lower housing 11. The key top of the analog stick 15 is configured to slide parallel to the inner surface of the lower housing 11. The analog stick 15 functions in accordance with the program executed by the game apparatus 10. When, for example, the game apparatus 10 executes a game where a predetermined object appears in a three-dimensional virtual space, the analog stick 15 functions as an input device for moving the predetermined object in the three-dimensional virtual space. In this case, the predetermined object is moved in the direction in which the key top of the analog stick 15 has slid. It should be noted that the analog stick 15 may be a component capable of providing an analog input by being tilted by a predetermined amount in any one of up, down, right, left, and diagonal directions.

It should be noted that the four buttons, namely the button 14B, the button 14C, the button 14D, and the button 14E, and the analog stick 15 are placed symmetrically to each other with respect to the lower LCD 12. This also enables, for example, a left-handed person to provide a direction indication input using these four buttons, namely the button 14B, the button 14C, the button 14D, and the button 14E, depending on the game program.

The first LED 16A (FIG. 1) notifies the user of the on/off state of the power supply of the game apparatus 10. The first LED 16A is provided on the right of an end portion shared by the inner surface (main surface) of the lower housing 11 and the lower side surface of the lower housing 11. This enables the user to see whether or not the first LED 16A is lit, regardless of the open/closed state of the game apparatus 10. The fourth LED 16D (FIG. 1) notifies the user that the game apparatus 10 is recharging and is located near the first LED 16A.

The microphone hole 18 is a hole for a microphone built into the game apparatus 10 as a sound input device. The built-in microphone detects a sound from outside the game apparatus 10 through the microphone hole 18. The microphone and the microphone hole 18 are provided below the power button 14F on the inner surface (main surface) of the lower housing 11.

The upper side surface of the lower housing 11 includes an opening 17 (a dashed line shown in FIGS. 1 and 3D) for a stylus 28. The opening 17 can accommodate the stylus 28 that is used to perform an operation on the touch panel 13. It should be noted that, normally, an input is provided to the touch panel 13 using the stylus 28. The touch panel 13, however, can be operated not only by the stylus 28 but also by a finger of the user.

The upper side surface of the lower housing 11 includes an insertion slot 11D (a dashed line shown in FIGS. 1 and 3D), into which an external memory 45 having a game program stored thereon is to be inserted. Within the insertion slot 11D, a connector (not shown) is provided for electrically connecting the game apparatus 10 and the external memory 45 in a detachable manner. The connection of the external memory 45 to the game apparatus 10 causes a processor included in internal circuitry to execute a predetermined game program. It should be noted that the connector and the insertion slot 11D may be provided on another side surface (e.g., the right side surface) of the lower housing 11.

The inner surface 21B of the upper housing 21 shown in FIG. 1 includes loudspeaker holes 21E, an upper LCD 22, an inner capturing section 24, a 3D adjustment switch 25, and a 3D indicator 26. The inner capturing section 24 is an example of a first capturing device.

The upper LCD 22 is a display device capable of displaying a stereoscopically visible image. The upper LCD 22 is capable of displaying a left-eye image and a right-eye image, using substantially the same display area. Specifically, the upper LCD 22 is a display device using a method in which the left-eye image and the right-eye image are displayed alternately in the horizontal direction in predetermined units (e.g., in every other line). It should be noted that the upper LCD 22 may be a display device using a method in which the left-eye image and the right-eye image are displayed alternately for a predetermined time. Further, the upper LCD 22 is a display device capable of displaying an image stereoscopically visible with the naked eye. In this case, a lenticular type display device or a parallax barrier type display device is used so that the left-eye image and the right-eye image that are displayed alternately in the horizontal direction can be viewed separately with the left eye and the right eye, respectively. In the first embodiment, the upper LCD 22 is a parallax-barrier-type display device. The upper LCD 22 displays an image stereoscopically visible with the naked eye (a stereoscopic image), using the right-eye image and the left-eye image. That is, the upper LCD 22 allows the user to view the left-eye image with their left eye, and the right-eye image with their right eye, using the parallax barrier. This makes it possible to display a stereoscopic image giving the user a stereoscopic effect (stereoscopically visible image). Furthermore, the upper LCD 22 is capable of disabling the parallax barrier. When disabling the parallax barrier, the upper LCD 22 is capable of displaying an image in a planar manner (that is, a planar view image, as opposed to the stereoscopically visible image described above; this is a display mode in which the same displayed image can be viewed with both the left and right eyes).
Thus, the upper LCD 22 is a display device capable of switching between: the stereoscopic display mode for displaying a stereoscopically visible image; and the planar display mode for displaying an image in a planar manner (displaying a planar view image). The switching of the display modes is performed by the 3D adjustment switch 25 described later.
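As a rough illustration of displaying the left-eye image and the right-eye image alternately in the horizontal direction, the following sketch interleaves two equally sized images column by column. It is only a sketch under stated assumptions: the function name `interleave_columns`, the representation of an image as a list of pixel rows, the one-column unit width, and the choice of which eye receives the even columns are all hypothetical and are not taken from the specification.

```python
def interleave_columns(left, right):
    """Build a frame whose columns alternate between the two input images.

    `left` and `right` are lists of rows; each row is a list of pixel values.
    Assumption: even output columns carry left-eye pixels and odd output
    columns carry right-eye pixels.
    """
    same_height = len(left) == len(right)
    same_widths = all(len(l) == len(r) for l, r in zip(left, right))
    if not (same_height and same_widths):
        raise ValueError("left and right images must have the same size")

    frame = []
    for left_row, right_row in zip(left, right):
        row = []
        for left_px, right_px in zip(left_row, right_row):
            # One left-eye pixel followed by one right-eye pixel.
            row.extend((left_px, right_px))
        frame.append(row)
    return frame


# Usage: two 2x1 images produce one 4x1 interleaved frame.
frame = interleave_columns([["L0", "L1"]], [["R0", "R1"]])
```

Passing the same image for both arguments gives a frame in which both eyes see identical content, which corresponds to a planar presentation.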

The upper LCD 22 is accommodated in the upper housing 21. A planar shape of the upper LCD 22 is a wider-than-high rectangle, and is placed at the center of the upper housing 21 such that the long side direction of the upper LCD 22 coincides with the long side direction of the upper housing 21. As an example, the area of the screen of the upper LCD 22 is set greater than that of the lower LCD 12. Specifically, the screen of the upper LCD 22 is set horizontally longer than the screen of the lower LCD 12. That is, the proportion of the width in the aspect ratio of the screen of the upper LCD 22 is set greater than that of the lower LCD 12. The screen of the upper LCD 22 is provided on the inner surface (main surface) 21B of the upper housing 21, and is exposed through an opening of the inner surface of the upper housing 21. Further, the inner surface of the upper housing 21 is covered by a transparent screen cover 27. The screen cover 27 protects the screen of the upper LCD 22, and integrates the upper LCD 22 and the inner surface of the upper housing 21, and thereby provides unity. As an example, the number of pixels of the upper LCD 22 is 800 dots × 240 dots (horizontal × vertical). It should be noted that an LCD is used as the upper LCD 22 in the first embodiment. The upper LCD 22, however, is not limited to this, and a display device using EL or the like may be used. Furthermore, a display device having any resolution may be used as the upper LCD 22.

The loudspeaker holes 21E are holes through which sounds from loudspeakers 44, described later, which serve as a sound output device of the game apparatus 10, are output. The loudspeaker holes 21E are placed symmetrically with respect to the upper LCD 22.

The inner capturing section 24 functions as a capturing section having an imaging direction that is the same as the inward normal direction of the inner surface 21B of the upper housing 21. The inner capturing section 24 includes an imaging device having a predetermined resolution, and a lens. The lens may have a zoom mechanism.

The inner capturing section 24 is placed: on the inner surface 21B of the upper housing 21; above the upper edge of the screen of the upper LCD 22; and in the center of the upper housing 21 in the left-right direction (on the line dividing the upper housing 21 (the screen of the upper LCD 22) into two equal left and right portions). Such a placement of the inner capturing section 24 makes it possible that when the user views the upper LCD 22 from the front thereof, the inner capturing section 24 captures the user's face from the front thereof. A left outer capturing section 23a and a right outer capturing section 23b will be described later.

The 3D adjustment switch 25 is a slide switch, and is used to switch the display modes of the upper LCD 22 as described above. The 3D adjustment switch 25 is also used to adjust the stereoscopic effect of a stereoscopically visible image (stereoscopic image) displayed on the upper LCD 22. The 3D adjustment switch 25 is provided at an end portion shared by the inner surface and the right side surface of the upper housing 21, so as to be visible to the user, regardless of the open/closed state of the game apparatus 10. The 3D adjustment switch 25 includes a slider that is slidable to any position in a predetermined direction (e.g., the up-down direction), and the display mode of the upper LCD 22 is set in accordance with the position of the slider.

When, for example, the slider of the 3D adjustment switch 25 is placed at the lowermost position, the upper LCD 22 is set to the planar display mode, and a planar image is displayed on the screen of the upper LCD 22. It should be noted that the same image may be used as the left-eye image and the right-eye image, while the upper LCD 22 remains set to the stereoscopic display mode, and thereby performs planar display. On the other hand, when the slider is placed above the lowermost position, the upper LCD 22 is set to the stereoscopic display mode. In this case, a stereoscopically visible image is displayed on the screen of the upper LCD 22. When the slider is placed above the lowermost position, the visibility of the stereoscopic image is adjusted in accordance with the position of the slider. Specifically, the amount of deviation in the horizontal direction between the position of the right-eye image and the position of the left-eye image is adjusted in accordance with the position of the slider.
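The slider behavior described above can be sketched as a small mapping from slider position to display mode and horizontal deviation. The function name `display_settings`, the normalized slider range of 0.0 (lowermost) to 1.0 (uppermost), the linear relationship, and the default `max_offset_px` value are all assumptions made for illustration; the specification states only that the deviation is adjusted in accordance with the slider position.

```python
def display_settings(slider: float, max_offset_px: float = 10.0):
    """Return (mode, horizontal_offset_px) for a normalized slider position.

    Assumptions: 0.0 is the lowermost position, 1.0 is the uppermost
    position, and the offset grows linearly with the slider position.
    """
    if not 0.0 <= slider <= 1.0:
        raise ValueError("slider must be between 0.0 and 1.0")
    if slider == 0.0:
        # Lowermost position: planar display, no left/right deviation.
        return ("planar", 0.0)
    # Any position above the lowermost one selects stereoscopic display.
    return ("stereoscopic", slider * max_offset_px)
```

A non-linear curve would fit the description equally well; the linear mapping is chosen here only because it is the simplest one to read.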

The 3D indicator 26 indicates whether or not the upper LCD 22 is in the stereoscopic display mode. For example, the 3D indicator 26 is an LED, and is lit when the stereoscopic display mode of the upper LCD 22 is enabled. The 3D indicator 26 is placed on the inner surface 21B of the upper housing 21 near the screen of the upper LCD 22. Accordingly, when the user views the screen of the upper LCD 22 from the front thereof, the user can easily view the 3D indicator 26. This enables the user to easily recognize the display mode of the upper LCD 22 even when viewing the screen of the upper LCD 22.

FIG. 2 is a right side view showing an example of the game apparatus 10 in the open state. The right side surface of the lower housing 11 includes a second LED 16B, a wireless switch 19, and the R button 14H. The second LED 16B notifies the user of the establishment state of the wireless communication of the game apparatus 10. The game apparatus 10 is capable of wirelessly communicating with other devices, and the second LED 16B is lit when wireless communication is established between the game apparatus 10 and other devices. The game apparatus 10 has the function of establishing connection with a wireless LAN by, for example, a method based on the IEEE 802.11b/g standard. The wireless switch 19 enables/disables the function of the wireless communication. The R button 14H will be described later.

FIG. 3A is a left side view showing an example of the game apparatus 10 being closed (in the closed state). The left side surface of the lower housing 11 shown in FIG. 3A includes an openable and closable cover section 11C, the L button 14G, and the sound volume button 14I. The sound volume button 14I is used to adjust the sound volume of the loudspeakers of the game apparatus 10.

Within the cover section 11C, a connector (not shown) is provided for electrically connecting the game apparatus 10 and a data storage external memory 46 (see FIG. 1). The data storage external memory 46 is detachably attached to the connector. The data storage external memory 46 is used to, for example, store (save) data of an image captured by the game apparatus 10. It should be noted that the connector and the cover section 11C may be provided on the right side surface of the lower housing 11. The L button 14G will be described later.

FIG. 3B is a front view showing an example of the game apparatus 10 in the closed state. The outer surface of the upper housing 21 shown in FIG. 3B includes a left outer capturing section 23a, a right outer capturing section 23b, and a third LED 16C.

The left outer capturing section 23a and the right outer capturing section 23b each include an imaging device (e.g., a CCD image sensor or a CMOS image sensor) having a predetermined common resolution, and a lens. The lens may have a zoom mechanism. The imaging directions of the left outer capturing section 23a and the right outer capturing section 23b (the optical axis of the camera) are each the same as the outward normal direction of the outer surface 21D. That is, the imaging direction of the left outer capturing section 23a and the imaging direction of the right outer capturing section 23b are parallel to each other. Hereinafter, the left outer capturing section 23a and the right outer capturing section 23b are collectively referred to as an “outer capturing section 23”. The outer capturing section 23 is an example of a second capturing device.

The left outer capturing section 23a and the right outer capturing section 23b included in the outer capturing section 23 are placed along the horizontal direction of the screen of the upper LCD 22. That is, the left outer capturing section 23a and the right outer capturing section 23b are placed such that a straight line connecting between the left outer capturing section 23a and the right outer capturing section 23b is placed along the horizontal direction of the screen of the upper LCD 22. When the user has pivoted the upper housing 21 at a predetermined angle (e.g., 90°) relative to the lower housing 11, and views the screen of the upper LCD 22 from the front thereof, the left outer capturing section 23a is placed on the left side of the user viewing the screen, and the right outer capturing section 23b is placed on the right side of the user (see FIG. 1). The distance between the left outer capturing section 23a and the right outer capturing section 23b is set to correspond to the distance between both eyes of a person, and may be set, for example, in the range from 30 mm to 70 mm. It should be noted, however, that the distance between the left outer capturing section 23a and the right outer capturing section 23b is not limited to this range. It should be noted that in the first embodiment, the left outer capturing section 23a and the right outer capturing section 23b are fixed to the housing 21, and therefore, the imaging directions cannot be changed.

The left outer capturing section 23a and the right outer capturing section 23b are placed symmetrically with respect to the line dividing the upper LCD 22 (the upper housing 21) into two equal left and right portions. Further, the left outer capturing section 23a and the right outer capturing section 23b are placed in the upper portion of the upper housing 21 and in the back of the portion above the upper edge of the screen of the upper LCD 22, in the state where the upper housing 21 is in the open state (see FIG. 1). That is, the left outer capturing section 23a and the right outer capturing section 23b are placed on the outer surface of the upper housing 21, and, if the upper LCD 22 is projected onto the outer surface of the upper housing 21, are placed above the upper edge of the screen of the projected upper LCD 22.

Thus, the left outer capturing section 23a and the right outer capturing section 23b of the outer capturing section 23 are placed symmetrically with respect to the center line of the upper LCD 22 extending in the transverse direction. This makes it possible that when the user views the upper LCD 22 from the front thereof, the imaging directions of the outer capturing section 23 coincide with the directions of the respective lines of sight of the user's right and left eyes. Further, the outer capturing section 23 is placed in the back of the portion above the upper edge of the screen of the upper LCD 22, and therefore, the outer capturing section 23 and the upper LCD 22 do not interfere with each other inside the upper housing 21. Further, when the inner capturing section 24 provided on the inner surface of the upper housing 21 as shown by a dashed line in FIG. 3B is projected onto the outer surface of the upper housing 21, the left outer capturing section 23a and the right outer capturing section 23b are placed symmetrically with respect to the projected inner capturing section 24. This makes it possible to reduce the upper housing 21 in thickness as compared to the case where the outer capturing section 23 is placed in the back of the screen of the upper LCD 22, or the case where the outer capturing section 23 is placed in the back of the inner capturing section 24.

The left outer capturing section 23a and the right outer capturing section 23b can be used as a stereo camera, depending on the program executed by the game apparatus 10. Alternatively, either one of the two outer capturing sections (the left outer capturing section 23a and the right outer capturing section 23b) may be used alone, so that the outer capturing section 23 can also be used as a non-stereo camera, depending on the program. When a program is executed for causing the left outer capturing section 23a and the right outer capturing section 23b to function as a stereo camera, the left outer capturing section 23a captures a left-eye image, which is to be viewed with the user's left eye, and the right outer capturing section 23b captures a right-eye image, which is to be viewed with the user's right eye. Yet alternatively, depending on the program, images captured by the two outer capturing sections (the left outer capturing section 23a and the right outer capturing section 23b) may be combined together, or may be used to compensate for each other, so that imaging can be performed with an extended imaging range. Yet alternatively, a left-eye image and a right-eye image that have a parallax may be generated from a single image captured using one of the outer capturing sections 23a and 23b, and a pseudo-stereo image as if captured by two cameras can be generated. To generate the pseudo-stereo image, it is possible to appropriately set the distance between virtual cameras.

The third LED 16c is lit while the outer capturing section 23 is operating, and thereby indicates that the outer capturing section 23 is in operation. The third LED 16c is provided near the outer capturing section 23 on the outer surface of the upper housing 21.

FIG. 3C is a right side view showing an example of the game apparatus 10 in the closed state. FIG. 3D is a rear view showing an example of the game apparatus 10 in the closed state.

The L button 14G and the R button 14H are provided on the upper side surface of the lower housing 11 shown in FIG. 3D. The L button 14G is provided at the left end portion of the upper side surface of the lower housing 11, and the R button 14H is provided at the right end portion of the upper side surface of the lower housing 11. The L button 14G and the R button 14H are appropriately assigned functions, respectively, in accordance with the program executed by the game apparatus 10. For example, the L button 14G and the R button 14H function as shutter buttons (capturing instruction buttons) of the capturing sections described above.

It should be noted that although not shown in the figures, a rechargeable battery that serves as the power supply of the game apparatus 10 is accommodated in the lower housing 11, and the battery can be charged through a terminal provided on the side surface (e.g., the upper side surface) of the lower housing 11.

FIG. 4 is a block diagram showing an example of the internal configuration of the game apparatus 10. The game apparatus 10 includes, as well as the components described above, electronic components, such as an information processing section 31, a main memory 32, an external memory interface (external memory I/F) 33, a data storage external memory I/F 34, a data storage internal memory 35, a wireless communication module 36, a local communication module 37, a real-time clock (RTC) 38, an acceleration sensor 39, an angular velocity sensor 40, a power circuit 41, and an interface circuit (I/F circuit) 42. These electronic components are mounted on electronic circuit boards, and are accommodated in the lower housing 11, or may be accommodated in the upper housing 21.

The information processing section 31 is information processing means including a central processing unit (CPU) 311 that executes a predetermined program, a graphics processing unit (GPU) 312 that performs image processing, and the like. In the first embodiment, a predetermined program is stored in a memory (e.g., the external memory 45 connected to the external memory I/F 33, or the data storage internal memory 35) included in the game apparatus 10. The CPU 311 of the information processing section 31 executes the predetermined program, and thereby performs the image processing described later or game processing. It should be noted that the program executed by the CPU 311 of the information processing section 31 may be acquired from another device by communication with said another device. The information processing section 31 further includes a video RAM (VRAM) 313. The GPU 312 of the information processing section 31 generates an image in accordance with an instruction from the CPU 311 of the information processing section 31, and draws the image in the VRAM 313. The GPU 312 of the information processing section 31 outputs the image drawn in the VRAM 313 to the upper LCD 22 and/or the lower LCD 12, and the image is displayed on the upper LCD 22 and/or the lower LCD 12.

To the information processing section 31, the main memory 32, the external memory I/F 33, the data storage external memory I/F 34, and the data storage internal memory 35 are connected. The external memory I/F 33 is an interface for establishing a detachable connection with the external memory 45. The data storage external memory I/F 34 is an interface for establishing a detachable connection with the data storage external memory 46.

The main memory 32 is volatile storage means used as a work area or a buffer area of the information processing section 31 (the CPU 311). That is, the main memory 32 temporarily stores various types of data used for image processing or game processing, and also temporarily stores a program acquired from outside the game apparatus 10 (the external memory 45, another device, or the like). In the first embodiment, the main memory 32 is, for example, a pseudo SRAM (PSRAM).

The external memory 45 is nonvolatile storage means for storing the program executed by the information processing section 31. The external memory 45 is composed of, for example, a read-only semiconductor memory. When the external memory 45 is connected to the external memory I/F 33, the information processing section 31 can load a program stored in the external memory 45. In accordance with the execution of the program loaded by the information processing section 31, a predetermined process is performed. The data storage external memory 46 is composed of a readable/writable non-volatile memory (e.g., a NAND flash memory), and is used to store predetermined data. For example, the data storage external memory 46 stores images captured by the outer capturing section 23 and/or images captured by another device. When the data storage external memory 46 is connected to the data storage external memory I/F 34, the information processing section 31 loads an image stored in the data storage external memory 46, and the image can be displayed on the upper LCD 22 and/or the lower LCD 12.

The data storage internal memory 35 is composed of a readable/writable non-volatile memory (e.g., a NAND flash memory), and is used to store predetermined data. For example, the data storage internal memory 35 stores data and/or programs downloaded by wireless communication through the wireless communication module 36.

The wireless communication module 36 has the function of establishing connection with a wireless LAN by, for example, a method based on the IEEE 802.11b/g standard. Further, the local communication module 37 has the function of wirelessly communicating with another game apparatus of the same type by a predetermined communication method (e.g., infrared communication). The wireless communication module 36 and the local communication module 37 are connected to the information processing section 31. The information processing section 31 is capable of transmitting and receiving data to and from another device via the Internet, using the wireless communication module 36, and is capable of transmitting and receiving data to and from another game apparatus of the same type, using the local communication module 37.

The acceleration sensor 39 is connected to the information processing section 31. The acceleration sensor 39 can detect the magnitudes of accelerations (linear accelerations) in the directions of straight lines along three axial (x, y, and z axes in the present embodiment) directions, respectively. The acceleration sensor 39 is provided, for example, within the lower housing 11. As shown in FIG. 1, the long side direction of the lower housing 11 is defined as an x-axis direction; the short side direction of the lower housing 11 is defined as a y-axis direction; and the direction perpendicular to the inner surface (main surface) of the lower housing 11 is defined as a z-axis direction. The acceleration sensor 39 thus detects the magnitudes of the linear accelerations produced in the respective axial directions. It should be noted that the acceleration sensor 39 is, for example, an electrostatic capacitance type acceleration sensor, but may be an acceleration sensor of another type. Further, the acceleration sensor 39 may be an acceleration sensor for detecting an acceleration in one axial direction, or accelerations in two axial directions. The information processing section 31 receives data indicating the accelerations detected by the acceleration sensor 39 (acceleration data), and calculates the orientation and the motion of the game apparatus 10.
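The specification does not say how the orientation is calculated from the acceleration data. As a minimal sketch only, assuming the apparatus is at rest so that the reading is dominated by gravity, tilt angles can be estimated from the three axis values. The function name `tilt_from_acceleration` and the choice of pitch and roll conventions are hypothetical and not taken from the patent.

```python
import math


def tilt_from_acceleration(ax, ay, az):
    """Estimate tilt angles (degrees) from one static 3-axis reading.

    Assumption (not from the patent): the device is not accelerating,
    so (ax, ay, az) is just the gravity vector expressed in the
    housing's x (long side), y (short side), z (normal) axes.
    """
    # Angle of the y axis relative to the plane perpendicular to gravity.
    pitch = math.degrees(math.atan2(ay, math.hypot(ax, az)))
    # Angle of the x axis relative to the plane perpendicular to gravity.
    roll = math.degrees(math.atan2(ax, math.hypot(ay, az)))
    return pitch, roll


# Gravity along z only: the housing is lying flat, so both angles are zero.
print(tilt_from_acceleration(0.0, 0.0, 1.0))
```

A static estimate like this cannot separate gravity from hand motion, which is one reason a device may also carry an angular velocity sensor.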

The angular velocity sensor 40 is connected to the information processing section 31. The angular velocity sensor 40 detects angular velocities generated about three axes (x, y, and z axes in the present embodiment) of the game apparatus 10, respectively, and outputs data indicating the detected angular velocities (angular velocity data) to the information processing section 31. The angular velocity sensor 40 is provided, for example, within the lower housing 11. The information processing section 31 receives the angular velocity data output from the angular velocity sensor 40, and calculates the orientation and the motion of the game apparatus 10.
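How the angular velocity data is turned into an orientation is likewise left open. One common approach, shown here purely as an illustrative sketch for a single axis, is to accumulate rate multiplied by sample interval. The function name, the fixed sample interval, and the single-axis simplification are all assumptions.

```python
def integrate_angular_velocity(rates_deg_per_s, dt_s, start_deg=0.0):
    """Accumulate single-axis angular velocity samples into angles.

    Assumptions (not from the patent): samples arrive at a fixed
    interval dt_s, and rotation about the other two axes is ignored.
    Returns the angle after each sample, in degrees.
    """
    angle = start_deg
    history = []
    for rate in rates_deg_per_s:
        angle += rate * dt_s  # simple rectangular (Euler) integration
        history.append(angle)
    return history


# Two samples of 90 deg/s, each lasting 0.5 s.
print(integrate_angular_velocity([90.0, 90.0], 0.5))
```

Plain integration drifts over time, so a practical system would typically correct it with another reference such as the acceleration data.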

The RTC 38 and the power circuit 41 are connected to the information processing section 31. The RTC 38 counts time, and outputs the counted time to the information processing section 31. The information processing section 31 calculates the current time (date) based on the time counted by the RTC 38. The power circuit 41 controls the power from the power supply (the rechargeable battery accommodated in the lower housing 11, which is described above) of the game apparatus 10, and supplies power to each component of the game apparatus 10.

The I/F circuit 42 is connected to the information processing section 31. A microphone 43, a loudspeaker 44, and the touch panel 13 are connected to the I/F circuit 42. Specifically, the loudspeaker 44 is connected to the I/F circuit 42 through an amplifier not shown in the figures. The microphone 43 detects a sound from the user, and outputs a sound signal to the I/F circuit 42. The amplifier amplifies the sound signal from the I/F circuit 42, and outputs the sound from the loudspeaker 44. The I/F circuit 42 includes: a sound control circuit that controls the microphone 43 and the loudspeaker 44 (amplifier); and a touch panel control circuit that controls the touch panel 13. For example, the sound control circuit performs A/D conversion and D/A conversion on the sound signal, and converts the sound signal to sound data in a predetermined format. The touch panel control circuit generates touch position data in a predetermined format, based on a signal from the touch panel 13, and outputs the touch position data to the information processing section 31. The touch position data indicates the coordinates of the position (touch position), on the input surface of the touch panel 13, at which an input has been provided. It should be noted that the touch panel control circuit reads a signal from the touch panel 13, and generates the touch position data, once in a predetermined time. The information processing section 31 acquires the touch position data, and thereby recognizes the touch position, at which the input has been provided on the touch panel 13.

An operation button 14 includes the operation buttons 14A through 14L described above, and is connected to the information processing section 31. Operation data is output from the operation button 14 to the information processing section 31, the operation data indicating the states of inputs provided to the respective operation buttons 14A through 14I (indicating whether or not the operation buttons 14A through 14I have been pressed). The information processing section 31 acquires the operation data from the operation button 14, and thereby performs processes in accordance with the inputs provided to the operation button 14.

The lower LCD 12 and the upper LCD 22 are connected to the information processing section 31. The lower LCD 12 and the upper LCD 22 each display an image in accordance with an instruction from the information processing section 31 (the GPU 312). In the first embodiment, the information processing section 31 causes the lower LCD 12 to display an image for a hand-drawn image input operation, and causes the upper LCD 22 to display an image acquired from either one of the outer capturing section 23 and the inner capturing section 24. That is, for example, the information processing section 31 causes the upper LCD 22 to display a stereoscopic image (stereoscopically visible image) using a right-eye image and a left-eye image that are captured by the outer capturing section 23, or causes the upper LCD 22 to display a planar image using one of a right-eye image and a left-eye image that are captured by the outer capturing section 23.

Specifically, the information processing section 31 is connected to an LCD controller (not shown) of the upper LCD 22, and causes the LCD controller to set the parallax barrier to on/off. When the parallax barrier is on in the upper LCD 22, a right-eye image and a left-eye image that are stored in the VRAM 313 of the information processing section 31 (that are captured by the outer capturing section 23) are output to the upper LCD 22. More specifically, the LCD controller repeatedly alternates the reading of pixel data of the right-eye image for one line in the vertical direction, and the reading of pixel data of the left-eye image for one line in the vertical direction, and thereby reads the right-eye image and the left-eye image from the VRAM 313. Thus, the right-eye image and the left-eye image are each divided into strip images, each of which has one line of pixels placed in the vertical direction, and an image including the divided left-eye strip images and the divided right-eye strip images alternately placed is displayed on the screen of the upper LCD 22. The user views the images through the parallax barrier of the upper LCD 22, whereby the right-eye image is viewed with the user's right eye, and the left-eye image is viewed with the user's left eye. This causes the stereoscopically visible image to be displayed on the screen of the upper LCD 22.
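The strip interleaving described above can be illustrated with a short sketch. It assumes the two images are equal-sized lists of pixel rows; which eye's strips occupy the even columns is an assumption, and `interleave_columns` is a hypothetical name rather than anything defined in the patent.

```python
def interleave_columns(left_image, right_image):
    """Build a parallax-barrier frame from two equal-sized images.

    Each image is a list of rows, each row a list of pixel values.
    Assumption (not from the patent): even-numbered columns are taken
    from the left-eye image and odd-numbered columns from the right-eye
    image; a real panel could use the opposite order.
    """
    if len(left_image) != len(right_image):
        raise ValueError("images must have the same number of rows")
    frame = []
    for left_row, right_row in zip(left_image, right_image):
        if len(left_row) != len(right_row):
            raise ValueError("rows must have the same width")
        # Pick one vertical strip (a single pixel per row) from each eye in turn.
        frame.append([
            left_row[x] if x % 2 == 0 else right_row[x]
            for x in range(len(left_row))
        ])
    return frame


left = [["L0", "L1", "L2", "L3"]]
right = [["R0", "R1", "R2", "R3"]]
print(interleave_columns(left, right))  # [['L0', 'R1', 'L2', 'R3']]
```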

The outer capturing section 23 and the inner capturing section 24 are connected to the information processing section 31. The outer capturing section 23 and the inner capturing section 24 each capture an image in accordance with an instruction from the information processing section 31, and output data of the captured image to the information processing section 31. In the first embodiment, the information processing section 31 gives either one of the outer capturing section 23 and the inner capturing section 24 an instruction to capture an image, and the capturing section that has received the instruction captures an image, and transmits data of the captured image to the information processing section 31. Specifically, the user selects the capturing section to be used, through an operation using the touch panel 13 and the operation button 14. The information processing section 31 (the CPU 311) detects that a capturing section has been selected, and the information processing section 31 gives the selected one of the outer capturing section 23 and the inner capturing section 24 an instruction to capture an image.

When started by an instruction from the information processing section 31 (CPU 311), the outer capturing section 23 and the inner capturing section 24 perform capturing at, for example, a speed of 60 images per second. The images captured by the outer capturing section 23 and the inner capturing section 24 are sequentially transmitted to the information processing section 31, and displayed on the upper LCD 22 or the lower LCD 12 by the information processing section 31 (GPU 312). When output to the information processing section 31, the captured images are stored in the VRAM 313, are output to the upper LCD 22 or the lower LCD 12, and are deleted at predetermined times. Thus, images are captured at, for example, a speed of 60 images per second, and the captured images are displayed, whereby the game apparatus 10 can display views in the imaging ranges of the outer capturing section 23 and the inner capturing section 24, on the upper LCD 22 or the lower LCD 12 in real time.

The 3D adjustment switch 25 is connected to the information processing section 31. The 3D adjustment switch 25 transmits to the information processing section 31 an electrical signal in accordance with the position of the slider.

The 3D indicator 26 is connected to the information processing section 31. The information processing section 31 controls whether or not the 3D indicator 26 is to be lit. When, for example, the upper LCD 22 is in the stereoscopic display mode, the information processing section 31 lights the 3D indicator 26.

FIG. 5 shows an example of an application flowchart for the virtual camera repositioning system. The system begins in S1 where the virtual camera is positioned in a first, or default state. The default state may include: (i) a default position of the virtual camera and (ii) a default direction (i.e., viewing angle or orientation) of the virtual camera. For example, the virtual camera may remain at substantially “eye-level” (i.e., doll-house view) while the player character is walking in the third-person view. An image is generated and displayed (see FIGS. 6, 8A, and 9A) with the virtual camera positioned in the default state.

Once the virtual camera is positioned in a first (default) position and has the first (default) direction, the system proceeds to S2 where it determines the game state of the character. In a default mode, the game state of the character, for example, could be a walking or standing mode. A change in the game state of the player character, for example, would be from walking to running. If the game state of the player character does not change, the system maintains the camera in the first, or default position, and repeats S2 until the game state changes.

If the game state of the player character changes (e.g., walking to running or standing to running), the system proceeds to S4 where the virtual camera begins moving to a second position. As explained above, the second position of the virtual camera is preferably in a higher position than the first position and the direction of the virtual camera is preferably more downwardly pitched (compare virtual camera VC in FIGS. 6 and 7) so that the player character's footing is made more easily viewable while the player character is running in a third-person view. The position of the virtual camera is raised and the direction (i.e., viewing angle or orientation) is more downwardly pitched simultaneously. As an example, the position may be raised by 10% and the direction may be pitched downwardly by 4° to 8°.

It should be appreciated that the game state may change by a player pressing a button alone. That is, the player character does not actually have to appear as “running”; rather, the game state changes, upon some input or indication by the game, so that the player character should be made to “run” if it is currently “walking.” So for example, through the combination of pressing a button and moving the player character (i.e., via a cross-switch), the player character will begin running in a particular direction. However, the button alone may indicate that the player character is entering the “running” state, and the game state may change even though the player has not pressed the cross-switch. That is, the game state may change to indicate that the player character should be made to run if currently walking, even though the player character is not running in any particular direction because the player has not yet used the cross-switch. As such, the virtual camera will begin moving even though the player character does not appear to be “running” yet on the display.
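The distinction drawn here, between the game state and what the player character visibly does, can be sketched as follows. This is an illustrative reading only: `GameState`, `resolve_game_state`, and the representation of cross-switch input as `None` or a direction string are hypothetical names and choices, not part of the patent.

```python
from enum import Enum


class GameState(Enum):
    DEFAULT = "walking_or_standing"
    RUNNING = "running"


def resolve_game_state(run_button_pressed, cross_switch_direction):
    """Return (game_state, character_is_moving) for one frame of input.

    Assumption (not from the patent): cross_switch_direction is None
    when the cross-switch is untouched, otherwise a direction label.
    The game state depends on the button alone; the cross-switch only
    decides whether the character is actually moving.
    """
    state = GameState.RUNNING if run_button_pressed else GameState.DEFAULT
    character_is_moving = cross_switch_direction is not None
    return state, character_is_moving


# Button held, cross-switch untouched: the state is already RUNNING,
# so the camera may start moving although the character stands still.
print(resolve_game_state(True, None))
```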

Once the camera is positioned in the second position, the system proceeds to S5 where it holds the virtual camera in the second position while the player character is running. As mentioned above, the virtual camera views the player character running in the third-person view in such a way that the player character's footing is more easily seen. Once again, the virtual camera will move upon the indication that the player character is changing state, so even if the player character is not yet “running” (i.e., the user has not pressed the cross-switch), the virtual camera will still move based on the indication that the game state is changing. At least one other image is generated and displayed (see FIGS. 7, 8B, and 9B) with the virtual camera having the changed position and direction.

In S6, the system determines if the player character is going to return to its first, or default state (i.e., stop running). If the player character is not returning to its first state (i.e., keeps running), the system returns to S5 where it maintains the virtual camera's position in the second position and second direction.

If the player character returns to its default state, the system proceeds to S8 where the virtual camera begins moving back to the first, or default position. For example, the virtual camera will lower back to a position that is substantially “eye-level” with a third-person view of the player character.
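The S1 through S8 flow can be condensed into a small per-frame controller. The following is a minimal sketch under stated assumptions, not the patented implementation: the 10% raise and a 6° downward pitch come from the example ranges in the text, while the default height, the 30-frame transition, the linear blend, and the `CameraController` name are hypothetical.

```python
from dataclasses import dataclass

DEFAULT_HEIGHT = 1.0     # assumed units for the first (default) position
RAISE_FACTOR = 1.10      # "raised by 10%" example from the text
PITCH_DOWN_DEG = 6.0     # chosen inside the 4 to 8 degree example range
TRANSITION_FRAMES = 30   # assumption: how long S4 and S8 take


@dataclass
class CameraController:
    blend: float = 0.0   # 0.0 = first pose (S1), 1.0 = second pose (S5)

    def update(self, running):
        """Advance one frame; return (height, pitch_deg) of the camera."""
        step = 1.0 / TRANSITION_FRAMES
        if running:
            # S4: move toward the second pose, then S5: hold it.
            self.blend = min(1.0, self.blend + step)
        else:
            # S8: move back, then S1/S2: stay in the default pose.
            self.blend = max(0.0, self.blend - step)
        height = DEFAULT_HEIGHT * (1.0 + (RAISE_FACTOR - 1.0) * self.blend)
        pitch_deg = -PITCH_DOWN_DEG * self.blend  # negative = looking down
        return height, pitch_deg


camera = CameraController()
for _ in range(40):          # character runs for 40 frames
    pose = camera.update(running=True)
print(pose)                  # raised and pitched down (second pose)
for _ in range(40):          # character stops running
    pose = camera.update(running=False)
print(pose)                  # back at the default pose
```

Raising the height and pitching the direction from the same blend value keeps the two changes simultaneous, as the description of S4 requires.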

FIG. 6 shows a diagram of an example where the game apparatus 10 is operating in a first, or default state. In this example, the player character PC is walking (or standing) in a hallway where an object OBJ depicted as a door is in the background. The virtual camera VC can be “eye level” with the player character PC in the third-person view in the default position. In this example, the player character PC holds a flashlight with the player character PC's body facing left.

FIG. 7 shows a diagram of an example where the game apparatus 10 is operating in a second state. In this example, the state of the player character PC has changed from walking to running. This change in state may result from, for example, user manipulation of a particular input (e.g., button, switch, or housing movement) which serves as an initiation instruction for the second state and for the change in position and direction of the virtual camera VC. As can be seen in FIG. 7, the virtual camera VC in this example moves upward so that it is higher than the default “eye level” or “doll house” view. At the same time, the visual direction of the virtual camera VC points downward. The object OBJ in the background is moved upward in the displayed image, as the virtual camera is now higher than “eye level” with the player character PC but facing downward. Angling the virtual camera downward allows the footing of the player character PC to be more easily viewable. This virtual camera angle also gives the player a more realistic sense of the player character PC running.
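The relationship between raising the camera and pointing it downward can be made concrete with a little trigonometry. This sketch assumes the camera keeps aiming at a fixed point on the player character; the function name and the parameters are illustrative assumptions, and the patent does not prescribe this formula.

```python
import math


def downward_pitch_deg(camera_height, target_height, horizontal_distance):
    """Pitch (degrees below horizontal) needed to aim at a target point.

    Assumption (not from the patent): the camera stays at a fixed
    horizontal distance from the player character and aims at one
    point on the character, such as the chest or the feet.
    """
    if horizontal_distance <= 0:
        raise ValueError("horizontal_distance must be positive")
    return math.degrees(
        math.atan2(camera_height - target_height, horizontal_distance)
    )


# Camera level with the target: no downward pitch is needed.
print(downward_pitch_deg(1.0, 1.0, 5.0))  # 0.0
```

With this relation, any rise of the camera above the aim point produces a matching downward pitch, which is consistent with the background door appearing higher in the frame.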

It should be appreciated that the virtual camera VC can be positioned so that the player character PC is always substantially in the center of the field of view of the virtual camera VC. The virtual camera VC may also move to the second position in a linear correspondence with the speed of the player character. For example, the virtual camera VC may move farther toward the second position as the player character PC increases its speed, and the pitch of the viewing direction of the virtual camera VC may be lowered as the player character increases its speed. The virtual camera VC may also move to the second position when the player character reaches a maximum speed.
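A "linear correspondence with the speed" can be read as linear interpolation between the first and second camera poses. The sketch below shows one such reading; the speed thresholds, the pose values, and the function name `camera_pose_for_speed` are assumptions chosen for illustration.

```python
def camera_pose_for_speed(speed, walk_speed, max_speed,
                          first_pose=(1.0, 0.0), second_pose=(1.1, -6.0)):
    """Interpolate (height, pitch_deg) linearly with character speed.

    Assumptions (not from the patent): the first pose applies at or
    below walk_speed, the second pose at or above max_speed, and each
    pose is a (height, pitch in degrees) pair with negative pitch
    meaning the camera looks downward.
    """
    if max_speed <= walk_speed:
        raise ValueError("max_speed must exceed walk_speed")
    t = (speed - walk_speed) / (max_speed - walk_speed)
    t = max(0.0, min(1.0, t))  # clamp outside the walk-to-max range
    height = first_pose[0] + (second_pose[0] - first_pose[0]) * t
    pitch_deg = first_pose[1] + (second_pose[1] - first_pose[1]) * t
    return height, pitch_deg


# Halfway between walking speed 2.0 and maximum speed 6.0.
print(camera_pose_for_speed(4.0, walk_speed=2.0, max_speed=6.0))
```

Under this reading the camera reaches the second position exactly when the character reaches maximum speed, which matches the last sentence of the paragraph above.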

FIGS. 8A-B show examples of an embodiment of the present system, which may be shown for example in either LCD 12 or 22. FIG. 8A shows a situation where the player character is walking. As can be seen in FIG. 8A, the virtual camera is relatively level with the player character and the player character is walking toward the display and down a hallway.

FIG. 8B shows a situation where the game apparatus changes game states such that the player character is now running. As can be seen in FIG. 8B, the camera moves upward so that the player character's footing is made more easily viewable. Consequently, the door in the background appears higher in the image, because the camera moves upward while changing its viewing angle to look downward.

FIGS. 9A-B show examples of an embodiment of the present system, which may be shown for example in either LCD 12 or 22. FIG. 9A shows a situation of the virtual camera viewing the player character from a side view, while the player character is walking. Like the situation shown in FIG. 8A, the virtual camera is relatively level with the player character.

FIG. 9B shows a situation where the game apparatus changes game states such that the player character is being viewed from the side and running. Like FIG. 8B, FIG. 9B shows the virtual camera moving upward yet pointing downward so that the player character's footing is more easily viewable. The door in the background consequently rises with the downward viewing of the virtual camera.

While the system has been described above as using a single virtual camera, the same system can be implemented in a system using two or more virtual cameras. For example, the system can be implemented in a 3-D viewing system that uses at least two virtual cameras.
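For a two-camera variant, one simple arrangement is to place a left and a right virtual camera on either side of the single camera position, so that both are raised and pitched together. This is only a sketch of that idea; the function name, the vector representation, and the separation value are hypothetical.

```python
def stereo_camera_positions(center, right_axis, separation):
    """Offset two virtual cameras sideways from one center position.

    Assumptions (not from the patent): center is the (x, y, z) position
    computed for the single camera, right_axis is a unit vector pointing
    to the camera's right, and separation is the distance between the
    two virtual cameras.
    """
    half = separation / 2.0
    left = tuple(c - half * r for c, r in zip(center, right_axis))
    right = tuple(c + half * r for c, r in zip(center, right_axis))
    return left, right


left_cam, right_cam = stereo_camera_positions(
    center=(0.0, 1.1, -5.0), right_axis=(1.0, 0.0, 0.0), separation=0.06
)
print(left_cam, right_cam)
```

Because both positions derive from the same center, the repositioning logic for the running state only needs to move the center and apply the same pitch to both cameras.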

While the system has been described in connection with what is presently considered to be the most practical and preferred embodiment, it is to be understood that the system is not to be limited to the disclosed embodiment, but on the contrary, is intended to cover various modifications and equivalent arrangements included within the spirit and scope of the appended claims.

Claims

- An information processing system, comprising: a processing system having at least one processor, the processing system configured to: position a virtual camera in an initial orientation while an object is moving at a first velocity, and change downwardly the angle of orientation of the virtual camera while moving the virtual camera above the object from the initial orientation when the object transitions from moving at the first velocity to moving at a second velocity greater than the first velocity.

- The system of claim 1 , wherein the virtual camera keeps the object in a substantially center position of a viewpoint of the virtual camera while the object is moving at the second velocity.

- The system of claim 1 , wherein the object is a player character and a footing of the player character is viewable while the angle of orientation of the virtual camera is changed downwardly when the object transitions to moving at the second velocity.

- The system of claim 3 , wherein the player character is walking when moving at the velocity and running when moving at the second velocity.

- The system of claim 1 , wherein the virtual camera moves to a location above the object and has the angle of orientation viewing a direction downward toward the object when the object is moving at the second velocity.

- The system of claim 1 , wherein the processing system further configured to: position the virtual camera in a first position while the virtual camera is in the initial orientation;and move the virtual camera to a second position, above the first position, as the angle of orientation of the virtual camera changes downwardly when the object transitions from moving at the first velocity to moving at the second velocity.

- The system of claim 6 , wherein the virtual camera moves to the second position in a linear correspondence with a speed of the object.

- The system of claim 6 , wherein the virtual camera moves to the second position when the object reaches a maximum speed.

- A non-transitory computer readable storage medium having computer readable code embodied therein for executing an application program, the program causing a computer having at least one processor to perform functionality comprising: positioning a virtual camera in an initial orientation while an object is moving at a first velocity;and changing downwardly the angle of orientation of the virtual camera while moving the virtual camera above the object from the initial orientation when the object transitions from moving at the first velocity to moving at a second velocity greater than the first velocity.

- The non-transitory computer readable storage medium of claim 9 , wherein the virtual camera keeps the object in a substantially center position of a viewpoint of the virtual camera while the object is moving at the second velocity.

- The non-transitory computer readable storage medium of claim 9 , wherein the object is a player character and a footing of the player character is viewable while the angle of orientation of the virtual camera is changed downwardly when the object transitions to moving at the second velocity second state.

- The non-transitory computer readable storage medium of claim 11 , wherein the player character is walking when moving at the first velocity and running when moving at the second velocity.

- The non-transitory computer readable storage medium of claim 9 , wherein the virtual camera moves to a location above the object and has the angle of orientation viewing a direction downward toward the object when the object is moving at the second velocity.

- The non-transitory computer readable storage medium of claim 9 , wherein the program causing the computer to further perform functionality comprising: positioning the virtual camera in a first position while the virtual camera is in the initial orientation;and moving the virtual camera to a second position, above the first position, as the angle of orientation of the virtual camera changes downwardly when the object transitions from moving at the first velocity to moving at the second velocity.

- The non-transitory computer readable storage medium of claim 14 , wherein the virtual camera moves to the second position in a linear correspondence with a speed of the object.

- The non-transitory computer readable storage medium of claim 14 , wherein the virtual camera moves to the second position when the object reaches a maximum speed.

- A gaming apparatus comprising at least one processor, the gaming apparatus configured to: position a virtual camera in an initial orientation while an object is moving at a first velocity; and change downwardly the angle of orientation of the virtual camera while moving the virtual camera above the object from the initial orientation when the object transitions from moving at the first velocity to moving at a second velocity greater than the first velocity.

- The gaming apparatus of claim 17, wherein the virtual camera keeps the object in a substantially center position of a viewpoint of the virtual camera while the object is moving at the second velocity.

- The gaming apparatus of claim 17, wherein the object is a player character and a footing of the player character is viewable while the angle of orientation of the virtual camera is changed downwardly when the object transitions to moving at the second velocity.

- The gaming apparatus of claim 19, wherein the player character is walking when moving at the first velocity and running when moving at the second velocity.

- The gaming apparatus of claim 17, wherein the virtual camera moves to a location above the object and has the angle of orientation viewing a direction downward toward the object when the object is moving at the second velocity.

- The gaming apparatus of claim 17, further configured to: position the virtual camera in a first position while the virtual camera is in the initial orientation; and move the virtual camera to a second position, above the first position, as the angle of orientation of the virtual camera changes downwardly when the object transitions from moving at the first velocity to moving at the second velocity.

- The gaming apparatus of claim 22, wherein the virtual camera moves to the second position in a linear correspondence with a speed of the object.

- The gaming apparatus of claim 22, wherein the virtual camera moves to the second position when the object reaches a maximum speed.

- A method comprising: generating, using one or more processors, a first image of a virtual object in a field of view of a virtual camera having an initial orientation and an initial position in a virtual world; receiving an input to increase a moving speed of the virtual object within the virtual world; raising the position of the virtual camera from the initial position upon receiving the input to increase the moving speed of the virtual object; downwardly changing the orientation of the virtual camera from the initial orientation upon receiving the input to increase the moving speed of the virtual object; and generating a second image of the virtual object in a field of view of the virtual camera at the raised position and downwardly changed orientation.

- The method of claim 25, wherein a lowest part of the virtual object is more easily viewable in the second image than in the first image.

- The method of claim 25, wherein raising the position of the virtual camera occurs simultaneously with downwardly changing the orientation of the virtual camera.

- The method of claim 25, wherein the virtual object is a player character and a lowest part of the virtual object is a footing of the player character.

- A system comprising: a device configured to provide an instruction to increase a moving speed of a virtual object within a virtual world; and one or more processors configured to perform functionality comprising: generate a first image of the virtual object in a field of view of a virtual camera having an initial orientation and an initial position; raise, based upon on the instruction to increase the moving speed of the virtual object, the position of the virtual camera from the initial position; change downwardly, based upon on the instruction to increase the moving speed of the virtual object, the orientation of the virtual camera from the initial orientation; and generate at least a second image of the virtual object in a field of view of the virtual camera having the changed orientation and the changed position.

- The system of claim 29, wherein a lowest part of the virtual object is more easily viewable in the second image than in the first image.

- The system of claim 29, wherein the computer processor is configured to raise the position of the virtual camera simultaneously with the downward change to the orientation of the virtual camera.

- The system of claim 29, wherein the device is a hand-held user-operable device.

- The system of claim 29, wherein the virtual object is a player character and a lowest part of the virtual object is a footing of the player character.
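
The camera behavior recited in the claims above (the camera rises and tilts downward as the object speeds up, moving in linear correspondence with speed and reaching the second position at maximum speed) could be sketched roughly as follows. This is an illustrative sketch only, not the patented implementation: `CameraPose`, `camera_pose_for_speed`, `WALK_POSE`, `RUN_POSE`, and all numeric values are hypothetical, and interpolating the pitch linearly alongside the height is an assumption, since the claims recite linear correspondence only for the camera position.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CameraPose:
    height: float     # camera height above the object (arbitrary units)
    pitch_deg: float  # downward tilt in degrees; larger looks further down


# Hypothetical tuning values chosen for illustration; not taken from the patent.
WALK_POSE = CameraPose(height=2.0, pitch_deg=15.0)  # initial position/orientation
RUN_POSE = CameraPose(height=5.0, pitch_deg=45.0)   # raised, downward-tilted pose


def camera_pose_for_speed(speed, walk_speed, max_speed,
                          low=WALK_POSE, high=RUN_POSE):
    """Blend between the two poses in linear correspondence with speed."""
    if max_speed <= walk_speed:
        raise ValueError("max_speed must be greater than walk_speed")
    # Normalize speed to [0, 1]: 0 at the first velocity, 1 at maximum speed.
    t = (speed - walk_speed) / (max_speed - walk_speed)
    t = min(1.0, max(0.0, t))
    # Raise the camera and tilt it downward together, so both changes
    # happen simultaneously as the object accelerates.
    return CameraPose(
        height=low.height + t * (high.height - low.height),
        pitch_deg=low.pitch_deg + t * (high.pitch_deg - low.pitch_deg),
    )
```

Calling `camera_pose_for_speed` once per frame with the character's current speed would also return the camera toward the initial pose as the character slows down, because the pose depends only on the current speed.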

Disclaimer: Data is collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.