U.S. Pat. No. 8,753,207

GAME SYSTEM, GAME PROCESSING METHOD, RECORDING MEDIUM STORING GAME PROGRAM, AND GAME DEVICE

Assignee: Nintendo Co., Ltd.

Issue Date: January 23, 2012

Abstract

In an example game device, a second character is caused to rotate based on an input operation performed on a stick of a terminal device and a change in an attitude of the terminal device. In the game device, when an enemy character is present behind the second character, a rotation angle based on an input operation performed on the stick is adjusted so that the second character is easily caused to face the enemy character. In the game device, when an orientation of the second character is changed based on the change in the attitude of the terminal device, a rotation angle based on the change in the attitude of the terminal device is adjusted so that the second character is easily caused to face the enemy character. In the game device, the second character is caused to rotate based on the adjusted rotation angle.

Description

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

[1. General Configuration of Game System]

A game system 1 will now be described with reference to the drawings. FIG. 1 is a non-limiting example external view of the game system 1. In FIG. 1, the game system 1 includes a non-portable display device (hereinafter referred to as a “television”) 2 such as a television receiver, a console-type game device 3, an optical disc 4, a controller 5, a marker device 6, and a terminal device 7. In the game system 1, the game device 3 performs a game process based on a game operation performed using the controller 5, and displays a game image obtained through the game process on the television 2 and/or the terminal device 7.

In the game device 3, the optical disc 4 typifying an interchangeable information storage medium used for the game device 3 is removably inserted. An information processing program (a game program, for example) to be executed by the game device 3 is stored on the optical disc 4. The game device 3 has, on a front surface thereof, an insertion opening for the optical disc 4. The game device 3 reads and executes the information processing program stored on the optical disc 4 which has been inserted in the insertion opening, to perform a game process.

The television 2 is connected to the game device 3 by a connecting cord. A game image obtained as a result of a game process performed by the game device 3 is displayed on the television 2. The television 2 includes a speaker 2a (see FIG. 2) which outputs a game sound obtained as a result of the game process. In alternative embodiments, the game device 3 and the non-portable display device may be an integral unit. Also, the communication between the game device 3 and the television 2 may be wireless communication.

The marker device 6 is provided along the periphery of the screen (on the upper side of the screen in FIG. 1) of the television 2. The user (player) can perform a game operation by moving the controller 5, details of which will be described later. The marker device 6 is used by the game device 3 for calculating a movement, a position, an attitude, etc., of the controller 5. The marker device 6 includes two markers 6R and 6L at opposite ends thereof. Specifically, the marker 6R (as well as the marker 6L) includes one or more infrared light emitting diodes (LEDs), and emits infrared light in a forward direction of the television 2. The marker device 6 is connected to the game device 3 via either a wired or wireless connection, and the game device 3 is able to control the lighting of each infrared LED of the marker device 6. Note that the marker device 6 is movable, and the user can place the marker device 6 at any position. While FIG. 1 shows an embodiment in which the marker device 6 is placed on top of the television 2, the position and direction of the marker device 6 are not limited to this particular arrangement.

The controller 5 provides the game device 3 with operation data representing the content of an operation performed on the controller itself. The controller 5 and the game device 3 can communicate with each other via wireless communication. In the present embodiment, the controller 5 and the game device 3 use, for example, Bluetooth (Registered Trademark) technology for the wireless communication therebetween. In other embodiments, the controller 5 and the game device 3 may be connected via a wired connection. While only one controller is included in the game system 1 in the present embodiment, a plurality of controllers may be included in the game system 1. In other words, the game device 3 can communicate with a plurality of controllers. Multiple players can play a game by using a predetermined number of controllers at the same time. The detailed configuration of the controller 5 will be described below.

The terminal device 7 is sized to be grasped by the user's hand or hands. The user can hold and move the terminal device 7, or can place and use the terminal device 7 at an arbitrary position. The terminal device 7, whose detailed configuration will be described below, includes a liquid crystal display (LCD) 51 as a display, and input mechanisms (e.g., a touch panel 52, a gyroscopic sensor 74, etc., to be described later). The terminal device 7 and the game device 3 can communicate with each other via a wireless connection (or via a wired connection). The terminal device 7 receives from the game device 3 data of an image (e.g., a game image) generated by the game device 3, and displays the image on the LCD 51. While an LCD is used as the display device in the embodiment, the terminal device 7 may include any other display device such as a display device utilizing electroluminescence (EL), for example. The terminal device 7 transmits operation data representing the content of an operation performed on the terminal device itself to the game device 3.

[2. Internal Configuration of Game Device 3]

Next, an internal configuration of the game device 3 will be described with reference to FIG. 2. FIG. 2 is a non-limiting example block diagram showing the internal configuration of the game device 3. The game device 3 includes a CPU 10, a system LSI 11, an external main memory 12, a ROM/RTC 13, a disc drive 14, and an AV-IC 15.

The CPU 10 performs a game process by executing a game program stored on the optical disc 4, and functions as a game processor. The CPU 10 is connected to the system LSI 11. The external main memory 12, the ROM/RTC 13, the disc drive 14, and the AV-IC 15, as well as the CPU 10, are connected to the system LSI 11. The system LSI 11 performs the following processes: controlling data transmission between each component connected thereto; generating an image to be displayed; acquiring data from an external device(s); and the like. The internal configuration of the system LSI 11 will be described below. The external main memory 12, which is of a volatile type, stores a program such as a game program read from the optical disc 4, a game program read from a flash memory 17, or the like, and various data. The external main memory 12 is used as a work area and a buffer area for the CPU 10. The ROM/RTC 13 includes a ROM (a so-called boot ROM) containing a boot program for the game device 3, and a clock circuit (real time clock (RTC)) for counting time. The disc drive 14 reads program data, texture data, and the like from the optical disc 4, and writes the read data into an internal main memory 11e (to be described below) or the external main memory 12.

The system LSI 11 includes an input/output processor (I/O processor) 11a, a graphics processor unit (GPU) 11b, a digital signal processor (DSP) 11c, a video RAM (VRAM) 11d, and the internal main memory 11e. Although not shown in the figures, these components 11a to 11e are connected to each other through an internal bus.

The GPU 11b, which forms a part of a rendering mechanism, generates an image in accordance with a graphic command (rendering command) from the CPU 10. The VRAM 11d stores data (data such as polygon data and texture data) required by the GPU 11b to execute graphics commands. When an image is generated, the GPU 11b generates image data using data stored in the VRAM 11d. In the present embodiment, the game device 3 generates both a game image to be displayed on the television 2 and a game image to be displayed on the terminal device 7. The game image to be displayed on the television 2 may also be hereinafter referred to as a “television game image,” and the game image to be displayed on the terminal device 7 may also be hereinafter referred to as a “terminal game image.”

The DSP 11c, which functions as an audio processor, generates audio data using sound data and sound waveform (e.g., tone quality) data stored in one or both of the internal main memory 11e and the external main memory 12. In the present embodiment, game audio is output from the speaker of the television 2 and from the speaker of the terminal device 7.

As described above, of images and audio generated in the game device 3, data of an image and audio to be output from the television 2 is read out by the AV-IC 15. The AV-IC 15 outputs the read image data to the television 2 via an AV connector 16, and outputs the read audio data to the speaker 2a provided in the television 2. Thus, images are displayed on the television 2, and sound is output from the speaker 2a.

Of images and audio generated in the game device 3, data of an image and audio to be output from the terminal device 7 is transmitted to the terminal device 7 by the input/output processor 11a, etc. The data transmission to the terminal device 7 by the input/output processor 11a, or the like, will be described below.

The input/output processor 11a exchanges data with components connected thereto, and downloads data from an external device(s). The input/output processor 11a is connected to the flash memory 17, a network communication module 18, a controller communication module 19, an extension connector 20, a memory card connector 21, and a codec LSI 27. An antenna 22 is connected to the network communication module 18. An antenna 23 is connected to the controller communication module 19. The codec LSI 27 is connected to a terminal communication module 28, and an antenna 29 is connected to the terminal communication module 28.

The game device 3 can be connected to a network such as the Internet to communicate with external information processing devices (e.g., other game devices, various servers, computers, etc.). That is, the input/output processor 11a can be connected to a network such as the Internet via the network communication module 18 and the antenna 22 to communicate with an external information processing device(s) connected to the network. The input/output processor 11a regularly accesses the flash memory 17 to detect the presence or absence of any data which needs to be transmitted to the network, and when there is data, transmits the data to the network via the network communication module 18 and the antenna 22. The input/output processor 11a also receives data transmitted from an external information processing device and data downloaded from a download server via the network, the antenna 22, and the network communication module 18, and stores the received data into the flash memory 17. The CPU 10 executes a game program to read data stored in the flash memory 17 and use the data in the game program. The flash memory 17 may store saved game data (e.g., data representing game results or data representing intermediate game results) of a game played using the game device 3 in addition to data exchanged between the game device 3 and an external information processing device. The flash memory 17 may also store a game program(s).

The game device 3 can receive operation data from the controller 5. That is, the input/output processor 11a receives operation data transmitted from the controller 5 via the antenna 23 and the controller communication module 19, and stores (temporarily) the data in a buffer area of the internal main memory 11e or the external main memory 12.

The game device 3 can exchange data such as images and audio with the terminal device 7. When transmitting a game image (terminal game image) to the terminal device 7, the input/output processor 11a outputs data of the game image generated by the GPU 11b to the codec LSI 27. The codec LSI 27 performs a predetermined compression process on the image data from the input/output processor 11a. The terminal communication module 28 wirelessly communicates with the terminal device 7. Therefore, the image data compressed by the codec LSI 27 is transmitted by the terminal communication module 28 to the terminal device 7 via the antenna 29. In the present embodiment, the image data transmitted from the game device 3 to the terminal device 7 is image data used in a game, and the playability of a game can be adversely influenced if there is a delay in displaying an image in the game. It is therefore preferred to eliminate delay as much as possible in transmission of image data from the game device 3 to the terminal device 7. For this reason, in the present embodiment, the codec LSI 27 compresses image data using a compression technique with high efficiency such as the H.264 standard, for example. Other compression techniques may be used, and image data may be transmitted uncompressed if the communication speed is sufficient. The terminal communication module 28 is, for example, a Wi-Fi certified communication module, and may perform wireless communication at high speed with the terminal device 7 using, for example, a multiple input multiple output (MIMO) technique employed in the IEEE 802.11n standard, or other communication schemes.
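
As a rough illustration of the trade-off described above, the following Python sketch decides whether a frame stream should be compressed before transmission. It is not taken from the embodiment: the `Frame` type, the `should_compress` helper, the `headroom` factor, and the example resolution and link rates are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    """Hypothetical description of one uncompressed game image."""
    width: int
    height: int
    bytes_per_pixel: int = 3  # assumed 24-bit color

    def raw_size_bits(self) -> int:
        return self.width * self.height * self.bytes_per_pixel * 8


def should_compress(frame: Frame, fps: float, link_bits_per_s: float,
                    headroom: float = 0.5) -> bool:
    """Return True when the raw stream would not fit the usable link budget.

    `headroom` is an assumed fraction of the nominal link rate that is
    actually available for image data.
    """
    required_bits_per_s = frame.raw_size_bits() * fps
    return required_bits_per_s > link_bits_per_s * headroom


if __name__ == "__main__":
    frame = Frame(width=854, height=480)
    # Raw stream: 854 * 480 * 24 bits * 60 fps = 590,284,800 bit/s.
    print(should_compress(frame, fps=60, link_bits_per_s=150e6))
    print(should_compress(frame, fps=60, link_bits_per_s=2e9))
```

With these assumed numbers, the raw stream needs roughly 590 Mbit/s, so it would be compressed on a 150 Mbit/s link and could be sent uncompressed only on a much faster one.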

The game device 3 transmits audio data to the terminal device 7, in addition to image data. That is, the input/output processor 11a outputs audio data generated by the DSP 11c to the terminal communication module 28 via the codec LSI 27. The codec LSI 27 performs a compression process on audio data, as with image data. While the compression scheme for audio data may be any scheme, it is preferably a scheme with a high compression ratio and less audio degradation. In other embodiments, audio data may be transmitted uncompressed. The terminal communication module 28 transmits the compressed image data and audio data to the terminal device 7 via the antenna 29.

The game device 3 can receive various data from the terminal device 7. In the present embodiment, the terminal device 7 transmits operation data, image data, and audio data, details of which will be described below. These pieces of data transmitted from the terminal device 7 are received by the terminal communication module 28 via the antenna 29. The image data and the audio data transmitted from the terminal device 7 have been subjected to a compression process similar to that on image data and audio data transmitted from the game device 3 to the terminal device 7. Therefore, the compressed image data and audio data are sent from the terminal communication module 28 to the codec LSI 27, which in turn performs a decompression process on the pieces of data and outputs the resulting pieces of data to the input/output processor 11a. On the other hand, the operation data from the terminal device 7 may not be subjected to a compression process since the amount of the data is small as compared with images and audio. The operation data may or may not be encrypted, as necessary. After being received by the terminal communication module 28, the operation data is output to the input/output processor 11a via the codec LSI 27. The input/output processor 11a stores (temporarily) data received from the terminal device 7 in a buffer area of the internal main memory 11e or the external main memory 12.
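
The receive path described above can be sketched as follows. This is a minimal Python illustration, not the embodiment's implementation: the `Kind` enumeration, the `route_received` function, the `buffer` list, and the use of `zlib` as a stand-in for the decompression performed by the codec LSI 27 are all assumptions.

```python
import zlib
from enum import Enum, auto
from typing import List, Tuple


class Kind(Enum):
    """Hypothetical tags for data arriving from the terminal device."""
    OPERATION = auto()
    IMAGE = auto()
    AUDIO = auto()


def route_received(kind: Kind, payload: bytes,
                   buffer: List[Tuple[Kind, bytes]]) -> bytes:
    """Decompress image/audio payloads, pass operation data through,
    then store the result in a temporary buffer."""
    if kind in (Kind.IMAGE, Kind.AUDIO):
        # zlib is only a placeholder for the codec's decompression step.
        data = zlib.decompress(payload)
    else:
        # Operation data is small and is assumed to arrive uncompressed.
        data = payload
    buffer.append((kind, data))
    return data
```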

The game device 3 can be connected to another device or an external storage medium. That is, the input/output processor 11a is connected to the extension connector 20 and the memory card connector 21. The extension connector 20 is a connector for an interface, such as a USB or SCSI interface. The extension connector 20 can receive a medium such as an external storage medium, a peripheral device such as another controller, or a wired communication connector which enables communication with a network in place of the network communication module 18. The memory card connector 21 is a connector for connecting, to the game device 3, an external storage medium such as a memory card. For example, the input/output processor 11a can access an external storage medium via the extension connector 20 or the memory card connector 21 to store data into the external storage medium or read data from the external storage medium.

The game device 3 includes a power button 24, a reset button 25, and an eject button 26. The power button 24 and the reset button 25 are connected to the system LSI 11. When the power button 24 is turned on, power is supplied to components of the game device 3 from an external power supply through an AC adaptor (not shown). When the reset button 25 is pressed, the system LSI 11 restarts the boot program of the game device 3. The eject button 26 is connected to the disc drive 14. When the eject button 26 is pressed, the optical disc 4 is ejected from the disc drive 14.

In other embodiments, some of the components of the game device 3 may be provided as extension devices separate from the game device 3. In this case, an extension device may be connected to the game device 3 via the extension connector 20, for example. Specifically, an extension device may include components of the codec LSI 27, the terminal communication module 28, and the antenna 29, for example, and can be attached/detached to/from the extension connector 20. In this case, by connecting the extension device to a game device which does not include the above components, the game device can communicate with the terminal device 7.

[3. Configuration of Controller 5]

Next, with reference to FIGS. 3 and 4, the controller 5 will be described. FIG. 3 is a non-limiting example perspective view showing an external configuration of the controller 5. FIG. 4 is a non-limiting example block diagram showing an internal configuration of the controller 5. The perspective view of FIG. 3 shows the controller 5 as viewed from the top and the rear.

As shown in FIGS. 3 and 4, the controller 5 has a housing 31 formed by, for example, plastic molding. The housing 31 has a generally parallelepiped shape extending in a longitudinal (front-rear) direction (Z1-axis direction shown in FIG. 3), and is sized to be grasped by one hand of an adult or a child. The user can perform game operations by pressing buttons provided on the controller 5, and by moving the controller 5 itself to change the position and attitude (tilt) thereof.

The housing 31 has a plurality of operation buttons. As shown in FIG. 3, on a top surface of the housing 31, a cross button 32a, a first button 32b, a second button 32c, an “A” button 32d, a minus button 32e, a home button 32f, a plus button 32g, and a power button 32h are provided. A recessed portion is formed on a bottom surface of the housing 31, and a “B” button 32i is provided on a rear, sloped surface of the recessed portion. The operation buttons 32a to 32i are assigned, as necessary, their respective functions in accordance with the game program executed by the game device 3. The power button 32h is used to remotely turn on and off the game device 3.

On a rear surface of the housing 31, a connector 33 is provided. The connector 33 is used to connect other devices (e.g., a sub-controller having an analog stick, other sensor units, etc.) to the controller 5.

In a rear portion of the top surface of the housing 31, a plurality (four in FIG. 3) of LEDs 34a to 34d are provided. The controller 5 is assigned a controller type (number) so as to be distinguishable from other controllers.

The controller 5 also has an image capturing/processing section 35 (FIG. 4), and a light incident surface 35a of the image capturing/processing section 35 is provided on a front surface of the housing 31. The light incident surface 35a is made of a material which transmits at least infrared light emitted from the markers 6R and 6L.

On the top surface of the housing 31, sound holes 31a, through which sound from a speaker provided in the controller 5 is emitted, are provided between the first button 32b and the home button 32f.

Note that the shape of the controller 5, the shapes of the operation buttons, etc., are only for illustrative purposes. Other shapes, numbers, and positions are possible.

FIG. 4 is a non-limiting example block diagram showing an internal configuration of the controller 5. The controller 5 includes an operation section 32 (the operation buttons 32a to 32i), the image capturing/processing section 35, a communication section 36, an acceleration sensor 37, and a gyroscopic sensor 48. The controller 5 transmits data representing the content of an operation performed on the controller itself, as operation data, to the game device 3. The operation data transmitted by the controller 5 may also be hereinafter referred to as “controller operation data,” and the operation data transmitted by the terminal device 7 may also be hereinafter referred to as “terminal operation data.”

The operation section 32 includes the operation buttons 32a to 32i described above, and outputs, to a microcomputer 42 of the communication section 36, operation button data indicating the input states of the operation buttons 32a to 32i (e.g., whether or not the operation buttons 32a to 32i are pressed).

The image capturing/processing section 35 includes an infrared filter 38, a lens 39, an image capturing element 40, and an image processing circuit 41. The infrared filter 38 transmits only infrared light contained in light incident on the front surface of the controller 5. The lens 39 collects the infrared light transmitted through the infrared filter 38 so that the light is incident on the image capturing element 40. The image capturing element 40 is a solid-state image capturing device, such as, for example, a CMOS sensor or a CCD sensor, which receives the infrared light collected by the lens 39, and outputs an image signal. The marker section 55 of the terminal device 7 and the marker device 6, of which images are to be captured, are formed by markers which output infrared light. Therefore, the infrared filter 38 enables the image capturing element 40 to receive only the infrared light transmitted through the infrared filter 38 and generate image data, whereby an image of an object to be imaged (the marker section 55 and/or the marker device 6) can be captured more accurately. In the description that follows, the image data generated by the image capturing element 40 is processed by the image processing circuit 41. The image processing circuit 41 calculates a position of the object to be imaged within the captured image. The image processing circuit 41 outputs coordinates of the calculated position to the microcomputer 42 of the communication section 36. The data representing the coordinates is transmitted as operation data to the game device 3 by the microcomputer 42. The coordinates are hereinafter referred to as “marker coordinates.” The marker coordinates change depending on an orientation (a tilt angle) and/or a position of the controller 5 itself, and therefore, the game device 3 can calculate the orientation and position of the controller 5 using the marker coordinates.
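
The description does not specify how the image processing circuit 41 locates a marker within the captured image. One simple possibility, shown here purely as an assumption, is to take the centroid of all pixels whose brightness exceeds a threshold. The function name `marker_centroid`, the threshold value, and the list-of-rows image format are hypothetical.

```python
from typing import List, Optional, Tuple


def marker_centroid(image: List[List[int]],
                    threshold: int = 200) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of pixels at or above `threshold`.

    `image` is a list of rows of brightness values (0-255). Returns None
    when no pixel is bright enough, i.e. no marker is in view.
    """
    total_x = 0
    total_y = 0
    count = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value >= threshold:
                total_x += x
                total_y += y
                count += 1
    if count == 0:
        return None
    return (total_x / count, total_y / count)
```

A real circuit would also need to separate the two markers 6R and 6L into distinct bright regions before computing one coordinate pair per marker; that step is omitted from this sketch.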

The acceleration sensor 37 detects accelerations (including a gravitational acceleration) of the controller 5. While the acceleration sensor 37 is assumed to be an electrostatic capacitance type micro-electromechanical system (MEMS) acceleration sensor, other types of acceleration sensors may be used.

In the present embodiment, the acceleration sensor 37 detects a linear acceleration in each of three axial directions, i.e., the up-down direction (the Y1-axis direction shown in FIG. 3), the left-right direction (the X1-axis direction shown in FIG. 3), and the front-rear direction (the Z1-axis direction shown in FIG. 3) of the controller 5.

Data (acceleration data) representing the acceleration detected by the acceleration sensor 37 is output to the communication section 36. The acceleration detected by the acceleration sensor 37 changes depending on the orientation (tilt angle) and the movement of the controller 5 itself, and therefore, the game device 3 is capable of calculating the orientation (attitude) and the movement of the controller 5 using the obtained acceleration data.

One skilled in the art will readily understand from the description herein that additional information relating to the controller 5 can be estimated or calculated (determined) through a process by a computer, such as a processor (for example, the CPU 10) of the game device 3 or a processor (for example, the microcomputer 42) of the controller 5, based on an acceleration signal output from the acceleration sensor 37 (this applies also to an acceleration sensor 73 to be described later). For example, assuming that the computer performs a process on the premise that the controller 5 including the acceleration sensor 37 is in the static state (that is, in the case in which the process is performed on the premise that the acceleration detected by the acceleration sensor contains only the gravitational acceleration), when the controller 5 is actually in the static state, it is possible to determine whether or not or how much the controller 5 is tilted relative to the direction of gravity, based on the detected acceleration. Specifically, when the state in which the detection axis of the acceleration sensor 37 faces vertically downward is used as a reference, whether or not the controller 5 is tilted relative to the reference can be determined based on whether or not 1 G (gravitational acceleration) is present, and the degree of tilt of the controller 5 relative to the reference can be determined based on the magnitude thereof. The multi-axis acceleration sensor 37 can more precisely determine the degree of tilt of the controller 5 relative to the direction of gravity by performing a process on the acceleration signals of the axes. In this case, the processor may calculate, based on the output from the acceleration sensor 37, the tilt angle of the controller 5, or the tilt direction of the controller 5 without calculating the tilt angle. Thus, by using the acceleration sensor 37 in combination with the processor, it is possible to determine the tilt angle or the attitude of the controller 5.
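
The static-state tilt determination described above can be illustrated with a short Python sketch. It assumes the detected acceleration contains only gravity and measures the angle between the detected vector and a reference axis. The function name `tilt_angle_degrees`, the choice of the Y1 axis as the reference, and the sign convention (a reading of 1 G along the reference axis means no tilt) are assumptions, not details given in the description.

```python
import math
from typing import Optional, Tuple


def tilt_angle_degrees(ax: float, ay: float, az: float,
                       reference: Tuple[float, float, float] = (0.0, 1.0, 0.0)
                       ) -> Optional[float]:
    """Angle in degrees between the detected acceleration and `reference`.

    `reference` is assumed to be a unit vector along the detection axis
    used as the untilted reference. Returns None for a zero reading.
    """
    magnitude = math.sqrt(ax * ax + ay * ay + az * az)
    if magnitude == 0.0:
        return None
    rx, ry, rz = reference
    cos_angle = (ax * rx + ay * ry + az * rz) / magnitude
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))
```

Because the reading is normalized by its own magnitude, the sketch reports a direction-based tilt even when the magnitude is not exactly 1 G, which is only meaningful if the static-state premise actually holds.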

On the other hand, when it is assumed that the controller 5 is in the dynamic state (in which the controller 5 is being moved), the acceleration sensor 37 detects the acceleration based on the movement of the controller 5, in addition to the gravitational acceleration, and it is therefore possible to determine the movement direction of the controller 5 by removing the gravitational acceleration component from the detected acceleration through a predetermined process. Even when it is assumed that the controller 5 is in the dynamic state, it is possible to determine the tilt of the controller 5 relative to the direction of gravity by removing the acceleration component based on the movement of the acceleration sensor from the detected acceleration through a predetermined process. In other embodiments, the acceleration sensor 37 may include an embedded processor or another type of dedicated processor for performing a predetermined process on an acceleration signal detected by a built-in acceleration detector before the acceleration signal is output to the microcomputer 42. For example, when the acceleration sensor 37 is used to detect a static acceleration (for example, the gravitational acceleration), the embedded or dedicated processor may convert the acceleration signal to a tilt angle (or other preferred parameters).
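
The “predetermined process” for separating gravity from movement is not specified in the description. One common approach, used here only as an assumed example, is an exponential low-pass filter: the slowly varying part of the signal is treated as gravity and the remainder as movement. The function name `split_gravity` and the smoothing factor `alpha` are hypothetical.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def split_gravity(samples: Sequence[Vec3],
                  alpha: float = 0.9) -> List[Tuple[Vec3, Vec3]]:
    """Split each acceleration sample into (gravity, movement) estimates.

    The gravity estimate is an exponential moving average of the samples;
    the movement estimate is the sample minus that average.
    """
    if not samples:
        return []
    gravity = tuple(samples[0])  # start from the first reading
    result = []
    for sample in samples:
        gravity = tuple(alpha * g + (1.0 - alpha) * s
                        for g, s in zip(gravity, sample))
        movement = tuple(s - g for s, g in zip(sample, gravity))
        result.append((gravity, movement))
    return result
```

A larger `alpha` makes the gravity estimate steadier but slower to follow a genuine change in attitude, so the value would need tuning for a given sampling rate.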

The gyroscopic sensor 48 detects angular velocities about three axes (the X1-, Y1-, and Z1-axes in the embodiment). In the present specification, with respect to the image capturing direction (the Z1-axis positive direction) of the controller 5, a rotation direction about the X1-axis is referred to as a pitch direction, a rotation direction about the Y1-axis as a yaw direction, and a rotation direction about the Z1-axis as a roll direction. The number and combination of gyroscopic sensors to be used are not limited to any particular number and combination as long as the gyroscopic sensor 48 can detect angular velocities about three axes. For example, the gyroscopic sensor 48 may be a 3-axis gyroscopic sensor, or angular velocities about three axes may be detected by a combination of a 2-axis gyroscopic sensor and a 1-axis gyroscopic sensor. Data representing the angular velocity detected by the gyroscopic sensor 48 is output to the communication section 36. The gyroscopic sensor 48 may be a gyroscopic sensor that detects an angular velocity or velocities about one axis or two axes.
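
To illustrate how angular velocity data could be turned into an attitude estimate, the following Python sketch accumulates pitch, yaw, and roll by simple per-axis integration. This is an assumption for illustration only: the description does not state how the game device 3 uses the angular velocities, and independent per-axis integration is accurate only for small rotations (a fuller treatment would use rotation matrices or quaternions). The function name `integrate_angular_velocity` is hypothetical.

```python
from typing import Tuple

Angles = Tuple[float, float, float]  # (pitch, yaw, roll) in degrees


def integrate_angular_velocity(angles: Angles,
                               rates_deg_per_s: Angles,
                               dt: float) -> Angles:
    """Advance (pitch, yaw, roll) by one time step of `dt` seconds.

    `rates_deg_per_s` holds the angular velocities about the X1 (pitch),
    Y1 (yaw), and Z1 (roll) axes.
    """
    return tuple(a + r * dt for a, r in zip(angles, rates_deg_per_s))


if __name__ == "__main__":
    attitude = (0.0, 0.0, 0.0)
    # Assumed 60 samples per second, constant 90 deg/s pitch rate.
    for _ in range(60):
        attitude = integrate_angular_velocity(attitude, (90.0, 0.0, 0.0), 1 / 60)
    print(attitude)
```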

The communication section 36 includes the microcomputer 42, a memory 43, a wireless module 44, and an antenna 45. The microcomputer 42 controls the wireless module 44 for wirelessly transmitting, to the game device 3, data acquired by the microcomputer 42 while using the memory 43 as a storage area in the process.

Pieces of data output from the operation section 32, the image capturing/processing section 35, the acceleration sensor 37, and the gyroscopic sensor 48 to the microcomputer 42 are temporarily stored in the memory 43. These pieces of data are transmitted as the operation data (controller operation data) to the game device 3.

As described above, as operation data representing an operation performed on the controller itself, the controller 5 can transmit marker coordinate data, acceleration data, angular velocity data, and operation button data. The game device 3 performs a game process using the operation data as a game input. Therefore, by using the controller 5, the user can perform a game operation of moving the controller 5 itself, in addition to a conventional typical game operation of pressing the operation buttons. Examples of the game operation of moving the controller 5 itself include an operation of tilting the controller 5 to an intended attitude, an operation of specifying an intended position on the screen with the controller 5, etc.
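
The four kinds of controller operation data listed above could be grouped in a single record, as in the following Python sketch. The class names, field names, and button bit assignments are hypothetical; the description does not define a data layout.

```python
from dataclasses import dataclass
from enum import IntFlag
from typing import Optional, Tuple


class Button(IntFlag):
    """Assumed bit assignments for a subset of the operation buttons."""
    NONE = 0
    A = 1
    B = 2
    ONE = 4
    TWO = 8
    PLUS = 16
    MINUS = 32
    HOME = 64


@dataclass(frozen=True)
class ControllerOperationData:
    """One sample of controller operation data (illustrative layout)."""
    marker_coords: Optional[Tuple[float, float]]   # None if no marker seen
    acceleration: Tuple[float, float, float]       # X1, Y1, Z1 axes
    angular_velocity: Tuple[float, float, float]   # pitch, yaw, roll rates
    buttons: Button = Button.NONE

    def is_pressed(self, button: Button) -> bool:
        return bool(self.buttons & button)


if __name__ == "__main__":
    sample = ControllerOperationData(
        marker_coords=(512.0, 384.0),
        acceleration=(0.0, 1.0, 0.0),
        angular_velocity=(0.0, 0.0, 0.0),
        buttons=Button.A | Button.B,
    )
    print(sample.is_pressed(Button.A), sample.is_pressed(Button.HOME))
```

Keeping the button states in a bit field is one compact option; a game process could equally read them as separate Boolean fields.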

While the controller 5 does not include a display for displaying a game image in the embodiment, it may include a display for displaying, for example, an image representing a battery level, etc.

[4. Configuration of Terminal Device7]

Next, a configuration of the terminal device7will be described with reference toFIGS. 5 to 7.FIG. 5is a non-limiting example plan view showing an external configuration of the terminal device7. InFIG. 5, (a) is a front view of the terminal device7, (b) is a top view thereof, (c) is a right side view thereof, and (d) is a bottom view thereof.FIG. 6is a non-limiting example diagram showing a user holding the terminal device7in a landscape position.

As shown inFIG. 5, the terminal device7includes a housing50generally in a horizontally-elongated rectangular plate shape. That is, it can also be said that the terminal device7is a tablet-type information processing device. The housing50is sized to be grasped by the user.

The terminal device7includes an LCD51on a front surface (front side) of the housing50. The LCD51is provided near the center of the front surface of the housing50. Therefore, the user can hold and move the terminal device7while viewing the screen of the LCD51, by holding portions of the housing50on opposite sides of the LCD51, as shown inFIG. 6. WhileFIG. 6shows an example in which the user holds the terminal device7in a landscape position (being wider than it is long) by holding portions of the housing50on left and right sides of the LCD51, the user can also hold the terminal device7in a portrait position (being longer than it is wide).

As shown in (a) ofFIG. 5, the terminal device7includes a touch panel52on the screen of the LCD51as an operation mechanism. The touch panel52may be of a single-touch type or a multi-touch type. While a touch pen60is usually used for performing an input operation on the touch panel52, the present exemplary embodiment is not limited to using the touch pen60, and an input operation may be performed on the touch panel52with a finger of the user. The housing50is provided with a hole60afor accommodating the touch pen60used for performing an input operation on the touch panel52(see (b) ofFIG. 5).

As shown inFIG. 5, the terminal device7includes two analog sticks53A and53B and a plurality of buttons (keys)54A to54M, as operation mechanisms (operation sections). The analog sticks53A and53B are each a direction-selection device. The analog sticks53A and53B are each configured so that the movable member (stick portion) operated with a finger of the user can be slid in any direction (at any angle in the up, down, left, right and diagonal directions) with respect to the front surface of the housing50. That is, the analog sticks53A and53B are each a direction input device which is also called a slide pad. The movable member of each of the analog sticks53A and53B may be of a type that is tilted in any direction with respect to the front surface of the housing50. Since the present embodiment uses analog sticks of a type that has a movable member which is slidable, the user can operate the analog sticks53A and53B without significantly moving the thumbs and therefore while holding the housing50more firmly.

The left analog stick53A is provided on the left side of the screen of the LCD51, and the right analog stick53B is provided on the right side of the screen of the LCD51. As shown inFIG. 6, the analog sticks53A and53B are provided at positions that allow the user to operate the analog sticks53A and53B while holding the left and right portions of the terminal device7(on the left and right sides of the LCD51), and therefore, the user can easily operate the analog sticks53A and53B even when holding and moving the terminal device7.

The buttons54A to54L are operation mechanisms (operation sections) for making predetermined inputs, and are keys that can be pressed down. As will be discussed below, the buttons54A to54L are provided at positions that allow the user to operate the buttons54A to54L while holding the left and right portions of the terminal device7(seeFIG. 6).

As shown in (a) ofFIG. 5, the cross button (direction-input button)54A and the buttons54B to54H and54M, of the operation buttons54A to54L, are provided on the front surface of the housing50.

The cross button54A is provided on the left side of the LCD51and under the left analog stick53A. The cross button54A has a cross shape, and can be used to select at least up, down, left, and right directions.

The buttons54B to54D are provided on the lower side of the LCD51. The terminal device7includes the power button54M for turning on and off the terminal device7. The game device3can be remotely turned on and off by operating the power button54M. The four buttons54E to54H are provided on the right side of the LCD51and under the right analog stick53B. Moreover, the four buttons54E to54H are provided on the upper, lower, left and right sides (of the center position between the four buttons54E to54H). Therefore, with the terminal device7, the four buttons54E to54H can also serve as buttons with which the user selects the up, down, left and right directions.

In the present embodiment, a projecting portion (an eaves portion59) is provided on the back side of the housing50(the side opposite to the front surface where the LCD51is provided) (see (c) ofFIG. 5). As shown in (c) ofFIG. 5, the eaves portion59is a mountain-shaped member which projects from the back surface of the generally plate-shaped housing50. The projecting portion has a height (thickness) that allows fingers of the user holding the back surface of the housing50to rest thereon.

As shown in (a), (b), and (c) of FIG. 5, a first L button 54I and a first R button 54J are provided on the left and right sides, respectively, of the upper surface of the housing 50. In the present embodiment, the first L button 54I and the first R button 54J are provided on diagonally upper portions (a left upper portion and a right upper portion) of the housing 50.

As shown in (c) ofFIG. 5, a second L button54K and a second R button54L are provided on the projecting portion (the eaves portion59). The second L button54K is provided in the vicinity of the left end of the eaves portion59. The second R button54L is provided in the vicinity of the right end of the eaves portion59.

The buttons54A to54L are each assigned a function in accordance with the game program. For example, the cross button54A and the buttons54E to54H may be used for a direction-selection operation, a selection operation, etc., and the buttons54B to54E may be used for a decision operation, a cancel operation, etc. The terminal device7may include a button for turning on and off the LCD51, and a button for performing a connection setting (pairing) with the game device3.

As shown in (a) ofFIG. 5, the terminal device7includes the marker section55including a marker55A and a marker55B on the front surface of the housing50. The marker section55is provided on the upper side of the LCD51. The markers55A and55B are each formed by one or more infrared LEDs, as are the markers6R and6L of the marker device6. The infrared LEDs of the markers55A and55B are provided behind or further inside than a window portion that is transmissive to infrared light. The marker section55is used by the game device3to calculate the movement, etc., of the controller5, as is the marker device6described above. The game device3can control the lighting of the infrared LEDs of the marker section55.

The terminal device7includes a camera56as an image capturing mechanism. The camera56includes an image capturing element (e.g., a CCD image sensor, a CMOS image sensor, or the like) having a predetermined resolution, and a lens.

The terminal device 7 includes a microphone 79 as an audio input mechanism. A microphone hole 50c is provided on the front surface of the housing 50. The microphone 79 is provided inside the housing 50 behind the microphone hole 50c. The microphone 79 detects ambient sound of the terminal device 7 such as the voice of the user.

The terminal device7includes a speaker77as an audio output mechanism. As shown in (a) ofFIG. 5, speaker holes57are provided in a lower portion of the front surface of the housing50. The output sound from the speaker77is output from the speaker holes57. In the present embodiment, the terminal device7includes two speakers, and the speaker holes57are provided at the respective positions of the left and right speakers. The terminal device7includes a knob64for adjusting the sound volume of the speaker77. The terminal device7includes an audio output terminal62for connecting an audio output section such as an earphone thereto.

The housing50includes a window63through which an infrared signal from an infrared communication module82is emitted out from the terminal device7.

The terminal device7includes an extension connector58for connecting another device (additional device) to the terminal device7. The extension connector58is a communication terminal for exchanging data (information) with another device connected to the terminal device7.

In addition to the extension connector58, the terminal device7includes a charging terminal66for obtaining power from an additional device. In the present embodiment, the charging terminal66is provided on a lower side surface of the housing50. Therefore, when the terminal device7and an additional device are connected to each other, it is possible to supply power from one to the other, in addition to exchanging information therebetween, via the extension connector58. The terminal device7includes a charging connector, and the housing50includes a cover portion61for protecting the charging connector. Although the charging connector (the cover portion61) is provided on an upper side surface of the housing50in the present embodiment, the charging connector (the cover portion61) may be provided on a left, right, or lower side surface of the housing50.

The housing50of the terminal device7includes holes65aand65bthrough which a strap cord can be tied to the terminal device7.

With the terminal device7shown inFIG. 5, the shape of each operation button, the shape of the housing50, the number and positions of the components, etc., are merely illustrative, and the present exemplary embodiment can be implemented in other shapes, numbers, and positions.

Next, an internal configuration of the terminal device7will be described with reference toFIG. 7.FIG. 7is a non-limiting example block diagram showing the internal configuration of the terminal device7. As shown inFIG. 7, the terminal device7includes, in addition to the components shown inFIG. 5, a touch panel controller71, a magnetic sensor72, the acceleration sensor73, the gyroscopic sensor74, a user interface controller (UI controller)75, a codec LSI76, the speaker77, a sound IC78, the microphone79, a wireless module80, an antenna81, the infrared communication module82, a flash memory83, a power supply IC84, a battery85, and a vibrator89. These electronic components are mounted on an electronic circuit board and accommodated in the housing50.

The UI controller75is a circuit for controlling the input/output of data to/from various input/output sections. The UI controller75is connected to the touch panel controller71, an analog stick53(the analog sticks53A and53B), an operation button54(the operation buttons54A to54L), the marker section55, the magnetic sensor72, the acceleration sensor73, the gyroscopic sensor74, and the vibrator89. The UI controller75is connected to the codec LSI76and the extension connector58. The power supply IC84is connected to the UI controller75, and power is supplied to each section via the UI controller75. The built-in battery85is connected to the power supply IC84to supply power. The charger86or a cable with which power can be obtained from an external power source can be connected to the power supply IC84via a charging connector, and the terminal device7can receive power supply from or be charged by an external power source using the charger86or the cable. The terminal device7may be charged by attaching the terminal device7to a cradle (not shown) having a charging function.

The touch panel controller71is a circuit which is connected to the touch panel52and controls the touch panel52. The touch panel controller71generates touch position data in a predetermined format based on a signal from the touch panel52, and outputs the data to the UI controller75. The touch position data represents, for example, the coordinates of a position on the input surface of the touch panel52at which an input operation is performed.

The analog stick53outputs, to the UI controller75, stick data representing a direction and an amount in which the stick portion operated with a finger of the user has been slid (or tilted). The operation button54outputs, to the UI controller75, operation button data representing the input state of each of the operation buttons54A to54L (e.g., whether the button is pressed).
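As a non-limiting illustration, stick data representing a direction and an amount might be derived from a raw two-axis reading as sketched below. The raw value range (-1.0 to 1.0), the dead-zone threshold, and the function name are assumptions made for this sketch; the present specification does not define the format of the stick data.

```python
import math

def stick_data(raw_x, raw_y, dead_zone=0.1):
    """Return (direction_radians, amount) for a raw stick reading.

    raw_x and raw_y are assumed to lie in [-1.0, 1.0]. dead_zone is a
    hypothetical threshold below which the stick is treated as centered.
    """
    # Amount: how far the stick portion has been slid (or tilted), capped at 1.0.
    amount = min(math.hypot(raw_x, raw_y), 1.0)
    if amount < dead_zone:
        return 0.0, 0.0
    # Direction: angle of the displacement, measured from the +x axis.
    return math.atan2(raw_y, raw_x), amount
```

For example, a reading of (0.0, 0.5) would yield a direction of 90 degrees (straight up in this assumed convention) and an amount of 0.5.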

The magnetic sensor72detects an azimuth by sensing the magnitude and direction of the magnetic field. Azimuth data representing the detected azimuth is output to the UI controller75. The UI controller75outputs a control instruction for the magnetic sensor72to the magnetic sensor72. While there are sensors using a magnetic impedance (MI) element, a fluxgate sensor, a Hall element, a giant magneto-resistive (GMR) element, a tunnel magneto-resistance (TMR) element, an anisotropic magneto-resistive (AMR) element, etc., the magnetic sensor72may be any sensor as long as the sensor can detect the azimuth. Strictly speaking, in a place where there is a magnetic field other than the geomagnetic field, the obtained azimuth data does not represent the azimuth. Nevertheless, if the terminal device7moves, the azimuth data changes, and it is therefore possible to calculate a change in the attitude of the terminal device7.
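The use of azimuth data to calculate a change in attitude can be sketched as follows. This is a minimal example under stated assumptions: the horizontal field components mx and my, the degree units, and both function names are hypothetical and are not taken from the present specification.

```python
import math

def azimuth_deg(mx, my):
    """Azimuth in degrees, in [0, 360), from two horizontal field components.

    The axis convention (angle measured from the +x axis) is an assumption.
    """
    return math.degrees(math.atan2(my, mx)) % 360.0

def azimuth_change_deg(prev_deg, curr_deg):
    """Signed smallest change between two azimuths, in [-180, 180)."""
    return (curr_deg - prev_deg + 180.0) % 360.0 - 180.0
```

Because only the difference between successive azimuths is used, a constant disturbance of the magnetic field affects the absolute azimuth but largely cancels out of the calculated change.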

The acceleration sensor 73 is provided inside the housing 50 and detects the magnitude of a linear acceleration along each of the directions of the three axes (the X-, Y-, and Z-axes shown in (a) of FIG. 5). Specifically, the acceleration sensor 73 detects the magnitude of the linear acceleration along each of the axes, where the X-axis lies in a longitudinal direction of the housing 50, the Y-axis lies in a width direction of the housing 50, and the Z-axis lies in a direction vertical to the surface of the housing 50. Acceleration data representing the detected acceleration is output to the UI controller 75. The UI controller 75 outputs a control instruction for the acceleration sensor 73 to the acceleration sensor 73. While the acceleration sensor 73 is assumed to be a capacitive-type MEMS-type acceleration sensor, for example, in the present embodiment, other types of acceleration sensors may be employed in other embodiments. The acceleration sensor 73 may be an acceleration sensor which detects an acceleration or accelerations in one or two axial directions.

The gyroscopic sensor 74 is provided inside the housing 50 and detects angular velocities about the three axes, i.e., the X-, Y-, and Z-axes. Angular velocity data representing the detected angular velocities is output to the UI controller 75. The UI controller 75 outputs a control instruction for the gyroscopic sensor 74 to the gyroscopic sensor 74. The number and combination of gyroscopic sensors used for detecting angular velocities about the three axes may be any number and combination, and the gyroscopic sensor 74 may be formed by a 2-axis gyroscopic sensor and a 1-axis gyroscopic sensor, as is the gyroscopic sensor 48. The gyroscopic sensor 74 may be a gyroscopic sensor which detects an angular velocity or velocities about one axis or two axes.
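One way in which angular velocity data could be accumulated into a change in attitude is sketched below. This is an illustrative assumption only: the per-axis Euler integration shown here is a simplification that is reasonable for small rotations, and the sample period dt, the units, and the function name are hypothetical.

```python
def integrate_angular_velocity(angles, rates, dt):
    """Advance per-axis rotation angles (X, Y, Z) by one sensor sample.

    angles: current accumulated angles, in degrees (assumed unit).
    rates:  angular velocities about the X-, Y-, and Z-axes, in degrees/second.
    dt:     sample period in seconds (hypothetical value chosen by the caller).
    """
    # Simple Euler step per axis; this ignores coupling between axes.
    return tuple(a + w * dt for a, w in zip(angles, rates))
```

For instance, starting from (0, 0, 0) with rates of (90, 0, -45) degrees per second, a 0.5-second step gives (45.0, 0.0, -22.5).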

The UI controller75outputs, to the codec LSI76, operation data including touch position data, stick data, operation button data, azimuth data, acceleration data, and angular velocity data received from the components described above. If another device is connected to the terminal device7via the extension connector58, data representing an operation performed on the other device may be further included in the operation data.
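The contents of the operation data listed above can be pictured as a single record, as in the following sketch. The field names, types, and the bit-field encoding of the operation button data are assumptions made for illustration; the present specification does not prescribe a layout.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass
class TerminalOperationData:
    """Hypothetical container for one sample of terminal operation data."""
    touch_position: Optional[Tuple[int, int]]      # None when nothing is touched
    stick_left: Tuple[float, float]                # stick data for analog stick 53A
    stick_right: Tuple[float, float]               # stick data for analog stick 53B
    buttons: int                                   # assumed: one bit per operation button
    azimuth: float                                 # azimuth data from the magnetic sensor
    acceleration: Tuple[float, float, float]       # X, Y, Z linear acceleration
    angular_velocity: Tuple[float, float, float]   # X, Y, Z angular velocity
    extension: Optional[bytes] = None              # data from a device on the extension connector

def is_pressed(data: TerminalOperationData, button_index: int) -> bool:
    """Return True if the bit for the given button index is set."""
    return bool((data.buttons >> button_index) & 1)
```

Keeping the optional extension data as a separate field with a default of None mirrors the fact that it is included only when another device is connected.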

The codec LSI76is a circuit for performing a compression process on data to be transmitted to the game device3, and a decompression process on data transmitted from the game device3. The LCD51, the camera56, the sound IC78, the wireless module80, the flash memory83, and the infrared communication module82are connected to the codec LSI76. The codec LSI76includes a CPU87and an internal memory88. While the terminal device7does not perform a game process itself, the terminal device7executes programs for management and communication thereof. When the terminal device7is turned on, a program stored in the flash memory83is read out to the internal memory88and executed by the CPU87, whereby the terminal device7is started up. Some area of the internal memory88is used as a VRAM for the LCD51.

The camera56captures an image and outputs the captured image data to the codec LSI76in accordance with an instruction from the game device3. A control instruction for the camera56, such as an image capturing instruction, is output from the codec LSI76to the camera56.

The sound IC78is a circuit which is connected to the speaker77and the microphone79and controls input/output of audio data to/from the speaker77and the microphone79. That is, when audio data is received from the codec LSI76, the sound IC78outputs an audio signal obtained by performing D/A conversion on the audio data to the speaker77, which in turn outputs sound. The microphone79detects sound entering the terminal device7(the voice of the user, etc.), and outputs an audio signal representing the sound to the sound IC78. The sound IC78performs A/D conversion on the audio signal from the microphone79, and outputs audio data in a predetermined format to the codec LSI76.

The codec LSI76transmits image data from the camera56, audio data from the microphone79, and operation data (terminal operation data) from the UI controller75to the game device3via the wireless module80. In the present embodiment, the codec LSI76performs a compression process similar to that of the codec LSI27on image data and audio data. The terminal operation data and the compressed image data and audio data are output, as transmit data, to the wireless module80. The antenna81is connected to the wireless module80. The wireless module80transmits the transmit data to the game device3via the antenna81. The wireless module80has a function similar to that of the terminal communication module28of the game device3. That is, the wireless module80has a function of connecting to a wireless LAN by a scheme in conformity with the IEEE 802.11n standard, for example. The transmitted data may or may not be encrypted as necessary.

As described above, the transmit data transmitted from the terminal device 7 to the game device 3 includes operation data (terminal operation data), image data, and audio data. When another device is connected to the terminal device 7 via the extension connector 58, data received from the other device may also be contained in the transmit data. The infrared communication module 82 establishes infrared communication in conformity with the IrDA standard, for example, with another device. The codec LSI 76 may transmit, to the game device 3, data received via infrared communication while the data is contained in the transmit data as necessary.

As described above, compressed image data and audio data are transmitted from the game device 3 to the terminal device 7. These pieces of data are received by the codec LSI 76 via the antenna 81 and the wireless module 80. The codec LSI 76 decompresses the received image data and audio data. The decompressed image data is output to the LCD 51, which in turn displays the image. That is, the codec LSI 76 (the CPU 87) displays the received image data on the display section. The decompressed audio data is output to the sound IC 78, which in turn causes the speaker 77 to emit sound.

[5. General Description of Game Process]

Next, a game process executed in the game system1of the present embodiment will be generally described. A game in the present embodiment is played by a plurality of players. In the present embodiment, one terminal device7and a plurality of controllers5are connected to the game device3via wireless communication. In the game of the present embodiment, the maximum number of controllers5which are allowed to connect to the game device3is three.

In the description that follows, the game of the present embodiment is assumed to be played by four players: three first players (first players A-C) who operate the controllers 5 (controllers 5a-5c) and one second player who operates the terminal device 7.

FIG. 8is a non-limiting example diagram showing an example television game image displayed on the television2.FIG. 9is a non-limiting example diagram showing an example terminal game image displayed on the LCD51of the terminal device7.

As shown in FIG. 8, the screen of the television 2 is divided into four equal regions, in which images 90a, 90b, 90c, and 90d are displayed. As shown in FIG. 8, the television 2 displays first characters 91a, 91b, and 91c, and a second character 92. A plurality of enemy characters (93a-93c) are also displayed on the television 2.

The first character91ais a virtual character which is provided in a game space (a three-dimensional (or two-dimensional) virtual world) and is operated by the first player A. The first character91aholds a sword object95aand attacks the enemy character93using the sword object95a. The first character91bis a virtual character which is provided in the game space and is operated by the first player B. The first character91bholds a sword object95band attacks the enemy character93using the sword object95b. The first character91cis a virtual character which is provided in the game space and is operated by the first player C. The first character91cholds a sword object95cand attacks the enemy character93using the sword object95c. The second character92is a virtual character which is provided in the game space and is operated by the second player. The second character92holds a bow object96and an arrow object97and attacks the enemy character93by shooting the arrow object97in the game space. The enemy character93is a virtual character which is controlled by the game device3.

In the game of the present embodiment, the first players A-C and the second player move in the game space while cooperating with each other to kill or beat the enemy character93. Specifically, the player characters (91a-91cand92) move from a game start position to a game end position in the game space while killing or beating the enemy character93.

As shown in FIG. 8, the television 2 displays the images 90a-90d in the four equal regions (upper left, lower left, upper right, and lower right regions) into which the screen is divided. Specifically, the upper left region of the screen shows the image 90a which is an image of the game space as viewed from directly behind the first character 91a which is operated by the first player A using the controller 5a. The image 90a of the game space is captured by a first virtual camera A which is set based on a position and an orientation in the game space of the first character 91a. A shooting direction of the first virtual camera A is the same as the orientation in the game space of the first character 91a. The upper right region of the screen shows the image 90b which is an image of the game space as viewed from directly behind the first character 91b which is operated by the first player B using the controller 5b. The image 90b of the game space is captured by a first virtual camera B which is set based on a position and an orientation in the game space of the first character 91b. A shooting direction of the first virtual camera B is the same as the orientation in the game space of the first character 91b. The lower left region of the screen shows the image 90c which is an image of the game space as viewed from directly behind the first character 91c which is operated by the first player C using the controller 5c. The image 90c of the game space is captured by a first virtual camera C which is set based on a position and an orientation in the game space of the first character 91c. A shooting direction of the first virtual camera C is the same as the orientation in the game space of the first character 91c. The lower right region of the screen shows the image 90d which is an image of the game space as viewed from diagonally behind the second character 92 which is operated by the second player using the terminal device 7. The image 90d of the game space is captured by a second virtual camera which is set based on a position and an orientation in the game space of the second character 92. The second virtual camera is located at a predetermined position at the right rear of the second character 92 (above the vicinity of the ground of the game space). Therefore, the image 90d captured by the second virtual camera is an image of the game space containing the second character 92 as viewed from diagonally behind the second character and above.
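The division of the screen into four equal regions described above can be sketched as a viewport calculation. The pixel dimensions, the top-left screen origin, and the function name are assumptions for this sketch; only the assignment of the images 90a-90d to the regions follows the description.

```python
def split_screen_viewports(width, height):
    """Return {image label: (x, y, w, h)} for four equal screen regions.

    The origin is assumed to be the top-left corner of the screen.
    """
    half_w, half_h = width // 2, height // 2
    return {
        "90a": (0, 0, half_w, half_h),            # upper left: first character 91a
        "90b": (half_w, 0, half_w, half_h),       # upper right: first character 91b
        "90c": (0, half_h, half_w, half_h),       # lower left: first character 91c
        "90d": (half_w, half_h, half_w, half_h),  # lower right: second character 92
    }
```

For a hypothetical 1280x720 screen, each region would be 640x360, with the image 90d placed at (640, 360).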

In the present embodiment, the first virtual camera A is set directly behind the first character91a, and therefore, the first character91ais translucent in the image90a. As a result, the player can visually recognize a character(s) which is located deeper in the depth direction of the screen than the first character91ain the image90a. This holds true for the other images90band90d, etc. The positions of the first virtual cameras A-C may be set at the viewpoints of the first characters91a-91c.

On the other hand, as shown inFIG. 9, the LCD51of the terminal device7displays an image90eof the game space as viewed from the rear of the second character92. The image90eis an image of the game space captured by a third virtual camera which is set based on a position and an orientation in the game space of the second character92. The third virtual camera is located behind the second character92(here, also offset slightly rightwardly from the center line in the front-rear direction of the second character92). An attitude (shooting direction) of the third virtual camera is set based on the orientation in the game space of the second character92. As described below, the orientation of the second character92is changed based on an input operation performed on the left analog stick53A and the attitude of the terminal device7. Therefore, the attitude of the third virtual camera is changed based on the input operation performed on the left analog stick53A and the attitude of the terminal device7.

A position in the game space is represented by coordinate values along the axes of a rectangular coordinate system (xyz coordinate system) which is fixed to the game space. The y-axis extends upward along a direction perpendicular to the ground of the game space, and the x- and z-axes extend in parallel to the ground of the game space. The first characters91a-91cand the second character92move on the ground of the game space (xz-plane) while changing the orientation (direction parallel to the xz-plane). The first character91automatically moves under a predetermined rule. The second character92moves on the ground of the game space while changing the orientation in accordance with an operation performed on the terminal device7. A control for the position and orientation of the second character92will be described below.
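Using this coordinate system, the placement of a virtual camera behind a character and slightly offset to the right (as with the third virtual camera) might be computed as in the sketch below. The yaw convention, the "right" direction, the offset distances, and the function name are all assumptions made for illustration and are not values given in the present specification.

```python
import math

def camera_behind(char_x, char_z, yaw, back=5.0, right=1.0, height=2.0):
    """Return (position, shooting_direction) for a camera behind a character.

    yaw is the character's orientation in the xz-plane, in radians, assumed
    to be measured from the +z axis toward the +x axis. "Right" is assumed
    to be +x when the character faces +z.
    """
    fwd_x, fwd_z = math.sin(yaw), math.cos(yaw)   # unit forward vector
    right_x, right_z = fwd_z, -fwd_x              # unit right vector
    position = (
        char_x - back * fwd_x + right * right_x,
        height,                                   # y-axis points up from the ground
        char_z - back * fwd_z + right * right_z,
    )
    # The shooting direction follows the character's orientation.
    return position, (fwd_x, 0.0, fwd_z)
```

With a character at the origin facing +z (yaw of 0), this sketch places the camera at (1.0, 2.0, -5.0) looking along +z.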

Next, the movement (changes in the orientation and position) of the first character91will be described. The first character91automatically moves on a path which is previously set in the game space.FIG. 10is a non-limiting example diagram showing movement paths of the first characters91a-91c.FIG. 10simply shows the game space as viewed from above. As shown inFIG. 10, the first characters91a-91cand the enemy characters93aand93bare present in the game space. It is assumed that there is the game start position in a lower portion ofFIG. 10and there is the game end position in an upper portion ofFIG. 10. As shown inFIG. 10, paths98a,98b, and98cindicated by dash-dot lines are previously set in the game space. The paths98a-98care movement paths of the characters which are not actually displayed on the screen and are internally set in the game device3.

Specifically, the first character 91a normally automatically moves on the path 98a. The first character 91b normally automatically moves on the path 98b. The first character 91c normally automatically moves on the path 98c. Here, if an enemy character 93 is located within a predetermined range (distance) from the first character 91, the first character 91 leaves the path 98 and approaches or moves toward the enemy character 93 which is present within the predetermined range. For example, as shown in FIG. 10, if a distance between the first character 91a and the enemy character 93a is greater than a predetermined value, the orientation of the first character 91a is set to a direction along the path 98a, and the position of the first character 91a changes with time so that the first character 91a is positioned on the path 98a (time t=t0). In other words, if the distance between the first character 91a and the enemy character 93a is greater than the predetermined value, the first character 91a moves on the path 98a while changing the orientation. When a predetermined period of time has elapsed since time t=t0 (i.e., at time t=t1), the distance between the first character 91a and the enemy character 93a (and 93b) is smaller than or equal to the predetermined value. In this case, the first character 91a begins to move toward the enemy character 93a. That is, the orientation of the first character 91a is changed to a direction from the position of the first character 91a to the position of the enemy character 93a, and the first character 91a moves toward the enemy character 93a. Similarly, since the distance between the first character 91b and the enemy character 93a is smaller than or equal to the predetermined value, the first character 91b also begins to move toward the enemy character 93a. On the other hand, since the distance between the first character 91c and the enemy character 93a is greater than the predetermined value, the first character 91c does not move toward the enemy character 93a and moves on the path 98c.
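The distance test described above can be sketched as follows. Positions are (x, z) pairs on the ground of the game space. Choosing the nearest enemy when several are within range is an assumption of this sketch, as are the function names and the yaw convention (angle measured from the +z axis).

```python
import math

def choose_target(char_pos, path_point, enemies, chase_range):
    """Return the (x, z) point the first character should move toward.

    If any enemy lies within chase_range (the predetermined value), the
    nearest such enemy is returned (an assumed tie-breaking rule);
    otherwise the next point on the character's path is returned.
    """
    in_range = [e for e in enemies if math.dist(char_pos, e) <= chase_range]
    if in_range:
        return min(in_range, key=lambda e: math.dist(char_pos, e))
    return path_point

def orientation_toward(char_pos, target):
    """Orientation in the xz-plane, in radians, from the character to the target."""
    return math.atan2(target[0] - char_pos[0], target[1] - char_pos[1])
```

With a predetermined value of 5, a character at (0, 0) would turn toward an enemy at (3, 4) but would keep following its path if the only enemy were at (6, 8).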

FIG. 11 is a non-limiting example diagram showing details of the movement of the first character 91a. As shown in FIG. 11, a guide object 94a which moves on the path 98a is provided in the game space. A guide object 94 is provided for each first character 91, and is internally set in the game device 3. The guide object 94 is not actually displayed on the screen. The guide object 94a is used to control the movement of the first character 91a, and automatically moves on the path 98a. If no enemy characters 93 are present around the first character 91a, the first character 91a moves, following the guide object 94a. Specifically, the orientation of the first character 91a is set to a direction from the position of the first character 91a toward the position of the guide object 94a, and the position of the first character 91a is changed to be closer to the position of the guide object 94a. On the other hand, if an enemy character 93 is present around the first character 91a, the first character 91a approaches or moves toward the enemy character 93. If the enemy character 93 is killed or beaten, so that no enemy characters 93 are present around the first character 91a, the first character 91a moves again, following the guide object 94a. Specifically, as shown in FIG. 11, at time t=t1, the guide object 94a is present on the path 98a, and the first character 91a is also located on the path 98a. Here, at time t=t1, if the distance between the first character 91a and an enemy character 93 is smaller than or equal to the predetermined value, the first character 91a begins to move toward the enemy character 93. When a predetermined period of time has elapsed since time t=t1, i.e., at time t=t2, the first character 91a leaves the path 98a. Thereafter, when another predetermined period of time has elapsed, the first character 91a moves to a position in the vicinity of the enemy character 93 and fights with the enemy character 93. During this fighting, the guide object 94a moves on the path 98a while the distance between the first character 91a and the guide object 94a is prevented from being greater than or equal to a predetermined value. If the fighting between the first character 91a and the enemy character 93 has continued for a long period of time, the guide object 94a stops. If the first character 91a kills or beats the enemy character 93 at time t=t3, so that there are no enemy characters 93 around the first character 91a, the first character 91a resumes moving, following the guide object 94a (toward the guide object 94a).
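The behavior of the guide object during fighting, where it advances only while it stays closer to its character than a predetermined value, might be sketched as follows. The path parameterization `path_point(s)`, the fixed step size, and all names are hypothetical; the specification does not say how the path or the limit is represented.

```python
import math


def advance_guide(guide_s, char_pos, path_point, step, max_leash):
    """Advance the guide object along the path by one step, if allowed.

    guide_s is the guide's position expressed as a path parameter, and
    path_point(s) maps a parameter to an (x, z) position (both assumed).
    The guide moves only if its distance to the character would remain
    strictly below max_leash; otherwise it waits where it is.
    """
    candidate = guide_s + step
    if math.dist(path_point(candidate), char_pos) < max_leash:
        return candidate
    return guide_s
```

When the character stays in one place to fight, repeated calls move the guide forward until the next step would reach the limit, after which the guide stops. Once the character starts following again and closes the gap, the same call lets the guide resume.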

Thus, each first character 91 normally moves automatically in the game space, following the corresponding guide object 94, and when an enemy character 93 is present within the predetermined range, moves toward the enemy character 93.

FIG. 12 is a non-limiting example diagram showing the image 90a which is displayed in the upper left region of the television 2 when the first character 91a begins to move toward a plurality of enemy characters 93. As shown in FIG. 12, when the distance between the first character 91a and an enemy character 93 is smaller than or equal to the predetermined value and a plurality of enemy characters 93 are present, the image 90a shows a selection object 99a. The selection object 99a is displayed above the head of an enemy character 93a which is to be attacked by the first character 91a. In other words, the selection object 99a indicates a target to be attacked by the first character 91a. The first character 91a automatically approaches or moves toward the enemy character 93a selected by the selection object 99a (without the first player A specifying a direction). When the first player A operates the cross button 32a of the controller 5, the position of the selection object 99a is changed so that the selection object 99a is displayed above the head of another enemy character 93b. In this way, the first player A can switch the attack target from one enemy character 93 to another.
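Switching the attack target with the cross button could be modeled as cycling through the enemy characters in range. The sketch below is an assumption for illustration: the class name, the string identifiers, and the wrap-around order are not specified in the text.

```python
class TargetSelector:
    """Tracks which enemy character the selection object currently marks."""

    def __init__(self, enemy_ids):
        if not enemy_ids:
            raise ValueError("at least one enemy is required")
        self.enemy_ids = list(enemy_ids)
        self.index = 0

    @property
    def target(self):
        """Identifier of the enemy currently marked as the attack target."""
        return self.enemy_ids[self.index]

    def cycle(self, step=1):
        """Move the selection by step entries, wrapping around the list."""
        self.index = (self.index + step) % len(self.enemy_ids)
        return self.target


selector = TargetSelector(["93a", "93b"])
selector.cycle()  # cross button pressed: the selection moves to "93b"
```

The character would then steer toward `selector.target` without the player specifying a direction.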

As shown in FIG. 10, if the distance between the first character 91b and the enemy character 93 is smaller than or equal to the predetermined value, the first character 91b also moves toward the enemy character 93. Although not shown, similar to FIG. 12, the image 90b displayed in the upper right region of the television 2 shows a selection object 99b in addition to the first character 91b and the enemy characters 93a and 93b. In this case, the image 90a also shows the selection object 99b indicating a target to be attacked by the first character 91b. The selection object 99b is displayed in a display form different from that of the selection object 99a. For example, if the first character 91a is displayed in red color, the selection object 99a is displayed in red color, and if the first character 91b is displayed in blue color, the selection object 99b is displayed in blue color. As a result, by viewing the image 90a, the first player A can recognize the attack target of the first character 91a operated by himself or herself and the attack target of the first character 91b operated by the first player B. That is, by viewing the images 90a-90c, each player operating the controller 5 can simultaneously recognize which of the enemy characters 93 is a target to be attacked by himself or herself and which of the enemy characters 93 is a target to be attacked by other players.

The first character 91 and the second character 92 attack the enemy character 93 as follows. That is, the first character 91a attacks the enemy character 93 using the sword object 95a. When the first player A swings the controller 5a, the first character 91a performs a motion of swinging the sword object 95a. Specifically, the attitude in the game space of the sword object 95a is changed, corresponding to a change in the attitude in the real space of the controller 5a. For example, when the first player A swings the controller 5a from left to right, the first character 91a performs a motion of swinging the sword object 95a from left to right. When the sword object 95a is swung, then if an enemy character 93 is present within a short distance (a distance corresponding to the length of the sword object 95a) in front of the first character 91a, the sword object 95a hits the enemy character 93, i.e., an attack is successful. If a predetermined number of attacks on the enemy character 93 are successful, the enemy character 93 is killed or beaten. Similarly, the first characters 91b and 91c attack the enemy character 93 using the sword objects 95b and 95c, respectively.
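One way to read the hit condition (an enemy within sword length and in front of the character) is as a range check combined with an angular check. The sketch below assumes this reading. The cone half-angle, the (x, z) coordinates, and the function name are hypothetical, and the text does not state how "in front" is evaluated.

```python
import math


def sword_hits(char_pos, facing, enemy_pos, sword_length, half_angle_deg=60.0):
    """Return True if a swing would hit the enemy.

    The enemy must be no farther away than sword_length and must lie
    within half_angle_deg of the facing direction. facing must be a
    non-zero (x, z) vector. The cone test is an assumption of this sketch.
    """
    dx = enemy_pos[0] - char_pos[0]
    dz = enemy_pos[1] - char_pos[1]
    dist = math.hypot(dx, dz)
    if dist > sword_length:
        return False
    if dist == 0.0:
        return True
    facing_len = math.hypot(facing[0], facing[1])
    cos_angle = (dx * facing[0] + dz * facing[1]) / (dist * facing_len)
    return cos_angle >= math.cos(math.radians(half_angle_deg))
```

A per-enemy counter incremented on each successful call would then implement the "predetermined number of attacks" rule.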

On the other hand, the second character 92 shoots the arrow object 97 in the game space to attack the enemy character 93. For example, when the second player slides the right analog stick 53B of the terminal device 7 in a predetermined direction (e.g., the down direction) using his or her finger, a circular sight is displayed on the LCD 51 of the terminal device 7. In this case, when the second player releases the right analog stick 53B, the right analog stick 53B returns to the original position (the right analog stick 53B returns to the center position). As a result, the arrow object 97 is shot in the game space from the position of the second character 92 toward the center of the circular sight displayed on the LCD 51. Thus, the second character 92 can attack the enemy character 93 at a long distance from the second character 92 by shooting the arrow object 97. As shown in FIG. 8, the number of remaining arrow objects 97 is displayed on the television 2 (in FIG. 8, the number of remaining arrow objects 97 is four). When the second player performs a predetermined operation on the terminal device 7 (e.g., the back surface of the terminal device 7 is caused to face in a direction toward the ground), the arrow objects 97 are reloaded, and the number of remaining arrow objects 97 becomes a predetermined value.

The enemy character 93 attacks player characters (the first character 91 and the second character 92). If one of the player characters (here, four characters) is killed or beaten by a predetermined number of attacks from the enemy character 93, the game is over. Therefore, the players enjoy playing the game by cooperating with each other to kill or beat the enemy character 93 so that none of the players is killed or beaten by the enemy character 93.

Next, a control of the position and orientation of the second character 92 will be described. The second character 92 moves based on an input operation performed on the left analog stick 53A of the terminal device 7. FIG. 13 is a non-limiting example diagram showing a movement and a rotation of the second character 92 based on an input direction of the left analog stick 53A. In FIG. 13, an AY-axis direction indicates the up direction of the left analog stick 53A (the Y-axis direction in (a) of FIG. 5), and an AX-axis direction indicates the right direction of the left analog stick 53A (the X-axis negative direction in (a) of FIG. 5). Specifically, when the left analog stick 53A is slid in the up direction, the second character 92 moves forward (i.e., moves in the depth direction of the screen of FIG. 9, away from the player). When the left analog stick 53A is slid in the down direction, the second character 92 retreats or moves backward without changing the orientation (i.e., moves in the depth direction of the screen of FIG. 9, toward the player, while facing in the depth direction away from the player). Thus, when the up direction is input using the left analog stick 53A, the second character 92 moves forward, and when the down direction is input using the left analog stick 53A, the second character 92 retreats or moves backward.

The orientation of the second character 92 is changed based on a first input operation and a second input operation. The first input operation is performed on the left analog stick 53A. Specifically, as shown in FIG. 13, when the left analog stick 53A is slid in the right direction, the second character 92 turns clockwise. That is, when the left analog stick 53A is slid in the right direction, the second character 92 rotates clockwise as viewed from above in the game space (in this case, only the orientation of the second character 92 is changed, and the position of the second character 92 is not changed). When the left analog stick 53A is slid in the left direction, the second character 92 turns counterclockwise. When the left analog stick 53A is slid in a diagonal direction, the second character 92 moves while turning clockwise or counterclockwise. For example, when the left analog stick 53A is slid diagonally upward and to the right (in an upper right direction), the second character 92 moves forward while turning clockwise.
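The mapping from the left analog stick to movement and rotation can be sketched as below. The sketch assumes stick values in the range -1 to 1, a heading measured in degrees from the +z axis toward the +x axis (taken here to be clockwise as viewed from above), and constant movement and turn speeds. None of these conventions, names, or parameters come from the specification.

```python
import math


def apply_left_stick(pos, yaw_deg, stick_x, stick_y,
                     move_speed, turn_speed_deg, dt):
    """Update position (x, z) and heading from one frame of stick input.

    stick_x (right positive) only turns the character; stick_y (up
    positive) only moves it forward or backward along its heading.
    Turning before moving within a frame is an assumption of this sketch.
    """
    yaw_deg = (yaw_deg + stick_x * turn_speed_deg * dt) % 360.0
    yaw = math.radians(yaw_deg)
    distance = stick_y * move_speed * dt
    new_pos = (pos[0] + math.sin(yaw) * distance,
               pos[1] + math.cos(yaw) * distance)
    return new_pos, yaw_deg
```

A purely horizontal input changes only the heading, a purely vertical input changes only the position, and a diagonal input does both, which matches the three cases described above.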

The second input operation is performed by changing the attitude in the real space of the terminal device 7. That is, the orientation of the second character 92 is changed based on a change in the attitude of the terminal device 7. FIG. 14 is a non-limiting example diagram showing the terminal device 7 as viewed from above in the real space, indicating a change in the attitude in the real space of the terminal device 7. As shown in FIG. 14, when the terminal device 7 is rotated about the Y-axis (rotated about the axis of the gravity direction from the attitude of FIG. 6), the orientation in the game space of the second character 92 is changed based on the amount of the rotation. For example, when the terminal device 7 is rotated clockwise by a predetermined angle as viewed from above in the real space, the second character 92 is also rotated clockwise by the predetermined angle as viewed from above in the game space. For example, when the second player causes the back surface of the terminal device 7 (a surface opposite to the surface on which the LCD 51 is provided) to face in a right direction, the second character 92 faces in a right direction. In contrast, when the back surface of the terminal device 7 is caused to face in an up direction (the terminal device 7 is rotated about the X-axis), the orientation of the second character 92 is not changed. In other words, the orientation of the second character 92 is set to be parallel to the ground (xz-plane) of the game space, and therefore, even when the back surface of the terminal device 7 is caused to face in an up direction, the second character 92 does not face in an up direction in the game space. In another embodiment, the orientation of the second character 92 may be changed along an up-down direction (a direction parallel to the y-axis) in the game space.
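The rule that only rotation about the vertical axis affects the second character can be sketched by projecting the direction of the back surface of the terminal device onto the horizontal plane and comparing headings. The vector representation of the attitude, the axis convention (y vertical, heading measured from +z toward +x), and all function names are assumptions for illustration. How the attitude itself is obtained is outside this sketch.

```python
import math


def heading_deg(direction):
    """Heading of a 3D (x, y, z) direction after dropping the vertical part."""
    x, _, z = direction
    return math.degrees(math.atan2(x, z))


def heading_change_deg(prev_dir, cur_dir):
    """Signed change in heading, wrapped to the range [-180, 180)."""
    delta = heading_deg(cur_dir) - heading_deg(prev_dir)
    return (delta + 180.0) % 360.0 - 180.0


def rotate_character(char_yaw_deg, prev_dir, cur_dir):
    """Turn the character by the same heading change as the device."""
    return (char_yaw_deg + heading_change_deg(prev_dir, cur_dir)) % 360.0
```

Tilting the device upward changes only the vertical component of the direction vector, so the computed heading change is zero and the character keeps its orientation. A direction pointing straight up or down has no defined heading and would need separate handling in practice.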

Thus, the second character 92 is caused to move based on the up and down directions input using the left analog stick 53A (a sliding operation in the up and down directions). The orientation of the second character 92 is changed based on the left and right directions input using the left analog stick 53A and a change in the attitude of the terminal device 7.

The attitudes of the second and third virtual cameras are changed based on a change in the orientation of the second character 92. Specifically, the shooting direction vector of the second virtual camera is set to have a fixed angle with respect to the orientation (front direction vector) of the second character 92. As a result, the television 2 displays the image 90d which is an image of the game space containing the second character 92 which is captured from a position at the right rear of and above the second character 92. The orientation in the xz-plane of the shooting direction vector of the third virtual camera (the orientation of a vector obtained by projecting the shooting direction vector onto the xz-plane of the game space) is set to be the same as the orientation of the second character 92. The orientation in the up-down direction (direction parallel to the y-axis) of the shooting direction vector of the third virtual camera is set based on the attitude of the terminal device 7. For example, when the back surface of the terminal device 7 is caused to face upward in the real space, the shooting direction vector of the third virtual camera is also set to face upward in the game space. Therefore, when the second player rotates the terminal device 7 clockwise as shown in FIG. 14, the third virtual camera is also rotated clockwise, so that an image of a right portion of the game space before the rotation is displayed on the LCD 51 of the terminal device 7. When the second player causes the back surface of the terminal device 7 to face upward in the real space, an image of an upper region of the game space is displayed on the LCD 51 of the terminal device 7.
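The construction of the shooting direction vector of the third virtual camera, with its horizontal component taken from the second character and its vertical component taken from the terminal device, can be written as a sketch. The angle representation, the axis convention (y up, heading measured from +z toward +x), and the function name are assumptions. The text does not give a formula.

```python
import math


def third_camera_direction(char_yaw_deg, device_pitch_deg):
    """Unit (x, y, z) shooting direction for the third virtual camera.

    The projection onto the xz-plane points along the character's
    heading; the elevation above that plane follows the device pitch.
    """
    yaw = math.radians(char_yaw_deg)
    pitch = math.radians(device_pitch_deg)
    horizontal = math.cos(pitch)
    return (math.sin(yaw) * horizontal,
            math.sin(pitch),
            math.cos(yaw) * horizontal)
```

With a pitch of zero the camera looks along the character's front direction, and raising the pitch tilts the view upward without changing the heading seen from above.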

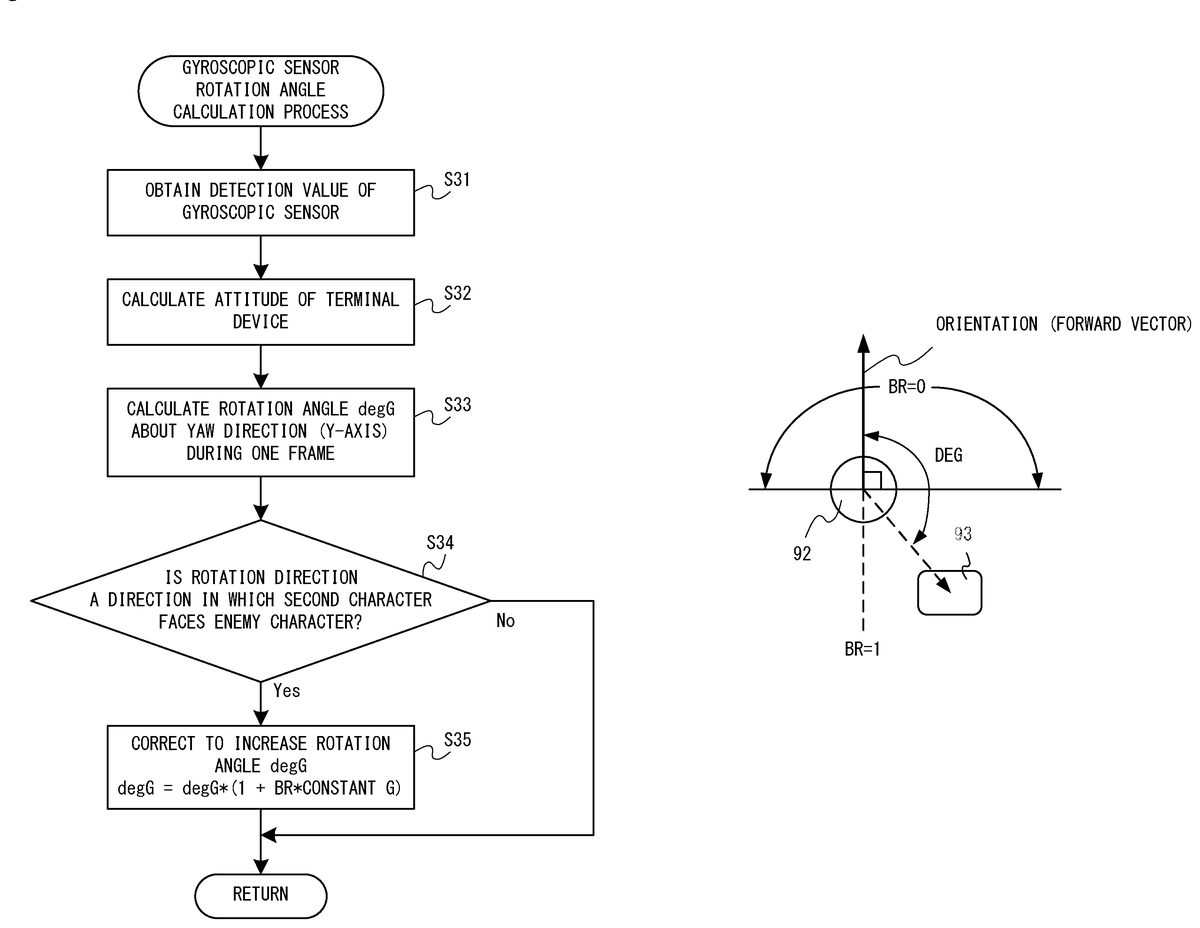

Here, as shown in FIG. 15, when the enemy character 93 is present directly behind the second character 92, the second player tries to cause the second character 92 to turn to face in the opposite direction from the original in order to attack the enemy character 93. FIG. 15 is a non-limiting example diagram showing the image 90d displayed in the lower right region of the television 2 when the enemy character 93 is present directly behind the second character 92. In order to cause the second character 92 to turn to face in the opposite direction from the original, the second player operates the left analog stick 53A of the terminal device 7 while viewing the screen of the television 2 or the screen of the terminal device 7. In this case, the enemy character 93 is displayed below the second character 92 on the screen of the television 2, and therefore, the second player slides the left analog stick 53A in the down direction. As described above, when the input operation performed on the left analog stick 53A is in the down direction, the second character 92 retreats or moves backward (the position of the second character 92 moves backward while the orientation of the second character 92 is not changed). Alternatively, to cause the second character 92 to turn to face in the opposite direction from the original, the second player has to rotate the terminal device 7 to a large extent (about the Y-axis). Thus, when the second character 92 is caused to turn to face in the opposite direction from the original, the difficulty of the operation may increase.