U.S. Pat. No. 8,593,464

SYSTEM AND METHOD FOR CONTROLLING ANIMATION BY TAGGING OBJECTS WITHIN A GAME ENVIRONMENT

Assignee: Nintendo Co Ltd

Issue Date: October 22, 2012

Illustrative Figure

Abstract

A game developer can “tag” an item in the game environment. When an animated character walks near the “tagged” item, the animation engine can cause the character's head to turn toward the item, and mathematically computes what needs to be done in order to make the action look real and normal. The tag can also be modified to elicit an emotional response from the character. For example, a tagged enemy can cause fear, while a tagged inanimate object may cause only indifference or indifferent interest.

Description

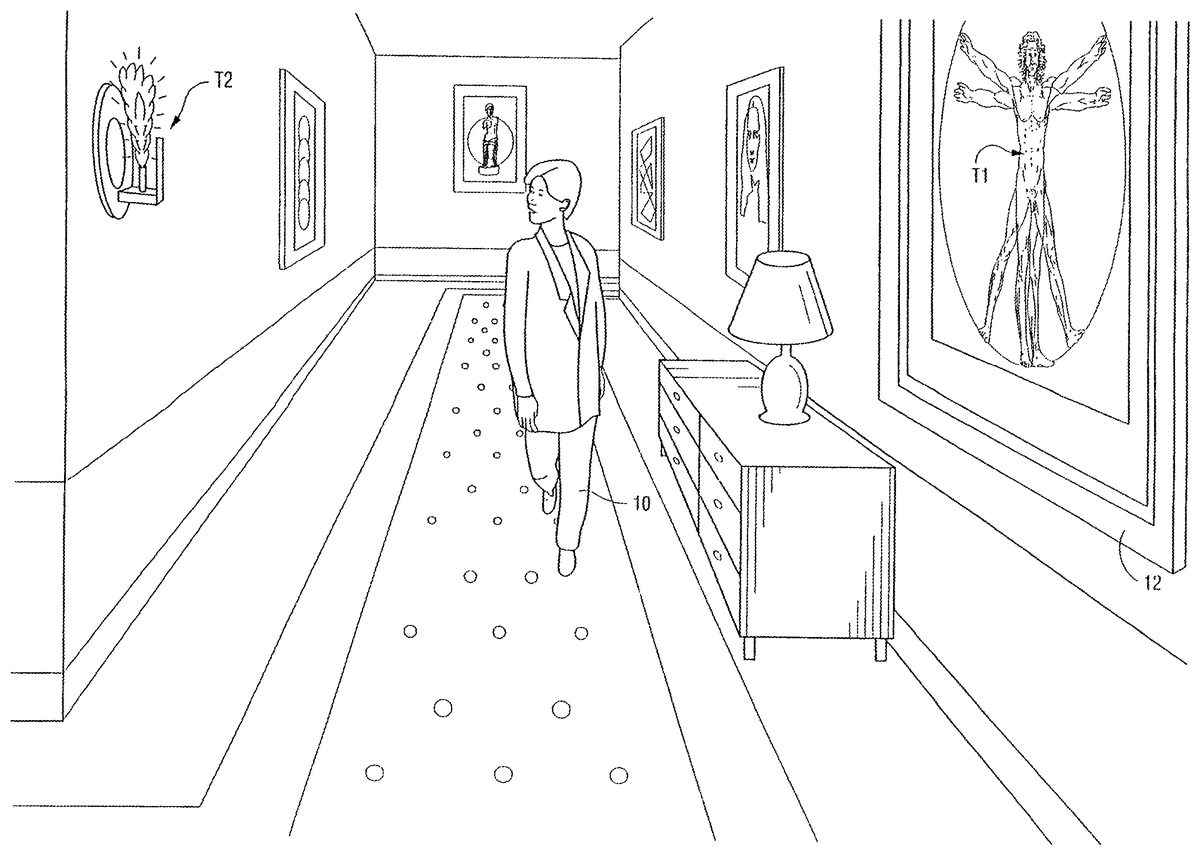

DETAILED DESCRIPTION OF PREFERRED EMBODIMENTS

FIGS. 1-5 show example screen effects provided by a preferred exemplary embodiment of this invention. These Figures show an animated character 10 moving through an illustrative video game environment such as a corridor of a large house or castle. Hanging on the wall 11 of the corridor is a 3D object 12 representing a painting. This object 12 is “tagged” electronically to indicate that character 10 should pay attention to it when the character is within a certain range of the painting. As the character 10 moves down the corridor (e.g., in response to user manipulation of a joystick or other interactive input device) (see FIG. 1) and into proximity to tagged object 12, the character's animation is dynamically adapted so that the character appears to be paying attention to the tagged object by, for example, facing the tagged object 12 (see FIG. 2). In the example embodiment, the character 10 continues to face and pay attention to the tagged object 12 while it remains in proximity to the tagged object (see FIG. 3). As the character moves out of proximity to the tagged object 12 (see FIG. 4), it ceases paying attention to the tagged object by ceasing to turn towards it. Once the animated character 10 is more than a predetermined virtual distance away from the tagged object 12, the character no longer pays attention to the object and the object no longer influences the character.

When the character first enters the corridor, as shown in FIG. 1, the character is animated using an existing or generic animation that simply shows the character walking. However, when the tag becomes active, i.e., the character approaches the painting 12, the reactive animation engine of the instant invention adapts or modifies the animation so that the character pays attention to the painting in a natural manner. The animation is preferably adapted from the existing animation by defining key frames and using the tag information (including the location and type of tag). More particularly, inbetweening and inverse kinematics are used to generate (i.e., calculate) a dynamic animation sequence for the character using the key frames and based on the tag. The dynamic animation sequence (rather than the existing or generic animation) is then displayed while the character is within proximity to the tag. However, when the tag is no longer active, the character's animation returns to the stored or canned animation (e.g., a scripted and stored animation that simply shows the character walking down the hallway and looking straight ahead).

In the screen effects shown in FIGS. 1-5, the object 12 is tagged with a command for character 10 to pay attention to the object but with no additional command eliciting emotion. Thus, FIGS. 1-5 show the character 10 paying attention to the tagged object 12 without any change of emotion. However, in accordance with the invention, it is also possible to tag object 12 with additional data or command(s) that cause character 10 to do something in addition to (or instead of) paying attention to the tagged object. In one illustrative example, the tagged object 12 elicits an emotion or other reaction (e.g., fear, happiness, belligerence, submission, etc.). In other illustrative examples, the tagged object can repel rather than attract character 10, causing the character to flee, for example. Any physical, emotional or combined reaction can be defined by the tag, such as facial expressions or posture change, as well as changes in any body part of the character (e.g., position of head, shoulders, feet, arms, etc.).

FIG. 5A is an example conceptual drawing showing the theory of operation of the preferred embodiment. Referring to FIG. 5A, the “tag” T associated with an item in the 3D world is specified based on its coordinates in 3D space. Thus, to tag a particular object 12, one specifies the location of a “tag point” or “tag surface” in 3D space to coincide with the position of a desired object in 3D space. FIG. 5A shows a “tag” T (having a visible line from the character to the tag for illustration purposes) defined on the painting in the 3D virtual world. In accordance with the invention, the animated character 10 automatically responds by turning its head toward the “tag”, thereby appearing to pay attention to the tagged object. The dotted line in FIG. 5A illustrates a vector from the center of the character 10 to the tag T. The animation engine can calculate this vector based on the relative positions of character 10 and tag T in 3D space and use the vector in connection with dynamically animating the character.
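As a minimal sketch of the vector calculation described above, the following Python function derives head-turn angles from the positions of the character and the tag. The function name `look_at_angles` and the axis convention (Y up, yaw measured from the +Z direction) are assumptions made for illustration and are not specified in the description.

```python
import math

def look_at_angles(character_pos, tag_pos):
    """Return (yaw, pitch) in degrees for the vector from character to tag.

    Assumed convention (not from the description): Y is up, yaw is the
    rotation about the Y axis measured from +Z, and pitch is the
    elevation above the horizontal X-Z plane.
    """
    # Vector from the character's center to the tag point.
    dx = tag_pos[0] - character_pos[0]
    dy = tag_pos[1] - character_pos[1]
    dz = tag_pos[2] - character_pos[2]

    yaw = math.degrees(math.atan2(dx, dz))
    pitch = math.degrees(math.atan2(dy, math.hypot(dx, dz)))
    return yaw, pitch
```

An animation engine could feed such angles to the head joint as a target orientation; how the description's engine actually consumes the vector is not stated beyond its use in dynamically animating the character.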

In accordance with a preferred embodiment of the invention, one can place any number of tags T at any number of locations within the 3D space. Any number of animated characters 10 (or any subsets of such characters, with different characters potentially being sensitive to different tags T) can react to the tags as they travel through the 3D world.

FIGS. 6-9 illustrate another embodiment of the invention, wherein two tags are defined in the corridor through which the character is walking. A first tag T1 is provided on the painting as described above in connection with the display sequence of FIGS. 1-5. However, in this embodiment, a second tag T2 is provided on the wall mounted candle. This second tag is different from the first tag in that it is defined to only cause a reaction from the character when the candle is animated to flare up like a powerful torch (see FIG. 7A). The second tag T2 is given a higher priority than the first tag T1. The reactive animation engine is programmed to only allow the character to react to one tag at a time, that one tag being the tag that has the highest priority of any active tags. As a result, when the character 10 is walking down the corridor and gets within proximity of the two tags, the second tag is not yet active because the candle is not flaring up. Thus, the character turns to look at the only active tag T1 (i.e., the painting) (see FIG. 6). However, when the candle flares up, the second tag T2, which has a higher priority than T1, also becomes active, thereby causing the character to stop looking at the painting and turn its attention to the flaring torch (i.e., the active tag with the highest priority) (see FIG. 7A). Once the torch stops flaring and returns to a normal candle, the second tag T2 is no longer active and the reactive animation engine then causes the character to again turn its attention to the painting (i.e., the only active tag) (see FIG. 7B). Once the character begins to move past the painting, the character's head then begins to turn naturally back (see FIG. 8) to the forward or uninterested position corresponding to the stored animation (see FIG. 9). Thus, in accordance with the invention, the character responds to active tags based on their assigned priority. In this way, the character is made to look very realistic and appears as if it has come to life within its environment. As explained above, the reactive animation engine E dynamically generates the character's animation to make the character react in a priority-based manner to the various tags that are defined in the environment.

Example Illustrative Implementation

FIG. 10A shows an example interactive 3D computer graphics system 50. System 50 can be used to play interactive 3D video games with interesting animation provided by a preferred embodiment of this invention. System 50 can also be used for a variety of other applications.

In this example, system 50 is capable of processing, interactively in real time, a digital representation or model of a three-dimensional world. System 50 can display some or all of the world from any arbitrary viewpoint. For example, system 50 can interactively change the viewpoint in response to real time inputs from handheld controllers 52a, 52b or other input devices. This allows the game player to see the world through the eyes of someone within or outside of the world. System 50 can be used for applications that do not require real time 3D interactive display (e.g., 2D display generation and/or non-interactive display), but the capability of displaying quality 3D images very quickly can be used to create very realistic and exciting game play or other graphical interactions.

To play a video game or other application using system 50, the user first connects a main unit 54 to his or her color television set 56 or other display device by connecting a cable 58 between the two. Main unit 54 produces both video signals and audio signals for controlling color television set 56. The video signals control the images displayed on the television screen 59, and the audio signals are played back as sound through television stereo loudspeakers 61L, 61R.

The user also needs to connect main unit 54 to a power source. This power source may be a conventional AC adapter (not shown) that plugs into a standard home electrical wall socket and converts the house current into a lower DC voltage signal suitable for powering the main unit 54. Batteries could be used in other implementations.

The user may use hand controllers 52a, 52b to control main unit 54. Controls 60 can be used, for example, to specify the direction (up or down, left or right, closer or further away) that a character displayed on television 56 should move within a 3D world. Controls 60 also provide input for other applications (e.g., menu selection, pointer/cursor control, etc.). Controllers 52 can take a variety of forms. In this example, the controllers 52 shown each include controls 60 such as joysticks, push buttons and/or directional switches. Controllers 52 may be connected to main unit 54 by cables or wirelessly via electromagnetic (e.g., radio or infrared) waves.

To play an application such as a game, the user selects an appropriate storage medium 62 storing the video game or other application he or she wants to play, and inserts that storage medium into a slot 64 in main unit 54. Storage medium 62 may, for example, be a specially encoded and/or encrypted optical and/or magnetic disk. The user may operate a power switch 66 to turn on main unit 54 and cause the main unit to begin running the video game or other application based on the software stored in the storage medium 62. The user may operate controllers 52 to provide inputs to main unit 54. For example, operating a control 60 may cause the game or other application to start. Moving other controls 60 can cause animated characters to move in different directions or change the user's point of view in a 3D world. Depending upon the particular software stored within the storage medium 62, the various controls 60 on the controller 52 can perform different functions at different times.

As also shown in FIG. 10A, mass storage device 62 stores, among other things, a tag-based animation engine E used to animate characters based on tags stored in the character's video game environment. The details of preferred embodiment tag-based animation engine E will be described shortly. Such tag-based animation engine E in the preferred embodiment makes use of various components of system 50 shown in FIG. 10B including: a main processor (CPU) 110, a main memory 112, and a graphics and audio processor 114.

In this example, main processor 110 (e.g., an enhanced IBM PowerPC 750) receives inputs from handheld controllers 52 (and/or other input devices) via graphics and audio processor 114. Main processor 110 interactively responds to user inputs, and executes a video game or other program supplied, for example, by external storage media 62 via a mass storage access device 106 such as an optical disk drive. As one example, in the context of video game play, main processor 110 can perform collision detection and animation processing in addition to a variety of interactive and control functions.

In this example, main processor 110 generates 3D graphics and audio commands and sends them to graphics and audio processor 114. The graphics and audio processor 114 processes these commands to generate interesting visual images on display 59 and interesting stereo sound on stereo loudspeakers 61R, 61L or other suitable sound-generating devices. Main processor 110 and graphics and audio processor 114 also perform functions to support and implement the preferred embodiment tag-based animation engine E based on instructions and data E′ relating to the engine that are stored in DRAM main memory 112 and mass storage device 62.

As further shown in FIG. 10B, example system 50 includes a video encoder 120 that receives image signals from graphics and audio processor 114 and converts the image signals into analog and/or digital video signals suitable for display on a standard display device such as a computer monitor or home color television set 56. System 50 also includes an audio codec (compressor/decompressor) 122 that compresses and decompresses digitized audio signals and may also convert between digital and analog audio signaling formats as needed. Audio codec 122 can receive audio inputs via a buffer 124 and provide them to graphics and audio processor 114 for processing (e.g., mixing with other audio signals the processor generates and/or receives via a streaming audio output of mass storage access device 106). Graphics and audio processor 114 in this example can store audio related information in an audio memory 126 that is available for audio tasks. Graphics and audio processor 114 provides the resulting audio output signals to audio codec 122 for decompression and conversion to analog signals (e.g., via buffer amplifiers 128L, 128R) so they can be reproduced by loudspeakers 61L, 61R.

Graphics and audio processor 114 has the ability to communicate with various additional devices that may be present within system 50. For example, a parallel digital bus 130 may be used to communicate with mass storage access device 106 and/or other components. A serial peripheral bus 132 may communicate with a variety of peripheral or other devices including, for example: a programmable read-only memory and/or real time clock 134, a modem 136 or other networking interface (which may in turn connect system 50 to a telecommunications network 138 such as the Internet or other digital network from/to which program instructions and/or data can be downloaded or uploaded), and flash memory 140.

A further external serial bus 142 may be used to communicate with additional expansion memory 144 (e.g., a memory card) or other devices. Connectors may be used to connect various devices to busses 130, 132, 142. For further details relating to system 50, see for example U.S. patent application Ser. No. 09/723,335 filed Nov. 28, 2000 entitled “EXTERNAL INTERFACES FOR A 3D GRAPHICS SYSTEM” incorporated by reference herein.

FIG. 11 shows an example simplified illustration of a flowchart of the tag-based animation engine E of the instant invention. Animation engine E may be implemented for example by software executing on main processor 110. Tag-based animation engine E may first initialize a 3D world and animation game play (block 1002), and may then accept user inputs supplied for example via handheld controller(s) 52 (block 1004). In response to such user inputs, engine E may animate one or more animated characters 10 in a conventional fashion to cause such characters to move through the 3D world based on the accepted user inputs (block 1006). Tag-based animation engine E also detects whether any moving character is in proximity to a tag T defined within the 3D world (decision block 1008). If a character 10 is in proximity to a tag T, the animation engine E reads the tag and computes (e.g., through mathematical computation and associated modeling, such as by using inbetweening and inverse kinematics) a dynamic animation sequence for the character 10 to make the character realistically turn toward or otherwise react to the tag (block 1010). Processing continues (blocks 1004-1010) until the game is stopped or some other event causes an interruption.
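The proximity decision of block 1008 can be sketched as a simple distance test. In this illustrative Python example, the dictionary keys `name`, `position` and `proximity`, the function name `tags_in_proximity`, and the use of a spherical range around each tag are all assumptions; the description does not say how proximity is measured.

```python
import math

def tags_in_proximity(character_pos, tags):
    """Return the tags whose proximity range contains the character.

    Each tag is a dict with hypothetical keys 'position' (x, y, z) and
    'proximity' (a radius in world units). A Euclidean distance test is
    assumed here for illustration only.
    """
    nearby = []
    for tag in tags:
        if math.dist(character_pos, tag["position"]) <= tag["proximity"]:
            nearby.append(tag)
    return nearby


# Hypothetical corridor: a painting close by and a candle further along.
corridor_tags = [
    {"name": "painting", "position": (0.0, 2.0, 5.0), "proximity": 4.0},
    {"name": "candle", "position": (0.0, 2.0, 20.0), "proximity": 4.0},
]
near = tags_in_proximity((0.0, 0.0, 3.0), corridor_tags)
```

A game loop in the style of blocks 1004-1010 could call such a test once per frame after moving the character, then hand any nearby tags to the animation step.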

FIG. 12 shows an illustrative exemplary data structure 1100 for a tag T. In the example shown, data structure 1100 includes a tag ID field 1102 that identifies the tag; three-dimensional (i.e., X, Y, Z) positional coordinate fields 1104, 1106, 1108 (plus further optional additional information if necessary) specifying the position of the tag in the 3D world; a proximity field 1110 (if desired) specifying how close character 10 must be to the tag in order to react to the tag; a type of tag or reaction code 1112 specifying the type of reaction to be elicited (e.g., pay attention to the tag, flee from the tag, react with a particular emotion, etc.); and a priority field 1114 that defines a priority for the tag relative to other tags that may be activated at the same time as the tag.
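One possible in-memory form of the tag data structure is sketched below in Python. The class and field names, the `Reaction` enumeration and the concrete types are hypothetical choices for illustration; the description names the fields but does not prescribe an encoding.

```python
from dataclasses import dataclass
from enum import Enum


class Reaction(Enum):
    """Hypothetical reaction codes, based on the examples in the text."""
    PAY_ATTENTION = 1
    FLEE = 2
    EMOTION = 3


@dataclass(frozen=True)
class Tag:
    tag_id: int          # identifies the tag (tag ID field 1102)
    x: float             # positional coordinate (field 1104)
    y: float             # positional coordinate (field 1106)
    z: float             # positional coordinate (field 1108)
    proximity: float     # how close the character must be (field 1110)
    reaction: Reaction   # type of reaction to elicit (code 1112)
    priority: int        # priority relative to other active tags


# Example: a tag on the painting that asks the character to look at it.
painting_tag = Tag(tag_id=1, x=0.0, y=2.0, z=5.0,
                   proximity=4.0, reaction=Reaction.PAY_ATTENTION, priority=1)
```

Making the record immutable (`frozen=True`) is a design choice of this sketch, reflecting that tags are described as being defined by the game's designers rather than changed during play.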

FIG. 13 shows a more detailed exemplary flow chart of the steps performed by the reactive animation engine E of the instant invention. Once the 3D world and game play are initialized (step 1302), the system accepts user inputs to control the character within the environment in a conventional manner (step 1304). The system initially uses scripted or canned animation that is provided with the game for the character (step 1306). The animation engine checks the character's position relative to the tags that have been defined in the 3D world by the designers of the game (step 1308). If the character is not within proximity to a tag, then the standard animation continues for the character (step 1310). However, when a tag is detected (step 1308), the tag is read to determine the type of reaction that the tag is supposed to elicit from the character and the exact location of the tag in the 3D world (step 1312). The animation engine E then uses key frames (some or all of which may come from the scripted animation) and the tag information to dynamically adapt or alter the animation of the character to the particular tag encountered (step 1314). The dynamic animation is preferably generated using a combination of inbetweening and inverse kinematics to provide a smooth and realistic animation showing a reaction to the tag. Particular facial animations may also be used to give the character facial emotions or reactions to the tag. These facial animations can be selected from a defined pool of facial animations, and inbetweening or other suitable animation techniques can be used to further modify or dynamically change the facial expressions of the character in response to the tag. The dynamic animation then continues until the tag is no longer active (step 1316), as a result of, for example, the character moving out of range of the tag. Once the dynamic animation is completed, the standard or scripted animation is then used for the character until another tag is activated (step 1318).

FIG. 14 shows a simplified flow chart of the steps performed by the reactive animation engine E of the instant invention in order to generate the dynamic animation sequence in response to an activated tag. As seen in FIG. 14, once a tag is activated (step 1402), the animation engine reads the tag to determine the type of tag, its exact location and any other information that is associated with the tag (step 1404). The engine then defines key frames for use in generating the dynamic animation (step 1406). The key frames and tag information are then used, together with inbetweening and inverse kinematics, to create an animation sequence for the character on-the-fly (step 1408). Preferably, the dynamic animation sequence is adapted from the standard animation, so that only part of the animation needs to be modified, thereby reducing the overall work that must be done to provide the dynamic animation. In other words, the dynamic animation is preferably generated as an adaptation or alteration of the stored or standard animation. The dynamic animation then continues until the tag is no longer active (step 1410), at which time the character's animation returns to the standard animation.
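To illustrate the inbetweening step in isolation, the Python sketch below interpolates a single pose value (for example, a head yaw angle in degrees) between key frames. Linear interpolation, the `(time, value)` key frame layout and the function name `inbetween` are assumptions; the description does not specify the interpolation method, and inverse kinematics is not covered by this example.

```python
def inbetween(key_frames, t):
    """Interpolate a pose value at time t from sorted (time, value) key frames.

    Linear interpolation is assumed for illustration. Times before the
    first key frame or after the last one clamp to the nearest key value.
    """
    if t <= key_frames[0][0]:
        return key_frames[0][1]
    # Walk consecutive key frame pairs to find the segment containing t.
    for (t0, v0), (t1, v1) in zip(key_frames, key_frames[1:]):
        if t <= t1:
            alpha = (t - t0) / (t1 - t0)
            return v0 + alpha * (v1 - v0)
    return key_frames[-1][1]


# Hypothetical head turn: facing forward (0 degrees) at t=0.0 seconds,
# facing the tag (60 degrees) at t=0.5 seconds.
head_yaw_keys = [(0.0, 0.0), (0.5, 60.0)]
mid_turn = inbetween(head_yaw_keys, 0.25)  # 30.0, halfway through the turn
```

In a fuller treatment, the first key frame could be taken from the stored walking animation and the last from the tag-derived target pose, which matches the idea of adapting only part of the standard animation.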

FIG. 15 shows an exemplary flow chart of the priority-based tagging feature of the instant invention. This feature enables several or many tags to be activated simultaneously while still having the character react in a realistic, priority-based manner. As seen in FIG. 15, when a tag is activated, the animation engine determines the priority of the tag (step 1502), as well as performing the other steps described above. The animation engine then determines if any other tags are currently active (step 1506). If no other tags are active, the animation engine dynamically adapts or alters the animation, in the manner described above, to correspond to the active tag (step 1508). If, on the other hand, one or more other tags are currently active, the reactive animation engine determines the priority of each of the other active tags (step 1510) to determine if the current tag has a higher priority relative to each of the other currently active tags (step 1512). If the current tag does have the highest priority, then the animation engine dynamically generates the character's animation based on the current tag (step 1514). If, on the other hand, another active tag has a higher priority than the currently active tag, then the animation engine E adapts the animation in accordance with the other tag having the highest priority (step 1516). When the other tag having a higher priority is no longer active, but the original tag (i.e., from step 1502) is still active, then the animation engine dynamically generates the character's animation based on the original tag as soon as the higher priority tag has become inactive. In this way, the character's attention can be smoothly and realistically changed from one tagged object to another tagged object, as well as from no tagged object to a tagged object. FIGS. 6-9 illustrate an exemplary priority-based display sequence as just described.
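The priority rule can be sketched as choosing, among the currently active tags, the one with the highest priority. In this Python example the dictionary keys, the function name `select_tag` and the convention that a larger number means a higher priority are assumptions made for illustration.

```python
def select_tag(active_tags):
    """Return the active tag with the highest priority, or None if none is active.

    Assumes each tag is a dict with a numeric 'priority' key and that a
    larger number means a higher priority. On a tie, the tag listed
    first is returned.
    """
    if not active_tags:
        return None
    return max(active_tags, key=lambda tag: tag["priority"])


# Hypothetical tags for the painting (T1) and the flaring candle (T2).
painting = {"name": "T1 painting", "priority": 1}
torch = {"name": "T2 torch", "priority": 2}

before_flare = select_tag([painting])          # only T1 is active
during_flare = select_tag([painting, torch])   # T2 outranks T1
after_flare = select_tag([painting])           # attention returns to T1
```

Re-evaluating the selection whenever the set of active tags changes reproduces the behavior in which attention reverts to the lower priority tag once the higher priority tag becomes inactive.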

As can be seen from the description above, the reactive animation engine E of the instant invention can be used in a variety of video games and/or other graphical applications to improve realism and game play. The invention enables a character to appear as if it has “come to life” in the game environment. The instant invention is particularly advantageous when incorporated into role playing games wherein a character interacts with a 3D world and encounters a variety of objects and/or other characters that can have certain effects on a character. The animation engine of the instant invention can also be implemented such that the same tag has a different effect on the character depending on the state of a variable of the character at the time the tagged object is encountered. One such variable could be the “sanity” level of the player in a sanity-based game, such as described in U.S. provisional application Ser. No. 60/184,656 filed Feb. 24, 2000 and entitled “Sanity System for Video Game”, the disclosure of which is incorporated by reference herein. In other words, a tag may be defined such that it does not cause much of a reaction from the character when the character has a high sanity level. On the other hand, the same tag may cause a drastic reaction from the character (such as eyes bulging) when the character is going insane, i.e., when having a low sanity level. Any other variable or role playing element, such as health or strength, could also be used to control the type of reaction that a particular tag has on the particular character at any given time during the game. Other characters, such as monsters, can also be tagged with prioritized tags as described above in order to cause the character to react to other characters as well as other objects. Tags can also be defined such that factors other than proximity (such as timing, as in the candle/torch example above) can be used alone or in addition to proximity to cause activation of the tag.
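A state-dependent reaction of this kind can be sketched as a lookup keyed on a character variable. In the Python example below, the function name `reaction_for`, the reaction labels, the 0-100 sanity scale and the threshold of 50 are all hypothetical; the description only states that the same tag may elicit a mild or a drastic reaction depending on the sanity level.

```python
def reaction_for(tag_reactions, sanity, threshold=50):
    """Pick a reaction for a tag based on the character's sanity level.

    'tag_reactions' maps the hypothetical keys 'high_sanity' and
    'low_sanity' to reaction labels. The threshold is an assumed cutoff
    on an assumed 0-100 scale.
    """
    if sanity < threshold:
        return tag_reactions["low_sanity"]
    return tag_reactions["high_sanity"]


# Hypothetical tag on a monster: a glance when sane, a drastic reaction otherwise.
monster_reactions = {"high_sanity": "glance", "low_sanity": "eyes_bulge"}
calm = reaction_for(monster_reactions, sanity=80)
panicked = reaction_for(monster_reactions, sanity=20)
```

The same pattern would apply to other variables mentioned in the text, such as health or strength, by swapping the value that is compared against the cutoff.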

While the invention has been described in connection with what is presently considered to be the most practical and preferred embodiment, it is to be understood that the invention is not to be limited to the disclosed embodiment, but on the contrary, is intended to cover various modifications and equivalent arrangements included within the spirit and scope of the appended claims.

Claims

- An electronic device for animating objects within a virtual environment, comprising: processing resources including at least one processor and a memory; and a display device configured to display the virtual environment in which a representation of a tagged object is at least initially located; wherein the processing resources cooperate to at least: detect when a user-controlled object comes into proximity to the tagged object, the tagged object having tag information associated therewith, the tag information specifying reactions to be taken with respect to both the user-controlled object and the representation of the tagged object when it is detected that the user-controlled object comes into proximity to the tagged object; and cause the reactions to be taken with respect to both the user-controlled object and the representation of the tagged object as specified by the tag information, when it is detected that the user-controlled object comes into proximity to the tagged object.

- The device of claim 1, wherein the processing resources further cooperate to at least detect when the user-controlled object is no longer within proximity to the tagged object and, upon such detection, trigger a different reaction for at least the user-controlled object.

- The device of claim 1, wherein the processing resources further cooperate to at least detect when the user-controlled object is no longer within proximity to the tagged object and, upon such detection, trigger a different reaction for at least the representation of the tagged object.

- The device of claim 1, wherein the processing resources further cooperate to at least detect when the user-controlled object is no longer within the predetermined proximity to the tagged object and, upon such detection, trigger different reactions for both the user-controlled object and the representation of the tagged object.

- The device of claim 1, wherein the processing resources further cooperate to at least detect when the user-controlled object is no longer within the predetermined proximity to the tagged object and, upon such detection, trigger one or more different reaction(s) for both the user-controlled object and/or the representation of the tagged object, wherein the one or more different reaction(s) correspond to animations that would have been performed if the user-controlled object had not previously come into proximity to the tagged object.

- The device of claim 1, wherein the processing resources further cooperate to at least generate an animation that corresponds to a human-like reaction in the user-controlled object when proximity to the tagged object is detected.

- The device of claim 1, wherein the processing resources further cooperate to at least alter the surrounding virtual environment when proximity to the tagged object is detected.

- An electronic device for animating objects within a virtual environment, comprising: an input device configured to receive user input; processing resources including at least one processor and a memory; and a display device configured to display the virtual environment in which plural representations of respective tagged objects are at least initially located, each said tagged object having associated tag information that specifies reactions to be taken with respect to both a user-controlled object and the representation of the respective tagged object when it is detected that the user-controlled object comes into proximity to the respective tagged object; wherein the processing resources cooperate to at least: detect when the user-controlled object comes into proximity to the various tagged objects; and cause the reactions to be taken with respect to both the user-controlled object and the representation of the tagged objects as specified by the associated tag information when it is detected that the user-controlled object comes into proximity to the tagged objects.

- The device of claim 8, wherein the tag information associated with each said tagged object further includes a priority indication so that priority indications for different tagged objects are comparable to one another by the processing resources.

- The device of claim 9, wherein reactions for tagged objects having higher priority indications are prioritized above reactions for tagged objects having lower priority indications when it is detected that the user-controlled object comes into proximity to multiple tagged objects.

- The device of claim 10, wherein when it is detected that the user-controlled object comes into proximity to multiple tagged objects, animations are performed in accordance with the tag information associated with the tagged object in that group that has the highest priority indication.

- The device of claim 10, wherein when it is detected that the user-controlled object comes into proximity to multiple tagged objects, animations are performed in an order determined by the priority indications associated with the tagged objects in that group.

- A method for animating objects in a virtual world to be displayed on a display of a system, the system having processing resources including at least one processor and a memory, the method comprising: displaying, on the display of the system, the virtual world, the virtual world including a representation of a tagged object; receiving user input indicative of a desired action to be taken by a user-controlled object in connection with the virtual world; detecting when the user-controlled object comes within a predetermined proximity of the tagged object, the tagged object having tag information associated therewith, the tag information designating a reaction for the user-controlled object and a reaction for the representation of the tagged object when the user-controlled object comes into the predetermined proximity to the tagged object; and causing, in connection with the processing resources, the reaction for the user-controlled object and the reaction for the representation of the tagged object associated with the tagged object when the user-controlled object comes into the predetermined proximity to the tagged object based on at least on the tag information.

- The method of claim 13, further comprising detecting when the user-controlled object is no longer within the predetermined proximity to the tagged object and, upon such detection, triggering a different reaction for at least the user-controlled object.

- The method of claim 13, further comprising detecting when the user-controlled object is no longer within the predetermined proximity to the tagged object and, upon such detection, triggering a different reaction for at least the representation of the tagged object.

- The method of claim 13, further comprising detecting when the user-controlled object is no longer within the predetermined proximity to the tagged object and, upon such detection, triggering a different reaction for the user-controlled object and the representation of the tagged object.

- The method of claim 13, further comprising generating an animation that corresponds to a human-like reaction in the user-controlled object when proximity to the tagged object is detected.

- The method of claim 13, further comprising generating an animation that alters all or substantially all of the virtual world when proximity to the tagged object is detected.

- The method of claim 13, further comprising animating the three-dimensional world in accordance with a first script when proximity to the tagged object is detected.

- The method of claim 19, further comprising animating the three-dimensional world in accordance with a second script when proximity to the tagged object is not detected.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.