U.S. Pat. No. 8,553,935

Computer interface employing a manipulated object with absolute pose detection component and a display

Assignee: Electronic Scripting Products Inc

Issue Date: May 25, 2011

Valve Corp. v. Electronic Scripting Products

IPR2019-00062

Decision Issued: April 2, 2019

On April 2, 2019, the Patent Trial and Appeal Board (PTAB) denied Valve’s petition to institute an Inter Partes Review (IPR) of U.S. Patent No. 9,235,934 (the ‘934 Patent), treating it as a repetitive challenge to the patent. The genesis of this action is the patent infringement lawsuit Electronic Scripting Products, Inc. v. HTC America, Inc., No. 3:17-cv-05806-RS, filed on October 9, 2017, in the Northern District of California. Electronic Scripting claimed HTC and Valve had infringed the ‘934 Patent and U.S. Patent No. 8,553,935 by integrating Valve’s technology into HTC’s VIVE headsets. On January 25, 2018, Electronic Scripting voluntarily dismissed Valve from the lawsuit, but not HTC, which in turn filed a first IPR petition on the ‘934 Patent. The PTAB declined to institute an IPR based on the first petition because HTC failed to show that any of the challenged claims would likely be invalid. Five months after the PTAB rejected HTC’s first petition, Valve submitted additional petitions to the PTAB based on other grounds, which the PTAB suggested were based on lessons learned from the denial of the first petition.

Electronic Scripting asked the PTAB to exercise its discretion to reject Valve’s additional IPR petitions under 35 U.S.C. § 314(a) because Valve was seeking to invalidate the same claims as HTC’s earlier IPR and was “similarly situated” to HTC. Valve countered that the only similarity it has with HTC is wishing to see the ‘934 Patent invalidated, and that the two companies are otherwise unrelated. When it comes to a petition on a patent that the PTAB has already considered, the Board applies a seven-factor test to determine whether it should grant the second petition, as set forth in General Plastic, Case IPR2016-01357, slip op. at 16 (PTAB Sept. 6, 2017):

- whether the same petitioner previously filed a petition directed to the same claims of the same patent;

- whether at the time of filing of the first petition the petitioner knew of the prior art asserted in the second petition or should have known of it;

- whether at the time of filing of the second petition the petitioner already received the patent owner’s preliminary response to the first petition or received the Board’s decision on whether to institute review in the first petition;

- the length of time that elapsed between the time the petitioner learned of the prior art asserted in the second petition and the filing of the second petition;

- whether the petitioner provides adequate explanation for the time elapsed between the filings of multiple petitions directed to the same claims of the same patent;

- the finite resources of the Board; and

- the requirement under 35 U.S.C. § 316(a)(11) to issue a final determination not later than 1 year after the date on which the Director notices institution of review. Id.

The PTAB found that every factor weighed against instituting Valve’s later IPR petitions because of how closely linked Valve is to the litigation and HTC’s previous IPR petition. The first factor weighed against Valve because the PTAB found Valve’s petition was directed at the same claims as the previous IPR. The second factor weighed against institution because the new prior art Valve asserted in its petition was fairly easy to find, which meant HTC should have known it existed. The third factor weighed against institution because Valve used the outcome of the first petition to help craft its own petition.

Factors four and five weighed against Valve because of Valve’s five-month delay in filing the petition. The Board explored the impact of the Federal Circuit’s Click-to-Call decision in factors four and five. Prior to the Click-to-Call decision, Valve was not time-barred because Electronic Scripting had dismissed the complaint against Valve. However, the Click-to-Call decision changed the law, and Valve was put in a position where it had to file or become time-barred. The PTAB reviewed the situation but ultimately decided that this did not impact the fairness of Valve’s delay in filing its IPR petitions. Finally, factors six and seven weighed against Valve because allowing similarly situated parties to continuously petition for IPRs would drain the resources of the PTAB.

With all seven factors weighing against instituting Valve’s IPR petition, the PTAB denied institution. Because institution was denied here, Valve may still be able to challenge the validity of the patents back in the district court, but as noted above Valve is no longer a party to the ongoing litigation. See Shaw Industries Group, Inc. v. Automated Creel Systems, Inc., 817 F.3d 1293 (Fed. Cir. 2016). We will continue to follow this case, and provide updates when available.

Illustrative Figure

Abstract

A system that has a remote control, e.g., a wand, equipped with a relative motion sensor that outputs data indicative of a change in position of the wand. The system also has one or more light sources and a photodetector that detects their light and outputs data indicative of the detected light. The system uses one or more controllers to determine the absolute position of the wand based on the data output by the relative motion sensor and by the photodetector. The data enables determination of the absolute pose of the wand, which includes the absolute position of a reference point chosen on the wand and the absolute orientation of the wand. To properly express the absolute parameters of position and/or orientation of the wand a reference location is chosen with respect to which the calculations are performed. The system is coupled to a display that shows an image defined by a first and second orthogonal axes such as two axes belonging to world coordinates (Xo,Yo,Zo). The one or more controllers are configured to generate signals that are a function of the absolute position of the wand in or along a third axis for rendering the display. To simplify the mapping of a real three-dimensional environment in which the wand is operated to the cyberspace of the application that the system is running, the third axis is preferably the third Cartesian coordinate axis of world coordinates (Xo,Yo,Zo).

Description

DETAILED DESCRIPTION

To appreciate the basic aspects of the present invention, we initially turn to a simple version of an apparatus 10 in accordance with the invention, as shown in FIG. 1. Apparatus 10 has a manipulated object 14 whose motion 40 in a real three-dimensional environment 18 is expressed by absolute pose data 12. Apparatus 10 processes absolute pose data 12 that describe the absolute pose of manipulated object 14 at a number of measurement times ti. Thus, successive pose data 12 collected at the chosen measurement times describe the motion that manipulated object 14 executes or is made to execute by a user 38.

Manipulated object 14 is any object that is moved either directly or indirectly by a user 38 and whose pose when object 14 is stationary or in motion yields useful absolute pose data 12. For example, manipulated object 14 is a pointer, a wand, a remote control, a three-dimensional mouse, a game control, a gaming object, a jotting implement, a surgical implement, a three-dimensional digitizer, a digitizing stylus, a hand-held tool or any utensil. In fact, a person skilled in the art will realize that a manipulated object 14 can even be an entire device such as a cell phone or a smart object that is handled by user 38 to produce meaningful motion 40.

In the present case, manipulated object 14 is a pointer that executes motion 40 as a result of a movement performed by the hand of a user 38. Pointer 14 has a tip 16 that will be used as a reference point for describing its absolute pose in real three-dimensional environment 18. In general, however, any point on object 14 can be selected as reference point 16, as appropriate or convenient.

Pointer 14 has an on-board optical measuring arrangement 22 for optically inferring its absolute pose with the aid of one or more invariant features 32, 34, 36 disposed at different locations in real three-dimensional environment 18. Invariant features 32, 34, 36 are high optical contrast features such as edges of objects, special markings, or light sources. In the present embodiment, invariant feature 32 is an edge of an object such as a table (object not shown), invariant feature 34 is a special marking, namely a cross, and feature 36 is a light source. It is possible to use features 32, 34, 36 that are all located in a plane (coplanar) or else at arbitrary locations (non-coplanar) within real three-dimensional environment 18 as conveniently defined by global or world coordinates (Xo,Yo,Zo). The limitation is that, depending on the type of features 32, 34, 36, a sufficient number of them have to be visible to on-board optical measuring arrangement 22 at measurement times ti, as described in more detail below.

In the present embodiment the world coordinates (Xo,Yo,Zo) chosen to parameterize real three-dimensional environment 18 are Cartesian. A person skilled in the art will recognize that other choices including polar, cylindrical or still different coordinate systems can be employed. In addition, it will be appreciated that features 32, 34, 36 can be temporarily or permanently affixed at their spatial locations as required for measuring the pose of pointer 14. Indeed, the spatial locations of features 32, 34, 36 can be changed in an arbitrary manner, as long as on-board optical measuring arrangement 22 is apprised of their instantaneous spatial locations at times ti.

The spatial locations of features 32, 34, 36, whether temporary or permanent, are conveniently expressed in world coordinates (Xo,Yo,Zo). Furthermore, if possible, the spatial locations of features 32, 34 and 36 are preferably such that at least a subset of them is visible to on-board optical measuring arrangement 22 in all absolute poses that pointer 14 is expected to assume while undergoing motion 40. Invariant features 32, 34, 36 are used in deriving a relative or absolute position of tip 16 of pointer 14 in real three-dimensional environment 18. Features 32, 34, 36 are also used for optically inferring the remaining portion of the absolute pose, i.e., the orientation of pointer 14.

A number of optical measurement methods using optical measuring arrangement 22 to infer the relative or absolute pose of pointer 14 can be employed. In any of these methods, arrangement 22 uses one or more on-board components to obtain pose data 12 in accordance with any well-known absolute pose recovery technique including geometric invariance, triangulation, ranging, path integration and motion analysis. In some embodiments arrangement 22 has a light-measuring component with a lens and an optical sensor that form an imaging system. In other embodiments arrangement 22 has an active illumination component that projects structured light or a scanning component that projects a scanning light beam into environment 18 and receives a scattered portion of the scanning light beam from features 32, 34. Specific examples of the various possible components will be explained in detail below.

Apparatus 10 has a processor 26 for preparing absolute pose data 12 corresponding to absolute pose of pointer 14 and for identifying a subset 48 of absolute pose data 12 required by an application 28. Specifically, application 28 uses subset 48, which may contain all or less than all of absolute pose data 12. Note that processor 26 can be located on pointer 14 or it can be remote, e.g., located in a remote host device, as is the case in this embodiment.

A communication link 24 is provided for sending absolute pose data 12 to application 28. Preferably, communication link 24 is a wireless communication link established with the aid of a wireless transmitter 30 mounted on pointer 14. In embodiments where processor 26 and application 28 are resident on pointer 14, communication link 24 can be a direct electrical connection. In still other embodiments, communication link 24 can be a wired remote link.

During operation user 38 holds pointer 14 in hand and executes a movement such that pointer 14 executes motion 40 with respect to invariant features 32, 34, 36 in world coordinates (Xo,Yo,Zo) that parametrize real three-dimensional environment 18. For better visualization, motion 40 is indicated in dashed lines 42, 44 that mark the positions assumed by tip 16 and end 46 of pointer 14 during motion 40. For the purposes of this invention, line 42 is referred to as the trace of tip 16. In some specific applications of the present invention, trace 42 of tip 16 may be confined to a surface embedded in real three-dimensional environment 18. Such a surface can be planar, e.g., a planar jotting surface, or it can be curved.

Motion 40 may produce no movement of end 46 or tip 16, i.e., no trace 42. In fact, motion 40 is not limited by any parameter other than those of standard mechanics of rigid body motion known from classical mechanics. Accordingly, changes in orientation of pointer 14 are considered to be motion 40. Likewise, changes in position of tip 16 (or any other reference point) in (x,y,z) coordinates conveniently expressed in world coordinates (Xo,Yo,Zo) are also considered to be motion 40. In the present case, orientation of pointer 14 is described by inclination angle θ, rotation angle φ and roll angle ψ referenced with respect to a center axis C.A. of pointer 14. A change in at least one of these angles constitutes motion 40.

In the present case, tip 16 moves along line 42 as pointer 14 is inclined with respect to a normal Z′ at inclination angle θ equal to θo. For simplicity, normal Z′ is selected to be parallel to the Zo axis of world coordinates (Xo,Yo,Zo). Furthermore, rotation and roll angles φ, ψ are equal to φo, ψo respectively. For convenience, in this embodiment angles θ, φ and ψ are Euler angles. Of course, other angles can be used to describe the orientation of pointer 14. In fact, a person skilled in the art will appreciate that any convention for describing the rotations of pointer 14 can be adapted for this description. For example, the four Cayley-Klein parameters, the direction cosines, quaternions or still other descriptors of tilt, yaw and roll can be employed in such alternative conventions.

FIGS. 2A-C illustrate a convention for describing the orientation of pointer 14 using Euler angles θ, φ, ψ. Pointer 14 has a length l measured from tip 16 at the origin of non-rotated object coordinates (X′,Y′,Z′) as shown in FIG. 2A. Center axis C.A. is collinear with the Z′ axis, and it passes through tip 16 and the origin of non-rotated object coordinates (X′,Y′,Z′). In the passive rotation convention used herein, object coordinates will be attached to pointer 14 while pointer 14 is rotated from its initial upright position in which Z′ is parallel to Zo of world coordinates (Xo,Yo,Zo).

Now, FIG. 2A illustrates a first counterclockwise rotation by first Euler angle φ of object coordinates (X′,Y′,Z′) about the Z′ axis. This rotation of the object coordinates does not affect the Z′ axis, so the once rotated Z″ axis is collinear with the non-rotated Z′ axis (Z″=Z′). On the other hand, axes X′ and Y′ are rotated by first Euler angle φ to yield once rotated axes X″ and Y″.

FIG. 2B illustrates a second counterclockwise rotation by second Euler angle θ applied to once rotated object coordinates (X″,Y″,Z″). This second rotation is performed about the once rotated X″ axis and therefore it does not affect the X″ axis (X′″=X″). On the other hand, axes Y″ and Z″ are rotated by second Euler angle θ to yield twice rotated axes Y′″ and Z′″. This second rotation is performed in a plane Π containing once rotated axes Y″, Z″ and twice rotated axes Y′″, Z′″. Note that axis C.A. of pointer 14 is rotated counterclockwise by second Euler angle θ in plane Π and remains collinear with twice rotated axis Z′″.

A third counterclockwise rotation by third Euler angle ψ is applied to twice rotated object coordinates (X′″,Y′″,Z′″) as shown in FIG. 2C. Rotation by ψ is performed about twice rotated axis Z′″ that is already collinear with object axis Z rotated by all three Euler angles. Meanwhile, twice rotated axes X′″,Y′″ are rotated by ψ to yield object axes X,Y rotated by all three Euler angles. Object axes X,Y,Z rotated by all three Euler angles φ, θ and ψ define Euler rotated object coordinates (X,Y,Z). Note that tip 16 of pointer 14 remains at the origin of all object coordinates during the Euler rotations.
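The three rotations described above (about Z′, then about X″, then about Z′″) can be sketched as a Z-X-Z product of elementary rotation matrices. This is only an illustration under stated assumptions: the function names are hypothetical, the matrices use the usual right-handed counterclockwise form, and the text alone does not confirm that the signs match the conventions drawn in the figures.

```python
import math

def rot_z(angle):
    """Elementary counterclockwise rotation about a Z axis (assumed right-handed)."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def rot_x(angle):
    """Elementary counterclockwise rotation about an X axis (assumed right-handed)."""
    c, s = math.cos(angle), math.sin(angle)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def matmul(a, b):
    """3x3 matrix product."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def euler_zxz(phi, theta, psi):
    """Compose the three rotations in the order phi, theta, psi.

    Each rotation is taken about an axis of the already rotated frame, so the
    matrices multiply left to right. The columns of the result are the fully
    rotated object axes X, Y, Z expressed in the non-rotated frame (X', Y', Z').
    """
    return matmul(matmul(rot_z(phi), rot_x(theta)), rot_z(psi))

def object_axis(rotation, index):
    """Return column `index` (0 = X, 1 = Y, 2 = Z) of a rotation matrix."""
    return [rotation[row][index] for row in range(3)]
```

For instance, `object_axis(euler_zxz(phi, theta, psi), 2)` gives the direction of the fully rotated Z axis, i.e., of center axis C.A., and its third component equals cos θ regardless of φ and ψ.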

Now, referring back to FIG. 1, the absolute pose of pointer 14 includes its orientation, i.e., Euler angles (φ, θ, ψ), and position of tip 16, i.e., the coordinates (x,y,z) of tip 16 that was chosen as the reference point. The orientation of pointer 14 and position of tip 16 are expressed in world coordinates (Xo,Yo,Zo). World coordinates (Xo,Yo,Zo) have a reference location, in this case the world origin (0,0,0), that can be used to describe an absolute position of tip 16. In fact, world coordinates (Xo,Yo,Zo) can be used for an absolute measure of any parameter(s) of the pose of pointer 14. Alternatively, any parameter(s) of the pose of pointer 14 can be described in a relative manner, e.g., with reference to non-stationary or relative coordinates (Xi,Yi,Zi) or simply with respect to the previous pose.

For the purposes of the present invention, it is important to be able to optically infer, at least from time to time, the absolute pose of pointer 14. To do this, one relates Euler rotated object coordinates describing the orientation of pointer 14 to world coordinates (Xo,Yo,Zo). Note that the orientation of object axis Z′ in world coordinates (Xo,Yo,Zo) prior to the three Euler rotations is normal to plane (Xo,Yo). Second Euler angle θ defines the only counterclockwise rotation of object coordinates that is not about an object Z axis (this second rotation is about the X″=X′″ axis rather than axis Z′, Z″ or Z′″). Thus, Euler angle θ is an inclination angle θ between the completely Euler rotated object axis Z or axis C.A. and original object axis Z′, which is normal to plane (Xo,Yo).
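The role of θ as the inclination angle can be made explicit with a short worked equation. The notation is introduced here for illustration and does not appear in the source: $R_z$ and $R_x$ denote standard right-handed rotation matrices about a Z and an X axis, $\hat{Z}' = (0,0,1)^T$ is the unit vector along the non-rotated axis Z′, and the three Euler rotations are assumed to compose as a Z-X-Z sequence.

$$
\hat{Z} \;=\; R_z(\phi)\,R_x(\theta)\,R_z(\psi)\,\hat{Z}'
\;=\;
\begin{pmatrix}
\sin\phi\,\sin\theta \\
-\cos\phi\,\sin\theta \\
\cos\theta
\end{pmatrix},
\qquad
\hat{Z}\cdot\hat{Z}' \;=\; \cos\theta .
$$

Under that assumption, the roll ψ drops out entirely because $R_z(\psi)$ leaves $\hat{Z}'$ unchanged, and φ only turns the tilted axis around Z′, so the angle between axis C.A. and Z′ depends on θ alone.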

Optical measuring arrangement 22 infers the absolute pose of pointer 14 during motion 40 at measurement times ti and processor 26 prepares the corresponding absolute pose data 12.

Note that absolute pose data 12 consist of inferred values of parameters (φ,θ,ψ,x,y,z) at measurement times ti. Invariant features 32, 34, 36 are located at positions that are defined in world coordinates (Xo,Yo,Zo). These positions stay fixed at least during measurement and usually permanently. Knowledge of the absolute positions of features 32, 34, 36 in world coordinates (Xo,Yo,Zo) allows the optical measuring arrangement 22 to describe the absolute pose of pointer 14 with absolute pose data 12 expressed in parameters (φ,θ,ψ,x,y,z) at measurement times ti in Euler rotated object coordinates within world coordinates (Xo,Yo,Zo). The expression of absolute pose data is preferably with respect to a reference location such as world origin (0,0,0) of world coordinates (Xo,Yo,Zo).

Of course, alternative locations within world coordinates can also be chosen as reference locations with respect to which the absolute pose of pointer 14 is expressed. For example, the center of invariant feature 34 may be chosen as the reference location and the locations of reference point 16 on pointer 14 at n measurement times ti can be denoted by corresponding n vectors Di, as shown in the drawing.
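Re-expressing positions with respect to an alternative reference location amounts to a vector subtraction. The sketch below assumes that positions are plain (x, y, z) tuples in world coordinates; the function name and the sample numbers are hypothetical and not taken from the source.

```python
def relative_vectors(tip_positions, reference_location):
    """Return the vectors D_i from a reference location to each tip position.

    Both arguments are expressed in the same world coordinates, so each D_i is
    the component-wise difference between a tip position and the reference.
    """
    return [
        tuple(p - r for p, r in zip(position, reference_location))
        for position in tip_positions
    ]

# Hypothetical example: two tip positions and the center of a feature.
feature_center = (1.0, 1.0, 1.0)
tip_samples = [(1.0, 2.0, 3.0), (2.0, 2.0, 1.0)]
d_vectors = relative_vectors(tip_samples, feature_center)
# d_vectors == [(0.0, 1.0, 2.0), (1.0, 1.0, 0.0)]
```

Choosing the world origin (0,0,0) as the reference location leaves the positions unchanged, which matches the default case described above.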

The frequency with which the absolute pose is inferred, i.e., the times ti, depends on the use of absolute pose data 12 corresponding to that absolute pose and the desired performance, e.g., temporal resolution. It should be noted that periodic optical inference of absolute pose is not limited to any predetermined times ti or frequency schedule. In other words, the times between any two successive optical inferences or measurements of the absolute pose can be arbitrary. Preferably, however, arrangement 22 infers the absolute pose at a frequency that is high enough to obtain absolute pose data 12 that describe motion 40 at the temporal resolution required by application 28.

Wireless transmitter 30 of communication link 24 sends absolute pose data 12, here defined by parameters (φ,θ,ψ,x,y,z) collected at measurement times ti, to processor 26. Absolute pose data 12 can be transmitted continuously, in bursts, in parts, at arbitrary or preset times or as otherwise desired. Processor 26 prepares a subset 48 of absolute pose data 12, for example the absolute position (x,y,z) of tip 16, and sends it to application 28. Application 28 uses absolute position (x,y,z) of tip 16 at measurement times ti to chart trace 42 of tip 16 as pointer 14 executes motion 40. In other words, application 28 recovers trace 42 corresponding to the movement of tip 16. Note that the resolution of trace 42 in absolute space can be improved by increasing the frequency of measurement times ti, which increases the number of absolute trace points sampled in environment 18.

It should also be noted that pose data 12 should be formatted for appropriate communications between transmitter 30, processor 26 and application 28. Any suitable communication and formatting standards, e.g., IEEE interface standards, can be adapted for these purposes. For specific examples of formatting standards the reader is referred to Rick Poyner, LGC/Telegraphics, “Wintab™ Interface Specification: 16-bit and 32-bit API Reference”, revision of May 9, 1996; Universal Serial Bus (USB), “Device Class Definition for Human Interface Devices (HID)”, Firmware Specification, USB Implementers' Forum, Jun. 27, 2001 and six-degree of freedom interface by Ulrica Larsson and Johanna Pettersson, “Development and evaluation of a 6DOF interface to be used in a medical application”, Thesis, Linkopings University, Department of Science and Technology, Sweden, Jun. 5, 2002.

The orientation portion of absolute pose data 12, i.e., Euler angles (φ,θ,ψ), can also be used in the present embodiment. Specifically, processor 26 can prepare additional subsets or send all of the orientation parameters (φ,θ,ψ) of absolute pose data 12 as a single subset to application 28 or to a different application or device serving a different function. Any mix of orientation (φ,θ,ψ) and position (x,y,z) data derived from absolute pose data 12 can be used in subset 48. In fact, in some embodiments processor 26 keeps all absolute pose data 12 in subset 48 such that all of its parameters (φ,θ,ψ,x,y,z) can be used by application 28. This is done when application 28 has to reconstruct the entire motion 40 of pointer 14 and not just trace 42 of tip 16. For example, this is done when application 28 includes a motion-capture application. Once again, the temporal resolution of motion 40 can be improved by increasing the frequency of measurement times ti. Note that in this case parameters of pose data 12 that vary slowly are oversampled.

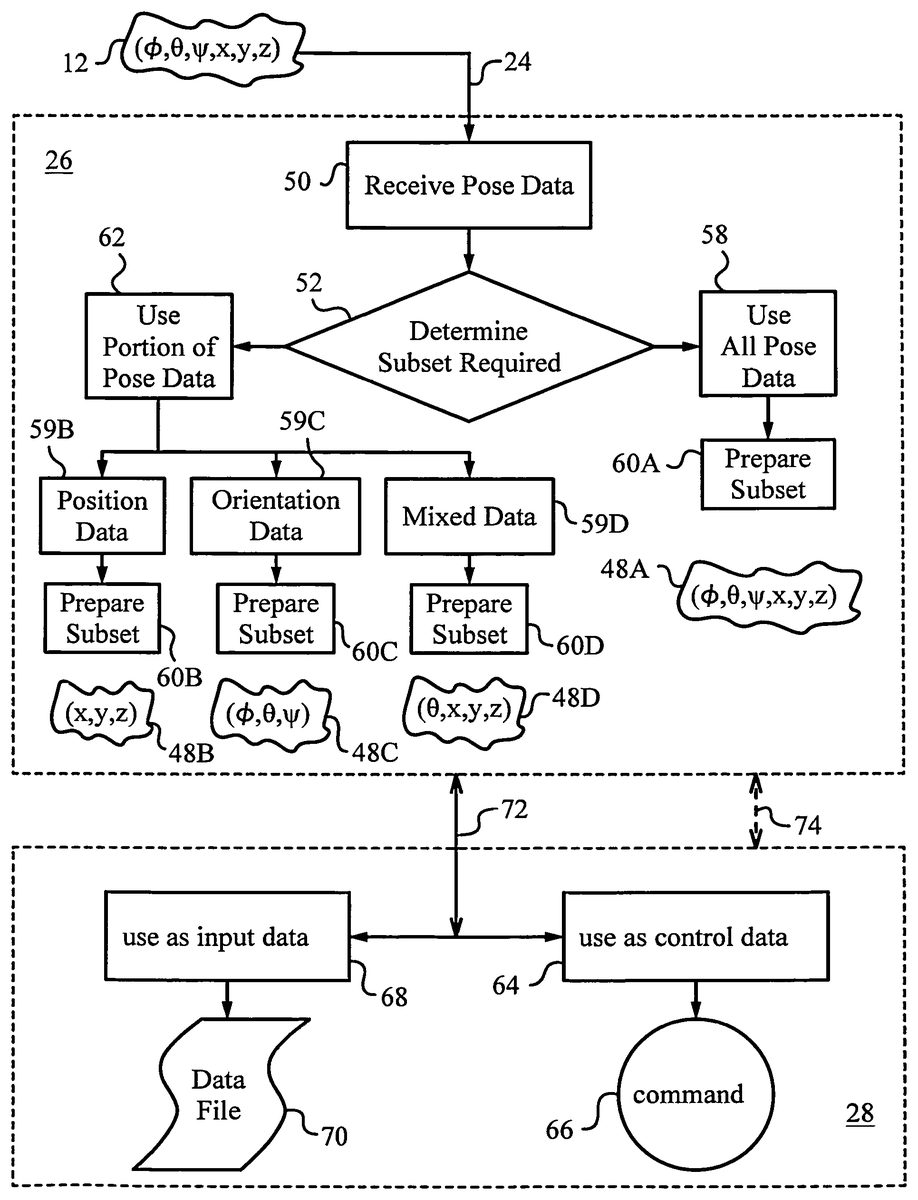

In FIG. 3 a block diagram illustrates the processing of absolute pose data 12 by processor 26 and its use by application 28 in more detail. In a first step 50, absolute pose data 12 is received by processor 26 via communication link 24. In a second step 52, processor 26 determines which portion or subset 48 of absolute pose data 12 is required. This selection can be made based on application 28. For example, when application 28 is a trace-capture application that charts trace 42, then only position data of tip 16, i.e., (x,y,z) of this reference point 16, need to be contained in subset 48. On the other hand, when application 28 is a motion-capture application, then all absolute pose data 12 are contained in subset 48.

In step 58 all absolute pose data 12 are selected and passed to a subset formatting or preparing step 60A. In step 60A data 12 is prepared in the form of subset 48A as required by application 28. For example, data 12 is arranged in a particular order and provided with appropriate footer, header and redundancy bits (not shown), or as otherwise indicated by data porting standards such as those of Rick Poyner, LGC/Telegraphics (op. cit.).

In step 62, only a portion of data 12 is selected. Three exemplary cases of partial selection are shown. In the first case, only position data is required by application 28. Hence, in a step 59B only position data (x,y,z) are selected and the remaining data 12 is discarded. In a subsequent step 60B, position data (x,y,z) are prepared in the form of subset 48B as required by application 28 and/or as dictated by the porting standards.

In a second case, in a step 59C, only orientation data (φ,θ,ψ) are selected and the rest of data 12 are discarded. Then, in a step 60C, orientation data (φ,θ,ψ) are prepared in the form of a subset 48C for use by application 28.

In the third case, in a step 59D, a mix of data 12, including some position data and some orientation data, is selected and processed correspondingly in a step 60D to prepare a subset 48D.
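The selection cases described above (all data, position only, orientation only, or a mix) can be sketched as a simple field filter over one pose sample. This is a minimal illustration, not the formatting actually dictated by any porting standard: the class name, field names and dictionary output are assumptions made for the example.

```python
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class AbsolutePose:
    """One sample of pose parameters (phi, theta, psi, x, y, z); hypothetical container."""
    phi: float
    theta: float
    psi: float
    x: float
    y: float
    z: float

# Field groups corresponding to the selection cases in the text.
ORIENTATION = ("phi", "theta", "psi")
POSITION = ("x", "y", "z")
ALL_FIELDS = ORIENTATION + POSITION

def select_subset(pose, fields):
    """Keep only the requested parameters; the rest of the sample is discarded."""
    data = asdict(pose)
    return {name: data[name] for name in fields}

pose = AbsolutePose(phi=0.1, theta=0.2, psi=0.3, x=1.0, y=2.0, z=3.0)
full_subset = select_subset(pose, ALL_FIELDS)          # all pose data
position_subset = select_subset(pose, POSITION)        # position only
orientation_subset = select_subset(pose, ORIENTATION)  # orientation only
mixed_subset = select_subset(pose, ("z", "theta"))     # a mix of both
```

Keeping the selection as a tuple of field names makes it easy for an application to change the criteria at run time, at the cost of a `KeyError` if an unknown field name is requested.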

A person skilled in the art will appreciate that the functions described can be shared between processor 26 and application 28, e.g., as required by the system architecture and data porting standards. For example, some preparation of subset 48 can be performed by application 28 upon receipt. It should also be noted that in some embodiments data 12 can be pre-processed by transmitter 30 or post-processed at any point before or after preparation of the corresponding subset 48 in accordance with any suitable algorithm. For example, a statistical algorithm, such as a least squares fit, can be applied to data 12 derived at different times ti or to successive subsets 48. Furthermore, quantities such as time derivatives of any or all parameters, i.e.,

$$\left(\frac{dx}{dt},\ \frac{dy}{dt},\ \frac{dz}{dt},\ \frac{d\phi}{dt},\ \frac{d\theta}{dt},\ \frac{d\psi}{dt}\right),$$

can be computed. Also, various sampling techniques, e.g., oversampling can be used.
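As one possible way to compute such time derivatives from sampled data, the sketch below uses forward differences between successive samples. It assumes the measurement times are strictly increasing but otherwise arbitrary, and it ignores wrap-around of the angular parameters; the function name and sample values are hypothetical.

```python
def time_derivatives(times, samples):
    """Estimate parameter rates by forward differences between successive samples.

    `times` holds the measurement times and `samples` holds one tuple of
    parameters per time. The result has one tuple of rates per interval.
    """
    if len(times) != len(samples):
        raise ValueError("times and samples must have the same length")
    rates = []
    for i in range(len(times) - 1):
        dt = times[i + 1] - times[i]
        if dt <= 0:
            raise ValueError("measurement times must be strictly increasing")
        rates.append(tuple(
            (after - before) / dt
            for before, after in zip(samples[i], samples[i + 1])
        ))
    return rates

# Hypothetical example with two parameters sampled at uneven times.
sample_times = [0.0, 0.5, 1.5]
sample_values = [(0.0, 0.0), (1.0, 2.0), (1.0, 5.0)]
rates = time_derivatives(sample_times, sample_values)
# rates == [(2.0, 4.0), (0.0, 3.0)]
```

Forward differences amplify measurement noise, so in practice a smoothing step such as the least squares fit mentioned above might be applied first.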

Subset 48 is transmitted to application 28 via a communication channel 72. Application 28 receives subset 48 as an input that is treated or routed according to its use. For example, in a step 64, subset 48 is used as control data. Thus, subset 48 is interpreted as an executable command 66 or as a part of an executable command. On the other hand, in a step 68, subset 48 is used as input data and saved to a data file 70.

In one embodiment, application 28 passes information to processor 26 to change the selection criteria for subset 48. Such information can be passed via communication channel 72 or over an alternative link, e.g., a feedback link 74. For example, application 28 requests subset 48A to be transmitted and uses subset 48A as input data for data file 70. At other times, application 28 requests subset 48C to be transmitted and uses subset 48C as command data for executable command 66. Alternatively, processor 26 can indicate a priori whether any subset 48 should be treated as input data or control data. In still another alternative, user 38 can indicate with the aid of a separate apparatus, e.g., a switch mounted on pointer 14 (not shown), whether subset 48 is intended as control data or input data. A person skilled in the art will recognize that there exist a large number of active and passive methods for determining the interpretation and handling of data being transmitted in subset 48 by both processor 26 and application 28.

In a specific application 28, subset 48 contains only position data (x,y,z) of reference point or tip 16 of pointer 14 collected at a number of measurement times ti. This subset corresponds to individual points along trace 42 and is an absolute trace expressed by points referenced with respect to origin (0,0,0) of world coordinates (Xo,Yo,Zo). For example, in a particular application 28 trace 42 may be treated as a digital ink trace that is designed to be handled as input data or command data. Alternatively, the absolute points forming trace 42 can be expressed in world coordinates (Xo,Yo,Zo) with respect to a reference location other than world origin (0,0,0). FIG. 1 shows that one such alternative reference location can be the center of feature 34, whose absolute position in world coordinates (Xo,Yo,Zo) is known. In this case, vectors Do, . . . Di, . . . Dn describe the absolute position of the points of trace 42 collected at successive measurement times to, . . . ti, . . . tn.

In practice, efficient inference of the absolute pose of pointer 14 in terms of absolute pose data expressed in parameters (φ,θ,ψ,x,y,z) representing Euler rotated object coordinates expressed in world coordinates (Xo,Yo,Zo) with respect to a reference location, such as world origin (0,0,0), imposes a number of important requirements. Since pointer 14 may be moving in a close-range environment 18, the field of view of on-board optical measuring arrangement 22 must be large. This is particularly crucial in situations where arrangement 22 has to tolerate frequent occlusions of one or more of invariant features 32, 34, 36. Such conditions arise when user 38 operates pointer 14 in a close-range home, gaming or work environment 18, i.e., in a room, a cubicle or other confined real space. Also, if full motion capture is desired, then the rate or frequency of measurement times ti has to be high in comparison to the rate of movement of the hand of user 38.

To learn how to address these and other practical considerations, we turn to another embodiment of an apparatus 100 according to the invention as shown in FIG. 4. Apparatus 100 has a manipulated object 102 equipped with an on-board optical measuring arrangement 104 having a light-measuring component 106. Apparatus 100 is deployed within a real three-dimensional environment 108. In the case at hand, environment 108 is defined within a room 110 and it is parametrized by global or world coordinates (Xo,Yo,Zo) whose world origin (0,0,0) is posited in the lower left rear corner of room 110.

As in the previous embodiment, world origin (0,0,0) is selected as the reference location for expressing the measured values of parameters (φ,θ,ψ,x,y,z) that represent absolute pose data of manipulated object 102 in Euler rotated object coordinates (X,Y,Z). The three successive rotations by Euler angles (φ,θ,ψ) to obtain Euler rotated object coordinates (X,Y,Z) are also indicated in FIG. 4. Also, the original (X′,Y′,Z′), the once rotated (X″,Y″,Z″), and the twice rotated (X′″,Y′″,Z′″) object coordinates are drawn along the fully Euler rotated (three times rotated) object coordinates (X,Y,Z). Just like in the previous embodiment, a tip 102′ of manipulated object 102 is chosen as the reference point. Conveniently, a vector Go describes the position of reference point 102′ in world coordinates (Xo,Yo,Zo).

A number of invariant features B1-B7are placed at known locations in real three-dimensional environment108delimited by room110. Vectors R1-R7define the locations of corresponding invariant features B1-B7. Following standard convention, vectors R1-R7extend from world origin (0,0,0) to the centers of the corresponding invariant features B1-B7. All seven invariant features B1-B7are high optical contrast features. More precisely, invariant features B1-B7are light sources such as light-emitting diodes that emit electromagnetic radiation or light112. Preferably, light112is in the infrared wavelength range of the electromagnetic spectrum. Light-emitting diodes in that range are typically referred to as infrared emitting diodes or just IR LEDs. For clarity, only four of the seven IR LEDs B1-B7are shown simultaneously emitting light112inFIG. 4.

Optical measuring arrangement104with light-measuring component106is mounted on-board, and more precisely on one of the sides of manipulated object102. Component106is an absolute motion detection component equipped with a lens114and an optical sensor116shown in detail in the cut-away view of manipulated object102depicted inFIG. 5. Lens114faces environment108and it has a wide field of view. For example, lens114is a fisheye lens whose field of view (F.O.V.) is large enough to view all or nearly all IR LEDs B1-B7in environment108from all absolute poses that it is anticipated to assume while being manipulated by a user (not shown in this drawing).

It should be noted, however, that the handling of manipulated object102does not need to be carried out directly by a user. In fact, object102can be a remotely controlled object or even an object that is cast or thrown by the user. Whether object102is manipulated directly or remotely and whatever its spatial trajectory in environment108, it is crucial that light-measuring component106be optimally placed on object102to have a direct line-of-sight to most or all IR LEDs B1-B7while object102is undergoing its intended motion. That is because component106needs to capture light112emitted by IR LEDs B1-B7so that it can use these invariant features for optically inferring the values of parameters (φ,θ,ψ,x,y,z). Taken together, parameters (φ,θ,ψ,x,y,z) represent absolute pose data118that describes the absolute pose of manipulated object102.

An appropriate choice of lens114will aid in addressing the above optics challenges. Obviously, lens114has to be small, robust and low-cost (e.g., moldable in acrylic or other plastic). Lens114should not require active focusing and it should have a low F-number (e.g., F#≈1.6 or less) to ensure high light gathering efficiency. At the same time, lens114should exhibit low levels of aberration and have a single viewpoint. In other words, lens114should exhibit quasi-pinhole optical characteristics. This last attribute is especially important when manipulated object102is expected to sometimes pass within a short distance of IR LEDs B1-B7. Under such conditions, the limited depth of field inherent in a normal refractive lens, especially one without active focal length adjustment, would cause a loss of optical information; a familiar problem in machine vision. U.S. Pat. Nos. 7,038,846 and 7,268,956, both to Mandella, teach a suitable design of a catadioptric lens that satisfies these stringent demands.

Apparatus100has a processor120for preparing pose data118. In this exemplary embodiment, processor120is not on-board manipulated object102but is instead integrated in a computing device122. For example, processor120may be a central processing unit (CPU), a graphics processing unit (GPU) or some other unit or combination of units resident on computing device122. Computing device122is shown as a stationary device, but it is understood that it could be a portable device or an ultra-mobile device including a tablet, a PDA or a cell phone.

Besides preparing absolute pose data 118, processor 120 is entrusted with identifying a subset 118′ of data 118. As in the prior embodiment, the preparation of data 118 may include just collecting the inferred values of parameters (φ,θ,ψ,x,y,z) corresponding to the absolute pose of object 102. In more involved cases, the preparation of data 118 can include pre- and/or post-processing as well as computation of functions derived from measured values of one or more of parameters (φ,θ,ψ,x,y,z) (including the application of statistical algorithms to one or more of these parameters). Meanwhile, identification of subset 118′ has to do with the intended use of data 118 and the nature of its application.

Computing device122not only hosts processor120, but also has a display124for displaying an output126to the user. Output126is generated by an application128that is running on computing device122. Application128and its output126dictate what subset118′ needs to be identified and supplied by processor120. A simple case arises when application128is configured to produce as output126a visual element such as a token or even an image of object102and compute as well as show its absolute trajectory within room110in world coordinates (Xo,Yo,Zo) with respect to reference location (0,0,0). A person skilled in the art will easily discern, that under these constraints application128will require that all parameters (φ,θ,ψ,x,y,z) be included in subset118′. This way, as time progresses, application128will be able to alter output126in response to the absolute pose of object102at different times tiand, if desired, display a replica of its full trajectory within room110. Application128can display this information as output126on display124to the user as shown inFIG. 4or forward the information to still another application.

Computing device 122 employs its own internal communication link 130, e.g., a data bus, to transmit subset 118′ to application 128. Meanwhile, a wireless communication link 132 is provided for transmitting data 118 from manipulated object 102 to computing device 122. Wireless link 132 employs a transmitting unit 134A on object 102 and a receiving unit 134B on device 122.

When manipulated object 102 moves within room 110, on-board optical measuring arrangement 104 deploys absolute motion detection component 106. Here, component 106 is a light-measuring component that gathers light 112 emitted from IR LEDs B1-B7. Preferably, all IR LEDs B1-B7 are on at measurement times ti when the values of parameters (φ,θ,ψ,x,y,z) describing the absolute pose of object 102 are being measured.

As shown in more detail inFIG. 5, light-measuring component106collects light112within the field of view of lens114. Preferably, lens114has a single viewpoint136and is configured to image room110onto optical sensor116. Thus, lens114images light112from IR LEDs B1-B7onto its optical sensor. For reasons of clarity, light112from just one IR LED is shown as it is being collected and imaged to an image point140on optical sensor116by lens114. Sensor116can be any type of suitable light-sensitive sensor, such as a CCD or CMOS sensor coupled with appropriate image processing electronics142.

Electronics142can either fully process signals from sensor116, or only pre-process them to obtain raw image data. The choice depends on whether fully processed or raw absolute pose data118is to be transmitted via wireless link132to computing device122. When sufficient on-board power is available, performing most or all image processing functions on-board object102is desirable. In this case electronics142include all suitable image processing modules to obtain measured values of parameters (φ,θ,ψ,x,y,z) in their final numeric form. Data118being transmitted via link132to computing device122under these conditions is very compact. On the other hand, when on-board power is limited while the bandwidth of wireless communication link132is adequate, then electronics142include only the image processing modules that extract raw image data from sensor116. In this case, raw absolute pose data118is transmitted to computing device122for further image processing to obtain the inferred or measured values of parameters (φ,θ,ψ,x,y,z) in their final numeric form.
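The trade-off between on-board processing and link bandwidth can be made concrete with a rough estimate. The following Python sketch is illustrative only; the frame size, pixel depth, value width and measurement rate are assumed figures chosen for the example and do not come from the specification.

```python
def pose_payload_bytes(bytes_per_value=4, n_params=6):
    """Size of one fully processed pose sample: six numeric parameters."""
    return bytes_per_value * n_params

def raw_frame_bytes(rows, cols, bits_per_pixel=8):
    """Size of one unprocessed frame read out of the pixel array."""
    return rows * cols * bits_per_pixel // 8

def required_bandwidth_bps(payload_bytes, rate_hz):
    """Link bandwidth in bits per second, ignoring protocol overhead."""
    return payload_bytes * 8 * rate_hz

# ASSUMED example figures: 640x480 sensor, 8-bit pixels, 100 measurements/s.
processed = required_bandwidth_bps(pose_payload_bytes(), 100)
raw = required_bandwidth_bps(raw_frame_bytes(480, 640), 100)
print(processed, raw)
```

Under these assumed figures the fully processed stream is several orders of magnitude smaller than the raw stream, which is why the compact form is attractive whenever on-board power permits.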

In the present embodiment, sensor 116 is a CMOS sensor with a number of light-sensing pixels 144 arranged in an array 145, as shown in FIG. 6. The field of view of lens 114 is designated by F.O.V. and it is indicated on the surface of sensor 116 with a dashed line. Image processing electronics 142 are basic and designed to just capture raw image data 146 from pixels 144 of sensor 116. In particular, electronics 142 have a row multiplexing block 148A, a column multiplexing block 148B and a demultiplexer 150.

The additional image processing modules depicted inFIG. 6and required to obtain data118in its final numeric form and to identify subset118′ for application128all reside on computing device122. These modules include: extraction of IR LEDs (module152) from raw image data146, image undistortion and application of the rules of perspective geometry (module154), computation of pose data118or extraction of inferred or measured values of parameters (φ,θ,ψ,x,y,z) (module156) and identification of subset118′ (module158). Note that different image processing modules may be required if invariant features are geometrically more complex than IR LEDs B1-B7, which are mere point sources.
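Because IR LEDs B1-B7 are point sources, the extraction performed by module 152 can be as simple as thresholding followed by a centroid computation for each bright region. The Python sketch below shows one possible approach under that assumption; it is not asserted to be the method used by module 152, and the function name and 4-connected region growing are illustrative choices.

```python
def extract_spots(image, threshold):
    """Return intensity-weighted centroids (x, y) of bright regions.

    image is a list of rows of pixel intensities; threshold must be positive.
    Regions are grown over 4-connected pixels at or above the threshold.
    """
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    spots = []
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0][c0] or image[r0][c0] < threshold:
                continue
            stack = [(r0, c0)]
            seen[r0][c0] = True
            total = sx = sy = 0.0
            while stack:
                r, c = stack.pop()
                w = image[r][c]
                total += w
                sx += w * c
                sy += w * r
                for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    rr, cc = r + dr, c + dc
                    if (0 <= rr < rows and 0 <= cc < cols
                            and not seen[rr][cc]
                            and image[rr][cc] >= threshold):
                        seen[rr][cc] = True
                        stack.append((rr, cc))
            spots.append((sx / total, sy / total))
    return spots
```

Each returned pair corresponds to one image 140 expressed in image coordinates (Xi,Yi); geometrically more complex invariant features would call for the segmentation and line detection modules discussed next.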

For example, extraction of invariant features such as edges, corners and markings will require the application of suitable image segmentation modules, contrast thresholds, line detection algorithms (e.g., Hough transformations) and many others. For more information on edge detection in images and edge detection algorithms the reader is referred to U.S. Pat. Nos. 6,023,291 and 6,408,109 and to Simon Baker and Shree K. Nayar, “Global Measures of Coherence for Edge Detector Evaluation”, Conference on Computer Vision and Pattern Recognition, June 1999, Vol. 2, pp. 373-379 and J. Canny, “A Computational Approach to Edge Detection”, IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 8, No. 6, November 1986 for basic edge detection all of which are herein incorporated by reference. Additional useful teachings can be found in U.S. Pat. No. 7,203,384 to Carl and U.S. Pat. No. 7,023,536 to Zhang et al. A person skilled in the art will find all the required modules in standard image processing libraries such as OpenCV (Open Source Computer Vision), a library of programming functions for real time computer vision. For more information on OpenCV the reader is referred to G. R. Bradski and A. Kaehler, “Learning OpenCV: Computer Vision with the OpenCV Library”, O'Reilly, 2008.

In the present embodiment, the absolute pose of object102including the physical location (x,y,z) of reference point102′ (described by vector Go) and the Euler angles (φ,θ,ψ) are inferred with respect to world origin (0,0,0) with the aid of vectors R1-R7. To actually compute these parameters from on-board object102it is necessary to recover vectors R1-R7from images140of IR LEDs B1-B7contained in an image160of room110as shown on the surface of sensor116inFIG. 6. This process is simplified by describing image160in image coordinates (Xi,Yi). Note that due to an occlusion162, only images140of IR LEDs B1-B4, B6, B7associated with image vectors R1′-R4′, R6′, R7′ are properly imaged by lens114onto sensor116.

In practical situations, occlusion162as well as any other occlusions can be due to the user's body or other real entities or beings present in environment108obstructing the line-of-sight between lens114and IR LED B5. Also note, that if too few of IR LEDs B1-B7are imaged, then inference of the absolute pose of object102may be impossible due to insufficient data. This problem becomes particularly acute if IR LEDs B1-B7are not distinguishable from each other. Therefore, in a practical application it is important to always provide a sufficiently large number of IR LEDs that are suitably distributed within environment108. Alternatively or in addition to these precautions, IR LEDs B1-B7can be made distinguishable by setting them to emit light112at different wavelengths.

Referring again toFIG. 6, in a first image processing step electronics142demultiplex raw image data146from row and column blocks148A,148B of array145with the aid of demultiplexer150. Next, wireless communication link132transmits raw image data146from on-board object102to computing device122. There, raw image data146is processed by module152to extract images140of IR LEDs B1-B7from raw image data146. Then, module154undistorts the image and applies the rules of perspective geometry to determine the mapping of images140of IR LEDs B1-B7to their actual locations in real three-dimensional environment108of room110. In other words, module154recovers vectors R1-R7from image vectors R1′-R7′.

To properly perform its function, module154needs to calibrate the location of the center of image coordinates (Xi,Yi) with respect to reference point102′. This calibration is preferably done prior to manipulating object102, e.g., during first initialization and testing or whenever re-calibration of origin location becomes necessary due to mechanical reasons. The initialization can be performed with the aid of any suitable algorithm for fixing the center of an imaging system. For further information the reader is referred to Carlo Tomasi and John Zhang, “How to Rotate a Camera”, Computer Science Department Publication, Stanford University and Berthold K. P. Horn, “Tsai's Camera Calibration Method Revisited”, which are herein incorporated by reference.

Armed with the mapping provided by module154, module156obtains the inferred values of parameters (φ,θ,ψ,x,y,z), which represent absolute pose data118. Data118now properly represents the final numerical result that describes the inferred absolute pose of object102. This description is made in terms of inferred values of parameters (φ,θ,ψ,x,y,z), which are the Euler rotated object coordinates expressed in world coordinates (Xo,Yo,Zo) with respect to world origin (0,0,0). In the last step, module158identifies a subset118′ of parameters (φ,θ,ψ,x,y,z) to be sent to application128.
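The last step, identification of subset 118′ by module 158, amounts to selecting those parameters that the application has requested. A minimal Python sketch, with hypothetical type and function names, could look as follows.

```python
from typing import NamedTuple

class Pose(NamedTuple):
    """Absolute pose data: Euler angles and position of the reference point."""
    phi: float
    theta: float
    psi: float
    x: float
    y: float
    z: float

def identify_subset(pose, wanted):
    """Return only the parameters an application asked for, keyed by name."""
    return {name: getattr(pose, name) for name in wanted}

pose = Pose(phi=0.1, theta=0.2, psi=0.3, x=1.0, y=2.0, z=3.0)
# An application displaying the full trajectory needs all six parameters;
# one that only tracks position could request just ("x", "y", "z").
position_only = identify_subset(pose, ("x", "y", "z"))
```

An application that shows the absolute trajectory of the object, as described above, would simply request all six fields.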

In practice, due to certain optical effects including aberration associated with lens114, the non-occluded portion of image160will exhibit a certain amount of rounding. This rounding can be compensated optically by additional lenses (not shown) and/or electronically during undistortion performed by module154. Preferably, the rounding is accounted for by applying a transformation to the non-occluded and detected portion of image160by module154. For example, module154has an image deformation transformer based on a plane projection to produce a perspective view. Alternatively, module154has an image deformation transformer based on a spherical projection to produce a spherical projection. Advantageously, such spherical projection can be transformed to a plane projection with the aid of well-known methods, e.g., as described by Christopher Geyer and Kostas Daniilidis, “A Unifying Theory for Central Panoramic Systems and Practical Implications”, www.cis.upenn.edu, Omid Shakernia, et al., “Infinitesimal Motion Estimation from Multiple Central Panoramic Views”, Department of EECS, University of California, Berkeley, and Adnan Ansar and Kostas Daniilidis, “Linear Pose Estimation from Points or Lines”, Jet Propulsion Laboratory, California Institute of Technology and GRASP Laboratory, University of Pennsylvania which are herein incorporated by reference.

It should also be remarked, that once image160is recognized and transformed, a part of the orientation, namely Euler angles (φ,θ) of object102can be inferred in several ways. For example, when working with the spherical projection, i.e., with the spherical projection of unobstructed portions of image160, a direct three-dimensional rotation estimation can be applied to recover inclination angle θ and polar angle φ. For this purpose a normal view of room110with IR LEDs B1-B7is stored in a memory (not shown) such that it is available to module154for reference purposes. The transformation then yields the Euler angles (φ,θ) of object102with respect to IR LEDs B1-B7and any other high optical contrast invariant features in room110by applying the generalized shift theorem. This theorem is related to the Euler theorem stating that any motion in three-dimensional space with one point fixed (in this case the reference point102′ may be considered fixed for the duration of one measurement time ti) can be described by a rotation about some axis. For more information about the shift theorem the reader is referred to Ameesh Makadia and Kostas Daniilidis, “Direct 3D-Rotation Estimation from Spherical Images via a Generalized Shift Theorem”, Department of Computer and Information Science, University of Pennsylvania, which is herein incorporated by reference.

Alternatively, when working with a plane projection producing a perspective view of unobstructed portions of image160one can use standard rules of geometry to determine inclination angle θ and polar angle φ. Several well-known geometrical methods taking advantage of the rules of perspective views can be employed in this case.

Referring back toFIGS. 4 and 5, in the present embodiment, output126includes a visual element, namely an image of object102. Since subset118′ contains all parameters (φ,θ,ψ,x,y,z) and is gathered at many successive measurement times ti, visual element representing object102can be shown undergoing its absolute motion in world coordinates (Xo,Yo,Zo). For example, in the present case a trajectory162A of reference point or tip102′ is shown on display124. In addition, a trajectory162B of the center of mass designated by C.O.M. could also be displayed on display124. Depending on application128, the absolute motion of object102could be replayed in parts or in its entirety at normal speed or at an altered rate (slowed down or sped up).

A person skilled in the art will realize that the embodiment of apparatus 100 shown in FIGS. 4-6 is very general. It admits of many variants, both in terms of hardware and software. Practical implementations of the apparatus and method of invention will have to be dictated by the usual limiting factors such as weight, size, power consumption, computational load, overall complexity, cost, desired absolute pose accuracy and so on. Among others, these factors will dictate which type of sensor and lens to deploy, and whether most of the image processing should take place on-board object 102 or in computing device 122.

Another embodiment of an apparatus200in accordance with the invention is shown in the three-dimensional diagrammatical view ofFIG. 7. Apparatus200represents a preferred embodiment of the invention and addresses several of the above-mentioned limiting factors. In particular, apparatus200introduces practical simplifications that can be used under numerous circumstances to obtain absolute pose of a manipulated object202(only partially shown here for reasons of clarity) that moves in a real three-dimensional environment204. Environment204is described by global or world coordinates (Xo,Yo,Zo). Their origin (0,0,0) is chosen as the reference location for apparatus200with respect to which the absolute pose or series of absolute poses at different measurement times tiare expressed.

Environment204is an outdoor environment with ambient light220provided by the sun over the usual solar spectral range Δλamb. A certain number n of invariant features B1-Bn are affixed at known locations in environment204. Vectors b1-bn are employed to describe the locations of corresponding invariant features B1-Bn in world coordinates (Xo,Yo,Zo). All invariant features B1-Bn are high optical contrast features, and, more specifically, they are IR LEDs for emitting electromagnetic radiation or light222in the infrared range of the electromagnetic spectrum.

When invariant features are embodied by light sources that are controlled they will be referred to as beacons. Beacons are preferably one-dimensional or point-like and they are implemented by light emitting diodes (LEDs), laser diodes, IR LEDs, optical fibers and the like. Of course, beacons can also be extended sources such as lamps, screens, displays and other light sources as well as any objects providing sufficiently high levels of electromagnetic radiation that can be controlled. These include projected points and objects, as well as points and objects concentrating and reflecting radiation originating in environment 204 or active illumination from on-board manipulated object 202. The advantage of beacons over simple and uncontrolled light sources is that they are distinguishable.

It is the emission pattern of beacons B1-Bn that is controlled in the present embodiment. Hence, they are distinguishable and play the role of beacons. The emission pattern of beacons B1-Bn is dictated by locations b1-bn at which they are affixed in environment204and their on/off timing. In other words, the emission pattern is spatially set by placing beacons B1-Bn at certain locations and it is temporally varied by turning the beacons on and off at certain times.

Beacons B1, B2, . . . , Bn are controlled by corresponding controls C1, C2, . . . , Cn and a central unit 224 that communicates with the controls. The communications between unit 224 and controls C1, C2, . . . , Cn are carried by wireless up-link and down-link signals 226A, 226B. Of course, any method of communication, including wired or optical, can be implemented between central unit 224 and controls C1, C2, . . . , Cn. Different communication equipment will typically require different supporting circuitry, as will be appreciated by those skilled in the art. Taken together, controls C1, C2, . . . , Cn and unit 224 form an adjustment mechanism 228 for setting or adjusting a sequenced emission pattern of IR LEDs B1, B2, . . . , Bn. In other words, adjustment mechanism 228 is capable of modulating all IR LEDs B1-Bn in accordance with a pattern.
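By way of example only, one simple sequenced emission pattern turns on exactly one beacon per time slot, so that any light detected in a slot can be attributed to a known beacon. The Python sketch below generates such a pattern; the generator name and the tuple-of-booleans representation are assumptions made for illustration and say nothing about how controls C1-Cn are actually driven.

```python
def one_at_a_time_pattern(n_beacons, cycles=1):
    """Yield on/off states for each time slot, one beacon lit per slot.

    Each yielded tuple has n_beacons entries; entry i is True when beacon
    B(i+1) is on during that slot.
    """
    for _ in range(cycles):
        for active in range(n_beacons):
            yield tuple(i == active for i in range(n_beacons))

# One cycle over three beacons: B1 on, then B2 on, then B3 on.
slots = list(one_at_a_time_pattern(3))
```

Other patterns, including ones with several beacons on at once, fit the same representation by yielding tuples with more than one True entry.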

Object202has an on-board optical measuring arrangement206consisting of an absolute motion detection component208. Component208is a light-measuring component with a lens210and an optical sensor212. Light-measuring component208has an optical filter216positioned before sensor212, as well as image processing electronics218connected to sensor212. As in the prior embodiment, lens210is preferably a wide field of view lens with a substantially single viewpoint214. Viewpoint214is selected as the reference point on manipulated object202for expressing the location parameters (x,y,z) of its absolute pose and its orientation parameters (φ,θ,ψ). Hence, vector Goin this embodiment extends from world origin (0,0,0) to viewpoint214.

Once again, the absolute pose of object 202 in this embodiment is expressed in the Euler rotated object coordinates (X,Y,Z), whose origin is now attached to viewpoint 214. The manner in which rotations by Euler angles (φ,θ,ψ) are applied to object 202 to express the Euler rotated object coordinates (X,Y,Z) is analogous to the convention explained above and will therefore not be repeated.

The choice of viewpoint214of lens210as the reference point is very convenient for tracking object202and it does not limit the choice of object coordinates, as will be appreciated by those skilled in the art. As before, the absolute pose of object202is completely described by six parameters, namely the three components (x,y,z) of displacement vector Gofrom the origin of global coordinates (Xo,Yo,Zo) to the reference point, in this case viewpoint214, and the three Euler angles (φ,θ,ψ). A trajectory230of object202is thus fully described by these six parameters and time t, i.e., (x,y,z,φ,θ,ψ,t).

Notice that lens210, although shown as a single element in the previous and current embodiments can be compound. In other words, lens210can consist of several optical elements including various combinations of refractive and reflective elements. In any of these embodiments, the effective viewpoint214can be determined and chosen as reference point on object202.

Optical sensor 212 of absolute motion detection component 208 is a photosensor designed for sensing light 222 from IR LEDs B1-Bn. In fact, rather than being a sensor with an array of pixels, photosensor 212 is a centroid sensing device or the so-called position-sensing device (PSD) that determines a centroid of the flux of light 222 impinging on it.

Lens210has a field of view sufficiently large to capture electromagnetic radiation or light222emitted by most or all beacons B1-Bn and image it onto on-board centroid sensing device or PSD212. Mathematically, it is known that to infer the absolute pose of object202, i.e., to infer or measure the values of all parameters (x,y,z,φ,θ,ψ) of object202in environment204, at least four among distinguishable beacons B1-Bn need to be in the field of view of lens210.

Optical filter216placed before PSD212reduces the level of ambient light220impinging on PSD212. Concurrently, the wavelengths of electromagnetic radiation or light222provided by LEDs B1-Bn are selected such that they are passed by filter216. In the present case, ambient radiation220is produced by the sun and spans an emission spectrum Δλamb., whose intensity (I) peaks in the visible range and drops off in the infrared range as generally shown by graph250inFIG. 8A. Consequently, it is advantageous to select the wavelengths λ1, λ2, . . . , λnof electromagnetic radiation222emitted by LEDs B1-Bn to reside in an infrared range252.

It is optional whether all wavelengths λ1, λ2, . . . , λnare different or equal. In some embodiments, different wavelengths can be used to further help differentiate between IR LEDs B1-Bn. In the present embodiment, however, all IR LEDs B1-Bn are emitting at the same emission wavelength λeequal to 950 nm. A transmittance (T) of filter216is selected as shown by graph254inFIG. 8B, so that all wavelengths in infrared range252, including λein particular pass through. Wavelengths in the far infrared range upwards of 1,000 nm where ambient radiation220is even weaker can also be used if a higher signal to background ratio is desired.

Returning toFIG. 7, we see how electromagnetic radiation222at the wavelength of 950 nm emitted by beacon B4passes filter216and is imaged onto PSD212. PSD212can be selected from a large group of candidates including, for example, devices such as semiconductor-type position sensitive detectors (PSDs), optical waveguide-based position sensitive detectors and organic material position sensitive detectors. In the present embodiment, device212is a semiconductor-type position sensitive detector (PSD) employing a reverse biased p-n junction.

Lens 210 produces an imaged distribution 232 of electromagnetic radiation 222 on PSD 212. PSD 212, in turn, generates electrical signals that represent the x-y position of a center-of-mass or centroid 234 of imaged distribution 232 in the x-y plane of PSD 212. In the present case, IR LED B4 is a point-like source of electromagnetic radiation 222 and therefore lens 210 images it to a spot-type distribution 232. In general, it is desirable to keep spot 232 relatively small by appropriate design of lens 210, which is preferably a lens with good imaging properties including low aberration, single viewpoint imaging and high-performance modulation transfer function (MTF). In general, however, optic 210 can be refractive, reflective or catadioptric.

For a better understanding of PSD212we turn to the plan view diagram of its top surface236shown inFIG. 9. To distinguish coordinates in the image plane that is coplanar with top surface236of PSD212, the image coordinates are designated (Xi,Yi). Note that the field of view (F.O.V.) of lens210is designated in a dashed line and is inscribed within the rectangular surface236of PSD212. This means that the entire F.O.V. of lens210is imaged onto PSD212. In an alternative embodiment, the F.O.V. may circumscribe surface236, as indicated in the dashed and dotted line. Under this condition, the image of some beacons may not fall on the surface of PSD212. Thus, the information from these beacons will not be useful in optically inferring the absolute pose of object202.

PSD212has two electrodes238A,238B for deriving signals corresponding to the x-position, namely xi+and xi−, and two electrodes238C,238D for obtaining yi+and yi−signals corresponding to the y-position. The manner in which these signals are generated and processed to obtain the location (xi,yi) of centroid234is well-known to those skilled in the art and will not be discussed herein. For more information on the subject the reader is referred to manufacturer-specific PSD literature, such as, e.g., “PSD (Position Sensitive Detector)” Selection Guide of Hamamatsu, Solid State Division, July 2003.
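For orientation only, the textbook relation for a lateral-effect PSD estimates each centroid coordinate from the normalized difference of the two opposing electrode signals, scaled by half the length of the active area. The Python sketch below applies that relation; it is an idealized approximation that ignores device-specific nonlinearity, the active-area lengths are hypothetical inputs, and manufacturer literature should be consulted for the exact formula of a given device.

```python
def psd_centroid(x_plus, x_minus, y_plus, y_minus, length_x, length_y):
    """Estimate centroid (xi, yi) relative to the center of the PSD surface.

    ASSUMPTION: idealized lateral-effect relation
        xi = (length_x / 2) * (x_plus - x_minus) / (x_plus + x_minus)
    and likewise for yi. Returns None when either signal pair sums to zero
    or less, i.e., when no usable light is detected.
    """
    sum_x = x_plus + x_minus
    sum_y = y_plus + y_minus
    if sum_x <= 0 or sum_y <= 0:
        return None
    xi = 0.5 * length_x * (x_plus - x_minus) / sum_x
    yi = 0.5 * length_y * (y_plus - y_minus) / sum_y
    return (xi, yi)
```

Dividing by the sum of the signals makes the estimate largely independent of the total light level, so a dimmer or more distant beacon yields the same position as a brighter one at the same image location.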

The intensities232X,232Y of imaged distribution232, i.e., spot232, along the Xiand Yiaxes are visualized along the sides. Another imaged distribution240due to ambient radiation220is also indicated with a dashed line. Corresponding intensities240X,240Y along the Xiand Yiaxes are also visualized along the sides. Because of the action of filter216, intensities240X,240Y are low in comparison to232X,232Y and the corresponding centroid position thus includes a negligibly small shift error due to the background noise on the desired signal. Such background can be removed with any well-known electronic filtering technique, e.g., standard background subtraction. Corresponding electronics are known and will not be discussed herein.

PSD212is connected to image processing electronics218and delivers signals xi+, xi−, and yi+, yi−to it. Electronics218are also in communication with central unit224by any suitable link so that it knows which beacon is active (here beacon B4) and thus responsible for centroid234at any given time. It is convenient to establish the link wirelessly with up-link and down-link signals226A,226B, as shown inFIG. 7.

During operation, optical apparatus200uses the knowledge of which beacon produces centroid234described by image coordinates (xi,yi) and the beacon's location in environment204or world coordinates (Xo,Yo,Zo) to infer the absolute pose of object202in terms of measured values of parameters (x,y,z,φ,θ,ψ). Note that beacons B1-Bn need not be attached or affixed at any permanent location in environment204, as long as their location at the time of emission of radiation222is known to apparatus200. Moreover, any sequenced pattern of beacons B1-Bn can be used, even a pattern calling for all beacons B1-Bn to be on simultaneously. In the latter case, a constellation of n spots is imaged on PSD212and centroid234is the center of mass (C.O.M.) of the entire constellation of n spots232, i.e., it is not associated with a single spot. Of course, in that case the ability to distinguish the beacons is removed and the performance of apparatus200will be negatively affected.
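The loss of distinguishability when all beacons are on simultaneously can be illustrated numerically. Treating the PSD as reporting the flux-weighted center of mass of everything imaged onto it, the Python sketch below (with a hypothetical function name and made-up spot positions) shows that two different constellations can produce the same centroid 234.

```python
def combined_centroid(spots):
    """Flux-weighted center of mass of several imaged spots.

    spots is a list of (xi, yi, flux) tuples with positive total flux.
    """
    total = sum(flux for _, _, flux in spots)
    cx = sum(x * flux for x, _, flux in spots) / total
    cy = sum(y * flux for _, y, flux in spots) / total
    return (cx, cy)

# Two different (made-up) constellations that yield the same single reading.
a = combined_centroid([(-1.0, 0.0, 1.0), (1.0, 0.0, 1.0)])
b = combined_centroid([(0.0, -2.0, 1.0), (0.0, 2.0, 1.0)])
```

The same weighted-mean relation also describes the small shift that residual ambient background introduces into the centroid of a single spot: the weaker the background flux relative to the beacon flux, the smaller the shift.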

For better clarity of explanation, we first consider a modulation or sequenced pattern with only one beacon on at a time. Following such pattern, beacon B4is turned off and beacon Bm is turned on to emit radiation222. Note that an intensity distribution242of radiation222has a wide cone angle such that lens210can image radiation222even at steep angles of incidence. Alternatively, given knowledge of all possible relative positions between object202and beacon Bm, a mechanism can be provided to optimize angular distribution242for capture by lens210.

To commence motion capture, controls C1-Cn and unit 224, i.e., adjustment mechanism 228, implement an initial sequenced pattern of IR LEDs B1-Bn. The initial pattern can be provided by image processing electronics 218 to unit 224 of adjustment mechanism 228 via up-link signals 226A. The initial pattern can be based on any parameter of the last known or inferred absolute pose or any other tracking information. Alternatively, the initial sequenced pattern is a standard one.

A flow diagram inFIG. 10illustrates the steps of an exemplary absolute pose and motion capture program270implemented by image processing electronics218and mechanism228. Algorithm270commences with activation of initial modulation according to sequenced pattern272for one cycle and synchronization of electronics218with mechanism228in step274. This is done by matching signals xi+, xi−, and yi+, yi−delivered by PSD212to electronics218with each active beacon as individual beacons B1, B2, . . . , Bn are turned on and off by controls C1, C2, . . . , Cn in accordance with initial sequenced pattern. Drop out of any one beacon is tolerated, as long as synchronization with at least four beacons is confirmed for absolute pose capture or fewer than four but at least one for relative pose determination.
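The drop-out rule stated above maps directly onto a small decision function. The following Python sketch is illustrative only; the function name and the returned labels are hypothetical.

```python
def tracking_mode(n_synchronized):
    """Choose a capture mode from the number of synchronized beacons.

    At least four synchronized beacons allow absolute pose capture; one to
    three allow only relative pose determination; none allows neither.
    """
    if n_synchronized >= 4:
        return "absolute"
    if n_synchronized >= 1:
        return "relative"
    return "none"
```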

Motion capture starts in step 276. In step 278 signals xi+, xi−, and yi+, yi− encoding centroid 234 of the activated beacon are sent from PSD 212 to electronics 218 for processing. In step 280 signals are tested for presence (sufficient power level for further processing) and are then filtered in step 282 to obtain filtered data corresponding to centroid 234. Filtering includes background subtraction, signal gain control including lock-in amplification and/or other typical signal processing functions. Absence of signals xi+, xi−, and yi+, yi− is used to flag the corresponding beacon in step 284.
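A minimal sketch of the presence test, background subtraction and beacon flagging described above follows. It assumes that "presence" is judged by the summed signal level against a threshold `min_power`. The function name, the threshold and the use of a set for flagged beacons are illustrative assumptions, and gain control and lock-in amplification are omitted.

```python
def capture_step(beacon_id, signals, background, min_power, flagged):
    """Test one beacon's PSD signals for presence, then subtract background.

    signals and background are tuples (x_plus, x_minus, y_plus, y_minus).
    Returns the background-subtracted tuple, or None if the beacon is flagged.
    All names here are hypothetical.
    """
    # Presence test: is there enough total signal for further processing?
    if sum(signals) < min_power:
        flagged.add(beacon_id)  # absence of signal flags the beacon
        return None
    # Filtering, reduced here to background subtraction only.
    return tuple(s - b for s, b in zip(signals, background))


flagged = set()
print(capture_step("B2", (2.0, 1.0, 1.5, 1.5), (0.5, 0.5, 0.5, 0.5), 1.0, flagged))
```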

After filtering, the data is normalized in step 286. This step involves time-stamping, removing effects of known optical aberrations due to lens 210 and preparing the data for processing by either absolute or relative tracking or navigation algorithms. Normalization also formats data points from each cycle and may include buffering the data, if necessary, while centroid 234 from the next beacon in the pattern is queued up or buffering until a sufficient number of centroids 234 have been captured to perform reliable normalization. In a preferred embodiment, beacons B1, B2, . . . , Bn are amplitude modulated with a series of pulses. In this embodiment, normalization further includes selection of the pulse with the most suitable amplitude characteristics (e.g., full dynamic range but no saturation) and discarding signals from other pulses.
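One possible reading of the pulse selection in the preferred embodiment is to keep the strongest pulse that stays below the sensor's saturation level. The sketch below makes that assumption explicit. The function name and the single scalar `saturation_level` are hypothetical, and the specification does not define "most suitable" beyond the example given.

```python
def select_pulse(pulse_amplitudes, saturation_level):
    """Pick the largest pulse amplitude that does not reach saturation.

    Assumed criterion: the best pulse uses as much dynamic range as possible
    without saturating. Returns None when every pulse is saturated.
    """
    unsaturated = [a for a in pulse_amplitudes if a < saturation_level]
    if not unsaturated:
        return None
    return max(unsaturated)  # signals from all other pulses are discarded


print(select_pulse([0.2, 0.9, 1.0, 0.6], saturation_level=1.0))
```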

In step 288 normalized data of centroid 234 is sent to a tracking or navigation algorithm 290. Contemporaneously, or earlier depending on timing and buffering requirements, absolute pose and motion capture program 270 submits a query 292 whether the first cycle of the initial sequenced pattern is complete. The answer is used by navigation algorithm 290 in determining at least one parameter of the pose of object 202 and to prepare for capturing the next centroid in step 294.

Navigation algorithm 290 preferably determines all parameters (x,y,z,φ,θ,ψ) at initialization time tinit. in global coordinates (Xo,Yo,Zo) based on known locations of beacons B1, B2, . . . , Bn, i.e., known vectors b1, b2, . . . , bn. Only centroids 234 that are available (i.e., no drop out of corresponding beacon or other failure) and yield reliable centroid data are used. At least four centroids 234 need to be captured from the initial sequenced pattern to measure the values of parameters (x,y,z,φ,θ,ψ) in world coordinates (Xo,Yo,Zo). The pose is called absolute when all parameters are known in global coordinates (Xo,Yo,Zo) at a given time, e.g., at tinit. Navigation using absolute pose or at least one parameter of absolute pose is referred to as absolute tracking or absolute navigation.

In a particular embodiment, beacons B1, B2, . . . , Bn are positioned on a plane in a rectangular grid pattern and parameters (x,y,z,φ,θ,ψ) are inferred or measured based on projective, i.e., perspective geometry. In this approach the rules of perspective geometry using the concept of vanishing points lying on a horizon line are applied to determine the location of point of view 214. Specifically, given the locations of at least four coplanar beacons lying on at least three straight intersecting lines framing a rectangular grid in the field of view F.O.V. of lens 210, absolute navigation algorithm 290 defines a horizon and finds conjugate vanishing points from which point of view 214 is determined. Once point of view 214 is known, parameters (x,y,z,φ,θ,ψ) of object 202 are inferred or measured. Initially, point of view 214 is the origin or reference point at (x,y,z). As mentioned above, any other point on object 202 can be used as a reference point based on a coordinate transformation. The perspective geometry and vector algebra necessary to perform absolute navigation are known to skilled artisans of optical image processing and will not be discussed herein. For more details, the reader is referred to K. Kanatani, “Geometric Computation for Machine Vision”, Oxford Science Publications; Clarendon Press, Oxford; 1993, Chapters 2-3 and to U.S. Pat. No. 7,203,384 to Carl.
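As an illustration of the vanishing-point step only, the sketch below intersects the images of opposite sides of one rectangular grid cell using homogeneous coordinates. It is a generic textbook construction, not the algorithm of the cited references. All function names are hypothetical, and recovering point of view 214 from the vanishing points additionally requires the camera parameters, which are not modeled here.

```python
def cross(a, b):
    """Cross product of two 3-vectors (homogeneous points or lines)."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def line_through(p, q):
    """Homogeneous line through two image points (x, y)."""
    return cross((p[0], p[1], 1.0), (q[0], q[1], 1.0))


def intersection(l1, l2):
    """Intersection of two homogeneous lines, or None if parallel in the image."""
    x, y, w = cross(l1, l2)
    if abs(w) < 1e-12:
        return None  # vanishing point at infinity
    return (x / w, y / w)


def vanishing_points(c00, c10, c11, c01):
    """Vanishing points of the two side directions of an imaged rectangle.

    Corners are the centroids of four beacons, listed in order around the cell.
    The horizon is the line through both points when both are finite.
    """
    v1 = intersection(line_through(c00, c10), line_through(c01, c11))
    v2 = intersection(line_through(c00, c01), line_through(c10, c11))
    return v1, v2


# Imaged cell that appears as a trapezoid: one pair of sides stays parallel.
print(vanishing_points((0.0, 0.0), (4.0, 0.0), (3.0, 2.0), (1.0, 2.0)))
```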

In embodiments where a large number of beacons are used and are available (low drop out), the rules of perspective geometry can be employed to filter beacons that are non-conformant therewith. In other words, the perspective geometry constraint can be used as an additional filter for high-precision absolute tracking or navigation.

Absolute pose expressed with inferred or measured values of parameters (x,y,z,φ,θ,ψ) computed by image processing electronics 218 at initial time tinit. in step 290 is used to update trajectory 230 during pose update step 296. Depending on the motion of object 202 and required resolution or accuracy for trajectory 230, the centroid capture rate and time between determinations of absolute pose should be adjusted. At high-speed capture rates absolute navigation algorithm 290 can keep updating parameters (x,y,z,φ,θ,ψ) in a continuous fashion based on at least four most recently captured centroids or even as each successive centroid is obtained. This can be accomplished by substituting the most recently captured centroid for the oldest centroid. Computed trajectory 230, expressed with absolute pose parameters and time (x,y,z,φ,θ,ψ,t), is output in step 298 to an application in the form of a subset. The subset may contain all or fewer than all of the parameters (x,y,z,φ,θ,ψ,t), depending on the requirements of the application.
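The substitution of the most recently captured centroid for the oldest one behaves like a fixed-length sliding window, and the output in step 298 like a projection onto the parameters an application asks for. The sketch below illustrates both under those assumptions. `CentroidWindow`, `select_output` and the dictionary keys are hypothetical names, and the pose computation itself is not shown.

```python
from collections import deque


class CentroidWindow:
    """Keeps the most recently captured centroids (four by default)."""

    def __init__(self, size=4):
        self._buf = deque(maxlen=size)  # appending to a full deque drops the oldest

    def add(self, beacon_id, centroid):
        self._buf.append((beacon_id, centroid))

    def ready(self):
        """True once enough centroids are held for an absolute pose update."""
        return len(self._buf) == self._buf.maxlen

    def contents(self):
        return list(self._buf)


def select_output(pose, wanted):
    """Return only the pose parameters the application requires."""
    return {name: pose[name] for name in wanted}


window = CentroidWindow()
for i in range(5):
    window.add(f"B{i + 1}", (float(i), 0.0))
print([beacon for beacon, _ in window.contents()])
```

A three-dimensional digitizer, for instance, might request only `("x", "y", "z", "t")` from `select_output`.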

The application requires knowledge of the movements of object 202 for operation, feedback, input, control or other functions. The application has a control mechanism that initiates and terminates operation of the motion capture program via control command 300. In several advantageous applications object 202 is a hand-held object that is manipulated directly by the user and trajectory 230 is used as input for the application, as will be addressed in more detail below.

Preferably, upon completion of one cycle of the initial sequenced pattern a re-evaluation is performed in step 302. During re-evaluation beacons flagged during step 284 are removed from the data set or the optimized sequenced pattern to speed up operation. Beacons that fail in filtering or normalization steps 282, 286 may be adjusted or left out as well. Finally, any high quality beacons as determined by tracking or navigation algorithm 290 can be used for benchmarking or weighting. Of course, these decisions can be periodically re-checked to ensure that beacons yielding high quality data at a different pose are not turned off permanently. Additionally, intermittent background measurements are made with all beacons off at regular intervals or on an as-needed basis for background subtraction.

Alternatively, optimization and re-evaluation of the sequenced pattern is performed on-the-fly. In this case the initial cycle does not need to be completed and information from some beacons, e.g., those in the latter portion of the cycle, may be disregarded altogether.

In a preferred embodiment of the method, the sequenced pattern of emission of radiation 222 by the beacons is controlled based on the one or more absolute pose parameters determined by tracking or navigation algorithm 290. The control can be a temporal control as in when the beacons are on, or spatial control of which beacons should be used and/or which beacons should be relocated and affixed at new locations in the environment. To this effect, in step 304 an optimized sequenced pattern is prepared based on the re-evaluation from step 302. If the application issues request 306 for further output from motion capture program 270, then the optimized sequenced pattern is activated in step 308 and the cycle of centroid capture re-starts at step 278. Otherwise, the motion capture program is terminated in step 310.
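Preparation of the optimized sequenced pattern can be pictured as filtering the current pattern by the results of re-evaluation. The sketch below covers only the removal of flagged or failed beacons. The function name and arguments are illustrative assumptions, and weighting of high quality beacons and periodic re-checking are left out.

```python
def optimize_pattern(pattern, flagged, failed=()):
    """Return the sequenced pattern without flagged or failed beacons.

    pattern is an ordered list of beacon identifiers; flagged holds beacons
    flagged for absent signals, failed those that failed filtering or
    normalization. All names are hypothetical.
    """
    excluded = set(flagged) | set(failed)
    return [beacon for beacon in pattern if beacon not in excluded]


initial_pattern = ["B1", "B2", "B3", "B4", "B5"]
print(optimize_pattern(initial_pattern, flagged={"B2"}, failed={"B5"}))
```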

In an alternative embodiment, motion capture program 270 employs an absolute navigation algorithm 290 that only determines a subset of absolute pose parameters (x,y,z,φ,θ,ψ). In one example, only (x,y,z) parameters defining the position of point of view 214 (vector Go) or some other reference point on object 202 are determined. These parameters can be used when orientation parameters (φ,θ,ψ) are not required by the application. An example of such an application is a three-dimensional digitizer. In another example, only orientation parameters (φ,θ,ψ) of the pose of object 202 are determined. These can be used by an application that requires only orientation or angle information for its input or control functions, e.g., when object 202 is a remote pointer, joystick, three-dimensional controller, pointer, other hand-held object or indeed any object in need of angular tracking or navigation only.

In still another alternative embodiment, motion capture program 270 employs a relative navigation algorithm 290′ that only determines changes in some or all parameters (Δx,Δy,Δz,Δφ,Δθ,Δψ). For example, navigation algorithm 290′ determines linear and/or angular velocities

$$\left(\frac{dx}{dt},\ \frac{dy}{dt},\ \frac{dz}{dt},\ \frac{d\phi}{dt},\ \frac{d\theta}{dt},\ \frac{d\psi}{dt}\right),$$

accelerations or higher order rates of change, such as jerk, of any absolute pose parameter or combinations thereof. It should be noted that absolute pose may not be inferred or measured at all by relative navigation algorithm 290′. Thus, the rates of change may be the results of variations of unknown combinations of absolute pose parameters. Relative navigation algorithm 290′ is advantageous for applications that do not require knowledge of trajectory 230 but just rates of change. Such applications include navigation of relative hand-held devices such as two-dimensional mice, three-dimensional mice, relative mouse-pens and other low-accuracy controls or relative input devices.
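When the changes (Δx,Δy,Δz,Δφ,Δθ,Δψ) over a known time interval are available, the velocities can be approximated by a finite difference. The sketch below assumes exactly that. The function name and the fixed interval `dt` are illustrative, and the specification does not prescribe how the rates are computed.

```python
def velocities(deltas, dt):
    """Approximate linear and angular velocities from parameter changes.

    deltas is (dx, dy, dz, dphi, dtheta, dpsi) measured over the interval dt.
    Finite-difference approximation assumed for illustration only.
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    return tuple(d / dt for d in deltas)


print(velocities((0.5, 0.0, -1.0, 0.0, 0.25, 0.0), dt=0.5))
```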

Apparatus 200 is inherently low-bandwidth, since PSD 212 reports just four values, namely (xi+,xi−,yi+,yi−) corresponding to the location of centroid 234 produced by one or more known beacons. The intrinsically high signal-to-noise ratio (SNR) of centroid 234 due to low background noise allows apparatus 200 to operate at high capture rates, e.g., up to 10 kHz and higher, rendering it ideal for tracking fast moving objects. In fact, apparatus 200 is sufficiently robust to navigate even rapidly moving hand-held objects, including pointers, controllers, mice, high-precision gamer instruments, jotting implements and the like in close-range environments or constrained areas such as desks, hand-held notepads, point-of-sale environments and various game- and work-spaces.
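The specification does not give the relation between the four reported values and the centroid coordinates. A normalization commonly used for lateral-effect PSDs is the difference of opposing signals divided by their sum, scaled by half the sensor width. The sketch below assumes that relation, and both the function name and the `half_width` parameter are hypothetical.

```python
def psd_centroid(x_plus, x_minus, y_plus, y_minus, half_width=1.0):
    """Estimate centroid (xi, yi) from the four PSD signals.

    Assumed relation: position = half_width * (difference / sum) per axis.
    This is a common PSD convention, not a formula taken from the text.
    """
    sum_x = x_plus + x_minus
    sum_y = y_plus + y_minus
    if sum_x <= 0 or sum_y <= 0:
        raise ValueError("no signal on at least one axis")
    xi = half_width * (x_plus - x_minus) / sum_x
    yi = half_width * (y_plus - y_minus) / sum_y
    return xi, yi


print(psd_centroid(3.0, 1.0, 2.0, 2.0))
```

Dividing by the sum makes the estimate independent of the overall spot intensity, which is one reason only four values per sample need to be reported.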

Optical navigation apparatus 200 admits of many more specific embodiments. First and foremost, centroid sensing device 212 can use various physical principles to obtain the centroid of imaged distribution 232 of electromagnetic radiation 222 (and ambient radiation 220). A person skilled in the art will recognize that even a regular full field sensor, e.g., a digital CMOS sensor, can act as centroid sensing device 212. In general, however, the use of a standard full-frame capture CMOS sensor with a large number of individual pixels will not be very efficient. That is due to the large computational burden associated with processing large numbers of image pixels and lack of intrinsic facility in centroid sensing. In addition, fast motion capture and high frame rates required for navigating hand-held objects with on-board optical measuring arrangement are not compatible with the high-power and large bandwidth requirements of digital CMOS sensors.

Optical apparatus 200 for processing pose data can employ many other types of centroid sensing devices as PSD 212. Some examples of such devices can be found in U.S. Patent Application 2007/0211239 to Mandella et al. A particularly convenient centroid sensing device has circular and planar geometry conformant to the naturally circular F.O.V. of lens 210. FIG. 11 shows such a circular PSD 350 of the semiconductor type in which the field of view F.O.V. is conformant with a sensing surface 352 of PSD 350. In this embodiment four of beacons B1-Bn are active at the same time and produce an imaged intensity distribution 354 that is a constellation of four spots 232A, 232B, 232C and 232D at four locations in the image plane of PSD 350. A center of mass (C.O.M.) of constellation 354 at the time of detection is designated with a cross and depends on the relative positions and intensities of spots 232A-D.

The circular geometry of PSD 350 enables operation in polar coordinates (R,θ). In this convention each of four spots 232A, 232B, 232C and 232D has a centroid 234A, 234B, 234C and 234D described by polar coordinates (R1,θ1), (R2,θ2), (R3,θ3) and (R4,θ4). However, due to its principles of operation PSD 350 reports to electronics 218 only polar coordinates (Rc,θc) of the C.O.M.
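The dependence of the C.O.M. on the positions and intensities of the spots can be illustrated with an intensity-weighted average computed in Cartesian coordinates and converted back to polar form. This is a generic center-of-mass calculation offered as a sketch. The function name and the `(R, theta, intensity)` tuple layout are assumptions.

```python
import math


def constellation_com(spots):
    """Intensity-weighted center of mass of spots given as (R, theta, intensity).

    Returns polar coordinates (Rc, theta_c), with theta_c in radians.
    """
    total = sum(intensity for _, _, intensity in spots)
    if total <= 0:
        raise ValueError("total intensity must be positive")
    x = sum(i * r * math.cos(t) for r, t, i in spots) / total
    y = sum(i * r * math.sin(t) for r, t, i in spots) / total
    return math.hypot(x, y), math.atan2(y, x)


# Four equally bright spots placed symmetrically: the C.O.M. falls at the center.
symmetric = [(1.0, k * math.pi / 2, 1.0) for k in range(4)]
print(constellation_com(symmetric)[0])
```

Because only (Rc,θc) is reported, different constellations can produce the same reading, which is consistent with the earlier remark that the ability to distinguish beacons is lost when all are on at once.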

A set of dashed arrows shows the movement of centroids 234A, 234B, 234C and 234D and C.O.M. as a function of time. Note that applying optical flow without inferring or measuring the absolute pose of object 202 indicates an overall rotation and can be used as input for any relative motion device, e.g., an optical mouse. In such functional mode, absolute motion component 208 operates as an auxiliary motion component and more precisely an optical flow measuring unit that determines relative motion. Relative motion information obtained from optical flow can be very valuable and it can supplement absolute pose data in certain cases. For example, it can be used to interpolate motion of object 202 between times ti when absolute pose is inferred or measured.

In the last step, absolute pose data 248 consisting of all absolute pose parameters (x,y,z,φ,θ,ψ) are transmitted to an application running on control unit 224 via a wireless communication link 244 using a transceiver 246A on-board object 202 and a transceiver 246B on unit 224. In this embodiment unit 224 is running a monitoring application to supervise manipulated object 202 without displaying any output.