U.S. Pat. No. 8,547,396

SYSTEMS AND METHODS FOR GENERATING PERSONALIZED COMPUTER ANIMATION USING GAME PLAY DATA

Assignee: Individual

Issue Date: December 31, 2007

Abstract

Systems, methods, and computer storage media for generating a computer animation of a game. A custom animation platform receives game play data of the game and determines at least one scene based on the game play data. Then, one or more frames in the scene are set up, where at least one of the frames includes at least one non-game pre-production element of the game. Subsequently, the frames are rendered and the rendered frames are combined to generate a computer animation.

Description

DETAILED DESCRIPTION OF THE PREFERRED EMBODIMENTS

The following detailed description is of the best currently contemplated modes of carrying out the invention. The description is not to be taken in a limiting sense, but is presented merely for the purpose of illustrating the general principles of the invention, since the scope of the invention is best defined by the appended claims.

Referring now to FIG. 1, there is shown at 100 a schematic diagram of a system environment in accordance with one embodiment of the present invention. As depicted, the system 100 may include a custom animation platform 101; game devices 130, 150, 156, and 164; a game developer's platform 132; a posting board 140; and an advertiser's platform 136, which may be connected to a network 118. The network 118 may include any suitable connections for communicating electrical signals therethrough, such as WAN, LAN, or the Internet.

The custom animation platform 101 includes a personalized computer animation generation engine 102 (hereinafter, animation engine) and data storage 104 coupled to the animation engine 102 and storing pre-production items 106, game play data 112 (hereinafter, play data), game information 116, and gamer information 113. The custom animation platform 101 may be a computer or any other suitable electronic device for running the animation engine 102 therein. For the purpose of illustration, the data storage 104 is shown to be included in the custom animation platform 101. However, it should be apparent to those of ordinary skill that the data storage 104 may be physically located outside the custom animation platform and coupled to the animation engine 102 directly or via the network 118.

The game device 164 includes: a display 166 for displaying visual images to a user of the device (hereinafter, game player); a storage or memory for storing custom art 168; and a storage or memory for storing game play data 170. The game device 164 may be a PC, for instance, and include peripheral devices (not shown in FIG. 1), such as a joystick or keyboard, that allow the user to interact with the game device.

The custom art 168 may include artworks generated by the game player who generates the play data 170 and/or by third parties, such as computer animation freelancers, students, studios, amateur enthusiasts, etc. By way of example, the custom art 168 may include a digital portrait of the game player, which is seen neither during play of a computer game nor through replay of game scenes generated by use of in-game pre-production items only. (In-game pre-production items will be described in detail below with reference to FIG. 2A.) The custom art 168 may be sent to and stored in the data storage 104 such that the custom art may be incorporated into personalized computer animations generated by the animation engine 102. The term personalized animation refers to a computer animation that is generated by use of game play data and includes a replay of all or a portion of the game. For instance, a personalized animation of a football game may include touchdown scenes.

The play data 170 includes records of selections made and actions taken by a game player during game play. The records may also include the time when the player starts to play the game and when certain objectives of the game are completed by the player. For accuracy and verification purposes, recorded times may be in a standard time reference, such as Coordinated Universal Time (UTC) available through Network Time Protocol (NTP). The records may further include, but are not limited to, expressions generated by the player, such as dialog input. As a game player plays a game on the device 164, the device 164 may store the game play data 170 in the device 164 or concurrently send the play data to the play data storage 112 via the network 118.
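As a purely illustrative sketch, the play data described above could be modeled as a list of UTC-stamped records. The class names, field names, and record kinds below (`PlayRecord`, `PlayData`, `"objective"`, and so on) are assumptions introduced for illustration and do not appear in the specification.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class PlayRecord:
    """One logged event; all names here are hypothetical."""
    timestamp: datetime  # always stored in UTC
    kind: str            # e.g. "selection", "action", "objective", "dialog"
    detail: str


@dataclass
class PlayData:
    player_id: str
    started_at: datetime  # time the player started the game (UTC)
    records: List[PlayRecord] = field(default_factory=list)

    def log(self, kind: str, detail: str,
            when: Optional[datetime] = None) -> PlayRecord:
        """Append a record, normalizing its timestamp to UTC."""
        when = when or datetime.now(timezone.utc)
        if when.tzinfo is None:
            raise ValueError("timestamps must be timezone-aware")
        record = PlayRecord(when.astimezone(timezone.utc), kind, detail)
        self.records.append(record)
        return record

    def time_to_objective(self, name: str) -> Optional[float]:
        """Seconds from game start until the named objective was completed."""
        for record in self.records:
            if record.kind == "objective" and record.detail == name:
                return (record.timestamp - self.started_at).total_seconds()
        return None


start = datetime(2007, 1, 1, 12, 0, tzinfo=timezone.utc)
data = PlayData("player-1", start)
data.log("selection", "sword", start + timedelta(seconds=5))
data.log("objective", "defeat monster", start + timedelta(seconds=90))
```

Rejecting naive timestamps is one way to keep every record in a single time reference, which is the property the paragraph above asks for.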

The game device 156 includes a data inputting device 158 and a storage 160 for video/audio contents. The data inputting device 158 may include a scanner that can convert a printed image into a digital image, for instance. The data inputting device 158 may also receive video and/or audio data from a third party via the network 118 and subsequently store the data into the storage 160 or send the data to the custom animation platform 101 via the network 118, or both. As in the case of the custom art 168, the video/audio data can be incorporated into personalized computer animations generated by the animation engine 102. It is noted that the game player may send the video/audio contents by off-line measures to the custom animation platform 101.

The game device 150 includes a recording medium 152 and a storage 154 for game play data. By way of example, one or more game players may play a board game, such as the Dungeons and Dragons roleplaying game, using dice and record the game play data for each player in a suitable recording medium 152, such as a PC or PDA. A dungeon master, who prepares each game session with a thorough knowledge of the game rules, presides over each game session, and serves as both storyteller and referee, may record the game play data. Then, the game player may send the recorded game play data to the custom animation platform 101 via the network 118. Alternatively, the game player may record the game play data on paper and send the play data to the custom animation platform 101 by use of suitable off-line measures, such as ground mail.

The game device 130 may be a wireless communication device, such as a cell phone or PDA, that allows a game player to play a computer game and records/sends the game play data to the custom animation platform 101 via the network 118. For brevity, only four game devices 130, 150, 156, and 164 are shown in FIG. 1. However, it should be apparent to those of ordinary skill that any suitable number of game devices may be used in the system environment 100 and more than one player may play a game simultaneously. Also, other types of game devices that have features of one or more of the devices 130, 150, 156, and 164 can be used to play a game and send game play data to the custom animation platform 101.

The game developer's platform 132, which is connected to the network 118, may be used to provide the game devices 130, 150, 156, and 164 with games 134. The game developer's platform 132 may be a computer or other suitable device that can provide tools to develop computer games for the game developers. Alternatively, the games 134 may be stored on a suitable computer storage medium, such as a CD, and sold to a game player. The game developer may generate all or part of the pre-production items of a game, such as images and sounds viewed and heard during game play, in a game development process and also send game information including the pre-production items to the custom animation platform 101, more specifically to the game information storage 116, via the network 118. The in-game pre-production items are described in detail with reference to FIG. 2A.

The posting board 140, which is connected to the network 118, may be included in a web server for displaying various contents, such as personalized computer animations generated by the animation engine 102. The posting board 140 may include World Wide Web portals, World Wide Web Logs (blogs), Internet sites, Intranet portals, and Bulletin Board Systems, for instance. A personalized computer animation of a game can be generated in real time based on game play data from a game device while the game is played on the game device. The generated animation may be sent from the custom animation platform 101 to the posting board 140 in a suitable data stream format, allowing viewers of the board to watch the live animation based on the game play on the posting board 140. The viewers can also watch the live animation on networked devices connected to the board 140.

The advertiser's platform 136, which is connected to the network 118, includes a storage 138 for advertisement contents and sends the advertisement contents to the custom animation platform 101 via the network 118. As will be discussed below, the advertisement contents 138 may be incorporated into the personalized computer animations generated by the animation engine 102. Advertisement providers can be any person, corporation, company, or partnership that provides advertisements for delivery to viewers of the personalized computer animations.

As discussed above, the data storage 104 includes game play data 112 received from the game devices 130, 150, 156, and 164 via the network 118 or other suitable off-line measures. The data storage 104 also includes gamer information 113 related to game players, such as each player's ID, password, age, preference, or the like. The custom animation platform 101 may gather the gamer information by asking the gamers to pre-register or by analyzing the game play data to understand each gamer's preferences. The gamer information 113 may be used, for instance, to determine the types of advertisement contents incorporated into personalized animations.

The pre-production items 106 may refer to, but are not limited to, all or part of elements that are used in computer animation development and production processes and are prepared prior to the production of actual animations. The pre-production items include in-game pre-production items 108 and non-game pre-production items 110. The in-game pre-production items 108 of a computer game refer to pre-production items, such as images and sounds, that are used to produce the computer game and can be seen and heard during game plays of the computer game. FIG. 2A shows in-game pre-production items of a computer game that might be included in the custom animation platform 101. As depicted, the in-game pre-production items 108 may include, but are not limited to, models 203, layout 204, animation 206, visual effects 208, lighting 210, shading 212, voices 214, sound tracks 216, and sound effects 218.

The models 203 of a computer game include characters (or avatars), stages for scenes, tools used by the characters, backgrounds, trifling articles, a world in which the characters live, or any other elements used for the visual presentation in the game. The layout 204 includes information related to the arrangements of the models 203 in the game scenes. The animation 206 refers to successive movements of each model appearing in a sequence of frames. By way of comparison, the stop-motion animation technique may be used to create an animation by physically manipulating real-world objects and photographing them one frame of film at a time to create the illusion of movement of a typical clay model. In one embodiment of the present invention, several different types of stop-motion animation technique, such as graphic animation, may be applied to create the animation 206 of each model. By the animation 206, characters are brought to life with movements.

The visual effects 208 refer to visual components integrated with computer generated scenes in order to create more realistic perceptions and intended special effects. Lighting 210 refers to the placement of lights in a scene to create mood and ambience. Shading 212 is used to describe the appearance of each model, such as how light interacts with the surface of the model at a given point and/or how the material properties of the surface of the model vary across the surface. Shading can affect the appearance of the models, resulting in intended visual perceptions. The voices 214 include voices of the characters in the game. The sound tracks (or, just tracks) 216 refer to audio recordings created or used in the game. The sound effects 218 are artificially created or enhanced sounds, or sound processes used to emphasize artistic or other contents of the animation. Hereinafter, the term sound collectively refers to the voices 214, sound tracks 216, and sound effects 218. Also, the terms sound and audio content are used interchangeably hereinafter.

It is noted that, in FIG. 2A, the in-game pre-production items 108 are shown to have nine types of items for the purpose of illustration. However, it should be apparent to those of ordinary skill that FIG. 2A does not show an exhaustive list of in-game pre-production items, nor does it imply that all in-game pre-production items can be grouped into nine types. For instance, the in-game pre-production items 108 may also include rendering parameters (not shown in FIG. 2A).

As will be further discussed below in conjunction with FIG. 3, the in-game pre-production items 108 may be used to generate images and sounds in the computer game animation. The in-game pre-production items 108 may also be used to make one or more distinct replays of animated scenes representing different plays of the same game. (Hereinafter, the term replay refers to replaying of animated scenes that represent a game play, where the animated scenes are generated by use of in-game pre-production items only.) Therefore, the in-game pre-production items 108 may need to be prepared to represent all or part of possible choices a player can make during game plays. For instance, all or part of the models 203 which can be selected by the player during game plays, and the items' appearances through particular shadings, may be prepared and stored as in-game pre-production items 108. The in-game pre-production items may be obtained from the game information 116 sent by the game developer's platform 132. Alternatively, the in-game pre-production items may be created by a third party.

FIG. 2B shows creative development items 220 of a computer game that might be created in a creative development process. The creative development items 220 include a story 222 and an art design 224. To create a game, the story 222 of the game is generated. Then, based on the story, scenarios for a computer game animation are prepared. Also, a storyboard including a cartoon-like sequence of events for each scenario is created. Art design 224 refers to selection of styles of arts throughout the game. The look and feel of the game is developed in the art design 224. The story 222 and the art design 224 for the game guide the preparation of in-game pre-production items 108.

FIG. 3 shows a flow chart 300 illustrating exemplary steps that might be carried out to generate the items 108, 220 and to produce images and sounds in a computer game animation using these items. The process begins in a state 302 to create the story 222. Then, art design 224 for the computer animation is set in a state 304. The states 302 and 304 are collectively referred to as a creative development process. Subsequently, based on the story 222 and the art design 224, the in-game pre-production items 108 are prepared in a state 306.

To produce a computer game animation, the process may proceed to a state 308. To create a frame, the models 203 are arranged according to the layout 204 in the state 308. Subsequently, in states 310 and 312, animation 206 and shading 212 are applied to the models in the frame. The art design 224 may be used to guide the steps 308 and 312. Then, the lighting 210 is selected for the frame in a state 314, and visual effects 208 are added to the frame in a state 316. Next, the frame is rendered in a state 318. Hereinafter, the term rendering refers to taking a snapshot of a frame. In a decision block 319, a determination is made as to whether all frames of the computer game animation have been rendered. If the answer to the decision block 319 is negative, the process returns to the state 308 and repeats the subsequent states to prepare and render another frame. Otherwise, the process proceeds to a state 320 to add sounds, such as voices 214, sound tracks 216, and sound effects 218.
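The per-frame loop of FIG. 3 can be sketched as follows. This is a minimal illustration under stated assumptions: the `Frame` class, the `produce_animation` function, and the string-based step log are hypothetical stand-ins for real layout, shading, and rendering work, which the specification does not define at code level.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Frame:
    """Hypothetical frame that just records which steps were applied."""
    index: int
    steps: List[str] = field(default_factory=list)
    rendered: bool = False


def produce_animation(frame_count: int, items: Dict[str, str]) -> dict:
    frames = []
    # Decision block 319: keep looping until every frame is rendered.
    for index in range(frame_count):
        frame = Frame(index)
        frame.steps.append("layout:" + items["layout"])          # state 308
        frame.steps.append("animation:" + items["animation"])    # state 310
        frame.steps.append("shading:" + items["shading"])        # state 312
        frame.steps.append("lighting:" + items["lighting"])      # state 314
        frame.steps.append("effects:" + items["visual_effects"]) # state 316
        frame.rendered = True                                    # state 318
        frames.append(frame)
    # State 320: sound is added once, after all frames are rendered.
    sound = [items["voices"], items["sound_tracks"], items["sound_effects"]]
    return {"frames": frames, "sound": sound}


items = {
    "layout": "temple", "animation": "walk", "shading": "matte",
    "lighting": "dusk", "visual_effects": "fog",
    "voices": "hero", "sound_tracks": "theme", "sound_effects": "clash",
}
result = produce_animation(3, items)
```

The step order inside the loop mirrors the state numbering in the text, and sound is deliberately handled outside the loop because state 320 is reached only after decision block 319 is satisfied.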

As discussed above, the in-game pre-production items 108 may be used to make one or more distinct replays of animated scenes representing different plays of the same game. For instance, a game player may play a game, resulting in game play data. Based on the game play data, an animated scene can be generated by repeatedly performing the steps 308-320. The animated scene may be displayed to the game player.

It will be appreciated by those of ordinary skill that the illustrated process in FIG. 3 may be modified in a variety of ways without departing from the spirit and scope of the present invention. For example, various portions of the illustrated process may be combined, be rearranged in an alternate sequence, be removed, and the like. In addition, it should be noted that the process may be performed in a variety of ways, such as by software executing in a general-purpose computer, by firmware and/or computer readable medium executed by a microprocessor, by dedicated hardware, and the like. For another example, the art design 224 may be modified upon completion of the states 306-318. Then, in accordance with the modification, all or parts of the steps 306-318 may be repeated.

As depicted in FIG. 1, the pre-production items 106 include non-game pre-production items 110. The non-game pre-production items 110 refer to pre-production items that produce images and sounds which cannot be seen or heard during game plays nor through replay of scenes generated using the in-game pre-production items 108 only. FIG. 4 shows non-game pre-production items 110 that might be included in the custom animation platform 101.

For brevity, each non-game pre-production item is not described in detail. The non-game pre-production items 110 are similar to the in-game pre-production items 108 with several differences. First, the non-game pre-production items 110 include one or more stories 401 and art designs 402 created during the creative development processes. Each story and art design corresponds to a possible choice a game player can make during a game play. For instance, one game player can choose a game character to find treasures in one sequence during a quest, while another game player can choose another sequence. The creative development process may be performed to make a different story and art design for each sequence. Second, the advertisement contents 138 received from the advertiser's platform 136 may be used as non-game pre-production items.

Third, the custom art 422 received from the game device 164 or from a third party may be used as non-game pre-production items. For instance, the custom art 422 may include images, such as the image of the gamer, and the voice of the gamer, that cannot be seen or heard during game plays or replays. The data inputting device 158 may be used to create custom art. Fourth, models 403 have images different from those shown in game plays and replays in form, shape, or color. For instance, a game character's costume or images of backgrounds included in the models 403 are different from their counterparts 203 (shown in FIG. 2A) of the in-game pre-production items 108. For another instance, the images of the models 403 can be shown in three dimensions while the images of the models 203 are shown in two dimensions, or vice versa. For yet another instance, the images of the models 203 can be constructed in three dimensions with a low number of polygons while the images of the models 403 can be constructed in three dimensions with a high number of polygons so that the images of non-game pre-production models may have enhanced visual resolutions.

Fifth, the non-game pre-production items include images or sounds additional to those included in the counterpart in-game pre-production items. For instance, images for various facial expressions of each character can be included in the non-game pre-production items 110. For another instance, additional sound effects, such as the environmental sound of birds singing, and dialogues can be included in the sound effects 418 and voices 414, respectively. Also, voices and sound effects different from those included in the in-game pre-production items 108 in duration, periodicity, pitch, amplitude, or harmonic profile can be included in the voices 414 and sound effects 418. For yet another instance, sounds from text-to-speech may be included. For still another instance, the visual effects 408 may include additional special effects, such as realistic animation of fluid flow, that cannot be displayed during the game plays or replays. For a further instance, images and sounds of game play hints and secrets of the game played and of other games can be included in the non-game pre-production items 110. For another further instance, a preview of another game may also be included in the non-game pre-production items 110. These additional images or sounds can be created from the very beginning, by modifying the in-game pre-production items 108, or by importing similar images or sounds from other games and animations.

It is noted that, in FIG. 4, the non-game pre-production items 110 are shown to have thirteen types of items for the purpose of illustration. However, it should be apparent to those of ordinary skill that FIG. 4 does not show an exhaustive list of non-game pre-production items, nor does it imply that all non-game pre-production items can be grouped into thirteen types.

FIG. 5 shows a flow chart 500 illustrating exemplary steps that may be carried out to generate the non-game pre-production items 110 of a computer game. The process starts in a state 502. In the state 502, the game information 116 may be received from the game developer's platform 132, where the game information includes, but is not limited to, scenarios of the game and in-game pre-production items developed by the game developer. As an alternative, the game information can be reverse engineered based on the game instructions or previews in the public domain.

Optionally, in states 504 and 506, the advertisement contents 138 and custom art 168 can be received from the advertiser's platform 136 and the game device 164 and stored in the non-game pre-production item storage 110 as advertisements 420 and custom art 422, respectively. The gamer information 113, such as age, gender, preference, income, or the like, may be received from a game player in an optional state 508. Various approaches may be used to obtain game player information. For instance, a questionnaire to be filled out by the game player may be sent to the game player. For another instance, the game player may be asked to provide pre-registration information. Based on the information collected in the states 502-508, the non-game pre-production items are generated and stored in the storage 110 (FIG. 4) in a state 510.

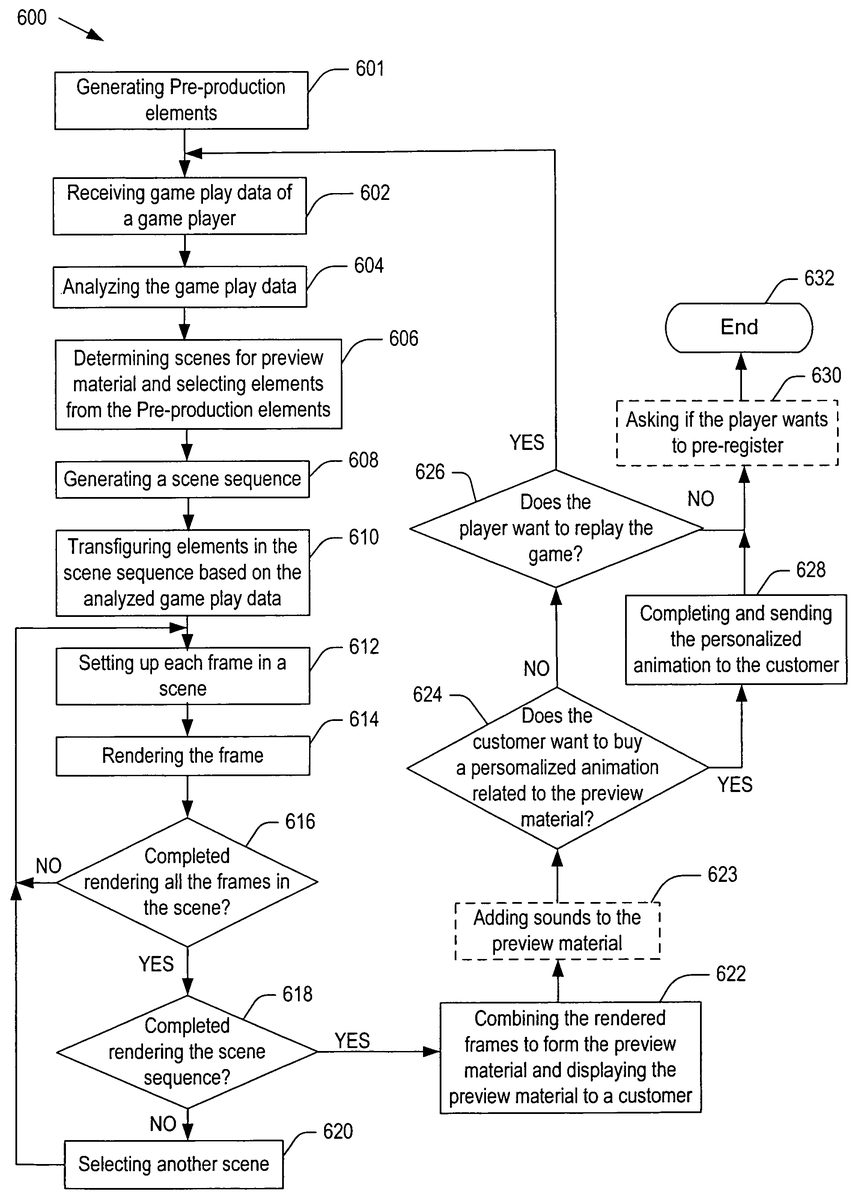

FIG. 6 shows a flow chart 600 illustrating exemplary steps that may be carried out by the animation engine 102 (shown in FIG. 1) to generate a personalized computer animation. The process starts in a state 601. In the state 601, pre-production items may be generated by the processes described in FIGS. 3 and 5 and stored in the pre-production items storage 106. Then, in a state 602, the game play data 170 may be received from one or more of the game devices 130, 150, 156, and 164 and optionally stored in the play data storage 112. Subsequently, the game play data is analyzed in a state 604. For example, a game may include a cartoon character carrying several weapons during a quest. A statistical analysis may be performed on the record of weapon selections made by the game player. For another example, grading of the game play may be done as a part of the analysis based on the time taken to complete the game, giving a higher grade to a shorter completion time. Then, the process proceeds to a state 606.
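The two analyses mentioned for state 604 can be sketched in a few lines. This is only one possible reading: the function names, the letter grades, and the time thresholds are assumptions chosen for illustration, since the specification states only that a shorter completion time earns a higher grade.

```python
from collections import Counter
from typing import Dict, Iterable, Sequence, Tuple


def weapon_usage(selections: Iterable[str]) -> Dict[str, float]:
    """Share of all weapon selections that went to each weapon."""
    counts = Counter(selections)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {weapon: count / total for weapon, count in counts.items()}


# Hypothetical thresholds in seconds; shorter completion gets a higher grade.
DEFAULT_THRESHOLDS: Sequence[Tuple[float, str]] = (
    (600, "A"), (1200, "B"), (1800, "C"),
)


def grade(completion_seconds: float,
          thresholds: Sequence[Tuple[float, str]] = DEFAULT_THRESHOLDS) -> str:
    """Return the first grade whose time limit the play meets, else 'D'."""
    for limit, letter in thresholds:
        if completion_seconds <= limit:
            return letter
    return "D"


usage = weapon_usage(["sword", "sword", "axe", "sword"])
```

Here `usage` maps each weapon to its share of selections, which is the kind of summary a later step could use to decide which elements to emphasize.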

In the state 606, based on the game play data, scenes to be included in preview material are determined and elements to make the scenes are selected from the pre-production items 106. The preview material may include discrete frames for the scenes, short animations of the scenes, or full personalized animations of the scenes. It is noted that the selected elements may include both the in-game and non-game pre-production items. Then, based on the game play data, a sequence of the scenes is generated in a state 608. Then, the process proceeds to a state 610.

In the state 610, the elements in the scene sequence may be transfigured based on the analyzed game play data. For instance, the analysis may indicate that the game player chose a sword more frequently than other weapons in battles. In such a case, the image of the sword may be transfigured to show higher wear and tear than other weapons. Subsequently, each frame in a scene is set up in a state 612 and rendered in a state 614. It is noted that the process may use a rendering algorithm that is not programmed into the game.
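One way to picture the transfiguration in state 610 is to map each element's usage share to a discrete wear level. The sketch below is an assumption-laden illustration: the linear bucketing, the number of levels, and the `worn-N` shading names are all hypothetical, as the specification does not say how the level of wear is computed.

```python
from typing import Dict, Iterable


def wear_level(usage_share: float, levels: int = 3) -> int:
    """Map a usage share in [0, 1] to a wear level in 0..levels."""
    if not 0.0 <= usage_share <= 1.0:
        raise ValueError("usage_share must be between 0 and 1")
    # Linear bucketing (an assumption); a share of 1.0 is clamped to the top.
    return min(levels, int(usage_share * (levels + 1)))


def transfigure(elements: Iterable[str],
                usage: Dict[str, float]) -> Dict[str, str]:
    """Pick a hypothetical shading variant for each element by its usage."""
    return {
        name: "worn-" + str(wear_level(usage.get(name, 0.0)))
        for name in elements
    }


shadings = transfigure(["sword", "axe", "bow"], {"sword": 0.75, "axe": 0.25})
```

Elements that were never used fall back to level 0, so an unused weapon keeps its original appearance.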

It is noted that the non-game pre-production items 110 (shown in FIG. 4) can be incorporated into the frame. For instance, an advertisement content 420 may be included in the frame. For another instance, a digital portrait of the game player stored in the custom art 422 may be included in the frame such that the portrait appears as the face image of the cartoon character. For yet another instance, a sword slightly different from the sword shown in the game play may be used in the frame. For still another instance, facial expressions of the cartoon characters and dialogues different from those in the game play may be used in the frame.

A determination is made as to whether rendering a scene has been completed in a state 616, where rendering a scene refers to taking images of all the frames in the scene. Depending on the type of the preview material, a few frames may be rendered in each scene or a sequence of frames may be rendered to create an animation of each scene. Upon a negative answer to the decision diamond 616, the process proceeds to the state 612. Otherwise, the process proceeds to a state 618.

In the state 618, a determination is made as to whether rendering all of the scenes in the scene sequence has been completed. Upon a negative answer to the decision diamond 618, the process proceeds to a state 620 to select another scene. Subsequently, the process proceeds to the state 612. If the answer to the decision diamond 618 is positive, the process proceeds to a state 622. In the state 622, the rendered frames are combined to form the preview material and the preview material is displayed to a customer. As an option, sound may be added to the preview material in a state 623, where the sound includes one or more of the voices 414, sound tracks 416, and sound effects 418. Then, the customer decides whether to buy a personalized animation related to the preview material in a state 624. The personalized animation may include a computer animation of the entire scene sequence generated in the state 608. Upon a negative answer to the decision diamond 624, the process proceeds to a state 626. In the state 626, a determination is made as to whether the player wants to replay the game. Upon an affirmative answer to the decision diamond 626, the process proceeds to the state 602 so that the game player can replay the game. If the answer to the decision diamond 626 is negative, the process may proceed to an optional state 630.
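The nested scene and frame loop of states 612 through 622 can be sketched as two loops. The function name and the string placeholders for frames are assumptions made for illustration; real frame setup and rendering are outside the scope of this sketch.

```python
from typing import List, Sequence


def render_preview(scene_sequence: Sequence[str],
                   frames_per_scene: int) -> List[str]:
    """Render every frame of every scene, then return them combined."""
    rendered: List[str] = []
    for scene in scene_sequence:               # decision 618 / state 620
        for index in range(frames_per_scene):  # decision 616
            frame = scene + "#" + str(index)   # state 612: set up the frame
            rendered.append("rendered(" + frame + ")")  # state 614: render
    return rendered                            # state 622: combined frames


preview = render_preview(["ambush", "battle"], 2)
```

Passing a small `frames_per_scene` corresponds to preview material made of a few discrete frames, while a larger value corresponds to an animation of each scene.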

If the customer wants to buy a personalized animation in the state 624, the process proceeds to a state 628. In the state 628, the personalized animation is completed and sent to the customer. If the personalized animation includes animations of the entire scenes while the preview material includes only discrete frames or animations of selected scenes of the scene sequence, the states 606-610 and 612-620 may be repeated to make a complete animation such that the entire scene sequence is animated. Next, the process may proceed to the optional state 630. In the state 630, the game player is asked whether he or she wants to pre-register. The pre-registration information is stored in the gamer information 113 (shown in FIG. 1). Then, the process ends in a state 632. It is noted that the personalized computer animation can be generated in real time.

When more than one game player concurrently plays the same game on a game device or on game devices connected to each other via the network 118, personalized computer animations that contain all or part of the players, with perspectives from non-player objects and from each player, are generated. For example, a touchdown scene of a computer football game may be included in multiple personalized computer animations. In one animation, the scene may be generated with the perspective from the defense. In another animation, the scene may be generated with the perspective from the offense. In yet another animation, the scene may be generated with the perspective from a spectator.
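As a rough sketch of the multi-perspective case, the same scene can be keyed by each viewpoint. The function name, the default `"spectator"` view, and the descriptive strings are hypothetical; they stand in for camera placement and rendering choices that the specification leaves open.

```python
from typing import Dict, Sequence


def animations_by_perspective(
    scene: str,
    players: Sequence[str],
    non_player_views: Sequence[str] = ("spectator",),
) -> Dict[str, str]:
    """Produce one animation description per player and non-player view."""
    views = list(players) + list(non_player_views)
    return {view: scene + " as seen by " + view for view in views}


variants = animations_by_perspective("touchdown", ["offense", "defense"])
```

Each entry in `variants` corresponds to one personalized animation of the shared scene.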

FIG. 7 shows exemplary in-game pre-production elements M1-M12 of a computer game. For the purpose of illustration, only twelve elements M1-M12 are shown in FIG. 7. Also, for brevity, only scenes related to a battle between the monster M1 and the player character M2 will be described in the present document. In the game, the player character M2 may select one of the weapons M3-M7 in the battle with the monster M1 at a temple M12 and pick up a cloak of invisibility M8 in the box M11 located on the table M10. It is noted that both the non-game and in-game pre-production items may be generated prior to game plays and stored in the storage 104 (shown in FIG. 1).

FIG. 8 shows two exemplary sets of game play data 802, 804 recorded during two different game plays of the computer game including the elements M1-M12 depicted in FIG. 7. As depicted, each set of game play data includes a log of events that can be used to create a scenario or story 401. Each row of the game play data shows the time when an action of the game player is taken, the reaction of the game in response to the action, and the location where the action is taken.

Based on each game play data, say 802, the animation engine 102 determines scenes and generates a sequence of scenes to be included in a personalized computer animation. For instance, three scenes may be generated as illustrated in FIG. 9. FIG. 9 shows frames 906, 908, and 910 included in a personalized animation that might be generated by the personalized animation generation engine 102, where the three frames are selected from the three scenes of the personalized animation. In the first scene including the frame 906, the player character M2 hides on top of the temple M12 near the monster M1, then attacks the unsuspecting monster with a sword N2. Using the game play data in 802, necessary elements to form the first scene, such as models, layout of a location, camera placements at the location, camera movement at the location, motion of models during battle, shading parameters for appearance of models, light source parameters and placements at the location, visual effects, and sounds used in the battle, are selected from pre-production elements in 106.
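The specification does not prescribe how scenes are derived from the log. By way of illustration only, one simple possibility is to start a new scene whenever the location changes, as sketched below. The rule, the function name `split_into_scenes`, and the sample events are all assumptions made for this sketch.

```python
from itertools import groupby
from typing import List, Tuple

# Each event is (time, action, location); values are illustrative.
Event = Tuple[float, str, str]


def split_into_scenes(log: List[Event]) -> List[List[Event]]:
    """Group time-ordered events into scenes, one per run of the same location."""
    ordered = sorted(log, key=lambda e: e[0])
    return [list(group) for _, group in groupby(ordered, key=lambda e: e[2])]


log = [
    (1.0, "hide", "temple"),
    (2.0, "attack", "temple"),
    (3.0, "pick up cloak", "table"),
    (4.0, "exit", "temple"),
]
scenes = split_into_scenes(log)
```

Returning to a location after leaving it opens a new scene under this rule, so the sample log yields three scenes.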

As depicted in the game play data 802, the player character M2 uses the sword M4. If the analysis of the game play data shows the sword M4 is used frequently by the game player throughout the game play, the animation engine 102 may select a non-game pre-production element N2 in place of the sword M4 in the personalized animation, thereby reinforcing the personal choice made by the game player. An advertisement content 912, which is another non-game pre-production item and selected from the advertisements 420, may be superimposed on the image of the temple M12.
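By way of illustration only, the frequency-based substitution described above might be sketched as follows. The mapping `NON_GAME_COUNTERPART`, the function name `choose_element`, and the 50% threshold are assumptions made for this sketch; the specification does not state how "frequently" is quantified.

```python
from collections import Counter
from typing import Dict, List

# Hypothetical mapping from an in-game element to its non-game counterpart.
NON_GAME_COUNTERPART: Dict[str, str] = {"M4": "N2"}


def choose_element(used_items: List[str], item: str,
                   min_share: float = 0.5) -> str:
    """Return the non-game counterpart when `item` is used frequently.

    `min_share` is an assumed threshold on the item's share of all uses.
    """
    counts = Counter(used_items)
    total = sum(counts.values())
    share = counts[item] / total if total else 0.0
    if share >= min_share and item in NON_GAME_COUNTERPART:
        return NON_GAME_COUNTERPART[item]
    return item


# The sword M4 accounts for three of four recorded weapon uses.
usage = ["M4", "M4", "M7", "M4"]
selected = choose_element(usage, "M4")
```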

In the second scene, the player character M2 fights against the monster M1 as depicted in the frame 908. All or part of the selected elements in the frame 908 may be transfigured for further personalization using the analysis of the game play data 802. For example, if the analysis indicates frequent use of the sword M4 by a player, additional shadings may be applied to the sword N2 to show wear and tear thereof. The level of wear and tear to show is determined by the analysis, and a non-game shading element to alter visual appearance of the sword N2 is accordingly pulled from the shading 412 and applied.
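By way of illustration only, the mapping from usage frequency to a level of wear and tear might be sketched as follows. The function name `wear_level`, the cap of 100 uses, the four levels, and the preset names are assumptions made for this sketch.

```python
# Hypothetical shading presets, ordered from least to most worn.
SHADING_PRESETS = ["pristine", "light wear", "moderate wear", "heavy wear"]


def wear_level(use_count: int, max_uses: int = 100, levels: int = 4) -> int:
    """Map a usage count to a discrete wear level in 0..levels-1."""
    if use_count <= 0:
        return 0
    fraction = min(use_count, max_uses) / max_uses
    return min(levels - 1, int(fraction * levels))


def shading_for(use_count: int) -> str:
    """Pick the shading preset to apply for a given usage count."""
    return SHADING_PRESETS[wear_level(use_count, levels=len(SHADING_PRESETS))]
```

Under these assumed parameters, a sword used 50 times would receive the "moderate wear" shading.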

In the third scene, the monster M1 is killed and the player character M2 picks up the cloak of invisibility M8. An exemplary non-game pre-production element N4 may be included in the frame 910. N4 is a computer model containing an image of a layout of the dungeon with a safe exit for the player character M2 marked with x. The layout N4 is not seen during game plays or replays. With the layout, a viewer of the third scene can avoid dangers in future game plays if the viewer chooses to, thereby enhancing game play experiences. A non-game pre-production item, such as an advertisement 916 of a soft drink bottle, may be included in the frame 910. Also, another advertisement, such as a trademark 914, may be displayed on a costume of the player character M2.

Based on the game play data 804, the animation engine 102 may determine scenes and generate another sequence of scenes different from those based on the game play data 802. For instance, two scenes may be generated as illustrated in FIG. 10. FIG. 10 shows two frames 1002 and 1004 in a personalized animation that might be generated by the personalized animation generation engine 102, where the two frames are selected from the two scenes in the personalized animation. The game data in 804 shows that a player character M2 used the bow M7 and arrows M5 to kill the same monster M1.

In the first frame 1002, the same location as in the frame 906 may be used to show an initial attack of the player character M2. A camera may be moved in closer to the top of the temple M12 in the frame 1002 than in the frame 906, i.e., the frame 1002 is shown through a different camera from the one used in the frame 906, even though the same location as in the frame 906 is used in the frame 1002.

In the second frame 1004, the monster M1 falls to death. Then, the player character M2 picks up the cloak of invisibility M8. The analysis of the game play data 804 may yield statistical data that shows battle encounter counts with monsters and percentage of winning the battles. Using the analysis, additional shading selected from the shading 412 may be applied to the bow M7, which is an in-game pre-production element, to show different appearances of wear and tear, for instance.
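By way of illustration only, the statistical data mentioned above (battle encounter count and winning percentage) might be computed as in the following sketch. The function name `battle_stats` and the boolean encoding of battle outcomes are assumptions made for this sketch.

```python
from typing import List, Tuple


def battle_stats(outcomes: List[bool]) -> Tuple[int, float]:
    """Return (encounter count, winning percentage) for a list of outcomes.

    Each entry is True for a battle won and False for a battle lost.
    """
    count = len(outcomes)
    wins = sum(outcomes)
    win_pct = 100.0 * wins / count if count else 0.0
    return count, win_pct


# Four encounters, three of them won.
encounters, win_pct = battle_stats([True, False, True, True])
```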

Based on the analysis of game play data 804, such as the time spent to complete the battle between the monster M1 and the player character M2, a game play grade may be determined. In the case of a high grade, a non-game pre-production element, such as flames (not shown in FIG. 7) on arrows M5, may be pulled from the pre-production items 106 and applied in addition to or in replacement of the in-game special effect elements when the bow M7 is used. It is noted that other non-game pre-production elements, such as the layout of the dungeon N4, may be included in the frame.
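By way of illustration only, grading a battle by its duration and adding a non-game effect for a high grade might be sketched as follows. The function names, the 30-second and 90-second thresholds, and the effect labels are assumptions made for this sketch; the specification gives no numeric criteria.

```python
from typing import List


def battle_grade(duration_s: float) -> str:
    """Grade a battle by the time spent to complete it (assumed thresholds)."""
    if duration_s <= 30:
        return "high"
    if duration_s <= 90:
        return "medium"
    return "low"


def effects_for(grade: str, in_game_effects: List[str]) -> List[str]:
    """Add a non-game effect to the in-game effects when the grade is high."""
    effects = list(in_game_effects)
    if grade == "high":
        effects.append("flames on arrows M5")  # non-game element, illustrative
    return effects


effects = effects_for(battle_grade(25.0), ["arrow trail"])
```

This sketch covers only the "in addition to" case; replacing the in-game effects would return the non-game effect alone.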

It is noted that the frames 906, 908, 910, 1002, and 1004 in FIGS. 9 and 10 show a few exemplary non-game pre-production items of a personalized computer animation. However, it should be apparent to those of ordinary skill that other suitable non-game pre-production items can be included in the frames without deviating from the scope of the present invention. For instance, various audio contents selected from the voices 414, sound tracks 416, and sound effects 418 may be included to enhance theatrical effects. For another instance, advertisements may be embedded as a part of an animation using virtual billboards in the animation. For yet another instance, advertisement contents may be added in a similar manner to television commercials, such as in front of, in the middle of, and/or at the end of the personalized animation. For a further instance, a hypertext link may be embedded in the frame so that a customer may visit a web site by clicking the hypertext link.

It is also noted that the non-game pre-production items included in the personalized computer animation are not seen by the game player during the game plays. However, if needed, personalized computer animations can include only in-game pre-production items. Also, the animation engine 102 may limit the level of audio and visual quality of the personalized animation to that of the game software, or generate personalized animations with enhanced audio and visual quality level to improve theatrical effects.

As depicted in FIGS. 9 and 10, two different scenarios are formed based on two different game play data. The animation engine 102 respectively generates two distinct personalized computer animations for the two scenarios, where each personalized computer animation is produced with the selected and transfigured pre-production elements through a rendering process. It is noted that different art designs 402 may be used to prepare non-game pre-production items to produce personalized animations that are distinct in art style based on the same game play data. The non-game pre-production items in the personalized computer animation provide viewers with further gratification beyond the scope of replay of saved game data in the prior art.

As the preview material of a personalized computer animation, only the frames in FIG. 9 or FIG. 10 may be sent to the game player. As a variation, the preview material may include only a portion of each scenario, such as the third scene of FIG. 9 for instance. As another variation, the preview material may include the entire scenes for each game play data. The contents and length of the preview material may be determined by various factors, such as type of game, custom arts, and advertisements included therein. If the game player wants to buy the personalized computer animation, the animation engine generates entire scenes, merges them to generate a complete personalized computer animation, and sends the completed animation to the game player.
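By way of illustration only, the three preview variations described above might be sketched as follows. The function name `preview_material`, the mode names, and the frame indices are assumptions made for this sketch.

```python
from typing import Dict, List

# A scene sequence maps a scene name to its ordered frame indices.
SceneSequence = Dict[str, List[int]]


def preview_material(scenes: SceneSequence, mode: str) -> List[int]:
    """Select frames for a preview under one of three hypothetical modes."""
    if mode == "key_frames":      # one representative frame per scene
        return [frames[0] for frames in scenes.values() if frames]
    if mode == "last_scene":      # only a portion of the scenario
        return list(list(scenes.values())[-1]) if scenes else []
    if mode == "full":            # the entire scenes
        return [f for frames in scenes.values() for f in frames]
    raise ValueError(f"unknown preview mode: {mode}")


scene_sequence = {"scene 1": [0, 1, 2], "scene 2": [3, 4], "scene 3": [5, 6, 7]}
key_frames = preview_material(scene_sequence, "key_frames")
```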

It is noted that the game play data may be recorded during game plays of a board game. As an example, a group of people may play the Dungeons and Dragons role-playing board game. In such a case, a record keeper, who may be the dungeon master, may record the game play to generate game play data. In this case, the game play data may include a list of, inter alia, descriptions of situations which are set by the dungeon master, selections and results of dice rolls made by players in each situation, etc. The custom animation platform 101 may create a personalized computer animation using the game play data in a manner similar to that described in conjunction with FIGS. 4-10.
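By way of illustration only, a record kept for one turn of such a board game might be represented as in the following sketch. The class name `BoardGameTurn`, its fields, and the sample values are assumptions made for this sketch.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class BoardGameTurn:
    """One recorded turn of a board game (hypothetical field names)."""
    situation: str                  # situation set by the dungeon master
    player: str                     # player who acts in the situation
    selection: str                  # selection made by the player
    dice_rolls: List[int] = field(default_factory=list)  # results of dice rolls

    def total(self) -> int:
        """Sum of the dice results recorded for this turn."""
        return sum(self.dice_rolls)


# Illustrative record only.
turn = BoardGameTurn("monster blocks the temple door", "player 1",
                     "attack with sword", [4, 6])
```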

FIG. 11 shows an embodiment of a computer 1100 of a type that might be employed as the custom animation platform 101 in accordance with the present invention. The computer 1100 may have fewer or more components to meet the needs of a particular application. As shown in FIG. 11, the computer may include one or more processors 1102 including CPUs. The computer may have one or more buses 1106 coupling its various components. The computer may also include one or more input devices 1104 (e.g., keyboard, mouse, joystick), a computer-readable storage medium (CRSM) 1100, a CRSM reader 1108 (e.g., floppy drive, CD-ROM drive), a communication interface 1112 (e.g., network adapter, modem) for coupling to the network 118, one or more data storage devices 1116 (e.g., hard disk drive, optical drive, FLASH memory), a main memory 1126 (e.g., RAM) containing software embodiments, such as the animation engine 102, and one or more monitors 1132. Various software may be stored in the computer-readable storage medium 1100 for reading into a data storage device 1116 or main memory 1126.

While the invention has been described in detail with reference to specific embodiments thereof, it will be apparent to those skilled in the art that various changes and modifications can be made, and equivalents employed, without departing from the scope of the appended claims.

Claims

- A method for generating a computer animation of a game, comprising: receiving game play data of the game; determining at least one scene based on the game play data; preparing at least one non-game pre-production element of the game that cannot be displayed to a player of the game during play of the game and corresponds to a counterpart in-game pre-production item displayed to the player during play of the game, wherein the game play data includes information of frequency of usage of the counterpart in-game pre-production item during play of the game; performing an statistical analysis of the information of frequency of usage; transfiguring the non-game pre-production element based on the statistical analysis; setting up one or more frames in the scene, at least one of the frames including the transfigured non-game pre-production element of the game; rendering the frames; and combining the rendered frames to generate the computer animation.

- A method as recited in claim 1, wherein the non-game pre-production element is generated by modifying an in-game pre-production element.

- A method as recited in claim 1, further comprising: adding a sound to the computer animation.

- A method as recited in claim 3, wherein the sound includes a non-game pre-production audio content that is different from a corresponding in-game pre-production audio content of the game in at least one of pitch, periodicity, duration, amplitude, and harmonic profile.

- A method as recited in claim 3, wherein the sound includes a text-to-speech.

- A method as recited in claim 1, wherein the non-game pre-production element includes an image that is different from a corresponding image of an in-game pre-production element of the game in at least one of form, shape, and color.

- A method as recited in claim 1, wherein the frames include at least one selected from the group consisting of an advertisement content, custom art, a hint of a game, a secret of a game, and a preview of a game.

- A method as recited in claim 7, wherein the advertisement content includes at least one selected from the group consisting of a product placement, a virtual billboard, a trademark placement, a hypertext link, and an animation.

- A method as recited in claim 1, wherein the non-game pre-production element includes a three-dimensional image generated by modifying a two-dimensional image of an in-game pre-production element of the game.

- A method as recited in claim 1, wherein the computer animation is rendered by use of a rendering algorithm different from a rendering algorithm used to generate the animation of the game.

- A method as recited in claim 1, wherein the non-game pre-production element includes a three-dimensional image that has a higher polygon count than a corresponding three-dimensional image of an in-game pre-production element of the game.

- A method as recited in claim 1, wherein the game is one selected from the group consisting of a computer game and a board game.

- A method as recited in claim 1, further comprising: preparing game information of the game; and generating the non-game pre-production element.

- A method as recited in claim 13, wherein the game information includes at least one scenario of the game and wherein the step of preparing game information includes: performing a reverse engineering to generate the scenario.

- A method as recited in claim 1, further comprising: receiving gamer information of a gamer who plays the game.

- A method as recited in claim 1, wherein the game play data includes a log of events.

- A non-transitory computer readable medium carrying one or more sequences of pattern data for generating a computer animation of a game, wherein execution of one or more sequences of pattern data by one or more processors causes the one or more processors to perform the steps of: receiving game play data of the game; determining at least one scene based on the game play data; preparing at least one non-game pre-production element of the game that cannot be displayed to a player of the game during play of the game and corresponds to a counterpart in-game pre-production item displayed to the player during play of the game, wherein the game play data includes information of frequency of usage of the counterpart in-game pre-production item during play of the game; performing an statistical analysis of the information of frequency of usage; transfiguring the non-game pre-production element based on the statistical analysis; setting up one or more frames in the scene, at least one of the frames including the transfigured non-game pre-production element of the game; rendering the frames; and combining the rendered frames to generate the computer animation.

- A non-transitory computer readable medium as recited in claim 17, wherein execution of one or more sequences of pattern data by one or more processors causes the one or more processors to perform the additional step of: adding a sound to the computer animation.

- A non-transitory computer readable medium as recited in claim 17, wherein execution of one or more sequences of pattern data by one or more processors causes the one or more processors to perform the additional step of: storing the game play data and the non-game pre-production element in a storage device.

- A non-transitory computer readable medium as recited in claim 17, wherein the game play data includes a log of events.
