U.S. Pat. No. 8,430,750

VIDEO GAMING DEVICE WITH IMAGE IDENTIFICATION

Assignee: Broadcom Corporation

Issue Date: November 30, 2009


Abstract

A video gaming device includes a processing module, a graphics processing module, and a video gaming object interface module. The processing module interprets a digital image(s) of a player to determine an identity of the player. When the identity of the player is determined, the processing module retrieves a user profile and generates video gaming menu data in accordance with at least one data element of the user profile. The graphics processing module renders a display image based on the video gaming menu. The video gaming object interface module receives a wireless menu selection signal and converts it into a menu selection signal.

Description

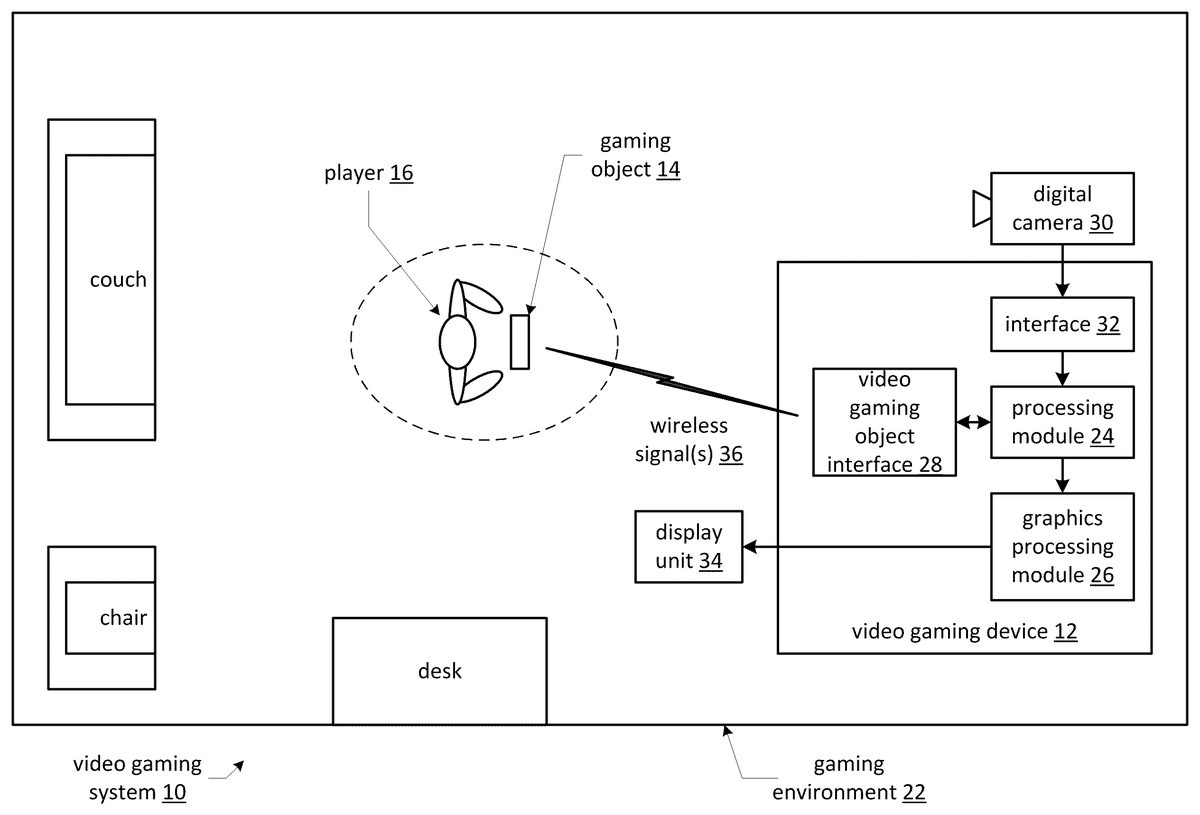


DETAILED DESCRIPTION OF THE INVENTION

FIG. 1 is a schematic block diagram of an overhead view of an embodiment of a video gaming system 10 that includes a video gaming device 12 and at least one gaming object 14 associated with a video game player 16. The video gaming system 10 is within a gaming environment 22, which may be a room, a portion of a room, and/or any other space where the gaming object 14 and the video gaming device 12 can be proximally co-located (e.g., an airport terminal, a bus, an airplane, etc.).

The video gaming device 12 (which may be a standalone device or a video game console, or may be incorporated in a personal computer, laptop computer, handheld computing device, etc.) includes a processing module 24, a graphics processing module 26, a video gaming object interface 28, and an interface 32. The interface 32 couples the video gaming device 12 to at least one digital camera 30, which may alternatively be included in the video gaming device 12. The interface 32 may be a universal serial bus (USB) interface, a FireWire interface, a Bluetooth interface, a WiFi interface, etc.

The processing module 24 and the graphics processing module 26 may be separate processing modules or a shared processing module. Such a processing module may be a single processing device or a plurality of processing devices. Such a processing device may be a microprocessor, micro-controller, digital signal processor, microcomputer, central processing unit, field programmable gate array, programmable logic device, state machine, logic circuitry, analog circuitry, digital circuitry, and/or any device that manipulates signals (analog and/or digital) based on hard coding of the circuitry and/or operational instructions. The processing module may have an associated memory and/or memory element, which may be a single memory device, a plurality of memory devices, and/or embedded circuitry of the processing module. Such a memory device may be a read-only memory, random access memory, volatile memory, non-volatile memory, static memory, dynamic memory, flash memory, cache memory, and/or any device that stores digital information. Note that when the processing module implements one or more of its functions via a state machine, analog circuitry, digital circuitry, and/or logic circuitry, the memory and/or memory element storing the corresponding operational instructions may be embedded within, or external to, the circuitry comprising the state machine, analog circuitry, digital circuitry, and/or logic circuitry. Further note that the memory element stores, and the processing module executes, hard-coded and/or operational instructions corresponding to at least some of the steps and/or functions illustrated in FIGS. 1-10.

In general, the graphics processing module 26 renders data into pixel data (e.g., a display image) for display on a display unit 34 (e.g., monitor, television, LCD display panel, etc.). For instance, the graphics processing module 26 may perform calculations for three-dimensional graphics, geometric calculations for rotation and for translation of vertices into different coordinate systems, programmable shading for manipulating vertices and textures, anti-aliasing, motion compensation, color space processing, digital video playback, etc.
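As an illustration of the geometric calculations mentioned above, the following minimal Python sketch (not taken from the patent) rotates 2-D vertices about the origin and then translates them into another coordinate system. A real graphics processing module would typically work in 3-D with homogeneous 4x4 matrices and perform this in hardware; the function name `rotate_translate` is hypothetical.

```python
import math

def rotate_translate(vertices, angle_deg, dx, dy):
    """Rotate 2-D vertices about the origin by angle_deg degrees,
    then translate them by (dx, dy) into a new coordinate system."""
    theta = math.radians(angle_deg)
    c, s = math.cos(theta), math.sin(theta)
    out = []
    for x, y in vertices:
        # Apply the rotation matrix [[c, -s], [s, c]] to the column vector (x, y).
        xr = c * x - s * y
        yr = s * x + c * y
        # Shift the rotated point by the translation offset.
        out.append((xr + dx, yr + dy))
    return out

square = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
print(rotate_translate(square, 90, 10.0, 0.0))
```

Rotating first and translating second matters: reversing the order would rotate the translated square about the origin and move it elsewhere.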

In an example of operation, the digital camera 30 takes at least one digital picture of the video game player 16. This may occur when the player 16 enables the video gaming device 12, when the player 16 provides a command via the gaming object 14, as a result of the digital camera periodically capturing digital images that are analyzed by the video gaming device 12, and/or as a result of the digital camera continuously capturing digital images (e.g., in a video recorder mode) that are analyzed by the video gaming device 12.

As the digital camera 30 captures digital images, it provides them to the processing module 24 via the interface 32. The images may be forwarded as they are captured, or they may be stored and then forwarded to the processing module 24. The processing module 24 interprets the digital images (or at least one of them) to detect whether a player 16 is present in the digital image and, if so, attempts to determine the identity of the video game player 16.

When the processing module 24 is able to identify the video game player, the processing module 24 retrieves a user profile of the video game player based on his or her identity. The user profile includes various user data such as authentication data, access privileges, product registration data, and/or personal preference data. The processing module 24 then generates video gaming menu data in accordance with at least one data element of the user profile. For example, based on the player's personal preferences, the processing module 24 creates a menu that includes system settings, favorite video games, etc.
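The menu-generation step can be sketched in Python as follows. This is a minimal illustration under assumptions, not the patented implementation: `PreferenceData` and `generate_menu_data` are hypothetical names, and the profile is reduced to a name, a list of favorite games, and one display flag.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class PreferenceData:
    # Hypothetical stand-ins for the "personal preference data" in a user profile.
    name: str
    favorite_games: List[str] = field(default_factory=list)
    show_system_settings: bool = True

def generate_menu_data(prefs: PreferenceData) -> List[str]:
    """Build an ordered list of menu entries from profile data elements."""
    menu = [f"Welcome, {prefs.name}"]
    # Put the player's favorite games first so they are easiest to reach.
    menu += [f"Play {game}" for game in prefs.favorite_games]
    if prefs.show_system_settings:
        menu.append("System settings")
    return menu

print(generate_menu_data(PreferenceData("Alex", ["Racer", "Chess"])))
# ['Welcome, Alex', 'Play Racer', 'Play Chess', 'System settings']
```

The resulting list would then be handed to the graphics processing module for rendering.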

The processing module 24 provides the video gaming menu data to the graphics processing module 26, which renders a display image based on the video gaming menu. The rendering may include two-dimensional processing, three-dimensional processing, and/or any other video graphics processing function.

If the player 16 desires to engage the video gaming device 12, he or she may provide a menu selection via the gaming object 14. In this instance, the gaming object 14 interprets the input it receives from the player 16 (which may be received via a keypad, one or more electro-mechanical switches or buttons, voice recognition, etc.) and creates a corresponding wireless menu selection signal. The video gaming object interface module 28 receives the wireless menu selection signal and converts it into a menu selection signal.

Alternatively, the gaming object 14 may direct a wireless signal at a graphical representation of a menu item. The video gaming object interface 28 detects the directed wireless signal as the wireless menu selection signal and determines which of the menu items the signal indicates. The video gaming object interface 28 then converts the wireless menu selection signal into a menu selection signal, e.g., by generating a signal indicating the selected menu item.
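Determining which menu item a directed signal indicates amounts to a hit test. The Python sketch below assumes, as the patent does not specify, that the interface can estimate the screen pixel where the signal lands and that each rendered menu item has a known bounding box; `MenuItem` and `resolve_pointed_item` are hypothetical names.

```python
from typing import List, NamedTuple, Optional

class MenuItem(NamedTuple):
    label: str
    x0: int  # left edge, screen pixels
    y0: int  # top edge
    x1: int  # right edge (exclusive)
    y1: int  # bottom edge (exclusive)

def resolve_pointed_item(items: List[MenuItem], px: int, py: int) -> Optional[str]:
    """Return the label of the menu item whose on-screen box contains (px, py),
    or None if the directed signal does not land on any item."""
    for item in items:
        if item.x0 <= px < item.x1 and item.y0 <= py < item.y1:
            return item.label
    return None

menu = [
    MenuItem("Available video games", 100, 100, 500, 160),
    MenuItem("System settings", 100, 180, 500, 240),
]
print(resolve_pointed_item(menu, 250, 200))  # System settings
print(resolve_pointed_item(menu, 50, 50))    # None
```

The returned label plays the role of the converted "menu selection signal".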

In general, the gaming object 14 and the video gaming object interface module 28 communicate signals 36 (e.g., menu selection, video game inputs, video game feedback, video game control, user preference inputs, etc.) wirelessly. Accordingly, the gaming object 14 and the interface module 28 each include wireless communication transceivers, such as infrared transceivers, radio frequency transceivers, millimeter wave transceivers, acoustic wave transceivers or transducers, and/or a combination thereof.

FIG. 2 is a diagram of an example that expands on the video gaming system of FIG. 1. In this example, the digital camera 30 captures one or more digital images 38 of the player 16. The image 38 may be a full-body image or an image of a portion of the body (e.g., upper torso, head shot, etc.). The processing module 24 receives the digital image(s) 38 via the interface 32 and performs an image interpretation function 52 on them to identify the player. The image interpretation function 52 may include analyzing the digital image(s) to isolate a face of the video game player; once the face is isolated (e.g., the pixel coordinates of the player's face are determined), the processing module performs a facial recognition algorithm on the face to identify the video game player.
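The patent does not specify a facial recognition algorithm. One common approach, shown here only as an assumed stand-in, is to reduce each isolated face to a fixed-length feature vector (the extractor is not shown) and match it against enrolled players by nearest-neighbor distance with a rejection threshold. The names and the 0.6 threshold below are hypothetical.

```python
import math
from typing import Dict, List, Optional

def identify_player(face_features: List[float],
                    enrolled: Dict[str, List[float]],
                    threshold: float = 0.6) -> Optional[str]:
    """Nearest-neighbor match of an isolated face's feature vector against
    enrolled players; returns None when even the best match is too far away."""
    best_id, best_dist = None, math.inf
    for player_id, stored in enrolled.items():
        d = math.dist(face_features, stored)  # Euclidean distance (Python 3.8+)
        if d < best_dist:
            best_id, best_dist = player_id, d
    return best_id if best_dist <= threshold else None

enrolled = {"alex": [0.1, 0.9, 0.3], "sam": [0.8, 0.2, 0.5]}
print(identify_player([0.12, 0.88, 0.31], enrolled))  # alex
print(identify_player([5.0, 5.0, 5.0], enrolled))     # None
```

Returning None rather than the closest match is what lets the device fall back to the "cannot identify" branch described with reference to FIG. 8.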

Once the player is identified, the processing module 24 retrieves the user profile 40 from memory 50 (which may be flash memory, random access memory, etc.). The user profile 40 includes authentication data 42, access privileges 44, product registration data 46, and personal preference data 48. The user profile 40 may further include a digital image 38 of the user's face to facilitate the facial recognition function.

The authentication data 42 may include information such as a password, user ID, device ID, or other data for authenticating the player to the video game device 12 or to a service provided through the video game device 12. The video game device 12 may use the authentication data 42 to set access privileges 44 for the player with respect to at least one video game that it executes. The access privileges 44 include an indication of whether the player is a subscriber, or other authorized person, entitled to play a video game; the age of the player, to ensure that he or she meets the minimum age for an age-restricted video game; the level of privilege to play a certain video game (e.g., play only, modify caricatures, save play, delete play, control other players' game play data, etc.); etc.
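A privilege check combining the three indications above (subscriber status, minimum age, and privilege level) might look like the following Python sketch. The field names and action strings are illustrative assumptions, not taken from the patent.

```python
from dataclasses import dataclass
from typing import FrozenSet

@dataclass(frozen=True)
class AccessPrivileges:
    is_subscriber: bool
    age: int
    allowed_actions: FrozenSet[str]  # e.g. {"play", "save_play"}

def may_perform(priv: AccessPrivileges, game_min_age: int, action: str) -> bool:
    """Grant an action only if the player is a subscriber, meets the game's
    minimum age, and holds the privilege level for that action."""
    return (priv.is_subscriber
            and priv.age >= game_min_age
            and action in priv.allowed_actions)

teen = AccessPrivileges(True, 15, frozenset({"play", "save_play"}))
print(may_perform(teen, 17, "play"))       # False: below the age rating
print(may_perform(teen, 13, "save_play"))  # True
```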

The product registration data 46 corresponds to data (e.g., serial number, date of purchase, purchaser's information, extended warranty, etc.) of the video game device 12, of purchased video games, of rented video games, of gaming objects, etc. Such product registration data 46 for peripheral components (e.g., gaming objects, which include joysticks, game controllers, laser pointers, microphones, speakers, headsets, etc.) and for video game programs can be automatically supplied to the video game device 12 or to a service provider coupled thereto via a network. In this fashion, product information can be obtained each time a new game is initiated, a new component is added to the system, etc., without having to query the player for the data.

The personal preference data 48 includes security preferences or data (e.g., encryption settings, etc.), volume settings, graphics settings, experience levels, names, favorite video games, all video games in a personal library, favorite competitors, caricature selections, etc., which are either game parameters specific to a particular game or settings specific to the user's use of the video game device 12. The personal preference data 48 may also include billing information of the player to enable easy payment for online video game purchases, rentals, and/or play.
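The four groups of data in the user profile 40, plus the stored face image, map naturally onto a record type. The Python sketch below is one possible layout, assuming JSON serialization for storage in memory 50; the patent does not specify a storage format, and `StoredUserProfile`, `save`, `load`, and the sample values are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field

@dataclass
class StoredUserProfile:
    authentication: dict = field(default_factory=dict)        # e.g. user ID, device ID
    access_privileges: dict = field(default_factory=dict)     # e.g. subscriber flag, age
    product_registration: list = field(default_factory=list)  # one record per registered product
    personal_preferences: dict = field(default_factory=dict)  # e.g. volume, favorite games
    face_image_ref: str = ""  # hypothetical pointer to the stored face image

def save(profile: StoredUserProfile) -> str:
    """Serialize the profile, e.g. for writing to flash memory."""
    return json.dumps(asdict(profile))

def load(blob: str) -> StoredUserProfile:
    """Rebuild a profile from its serialized form."""
    return StoredUserProfile(**json.loads(blob))

profile = StoredUserProfile(
    authentication={"user_id": "alex01"},
    access_privileges={"subscriber": True, "age": 15},
    product_registration=[{"item": "game controller", "serial": "SN-0001"}],
    personal_preferences={"volume": 7, "favorite_games": ["Racer"]},
)
print(load(save(profile)) == profile)  # True
```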

After retrieving the user profile 40, the processing module 24 generates video gaming menu data in accordance with at least one data element of the user profile. The example of FIG. 2 continues at FIG. 3, where the processing module 24 provides video gaming menu data 64 to the graphics processing module 26. The graphics processing module 26 converts the video gaming menu data 64 into a display image 66 of the video gaming menu, which is displayed on the display unit 34.

The video gaming menu data 64, and hence the resulting menu display 54, may include a composite of the menu items that the player is most likely to want to access, or it may include a full listing of the menu items available to the player. For example, the menu may include a listing of available video games, the user profile, and/or a listing of system settings (e.g., IP address, service provider information, video graphics processing settings (e.g., true color, 16-bit color, etc.), available memory, CPU data, etc.). In this example, the menu 54 includes system settings 60, available video games 56, and view/change user profile 58, and may include other options 62 (e.g., play a DVD, activate a web browser, play an audio file, etc.).

As is further shown in this example, the player has selected the menu item of available video games 56. The example continues at FIG. 4, where the processing module generates a list of video games 70 based on information contained in the user profile 40. The graphics processing module 26 converts the data of the list of video games 70 into a display image 72 of the available video games 56, which is displayed on the display unit 34.

In this example, the player provides a wireless menu selection signal 36 to the video gaming object interface module 28, which produces a menu selection signal therefrom. The processing module 24 interprets the menu selection signal to identify selection of one of the available video games 56 to produce a selected video game (as indicated by the arrow). The processing module 24 then retrieves operational instructions of the selected video game and begins executing the operational instructions to facilitate playing of the selected video game, as shown in FIG. 5.
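The select, retrieve, and execute sequence can be sketched in Python, assuming (hypothetically) that the decoded menu selection signal is an index into the displayed game list and that each game's operational instructions are represented by a callable entry point in a library.

```python
from typing import Callable, Dict, List

def launch_selected_game(listed_games: List[str], selection_index: int,
                         game_library: Dict[str, Callable[[], str]]) -> str:
    """Map a decoded menu selection (here, an index into the displayed list)
    to a game, look up its entry point, and run it."""
    if not 0 <= selection_index < len(listed_games):
        raise ValueError("menu selection does not match a listed game")
    title = listed_games[selection_index]
    entry_point = game_library[title]  # stands in for "retrieve operational instructions"
    return entry_point()               # stands in for "execute the operational instructions"

library = {"Racer": lambda: "Racer started", "Chess": lambda: "Chess started"}
print(launch_selected_game(["Racer", "Chess"], 1, library))  # Chess started
```

Validating the index before lookup keeps a corrupted or out-of-range wireless selection from starting the wrong game.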

FIG. 5 is a diagram of another example of video gaming where the processing module 24 retrieves operational instructions 76 from memory 50 for a selected video game. The operational instructions 76 may be in source code, object code, assembly language, etc., executable by the processing module 24. In this example, as the processing module 24 executes the operational instructions 76, it generates video game play data 78, which it provides to the graphics processing module 26. The video game play data 78 may include information that enables the graphics processing module 26 to perform calculations for three-dimensional graphics, to perform geometric calculations for rotation, to translate vertices into different coordinate systems, to perform shading for manipulating vertices and textures, to perform anti-aliasing, to perform motion compensation, and/or to perform color space processing to produce the display image 80 of the video game play.

During the execution of the operational instructions 76 of the selected video game, the processing module 24 may encounter operational instructions regarding the rendering of a graphical representation of a caricature. In this instance, the example continues at FIG. 6, where the processing module 24 identifies operational instructions corresponding to rendering graphics of a caricature of the selected video game. Having identified the operational instructions, the processing module 24 alters the rendering of the graphics of the caricature based on the at least one digital image.

For example, while executing the operational instructions regarding rendering an image 87 of a caricature, the processing module 24 retrieves the digital image 86 of the player 16 and may further retrieve the caricature image data 87. During the execution of these operational instructions, the processing module alters the video game play data by using the digital image 86 of the player in place of the digital image 87 of the caricature or by combining the images 86 and 87. Further, the processing module 24 may modify the image 86 of the player to create a caricature of the player, which it uses when executing the operational instructions.
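The two alterations described above, substituting the player's image for the caricature or combining the two, can be illustrated with a simple Python sketch. It assumes the images are already aligned, equal-size grayscale pixel grids; a real system would first locate and warp the face region. Alpha blending is one assumed way to "combine" the images, and `alter_caricature` is a hypothetical name.

```python
from typing import List

Image = List[List[int]]  # grayscale pixels, 0-255, row-major

def alter_caricature(player_img: Image, caricature_img: Image,
                     mode: str = "combine", alpha: float = 0.5) -> Image:
    """Either substitute the player's image for the caricature ("replace")
    or alpha-blend the two equal-size images ("combine")."""
    if mode == "replace":
        return [row[:] for row in player_img]
    return [
        [round(alpha * p + (1 - alpha) * c) for p, c in zip(prow, crow)]
        for prow, crow in zip(player_img, caricature_img)
    ]

player = [[200, 100], [50, 0]]
cartoon = [[0, 100], [150, 250]]
print(alter_caricature(player, cartoon))             # [[100, 100], [100, 125]]
print(alter_caricature(player, cartoon, "replace"))  # [[200, 100], [50, 0]]
```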

The processing module 24 provides the altered video game play data 88 to the graphics processing module 26, which generates, therefrom, a display image 90 of the video game play. The resulting video game play image 92, which typically includes a series of images to produce a motion picture, is displayed on the display unit 34.

FIG. 7 is a diagram of another example of video gaming where the digital camera 30 captures a plurality of images 94 of the player 16. In this example, the processing module 24 receives the additional digital images 94 of the video game player as it executes operational instructions 96. The processing module 24 interprets the additional digital images 94 to identify gaming expressions (e.g., facial expressions, gestures, etc.). The processing module 24 alters the execution of the operational instructions 96 based on the gaming expressions to produce altered video game play data 98.
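Once expressions have been recognized, altering game execution can be as simple as a lookup from expression to in-game effect. In the Python sketch below, the expression labels, the effects, and the state fields are all illustrative assumptions; the patent leaves both the recognizer and the game response unspecified.

```python
from typing import Dict, List

# Hypothetical mapping from recognized gaming expressions to in-game effects.
EXPRESSION_ACTIONS: Dict[str, str] = {
    "smile": "boost_morale",
    "frown": "lower_difficulty",
    "wave": "pause",
}

def apply_expressions(game_state: Dict[str, int], expressions: List[str]) -> Dict[str, int]:
    """Return a new game state altered by expressions detected in successive images.
    Unrecognized expressions are ignored."""
    state = dict(game_state)  # copy so the caller's state is not mutated
    for expr in expressions:
        action = EXPRESSION_ACTIONS.get(expr)
        if action == "lower_difficulty":
            state["difficulty"] = max(1, state["difficulty"] - 1)
        elif action == "pause":
            state["paused"] = 1
        elif action == "boost_morale":
            state["morale"] += 1
    return state

start = {"difficulty": 3, "paused": 0, "morale": 0}
print(apply_expressions(start, ["frown", "smile", "blink"]))
# {'difficulty': 2, 'paused': 0, 'morale': 1}
```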

The processing module 24 provides the altered video game play data 98 to the graphics processing module 26, which generates, therefrom, a display image 100 of the video game play. The resulting video game play image 102, which typically includes a series of images to produce a motion picture, is displayed on the display unit 34.

FIG. 8 is a logic diagram of an embodiment of a method for video gaming that begins at step 110, where the processing module interprets at least one digital image of the video game player to determine an identity of the video game player. In an embodiment, this may be done by analyzing the at least one digital image to isolate a face of the video game player and performing a facial recognition algorithm on the face to identify the video game player.

The method continues at step 112, where the processing module determines whether it was able to identify the player. If so, the method continues at step 114, where the processing module retrieves a user profile of the video game player. An example of this was discussed with reference to FIG. 2. The method continues at step 116, where the processing module generates video gaming menu data in accordance with at least one data element of the user profile. An example of this was discussed with reference to FIG. 3.

If the processing module was not able to identify the player at step 112, the method continues at step 117, where the processing module determines whether it was able to isolate a face in the digital image(s). If not, the method continues at step 118, where the processing module generates a message instructing the video game player to reposition himself or herself. The message is provided to the graphics processing module for subsequent presentation on the display unit 34. In this instance, the images being captured by the digital camera may be processed and provided to the display unit so that the player can position himself or herself to achieve a desired digital image. Alternatively, or in addition, the processing module may generate another message instructing the digital camera to adjust its capture range to zoom in on the head of the video game player and to capture another digital image.

If, at step 117, the processing module was able to isolate a face, the method continues at step 120, where the processing module determines whether the player has a user profile. In this instance, the player has provided sufficient information to identify himself or herself, but is either new to the video game device 12 or does not have his or her digital image linked to his or her user profile. In the former case, the method continues at step 126, where the processing module obtains user data (e.g., authentication data, personal preferences, etc.). The method continues at step 128, where the processing module creates the user profile to include the user data and the at least one stored digital image.

If, at step 120, the player has a user profile that is not linked to his or her digital image, the method continues at step 122, where the processing module generates a query message regarding whether the video game player would like to establish the user profile linked to a digital image of the video game player. The message is provided to the graphics processing module, which creates a display image of the message for presentation on the display unit. When the player responds affirmatively, the method continues at step 124, where the processing module links the digital image of the player to his or her user profile. This may be done by storing a digital image of the video game player to produce at least one stored digital image and adding the stored digital image to the user profile to establish the link between the user profile and the digital image of the video game player.
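The branching at steps 112, 117, and 120 of FIG. 8 can be summarized as a small decision function. In this Python sketch the three boolean inputs are assumed to come from the recognition, face-isolation, and profile-lookup stages; `NextStep` and `fig8_next_step` are hypothetical names.

```python
from enum import Enum

class NextStep(Enum):
    SHOW_MENU = "steps 114-116: retrieve profile, generate menu data"
    ASK_REPOSITION = "step 118: ask player to reposition or zoom the camera"
    OFFER_LINK = "steps 122-124: offer to link image to existing profile"
    CREATE_PROFILE = "steps 126-128: obtain user data, create profile"

def fig8_next_step(identified: bool, face_isolated: bool, has_profile: bool) -> NextStep:
    """Decision logic of steps 112, 117, and 120 of FIG. 8."""
    if identified:                  # step 112: player recognized
        return NextStep.SHOW_MENU
    if not face_isolated:           # step 117: no usable face in the image
        return NextStep.ASK_REPOSITION
    if has_profile:                 # step 120: profile exists but is not linked
        return NextStep.OFFER_LINK
    return NextStep.CREATE_PROFILE  # step 120: new player

print(fig8_next_step(False, True, False).value)
```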

FIG. 9 is a logic diagram of another embodiment of a method for video gaming that begins at step 130, where the processing module interprets at least one of a plurality of digital images of a video game player to activate a video game. In an embodiment, the processing module may activate a video game by identifying the video game player based on the at least one of the plurality of digital images, retrieving a user profile based on the identity of the video game player, and activating the video game in accordance with the user profile.

If the game cannot be activated, the method branches from step 132 to steps 116-128 of FIG. 8 to link a digital image to a player. If the game is activated, the method continues at step 134, where the processing module executes, in accordance with video gaming input signals, operational instructions of the video game incorporating at least some of the plurality of digital images to produce video gaming data. Examples of this were discussed with reference to FIGS. 2-7.

The method continues at step 136, where the graphics processing module renders display images based on the video gaming data. Examples of this were also provided with reference to FIGS. 2-7. The method continues at step 138, where the video gaming object interface receives wireless video gaming signals and converts them into the video gaming input signals.

FIG. 10 is a logic diagram of another embodiment of a method for video gaming that begins at step 140, where the processing module interprets at least one of a plurality of digital images to identify a first video game player and a second video game player. In this instance, multiple players are located proximate to the video game device, and the processing module therefore analyzes the at least one of the plurality of digital images to isolate the face of the first and/or second video game player. The processing module then performs a facial recognition algorithm on each isolated face to identify the first and/or second video game player. Note that the digital images may be received from one or more digital cameras. For example, a first digital camera may be used to capture images of the first player and a second digital camera may be used to capture images of the second player.

The method continues at step 142, where the processing module attempts to retrieve a first user profile for the first video game player based on the identity of the first video game player and attempts to retrieve a second user profile for the second video game player based on the identity of the second video game player. The method continues at step 144, where the processing module determines whether it can activate a video game. A video game can be activated if both players can be identified by their respective digital images, both have user profiles, and either the user profiles indicate a particular game or one of the players has indicated which game to play. If the game cannot be activated, the method branches to steps 116-128 of FIG. 8 to link a digital image to a player for the first and/or second player.
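The activation test of step 144 can be sketched in Python as follows. A missing profile (None) stands for a player who was not identified or has no profile. How user profiles "indicate a particular game" is not specified in the patent; the shared-favorite rule below is purely an assumption, and `game_to_activate` is a hypothetical name.

```python
from typing import List, Optional

def game_to_activate(profile1: Optional[dict], profile2: Optional[dict],
                     chosen_game: Optional[str] = None) -> Optional[str]:
    """Step 144 sketch: return the game to activate, or None when the method
    should branch to steps 116-128 of FIG. 8."""
    if profile1 is None or profile2 is None:
        return None  # a player was not identified or has no user profile
    if chosen_game:
        return chosen_game  # one of the players indicated which game to play
    # Assumed rule: the profiles "indicate a particular game" if they share a favorite.
    shared: List[str] = sorted(set(profile1.get("favorite_games", []))
                               & set(profile2.get("favorite_games", [])))
    return shared[0] if shared else None

p1 = {"favorite_games": ["Chess", "Racer"]}
p2 = {"favorite_games": ["Racer", "Golf"]}
print(game_to_activate(p1, p2))             # Racer
print(game_to_activate(p1, None, "Chess"))  # None
```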

When the game can be activated, the method continues at step 146, where the processing module executes, in accordance with first and second video gaming input signals, operational instructions of the video game incorporating at least some of the plurality of digital images to produce video gaming data. Examples of executing operational instructions for a single player have been provided with reference to FIGS. 2-7. These examples are also applicable to multiple-player processing of video game operational instructions.

For example, the processing module may execute the operational instructions by identifying a set of operational instructions corresponding to rendering graphics of a first or second caricature of the video game. When the set of operational instructions corresponding to rendering the graphics of the first or second caricature is to be executed, the processing module alters the rendering of the graphics of the first or second caricature based on one or more of the plurality of digital images. For instance, the graphics of the first caricature are altered based on an image of the first video game player in the one or more of the plurality of digital images, and the graphics of the second caricature are altered based on an image of the second video game player in the one or more of the plurality of digital images.

As another example, the processing module may execute the operational instructions by interpreting the plurality of digital images to identify gaming expressions of at least one of the first and second video game players. The processing module then alters the execution of the operational instructions based on the gaming expressions.

The method continues at step 148, where the graphics processing module renders display images based on the video gaming data. The method continues at step 150, where the video gaming object interface module receives first and second wireless video gaming signals and converts them into the first and second video gaming input signals, where the first video gaming input signals correspond to the first video game player and the second video gaming input signals correspond to the second video game player.
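Separating the received signals into first and second video gaming input signals requires knowing which gaming object sent each one. This Python sketch assumes, hypothetically, that every decoded signal carries an identifier of its sending gaming object and that each object has been associated with one player; the signal format and names are illustrative only.

```python
from typing import Dict, List, Tuple

def route_inputs(raw_signals: List[Tuple[str, str]],
                 object_to_player: Dict[str, str]) -> Dict[str, List[str]]:
    """Split decoded wireless signals into per-player input streams using the ID
    of the gaming object that sent each signal; signals from unknown objects are dropped."""
    per_player: Dict[str, List[str]] = {p: [] for p in object_to_player.values()}
    for object_id, command in raw_signals:
        player = object_to_player.get(object_id)
        if player is not None:
            per_player[player].append(command)
    return per_player

signals = [("ctrl-A", "jump"), ("ctrl-B", "fire"), ("ctrl-A", "left"), ("ctrl-X", "noise")]
print(route_inputs(signals, {"ctrl-A": "player1", "ctrl-B": "player2"}))
# {'player1': ['jump', 'left'], 'player2': ['fire']}
```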

As may be used herein, the terms “substantially” and “approximately” provide an industry-accepted tolerance for their corresponding terms and/or relativity between items. Such an industry-accepted tolerance ranges from less than one percent to fifty percent and corresponds to, but is not limited to, component values, integrated circuit process variations, temperature variations, rise and fall times, and/or thermal noise. Such relativity between items ranges from a difference of a few percent to magnitude differences. As may also be used herein, the term(s) “operably coupled to”, “coupled to”, and/or “coupling” includes direct coupling between items and/or indirect coupling between items via an intervening item (e.g., an item includes, but is not limited to, a component, an element, a circuit, and/or a module) where, for indirect coupling, the intervening item does not modify the information of a signal but may adjust its current level, voltage level, and/or power level. As may further be used herein, inferred coupling (i.e., where one element is coupled to another element by inference) includes direct and indirect coupling between two items in the same manner as “coupled to”. As may even further be used herein, the term “operable to” or “operably coupled to” indicates that an item includes one or more of power connections, input(s), output(s), etc., to perform, when activated, one or more of its corresponding functions and may further include inferred coupling to one or more other items. As may still further be used herein, the term “associated with” includes direct and/or indirect coupling of separate items and/or one item being embedded within another item. As may be used herein, the term “compares favorably” indicates that a comparison between two or more items, signals, etc., provides a desired relationship.
For example, when the desired relationship is that signal 1 has a greater magnitude than signal 2, a favorable comparison may be achieved when the magnitude of signal 1 is greater than that of signal 2 or when the magnitude of signal 2 is less than that of signal 1.

The present invention has also been described above with the aid of method steps illustrating the performance of specified functions and relationships thereof. The boundaries and sequence of these functional building blocks and method steps have been arbitrarily defined herein for convenience of description. Alternate boundaries and sequences can be defined so long as the specified functions and relationships are appropriately performed. Any such alternate boundaries or sequences are thus within the scope and spirit of the claimed invention.

The present invention has been described above with the aid of functional building blocks illustrating the performance of certain significant functions. The boundaries of these functional building blocks have been arbitrarily defined for convenience of description. Alternate boundaries could be defined as long as the certain significant functions are appropriately performed. Similarly, flow diagram blocks may also have been arbitrarily defined herein to illustrate certain significant functionality. To the extent used, the flow diagram block boundaries and sequence could have been defined otherwise and still perform the certain significant functionality. Such alternate definitions of both functional building blocks and flow diagram blocks and sequences are thus within the scope and spirit of the claimed invention. One of average skill in the art will also recognize that the functional building blocks, and other illustrative blocks, modules and components herein, can be implemented as illustrated or by discrete components, application specific integrated circuits, processors executing appropriate software and the like or any combination thereof.

Claims

- A video gaming device comprises: a processing module including a processor and executable operational instructions, the processing module configured to: interpret at least one digital image of the video game player to determine an identity of the video game player; when the identity of the video game player is determined from the at least one digital image, retrieve a user profile of the video game player, the user profile including a caricature based upon the at least one digital image of the video game player; and generate video gaming menu data in accordance with at least one data element of the user profile; and a graphics processing module configured to render a display image based on the video gaming menu; and a video gaming object interface module configured to: receive a wireless menu selection signal; and convert the wireless menu selection signal into a menu selection signal, wherein when the identity of the video game player cannot be determined from the at least one digital image: the processing module is further configured to generate a query message regarding whether the video game player would like to establish the user profile linked to a digital image of the video game player; the graphics processing module is further configured to render a second display image based on the query message; the video gaming object interface module is further configured to: receive a wireless response signal; and convert the wireless response signal into a response signal; and the processing module is further configured to: process the response signal to link the user profile to the digital image of the video game player.

- The video gaming device of claim 1, wherein the processing module is further configured to interpret the at least one digital image by: analyzing the at least one digital image to isolate a face of the video game player; and performing a facial recognition algorithm on the face to identify the video game player.

- The video gaming device of claim 2, wherein the processing module is further configured to perform at least one of: when the analyzing of the at least one digital image cannot isolate the face, generating a message instructing the video game player to reposition himself or herself; and when the analyzing of the at least one digital image cannot isolate the face, generating a second message instructing the digital camera to adjust its capture range to zoom in on a head of the video game player and to capture another digital image.

- The video gaming device of claim 1, wherein the processing module processes the response signal by: determining whether the video game player has an existing user profile; when the video game player has the existing user profile: storing the at least one digital image of the video game player to produce at least one stored digital image; and adding the at least one stored digital image to the user profile to establish the link between the user profile and the digital image of the video game player; and when the video game player does not have an existing user profile: obtaining user data; and creating the user profile to include the user data and the at least one stored digital image.

- The video gaming device of claim 1, wherein the user profile comprises at least one of: authentication data; access privileges; product registration data; and personal preference data.

- The video gaming device of claim 1, wherein the processing module generates the video menu to comprise at least one of: a listing of available video games; the user profile; and a listing of system settings.

- The video gaming device of claim 6, wherein the processing module is further configured to: interpret the menu selection signal to identify selection of one of the available video games to produce a selected video game; retrieve operational instructions of the selected video game; and execute the operational instructions to facilitate playing of the selected video game.

- The video gaming device of claim 7, wherein the processing module executes the operation instructions by: identifying operational instructions corresponding to rendering graphics of the caricature based upon the selected video game; and when the operational instructions corresponding to rendering the graphics of the caricature are to be executed, altering the rendering of the graphics of the caricature based on the at least one digital image.

- The video gaming device of claim 7, wherein the processing module executes the operation instructions by: receiving additional digital images of the video game player; interpreting the additional digital images to identify gaming expressions; and altering the executing of the operation instructions based on the gaming expressions.

- The video gaming device of claim 1 further comprises: a digital camera operable to capture the at least one digital image of the video game player.
