U.S. Pat. No. 8,152,637

IMAGE DISPLAY SYSTEM, INFORMATION PROCESSING SYSTEM, IMAGE PROCESSING SYSTEM, AND VIDEO GAME SYSTEM

Assignee: Sony Computer Entertainment Inc.

Issue Date: October 13, 2005

Abstract

The system provides functions (the first to the third target position setup functions 242, 244, and 246) which set up, as a target position, a position of a reference image, a position decided by the orientations of multiple cards, or a position of a character image selected as a counterpart in the combined action display program, together with functions which display a scene where the character image moves from above the identification information to the target position (action data string searching function 248, movement posture setup function 250, 3D image setup function 252, image drawing function 254, image display setup function 256, table rewriting function 258, distance calculating function 260, and repetitive function 262). With these functions, it is possible to extend a card game that used to be played only in a real space into a virtual space, and to offer a new game which merges the card game and the video game.

Description

BEST MODE FOR CARRYING OUT THE INVENTION

In the following, preferred embodiments in which an image display system, information processing system, and image processing system relating to the present invention are applied to a video game system will be described in detail with reference to the accompanying drawings, FIG. 1 to FIG. 44.

As shown in FIG. 1, the video game system 10 relating to the present embodiment includes a video game machine 12 and various external units 14.

The video game machine 12 includes a CPU 16 which executes various programs, a main memory 18 which stores various programs and data, an image memory 20 in which image data is recorded (drawn), and an I/O port 22 which exchanges data with the various external units 14.

The various external units 14 connected to the I/O port 22 include a monitor 30 connected via a display-use interface (I/F) 28, an optical disk drive 34 which carries out reproducing/recording from/on an optical disk (DVD-ROM, DVD-RW, DVD-RAM, CD-ROM, and the like) 32, a memory card 38 connected via a memory card-use interface (I/F) 36, a CCD camera 42 connected via a pickup-use interface (I/F) 40, a hard disk drive (HDD) 46 which carries out reproducing/recording from/on the hard disk 44, and a speaker 50 connected via the audio-use interface 48. It is a matter of course that a connection may be established with the Internet (not illustrated) from the I/O port 22 via a router (not illustrated).

Data input and output to/from the external units 14 and data processing and the like within the video game machine 12 are carried out by way of the CPU 16 and main memory 18. In particular, pickup data and image data are recorded (drawn) in the image memory 20.

Next, characteristic functions of the video game system 10 relating to the present embodiment will be explained with reference to FIG. 2 to FIG. 44. These functions are implemented by programs provided to the video game machine 12 via a randomly accessible recording medium such as the optical disk 32, memory card 38, or hard disk 44, or via a network such as the Internet or an intranet.

Firstly, a card 54 used in this video game system 10 will be explained. This card 54 has the same size and thickness as a card used in a general card game. As shown in FIG. 2A, a picture representing a character associated with the card 54 is printed on the front face. As shown in FIG. 2B, the identification image 56 is printed on the reverse side. It is a matter of course that a transparent card may also be used. In this case, only the identification image 56 is printed.

Patterns of two-dimensional code (hereinafter abbreviated as “2D code”) as shown in FIG. 2B constitute the identification image 56. One unit of the identification image 56 is taken as one block, and a logo part 58 and a code part 60 are arranged, separated by one block, within a rectangular range 9.5 blocks long vertically and seven blocks long horizontally. The logo part 58 contains a black reference cell 62, which is a 2D code for indicating the reference position of the code part 60 and the orientation of the card 54, in the shape of a large rectangle 1.5 blocks long vertically and 7 blocks long horizontally. In some cases, a character name, an advertising mark (logo), or the like is also printed in the logo part 58.

The code part 60 occupies a square range of seven blocks both vertically and horizontally, and corner cells 64, each being a black square, for example, for recognizing identification information, are placed at each of its corners. Furthermore, identification cells 66, each being a black square, for example, are arranged in a two-dimensional pattern in the area surrounded by the four corner cells 64, so that the identification information can be recognized.

A method for detecting the position of the identification image 56 from the pickup image data, a method for detecting the images of the corner cells 64, and a method for detecting the 2D pattern of the identification cells 66 are described in detail in Patent Document 1 (Japanese Patent Laid-open Publication No. 2000-82108) mentioned above; refer to Patent Document 1 for details.

In the present embodiment, an association table which associates various 2D patterns of the identification cells 66 with the identification numbers respectively corresponding to the patterns is registered, for example, in the form of a database 68 (2D code database, see FIG. 6 and FIG. 7), in the hard disk 44, optical disk 32, and the like. Therefore, by collating a detected 2D pattern of the identification cells 66 with the association table within the database 68, the identification number of the card 54 is easily detected.
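The collation against the association table can be pictured as a keyed lookup. The following is a minimal sketch only, assuming that a detected pattern is available as a 7×7 grid of 0/1 values and that the database 68 can be modeled as an in-memory dictionary; the names `pattern_key` and `lookup_identification_number` are hypothetical and do not appear in the embodiment.

```python
from typing import Optional

# Hypothetical stand-in for the 2D code database 68: each key is a 7x7
# pattern flattened row by row into a string of "1" (black) and "0" (white).
def pattern_key(grid: list[list[int]]) -> str:
    """Flatten a grid of 0/1 cells into a string usable as a table key."""
    return "".join(str(cell) for row in grid for cell in row)

def lookup_identification_number(
    grid: list[list[int]], database: dict[str, int]
) -> Optional[int]:
    """Collate a detected pattern with the association table.

    Returns the identification number, or None when no entry matches.
    """
    return database.get(pattern_key(grid))

# Example pattern: four corner cells plus one identification cell.
grid = [[0] * 7 for _ in range(7)]
for r, c in [(0, 0), (0, 6), (6, 0), (6, 6), (3, 3)]:
    grid[r][c] = 1
database = {pattern_key(grid): 1001}  # 1001 is an arbitrary example number
```

A dictionary is used here only because it makes the collation a single lookup; the embodiment itself does not specify how the database is organized.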

As shown in FIG. 3A and FIG. 3B, the video game system uses the CCD camera 42 to pick up images of, for example, six cards 541, 542, 543, 544, 545, and 546 placed on a desk, table, or the like 52, and displays the picked-up images on the monitor 30. Simultaneously, on the images of the cards 541, 542, 543, 544, 545, and 546 displayed on the screen of the monitor 30, for example, on the identification images 561, 562, 563, 564, 565, and 566 respectively attached to the cards 541 to 546, images of objects (characters) 701, 702, 703, 704, 705, and 706 respectively associated with the identification images 561 to 566 are displayed in a superimposed manner. This manner of display makes it possible to achieve a game which is a mixture of a card game and a video game.

In addition, FIG. 3A shows an example in which one user 74A places three cards 541 to 543 side by side on the desk, table, or the like 52, and the other user 74B similarly places three cards 544 to 546 side by side on the desk, table, or the like 52. Here, the “character” indicates an object such as a human being, an animal, or a hero or the like who appears in a TV show, animated movie, and the like.

As shown in FIG. 3A, the CCD camera 42 is installed on a stand 72 which is fixed on the desk, table, or the like 52. The imaging surface of the CCD camera 42 may be adjusted, for example, by the users 74A and 74B, so as to be oriented toward the area on which the cards 541 to 546 are placed.

It is a matter of course that, as shown in FIG. 4A, an image of the user 74 holding one card 542, for example, may be picked up so as to appear on the monitor 30, whereby, as shown in FIG. 4B, the image of the user 74, the identification image 562 of the card 542, and the character image 702 are displayed. Accordingly, it is possible to create a scene in which a character stands on the card 542 held by the user 74.

The functions of the present embodiment as described above are achieved when the CPU 16 executes an application program that implements those functions, out of the various programs installed in the hard disk 44, for example.

As shown in FIG. 5, the application program 80 includes a card recognition program 82, character appearance display program 84, character action display program 86, field display program 88, card position forecasting program 90, combined action display program 92, first character movement display program 94, second character movement display program 96, character nullification program 98, floating image display program 100, and landing display program 102.

Here, functions of the application program 80 will be explained with reference to FIG. 6 to FIG. 44.

Firstly, the card recognition program 82 performs processing for recognizing the identification image 561 of the card (for example, card 541 in FIG. 3A) placed on the desk, table, or the like 52, so as to specify a character image (for example, image 701 in FIG. 3B) to be displayed on the identification image 561. As shown in FIG. 6, the card recognition program 82 includes a pickup image drawing function 103, reference cell finding function 104, identification information detecting function 106, camera coordinate detecting function 108, and character image searching function 110. Here, the term “recognition” means detecting the identification number and the orientation of the card 541 from the identification image 561 of the card 541, which has been detected from the pickup image data drawn in the image memory 20.

The pickup image drawing function 103 sets up, in the image memory 20, the picked-up image of an object as a background image, and draws the image. One example of processing for setting the image as the background image is setting the Z value in Z-buffering.

The reference cell finding function 104 finds the image data of the reference cell 62 of the logo part 58 in the image data drawn in the image memory 20 (pickup image data), and detects the position of the image data of the reference cell 62. The position of the image data of the reference cell 62 is detected as a screen coordinate.

As shown in FIG. 7, the identification information detecting function 106 detects image data of the corner cells 64 based on the detected position of the image data of the reference cell 62. The image data of the area 112 formed by the reference cell 62 and the corner cells 64 is subjected to affine transformation so that it becomes equivalent to the image 114, which is a view of the identification image 561 of the card 541 from directly above, and the 2D pattern of the code part 60, that is, the code 116 made of the 2D patterns of the corner cells 64 and the identification cells 66, is extracted. Then, the identification number and the like are detected from the extracted code 116.

As described above, detection of the identification number is carried out by collating the extracted code 116 with the 2D code database 68. If this collation shows that the identification number relates to a field, which means that the image is a background image and not a character image, the field display program 88 described below is started. If the collation shows that the identification number does not exist, the floating image display program 100 described below is started.
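The branching that follows the collation can be sketched as below. This is an illustration under stated assumptions, not the embodiment's implementation: the database is modeled as a dictionary, the set of field identification numbers (`field_numbers`) is a hypothetical way of marking which numbers relate to a field, and the third branch simply stands for the remainder of the card recognition processing.

```python
from enum import Enum

class NextStep(Enum):
    FIELD_DISPLAY = "field display program 88"
    FLOATING_IMAGE_DISPLAY = "floating image display program 100"
    CONTINUE_RECOGNITION = "remaining card recognition processing"

def dispatch(code: str, code_database: dict[str, int],
             field_numbers: set[int]) -> NextStep:
    """Decide what to start after collating an extracted code.

    code_database and field_numbers are hypothetical stand-ins for the
    2D code database 68 and for whatever marks a number as a field.
    """
    identification_number = code_database.get(code)
    if identification_number is None:
        # No matching identification number exists.
        return NextStep.FLOATING_IMAGE_DISPLAY
    if identification_number in field_numbers:
        # The card designates a background image, not a character image.
        return NextStep.FIELD_DISPLAY
    return NextStep.CONTINUE_RECOGNITION
```

For example, with `code_database = {"A": 1, "F": 900}` and `field_numbers = {900}`, the code `"F"` selects the field display branch and an unknown code selects the floating image display branch.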

As shown in FIG. 8, the camera coordinate detecting function 108 obtains a camera coordinate system (six axial directions: x, y, z, θx, θy, and θz) having the camera viewpoint C0 as its origin, based on the detected screen coordinate and the focusing distance of the CCD camera 42. Then, the camera coordinate of the identification image 561 on the card 541 is obtained. At this moment, the camera coordinate at the center of the logo part 58 of the card 541 and the camera coordinate at the center of the code part 60 are obtained.

The camera coordinate at the center of the logo part 58 and the camera coordinate at the center of the code part 60 thus obtained are registered in the current positional information table 117 as shown in FIG. 9. This current positional information table 117 stores the camera coordinate at the center of the logo part 58 of the card, the camera coordinate at the center of the code part 60, and the camera coordinate of the character image, in association with the identification number of the card. Registration in this current positional information table 117 may be carried out by the card position forecasting program 90, in addition to this card recognition program 82. This will be described below.

A method for obtaining the camera coordinate of an image from the screen coordinate of the image drawn in the image memory 20, and a method for obtaining a screen coordinate on the image memory 20 from the camera coordinate of a certain image are described in detail in Patent Document 2 (Japanese Patent Laid-open Publication No. 2000-322602) mentioned above; refer to Patent Document 2 for details.

The character image searching function 110 searches the object information table 118 for a character image (for example, the character image 701 as shown in FIG. 3B), based on the detected identification number.

For example, as shown in FIG. 10, the object information table 118 consists of a large number of records, and each record registers an identification number, a storage head address of the character image, various parameters (physical energy, offensive power, level, and the like), and a valid/invalid bit.

The valid/invalid bit determines whether or not the record is valid. “Invalid” may be set, for example, when an image of the character is not ready at the current stage, or when the character has been beaten and killed in a battle set in the video game.

The character image searching function 110 searches the object information table 118 for a record associated with the identification number, and if the record found is “valid”, the image data 120 is read out from the storage head address registered in the record. For instance, the image data 120 associated with the character is read out from the storage head address in the data file 122, which is recorded in the hard disk 44, optical disk 32, and the like, and in which a large number of image data items are registered. If the record found is “invalid”, the image data 120 is not read out.
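The record layout and the valid/invalid check can be sketched as follows. This is a minimal sketch under assumptions: the field names, the use of a dictionary keyed by storage head address to stand in for the data file 122, and the function name `find_character_image` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class ObjectRecord:
    """One record of the object information table 118 (field names assumed)."""
    identification_number: int
    image_head_address: int
    parameters: dict = field(default_factory=dict)  # e.g. energy, power, level
    valid: bool = True                              # the valid/invalid bit

def find_character_image(
    identification_number: int,
    table: list[ObjectRecord],
    data_file: dict[int, bytes],
) -> Optional[bytes]:
    """Return image data for a valid record, or None otherwise.

    data_file maps a storage head address to image data; it is a
    hypothetical stand-in for the data file 122.
    """
    for record in table:
        if record.identification_number == identification_number:
            if not record.valid:
                return None  # invalid records are never read out
            return data_file.get(record.image_head_address)
    return None  # no record associated with this identification number
```

Setting `valid` to `False` on a record is then enough to stop a character from being displayed, without deleting its parameters or image data.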

Therefore, when one card 541 is placed on a desk, table, or the like 52, the card recognition program 82 is started, and the character image 701 associated with the identification number specified by the identification image 561 of the placed card 541 is identified.

In addition, at the stage when the character image 701 is identified, the character image searching function 110 registers the camera coordinate at the center of the code part 60 of the card 541 as the camera coordinate of the character image in the record associated with the identification number of the card 541, out of the records in the current positional information table 117.

According to the card recognition program 82, it is possible to produce a visual effect merging the real space and the virtual space. Then, control is passed from this card recognition program 82 to various application programs (character appearance display program 84, character action display program 86, field display program 88, card position forecasting program 90, and the like).

Next, the character appearance display program 84 creates a display in which the character image 701 associated with the identification number and the like specified by the identification image 561 of the detected card (for example, card 541) appears superimposed on the identification image 561 of the card 541. As shown in FIG. 11, the character appearance display program 84 includes an action data string searching function 124, appearance posture setup function 126, 3D image setup function 128, image drawing function 130, image display function 132, and repetitive function 134.

The action data string searching function 124 searches the appearance action information table 136 for an action data string for displaying a scene in which the character appears, based on the identification number and the like.

Specifically, the action data string searching function 124 first searches the appearance action information table 136 for a record associated with the identification number and the like; this table is recorded in the hard disk 44, optical disk 32, or the like, and registers a storage head address of an action data string for each record. Then, from the storage head address registered in the record found, the action data string searching function 124 reads out an action data string 138 representing an action in which the character image 701 appears, out of the data file 140, which is recorded in the hard disk 44, optical disk 32, or the like, and in which a large number of action data strings 138 are registered.

The appearance posture setup function 126 sets one posture in the process in which the character image 701 appears. For example, based on the action data of the i-th frame (i=1, 2, 3 . . . ) of the action data string 138 thus read out, the vertex data of the character image 701 is moved on the camera coordinate system, so that one posture is set up.

The 3D image setup function 128 sets up a three-dimensional image of one posture in the process in which the character image 701 appears on the identification image 561 of the card 541, based on the detected camera coordinate of the identification image 561 of the card 541.

The image drawing function 130 subjects the three-dimensional image of one posture in the process in which the character image 701 appears to perspective transformation into an image on the screen coordinate system, and draws the transformed image in the image memory 20 (including hidden surface processing). At this time, the Z value of the character image 701 in Z-buffering is reconfigured frame by frame, thereby presenting a scene in which the character image 701 gradually appears from below the identification image 561 of the card 541.

The image display function 132 outputs the image drawn in the image memory 20 to the monitor 30 frame by frame via the I/O port 22, and displays the image on the screen of the monitor 30.

The repetitive function 134 sequentially repeats the processing of the appearance posture setup function 126, the processing of the 3D image setup function 128, the processing of the image drawing function 130, and the processing of the image display function 132. Accordingly, it is possible to display a scene in which the character image 701 associated with the identification number and the like of the card 541 appears on the identification image 561 of the card 541.
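The per-frame repetition can be illustrated with a deliberately simplified loop. The sketch below rests on several assumptions that are not in the embodiment: each frame of the action data string is reduced to a single (dx, dy, dz) offset applied to every vertex, the card's camera coordinate is treated as a pure translation with no rotation, and the perspective transformation is a basic pinhole projection. Hidden surface processing and output to the monitor are omitted.

```python
Vec3 = tuple[float, float, float]

def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])

def perspective(point: Vec3, focal_length: float) -> tuple[float, float]:
    """Pinhole projection onto the screen plane (assumes z > 0)."""
    x, y, z = point
    return (focal_length * x / z, focal_length * y / z)

def render_appearance(vertices: list[Vec3], action_data_string: list[Vec3],
                      card_position: Vec3, focal_length: float):
    """Return the projected vertices of each frame, in frame order."""
    frames = []
    for offset in action_data_string:                      # repetition per frame
        posture = [add(v, offset) for v in vertices]       # posture setup
        placed = [add(v, card_position) for v in posture]  # 3D image setup
        frames.append([perspective(p, focal_length) for p in placed])  # drawing
    return frames

# A single vertex moving from y = -1 to y = 0 over two frames, with the card
# 10 units in front of the camera.
frames = render_appearance([(0.0, 0.0, 0.0)],
                           [(0.0, -1.0, 0.0), (0.0, 0.0, 0.0)],
                           (0.0, 0.0, 10.0), 10.0)
```

In a fuller version, each returned frame would be drawn into the image memory and output to the monitor before the next iteration starts.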

Next, the character action display program 86 displays a scene in which the character performs an action such as waiting, attacking, enchanting, or protecting another character. As shown in FIG. 12, in much the same way as the aforementioned character appearance display program 84, the character action display program 86 includes an action data string searching function 142, posture setup function 144, 3D image setup function 146, image drawing function 148, image display function 150, and repetitive function 152.

The action data string searching function 142 searches the various action information tables 154 associated with each scene for an action data string to display the scene in which the character performs an action such as waiting, attacking, enchanting, or protecting another character.

Specifically, an action information table 154 associated with the action to be displayed is first identified from the various action information tables 154, which are recorded, for example, in the hard disk 44, optical disk 32, and the like, and in which a storage head address of an action data string is registered for each record. Furthermore, the action data string searching function 142 searches the identified action information table 154 for a record associated with the identification number and the like.

Then, out of the data file 158, which is recorded in the hard disk 44, optical disk 32, or the like and in which a large number of action data strings 156 are registered, the action data string searching function 142 reads out, from the head address registered in the record found, the action data string 156 associated with the action to be displayed this time (the character's action, such as waiting, attacking, enchanting, or protecting another character).

The posture setup function 144 sets, for example for the character of the card 541, one posture in the process in which the character image 701 is waiting, attacking, enchanting, or protecting another character. For instance, based on the action data of the i-th frame (i=1, 2, 3 . . . ) of the action data string 156 thus read out, the vertex data of the character image 701 is moved on the camera coordinate system and one posture is set up.

The 3D image setup function 146 sets up, on the identification image 561 of the card 541, a three-dimensional image of one posture in the process in which the character image 701 is waiting, attacking, enchanting, or protecting another character, based on the detected camera coordinate of the identification image 561 on the card 541.

The image drawing function 148 subjects the 3D image of one posture in the process in which the character image 701 is waiting, attacking, enchanting, or protecting another character to perspective transformation into an image on the screen coordinate system, and draws the transformed image in the image memory 20 (including hidden surface processing).

The image display function 150 outputs the image drawn in the image memory 20 to the monitor 30 frame by frame via the I/O port 22, and displays the image on the screen of the monitor 30.

The repetitive function 152 sequentially repeats the processing of the posture setup function 144, the processing of the 3D image setup function 146, the processing of the image drawing function 148, and the processing of the image display function 150. Accordingly, it is possible to display scenes in which the character image 701 is waiting, attacking, enchanting, and protecting another character.

With the aforementioned card recognition program 82, character appearance display program 84, and character action display program 86, it is possible to allow a character in a card game to appear in a scenario of a video game and perform various actions. In other words, a card game which has been enjoyed only in a real space can be extended to the virtual space, thereby offering a new type of game merging the card game and the video game.

Next, the field display program 88 will be explained. As shown in FIG. 13A, when the first card placed on the desk, table, or the like 52 relates to a field (hereinafter referred to as “field card 54F”), this program 88 is started by the card recognition program 82. As shown in FIG. 13B, this program 88 displays, on the screen of the monitor 30, a background image 160 associated with the identification number specified by the identification image 56F on the field card 54F, and multiple images 601 to 606, each in the form of a square, indicating positions for placing versus-fighting game cards.

As shown in FIG. 14, this field display program 88 includes a background image searching function 164, 3D image setup function 166, background image drawing function 168, card placing position forecasting function 170, square region setup function 172, background image display function 174, repetitive function 176, reference cell finding function 178, identification information detecting function 180, and character image searching function 182.

The background image searching function 164 searches the background image information table 184 for a background image 160 associated with the identification number of the field card 54F. As shown in FIG. 15, for example, the background image information table 184 consists of a large number of records, and each record registers the identification number, a storage head address of the background image data, and a storage head address of an animation data string.

This background image searching function 164 searches the background image information table 184 recorded, for instance, in the hard disk 44, optical disk 32, and the like, for a record associated with the identification number.

Then, the background image searching function 164 reads out the background image data 186 associated with the identification number of the field card 54F from the storage head address registered in the record found, out of the data file 188, which is recorded in the hard disk 44, optical disk 32, and the like, and which registers a large number of background image data 186.

The background image searching function 164 further reads out an animation data string 190 associated with the identification number of the field card 54F from the storage head address registered in the record found, out of the data file 192, which registers a large number of animation data strings 190.

The 3D image setup function 166 sets up a scene (process) of a background image in motion. For example, based on the i-th frame (i=1, 2, 3 . . . ) of the animation data string 190 thus read out, the vertex data of the background image data 186 is moved on the camera coordinate system, and one scene is set up.

The background image drawing function 168 subjects a 3D image representing one scene of the background image in motion to perspective transformation into an image on the screen coordinate system, and draws the transformed image in the image memory 20 (including hidden surface processing). At this time, the Z value of the background image 160 in Z-buffering is set to a value lower than the Z value of the pickup image (a value closer to the camera viewpoint than the pickup image), so that the background image 160 is displayed in priority to the pickup image.

The card placing position forecasting function 170 forecasts the camera coordinates of the multiple squares (in FIG. 13B, six squares 601 to 606), based on the detected camera coordinate of the field card 54F. At this time, the square information table 194 is used. As shown in FIG. 16, this square information table 194 registers the relative coordinates of the respective squares, taking the position on which the field card 54F is placed as a reference position.

Specifically, as shown in FIG. 17, for example, when the origin (0, 0) on the xz plane is taken as the position on which the field card 54F is placed, (dx, −dz), (dx, 0), (dx, dz), (−dx, dz), (−dx, 0), and (−dx, −dz) are registered as the relative coordinates of the first to the sixth squares. The lengths dx and dz are determined based on the actual card size, and are set to values such that a card does not overlap the card placed adjacent to it.

Therefore, once the camera coordinate of the reference position (origin) is determined, each of the camera coordinates of the squares 601 to 606 is easily obtained according to the square information table. The camera coordinates of those six squares 601 to 606 represent the camera coordinates of the positions where the multiple cards other than the field card 54F (hereinafter referred to as versus-fighting cards 541 to 546) are placed.
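The way the six square positions follow from the reference position can be sketched as a table of offsets added to the field card's coordinate. This is a simplified illustration that assumes the xz plane of the table is aligned with the camera coordinate axes, so that no rotation has to be applied; the function name `square_coordinates` and the example values of dx and dz are hypothetical.

```python
def square_coordinates(field_card_position, dx, dz):
    """Return camera coordinates of the first to the sixth squares.

    field_card_position is the (x, y, z) camera coordinate of the field
    card; rotation of the card relative to the camera is ignored here.
    """
    # Relative coordinates on the xz plane, in the registered order.
    square_information_table = [
        (dx, -dz), (dx, 0), (dx, dz),
        (-dx, dz), (-dx, 0), (-dx, -dz),
    ]
    x, y, z = field_card_position
    return [(x + rel_x, y, z + rel_z)
            for rel_x, rel_z in square_information_table]

# Example with arbitrary values: field card at (1, 0, 50), dx = 7, dz = 10.
squares = square_coordinates((1, 0, 50), 7, 10)
```

A real implementation would also need to rotate the relative coordinates by the detected orientation of the field card before adding them.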

The square region setup function 172 sets up the six squares 601 to 606 respectively at the six camera coordinates thus obtained, on which the versus-fighting cards 541 to 546 are to be placed.

Specifically, each of the squares 601 to 606 is, for instance, a rectangle one size larger than each of the versus-fighting cards 541 to 546. Out of the Z values of the pickup image, the Z value corresponding to the region obtained by subjecting the squares 601 to 606 to perspective transformation into the screen coordinate system is set to a value lower than the Z value of the background image 160 (a value closer to the camera viewpoint than the background image 160). Accordingly, the pickup images are displayed on the parts corresponding to the six squares 601 to 606 in the background image 160.
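The effect of these Z value settings can be illustrated per pixel: whichever layer has the lower Z value is the one displayed. The sketch below is a toy model with assumed constants and a hypothetical `in_square` predicate standing in for the projected square regions; it is not the embodiment's drawing code.

```python
# Assumed example Z values: lower means closer to the camera viewpoint.
PICKUP_Z = 3.0       # pickup image outside the squares
BACKGROUND_Z = 2.0   # background image 160
SQUARE_Z = 1.0       # pickup image inside a projected square region

def composite(pickup, background, in_square):
    """Choose, for each pixel, the layer with the lower Z value.

    pickup and background are equally sized 2D lists of pixel values;
    in_square(x, y) reports whether a pixel lies in a square region.
    """
    output = []
    for y, row in enumerate(pickup):
        out_row = []
        for x, pickup_pixel in enumerate(row):
            pickup_z = SQUARE_Z if in_square(x, y) else PICKUP_Z
            if pickup_z < BACKGROUND_Z:
                out_row.append(pickup_pixel)      # camera image shows through
            else:
                out_row.append(background[y][x])  # background takes priority
        output.append(out_row)
    return output

pickup = [["P", "P"], ["P", "P"]]
background = [["B", "B"], ["B", "B"]]
result = composite(pickup, background, lambda x, y: x == 0 and y == 0)
```

Only the pixel inside the square region keeps the camera image, which matches the described appearance of card-shaped windows in the background.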

The background image display function174outputs the image drawn in the image memory20in a unit of frame to the monitor30via the I/O port22, and displays the background image160on the screen of the monitor30, and the pickup images are displayed on the parts respectively corresponding to the six squares601to606in the background image160.

The repetitive function176sequentially repeats the processing of the 3D image setup function166, the processing of the background image drawing function168, the processing of the card placing position forecasting function170, the processing of the square region setup function172, and the processing of the background image display function174. Accordingly, as shown inFIG. 12B, the background image160in motion is displayed on the screen of the monitor30, and in this background image160, the pickup images are displayed, being projected on the parts corresponding to the six squares601to606.

In other words, a user puts the versus-fighting cards541to546respectively on the six squares601to606on the desk, table or the like52, thereby clearly projecting the identification images561to566of the versus-fighting cards541to546from the background image160. Therefore, the user is allowed to put the versus-fighting cards541to546respectively on the six squares601to606.

On the other hand, the reference cell finding function178obtains six drawing ranges on the image memory20based on the camera coordinates of the six squares601to606, while the background image160in motion is displayed. Then, the reference cell finding function178finds out whether or not the identification images561to566of the versus-fighting cards541to546exist within each of the drawing ranges, and in particular, whether a reference cell62of the logo part58exists in the range.

For instance, as shown inFIG. 18A, when six versus-fighting cards541to546are placed on the desk, table, or the like52so as to correspond to the six squares601to606displayed on the monitor30(seeFIG. 13B), the position of the reference cell of each of the six versus-fighting cards541to546is detected.

The identification information detecting function180performs the same processing as that of the identification information detecting function106in the card recognition program as described above, and detects each of the identification numbers of the placed six cards541to546, which are identified based on each of the identification information561to566of the six cards541to546. As a result of the collation, if the identification number does not exist, the floating image display program100described below is started.

The character image searching function182searches the object information table118for character image data120associated with the identification numbers of the versus-fighting cards541to546placed respectively corresponding to the six squares601to606.

For example, the object information table118is searched for records respectively associated with the identification numbers, and if a record thus searched is “valid”, the image data120is read out from each of the storage head addresses registered in the respective records. At this point of time, the character images701to706of the versus-fighting cards541to546, placed corresponding to the six squares601to606respectively, are determined.

Following this, the character appearance display program84is started, for example. As shown inFIG. 18B, a scene is displayed where the character images701to706associated with the versus-fighting cards541to546respectively appear on the identification images561to566of the versus-fighting cards541to546.

The field display program88does not search the overall image memory20to know at which part the user placed the versus-fighting cards541to546, but it indicates the positions to place the versus-fighting cards541to546by use of six squares601to606. Therefore, detection is performed targeting around the positions of the six squares601to606, so that the versus-fighting cards541to546can be recognized. Accordingly, it is not necessary to detect all over the image memory20every time a versus-fighting card is recognized, thereby reducing loads for recognition processing with regard to the six cards541to546. With the configuration above, the speed of processing may be enhanced.
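
By way of illustration only, the load reduction described above can be sketched as a search that visits only the drawing ranges associated with the squares instead of the whole image memory. The sketch assumes the image memory is a 2D list of pixel values; the names `Rect`, `find_in_ranges`, and the `is_reference_cell` predicate are hypothetical and do not appear in the embodiment.

```python
from typing import Callable, List, Optional, Tuple

# (left, top, width, height) of one drawing range, in image-memory pixels.
Rect = Tuple[int, int, int, int]


def find_in_ranges(
    image: List[List[int]],
    ranges: List[Rect],
    is_reference_cell: Callable[[int], bool],
) -> List[Optional[Tuple[int, int]]]:
    """For each drawing range, return the first (x, y) that passes the test,
    or None when nothing is found. Pixels outside the ranges are never read."""
    hits: List[Optional[Tuple[int, int]]] = []
    for left, top, width, height in ranges:
        found: Optional[Tuple[int, int]] = None
        for y in range(top, top + height):
            for x in range(left, left + width):
                if is_reference_cell(image[y][x]):
                    found = (x, y)
                    break
            if found is not None:
                break
        hits.append(found)
    return hits
```

The cost of such a search grows with the total area of the six ranges rather than with the size of the image memory.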

Next, the card position forecasting program90will be explained. This program90is suitable for six-person match or two-person match, for example. This program detects a position of an image as a reference (reference image), and based on the position of the reference image, positions at which the versus-fighting cards are placed are forecasted.

As shown inFIG. 19Afor example, it is possible to utilize as the reference image, the identification image56F of the field card54F which is placed on the desk, table, or the like52, prior to placing the versus-fighting cards541to546. Alternatively, in the example of a two-person match, as shown inFIG. 20A, a rectangular mat196is put on the desk, table, or the like52, and the versus-fighting cards541to546are placed respectively in the six squares drawn on the mat196for fighting (three squares are placed side by side for one person: the first to sixth squares801to806). In this example, the “+” image198drawn at the center of the mat196is used as the reference image. Further alternatively, as shown inFIG. 21A, in the example of a six-person match, a circular mat200is put on the desk, table, or the like52, and the versus-fighting cards541to546are placed respectively in the six squares drawn on the mat200for fighting (placed in a circle: the first to sixth squares901to906). In this example, the image202drawn at the center position of the mat200is used as the reference image, for instance.

If an item is placed, for example, at the center or at the corner of the fighting area, without putting the aforementioned mat196or200, the image or the like of the item is assumed as the reference image.

In the following descriptions, those images which are candidates to be used as a reference image are referred to as “reference images201”. It is preferable that an image used as the reference image201should have a property clearly indicating the orientation.

As shown inFIG. 22, the card position forecasting program90includes reference image detecting function204, camera coordinate detecting function206, detection area setup function208, reference cell finding function210, identification information detecting function212, and character image searching function214.

Here, processing operations of the card position forecasting program90will be explained with reference toFIG. 22toFIG. 25B.

At first, in step S1inFIG. 23, the reference image detecting function204detects a position of the reference image201from the pickup image data drawn in the image memory20. In this position detection, multiple images obtained by picking up a predetermined reference image201from various angles are registered as lookup images. According to a method such as pattern matching and the like using the lookup images, the reference image201can be easily detected from the pickup image data. It is a matter of course that the reference image201may be detected through another pattern recognition method. Position of the reference image201is detected as a screen coordinate.

Thereafter, in step S2, the camera coordinate detecting function206obtains a camera coordinate system (in six axial directions: x, y, z, θx, θy and θz) assuming a camera viewpoint as an origin, based on the screen coordinate of the reference image201thus detected and the focal distance of the CCD camera42, and further obtains the camera coordinate of the reference image201thus detected. Then, the camera coordinate at the center of the reference image201is obtained.

Thus obtained camera coordinate of this reference image201is registered in register216. The camera coordinate of the reference image201registered in the register216is utilized also in the first character movement display program94, as well as used in the card position forecasting program90. This will be described in the following.

Thereafter, in step S3, the detection area setup function208obtains a camera coordinate of the area including the reference image201and being a finding target by the reference cell finding function210, based on the camera coordinate of the reference image201.

As shown inFIG. 24A, the area to be found is a rectangular area218having the reference image201as a center, in the case where multiple cards are placed side by side for each person in a two-person match. If the multiple cards are placed in a circle in a match for three persons or more, as shown inFIG. 25A, it is a circular area220having the reference image201as a center. In the following, the rectangular area218and the circular area220are referred to as detection area222.
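
As a minimal sketch of the two detection area shapes, the following assumes that positions are expressed as 2D coordinates on the plane of the desk and that a single hypothetical `extent` value fixes the size of the area. The class and function names are illustrative only.

```python
import math
from dataclasses import dataclass


@dataclass
class RectangularArea:
    cx: float
    cy: float
    width: float
    height: float

    def contains(self, x: float, y: float) -> bool:
        # Inside when within half the width and half the height of the center.
        return (abs(x - self.cx) <= self.width / 2
                and abs(y - self.cy) <= self.height / 2)


@dataclass
class CircularArea:
    cx: float
    cy: float
    radius: float

    def contains(self, x: float, y: float) -> bool:
        return math.hypot(x - self.cx, y - self.cy) <= self.radius


def make_detection_area(ref_xy, players: int, extent: float):
    """Center the area on the reference image: rectangular for a two-person
    match, circular for three persons or more (extent is an assumed size)."""
    cx, cy = ref_xy
    if players == 2:
        return RectangularArea(cx, cy, width=2 * extent, height=2 * extent)
    return CircularArea(cx, cy, radius=extent)
```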

Thereafter, in step S4ofFIG. 23, the reference cell finding function210obtains a drawing range224of the detection area222on the image memory20, according to the camera coordinate of the detection area222thus obtained (seeFIG. 24BandFIG. 25B).

Then, in step S5, it is detected whether or not the identification images561to566of the versus-fighting cards541to546, in particular, the reference cell62in the logo part, exist in the drawing range224of the detection area222.

For example, positions respectively of the reference cells62of the six versus-fighting cards541to546are detected, in the case where six fighting cards541to546are placed with the field card54F as a center as shown inFIG. 19B, in the case where six fighting cards541to546are placed on the first to the sixth squares801to806of the mat196, as shown inFIG. 20A, in the case where six fighting cards541to546are placed on the first to the sixth squares901to906of the mat200as shown inFIG. 21A, and the like.

If identification images561to566of the versus-fighting cards541to546exist, the processing proceeds to step S6. Here, the identification information detecting function212allows the image data in the drawing range224to be subjected to affine transformation, and makes the image data in the drawing range224to be equivalent to the image data viewed from the upper surface. Then, based on thus obtained image data, the identification information detecting function212detects identification numbers of the six cards541to546from the identification images561to566of the six cards541to546, in particular, from two-dimensional pattern (code116) of the code part60in each of the identification images561to566. Detection of the identification number is carried out by collating the code116with the 2D code database68as stated above.

Afterwards, in step S7, the identification information detecting function212obtains camera coordinates of the identification images561to566on the six cards541to546, and registers the camera coordinate of the center of each logo part58and the camera coordinate of each code part60in the six cards541to546, in the current positional information table117as shown inFIG. 9. If the identification number is not detectable, the floating image display program100is started, which will be described below.

Then, in step S8, the character image searching function214searches the object information table118for image data120of the character images701to706based on the respective identification numbers of the versus-fighting cards541to546. For example, the character image searching function214searches the object information table118for a record associated with each of the identification numbers, and if the record thus searched out is “valid”, each character image data120is read out from the storage head address of the image data registered in each record.

Next, in step S9, at the stage where the character images701to706are specified, the character image searching function214registers a camera coordinate of the center of each code part60of the cards541to546, as a camera coordinate of the character image in each of the records respectively associated with the identification numbers of the cards541to546.
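
Steps S8 and S9 can be sketched, under stated assumptions, as a keyed lookup followed by an update of the matching record. Here the object information table is modeled as a dictionary keyed by identification number and the image data as a dictionary keyed by storage head address; `ObjectRecord` and `find_character` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import Any, Dict, Optional, Tuple

Coordinate = Tuple[float, float, float]


@dataclass
class ObjectRecord:
    valid: bool                      # the "valid" / invalid flag of the record
    image_address: int               # storage head address of the image data
    camera_coordinate: Optional[Coordinate] = None


def find_character(
    table: Dict[int, ObjectRecord],
    image_store: Dict[int, Any],
    identification_number: int,
    code_center: Coordinate,
) -> Optional[Any]:
    """Return the character image data for a card, or None when the record
    is missing or not valid. On success, register the camera coordinate of
    the center of the code part as the coordinate of the character image."""
    record = table.get(identification_number)
    if record is None or not record.valid:
        return None
    record.camera_coordinate = code_center
    return image_store[record.image_address]
```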

On the other hand, in step S5, if it is determined that the identification images561to566of the versus-fighting cards541to546do not exist, the reference cell finding function210performs step S10and outputs on the screen of the monitor30an error message prompting to place all the cards541to546.

Then, in step S11, after waiting for a predetermined period of time (for example, three seconds), the processing returns to S5, and the above processing is repeated.
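
The loop formed by steps S5, S10, and S11 can be sketched as follows. The detection routine, the message output, and the expected card count are passed in as parameters because the embodiment does not fix them at this level; `max_attempts` is an addition made only so that the sketch can terminate, and all names are hypothetical.

```python
import time
from typing import Callable, List, Optional


def wait_for_cards(
    detect_cards: Callable[[], List[int]],
    expected: int,
    show_message: Callable[[str], None],
    wait_seconds: float = 3.0,
    sleep: Callable[[float], None] = time.sleep,
    max_attempts: Optional[int] = None,
) -> List[int]:
    """Repeat detection until the expected number of cards is found."""
    attempts = 0
    while True:
        cards = detect_cards()                       # step S5
        if len(cards) >= expected:
            return cards
        show_message("Please place all the cards.")  # step S10
        attempts += 1
        if max_attempts is not None and attempts >= max_attempts:
            raise TimeoutError("cards were not placed in time")
        sleep(wait_seconds)                          # step S11, then back to S5
```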

At the stage where all the character images701to706are determined with respect to all the cards541to546existing in the detection area222, the processing operations as described above are completed.

Also in this case, as shown inFIG. 18C,FIG. 19BandFIG. 20B, by starting the character appearance display program84, for example, a scene is displayed in which the character images701to706associated with the identification numbers of the cards541to546appear on the identification images561to566of the cards541to546respectively.

In the card position forecasting program90, recognition of the six cards541to546is carried out for the detection area222that is set up based on the reference image201. Therefore, it is not necessary to detect all over the image memory20again in order to recognize the six cards541to546, thereby reducing loads in the process to recognize the six cards541to546.

Next, combined action display program92will be explained. As shown inFIG. 3A, this combined action display program92is configured assuming a case that a user74A puts three cards541to543side by side on the desk, table or the like52, and the other user74B puts three cards544to546side by side on the same desk, table, or the like52. This program92specifies one processing procedure based on one combination of detected orientations of the three cards being placed (for example, cards541to543).

For instance, out of the three cards541to543, a character who performs an action is specified according to the orientation of the card541placed on the left, a character as a counterpart to be influenced by the action is specified according to the orientation of the card542placed at the center, and a specific action is determined according to the orientation of the card placed on the right.

As shown inFIG. 26, the combined action display program92includes, card orientation detecting function226, action target specifying function228, counterpart specifying function230, and action specifying function232.

Here, processing in each function will be explained with reference toFIG. 3A,FIG. 3B,FIG. 26, andFIG. 27toFIG. 29, after the camera coordinates of the identification images561to566of all the cards541to546have been detected.

Firstly, based on the information registered in the current positional information table117, the card orientation detecting function226detects which orientation each of the identification images561to566of the cards541to546is facing as viewed from the associated user74A or74B, the cards being positioned on the left, at the center, and on the right of each user. This information indicates the camera coordinates (camera coordinate of the logo part58and that of the code part60) of the identification images561to566of all the cards541to546being placed.
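
The description does not state how an orientation is derived from the two registered coordinates. One possible approach, shown here purely as an assumption, is to take the vector from the center of the code part to the center of the logo part and quantize its angle into four directions. The sketch further assumes 2D coordinates already expressed from the viewpoint of the user concerned, with x increasing to the right and y increasing away from the user.

```python
import math
from typing import Tuple


def card_orientation(logo_xy: Tuple[float, float],
                     code_xy: Tuple[float, float]) -> str:
    """Quantize the code-to-logo direction into left / up / right / down."""
    dx = logo_xy[0] - code_xy[0]
    dy = logo_xy[1] - code_xy[1]
    angle = math.degrees(math.atan2(dy, dx)) % 360.0
    if angle < 45.0 or angle >= 315.0:
        return "right"
    if angle < 135.0:
        return "up"
    if angle < 225.0:
        return "left"
    return "down"
```

Each direction covers a 90 degree sector, so a card that is slightly rotated still resolves to the nearest of the four orientations.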

If offensive or defensive action is performed by turns, it is possible to detect only the orientations of the cards being offensive next (cards541to543or544to546), which are possessed by the user74A or74B.

In the following descriptions, for ease of explanation, processing by the three cards541to543possessed by the user74A will be explained.

As shown inFIG. 26, the card orientation detecting function226passes information regarding the orientation of the identification image561of the card541which is placed on the left by the user74A to the action target specifying function228, passes information regarding the orientation of the identification image562of the card542which is placed at the center to the counterpart specifying function230, and passes information regarding the orientation of the identification image563of the card543which is placed on the right to the action specifying function232.

The action target specifying function228selects one character or all the characters, based on the information regarding the orientation of the card541, supplied from the card orientation detecting function226, out of the three character images701to703which are displayed in such a manner as respectively superimposed on the identification images561to563of the three cards541to543.

Specifically, as shown inFIG. 27for example, if the card541positioned on the left is oriented to the left, the character image701(actually, identification number specified by the identification image561) of the card541on the left is selected. If the card541is oriented upwardly, the character image702(actually, identification number specified by the identification image562) of the card542at the center is selected. If the card541is oriented to the right, the character image703(actually, identification number specified by the identification image563) of the card543on the right is selected. If the card541is oriented downwardly, the character images701to703(actually, identification numbers specified by the identification images561to563) of the three cards541to543on the left, at the center, and on the right are selected. The identification numbers thus selected are registered in the first register234.

The counterpart specifying function230selects one character or all the characters as a counterpart, based on the orientation of the card542supplied from the card orientation detecting function226, out of the three character images704to706displayed in such a manner as being superimposed respectively on the identification images564to566of the three cards544to546regarding the opposing counterpart (user74B).

Specifically as shown inFIG. 28for example, if the card542positioned at the center is oriented to the left, the character image704(actually, identification number specified by the identification image564) of the card544on the left viewed from the user74B is selected. If the card542is oriented upwardly, the character image705(actually, identification number specified by the identification image565) of the card545at the center viewed from the user74B is selected. If the card542is oriented to the right, the character image706(actually, identification number specified by the identification image566) of the card546on the right viewed from the user74B is selected. If the card542is oriented downwardly, the identification number selected in the action target specifying function228is selected. The identification numbers thus selected are registered in the second register236.

The action specifying function232selects an action of the character corresponding to the identification number being selected in the action target specifying function228(identification number registered in the first register234), based on the orientation of the card543supplied from the card orientation detecting function226.

As shown inFIG. 29for example, if the card543is oriented to the left, an action “to attack” is selected. If the card543is oriented upwardly, an action “to enchant” is selected. If the card543is oriented to the right, an action “being defensive” is selected, and if it is oriented downwardly, an action “to protect” is selected.

At the stage where a specific action is selected, the action specifying function232starts the character action display program86and displays a scene corresponding to the action thus selected.
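
The selections made by the action target specifying function228, the counterpart specifying function230, and the action specifying function232can be summarized as three lookups. The following is a minimal sketch that takes the orientations as already detected; the slot names, the shortened action labels, and `resolve_command` are hypothetical.

```python
from typing import Dict, List, Tuple

# Orientation of the left card -> slots of the user's own cards that act.
ACTOR_SLOTS = {
    "left": ["left"],
    "up": ["center"],
    "right": ["right"],
    "down": ["left", "center", "right"],
}
# Orientation of the center card -> slot of the opposing user's card.
COUNTERPART_SLOT = {"left": "left", "up": "center", "right": "right"}
# Orientation of the right card -> action.
ACTIONS = {"left": "attack", "up": "enchant", "right": "defend", "down": "protect"}


def resolve_command(
    orientations: Tuple[str, str, str],
    own_ids: Dict[str, int],
    opponent_ids: Dict[str, int],
) -> Tuple[List[int], List[int], str]:
    """Return (acting identification numbers, counterpart numbers, action)."""
    left_o, center_o, right_o = orientations
    actors = [own_ids[slot] for slot in ACTOR_SLOTS[left_o]]      # first register
    if center_o == "down":
        # The number(s) selected as the action target are reused.
        counterparts = list(actors)
    else:
        counterparts = [opponent_ids[COUNTERPART_SLOT[center_o]]]  # second register
    return actors, counterparts, ACTIONS[right_o]
```

For example, with the left card oriented downwardly, the center card oriented upwardly, and the right card oriented to the left, all three of the user's characters attack the opposing character at the center.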

With the combined action display program92, it is possible to give a command regarding various processing according to a combination of orientations of the three cards541to543, without using an operation device (a device to input a command via key operations).

Next, the first character movement display program94will be explained. As shown inFIG. 30, for example, the program94displays that the character image705displayed in such a manner as being superimposed on the identification image565of the card545moves up to a target position240on the pickup image. Examples of the target position240may include a position of the reference image201, a position determined by the orientations of the multiple cards541to546, and a position of the character image as a counterpart selected in the aforementioned combined action display program92, and the like.

As shown inFIG. 31, the first character movement display program94includes a first target position setup function242, a second target position setup function244, a third target position setup function246, an action data string searching function248, a movement posture setup function250, 3D image setup function252, an image drawing function254, an image display function256, table rewriting function258, distance calculating function260, and repetitive function262. Processing according to the first to the third target position setup functions242,244, and246is selectively performed according to a status of scenario in the video game.

The first target position setup function242sets as a target position, the position of the reference image201. Specifically, in the card position forecasting program90, the camera coordinate of the reference image201registered in the register216(seeFIG. 22) is read out, and this camera coordinate is set to a camera coordinate of the target position.

The second target position setup function244sets as a target position, a position determined based on the orientations of all the cards541to546placed on the desk, table, and the like52.

For example, as shown inFIG. 32, when six cards541to546are placed in a circle, the inner center or barycentric position266, for instance, of a polygon264formed on multiple points which are intersections of center lines m1to m6of the cards541to546, respectively, is assumed as a target position. This configuration can be applied to a case where three or more cards are placed in a circle.

Specific processing is as follows: based on the information registered in the current positional information table117, that is, based on the camera coordinates (camera coordinate of the logo part58and camera coordinate of the code part60) of the identification images561to566of all the cards541to546being placed, vectors of the center lines m1to m6of all of the cards541to546are obtained. Then, camera coordinates of multiple points as intersections of the center lines m1to m6thus obtained are extracted, and a camera coordinate of the inner center or barycentric position266of the polygon264configured by the multiple points thus extracted is set as a camera coordinate of the target position.
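
A minimal sketch of this calculation follows, under several assumptions that are not stated in the description: positions are reduced to 2D coordinates on the plane of the desk, each center line is given as a point and a direction vector, only the center lines of neighboring cards are intersected, and the barycentric position is taken as the mean of the intersection points. All names are hypothetical.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float]
Line = Tuple[Point, Point]  # (point on the center line, direction vector)


def line_intersection(p: Point, d: Point, q: Point, e: Point,
                      eps: float = 1e-9) -> Optional[Point]:
    """Intersection of p + t*d and q + s*e, or None for parallel lines."""
    cross = d[0] * e[1] - d[1] * e[0]
    if abs(cross) < eps:
        return None
    t = ((q[0] - p[0]) * e[1] - (q[1] - p[1]) * e[0]) / cross
    return (p[0] + t * d[0], p[1] + t * d[1])


def target_from_center_lines(center_lines: List[Line]) -> Point:
    """Mean of the intersections of each center line with the next one."""
    points: List[Point] = []
    count = len(center_lines)
    for i in range(count):
        (p, d), (q, e) = center_lines[i], center_lines[(i + 1) % count]
        hit = line_intersection(p, d, q, e)
        if hit is not None:
            points.append(hit)
    if not points:
        raise ValueError("no center lines intersect")
    return (sum(x for x, _ in points) / len(points),
            sum(y for _, y in points) / len(points))
```

When all cards point exactly at a common center, every intersection coincides and the polygon degenerates to that single point, which is then the target position.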

The third target position setup function246sets as a target position, a position of character image, as a counterpart selected in the combined action display program92. Specifically, in the combined action display program92, an identification number registered in the second register236(seeFIG. 26) is read out, and a camera coordinate (for example, camera coordinate of the character image) associated with the identification number thus read out is set as a camera coordinate of the target position.

As shown inFIG. 30for example, the action data string searching function248searches the first movement action information table268for an action data string to display a scene where the character image705moves from above the identification image565of the card545to the target position240.

Specifically, at first, the action data string searching function248searches the first movement action information table268recorded in the hard disk44, optical disk32, or the like, for instance, for a record associated with the identification number of the card545. Then, out of the data file272which is recorded in the hard disk44, optical disk32, or the like and in which a large number of action data strings270are registered, for example, the action data string searching function248reads out from the storage head address registered in the record thus searched, an action data string270indicating an action in which the character image705moves to the target position240.

The moving posture setup function250reads out from the current positional information table117, a camera coordinate of the character image705from the record associated with the identification number, and sets on the camera coordinate, one posture in a process where the character image705moves to the target position240. For example, one posture is set up by allowing the vertex data of the character image705to move on the camera coordinate, based on the action data of i-th frame (i=1, 2, 3 . . . ) of thus read out action data string270.

The 3D image setup function252sets up a 3D image of one posture in a process where the character image705moves from above the identification image565of the card545to the target position240based on the camera coordinate system of the pickup image, being detected by the card recognition program82, or the like, for example.

The image drawing function254allows the 3D image of one posture in a process where the character image705moves to the target position240to be subjected to perspective transformation to an image on the screen coordinate system, and draws thus transformed image into the image memory20(including hidden surface processing).

The image display function256outputs the image drawn in the image memory20in a unit of frame to the monitor30via the I/O port22, and displays the image on the screen of the monitor30.

The table rewriting function258rewrites the camera coordinate of the character image705of the record associated with the identification number in the current positional information table117, with the camera coordinate of the character image705after the character image moved for one frame.

The distance calculating function260calculates the shortest distance (direct distance) between the position of the character image705and the target position240, based on the camera coordinate of the character image after the character image moved for one frame, and the camera coordinate of the target position240.

The repetitive function262sequentially repeats the processing of the moving posture setup function250, the processing of the 3D image setup function252, the processing of the image drawing function254, the processing of the image display function256, the processing of the table rewriting function258, and the processing of the distance calculating function260, until the time when the distance calculated in the distance calculating function260falls within a predetermined range (for example, 0 mm to 10 mm when converted in the real space).
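
The repetition performed by the repetitive function262can be sketched as a loop that advances the character by one frame, records the new position, and stops once the remaining distance falls within the predetermined range. In the embodiment the per-frame displacement comes from the action data string270; the fixed `step_mm` used here is a simplifying assumption, and the list of returned positions stands in for the drawing, display, and table rewriting of each frame.

```python
import math
from typing import List, Tuple

Coordinate = Tuple[float, float, float]


def move_to_target(start: Coordinate, target: Coordinate,
                   step_mm: float, tolerance_mm: float = 10.0) -> List[Coordinate]:
    """Return the position after each frame until the target is reached."""
    position = tuple(start)
    frames: List[Coordinate] = []
    while True:
        remaining = math.dist(position, target)
        move = min(step_mm, remaining)
        if remaining > 0:
            # Advance along the straight line toward the target.
            position = tuple(p + (t - p) * move / remaining
                             for p, t in zip(position, target))
        frames.append(position)  # stands in for draw, display, table rewrite
        if math.dist(position, target) <= tolerance_mm:
            return frames
```

With a start 100 mm from the target, a step of 30 mm, and the 10 mm tolerance given above as an example, the loop ends after three frames at a remaining distance of 10 mm.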

Accordingly, as shown inFIG. 30, the scene is displayed, in which the character image705associated with the identification number of the card545moves from above the identification image565of the card545to the target position240.

According to this first character movement display program94, when it is applied to a video game for example, as shown inFIG. 30, it is possible to display that the character image705that appeared from the card545moves to the target position240and initiates a battle or the like. Therefore, it is suitable for a versus-fighting game and the like.

For example, in the case of six-person fighting as shown inFIG. 33, it is possible to display a scene where each of the six character images moves towards the center of the circle from above the identification images561to566of the cards541to546respectively associated with the images, and a battle is unfolded in the center of the circle.

For example, in the case of two-person fighting as shown inFIG. 34, it is possible to display a scene where the two character images703and705, for example, move to the target position (for example central position)240from above the identification images563and565of the cards543and545respectively associated with these character images, and initiate a battle. It is further possible as shown inFIG. 35to display a scene where the character image705at the offensive side moves to the position of the character image703as an attacking target from above the identification image565of the card545which is associated with the character image705, and initiates a battle.

Next, the second character movement display program96will be explained. This program96displays that, as shown inFIG. 36, the character image705having moved to the target position240from above the identification image565of the card545for example, according to the first character movement display program94as described above, moves back to above the identification image565of the card545originally positioned. The current positional information table117as described above registers the information indicating to which position the character image705has moved.

As shown inFIG. 37, the second character movement display program96includes, action data string searching function274, moving posture setup function276, 3D image setup function278, image drawing function280, image display function282, table rewriting function284, distance calculating function286, and repetitive function288.

The action data string searching function274searches the second moving action information table290for an action data string to display a scene where the character image705as shown inFIG. 36, for example, moves (returns) from the current position (for example the aforementioned target position240) to above the identification image565of the card545being associated.

Specifically, at first, the action data string searching function274searches the second moving action information table290which is recorded for example in the hard disk44, optical disk32, or the like, for a record associated with the identification number. Then, out of the data file294which is recorded in the hard disk44, optical disk32, or the like for example, and in which a large number of action data strings292are registered, the action data string searching function274reads out from the storage head address registered in the record thus searched, an action data string292indicating an action in which the character image705moves up to above the identification image565of the card545.

The moving posture setup function276reads out a camera coordinate of the character image705from the record associated with the identification number from the current positional information table117, and sets up one posture in a process where the character image705moves up to above the identification image565of the card545associated with the character image705.

For example, the moving posture setup function276moves the vertex data of the character image705on the camera coordinate, based on the action data of the i-th frame (i=1, 2, 3, . . . ) of the action data string292thus read out, so as to set up one posture.

The 3D image setup function278sets a 3D image of one posture in a process where the character image705moves from the current position to above identification image565of the card545being associated with the character image, based on the camera coordinate of the pickup image detected, for example, by the card recognition program82, or the like.

The image drawing function280allows the 3D image of one posture in a process where the character image705moves to above the identification image565of the card545associated with the character image, to be subjected to perspective transformation to an image on the screen coordinate system, and draws thus transformed image into the image memory20(including hidden surface processing).

The image display function282outputs the image drawn in the image memory20in a unit of frame to the monitor30via the I/O port22, and displays the image on the screen of the monitor30.

The table rewriting function284rewrites the camera coordinate of the character image705of the record associated with the identification number in the current positional information table117, with the camera coordinate of the character image705after the character image moved for one frame.

The distance calculating function286calculates a distance between the camera coordinate of the identification image565of the card545associated with the identification number, and the current position (camera coordinate) of the character associated with the identification number stored in the current positional information table117.

The repetitive function288sequentially repeats the processing of the moving posture setup function276, the processing of the 3D image setup function278, the processing of the image drawing function280, the processing of the image display function282, the processing of the table rewriting function284, and the processing of the distance calculating function286, until the time when the distance calculated in the distance calculating function286becomes zero. Accordingly, as shown inFIG. 36, for example, it is possible to display a scene where the character image705moves to above the identification image565of the card545associated with the character image.

According to the second character movement display program96, when it is applied to a video game for example, it is possible to display that the character image705that appeared from the card545moves to the target position240and initiates a battle, and when the character wins the battle, it returns to the card545originally positioned. Therefore, it is suitable for a versus-fighting game.

For instance, in the case of six-person fighting as shown inFIG. 33, it is possible to display that after six character images701to706unfold a battle at the center of the circle, the character images701,702and704who won the battle return to above the identification images561,562, and564of the cards541,542,544, respectively associated with those character images, as shown inFIG. 38.

In the case of two-person fighting as shown in FIG. 34, it is possible to display a scene, as shown in FIG. 39, in which, of the two character images 703 and 705 that are both on the offensive, only the character image 703 that won the battle returns to the identification image 563 of the card 543 from which it originated.

Next, a character nullification program 98 will be explained. When a character loses the battle, this program 98 displays a scene in which the character image gradually disappears while performing a certain action, and also nullifies the appearance of the character.

As shown in FIG. 40, the character nullification program 98 includes an action data string searching function 296, a disappearing posture setup function 298, a 3D image setup function 300, an image drawing function 302, an image display function 304, a repetitive function 306, and an invalid bit setup function 308.

The action data string searching function 296 searches the disappearing display information table 310 for an action data string used to display a scene where the character image gradually disappears while performing a certain action.

Specifically, the action data string searching function 296 first searches the disappearing display information table 310, recorded in the hard disk 44, the optical disk 32, or the like, for a record associated with the identification number. Then, from the data file 314, which is recorded in the hard disk 44, the optical disk 32, or the like and in which a large number of action data strings 312 are registered, the action data string searching function 296 reads out, starting at the storage head address registered in the record thus found, an action data string 312 indicating an action in which the character image gradually disappears.
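The two-stage lookup described above (a table record that yields a storage head address, then a read from the data file at that address) might be sketched as below. The storage layout is an assumption made purely for illustration: the description does not state how the end of an action data string is marked, so this sketch assumes that the cell at the head address holds the frame count. The names `read_action_string`, `info_table`, and `data_file` are hypothetical.

```python
def read_action_string(info_table, data_file, identification_number):
    """Return the action data string registered for one identification number.

    info_table : dict mapping identification number -> storage head address
                 (hypothetical stand-in for the display information table)
    data_file  : flat list in which each action data string is stored as
                 [frame_count, frame_1, ..., frame_n] (assumed layout)
    """
    # Stage 1: find the record associated with the identification number.
    head = info_table[identification_number]
    # Stage 2: read the action data string starting at the head address.
    frame_count = data_file[head]
    return data_file[head + 1 : head + 1 + frame_count]

# Usage with two registered strings, at head addresses 0 and 3.
data_file = [2, "a0", "a1", 3, "b0", "b1", "b2"]
info_table = {5: 0, 7: 3}
frames_for_7 = read_action_string(info_table, data_file, 7)
```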

The disappearing posture setup function 298 sets up one posture in the process where the character image is disappearing. For instance, the disappearing posture setup function 298 sets up one posture by moving the vertex data of the character image on the camera coordinate, based on the action data of the i-th frame (i=1, 2, 3 . . . ) of the action data string 312 thus read out.

The 3D image setup function 300 sets up a 3D image of one posture in the process where the character image is disappearing on the identification image of the card, on the target position 240, or the like, based on, for example, the camera coordinate system of the pickup image detected by the card recognition program 82 or the like.

The image drawing function 302 subjects the 3D image of one posture in the process where the character image is disappearing to perspective transformation into an image on the screen coordinate system, and draws the transformed image into the image memory 20 (including hidden surface processing).
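As an illustration of the perspective transformation from the camera coordinate system to the screen coordinate system, a simple pinhole projection may be sketched as follows. The description does not specify the projection model, so the pinhole model, the function name `project_to_screen`, the focal length, and the screen center used here are all assumed values; hidden surface processing is not shown.

```python
def project_to_screen(point, focal=500.0, cx=320.0, cy=240.0):
    """Project one camera-coordinate vertex (x, y, z) onto the screen.

    focal, cx, and cy are hypothetical parameters (focal length in pixels
    and the screen center); they are not taken from the description.
    """
    x, y, z = point
    if z <= 0:
        # A vertex at or behind the camera plane cannot be projected.
        raise ValueError("vertex must lie in front of the camera (z > 0)")
    return (cx + focal * x / z, cy + focal * y / z)
```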

The image display function 304 outputs the image drawn in the image memory 20 to the monitor 30 via the I/O port 22 in units of frames, and displays the image on the screen of the monitor 30.

The repetitive function 306 sequentially repeats the processing of the disappearing posture setup function 298, the 3D image setup function 300, the image drawing function 302, and the image display function 304. Accordingly, it is possible to display a scene in which the character image gradually disappears while performing a certain action on the identification image of the card associated with the character image, on the target position 240, or the like.

The invalid bit setup function 308 sets the valid/invalid (1/0) bit to “invalid” in the record associated with the character image (that is, the record associated with the identification number) in the object information table 118.
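The invalid bit setup can be pictured with the following minimal sketch. The record layout (a list of dictionaries with `id` and `valid` keys) and the function name `set_invalid_bit` are assumptions made for illustration; the description states only that each record carries a valid/invalid (1/0) bit.

```python
def set_invalid_bit(object_table, identification_number):
    """Mark the record for one identification number as invalid (0).

    object_table is a hypothetical stand-in for the object information
    table: a list of records of the form {"id": int, "valid": 0 or 1}.
    Returns True if a matching record was found, False otherwise.
    """
    for record in object_table:
        if record["id"] == identification_number:
            record["valid"] = 0  # 1 = valid, 0 = invalid
            return True
    return False
```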

According to this character nullification program 98, when applied to a video game for example, it is possible to display that a character that appeared from the card moves to a predetermined position to initiate a battle and, if the character dies as a result of losing the battle, gradually disappears. This program is therefore suitable for a versus-fighting game and the like.

Meanwhile, as the video game progresses, a card placed on the desk, table, or the like 52 may be moved by the user's hand. For example, this happens when at least one of the cards is displaced, when cards are switched in position, or when a card is replaced by a new card. When a card has moved in this way, the moved card must be recognized again.

To address this, the card recognition program 82 or the card position forecasting program 90 may be started every several frames or every few dozen frames. Naturally, when a new card is recognized, the character appearance display program 84 is started, and a character image associated with the identification number or the like of the new card may appear on the identification image of the new card. Furthermore, in a simple case where a card is merely displaced or card positions are switched, the character action display program 86 is started and, for example, a “waiting” action is displayed.
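The periodic re-recognition and the distinction between a new card and a merely displaced card could be sketched as follows. The interval of 30 frames, the function names, and the representation of recognized cards as a dictionary from identification number to position are assumptions for illustration only.

```python
def is_recognition_frame(frame_index, interval=30):
    """True on the frames at which re-recognition is started.

    `interval` is a hypothetical value standing in for "every several
    frames or every few dozen frames".
    """
    return frame_index % interval == 0

def classify_changes(previous, current):
    """Compare two recognition results (id -> position).

    Returns (new_cards, moved_cards): identification numbers that were not
    present before, and those present in both results at a different position.
    """
    new_cards = sorted(set(current) - set(previous))
    moved_cards = sorted(
        cid for cid in current
        if cid in previous and current[cid] != previous[cid]
    )
    return new_cards, moved_cards
```

In this sketch, an identification number in `new_cards` would correspond to starting the character appearance display, and one in `moved_cards` to starting the character action display.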

However, this re-recognition of the card may fail. For example, re-recognition may become impossible in the following cases: a card is placed so that its associated character appears, and is thereafter withdrawn and left as it is; the card surface is covered by another object (such as the user's hand); or the pickup surface of the CCD camera is displaced so that an image of the card surface cannot be picked up. An application program that performs processing to deal with such cases will now be explained.

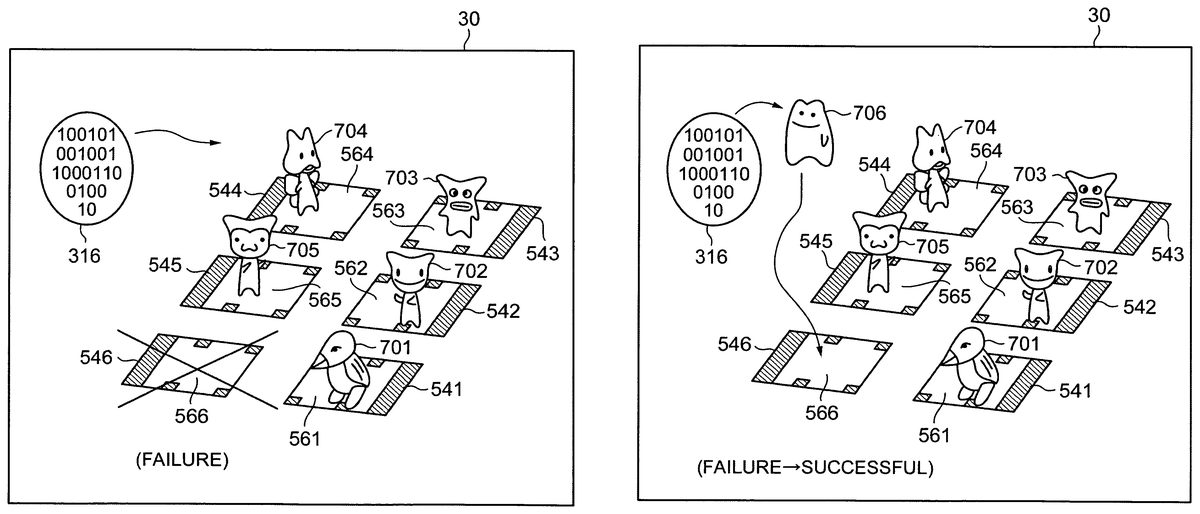

First, the floating image display program 100 will be explained. When the re-recognition of the card fails, as shown in FIG. 41 for example, the program 100 displays, floating for a predetermined period of time, an image in which data strings consisting of arbitrary combinations of “1” and “0” are arranged in the shape of an ellipse (floating image 316). FIG. 41 shows an example in which recognition of the card 546 fails (the card is marked “x” for ease of understanding).

This floating image display program 100 is started by any one of the card recognition program 82, the field display program 88, and the card position forecasting program 90 when detection of identification information fails. As shown in FIG. 42, the program 100 includes a timekeeping function 318, a floating image reading function 320, an action data string reading function 322, a floating posture setup function 324, a 3D image setup function 326, an image drawing function 328, an image display function 330, a repetitive function 332, and a floating image erasing function 334.

The timekeeping function 318 starts counting clock pulses Pc at the point in time when the program 100 is started and, at each predetermined timing (such as one minute, two minutes, three minutes, or the like), outputs a time-up signal St and resets the timekeeping data.

The floating image reading function 320 reads out, from the hard disk 44, the optical disk 32, or the like, image data (floating image data) 336 of the floating image 316 as shown in FIG. 41.

The action data string reading function 322 reads out, from the hard disk 44, the optical disk 32, or the like, an action data string 338 for displaying a scene in which the floating image 316 floats and moves.

The floating posture setup function 324 sets up one posture in the process where the floating image 316 is floating and moving. For example, the vertex data of the floating image data 336 is moved on the camera coordinate, based on the action data of the i-th frame (i=1, 2, 3 . . . ) of the action data string 338 thus read out, and one posture is set up.

The 3D image setup function 326 sets up a 3D image of one posture in the process where the floating image 316 is floating and moving, based on, for example, the camera coordinate of the pickup image detected by the card recognition program 82 or the like.

The image drawing function 328 subjects the 3D image of one posture in the process where the floating image 316 is floating and moving to perspective transformation into an image on the screen coordinate system, and draws the transformed image into the image memory 20 (including hidden surface processing).

The image display function 330 outputs the image drawn in the image memory 20 to the monitor 30 via the I/O port 22 in units of frames, and displays the image on the screen of the monitor 30.

The repetitive function 332 sequentially repeats the processing of the floating posture setup function 324, the 3D image setup function 326, the image drawing function 328, and the image display function 330 until the timekeeping function 318 outputs the time-up signal St. Accordingly, as shown in FIG. 41, a scene where the floating image 316 moves while floating is displayed on the screen of the monitor 30.
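The interplay between the timekeeping function and the repetitive function can be sketched as follows. This is an illustrative model under stated assumptions: one clock pulse is counted per displayed frame, the action data string is cycled when it is shorter than the display period, and the names `Timekeeper` and `float_until_time_up` are hypothetical. None of these details is stated in the description.

```python
class Timekeeper:
    """Counts clock pulses and signals time-up at a predetermined count."""

    def __init__(self, pulses_per_time_up):
        self.limit = pulses_per_time_up
        self.count = 0

    def tick(self):
        """Count one pulse; on time-up, reset the count and return True."""
        self.count += 1
        if self.count >= self.limit:
            self.count = 0  # reset the timekeeping data
            return True     # corresponds to outputting the time-up signal
        return False

def float_until_time_up(action_string, timer):
    """Repeat per-frame posture setup until the timer signals time-up.

    Returns the list of action data applied, one entry per displayed frame.
    """
    shown = []
    i = 0
    while True:
        # Posture for the i-th frame (cycling through the string is an assumption).
        shown.append(action_string[i % len(action_string)])
        i += 1
        if timer.tick():
            break
    return shown
```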

By displaying the floating image 316, the floating image display program 100 can indicate to the user that some cards (card 546 in the example of FIG. 41) currently cannot be recognized, or that there is no card that can be recognized.

Next, the landing display program 102 will be explained. As shown in FIG. 43, after the floating image 316 has been displayed floating and the re-recognition of the card 546 succeeds, this program 102 displays the character image 706 associated with the identification number of the card 546 landing on the identification image 566 of the card 546.

After the floating image 316 has been displayed floating, if the identification information of the card is properly detected, the landing display program 102 is started from the card recognition program 82, the field display program 88, or the card position forecasting program 90. As shown in FIG. 44, the landing display program 102 includes an action data string searching function 340, a landing posture setup function 342, a 3D image setup function 344, an image drawing function 346, an image display function 348, a distance calculating function 350, and a repetitive function 352. The aforementioned card recognition program 82, field display program 88, or card position forecasting program 90 supplies the identification number of the card that has been successfully re-recognized and the camera coordinate of the identification image of that card.

As shown in FIG. 43, for example, the action data string searching function 340 searches the landing action information table 354 for an action data string used to display a scene where the character image 706 jumps out from the position of the floating image 316 and lands on the identification image 566 of the card 546 associated with the character image.

Specifically, the action data string searching function 340 first searches the landing action information table 354, recorded in the hard disk 44, the optical disk 32, or the like, for a record associated with the identification number. Then, from the data file 358, which is recorded in the hard disk 44, the optical disk 32, or the like and in which a large number of action data strings 356 are registered, the action data string searching function 340 reads out, starting at the storage head address registered in the record thus found, the action data string 356 indicating an action in which the character image 706 lands on the identification image 566 of the card 546 associated with the character image.

The landing posture setup function 342 sets up one posture in the process where the character image 706 lands on the identification image 566 of the card 546 associated with the character image. For example, the vertex data of the character image 706 is moved on the camera coordinate, based on the action data of the i-th frame (i=1, 2, 3 . . . ) of the action data string 356 thus read out, and one posture is set up.

The 3D image setup function 344 sets up a 3D image of one posture in the process where the character image 706 moves from the position of the floating image 316 and lands on the identification image 566 of the card 546 associated with the character image, based on, for example, the camera coordinate of the pickup image detected by the card recognition program 82 or the like.

The image drawing function 346 subjects the 3D image of one posture in the process where the character image 706 lands on the identification image 566 of the card 546 associated with the character image to perspective transformation into an image on the screen coordinate system, and draws the transformed image into the image memory 20 (including hidden surface processing).

The image display function 348 outputs the image drawn in the image memory 20 to the monitor 30 via the I/O port 22 in units of frames, and displays the image on the screen of the monitor 30.

The distance calculating function 350 calculates the distance between the camera coordinate of the character image 706 after it has moved for one frame and the camera coordinate of the identification image 566 of the card 546 associated with the identification number.

The repetitive function 352 sequentially repeats the processing of the landing posture setup function 342, the 3D image setup function 344, the image drawing function 346, the image display function 348, and the distance calculating function 350 until the distance calculated by the distance calculating function 350 becomes zero. Accordingly, as shown in FIG. 43, for example, it is possible to display a scene where the character image 706 lands on the identification image 566 of the card 546 associated with the character image.
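A landing sequence driven frame by frame by an action data string, with the distance checked after each frame, might look like the sketch below. The representation of each frame's action data as a displacement (dx, dy, dz) on the camera coordinate, and the name `land_on_card`, are assumptions introduced for illustration; the description does not define the format of the action data.

```python
import math

def land_on_card(start, card_position, action_string):
    """Apply per-frame displacements until the character reaches the card.

    start, card_position : camera coordinates (x, y, z)
    action_string        : list of per-frame displacements (assumed format)
    Returns the list of positions, one per displayed frame.
    """
    position = tuple(start)
    trail = []
    for delta in action_string:
        # Posture setup for this frame: move by the frame's action data.
        position = tuple(p + d for p, d in zip(position, delta))
        trail.append(position)
        # Distance calculation; stop repeating once the distance is zero.
        if math.dist(position, card_position) == 0.0:
            break
    return trail
```

With non-integer displacements, an exact comparison against zero may never succeed because of rounding; a practical variant would presumably compare against a small tolerance instead.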

With this landing display program 102, it is possible to display, for example, the character image 706 jumping out of the floating image 316 and landing on the identification image 566 of the associated card 546. This creates the impression that the character of the card 546 returns from an unknown world to the virtual space of this video game and lands there, adding to the amusement of the video game.

In the above description, two-person fighting and six-person fighting are taken as examples. The present invention can also be readily applied to three-person, four-person, five-person, and seven-or-more-person fighting.

It should be understood that the image display system, the image processing system, and the video game system relating to the present invention are not limited to the disclosed embodiments, but are susceptible of changes and modifications without departing from the scope of the invention.

Claims