U.S. Pat. No. 8,096,863

EMOTION-BASED GAME CHARACTER MANIPULATION

Assignee: Sony Computer Entertainment America LLC

Issue Date: October 21, 2008

Abstract

Methods and systems for emotion-based game character manipulation are provided. Each character is associated with a table of quantified attributes including emotional attributes and non-emotional attributes. An adjustment to an emotional attribute of a game character is determined based on an interaction with another game character. The emotional attribute of the first game character is adjusted, which further results in an adjustment to a non-emotional attribute of the first game character. The behavior of the first game character is then determined based on the adjusted non-emotional attribute.

Description

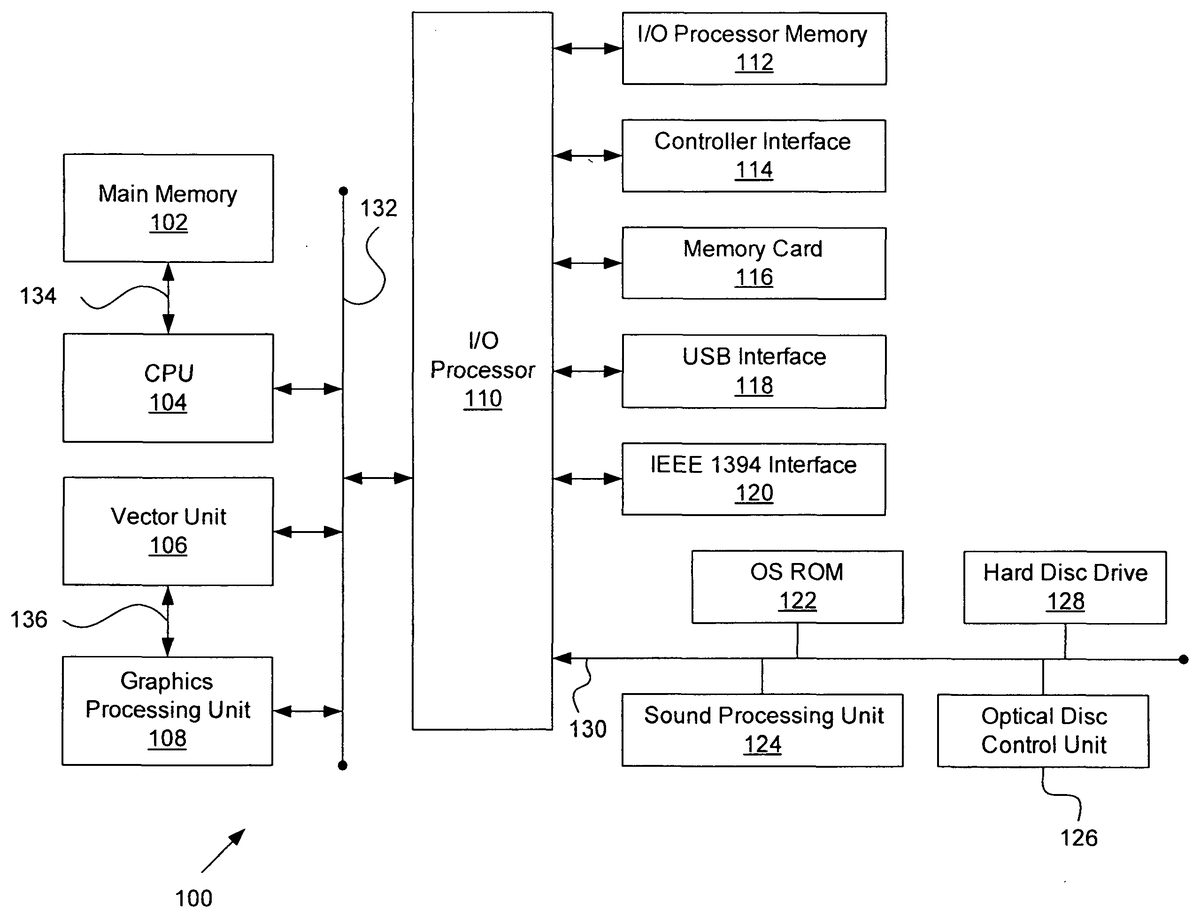

DETAILED DESCRIPTION FIG. 1is a block diagram of an exemplary electronic entertainment system100according to the present invention. The entertainment system100includes a main memory102, a central processing unit (CPU)104, at least one vector unit106, a graphics processing unit108, an input/output (I/O) processor110, an I/O processor memory112, a controller interface114, a memory card116, a Universal Serial Bus (USB) interface118, and an IEEE 1394 interface120, although other bus standards and interfaces may be utilized. The entertainment system100further includes an operating system read-only memory (OS ROM)122, a sound processing unit124, an optical disc control unit126, and a hard disc drive128, which are connected via a bus130to the I/O processor110. Preferably, the entertainment system100is an electronic gaming console. Alternatively, the entertainment system100may be implemented as a general-purpose computer, a set-top box, or a hand-held gaming device. Further, similar entertainment systems may contain more or less operating components. The CPU104, the vector unit106, the graphics processing unit108, and the I/O processor110communicate via a system bus132. Further, the CPU104communicates with the main memory102via a dedicated bus134, while the vector unit106and the graphics processing unit108may communicate through a dedicated bus136. The CPU104executes programs stored in the OS ROM122and the main memory102. The main memory102may contain prestored programs and programs transferred through the I/O Processor110from a CD-ROM, DVD-ROM, or other optical disc (not shown) using the optical disc control unit126. The I/O processor110primarily controls data exchanges between the various devices of the entertainment system100including the CPU104, the vector unit106, the graphics processing unit108, and the controller interface114. 
The graphics processing unit108executes graphics instructions received from the CPU104and the vector unit106to produce images for display on a display device (not shown). For example, the vector unit106may transform objects from three-dimensional coordinates to two-dimensional coordinates, and send the two-dimensional coordinates to the graphics processing unit108. Furthermore, the sound processing unit124executes instructions ...

DETAILED DESCRIPTION

FIG. 1 is a block diagram of an exemplary electronic entertainment system 100 according to the present invention. The entertainment system 100 includes a main memory 102, a central processing unit (CPU) 104, at least one vector unit 106, a graphics processing unit 108, an input/output (I/O) processor 110, an I/O processor memory 112, a controller interface 114, a memory card 116, a Universal Serial Bus (USB) interface 118, and an IEEE 1394 interface 120, although other bus standards and interfaces may be utilized. The entertainment system 100 further includes an operating system read-only memory (OS ROM) 122, a sound processing unit 124, an optical disc control unit 126, and a hard disc drive 128, which are connected via a bus 130 to the I/O processor 110. Preferably, the entertainment system 100 is an electronic gaming console. Alternatively, the entertainment system 100 may be implemented as a general-purpose computer, a set-top box, or a hand-held gaming device. Further, similar entertainment systems may contain more or fewer operating components.

The CPU 104, the vector unit 106, the graphics processing unit 108, and the I/O processor 110 communicate via a system bus 132. Further, the CPU 104 communicates with the main memory 102 via a dedicated bus 134, while the vector unit 106 and the graphics processing unit 108 may communicate through a dedicated bus 136. The CPU 104 executes programs stored in the OS ROM 122 and the main memory 102. The main memory 102 may contain prestored programs and programs transferred through the I/O processor 110 from a CD-ROM, DVD-ROM, or other optical disc (not shown) using the optical disc control unit 126. The I/O processor 110 primarily controls data exchanges between the various devices of the entertainment system 100, including the CPU 104, the vector unit 106, the graphics processing unit 108, and the controller interface 114.

The graphics processing unit 108 executes graphics instructions received from the CPU 104 and the vector unit 106 to produce images for display on a display device (not shown). For example, the vector unit 106 may transform objects from three-dimensional coordinates to two-dimensional coordinates and send the two-dimensional coordinates to the graphics processing unit 108. Furthermore, the sound processing unit 124 executes instructions to produce sound signals that are output to an audio device such as speakers (not shown).

A user of the entertainment system 100 provides instructions via the controller interface 114 to the CPU 104. For example, the user may instruct the CPU 104 to store certain game information on the memory card 116 or instruct a character in a game to perform some specified action. Other devices may be connected to the entertainment system 100 via the USB interface 118 and the IEEE 1394 interface 120.

FIG. 2 is a block diagram of one embodiment of the main memory 102 of FIG. 1 according to the present invention. The main memory 102 is shown containing a game module 200, which is loaded into the main memory 102 from an optical disc in the optical disc control unit 126 (FIG. 1). The game module 200 contains instructions, executable by the CPU 104, the vector unit 106, and the sound processing unit 124 of FIG. 1, that allow a user of the entertainment system 100 (FIG. 1) to play a game. In the exemplary embodiment of FIG. 2, the game module 200 includes data storage 202, an action generator 204, a characteristic generator 206, and a data table adjuster 208.

In one embodiment, the action generator 204, the characteristic generator 206, and the data table adjuster 208 are software modules executable by the CPU 104. For example:

- the action generator 204 is executable by the CPU 104 to produce game play, including character motion and character response;
- the characteristic generator 206 is executable by the CPU 104 to generate a character's expressions as displayed on a monitor (not shown); and
- the data table adjuster 208 is executable by the CPU 104 to update data in data storage 202 during game play.

In addition, the CPU 104 accesses data in data storage 202 as instructed by the action generator 204, the characteristic generator 206, and the data table adjuster 208.

For the purposes of this exemplary embodiment, the game module 200 is a tribal simulation game in which a player creates and trains tribes of characters. A tribe of characters is preferably a group (or team) of characters associated with a given game user. Preferably, the tribal simulation game includes a plurality of character species, and each team of characters may include any combination of characters from any of the character species.

A character reacts to other characters and game situations based upon the character's genetic makeup as expressed by gene attributes. Typically, each character's behavior depends upon one or more gene attributes. There are three kinds of gene attributes:

- static attributes, which typically remain constant throughout a character's life;
- dynamic attributes, which may change during game play in response to character-character, character-group, and character-environment interactions; and
- meta attributes, which are functions of the static and dynamic attributes.

A character's dynamic and meta attributes may be modified by emotional attributes, as quantified by hate/love (H/L) values. A character's H/L values are directed toward other species, teams, and characters. A character's static attributes, dynamic attributes, meta attributes, and H/L values are described further below in conjunction with FIGS. 3-7.

FIG. 3A is a block diagram of an exemplary embodiment of the data storage 202 of FIG. 2 according to the present invention. The data storage 202 includes a character A database 302a, a character B database 302b, and a character C database 302c. Although the FIG. 3A embodiment of data storage 202 shows three character databases 302a, 302b, and 302c, the scope of the present invention includes any number of character databases 302.

FIG. 3B is a block diagram of an exemplary embodiment of the character A database 302a of FIG. 3A. The character A database 302a includes a static parameter table 308, a dynamic parameter table 310, a meta parameter table 312, and emotion tables 314. Character A's attributes are stored as follows:

- static attributes in the static parameter table 308;
- dynamic attributes (preferably not including H/L values) in the dynamic parameter table 310;
- meta attributes in the meta parameter table 312; and
- H/L values in the emotion tables 314.

Attributes are also referred to as parameters. Although the static attributes stored in the static parameter table 308 typically remain constant throughout character A's life, in an alternate embodiment of the invention the static attributes may be changed through character training. Referring back to FIG. 3A, the character B database 302b and the character C database 302c are similar to the character A database 302a.
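The following minimal Python sketch shows one way to lay out a character database and data storage 202 in memory. The class name `CharacterDatabase`, the use of plain dictionaries, and the table keys `"individual"`, `"species"`, and `"team"` are illustrative assumptions. The text does not specify these structures.

```python
from dataclasses import dataclass, field


@dataclass
class CharacterDatabase:
    """Hypothetical in-memory layout of one character database (e.g. 302a)."""
    static: dict[str, float] = field(default_factory=dict)   # static parameter table 308
    dynamic: dict[str, float] = field(default_factory=dict)  # dynamic parameter table 310 (no H/L values)
    meta: dict[str, float] = field(default_factory=dict)     # meta parameter table 312
    # emotion tables 314: separate H/L tables for individuals, species, and teams
    emotions: dict[str, dict] = field(
        default_factory=lambda: {"individual": {}, "species": {}, "team": {}}
    )


# data storage 202 holds one database per character
data_storage = {name: CharacterDatabase() for name in ("A", "B", "C")}
data_storage["A"].static["strength"] = 7              # illustrative value only
data_storage["A"].emotions["species"]["Nids"] = 100   # value taken from FIG. 7
```

Each character gets its own table objects through `default_factory`, so editing character A's emotions does not affect character B.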

FIG. 4 is an illustration of an exemplary embodiment of the static parameter table 308 of FIG. 3B. The static parameter table 308 includes a plurality of static parameters, such as, but not limited to, the following:

- a strength parameter 402, which corresponds to a character's strength;
- a speed parameter 404, which corresponds to how fast a character walks and runs across terrain;
- a sight parameter 406, which corresponds to a character's viewing distance;
- a hearing parameter 408, which corresponds to a character's hearing distance;
- a maximum hit point parameter 410, which is preferably a health parameter threshold value, discussed further below in conjunction with FIG. 5;
- a hunger point parameter 412, which is a reference value against which a character's energy is measured to compute the character's hunger parameter, as described further below in conjunction with FIG. 6;
- a healing urge parameter 414, which corresponds to a character's desire to heal another character;
- a self-healing rate parameter 416, which corresponds to the rate at which a character heals itself; and
- an aggressive base parameter 418, which is a reference value representing a character's base aggression level, described further below in conjunction with FIG. 6.

The scope of the invention may include other static parameters as well. Not all of these parameters are required, and other parameters may be contemplated for use in the present invention.

FIG. 5 is an illustration of an exemplary embodiment of the dynamic parameter table 310 of FIG. 3B. The dynamic parameter table 310 includes a plurality of dynamic parameters, such as an energy parameter 502, a health parameter 504, an irritation parameter 506, and a game experience parameter 508. However, embodiments of the present invention need not include all of the above-listed parameters and may include other dynamic parameters. These dynamic parameters change during game play. For example, the character's energy parameter 502 is a function of the character's consumption of food and the rate at which the character uses energy. When the character eats, the character's energy parameter 502 increases. However, the character continuously uses energy as defined by the character's metabolic rate. The metabolic rate is a meta parameter dependent upon several static parameters and is discussed further below in conjunction with FIG. 6.

In the present embodiment, the health parameter 504 is less than or equal to the maximum hit point parameter 410 (FIG. 4). It is a function of the character's energy parameter 502, the character's self-healing rate parameter 416 (FIG. 4), and the number of hits the character has taken. For example, a character is assigned a health parameter 504 equal to the maximum hit point parameter 410 upon game initialization. Each time the character is hit by another character via a physical blow or weapons fire, the character's health parameter 504 decreases. In addition, whenever a character's energy parameter 502 falls below a predefined threshold value, the character's health parameter 504 decreases. Furthermore, the character's health parameter 504 increases at the rate given by the character's self-healing rate parameter 416. Thus, although static and dynamic parameters are stored in separate tables, these parameters are closely related. For example, the health parameter 504 is based in part on the self-healing rate parameter 416, which is a static parameter.
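The energy and health rules above can be written as one time-step update each. This is a minimal Python sketch, and most of its details are assumptions. The function names, the fixed `damage_per_hit`, the `low_energy_threshold` (which stands in for the unspecified predefined threshold), and the linear `starvation_loss` are all hypothetical. Only the direction of each change and the clamp to the maximum hit point parameter come from the text.

```python
def update_energy(energy: float, food_eaten: float,
                  metabolic_rate: float, dt: float) -> float:
    """Energy 502 rises when the character eats and drains at its metabolic rate."""
    return max(0.0, energy + food_eaten - metabolic_rate * dt)


def update_health(health: float, max_hit_points: float, energy: float,
                  self_healing_rate: float, hits: int, dt: float,
                  damage_per_hit: float = 10.0,          # hypothetical constant
                  low_energy_threshold: float = 20.0,    # hypothetical "predefined threshold"
                  starvation_loss: float = 5.0) -> float:  # hypothetical constant
    """One time step of health 504, clamped to [0, maximum hit points 410]."""
    health -= hits * damage_per_hit                 # each physical blow or shot
    if energy < low_energy_threshold:               # low energy erodes health
        health -= starvation_loss * dt
    health += self_healing_rate * dt                # self-healing rate 416
    return max(0.0, min(health, max_hit_points))
```

Clamping after healing keeps the health parameter at or below the maximum hit point parameter, as the text requires.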

Preferably, the character's irritation parameter 506 increases if the character is exposed to irritating stimuli, such as the presence of enemies or weapons fire within the character's range of sight, as specified by the sight parameter 406 (FIG. 4). The irritation parameter 506 decreases over time at a predefined rate.
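A possible per-tick irritation update is sketched below under several assumptions. Stimuli are given as 2-D positions, and a stimulus counts only when its Euclidean distance is within the sight range. Each visible stimulus adds a fixed amount, and decay is linear. `update_irritation`, `gain_per_stimulus`, and `decay_rate` are hypothetical names and values.

```python
import math


def update_irritation(irritation: float, observer_pos: tuple[float, float],
                      sight_range: float,
                      stimuli_positions: list[tuple[float, float]], dt: float,
                      gain_per_stimulus: float = 1.0,   # hypothetical
                      decay_rate: float = 0.5) -> float:  # hypothetical "predefined rate"
    """Irritation 506 grows with stimuli in sight (sight 406) and decays over time."""
    visible = sum(1 for p in stimuli_positions
                  if math.dist(observer_pos, p) <= sight_range)
    irritation += gain_per_stimulus * visible
    irritation -= decay_rate * dt
    return max(0.0, irritation)   # irritation never goes negative in this sketch
```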

Finally, the character's game experience parameter 508 quantifies a character's game experiences, particularly in association with character participation in tribal games and fighting. For example, an experienced character has accumulated wisdom, and is less likely to be surprised by game situations and more adept at making game decisions.

FIG. 6 is an illustration of an exemplary embodiment of the meta parameter table 312 of FIG. 3B. The meta parameter table 312 includes a plurality of meta parameters, such as, but not necessarily completely inclusive of or limited to, a hunger parameter 602, a metabolic rate parameter 604, an aggression parameter 606, and a fight/flight parameter 608. The meta parameters are typically changeable, and are based upon the static and dynamic parameters.

For example, a character's desire to eat is dependent upon the hunger parameter 602. In one embodiment of the invention, the hunger parameter 602 is a signed value defined by the energy parameter 502 (FIG. 5) less the hunger point parameter 412 (FIG. 4). If the character's hunger parameter 602 is greater than zero, then the character is not hungry. However, if the character's hunger parameter 602 is less than zero, then the character is hungry. As the negative hunger parameter 602 decreases (i.e., becomes more negative), the character's desire to eat increases. This desire to eat may then be balanced with other desires, such as a desire to attack an enemy or to search for a weapons cache. The weighting of these parameters may determine a character's behaviors and actions.
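The hunger definition above translates directly into code. In this minimal Python sketch, `hunger` follows the text. `desire_to_eat` is a hypothetical way to turn the signed value into a non-negative urge. Treating a hunger value of exactly zero as "not hungry" is also an assumption, because the text does not cover that case.

```python
def hunger(energy: float, hunger_point: float) -> float:
    """Hunger 602 = energy 502 minus hunger point 412 (signed)."""
    return energy - hunger_point


def is_hungry(energy: float, hunger_point: float) -> bool:
    # Negative hunger means hungry; exactly zero is treated as "not hungry" here.
    return hunger(energy, hunger_point) < 0


def desire_to_eat(energy: float, hunger_point: float) -> float:
    """Hypothetical urge that grows as the hunger parameter becomes more negative."""
    return max(0.0, -hunger(energy, hunger_point))
```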

Typically, the metabolic rate parameter 604 is directly proportional to the character's speed parameter 404 (FIG. 4), strength parameter 402 (FIG. 4), and maximum hit point parameter 410 (FIG. 4), and inversely proportional to the character's hunger point parameter 412 (FIG. 4) and healing urge parameter 414 (FIG. 4). For example, if the character's healing urge parameter 414 is large, the character is likely a calm, non-excitable individual, so the character's metabolic rate parameter 604 would be small. Conversely, if the character's healing urge parameter 414 is small, the character is likely a high-strung, excitable individual, so the character's metabolic rate parameter 604 would be large.
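The text gives only proportionalities for the metabolic rate parameter 604, not a formula. One simple form that satisfies them is a ratio of products. The Python sketch below uses that form; the function name and the scale constant `k` are assumptions.

```python
def metabolic_rate(speed: float, strength: float, max_hit_points: float,
                   hunger_point: float, healing_urge: float, k: float = 1.0) -> float:
    """Hypothetical formula for metabolic rate 604 (the source gives none).

    Directly proportional to speed 404, strength 402, and max hit points 410;
    inversely proportional to hunger point 412 and healing urge 414.
    """
    if hunger_point <= 0 or healing_urge <= 0:
        raise ValueError("hunger_point and healing_urge must be positive")
    return k * speed * strength * max_hit_points / (hunger_point * healing_urge)
```

With this form, doubling the healing urge halves the metabolic rate, which matches the calm-versus-excitable example in the text.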

Finally, the aggression parameter 606 is defined as the aggressive base parameter 418 (FIG. 4) plus the irritation parameter 506 (FIG. 5). As the aggression parameter 606 increases, the character becomes more aggressive and is more likely to engage in fights.

A character uses the fight/flight parameter 608 to decide whether to fight or flee when faced with an enemy or another dangerous situation. The fight/flight parameter 608 is preferably based upon the hunger parameter 602, the aggression parameter 606, the game experience parameter 508 (FIG. 5), and the energy parameter 502 (FIG. 5). In one embodiment of the invention, a large value for the fight/flight parameter 608 corresponds to a character's desire to fight, whereas a small value corresponds to a character's desire to flee. For example, as the character's hunger or aggression increases, as measured by the character's hunger parameter 602 and aggression parameter 606, respectively, the character is more likely to engage in fights.
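The following Python sketch combines the stated aggression formula with a possible fight/flight computation. `aggression` follows the text exactly. The text does not say how the four inputs are combined into the fight/flight parameter, so the weighted sum, its weights, and the positive sign on experience and energy are hypothetical, as is the `threshold` in `decide`. Hunger enters negated because a more negative hunger parameter means a hungrier character.

```python
def aggression(aggressive_base: float, irritation: float) -> float:
    """Aggression 606 = aggressive base 418 + irritation 506 (as stated in the text)."""
    return aggressive_base + irritation


def fight_or_flight(hunger_value: float, aggression_value: float,
                    experience: float, energy: float,
                    weights: tuple[float, float, float, float] = (1.0, 1.0, 0.5, 0.5)) -> float:
    """Hypothetical weighted sum for fight/flight 608; larger values favor fighting."""
    w_hunger, w_aggr, w_exp, w_energy = weights
    # A more negative hunger parameter means a hungrier character, so negate it.
    return (w_hunger * -hunger_value + w_aggr * aggression_value
            + w_exp * experience + w_energy * energy)


def decide(ff_value: float, threshold: float = 50.0) -> str:
    """Map a fight/flight value to an action using a hypothetical threshold."""
    return "fight" if ff_value >= threshold else "flee"
```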

FIG. 7 is an illustration of one embodiment of the emotion tables 314 of FIG. 3B, according to the present invention. The emotion tables 314 include an individuals hate/love (H/L) table 702, a species H/L table 704, and a team H/L table 706. The individuals H/L table 702 includes one or more character identification (ID) numbers and one or more character H/L values, wherein each character ID number is associated with a character H/L value. For example, character A has a −900 character H/L value corresponding to the character identified by character ID number 192993293. Thus, character A strongly hates the individual having character ID number 192993293. Conversely, character A has a 100 character H/L value for character ID number 339399928. This positive H/L value corresponds to a general liking of the individual having ID number 339399928. The more negative or positive the H/L value is, the more the particular individual is hated or loved, respectively. In a further embodiment, the individuals H/L table 702 may also include individual character names corresponding to the character ID numbers.

The species H/L table 704 includes one or more species names and one or more species H/L values. Each species name is associated with a species H/L value, which represents character A's relationship with that species. As with the individuals H/L table 702, the more negative or positive the H/L value, the more the particular species is hated or loved, respectively. For example, character A has a 100 species H/L value corresponding to the Nids species, which implies a general liking of the Nids species. Conversely, character A has a −500 species H/L value corresponding to the Antenids species. Therefore, character A has a strong dislike (i.e., hate) for the Antenids species.

Similarly, the team H/L table 706 includes one or more team ID numbers, one or more team H/L values, and one or more team names. Each team ID number is associated with a team H/L value and a team name. For example, character A has a 1000 team H/L value corresponding to the Frosties team, represented by ID number 139000. Because this H/L value is so high, character A has a deep love for the Frosties team. However, character A has a −500 team H/L value corresponding to the Slashers team, represented by ID number 939992, which represents hatred for this team.

In one embodiment of the invention, the character, species, and team H/L values range from −1000 to 1000. An H/L value of 1000 represents unconditional love directed towards the character, species, or team, while an H/L value of −1000 represents extreme hatred directed towards the character, species, or team. An H/L value of zero represents a neutral feeling. In alternate embodiments, the H/L value ranges may be larger or smaller, and may have other maximum and minimum values.
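As an illustration, character A's emotion tables from FIG. 7 can be held as dictionaries, with every update clamped to the −1000 to 1000 range described above. In this minimal Python sketch, the dictionary layout and the helper names `clamp_hl`, `adjust_hl`, and `feeling` are hypothetical. Treating a target with no entry as neutral (0) is also an assumption.

```python
H_L_MIN, H_L_MAX = -1000, 1000   # range from the embodiment above

# Character A's emotion tables 314, using the example values of FIG. 7.
emotion_tables = {
    "individual": {192993293: -900, 339399928: 100},
    "species": {"Nids": 100, "Antenids": -500},
    "team": {139000: 1000, 939992: -500},
}
team_names = {139000: "Frosties", 939992: "Slashers"}


def clamp_hl(value: int) -> int:
    """Keep an H/L value inside the allowed range."""
    return max(H_L_MIN, min(H_L_MAX, value))


def adjust_hl(tables: dict, kind: str, target, delta: int) -> int:
    """Add delta to one H/L value; a target with no entry starts at neutral (0)."""
    tables[kind][target] = clamp_hl(tables[kind].get(target, 0) + delta)
    return tables[kind][target]


def feeling(value: int) -> str:
    """Coarse reading of an H/L value's sign."""
    return "love" if value > 0 else "hate" if value < 0 else "neutral"
```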

According to one embodiment of the present invention, the data table adjuster 208 (FIG. 2) initializes all character and team H/L values to zero upon initiation of a new game. Furthermore, the data table adjuster 208 initializes all species H/L values either to zero or to non-zero predefined values dependent upon game-defined species compatibility. In an alternate embodiment, the data table adjuster 208 initializes all species, character, and team H/L values to zero upon initiation of a new game. In a further embodiment, the data table adjuster 208 initializes some or all character, species, and team H/L values to non-zero predefined values dependent upon game-defined character, species, and team compatibility. Upon game completion, a user may save all the H/L values to the memory card 116 (FIG. 1) or the hard disc drive 128 (FIG. 1) for future game play. If the user has instructed the game module 200 (FIG. 2) to save the H/L values, the data table adjuster 208 may use the saved H/L values to initialize all game H/L values when game play is continued.
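The initialization and save/restore behavior above could look like the following minimal Python sketch. It follows the first embodiment: character and team values start at zero, and species values come from game-defined defaults. JSON serialization is an assumed stand-in for writing to the memory card or hard disc drive, and the function names are hypothetical.

```python
import json


def init_hl_tables(characters, teams, species_defaults, saved=None):
    """Step 802 sketch: build one character's H/L tables, or restore saved ones."""
    if saved is not None:
        # Continuing a saved game: reuse the stored H/L values.
        return json.loads(saved)
    return {
        "individual": {c: 0 for c in characters},  # neutral before any interaction
        "team": {t: 0 for t in teams},              # neutral before any interaction
        "species": dict(species_defaults),          # game-defined compatibility
    }


def save_hl_tables(tables) -> str:
    """Serialize H/L values so they could be written to persistent storage."""
    return json.dumps(tables)
```

JSON object keys are always strings, so integer character or team IDs would come back as strings after a round trip. The sketch therefore uses string keys.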

FIG. 8 is an exemplary flowchart 800 of method steps for dynamic behavioral modification based upon game interactions, according to one embodiment of the present invention. In step 802, H/L values are initialized. Initially, a user (not shown) instructs the entertainment system 100 (FIG. 1) to execute the game module 200 (FIG. 2) via user commands and the controller interface 114 (FIG. 1). The CPU 104 (FIG. 1) receives the user commands and executes the data table adjuster 208 (FIG. 2). The data table adjuster 208 accesses the character, species, and team H/L values and stores them in the emotion tables 314 (FIG. 3B). The species H/L values are set to predefined values dependent either upon game-defined species compatibility or upon the results of a previous playing of the game. In an initial game play, characters and teams have not yet interacted. The data table adjuster 208 therefore preferably initializes all character and team H/L values to zero, where an H/L value of zero represents a neutral emotion.

Next, in step 804, the CPU 104 executes the action generator 204 (FIG. 2) and the characteristic generator 206 (FIG. 2) to generate game play and game interactions. Game interactions typically include information exchange between characters, as well as communication, observation, detection of sound, direct physical contact, and indirect physical contact. Examples in various embodiments of the invention include the following:

- Character A and character B may interact and exchange information via a conversation.
- Character A may receive information via observations. For instance, character A may observe character B engaged in direct physical contact with character C via a fist fight, or observe character B engage character C in indirect physical contact via an exchange of weapons fire.
- Character A may observe character B interact with an “inanimate” object. For example, character B moves a rock and discovers a weapons cache.
- Character A may hear a communication between character B and character C.
- Character A may engage in direct physical contact with character B.
- Character A may engage in indirect physical contact with character B. For example, character A may discharge a weapon aimed at character B, or receive fire from character B's weapon.

The game interactions described above are exemplary; the scope of the present invention covers all types of interactions.

In step 806, the data table adjuster 208 modifies the character, species, and team H/L values based upon the game interaction. In a first example, referring to FIG. 7, character A has a −200 character H/L value corresponding to character B and a 750 character H/L value corresponding to character C. If character B communicates to character A that character B has healed character C, then the data table adjuster 208 adjusts character A's and character B's character H/L values. For example, after the communication with character B, character A's character H/L value corresponding to character B may increase to −75. In other words, character A hates character B less than before the communication, because character B has healed a character strongly loved by character A, namely character C. Further, character A and character B may tell other characters of the group that character B has healed character C. Consequently, those characters' H/L values corresponding to character B are adjusted to reflect their new feelings towards character B. Through this kind of group interaction, the average behavior of the group is modified.

In a second example, character A initially has an 800 character H/L value corresponding to character B and a −50 character H/L value corresponding to character C. Character A then sees character C hit character B, and character A's character H/L values are adjusted accordingly. In this example, character A's character H/L value corresponding to character B increases to 850 because of feelings of sympathy towards character B. Character A's character H/L value corresponding to character C may decrease to −200 due to an increased hatred for character C. If character C then attacks character A, character A develops more hatred towards character C, and character A's character H/L value corresponding to character C may further decrease to −275. However, if at some later time in the game character C communicates useful information on the operation of a weapon to character A, then character A's character H/L value corresponding to character C may increase to −150.
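The two examples above can be replayed with a lookup table of per-event adjustments. The event names and the fixed deltas in this Python sketch are hypothetical; they were chosen only so that the numbers match the worked examples. The text does not say whether adjustments are fixed or depend on existing feelings.

```python
# Hypothetical per-event deltas, chosen only so that the two worked examples
# in the text come out with the same numbers.
EVENT_DELTAS = {
    "heard_loved_one_healed_by": +125,   # first example: B healed C, whom A loves
    "victim_of_witnessed_hit": +50,      # sympathy for the character who was hit
    "attacker_in_witnessed_hit": -150,   # hatred for the character who hit
    "attacked_by": -75,
    "useful_info_from": +125,
}


def apply_event(hl_table: dict, event: str, target: str) -> int:
    """Adjust one character H/L value for an interaction, clamped to [-1000, 1000]."""
    updated = hl_table.get(target, 0) + EVENT_DELTAS[event]
    hl_table[target] = max(-1000, min(1000, updated))
    return hl_table[target]
```

For the second example, starting from `{"B": 800, "C": -50}`, applying the witnessed hit, the attack, and the weapon tip in order gives 850 for B and then −200, −275, and −150 for C.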

In one embodiment of the invention, characters' H/L values may be adjusted based upon an averaging procedure. For example, if a group of characters interact, then each character's H/L value is replaced by the average of the group's H/L values. More specifically, suppose three characters have an interaction concerning the Nids species (FIG. 7). Character A has a 100 Nids species H/L value (FIG. 7), character B has a −1000 Nids species H/L value, and character C has a 300 Nids species H/L value. After the interaction, each of character A, character B, and character C has a −200 Nids species H/L value, since (100 − 1000 + 300)/3 = −200. The adjustment of characters' H/L values based upon the averaging procedure is an exemplary embodiment of the invention and is not meant to restrict the invention. In alternate embodiments, characters' H/L values may be adjusted based on other weighting methods or mathematical algorithms.
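The averaging procedure can be sketched in a few lines of Python. The function name is hypothetical, and rounding the mean to an integer is an assumption made so that the values stay integral like those in FIG. 7.

```python
def average_hl(values: dict[str, int]) -> dict[str, int]:
    """After a group interaction, every participant takes the group mean H/L value."""
    mean = round(sum(values.values()) / len(values))  # integer rounding is assumed
    return {name: mean for name in values}
```

Called with `{"A": 100, "B": -1000, "C": 300}`, it returns −200 for every character, which matches the Nids example.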

In step 808, each character's non-zero character, species, and team H/L values modify the character's subsequent behavior. For example, suppose character A's energy parameter 502 (FIG. 5) is less than character A's hunger point parameter 412 (FIG. 4), so that character A is hungry. Character A then sees an enemy character, for example, character B, and must choose between attacking character B and searching for food. Referring back to the first example of step 806, character A's character H/L value corresponding to character B has previously been modified from −200 to −75, so character A chooses to search for food instead of attacking character B. However, had that value not been modified from −200 to −75 in step 806, character A's hatred for character B would have outweighed its desire to search for food, and character A would have attacked character B. Thus, modifications to character A's character, species, and team H/L values via game interactions modify character A's subsequent behavior and game decisions. Similarly, as game interactions modify the H/L values of a group of characters (step 806), the subsequent average behavior of the group is modified.
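The following Python sketch models the attack-or-eat decision as a comparison of two urges. The urge formulas and `hunger_weight` are hypothetical. They were picked so that, for a character with energy 40 and hunger point 50, an H/L value of −200 leads to an attack and −75 leads to searching for food, as in the example. Only hatred (a negative H/L value) drives an attack here.

```python
def choose_action(energy: float, hunger_point: float, hl_toward_enemy: int,
                  hunger_weight: float = 10.0) -> str:   # hypothetical weight
    """Pick between attacking a seen enemy and searching for food."""
    hunger_value = energy - hunger_point                  # hunger parameter 602
    food_urge = max(0.0, -hunger_value) * hunger_weight   # grows as hunger deepens
    attack_urge = max(0, -hl_toward_enemy)                # grows with hatred only
    return "attack" if attack_urge > food_urge else "search_for_food"
```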

In step 810, the CPU 104 determines whether the game user(s) have completed the game. If the CPU 104 determines that the game is complete, the method ends. However, if in step 810 the CPU 104 determines that the game is not complete, the method continues at step 804.
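The loop of flowchart 800 can be sketched as follows. In this hypothetical Python version, a list of `(observer, target, delta)` tuples stands in for the interactions generated in step 804, and a caller-supplied `choose` function stands in for step 808. The game counts as complete (step 810) when the list runs out. The function name and data shapes are assumptions.

```python
from typing import Callable


def run_game(interactions: list[tuple[str, str, int]],
             choose: Callable[[int], str], limit: int = 1000):
    """Hypothetical sketch of flowchart 800 for pairwise character H/L values."""
    hl: dict[tuple[str, str], int] = {}   # step 802: unseen pairs are neutral (0)
    behaviors = []
    for observer, target, delta in interactions:      # step 804: next interaction
        key = (observer, target)
        new_value = hl.get(key, 0) + delta
        hl[key] = max(-limit, min(limit, new_value))   # step 806: adjust and clamp
        behaviors.append(choose(hl[key]))              # step 808: pick behavior
    # step 810: the game is treated as complete once the interactions run out
    return hl, behaviors
```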

The invention has been described above with reference to specific embodiments. It will, however, be evident that various modifications and changes may be made thereto without departing from the broader spirit and scope of the invention as set forth in the appended claims. The foregoing description and drawings are, accordingly, to be regarded in an illustrative rather than a restrictive sense.

Claims

- A method for emotion-based game character manipulation, the method comprising: executing instructions stored in memory, wherein execution of the instructions by a processor: maintains a table of quantified attributes for a first game character, the attributes including emotional attributes and non-emotional attributes; determines an adjustment to an emotional attribute of the first game character based on an interaction between the first game character and a second game character; adjusts the emotional attribute of the first game character as determined, wherein adjustment of the emotional attribute results in an adjustment to a non-emotional attribute of the first game character; and generates a behavior of the first game character based on the adjusted non-emotional attribute.

- The method of claim 1, wherein the emotional attributes of the first game character are associated with a team of game characters.

- The method of claim 1, wherein the emotional attributes of the first game character are associated with a species of game characters.

- The method of claim 3, wherein the emotional attributes of the first game character associated with the species are further based on a species of the first game character.

- The method of claim 1, further comprising the execution of instructions by a processor to receive user input concerning the interaction between the first game character and the second game character and initiating the interaction based on the received user input.

- The method of claim 1, further comprising the execution of instructions by a processor to re-adjust the emotional attribute of the first game character back to an original state after a period of time.

- The method of claim 1, wherein determining an adjustment to an emotional attribute of the first game character is further based on the second game character being within range-of-sight of the first game character.

- The method of claim 1, wherein determining an adjustment to the emotional attribute of the first game character is further based on an emotional attribute of the second game character.

- The method of claim 7, wherein the emotional attribute of the first game character is adjusted to be closer to the emotional attribute of the second game character.

- The method of claim 1, wherein generating the behavior of the first game character includes determining that the first game character chooses an action from at least two possible actions.

- The method of claim 1, wherein generating the behavior of the first game character includes determining that the first game character mimic an action performed by the second character.

- A system for emotion-based game character manipulation, the system comprising: a memory that stores a data table concerning quantified attributes for a first game character, the attributes including emotional attributes and non-emotional attributes; a processor, wherein execution of instructions by the processor determines an adjustment to a stored emotional attribute of the first game character based on an interaction between the first game character and a second game character; a data table adjuster stored in memory and executable to adjust the stored emotional attribute of the first game character as determined by execution of the instructions by the processor, wherein adjustment of the stored emotional attribute results in an adjustment to a non-emotional attribute of the first game character; and an action generator stored in memory and executable to generate a behavior of the first game character based on the adjusted non-emotional attribute.

- The system of claim 12, wherein the memory further stores data concerning emotional attributes associated with a team of game characters.

- The system of claim 12, wherein the memory further stores data concerning emotional attributes associated with a species of game characters.

- The system of claim 12, wherein the action generator is further executable to initiate the interaction between the first game character and the second game character based on received user input.

- The system of claim 12, wherein execution of instructions by the processor further determines the adjustment to the emotional attribute of the first game character based on the second game character being within range-of-sight of the first game character.

- The system of claim 12, wherein execution of instructions by the processor further determines the adjustment to the emotional attribute of the first game character based on an emotional attribute of the second game character.

- The system of claim 12, wherein the action generator is further executable to direct the first game character to choose an action from at least two possible actions.

- The system of claim 12, wherein the action generator is further executable to direct the first game character to mimic an action performed by the second character.

- A non-transitory computer-readable storage medium having embodied thereon a program, the program being executable by a processor to perform a method for emotion-based game character manipulation, the method comprising: maintaining a table of quantified attributes for a first game character, the attributes including emotional attributes and non-emotional attributes; determining an adjustment to an emotional attribute of the first game character based on an interaction between the first game character and a second game character; adjusting the emotional attribute of the first game character as determined, wherein adjustment of the emotional attribute results in an adjustment to a non-emotional attribute of the first game character; and generating a behavior of the first game character based on the adjusted non-emotional attribute.
