U.S. Pat. No. 7,785,197

VOICE-TO-TEXT CHAT CONVERSION FOR REMOTE VIDEO GAME PLAY

Assignee: Nintendo Co., Ltd.

Issue Date: July 29, 2004

Abstract

A multi-player networked video game playing system including, for example, video game consoles analyzes speech to vary the font size and/or color of associated text displayed to other users. If the amplitude of the voice is high, the text displayed to other users is displayed in a larger than normal font. If the voice sounds stressed or aggressive words are used, the text displayed to other users is displayed using a special font, for example in a red color. Other analysis may be performed on the speech in context to vary the font size, color, font type and/or other display attributes.

Description

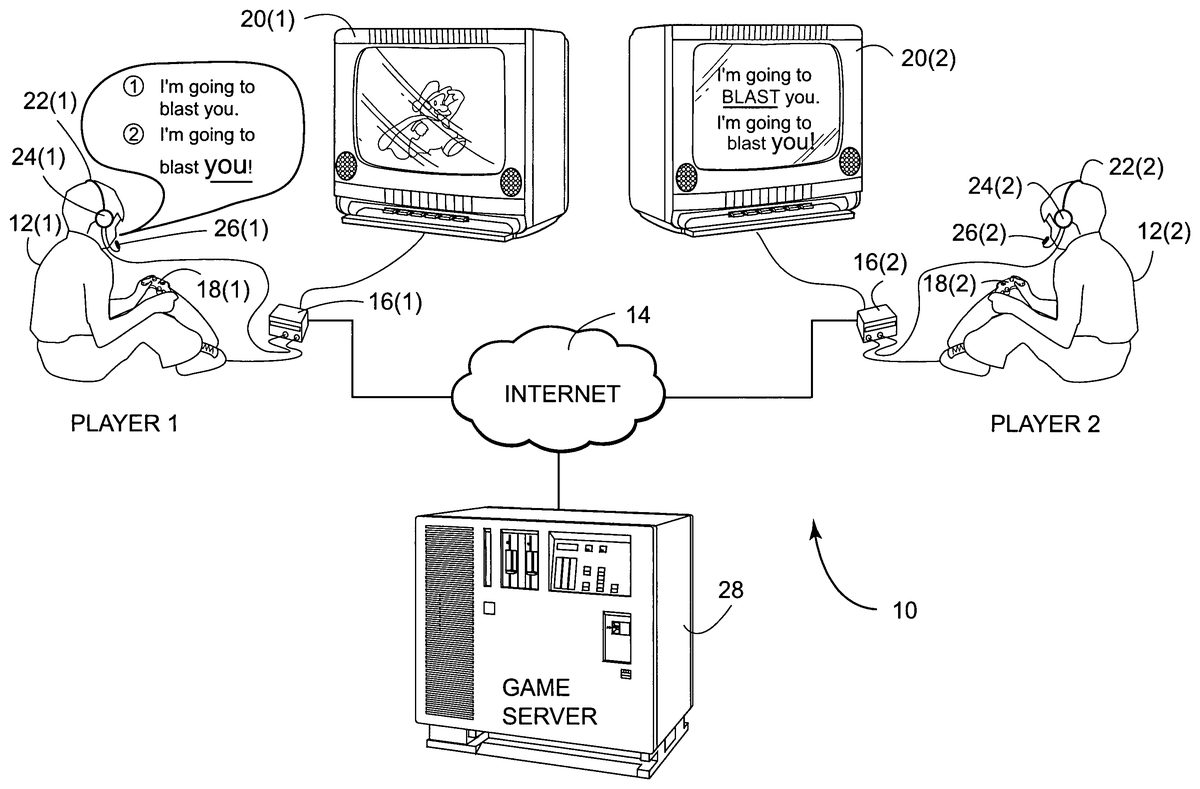

DETAILED DESCRIPTION

FIG. 1 schematically shows an example non-limiting illustrative implementation of a multi-player gaming system 10. In the example implementation shown, video game player 12(1) plays a video game against another video game player 12(2) (any number of players can be involved). Video game players 12(1) and 12(2) may be remotely located, with communications being provided between them via a network 14 such as the Internet or any other signal path capable of carrying game play data or other signals. In the example system 10 shown, each game player 12 has available to him or her electronic video game playing equipment 16. In the example shown, video game playing equipment 16 may comprise, for example, a home video game platform such as a NINTENDO GAMECUBE system connected to a handheld game controller 18 and a display device 20 such as a home color television set. In other examples, game playing equipment 16 could comprise a handheld networked video game platform such as a NINTENDO DS or GAMEBOY ADVANCE, a personal computer including a monitor and appropriate input device(s), a cellular telephone, a personal digital assistant, or any other electronic or other appliance.

In the example system 10 shown, each of players 12 has a headset 22 including earphones 24 and a microphone 26. Earphones 24 receive audio signals from game playing equipment 16 and play them back into the player 12's ears. Microphone 26 receives acoustical signals (e.g., speech spoken by a player 12) and provides associated audio signals to the game playing equipment 16. In other exemplary implementations, microphone 26 and earphones 24 could be separate devices, or a loud speaker and appropriate feedback-canceling microphone could be used instead. In the example shown in FIG. 1, both of players 12(1) and 12(2) are equipped with a headset 22, but depending upon the context it may be that only some subset of the players have such equipment.

In the example system 10 shown, each of players 12 interacts with video game play by inputting commands via a handheld controller 18 and watching a resulting display (which may be audio visual) on a display device 20. Software and/or hardware provided by game playing platforms 16 produce interactive 2D or 3D video game play and associated sound. In the example shown, each instance of game playing equipment 16 provides appropriate functionality to produce local video game play while communicating sufficient coordination signals for other instances of the game playing equipment to allow all players 12 to participate in the “same” game. In some contexts, the video game could be a multiplayer first person shooter, driving, sports or any other genre of video game wherein each of players 12 can manipulate an associated character or other display object by inputting commands via handheld controllers 18. For example, in a sports game, one player 12(1) could control the players of one team, while another player 12(2) could control the players on an opposite team. In a driving game, each of players 12(1), 12(2) could control a respective car or other vehicle. In a flight or space simulation game, each of players 12 may control a respective aircraft. In a multi-user role playing game, each of the players may control a respective avatar that interacts with other avatars within the virtual environment provided by the game. Any number of players may be involved depending upon the particular game play.

As will be seen in FIG. 1, a game server 28 may optionally be provided to coordinate game play. For example, in the case of a complex multiplayer role playing game having tens or even hundreds of players 12 who can play simultaneously, a game server 28 may be used to keep track of the master game playing database and to provide updates to each instance of game playing equipment 16. In other game playing contexts, a game server 28 may not be necessary, with all coordination being provided directly between the various instances of game playing equipment 16.

In the particular example system 10 shown in FIG. 1, a voice-to-text chat capability is provided. As can be seen, player 12(1) in this particular example is speaking the following words into his or her microphone 26: “I'm going to blast you.”

In response to this statement, game playing equipment 16 and/or game server 28 converts the spoken utterance into data representing associated text along with formatting information responsive to detected characteristics of the utterance. For example, the speech-to-text converter may recognize the term “blast” as being a special “threat” term, and cause the resulting text message to be displayed on the other player(s)' display 20(2) using a special format such as for example: “I'm going to BLAST you.”

The special formatting may be the use of all capital letters, use of a special size or style of font (e.g., italics, bold, or some other special typeface), the use of a special color (e.g., red for threats, blue for statements of friendship, green for statements of emotion, yellow for statements of fear, etc.), or any other sort of distinctive visual, aural or other indication.
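The formatting step described above might be sketched as follows. This is a minimal illustration only, not the disclosed implementation: the names `THREAT_TERMS`, `CATEGORY_COLORS`, and `format_utterance` are hypothetical, the term list contains only the one example the text gives, and the color table simply restates the example colors from the preceding paragraph.

```python
import re

# Hypothetical list of "threat" terms; the text names only "blast" as an example.
THREAT_TERMS = {"blast"}

# Example category colors taken from the description above.
CATEGORY_COLORS = {
    "threat": "red",
    "friendship": "blue",
    "emotion": "green",
    "fear": "yellow",
}


def format_utterance(text):
    """Return (display_text, color) for a recognized utterance.

    Threat terms are shown in all capital letters and the whole message
    is assigned the threat color; otherwise the text is left unchanged.
    """
    found_threat = False

    def emphasize(match):
        nonlocal found_threat
        word = match.group(0)
        if word.lower() in THREAT_TERMS:
            found_threat = True
            return word.upper()
        return word

    # Visit each word (letters and apostrophes), leaving punctuation intact.
    display_text = re.sub(r"[A-Za-z']+", emphasize, text)
    color = CATEGORY_COLORS["threat"] if found_threat else "normal"
    return display_text, color
```

Called with the example utterance, `format_utterance("I'm going to blast you.")` returns the text with “BLAST” capitalized together with the color `"red"`.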

As another example shown in FIG. 1, suppose player 12(1) says “I'm going to blast you!” in a loud voice emphasizing the word “you.” The non-limiting exemplary speech-to-text converter in the example system 10 shown in FIG. 1 recognizes the increased amplitude and/or different inflection or emphasis placed on the word “you” and may provide an associated display on the other player(s)' display 20(2) that includes punctuation, formatting or other indications emphasizing the displayed text “you,” for example: “I'm going to blast you!”
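One way to picture word-level emphasis is the sketch below. It assumes, purely for illustration, that the recognizer supplies a per-word amplitude and that a word counts as emphasized when its amplitude exceeds a fixed multiple of the utterance mean. The function name `emphasize_loud_words`, the `ratio` parameter, and the use of capital letters as the emphasis are assumptions, not details from the disclosure.

```python
def emphasize_loud_words(words, ratio=1.5):
    """Upper-case words spoken noticeably louder than the rest.

    words: list of (text, amplitude) pairs, one per recognized word.
    ratio: assumed multiple of the mean amplitude that counts as emphasis.
    """
    if not words:
        return ""
    mean = sum(amplitude for _, amplitude in words) / len(words)
    return " ".join(
        text.upper() if amplitude > ratio * mean else text
        for text, amplitude in words
    )
```

For instance, if only the final word of “I'm going to blast you!” is spoken at four times the amplitude of the others, only that word is capitalized.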

Such recognition may be in context, on a word-by-word or sound-by-sound basis, or using any other characteristic such as speech loudness, speech pitch, speech tone, whether the player is shouting or whispering, articulation, inflection, language (e.g., English, French, German, Japanese, etc.), vocabulary, pauses or any other characteristic of speech. The associated formatting based on the recognition of such predetermined characteristic can take any form such as size of displayed text, color of displayed text, language of displayed text, timing of displayed text, other information displayed along with text, sounds played while text is being displayed, scrolling or other movement of displayed text, introduction of visual or audio effects highlighting displayed text, selection of different displays for displaying displayed text, selection of portions of display 20 for displaying displayed text, or any other attribute perceptible by player 12(2).

FIG. 2 shows an example illustrative non-limiting implementation of a speech-to-text converter 50 that may be used by example system 10, either in or with game playing equipment 16, within game server 28, or both. In the example shown, analog speech received from a microphone 26 is converted into digital form by an analog-to-digital converter 52 and presented to both a phoneme pattern matcher 54 and an amplitude measurer 56. A phoneme pattern matcher 54 attempts to recognize phoneme patterns within the incoming speech stream. Such phoneme recognition output is provided to a word pattern matching block 58 that recognizes words in whatever appropriate language is being spoken by player 12(2). Blocks 54, 58 are conventional and may be supplied by any suitable speech-to-text conversion algorithm as is well known by those skilled in the art.

In the example shown, amplitude measurement block 56 provides an average amplitude output indicating the amplitude or loudness at which player 12(2) spoke the words into the microphone.
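The text does not say how the average is computed. As one possible reading, the sketch below takes the mean absolute value of the digitized samples, assuming they are normalized to the range -1.0 to 1.0. The function name `average_amplitude` is hypothetical, and a root-mean-square measure would be an equally plausible choice.

```python
def average_amplitude(samples):
    """Mean absolute value of digitized speech samples.

    samples: sequence of floats, assumed normalized to [-1.0, 1.0].
    Returns 0.0 for an empty utterance.
    """
    if not samples:
        return 0.0
    return sum(abs(s) for s in samples) / len(samples)
```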

As shown in FIG. 3, the amplitude and content (word recognition) outputs provided by the FIG. 2 example speech-to-text converter are analyzed using an illustrative, non-limiting exemplary analysis routine that detects characteristics in the incoming speech signals. In the particular illustrative non-limiting example shown, the analyzer 60 determines whether a recognized word is a known stress word such as “blast”, “friend”, “enemy”, “shoot”, or other special word (decision block 62). If the word is a known stress word (“yes” exit to decision block 62), then the analyzer 60 may add appropriate formatting information such as for example “display color=red” (block 64). Similarly, if the average amplitude of the utterance is above a certain threshold level A (as tested for by decision block 66), analyzer 60 may provide appropriate formatting such as color, font, etc. (block 64). In the example shown, if the recognized word is not a known stress word and the average amplitude does not exceed a certain threshold level A (“no” exit to decision block 66), then the analyzer 60 may decide to display the associated text in a normal color (block 68), but may perform a further test to determine whether the amplitude is above a threshold B (which may be lower than threshold A for example) (decision block 70). If the amplitude level is higher than B (“yes” exit to decision block 70), then the analyzer may increment the font size to result in a larger font, an all caps display, or any other perceptible indicia (block 72). Otherwise, the analyzer 60 may set the font size as “normal” (block 74).
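The decision flow of FIG. 3 can be sketched roughly as below. The block numbers in the comments refer to the description above; everything else is an assumption made for illustration: the threshold values, the `Formatting` record, the function name `analyze`, and the choice to leave the font size at “normal” on the block 64 path, which the text does not specify.

```python
from dataclasses import dataclass

# Stress words named as examples in the description.
STRESS_WORDS = {"blast", "friend", "enemy", "shoot"}

# Assumed threshold values; the text only says B may be lower than A.
THRESHOLD_A = 0.8
THRESHOLD_B = 0.5


@dataclass
class Formatting:
    color: str
    font_size: str


def analyze(word, avg_amplitude):
    """Choose display formatting for one recognized word."""
    # Decision blocks 62 and 66: stress word, or amplitude above threshold A.
    if word.lower() in STRESS_WORDS or avg_amplitude > THRESHOLD_A:
        # Block 64: special formatting, here the "display color=red" example.
        return Formatting(color="red", font_size="normal")
    # Block 68: normal color.
    color = "normal"
    # Decision block 70: amplitude above the lower threshold B.
    if avg_amplitude > THRESHOLD_B:
        # Block 72: larger font.
        return Formatting(color=color, font_size="large")
    # Block 74: normal font size.
    return Formatting(color=color, font_size="normal")
```

Under these assumed thresholds, a quietly spoken “blast” is still shown in red, while an ordinary word spoken at a moderately raised amplitude keeps the normal color but gets the larger font.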

In one exemplary illustrative non-limiting implementation, the analyzer 60 may perform additional functionalities such as for example filtering or replacement of words (e.g., to screen out bad language). Word substitution is possible using for example a database of word substitutions. The display instructions 108 shown in FIG. 4 may provide a conventional scroll-back capability so that game players 12 can scroll back and review a history of some substantial portion of the text resulting from previous game play. This provides a record for ready reference. Different display text may be tagged with the identity of the player who uttered the associated speech so that different statements can be attributed to different players.
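A rough sketch of word substitution combined with a player-tagged scroll-back history follows. The substitution table, the class name `ChatHistory`, and the fixed history length are hypothetical. Matching here is by whitespace-separated word only, so a word with attached punctuation would not be replaced in this simplified form.

```python
from collections import deque

# Hypothetical substitution "database": word to replacement.
SUBSTITUTIONS = {"darn": "****"}


class ChatHistory:
    """Bounded history of chat lines, each tagged with the speaking player."""

    def __init__(self, max_lines=100):
        # Oldest lines are discarded automatically once max_lines is reached.
        self._lines = deque(maxlen=max_lines)

    def add(self, player_id, text):
        # Replace any listed word before the text is stored for display.
        cleaned = " ".join(
            SUBSTITUTIONS.get(word.lower(), word) for word in text.split()
        )
        self._lines.append((player_id, cleaned))

    def scroll_back(self, count):
        """Return up to `count` most recent (player_id, text) entries, oldest first."""
        if count <= 0:
            return []
        return list(self._lines)[-count:]
```

Each stored entry keeps the player identifier alongside the text, so a display routine can attribute every line to its speaker when the history is reviewed.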

FIG. 4 shows an example storage medium 100 that stores instructions for execution by game playing equipment 16 and/or game server 28. Such instructions may include for example game play instructions 102, speech recognition instructions 104 implementing the functionality shown in FIG. 2, analyzer instructions 106 implementing the analyzer functionality shown in FIG. 3, and display instructions 108 for providing visually perceptible formatted textual displays on display device 20.

While the technology herein has been described in connection with exemplary illustrative non-limiting embodiments, the invention is not to be limited by the disclosure. The invention is intended to be defined by the claims and to cover all corresponding and equivalent arrangements whether or not specifically disclosed herein.

Claims

- A multi-player video game playing method for use with a networked multi-player video game playing system that, in use, accepts interactive inputs from a first game player and additional inputs from at least a second game player over a network and thereby provides interactive multiplayer game play for both said first game player and said second game player, said method comprising: receiving user inputs from said first game player manipulating at least a first handheld video game controller; communicating multiplayer game playing information over a network with the second game player's video game playing system; displaying, in a coordinated manner on a first display associated with said first game player and on a second display associated with said second game player, multiplayer interactive video game play at least in part in response to said received user input and said communicated multiplayer game playing information; sensing audible speech uttered by the first game player; automatically, by computer, converting the sensed uttered speech to written text; analyzing said speech and/or said text for aggressive content; and generating formatting for said text for display to the second video game player on the second display, including formatting at least a portion of said displayed text in a way so that as displayed on the second display the formatting of the text display at least in part reflects how aggressive the first game player's speech is.

- The method of claim 1 wherein said formatting includes aggression-indicating font size.

- The method of claim 1 wherein the formatting includes aggression-indicating font color.

- The method of claim 1 wherein the formatting includes aggressive punctuation.

- The method of claim 1 wherein the formatting includes aggression-indicating font style.

- The method of claim 1 wherein the characteristic comprises aggression-indicating uttered speech amplitude.

- The method of claim 1 wherein the aggressive content comprises use of predetermined stress words.

- The method of claim 1 wherein the aggressive content comprises audible voice features that reflect aggression.

- The method of claim 1 wherein the aggressive content comprises a threat.

- Video game playing equipment comprising: at least one handheld game controller that in use provides local input from a first video game player; a computing device executing video game play instructions at least in part in response to said local input from said first video game player via said at least one handheld game controller and at least in part in response to additional signals communicated over a network from a second video game player remote to said first video game player, to provide a multiplayer video game display; a microphone that, in use, receives audible speech from said first video game player; a speech-to-text converter that automatically converts said received audible speech into written text; an analyzer that analyzes said audible speech and/or text to determine whether at least one predetermined aggression characteristic is present in utterance by said first video game player; and a text formatter that selectively formats said text for display on a second display associated with said second video game player to demonstrate, to said second video game player, aggression of said first video game player at least in part in response to said analyzer determination.

- A video game chat system comprising: a plurality of video game play sites, each said site including a user input device and a display, said displays providing coordinated interactive video game play in response to user inputs said user input devices provide, wherein at least one of said sites further includes an audio transducer that picks up audible speech uttered by a first game player; a speech recognizer coupled to said audio transducer, said speech recognizer converting said first game player's audible speech into displayable indicia and further analyzing said audible speech to determine whether a predetermined characteristic indicating emotion is present therein; and a display formatter that displays said displayable indicia on at least one of said displays to at least a second game player different from said first game player, said display formatter formatting said display to show first game player's emotion to said second game player at least in part in response to whether said predetermined characteristic is present in said first player's audible speech.

- The system of claim 11 wherein said emotion comprises aggression.

- A multi-player video game playing method for use with a networked multi-player video game playing system that, in use, accepts interactive inputs from a first game player and additional inputs from at least a second game player over a network and thereby provides interactive multiplayer game play for both said first game player and said second game player, said method comprising: receiving user inputs from said first game player manipulating at least a first handheld video game controller; communicating multiplayer game playing information over a network with the second game player's video game playing system; displaying, in a coordinated manner on a first display associated with said first game player and on a second display associated with said second game player, multiplayer interactive video game play at least in part in response to said received user input and said communicated multiplayer game playing information; sensing audible speech uttered by the first game player; automatically, by computer, converting the first player's uttered speech to text; analyzing said speech and/or said text for emotional content; and formatting said text for display to said second video game player, including formatting at least a portion of said text in a way so that as displayed on the second display screen the format of said text display at least in part graphically reflects said emotional content.

- Video game playing equipment comprising: at least one handheld game controller that in use provides local input from a first video game player; a computing device executing video game play instructions at least in part in response to said local input from said first video game player via said at least one handheld game controller and at least in part in response to additional signals communicated over a network from a second video game player remote to said first video game player, to provide a multiplayer video game display; a microphone that, in use, receives speech from at least the second game player; a speech-to-text converter that converts said received speech into text; an analyzer that analyzes said speech and/or text to determine whether at least one predetermined emotional characteristic is present; and a text formatter that selectively formats said text to graphically show, with said formatting, emotion on the display at least in part in response to said analyzer determination.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.