DETAILED DESCRIPTION

FIG. 1 is a block diagram of one embodiment of an electronic entertainment system 100 in accordance with the invention. System 100 includes, but is not limited to, a main memory 110, a central processing unit (CPU) 112, vector processing units VU0 111 and VU1 113, a graphics processing unit (GPU) 114, an input/output processor (IOP) 116, an IOP memory 118, a controller interface 120, a memory card 122, a Universal Serial Bus (USB) interface 124, and an IEEE 1394 interface 126. System 100 also includes an operating system read-only memory (OS ROM) 128, a sound processing unit (SPU) 132, an optical disc control unit 134, and a computer readable storage medium such as a hard disc drive (HDD) 136, which are connected via a bus 146 to IOP 116. System 100 is preferably an electronic gaming console; however, system 100 may also be implemented as a general-purpose computer, a set-top box, or a hand-held gaming device.

CPU 112, VU0 111, VU1 113, GPU 114, and IOP 116 communicate via a system bus 144. CPU 112 communicates with main memory 110 via a dedicated bus 142. VU1 113 and GPU 114 may also communicate via a dedicated bus 140. CPU 112 executes programs stored in OS ROM 128 and main memory 110. Main memory 110 may contain pre-stored programs and may also contain programs transferred via IOP 116 from a computer readable storage medium such as a CD-ROM, DVD-ROM, or other optical disc (not shown) using optical disc control unit 134. IOP 116 controls data exchanges between CPU 112, VU0 111, VU1 113, GPU 114, and other devices of system 100, such as controller interface 120.

GPU 114 executes drawing instructions from CPU 112 and VU0 111 to produce images for display on a display device (not shown). VU1 113 transforms objects from three-dimensional coordinates to two-dimensional coordinates, and sends the two-dimensional coordinates to GPU 114. SPU 132 executes instructions to produce sound signals that are output on an audio device (not shown).

A user of system 100 provides instructions via controller interface 120 to CPU 112. For example, the user may instruct CPU 112 to store certain game information on memory card 122 or may instruct a character in a game to perform some specified action. Other devices may be connected to system 100 via USB interface 124 and IEEE 1394 interface 126. In the preferred embodiment, a USB microphone (not shown) is connected to USB interface 124 to enable the user to provide voice commands to system 100.

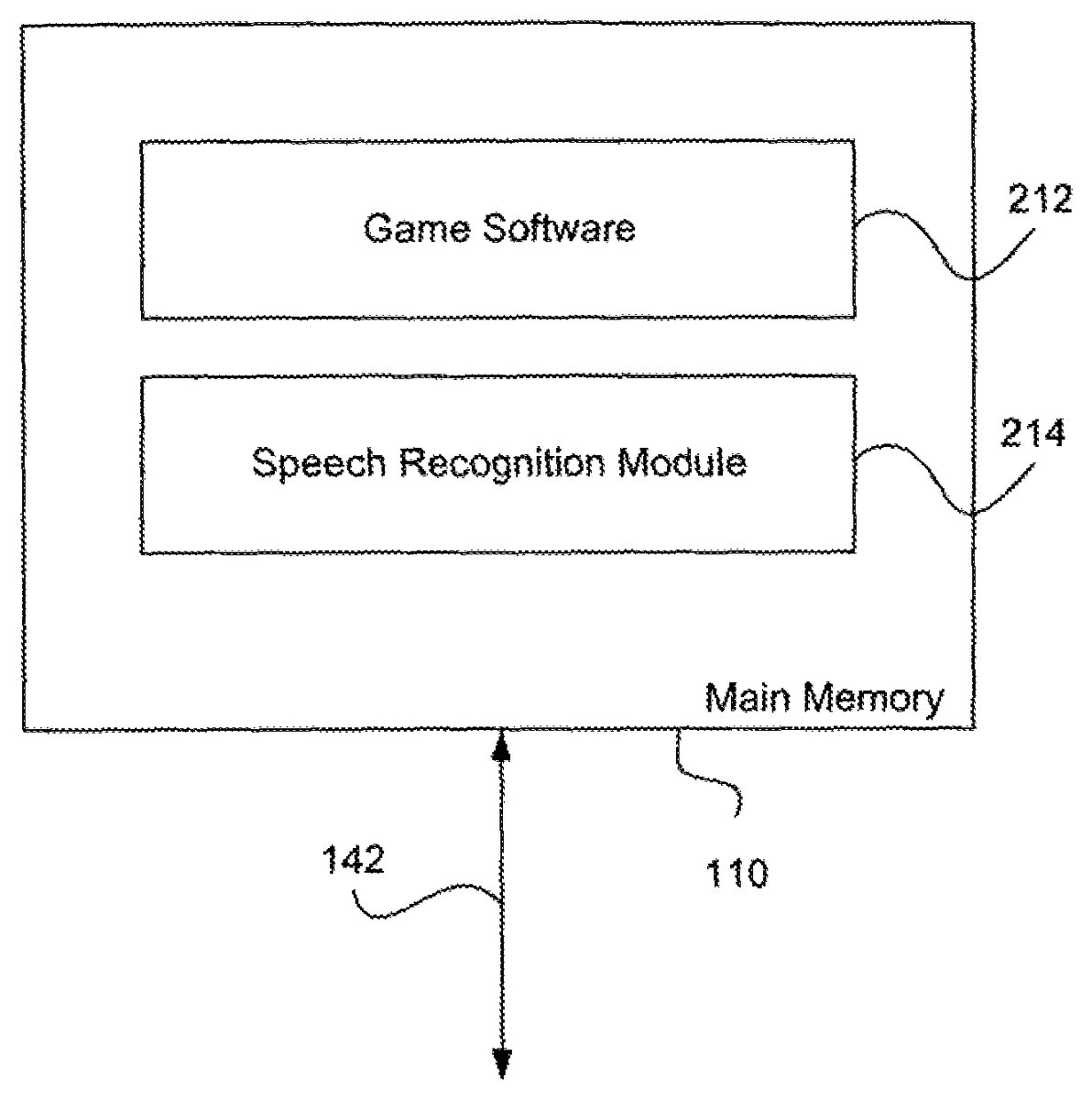

FIG. 2 is a block diagram of one embodiment of main memory 110 of FIG. 1, according to the invention. Main memory 110 includes, but is not limited to, game software 212 and a speech recognition module 214, which were loaded into main memory 110 from an optical disc in optical disc control unit 134. Game software 212 includes instructions executable by CPU 112, VU0 111, VU1 113, and SPU 132 that allow a user of system 100 to play the game. In the FIG. 2 embodiment, game software 212 is a combat simulation game in which the user controls a three-character team of foot soldiers. In other embodiments, game software 212 may be any other type of game, including but not limited to a role-playing game (RPG), a flight simulation game, and a civilization-building simulation game.

Speech recognition module 214 is a speaker-independent, context-sensitive speech recognizer executed by CPU 112. In an alternate embodiment, IOP 116 executes speech recognition module 214. Speech recognition module 214 includes a vocabulary of commands that correspond to instructions in game software 212. The commands include single-word commands and multiple-word commands. When an input speech signal (utterance) matches a command in the vocabulary, speech recognition module 214 notifies game software 212, which then provides the appropriate instructions to CPU 112.
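The match-and-notify behavior described above can be sketched as follows. This is a minimal illustration, not the recognizer of the embodiment: it assumes the utterance has already been converted to text, and the names `VOCABULARY`, `normalize`, `on_utterance`, and the instruction identifiers are hypothetical.

```python
from typing import Callable, Optional

# Hypothetical vocabulary mapping spoken commands (single-word and
# multiple-word) to instruction identifiers understood by the game.
VOCABULARY = {
    "team": "SELECT_TEAM",
    "alpha": "SELECT_ALPHA",
    "bravo": "SELECT_BRAVO",
    "hold position": "HOLD_POSITION",
    "fire at will": "FIRE_AT_WILL",
}


def normalize(utterance: str) -> str:
    """Lowercase the text and collapse runs of whitespace."""
    return " ".join(utterance.lower().split())


def on_utterance(utterance: str, notify: Callable[[str], None]) -> Optional[str]:
    """Notify the game software only when the utterance matches the vocabulary."""
    instruction = VOCABULARY.get(normalize(utterance))
    if instruction is not None:
        notify(instruction)
    return instruction
```

An utterance outside the vocabulary produces no notification, so the game software sees only recognized commands.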

The user of system 100 and game software 212 inputs one or more of the commands via the microphone and USB interface 124 to control the actions of characters in game software 212. The spoken commands are preferably used in conjunction with controller commands; however, other embodiments of game software 212 may implement spoken commands only.

FIG. 3A is a diagram of one embodiment of a level 1 menu command screen 322 on a display device 310 connected to system 100, according to the invention. The level 1 menu 330 includes three level 1 command items: a team item 332, an alpha item 334, and a bravo item 336. Although three level 1 command items are shown, game software 212 may use any number of level 1 command items. Level 1 menu command screen 322 appears when the user actuates and then releases a button or other device on the controller dedicated to this function. Level 1 menu command screen 322 also includes a current game picture (not shown) underlying level 1 menu 330. Level 1 menu 330 appears on display device 310 to indicate to the user available choices for level 1 commands.

In the FIG. 2 embodiment of game software 212, the user controls the actions of a team leader character using controller actions such as actuating buttons or manipulating a joystick. The user inputs spoken commands to control the actions of a two-character team. Team item 332 represents the availability of a team command that selects both members of the two-character team to perform an action. Alpha item 334 represents the availability of a command that selects one member, alpha, to perform an action. Bravo item 336 represents the availability of a command that selects one member, bravo, to perform an action.

The user says the word “team” into the microphone to select team item 332, says the word “alpha” to select alpha item 334, and says the word “bravo” to select bravo item 336. When speech recognition module 214 recognizes one of the level 1 commands, it notifies game software 212, which then displays a level 2 menu command screen.

FIG. 3B is a diagram of one embodiment of a level 2 menu command screen 324 on the display device 310, according to the invention. In the FIG. 3B embodiment, the user has spoken “team” into the microphone to select team item 332. Level 2 menu command screen 324 shows a level 2 menu 340. Level 2 menu 340 includes the previously selected level 1 command (team item 332) and three level 2 commands: a hold position item 342, a deploy item 344, and a fire at will item 346. Although three level 2 command items are shown, game software 212 may use any number of level 2 command items.

Level 2 menu 340 illustrates available commands for the two-character team. The available commands depend upon a current state of the game. For example, if the user verbally selects hold position item 342, then the two-character team will appear to hold their position in the game environment. The next time the user verbally selects team item 332, hold position item 342 will not appear but a follow item (not shown) will appear instead.
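The dependence of the level 2 menu on game state can be illustrated with a short sketch. The function name `level2_items` and the boolean flag `team_is_holding` are assumptions made for illustration; the description states only that a follow item replaces the hold position item once the team is holding.

```python
from typing import List


def level2_items(team_is_holding: bool) -> List[str]:
    """Return the level 2 commands available for the team in the current state.

    Assumption: the only state tracked here is whether the team is already
    holding its position; a real game may track much more.
    """
    movement = "follow" if team_is_holding else "hold position"
    return [movement, "deploy", "fire at will"]
```

Before the team is told to hold, the menu offers "hold position"; afterward the same slot offers "follow".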

The combination of team item 332 and hold position item 342 is a complete command, so if the user says “hold position” while screen 324 is displayed, game software 212 then stops displaying screen 324 and causes the selected characters to perform the selected action in the game environment (both members of the team hold their position). Similarly, the combination of team item 332 and fire at will item 346 is a complete command, so if the user says “fire at will” while screen 324 is displayed, game software 212 then stops displaying screen 324 and causes the selected characters to perform the selected action in the game environment (both members of the team fire their weapons). The combination of team item 332 and deploy item 344 is not a complete command. If the user says “deploy” while screen 324 is displayed, game software 212 then displays a level 3 menu command screen.

FIG. 3C is a diagram of one embodiment of a level 3 menu command screen 326 on the display device 310, according to the invention. Level 3 menu command screen 326 shows a level 3 menu 350 that includes a previously selected level 1 command (team item 332), a previously selected level 2 command (deploy item 344), and two level 3 commands: frag item 352 and smoke item 354. In the FIG. 2 embodiment of game software 212, the two-character team of foot soldiers can deploy fragmentation (frag) grenades and smoke grenades. Although only two level 3 commands are shown, game software 212 may include any number of level 3 commands.

The combination of team item 332, deploy item 344, and frag item 352 is a complete command, so if the user says “frag” while screen 326 is displayed, game software 212 stops displaying screen 326 and causes the selected characters to perform the selected action in the game environment (both members of the team deploy a frag grenade). The combination of team item 332, deploy item 344, and smoke item 354 is a complete command, so if the user says “smoke” while screen 326 is displayed, game software 212 stops displaying screen 326 and causes the selected characters to perform the selected action in the game environment (both members of the team deploy a smoke grenade).
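One way to picture the distinction between complete and incomplete commands is a nested mapping in which a terminal entry marks a complete command. The sketch below is illustrative only. The names `COMMAND_TREE` and `classify` are hypothetical, and it assumes that alpha and bravo share the level 2 and level 3 commands shown for the team, which the description does not state.

```python
from typing import Sequence

# None marks a complete command; a nested dict means a further menu level.
LEVEL_2 = {
    "hold position": None,
    "deploy": {"frag": None, "smoke": None},
    "fire at will": None,
}
# Assumption: every level 1 actor offers the same level 2 commands.
COMMAND_TREE = {actor: LEVEL_2 for actor in ("team", "alpha", "bravo")}


def classify(words: Sequence[str]) -> str:
    """Classify a spoken sequence as 'complete', 'incomplete', or 'invalid'."""
    node = COMMAND_TREE
    for word in words:
        if not isinstance(node, dict) or word not in node:
            return "invalid"
        node = node[word]
    return "complete" if node is None else "incomplete"
```

Under these assumptions, "team" followed by "hold position" is complete, while "team" followed by "deploy" is incomplete and calls for a level 3 selection.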

Although only three menu levels are shown in FIG. 3C, any number of menu levels is within the scope of the invention. In the preferred embodiment, the number of menu levels is three for ease of use and to facilitate learning of the various command combinations. Once the user has learned or memorized all of the various command combinations, the user may play the game without using the command menus (non-learning mode).

As stated above in conjunction with FIG. 2, speech recognition module 214 is context-sensitive. For example, the word “deploy” is in the vocabulary of speech recognition module 214. However, speech recognition module 214 will recognize the word “deploy” only in the context of a level 1 command such as “team” or “alpha”. In other words, speech recognition module 214 will not react to an input level 2 command unless a level 1 command has been input, and will not react to an input level 3 command unless a level 2 command has been input. Speech recognition module 214 recognizes sentence-type commands (actor plus action) that game software 212 uses to control actions of the characters in the game environment.
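The context sensitivity described above can be sketched as a small stateful recognizer whose active vocabulary depends on the commands already accepted. This is a hedged illustration operating on text tokens rather than audio; the class `ContextSensitiveRecognizer`, its methods, and the reduced `TREE` are hypothetical.

```python
from typing import List, Optional, Set, Tuple

# Reduced command tree for illustration; None marks a complete command.
LEVEL_2 = {"hold position": None, "fire at will": None,
           "deploy": {"frag": None, "smoke": None}}
TREE = {"team": LEVEL_2, "alpha": LEVEL_2}


class ContextSensitiveRecognizer:
    """Accepts a word only if it is valid after the words accepted so far."""

    def __init__(self, tree: dict) -> None:
        self.tree = tree
        self.path: List[str] = []  # commands accepted so far

    def _node(self) -> dict:
        node = self.tree
        for word in self.path:
            node = node[word]
        return node

    def active_vocabulary(self) -> Set[str]:
        """Words the recognizer will react to in the current context."""
        return set(self._node())

    def hear(self, word: str) -> Optional[Tuple[str, ...]]:
        """Return the full command once complete; otherwise return None."""
        node = self._node()
        if word not in node:
            return None  # out of context: ignored, context unchanged
        self.path.append(word)
        if node[word] is None:  # actor plus action is now complete
            command, self.path = tuple(self.path), []
            return command
        return None
```

In this sketch, "deploy" spoken before any level 1 command is ignored, while the sequence "team", "deploy", "smoke" yields a single actor-plus-action command.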

Although FIGS. 3A-3C illustrate command menus 330, 340, and 350 as branching menus that branch from left to right, any other configuration for displaying available commands to the user is within the scope of the invention.

FIG. 4 is a flowchart of method steps for menu-driven voice command of characters, according to one embodiment of the invention. First, in step 412, game software 212 determines whether the user has actuated a controller button assigned to a voice command functionality. If not, game software 212 continues monitoring the controller button signals. If the button has been actuated, then in step 414 game software 212 determines whether the button has been released. If the button has been released, the method continues in a learning mode with step 416. If the button has not been released, the method continues in a non-learning mode with step 430.

In step 416, game software 212 displays level 1 commands on a level 1 menu command screen. Then in step 418, speech recognition module 214 determines whether the user has verbally input a level 1 command. If not, the method returns to step 416. If the user has verbally input a level 1 command, then in step 420 game software 212 displays level 2 commands on a level 2 menu command screen. In step 422, speech recognition module 214 determines whether the user has verbally input a level 2 command. If not, the method returns to step 420. If the user has verbally input a level 2 command, the method continues with step 424.

If the input level 2 command does not have associated level 3 commands, then in step 438 game software 212 executes the commands, whereby a character or characters in the game environment perform the selected action. If the input level 2 command does have associated level 3 commands, then in step 426 game software 212 displays level 3 commands on a level 3 menu command screen. In step 428, speech recognition module 214 determines whether the user has verbally input a level 3 command. If not, the method returns to step 426. If the user has verbally input a level 3 command, then in step 438 game software 212 executes the commands, whereby a character or characters in the game environment perform the selected action.

In the non-learning mode, the available commands at each level are not displayed to the user. In step 430, speech recognition module 214 determines whether the user has verbally input a level 1 command. If not, speech recognition module 214 continues to listen for a level 1 command. If the user has verbally input a level 1 command, then in step 432 speech recognition module 214 determines whether the user has verbally input a level 2 command. If not, speech recognition module 214 continues to listen for a level 2 command. If the user has input a level 2 command, the method continues with step 434.

If the input level 2 command does not have associated level 3 commands, then the method continues in step 438, where game software 212 executes the commands, whereby a character or characters in the game environment perform the selected action. If the input level 2 command does have associated level 3 commands, then in step 436 speech recognition module 214 determines whether the user has verbally input a level 3 command. If the user has not verbally input a level 3 command, then speech recognition module 214 continues to listen for a level 3 command. If the user has verbally input a level 3 command, then in step 438 game software 212 executes the commands, whereby a character or characters in the game environment perform the selected action.
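The learning and non-learning paths of FIG. 4 differ only in whether the available commands are displayed at each level, which the following sketch makes explicit. It is an illustration under stated assumptions: utterances arrive as already-recognized text, the function returns when the input runs out instead of listening indefinitely, and the names `run_voice_command`, `TREE`, and `display` are hypothetical.

```python
from typing import Callable, Iterable, List, Optional, Tuple

# Reduced command tree for illustration; None marks a complete command.
LEVEL_2 = {"hold position": None,
           "deploy": {"frag": None, "smoke": None},
           "fire at will": None}
TREE = {"team": LEVEL_2, "alpha": LEVEL_2, "bravo": LEVEL_2}


def run_voice_command(
    button_released: bool,
    utterances: Iterable[str],
    display: Callable[[List[str]], None],
) -> Optional[Tuple[str, ...]]:
    """Walk the menu levels and return the command to execute, if any."""
    learning_mode = button_released  # step 414: released selects learning mode
    node = TREE
    selected: List[str] = []
    heard = iter(utterances)
    while node is not None:
        if learning_mode:
            display(list(node))  # steps 416, 420, 426: show available commands
        word = next(heard, None)
        if word is None:
            return None  # sketch only: no more input, nothing executed
        if word in node:  # steps 418/422/428 or 430/432/436
            selected.append(word)
            node = node[word]  # None here means no further level (424/434)
    return tuple(selected)  # step 438: command ready for execution
```

With `button_released=False`, `display` is never called, which corresponds to the non-learning mode in which the user speaks commands from memory.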

In the non-learning mode, the user experiences the game environment as if he or she were actually giving verbal instructions to a character or characters, making the game play more intuitive and realistic. In one embodiment of game software 212, after the user has given a spoken command to one of the characters, a prerecorded acknowledgement of the command, or other audible response, is played back to the user via a speaker or headset (not shown).