U.S. Pat. No. 7,532,211

IMAGE PROCESSING DEVICE AND IMAGE PROCESSING METHOD

Assignee: Sega

Issue Date: June 30, 2005

Summary:

The ‘211 patent allows games to have a more realistic and life-like feel to them because the movements of players have been redesigned to simulate those of actual opponents. The virtual camera is designed to provide alternate views of the action based on what is going on during the event. Whenever there are a lot of characters on the screen at the same time, the camera will be pulled out so that the most action can be seen while the player competes in the game.

Abstract:

Games are processed in a more realistic and immediate manner during image processing for soccer games and the like. Specifically, the movements of characters more accurately simulate those of actual opponents, resulting in greater game realism. The invention is an image processing device for imaging and displaying the behavior of characters modeled on opponents in virtual three-dimensional space. It is determined (S21 to S24) whether or not there exists a certain situation in which the relation to the game contents (in the centering area, for example) or the positional relation (such as distance) between characters and a target (such as opponent characters or the ball) having a relation through the game to said characters matches certain conditions, and the eyes of the characters are directed to the target (S25, S26, S28, etc.) when it is determined that the certain situation exists. The invention is especially suitable for soccer games.

Illustrative Claim:

1. An image processing device comprising: image generating means for generating a game screen representing a view seen from a virtual camera disposed in virtual space, wherein a plurality of predetermined areas are defined in said virtual space and an angle of said virtual camera is set for each of said plurality of predetermined areas; object setting means for setting an object in said virtual space; object behavior controlling means for controlling behavior of said object in said virtual space based on an operation of a player; camera angle adjusting means for adjusting an angle at which said virtual camera views said object, based on positional coordinates of said virtual camera in said virtual space; and object positional determination means for determining whether said object is located in one of said plurality of predetermined areas based on the positional coordinates of said virtual camera; wherein said camera angle adjusting means angularly adjusts said virtual camera to 0 degrees if said object positional determination means determines that said object is not located in said plurality of predetermined areas; and said camera angle adjusting means adjusts said virtual camera to the angle set for the area in which said object is located if said object positional determination means determines that said object is located in any of said plurality of predetermined areas.

Illustrative Figure

Description

BEST MODE FOR CARRYING OUT THE INVENTION

A first embodiment of the present invention is described below with reference to FIGS. 1 through 22, a second embodiment is described with reference to FIGS. 23 through 31, and a third embodiment is described with reference to FIGS. 32 through 58. These embodiments relate to games integrally incorporating the image processing device of the present invention. The application software in these cases is assumed to be soccer game software as an example, but the embodiments may similarly be implemented with other types of software such as that for baseball games, softball games, and basketball games.

FIG. 1 schematically illustrates the block structure of the game device pertaining to the first embodiment. The game device has a CPU (central processing unit) 1, with ROM 2, RAM 3, an input device 4, and a video display processor (VDP) 5 all connected by a bus to this CPU 1. The CPU 1 sequentially executes the game program previously stored in ROM 2. The various processes pertaining to the present invention are realized as the VDP 5 periodically executes the program stored in ROM 2. Three processes relating to the present invention include the process by which the line of sight of the characters is controlled, the process by which spectator behavior is controlled, and the process of fog control in the form of display screen color adjustment. In addition to the program processed by the CPU 1 or VDP 5, character polygon data as well as the programs and fixed data necessary for the three processes (such as spectator polygon data and fog reference data) are previously stored in ROM 2.

The operating RAM 3 temporarily stores various types of data during the execution of the game. The input device 4 is equipped with an instrument operated by the player, such as a joystick, and is used to input the data necessary for executing the game, such as when controlling the movement and motions of characters.

A video RAM (VRAM) 6, imaging device 7, and operating RAM 8 are connected to the VDP 5. Polygon data from ROM 2 is stored in VRAM 6. The polygon data comprises coordinate data for the number of vertices which are to be displayed and color data given in the form of color palettes for the vertices. The VDP 5 has a digital signal processor (DSP). In response to periodic timing signals such as the frame switching timing, the VDP 5 actuates and executes an image processing-dedicated program previously stored in ROM 2. The coordinates of the polygon data stored in VRAM 6 are converted and processed as a result of processing by the VDP 5, and are transferred to the imaging device 7.

A texture ROM 9 and frame buffer memory 10 are connected to the imaging device 7. Texture is mapped by the imaging device 7 to the polygon data which has undergone coordinate conversion, and is written in the form of pixel data per frame (screen) to the frame buffer memory 10.

The frame buffer memory 10 is connected by a D/A converter 11 to a display device 12 such as a CRT. The D/A converter 11 functions as a video signal generating circuit, reading pixel data from the frame buffer memory 10 and converting it to analog signals. The converted data is sequentially sent in the form of video signals to the display device 12, and images are displayed.

The game device also comprises a fog circuit 13 and real time clock 14 which are connected to the bus BUS. The real time clock 14 has a time and calendar function, so as to give real daily time data to the CPU 1. As is described below, the fog circuit 13 has what is referred to as a “fog function,” which involves adjusting the display screen color through masking using specially established color data, referred to as fog data, according to the time in which the game device is operated (that is, the real daily time in which the game device is being played by a player), and generates fog data under the control of the CPU 1 and transmits it to the VDP 5.

FIG. 2 illustrates an example of processing per frame executed by the CPU 1. First, the CPU 1 receives from the input device 4 character motion commands (such as running, turning, and kicking the ball) corresponding to operating data from the player, and computes the motion of characters in three-dimensional virtual space (S1).

The CPU 1 then processes the ball (S2) in three-dimensional virtual space, such as advancing the soccer ball position, and processes collisions (hits) (S3) in three-dimensional virtual space. The collision processing is the determination and processing of various types of collisions, such as that between characters and the ground, between characters, and between characters and the ball. The CPU 1 then processes the behavior of the characters (robots) operated by the player in three-dimensional virtual space in response to operating data from the player (S4).

The CPU 1 also processes the control of the eye direction of the characters (S5). This control of the eye direction constitutes a characteristic feature of the present invention in an effort to provide more diverse character action during the game and to enhance the realism of the soccer game. This process is described in detail below.

When the process for controlling the eye direction is complete, the CPU 1 processes the soccer game field (S6). This field processing involves issuing commands for processes necessary to the game as the positions of the characters present in the field in virtual three-dimensional space are used as reference to determine which characters are in the offside line and which characters are in the goal area so as to advance the game.

The CPU 1 then processes the control of spectator behavior (S7) and issues commands for fog control (S8). These two processes also constitute part of the characteristic features of the present invention. The process of controlling spectator behavior is an attempt to enhance realism and immediacy by representing a variety of spectator behaviors while suppressing the computing load, and is described in detail below. The process of fog control is an attempt to enhance realism and immediacy by controlling the brightness of the screen according to the time throughout the day (color adjustment based on whether the game is played during the day or at night) in which the game is actually being played by the player, and is described in detail below. Finally, other necessary processing is executed (S9).

Only the main point of view of the character is determined in the control of the point of view in step S5; the motion of actually turning a character may be managed in the subsequent processing time S1. Step S4 may be similarly managed. In other words, a timing of at most about 1/60 second can be established from the data acquisition and determination of the main point of view until the motion is actually carried out in the relevant direction.

The CPU 1 repeats the aforementioned processing for each frame. Thus, as the game unfolds, the CPU 1 sends commands for motion and the like corresponding to the manipulations of the player to the VDP 5. The necessary polygon data is transferred from the ROM 2 to the VDP 5 under the control of the CPU 1. The VDP 5 temporarily stores the polygon data in the VRAM 6, the polygon data undergoes coordinate conversion from virtual three-dimensional space to perspective two-dimensional space according to the commands, and the converted coordinates are transferred to the imaging device 7. The imaging device 7 maps texture to the polygon data that has undergone coordinate conversion and writes it to the frame buffer memory 10. As a result, images with new pixel data are displayed on the display device 12 for each frame.

The process for the control of the aforementioned character eye direction is described below with reference to FIGS. 3 through 7. This process is executed in step S5 in FIG. 2.

The principles of the angle computation determining the eye direction of a character C are described first. Here, the character C is assumed to be located at coordinates (Xp, Yp, Zp) in three-dimensional virtual space, and the soccer ball B serving as the target is located at coordinates (Xt, Yt, Zt) in the same space. In this case, based on the geometry viewed from the direction of the Y axis in FIG. 3, the following values can be calculated:

X′=Xt−Xp

Z′=Zt−Zp

and the angle θy and distance L on the X-Z plane between the character C and the ball B can be calculated from the values X′ and Z′. Similarly, the distance L between the character C and the ball B on the horizontal axis can be obtained based on this geometry, and the geometry obtained when the vertical axis is the Y axis can be imagined as depicted in FIG. 4. In other words, the coordinates (Yp, Lp) for the character C and the coordinates (Yt, Lt) for the ball B can be determined. In this case, the values

Y′=Yt−Yp

L′=Lt−Lp

can be calculated, and the angle θx at which the character C sees the ball B on the Y-L plane can be calculated based on Y′ and L′. In other words, the eye direction when each character looks at the ball, for example, is determined by the parameters θy, L, and θx. The target is not limited to the ball, and the eye direction can be similarly calculated when the target is another player, the goal, or the referee. In other words, it may be managed by giving the coordinates of one's own character and the coordinates of a given point for another player or referee or the coordinates of a central position such as that of a goal.
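The angle computation described above can be sketched as follows. This is a minimal illustration rather than the patented implementation: the function name `eye_direction`, the use of `atan2`, and the convention that the horizontal angle is measured from the +Z axis are all assumptions, and the character is taken as the origin of the L axis so that L′ equals L.

```python
import math

def eye_direction(character, target):
    """Return (theta_y, L, theta_x) for a character looking at a target.

    character and target are (X, Y, Z) tuples in virtual space.
    Angles are in radians.
    """
    xp, yp, zp = character
    xt, yt, zt = target
    dx = xt - xp                      # X' = Xt - Xp
    dz = zt - zp                      # Z' = Zt - Zp
    theta_y = math.atan2(dx, dz)      # horizontal angle on the X-Z plane (assumed: 0 along +Z)
    dist = math.hypot(dx, dz)         # distance L on the X-Z plane
    dy = yt - yp                      # Y' = Yt - Yp
    theta_x = math.atan2(dy, dist)    # vertical angle on the Y-L plane
    return theta_y, dist, theta_x
```

The same function applies unchanged when the target is another player, the referee, or the center of the goal, since only the target coordinates differ.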

The direction of the eyes is determined in this manner, but the character's body faces in a variety of directions (way of turning) while his eyes are directed in that direction. FIG. 5 illustrates this. In the present embodiment, the head HD, torso BD, and waist HP of the character C are given as components where the body turns (rotates) during the control of the eye direction. Thus, modes of turning such as

1) when the head HD turns first (vertical or lateral turn), the torso BD then turns, and the waist HP then turns;

2) when the head HD and torso BD first turn simultaneously, and the waist HP then turns; and

3) when only the head HD turns

can be controlled for each situation in which the character is situated for each game scenario. This control may be managed, for example, by storing the angle to which each part HD, BD, and HP turns per frame, giving commands for motion based on a slight increase in the angle of the current turning angle in subsequent frames, and terminating the turning motion commands at the frame where the calculated angles θx and θy are reached for each body part.

In general, the movements of the human body are based on natural structural principles, and body movements appear most natural if these natural principles are applied when characters are set in motion. For example, when a body is turned, as indicated in FIG. 5, the neck turns the most rapidly, followed by the upper half of the body, and lastly by the entire body. Thus, when a body is turned, the neck should turn faster than the upper half of the body, and the upper half of the body should turn faster than the whole body.

By having the torso BD begin to turn when the turning angle of the head HD reaches a certain value, the turning body may be represented as the turning timings of the head, torso, and waist are staggered.
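The staggered turning of the head, torso, and waist can be sketched as a per-frame update. This is only an illustration under stated assumptions: the function `step_turn`, the per-frame speeds, and the start thresholds are hypothetical, angles are in degrees measured from the character's current facing, and each part starts turning once the part before it has reached its threshold.

```python
PARTS = ("head", "torso", "waist")  # HD, BD, HP

def step_turn(angles, target, speed, start_after):
    """Advance the turning angle of each body part by one frame.

    angles:      current angle per part, e.g. {"head": 0, "torso": 0, "waist": 0}
    target:      angle that every part should eventually reach
    speed:       maximum change per frame for each part (hypothetical values)
    start_after: angle the preceding part must reach before a part starts
    """
    new = dict(angles)
    for i, part in enumerate(PARTS):
        if i > 0:
            leader = PARTS[i - 1]
            threshold = min(start_after[part], abs(target))
            if abs(angles[leader]) < threshold:
                continue  # the preceding part has not turned far enough yet
        remaining = target - angles[part]
        # Clamp the step so the part never overshoots the target angle.
        step = max(-speed[part], min(speed[part], remaining))
        new[part] = angles[part] + step
    return new
```

With the head given the highest speed and the waist the lowest, the head reaches the target first and the waist last, and the turning command for each part ends at the frame where its angle equals the target.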

FIGS. 6 and 7 depict an example of the process for controlling the eye direction determined in this manner. The procedure for controlling the character eye direction can assume various forms. What is shown here is only an example, and the present invention is in no way limited to the examples. The process of controlling the eye direction may be carried out for all competitors (characters) on the field, or it may be carried out for only characters in a designated visual field to alleviate the computing load. It may even be carried out for only specific characters within the designated visual field, such as characters of particular concern (for example, characters in motion related to the ball or characters under the control of the player (individual playing the game device)).

As indicated in FIG. 6, for example, it is determined whether or not a given character is currently running, either YES (running) or NO (not running) (S21). If YES, it is furthermore determined whether or not the other team has the ball (S22). If the determination is YES (the other team has the ball), it is further determined whether or not a character on the other team is dribbling (S23). If YES (is dribbling), it is determined on the basis of the calculated distance values whether or not the dribbling character is within 3 meters (S24). If YES (within 3 meters), the eye direction of the character currently being controlled is directed toward the ball (S25). In the process of step S25, the turning process in FIG. 5 above is added. For example, since the character in step S25 is running, turning mode 1) is suitable in directing the character's eye direction to the ball while running.

If NO (not within 3 meters) in step S24, the eye direction of the character currently being controlled is directed to the character that is dribbling (S26). The turning control at this time may be in any of the forms described with reference to FIG. 5, and should be selected according to the type of angle relation with the other character at this time.

If NO (not dribbling) in step S23, or if NO (opposing team does not have the ball) in step S22, it is determined whether or not the current ball behavior is a “high ball” (S27). Here, a “high ball” is when the ball position is higher than the heads of the characters. If YES (high ball), a command is given to the character currently being controlled to direct his eyes to the ball (S28). On the other hand, if NO (not a high ball), the eye direction is not controlled, and the motion-dependent eye direction is maintained (S29). For example, since the character is at least running, the eyes are kept in the direction in which the character is running. “Motion dependent” means that the eye direction is not controlled and the movement of the character's action pattern (motion) determined by the program is used without further modification.

If NO in step S21, that is, when it has been determined that one's own character is not running, it is sequentially determined whether the character is dribbling (FIG. 7, S30) or is in the centering area (S31). If YES in step S31, since the character is dribbling and is in the centering area, the character will naturally aim at the goal. In this case, the character's eyes are directed at the goal (S32).

When the character is not in the centering area in step S31, the character's eyes are directed to the top athlete at a rate of once every 4 seconds (S33), for example, and the character's eyes are directed to the goal at a rate of once every 4 seconds (S34). If NO (not dribbling) in step S30, it is determined whether or not the game is in set play (S35). If YES (set play), it is determined whether or not another character has decided to pass and is ready to kick (S36), and if YES, the eye direction is directed to the passing character (S37), whereas if NO, a motion-dependent eye direction is maintained without any special control of the eye direction (S38).
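The determinations in steps S21 through S38 can be summarized as a single decision function. This is a sketch of the example flow only: the function name, argument names, and returned labels are hypothetical, "within 3 meters" is assumed to include exactly 3 meters, and the case in which the game is not in set play (NO in S35), which the description does not spell out, is assumed to fall back to the motion-dependent eye direction.

```python
def eye_target(running=False, opponents_have_ball=False,
               opponent_dribbling=False, dribbler_distance_m=None,
               high_ball=False, dribbling=False, in_centering_area=False,
               set_play=False, passer_ready=False):
    """Return a label for where the character's eyes should be directed."""
    if running:                                            # S21
        if opponents_have_ball and opponent_dribbling:     # S22, S23
            if dribbler_distance_m <= 3.0:                 # S24
                return "ball"                              # S25
            return "dribbler"                              # S26
        if high_ball:                                      # S27
            return "ball"                                  # S28
        return "motion"                                    # S29
    if dribbling:                                          # S30
        if in_centering_area:                              # S31
            return "goal"                                  # S32
        # S33/S34: glance at the top athlete and at the goal,
        # each about once every 4 seconds (timing handled elsewhere).
        return "periodic_glances"
    if set_play and passer_ready:                          # S35, S36
        return "passer"                                    # S37
    return "motion"                                        # S38 (also assumed for NO in S35)
```

Returning a label rather than turning the character directly keeps the determination separate from the turning motion, which may be carried out in a later processing step.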

FIG. 22 schematically depicts a screen displaying the results of the control of a character's eye direction. In the figure, athlete A directs his line of vision in the direction of athlete B (feet) who is the passing character. Athlete B directs his line of vision in the direction of the ball to be passed.

Controlling the character's eye direction in this manner allows the sports behavior of actual soccer games to be simulated far more closely. The eye direction is no longer the kind in which the ball is suddenly kicked in another direction while the character is facing the direction in which he is running, for example, as in the past. In such cases as well, the character's eyes are first directed in the direction of the kick or the intended kick, so the character's behavior can be more realistically portrayed, and a more exciting game device with better immediacy can be provided. Furthermore, when the eye direction is being controlled, not only is the head turned, but the torso or waist is also turned, either consecutively or simultaneously, as needed, allowing the behavior during the control of the eye direction to be realized in a more realistic manner.

Another advantage of the aforementioned control of the eye direction is that the direction in which the character's eyes are directed itself gives clues (suggests) to the player (individual playing the game device) what the character's next move will be. For example, when a dribbling character begins to direct his eyes frequently behind, it may be concluded that a character from the other team is approaching from behind, allowing the player (individual playing the game device) to avoid pursuit. The behavior of the characters can thus telegraph (suggest) situations in the game to the player (individual playing the game device).

Conversely, it is possible to make misleading suggestions in the determination steps in FIGS. 6 and 7. In other words, the eye direction can be deliberately directed in a direction completely different from the actual determination. This can confuse the judgment of the player (individual playing the game device), can further enhance the interest and excitement of the game device, and can increase the game difficulty.

The process of controlling spectator behavior is described below with reference to FIGS. 8 through 17.

The structure of the image data (spectator data) for portraying the spectators in the present invention is described first with reference to FIG. 8. First, spectators are sitting in stands in which m (>2) columns of seats are increasingly higher the further back the stands go, and the spectators in these m columns are divided into n (>2) rows. Of these “m columns×n rows,” “m′ columns×n rows” of spectators per m′ column (>0) are represented by mapping textures containing a plurality of spectators onto each rectangular polygon. For example, FIG. 8 shows the data structure in virtual space, where A through D, A′ through D′, and A″ through D″ indicate 12 rectangular polygons, and the polygons are layered based on a pattern which is increasingly higher in the depthwise direction of the stands. Each of polygons A through D, A′ through D′, and A″ through D″ is a single polygon, representing a plurality of spectators in 3 column (=m′)×4 row (=n) portions, for example. The second polygon B located in virtual space behind (depthwise direction) the first polygon A assumes an initial state that is one column higher, for example, the third polygon C assumes an initial state that is one column higher, for example, and the fourth polygon D assumes an initial state that is one column higher, for example. As such, “14 columns×4 rows” of spectators, for example, are ranked in the stands by means of the 12 polygons A through D, A′ through D′, and A″ through D″.

The first four polygons A through D, for example, among the 12 polygons A through D, A′ through D′, and A″ through D″ are mutually connected in a spectator pattern, the next four polygons A′ through D′ are mutually connected in a spectator pattern, and the last four polygons A″ through D″ are mutually connected in a spectator pattern. At the same time, three polygons (the first, fifth, and ninth polygons A, A′, and A″) among the 12 polygons A through D, A′ through D′, and A″ through D″ constitute one object OB1 in which they move in the same manner. Similarly, another three polygons (the second, sixth, and tenth polygons B, B′, and B″) constitute one object OB2 in which they move in the same manner. Another three polygons (the third, seventh, and eleventh polygons C, C′, and C″) similarly constitute one object OB3, and another three polygons (the fourth, eighth, and twelfth polygons D, D′, and D″) constitute one object OB4. The spectator patterns of each object are not connected. That is, a characteristic feature of the spectator data in the present invention is that a plurality of polygons constitute one object while separated from each other in virtual space. The spectator patterns of each object need not be connected.
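The grouping of the 12 polygons into four objects amounts to taking every fourth polygon from front to back. The sketch below illustrates that interleaving; the function name `build_objects` and the ASCII labels (with `'` standing in for the prime marks) are assumptions made for the example.

```python
def build_objects(labels, n_objects=4):
    """Assign polygons, listed front to back, to interleaved objects.

    Polygon i (0-based) goes to object (i mod n_objects) + 1, so object 1
    holds the 1st, 5th, and 9th polygons, object 2 the 2nd, 6th, and 10th,
    and so on.
    """
    objects = {k: [] for k in range(1, n_objects + 1)}
    for i, label in enumerate(labels):
        objects[i % n_objects + 1].append(label)
    return objects

# Polygons in depth order; each run of four shares one connected texture pattern.
LABELS = ["A", "B", "C", "D", "A'", "B'", "C'", "D'", "A''", "B''", "C''", "D''"]
OBJECTS = build_objects(LABELS)
PATTERN_GROUPS = [LABELS[i:i + 4] for i in range(0, len(LABELS), 4)]
```

Here `OBJECTS[1]` corresponds to OB1 and `PATTERN_GROUPS[0]` to the first connected spectator pattern A through D, which shows how the motion grouping cuts across the texture grouping.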

The process of controlling spectator behavior shown in FIG. 9 is executed by the CPU 1 using the data structured in this manner. That is, the CPU 1 determines the polygon groups whose behavior is controlled among all of the spectator data polygons (S41). Thus, any group of spectator polygons (such as the 12 polygons A through D, A′ through D′, and A″ through D″ in FIG. 10) ranked on the side of the team whom they are cheering, for example, may be selected from among the spectators seen from the virtual camera (point of view). A plurality of polygon groups may also be selected, of course.

The CPU 1 then selects a behavior pattern to move the determined (selected) polygon groups (S42). Patterns in which one or several groups of polygons are moved up and down (vertically) or side to side (laterally) have been prepared as behavior patterns. When the behavior pattern is selected, the CPU 1 executes the process for moving the one or more groups of polygons according to the selected behavior pattern (S43a through S43n).

FIGS. 10 through 17 depict examples of ways to move the polygons. The polygon group in these figures is an example of one group and has the same data structure as in FIG. 8. FIG. 10 shows the state before the polygons are moved, and the state sequentially changes frame by frame to the states of the polygon positions shown in FIGS. 11, 12, 13, 14, 15, and 16, returning after several frames to the state of the polygon positions shown in FIG. 17 (same as FIG. 10).

Specifically, in the first new frame shown in FIG. 11, the first, fifth, and ninth polygons A, A′, and A″ from the front constituting the first object OB1 are raised up in virtual space (up). In the next new frame shown in FIG. 12, the three polygons A, A′, and A″ of object OB1 are raised further up (up), and the second, sixth, and tenth polygons B, B′, and B″ constituting the second object OB2 are raised up (up). In the next new frame depicted in FIG. 13, the polygons A, A′, and A″ of the object OB1 drop down in virtual space (down), the polygons B, B′, and B″ of the second object OB2 are raised further up, and the third, seventh, and eleventh polygons C, C′, and C″ constituting the third object OB3 are raised up (up). In the next new frame depicted in FIG. 14, the polygons B, B′, and B″ of the second object OB2 and the polygons C, C′, and C″ of the third object OB3 drop down (down), and the three polygons D, D′, and D″ of object OB4 are raised up (up). In the next new frame depicted in FIG. 15, the polygons C, C′, and C″ of the third object OB3 and the polygons D, D′, and D″ of object OB4 drop down (down). In the next new frame, the polygons D, D′, and D″ of object OB4, which drop down more slowly, drop further down (down). Thus, as shown in FIG. 17, the sequence returns to the initial state of the polygon positions. The polygons may similarly be moved side to side.
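A staggered up-and-down pattern of this kind can be sketched by giving every object the same short offset profile and delaying each object by one frame relative to the one in front of it. This is a simplification, not a reproduction of FIGS. 10 through 17: in the sequence described above the objects do not all follow an identical profile, and the name `object_offsets` and the offset values in `PROFILE` are hypothetical.

```python
PROFILE = [1, 2, 0]  # hypothetical vertical offsets: up, further up, back down

def object_offsets(frame, n_objects=4, profile=PROFILE):
    """Return the vertical offset of each object at a given frame.

    Frame 0 is the rest state. Object k (0-based) starts moving one frame
    after object k-1, which produces a wave travelling through the stands.
    """
    offsets = []
    for k in range(n_objects):
        t = frame - 1 - k  # frames elapsed since this object started moving
        offsets.append(profile[t] if 0 <= t < len(profile) else 0)
    return offsets
```

Because every polygon in an object shares one offset, moving three polygons costs a single per-object update per frame.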

The spectator data of the portion seen from the virtual camera is designated by the CPU 1 to the VDP 5 each time one or more groups of polygons are moved (from the state in FIG. 10 to that in FIG. 11, for example) (S44). The process subsequently returns to the process in step S41, repeating the aforementioned behavior pattern control process for each new frame. This process for controlling the behavior of the spectators can be executed for groups of display frames instead of frame-by-frame to simplify the process. The process for controlling spectator behavior may also be executed during certain modes (such as during goals). The display objects in three-dimensional virtual space are displayed with changing perspective, relative to the display screen, from a certain point of view of the virtual camera in virtual space (which can be moved by the individual playing the game device). To be clear, the point of view in the control of a character's eye direction and the point of view corresponding to the projected center and the position of the virtual camera should be kept separate.

As a result, a plurality of polygons are connected as a single object, the polygons in a plurality of groups are interleaved in cross-sectional groupings, and each group is textured with connecting patterns, so that the diverse behavior of constantly moving spectators can be more realistically portrayed simply by moving the polygons in their object units. Because they move in object units, software design can be simplified with fewer commands, for example.

The behavioral control itself is simple, resulting in lower computing loads for such control. The control can be done with far less data to handle, while displaying behavior far more realistic than when spectators are individually portrayed with polygons. As such, less memory capacity is needed to store the spectator data. Of course, spectator behavior can be displayed more realistically and immediately with less data than when such behavior is displayed by animation.

The process for fog control mentioned above is described below with reference to FIGS. 18 through 21. This fog control is a process in which one type of mask data having color values, as described above, is superposed on image data. This affords a more realistic image display which cannot be obtained by just reflecting on the screen the changes in brightness accompanying changes in sunlight throughout the day using only conventional luminance data.

This process is executed by the CPU 1 as shown in FIG. 18, for example. The process in FIG. 18 may also be executed by the VDP 5.

The CPU 1 first reads the current time, that is, the standard time in which a player (individual playing the game device) is playing the game device, from the real time clock 14 (S51). It is then determined whether or not the time deviates from the predetermined time zone serving as reference for daytime, evening, or night (S52). The standard time zones for daytime, evening, and night are determined as shown in FIG. 19, for example. For example, the daytime standard time zone is established at a relatively long 6:00 to 16:30, the evening time zone is established at 17:00 to 18:30, and the night standard time zone is established at 19:30 to 5:30. The daytime standard time zone is longer in order to avoid differences in game results due to changes in screen brightness between players playing in the morning and players playing in the evening.

When YES in step S52, the predetermined fog data parameters for the daytime, evening, and night standard time zones are read from ROM 2 (S53). The three parameters are red, blue, and green fog color codes, offset values (indicating fog depth), and density (degree to which fog is applied relative to depth), and these parameters are predetermined so as to be suited to the standard time zones.

The CPU 1 then computes the fog data, and outputs the data in the form of mask data to the VDP 5 (S54, S55).

If NO in step S52, the deviation between the current time and the standard time zones is calculated (S56). For example, when the time is 5:45 in the morning, the deviation is 15 minutes, exactly midway between the night standard time zone and the daytime standard time zone.

The CPU 1 then reads the fog data parameters (R, G, B color codes, offset values, density) for the two standard time zones between which the deviant time falls (S57). When the time is 5:45, for example, the parameters for the night and daytime standard time zones are read.

The offset and density parameter values are corrected (S58). For example, when the time is 5:45, the offset values and density are the mean values of the offset and density values of the night and daytime standard time zones, each weighted by ½. When the time is closer to one of the standard time zones, the values are averaged (corrected) with the values of the closer time zone given more weight.

The offset values and density are determined by such correction when the time thus deviates from the standard time zone, and the fog data is computed and output in the same manner as above (S54, S55).
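The branch at S52 and the correction at S56 through S58 can be sketched as follows. This is a minimal illustration, not the embodiment's actual program: the names `FogParams`, `ZONES`, `FOG`, and `fog_for_time` are hypothetical, the parameter values are placeholders, the weighting is assumed to be linear in the deviation, and the fog color is also blended here even though the text only states that the offset and density are corrected.

```python
from dataclasses import dataclass

MINUTES_PER_DAY = 24 * 60


@dataclass(frozen=True)
class FogParams:
    color: tuple      # (R, G, B) fog color code
    offset: float     # fog depth
    density: float    # degree to which fog is applied relative to depth


def hm(hour, minute):
    """Convert a clock time to minutes after midnight."""
    return hour * 60 + minute


# Standard time zones from the text as (name, start, end); night wraps past midnight.
ZONES = [
    ("daytime", hm(6, 0), hm(16, 30)),
    ("evening", hm(17, 0), hm(18, 30)),
    ("night", hm(19, 30), hm(5, 30)),
]

# Placeholder parameter values, invented for illustration only.
FOG = {
    "daytime": FogParams((200, 220, 255), offset=30.0, density=0.25),
    "evening": FogParams((120, 80, 60), offset=20.0, density=0.5),
    "night": FogParams((20, 20, 60), offset=10.0, density=0.75),
}


def lerp(a, b, w):
    """Linear blend: a when w is 0, b when w is 1 (assumed weighting)."""
    return a + (b - a) * w


def fog_for_time(hour, minute):
    t = hm(hour, minute)
    # S52/S53: inside a standard time zone, use its parameters unchanged.
    for name, start, end in ZONES:
        inside = start <= t <= end if start <= end else (t >= start or t <= end)
        if inside:
            return FOG[name]
    # S56-S58: between two zones, weight both parameter sets by the deviation.
    for i, (name, _, end) in enumerate(ZONES):
        next_name, next_start, _ = ZONES[(i + 1) % len(ZONES)]
        gap = (next_start - end) % MINUTES_PER_DAY
        into = (t - end) % MINUTES_PER_DAY
        if 0 < into < gap:
            w = into / gap  # 0 at the earlier zone, 1 at the later zone
            a, b = FOG[name], FOG[next_name]
            color = tuple(round(lerp(ca, cb, w)) for ca, cb in zip(a.color, b.color))
            return FogParams(color, lerp(a.offset, b.offset, w), lerp(a.density, b.density, w))
    raise ValueError("time not covered by any zone or gap")
```

With these placeholder values, `fog_for_time(5, 45)` falls midway between night and daytime, so the offset and density come out as the plain mean of the two zones, while a time such as 5:55 is weighted toward the daytime values.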

This results in real time display of images which have been fogged according to the state of sunlight assumed for the time during which the game is being played by a player (individual playing the game device). For example, when a game is played in the evening, the background beyond the playing field has darkish fogging (see the slanted lines in FIG. 20). When a game is played at night, for example, the background has darkish fogging and yellowish fogging from the shine of moonlight, assuming the moon is out in the background (see slanted lines in FIG. 21).

It is thus possible to more realistically represent spectral changes and the brightness of the light source, unlike cases in which the state of the sunlight (physical brightness) in the images portraying the playing field and its environment is displayed merely through the control of the luminance as in the past. In particular, local brightness such as in portions where the moon is out, for example, can be easier to control because of the coverage of the color fog data. In the present embodiment, the subtle brightness of standard time zones such as when the rising or setting sun is out can be processed based on the corrected parameters obtained using two standard time zones among those for daytime, evening, and night.

That is, the color state is determined by preparing in advance the color state corresponding to suitable color states for a daytime, evening, or night game, and by making corrections (specifically, processes for mixing color; luminance values corrected on the basis of two sets of standard values may also be added) based on standard values between daytime and evening, evening and night, or night and daytime for color states suited to the time in which the game is played. It is thus no longer difficult to play the game as the screen darkens, which is what happens when adjustments are made using only luminance values. The point at which color adjustment begins (one standard value) and the point at which it ends (another standard value) are predetermined, and the states which are suitable for games are set, so no advantages or disadvantages are produced by the color state of the screen, no matter what time the game is played. That is, because of the very nature of the game, it is important that “no sense of unfairness is experienced as a result of advantages or disadvantages caused by the time zone in which the game is played” when “color changes based on time changes” are presented, and the device in the present embodiment is able to deal with this. It is thus possible to provide images with better immediacy in portraying the brightness of the environment in which the playing field and its surroundings are enveloped.

The aforementioned control of character's eye direction, control of spectator behavior, and fog control need not necessarily be managed simultaneously. Any one or two may be controlled.

A second embodiment of the present invention is described below with reference to FIGS. 23 through 58.

FIG. 23 illustrates the appearance of a video game machine featuring the use of an image processing device relating to another embodiment of the present invention. In the figure, the video game machine main unit 50 is roughly in the shape of a box, with boards and the like for processing the game installed in the interior. Two connectors 60a are provided on the front surface of the video game machine main unit 50, and game playing PADs 60b are connected by cables 60c to the connectors 60a. When two players play the game, both PADs 60b are used.

A cartridge I/F 50a for connecting ROM cartridges and a CD-ROM drive 50b for reading CD-ROM are provided on the top of the video game machine main unit 50. Although not shown in the figure, video output terminals and audio output terminals are provided in the back of the video game machine main unit 50. The video output terminals are connected by a cable 70a to the video input terminals of a TV receiver 80, and the audio output terminals are connected by a cable 70b to the audio input terminals of the TV receiver 80. Users operate the PAD 60b in such video game machines to play the game while watching images projected on the TV receiver 80.

FIG. 24 is a block diagram showing a schematic of the TV game machine in the present embodiment. The image processing device is composed of a CPU block 51 for controlling the entire system, a video block 52 for controlling the game screen display, a sound block 53 for generating sound effects and the like, a subsystem 54 for reading the CD-ROM, and the like.

The CPU block 51 is composed of an SCU (system control unit) 100, a main CPU 101, a RAM 102, a ROM 103, a cartridge I/F 50a, a subCPU 104, a CPU bus 103, and the like. The main CPU 101 controls the entire system. The main CPU 101 has computing functions similar to that of an internal DSP (digital signal processor), allowing application software to be rapidly executed. The RAM 102 is used as a work area for the main CPU 101. An initial program or the like for initialization is written to the ROM 103. The SCU 100 controls buses 105, 106, and 107 so as to ensure smooth data input and output between the main CPU 101, VDPs 120 and 130, DSP 140, CPU 141, and the like. The SCU 100 has a DMA controller installed in the interior, allowing sprite data in the game to be transmitted to the VRAM in the video block 52. Application software for games and the like can thus be rapidly executed. The cartridge I/F 50a is used to input application software provided in the form of a ROM cartridge.

The subCPU 104 is referred to as an SMPC (system manager and peripheral control), and has the function of collecting peripheral data from the PADs 60b through the connectors 60a in response to commands from the main CPU 101. The main CPU 101 executes processes based on peripheral data received from the subCPU 104. Any peripheral from among PADs, joysticks, keyboards, and the like can be connected to the connectors 60a. The subCPU 104 has the functions of automatically recognizing the type of peripheral connected to the connectors 60a (main unit side terminals), and of collecting peripheral data according to the transmission mode corresponding to the type of peripheral.

The video block 52 is equipped with a VDP (video display processor) 120 for imaging characters and the like consisting of video game polygon data, and a VDP 130 for imaging background screens, synthesizing polygon image data and background images, clipping processing, and the like. The VDP 120 is connected to the VRAM 121, and frame buffers 122 and 123. The imaging data for the polygons representing the video game machine characters are sent from the main CPU 101 through the SCU 100 to the VDP 120, and are written to the VRAM 121. The imaging data written to the VRAM 121 is imaged by the imaging frame buffer 122 or 123 in the form of 16- or 8-bit/pixels, for example. The imaged data in the frame buffer 122 or 123 is sent to the VDP 130. Data controlling the imaging is sent from the main CPU 101 through the SCU 100 to the VDP 120. The VDP 120 executes the imaging process accordingly.

The VDP 130 is connected to the VRAM 131, and image data output from the VDP 130 is output to an encoder 160 through memory 132. The encoder 160 generates image signals by adding synchronizing signals or the like to the image data, and outputs them to the TV receiver 80. The game screen is thus displayed on the TV receiver 80.

The sound block 53 is composed of a DSP 140 for synthesizing sounds according to either PCM mode or FM mode, and a CPU 141 for controlling the DSP 140. The audio data synthesized by the DSP 140 is converted to 2 channel signals by a D/A converter 170, and is then output to speakers 80b.

The subsystem 54 is composed of a CD-ROM drive 50b, CD I/F 180, CPU 181, MPEG Audio 182, MPEG Video 183, and the like. The subsystem 54 has the function of reading application software provided in the form of CD-ROM, and of reproducing animation. The CD-ROM drive 50b reads data from the CD-ROM. The CPU 181 executes processes such as control of the CD-ROM drive 50b and correcting errors in the data that is read. The data read from the CD-ROM is fed through the CD I/F 180, bus 106, and SCU 100 to the CPU 101, where it is used as application software. The MPEG Audio 182 and MPEG Video 183 are devices for restoring data which has been compressed according to MPEG (Moving Picture Experts Group) standards. Animation can be reproduced by using the MPEG Audio 182 and MPEG Video 183 to restore the MPEG compressed data written to CD-ROM.

FIG. 25 illustrates a case of a soccer game being played, as an example of a game, in 3D virtual game space formed by a computer system.

In the figure, a soccer court is formed on the x-z plane in 3D virtual space. The lengthwise direction (left-right direction) of the court is in the direction of the x axis, the breadthwise direction (depthwise direction) of the court is in the direction of the z axis, and the heightwise direction is in the direction of the y axis. Various athlete objects not shown in the figure are situated on the court, and the game device players control the movements of the character athletes by means of input devices. Line objects are described on the ground to form the soccer court. The game is relayed by a virtual camera (point of view) which is situated to display circumstances in the visual field in virtual game space by means of coordinate conversion or the like on a two-dimensional monitor screen.

FIGS. 26 and 27 illustrate perspectives in the present invention. In FIG. 26, line objects are arranged by a combination of polygons (hereinafter referred to as line polygons) forming lines on the ground, and a soccer court drawn by the lines is formed, as shown in FIG. 25. The lines are well displayed on the screen when the camera position in game space is at an angle overlooking the field from above, but the surface area of the lines in the screen diminishes as the vertical (y axis) angle of the camera approaches the horizontal direction, and the lines gradually disappear from the monitor screen. Additionally, in cases where the line polygons and camera face each other, that is, when the line polygon normal line vector and the camera's eye direction vector are parallel, it is sometimes possible for the line polygons to become so fine that they cannot be displayed on two-dimensional projection screens in which three-dimensional virtual space has undergone coordinate conversion when the point of view is sufficiently remote. This is disadvantageous in games which are played inside such lines (or a court).

Thus, in the present invention, the positional coordinates of some of the vertices of line polygons are modified to increase the surface area projected by the camera under conditions which make it difficult for lines to be projected on the monitor screen. That is, in the reciprocal relation with the camera, the surface area of line polygons projected by the camera is increased by slightly elevating, as shown in FIG. 27, the height position of vertices located in the depthwise direction, as viewed from the camera, of the line polygons situated on the ground.

FIG. 28 is a flow chart illustrating the algorithm for such a process.

First, when line polygons (or line objects) are present in the visual field of the camera viewing objects situated in virtual game space, a corresponding flag is established by the program not shown in the figure. When this is determined in the main program (not shown in figure), a process for preventing the lines from disappearing is executed (S102, YES).

It is first determined whether the vertices of the line polygons are located away from or toward the camera. A method for this, as shown in FIG. 29, is to calculate the distance l1 between the camera and vertex P1 and distance l2 between the camera and vertex P3, and to determine the further and nearer vertices based on the magnitude of the two distances.

Another method, as shown in FIG. 30, is to compare the angles θ1 and θ3 of the vertices P1 and P3 and the angles Ø1 and Ø3 of the camera to determine the further and nearer vertices P1 and P3. Although either of these two methods can be used in the present embodiment, the latter is more advantageous because there are fewer calculations for the hardware than in the former method.

The latter method for comparing angles is used to describe the determination of the further and nearer vertices of the line polygons in steps S104 through S110 below.

The data of a line polygon is read from an object table not shown in the figure which gives the object groups situated in the scene (S104). FIG. 31 is an example of line polygon data, where, for example, coordinate values (xn, zn) of a world coordinate system as well as predetermined angles and the like for determining further and nearer vertices are correlated in the data for polygon vertices P1 through P4.

As shown in FIG. 30, the current position of the camera in the world coordinate system (x-z plane) and the angle Øn in the vertex Pn direction of a line polygon seen from the camera position are then read. The angle Øn can be determined by a trigonometric function from the coordinates of the line polygon vertex Pn and the coordinates of the camera position (S106).

The line polygon vertex and camera angle are then compared (S108). In FIG. 30, for example, the predetermined angle for vertex P1 is 90 degrees, and the predetermined angle for vertex P3 is 270 degrees. When the angle Ø1 from the x axis of the eye direction vector between the camera and vertex P1 is 120 degrees, then ABS(120 degrees − 90 degrees) = 30 degrees < 90 degrees (where 90 degrees is the reference value for determination in this case, and ABS is the absolute value) (S108), allowing it to be determined as the vertex on the further edge of the line (S110).

When the vertex Pn is on the nearer edge of a line object, no height adjustment is carried out for vertex Pn, and the data for the next line polygon vertex is read (S110, NO).

When the vertex Pn is on the further edge of a line object (S110, YES), it is determined whether or not the distance to vertex Pn is 10 m or less (S112). If it is 10 m or less (S112, YES), that is, when the camera is near the line, the line is normally visible on the screen, so the height of the vertex Pn is not adjusted, and the data for the next line polygon vertex is read.

When the distance to the vertex Pn is more than 10 m (S112, NO), that is, when the camera is remote from the line, the line is usually difficult to see, so the value in the y axis direction (heightwise direction) in the coordinate data for the vertex Pn on the further edge of the line is increased a certain amount to raise the further edge of the line polygon up from the ground (S114). This process is carried out for each of the vertices of all the line polygons in the screen (S116).

As a result, the further edges of the line objects situated in virtual game space are raised up, as shown in FIG. 27, allowing them to be easily seen from the camera.
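The angle comparison and height adjustment of steps S104 through S116 can be sketched as below. This is an illustrative reading rather than the patented program: `LineVertex`, `raise_far_edges`, and the `lift` amount are hypothetical, the wrap-around handling of angles is an added assumption, and the sketch assumes that a vertex whose predetermined angle lies within the 90 degree reference value of the camera-to-vertex angle is on the further edge.

```python
import math
from dataclasses import dataclass


@dataclass
class LineVertex:
    x: float
    y: float               # height above the ground
    z: float
    edge_angle_deg: float  # predetermined angle stored with the vertex (FIG. 31)


def view_angle_deg(cam_x, cam_z, vertex):
    """Angle from the x axis, in the x-z plane, of the vertex seen from the camera (S106)."""
    return math.degrees(math.atan2(vertex.z - cam_z, vertex.x - cam_x)) % 360.0


def angular_difference_deg(a, b):
    """Absolute difference of two angles, folded into 0..180 degrees (assumed wrap handling)."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def raise_far_edges(camera, vertices, reference_deg=90.0, min_distance=10.0, lift=0.05):
    """Raise far-edge vertices of distant line polygons; `lift` is a placeholder amount."""
    cam_x, cam_y, cam_z = camera
    for v in vertices:
        # S108/S110: compare the camera-to-vertex angle with the predetermined angle.
        diff = angular_difference_deg(view_angle_deg(cam_x, cam_z, v), v.edge_angle_deg)
        if diff >= reference_deg:
            continue  # nearer edge: no height adjustment
        # S112: a line close to the camera is already visible.
        if math.dist((cam_x, cam_y, cam_z), (v.x, v.y, v.z)) <= min_distance:
            continue
        # S114: raise the further edge up from the ground.
        v.y += lift
```

For the example in the text, `angular_difference_deg(120, 90)` gives 30 degrees, which is below the 90 degree reference value.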

A third embodiment of the present invention is described below. The third embodiment involves dividing the game field (soccer ground) into prescribed areas, determining the area in which the ball is located, and adjusting the camera angle so that the direction in which the ball is advancing (direction in which the player wants to look) can be readily seen.

FIGS. 32 through 34 illustrate the directions in which game device players move and desirable camera directions when moving in such directions.

First, as shown in FIG. 25, the camera basically moves along the sidelines and is directed in the player direction. Of course, the camera can move into the field to follow the game.

When players controlled by the game device players move in the direction to and away from the viewer (z axis direction) in the x-z plane (FIG. 32a), the camera is directed in the z axis direction (FIG. 32b).

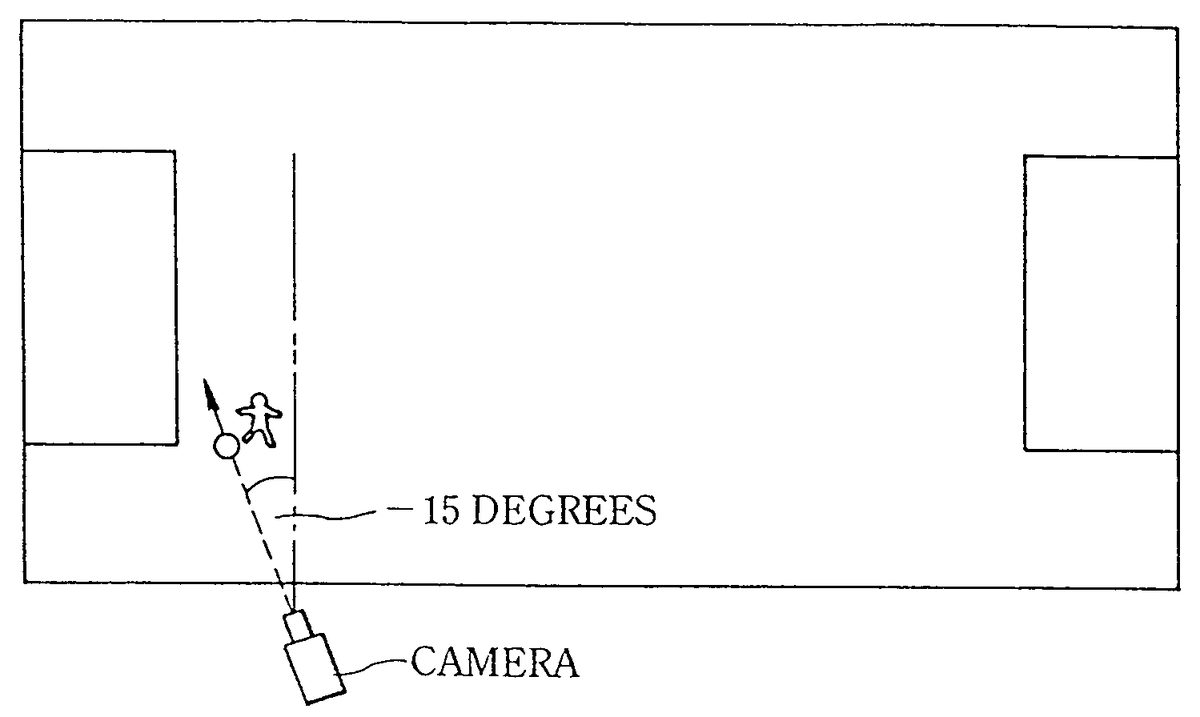

When the players controlled by the game device players move in the left direction (−x axis direction) in the x-z plane (FIG. 33a), the camera is turned from the z axis direction to a specific angle, such as −15 degrees, to increase the screen display in the area in the direction in which the ball is advancing (FIG. 33b). Here, the angle measured in the clockwise direction (positive direction) from the z axis is a positive value, and angles measured in the counterclockwise direction (negative direction) are negative values.

When players controlled by the game device players move in the right direction (x axis direction) in the x-z plane (FIG. 34a), the camera is turned from the z axis direction to a specific angle, such as 15 degrees, to increase the screen display in the area in the direction in which the ball is advancing (FIG. 34b).

An example in which the direction of the camera point of view is determined by combining the camera angle adjustment and the game field area is described with reference to FIGS. 35 and 36.

The main routine for lateral camera angle adjustment is first executed according to prescribed timing (conditions) determined in the main program not shown in the figure, and it is determined whether the main point of view of the camera is on the player side or the ball side (S132).

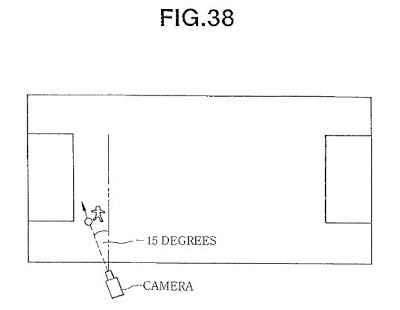

When it is on the player side (S132, player side), it is determined whether or not the main point of view is a prescribed distance, such as 8 m or less, from the soccer court penalty area (S134). When within 8 m (S134, YES), opponents and fellow teammates gather in the vicinity of the penalty area, with a greater opportunity to pass or shoot (FIG. 37), so the camera tilts about −15 degrees relative to the z axis to afford a better view of the vicinity of the penalty area (S136, FIG. 38).

When more than 8 m from the penalty area (S134, NO), the direction in which the player advances is determined (S138). When the player is moving toward the viewer (FIG. 39) or away from the viewer (FIG. 40), the angle of the camera in the x-z plane is 0 degrees relative to the player (S140, FIG. 41). When the player is moving to the left (FIG. 42), the angle of the camera relative to the player is −15 degrees from the z axis (S142, FIG. 43). When the player is moving to the right (FIG. 44), the angle of the camera relative to the player is 15 degrees from the z axis (S144, FIG. 45).

When the ball is the main point of view of the camera (S132, ball side), it is determined whether or not the distance between the ball and player is a prescribed distance, such as 15 m or more (S146). If 15 m or more (S146, YES, FIG. 46), the camera angle is determined so that the eye direction vector from the ball toward the player is at an angle of 20 degrees from the z axis (S154, FIG. 47). When the position between the player and ball is the opposite, relative to the z axis, the camera angle is determined so that the eye direction vector from the ball to the player is −20 degrees (S154, FIG. 48).

When the distance between the ball and player is not a specific distance such as 15 m or more (S146, NO), it is determined whether or not the main point of view of the camera is within 8 m of a penalty area (S148). When the main point of view of the camera is within 8 m of the penalty area (S148, YES, FIG. 49), the eye direction vector of the camera is set to an angle of −15 degrees (FIG. 50). When the position between the ball and player is the opposite, as shown in FIG. 48, the direction of the camera is set 15 degrees from the z axis.

When the distance between the ball and player is within 15 m, and the main point of view of the camera is not within 8 m of the penalty area (S148, NO, FIG. 51), the eye direction vector of the camera is set to 0 degrees relative to the ball (0 degrees relative to the z axis) (FIG. 52). When these processes are concluded, the system returns to the main program.
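The lateral angle selection of steps S132 through S154 amounts to a small decision tree, sketched below using the example thresholds and angles given above. The names `Focus`, `Move`, `lateral_camera_angle`, and the `mirrored` flag (standing in for the case where the positions of ball and player are the opposite relative to the z axis) are hypothetical and introduced only for illustration.

```python
from enum import Enum


class Focus(Enum):
    PLAYER = "player"
    BALL = "ball"


class Move(Enum):
    TOWARD_OR_AWAY = "z"
    LEFT = "-x"
    RIGHT = "+x"


def lateral_camera_angle(focus, penalty_area_distance, move=Move.TOWARD_OR_AWAY,
                         ball_player_distance=0.0, mirrored=False):
    """Return the camera angle from the z axis in degrees (clockwise positive)."""
    sign = -1 if mirrored else 1
    if focus is Focus.PLAYER:
        if penalty_area_distance <= 8.0:      # S134: near the penalty area
            return -15.0                      # S136
        if move is Move.LEFT:                 # S138: direction of advance
            return -15.0                      # S142
        if move is Move.RIGHT:
            return 15.0                       # S144
        return 0.0                            # S140
    # Ball is the main point of view.
    if ball_player_distance >= 15.0:          # S146
        return sign * 20.0                    # S154
    if penalty_area_distance <= 8.0:          # S148
        return sign * -15.0
    return 0.0
```

For instance, `lateral_camera_angle(Focus.PLAYER, 30.0, Move.RIGHT)` yields 15 degrees, while a ball focus with the ball 20 m from the player yields 20 degrees, or −20 degrees when `mirrored` is set.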

Occasionally, it becomes difficult to play at right angles to the direction in which the player on the screen is moving in cases where player movement in the x and z directions is input by the game device player using an input device such as a pad or joystick if the camera angle from the z axis is too great when the camera moves along the sidelines. A camera angle of about 15 degrees is thus advantageous.

The camera angle of the player in the x-z plane is thus adjusted according to areas in the game field, affording a better view in the direction in which the ball is advancing.

Vertical (y axis direction) camera angle adjustment is described below. FIG. 53 is a flow chart of the process for adjusting the vertical camera angle, which is executed according to prescribed timing (conditions) determined in the main program not shown in the figure. The camera height position is usually set, but not limited, to a height of about 10 m. The main routine, as shown in FIG. 54, establishes the angle at which the camera tracks the game field according to game areas. That is, the position of the main point of view of the camera is determined (S162). When the player is the main point of view (S162, player), it is determined whether or not the main point of view is near the penalty area (S164). When the main point of view is not near the penalty area (S164, NO), the camera direction is determined so that the eye direction vector of the camera is −8 degrees from the z axis, to afford a relatively broad range of vision (S166). Here, when the camera looks down, the angle is negative, when it looks up, the angle is positive, and when it is level, the angle is 0. When the main point of view is near a penalty area (S164, YES), the camera direction is determined so that the eye direction vector of the camera is −11 degrees from the z axis (S168). This allows the camera to give a better view overlooking the field, resulting in images with a better sense of depth and dimension.

When the ball is the main point of view (S162, ball), it is determined whether or not the main point of view is near a penalty area (S170). When the main point of view is not near the penalty area (S170, NO), the camera direction is determined so that the eye direction vector of the camera is −11 degrees from the z axis (S166). When the main point of view is near the penalty area (S170, YES), the camera direction is set so that the eye direction vector of the camera is −13 degrees from the z axis (S174).

Upon the conclusion of these processes, the system returns to the main program.
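The vertical angle selection reduces to a four-entry lookup, shown below together with one possible way of turning a lateral and a vertical angle into an eye direction vector. The table values are the examples from the text; the names `VERTICAL_ANGLE_DEG`, `vertical_camera_angle`, and `eye_direction`, and the convention that a positive lateral angle turns from the z axis toward the positive x axis, are assumptions made for this sketch.

```python
import math

# Example vertical angles in degrees; negative values look down.
VERTICAL_ANGLE_DEG = {
    ("player", False): -8.0,   # S166: player focus, not near a penalty area
    ("player", True): -11.0,   # S168: player focus, near a penalty area
    ("ball", False): -11.0,    # ball focus, not near a penalty area
    ("ball", True): -13.0,     # S174: ball focus, near a penalty area
}


def vertical_camera_angle(focus, near_penalty_area):
    """Look up the vertical camera angle for the main point of view and area."""
    return VERTICAL_ANGLE_DEG[(focus, bool(near_penalty_area))]


def eye_direction(lateral_deg, vertical_deg):
    """Unit eye direction vector (x, y, z) for the given angles (assumed convention)."""
    yaw = math.radians(lateral_deg)
    pitch = math.radians(vertical_deg)
    return (
        math.sin(yaw) * math.cos(pitch),  # x: left-right
        math.sin(pitch),                  # y: height
        math.cos(yaw) * math.cos(pitch),  # z: depth
    )
```

A call such as `eye_direction(0.0, vertical_camera_angle("player", False))` gives a vector pointing along the z axis and slightly downward.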

FIG. 55 is a flow chart of the camera zoom adjustment. When it is determined in the main program that camera zoom adjustment is needed, the process moves to the main routine.

First, it is determined whether or not the main point of view of the camera is near a penalty area (S182). When it is near a penalty area, the camera zooms down to a prescribed distance from the main point of view (S184). This allows the entire penalty area to be seen.

When the main point of view of the camera is not near the penalty area (S182, NO) and the player is outside the screen (S186, YES), the camera zooms down to project the player in the screen (S188). As shown in FIG. 56, when the player is in the screen (S186, NO) and the player is within ¾ of the screen (S190, YES), the camera zooms up to a prescribed distance from the main point of view (S190). This allows close ups of players in situations where a fixed range is not visible. When the player is projected on the screen but is not within ¾ of the screen (S190, NO), the distance between the camera and main point of view is maintained (S194).
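The zoom decision of steps S182 through S194 can be summarized as the following sketch. The `Zoom` enum and `zoom_action` function are hypothetical names, and the three boolean inputs are assumed to have been computed elsewhere; in particular, how "within ¾ of the screen" is measured is not specified here.

```python
from enum import Enum


class Zoom(Enum):
    OUT_TO_PRESET = "zoom down to a prescribed distance"
    OUT_TO_INCLUDE_PLAYER = "zoom down until the player is in the screen"
    IN_TO_PRESET = "zoom up to a prescribed distance"
    HOLD = "maintain the current distance"


def zoom_action(near_penalty_area, player_on_screen, player_within_three_quarters):
    """Choose the zoom adjustment for the current frame."""
    if near_penalty_area:                 # S182, YES
        return Zoom.OUT_TO_PRESET         # S184: show the entire penalty area
    if not player_on_screen:              # S186, YES: player is outside the screen
        return Zoom.OUT_TO_INCLUDE_PLAYER # S188
    if player_within_three_quarters:      # S190, YES
        return Zoom.IN_TO_PRESET          # close up of the player
    return Zoom.HOLD                      # S194
```

Because the penalty area test comes first, the camera pulls back near a penalty area regardless of where the player appears on the screen.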

FIG. 57 is a flow chart of another example enabling the display of objects which should be prevented from disappearing on the screen, such as the aforementioned line polygons.

In the figure, attribute data indicating that an object is to be prevented from disappearing is added to the data of polygons which should be prevented from disappearing. When object groups in the visual field of the virtual camera are displayed on the screen, the computer system determines whether or not there are polygons which are to be prevented from disappearing in the visual field (S202).

When there are polygons which should be prevented from disappearing, such as line polygons (S202, YES), a program that prevents polygons from disappearing is actuated. That is, as shown in FIG. 58, a unit eye direction vector is determined from the main point of view of the camera and the position of the camera (S204). The unit normal line vector is determined from the data for polygons which are to be prevented from disappearing (S206). The angle between the unit eye direction vector and the unit normal line vector is determined. This can be determined from the inner product of the unit eye direction vector and the unit normal line vector (S208). The polygon vertex coordinate values are adjusted so that the angle is at a prescribed angle (S210). The process from step 204 to step 210 is executed for each polygon that is to be prevented from disappearing in the visual field. Here, step 202 corresponds to means for preventing such disappearance, steps 204 through 208 correspond to angle computing means, and step 210 corresponds to polygon tilting means.

This allows lines and the like that are indispensable for a game to be prevented from disappearing.
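The angle computation of steps S204 through S208 can be illustrated as follows. For unit vectors the inner product equals the cosine of the angle between them, so the angle follows from an arccosine. The helper names are hypothetical, and the vertex adjustment of step S210 is left out because the prescribed angle is not given.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def unit(v):
    length = math.sqrt(dot(v, v))
    if length == 0.0:
        raise ValueError("zero-length vector has no direction")
    return tuple(x / length for x in v)


def eye_direction(camera_pos, main_point_of_view):
    """S204: unit vector from the camera position toward the main point of view."""
    return unit(sub(main_point_of_view, camera_pos))


def polygon_normal(p0, p1, p2):
    """S206: unit normal of the polygon from three of its vertices."""
    return unit(cross(sub(p1, p0), sub(p2, p0)))


def angle_deg(u, v):
    """S208: angle between two unit vectors via their inner product."""
    cosine = max(-1.0, min(1.0, dot(u, v)))  # clamp against rounding error
    return math.degrees(math.acos(cosine))
```

As a usage example, a camera at (0, 10, −10) looking at the origin and a ground polygon whose normal points straight up give an angle of 135 degrees between the eye direction vector and the normal line vector.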

The present invention is not limited to soccer games, and is applicable to a variety of games in which a ground or court is described by lines, such as tennis, baseball, basketball, volleyball, rugby, and American football.

Camera zooming is thus adjusted according to area and display status.

A program for executing the aforementioned image processing device and for executing the method for displaying images on a computer system may be provided as a recording on data recording media such as CD-ROMs, DVD-ROMs, and ROM cassettes.

The embodiment described with reference to FIGS. 8 through 17 is not limited to embodiments in which the surfaces of polygons A through D″ are mapped with textures of spectators facing the playing field, that is, examples of display objects displayed in three-dimensional virtual space serving as the game space. For example, among the textures mapped to polygon surfaces, portions of the background other than display objects modeled on spectators may be used as transparent bit textures, and portions of the display objects may be used as opaque bit textures.

FIG. 59 is a schematic of such textures. The opaque bit texture area includes the spectator display objects 300 and surrounding portions 301 as needed. FIG. 60 is an example of texture related to another spectator embodiment. The background 302 is similarly transparent bits, and the characters 304 are opaque bits. This texture is mapped to polygons other than polygons mapped with the texture in FIG. 59, and polygons mapped with the texture in FIG. 60 are arranged in front of the polygons mapped with the texture in FIG. 59, that is, more on the virtual point of view side. FIG. 61 shows a state in which these textures are layered, that is, superposed, as shown in FIGS. 8 through 17. The characters 304 in FIG. 60 are superposed on the transparent background portion of FIG. 59. Accordingly, when the polygons in FIGS. 59 and 60 are superposed, spectators with the two textures are displayed on the screen while blended, grouped, and superposed. When spectators which are these polygon characters are superposed, the spectators toward the bottom in three-dimensional space, that is, spectators of polygons having the lowest priority, are under polygons having the highest priority, and are not displayed on the screen.
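The layering of transparent and opaque bit textures can be pictured with the small sketch below, in which each texture is a grid of texels and the front-most opaque texel at each position is the one displayed. The `TRANSPARENT` marker, the `composite` function, and the grid representation are assumptions for illustration and do not reflect how the VDP actually resolves priority.

```python
TRANSPARENT = None  # marker for a transparent bit texel


def composite(layers):
    """Combine same-sized texel grids, given front-most (highest priority) first."""
    height = len(layers[0])
    width = len(layers[0][0])
    result = [[TRANSPARENT] * width for _ in range(height)]
    for row in range(height):
        for col in range(width):
            for layer in layers:
                texel = layer[row][col]
                if texel is not TRANSPARENT:
                    # The first opaque texel hides everything behind it.
                    result[row][col] = texel
                    break
    return result
```

With a front layer containing one opaque character texel and a rear layer of spectators, the rear spectators show through wherever the front layer is transparent and are hidden where it is opaque.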

Spectator movements can be reproduced or simulated in the same manner as the previous embodiment of spectator behavior by moving a plurality of polygons mapped with such textures along planes perpendicular or otherwise intersecting the direction in which the plurality of polygons are superposed, or in a direction intersecting the virtual camera facing polygons A through D″ in three-dimensional space, or in a direction intersecting the direction in which they are superposed, as shown in FIGS. 8 through 17.

FIGS. 62 and 63 are of a case in which the polygons 400 described in FIG. 59 and the polygons 402 described in FIG. 60 are superposed, where FIG. 62 shows the polygons 400 moving up, and FIG. 63 shows the polygons 402 moving up.

Thus, in the present invention, movements such as that of several display objects moving as in the case of spectators around a soccer game field, movements of groups consisting of several display objects, and movements in cases where several display objects are preferably divided into several blocks and are moved while the blocks are controlled (movements of animals and insects) can be created more efficiently. Such movements are produced in specific modes, such as in the case of soccer games and when athletes competing over a ball make a goal. It bears repeating that the textures described here may be picture data including characters such as spectators and background (clouds, waves, etc.). In addition, instead of constructing the background portions in FIG. 59 with transparent textures, texture of a single color may be used, and the texture in FIG. 60 may be constructed of texture with another color distinguishable from this single color. Furthermore, the background portion of FIG. 60 may be made of a single color texture, and the background portion of FIG. 59 can be used as it is with transparent colors, while at least the profiles of the characters in FIG. 59 are not the aforementioned single color. In the case of FIGS. 8 through 17, the polygons were arranged in, but are not limited to, positions so as to be gradually inclining upward in virtual space, so the polygons can also be arranged in a virtually level plane.

In the aforementioned embodiments, the eyes of a character were directed at a target when it was determined that the relation to the game contents or the positional relation such as the distance between characters and the target having a relation through the game to the aforementioned characters matched certain conditions, so that when a character competed while dribbling a ball in a soccer game, for example, the character looked in (surveyed) other directions to look for teammates or kicking zones, allowing the behavior of actual soccer contestants to be more realistically simulated, providing more natural movements, and achieving greater realism and immediacy.

The control of the point of view provides effects such as 1) affecting the strategies used in the game and the level of game difficulty, and 2) making it easier to understand and play situations or points to which the ball can (should) be passed based on the behavior of characters in the possession of the ball in ball games.

In the embodiments, a plurality of polygons mapped with texture modeled on a plurality of spectators are virtually superposed, and the plurality of polygons are moved in a direction intersecting the direction in which they are superposed, so that the variegated movements (more realistic behavior) of individual spectators can be represented. Software design is simplified, the computing load is reduced, and memory capacity can be reduced. These and other demands can be simultaneously addressed, and game immediacy can be further enhanced.
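As a rough illustration of the superposed spectator polygons, the following sketch assumes the polygons are stacked along the depth direction and each is displaced vertically, that is, in a direction intersecting the stacking direction. The sinusoidal motion, the phase shift per layer, and all parameter names are assumptions chosen for illustration only.

```python
import math

def layer_offsets(num_layers, frame, amplitude=1.0, period=30):
    """Vertical offset of each superposed spectator polygon at a given frame.

    Each layer receives a different phase, so textures sharing the same
    screen region appear to move independently of one another.
    """
    offsets = []
    for i in range(num_layers):
        phase = 2 * math.pi * (frame / period + i / num_layers)
        offsets.append(amplitude * math.sin(phase))
    return offsets
```

Only one offset per polygon is computed per frame, which is consistent with the reduced computing load noted above, since individual spectators are never modeled separately.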

Also in the embodiments, the time of the game being played by a game device player is sensed, and the screen colors for the images are determined according to that time by corrections based on screen colors previously optimized for games, so that presentations with changes in the screen colors are added according to the time in which the game is being played, allowing the screen colors to be constantly maintained in such a way as to avoid interfering with the game. It is also possible to avoid the drawbacks that occur when the screen color state is adjusted using only the luminance, as in the past, and it is furthermore possible to more consistently and accurately make the changes in brightness throughout the day compatible with the color state of the display screen, further enhancing game immediacy.
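The time-dependent color correction can be sketched as follows. The sketch assumes a small table of reference color scales at fixed hours and linear interpolation between them; the table values, the interpolation scheme, and the function names are hypothetical and merely stand in for the previously optimized screen colors mentioned above.

```python
# Hypothetical reference scales (R, G, B) at given hours of the day.
KEYFRAMES = [
    (0.0, (0.3, 0.3, 0.5)),
    (6.0, (0.9, 0.8, 0.7)),
    (12.0, (1.0, 1.0, 1.0)),
    (18.0, (1.0, 0.7, 0.5)),
    (24.0, (0.3, 0.3, 0.5)),
]

def color_scale_for_hour(hour):
    """Linearly interpolate the reference color scale for the given hour."""
    hour = hour % 24.0
    for (h0, c0), (h1, c1) in zip(KEYFRAMES, KEYFRAMES[1:]):
        if h0 <= hour <= h1:
            t = (hour - h0) / (h1 - h0)
            return tuple(a + (b - a) * t for a, b in zip(c0, c1))
    raise ValueError("hour outside keyframe range")

def correct_color(base_rgb, hour):
    """Scale each channel of a base color, clamping the result to 1.0."""
    scale = color_scale_for_hour(hour)
    return tuple(min(1.0, b * s) for b, s in zip(base_rgb, scale))
```

Because each channel is scaled separately, the hue shifts along with the brightness, in contrast to an adjustment made with luminance alone.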

Polygons which are difficult to see in virtual space due to the camera position sometimes occur in games which develop in three-dimensional virtual space, so in another embodiment, line objects drawn on the ground, for example, are prevented from disappearing by means of image processing.

Yet another embodiment affords a game device for adjusting the camera position, camera direction, range of visual field, and the like according to the direction in which the objects move or game areas, resulting in a screen that makes games easier to play.
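The area-dependent camera adjustment can be sketched as a simple lookup that follows the rule recited in the claims: a preset angle applies inside each predetermined area, and 0 degrees applies elsewhere. The sketch assumes axis-aligned rectangular areas on the ground plane and tests a ground position directly, whereas the claims tie this determination to the positional coordinates of the virtual camera. The class, field, and function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Area:
    """Hypothetical rectangular area with a preset camera angle in degrees."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float
    angle_deg: float

    def contains(self, x, y):
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max

def camera_angle_for(x, y, areas, default_deg=0.0):
    """Return the angle of the first area containing (x, y), else the default."""
    for area in areas:
        if area.contains(x, y):
            return area.angle_deg
    return default_deg
```

For example, two areas near opposite ends of the field could be given angles of opposite sign so that the camera turns toward the nearer goal, while positions in midfield fall back to 0 degrees.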

INDUSTRIAL APPLICABILITY

As described above, the image processing device of the present invention allows simulations (such as games) in virtual space to be displayed more visibly and with more immediacy for observers (such as individuals playing the game device). The invention is particularly suitable for video (or television) game devices.

Claims

- An image processing device comprising: image generating means for generating a game screen representing a view seen from a virtual camera disposed in virtual space, wherein a plurality of predetermined areas are defined in said virtual space and an angle of said virtual camera is set for each of said plurality of predetermined areas; object setting means for setting an object in said virtual space; object behavior controlling means for controlling behavior of said object in said virtual space based on an operation of a player; camera angle adjusting means for adjusting an angle at which said virtual camera views said object, based on positional coordinates of said virtual camera in said virtual space; and object positional determination means for determining whether said object is located in one of said plurality of predetermined areas based on the positional coordinates of said virtual camera; wherein said camera angle adjusting means angularly adjusts said virtual camera to 0 degrees if said object positional determination means determines that said object is not located in said plurality of predetermined areas; and said camera angle adjusting means adjusts said virtual camera to the angle set for the area in which said object is located if said object positional determination means determines that said object is located in any of said plurality of predetermined areas.

- The image processing device according to claim 1, wherein said camera angle adjusting means further adjusts the angle of said virtual camera based on a direction in which said object is moving.

- The image processing device according to claim 1, wherein said camera angle adjusting means can adjust the angle of said virtual camera in lateral and vertical directions in said virtual space.

- The image processing device according to claim 1, wherein the camera angle adjusting means further adjusts the angle of said virtual camera based on a distance between the object and a second object.

- An image processing method comprising the steps of: generating a game screen representing a view seen from a virtual camera disposed in virtual space, wherein a plurality of predetermined areas are defined in said virtual space and an angle of said virtual camera is set for each of said plurality of predetermined areas; setting an object in said virtual space; controlling behavior of said object in said virtual space based on an operation of a player; adjusting an angle at which said virtual camera view said object, based on positional coordinates of said virtual camera in said virtual space; determining whether said object is located in one of said plurality of predetermined areas based on the positional coordinates of said virtual camera; adjusting the angle of said virtual camera to 0 degrees if the object is determined in the determining step not to be located in said plurality of predetermined areas; and adjusting the angle of said virtual camera to the angle set for the area in which said object is located if said object is determined in the determining step to be located in any of said plurality of predetermined areas.

- The image processing method according to claim 5, further comprising the step of adjusting the angle of said virtual camera based on a direction in which said object is moving.

- The image processing method according to claim 5, further comprising the step of adjusting the angle of the virtual camera in lateral and vertical directions in the virtual space.

- The image processing device according to claim 5, further comprising the step of adjusting the angle of the virtual camera based on a distance between the object and a second object.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.