U.S. Pat. No. 7,522,166

VIDEO GAME PROCESSING METHOD, VIDEO GAME PROCESSING APPARATUS AND COMPUTER READABLE RECORDING MEDIUM STORING VIDEO GAME PROGRAM

Assignee: Kabushiki Kaisha Square Enix

Issue Date: November 29, 2002


Abstract

A simple model for an object to be processed is obtained, and the Z-values and display coordinates of the vertexes of the simple model from a predetermined viewpoint are calculated. A rectangular Z-area associated with the calculated display coordinates is detected, and an area of a predetermined size is generated from the detected Z-area while preserving its features. A minimum value Z1MIN of the simple model is extracted and compared with all of the Z-values within the generated area that are stored in a Z-buffer at that time. If the minimum value Z1MIN of the simple model is larger than the maximum value Z2MAX within the generated area, subsequent steps are skipped. Processing of the real model can thus be avoided, which reduces the amount of processing.

Description


DESCRIPTION OF THE PREFERRED EMBODIMENTS

An embodiment of the present invention will be described below with reference to the attached drawings. FIG. 1 is a block diagram showing an example of a configuration of a video game machine according to an embodiment of the present invention.

First, a video game machine according to an embodiment of the present invention will be described. A game machine 10 includes a game machine body 11 and a keypad 50 connected to an input side of the game machine body 11. A television set 100 having a cathode ray tube (CRT) and a speaker is connected to an output side of the game machine body 11.

The game machine body 11 includes a central processing unit (CPU) 12, a read only memory (ROM) 13, a random access memory (RAM) 14, a hard disk drive (HDD) 15, and a graphics processing portion 16. The game machine body 11 also includes a sound processing portion 17, a disk drive 18, a communications interface portion 19, a memory card reader/writer 20, and an input interface portion 21. All components are connected via a bus 22. The game machine body 11 is connected, through the input interface portion 21, to the keypad 50, which serves as an operation input portion.

A cross key 51 and a button group 52 are provided on the keypad 50. The button group 52 includes a circle button 52a, an X-button 52b, a triangle button 52c and a square button 52d. A select button 55 is provided at the joint between the base having the cross key 51 and the base having the button group 52. Multiple buttons, such as an R1 button 56 and an L1 button 53, are provided on the side of the keypad 50.

The keypad 50 includes switches linked respectively with the cross key 51, the circle button 52a, the X-button 52b, the triangle button 52c, the square button 52d, the select button 55, the R1 button 56 and the L1 button 53. When a button is pressed, the corresponding switch is turned on, and the keypad 50 generates a detection signal in accordance with the ON/OFF state of the switch.

The detection signal generated in the keypad 50 is supplied to the input interface portion 21. After passing through the input interface portion 21, the detection signal serves as detection information indicating which button on the keypad 50 is turned on. In this way, an operation instruction given by the user on the keypad 50 is supplied to the game machine body 11.

The CPU 12 performs overall control of the entire apparatus by executing an operating system stored in the ROM 13. The CPU 12 executes a video game program stored in a program area of the RAM 14. In addition, the CPU 12 monitors the manipulation state of the keypad 50 through the input interface portion 21 and executes the video game program stored in the program area of the RAM 14 as necessary. Various kinds of data derived from the progress of a game are stored in predetermined areas of the RAM 14 as necessary.

The RAM 14 includes a program area, an image data area, a sound data area and an area for storing other data. Program data, image data, sound data and other data read from a disk 30, such as a DVD or a CD-ROM, through the disk drive 18 are stored in the respective areas.

The RAM 14 is also used as a work area. Various kinds of data derived from the progress of a game are stored in the area for storing other data. Program data, image data, sound data and other data read from the disk 30 can also be stored in the hard disk drive 15. The data stored in the hard disk drive 15 may be transferred to the RAM 14 as necessary, and the various kinds of data derived from the progress of a game and stored in the RAM 14 may be transferred to and stored in the hard disk drive 15.

The graphics processing portion 16 includes, in a VRAM 23, a frame buffer serving as a buffer memory for storing image data and a Z-buffer for storing depth information. In accordance with control information sent from the CPU 12 during program execution, the graphics processing portion 16 determines whether an object can be displayed by executing the processing described later, referring to the Z-values (depth information) written in the Z-buffer at that time. The graphics processing portion 16 then writes each displayable object into the frame buffer by Z-buffering, generates video signals from the image data stored in the frame buffer at predetermined timing, and outputs the video signals to the television set 100. Thus, an image is displayed on a screen display portion 101 of the television set 100.

Specifically, the frame buffer stores image data, including color information, to be displayed at the respective display coordinates. The Z-buffer stores a Z-value, serving as depth information, for the image data stored at each display coordinate of the frame buffer. Based on this information, the image data stored in the frame buffer is displayed on the screen display portion 101 of the television set 100. The graphics processing portion 16 includes an image processing unit having a sprite function for deforming, enlarging and reducing an image, so an image can be processed in various ways in accordance with control information from the CPU 12.

The sound processing portion 17 has a function for generating sound signals such as background music (BGM), conversations between characters and sound effects. In accordance with control information from the CPU 12 during program execution, the sound processing portion 17 outputs the sound signals to a speaker 102 of the television set 100 based on data stored in the RAM 14.

The television set 100 has the screen display portion 101 and the speaker 102, and displays images and outputs sound in accordance with the content of the video game, based on video signals and/or sound signals from the game machine body 11.

The disk 30 (such as a DVD or a CD-ROM), which is a recording medium, can be removably loaded in the disk drive 18. The disk drive 18 reads program data, image data, sound data and other data of a video game stored on the disk 30.

The communications interface portion 19 is connected to a network 110. The communications interface portion 19 obtains various kinds of data by exchanging data with a data storage device and/or an information processing device, such as a server, located elsewhere. The program data, image data, sound data and other data of the video game stored in the RAM 14 may be obtained via the network 110 and the communications interface portion 19.

A memory card 31 can be removably loaded in the memory card reader/writer 20. The memory card reader/writer 20 writes small amounts of saved data, such as progress data and environment setting data of the video game, to the memory card 31.

A video game program for displaying a virtual space viewed from a virtual viewpoint on a screen is recorded on a recording medium according to one embodiment of the present invention, namely the disk 30. The disk 30 is readable by a computer (the CPU 12 and peripheral devices). By reading and executing the program, the computer can obtain a simple model that bounds a polygon group of an object in the virtual space. The computer can also calculate first depth information from the viewpoint and display coordinates with respect to each vertex of the simple model. Furthermore, the computer can obtain present depth information from the viewpoint with respect to an area corresponding to the display coordinates, and compare the first depth information with the present depth information. Finally, the computer can stop the execution of subsequent steps for the object when the first depth information indicates a depth that is deeper than the depth indicated by the present depth information.

Accordingly, in addition to the functions required for executing a conventional video game through software processing based on data stored in the memories of the CPU 12 and other parts together with the hardware of the game machine body 11, the game machine body 11 has the following unique functions relating to image processing: a first obtaining unit for obtaining a simple model that bounds a polygon group of an object in a virtual space; a calculating unit for calculating first depth information from a viewpoint and display coordinates with respect to a vertex of the simple model; a second obtaining unit for obtaining present depth information from the viewpoint with respect to an area corresponding to the display coordinates; a comparing unit for comparing the first depth information with the present depth information; and a stopping unit for stopping the execution of subsequent steps for the object when the first depth information indicates a depth that is deeper than the depth indicated by the present depth information.

In this case, the second obtaining unit of the game machine body 11 has a function for obtaining one piece of typical depth information for each group of multiple display coordinates in the area corresponding to the display coordinates, and the comparing unit has a function for comparing the first depth information with the typical depth information.

Therefore, image processing can be performed fast, making it possible to realize a video game that displays more detailed characters or more characters. These unique functions may also be implemented by dedicated hardware.

Next, the operation of this embodiment having the construction described above will be described. FIGS. 2 and 3 are schematic flowcharts showing the steps of the image processing according to the embodiment.

First, although not shown in FIG. 2, when the system is powered on, a boot program is read out, each component is initialized, and processing for starting a game is performed. In other words, program data, image data, sound data and other data stored on the disk 30 (such as a DVD or a CD-ROM) are read out by the disk drive 18 and stored in the RAM 14. At the same time, if required, data stored in the hard disk drive 15 or in a writable nonvolatile memory such as the memory card 31 is read out and stored in the RAM 14.

After various initial settings have been made so that the game can be started, the keypad 50 is manipulated, for example, to move a player character. When a request is then received to display an object from a predetermined viewpoint at the new position, the processing goes to step S1. At this point, the highest possible values are stored in the Z-buffer.

At step S1, a background model is obtained. A background model is a set of three-dimensional coordinate data representing the background of an image. When the background model has been obtained, the processing goes to step S2.

At step S2, the Z-value of each vertex of the background model from the predetermined viewpoint and the display coordinates of each vertex are calculated. When these have been calculated, the processing goes to step S3, where the Z-values currently stored in the Z-buffer at the display coordinates of the vertexes of the background model are compared with the Z-values calculated at step S2. It is determined whether the Z-values currently stored in the Z-buffer are larger than the Z-values of the vertexes of the background model, that is, whether the vertexes are nearer to the viewpoint than what has already been drawn.

When it is determined at step S3 that the Z-values currently stored in the Z-buffer are larger than the Z-values of the vertexes of the background model, the processing goes to step S4, where the Z-values of the vertexes of the background model are stored in the Z-buffer and the corresponding image data is written in the frame buffer. The processing then goes to step S5. When it is determined at step S3 that the stored Z-values are not larger than the Z-values of the background model, the processing goes directly to step S5.

At step S5, it is determined whether the same processing has been performed on the areas corresponding to all of the vertexes of the background model. Steps S3 to S5 are repeated until all of the areas have been processed, after which the processing goes to step S6.
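The background pass of steps S3 to S5 can be sketched in Python as follows. This is a minimal illustration, not the patent's implementation: it assumes the Z-buffer and frame buffer are plain 2D lists indexed `[y][x]`, that a smaller Z-value means nearer to the viewpoint (consistent with filling the Z-buffer with the highest values beforehand), and that step S2 has already produced integer display coordinates, a Z-value and a color for each vertex. The names `clear_buffers`, `depth_test_vertices` and `Z_FAR` are hypothetical, and, like the flowchart, the sketch tests individual vertexes rather than rasterizing polygons.

```python
from typing import List, Tuple

# Hypothetical vertex record produced by step S2: (x, y, z, color)
Vertex = Tuple[int, int, float, int]

Z_FAR = float("inf")  # assumed "highest value" written before drawing starts


def clear_buffers(width: int, height: int) -> Tuple[List[List[float]], List[List[int]]]:
    """Return a Z-buffer filled with the highest value and a blank frame buffer."""
    zbuf = [[Z_FAR] * width for _ in range(height)]
    fbuf = [[0] * width for _ in range(height)]
    return zbuf, fbuf


def depth_test_vertices(vertices: List[Vertex],
                        zbuf: List[List[float]],
                        fbuf: List[List[int]]) -> int:
    """Steps S3 to S5: write a vertex's Z-value and color only if the Z-value
    already stored at its display coordinates is larger (farther away).
    Returns the number of vertexes written."""
    written = 0
    for x, y, z, color in vertices:   # S5: loop until every vertex is handled
        if zbuf[y][x] > z:            # S3: stored value is farther
            zbuf[y][x] = z            # S4: store the nearer Z-value
            fbuf[y][x] = color        #     and write the image data
            written += 1
    return written
```

Because steps S14 to S16 apply the same comparison to the real model, the same routine could serve that pass as well.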

At step S6, it is determined whether another background exists. If so, the processing returns to step S1 and steps S1 to S6 are repeated. When it is determined at step S6 that no other background exists, the processing goes to step S7.

At step S7, a simple model of an object to be processed, such as a predetermined character, is obtained. The simple model is a set of three-dimensional coordinate data indicating the vertexes of a hexahedron that bounds the polygon group of the object.

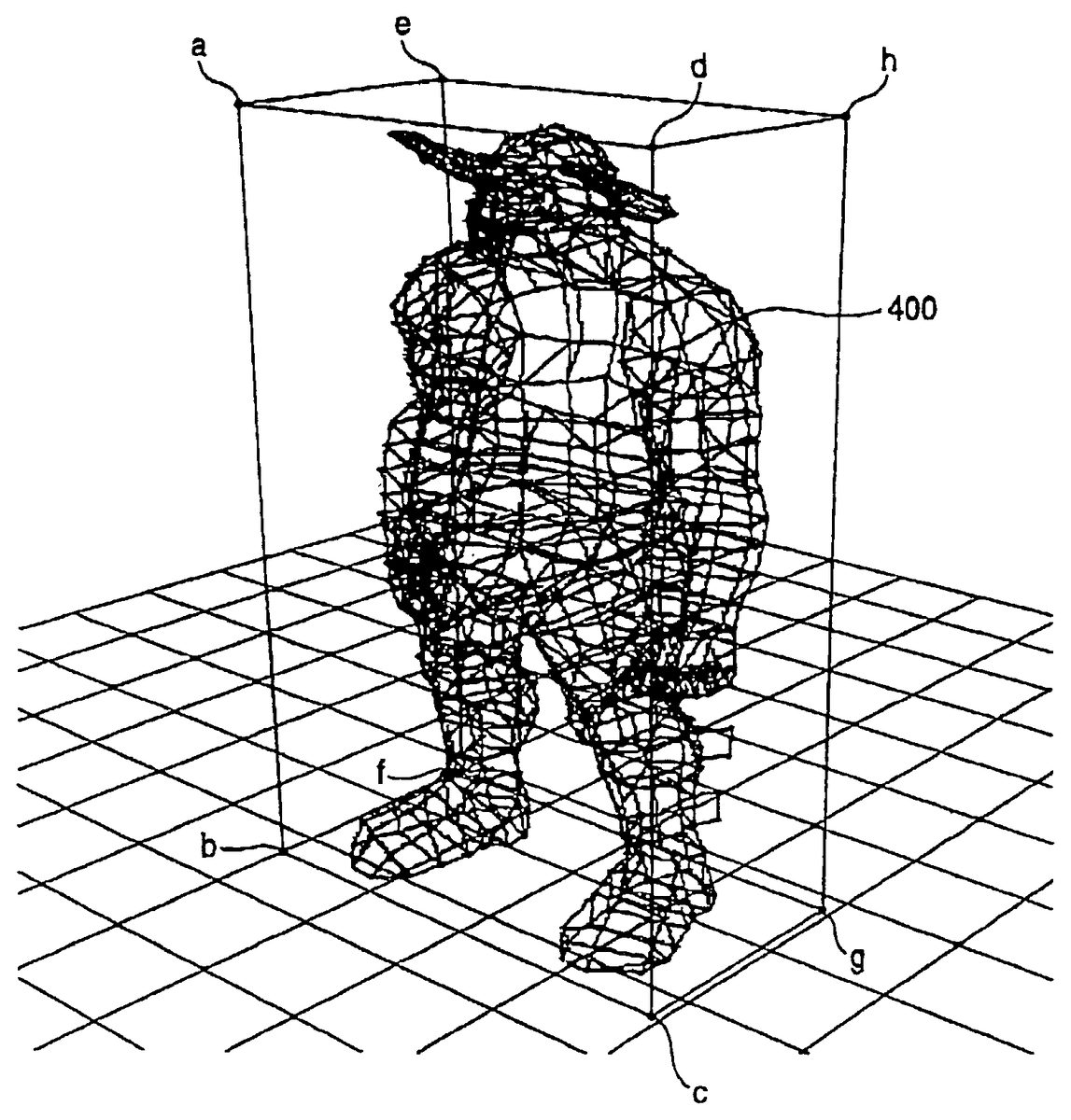

FIG. 4 is an explanatory diagram showing a specific example of the simple model. FIG. 4 includes a character 400 as an object. The square-pillar-like hexahedron bounding the character 400 is the simple model, which includes three-dimensional data for eight vertexes a, b, c, d, e, f, g and h. Referring back to FIG. 3, when the simple model has been obtained, the processing goes to step S8.
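One straightforward way to obtain such a bounding hexahedron is to take the axis-aligned bounding box of the object's vertexes. The sketch below assumes that choice; the patent only requires a hexahedron that bounds the polygon group and does not say how it is computed. The function name `bounding_hexahedron` is hypothetical, and so is the corner ordering: the four corners on the minimum-z face are treated as the front face (a to d) and the four on the maximum-z face as the back face (e to h).

```python
from typing import Iterable, List, Tuple

Point3 = Tuple[float, float, float]


def bounding_hexahedron(points: Iterable[Point3]) -> List[Point3]:
    """Return the 8 corners of the axis-aligned box that bounds `points`.

    Corners 0-3 lie on the z = min face (assumed front, a-d) and
    corners 4-7 on the z = max face (assumed back, e-h).
    """
    pts = list(points)
    if not pts:
        raise ValueError("object has no vertexes")
    xs, ys, zs = zip(*pts)
    x0, x1 = min(xs), max(xs)
    y0, y1 = min(ys), max(ys)
    z0, z1 = min(zs), max(zs)
    corners = []
    for z in (z0, z1):
        # walk each face in order: (x0,y0), (x1,y0), (x1,y1), (x0,y1)
        for x, y in ((x0, y0), (x1, y0), (x1, y1), (x0, y1)):
            corners.append((x, y, z))
    return corners
```

An axis-aligned box is cheap to compute but can be loose for rotated objects; a box aligned with the object's own axes would fit more tightly at the cost of a transform.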

At step S8, the Z-value of each vertex of the simple model from the predetermined viewpoint and the display coordinates of each vertex are calculated. When these have been calculated, the processing goes to step S9, where a rectangular Z-area corresponding to the display coordinates calculated at step S8 is detected, as described below.
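Step S8 amounts to projecting each vertex of the simple model. The sketch below assumes a simple pinhole camera placed at the viewpoint and looking along +z in camera space, a focal length expressed in pixels, and a screen origin at the top-left corner; none of these conventions comes from the patent, and `project_vertexes` is a hypothetical name. Under these assumptions the Z-value is simply the camera-space depth.

```python
from typing import List, Tuple

Point3 = Tuple[float, float, float]


def project_vertexes(vertexes: List[Point3], focal: float,
                     width: int, height: int) -> List[Tuple[float, float, float]]:
    """Return (screen_x, screen_y, z) for each camera-space vertex.

    Assumes the viewpoint is at the origin looking along +z, so the Z-value
    is the camera-space depth, and that every vertex has z > 0.
    """
    cx, cy = width / 2.0, height / 2.0
    out = []
    for x, y, z in vertexes:
        if z <= 0:
            raise ValueError("vertex behind the viewpoint; clipping not handled")
        sx = cx + focal * x / z   # perspective divide
        sy = cy - focal * y / z   # screen y grows downward
        out.append((sx, sy, z))
    return out
```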

When the Z-area has been detected, the processing goes to step S10, where an area reduced to a predetermined size is generated from the detected Z-area while preserving its features. This step uses the sprite function of the image processing unit provided in the graphics processing portion 16. Because a unit dedicated to this kind of processing is used, the step can be performed fast, and because an existing unit is reused, neither the scale of the hardware nor its cost needs to increase.

FIG. 5 is an explanatory diagram conceptually showing an example of the detection of the Z-area corresponding to the display coordinates and the generation of the area of the predetermined size from that Z-area. In FIG. 5, an area 401 is a Z-buffer covering a display screen of 600×400 pixels.

For example, when the Z-area corresponding to the eight vertexes of the simple model shown in FIG. 4 is detected at step S9, the rectangular area 402 in FIG. 5 is detected as the Z-area corresponding to the display coordinates.

In the example shown in FIG. 5, a 64×64 rectangular area is detected as the Z-area. However, the size of the detected rectangular area varies with the viewpoint and/or the orientation of the object. For example, when the viewpoint is directly across from the center of the front surface of the character 400, a rectangular area of a different size, having the four vertexes a, b, c and d at its corners, is detected as the Z-area.
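Detecting the Z-area of step S9 can be sketched as taking the pixel rectangle that bounds every projected vertex and clipping it to the Z-buffer. Rounding outward (floor of the minimum, floor of the maximum plus one) is an assumption chosen so that no covered pixel is left out; `detect_z_area` is a hypothetical name, and the rectangle is returned as half-open ranges `[x0, x1) × [y0, y1)`.

```python
import math
from typing import List, Tuple


def detect_z_area(screen_pts: List[Tuple[float, float]],
                  width: int, height: int) -> Tuple[int, int, int, int]:
    """Return (x0, y0, x1, y1): the half-open pixel rectangle bounding all
    projected vertexes, clipped to a width x height Z-buffer.
    The result is empty (x0 >= x1 or y0 >= y1) if the model is off screen."""
    xs = [p[0] for p in screen_pts]
    ys = [p[1] for p in screen_pts]
    x0 = max(0, math.floor(min(xs)))            # round the near edge down
    y0 = max(0, math.floor(min(ys)))
    x1 = min(width, math.floor(max(xs)) + 1)    # include the pixel holding the max
    y1 = min(height, math.floor(max(ys)) + 1)
    return x0, y0, x1, y1
```

With vertexes spanning display coordinates (300.0, 200.0) to (363.5, 263.2) on the 600×400 screen of FIG. 5, this returns (300, 200, 364, 264), a 64×64 rectangle like area 402.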

Then, using the sprite function of the image processing unit of the graphics processing portion 16, an area of a predetermined size, for example 32×32 pixels, is generated from the 64×64-pixel area 402 shown in FIG. 5 while preserving the characteristics of the detected Z-area. For example, the highest of the Z-values in each block of four adjacent pixels (2×2) is extracted as a typical value. Typical values are extracted over the entire area 402, yielding the 32×32 area.

Generating a reduced (32×32) area in this way keeps the amount of comparison processing in subsequent steps within a fixed bound. In this example, because the highest value of each block is used as the typical value, the maximum over the reduced area equals the maximum over the original Z-area, so the data reduction does not change the result of the comparison at step S11. Alternatively, the average of the Z-values over the pixels of each block may be used as the typical value, which also contributes to faster processing.
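The reduction of step S10 can be sketched as a block filter over the Z-area. This Python version assumes the Z-area has already been copied out of the Z-buffer as a 2D list, uses software loops in place of the sprite hardware the text describes, and keeps partial blocks at the edges when the size is not a multiple of the factor; `reduce_area` and `mean` are hypothetical names.

```python
from typing import Callable, List, Sequence

Grid = List[List[float]]


def reduce_area(area: Grid, factor: int,
                pick: Callable[[Sequence[float]], float] = max) -> Grid:
    """Shrink a Z-area by `factor` in each direction.

    Every factor x factor block of Z-values is replaced by one typical value
    chosen by `pick` (max by default; partial blocks at the edges are kept).
    """
    h, w = len(area), len(area[0])
    out = []
    for by in range(0, h, factor):
        row = []
        for bx in range(0, w, factor):
            block = [area[y][x]
                     for y in range(by, min(by + factor, h))
                     for x in range(bx, min(bx + factor, w))]
            row.append(pick(block))
        out.append(row)
    return out


def mean(values: Sequence[float]) -> float:
    """Average typical value (the alternative mentioned in the text)."""
    return sum(values) / len(values)
```

With `max`, the largest value in the reduced grid equals the largest value in the original Z-area, which is why the comparison at step S11 is unaffected. With `mean`, a block's typical value can fall below the farthest depth in that block, so the reduced area is no longer a strictly conservative bound.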

When the area of the predetermined size has been generated, the processing goes to step S11. At step S11, the minimum Z-value Z1MIN of the simple model is extracted and compared with all of the Z-values within the generated area that are currently stored in the Z-buffer. That is, the maximum Z-value Z2MAX within the generated area is extracted, and it is determined whether Z1MIN is larger than Z2MAX.

When it is determined at step S11 that the minimum value Z1MIN of the simple model is larger than the maximum value Z2MAX within the generated area, even the nearest point of the simple model lies behind everything currently drawn in that area (for example, the background), so the object cannot be seen from the viewpoint. Steps S12 to S16 are therefore skipped, and the processing goes to step S17. In this way, processing that uses the real model of an object that does not need to be displayed is not executed, and the overall processing becomes faster. When it is determined that Z1MIN is not larger than Z2MAX, the processing goes to step S12.
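The decision at step S11 reduces to a single comparison. In the sketch below, `is_hidden`, `process_object` and the `render_real_model` callback standing in for steps S12 to S16 are hypothetical names, and smaller Z-values are again assumed to be nearer to the viewpoint.

```python
from typing import Callable, Iterable, List


def is_hidden(simple_model_z: Iterable[float], area: List[List[float]]) -> bool:
    """Step S11: True when the nearest vertex of the simple model (Z1MIN)
    is farther than the farthest Z-value stored in the generated area (Z2MAX)."""
    z1_min = min(simple_model_z)
    z2_max = max(max(row) for row in area)
    return z1_min > z2_max


def process_object(simple_model_z: Iterable[float], area: List[List[float]],
                   render_real_model: Callable[[], None]) -> bool:
    """Run the real-model steps only for objects that may be visible.
    Returns True if the real model was processed."""
    if is_hidden(simple_model_z, area):
        return False          # steps S12 to S16 are skipped
    render_real_model()       # stands in for steps S12 to S16
    return True
```

The strict `>` means an object whose nearest point ties with the farthest stored depth is still processed, matching the "not larger than" branch in the text.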

At step S12, real model data is obtained for the object to be processed, such as the predetermined character. The real model data is a set of three-dimensional coordinate data indicating the vertexes of the object (for example, the character 400 in FIG. 4). When the real model has been obtained, the processing goes to step S13.

At step S13, the Z-values of the vertexes of the real model from the predetermined viewpoint and the display coordinates of those vertexes are calculated. When these have been calculated, the processing goes to step S14, where the Z-values currently stored in the Z-buffer at the display coordinates of the vertexes of the real model are compared with the Z-values calculated at step S13. It is determined whether the Z-values currently stored in the Z-buffer are larger than the Z-values of the vertexes of the real model.

When it is determined at step S14 that the Z-values currently stored in the Z-buffer are larger than the Z-values of the vertexes of the real model, the processing goes to step S15. At step S15, the Z-values of the vertexes of the real model are stored in the Z-buffer and image data is written in the frame buffer, after which the processing goes to step S16. When it is determined at step S14 that the stored Z-values are not larger than the Z-values of the vertexes of the real model, the processing goes directly to step S16.

At step S16, it is determined whether the same processing has been performed on the areas corresponding to all of the vertexes of the real model. Steps S14 to S16 are repeated until all of the areas have been processed, after which the processing goes to step S17.

At step S17, it is determined whether any other target object exists. If another object exists, the processing returns to step S7 and steps S7 to S17 are repeated. If no other object exists, the processing goes to step S18.

At step S18, display processing is performed on the predetermined objects to be processed, such as characters, and their images are displayed on the screen. The processing then ends, and the Z-buffer is reset to the highest values.

FIGS. 6A and 6B are explanatory diagrams showing the positional relationships of three objects to be processed. The rendering processing according to this embodiment will be described more specifically with reference to FIGS. 6A and 6B.

In FIGS. 6A and 6B, reference numeral 501 represents the viewpoint. FIG. 6A shows the positional relationship of the objects within the viewing angle on the XZ-plane, and FIG. 6B shows it on the ZY-plane. Each of FIGS. 6A and 6B includes a background model 502 and simple models 503 and 504 of the objects to be processed. The Z-values of the background model 502, serving as depth information, have already been stored in the Z-buffer.

First, the simple model 503 lies in front of the background model 502 with respect to the viewpoint 501. Therefore, it is determined that the minimum value Z1MIN of the simple model is not larger than the maximum value Z2MAX within the generated area of the predetermined size. As a result, the real model is obtained and undergoes the general processing: the Z-values of the vertexes forming the object bounded by the simple model 503 are written in the Z-buffer, and image data is written in the frame buffer.

The simple model 504, on the other hand, lies behind the background model 502 with respect to the viewpoint 501. Therefore, it is determined that the minimum value Z1MIN of the simple model is larger than the maximum value Z2MAX within the generated area, and the subsequent steps are skipped. The real model is neither obtained nor subjected to the general per-vertex processing, and nothing is written to the Z-buffer or the frame buffer.
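The FIG. 6 scenario can be reproduced numerically with a sketch that chains steps S9 and S11 for several objects. The depth values and screen coordinates used with it are invented for illustration, the simple models are given directly as already-projected vertexes, and the reduction of step S10 is omitted because the maximum of a max-reduced area equals the maximum of the original area. The name `visible_objects` is hypothetical, as is the handling of objects that fall entirely off screen, a case the text does not discuss.

```python
import math
from typing import Dict, List, Tuple

ScreenVertex = Tuple[float, float, float]  # (display x, display y, Z-value)


def visible_objects(zbuf: List[List[float]],
                    simple_models: Dict[str, List[ScreenVertex]]) -> List[str]:
    """Return the names of objects whose real models still need processing.

    For each simple model, the bounding pixel rectangle (step S9) is read from
    the Z-buffer, and its farthest stored value Z2MAX is compared with the
    model's nearest Z-value Z1MIN (step S11).
    """
    h, w = len(zbuf), len(zbuf[0])
    keep = []
    for name, verts in simple_models.items():
        x0 = max(0, math.floor(min(v[0] for v in verts)))
        y0 = max(0, math.floor(min(v[1] for v in verts)))
        x1 = min(w, math.floor(max(v[0] for v in verts)) + 1)
        y1 = min(h, math.floor(max(v[1] for v in verts)) + 1)
        if x0 >= x1 or y0 >= y1:
            continue  # entirely off screen (assumed handling)
        z2_max = max(zbuf[y][x] for y in range(y0, y1) for x in range(x0, x1))
        z1_min = min(v[2] for v in verts)
        if z1_min > z2_max:
            continue  # hidden: steps S12 to S16 would be skipped
        keep.append(name)
    return keep
```

For instance, with a Z-buffer filled with 10.0 by the background model 502, a simple model whose nearest Z-value is 4.0 is kept, while one whose nearest Z-value is 12.0 is dropped; if a single pixel inside the second model's rectangle still held a farther value (a gap in the background), that model would be kept instead.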

Next, another embodiment will be described. In the embodiment described above, the rectangular Z-area corresponding to the display coordinates calculated from the simple model is detected at step S9, and once the Z-area has been detected, the processing goes directly to step S10. In another embodiment, it may first be determined whether the Z-area is equal to or smaller than the predetermined size. If it is, the Z-area may be used directly as the generated area, and the processing may go to step S11. If the Z-area is larger than the predetermined size, the processing goes to step S10, where the area of the predetermined size is generated while preserving the features of the Z-area. Such processing limits the amount of comparison processing in subsequent steps while avoiding an unnecessary reduction step, making the processing more efficient.
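The alternative embodiment can be sketched as a guard in front of the reduction. This version assumes a square predetermined size of 32 pixels and, when the Z-area is larger, halves it repeatedly with a 2×2 maximum until it fits. The patent does not specify how arbitrary sizes are brought to the predetermined size, so the repeated halving (which may end below 32 pixels) and the name `generate_area` are assumptions.

```python
from typing import List

Grid = List[List[float]]


def generate_area(z_area: Grid, size: int = 32) -> Grid:
    """Use the Z-area as-is when it already fits in size x size pixels;
    otherwise halve it with a 2x2 maximum until it fits (step S10)."""
    if size < 1:
        raise ValueError("size must be at least 1")
    area = z_area
    while len(area) > size or len(area[0]) > size:
        h, w = len(area), len(area[0])
        # each output value is the farthest Z-value of a 2x2 (or edge) block
        area = [[max(area[y][x]
                     for y in range(by, min(by + 2, h))
                     for x in range(bx, min(bx + 2, w)))
                 for bx in range(0, w, 2)]
                for by in range(0, h, 2)]
    return area
```

Returning the original list unchanged for small areas is what saves work here: no copy or reduction is made when the Z-area is already within the predetermined size.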

In the embodiment described above, a square-pillar-like hexahedron (having rectangular faces) with eight vertexes that encloses the object is used as the simple model. However, other solids may be used. For example, a triangular-pillar-like pentahedron (having rectangular side faces) with six vertexes that encloses the object may be used as the simple model. A hexahedron other than a rectangular pillar may also be used.

Furthermore, in the embodiment described above, the simple model of a character object is obtained before the real model is processed. Alternatively, in another embodiment, objects may be divided into two groups in accordance with identification data attached to the vertex data of each object. For objects belonging to one group, the determination processing based on the simple model is performed; for objects belonging to the other group, the real model itself is processed directly.
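The grouping by identification data can be sketched as a simple partition. Here the identification data is modeled as a hypothetical boolean field `use_simple_model` on a hypothetical `GameObject` record, rather than being attached to each vertex as the text describes; `split_by_identification` is likewise a hypothetical name.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class GameObject:
    name: str
    use_simple_model: bool  # hypothetical stand-in for the identification data
    vertexes: List[Tuple[float, float, float]] = field(default_factory=list)


def split_by_identification(
        objects: List[GameObject]) -> Tuple[List[GameObject], List[GameObject]]:
    """Return (tested_first, processed_directly): objects that go through the
    simple-model test, and objects whose real model is processed as-is."""
    tested_first = [o for o in objects if o.use_simple_model]
    processed_directly = [o for o in objects if not o.use_simple_model]
    return tested_first, processed_directly
```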

Although the invention has been described with reference to several exemplary embodiments, it is understood that the words that have been used are words of description and illustration, rather than words of limitation. Changes may be made within the purview of the appended claims, as presently stated and as amended, without departing from the scope and spirit of the invention in its aspects. Although the invention has been described with reference to particular means, materials and embodiments, the invention is not intended to be limited to the particulars disclosed; rather the invention extends to all functionally equivalent structures, methods, and uses such as are within the scope of the appended claims. In addition, the components having the same functions are assigned the same reference numerals, in respective figures.

As described above, according to these embodiments, the use of a simple model allows rendering processing to be performed fast, so that, for example, more detailed characters or more characters can be displayed.

Claims

- A video game processing method for displaying a virtual space viewed from a virtual viewpoint on a screen, comprising: obtaining a three dimensional simple model that bounds a polygon group of a three dimensional object in the virtual space; calculating first depth information from the viewpoint and display coordinates with respect to a vertex of the simple model; obtaining present depth information from the viewpoint with respect to an area corresponding to the display coordinates, the area comprising a rectangle bounding all vertexes of the simple model as viewed from the viewpoint, the area varying when the viewpoint changes and/or when an orientation of the object changes; comparing the first depth information and the present depth information; and stopping further processing of the object when the first depth information indicates a depth that is deeper than a depth indicated by the present depth information.

- The video game processing method according to claim 1, wherein obtaining the present depth information further comprises obtaining one piece of typical depth information for each of a plurality of display coordinates in the area, and comparing further comprises comparing the first depth information with the typical depth information.

- The video game processing method according to claim 2, wherein the typical depth information comprises a maximum value for each of the display coordinates in the area.

- The video game processing method according to claim 1, wherein the simple model comprises a hexahedron having eight vertexes.

- The video game processing method according to claim 1, wherein identification information is added to data of the object, and wherein, when the identification information indicates that the simple model is used, the calculating the first depth information and display coordinates is performed.

- The video game processing method according to claim 1, wherein obtaining the present depth information further comprises transforming the area corresponding to the display coordinates; and the present depth information with respect to an area corresponding to the display coordinates is the depth information with respect to the transformed area corresponding to the display coordinates.

- A video game processing apparatus for displaying a virtual space viewed from a virtual viewpoint on a screen, comprising: a first obtaining system that obtains a three dimensional simple model bounding a polygon group of a three dimensional object in the virtual space; a calculator that calculates first depth information from the viewpoint and display coordinates with respect to a vertex of the simple model; a second obtaining system that obtains present depth information from the viewpoint with respect to an area corresponding to the display coordinates, the area comprising a rectangle bounding all vertexes of the simple model as viewed from the viewpoint, the area varying when the viewpoint changes and/or when an orientation of the object changes; a comparator that compares the first depth information with the present depth information; and a stopping system that stops further processing of the object when the first depth information indicates a depth that is deeper than a depth indicated by the present depth information.

- The video game processing apparatus according to claim 7, wherein the second obtaining system obtains one piece of typical depth information for each of a plurality of display coordinates in the area; and the comparator compares the first depth information with the typical depth information.

- The video game processing apparatus according to claim 8, wherein the second obtaining system is implemented by an image processing unit having an image reduction function, and the typical depth information for each of the display coordinates in the area is obtained by the image processing unit.

- The video game processing apparatus according to claim 8, wherein the typical depth information comprises a maximum value for each of the display coordinates in the area.

- The video game processing apparatus according to claim 7, wherein the simple model comprises a hexahedron having eight vertexes.

- The video game processing apparatus according to claim 7, wherein identification information is added to data of the object, and the first obtaining system further comprises a supply system, wherein, when the identification information indicates that the simple model is used, the supply system supplies the data of the object to the first obtaining system.

- The video game processing apparatus according to claim 7, wherein the second obtaining system that obtains the present depth information further comprises a transforming system that transforms the area corresponding to the display coordinates; and the present depth information with respect to an area corresponding to the display coordinates is the depth information with respect to the transformed area corresponding to the display coordinates.

- A computer readable recording medium on which is recorded a video game program for displaying a virtual space viewed from a virtual viewpoint on a screen, the program causing a computer to execute: obtaining a three dimensional simple model bounding a polygon group of a three dimensional object in the virtual space; calculating first depth information from the viewpoint and display coordinates with respect to a vertex of the simple model; obtaining present depth information from the viewpoint with respect to an area corresponding to the display coordinates, the area comprising a rectangle bounding all vertexes of the simple model as viewed from the viewpoint, the area varying when the viewpoint changes and/or when an orientation of the object changes; comparing the first depth information with the present depth information; and stopping further processing of the object when the depth information obtained by the first depth information indicates a depth that is deeper than a depth indicated by the present depth information.

- The computer readable recording medium according to claim 14, wherein obtaining the present depth information further comprises obtaining one piece of typical depth information for each of the plurality of display coordinates in the area; and comparing further comprises comparing the first depth information obtained with the typical depth information.

- The computer readable recording medium according to claim 15, wherein the typical depth information comprises a maximum value for each of the display coordinates in the area.

- The computer readable recording medium according to claim 14, wherein the simple model comprises a hexahedron having eight vertexes.

- The computer readable recording medium according to claim 14, wherein identification information is added to data of the object, and wherein, when the identification information indicates that the simple model is used, the calculating the first depth information and display coordinates is performed.

- The computer readable recording medium according to claim 14, wherein obtaining the present depth information further comprises transforming the area corresponding to the display coordinates; and the present depth information with respect to an area corresponding to the display coordinates is the depth information with respect to the transformed area corresponding to the display coordinates.
