U.S. Pat. No. 7,084,888

ORIENTATION DETECTION MARKER, ORIENTATION DETECTION DEVICE AND VIDEO GAME DEVICE

Assignee: Konami Corporation

Issue Date: August 5, 2002


Abstract

The invention provides an orientation detection marker that has a simple structure and supplies information enabling remote measurement of the orientation of a controller. Light source unit 13 (the orientation detection marker) provides so-called biaxial direction information: one axis along which dotted light sources 131 to 133 are disposed at equal intervals, and another axis along which dotted light sources 131, 134 and 135 are disposed at the same interval and which intersects the first axis at dotted light source 131 or the like.

Description

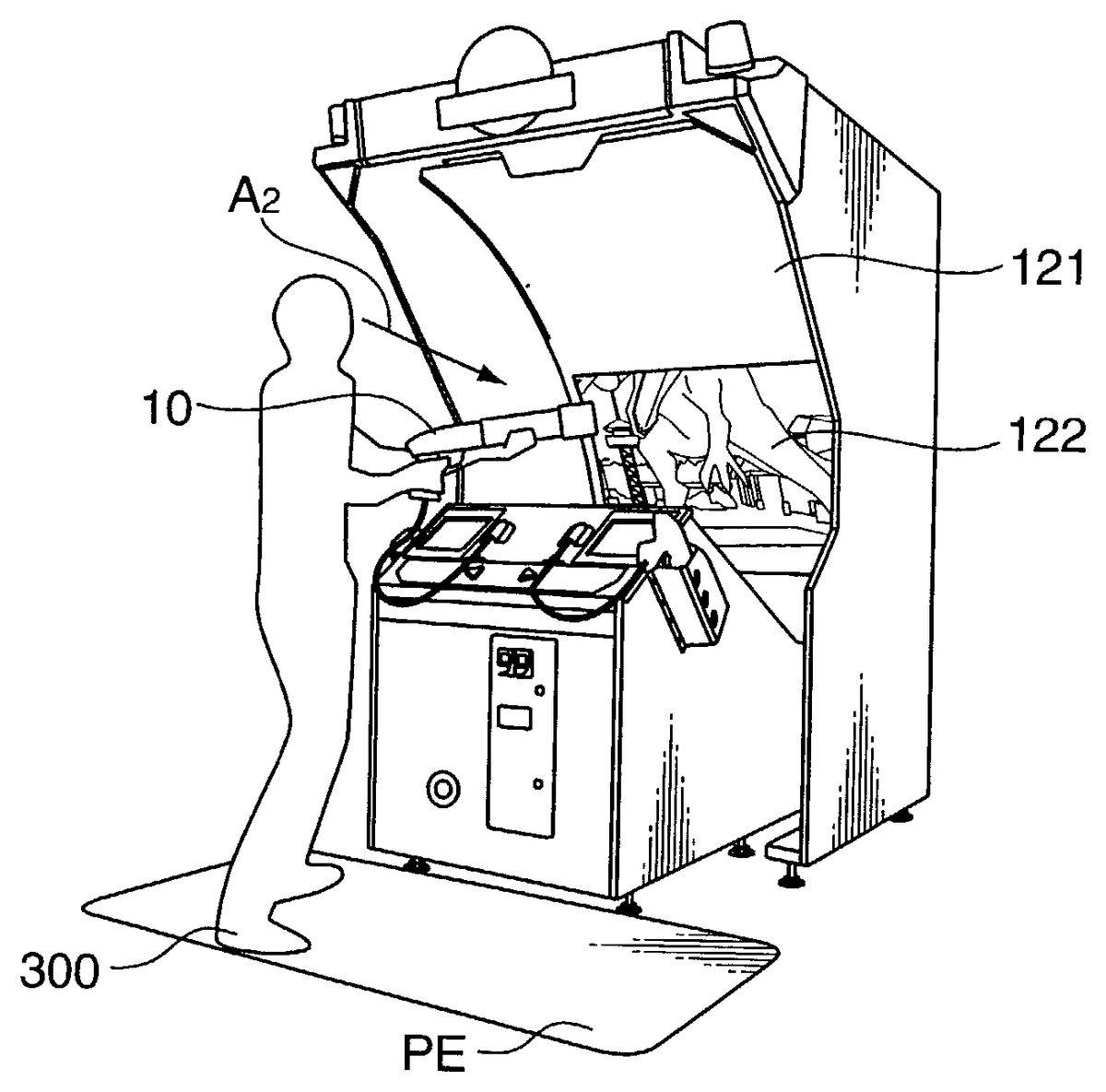


DESCRIPTION OF THE PREFERRED EMBODIMENTS

First Embodiment

The shooting video game machine pertaining to the first embodiment of the present invention is explained below. FIG. 1 is a diagram showing the shifting of the projected image on the screen 121 of the shooting video game machine. FIG. 1A shows the display of the projected image 122 at the lower part of the screen 121, and FIG. 1B shows the display of the projected image 123 at the upper part of the screen 121. FIG. 5 is a diagram showing the change in the displayed image accompanying the movement of the player 300 to the left and right. FIG. 2 and FIG. 4 are diagrams showing an example of the projected image 122 at the lower part, and FIG. 3 is a diagram showing an example of the projected image 123 at the upper part.

As shown in FIG. 1A and FIG. 1B, the player 300, standing in the play area PE (a prescribed area set in front of the game machine), operates the gun unit 10 and virtually shoots the dinosaur, the game character displayed in the projected images 122, 123 on the screen 121. The dinosaur, which has a 3D shape and moves over time within the game space (a virtual three-dimensional space), is rendered as an image captured from the virtual viewpoint (which corresponds to the preset reference viewpoint position of the player 300). When the dinosaur is far away, it is displayed in the projected image 122 as shown in FIG. 2; when it is nearby, it is displayed in the projected image 123 as shown in FIG. 3, or in the projected image 122 as shown in FIG. 4.

In particular, with the present game machine, the displayed contents shift continuously in the direction of arrow A1, from the projected image 122 (FIG. 1A) at the lower part of the screen 121 to the projected image 123 (FIG. 1B), in accordance with the virtual viewpoint moving within the game space and the position of the dinosaur. As the projected image shifts on the screen 121 in this way, the line of sight of the player 300 moves naturally from the lower part of the screen 121 to the upper part (from the direction of arrow A2 in FIG. 1A to the direction of arrow A3 in FIG. 1B).

Further, with the present game machine, the dinosaur within the game space is envisioned to attack the player. An image in which the dinosaur tries to bite the player on the play area PE, starting from the state in the projected image 123 of FIG. 3, is displayed on the upper part of the screen 121, and an image in which the dinosaur tries to kick (or lash its tail at) the player, starting from the state in the projected image 122 of FIG. 4, is displayed on the lower part of the screen 121.

The player 300 may avoid these attacks by moving to the left or right on the play area PE. Suppose the player 300, who is shooting at the head of the dinosaur as shown in FIG. 1B, senses that the dinosaur is about to attack and moves left or right (direction of arrow A4) on the play area as shown in FIG. 5. The present game machine detects this movement, sets the coordinates so that the virtual player (virtual viewpoint) moves away from the dinosaur within the game space, and displays a projected image 123 showing the player 300 moving away from the dinosaur (the dinosaur moves outward in the direction of arrow A5).

Although FIG. 3 and FIG. 4 show the upper and lower parts of an enormous dinosaur approaching the virtual viewpoint displayed on the upper and lower parts of the screen 121, a flying dinosaur (pterosaur) far from the virtual viewpoint may instead be displayed on the lower part of the screen 121. The displayed contents are then changed and shifted continuously to follow the flying dinosaur relative to the virtual viewpoint, so that the flying dinosaur is displayed on the upper part of the screen 121 as it approaches the virtual viewpoint.

FIGS. 6 to 11 will now be explained in order with respect to the structure of the present game machine that performs the overall operation described above. FIG. 6 and FIG. 7 relate to the structure for projecting images and for imaging the gun unit 10; FIG. 8 and FIG. 9 relate to the structure of the light source unit (marker), which is the detection target for detecting the direction of the muzzle 16; and FIG. 10 and FIG. 11 relate to the structure that protects the rotating mirror 43 and the like.

The structure for projecting images is explained first. FIG. 6 is a diagram showing the appearance of the present game machine, and FIG. 7 is a schematic cross section for explaining the shifting of the projected image on the screen 121.

As shown in FIG. 6, in the present game machine the projected image 124, projected by the projector 31 (FIG. 7) onto the screen 121 held by the screen retention table 120, shifts in the direction of arrow A6, and the gun units 10 and 20 are connected via the gun cable 17 to the main body control unit 100 (explained later with reference to FIG. 12). The projected image 124 contains a shooting target such as the dinosaur described above; the 1P player standing on the play area PE operates the gun unit 10 (or the 2P player operates the gun unit 20) to virtually shoot the target, and points are scored according to shooting skill, such as shot position and timing.

The four player detection sensors 51 to 54 mounted on the front face of the base 110 detect left and right movement of the 1P player in a one-player game (or of the 1P and 2P players in a two-player game). Side plates 125 are provided to prevent disturbance when detecting the muzzle direction (described later) relative to the screen 121 and when displaying images on the screen 121.

Further, the present game machine plays music and the like to liven up the game. To output these sounds during play, it is provided with a speaker (top) 32, speaker (left) 33 and speaker (right) 34 for the middle and high ranges, and a woofer speaker 35 for the low range. The speaker (top) 32 and speaker (left) 33 form one pair, and the speaker (top) 32 and speaker (right) 34 form another, for stereo playback.

When a coin of a prescribed amount is inserted into the coin insertion slot 38 and the start button 36 is pressed as prompted by the display on the screen 121, either a one-player game with only the 1P player or a two-player game with both the 1P and 2P players is started.

As shown in FIG. 7, the rectangular flat mirror 43 has a mirror axis 45 extending perpendicular to the plane of the diagram, and both ends of the mirror axis 45 are rotatably held by the mirror retention member 46. The rotation of the stepping motor 41, which is connected to the control unit described later, is transmitted to the mirror by the timing belt 44, and rotating the mirror 43 in the direction of arrow A7 shifts the projected image 124 on the screen in the direction of arrow A6.
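
By the law of reflection, turning a plane mirror by an angle a turns the reflected beam by 2a, so the mirror 43 only has to rotate half as far as the projected image is to swing. The sketch below turns a desired beam deflection into stepping-motor steps; the step angle and timing-belt ratio are hypothetical values that the patent does not specify.

```python
# Hypothetical drive parameters; the patent does not give them.
STEP_ANGLE_DEG = 1.8   # full-step angle assumed for stepping motor 41
BELT_RATIO = 3.0       # assumed motor turns per mirror turn through timing belt 44


def steps_for_beam_shift(beam_shift_deg: float) -> int:
    """Motor steps needed to swing the projected image by beam_shift_deg.

    Turning a plane mirror by a turns the reflected ray by 2a, so the
    mirror 43 rotates by half the requested beam shift.
    """
    mirror_deg = beam_shift_deg / 2.0          # mirror rotation
    motor_deg = mirror_deg * BELT_RATIO        # motor rotation through the belt
    return round(motor_deg / STEP_ANGLE_DEG)   # whole steps from the reference position
```

Counting steps from the rotational reference position found by the position sensor is what lets the controller designate an absolute display position.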

The reference viewpoint, set at a prescribed height and position in front of the present game machine, is associated with the virtual viewpoint within the game space, and it is assumed that a player of average height views the screen 121 from this reference viewpoint position.

Next, the structure for detecting the direction of the muzzle 16 is explained. A color CCD camera 6 serving as the imaging means is preferably mounted facing forward at a prescribed height and position at the left, right and center of the screen 121 shown in FIG. 7. The CCD camera 6 is directed at an angle θ so that its view includes at least the in-game muzzle position of the gun unit 10 operated by the player, and a camera with a prescribed field of view (the solid angle of the range shown with the chain line) is used. The CCD camera 6 is preferably built into a light-shielding housing 61 or the like so that it does not receive the light projected from the projector 31 onto the screen 121.

FIG. 8 is a diagram showing the structure of the gun unit 10 (the gun unit 20 is similar) as an example of the controller that accepts input operations from the player, and FIG. 9 is a diagram showing an example of the mounting structure of the marker provided on the gun unit 10 for detecting the direction of the muzzle 16 relative to the screen 121.

As shown in FIG. 8A, the gun unit 10 simulates a pump-action gun and has: a trigger switch 11, a microswitch that turns on when the player pulls the trigger 14 in the direction of arrow A8; a pump trigger switch 12, a microswitch that turns on when the player slides the sliding unit 15 in the direction of arrow A9; and a marker 13 for detecting the direction of the muzzle relative to the screen 121, that is, the point where the direction of the muzzle 16 (line-of-sight vector) intersects the screen 121. Signals from the trigger switch 11 and the pump trigger switch 12 are transmitted to the main body control unit 100 via the gun cable 17; turning on the trigger switch 11 designates a virtual shot, and turning on the pump trigger switch 12 designates the loading of a prescribed number of virtual bullets into the gun unit 10. When the marker 13 is a self-illuminating type rather than a reflective type, the gun cable includes a power supply line.

As shown in FIG. 8B, the marker 13 mounted on the tip (marker mounting section) of the muzzle 16 of the gun unit 10 comprises a plate-shaped substrate 130, on whose surface five dotted light sources, in the present embodiment LEDs 131 to 135 of identical shape emitting a prescribed color, are disposed at prescribed intervals, preferably at equal intervals in the vertical and horizontal directions. In the present embodiment, LED 131 is at the intersection of the vertical and horizontal axes; LEDs 132 and 133 are disposed at equal intervals along the vertical axis (LEDs 131 and 132 form the first light source unit, LEDs 132 and 133 form the second light source unit, and LED 132 is shared); and LEDs 134 and 135 are disposed at equal intervals along the horizontal axis (LEDs 131 and 134 form the third light source unit, LEDs 134 and 135 form the fourth light source unit, and LED 134 is shared). The mounting positions of LEDs 131 to 135 on the plate surface are stored, for example, in the ROM 105 of the main body control unit 100 shown in FIG. 12, as position information relative to LED 131. LEDs 131 to 135 are preferably emitters capable of emitting infrared light, to prevent erroneous detection caused by outside light. In a variant where LED 131 is not shared but provided separately for the vertical and horizontal axes, the number of LEDs becomes six; LEDs 132 and 134 need not be shared either. Further, the substrate 130 is colored so that the CCD camera 6 can distinguish it from the emission color of the LEDs; as described later, this enables measurement of the vertical and horizontal dimensions of the substrate 130 in the image, that is, of the distance to the CCD camera 6.
A CCD camera that receives only infrared light may, of course, also be used.
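
The position information stored in ROM can be pictured as a small table keyed by LED number, relative to LED 131. The sketch below is a hypothetical representation: the first coordinate runs along the axis of LEDs 131 to 133 and the second along LEDs 131, 134, 135, and separate pitches are allowed because the later text notes that the spacing need not be fixed as long as each pitch is known. With unit pitches it reproduces the positions Pa to Pe used in the normal-line computation.

```python
from typing import Dict, Tuple


def marker_layout(pitch_main: float = 1.0,
                  pitch_sub: float = 1.0) -> Dict[int, Tuple[float, float]]:
    """Plate-surface positions of LEDs 131-135 relative to LED 131 (hypothetical format).

    First coordinate: along the axis of LEDs 131, 132, 133.
    Second coordinate: along the axis of LEDs 131, 134, 135.
    """
    return {
        131: (0.0, 0.0),                  # shared LED at the intersection of both axes
        132: (pitch_main, 0.0),           # one interval along the first axis
        133: (2.0 * pitch_main, 0.0),     # two intervals along the first axis
        134: (0.0, pitch_sub),            # one interval along the second axis
        135: (0.0, 2.0 * pitch_sub),      # two intervals along the second axis
    }
```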

FIG. 8C is a diagram showing an example of an image captured with the CCD camera 6 and stored in the image memory; as illustrated, images of the luminescent spots 131a to 135a corresponding to the LEDs 131 to 135 are obtained. In this example the substrate is imaged as an ellipse (chain line), that is, in a posture facing an oblique direction, and the luminescent spots 131a to 135a are evidently mapped compressed in the left-right direction.

FIG. 9 is a diagram showing an example of the mounting structure of the dotted light sources. The LEDs 131 to 135 are mounted, for example, in an upright posture on the substrate 130 of a prescribed size, on which an LED drive circuit or the like is also mounted. Holes 130a, through which the illumination units of the LEDs 131 to 135 are exposed, are provided at prescribed positions on the substrate 130.

Taking LED 131 as an example, the hole 130a is formed in a conical shape that widens toward the front of the substrate 130, so that the light emitted from each LED can be radiated over a wide angle. Stud nuts 130c of a prescribed height are welded at two places on the left and right sides of the thin metal plate 130b, or fixed by press-fitting (into holes, not shown, formed in the thin metal plate 130b), and the mounting plate 130d and the thin metal plate 130b are integrated by placing the mounting plate 130d on these nuts 130c and tightening it with bolts 130e from the opposite face. The parts from the thin metal plate 130b to the bolt 130e form the LED supporting unit. Holes 130f are further formed at both ends of the thin metal plate 130b, and, although not shown, a triangular screw passes through the conical hole formed in the substrate 130 so that the substrate 130 and the muzzle 16 are integrated by tightening the bolt via the LED supporting unit.

Next, the structure that protects the rotating mirror 43 and the like is explained. FIG. 10 is a schematic cross section showing the acrylic plate 142 provided to protect the projection of the image from the projector 31, and FIG. 11 shows the structure of the acrylic plate retention member 141 (FIG. 11A) and the acrylic plate 142 (FIG. 11B).

As shown in FIG. 10, the acrylic plate 142 (FIG. 11B), installed to cover the mirror 43, the projector 31 and the like with its end passing through the acrylic plate guide groove 143 (FIG. 11A), transmits the images from the projector 31 and protects the interior housing the mirror 43, the projector 31 and so on from the outside. Further, the plate is inclined roughly 10° from the horizontal so that, when the real image is projected on the upper part of the screen 121, the virtual image formed by light reflected from the acrylic plate 142, the mirror 43 and the like falls outside the screen 121.

The control of the present game machine structured as above is now explained with reference to FIG. 12 onward. FIG. 12 is a block diagram showing the hardware structure of the control unit of the present game machine, and FIG. 13 is a flowchart showing the procedure of the shooting game processing (shooting video game program) executed by the game control unit (CPU) 103.

As shown in FIG. 12, the following are connected to the game control unit 103 of the main body control unit 100, which is installed in the base 110 (FIG. 6): the aforementioned CCD camera 6; trigger switches 11, 21; pump trigger switches 12, 22; player detection sensors 51 to 54; start button 36; projector 31; stepping motor 41; speakers 32 to 35; coin switch 37 for detecting a coin inserted through the coin insertion slot 38; and position sensor 42, which determines the rotational reference position of the mirror (at power-on) by means of a semicircular plate mounted on the mirror axis. The game control unit 103 continuously designates the display position of the projected image 124 on the screen 121 (FIG. 7) by designating the rotation angle from this rotational reference position.

The main body control unit 100 is provided with: a ROM 105 storing the program, image data and sound data for the shooting video game processing described later; a RAM 106 for temporarily storing the program read from the ROM 105 and the data it uses; a game control unit 103 for controlling the overall progress of the game based on the program loaded into the RAM 106; a drawing control unit (image drawing processor) 101 for writing image data corresponding to the projected image of the projector 31 into the frame buffer 102 while performing image-specific processing such as polygon drawing and texture mapping according to the coordinates of objects with 3D shapes in the game space; and a sound control unit (sound control processor) 104 comprising an ADPCM sound source for reproducing sounds from the sound data.

In the shooting video game processing executed by the game control unit 103, as shown in FIG. 13, while no coin insertion is detected by the coin switch 37 (NO at ST2), demo image data is read and a demo screen is displayed (ST1).

When coin insertion is detected (YES at ST2), the start screen is displayed (ST3). When pressing of the start button 36 is then detected, other game data is read (ST4), namely the image data and sound data that differ per stage and the data characterizing the attacks or movements of the enemy characters (the dinosaur or other shooting targets) and the movement of the player; the game start processing is then executed (ST5) and the game begins.

With the present game machine, as with conventional fighting game machines, the player has a virtual life that is reduced according to the time limit of the game and attacks by the enemy characters. The game ends when time runs out (YES at ST6) or when the life runs out (NO at ST7), and a game-over screen is displayed (ST13). If time remains (NO at ST6) and life remains (YES at ST7), the game continues in the game processing main body (ST8, described in detail later with reference to FIG. 15 and the like).

When a stage is cleared by defeating the enormous dinosaur shown in FIGS. 2 to 4 (YES at ST9) and the cleared stage is not the final stage (NO at ST10), the processing from ST4 is repeated for the new stage.

When the cleared stage is the final stage (YES at ST10), the markers 13, 23 are turned off (ST11), the ending screen and the game-over screen are displayed (ST12, ST13), and the routine returns to ST1.
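
The top-level flowchart can be traced with a short function. This is a sketch only: `play_stage` is a hypothetical callback standing in for the ST6 to ST9 loop, and the result strings and trace labels are invented for illustration.

```python
from typing import Callable, List


def run_session(coin_inserted: bool, final_stage: int,
                play_stage: Callable[[int], str]) -> List[str]:
    """Trace one pass through the FIG. 13 flowchart (hypothetical result codes).

    play_stage(stage) returns "cleared", "time_up" or "no_life".
    """
    if not coin_inserted:                     # ST2 NO
        return ["ST1 demo screen"]
    trace = ["ST3 start screen"]
    stage = 1
    while True:
        trace.append(f"ST4 read data for stage {stage}")
        trace.append("ST5 game start processing")
        result = play_stage(stage)            # ST6-ST8 repeat until an exit condition
        if result != "cleared":               # ST6 YES (time up) or ST7 NO (life gone)
            trace.append("ST13 game over screen")
            return trace
        if stage == final_stage:              # ST10 YES
            trace += ["ST11 markers off", "ST12 ending screen", "ST13 game over screen"]
            return trace                      # control then returns to ST1
        stage += 1                            # ST10 NO: repeat from ST4
```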

FIG. 14 is a block diagram showing the structure of the principal parts of the game processing unit 400 (part of the shooting video game program) that performs the processing of the game processing main body at ST8 of FIG. 13, and FIG. 15 is a flowchart showing the detailed procedure of the processing of the game processing main body at ST8. FIG. 16 and FIG. 17 are diagrams explaining the detection of the position of the player 300 on the play area PE with the player detection sensors 51 to 54 (in a one-player game with only the 1P player).

As shown in FIG. 14, taking the gun unit 10 as an example, the game processing unit 400 has the following processing units relating to the player (the virtual viewpoint, or virtual player within the game space): a light-up processing unit 400a for lighting the marker during the game; a muzzle direction detection unit 401 for detecting the position on the screen 121 at which the muzzle is aimed, based on the image captured with the CCD camera 6; an I/O input unit 402 for inputting the on-states of the pump trigger switch 12 and trigger switch 11 and the detection status of the player detection sensors 51 to 54; a bullet-loading processing unit 403 for loading a prescribed number of virtual bullets when the pump trigger switch 12 is turned on; a bullet position computation unit 404 for setting coordinates that move the bullets from the vicinity of the virtual viewpoint within the game space, in a direction according to the direction of the muzzle 16, when the trigger switch 11 is turned on; a viewpoint position shifting unit 405 for ordinarily shifting the virtual viewpoint within the game space (by a shifting width designated in advance) and for shifting it to avoid the dinosaur when the player detection sensors 51 to 54 detect movement of the player on the play area PE; and a collision judgment unit 406 for judging whether a virtual attack from the enemy hit the player.

Further, as processing units relating to the enemy characters, the game processing unit 400 has: an enemy attack setting unit 407 for generating an attack on the player (using random numbers, etc.) when an enemy character is sufficiently close to the player; an enemy movement processing unit 408 for moving an enemy character by setting its coordinates so that it chases the player within the game space; and an enemy collision judgment unit 409 for judging whether a virtual attack from the player hit the enemy. The game processing unit 400 further has an image processing unit 410, which sets data instructing the drawing control unit 101 to draw based on the enemy character coordinates and rotates the stepping motor 41 according to where on the screen 121 the projected image of the projector 31 is to be displayed (for example, on the upper or lower part); and a sound processing unit 411, which sets data instructing the sound control unit 104 to selectively reproduce sounds (including music) according to the game progress.

In the game processing main body executed by the game processing unit 400, which includes each of the foregoing processing units, the marker 13 is first lit (ST80), as shown in FIG. 15; muzzle direction detection processing is then performed by the muzzle direction detection unit 401 (ST81, described in detail later with reference to FIG. 18 and the like); and the response status of the pump trigger switch 12, trigger switch 11 and player detection sensors 51 to 54 is obtained through the I/O input unit 402 (ST82).

If the pump trigger switch 12 is responding (YES at ST83), bullets are virtually loaded by the bullet-loading processing unit 403 (ST84). If the trigger switch 11 is responding (YES at ST85), the coordinates representing the trajectory of the bullets within the game space are computed (ST86) according to the direction of the muzzle 16 relative to the screen, as detected by the muzzle direction detection unit 401.

If the response state of the player detection sensors 51 to 54 matches a prescribed pattern indicating movement of the player 300 on the play area PE (YES at ST87), the viewpoint position shifting unit 405 sets an avoidance movement of the virtual viewpoint (ST88); otherwise (NO at ST87), the normal movement of the virtual viewpoint is set (ST89).

In more detail, the player detection sensors 51 to 54 are range sensors that measure the distance to an obstacle using ultrasonic waves or infrared rays and turn their signal on when that distance is less than a prescribed distance (a distance corresponding to the player 300 standing on the play area PE); the distance need not be measured accurately. As shown in FIG. 16 and FIG. 17, when it is detected that the 1P player has moved from the ordinary reference position in front of the player detection sensor 52 on the inner left side to the front of the player detection sensor 51 on the outer left side, the virtual viewpoint coordinates within the game space are set on the assumption that the player has moved around to the left side of the dinosaur.

In particular, because movement to the left is detected with a combination of two player detection sensors, as shown in FIG. 17, it is possible to detect “a state where nobody is playing” and “a state where a bystander other than the player is erroneously recognized” in addition to the movement itself, so the movement of the player can be detected more accurately.

Similarly, for movement of the 1P player to the right (FIG. 16), when it is detected that the player has moved to the front of the player detection sensor 53 on the inner right side (or the player detection sensor 54 on the outer right side), the virtual viewpoint coordinates within the game space are set on the assumption that the player has moved around to the right side of the dinosaur.

Alternatively, the reference position may be set in front of the player detection sensor 53 on the inner right side. In that case, when the player is detected in front of the player detection sensor 54 on the outer right side, the virtual viewpoint coordinates may be set on the assumption that the player has moved around to the right side of the dinosaur, and when the player is detected in front of the player detection sensor 52 on the inner left side (or the player detection sensor 51 on the outer left side), they may be set on the assumption that the player has moved around to the left side of the dinosaur.
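
The sensor logic for the first arrangement (reference position in front of sensor 52) can be sketched as a small decision function. The exact truth table, in particular treating simultaneous hits on both sides as bystanders rather than a move, is a hypothetical reading of the text, not the patent's stated rule.

```python
def classify_movement(s51: bool, s52: bool, s53: bool, s54: bool) -> str:
    """Classify the 1P player's position from the four range sensors (hypothetical rules).

    Each flag is True when that sensor sees something nearer than the prescribed
    distance. Reference position: in front of sensor 52 (inner left).
    """
    if not (s51 or s52 or s53 or s54):
        return "nobody"          # nobody is playing
    if s52:
        return "normal"          # still at the reference position (ST89)
    if s51 and (s53 or s54):
        return "normal"          # hits on both sides: treated as bystanders, not a move
    if s51:
        return "avoid_left"      # moved to outer-left sensor 51 (ST88)
    return "avoid_right"         # moved to sensor 53 or 54 (ST88)
```

Requiring sensor 52 to go quiet before a move is accepted is what lets two sensors together reject the "nobody" and "bystander" cases described above.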

When the collision judgment unit 406 judges that an attack from an enemy character hit the player (YES at ST90 of FIG. 15), the player's life is reduced and the player's life gauge display (a bar representing the player's life value on the screen) is updated (ST91).

When the enemy attack setting unit 407 generates an attack from an enemy character on the player (YES at ST92), the coordinates within the game space of the attacking parts of the enemy character, such as the mouth, arms, legs and tail, are set (ST93) so that the attack on the player originates from those parts. When enemy character movement is set by the enemy movement processing unit 408 (YES at ST94), the enemy character's coordinates within the game space are moved (ST95). Next, when the enemy collision judgment unit 409 judges that an attack from the player hit the enemy character (YES at ST96), the enemy's life is reduced and the enemy life gauge display is updated (ST97).

One or more enemy characters may exist in the game space, and the processing of ST92 to ST97 is repeated for every enemy character while the target enemy character is updated (NO at ST98). When the processing of ST92 to ST97 is complete for all enemy characters (YES at ST98), image display processing by the image processing unit 410 (ST99) and sound output processing by the sound processing unit 411 (ST100) are performed, and the game processing main body returns to its beginning.
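
The order of checks in one pass of FIG. 15 can be summarized in a per-frame function. This is a minimal sketch under stated assumptions: the magazine size, the starting life, and the rule that each shot consumes one loaded bullet are hypothetical, the inputs are assumed to have been gathered already by ST80 to ST82, and the enemy attack and movement steps (ST92 to ST95), drawing and sound are left out.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

MAGAZINE_SIZE = 6  # hypothetical "prescribed number" of virtual bullets per pump


@dataclass
class Enemy:
    life: int
    hit_by_player: bool = False    # result of the enemy collision judgment (ST96)


@dataclass
class GameState:
    bullets: int = 0
    player_life: int = 10          # hypothetical starting life value
    shots: List[Tuple[float, float]] = field(default_factory=list)
    enemies: List[Enemy] = field(default_factory=list)


def game_frame(st: GameState, aim: Tuple[float, float], pump: bool, trigger: bool,
               avoid_pattern: bool, player_hit: bool) -> str:
    """One pass of the FIG. 15 loop after ST80-ST82 have produced the inputs."""
    if pump:                                   # ST83 YES -> ST84: load bullets
        st.bullets = MAGAZINE_SIZE
    if trigger and st.bullets > 0:             # ST85 YES -> ST86: fire along the muzzle
        st.bullets -= 1                        # assumption: one bullet per shot
        st.shots.append(aim)
    viewpoint = "avoid" if avoid_pattern else "normal"   # ST87 -> ST88 / ST89
    if player_hit:                             # ST90 YES -> ST91: reduce life
        st.player_life -= 1
    for enemy in st.enemies:                   # repeated per enemy until ST98 YES
        if enemy.hit_by_player:                # ST96 YES -> ST97
            enemy.life -= 1
    return viewpoint                           # ST99 / ST100 omitted
```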

FIG. 18 is a block diagram showing the structure of the principal parts of the muzzle direction detection unit 401 for performing the muzzle direction detection processing at ST81 of FIG. 15; FIG. 19 is a flowchart showing the detailed procedure of the muzzle direction detection processing at ST81; and FIG. 20 is a diagram showing the detailed procedure of the extraction processing of the dotted light sources at ST815.

As shown in FIG. 18, the muzzle direction detection unit 401 has: an image processing unit 4011 for repeatedly capturing, at a prescribed cycle, the CCD image (the picture from the CCD camera 6, with per-pixel data stored in a prescribed area of the RAM 106); a binary processing unit 4012 for binarizing the data of each captured pixel against a prescribed threshold and storing the result in a prescribed area of the RAM 106 as luminescent spot data, or for binarizing after first storing the data temporarily; a coordinate data extraction unit 4013 for extracting the coordinates at which binarized luminescent spot data exist and arranging the data in a prescribed way; a straight line judgment unit 4014 for successively selecting combinations of three spots from the extracted luminescent spot data and judging whether the three spots lie on a straight line at equal intervals; a vicinity judgment unit 4015 for judging whether another luminescent spot exists near the spot at one end of three spots lying on a straight line (the main axis); a dotted light source specification unit 4016 for associating luminescent spots with dotted light sources based on the judgments of both judgment units; a normal line computation unit 4017 for computing the direction of the muzzle 16 of the gun unit 10 (the normal direction of the substrate 130) from the position information of the associated luminescent spots and their dotted light sources; and an intersecting point (H, V) computation unit 4018 for computing the point (impact position) at which the computed normal direction of the substrate 130 intersects the screen 121. The CCD image also contains luminescent spots other than the LEDs, that is, noise caused by infrared rays contained in natural light and in the light of fluorescent and discharge lamps.
The straight line judgment unit 4014 and the vicinity judgment unit 4015 therefore eliminate this noise by performing the judgment processing for all combinations.
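
A minimal sketch of the straight line and vicinity judgments over all combinations of spots follows. Two choices here are assumptions rather than the patent's method: the vicinity judgment is approximated by requiring the two remaining spots to form a second equal-interval line from the same end spot, and a hypothetical handedness convention (a positive 2D cross product) distinguishes the axis of LEDs 131 to 133 from the axis of LEDs 131, 134, 135.

```python
import math
from itertools import permutations
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]


def is_equal_line(p: Point, q: Point, r: Point, tol: float) -> bool:
    """True if p, q, r are nearly collinear with q halfway between p and r."""
    span = math.dist(p, r)
    if span == 0.0:
        return False
    mid = ((p[0] + r[0]) / 2.0, (p[1] + r[1]) / 2.0)
    return math.dist(mid, q) <= tol * span


def cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); its sign gives the turn direction."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def identify_leds(spots: List[Point], tol: float = 0.05) -> Optional[Dict[int, int]]:
    """Map LEDs 131-135 to indices in `spots`, or None if no marker is found."""
    for a, b, c in permutations(range(len(spots)), 3):
        if not is_equal_line(spots[a], spots[b], spots[c], tol):
            continue                                  # straight line judgment failed
        rest = [i for i in range(len(spots)) if i not in (a, b, c)]
        for d, e in permutations(rest, 2):
            # stand-in for the vicinity judgment at end spot a
            if not is_equal_line(spots[a], spots[d], spots[e], tol):
                continue
            if cross(spots[a], spots[b], spots[d]) > 0:   # hypothetical handedness rule
                return {131: a, 132: b, 133: c, 134: d, 135: e}
    return None
```

Exhaustive permutations grow quickly with the number of spots, which is acceptable here because only a handful of bright spots survive binarization.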

Here, the normal line computation unit 4017 and the intersecting point (H, V) computation unit 4018 are explained in further detail. When the substrate 130 is flat, the positions of the LEDs 131 to 135 of the marker are Pa (0, 0), Pb (1, 0), Pc (2, 0), Pd (0, 1), Pe (0, 2); when these are extended into three-dimensional space, the positions become Oa (0, 0, 0), Ob (1, 0, 0), Oc (2, 0, 0), Od (0, 1, 0), Oe (0, 2, 0). When these points are viewed from the CCD camera 6 (with the CCD camera 6 as the origin), their coordinates are expressed through a three-dimensional transformation matrix as Ca (tx, ty, tz), Cb (tx+r00, ty+r01, tz+r02), Cc (tx+2×r00, ty+2×r01, tz+2×r02), Cd (tx+r10, ty+r11, tz+r12), Ce (tx+2×r10, ty+2×r11, tz+2×r12). Meanwhile, the dimension ratios ph and pv between the visual field of the CCD camera and the actual substrate 130 in the horizontal and vertical directions (which may also be obtained from the imaged dimensions of the substrate 130 relative to its actual dimensions), and the coordinates Ga (Ah, Av), Gb (Bh, Bv), Gc (Ch, Cv), Gd (Dh, Dv), Ge (Eh, Ev) of the respective points in the CCD image, are all known. Thus, the variables tx, ty, tz and r00 to r12 can be computed from these known data and the coordinates of the respective points viewed from the CCD camera 6, and the components (r20, r21, r22) of the normal direction of the substrate 130 are thereby determined. The coordinates Cc and Ce contain exactly twice the values r00, r01, r02 and r10, r11, r12 (that is, values in proportion) because the substrate 130 is modeled as a plane. If the substrate 130 is in fact a curved surface, the three-dimensional matrix should be defined with each coordinate taking the curvature of that surface into account.
By conducting the foregoing computation, even where, depending on the direction of the muzzle 16, it is indeterminate from the CCD camera 6 whether LED 131 or LED 133 is the nearer one, the direction can still be specified by employing the dimension information of LED 131, LED 132, LED 133 and of LED 131, LED 134, LED 135; that is, by employing the dimensional relationship that holds when the muzzle 16 is in the correct position, and the ratio thereof.
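The relationship between these camera-frame coordinates and the normal (r20, r21, r22) can be illustrated with a short Python sketch. It assumes that Ca to Ce have already been recovered in the camera frame (the text obtains them from the image coordinates and the ratios ph, pv; that solving step is not shown), that the substrate is flat with unit pitch, and that r0 and r1 are perpendicular, so the normal can be taken as their cross product. All function names are hypothetical.

```python
import math

Vec3 = tuple[float, float, float]


def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def scale(a: Vec3, k: float) -> Vec3:
    return (a[0] * k, a[1] * k, a[2] * k)


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(a: Vec3) -> Vec3:
    return scale(a, 1.0 / math.sqrt(a[0] ** 2 + a[1] ** 2 + a[2] ** 2))


def marker_pose(ca: Vec3, cb: Vec3, cc: Vec3, cd: Vec3, ce: Vec3):
    """Return (t, r0, r1, r2) from camera-frame LED positions Ca..Ce.

    Assumes Cb = t + r0, Cc = t + 2*r0, Cd = t + r1, Ce = t + 2*r1 (flat, unit pitch).
    """
    t = ca
    # Average the one-pitch offset and half of the two-pitch offset on each axis.
    r0 = scale(add(sub(cb, ca), scale(sub(cc, ca), 0.5)), 0.5)
    r1 = scale(add(sub(cd, ca), scale(sub(ce, ca), 0.5)), 0.5)
    # Unit normal of the substrate plane, i.e. (r20, r21, r22).
    r2 = normalize(cross(r0, r1))
    return t, r0, r1, r2
```

For a marker turned 30° about the vertical axis, the recovered normal tilts by the same 30° away from the camera's z axis.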

The intersecting point (H, V) of the normal line of the substrate 130 with the screen 121 is obtained as (tx+tz·(r20/r22), ty+tz·(r21/r22)) from the obtained position of the marker 13, the normal direction information (r20, r21, r22) of the substrate 130, and the surface position information of the screen, with the preset (predefined) CCD camera 6 as the origin. Further, since the position of the marker 13 is also computed in this process, this position information may be used as needed; for instance, continuous computation enables the desired dynamic detection of the controller 10, and a conventional structure such as triangulation ranging is no longer necessary.
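The formula above can be transcribed directly, as sketched below. The expression is taken as stated in the text; the sign of the correction term depends on how the axes are oriented, which the text does not spell out (a plain ray-plane intersection with the screen at z = 0 in a right-handed frame would subtract rather than add the term). The function name is hypothetical.

```python
def screen_intersection(t, r2):
    """Evaluate (H, V) = (tx + tz*r20/r22, ty + tz*r21/r22) as stated in the text."""
    tx, ty, tz = t
    r20, r21, r22 = r2
    if abs(r22) < 1e-12:
        # The substrate normal is parallel to the screen plane: no intersection.
        raise ValueError("normal is parallel to the screen plane")
    return tx + tz * (r20 / r22), ty + tz * (r21 / r22)
```

A marker facing the camera squarely, with normal (0, 0, 1), maps to its own (tx, ty) position; any tilt shifts the point in proportion to the distance tz.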

Moreover, as described above, the LEDs 131, 132, 133 and 131, 134, 135 are not limited to a fixed pitch; in that case, too, the computation is possible as long as the pitch information of each is available. In addition, the game control unit 103 further has a trajectory computation unit for computing the trajectory of bullets flying within the game space from the impact position on the screen 121, based on the information obtained with the normal line computation unit 4017 and the intersecting point (H, V) computation unit 4018.

In FIG. 19, image (CCD) data is first captured by activating the CCD camera 6 under a trigger condition such as vertical synchronization (V-sync, V-blank) at a prescribed cycle, for example 1/60 second (ST811), and the captured data is then binarized with the binary processing unit 4012 in order to extract luminescent spots (ST812). Here, the coordinates of the binary data to be treated as luminescent spots are taken in, an identification symbol is assigned to each (labeling), and appropriate grouping processing is performed (ST813), since the coordinate data are scattered. Then, the luminescent spots are roughly specified (ST814) based on their position data, group information, and shape information (prepared as necessary). Next, the luminescent spots specified at ST814 are named A to E (ST815).
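Steps ST812 and ST813 (binarization, labeling and grouping) can be sketched in plain Python as below. This is an illustration only: it assumes a grayscale frame given as a list of rows, a fixed threshold, 4-connectivity, and summarizes each group by its centroid and pixel count; the function name and output fields are hypothetical.

```python
from collections import deque


def find_spots(image, threshold):
    """Binarize a grayscale image, label 4-connected bright regions, return centroids."""
    height = len(image)
    width = len(image[0]) if height else 0
    # Binarization: a pixel belongs to a luminescent spot if it reaches the threshold.
    bright = [[image[y][x] >= threshold for x in range(width)] for y in range(height)]
    labels = [[0] * width for _ in range(height)]
    spots = []
    next_label = 1
    for y in range(height):
        for x in range(width):
            if not bright[y][x] or labels[y][x]:
                continue
            # Labeling: flood-fill this region and assign it an identification number.
            labels[y][x] = next_label
            queue = deque([(y, x)])
            pixels = []
            while queue:
                cy, cx = queue.popleft()
                pixels.append((cy, cx))
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if 0 <= ny < height and 0 <= nx < width and bright[ny][nx] and not labels[ny][nx]:
                        labels[ny][nx] = next_label
                        queue.append((ny, nx))
            # Grouping: summarize the region by its centroid and pixel count.
            n = len(pixels)
            spots.append({
                "label": next_label,
                "x": sum(px for _, px in pixels) / n,
                "y": sum(py for py, _ in pixels) / n,
                "area": n,
            })
            next_label += 1
    return spots
```

The centroids returned here are the kind of position data that the rough specification at ST814 would then work on.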

Thereafter, the position data of luminescent spots A to E are multiplied by the coefficients of the dimension ratios ph, pv (ST816). Next, the z coordinate tz of the marker 13, with the CCD camera 6 as the origin, is computed from the coordinate data of luminescent spots A, B, C (ST817); the x component tx, the y component ty, and r00, r01, r02 are then computed from tz (ST818); r10, r11, r12 are computed from the coordinate data of luminescent spots D, E and tx, ty, tz (ST819); and the normal vector (r20, r21, r22) of the substrate 130, and from it the intersecting point (H, V) of the direction of the gun unit 10 with the screen 121, are computed as described above (ST820). When the computation is complete, the resulting intersecting point (H, V) is forwarded to the CPU 103 side and used in the collision judgment of shots against the enemy character (and possibly in presenting the impact) and the like. Moreover, in a two-player game in which a plurality of markers are lit, the intersecting point (H, V) of the other markers is computed in the same way, and the appropriate intersecting point data may be used.

FIG. 20 is a detailed subroutine of ST815. First, the existing luminescent spot positions are structured into a graph composed of vectors and distances (ST831). The luminescent spot position data in the graph are organized according to the foregoing grouping, or in a partly sorted state, which facilitates data analysis. Next, the vectors are used to examine whether three luminescent spots lie on a straight line, for example by computing the inner product for two adjacent points (ST832). When no straight-line relationship exists (NO at ST833), it is judged whether processing for all combinations has been completed (ST834); the routine returns to ST832 if it has not, and skips to ST821 if it has. If a straight-line relationship exists at ST833, the distances between the luminescent spots (their arrangement) are checked; that is, it is confirmed whether the three spots on the main axis are aligned at approximately equal intervals (ST835). If no combination with substantially equal intervals exists, the routine returns to ST832; if one exists, the routine searches for a luminescent spot in the vicinity of either end of the three spots (at the distance at which the LED 134 forming the marker is expected to exist) (ST837). If no luminescent spot exists in the vicinity (NO at ST838), the routine returns to ST832; if one exists, the routine examines the relationship between that nearby spot and the spots forming the main axis, and estimates the shape of the marker from it (ST839). Such a spot is treated as D according to the conditions under which it exists in the vicinity because, depending on the direction of the camera, the relationship of intersection and equal distance with LED A will not necessarily hold. Next, A, B, C, D are encoded from the positional relationship of the respective luminescent spots in order to determine their arrangement (ST840).
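The search of FIG. 20 (straight-line test by inner product, equal-interval test, then a vicinity search for D) can be sketched as a brute-force loop over ordered triples. The tolerances, the "off axis" test and the function name are hypothetical choices, and with a symmetric five-LED marker both arms pass the same tests, so this sketch simply returns the first arm it finds; telling the arms apart needs extra cues such as the color coding described later.

```python
import itertools
import math

Point = tuple[float, float]


def _vec(p: Point, q: Point) -> Point:
    return (q[0] - p[0], q[1] - p[1])


def _dot(u: Point, v: Point) -> float:
    return u[0] * v[0] + u[1] * v[1]


def identify_marker(spots, cos_min=0.99, dist_tol=0.15, off_axis_max=0.5):
    """Return indices (A, B, C, D) into spots, or None if no marker is found."""
    n = len(spots)
    for a, b, c in itertools.permutations(range(n), 3):
        ab, bc = _vec(spots[a], spots[b]), _vec(spots[b], spots[c])
        lab, lbc = math.hypot(*ab), math.hypot(*bc)
        if lab == 0 or lbc == 0:
            continue
        # Straight-line test: adjacent segments must point the same way.
        if _dot(ab, bc) / (lab * lbc) < cos_min:
            continue
        # Equal-interval test on the main axis.
        if abs(lab - lbc) > dist_tol * max(lab, lbc):
            continue
        # Vicinity test: a spot about one pitch from A that lies off the main axis.
        for d in range(n):
            if d in (a, b, c):
                continue
            ad = _vec(spots[a], spots[d])
            lad = math.hypot(*ad)
            if lad == 0 or abs(lad - lab) > dist_tol * lab:
                continue
            if abs(_dot(ab, ad)) / (lab * lad) <= off_axis_max:
                return a, b, c, d
    return None
```

Trying every ordered triple mirrors the "all combinations" judgment mentioned earlier; it is cheap for the handful of spots a frame normally contains.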

With the muzzle 16 direction detection processing described above, the part of the screen 121 at which the muzzle 16 is ultimately pointing, and the virtual trajectory of bullets fired from the muzzle 16 toward the target object within the game space from a position on the screen, can be computed from the characteristics of the positional relationship of the marker image within the CCD image.

FIG. 21 is a block diagram showing the structure of the principal parts of the image processing unit that performs the image display processing at ST99 of FIG. 15, and FIG. 22 is a flowchart showing the detailed procedure of the image display processing at ST99.

As shown in FIG. 21, the image processing unit 410, which displays images while performing such correction, has a visual line elevation setting unit 4101 for setting the visual line elevation (the angle by which the visual line is inclined upward from the horizontal) according to the object position data 421 (data representing the position of the shooting target object within the game space); a mirror inclination control unit 4102 for designating the rotation angle of the mirror according to the visual line elevation and controlling the stepping motor 41; and an image generation indication unit 4103 for instructing the drawing control unit 101 to generate images.

In the image display processing, as shown in FIG. 22, the visual line elevation is set with the visual line elevation setting unit 4101 (according to the position of the dinosaur or the parts of the dinosaur that attack during the approach) (ST991), the mirror rotation angle corresponding to this visual line elevation is designated with the mirror inclination control unit 4102 (ST992), and the mirror 43 rotates as the stepping motor 41 is controlled (ST993). Next, the image generation indication unit 4103 instructs the drawing control unit 101 to draw the image captured from the prescribed virtual viewpoint at the set visual line elevation (ST994), and the processing returns to the beginning. In response to the drawing instruction, the drawing control unit 101 generates image data by processing data relating to polygons within the (three-dimensional) game space and writes this image data into the frame buffer 102, and images are projected onto the screen 121 according to the image data in the frame buffer 102.
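The text does not give the mapping from visual line elevation to mirror angle used at ST992. A minimal sketch follows, assuming that the projected beam must be deflected by the same angle as the change in elevation, and using the law of reflection (rotating a plane mirror by a angle deflects the reflected beam by twice that angle). The 1.8° step angle, the belt ratio and the function name are hypothetical.

```python
def mirror_steps(elevation_deg, base_elevation_deg=0.0, step_deg=1.8, belt_ratio=1.0):
    """Stepping-motor steps needed to aim the projected image for a given elevation."""
    # Beam deflection required relative to the reference position (assumed 1:1).
    deflection_deg = elevation_deg - base_elevation_deg
    # Law of reflection: turning a plane mirror by a deflects the reflected beam by 2a.
    mirror_deg = deflection_deg / 2.0
    # Motor rotation per unit of mirror rotation through the timing belt (hypothetical).
    motor_deg = mirror_deg * belt_ratio
    return round(motor_deg / step_deg)
```

Halving the angle is the key design point: the mirror only needs to turn half as far as the image is meant to move.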

FIG. 23 is a diagram explaining, for the first modified example of the shooting video game machine, the rotation control of the mirror corresponding to each of the two areas into which one stage is divided.

In the game machine of the present modified example, an area 501 corresponding to the first half of the first stage and an area 502 corresponding to the second half are assumed; the player shoots small dinosaurs and flying dinosaurs (ordinary enemy characters) in the first-half area 501, and shoots the foregoing enormous dinosaur (the enemy character corresponding to the boss character) in the second-half area 502. In area 501 within the game space, the virtual player (virtual viewpoint) moves in the direction of arrow B1 at a speed designated in advance. An enemy character appears when the virtual player passes the prescribed positions 511 to 514, and the player virtually shoots at the displayed enemy character with the gun unit 10. When the player detection sensors 51 to 54 respond, the virtual player is moved a relatively small distance to the left or right within the game space, just enough to avoid the attack of the enemy character, and the mirror that determines the position of the projected image on the screen is rotated based on data designated in advance.

In area 502 within the game space, the player avoids the attack of the boss character 522 approaching the virtual player 521 by triggering the player detection sensors 51 to 54 and moving in the direction of arrow B2 or B3, and shoots at the displayed boss character by operating the gun unit. The boss character 522 moves in the direction of arrow B4 or B5 so as to follow the moving virtual player 521.

Here, when the player detection sensors 51 to 54 respond, the virtual player is moved a relatively large distance (so as to circle around the left or right side of the dinosaur; a different distance, such as 5 meters or 20 meters, is set according to the stage), and the mirror is rotated according to the distance between the boss character and the virtual viewpoint as described above, or according to the part of the boss character that is about to attack.

With the game machine of the present modified example, since the movement distance upon the response of the player detection sensors 51 to 54 and the method of mirror rotation control differ between the two areas, the player can enjoy a varied shooting game that does not become tiresome.

FIG. 24 is a diagram explaining, for the second modified example of the shooting video game machine, the detection of the position of the player 300 (during a two-player game with a 1P player and a 2P player) with the player detection sensors 51 to 54.

In the game machine of the present modified example, during a two-player game the two virtual players within the game space share the same fate and move in the same direction. When it is detected that the 1P player (left-side player) has moved from the reference position in front of the player detection sensor 52 on the inner left side to the front of the player detection sensor 51 on the outer left side, this is regarded as the player moving around to the left side of the dinosaur, and the coordinates of the two virtual players within the game space are set accordingly. Likewise, when it is detected that the 2P player (right-side player) has moved from the reference position in front of the player detection sensor 53 on the inner right side to the front of the player detection sensor 54 on the outer right side, this is regarded as the player moving around to the right side of the dinosaur, and the coordinates of the two virtual players within the game space are set accordingly.
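The shared-fate rule can be sketched as a single lateral offset updated by sensor transitions. This assumes the sensors 51 to 54 are numbered from left to right (outer left, inner left, inner right, outer right); the class name and the 5.0 step (echoing the example distances given for the first modified example) are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed layout, numbered from left to right in front of the machine.
OUTER_LEFT, INNER_LEFT, INNER_RIGHT, OUTER_RIGHT = 51, 52, 53, 54


@dataclass
class SharedViewpoint:
    """Lateral offset shared by both virtual players, who always move together."""
    x: float = 0.0

    def on_move(self, from_sensor: int, to_sensor: int, step: float = 5.0) -> Optional[str]:
        if (from_sensor, to_sensor) == (INNER_LEFT, OUTER_LEFT):
            # 1P steps outward: both virtual players circle to the dinosaur's left.
            self.x -= step
            return "left"
        if (from_sensor, to_sensor) == (INNER_RIGHT, OUTER_RIGHT):
            # 2P steps outward: both virtual players circle to the dinosaur's right.
            self.x += step
            return "right"
        return None
```

Because there is one shared offset, either player's movement relocates both virtual players, which is what makes the cooperation between the players matter.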

With the shooting video game machine of the present modified example, since the coordinates of the virtual players are set according to the movements of both players, the game progress can offer a unique kind of amusement based on cooperation between the players.

Next, a video game device that is a modified form of the foregoing shooting video game machine is explained.

FIG. 25 is an overall perspective view of the video game device. As shown in FIG. 25, the video game device comprises a console-type game housing 1000 having, for example, a rectangular parallelepiped shape. A display unit 1101 is disposed at its upper front, a CCD camera 1102 is provided at its lower front, and, although not shown, a speaker 1103 (cf. FIG. 27) for producing sound effects is provided at a suitable position in the housing, together with a start button 1001 (cf. FIG. 27) for starting the game, located for example at approximately the center, or to the right or left. The display unit 1101 is formed of a CRT, LCD or the like, and displays an image of a sports stadium including, for example, a tennis court 1201, with an opponent character 1202 displayed on the opponent's side of the court. The player P, standing in the play area PE in front of the game housing 1000, plays the competition game while holding the controller 1300 simulating a tennis racket. The CCD camera 1102 corresponds to the CCD camera 6 in the previous embodiments, and is set so that its visual field includes at least the controller 1300 swung by the player in the play area PE. The installation position of the CCD camera 1102 is not limited to the position shown in FIG. 25, and may be immediately above the display unit 1101 or to its left or right; in other words, any position from which changes in the direction of the simulated net face of the simulated tennis racket swung by the player can be observed will suffice. In some cases the camera may be mounted separately from the game housing 1000; in such a case, the CCD camera 1102 should be regarded as belonging to the game housing side for the purposes of signal processing. The object of this game is to keep returning the tennis ball hit by the opponent character within the game screen (for example, the computer) back into the opponent's court, using a tennis ball (not shown in FIG. 25) as the game medium, and the game is played according to the rules of tennis.

FIG. 26 shows a front view of the controller 1300 simulating a tennis racket. Dotted light sources 1301 to 1305, such as LEDs, forming the light source unit are mounted on the face of the net portion (marker mounting section). Power for the LEDs may be supplied through a cable, or batteries may be built in (inside the grip, for instance). The dotted light sources 1301 to 1303 are disposed at equal intervals in the longitudinal direction, and the dotted light sources 1304 and 1305 are disposed at equal intervals in the width direction, sharing the dotted light source 1301. By imaging the controller 1300 with the CCD camera 1102 and executing operational processing similar to that of the previous embodiments, the angle (direction) that the net face of the controller 1300 forms with the screen of the display unit 1101, and the height and left/right position of the controller relative to that screen, are obtained. The CCD camera 1102 performs imaging at a prescribed cycle, for example 1/60 second, and the processing for obtaining the intersecting angle and the height and left/right positions is repeated.

FIG. 27 is a block diagram of the video game device. The device comprises a CPU 1400 as the control unit; a ROM 1501 storing game program data, image data required for the game (each item of image data may be structured as virtual three-dimensional objects built from polygons), sound data and other fixed data (e.g., three-dimensional position information of the display unit 1101 with the CCD camera 1102 as the origin); and a RAM 1502 for temporarily storing data being processed.

The CPU 1400 controls the desired game progress by reading the necessary game program data and other data from the ROM 1501, all at once or as needed, and comprises: a controller position operation unit 1401 for computing the position of the controller; a controller direction operation unit 1402 for computing the direction of the controller; a collision judgment unit 1403 for judging virtual collision between the ball character, serving as the game medium, and the controller 1300; a return processing unit 1404 for computing the return direction and speed when the ball character is returned with the controller 1300; an in/out judgment unit 1405 for judging whether the returned ball character lands inside or outside the opponent's court; an opponent character processing unit 1406 for the movement processing of the opponent character; a ball character processing unit 1407 for computing the position of the ball character; a drawing processing unit 1408 for drawing the tennis court 1201, the opponent character 1202 and the ball character on the display unit 1101; and an evaluation processing unit 1409 for managing the game score and victory/defeat.

The controller position operation unit 1401 computes the direction and position of the controller relative to the screen of the display unit 1101 by applying operational processing similar to that of the previous embodiments to the position information of the dotted light sources 1301 to 1305 in the controller images taken with the CCD camera 1102. The controller direction operation unit 1402 computes the direction of the flat face of the net portion of the controller 1300 relative to the screen of the display unit 1101 by processing similar to that of the previous embodiments.

The collision judgment unit 1403 judges whether the ball character has virtually hit the controller 1300, based on the flight direction of the ball character (virtual flight out of the screen) described later and on the position and direction information of the controller 1300 at the moment when the ball character, flying within the virtual three-dimensional space of the game image, computationally reaches the position on the screen of the display unit 1101.
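One way to express this judgment is sketched below, assuming that the ball's arrival point and the controller position are both given in screen-plane coordinates and that the racket normal is given with its z component pointing out of the screen. The face radius, the minimum facing factor and the function name are hypothetical; abs() accepts either face, in line with the later remark that both faces of the racket may be used.

```python
import math


def ball_hits_racket(ball_xy, racket_xy, racket_normal,
                     face_radius=0.15, min_facing=0.3):
    """Judge a virtual hit at the moment the ball reaches the screen plane."""
    # The ball must arrive within the (hypothetical) racket-face radius.
    if math.dist(ball_xy, racket_xy) > face_radius:
        return False
    # The face must be turned toward the screen; abs() lets either face hit.
    nx, ny, nz = racket_normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    return abs(nz) / length >= min_facing
```

A racket held edge-on to the screen fails the facing test even when it is in the right place, which is how the direction information enters the judgment.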

When the collision judgment unit 1403 deems that the ball character has virtually hit the net of the controller, the return processing unit 1404 computes the flight path of the ball character returned by the player from the position and direction of the controller 1300 and from the swing speed obtained from two consecutively computed positions of the controller 1300. The in/out judgment unit 1405 judges whether the ball character, whose flight path has been computed by the return processing unit 1404, lands inside or outside the opponent's court.
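The swing speed from two consecutive positions and the in/out test can be sketched as follows. The sketch assumes positions in meters, the 1/60-second imaging cycle mentioned earlier, a standard singles court (23.77 m by 8.23 m), and a frame with the net at y = 0 and the center line at x = 0; the function names are hypothetical.

```python
import math

FRAME_DT = 1 / 60        # imaging cycle given in the text, seconds
SINGLES_LENGTH = 23.77   # standard singles court length, meters
SINGLES_WIDTH = 8.23     # standard singles court width, meters


def swing_speed(prev_pos, curr_pos, dt=FRAME_DT):
    """Speed estimated from two consecutively computed controller positions."""
    return math.dist(prev_pos, curr_pos) / dt


def landed_in(x, y):
    """True if (x, y) lies in the opponent's half (net at y = 0, center line at x = 0)."""
    return abs(x) <= SINGLES_WIDTH / 2 and 0.0 <= y <= SINGLES_LENGTH / 2
```

A controller that moves 10 cm between two frames, for example, is swinging at about 6 m/s.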

The opponent character processing unit 1406 makes the opponent character, which exists within the game space and is controlled by the CPU 1400, behave according to motion procedures built into the game program from the ordinary motions of a tennis match, producing movement forward, backward, left and right within the opponent's court, serve motions, return motions and so on. The ball character processing unit 1407 performs the movement processing of the ball character traveling toward the screen after the opponent character's hitting motion, and controls the movement of the ball character virtually hit by the player, preferably taking into account gravity and ball rotations such as drive and cut.
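As an illustration of the gravity part of this processing, the landing point of a ball launched from a known position and velocity follows from the projectile equations. The sketch below ignores air drag and spin (drive, cut), which the text suggests adding; the z axis is assumed to be height above the court, and the function name is hypothetical.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def landing_point(pos, vel, g=G):
    """Return (x, y, t) where a ball launched from pos with velocity vel reaches z = 0.

    pos = (x, y, z) with z >= 0 the height above the court; drag and spin are ignored.
    """
    x0, y0, z0 = pos
    vx, vy, vz = vel
    # Positive root of z0 + vz*t - g*t**2/2 = 0.
    t = (vz + math.sqrt(vz * vz + 2.0 * g * z0)) / g
    return x0 + vx * t, y0 + vy * t, t
```

The resulting (x, y) is the kind of landing position that the in/out judgment and the opponent's return decision would use.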

The drawing processing unit 1408 displays on the screen of the display unit 1101 the tennis court 1201, the opponent character 1202, the ball character, and information announcing the game progress, such as the score and sets won, using image data read from the ROM 1501 in accordance with the game program and the behavior of the controller 1300. The evaluation processing unit 1409 executes the processing for determining the score and victory/defeat according to the rules of a tennis match.

To briefly explain the operating procedure of this tennis game: when the start button 1001 is pressed, the game begins with the score at 0-0, and the serve motion of the opponent character 1202 is displayed. The flight path of the ball character is computed based on the position of this serve motion within the virtual three-dimensional space and the direction and speed of the smash motion, and the ball character is displayed moving along that path. After the game starts, the controller position operation unit 1401 and the controller direction operation unit 1402 compute the position and direction of the controller 1300 at prescribed cycles, and whether the controller 1300 hit the ball character is judged from the position, direction and swing speed of the controller 1300 at the time the ball character arrives at the screen. If it is a miss, air-shot processing (negative evaluation processing) is executed; if it is a hit, the return direction and speed of the ball character are computed by dynamic computation. The flight path of the ball character within the virtual three-dimensional space is obtained from this computation, and the ball character is displayed on the screen. It is then judged whether the returned ball character landed within the opponent's court: a negative evaluation is made if it landed outside the court, and if it landed inside, it is judged whether the opponent character can return the ball character, based on its landing position (equivalent to the distance between the landing point and the position of the opponent player) and its speed (also reflecting drive, cut or other ball rotations). If it is returnable, the opponent character is made to return the ball character, and the flight computation of the ball character is performed again.
By repeating these operations, the score is updated whenever either player misses the ball character. When either player wins a six-game set a prescribed number of times according to the rules of tennis, victory/defeat is determined and the game ends.

Further, since both faces of the racket may be used to hit the ball in tennis and similar games, the dotted light sources 1301 to 1305 are preferably mounted so as to be exposed on both sides of the net portion, so that light is emitted from both faces. However, since three dotted light sources are provided on each axis (1301 to 1303, and 1301, 1304, 1305), the front/back judgment becomes indeterminate when the racket is turned over so that its other face points toward the camera.

Therefore, for the structure of the controller 1300 shown in FIG. 26, a single prescribed color may be used (when only one face is used), but the net portion may instead be formed of plates whose colors on the front and back differ from those of the dotted light sources. Alternatively, the dotted light sources 1301, 1302, 1303 may emit the same color (blue, for example), and at least one of the dotted light sources 1304 and 1305 may emit a different color (green, for example). This structure makes it possible to distinguish the front from the back, in addition to making it easier to identify the dotted light sources within the image and to determine which side each axis is on, and the processing speed improves as a result. In this case, the front and back are distinguished because, on the front face, the green light source is imaged 90° clockwise from the blue light sources around the dotted light source 1301, for example, while this relationship is reversed on the back face. Alternatively, at least one dotted light source on a specific axis, other than the dotted light source 1301, may be flash-controlled, or its size and shape may be made different from the others. As described above, the dotted light source for judging front and back may be given a light-emitting form different from that of the other dotted light sources. Moreover, since the dotted light source for judging front and back is one of the dotted light sources 1301 to 1305, the number of dotted light sources can be reduced compared with providing such a dotted light source separately.
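The clockwise/counterclockwise test reduces to the sign of a 2D cross product between the blue main axis and the direction to the green source, both measured from the shared source 1301 in the image. The sketch assumes image coordinates with the y axis pointing down and that "clockwise" is judged as seen in the camera image; which sign corresponds to the physical front face would have to be checked against the actual marker geometry. The function name is hypothetical.

```python
def face_side(a, c, g):
    """Return 'front', 'back', or None if the three image points are collinear.

    a: image position of the shared source 1301; c: a blue main-axis source
    (e.g. 1303); g: the green source. The image y axis is assumed to point down.
    """
    ux, uy = c[0] - a[0], c[1] - a[1]
    vx, vy = g[0] - a[0], g[1] - a[1]
    # 2D cross product; with y pointing down, a positive value means g lies
    # clockwise (as seen in the image) from the main axis.
    z = ux * vy - uy * vx
    if abs(z) < 1e-9:
        return None
    return "front" if z > 0 else "back"
```

Turning the racket over mirrors the marker in the image, which flips the sign of the cross product; a single colored source is therefore enough to break the symmetry.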

Further, as shown in FIG. 28, an inverted T shape may be used as the shape of the biaxial direction marker. Here, in order to distinguish the front from the back, the dotted light sources 1311 to 1313 emit the same color (green, for example), and the dotted light source 1314 emits a different color (red, for example) and/or the dotted light source 1315 emits a different color (blue, for example). Thus, at least the dotted light source 1314 or the dotted light source 1315 needs to be a color different from that of the others (that is, other than green in this example). Since the controller 1310 simulates a racket, its front and back are switched when the racket is swung around an axis parallel to the axis of the dotted light sources 1311 to 1313, so it suffices to change the color of a dotted light source on one of the two sides of that rotational axis.

Similarly, FIGS. 29A, 29B and 29C show a simulated table tennis racket used as the controller 1320 when the video game device is used for playing a table tennis game. Since both sides of a table tennis racket may be used to hit the ball, dotted light sources that allow the front and back to be recognized are desirably provided. That is, as shown in FIGS. 29A, 29B and 29C, three dotted light sources 1321 to 1323 are disposed on the ball-hitting face of the racket on one side of the grip axis and parallel to that axis, and dotted light sources 1324 and 1325 are provided on the opposite side of the axis, sharing the dotted light source 1321; by exposing them on both the front and back sides, light can be emitted from either face. Moreover, by making the emission color of the dotted light source 1324 or 1325 differ from that of the other dotted light sources, the front/back recognition processing can be simplified, and the rotation angle about the rotational axis of the grip, that is, the direction relative to the screen of the display unit, can be computed from the luminescent spot images corresponding to the dotted light sources 1321 and 1324 in the image. In addition, front/back judgment also becomes possible, with the imaging means recognizing a different color, if the color of the ball-hitting face of the racket (base color) is made to differ between the front and back.
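One simple way to obtain the rotation angle about the grip axis from the spots of 1321 and 1324 is foreshortening. The sketch assumes a weak-perspective (near orthographic) view, that the only rotation is about the grip axis, and equal pitch on both axes. Under those assumptions the spacing 1321 to 1322, which lies along the grip axis, is unaffected by the rotation and serves as the scale, while the spacing 1321 to 1324, across the axis, shrinks by the cosine of the angle. The sign of the angle is not recoverable this way; the color coding above would supply it. The function name is hypothetical.

```python
import math


def grip_rotation_deg(p1321, p1322, p1324):
    """Unsigned rotation angle about the grip axis, in degrees, from three image spots."""
    pitch_px = math.dist(p1321, p1322)   # along the grip axis: not foreshortened
    across_px = math.dist(p1321, p1324)  # across the axis: shrinks as cos(angle)
    ratio = min(1.0, across_px / pitch_px)
    return math.degrees(math.acos(ratio))
```

A face seen squarely gives 0°, and a cross-axis spacing half the along-axis spacing gives 60°.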

Second Embodiment

The shooting video game machine pertaining to the second embodiment of the present invention is explained below.

In the shooting video game machine of the second embodiment, the installation positions of the CCD camera 6 and the marker 13 of the first embodiment are swapped. That is, the marker 13 is disposed on the main body side of the shooting video game machine (screen 121), and the CCD camera 6 is disposed in the gun unit 10. Thus, the description of FIGS. 1 to 5 in the first embodiment also applies to the second embodiment. This shooting video game machine is explained below.

FIG. 30 and FIG. 31 are diagrams relating to the structure for projecting images, FIG. 32 and FIG. 33 are diagrams relating to the structure for detecting the direction of the muzzle 216, and FIG. 34 is a diagram showing the form of the markers 26 to 29 and the structure for mounting them on the screen.

The structure for projecting images is explained first. FIG. 30 is a diagram showing the appearance of the present game machine, and FIG. 31 is a schematic cross section for explaining the shifting of the projected image on the screen 2121.

In the present game machine, as shown in FIG. 30, the projected image 2124 projected from the projector 231 (FIG. 31) onto the screen 2121, which is held by the screen retention table 2120, shifts in the direction of arrow A26, and the gun units 210 and 220 are connected to the main body control unit 2100 (explained later with reference to FIG. 35) via the gun cable 217. The projected image 2124 contains a shooting target such as a dinosaur, as described above; the 1P player standing in the play area PE operates the gun unit 210 (or the 2P player operates the gun unit 220) to virtually shoot the target, and points are scored according to shooting skill, such as the shooting position and timing.

The four player detection sensors 251 to 254 mounted on the front face of the base 2110 detect the left/right movement of the 1P player in a one-player game (or of the 1P and 2P players in a two-player game), and side plates 2125 are provided to prevent disturbance and the like when detecting the muzzle direction (described later) with respect to the screen 2121 and when displaying images on the screen 2121.

Further, the present game machine plays music and the like to liven up the game, and is provided with a speaker (top) 232, a speaker (left) 233 and a speaker (right) 234 for outputting middle and high range sounds, and a woofer speaker 235 for outputting low range sounds during the game. The speaker (top) 232 and speaker (left) 233 form one pair, and the speaker (top) 232 and speaker (right) 234 form another pair, for stereo playback.

When a coin of a prescribed amount is inserted into the coin insertion slot 238 and the start button 236 is pressed as appropriate according to the display on the screen 2121, either a one-player game with only the 1P player or a two-player game with both the 1P and 2P players is selectively started.

As shown in FIG. 31, the rectangular flat mirror 243 has a mirror axis 245 extending perpendicular to the plane of the diagram, and both ends of the mirror axis 245 are rotatably held by the mirror retention member 246. The rotation of the stepping motor 241, which is connected to the control unit described later, is transmitted to the mirror by the timing belt 244, and the projected image 2124 shifts on the screen in the direction of arrow A26 as the mirror 243 rotates in the direction of arrow A27.

The reference viewpoint, set at a prescribed height and position in front of the present game machine, is associated with the virtual viewpoint within the game space, and it is assumed that a player (of average height) views the screen 2121 from this reference viewpoint position.

Next, the structure for detecting the direction of the muzzle 216 is explained. FIG. 32 is a diagram showing the structure of the gun unit 210 (the gun unit 220 is similar) as an example of the controller for accepting input operations from the player, and FIG. 33 is a diagram showing an example of the arrangement of the markers 26 to 29, which are used together with the CCD camera 213 in the gun unit 210 to detect the direction of the muzzle 216 with respect to the screen 2121.

FIG. 33A is a front view of the screen 2121 developed onto a flat plane, and FIG. 33B is a side view of the screen 2121 as provided in the present game machine. In FIG. 33, the markers 26 to 29 have the same shape, and a prescribed number of them (four in this embodiment) are disposed in the vertical direction, preferably at equal intervals, along the center of the surface of the screen 2121. The mounting position information of each of the markers 26 to 29 on the screen 2121 is stored in, for example, the ROM 2105 of the main body control unit 2100 shown in FIG. 35. The arrangement positions of the markers may be set according to the shape of the screen; in the present embodiment, markers are provided near the top and bottom of the screen 2121 and at the two locations that divide the space between them into thirds. The number of markers may also be set as appropriate according to the size of the screen and the visual field angle of the CCD camera 213.

The gun unit 210, as shown in FIG. 32, simulates a pump-action gun and has: a trigger switch 211, a micro switch that is turned on when the player pulls the trigger 214 in the direction of arrow A28; a pump trigger switch 212, a micro switch that is turned on when the player slides the sliding unit 215 in the direction of arrow A29; and a CCD camera 213 having a visual field angle capable of imaging at least one (up to two in this embodiment) of the markers 26 to 29, for detecting the point at which the direction of the muzzle 216 (visual line vector) intersects the screen 2121.

Signals from the trigger switch 211, the pump trigger switch 212 and the CCD camera 213 are transmitted to the main body control unit 2100 via the gun cable 217. Turning on the trigger switch 211 designates a virtual shot, and turning on the pump trigger switch 212 designates the loading of a prescribed number of virtual bullets into the gun unit 210.
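The two switch functions can be sketched as a small state holder. The text only says that the trigger designates a virtual shot and the pump designates loading a prescribed number of bullets; the magazine size, the rule that a shot requires a remaining bullet, and the edge detection (reacting only to off-to-on transitions) are assumptions made for illustration.

```python
from dataclasses import dataclass

MAGAZINE_SIZE = 6  # hypothetical "prescribed number" of virtual bullets


@dataclass
class GunState:
    bullets: int = MAGAZINE_SIZE
    prev_trigger: bool = False
    prev_pump: bool = False

    def update(self, trigger_on: bool, pump_on: bool) -> bool:
        """Process one sample of the two micro switches.

        Returns True when a virtual shot is fired in this sample. Only
        off-to-on transitions count, so holding a switch has no extra effect.
        """
        fired = False
        if pump_on and not self.prev_pump:
            self.bullets = MAGAZINE_SIZE          # load the prescribed number
        if trigger_on and not self.prev_trigger and self.bullets > 0:
            self.bullets -= 1
            fired = True
        self.prev_trigger, self.prev_pump = trigger_on, pump_on
        return fired
```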

Depending on the visual field 2431 of the CCD camera 213, only a part of the screen 2121 may be imaged, as shown in FIG. 33A. Further, as shown in FIG. 33B, the distance between the CCD camera 213 (within the gun unit 210) and the screen 2121 changes with the player's operation as the game progresses, and the size of the portion of the screen 2121 that fits within the visual field 2431 of the CCD camera 213 changes accordingly.
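The dependence of the imaged portion on distance follows from basic geometry: a camera with full field angle φ whose optical axis meets a flat surface perpendicularly at distance d covers a span of 2·d·tan(φ/2). The sketch below applies this to decide which markers fall in view. The flat-screen, perpendicular-axis model and the numeric values in the test are assumptions.

```python
import math


def visible_span(distance_m: float, fov_deg: float) -> float:
    """Length of a flat screen covered by a camera with full field angle
    `fov_deg` whose optical axis meets the screen perpendicularly."""
    return 2.0 * distance_m * math.tan(math.radians(fov_deg) / 2.0)


def markers_in_view(center_m: float, distance_m: float, fov_deg: float,
                    marker_positions_m):
    """Return the marker positions (measured along the same screen axis)
    that lie inside the imaged span centred on `center_m`."""
    half = visible_span(distance_m, fov_deg) / 2.0
    return [p for p in marker_positions_m if abs(p - center_m) <= half]
```

With a hypothetical 60-degree field angle, a camera 1 m from the screen sees two markers spaced 0.9 m apart, while at 3 m it sees all four.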

With the present game machine, the arrangement and the rotation angle of the image of the one lit marker within the CCD image corresponding to the visual field 2431 are detected and combined with the mounting position information of the markers 26 to 29 (as described later), so as to detect which part of the screen 2121 the player is pointing the muzzle 216 at, and the intersection point thereof.

FIG. 34A shows the form of the markers, and FIG. 34B and FIG. 34C show the structure for mounting them to the screen 2121. Since the markers 26 to 29 have the same shape, the marker 26 is explained here. The marker 26 adopts four LEDs 26A to 26D as spot light sources (illuminators) that emit infrared light, which prevents erroneous detection caused by outside light. LEDs 26A to 26C are disposed on a straight line (main axis) at prescribed equal intervals, and LED 26D is provided on a line (sub axis) that intersects the main axis at LED 26A, spaced from LED 26A by the same interval as the foregoing prescribed interval. Since the marker thus forms an L shape, the expression “L shape” is used in the second embodiment when referring to the marker shape. The mounting position information of the marker 26 is prescribed and stored for each LED, with LED 26A serving as the reference position in the present embodiment.
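Before the marker's position and rotation can be used, the four bright spots in the CCD image must be matched to LEDs 26A to 26D. One simple approach, an assumption rather than the method of the text, exploits the L shape: A, B and C form the most nearly collinear triple, B is the middle of that triple, and A is the end of the main axis nearest the remaining spot D. Spot extraction (thresholding and centroiding) is assumed to have already produced four distinct points.

```python
from itertools import combinations
import math

Point = tuple[float, float]


def _cross(o: Point, a: Point, b: Point) -> float:
    """Z component of (a - o) x (b - o); zero when the points are collinear."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def label_l_marker(points: list[Point]) -> dict[str, Point]:
    """Label four distinct bright spots as LEDs 'A' to 'D' of an L-shaped marker.

    Assumes A, B, C lie on the main axis (B in the middle) and D lies on the
    sub axis through A, so {A, B, C} is the most nearly collinear triple.
    """
    if len(points) != 4:
        raise ValueError("expected exactly four spots")

    def collinearity(tri):
        p, q, r = tri
        span = max(math.dist(p, q), math.dist(q, r), math.dist(p, r))
        return abs(_cross(p, q, r)) / (span * span)   # scale-independent

    main = min(combinations(points, 3), key=collinearity)
    d = next(p for p in points if p not in main)
    # The middle LED has the smallest summed distance to the other two.
    b = min(main, key=lambda p: sum(math.dist(p, q) for q in main))
    ends = [p for p in main if p != b]
    a = min(ends, key=lambda p: math.dist(p, d))      # corner of the L
    c = ends[1] if ends[0] == a else ends[0]
    return {"A": a, "B": b, "C": c, "D": d}
```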

The intervals between LEDs 26A to 26D are preferably equal, but are not limited thereto. Disposing LED 26D at a position on a line intersecting the row of LEDs 26A to 26C is preferable from the perspective of the computational processing, described later, that is performed based on the marker position and rotation angle within the captured image.
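One plausible reason for the intersecting sub axis, not stated in the text, is that the two axes together form a local 2-D basis: with only the collinear row A to C, a 180-degree rotation would be ambiguous and the scale across the row unknown. The sketch below uses the labeled spots to map the image centre (assumed to coincide with the muzzle direction) to a point on the screen. It assumes the main axis runs along the screen's +x direction and the sub axis along +y, and it ignores perspective distortion (an affine model); these are simplifications, not the procedure described later in the patent.

```python
import math

Point = tuple[float, float]


def muzzle_point_on_screen(a: Point, c: Point, d: Point,
                           image_center: Point,
                           mount_a_mm: Point, spacing_mm: float):
    """Map the camera's image centre to screen coordinates in mm.

    (C - A) / 2 spans one LED interval along the main axis and (D - A) one
    interval along the sub axis. Returns (screen_point_mm, rotation_deg).
    """
    ux, uy = (c[0] - a[0]) / 2.0, (c[1] - a[1]) / 2.0   # one interval, main axis
    vx, vy = d[0] - a[0], d[1] - a[1]                   # one interval, sub axis
    det = ux * vy - uy * vx
    if abs(det) < 1e-9:
        raise ValueError("marker axes are degenerate in the image")
    px, py = image_center[0] - a[0], image_center[1] - a[1]
    # Solve p = alpha * u + beta * v for (alpha, beta) by Cramer's rule.
    alpha = (px * vy - py * vx) / det
    beta = (ux * py - uy * px) / det
    screen = (mount_a_mm[0] + alpha * spacing_mm,
              mount_a_mm[1] + beta * spacing_mm)
    rotation_deg = math.degrees(math.atan2(uy, ux))     # marker roll in the image
    return screen, rotation_deg
```

Here `mount_a_mm` stands for the stored mounting position of LED 26A and `spacing_mm` for the physical LED interval; both names are hypothetical.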

FIG. 34B shows the mounting structure of the LED 26D. The LED 26D is mounted, for example, in an upright posture on a substrate 611 of a prescribed size on which an LED drive circuit and the like are mounted. A pore 2121a through which the light-emitting portion of the LED 26D is exposed is provided at a prescribed position on the screen 2121. The pore 2121a is formed in a conical shape broadening toward the front face of the screen 2121, so that the light emitted from the LED 26D can be irradiated at a broad angle. Stud nuts 613 having a prescribed height are welded at two places on the left and right sides of the thin metal plate 612, or press-fitted (into pores, not shown, formed in the thin metal plate 612), and the substrate 611 and the thin metal plate 612 are fastened together by placing the substrate 611 on the nuts 613 and tightening bolts 614 from the opposite face. Pores 612a are further formed at both end positions of the thin metal plate 612, and, although not shown, a triangular screw penetrates each corresponding cone-shaped pore 2121b of the screen 2121, and tightening it fastens the screen 2121 and the thin metal plate 612 together.

FIG. 34C shows the mounting structure of LEDs 26A to 26C, which is basically the same as that of LED 26D. That is, the LEDs 26A to 26C are mounted, for example, in an upright posture on a substrate 621 of a prescribed size. Three pores 2121a through which the light-emitting portions of the LEDs 26A to 26C are exposed are provided at prescribed positions on the screen 2121. The pores 2121a are formed in a conical shape broadening toward the front face of the screen 2121, so that the light emitted from the LEDs 26A to 26C can be irradiated at a broad angle. Moreover, the thin metal plate 622 is large enough to accommodate the LEDs 26A to 26C (three LEDs' worth); stud nuts 623 having a prescribed height are welded at three places, or press-fitted (into pores, not shown, formed in the thin metal plate 622), and the substrate 621 and the thin metal plate 622 are fastened together by placing the substrate 621 on the nuts 623 and tightening bolts from the opposite face. Pores 622a are further formed at suitable positions of the thin metal plate 622, and, although not shown, a triangular screw penetrates each corresponding cone-shaped pore 2121b of the screen 2121, and tightening it fastens the screen 2121 and the thin metal plate 622 together.

Further, the structure for protecting the rotation and the like of the mirror 243 is the same as in the first embodiment shown in FIG. 10 and FIG. 11, and its explanation is omitted.

The control of the present game machine structured as above is now explained. FIG. 35 is a block diagram showing the hardware structure of the control unit of the present game machine, and FIG. 36 is a flowchart showing the procedure of the shooting game processing (shooting video game program) executed by the game control unit (CPU) 2103.

As shown in FIG. 35, the following are connected to (the game control unit 2103 of) the main body control unit 2100 set within the base 2110 (FIG. 30): the aforementioned trigger switches 211, 221; pump trigger switches 212, 222; CCD cameras 213, 223; markers 26 to 29; player detection sensors 251 to 254; start button 236; projector 231; stepping motor 241; speakers 232 to 235; a coin switch 237 for detecting the insertion of a coin through the coin insertion slot 238; and a position sensor 242 that determines, upon power-on, the rotational reference position of the mirror 243 by means of the semicircular plate mounted on the mirror axis. The display position of the projected image 2124 on the screen 2121 (FIG. 31) is continuously designated by the game control unit 2103 designating the rotation angle from this rotational reference position.
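Designating the display position as a rotation angle from a sensed reference position suggests an open-loop stepper scheme: zero a step counter when the position sensor fires at power-on, then convert each requested angle into a signed step count. The class below is a hypothetical sketch of that idea; the step angle, the homing search limit and the sensor callback are assumptions, and the actual motor pulses are only indicated by a comment.

```python
class MirrorDrive:
    """Hypothetical open-loop model of the mirror drive: the step counter is
    zeroed when the position sensor reports the reference position, and each
    later display position is commanded as an angle from that reference."""

    def __init__(self, deg_per_step: float):
        self.deg_per_step = deg_per_step
        self.position_steps = None        # unknown until homed

    def home(self, sensor_at_reference, max_steps: int = 10_000) -> int:
        """Step until `sensor_at_reference()` returns True; return steps taken."""
        for taken in range(max_steps):
            if sensor_at_reference():
                self.position_steps = 0
                return taken
            # A single step toward the reference would be issued to the motor here.
        raise RuntimeError("reference position not found")

    def steps_to(self, angle_from_reference_deg: float) -> int:
        """Signed number of steps needed to reach the requested mirror angle."""
        if self.position_steps is None:
            raise RuntimeError("drive not homed")
        target = round(angle_from_reference_deg / self.deg_per_step)
        delta = target - self.position_steps
        self.position_steps = target
        return delta
```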

Provided in the main body control unit 2100 are: a ROM 2105 storing the program, image data and sound data for the shooting video game processing described later; a RAM 2106 for temporarily storing the program read from the ROM 2105 and data used in the program; a game control unit 2103 for controlling the overall progress of the game based on the program loaded into the RAM 2106; a drawing control unit (image drawing processor) 2101 for writing image data corresponding to the projected image of the projector 231 into the frame buffer 2102 while performing image-specific processing such as polygon drawing and texture mapping in accordance with the coordinates of objects having a 3D shape within the game space; and a sound control unit (sound control processor) 2104 comprising an ADPCM sound source for reproducing sounds from the sound data.

In the shooting video game processing executed by the game control unit 2103, as shown in FIG. 36, if coin insertion is not detected by the coin switch 237 (NO at ST22), demo image data is read and a demo screen is displayed (ST21). When the insertion of a coin is detected (YES at ST22), the start screen is displayed (ST23). When the pressing of the start button 236 is further detected, other game data is read (ST24), namely the image data and sound data that differ per stage and the data characterizing the attack and movement of the enemy characters (the foregoing dinosaur or other shooting targets) and the movement of the player, and the game start processing is executed (ST25) to start the game.

With the present game machine, similar to conventional hand-to-hand combat game machines, a virtual life is set for the player and is reduced in accordance with the time limit of the game and attacks by the enemy characters. The game ends when time runs out during play (YES at ST26) or when the life runs out (NO at ST27), and a screen indicating game over is displayed (ST213). If time remains (NO at ST26) and life remains (YES at ST27), the game continues in the game processing main body (ST28, described in detail later with reference to FIG. 38 and the like).

When a stage is cleared (YES at ST29) by defeating the enormous dinosaur shown in FIGS. 2 to 4 of the first embodiment, and the cleared stage is not the final stage (NO at ST210), the processing from ST24 is repeated for the new stage. When the cleared stage is the final stage (YES at ST210), the markers 26 to 29 are then turned off (ST211), the ending screen and the game-over screen are displayed (ST212, ST213), and the routine returns to the processing of ST21.
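The flow of FIG. 36, as described in the text, can be summarized as a transition function over the step labels. The decision nodes ST22, ST27, ST29 and ST210 are folded into the transitions out of the preceding steps. The text does not spell out where a NO at ST29 leads; looping back to the time check at ST26 is an assumption, as is the return from ST213 to ST21 after an ordinary game over.

```python
from dataclasses import dataclass


@dataclass
class Inputs:
    coin: bool = False           # coin switch 237 (decided at ST22)
    start: bool = False          # start button 236
    time_up: bool = False        # ST26
    life_left: bool = True       # ST27
    stage_cleared: bool = False  # ST29
    final_stage: bool = False    # ST210


def next_step(step: str, i: Inputs) -> str:
    """One transition of the FIG. 36 flow, as read from the text."""
    if step == "ST21":                        # demo screen
        return "ST23" if i.coin else "ST21"   # ST22 decides
    if step == "ST23":                        # start screen
        return "ST24" if i.start else "ST23"
    if step == "ST24":                        # read per-stage game data
        return "ST25"
    if step == "ST25":                        # game start processing
        return "ST26"
    if step == "ST26":                        # time check, then life check (ST27)
        if i.time_up or not i.life_left:
            return "ST213"
        return "ST28"
    if step == "ST28":                        # game processing main body
        if not i.stage_cleared:
            return "ST26"                     # NO at ST29 (assumed loop back)
        return "ST211" if i.final_stage else "ST24"   # ST210
    if step == "ST211":                       # markers 26 to 29 off
        return "ST212"
    if step == "ST212":                       # ending screen
        return "ST213"
    if step == "ST213":                       # game-over screen
        return "ST21"
    raise ValueError(f"unknown step {step}")
```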

FIG. 37 is a block diagram showing the structure of the principal parts of the game processing unit 2400 (part of the shooting video game program), which performs the processing of the game processing main body at ST28 of FIG. 36, and FIG. 38 is a flowchart showing the detailed procedure of the processing of the game processing main body at ST28. FIG. 39 and FIG. 40 are diagrams explaining the detection, by the player detection sensors 251 to 254, of the position of the player (corresponding to the player 300 of FIG. 1 and FIG. 5 in the first embodiment) on the play area PE2, in a one-player game with only the 1P player.

As shown in FIG. 37, as processing units for performing processing related to the player (the virtual viewpoint, or virtual player, within the game space), the game processing unit 2400 has: a light-up processing unit 2400a for lighting the one of the markers 26 to 29 corresponding to the position on the screen 2121 at which the projected image is displayed; a muzzle direction detection unit 2401 for detecting the position on the screen 2121 at which the muzzle is pointed, based on the image captured by the CCD camera 213; an I/O input unit 2402 for inputting the on-states of the pump trigger switch 212 and the trigger switch 211 and the detection status of the player detection sensors 251 to 254; a bullet-loading processing unit 2403 for processing the loading of a prescribed number of virtual bullets when the pump trigger switch 212 is turned on; a bullet position computation unit 2404 for setting, when the trigger switch 211 is turned on, the coordinates of the bullets so that they move from the vicinity of the virtual viewpoint within the game space in a direction according to the direction of the muzzle 216; a viewpoint position shifting unit 2405 for ordinarily shifting the virtual viewpoint within the game space (by a shifting width designated in advance) and for shifting the virtual viewpoint so as to avoid the dinosaur when the player detection sensors 251 to 254 detect the movement of the player on the play area PE2; and a collision judgment unit 2406 for judging whether a virtual attack from the enemy has hit the player.
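The bullet position computation described above amounts to spawning a projectile near the virtual viewpoint and advancing it along the muzzle direction each frame. The following is a minimal sketch, assuming the muzzle direction has already been converted into a game-space vector; the bullet speed, the spawn offset and the explicit Euler update are hypothetical choices.

```python
from dataclasses import dataclass
import math

Vec3 = tuple[float, float, float]


def normalize(v: Vec3) -> Vec3:
    n = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if n == 0.0:
        raise ValueError("zero-length direction")
    return (v[0] / n, v[1] / n, v[2] / n)


@dataclass
class Bullet:
    pos: Vec3
    vel: Vec3

    def step(self, dt: float) -> None:
        """Advance the bullet by one frame of length dt (explicit Euler)."""
        self.pos = tuple(p + v * dt for p, v in zip(self.pos, self.vel))


def fire(viewpoint: Vec3, muzzle_dir: Vec3,
         speed: float = 50.0, offset: float = 0.5) -> Bullet:
    """Spawn a bullet just ahead of the virtual viewpoint, moving along the
    muzzle direction. `speed` and `offset` are hypothetical tuning values."""
    d = normalize(muzzle_dir)
    start = tuple(p + offset * c for p, c in zip(viewpoint, d))
    return Bullet(start, tuple(speed * c for c in d))
```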

Further, as processing units for performing processing related to the enemy character, the game processing unit 2400 has: an enemy attack setting unit 2407 for generating an attack on the player (using random numbers or the like) when the enemy character is sufficiently close to the player; an enemy movement processing unit 2408 for moving the enemy character by setting its coordinates so that it chases the player within the game space; and an enemy collision judgment unit 2409 for judging whether a virtual attack from the player has hit the enemy. The game processing unit 2400 further has: an image processing unit 2410 for setting data that instructs the drawing control unit 2101 to draw based on the enemy character coordinates so set, and for rotating the stepping motor 241 according to where on the screen 2121 the projected image of the projector 231 is to be displayed, for example on the upper part or the lower part; and a sound processing unit 2411 for setting data that instructs the sound control unit 2104 to selectively reproduce sounds (including music) according to the progress of the game.
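The chase and attack behaviour of units 2408 and 2407 can be sketched on a ground plane as follows. The text only says that the enemy's coordinates are set so as to chase the player and that attacks are generated, for example with random numbers, when the enemy is sufficiently close; the constant-speed step, the reach threshold and the per-check attack probability are assumptions.

```python
import math
import random

Vec2 = tuple[float, float]   # ground-plane (x, z) coordinates, an assumption


def chase_step(enemy: Vec2, player: Vec2, speed: float, dt: float) -> Vec2:
    """Move the enemy toward the player by at most speed * dt, without overshooting."""
    dx, dz = player[0] - enemy[0], player[1] - enemy[1]
    dist = math.hypot(dx, dz)
    step = speed * dt
    if dist <= step:
        return player
    return (enemy[0] + dx / dist * step, enemy[1] + dz / dist * step)


def maybe_attack(enemy: Vec2, player: Vec2, reach: float,
                 chance: float, rng: random.Random) -> bool:
    """Generate an attack only when within `reach`, gated by a random draw."""
    if math.dist(enemy, player) > reach:
        return False
    return rng.random() < chance
```

Passing a seeded `random.Random` instance keeps the behaviour reproducible for testing.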

In the game processing main body executed by the game processing unit 2400, which includes each of the foregoing processing units, as shown in FIG. 38, light-up processing of the one of the markers 26 to 29 corresponding to the position on the screen 2121 at which the projected image 2124 is displayed is conducted first (ST280); muzzle direction detection processing is then conducted by the muzzle direction detection unit 2401 (ST281, described later in detail with reference to FIG. 44 and the like); and the response status of the pump trigger switch 212, the trigger switch 211 and the player detection sensors 251 to 254 is obtained by the I/O input unit 2402 (ST282).
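The light-up processing at ST280 selects one marker according to where the projected image is displayed. The text does not give the selection rule; choosing the marker whose mounting height is nearest the centre of the projected image is an assumption used in this sketch, which also keeps exactly one marker lit.

```python
def select_marker(image_center_y_mm: float, marker_y_mm: dict[int, float]) -> int:
    """Return the id of the marker mounted nearest the projected image's centre."""
    return min(marker_y_mm, key=lambda m: abs(marker_y_mm[m] - image_center_y_mm))


def light_up(image_center_y_mm: float, marker_y_mm: dict[int, float]) -> dict[int, bool]:
    """ST280 sketch: exactly one marker on, all others off."""
    lit = select_marker(image_center_y_mm, marker_y_mm)
    return {m: (m == lit) for m in marker_y_mm}
```

For example, with hypothetical mounting heights of 150, 1050, 1950 and 2850 mm for markers 26 to 29, an image centred at 1000 mm lights marker 27 only.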