U.S. Pat. No. 6,973,430

METHOD FOR OUTPUTTING VOICE OF OBJECT AND DEVICE USED THEREFOR

Assignee: Sony

Issue Date: December 27, 2001

U.S. Patent No. 6,973,430: Method for outputting voice of object and device used therefor

Summary:

The ’430 patent describes a voice-activated system that lets the player control an animated character not only with the physical controller but also with spoken commands. The voice processing method detects the player’s voice tone by analyzing the meaning of the speech and the detected voice level. The system also provides a tendency detection means, which detects voice tone and helps the game recognize what command is being spoken.

Abstract:

The present invention detects a voice tone of a player based on input voice information, and then outputs voice data having a voice tone corresponded to the detected voice tone as voice data of an object, to thereby allow the player to operate a game object through voice input.

Illustrative Claim:

1. A voice processing method comprising the steps of: detecting a voice tone based on inputted voice information; determining a plurality of groups corresponding to a plurality of voice data; classifying the detected voice tone into at least one of the plurality of groups; and outputting voice data whose voice tone corresponds to the detected voice tone; wherein the step of outputting voice data outputs voice data corresponding to the at least one group if a count of voice tones classified for the at least one group exceeds a predetermined number.
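The counting condition in the claim can be sketched as follows. This is a minimal illustration, not the patented implementation: the reply strings, the fallback group, and the threshold value are assumptions, and only the "polite" and "gentle" tone names come from the description.

```python
from collections import Counter

# Hypothetical voice data per tone group (contents are assumed).
VOICE_DATA = {
    "polite": "Understood. I will proceed.",
    "gentle": "Okay, let's go.",
}
DEFAULT_GROUP = "gentle"  # assumed fallback before any group qualifies
THRESHOLD = 3             # the claim's "predetermined number" (assumed value)


class ToneTally:
    """Counts detected tones per group and picks the group to answer in."""

    def __init__(self, threshold=THRESHOLD):
        self.counts = Counter()
        self.threshold = threshold

    def classify(self, tone):
        # Register one detected tone into its group.
        if tone not in VOICE_DATA:
            raise ValueError(f"unknown tone group: {tone}")
        self.counts[tone] += 1

    def output_group(self):
        # Only groups whose count exceeds the threshold are eligible.
        eligible = [g for g, n in self.counts.items() if n > self.threshold]
        if not eligible:
            return DEFAULT_GROUP
        return max(eligible, key=lambda g: self.counts[g])

    def output_voice(self):
        return VOICE_DATA[self.output_group()]
```

A group only starts to drive the output once its count is strictly greater than the threshold, which mirrors the word "exceeds" in the claim.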

Illustrative Figure

Description

DETAILED DESCRIPTION OF THE PREFERRED EMBODIMENTS

The present invention is applicable, for example, to a video game machine as shown in FIG. 1.

General Constitution of Video Game Machine

The video game machine shown in FIG. 1 comprises a main unit 1 for executing a battle-type video game described below, a controller 2 to be handled by a player, and a head set 3 having integrated therein a sound outputting portion for outputting effective sound and so forth of such video game and a microphone set for picking up the player's voice.

The main unit 1 comprises an operational command input section 11 to which operational commands are supplied from the controller 2 handled by the player, a voice level detection section 12 for detecting the player's voice level based on sound signals transmitted from the microphone of the head set 3, a voice recognition section 13 for recognizing the meaning of the player's voice based on voice signals transmitted from the microphone of the head set 3, and a counter 10 for obtaining tone-based statistics of the player's voice by counting the player's voices as being classified into those of polite tone, gentle tone and so forth.

The main unit 1 also has a parameter storage section 14 for storing parameters expressing the number of enemies, apparent fearfulness, distance between the leading character and an enemy character and so forth read out from an optical disk 19; a selection/event table storage section 20 for storing a plurality of selection tables classified by the individual categories, where each selection table comprises a plurality of event tables and each event table contains a plurality of events expressing behaviors of the leading character and enemy characters; an optical disk reproduction section 15 for reading out such parameters or game programs from the optical disk 19 loaded thereon; a display processing section 16 responsible for the controlled display of game scenes onto a display device 18; and a control section 17 for controlling the entire video game machine.
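The nesting described for the selection/event table storage section 20 (selection tables by category, each holding event tables, each holding events) might be modeled as below. The class names, category name, and event strings are hypothetical and serve only to show the shape of the data.

```python
from dataclasses import dataclass, field


@dataclass
class EventTable:
    name: str
    events: list = field(default_factory=list)  # character behaviors


@dataclass
class SelectionTable:
    category: str
    event_tables: dict = field(default_factory=dict)  # name -> EventTable

    def add(self, table):
        self.event_tables[table.name] = table


def build_store(selection_tables):
    """Index selection tables by category, as section 20 might."""
    return {t.category: t for t in selection_tables}


def lookup_events(store, category, table_name):
    """Return the events of one event table inside one category."""
    return store[category].event_tables[table_name].events


# Hypothetical contents for illustration only.
battle = SelectionTable("battle")
battle.add(EventTable("attack", ["aim", "fire"]))
battle.add(EventTable("retreat", ["turn", "run"]))
store = build_store([battle])
```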

Constitution of Controller

An appearance of the controller 2 is shown in FIG. 2. As is clear from FIG. 2, the controller 2 has two grip ends 20R, 20L so as to allow a player to grip such grip ends 20R, 20L with the right and left hands, respectively, to thereby hold the controller 2.

The controller 2 also has first and second operational portions 21, 22 and analog operational portions 23R, 23L at positions operable by, for example, the individual thumbs while holding the grip ends 20R, 20L with the right and left hands, respectively.

The first operational portion 21 is responsible typically for instructing an advancing direction of the game character, and comprises an upward prompt button 21a for prompting upward direction, a downward prompt button 21b for prompting downward direction, a rightward prompt button 21c for prompting rightward direction, and a leftward prompt button 21d for prompting leftward direction.

The second operational portion 22 comprises a “Δ” button 22a having a “Δ” marking, a “x” button 22b having a “x” marking, a “∘” button 22c having a “∘” marking, and a “□” button 22d having a “□” marking.

The analog operational portions 23R, 23L are designed to be kept upright (not-inclined state, or in a referential position) when they are not inclined for operation, but when they are inclined for operation while being pressed down, a coordinate value on an X-Y coordinate is detected based on the amount and direction of the inclination from the referential position, and such coordinate value is supplied as an operational output via the not-illustrated controller plug-in portion to the main unit 1.

The controller 2 is also provided with a start button 24 for prompting the game start, a selection button 25 for selecting predetermined subjects, and a mode selection switch 26 for toggling between an analog mode and a digital mode. When the analog mode is selected with the mode selection switch 26, a light emitting diode 27 (LED) is lit under control, and the analog operational portions 23R, 23L are activated. When the digital mode is selected, the light emitting diode 27 (LED) is turned off under control, and the analog operational portions 23R, 23L are deactivated.

The controller 2 is still also provided with a right button 28 and a left button 29 at positions operable by, for example, the individual second fingers (or third fingers) while holding the grip ends 20R, 20L with the right and left hands, respectively. The individual buttons 28, 29 comprise first and second right buttons 28R1, 28R2 and first and second left buttons 29L1, 29L2, respectively, aligned side by side in the direction of the thickness of the controller 2.

The player is expected to operate these buttons to enter operational commands for the video game machine or characters.

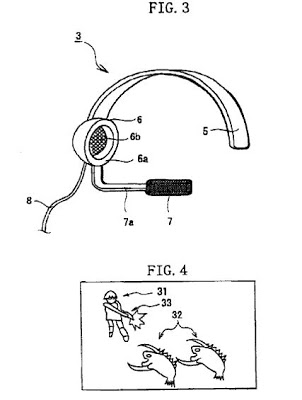

Constitution of Head Set

As shown in FIG. 3, the head set 3 is typically designed for single-ear use, and has a fitting arm 5 for fitting the head set 3 onto the player's head, a sound outputting portion 6 provided at an end of the fitting arm 5, and a microphone 7.

The fitting arm 5 is designed in a curved shape so as to fit the human head profile, and so as to lightly press both sides of the player's head with both ends thereof, to thereby attach the head set 3 onto the player's head.

The sound outputting portion 6 has a pad portion 6a which can cover the entire portion of the player's right (or left) ear when the head set 3 is fitted on the player's head, and a speaker unit 6b for outputting effective sound and so forth of the video game. The pad portion 6a is composed, for example, of a soft material such as sponge so as to avoid pain on the player's ear caused by wearing such head set 3 for a long time.

The microphone 7 is provided on the end of a microphone arm 7a, the opposite end of which is attached to the sound outputting portion 6. The microphone 7 is designed to be positioned close to the player's mouth when the head set 3 is fitted on the player's head, which is convenient for picking up the player's voice and supplying sound signals corresponding thereto through a cable 8 to the voice level detection section 12 and the voice recognition section 13 of the main unit 1.

Although the following explanation deals with the head set 3 designed for single-ear use, it should be noted that a binaural specification, such as a general headphone, is also allowable. The sound outputting portion may have an inner-type earphone, which will be advantageous in reducing the size and weight of such head set.

It should also be noted that while the head set 3 herein is designed to be fitted on the player's head using the fitting arm 5, it is also allowable to provide a hook to be hung on either of the player's ears, to thereby allow the head set to be fixed on one side of the player's ear with the aid of such hook.

Executive Operation of Video Game

Next, executive operation of a battle-type video game on the video game machine of this embodiment will be explained.

In this battle-type video game, a leading character moves from a start point to a goal point along a predetermined route, during which the leading character encounters enemy characters. Thus, the player operates the controller 2 and also speaks to the leading character in the displayed scene through the microphone 7 of the head set 3 to encourage it or make such leading character fight with enemy characters while giving instructions on the battle procedures. The player thus aims at the goal while defeating the enemy characters in such fights.

In the execution of such battle-type video game, the player loads the optical disk 19 having stored therein such battle-type video game into the main unit 1, and then presses the start button 24 of the controller 2 to prompt the game start. An operational command for prompting the game start is then supplied through the operational command input section 11 to the control section 17 so as to control the optical disk reproduction section 15, to thereby reproduce a game program, the individual parameters for the leading character, enemy characters and arms owned by the leading character, a plurality of event tables containing a plurality of tabulated events expressing behaviors of the leading character and enemy characters, and a plurality of selection tables comprising a plurality of event tables classified by the individual categories and so forth.

The control section 17 stores under control the individual parameters reproduced by the optical disk reproduction section 15 into the parameter storage section 14, and the individual selection tables and event tables into the selection/event table storage section 20.

From such optical disk 19, also reproduced is voice information provided for the individual voice tones of the leading character 31 as described later. The voice information provided for the individual voice tones is stored under control in the selection/event table storage section 20, and is properly read out by the control section 17 to be supplied to the head set 3 worn by the player.

The control section 17 also generates a game scene of the battle-type video game based on the game program reproduced by the optical disk reproduction section 15 and operation through the controller 2 by the player, and then displays such scene on the display device 18 after processing by the display processing section 16.

FIG. 4 shows one scene of such game, in which a leading character 31 encounters an enemy character 32 during the travel along the travel route, and points arms 33, such as a laser beam gun, at the enemy character 32.

Parameters

The leading character 31, enemy character 32 and arms 33 used by the leading character 31 are individually provided with parameters allowing real-time changes.

Leading Character Parameters

Parameters owned by the leading character 31 are composed as shown in FIG. 5, which typically include vital power (life), mental power, apparent fearfulness, skill level, accuracy level, residual number of bullets on the arms 33, enemy search ability, attack range, direction of field of view (forward field of view), motional speed (speed), terror, offensive power, defensive power, continuous shooting ability of the arm 33, damage score (damage counter), decreasing rate of bullets in a magazine of the arm 33 (consumption level of magazine), angle of field of view, sensitivity of field of view (field of view (sense)), short-distance offensive power, middle-distance offensive power, long-distance offensive power, dodge skill from short-distance attack by the enemy (dodge characteristic), dodge skill from middle-distance attack by the enemy, dodge skill from long-distance attack by the enemy, endurance power against short-distance attack by the enemy (defensive characteristic), endurance power against middle-distance attack by the enemy, and endurance power against long-distance attack by the enemy.

Among these, vital power, offensive power, defensive power, and damage score are expressed by values from 0 to 255, which decrease depending on damage caused by the enemy. The motional speed is expressed in 16 steps from 0 to 15. The subjects listed from “mental power” to “enemy search ability”, terror, consumption level of magazine, and subjects listed from “short-distance offensive power” to “endurance power against long-distance attack” are expressed in percent (%).

The continuous shooting ability is expressed by the number of frames for displaying such continuous shooting. The attack range, direction of field of view (forward field of view), angle of field of view, and sensitivity of field of view are individually expressed in a unit of “maya”.
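The value ranges stated above (0 to 255 for vital and offensive power, 16 steps from 0 to 15 for motional speed, percent for terror) can be enforced with a small clamping helper. The sketch below covers only a few of the listed parameters; the class name, field names, and default values are assumptions.

```python
from dataclasses import dataclass


def clamp(value, low, high):
    """Keep value inside the closed interval [low, high]."""
    return max(low, min(high, value))


@dataclass
class LeadingCharacterParams:
    vital_power: int = 255      # 0 to 255
    offensive_power: int = 255  # 0 to 255
    motional_speed: int = 15    # 16 steps, 0 to 15
    terror_pct: float = 0.0     # percent, 0 to 100

    def take_damage(self, amount):
        # Vital power decreases with damage but never drops below 0.
        self.vital_power = clamp(self.vital_power - amount, 0, 255)

    def set_speed(self, step):
        self.motional_speed = clamp(step, 0, 15)

    def set_terror(self, percent):
        self.terror_pct = clamp(percent, 0.0, 100.0)
```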

Enemy Character Parameters

Parameters owned by the enemy character 32 are composed as shown in FIG. 6, which typically include vital power (life), mental power, apparent fearfulness, skill level, accuracy level, residual number of bullets on the arms, enemy search ability, attack range, direction of field of view (forward field of view), motional speed (speed), terror, offensive power, defensive power, continuous shooting ability of the arm, damage score (damage counter), decreasing rate of bullets in a magazine of the arm (consumption level of magazine), angle of field of view, sensitivity of field of view (field of view (sense)), short-distance offensive power, middle-distance offensive power, long-distance offensive power, dodge skill from short-distance attack by the leading character (dodge characteristic), dodge skill from middle-distance attack by the leading character, dodge skill from long-distance attack by the leading character, endurance power against short-distance attack by the leading character (defensive characteristic), endurance power against middle-distance attack by the leading character, and endurance power against long-distance attack by the leading character.

Other parameters specific to enemy characters include endurance power against attack by the leading character (stroke endurance), endurance power against attack by the leading character using a flame thrower (fire endurance), endurance power against attack by the leading character using a water thrower (water endurance), endurance power against attack by the leading character using an acid thrower (acid endurance), endurance power against thunder shock caused by the leading character (thunder endurance), weak point ID, ability for pursuing the leading character (persistency), and critical endurance.

Among these, vital power, offensive power, defensive power, and damage score are expressed by values from 0 to 255, which decrease depending on damage given by the leading character. The motional speed is expressed in 16 steps from 0 to 15. The subjects listed from “mental power” to “enemy search ability”, terror, consumption level of magazine, and subjects listed from “short-distance offensive power” to “weak point ID” are expressed in percent (%).

The continuous shooting ability is expressed by the number of frames for displaying such continuous shooting. The attack range, direction of field of view (forward field of view), angle of field of view, and sensitivity of field of view are individually expressed in a unit of “maya”.

Arms Parameters

Parameters for the arms 33 owned by the leading character 31 are composed as shown in FIG. 7, which typically include range, weight (size), offensive power, continuous shooting speed, number of loading, direction of field of view (forward field of view), angle of field of view, sensitivity of field of view (field of view (sense)), bullet loading time, attack range, shooting accuracy, short-distance offensive power, middle-distance offensive power, long-distance offensive power, dodge skill from short-distance attack by the enemy (dodge characteristic), dodge skill from middle-distance attack by the enemy, dodge skill from long-distance attack by the enemy, endurance power against short-distance attack by the enemy (defensive characteristic), endurance power against middle-distance attack by the enemy, and endurance power against long-distance attack by the enemy.

Among these, the range, direction of field of view (forward field of view), angle of field of view, and sensitivity of field of view are expressed in meter (m), and the offensive power is typically expressed by values from 0 to 255. The weight is expressed in kilogram (kg), the number of loading in values from 0 to 1023, and the continuous shooting speed and bullet loading time in the number of frames for displaying such continuous shooting. The subjects listed from “shooting accuracy” to “endurance power against long-distance attack by the enemy” are individually expressed in percent (%).

Display Control Based on Parameters

Such individual parameters are read out from the optical disk 19 as described in the above, and then stored in the parameter storage section 14 shown in FIG. 1. The control section 17 properly reads out the parameters from the parameter storage section 14 depending on a scene or situation, to thereby display under control behaviors of the leading character 31, enemy character 32 and arms 33 used by the leading character 31.

A process flow of the controlled display based on such parameters will be explained referring to a flow chart of FIG. 8. The process flow starts when the main unit 1 starts the video game, and the process by the control section 17 advances to step S1.

In step S1, the control section 17 reads out parameters for the normal state from various parameters stored in the parameter storage section 14, and then, in step S2, displays under control the leading character 31 moving along a predetermined route while keeping a psychological state corresponding to such normal parameters.

Examples of the parameters for the normal state of the leading character 31 read out from the parameter storage section 14 include mental power, terror and skill level as listed in FIG. 9. The individual values of such parameters for the normal state of the leading character 31 are “1” for the mental power, “0.15” for terror, and “1” for skill level.

The “mental power” parameter ranges from 0 to 1 (corresponding to weak to strong) depending on the mental condition of the leading character; the “terror” parameter ranges also from 0 to 1 (corresponding to fearless to fearful) depending on the number or apparent fearfulness of the enemy characters; and the “skill level” parameter ranges again from 0 to 1 (corresponding to less to much) depending on the number of times the game is executed, in which the leading character 31 gains experience by repeating battles with the enemy character 32.

The enemy character 32 is programmed to attack the leading character 31 at predetermined points on the travel route. In step S3 in the flow chart shown in FIG. 8, the control section 17 determines whether the enemy character 32 which may attack the leading character 31 appeared or not, and the process thereof returns to step S2 when the enemy character 32 was not found, to thereby display under control actions of the leading character 31 based on the foregoing parameters for the normal state. This allows the leading character 31 to keep on traveling along the predetermined route.

On the contrary, when the enemy character 32 appeared, the control section 17 reads out in step S4 from the parameter storage section 14 the parameters of the leading character 31 for the case of encountering the enemy character 32.

The parameters of the leading character 31 read out from the parameter storage section 14 for the case of encountering the enemy character 32 include, as typically listed in FIG. 10, those for mental power of the leading character, apparent fearfulness of the enemy character 32, number of the enemies nearby, distance to the enemy character 32 and skill level.

As is clear from FIG. 10, the individual values of such parameters of the leading character 31 for the case of encountering the enemy character 32 are “0.25” for the mental power, “0.1” for the apparent fearfulness of the enemy character 32, “0.1” for the number of enemies nearby, “0” for the distance to the enemy character 32, and “0.1” for the skill level.

The control section 17 displays under control actions of the leading character 31 based on the parameters listed in FIG. 10 for the case of encountering the enemy character 32, where the display of such actions of the leading character 31 can be altered depending on the presence or absence of voice input by the player in such controlled display.

More specifically, the control section 17 determines in step S5 the presence or absence of the player's voice input upon reading out the parameters of the leading character 31 for the case of encountering the enemy character 32, and the process thereof advances to step S9 when the voice input from the player is detected, and advances to step S6 when not detected.

In step S6, reached after detecting no voice input from the player, the control section 17 displays under control the leading character 31 using parameters of such leading character 31 read out from the parameter storage section 14 for the case of encountering the enemy character 32 without alteration.

On the other hand, in step S9, reached after detecting voice input from the player, the control section 17 alters the individual parameters of the leading character 31, read out from the parameter storage section 14 for the case of encountering the enemy character 32, into values corresponding to the player's voice input, and then in step S6, actions of the leading character 31 are displayed under control based on such altered values of the parameters.

FIG. 11 shows an exemplary scene in which the enemy character 32 appeared in front of the leading character moving along the route. In such exemplary case, in order to make the leading character 31 fight with the enemy character 32, the player not only controls the controller 2, but also gives instructions to the leading character 31 through voice such as “Take the fire thrower!” so as to designate an arm to be used for attacking the enemy character 32, and such as “Aim at the belly!” so as to designate a weak point of the enemy character 32 to be aimed at.

The player's voice is picked up by the microphone 7 of the head set 3 shown in FIG. 3, converted into sound signals, which are then supplied to the voice recognition section 13. The voice recognition section 13 analyzes meaning of the phrase spoken by the player based on waveform pattern of such sound signals, and supplies the analytical results to the control section 17. The control section 17 then alters the values of the individual parameters, read out in step S4, of the leading character 31 for the case of encountering the enemy character 32 based on such analytical results. Actions of the leading character 31 are displayed under control based on such altered parameters.

In such exemplary case, in which the instructions of “Take the fire thrower!” and “Aim at the belly!” were made by the player, the control section 17 allows the controlled display such that the leading character 31 holds a fire thrower as the arms 33 and throws fire to the enemy character 32 using such fire thrower to thereby expel it as shown in FIG. 12.
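How recognized phrases might be turned into an action plan for the leading character can be sketched with simple pattern matching. The text does not describe the recognizer's output format, so the phrase patterns, the `arm` and `target` keys, and the function names below are all assumptions; real recognition works on waveform patterns, which this sketch skips by starting from text.

```python
import re

# Assumed phrase patterns mapped to the plan field they set.
PATTERNS = [
    (re.compile(r"take the (.+)"), "arm"),
    (re.compile(r"aim at the (.+)"), "target"),
]


def parse_command(phrase):
    """Map one recognized phrase to a partial plan (empty if unknown)."""
    text = phrase.strip().rstrip("!").strip().lower()
    for pattern, key in PATTERNS:
        match = pattern.fullmatch(text)
        if match:
            return {key: match.group(1)}
    return {}


def build_plan(phrases):
    """Merge several voice instructions into one plan for the character."""
    plan = {}
    for phrase in phrases:
        plan.update(parse_command(phrase))
    return plan
```

For the two instructions in the example scene, `build_plan` yields a plan naming the fire thrower as the arm and the belly as the target.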

In step S7 in the flow chart shown in FIG. 8, the control section 17 determines whether the enemy character 32 was defeated or not, and the operation of the main unit 1 advances to step S8 for the case the enemy character 32 was defeated, and returns to step S5 when not defeated. Then presence or absence of the player's voice input is determined in step S5 as described in the above, and again in step S9 or step S6, actions of the leading character 31 are displayed under control based on the parameters corresponding to the presence or absence of the player's voice input.

In step S8, whether the video game was completed either in response to defeat of the enemy character 32 or in response to an instruction to end the game issued by the player is determined, and the entire routine of the flow chart shown in FIG. 8 is terminated without any other operations when the end of the game was detected, and the operation of the control section 17 returns to step S1 when the game is not completed yet. The control section 17 then reads out the parameters of the leading character 31 for the normal state from the parameter storage section 14, and displays under control the leading character 31 with the parameters for the normal psychological state so as to travel along a predetermined route.
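The order of steps in the FIG. 8 flow can be traced with a short function. This sketch records only the step labels, treating S9 as the parameter alteration reached from S5 and S8 as the end-of-game check reached from S7; the function name and the shape of its inputs are assumptions.

```python
def trace_flow(enemy_appears, rounds):
    """Return the step labels visited in one pass through the flow.

    enemy_appears: outcome of the check in step S3.
    rounds: list of (voice_detected, enemy_defeated) pairs, one per
            pass through steps S5 to S7.
    """
    trace = ["S1", "S2", "S3"]
    if not enemy_appears:
        trace.append("S2")  # keep displaying the normal state
        return trace
    trace.append("S4")      # read encounter parameters
    for voice_detected, enemy_defeated in rounds:
        trace.append("S5")  # voice input present?
        if voice_detected:
            trace.append("S9")  # alter parameters per voice input
        trace.append("S6")  # display actions
        trace.append("S7")  # enemy defeated?
        if enemy_defeated:
            trace.append("S8")  # end-of-game check
            break
    return trace
```

Calling `trace_flow(True, [(False, False), (True, True)])` shows a first round without voice input followed by a round in which a voice command alters the parameters and the enemy is defeated.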

Escaping Action from Enemy Character

While the above description dealt with the case that the leading character 31 fights with the enemy character 32, the leading character 31 does not always fight with the encountered enemy character 32, and the actions thereof may differ depending on the psychological state (parametric values).

More specifically, when a value of the “terror” parameter of the leading character 31 encountering the enemy character 32 is higher than a predetermined value, the control section 17 displays under control the leading character 31 so as to run away from the enemy character 32. FIG. 13 shows the individual parametric values for the leading character 31 in such situation.

As is clear from FIG. 13, when the leading character 31 runs away from the enemy character 32, the individual values of such parameters are “0.7” for the hit ratio of own attack, “0.5” for the terror, “0.4” for the distance to the target, “0.5” for the number of enemies nearby, “0.8” for the hit ratio of the enemy's attack, and “0.6” for the distance to the enemy. The control section 17 is allowed to display under control the leading character 31 so as to run away from the enemy character 32, for example, when the value for the “terror” parameter exceeds “0.5”.

When the player encourages the leading character 31 about to run away with a word such as “Hold out!” or “Don't run away!”, the control section 17 lowers the value for the “terror” parameter by a predetermined value. If the lowered value for the “terror” parameter becomes “0.4” or below, the control section 17 displays under control actions of the leading character 31 based on the parameters for the normal state as described previously referring to FIG. 9. The leading character 31 now has the normal psychological state, stops running away from the enemy character 32 and begins to advance along a predetermined route in a normal way of walking.

Even if the player speaks the words, the controlled display of the leading character 31 running away will be retained by the control section 17 if the “terror” parameter still remains at “0.5” or above. In this case, the leading character 31 keeps on running away from the enemy character 32, disobeying the player. When the leading character 31 has come far enough from the enemy character 32, the control section 17 lowers the value of the “terror” parameter to thereby display under control the leading character 31 so as to have normal actions.
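The two thresholds above behave like a hysteresis band: fleeing starts when terror exceeds 0.5 and stops once encouragement brings it to 0.4 or below. A minimal sketch follows; the size of the "predetermined value" by which terror is lowered is not given in the text, so `ENCOURAGE_STEP` is an assumed figure, and treating values between 0.4 and 0.5 as "keep fleeing" is likewise an assumption.

```python
RUN_THRESHOLD = 0.5    # flee when terror exceeds this (from the text)
CALM_THRESHOLD = 0.4   # back to normal at or below this (from the text)
ENCOURAGE_STEP = 0.1   # assumed size of the "predetermined value"


def encourage(terror, step=ENCOURAGE_STEP):
    """Lower terror after a phrase such as "Hold out!"."""
    # Rounding avoids floating-point residue such as 0.39999999999999997.
    return round(max(0.0, terror - step), 3)


def behavior(terror, fleeing):
    """Decide the displayed behavior given terror and the current state."""
    if fleeing:
        # Assumption: between the two thresholds the character keeps fleeing.
        return "normal" if terror <= CALM_THRESHOLD else "flee"
    return "flee" if terror > RUN_THRESHOLD else "normal"
```

With these assumed numbers, a character fleeing at terror 0.6 needs two encouragements before it returns to normal walking, since the first only brings terror down to 0.5.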

Voice Instruction for Cases Other than Encountering Enemy Character

The player watching the leading character 31 moving along the route may speak to such leading character 31 in the displayed scene such as “Watch out!” or “Be careful!” when the player feels a sign of abrupt appearance of the enemy character 32. Upon receiving such voice input, the control section 17 typically raises the value of the “terror” parameter of the leading character 31 by a predetermined range, and displays under control the leading character based on such raised parametric value.

Since the value of the “terror” parameter was raised by a predetermined range, the control section 17 displays under control the leading character 31 so as to make careful steps along the route while paying attention to the surroundings, which was altered from the previous normal steps.

When the leading character 31 walking with careful steps encounters the enemy character 32 as expected, the control section 17 displays under control actions of the leading character 31 based on the parameters for the case of encountering the enemy character 32, which were previously explained referring to FIG. 10.

When the leading character 31 walking with careful steps did not encounter the enemy character 32 and the situation was judged to be no longer dangerous, the player then gives a voice instruction such as “Out of danger. Forward normally”. The control section 17 reads out the parameters for the normal psychological state according to such voice input as previously explained referring to FIG. 9, to thereby display actions of the leading character 31 based on such parameters.
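The warning and all-clear phrases can be modeled as updates to a small (terror, gait) state. The normal terror value of 0.15 comes from FIG. 9 as quoted earlier; the amount by which a warning raises terror and the gait labels are assumptions made for this sketch.

```python
NORMAL_TERROR = 0.15  # normal-state value listed for FIG. 9
WARNING_STEP = 0.2    # assumed size of the "predetermined range"
WARNINGS = {"watch out!", "be careful!"}
ALL_CLEAR = "out of danger. forward normally"


def apply_phrase(state, phrase):
    """Return the new (terror, gait) state after one spoken phrase."""
    terror, gait = state
    text = phrase.strip().lower()
    if text in WARNINGS:
        # Raised terror makes the character walk with careful steps.
        return (round(min(1.0, terror + WARNING_STEP), 3), "careful")
    if text == ALL_CLEAR:
        # Normal-state parameters are read out again.
        return (NORMAL_TERROR, "normal")
    return (terror, gait)  # unrecognized phrases change nothing
```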

Conversation of Player with Leading Character

While the above description may suggest that only the player one-sidedly gives instructions to the leading character 31, the actual game proceeds while allowing the leading character 31 to occasionally ask the player something or answer to the player, and allowing such player to reply to a question of the leading character 31 or give some instruction on the next action, to thereby control the behavior of the leading character 31 through conversation therewith. The voice tone of the leading character 31 is programmed to vary depending on the voice tone in the response or instruction of the player.

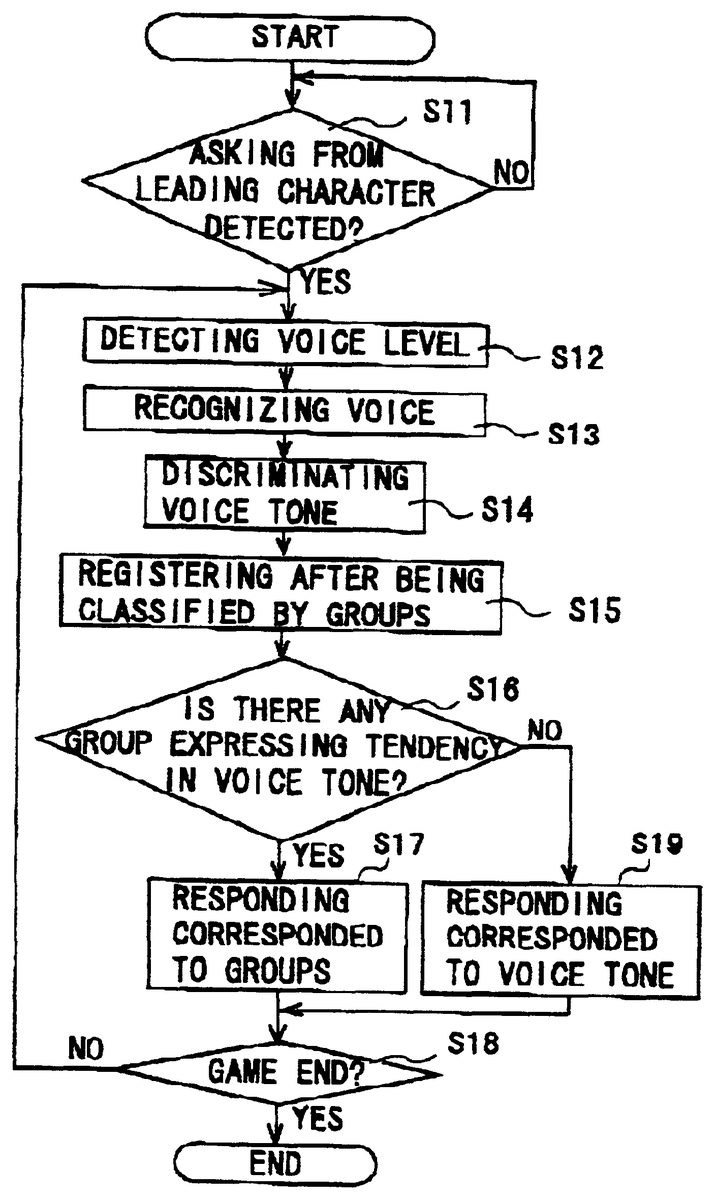

FIG. 14 is a flow chart showing a process flow by which the leading character 31 responds to the player in a voice tone corresponding to the player's voice tone.

The process flow starts from step S11 when the video game begins. The control section 17 reads out from the selection/event table storage section 20 a sound signal for a question issued by the leading character 31 according to a predetermined timing, and sends the signal to the head set 3 worn by the player.

In step S11, the control section 17 determines whether the leading character 31 has asked the player a question by checking whether the sound signal for the question was sent to the head set 3 worn by the player. If the leading character 31 has not asked a question, the control section 17 stays in a stand-by mode until a question is issued; once a question is detected, the process advances to step S12.

When the question is issued by the leading character 31, the player will answer the question. The player's voice as the answer is picked up by the microphone 7 of the head set 3, and supplied as a sound signal to the voice level detection section 12 and the voice recognition section 13. In step S12, the voice level detection section 12 determines the player's voice level by detecting the level of the sound signal, and sends a level detection output to the control section 17. In step S13, the voice recognition section 13 analyzes the meaning of the player's voice based on the waveform pattern of the sound signal, and sends the analytical result to the control section 17.

Next, in step S14, the control section 17 detects the player's voice tone based on the level detection output from the voice level detection section 12 and the analytical result of the meaning of the player's voice from the voice recognition section 13, and then in step S15 registers the detection result into one of the tone groups classified by voice tone.

While the following description deals with the case in which the player's voice tone is detected based on both the level detection output from the voice level detection section 12 and the analytical result of the meaning of the player's voice from the voice recognition section 13, the player's voice tone may also be detected based only on the analytical result of the meaning of the player's voice. Nevertheless, including the level detection output from the voice level detection section 12 raises the accuracy of the detection.

Each voice tone group is assigned a counter 10, the value of which is incremented by one each time the control section 17 classifies the player's voice tone into that group.

Such registration is repeated each time the player's voice tone is analyzed, so that as the video game proceeds the count of the group corresponding to a frequently used voice tone is incremented by one each time the player speaks in that tone. Thus, the tendency in the player's voice tone is expressed by the counts of the individual counters 10.

In step S16, the control section 17 determines whether any group expresses a tendency in the player's voice tone by checking whether the count value of each voice tone group equals or exceeds a predetermined count value. If there is a group whose count value equals or exceeds the predetermined value, a sound signal of the leading character 31 is read out in step S17 from the selection/event table storage section 20 so as to produce a response corresponding to that group, and the sound signal is then sent to the speaker unit 6b of the head set 3 worn by the player. Thus, the response of the leading character 31 is produced in a voice tone corresponding to the voice tone of the player.

On the other hand, the count values of the individual counters 10 stay below the predetermined value shortly after the beginning of the game, or when the player uses a variety of voice tones.

In such a case, the control section 17 determines that there is no group expressing a tendency in the player's voice tone, and in step S19 reads out from the selection/event table storage section 20 a sound signal of the leading character 31 so as to make a response corresponding to the currently detected voice tone of the player, and sends the sound signal to the speaker unit 6b of the head set 3 worn by the player. Thus, the response of the leading character 31 is again produced in a voice tone corresponding to the player's voice tone.
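The registration and tendency check of steps S15 to S19 can be sketched in Python as follows. This is an illustrative sketch only: the class name `ToneTracker`, the threshold value of 3, and the tie-breaking rule (the first group in `TONE_GROUPS` wins when several counters are equal) are assumptions, since the description gives neither a concrete predetermined value nor a rule for ties. The sketch uses the "equals or exceeds" comparison of the description; the claims word the condition as "exceeds".

```python
from collections import Counter
from typing import Optional

TONE_GROUPS = ("polite", "gentle", "general", "negligent")
TENDENCY_THRESHOLD = 3  # hypothetical "predetermined count value"


class ToneTracker:
    """Keeps one counter per tone group, standing in for the counters 10."""

    def __init__(self, threshold: int = TENDENCY_THRESHOLD) -> None:
        self.threshold = threshold
        self.counts = Counter({group: 0 for group in TONE_GROUPS})

    def register(self, tone: str) -> None:
        """Step S15: increment the counter of the classified group by one."""
        if tone not in TONE_GROUPS:
            raise ValueError(f"unknown tone group: {tone}")
        self.counts[tone] += 1

    def tendency(self) -> Optional[str]:
        """Step S16: return a group whose count reached the threshold, if any."""
        tone, count = max(self.counts.items(), key=lambda item: item[1])
        return tone if count >= self.threshold else None

    def response_tone(self, detected_tone: str) -> str:
        """Steps S15 to S19: register the tone, then choose the response tone."""
        self.register(detected_tone)
        # S17 if a tendency exists, otherwise S19 (follow the current tone).
        return self.tendency() or detected_tone
```

With a threshold of 3, the first two polite answers are simply echoed in the current tone; from the third polite answer onward the sketch keeps responding in the polite tone even if a later answer is negligent, which mirrors the tendency-based branch of step S17.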

The above description will be further detailed assuming that the leading character 31 is a female character. As shown in FIG. 4, when the leading character 31 encounters the enemy character 32, the control section 17 reads out voice information such as “Damn you!”, “Don't be like a fool and show me the weak point!” or the like from those stored in the selection/event table storage section 20, and sends it to the head set 3 worn by the player.

When instructions such as “Take the fire thrower!” and “Aim at the belly!” are given by the player in response to such words of the leading character 31, the control section 17 controls the display so as to make the leading character 31 hold a fire thrower as an arm 33 and repel the enemy character 32 by throwing fire with it.

On the other hand, when no instruction is given by the player to the leading character 31, the control section 17 selects voice information such as “Are you hearing?” from those stored in the selection/event table storage section 20, and sends it to the head set 3 worn by the player, to thereby repeat the question by the leading character 31 and prompt an answer from the player.

The player now gives answers to the leading character 31, which may be given in a variety of voice tones.

FIG. 15 shows exemplary positive answers to the question “Are you hearing?” by the leading character 31, which include “Yes, I am.”, “Sure.”, “Yeah.” and “Hum, so what?”.

“Yes, I am.” corresponds to the polite voice tone, “Sure.” to the gentle voice tone, “Yeah.” to the general voice tone, and “Hum, so what?” to the negligent voice tone.

The control section 17 determines the player's voice tone in steps S12 to S14 shown in FIG. 14, classifies it into one of the “polite tone group”, “gentle tone group”, “general tone group” and “negligent tone group”, and increments the counter 10 of the corresponding group by one.
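The mapping from recognized answers to tone groups can be sketched as a simple lookup. This is a hedged illustration using only the four positive answers quoted above; the function name `classify_tone`, the table name `ANSWER_TONES`, and the text normalization are assumptions, and the sketch ignores the voice level that the description says may also be taken into account.

```python
from typing import Optional

# Positive answers quoted in the description, keyed without trailing
# punctuation so that "Yes, I am" and "Yes, I am." map to the same group.
ANSWER_TONES = {
    "yes, i am": "polite",
    "sure": "gentle",
    "yeah": "general",
    "hum, so what": "negligent",
}


def classify_tone(recognized_text: str) -> Optional[str]:
    """Return the tone group for a recognized answer, or None if unknown."""
    key = recognized_text.strip().lower().rstrip(".!?")
    return ANSWER_TONES.get(key)
```

Returning `None` for unrecognized text is a design choice of this sketch; the description does not say how unclassifiable answers are handled.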

Executing such an increment every time the player's voice tone is analyzed yields the tendency of the player's voice tone, expressed by the count values of the individual voice tone groups as described above. If there is a group expressing a tendency of the player's voice tone, the control section 17 reads out, from the selection/event table storage section 20, voice information which belongs to the group corresponding to the player's voice tone, and supplies the voice information to the head set 3 worn by the player. Conversely, if there is no group expressing a tendency of the player's voice tone, the control section 17 reads out in step S19 voice information corresponding to the currently analyzed voice tone of the player from the selection/event table storage section 20, and sends such voice information to the head set 3 worn by the player.

For example, when the player answers “Yes, I am.”, which belongs to the “polite tone group”, in response to the question “Are you hearing?” by the leading character 31, the control section 17 reads out voice information of the leading character 31 such as “Oops! Your polite answer makes me ill!” from the selection/event table storage section 20, and then sends the voice information to the head set 3 worn by the player.

Similarly, when the player answers “Sure.” or “Yeah.”, which belong to the “gentle tone group” and “general tone group”, respectively, in response to the question “Are you hearing?” by the leading character 31, the control section 17 reads out casual voice information of the leading character 31 such as “OK!” from the selection/event table storage section 20, and then sends the voice information to the head set 3 worn by the player.

Similarly again, when the player answers “Hum, so what?”, which belongs to the “negligent tone group”, in response to the question “Are you hearing?” by the leading character 31, the control section 17 reads out voice information of the leading character 31 such as “Why can you say in that way? I'm mad!” from the selection/event table storage section 20, and then sends the voice information to the head set 3 worn by the player.

The above description dealt with an exemplary case in which the player gave a positive answer to the leading character 31. By contrast, an exemplary case in which the player gives a negative answer to the question is shown in FIG. 16.

The negative answers to the question “Are you hearing?” by the leading character 31 include “No, I'm not.”, “No, sorry.”, “No.” and “No, so what?”.

“No, I'm not.” corresponds to the polite voice tone, “No, sorry.” to the gentle voice tone, “No.” to the general voice tone, and “No, so what?” to the negligent voice tone.

When the player answers “No, I'm not.”, which belongs to the “polite tone group”, in response to the question “Are you hearing?” by the leading character 31, the control section 17 reads out voice information of the leading character 31 such as “Listen to me carefully, then!” from the selection/event table storage section 20, and then sends the voice information to the head set 3 worn by the player.

Similarly, when the player answers “No, sorry.”, which belongs to the “gentle tone group”, in response to the question “Are you hearing?” by the leading character 31, the control section 17 reads out voice information of the leading character 31 such as “Then listen to me carefully 'cause I'll tell you once more!” from the selection/event table storage section 20, and then sends the voice information to the head set 3 worn by the player.

Similarly again, when the player answers “No.”, which belongs to the “general tone group”, in response to the question “Are you hearing?” by the leading character 31, the control section 17 reads out voice information of the leading character 31 such as “Why ain't you listening to me? Don't make me repeat the same phrase!” from the selection/event table storage section 20, and then sends the voice information to the head set 3 worn by the player.

Likewise, when the player answers “No, so what?”, which belongs to the “negligent tone group”, in response to the question “Are you hearing?” by the leading character 31, the control section 17 reads out voice information of the leading character 31 such as “Hey you! Are you really going to help me? Listen to me carefully 'cause I'll tell you once more!” from the selection/event table storage section 20, and then sends the voice information to the head set 3 worn by the player.
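The positive and negative examples of FIG. 15 and FIG. 16 amount to a lookup keyed by the kind of answer and the tone group. The Python sketch below collects the quoted responses into such a table; the dictionary `RESPONSES`, the `"yes"`/`"no"` polarity labels, and the function `select_response` are hypothetical stand-ins for the selection/event table storage section 20, whose actual layout the description does not specify.

```python
# Responses of the leading character quoted in the description, keyed by
# (answer polarity, tone group). The key structure is an assumption.
RESPONSES = {
    ("yes", "polite"): "Oops! Your polite answer makes me ill!",
    ("yes", "gentle"): "OK!",
    ("yes", "general"): "OK!",
    ("yes", "negligent"): "Why can you say in that way? I'm mad!",
    ("no", "polite"): "Listen to me carefully, then!",
    ("no", "gentle"): "Then listen to me carefully 'cause I'll tell you once more!",
    ("no", "general"): "Why ain't you listening to me? "
                       "Don't make me repeat the same phrase!",
    ("no", "negligent"): "Hey you! Are you really going to help me? "
                         "Listen to me carefully 'cause I'll tell you once more!",
}


def select_response(polarity: str, tone: str) -> str:
    """Look up the leading character's line for an answer polarity and tone."""
    try:
        return RESPONSES[(polarity, tone)]
    except KeyError:
        raise ValueError(f"no response stored for {polarity!r}/{tone!r}") from None
```

In a fuller sketch the `tone` argument would be either the tendency group or the currently detected tone, depending on whether any counter has reached the predetermined value.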

In this video game, the player and the leading character 31 are partners fighting a common enemy. With the video game machine of this embodiment, the personality of the player can be mirrored by the game character by altering the voice tone of the leading character 31 to correspond to that of the player. This makes the player feel familiar with the game character and promotes empathy of the player, to thereby further enhance the enjoyment of the video game.

The above conversation between the player and the leading character 31 is only one example, and it is to be understood that the video game may proceed based on any other conversation between the player and the leading character 31 in which the voice tone of the leading character 31 varies depending on the voice tone of the player.

After such control of the response of the leading character 31, the control section 17 determines in step S18 in FIG. 14 whether the battle-type video game is completed by checking whether the player issued an instruction to end the game, or whether the game story has come to its end. If the end of the game is detected, the entire routine shown in the flow chart completes without any further processing. If the end of the game is not detected, the process goes back to step S12, and the foregoing voice tone detection, group-classified registration, and response of the leading character 31 based on the voice tone groups or on the player's current voice tone are repeated until the end of the game is detected in step S18.

Effect of the Embodiment

As is clear from the above description, when a predetermined event such as the appearance of the enemy character 32 occurs, the video game machine of this embodiment reads out parameters corresponding to such event to thereby control display of the leading character's behavior based on such parameters, and such display of the leading character 31 can also be controlled by modifying such parameters according to the player's voice when it is recognized. This allows control of the leading character 31 both through the controller and through sound and voice input.

Since the leading character 31 is controllable not only through the controller but also through voice input, the player readily empathizes with the video game, which encourages the player to participate actively in the game. Such pleasure in operating the game character enhances enjoyment of the video game.

Since the control section 17 also controls the behaviors of the game characters based on the individual parameters, the behaviors of the game characters do not always depend on the voice input by the player, which provides another source of enjoyment in such video game.

Since the video game machine of this embodiment can modify the voice tone of the leading character 31 to correspond to that of the player, the personality of the leading character 31 can be matched to that of the player. This makes the player feel familiar with the game character and promotes empathy of the player, to thereby further enhance the enjoyment of the video game.

While the above description dealt with the case in which the leading character 31 is operated by voice input for simplicity of understanding of the embodiment, it is also allowable to operate the enemy character 32 by such voice input. For example, having one player operate the leading character 31 and another player operate the enemy character 32 allows mutual attack through voice input, which will enhance interest in the video game.

While the above description dealt with the case in which the present invention was applied to a battle-type video game, any other type of video game is allowable provided that objects such as game characters are operated through voice input.

The embodiment described above is only one example of the present invention. It is therefore to be understood that the present invention may be practiced with any modifications depending on the design or the like, otherwise than as specifically described herein, without departing from the scope and the technical spirit thereof.

Claims

1. A voice processing method comprising the steps of: detecting a voice tone based on inputted voice information; determining a plurality of groups corresponding to a plurality of voice data; classifying the detected voice tone into at least one of the plurality of groups; and outputting voice data whose voice tone corresponds to the detected voice tone; wherein the step of outputting voice data outputs voice data corresponding to the at least one group if a count of voice tones classified for the at least one group exceeds a predetermined number.

2. The voice processing method according to claim 1, wherein the step of detecting a voice tone further comprises the steps of: analyzing the meaning of the inputted voice information; and determining the voice tone based on the analyzed meaning.

3. The voice processing method according to claim 1, wherein the step of detecting a voice tone further comprises the steps of: analyzing the meaning of the inputted voice information; detecting a voice level based on the inputted voice information; and determining the voice tone based on the analyzed meaning and the detected voice level.

4. The voice processing method according to claim 1, wherein the plurality of groups includes at least a group for polite tone, a group for gentle tone, a group for general tone and a group for negligent tone.

5. The voice processing method according to claim 1, wherein the inputted voice information and the voice data are a voice of a game player and a game object, respectively.

6. A voice processing device comprising: a voice tone detection means for detecting a voice tone based on inputted voice information; a voice information storage means having stored therein voice data corresponded to a plurality of voice tones; a group determination means for determining a plurality of groups corresponding to a plurality of voice data; and a classification means for classifying the detected voice tone into at least one of the plurality of groups; a counter for counting each classification into the at least one group; and a voice output-control means for outputting voice data corresponded to the detected voice tone from the voice information storage means; wherein the voice output-control means outputs voice data corresponding to the at least one group if a count of the counter exceeds a predetermined number.

7. The voice processing device according to claim 6, wherein the voice tone detection means analyzes meaning of the inputted voice information and determines the voice tone based on the analyzed meaning.

8. The voice processing device according to claim 6, wherein the voice tone detection means analyzes meaning of the inputted voice information and detects a voice level based on the inputted voice information, to thereby determine the voice tone based on the analyzed meaning of the inputted voice information and the detected voice level.

9. The voice processing device according to claim 6, further comprising: a tendency detection means for detecting tendency in the detected voice tone; and wherein the voice output-control means outputs voice data with a voice tone corresponded to a tendency in the detected voice tone.

10. The voice processing device according to claim 6, wherein the inputted voice information and the voice data are a voice of a game player and a game object, respectively.

11. A recording medium having recorded therein a voice processing program to be executed on a computer, in which the voice processing program executes the steps of: detecting a voice tone based on inputted voice information; determining a plurality of groups corresponding to a plurality of voice data; classifying the detected voice tone into at least one of the plurality of groups; and outputting voice data whose voice tone corresponds to the detected voice tone; wherein the step of outputting voice data comprises the step of outputting voice data corresponding to the at least one group if a count of voice tones classified for the at least one group exceeds a predetermined number.

12. The recording medium having recorded therein a voice processing program according to claim 11, wherein the step of detecting a voice tone further comprises the steps of: analyzing the meaning of the inputted voice information; and determining the voice tone based on the analyzed meaning.

13. The recording medium having recorded therein a voice processing program according to claim 11, wherein the step of detecting a voice tone further comprises the steps of: analyzing the meaning of the inputted voice information; detecting a voice level based on the inputted voice information; and determining the voice tone based on the analyzed meaning and the detected voice level.

14. The recording medium having recorded therein a voice processing program according to claim 11, wherein the plurality of groups include at least a group for polite tone, a group for gentle tone, a group for general tone and a group for negligent tone.

15. The recording medium having recorded therein a voice processing program according to claim 11, wherein the inputted voice information and the voice data are a voice of a game player and a game object, respectively.

16. A computer having a processor and a memory storing a voice processing program to be executed by the computer, the voice processing program performing the steps of: detecting a voice tone based on inputted voice information; determining a plurality of groups corresponding to a plurality of voice data; classifying the detected voice tone into at least one of the plurality of group; and outputting voice data whose voice tone corresponds to the detected voice tone; wherein the step of outputting voice data comprises the step of outputting voice data corresponding to the at least one group if a count of voice tones classified for the one group exceeds a predetermined number.

17. A voice processing device comprising: a voice tone detection unit for detecting a voice tone based on inputted voice information; a voice information storage unit having stored therein voice data corresponded to a plurality of voice tones; a voice output-control unit for determining a plurality of groups corresponding to a plurality of voice data, for classifying the detected voice tone into at least one of the plurality of groups, and for outputting voice data corresponding to the detected voice tone from the voice information storage unit; and a counter for registering a classification of the detected voice tone into the at least one group; wherein the voice output-control unit outputs voice data corresponding to the at least one group if a count of the counter for voice tones classified for the at least one group exceeds a predetermined number.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.