U.S. Pat. No. 6,561,907

SIMULTANEOUS OR RECIPROCAL IMAGE SYNTHESIS FOR A VIDEO GAME AT DIFFERENT VIEWPOINTS

Issue Date: April 30, 2001

Description

DETAILED DESCRIPTION OF THE INVENTION

A detailed description is given of embodiments of the invention with reference to the accompanying drawings.

Embodiment 1

First, a description is given of a first embodiment of the invention. FIG. 1 indicates a video game apparatus, as a game processing apparatus, according to one embodiment of the invention. The video game apparatus executes a program recorded in a computer-readable recording medium according to one embodiment of the invention, and is used for execution of a game processing method according to one embodiment of the invention.

A video game apparatus 10 is composed of, for example, a game machine body 11 , and a key pad 50 connected to the input side of the game machine body 11 , wherein a television (TV) set 100 has a CRT as a display monitor and a speaker connected to the output side of the video game apparatus 10 . The keypad 50 is operated by a user (player) and provides the game machine body 11 with instructions given by the user. The television set 100 displays a picture (image) and outputs sound responsive to a game content on the basis of video signals (picture signals) and sound signals supplied by the game machine body 11 .

The game machine body 11 has, for example, a CPU 12 , ROM 13 , RAM 14 , hard disk drive (HDD) 15 , graphics processing unit 16 , sound processing unit 17 , CD-ROM (Compact Disk Read Only Memory) drive 18 , communications interface unit 19 , memory card reader writer 20 , input interface unit 21 , and a bus 22 which connects these components to each other.

The CPU 12 carries out basic control of the entire system by executing an operating system stored in the ROM 13 , and executes a game program stored in a program storing area, described later, of the RAM 14 .

The RAM 14 stores game programming data and image data, etc., which the CD-ROM drive 18 reads from the CD-ROM 30 , in respective areas. Also, the game programming data and image data can be stored in the hard disk drive 15 .

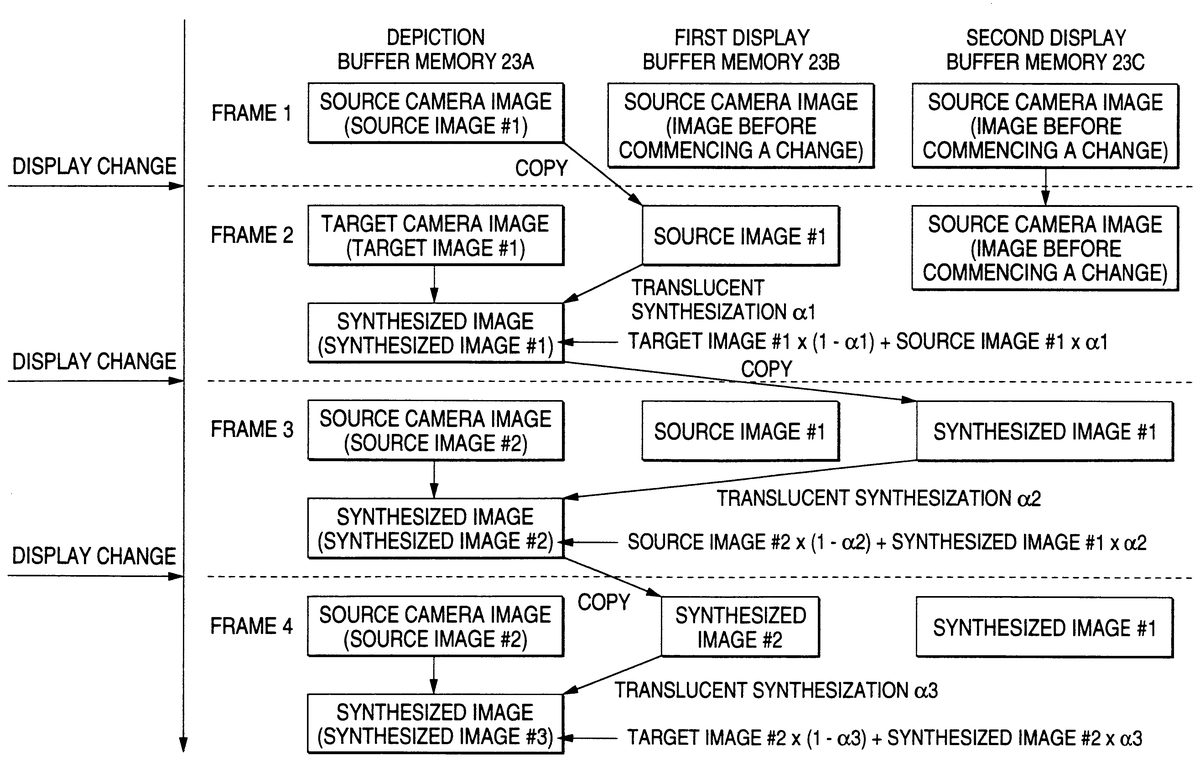

The graphics processing unit 16 has a VRAM 23 . As shown in FIG. 2 , the VRAM 23 contains a depiction buffer memory (virtual buffer) 23 A, first display buffer memory (frame buffer) 23 B, and second display buffer memory (frame buffer) 23 C as buffer memories for storing image data. The graphics processing unit 16 generates video signals on the basis of the image data stored in the first display buffer memory 23 B and second display buffer memory 23 C in compliance with an instruction from the CPU 12 in line with execution of a program, and outputs the video signals to the television set 100 , whereby a display image can be obtained on a display screen 101 of the television set 100 by image data stored in the frame buffers.

The sound processing unit 17 has a function to generate sound signals such as background music (BGM), sound effects, etc. The sound processing unit 17 generates the sound signals on the basis of data stored in the RAM 14 by an instruction from the CPU 12 in line with the execution of programs, and outputs them to the television set 100 .

Since a CD-ROM 30 that is a recording medium is detachably set in the CD-ROM drive 18 , the CD-ROM drive 18 reads game programming data, image data, and sound data, which are stored in the CD-ROM 30 .

The communications interface unit 19 is selectively connected to a network 111 by a communications line 110 , and carries out data transmissions with other apparatuses.

The memory card reader writer 20 is set so that the memory card 31 can be detached, and holds or saves data such as interim game advancing data and game environment setting data, etc.

A computer-readable recording medium according to an embodiment of the invention is a recording medium, readable by a computer, in which a game program such as a wrestling game (fighting game) is recorded, and it is composed of a CD-ROM 30 and a hard disk drive 15 .

By making a computer execute programs recorded in the recording medium, the computer is caused to carry out the following processes. That is, the computer selects a dynamic image from at least one viewpoint among dynamic images depicted from a plurality of different viewpoints (cameras) set in a virtual space, and achieves a process of displaying the dynamic image.

And, when the viewpoint of a dynamic image to be displayed is changed (that is, a camera is changed), the computer translucently synthesizes a dynamic image from a viewpoint before the change of the viewpoint and another dynamic image from another viewpoint after the change of the viewpoint together frame by frame. When the computer carries out the translucent synthesis, the computer fades out the image by gradually increasing transparency of the dynamic image depicted from a viewpoint before the viewpoint is changed, and at the same time, fades in the image by gradually reducing the transparency of the dynamic image depicted from a viewpoint after the viewpoint is changed. In the embodiment, a program to cause the game machine body 11 to execute the aforementioned process is stored in a CD-ROM, etc., which is a recording medium.

In further detail, by causing the computer to execute the program stored in the recording medium, the following process is carried out. That is, the computer generates a dynamic image (the first dynamic image) from at least one viewpoint, which is formed of a plurality of image frames, in real time, and displays it on a display screen.

When changing the viewpoint of a dynamic image to be displayed, the computer reciprocally adjusts brightness of one image frame of the first dynamic images and brightness of one image frame of another dynamic image (the second dynamic image) depicted from the second viewpoint, which consists of a plurality of image frames. And the computer acquires a synthesized image frame by synthesizing the corresponding two image frames whose brightnesses were adjusted, and displays the corresponding synthesized image frame.

Subsequently, the computer mutually selects the brightness of the next image frame of the first dynamic images, and brightness of the next image frame of the second dynamic images whenever image frames to be displayed are renewed; and reciprocally adjusts the brightness of the synthesized image frame acquired by the last synthesis, and brightness of the reciprocally selected next image frame, acquires a newly synthesized image frame by synthesizing said synthesized image frame whose brightness has been previously adjusted, and said image frame, and displays the corresponding synthesized image frame, whereby an imaging process of fade-out and fade-in among the respective dynamic images is executed in the game machine body 11 .

In this case, the computer adjusts the brightnesses of the image frame and synthesized image frame by adjusting the quantity of reduction in the brightnesses in the image frame and synthesized image frame, and acquires the abovementioned synthesized image frame by translucent synthesis. For example, with respect to adjustment of the brightness of the image frame of the first dynamic image, the computer adjusts the quantity of reduction in the brightness so that the brightness becomes low whenever the next image frame is selected, and with respect to adjustment of the brightness of the image frame of the second dynamic image, the computer adjusts the quantity of reduction in the brightness so that the brightness becomes high whenever the next image frame is selected.

Next, a detailed description is given of actions of a video game apparatus 10 according to the embodiment. The CPU 12 reads programs and data necessary for execution of a game from the CD-ROM 30 via the CD-ROM drive 18 on the basis of the operating system stored in the ROM 13 . The read data are transferred to the RAM 14 and hard disk drive 15 .

And, since the CPU 12 executes a program transferred to the RAM 14 , various types of processes, described later, to progress the game are executed in the video game apparatus 10 . Also, some of the control actions executed in the video game apparatus 10 are actually carried out by devices other than the CPU 12 in cooperation with the CPU 12 .

Programs and data necessary to execute the game are actually read from the CD-ROM 30 one after another on the basis of progression of the process in compliance with instructions from the CPU 12 , and are transferred into the RAM 14 . However, in the following description, to easily understand the invention, a detailed description regarding the reading of data from the CD-ROM 30 and transfer of the data into RAM 14 is omitted.

Herein, a description is given of a configuration of the data stored in the RAM 14 during execution of a game program according to the embodiment. FIG. 3 is a view showing one example of the data configuration of RAM. The RAM 14 is, as shown in FIG. 3 , provided with, for example, a program memory area 14 A for storing game programs, a character memory area 14 B that stores character data needed in the process of progression of a game program, a camera data memory area 14 C that stores respective camera data of a plurality of cameras 1 , 2 , 3 , . . . , and a camera change data memory area 14 D that stores data regarding the camera changes.

In the first embodiment, the content of the game is wrestling, which is one of the fighting games. As shown in FIG. 4 , a wrestling hall containing an entrance side stage (area A), fairways (areas B through E), ring (Area F), spectators seats, and others constitutes a virtual three-dimensional space to be displayed on a display. Cameras 1 , 2 , 3 , 4 , 5 , 6 , 7 , and 8 shoot the wrestling hall from different camera angles (viewpoints) for a live telecast.

In the camera data memory area 14 C, data regarding respective movements of the cameras 1 , 2 , 3 , 4 , 5 , 6 , 7 , and 8 are stored as separate data files of respective scenes camera by camera. The data regarding the movements of cameras are those related to the position and orientation, etc., of cameras per frame. When a camera is selected, an image that the selected camera shoots in the virtual three-dimensional space is depicted in real time. The depiction is carried out in, for example, a depiction buffer memory 23 A.

The camera change data memory area 14 D stores a camera selection table 40 , number 41 of camera change frames, default 42 of the source camera transparency, default 43 of a target camera transparency, source camera transparency 44 , and target camera transparency 45 . The camera selection table 40 is a table that stores information describing the selection reference when changing a camera to display a game screen containing characters, etc. The number 41 of camera change frames stores the number of frames from commencement of a change to termination thereof when changing the camera. For example, a value such as 33 is preset.

In the source camera transparency default 42 , a default of transparency of a camera (source camera) to fade out an image when changing the transparency of the camera is set in advance. In the target camera transparency default 43 , a default of transparency of a camera (target camera) to fade in an image when changing the transparency of the camera is set in advance. In the source camera transparency 44 , values showing the transparency of the source camera are stored frame by frame in an image. And in the target camera transparency 45 , values showing the transparency of the target camera are stored frame by frame in an image.

Data of cameras 1 , 2 , 3 , . . . stored in the camera data memory area 14 C are, for example, camera data to display characters moving by calculations of a computer. For example, they include a scene where a character such as a wrestler walks on a fairway and reaches the ring from a stage and a winning scene in the ring. In addition, camera data of a camera at the ringside are used to depict a character moving in response to an operation input. Cameras are selected on the basis of a probability in compliance with selection indexes defined in the camera selection table 40 . Cameras are changed in compliance with display time elapses defined in the camera selection table 40 . In other words, the viewpoint of a dynamic image to be displayed on a display is changed.

FIG. 5 is a view showing an example of a camera selection table according to the embodiment. The camera selection table 40 is structured so that it has a character position column 401 where an area where a character exists is divided into areas A, B, . . . , a usable camera column 402 that defines a usable camera in the respective areas A, B, . . . , a selection index column 403 that establishes selection indexes (probabilities) for the respective usable cameras, and a display time column 404 that shows a display time of the respective usable cameras.

The character position column 401 shows divisions of the respective areas where the wrestler can move. In the example shown in FIG. 5 , for example, areas A, B, . . . are set. In the usable camera column 402 , the numbers of cameras usable in the areas corresponding to the areas where the wrestler can move are established. In the example shown in FIG. 5 , cameras 1 , 2 , 3 , . . . are established, corresponding to the area A, and cameras 1 , 4 , 5 , . . . are established, corresponding to the area B.

Corresponding to the cameras usable in the respective areas, the selection indexes of the cameras are set in the selection index column 403 . The selection indexes show a frequency at which the corresponding usable camera is selected. That is, a usable camera having a selection index of 20 is selected at a probability greater by two times than that of a camera having a selection index of 10. In the example of FIG. 5 , for example, the selection index of camera 1 in the area A is 20, the selection index of camera 2 in the area A is 40, and the selection index of camera 3 of the area A is 30. In addition, the selection index of camera 1 in the area B is 10, the selection index of camera 4 in the area B is 60, and the selection index of camera 5 in the area B is 30.

In the display time column 404 , the display time of the camera is set, corresponding to the usable cameras in the respective areas. The display time is set with a specified allowance. That is, the shortest time and longest time are set for the respective display times. Where a usable camera is selected as a target camera, any optional time between the shortest time and the longest time that are set as a display time is determined as the display time.

As shown in FIG. 5 , in the display time column 404 , for example, a value of 7 through 10 seconds (that is, 7 seconds or more and 10 seconds or less) is set, corresponding to the camera 1 in the area A, and a value of 7 through 9 seconds (7 seconds or more and 9 seconds or less) is set, corresponding to the camera 2 in the area A. A value of 3 through 5 seconds (3 seconds or more and 5 seconds or less) is set, corresponding to the camera 3 in the area A. A value of 5 through 6 seconds (5 seconds or more and 6 seconds or less) is set, corresponding to the camera 1 in the area B. A value of 4 through 8 seconds (4 seconds or more and 8 seconds or less) is set, corresponding to the camera 4 in the area B. And, a value of 4 through 8 seconds (4 seconds or more and 8 seconds or less) is set, corresponding to the camera 5 in the area B.
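The rows of the camera selection table 40 quoted above can be written down as a small data structure. The following is only an illustrative sketch: the dictionary layout and the names `CameraEntry`, `CAMERA_SELECTION_TABLE` and `usable_cameras` are assumptions made for this example and do not appear in the embodiment, and only the entries for areas A and B that the text enumerates are included.

```python
from typing import NamedTuple


class CameraEntry(NamedTuple):
    """One usable camera of one area (hypothetical layout)."""
    selection_index: int     # selection index column 403
    shortest_time_s: float   # display time column 404, shortest time
    longest_time_s: float    # display time column 404, longest time


# Character position (column 401) -> usable camera number (column 402) -> entry.
# Only the values quoted in the description of FIG. 5 are listed here.
CAMERA_SELECTION_TABLE = {
    "A": {
        1: CameraEntry(20, 7, 10),
        2: CameraEntry(40, 7, 9),
        3: CameraEntry(30, 3, 5),
    },
    "B": {
        1: CameraEntry(10, 5, 6),
        4: CameraEntry(60, 4, 8),
        5: CameraEntry(30, 4, 8),
    },
}


def usable_cameras(area):
    """Return the camera numbers usable in the given area."""
    return sorted(CAMERA_SELECTION_TABLE[area])
```

Keying the table by area first mirrors the way the table is consulted in the text, where the area in which the character exists is determined before a camera is chosen.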

Next, using FIG. 6 and FIG. 7 , a description is given of procedures of a display process according to the embodiment. FIG. 6 shows the routine of a display screen process in an automatic play that displays an appearance scene of a wrestler.

In the image display process, first, where characters exist is judged (Step S 11 ). For example, it is judged to which areas among the respective areas A, B, C, D, E and F the position of a character in a virtual space of a game belongs.

Next, with reference to the data of the camera selection table 40 in compliance with an area where the character exists, a target camera (a dynamic image depicted from the viewpoint after the viewpoint is changed) is selected (Step S 12 ). The target camera is selected among usable cameras corresponding to the area where the character exists. However, where the camera that is now used to display the current image is included in the usable cameras, the camera is excluded from the target cameras. Cameras to be selected are selected at a probability responsive to the selection index. That is, the probabilities at which the respective cameras are selected will become a value obtained by dividing the selection index of the camera by the total sum of the selection indexes of all the cameras to be selected.

Further, when the target camera is selected, the display time of the selected camera is also determined. The display time of the selected camera is determined with reference to the data of the display time column 404 of the camera selection table 40 . That is, any optional time between the shortest time and the longest time, which are set by the display time of the selected camera, is determined.
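The selection of Step S 12 can be sketched as follows. This is a minimal illustration, not the embodiment's program: the function names and argument layout are hypothetical, and drawing the display time from a uniform distribution is an assumption, since the text only says that any time between the shortest time and the longest time is determined.

```python
import random


def selection_probabilities(selection_indexes, current_camera=None):
    """Probability of each candidate camera: its selection index divided by
    the sum of the selection indexes of all cameras to be selected.
    The camera currently in use is excluded from the candidates."""
    candidates = {cam: idx for cam, idx in selection_indexes.items()
                  if cam != current_camera}
    total = sum(candidates.values())
    return {cam: idx / total for cam, idx in candidates.items()}


def select_target_camera(selection_indexes, display_times,
                         current_camera=None, rng=random):
    """Pick a target camera and its display time (in seconds).

    display_times maps a camera number to (shortest, longest).
    A uniform draw between the two bounds is an assumption of this sketch.
    """
    probabilities = selection_probabilities(selection_indexes, current_camera)
    cameras = list(probabilities)
    weights = [probabilities[cam] for cam in cameras]
    camera = rng.choices(cameras, weights=weights, k=1)[0]
    shortest, longest = display_times[camera]
    return camera, rng.uniform(shortest, longest)
```

For area A with camera 1 currently displayed, the candidates are cameras 2 and 3 with selection indexes 40 and 30, so they would be selected with probabilities 40/70 and 30/70 respectively.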

Next, a camera change process is carried out (Step S 13 ). The display time counting of the selected camera is commenced after the camera change process is completed (Step S 14 ). And, a game image coming from the selected camera is depicted frame by frame, and is displayed on a display screen (Step S 15 ).

Through a comparison between the display time determined simultaneously with the selection of the camera and the counted value of the display time, it is judged whether or not the display time of the current camera (selected camera) is finished (Step S 16 ). That is, if the counted value of the display time exceeds the display time of the selected camera, it is judged that the display time is over.

If the display time is not finished (Step S 16 : NO route), the process returns to Step S 15 , wherein depiction (screen display) based on a dynamic image data by the selected camera is continued. To the contrary, if the display time is over (Step S 16 : YES route), the process returns to step S 11 , wherein the area where the character exists at present is judged. Hereinafter, processes from Step S 12 through S 16 are repeated.
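The flow of FIG. 6 described above (Steps S 11 through S 16 ) can be summarized as a loop. The sketch below is only an outline under stated assumptions: the four callbacks are hypothetical stand-ins for the processes of the embodiment, the display time is counted in frames rather than seconds, and `max_frames` exists only so that the example terminates.

```python
def display_routine(locate_character, select_camera, change_camera,
                    draw_frame, max_frames):
    """Outline of the display screen process of FIG. 6.

    locate_character() -> area in which the character exists
    select_camera(area, current) -> (target camera, display time in frames)
    change_camera(source, target) -> performs the camera change process
    draw_frame(camera) -> depicts and displays one frame from the camera
    """
    current = None
    drawn = 0
    while drawn < max_frames:
        area = locate_character()                               # Step S11
        target, display_frames = select_camera(area, current)   # Step S12
        change_camera(current, target)                          # Step S13
        current = target
        counted = 0                                             # Step S14
        while drawn < max_frames:
            draw_frame(current)                                 # Step S15
            drawn += 1
            counted += 1
            if counted > display_frames:                        # Step S16: YES
                break                                           # back to S11
    return drawn
```

The inner loop corresponds to the NO route of Step S 16 , and leaving it corresponds to the YES route, after which the area of the character is judged again.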

Next, a detailed description is given of a camera change process. FIG. 7 is a flow chart that shows the details of the camera change process. As the camera change process is commenced, first, the display time equivalent to the camera change process is set (Step S 21 ). That is, the time responsive to the number of camera change frames is set as a display time of the camera change process. For example, where the number of camera change frames is 33 and the display time per frame is 1/30 seconds, the display time will become 33/30 seconds.

Next, a camera change is carried out (Step S 22 ), in which the camera whose image data are written in the depiction buffer memory 23 A is changed. The camera change is carried out reciprocally (alternately) between the source camera and the target camera. And, a game image coming from the camera selected by the change is written (depicted) in the depiction buffer memory 23 A (Step S 23 ). Further, image data of the first or second display buffer memory 23 B or 23 C are translucently synthesized with the image data of the depiction buffer memory 23 A (Step S 24 ). Image data synthesized with the image data of the depiction buffer memory 23 A are the image in the display buffer memory, which is an object of a screen output in the present frame.

Next, a transparency renewal process is executed (Step S 25 ). By the transparency renewal process, the transparency of a synthesized image in the next synthesis is adjusted. By adjustment of the transparency, the quantity of reduction in brightness of the respective images in the translucent synthesis is adjusted. In the renewal process of the transparency in the first embodiment, for example, the transparency where depiction is executed by the source camera, and the transparency where depiction is executed by the target camera are renewed. However, since depiction of any one camera in the respective frame is carried out, the value of the transparency of the depicted image is used.

Further, depiction of images of the respective cameras is carried out with respect to the depiction buffer memory 23 A, and the depiction buffer memory 23 A is handled as a target of synthesis (that is, the side to be synthesized) when translucent synthesis is carried out. The transparency renewed in Step S 25 is a transparency with respect to the images in the depiction buffer memory 23 A. Generally, in the translucent synthesis, a transparency with respect to the images at the side to be synthesized is specified. In such cases, the transparency at the synthesizing side is specified so that the transparency of images in the depiction buffer memory 23 A becomes a value set in Step S 25 .

Next, output of a vertical synchronization signal that is a transition trigger of an image display frame is monitored (Step S 26 ). Until the vertical synchronization signal is issued (Step S 26 : NO route), monitoring regarding the output of the vertical synchronization signal is continued.

As the vertical synchronization signal is output (Step S 26 : YES route), the image data in the depiction buffer memory 23 A are copied to the first or second display buffer memory 23 B or 23 C, wherein the copied image is displayed (Step S 27 ). That is, copying of the image data in the depiction buffer memory 23 A is carried out from output of the vertical synchronization signal to commencement of output of the next frame image data into the television set 100 .

And, whether or not a change of the display screen is completed is judged (Step S 28 ). Whether or not a change of a display screen is finished is judged on the basis of whether or not the display time of the camera change process is finished. That is, if the display time of the camera change process is finished, it is judged that the change of the display screen has finished. If the change of the display screen is completed (Step S 28 : YES route), the process advances to step S 15 of a screen display process routine as shown in FIG. 6 . Thereafter, an image coming from the target camera is displayed. To the contrary, if the change of the display screen is not completed (Step S 28 : NO route), the process returns to step S 22 , wherein the camera is changed, and the processes from step S 23 through step S 28 are repeated.
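The camera change process of FIG. 7 can be outlined in code as follows. This is a sketch under several assumptions that are not part of the embodiment: images are modeled as lists of pixel brightness values, `render_source` and `render_target` are hypothetical callbacks that depict one frame from each camera, the per-frame blend weights are supplied by the caller, and the wait for the vertical synchronization signal is reduced to a comment.

```python
from fractions import Fraction


def change_display_time(num_change_frames, frame_period=Fraction(1, 30)):
    """Step S21: display time of the camera change process, in seconds."""
    return num_change_frames * frame_period


def camera_change(render_source, render_target, displayed, blend_weights):
    """Outline of Steps S22 through S28; returns the images shown on screen.

    displayed: image currently held in the display buffer memory.
    blend_weights: for each frame, the weight given to the display buffer
    image when it is synthesized onto the depiction buffer (assumed input).
    """
    shown = []
    use_source = True  # the first depicted frame comes from the source camera
    for frame, weight in enumerate(blend_weights):
        # Step S22: alternate between the source camera and the target camera.
        render = render_source if use_source else render_target
        # Step S23: depict the camera image in the depiction buffer.
        depiction = render(frame)
        # Step S24: translucently synthesize the display buffer image onto it.
        depiction = [d * (1 - weight) + s * weight
                     for d, s in zip(depiction, displayed)]
        # Step S25: the renewed transparency is the next entry of blend_weights.
        # Steps S26 and S27: on the vertical synchronization signal, the
        # depiction buffer is copied to a display buffer and displayed.
        displayed = depiction
        shown.append(displayed)
        use_source = not use_source
    # Step S28: the change is complete once all change frames are consumed.
    return shown
```

With 33 camera change frames and a frame period of 1/30 second, `change_display_time(33)` gives 33/30 seconds, which matches the example given for Step S 21 . Because each displayed image is fed back into the next synthesis, earlier frames of both cameras remain faintly present in later frames.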

Next, a description is given of a procedure of the camera change process described above, with reference to an image data transition view of the depiction buffer memory and display buffer memories, which are shown in FIG. 8 . In the image data transition view, display is changed by a vertical synchronization signal, wherein frames are shifted in the order of frame 1 → frame 2 → frame 3 → frame 4 .

Frame 1 :

Image data (source image 1 ) of the source camera, which are image data depicted from the last viewpoint, are written in the depiction buffer memory 23 A. At this time, an image depending on the camera image data, before the change of the camera, which are set in the second display buffer memory 23 C, is displayed on the screen.

Frame 2 :

The source image 1 written in the depiction buffer memory 23 A is copied onto the first display buffer memory 23 B, and an image depending on the source image 1 , which are set in the first display buffer memory 23 B, is displayed on the screen.

Image data (the target image 1 ) of the target camera, which are image data depicted from a new viewpoint, are written in the depiction buffer memory 23 A. And, the source image 1 set in the first display buffer memory 23 B, which is displayed on the screen at present, is translucently synthesized with respect to the depiction buffer memory 23 A. For example, the translucent synthesis is carried out at a transparency α1 close to the opaque, whereby a synthesized image 1 is generated.

The translucent synthesis is executed by a technique of alpha blending, etc. In the alpha blending, the degree of translucency of an image to be synthesized is designated in terms of value α. Real numbers 0 through 1 may be set as the value α. If the value α is 0, the image to be synthesized is completely transparent, wherein even though a completely transparent image is synthesized, the target image does not change at all. Also, if the value α is 1, the image to be synthesized is opaque. If an opaque image is synthesized, the target image is replaced by an image to be synthesized.

The degree of translucency indicates a quantity of reduction in brightness of an image to be synthesized. That is, if a translucent synthesis is carried out with a translucent value α, the value α is multiplied by a value showing the brightness of the respective pixels of an image to be synthesized. Since the value α is 1 or less, the brightness of the respective pixels is reduced. Also, the value showing the brightness of the respective pixels of an image being the target of synthesis is multiplied by a value of (1 − α), whereby the brightness of the target synthesizing image is reduced. And, by adding and synthesizing the image to be synthesized, whose brightness is reduced, with the target synthesizing image whose brightness is reduced, such a translucent synthesis is carried out. For example, in the frame 2 , translucent synthesis is carried out at a degree of target image 1 × (1 − α1) + source image 1 × α1. The value α1 indicating the transparency in the frame 2 is a value close to the opaque since the image to be synthesized is a source image (an image to be faded out).
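The translucent synthesis described here amounts to a per-pixel weighted sum. The following sketch shows that arithmetic on images modeled as flat lists of brightness values; the function name `alpha_blend` and the list representation are assumptions made for illustration only.

```python
def alpha_blend(target, overlay, alpha):
    """Blend `overlay` (the image to be synthesized) onto `target`
    (the image being the target of synthesis).

    Each result pixel is target * (1 - alpha) + overlay * alpha, so
    alpha = 0 leaves the target unchanged and alpha = 1 replaces it.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be between 0 and 1")
    return [t * (1.0 - alpha) + o * alpha for t, o in zip(target, overlay)]
```

In the frame 2 example, `target` would correspond to the target image 1 in the depiction buffer memory and `overlay` to the source image 1 in the display buffer memory, blended with a value of alpha close to 1.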

Frame 3 :

The image data (synthesized image 1 ) in the depiction buffer memory 23 A after the translucent synthesis is copied into the second display buffer memory, wherein an image produced by the synthesized image 1 set in the second display buffer memory 23 C is displayed on the screen. At this time, the displayed image becomes such that an image by the target image 1 is thinly (at a high transparency) overlapped on an image produced by a fresh source image 1 .

The next camera image data (source image 2 ) at the source side are written in the depiction buffer memory 23 A whose data has been cleared. And, the synthesized image 1 that is displayed on the screen at present and is set in the second display buffer memory 23 C is translucently synthesized at a transparency α2 close to transparent, whereby the synthesized image data 2 are generated.

The translucent synthesis is carried out by a technique of alpha blending at a degree of source image 2 × (1 − α2) + synthesized image 1 × α2.

Frame 4 :

The image data (synthesized image 2 ) in the depiction buffer memory 23 A after the translucent synthesis is copied into the first display buffer memory 23 B, wherein an image is displayed on the screen by using the synthesized image 2 set in the first display buffer memory 23 B.

At this time, the display image becomes such that the image of the synthesized image 1 is translucently synthesized with the image of the source image 2 . At this time, the transparency of the source image 2 is increased further than the transparency of the source image 1 in the synthesized image 1 in the frame 3 .

Camera image data (target image 2 ) of the next frame at the target side is written in the depiction buffer memory 23 A. And, the synthesized image 2 that is displayed on the screen at present and is set in the first display buffer memory 23 B is translucently synthesized with the target image 2 in the depiction buffer memory 23 A. At this time, the transparency α3 is a value closer to transparent than α1 in the frame 2 , whereby a synthesized image 3 is produced.

The translucent synthesis is carried out by a technique of alpha blending at a degree of target image 2 × (1 − α3) + synthesized image 2 × α3.

Hereinafter, until a change of the screen display occurs, the processes in the frames 3 and 4 described above are repeatedly performed. However, in translucent synthesis of odd-numbered frames (in which the image of the source camera is depicted in the depiction buffer), the transparency gradually becomes opaque whenever the display is changed (the transparency of the depicted image gradually becomes transparent). In addition, in the translucent synthesis of even-numbered frames (the image of the target camera is depicted in the depiction buffer memory), the transparency gradually becomes more transparent whenever the display is changed (the transparency of the depicted image gradually becomes opaque).

FIG. 9 shows an example of changes in the transparencies of the source image data and of the target image data in the illustrated embodiment. In the drawing, corresponding to the frame numbers, the transparency of the image written in the depiction buffer memory in the frame is illustrated. In the example shown in FIG. 9 , values from 0 through 1 are converted to values 0 through 128. That is, values obtained by dividing the values shown in FIG. 9 by 128 will become the transparencies.

For example, as shown in FIG. 9 , values showing the transparencies where the source image is depicted will, respectively, become values of 128, 126, 124, 122, 120, . . . 66, and 64 in frames 1 , 2 , 3 , 4 , 5 , . . . 32 , and 33 . In addition, values showing the transparencies where the target image is depicted will, respectively, become values of 64, 66, 68, 70, 72, . . . 126, and 128 in frames 1 , 2 , 3 , 4 , 5 , . . . 32 , and 33 .

When carrying out translucent synthesis in the respective frames, either of the transparency of the source image in FIG. 9 or the transparency of the target image therein may be applicable in response to the image data depicted in the depiction buffer memory 23 A. That is, if the image data depicted in the depiction buffer memory 23 A is the image of the target camera, translucent synthesis is carried out so that the transparency of the depicted image becomes a value expressed in terms of the transparency of the source image corresponding to the frame number. In the present embodiment, the depicted image becomes a target synthesizing image. Also, the transparency in the translucent synthesis is designated with transparency at the synthesizing side. Therefore, the value obtained by subtracting the transparency shown in FIG. 9 (the value obtained by dividing the value shown in FIG. 9 by 128) from 1 becomes a transparency in the translucent synthesis.
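The value sequences of FIG. 9 and their conversion can be reproduced with a few lines of code. This is a sketch only: the names `FULL_SCALE`, `transparency_schedule` and `synthesis_weight` are hypothetical, and a constant step of 2 per frame is inferred from the values quoted in the text rather than stated by it.

```python
FULL_SCALE = 128  # FIG. 9 expresses transparencies 0 through 1 as 0 through 128


def transparency_schedule(num_frames=33, step=2):
    """Return (source_values, target_values) on the 0..128 scale.

    The source values run 128, 126, ... and the target values are the same
    sequence in reverse order, i.e. 64, 66, ..., 128 for 33 frames.
    """
    source = [FULL_SCALE - step * i for i in range(num_frames)]
    target = source[::-1]
    return source, target


def synthesis_weight(value):
    """Weight designated at the synthesizing side: 1 minus (value / 128)."""
    return 1.0 - value / FULL_SCALE
```

For instance, a FIG. 9 value of 128 for the depicted image corresponds to a synthesizing-side weight of 0, so the display buffer image contributes nothing, and a value of 64 corresponds to a weight of 0.5.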

FIG. 10 , FIG. 11 , FIG. 12 , FIG. 13 and FIG. 14 show examples of the transition of the display image produced by the image display processes described above. FIG. 10 shows a display image immediately before changing the camera. In this image, only a fairway Sa and a character Ca walking on the fairway Sa, which are image data depicted from the source camera, are clearly displayed.

FIG. 11 shows an image immediately after the camera is changed. In this image, a fairway Sa and a character Ca walking thereon, based on the image data depicted from the source camera, are comparatively clearly displayed. Also, a fairway Sb, a character Cb walking on the fairway Sb, and a ring R slightly frontward thereof, based on the image data depicted from the target camera, are thinly displayed at a high transparency.

FIG. 12 shows an image appearing at an intermediate point during the camera change. In this image, the fairway Sa and character Ca walking on the fairway Sa, based on the image data depicted from the source camera, and the fairway Sb, character Cb walking on the fairway Sb, and ring R slightly frontward thereof, based on the image data depicted from the target camera, are displayed at the same transparency.

FIG. 13 shows an image immediately before termination of changing the cameras. In this image, reversing the state appearing immediately after changing the camera, the fairway Sa and character Ca walking on the fairway Sa, which are image data depicted from the source camera, are thinly displayed at a high transparency. In addition, the fairway Sb, character Cb walking on the fairway Sb, and ring R slightly frontward thereof, which are image data depicted from the target camera, are comparatively clearly displayed.

FIG. 14 shows an image immediately after the camera change is completed. In this image, only the fairway Sb, character Cb walking on the fairway Sb, and ring R slightly frontward thereof are clearly displayed.

Through the screen display processes described above, even though a displayed character moves in real time, like a wrestler walking on a fairway toward the ring, fade-out of the image depicted from the source viewpoint and fade-in of the image depicted from the target viewpoint can be carried out with natural images at both the source and target screens. Still further, by using the last translucently synthesized image for the next translucent synthesis, a remaining image effect can be obtained.

Embodiment 2

Next, a description is given of the second embodiment. Whereas in the first embodiment the fade-out and fade-in are processed while acquiring the remaining image effect, in the second embodiment the fade-out and fade-in are carried out without acquiring any remaining image effect. A program to execute the second embodiment is stored in a computer-readable recording medium. By executing the program stored in the recording medium in a computer such as the video game apparatus illustrated in FIG. 1 , the processes of the second embodiment can be carried out.

That is, by causing the computer to execute the program of the second embodiment, the computer executes the following processes. First, the first dynamic image, depicted from the first viewpoint, consisting of multiple image frames is displayed on a screen. And, where a viewpoint of the dynamic image to be displayed on the screen is changed, the brightness of one image frame of the first dynamic image, and the brightness of one image frame of the second dynamic image which is formed of a plurality of image frames and depicted from the second viewpoint are reciprocally adjusted. The corresponding two image frames whose brightnesses were adjusted are synthesized to acquire the synthesized image frame. Further, the acquired synthesized image frame is displayed on the screen.

In this case, the brightness of image frames of the first dynamic image is adjusted so that the brightness thereof gradually becomes low whenever the next image frame is selected. Also, the brightness of image frames of the second dynamic image is adjusted so that the brightness thereof gradually becomes high whenever the next image frame is selected.

In the second embodiment, only the camera change process is different from the first embodiment. Hereinafter, a description is given of the camera change process in the second embodiment.

FIG. 15 shows a routine of a camera change process in the second embodiment. First, the image data of the source camera are written in the depiction buffer memory 23 A (Step S 31 ). Next, the image data of the target camera are written in the first or second display buffer memories 23 B or 23 C (Step S 32 ).

Next, a subtraction process is carried out, using an appointed subtraction value, on the brightnesses of the respective pixels of the image data depicted from the target camera in the first or second display buffer memory 23 B or 23 C (Step S 33 ). Subsequently, a multiplication process is carried out, using an appointed multiplication value, on the brightnesses of the respective pixels of the image data depicted from the source camera in the depiction buffer memory 23 A. The result (the multiplied value) is added to the image data depicted from the target camera in the first or second display buffer memory 23 B or 23 C, which have already been subjected to the subtraction (Step S 34 ).

Next, the multiplied value and the subtracted value are renewed with the values determined by the expressions shown in FIG. 16 (Step S 35 ). FIG. 16 shows the multiplied values of the source images and the subtracted values of the target images in the respective frames, corresponding to the frame numbers. The example shown in FIG. 16 is a case where the image is changed over N frames (N: a natural number).

For example, the default of the multiplied value is 1.0, and it is reduced by 1/(N − 1) per transition of frames. In the final frame of a camera change, the multiplied value becomes 0. In addition, the default of the subtracted value is 255, which is reduced by 255/(N − 1) per transition of frames, and in the final frame of a camera change, it becomes 0. The subtracted value given here is an example for the case where the maximum brightness of the respective pixels is 255. This indicates that the image data depicted from the source camera become null and void in the final frame of the camera change, and only the image data depicted from the target camera become completely effective.
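The renewal of the multiplied value and the subtracted value described above can be illustrated by the following sketch. It is not taken from FIG. 16 itself; the function name `fade_schedule` and its parameters are hypothetical, and the values are computed in closed form from the frame index rather than by repeated subtraction, which is assumed to be equivalent.

```python
from typing import List, Tuple


def fade_schedule(n_frames: int,
                  max_brightness: float = 255.0) -> List[Tuple[float, float]]:
    """Return (multiplied value, subtracted value) for each of N frames.

    The multiplied value starts at 1.0 and falls by 1/(N - 1) per frame;
    the subtracted value starts at the maximum brightness and falls by
    max_brightness/(N - 1) per frame. Both reach 0 in the final frame.
    """
    if n_frames < 2:
        raise ValueError("at least two frames are needed for a change")
    schedule = []
    for index in range(n_frames):
        remaining = (n_frames - 1 - index) / (n_frames - 1)
        schedule.append((remaining, max_brightness * remaining))
    return schedule


if __name__ == "__main__":
    for frame, (mult, sub) in enumerate(fade_schedule(5), start=1):
        print(f"frame {frame}: multiplied value {mult}, subtracted value {sub}")
```

With N = 5, for instance, the multiplied value runs 1.0, 0.75, 0.5, 0.25, 0 while the subtracted value runs 255, 191.25, 127.5, 63.75, 0.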

Next, the display buffer is changed, and the screen display is renewed accordingly (Step S 36 ). Then, whether or not the screen change is completed is judged (Step S 37 ). If the processes have been carried out for the number of frames of the screen change since the camera change process was commenced, it is judged that the screen change has been completed. If the screen change is not completed, the process returns to Step S 31 (Step S 37 : NO route), and Steps S 31 through S 37 are repeated. On the contrary, if the screen change is completed (Step S 37 : YES route), the process returns to Step S 15 of the image display process routine shown in FIG. 6 .
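The routine of Steps S 31 through S 37 can be sketched as follows. This is a minimal sketch under stated assumptions, not the program of the embodiment: each frame is modeled as a flat list of pixel brightnesses from 0 to 255, results are rounded and clamped to that range (the description does not state how underflow or overflow is handled), and all names such as `camera_change` are hypothetical.

```python
from typing import List

MAX_BRIGHTNESS = 255
Frame = List[int]  # one brightness value (0..255) per pixel


def clamp(value: int) -> int:
    """Keep a brightness inside 0..MAX_BRIGHTNESS (an assumption)."""
    return max(0, min(MAX_BRIGHTNESS, value))


def camera_change(source_frames: List[Frame],
                  target_frames: List[Frame]) -> List[Frame]:
    """Return the frames shown on the screen during an N-frame change."""
    n = len(source_frames)
    if n < 2 or n != len(target_frames):
        raise ValueError("need N >= 2 frames from each camera")
    display_buffers: List[Frame] = [[], []]  # stand-ins for 23 B and 23 C
    active = 0
    shown: List[Frame] = []
    for index in range(n):
        # Values for this frame; recomputing them from the frame index
        # plays the role of the renewal in Step S 35.
        multiplier = (n - 1 - index) / (n - 1)
        subtraction = MAX_BRIGHTNESS * multiplier
        depiction = list(source_frames[index])           # Step S 31
        buffer = list(target_frames[index])              # Step S 32
        buffer = [clamp(round(p - subtraction))          # Step S 33
                  for p in buffer]
        buffer = [clamp(t + round(s * multiplier))       # Step S 34
                  for t, s in zip(buffer, depiction)]
        display_buffers[active] = buffer
        shown.append(buffer)                             # Step S 36
        active = 1 - active                              # change display buffer
    return shown                                         # Step S 37: N frames done


if __name__ == "__main__":
    source = [[200, 50]] * 3
    target = [[100, 250]] * 3
    for frame in camera_change(source, target):
        print(frame)
```

In this sketch the first displayed frame equals the source image and the last equals the target image, which corresponds to the fade-out and fade-in behavior described for the final frame of the camera change.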

Next, a description is given of a procedure of the camera change process described above, with reference to the image data transition view, shown in FIG. 17 , of the depiction buffer memory 23 A, and the first and second display buffer memories 23 B and 23 C. In the image data transition view, a display change is carried out by a vertical synchronization signal, wherein the frames are changed like frame 1 → frame 2 .

Frame 1 :

The image data (source image 1 ) depicted from the source camera, which are the image data depicted from the last viewpoint, are written in the depiction buffer memory 23 A, and the image data (target image 1 ) depicted from the target camera, which are image data depicted from the new viewpoint, are written in the first display buffer memory 23 B.

And, brightness is subtracted by an appointed subtraction value (default) with respect to the target image 1 of the first display buffer memory 23 B, whereby subtracted image data of the target image 1 can be obtained in the first display buffer memory 23 B. And, multiplication is carried out with an appointed multiplication value (default) with respect to the source image 1 of the depiction buffer memory 23 A, wherein the image data are added to and synthesized with the first display buffer memory 23 B. Thereby, synthesized image data of the target image 1 and source image 1 can be acquired. Thus, the image is displayed on the screen based on the synthesized image data.

Frame 2 :

The next image data (source image 2 ) depicted from the source camera are written in the depiction buffer memory 23 A, and the next image data (target image 2 ) depicted from the target camera are written in the second display buffer memory 23 C.

And, a subtraction process is carried out with the renewed subtraction value with respect to the target image 2 in the second display buffer memory 23 C, and new subtracted image data of the target image 2 can be obtained in the second display buffer memory 23 C. Subsequently, a multiplication process is carried out with the renewed multiplication value with respect to the source image 2 of the depiction buffer memory 23 A, and the image data are overwritten in the second display buffer memory 23 C, whereby synthesized image data of the target image 2 and source image 2 are acquired, and the image is displayed on the screen on the basis of the synthesized image data.

Hereinafter, the processes in frames 1 and 2 described above are carried out alternately until the change of the screen display is completed. However, the subtraction value and multiplication value of the brightness are gradually reduced with such properties as illustrated in FIG. 16 whenever a display change is executed. By gradually reducing the subtraction value in the brightness of the image depicted from the target camera, a dynamic image depicted from the target camera can be faded in. In addition, by gradually reducing the brightness of the image depicted from the source camera, the dynamic image depicted from the source camera can be faded out.

Therefore, in the embodiment, even though a displayed character moves in real time, the fade-out of a screen based on the viewpoint at the source side and fade-in of an image based on the viewpoint at the target side can be carried out by the abovementioned screen display process, wherein both images at the source side and at the target side can be displayed naturally.

The screen display process described above is applicable to fade-out and fade-in of screens, when changing the cameras and scenes, in the screen display of characters operated by a player, other than automatic playing screens.

The game processing method described in the abovementioned embodiment can be achieved by executing programs prepared in advance, using a personal computer or a video game apparatus. The game program is recorded or stored in a computer-readable recording medium such as a hard disk, floppy disk, CD-ROM, MO (magneto-optical disk), DVD, etc., and is executed by the computer reading out the same. Also, the game program can be distributed by means of the abovementioned recording medium or via a network such as the Internet.

Claims

- A recording medium, readable by a computer, in which a game program to execute a process of displaying dynamic images on a screen is stored, the program causing said computer to process image display, wherein when an actual viewpoint for a dynamic image to be displayed is changed, said computer translucently synthesizes a source dynamic image depicted from a first viewpoint before the actual viewpoint is changed, and a target dynamic image depicted from a second viewpoint after the actual viewpoint is changed, together frame by frame in respective images, the synthesizing comprising fading out the source dynamic image by gradually increasing transparency of the source dynamic image and simultaneously fading the target dynamic image by gradually reducing the transparency of the target dynamic image.

- A recording medium, readable by a computer, in which a game program to execute a process of displaying dynamic images on a screen is stored, and causing said computer to execute programs comprising: displaying a first dynamic image depicted from a first viewpoint, comprising a plurality of image frames;when an actual viewpoint of an actual dynamic image to be displayed is changed, reciprocally adjusting brightness of one image frame of the first dynamic image and brightness of one image frame of a second dynamic image depicted from a second viewpoint, comprising a plurality of image frames, synthesizing the corresponding two image frames whose brightnesses were adjusted, displaying the corresponding synthesized image frame;subsequently mutually selecting the brightness of the next image frame of said first dynamic image, and brightness of the next image frame of said second dynamic image whenever image frames to be displayed are renewed;reciprocally adjusting the brightness of the synthesized image frame, and brightness of the reciprocally selected next image frame;synthesizing said synthesized image frame whose brightness has been previously adjusted, and said image frame;and displaying the synthesized image frame.

- The recording medium readable by a computer, in which programs are stored, as set forth in claim 2 , wherein said programs cause said computer to adjust the brightness of an image frame and a synthesized image frame by adjusting the quantity of reduction in brightness thereof, and to acquire said synthesized image frame by translucent synthesis.

- The recording medium readable by a computer, in which programs are stored, as set forth in claim 3 , wherein the adjustment of the brightness of image frames of said first dynamic image further comprises adjusting the quantity of reduction in brightness so that the brightness becomes low whenever the next image frame is selected, and the adjustment of the brightness of image frames of said second dynamic image further comprises adjusting the quantity of reduction in brightness so that the brightness becomes high whenever the next image frame is selected.

- A recording medium, readable by a computer, in which a game program to execute a process of displaying dynamic images on a screen is stored, and causing said computer to execute a program comprising: displaying a first dynamic image depicted from a first viewpoint, comprising a plurality of image frames;when a viewpoint of an actual dynamic image to be displayed is changed: reciprocally adjusting a brightness of one image frame of said first dynamic image and a brightness of one image frame of the second dynamic image depicted from a second viewpoint, which is composed of a plurality of image frames, whenever the image frame to be displayed is renewed;acquiring a synthesized image frame by synthesizing the corresponding two image frames whose brightness have been adjusted;and displaying the corresponding synthesized image frame.

- The recording medium readable by a computer, as set forth in claim 5 , having programs stored therein, wherein said programs execute: with respect to image frames of said first dynamic image, adjusting the brightness so that the brightness gradually becomes low whenever a next image frame is selected; and with respect to image frames of said second dynamic image, adjusting the brightness so that the brightness becomes high when a next image frame is selected.

- A game program to display dynamic images, the program controlling a computer to process image display, wherein: when an actual viewpoint for a dynamic image to be displayed is changed, said computer translucently synthesizes a source dynamic image depicted from a first viewpoint before the actual viewpoint is changed, and a target dynamic image depicted from a second viewpoint after the actual viewpoint is changed, together frame by frame in respective images, the synthesizing comprising fading out the source dynamic image by gradually increasing transparency of the source dynamic image, and at the same time, fading in the target dynamic image by gradually reducing the transparency of the target dynamic image.

- A game program to execute a process for displaying dynamic images, the program controlling a computer to process: displaying a first dynamic image depicted from a first viewpoint, comprising a plurality of image frames;when an actual viewpoint of an actual dynamic image to be displayed is changed, reciprocally adjusting a brightness of one image frame of said first dynamic image and a brightness of one image frame of a second dynamic image depicted from a second viewpoint, comprising a plurality of image frames;acquiring a synthesized image frame by synthesizing the corresponding two image frames whose brightnesses were adjusted, and displaying the corresponding synthesized image frame;subsequently mutually selecting the brightness of the next image frame of said first dynamic image, and brightness of the next image frame of said second dynamic image whenever image frames to be displayed are renewed;and reciprocally adjusting the brightness of the synthesized image frame acquired by the last synthesis, and brightness of the reciprocally selected next image frame;acquiring a newly synthesized image frame by synthesizing said synthesized image frame whose brightness has been previously adjusted, and said image frame, and displaying the corresponding synthesized image frame.

- The game program as set forth in claim 8 , wherein the computer adjusts the brightness of an image frame and a synthesized image frame by adjusting the quantity of reduction in brightness thereof, and acquires said synthesized image frame by translucent synthesis.

- The game program as set forth in claim 9 , wherein, with respect to adjustment of the brightness of image frames of said first dynamic image, the computer adjusts the quantity of reduction in brightness so that the brightness becomes low whenever the next image frame is selected, and with respect to adjustment of the brightness of image frames of said second dynamic image, said computer adjusts the quantity of reduction in brightness so that the brightness becomes high whenever the next image frame is selected.

- A game program to execute a process for displaying dynamic images, the program controlling a computer to execute: displaying a first dynamic image depicted from a first viewpoint, comprising a plurality of image frames;when a viewpoint of an actual dynamic image to be displayed is changed: reciprocally adjusting a brightness of one image frame of said first dynamic image and a brightness of one image frame of a second dynamic image depicted from a second viewpoint, comprising a plurality of image frames, whenever the image frame to be displayed is renewed;acquiring a synthesized image frame by synthesizing the corresponding two image frames whose brightness has been adjusted;and displaying the corresponding synthesized image frame.

- The game program as set forth in claim 11 , wherein the computer executes: with respect to image frames of said first dynamic images, adjusting the brightness so that the brightness gradually becomes low whenever a next image frame is selected; and with respect to image frames of said second dynamic images, adjusting the brightness so that the brightness gradually becomes high when a next image frame is selected.

- A method for processing a game displaying dynamic images when an actual viewpoint for a dynamic image to be displayed is changed, comprising: translucently synthesizing a source dynamic image depicted from a first viewpoint before the actual viewpoint is changed, and a target dynamic image depicted from a second viewpoint after the actual viewpoint is changed, together frame by frame in respective images;and fading out the source dynamic image by gradually increasing transparency of the source dynamic image, and at the same time, fading in the target dynamic image by gradually reducing the transparency of the target dynamic image.

- A method for processing a game capable of displaying dynamic images, comprising: displaying a first dynamic image depicted from a first viewpoint, comprising a plurality of image frames;when an actual viewpoint of an actual dynamic image to be displayed is changed, reciprocally adjusting a brightness of one image frame of said first dynamic image and a brightness of one image frame of a second dynamic image depicted from a second viewpoint, comprising a plurality of image frames, acquiring a synthesized image frame by synthesizing the corresponding two image frames whose brightnesses were adjusted, displaying the corresponding synthesized image frame;and subsequently mutually selecting the brightness of the next image frame of said first dynamic image, and brightness of the next image frame of said second dynamic image whenever image frames to be displayed are renewed;reciprocally adjusting the brightness of the synthesized image frame acquired by the last synthesis, and brightness of the reciprocally selected next image frame;acquiring a newly synthesized image frame by synthesizing said synthesized image frame whose brightness has been previously adjusted, and said image frame;and displaying the corresponding synthesized image frame.

- The method of claim 14 , further comprising: adjusting the brightness of an image frame and a synthesized image frame by adjusting the quantity of reduction in brightness thereof; and acquiring said synthesized image frame by translucent synthesis.

- The method for processing a game capable of displaying dynamic images as set forth in claim 15 , further comprising: with respect to adjustment of the brightness of image frames of said first dynamic image, adjusting the quantity of reduction in brightness so that the brightness becomes low whenever the next image frame is selected, and with respect to adjustment of the brightness of image frames of said second dynamic image, adjusting the quantity of reduction in brightness so that the brightness becomes high whenever the next image frame is selected.

- A method for processing a game capable of displaying dynamic images, comprising: displaying a first dynamic image depicted from a first viewpoint, comprising a plurality of image frames;when an actual viewpoint of an actual dynamic image to be displayed is changed, reciprocally adjusting a brightness of one image frame of said first dynamic image and a brightness of one image frame of a second dynamic image depicted from a second viewpoint, comprising a plurality of image frames, whenever the image frame to be displayed is renewed;acquiring a synthesized image frame by synthesizing the corresponding two image frames whose brightness has been adjusted;and displaying the corresponding synthesized image frame.

- The method for processing a game capable of displaying dynamic images, as set forth in claim 17 , further comprising: with respect to image frames of said first dynamic image, adjusting the brightness so that the brightness gradually becomes low whenever a next image frame is selected; and with respect to image frames of said second dynamic images, adjusting the brightness so that the brightness gradually becomes high when a next image frame is selected.

- A game processing apparatus for executing a game by displaying images on a monitor display, comprising: a recording medium readable by a computer, having a program stored therein to execute a game;the computer for executing said program by reading at least one part of said program from said recording medium;and the monitor display for displaying images of said game resulting from the program;wherein, by reading at least one part of said program from said recording medium, said computer: displays dynamic images;when an actual viewpoint for an actual dynamic image to be displayed is changed, translucently synthesizes a source dynamic image depicted from a first viewpoint before the actual viewpoint is changed, and a target dynamic image depicted from a second viewpoint after the actual viewpoint is changed, together frame by frame in respective images;and when the actual viewpoint for the actual dynamic image to be displayed is changed, fades out the source dynamic image by gradually increasing transparency of the source dynamic image, and at the same time, fades in the target dynamic image by gradually reducing the transparency of the target dynamic image.

- A game processing apparatus for creating a game by displaying images on a monitor display, comprising: a recording medium readable by a computer, having a program stored therein to execute the game;the computer for executing said program by reading at least one part of said program from said recording medium;and the monitor display for displaying images of said game which is executed by said program;wherein, by reading at least one part of said program from said recording medium, said computer: displays a first dynamic image depicted from a first viewpoint, comprising a plurality of image frames;when an actual viewpoint of an actual dynamic image to be displayed is changed, reciprocally adjusts a brightness of one image frame of said first dynamic image and a brightness of one image frame of a second dynamic image depicted from a second viewpoint, comprising a plurality of image frames, acquires a synthesized image frame by synthesizing the corresponding two image frames whose brightnesses were adjusted, and displays the corresponding synthesized image frame;subsequently mutually selects a brightness of a next image frame of said first dynamic image, and brightness of a next image frame of said second dynamic image whenever image frames to be displayed are renewed;reciprocally adjusts the brightness of the synthesized image frame acquired by the last synthesis, and brightness of the reciprocally selected next image frame;acquires a newly synthesized image frame by synthesizing said synthesized image frame whose brightness has been previously adjusted, and said image frame, and displays the corresponding synthesized image frame.

- The game processing apparatus as set forth in claim 20 , wherein the brightness of an image frame and a synthesized image frame is adjusted by adjusting the quantity of reduction in brightness thereof, and said synthesized image frame is acquired by translucent synthesis.

- The game processing apparatus as set forth in claim 21 , wherein with respect to adjustment of the brightness of image frames of said first dynamic images, the quantity of reduction in brightness is adjusted so that the brightness becomes low whenever a next image frame is selected, and with respect to adjustment of the brightness of image frames of said second dynamic images, the quantity of reduction in brightness is adjusted so that the brightness becomes high whenever a next image frame is selected.

- A game processing apparatus for executing a game by displaying images on a monitor display, comprising: a recording medium readable by a computer, having a program stored therein to execute the game;the computer for executing said program by reading at least one part of said program from said recording medium;and the monitor display for displaying images of said game executed by said program;wherein, by reading at least one part of said program from said recording medium, said computer: displays a first dynamic image depicted from a first viewpoint, comprising a plurality of image frames;when an actual viewpoint of an actual dynamic image to be displayed is changed, reciprocally adjusts a brightness of one image frame of said first dynamic image and a brightness of one image frame of a second dynamic images depicted from a second viewpoint, comprising a plurality of image frames, whenever the image frame to be displayed is renewed;acquires a synthesized image frame by synthesizing the corresponding two image frames whose brightness has been adjusted;and displays the corresponding synthesized image frame.

- The game processing apparatus as set forth in claim 23 , wherein the brightness of image frames of said first dynamic images is adjusted so that the brightness gradually becomes low whenever a next image frame is selected, and the brightness of image frames of said second dynamic images is adjusted so that the brightness gradually becomes high whenever a next image frame is selected.

- A game processing apparatus for executing a game by displaying images on a monitor display, comprising: a recording medium, readable by a computer, having a program stored therein to execute the game; a computer that executes said program by reading at least one part of said program from said recording medium; the monitor display that displays images of said game executed by said program; and a depiction buffer memory, a first display buffer memory and a second display buffer memory and a second buffer comprising buffer memories for storing image data; wherein, by reading at least a part of said program from said recording medium, said computer executes a display process that fades out screen display of the last scene by the image data from the last viewpoint, and fades in screen display of a new scene by image data from a new viewpoint, wherein: (1) image data from the new viewpoint is written in said depiction buffer memory, the image data from the last viewpoint, which are displayed on a screen at present and written in said first display buffer memory are overwritten in said depiction buffer memory, and the image data from the last viewpoint are translucently synthesized with the image data from the new viewpoint at transparency equivalent to opaqueness; (2) the image data in said depiction buffer memory after the translucent synthesis are copied in said second display buffer memory, and screen display is processed with respect to the image data of the corresponding second display buffer memory, wherein the next image data from the last viewpoint are written in said depiction buffer display, the translucently synthesized image data which are displayed on a screen at present and are written in said second display buffer memory are overwritten in said depiction buffer memory, and translucently synthesized image data from said second display buffer memory are translucently synthesized with the image data from the last viewpoint at a transparency equivalent to pellucidity; (3) the image data in said depiction buffer memory after the translucent synthesis are copied in said first display buffer memory, and screen display is processed with respect to the image data of the corresponding first display buffer memory, wherein the next image data from the new viewpoint are written in said depiction buffer display, the translucently synthesized image data which are displayed at present and are written in said first display buffer memory are overwritten in said depiction buffer memory, and translucently synthesized image data from said first display buffer memory are translucently synthesized with the image data from the new viewpoint at a transparency equivalent to pellucidity; and (4) thereafter (2) and (3) are reciprocally repeated until a change of the screen display is completed, wherein, in the translucent synthesis in (2), the transparency of the translucently synthesized image data from said second display buffer memory is gradually reduced with respect to the image data from the last viewpoint, and in the translucent synthesis in (3), the transparency of the translucently synthesized image data from said first buffer memory is gradually raised with respect to the image data from the new viewpoint.
