U.S. Pat. No. 6,331,146

Video Game System and Method With Enhanced Three-Dimensional Character and Background Control

Assignee: Nintendo Co., Ltd.


Summary:

The ’146 patent concerns a three-dimensional world with multiple courses. In the case of Super Mario 64, those courses were hidden behind doors and paintings on the walls of Princess Peach’s castle. When a player approaches a door or painting, he must have achieved a certain number of goals before he is allowed to open it and advance to a new level. If the player has not achieved the minimum number of goals in the earlier worlds, he is denied access to any further worlds until he reaches that minimum. For all of you who get a bit nostalgic, here’s a screenshot of everyone’s favorite plumber:

Abstract:

A video game system includes a game cartridge which is pluggably attached to a main console having a main processor, a 3D graphics generating coprocessor, expandable main memory and player controllers. A multifunctional peripheral processing subsystem external to the game microprocessor and coprocessor is described which executes commands for handling player controller input/output to thereby lessen the processing burden on the graphics processing subsystem. The video game methodology involves game level organization features, camera perspective or point of view control features, and a wide array of animation and character control features. The system changes the “camera” angle (i.e., the displayed point of view in the three-dimensional world) automatically based upon various conditions and in response to actuation of a plurality of distinct controller keys/buttons/switches, e.g., four “C” buttons in the exemplary embodiment. The control keys allow the user at any time to move in for a close up or pull back for a wide view or pan the camera to the right and left to change the apparent camera angle. Such user initiated camera manipulation permits a player to better judge jumps or determine more precisely where an object is located in relation to the player controlled character. The video game system and methodology features a unique player controller, which permits control over a character’s exploration of the three-dimensional world to an unprecedented extent. A player controlled character may be controlled in a multitude of different ways utilizing the combination of the joystick and/or cross-switch and/or control keys and a wide range of animation effects are generated.
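The user-initiated camera control described in the abstract can be pictured as a small piece of state that each C-button press nudges. The following is a minimal Python sketch under stated assumptions, not the patented implementation: the button names (`C_UP`, `C_DOWN`, `C_LEFT`, `C_RIGHT`), which button zooms in or out, the step sizes and the distance limits are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical tuning values; the patent only says four "C" buttons allow
# close-ups, wide views and panning left or right.
ZOOM_STEP = 1.0        # assumed change in camera distance per press
PAN_STEP_DEG = 45.0    # assumed change in camera yaw per press (degrees)
MIN_DISTANCE = 2.0     # assumed closest close-up
MAX_DISTANCE = 10.0    # assumed widest view


@dataclass
class Camera:
    distance: float = 6.0  # distance from the player-controlled character
    yaw_deg: float = 0.0   # horizontal angle around the character

    def press(self, button: str) -> None:
        """Apply one (hypothetically named) C-button press to the camera."""
        if button == "C_UP":       # move in for a close-up
            self.distance = max(MIN_DISTANCE, self.distance - ZOOM_STEP)
        elif button == "C_DOWN":   # pull back for a wide view
            self.distance = min(MAX_DISTANCE, self.distance + ZOOM_STEP)
        elif button == "C_LEFT":   # pan the camera to the left
            self.yaw_deg = (self.yaw_deg - PAN_STEP_DEG) % 360.0
        elif button == "C_RIGHT":  # pan the camera to the right
            self.yaw_deg = (self.yaw_deg + PAN_STEP_DEG) % 360.0
        else:
            raise ValueError(f"unknown button: {button}")
```

Clamping the distance keeps the camera from passing through the character or drifting arbitrarily far away, and the modulo keeps the yaw angle within 0 to 360 degrees.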

Illustrative Claim:

1. For use with a video game system having a game program execution processing system including a microprocessor for executing a video game program and a coprocessor, coupled to said game microprocessor, for cooperating with said game microprocessor to execute said video game program, at least one player controller operable by a player to generate video game control signals, and a removable storage device for storing a program for controlling the operation of said video game system, a method of operating said video game system comprising the steps of:

generating a three-dimensional world display of a first video game play course;

storing the number of goals achieved by a player in said first video game course;

generating a three-dimensional world display of a second video game play course;

storing the number of goals achieved by a player in said second video game course;

maintaining a cumulative total of the number of goals achieved by a player at least on said first and second video game courses;

comparing said cumulative total of the number of goals achieved by a player at least on said first and second video game courses with a predetermined threshold; and

preventing access to a third video game course if said cumulative total is below said predetermined threshold.
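The steps of claim 1 (store the goals achieved per course, keep a cumulative total, compare it with a threshold, and block the third course when the total falls short) map onto a few lines of code. This Python sketch is illustrative only; the class name `GoalTracker`, the course identifiers and the threshold values are assumptions, not details taken from the patent.

```python
from typing import Dict


class GoalTracker:
    """Stores goals per course and gates access to a further course."""

    def __init__(self) -> None:
        self.goals_by_course: Dict[str, int] = {}

    def record_goals(self, course: str, goals: int) -> None:
        # "storing the number of goals achieved by a player in said ... course"
        self.goals_by_course[course] = goals

    def cumulative_total(self) -> int:
        # "maintaining a cumulative total of the number of goals achieved"
        return sum(self.goals_by_course.values())

    def may_enter(self, threshold: int) -> bool:
        # "comparing said cumulative total ... with a predetermined threshold";
        # access is prevented when the total is below the threshold.
        return self.cumulative_total() >= threshold


tracker = GoalTracker()
tracker.record_goals("course_1", 3)  # hypothetical course names
tracker.record_goals("course_2", 5)
print(tracker.may_enter(8), tracker.may_enter(10))
```

In Super Mario 64 terms the goals correspond to stars and the threshold to the star count a particular door demands, but the sketch does not model any specific door.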

Related patents:

U.S. Patent No. 6,267,673

U.S. Patent No. 6,155,926

U.S. Patent No. 6,139,434

U.S. Patent No. 6,139,433

Illustrative Figure


Description

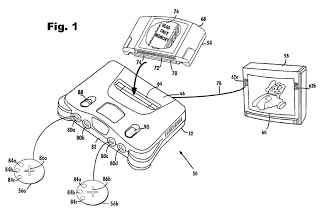


DETAILED DESCRIPTION OF EXEMPLARY EMBODIMENT

FIG. 1 shows an exemplary embodiment of a video game system 50 on which the video game methodology of the present invention, which is described in detail below, may be performed. Illustrative video game system 50 includes a main console 52, a video game storage device 54, and handheld controllers 56a,b (or other user input devices). Video game storage device 54 includes a read-only memory 76 storing a video game program, which in the presently preferred illustrative implementation of the present invention is the commercially available video game entitled "Super Mario 64". Main console 52 is connected to a conventional home color television set 58. Television set 58 displays 3D video game images on its television screen 60 and reproduces stereo sound through its speakers 62a,b.

In the illustrative embodiment, the video game storage device 54 is in the form of a replaceable memory cartridge insertable into a slot 64 on a top surface 66 of console 52. A wide variety of alternative program storage media are contemplated by the present invention, such as CD ROM, floppy disk, etc. In this exemplary embodiment, video game storage device 54 comprises a plastic housing 68 encasing a printed circuit board 70. Printed circuit board 70 has an edge 72 defining a number of electrical contacts 74. When the video game storage device 54 is inserted into main console slot 64, the cartridge electrical contacts 74 mate with corresponding "edge connector" electrical contacts within the main console. This action electrically connects the storage device printed circuit board 70 to the electronics within main console 52. In this example, at least a "read only memory" chip 76 is disposed on printed circuit board 70 within storage device housing 68. This "read only memory" chip 76 stores instructions and other information pertaining to a particular video game. The read only memory chip 76 for one game cartridge storage device 54 may, for example, contain instructions and other information for an adventure game, while another storage device 54 may contain instructions and information to play a car race game, an educational game, etc. To play one game as opposed to another, the user of video game system 50 need only plug the appropriate storage device 54 into main console slot 64, thereby connecting the storage device's read only memory chip 76 (and any other circuitry it may contain) to console 52. This enables a computer system embodied within console 52 to access the information contained within read only memory 76, which information controls the console computer system to play the appropriate video game by displaying images and reproducing sound on color television set 58 as specified under control of the read only memory game program information.

To set up the video game system 50 for game play, the user first connects console 52 to color television set 58 by hooking a cable 78 between the two. Console 52 produces both "video" signals and "audio" signals for controlling color television set 58. The "video" signals control the images displayed on the television screen 60 and the "audio" signals are played back as sound through television loudspeaker 62. Depending on the type of color television set 58, it may be necessary to connect a conventional "RF modulator" between console 52 and color television set 58. This "RF modulator" (not shown) converts the direct video and audio outputs of console 52 into a broadcast type television signal (e.g., for a television channel 2 or 3) that can be received and processed using the television set's internal "tuner." Other conventional color television sets 58 have direct video and audio input jacks and therefore don't need this intermediary RF modulator.

The user then needs to connect console 52 to a power source. This power source may comprise a conventional AC adapter (not shown) that plugs into a standard home electrical wall socket and converts the house voltage into a lower voltage DC signal suitable for powering console 52. The user may then connect up to 4 hand controllers 56a, 56b to corresponding connectors 80a-80d on main unit front panel 82. Controllers 56, which are only schematically represented in FIG. 1, may take a variety of forms. In this example, the controllers 56a,b include various function controlling push buttons such as 84a-c and X-Y switches 86a,b used, for example, to specify the direction (up, down, left or right) that a player controllable character displayed on television screen 60 should move. Other controller possibilities include joysticks, mouse pointer controls and a wide range of other conventional user input devices. In the presently preferred implementation of the present invention, the unique controller described below in conjunction with FIGS. 6 through 9 and in incorporated parent application Ser. No. 08/719,019 (the '019 application) is used.

The present system has been designed to accommodate expansion to incorporate various types of peripheral devices yet to be specified. This is accomplished by incorporating a programmable peripheral device input/output system described in detail in the '288 application which permits device type and status to be specified by program commands.

In use, a user selects a storage device 54 containing a desired video game, and inserts that storage device into console slot 64 (thereby electrically connecting read only memory 76 and other cartridge electronics to the main console electronics). The user then operates a power switch 88 to turn on the video game system 50 and operates controllers 56 (depending on the particular video game being played, up to four controllers for four different players can be used with the illustrative console) to provide inputs to console 52 and thus control video game play. For example, depressing one of push buttons 84a-c may cause the game to start playing. Moving directional switch 86 or the joystick 45 shown in FIG. 6 may cause animated characters to move on the television screen 60 in controllably different directions. Depending upon the particular video game stored within the storage device 54, these various controls 84, 86 on the controller 56 can perform different functions at different times. If the user wants to restart game play from the beginning, or alternatively with certain game programs reset the game to a known continuation point, the user can press a reset button 90.

FIG. 2 is a block diagram of an illustrative embodiment of console 52 coupled to a game cartridge 54 and shows a main processor 100, a coprocessor 200, and main memory 300 which may include an expansion module 302. Main processor 100 is a computer that executes the video game program within storage device 54. In this example, the main processor 100 accesses this video game program through the coprocessor 200 over a communication path 102 between the main processor and the coprocessor 200, and over another communication path 104a,b between the coprocessor and the video game storage device 54. Alternatively, the main processor 100 can control the coprocessor 200 to copy the video game program from the video game storage device 54 into main memory 300 over path 106, and the main processor 100 can then access the video game program in main memory 300 via coprocessor 200 and paths 102, 106. Main processor 100 accepts inputs from game controllers 56 during the execution of the video game program. Main processor 100 generates, from time to time, lists of instructions for the coprocessor 200 to perform. Coprocessor 200, in this example, comprises a special purpose high performance, application specific integrated circuit having an internal design that is optimized for rapidly processing 3D graphics and digital audio information. In the illustrative embodiment, the coprocessor described herein is the product of a joint venture between Nintendo Company Limited and Silicon Graphics, Inc. For further details of exemplary coprocessor hardware and software beyond that expressly disclosed in the present application, reference is made to copending application Ser. No. 08/561,718, naming Van Hook et al. as inventors of the subject matter claimed therein, which is entitled "High Performance Low Cost Video Game System With Coprocessor Providing High Speed Efficient 3D Graphics and Digital Audio Signal Processing" filed on Nov. 22, 1995, which application is expressly incorporated herein by reference. The present invention is not limited to use with the above-identified coprocessor. Any compatible coprocessor which supports rapid processing of 3D graphics and digital audio may be used herein. In response to instruction lists provided by main processor 100 over path 102, coprocessor 200 generates video and audio outputs for application to color television set 58 based on data stored within main memory 300 and/or video game storage device 54.

FIG. 2 also shows that the audio and video outputs of coprocessor 200 are not provided directly to television set 58 in this example, but are instead further processed by external electronics outside of the coprocessor. In particular, in this example, coprocessor 200 outputs its audio and video information in digital form, but conventional home color television sets 58 require analog audio and video signals. Therefore, the digital outputs of coprocessor 200 must be converted into analog form, a function performed for the audio information by DAC and mixer amp 140 and for the video information by VDAC and encoder 144. The analog audio signals generated in DAC 140 are amplified and filtered by an audio amplifier therein that may also mix audio signals generated externally of console 52 via the EXTSOUND L/R signal from connector 154. The analog video signals generated in VDAC 144 are provided to a video encoder therein which may, for example, convert "RGB" inputs to composite video outputs compatible with commercial TV sets. The amplified stereo audio output of the amplifier in DAC and mixer amp 140 and the composite video output of video DAC and encoder 144 are provided to directly control home color television set 58. The composite synchronization signal generated by the video digital to analog converter in component 144 is coupled to its video encoder and to external connector 154 for use, for example, by an optional light pen or photogun.

FIG. 2 also shows a clock generator 136 (which, for example, may be controlled by a crystal 148 shown in FIG. 3A) that produces timing signals to time and synchronize the other console 52 components. Different console components require different clocking frequencies, and clock generator 136 provides suitable such clock frequency outputs (or frequencies from which suitable clock frequencies can be derived such as by dividing).
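Deriving several component clocks from one generator by integer division is simple arithmetic. The Python sketch below uses a hypothetical 120 MHz master frequency together with hypothetical component names and divisors, since the patent gives no values for clock generator 136.

```python
from typing import Dict

# Hypothetical master frequency; the patent does not state one.
MASTER_HZ = 120_000_000


def derive_clock(master_hz: int, divisor: int) -> float:
    """Return the frequency obtained by dividing the master clock by an integer."""
    if divisor < 1:
        raise ValueError("divisor must be a positive integer")
    return master_hz / divisor


# Hypothetical component names and divisors, for illustration only.
DIVISORS: Dict[str, int] = {"component_a": 2, "component_b": 3, "component_c": 8}

clocks = {name: derive_clock(MASTER_HZ, div) for name, div in DIVISORS.items()}

for name, hz in clocks.items():
    print(f"{name}: {hz / 1e6:.1f} MHz")
```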

In this illustrative embodiment, game controllers 56 are not connected directly to main processor 100, but instead are connected to console 52 through serial peripheral interface 138. Serial peripheral interface 138 demultiplexes serial data signals incoming from up to four or five game controllers 56 (e.g., 4 controllers from serial I/O bus 151 and 1 controller from connector 154) and provides this data in a predetermined format to main processor 100 via coprocessor 200. Serial peripheral interface 138 is bidirectional, i.e., it is capable of transmitting serial information specified by main processor 100 out of front panel connectors 80a-d in addition to receiving serial information from those front panel connectors. The serial interface 138 receives main memory RDRAM data, clock signals and commands, and sends data/responses via a coprocessor serial interface (not shown). I/O commands are transmitted to the serial interface 138 for execution by its internal processor, as will be described below. In this fashion, the peripheral interface's processor (250 in FIG. 5), by handling I/O tasks, reduces the processing burden on main processor 100. As is described in more detail below in conjunction with FIG. 5, serial peripheral interface 138 also includes a "boot ROM (read only memory)" that stores a small amount of initial program load (IPL) code. This IPL code stored within the peripheral interface boot ROM is executed by main processor 100 at time of startup and/or reset to allow the main processor to begin executing game program instructions 108 within storage device 54. The initial game program instructions 108 may, in turn, control main processor 100 to initialize the drivers and controllers it needs to access main memory 300.
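The demultiplexing role of serial peripheral interface 138, which turns interleaved controller data into a predetermined per-controller format, can be sketched as follows. The 5-byte record layout (channel number, 16-bit button mask, signed stick X and Y) is entirely hypothetical and is not the console's actual serial protocol; only the limit of five controllers comes from the text above.

```python
from dataclasses import dataclass
from typing import Dict

# Hypothetical record layout (not from the patent): 1 byte channel number,
# 2 bytes button mask (big-endian), 1 byte signed stick X, 1 byte signed stick Y.
RECORD_SIZE = 5
MAX_CHANNELS = 5  # 4 front-panel controllers + 1 via connector 154


@dataclass
class ControllerState:
    buttons: int
    stick_x: int
    stick_y: int


def to_signed(byte: int) -> int:
    """Interpret an unsigned byte (0-255) as a signed 8-bit value."""
    return byte - 256 if byte > 127 else byte


def demultiplex(stream: bytes) -> Dict[int, ControllerState]:
    """Split an interleaved serial byte stream into per-controller states."""
    if len(stream) % RECORD_SIZE:
        raise ValueError("stream length is not a whole number of records")
    states: Dict[int, ControllerState] = {}
    for i in range(0, len(stream), RECORD_SIZE):
        channel, hi, lo, x, y = stream[i:i + RECORD_SIZE]
        if channel >= MAX_CHANNELS:
            raise ValueError(f"invalid channel {channel}")
        states[channel] = ControllerState((hi << 8) | lo, to_signed(x), to_signed(y))
    return states
```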

In this exemplary embodiment, serial peripheral interface 138 includes a processor (see 250 in FIG. 5) which, in addition to performing the I/O tasks referred to above, also communicates with an associated security processor 152 within storage device 54. This pair of security processors (one in the storage device 54, the other in the console 52) performs, in cooperation with main processor 100, an authentication function to ensure that only authorized storage devices may be used with video game console 52.

As shown in FIG. 2, peripheral interface 138 receives a power-on reset signal from reset IC 139. The reset circuitry embodied in console 52 and timing signals generated by the circuitry are shown in FIGS. 3A and 3B of the incorporated '288 application.

FIG. 2 also shows a connector 154 within video game console 52. In this illustrative embodiment, connector 154 connects, in use, to the electrical contacts 74 at the edge 72 of storage device printed circuit board 70. Thus, connector 154 electrically connects coprocessor 200 to storage device ROM 76. Additionally, connector 154 connects the storage device security processor 152 to main unit serial peripheral interface 138. Although connector 154 in the particular example shown in FIG. 2 may be used primarily to read data and instructions from a non-writable read only memory 76, console 52 is designed so that the connector is bidirectional, i.e., the main unit can send information to the storage device 54 for storage in random access memory 77 in addition to reading information from it. Further details relating to accessing information in the storage device 54 are contained in the incorporated '288 application in conjunction with its description of FIGS. 12 through 14 therein.

Main memory 300 stores the video game program in the form of CPU instructions 108. All accesses to main memory 300 are through coprocessor 200 over path 106. These CPU instructions are typically copied from the game program/data 108 stored in storage device 54 and downloaded to RDRAM 300. This architecture is likewise readily adaptable for use with CD ROM or other bulk media devices. Although CPU 100 is capable of executing instructions directly out of storage device ROM 76, the amount of time required to access each instruction from the ROM is much greater than the time required to access instructions from main memory 300. Therefore, main processor 100 typically copies the game program/data 108 from ROM 76 into main memory 300 on an as-needed basis in blocks, and accesses the main memory 300 in order to actually execute the instructions. Main memory RDRAM 300 is preferably a fast access dynamic RAM capable of 500 Mbytes/second transfer rates, such as the DRAM sold by RAMBUS, Inc. The memory 300 is coupled to coprocessor 200 via a unified nine bit wide bus 106, the control of which is arbitrated by coprocessor 200. The memory 300 is expandable by merely plugging, for example, an 8 Mbyte memory card into console 52 via a console memory expansion port (not shown).
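The as-needed, block-wise copying of game program/data 108 from slow ROM 76 into fast main memory 300 behaves like a simple demand-loading cache. In this Python sketch, the 4 KB block size and the `DemandLoader` class are assumptions for illustration; the patent specifies neither a block size nor a replacement policy, so the sketch never evicts blocks.

```python
from typing import Dict

BLOCK_SIZE = 4096  # hypothetical block size in bytes


class DemandLoader:
    """Copies fixed-size blocks from slow ROM into fast RAM on first use."""

    def __init__(self, rom: bytes) -> None:
        self.rom = rom
        self.ram: Dict[int, bytes] = {}  # block number -> copied block
        self.rom_reads = 0               # number of slow ROM block transfers

    def read_byte(self, address: int) -> int:
        if not 0 <= address < len(self.rom):
            raise IndexError(f"address {address:#x} outside ROM")
        block, offset = divmod(address, BLOCK_SIZE)
        if block not in self.ram:
            # Miss: copy the whole block from ROM into main memory once.
            start = block * BLOCK_SIZE
            self.ram[block] = self.rom[start:start + BLOCK_SIZE]
            self.rom_reads += 1
        # Every access is then served from the fast main-memory copy.
        return self.ram[block][offset]
```

Because later accesses to the same block hit main memory, the slow ROM is touched once per block rather than once per instruction, which is the trade-off the paragraph above describes.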

As described in the copending Van Hook et al. application, the main processor 100 preferably includes an internal cache memory (not shown) used to further decrease instruction access time. Storage device 54 also stores a database of graphics and sound data 112 needed to provide the graphics and sound of the particular video game. Main processor 100, in general, reads the graphics and sound data 112 from storage device 54 on an as-needed basis and stores it into main memory 300 in the form of texture data, sound data and graphics data. In this example, coprocessor 200 includes a display processor having an internal texture memory into which texture data is copied on an as-needed basis for use by the display processor.

As described in the copending Van Hook et al. application, storage device 54 also stores coprocessor microcode 156. In this example, a signal processor within coprocessor 200 executes a computer program in order to perform its various graphics and audio functions. This computer program, called the "microcode," is provided by storage device 54. Typically, main processor 100 copies the microcode 156 into main memory 300 at the time of system startup, and then controls the signal processor to copy parts of the microcode on an as-needed basis into an instruction memory within the signal processor for execution. Because the microcode 156 is provided by storage device 54, different storage devices can provide different microcodes, thereby tailoring the particular functions provided by coprocessor 200 under software control. Because the microcode 156 is typically too large to fit into the signal processor's internal instruction memory all at once, different microcode pages or portions may need to be loaded from main memory 300 into the signal processor's instruction memory as needed. For example, one part of the microcode 156 may be loaded into signal processor 400 for graphics processing, and another part of microcode may be loaded for audio processing. See the above-identified Van Hook et al. application for further details relating to an illustrative signal processor and display processor embodied within the coprocessor, as well as the various databases maintained in RDRAM 300.
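Swapping microcode portions into the signal processor's limited instruction memory is an overlay scheme: a portion is copied in from main memory only when the resident one is not the one needed. The sketch below models that under assumed names (`MicrocodeOverlay`, the `"graphics"` and `"audio"` portions) and an assumed instruction-memory size; it only tracks which portion is loaded and does not execute any microcode.

```python
from typing import Dict, List, Optional


class MicrocodeOverlay:
    """Keeps one microcode portion resident in a small instruction memory."""

    def __init__(self, portions: Dict[str, bytes], imem_size: int) -> None:
        for name, code in portions.items():
            if len(code) > imem_size:
                raise ValueError(f"portion {name!r} does not fit in instruction memory")
        self.portions = portions            # full microcode held in main memory
        self.imem = bytearray(imem_size)    # simulated instruction memory
        self.resident: Optional[str] = None
        self.load_log: List[str] = []       # one entry per copy into imem

    def ensure_loaded(self, task: str) -> None:
        """Copy the portion for `task` into instruction memory unless already resident."""
        if self.resident == task:
            return
        code = self.portions[task]
        self.imem[:len(code)] = code        # overwrite the previous portion
        self.resident = task
        self.load_log.append(task)
```

Each switch between graphics and audio work costs one copy, so a real scheduler would try to batch work of the same kind; the sketch leaves that policy out.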

Although not shown in FIG. 2, as described in the copending Van Hook et al. application, coprocessor 200 also includes a CPU interface, a serial interface, a parallel peripheral interface, an audio interface, a video interface, a main memory DRAM controller/interface, a main internal bus and timing control circuitry. The coprocessor main bus allows each of the various main components within coprocessor 200 to communicate with one another. The CPU interface is the gateway between main processor 100 and coprocessor 200. Main processor 100 reads data from and writes data to the coprocessor CPU interface via a CPU-to-coprocessor bus. A coprocessor serial interface provides an interface between the serial peripheral interface 138 and coprocessor 200, while coprocessor parallel peripheral interface 206 interfaces with the storage device 54 or other parallel devices connected to connector 154.

A coprocessor audio interface reads information from an audio buffer within main memory 300 and outputs it to audio DAC 140. Similarly, a coprocessor video interface reads information from an RDRAM frame buffer and then outputs it to video DAC 144. A coprocessor DRAM controller/interface is the gateway through which coprocessor 200 accesses main memory 300. The coprocessor timing circuitry receives clocking signals from clock generator 136 and distributes them (after appropriate dividing as necessary) to various other circuits within coprocessor 200.

Main processor 100 in this example is a MIPS R4300 RISC microprocessor designed by MIPS Technologies, Inc., Mountain View, Calif. For more information on main processor 100, see, for example, Heinrich, MIPS Microprocessor R4000 User's Manual (MIPS Technologies, Inc., 1994, Second Ed.).

As described in the copending Van Hook et al. application, the conventional R4300 main processor 100 supports six hardware interrupts, one internal (timer) interrupt, two software interrupts, and one non-maskable interrupt (NMI). In this example, three of the six hardware interrupt inputs (INT0, INT1 and INT2) and the non-maskable interrupt (NMI) input allow other portions of system 50 to interrupt the main processor. Specifically, main processor interrupt INT0 is connected to allow coprocessor 200 to interrupt the main processor, main processor interrupt INT1 is connected to allow storage device 54 or other external devices to interrupt the main processor, and main processor interrupts INT2 and NMI are connected to allow the serial peripheral interface 138 to interrupt the main processor. Any time the processor is interrupted, it looks at an internal interrupt register to determine the cause of the interrupt and then may respond in an appropriate manner (e.g., to read a status register or perform other appropriate action). All but the NMI interrupt input from serial peripheral interface 138 are maskable (i.e., the main processor 100 can selectively enable and disable them under software control).
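The masking rule described above (INT0, INT1 and INT2 can be enabled or disabled by software, while NMI cannot) can be expressed as a small dispatch function. The bit positions, handler names and the order in which handlers are listed are hypothetical choices for this sketch and do not reflect the actual R4300 register layout.

```python
from typing import Dict, List

# Hypothetical bit positions in a pending/enable register pair.
INT0_COPROCESSOR = 0  # coprocessor 200
INT1_CARTRIDGE = 1    # storage device 54 or other external devices
INT2_PERIPHERAL = 2   # serial peripheral interface 138

SOURCES: Dict[int, str] = {
    INT0_COPROCESSOR: "coprocessor",
    INT1_CARTRIDGE: "cartridge",
    INT2_PERIPHERAL: "peripheral",
}


def dispatch(pending: int, enabled_mask: int, nmi: bool) -> List[str]:
    """Return the (hypothetical) handlers to run for the current interrupt state.

    NMI is always serviced; a maskable line is serviced only when it is both
    pending and enabled under software control.
    """
    handlers: List[str] = []
    if nmi:
        handlers.append("reset")  # non-maskable: software cannot disable it
    for bit, name in SOURCES.items():
        if pending & (1 << bit) and enabled_mask & (1 << bit):
            handlers.append(name)
    return handlers
```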

When the video game reset switch 90 is pressed, a non-maskable interrupt signal is generated by peripheral interface circuit 138 and is coupled to main processor 100 as shown in FIG. 2. The NMI signal, however, results in non-maskable, immediate branching to a predefined initialization state. In order to permit the possibility of responding to reset switch 90 actuation by branching, for example, to the current highest game level progressed to, the circuit shown in FIG. 3A of the incorporated '288 application is preferably used.

In operation, as described in detail in the copending Van Hook et al. application, main processor 100 receives inputs from the game controllers 56 and executes the video game program provided by storage device 54 to perform game processing and animation and to assemble graphics and sound commands. The graphics and sound commands generated by main processor 100 are processed by coprocessor 200. In this example, the coprocessor performs 3D geometry transformation and lighting processing to generate graphics display commands, which the coprocessor then uses to "draw" polygons for display purposes. As indicated above, coprocessor 200 includes a signal processor and a display processor. 3D geometry transformation and lighting is performed in this example by the signal processor, and polygon rasterization and texturing is performed by display processor 500. Display processor 500 writes its output into a frame buffer in main memory 300. This frame buffer stores a digital representation of the image to be displayed on the television screen 60. Further circuitry within coprocessor 200 reads the information contained in the frame buffer and outputs it to television 58 for display. Meanwhile, the signal processor also processes sound commands received from main processor 100 using digital audio signal processing techniques. The signal processor writes its digital audio output into main memory 300, with the main memory temporarily "buffering" (i.e., storing) the sound output. Other circuitry in coprocessor 200 reads this buffered sound data from main memory 300 and converts it into electrical audio signals (stereo, left and right) for application to and reproduction by television 58.
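The geometry work the signal processor performs before rasterization (size, rotate and move each vertex, then project it onto the screen) can be illustrated with a few vector operations. This Python sketch shows only a rotation about the vertical axis and a simple pinhole perspective projection, with an assumed camera convention (looking down the negative Z axis) and assumed focal length and screen size; lighting, clipping, and the display processor's rasterization and texturing are left out.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def rotate_y(p: Vec3, angle_rad: float) -> Vec3:
    """Rotate a point about the vertical (Y) axis."""
    x, y, z = p
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return (c * x + s * z, y, -s * x + c * z)


def transform(p: Vec3, scale: float, angle_rad: float, offset: Vec3) -> Vec3:
    """Size, rotate, then move a model-space vertex into camera space."""
    scaled = (p[0] * scale, p[1] * scale, p[2] * scale)
    x, y, z = rotate_y(scaled, angle_rad)
    return (x + offset[0], y + offset[1], z + offset[2])


def project(p: Vec3, focal_length: float, width: int, height: int) -> Tuple[float, float]:
    """Perspective-project a camera-space point to pixel coordinates.

    Assumes the camera looks down -Z; screen origin is the top-left corner.
    """
    x, y, z = p
    if z >= 0:
        raise ValueError("point is not in front of the camera")
    sx = focal_length * x / -z  # farther points shrink toward the center
    sy = focal_length * y / -z
    return (width / 2 + sx, height / 2 - sy)
```

A production pipeline would typically fold these steps into 4x4 matrices; keeping them as separate functions here simply makes each stage visible.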

More specifically, main processor 100 reads a video game program 108 stored in main memory 300. In response to executing this video game program 108, main processor 100 creates a list of commands for coprocessor 200. This command list, in general, includes two kinds of commands: graphics commands and audio commands. Graphics commands control the images coprocessor 200 generates on TV set 58. Audio commands specify the sound coprocessor 200 causes to be reproduced on TV loudspeakers 62. The list of graphics commands may be called a "display list" because it controls the images coprocessor 200 displays on the TV screen 60. A list of audio commands may be called a "play list" because it controls the sounds that are played over loudspeaker 62. Generally, main processor 100 specifies both a display list and a play list for each "frame" of color television set 58 video.
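A per-frame command list split into a "display list" and a "play list" can be modeled with two plain lists. The command types `DrawTriangle` and `PlaySound` and their fields are hypothetical stand-ins; the patent does not define the command encoding used by main processor 100.

```python
from dataclasses import dataclass, field
from typing import List, Tuple, Union


@dataclass
class DrawTriangle:  # hypothetical graphics command
    vertices: Tuple[Tuple[float, float, float], ...]
    texture: str


@dataclass
class PlaySound:  # hypothetical audio command
    sample: str
    volume: float


Command = Union[DrawTriangle, PlaySound]


@dataclass
class FrameCommands:
    display_list: List[DrawTriangle] = field(default_factory=list)
    play_list: List[PlaySound] = field(default_factory=list)


def build_frame(commands: List[Command]) -> FrameCommands:
    """Sort one frame's commands into a display list and a play list."""
    frame = FrameCommands()
    for cmd in commands:
        if isinstance(cmd, DrawTriangle):
            frame.display_list.append(cmd)
        elif isinstance(cmd, PlaySound):
            frame.play_list.append(cmd)
        else:
            raise TypeError(f"unknown command: {cmd!r}")
    return frame
```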

In this example, main processor 100 provides its display/play list 110 to coprocessor 200 by copying it into main memory 300. Main processor 100 also arranges for the main memory 300 to contain a graphics and audio database that includes all the data coprocessor 200 needs to generate the graphics and audio requested in the display/play list 110. For example, main processor 100 may copy the appropriate graphics and audio data from storage device read only memory 76 into the graphics and audio database within main memory 300. Main processor 100 tells coprocessor 200 where to find the display/play list 110 it has written into main memory 300, and that display/play list 110 may specify which portions of graphics and audio database 112 the coprocessor 200 should use.

The coprocessor's signal processor reads the display/play list 110 from main memory 300 and processes this list (accessing additional data within the graphics and audio database as needed). The signal processor generates two main outputs: graphics display commands for further processing by the display processor, and audio output data for temporary storage within main memory 300. Once signal processor 400 writes the audio output data into main memory 300, another part of the coprocessor 200 called an "audio interface" (not shown) reads this audio data and outputs it for reproduction by television loudspeaker 62.

The signal processor can provide the graphics display commands directly to

the display processor over a path internal to coprocessor 200, or it may write

those graphics display commands into main memory 300 for retrieval from

the main memory by the display processor. These graphics display commands

instruct the display processor to draw ("render") specified geometric images on

television screen 60. For example, the display processor can draw lines,

triangles or rectangles based on these graphics display commands, and may

fill triangles and rectangles with particular textures (e.g., images of

leaves of a tree or bricks of a brick wall such as shown in the exemplary

screen displays in FIGS. 4A through 4F) stored within main memory 300, all

as specified by the graphics display command. It is also possible for main

processor 100 to write graphics display commands directly into main memory

300 so as to directly command the display processor. The coprocessor

display processor generates, as output, a digitized representation of the

image that is to appear on television screen 60.

This digitized image, sometimes called a "bit map," is stored (along with

"depth or Z" information) within a frame buffer residing in main memory

300 for each video frame displayed by color television set 58. Another part

of coprocessor 200 called the "video interface" (not shown) reads the

frame buffer and converts its contents into video signals for application

to color television set 58.
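
The depth information stored alongside the bit map lets the display processor keep only the nearest surface at each pixel. The following is a minimal sketch of such a depth (Z) test, assuming that a smaller Z value means nearer to the viewer. The `FrameBuffer` class and its `plot` method are hypothetical and say nothing about the actual frame-buffer format in main memory 300.

```python
import math

class FrameBuffer:
    """Color bit map plus a per-pixel depth (Z) value."""

    def __init__(self, width, height, clear_color=0):
        self.width, self.height = width, height
        self.color = [[clear_color] * width for _ in range(height)]
        self.depth = [[math.inf] * width for _ in range(height)]

    def plot(self, x, y, z, color):
        """Write a pixel only if it is on screen and nearer than the stored one."""
        if 0 <= x < self.width and 0 <= y < self.height and z < self.depth[y][x]:
            self.depth[y][x] = z
            self.color[y][x] = color
            return True
        return False

fb = FrameBuffer(4, 3)
fb.plot(1, 1, 10.0, 0xFF0000)  # far red pixel is drawn
fb.plot(1, 1, 2.0, 0x00FF00)   # nearer green pixel replaces it
fb.plot(1, 1, 5.0, 0x0000FF)   # blue lies behind green and is rejected
```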

Each of FIGS. 4A-4F was generated using a three-dimensional model of a

"world" that represents a castle on a hilltop. In the present illustrative

embodiment of the present invention, the castle serves as the focal point

of a three-dimensional world in which a player controlled character such

as the well-known "Mario" may freely explore its exterior and interior and

enter the many courses or worlds hidden therein. The model of FIGS. 4A-4F is

made up of geometric shapes (i.e., lines, triangles, rectangles) and

"textures" (digitally stored pictures) that are "mapped" onto the surfaces

defined by the geometric shapes. System 50 sizes, rotates and moves these

geometric shapes appropriately, "projects" them, and puts them all

together to provide a realistic image of the three-dimensional world from

any arbitrary viewpoint. System 50 can do this interactively in real time

in response to a person's operation of game controllers 86 to present

different points of view or, as referred to herein, "camera" angles of the

three-dimensional world.
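
The size, rotate, move and project sequence can be illustrated for a single vertex. This minimal sketch assumes a rotation about the vertical axis only and a pinhole camera at the origin looking down +Z with a focal length of 1. The `transform` and `project` helpers are hypothetical; a full pipeline would typically use 4x4 matrices and clipping.

```python
import math

def transform(v, scale, yaw, offset):
    """Size, rotate (about the vertical Y axis) and move one vertex."""
    x, y, z = (c * scale for c in v)
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)
    x, z = cos_y * x + sin_y * z, -sin_y * x + cos_y * z
    return (x + offset[0], y + offset[1], z + offset[2])

def project(v, focal=1.0):
    """Perspective-project a camera-space vertex onto the screen plane."""
    x, y, z = v
    if z <= 0:
        raise ValueError("vertex is behind the camera")
    return (focal * x / z, focal * y / z)

# A unit triangle, doubled in size and placed 4 units in front of the camera.
triangle = [(0, 0, 0), (1, 0, 0), (0, 1, 0)]
screen = [project(transform(v, 2.0, 0.0, (0, 0, 4))) for v in triangle]
# screen == [(0.0, 0.0), (0.5, 0.0), (0.0, 0.5)]
```

Moving the same triangle twice as far away (an offset of 8 instead of 4 along Z) halves its projected size, which is the perspective effect visible in the aerial views.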

FIGS. 4A-4C and 4F show aerial views of the castle from four different

viewpoints. Notice that each of the views is in perspective. System 50 can

generate these views (and views in between) interactively with little or

no discernible delay so it appears as if the video game player is actually

flying over the castle.

FIGS. 4D and 4E show views from the ground looking up at or near the castle

main gate. System 50 can generate these views interactively in real time

in response to game controller inputs commanding the viewpoint to "land" in

front of the castle, and commanding the "virtual viewer" (i.e., the

imaginary person moving through the 3-D world through whose eyes the

scenes are displayed) to face in different directions. FIG. 4D shows an

example of "texture mapping" in which a texture (picture) of a brick wall

is mapped onto the castle walls to create a very realistic image.

FIGS. 3A and 3B show an exemplary, more detailed implementation of the

FIG. 2 block diagram. Components in FIGS. 3A and 3B, which are identical

to those represented in FIG. 2, are associated with identical numerical

labels. Many of the components shown in FIGS. 3A and 3B have already been

described in conjunction with FIG. 2 and further discussion of these

components is not necessary.

FIGS. 3A and 3B show the interface between system components and the

specific signals received on device pins in greater detail than shown in

FIG. 2. To the extent that voltage levels are indicated in FIGS. 3A and

3B, VDD represents +3.3 volts and VCC represents +5 volts.

Focusing first on peripheral interface 138 in FIG. 3B, reset related

signals such as CLDRES, NMI, RESIC, CLDCAP and RSWIN are described in the

incorporated '288 application. Three coprocessor 200/peripheral interface

138 communication signals are shown: PCHCLK, PCHCMD, and PCHRSP. These

signals are transmitted on a 3-bit-wide peripheral interface channel bus as

shown in FIGS. 2, 3A and 3B. The clock signal PCHCLK is used for timing

purposes to trigger sampling of peripheral interface data and commands.

The clock signal is transmitted from the coprocessor 200 to the peripheral

interface 138.

Coprocessor 200 and CPU 100, based on the video game program stored in

storage device 54, supply commands for the peripheral interface 138 to

perform on the PCHCMD control line. The command includes a start bit

field, a command code field and data or other information.

The peripheral interface circuitry as described in more detail in the

incorporated '288 application decodes the command and, if the data is

ready in response to the command, sends a PCHRSP response signal

comprising an acknowledge signal "ACK" followed by the response data.

Approximately two clock pulses after the peripheral interface 138

generates the acknowledgment signal ACK, data transmission begins. Data

received from the peripheral interface 138 may be information/instructions

stored in the boot ROM or controller status or controller data, etc. The

incorporated '288 application shows representative signals transmitted

across the PCHCLK, PCHCMD and PCHRSP lines and describes exemplary signals

appearing on the peripheral interface channel for four exemplary commands

serving to read 4 bytes into memory, write 4 bytes into memory, execute a

peripheral interface macro instruction or write 64 bytes into peripheral

interface buffer memory.
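
The framing just described (a start bit and command code on PCHCMD, then an ACK followed roughly two clocks later by data on PCHRSP) can be sketched as lists of bits. The 2-bit command codes, MSB-first bit order and exact idle gap below are assumptions made for illustration; the actual field widths and encodings are given in the incorporated '288 application and are not reproduced here.

```python
from enum import IntEnum

class PchCommand(IntEnum):
    """The four commands named in the text; the numeric codes are hypothetical."""
    READ_4_BYTES = 0
    WRITE_4_BYTES = 1
    PIF_MACRO = 2
    WRITE_64_BYTES = 3

def to_bits(value, width):
    """Return `width` bits of `value`, most significant bit first."""
    return [(value >> i) & 1 for i in reversed(range(width))]

def encode_command(cmd, payload=b""):
    """One PCHCMD frame: start bit, command code field, then data bits."""
    bits = [1] + to_bits(int(cmd), 2)
    for byte in payload:
        bits += to_bits(byte, 8)
    return bits

def encode_response(data, ack_gap=2):
    """One PCHRSP frame: ACK bit, an idle gap of about two clocks, then data."""
    bits = [1] + [0] * ack_gap
    for byte in data:
        bits += to_bits(byte, 8)
    return bits
```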

Turning back to the FIG. 3B peripheral interface 138, SECCLK, SECTRC and

SECTRD are three security related signals coupled between two security

processors embodied within the peripheral interface 138 and game

cartridge, respectively. SECCLK is a clock signal used to clock security

processor operations in both the peripheral interface and the game

cartridge. SECTRC is a signal sent from the peripheral interface 138 to

the game cartridge defining a data transmission clock signal window in

which data is valid and SECTRD is a data transmission bus signal in which

data from the peripheral interface 138 and data from the game cartridge

security processor are exchanged at times identified by the SECTRC

transmission clock pulses. Finally, the peripheral interface 138 includes

a pin RSWIN which is the reset switch input pin.

Turning next to connector 154, as previously mentioned, the system 50

includes an expansion capability for adding another controller 56. Data

from such a controller would be transmitted via the EXTJOY I/O pin of the

connector 154. The three above-mentioned security related signals are

coupled between the game cartridge security processor and peripheral

interface processor at the pins labeled SECTRD, SECTRC and SECCLK.

The cartridge connector additionally couples a cold reset signal CRESET to

the game cartridge security processor to enable a power on reset function.

Additionally, if during processor authentication checking the peripheral

interface processor does not, for example, receive data which

matches what is expected, the cartridge processor may be placed in a reset

state via the CRESET control pin.

The NMI input is a control pin for coupling an NMI interrupt signal to the

cartridge. The control line CARTINT is provided to permit an interrupt

signal to be generated from the cartridge to CPU 100, for example, when

devices coupled to the cartridge require service by CPU 100. By way

of example only, a bulk storage device such as a CD ROM is one possible

device requiring CPU interrupt service.

As shown in FIG. 3B, the system bus is coupled to the cartridge connector

154 to permit accessing of program instructions and data from the game

cartridge ROM and/or bulk storage devices such as CD ROM, etc. In contrast

with prior video game systems such as the Nintendo NES and SNES, address

and data signals are not separately coupled on different buses to the game

cartridge but rather are multiplexed on an address/data 16 bit wide bus.

Read and write control signals and address latch enable high and low

signals, ALEH and ALEL, respectively, are also coupled to the game

cartridge. The state of the ALEH and ALEL signals defines the significance

of the information transmitted on the 16 bit bus. The read signal RD is a

read strobe signal enabling data to be read from the mask ROM or RAM in

the game cartridge. The write signal WR is a strobe signal enabling the

writing of data from the coprocessor 200 to the cartridge static RAM or

bulk media device.

FIG. 3C is a block diagram which demonstrates in detail how the

address/data 16 bit bus is utilized to read information from a game

cartridge ROM and read and write information from a game cartridge RAM.

Coprocessor 200 generates an address latch enable high signal which is

input to the ALEH pin in FIG. 3C. Exemplary timing signals for the reading

and writing of information are shown in FIGS. 13 and 14 respectively of

the incorporated '288 patent application. The coprocessor 200 similarly

generates an address latch enable low signal which is coupled to the ALEL

pin which, in turn, enables information on address pins 0 to 15 to be

loaded into the input buffer 352. Bits 7 and 8 and 11 and 12 from input

buffer 352 are, in turn, coupled to address decoder 356. In the exemplary

embodiment of the present invention, bits 7, 8 and 11, 12 are decoded by

the address decoder to ensure that they correspond to 4 bits indicative of

the proper location in the address space for the mask ROM 368. Thus, the

mask ROM 368 has a designated location in the AD16 bus memory map and

decoder 356 ensures that the mask ROM addressing signals correspond to the

proper mask ROM location in this memory map. Upon detecting such

correspondence, decoder 356 outputs a signal to one-bit chip select

register 360. When ALEH transitions from high to low, as shown in FIG. 3C,

bits 0 to 6 output from input buffer 352 are latched into 7 bit address

register 362. Simultaneously, data from address decoder 356 is latched

into chip select register 360 and register 358 is also enabled, as

indicated in FIG. 3C.

When the coprocessor 200 outputs low order address bits on the AD16 bus,

the address signals are input to input buffer 352. The bits are coupled in

multiple directions. As indicated in FIG. 3C, bits 1 to 8 are set in an 8

bit address presettable counter 366 and bits 9 to 15 are coupled to 7 bit

address register 364. At a time controlled by ALEL, when registers 358 and

360 are set and registers 362, 364 and 366 are loaded, the read out of

data is ready to be initiated. A time TL is required for data to be output

after the ALEL signal transitions from high to low. After the ALEL signal

has been generated, a read pulse RD is applied on the pin shown in the top

lefthand portion of FIG. 3C. The read signal is input to gate 374 whose

other input is coupled to gate 372. When the outputs of registers 358 and 360

and signals ALEL and ALEH are low, the output of gate 372 will be low.

When RD and the output of 372 are low, the clock signal is generated at

the output of 374 thereby causing the counter 366 to be clocked and begin

counting and the output buffer 354 to be enabled. The 8 bit address

presettable counter determines the memory cell array column selected and

the combination of the output of address register 362 and address register

364 defines the memory cell row selected. The output data is temporarily

stored in latch 370 and then coupled to output buffer 354. Thereafter, the

data is transmitted back to coprocessor 200 via the same AD 0 to 15

lines.
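
The two-phase latching of FIG. 3C can be modeled as a minimal sketch. The chip-select pattern `ROM_SELECT`, the order in which registers 362 and 364 are concatenated to form the row, and the dictionary standing in for the memory cell array are assumptions for illustration. The bit fields themselves (bits 7, 8, 11 and 12 decoded; bits 0 to 6 into register 362; bits 1 to 8 into counter 366; bits 9 to 15 into register 364) follow the text.

```python
ROM_SELECT = 0b1010  # hypothetical value of bits 12, 11, 8, 7 that selects the mask ROM

def bits(word, lo, hi):
    """Extract bits lo..hi (inclusive) of a 16-bit word."""
    return (word >> lo) & ((1 << (hi - lo + 1)) - 1)

class MaskRomPort:
    def __init__(self, cells):
        self.cells = cells       # (row, column) -> 16-bit data word
        self.selected = False    # chip select register 360
        self.reg362 = self.reg364 = self.counter = 0

    def ale_high(self, word):
        """ALEH falls: decode chip select and latch bits 0-6 into register 362."""
        chip_bits = (bits(word, 11, 12) << 2) | bits(word, 7, 8)
        self.selected = chip_bits == ROM_SELECT
        self.reg362 = bits(word, 0, 6)

    def ale_low(self, word):
        """ALEL falls: load column counter 366 (bits 1-8) and register 364 (bits 9-15)."""
        self.counter = bits(word, 1, 8)
        self.reg364 = bits(word, 9, 15)

    def read_pulse(self):
        """One RD strobe: output the addressed word, then advance the column counter."""
        if not self.selected:
            return None          # output buffer 354 stays disabled
        row = (self.reg362 << 7) | self.reg364
        data = self.cells.get((row, self.counter), 0)
        self.counter = (self.counter + 1) & 0xFF
        return data

port = MaskRomPort({(386, 5): 0xBEEF, (386, 6): 0x1234})
port.ale_high(0x1103)   # bits 12 and 8 set: chip selected; bits 0-6 = 3
port.ale_low(0x040A)    # bits 1-8 = 5 (column), bits 9-15 = 2
first, second = port.read_pulse(), port.read_pulse()
```

Because the column counter advances on each RD pulse, consecutive words can be read after a single pair of address phases, which is what makes the shared 16-bit bus practical.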

By virtue of using the multiplexed AD 0 to 15 bus, the game cartridge pin

out is advantageously reduced. The circuitry of FIG. 3C, although designed

for accessing a mask ROM, is readily adaptable for writing information

into, for example, static RAM. In a static RAM embodiment, the processing

of the ALEH and ALEL signals is the same as previously described, as is

the loading of information in the registers 358, 360, 362, 364 and 366. A

write signal is generated and coupled to gate 374 instead of the read

signal shown in FIG. 3C. Data is output from coprocessor 200 for writing

into a static RAM memory 368. The data is loaded into buffer 352. A clock

pulse is generated at the output of gate 374 to cause the address

presettable counter to begin counting to cause data to be written into

memory 368 rather than read out as previously described. The multiplexed

use of the 16 bit address/data bus is described in further detail in

conjunction with FIGS. 12-14 of the incorporated '288 patent application.

Sound may be output from the cartridge and/or through connector 154 to the

audio mixer 142 channel 1 and channel 2 inputs, CH1EXT and CH2EXT,

respectively. The external sound inputs from SOUNDL and SOUNDR will be

mixed with the sound output from the coprocessor via the audio DAC 140 and

the CH1IN, CH2IN inputs to thereafter output the combined sound signal via

the audio mixer outputs CH1OUT, CH2OUT which are, in turn, coupled to the

AUDIOL and AUDIOR inputs of the audio video output connector 149 and

thereafter coupled to the TV speakers 62a,b.

The connector 154 also receives a composite sync signal CSYNC which is the

output of video DAC 144 which is likewise coupled to the audio video

output connector 149. The composite sync signal CSYNC, as previously

described, is utilized as a synchronization signal for use in

synchronizing, for example, a light pen or photogun.

The cartridge connector also includes pins for receiving power supply and

ground signals as shown in FIG. 3B. The +3.3 volt supply drives, for example,

the 16 bit AD bus as well as other cartridge devices. The 12 volt power

supply connection is utilized for driving bulk media devices.

Turning to coprocessor 200 in FIG. 3A, many of the signals received or

transmitted by coprocessor 200 have already been described, and that description

will not be repeated here. The coprocessor 200 outputs an audio signal indicating

whether audio data is for the left or right channel, i.e., AUDLRCLK.

Serial audio data is output on an AUDDATA pin. Timing for the serially

transmitted data is provided at the AUDCLK pin. Coprocessor 200 outputs

seven video signals, SRGB0 through SRGB7; these synchronized RGB digital

signals are coupled to video DAC 144 for conversion to analog. Coprocessor

200 generates a timing signal SYNC that controls the timing for the SRGB

data which is coupled to the TSYNC input of video DAC 144. Coprocessor 200

receives a video clock input from clock generator 136 via the VCLK input

pin for controlling the SRGB signal timing. The coprocessor 200 and CPU

100 use a PVALID signal to indicate that the processor 100 is driving a

valid command or data identifier or valid address/data on the system bus

and an EVALID signal to indicate that the coprocessor 200 is driving a

valid command or data identifier or valid address/data on the system bus.

Coprocessor 200 supplies CPU 100 with master clock pulses for timing

operations within the CPU 100. Coprocessor 200 and CPU 100 additionally

use an EOK signal for indicating that the coprocessor 200 is capable of

accepting a processor 100 command.

Turning to main memory RDRAM 300, 302, as depicted in FIG. 3A, two RDRAM

chips 300a and 300b are shown with an expansion RDRAM module 302. As

previously described, the main memory RDRAM may be expanded by plugging in

a memory module into a memory expansion port in the video console housing.

Each RDRAM module 300a,b, 302 is coupled to coprocessor 200 in the same

manner. Upon power-up RDRAM 1 (300a) is first initialized, then RDRAM2

(300b) and RDRAM3 (302) are initialized. RDRAM 1 is recognized by

coprocessor 200 since its SIN input is tied to VDD, as shown in FIG. 3A.

When RDRAM1 is initialized under software control, its SOUT will be at a high

level. This SOUT high level signal is coupled to SIN of RDRAM2 (300b),

enabling RDRAM2 to be initialized. The SOUT of RDRAM2 then goes high, which

in turn enables initialization of RDRAM3 (302) (if present in the system).
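
The serial-in/serial-out arrangement amounts to a daisy chain. The minimal sketch below models it with hypothetical `Rdram` objects whose `sin`/`sout` flags stand in for the SIN and SOUT pins; it shows only the initialization order, not the register writes software actually performs.

```python
class Rdram:
    def __init__(self, name):
        self.name = name
        self.sin = False
        self.sout = False
        self.initialized = False

    def initialize(self):
        """Software initializes this module; its SOUT then goes high."""
        if not self.sin:
            raise RuntimeError(f"{self.name}: SIN is low, module not yet reachable")
        self.initialized = True
        self.sout = True

def init_chain(modules):
    """Initialize modules in order and return their names in that order.

    SIN of the first module is tied to VDD; each module's SOUT drives
    the SIN of the next module in the chain.
    """
    order = []
    if modules:
        modules[0].sin = True              # tied to VDD
    for i, module in enumerate(modules):
        module.initialize()
        order.append(module.name)
        if i + 1 < len(modules):
            modules[i + 1].sin = module.sout
    return order

chain = [Rdram("RDRAM1"), Rdram("RDRAM2")]  # expansion module 302 not fitted
order = init_chain(chain)
```

Because RDRAM3 (302) simply receives RDRAM2's SOUT, the same loop covers consoles with or without the expansion module plugged in.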

Each of the RDRAM modules receives bus control and bus enable signals from

coprocessor 200. The coprocessor 200 outputs a TXCLK signal when data is

to be output to one of RDRAM1 through 3 and a clock signal RXCLK is output

when data is to be read out from one of the RDRAM banks. The serial in

(SIN) and serial out (SOUT) pins are used during initialization, as

previously described. RDRAM receives clock signals from the clock

generator 136 output pin FSO.

Clock generator 136 is a three frequency clock signal generator. By way of

example, the oscillator within clock generator 136 may be a phase-locked

loop based oscillator which generates an FSO signal of

approximately 250 MHz. The oscillator also outputs a divided version of

the FSO signal, e.g., FSO/5, which may be at approximately 50 MHz and is

used for timing operations involving the coprocessor 200 and video DAC

144, as is indicated in FIGS. 3A and 3B. The FSC signal is utilized for

timing the video encoder carrier signal. Clock generator 136 also includes

a frequency select input by which frequencies may be selected depending

upon whether an NTSC or PAL version of the described exemplary embodiment

is used. Although the FSEL select signal is contemplated to be utilized

for configuring the oscillator for NTSC or PAL use, as shown in FIG. 3A,

the input resets the oscillator under power-on reset conditions. When

connected to the power on reset, the oscillator reset is released when a

predetermined threshold voltage is reached.
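
As a worked check of the approximate figures quoted above, the divided clock and its period follow directly from the FSO frequency:

```latex
f_{\mathrm{FSO}/5} = \frac{f_{\mathrm{FSO}}}{5} \approx \frac{250\ \mathrm{MHz}}{5} = 50\ \mathrm{MHz},
\qquad
T_{\mathrm{FSO}/5} = \frac{1}{f_{\mathrm{FSO}/5}} \approx \frac{1}{50\ \mathrm{MHz}} = 20\ \mathrm{ns}.
```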

FIG. 5, which is shown in further detail in the incorporated '288

application, is a block diagram of peripheral interface 138 shown in FIG.

2. Peripheral interface 138 is utilized for I/O processing, e.g.,

controlling the game controller 56 input/output processing, and for

performing game authenticating security checks continuously during game

play. Additionally, peripheral interface 138 is utilized during the game

cartridge/coprocessor 200 communication protocol using instructions stored

in boot ROM 262 to enable initial game play. Peripheral interface 138

includes CPU 250, which may, for example, be a 4 bit microprocessor of the

type manufactured by Sharp Corporation. CPU 250 executes its security

program out of program ROM 252. As previously described, the peripheral

interface processor 250 communicates with the security processor 152

embodied on the game cartridge utilizing the SECTRC, SECTRD and SECCLK

signals. Peripheral interface port 254 includes two 1 bit registers for

temporarily storing the SECTRC and SECTRD signals.

Overall system security for authenticating game software is controlled by

the interaction of main processor 100, peripheral interface processor 250,

boot ROM 262 and cartridge security processor 152. Boot ROM 262 stores a

set of instructions executed by processor 100 shortly after power is

turned on (and, if desired, upon the depression of reset switch 90). The

boot ROM program includes instructions for initializing the CPU 100 and

coprocessor 200 via a set of initial program loading instructions (IPL).

Authentication calculations are thereafter performed by the main processor

100 and the result is returned to the CPU 250 in peripheral interface 138

for verification. If the result is verified, the game program is

transferred to the RDRAM, after it has been initialized, and a further

authentication check is made. Upon verification of an authentic game

program, control jumps to the game program in RDRAM for execution.

Authentication calculations continue throughout game play, performed by the

authenticating processor in the peripheral interface 138 and by security

processor 152 such as is described, for example, in U.S. Pat. No.

4,799,635 and related U.S. Pat. No. 5,426,762, which patents are

incorporated by reference herein.
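
The boot-time portion of this sequence can be summarized as a minimal sketch. CRC-32 from Python's `zlib` is used purely as a stand-in for the undisclosed authentication calculation, the single `expected` value simplifies the exchange between CPU 100 and CPU 250, and the continuous checking during game play is not modeled. The `checksum` and `boot` functions are hypothetical.

```python
import zlib

def checksum(data: bytes) -> int:
    """Stand-in authentication calculation (CRC-32); not the real algorithm."""
    return zlib.crc32(data)

def boot(cart_program: bytes, expected: int, transfer=bytes) -> list:
    """Follow the boot ROM flow and return the list of steps taken.

    `transfer` models copying the program into RDRAM; passing a function
    that alters the bytes simulates a faulty copy.
    """
    steps = ["IPL: initialize CPU 100 and coprocessor 200"]
    # First check: the main processor computes, CPU 250 verifies.
    if checksum(cart_program) != expected:
        steps.append("halt: verification by CPU 250 failed")
        return steps
    steps.append("initialize RDRAM and transfer game program")
    rdram_copy = transfer(cart_program)
    # Further check on the program now resident in RDRAM.
    if checksum(rdram_copy) != expected:
        steps.append("halt: check of RDRAM copy failed")
        return steps
    steps.append("jump to game program in RDRAM")
    return steps
```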

Turning back to FIG. 5, a PCHCLK clock signal having a frequency of, for

example, approximately 15 MHz is input to clock generator 256 which, in

turn, supplies an approximately 1 MHz clocking signal to CPU 250 and an

approximately 1 MHz clock signal along the line SECCLK for transmission to

the game cartridge security processor 152. PIF channel interface 260

responds to PCHCLK and PCHCMD control signals to permit access of the boot

ROM 262 and RAM 264 and to generate signals for controlling the

interruption of CPU 250, when appropriate.

The PCHCLK signal is the basic clock signal which may, for example, be a

15.2 MHz signal utilized for clocking communication operations between the

coprocessor 200 and the peripheral interface 138. The PCHCMD command is

utilized for reading and writing from and to RAM 264 and for reading from

boot ROM 262. The peripheral interface 138 in turn provides a PCHRSP

response which includes both accessed data and an acknowledgment signal.

In the present exemplary embodiment, four commands are contemplated

including a read 4 bytes from memory command for reading from RAM 264 and

boot ROM 262, a write 4 bytes to memory command for writing to RAM 264, a PIF

macro command for reading 64 bytes from buffer RAM 264 and accessing

control/data from the joystick based player controller (also referred to

as the JoyChannel). The CPU 250 is triggered to send or receive JoyChannel

data by the PIF macro instruction. The main processor 100 may thus

generate a PIF macro command which will initiate I/O processing operations

by CPU 250 to lessen the processing burden on main processor 100. The main

processor 100 may also issue a write 64 byte buffer command which writes

64 bytes into RAM 264.
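
A minimal sketch of how three of these commands act on the peripheral interface's memory follows. It assumes the boot ROM contents are passed in as bytes, models only the 64-byte buffer portion of RAM 264, and assumes 4-byte accesses must be word aligned. The class and method names are hypothetical, and the PIF macro command is not modeled here.

```python
class PeripheralInterface:
    """Hypothetical model of boot ROM 262 and the 64-byte buffer in RAM 264."""
    RAM_SIZE = 64

    def __init__(self, boot_rom: bytes):
        self.boot_rom = bytes(boot_rom)
        self.ram = bytearray(self.RAM_SIZE)

    def read4(self, space: str, offset: int) -> bytes:
        """Read 4 bytes from the boot ROM ("rom") or from RAM ("ram")."""
        src = self.boot_rom if space == "rom" else self.ram
        if offset % 4 or offset + 4 > len(src):
            raise ValueError("unaligned or out-of-range 4-byte read")
        return bytes(src[offset:offset + 4])

    def write4(self, offset: int, data: bytes) -> None:
        """Write 4 bytes to RAM; the boot ROM is read-only."""
        if len(data) != 4 or offset % 4 or offset + 4 > self.RAM_SIZE:
            raise ValueError("bad 4-byte write")
        self.ram[offset:offset + 4] = data

    def write64(self, data: bytes) -> None:
        """Write 64 byte buffer command: fill the whole buffer at once."""
        if len(data) != self.RAM_SIZE:
            raise ValueError("expected exactly 64 bytes")
        self.ram[:] = data
```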

Turning back to FIG. 5, peripheral interface 138 also includes a bus

arbitrator 258 which allocates access to RAM 264 between CPU 250 and PIF

channel interface 260. RAM 264 operates as a working RAM for CPU 250 and

stores cartridge authenticating related calculations. RAM 264 additionally

stores status data such as, for example, indicating whether the reset

switch has been depressed. RAM 264 also stores controller related

information in, for example, a 64 byte buffer within RAM 264. Exemplary

command formats for reading and writing from and to the 64 byte buffer are

shown in the incorporated '288 application.

Both the buffer RAM 264 and the boot ROM 262 are in the address space of

main processor 100. The CPU 250 of the peripheral interface 138 also can

access buffer RAM 264 in its address space. Memory protection techniques

are utilized in order to prevent inappropriate access to portions of RAM

264 which are used for authenticating calculations.

Reset and interrupt related signals, such as CLDRES, CLDCAP and RESIC, are

generated and/or processed as explained in the incorporated '288

application. The signal RSWIN is coupled to port 268 upon the depression

of reset switch 90 and the NMI and the pre-NMI warning signal, INT2, are

generated as described in the incorporated '288 application.

Port 268 includes a reset control register storing bits indicating whether

an NMI or INT2 signal is to be generated. A third bit in the reset control

register indicates whether the reset switch 90 has been depressed.

As mentioned previously, peripheral interface 138, in addition to its other

functions, serves to provide input/output processing for two or more

player controllers. As shown in FIG. 1, an exemplary embodiment of the

present invention includes four sockets 80a-d to accept up to four

peripheral devices. Additionally, the present invention provides for

including one or more additional peripheral devices. See connector 154 and

pin EXTJOY I/O. The 64 bit main processor 100 does not directly control

peripheral devices such as joystick or cross-switch based controllers.

Instead, main processor 100 controls the player controllers indirectly by

sending commands via coprocessor 200 to peripheral interface 138 which

handles I/O processing for the main processor 100. As shown in FIG. 5,

peripheral interface 138 also receives inputs from, for example, five

player controller channels via channel selector 280, demodulator 278,

joystick channel controller 272 and port 266. Joystick channel data may be

transmitted to peripheral devices via port registers 266 to joystick

channel controller 272, modulator 274 and channel select 276. With respect

to JoyChannel communication protocol, there is a command protocol and a

response protocol. After a command frame, there is a completion signal

generated. A response frame always comes after a command frame. In a

response frame, there is a completion signal generated after the response

is complete. Data is also sent from the peripheral interface 138 to the

JoyChannel controllers. The CPU 250 of the peripheral interface controls

such communications.
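
The rule that every command frame is followed by a response frame, each closed by a completion signal, can be sketched as follows. The `"STOP"` marker standing in for the completion signal, the command byte `0x01` and the 4-byte controller reply layout are assumptions for illustration, not formats taken from the patent.

```python
COMPLETE = "STOP"   # stands in for the completion signal that closes each frame

def frame(payload: bytes) -> list:
    """A frame is its payload bytes followed by a completion signal."""
    return list(payload) + [COMPLETE]

class Controller:
    """Hypothetical device: command 0x01 returns 2 button bytes plus stick X and Y."""

    def __init__(self, buttons=0, x=0, y=0):
        self.buttons, self.x, self.y = buttons, x, y

    def respond(self, command: bytes) -> bytes:
        if command == b"\x01":
            return (self.buttons.to_bytes(2, "big")
                    + bytes([self.x & 0xFF, self.y & 0xFF]))
        return b""  # unknown command: the response frame is empty

def transaction(device, command: bytes):
    """Send a command frame; a response frame always follows it."""
    cmd_frame = frame(command)
    received = bytes(cmd_frame[:-1])  # the device acts once completion is seen
    rsp_frame = frame(device.respond(received))
    return cmd_frame, rsp_frame
```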

Each channel coupled to a player controller is a serial bidirectional bus which

may be selected via the channel selector 276 to couple information to one

of the peripheral devices under the control of the four bit CPU 250. If

the main processor 100 wants to read or write data from or to player

controllers or other peripheral devices, it has to access RAM 264. There

are several modes for accessing RAM 264. The 64 bit CPU 100 may execute a

32 bit word read or write instruction from or to the peripheral interface

RAM 264. Alternatively, the CPU may execute a write 64 byte DMA

instruction. This instruction is performed by first writing a DMA starting

address into the main RAM address register. Thereafter, a buffer RAM 264

address code is written into a predetermined register to trigger a 64 byte

DMA write operation to transfer data from the main RAM address held in the

main RAM address register to a fixed destination address in RAM 264.

A PIF macro also may be executed. A PIF macro involves an exchange of data

between the peripheral interface RAM 264 and the peripheral devices and

the reading of 64 bytes by DMA. By using the PIF macro, any peripheral

device's status may be determined. The macro is initiated by first setting

the peripheral interface 138 to assign each peripheral device by using a

write 64 byte DMA operation or a write 4 byte operation (which could be

skipped if done before and no change in assignment is needed). Thereafter,

the DMA destination address is written into the main RAM address register

and a predetermined RAM 264 address code is written in a PIF macro

register located in the coprocessor which triggers the PIF macro. The PIF

macro involves two phases. First, the peripheral interface 138 starts

a reading or writing transaction with each peripheral device in its

assigned mode, which results in updated information being stored in the

peripheral interface RAM 264. Thereafter, a read 64 byte DMA operation is

performed for transferring 64 bytes from the fixed DMA starting address of

the RAM 264 to the DMA destination address within main RAM 300 that was

programmed into the main RAM address register.
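
The write-64-byte DMA and the two phases of the PIF macro can be modeled at the register level as a minimal sketch. The `Coprocessor` class, its register-write methods, the representation of peripherals as callables and the fixed 8 bytes per channel in the buffer are all hypothetical simplifications; the address codes the real registers expect are not modeled.

```python
class Coprocessor:
    """Hypothetical model of the DMA paths between main RAM and buffer RAM 264."""
    BUF = 64   # size of the peripheral interface buffer
    SLOT = 8   # hypothetical bytes reserved per peripheral channel

    def __init__(self, main_ram_size=1024):
        self.main_ram = bytearray(main_ram_size)
        self.main_ram_addr = 0              # the main RAM address register
        self.pif_ram = bytearray(self.BUF)  # stands in for buffer RAM 264
        self.devices = []                   # callables: channel number -> reply bytes

    def _check(self, addr):
        if not 0 <= addr <= len(self.main_ram) - self.BUF:
            raise ValueError("DMA would run past the end of main RAM")

    def write_buffer_register(self):
        """Trigger a 64-byte DMA write from main RAM into buffer RAM 264."""
        src = self.main_ram_addr
        self._check(src)
        self.pif_ram[:] = self.main_ram[src:src + self.BUF]

    def write_pif_macro_register(self):
        """Trigger the PIF macro: device exchange, then 64-byte DMA read."""
        dst = self.main_ram_addr
        self._check(dst)
        # Phase 1: one transaction per assigned device updates the buffer.
        for ch, device in enumerate(self.devices[: self.BUF // self.SLOT]):
            reply = device(ch)[: self.SLOT]
            start = ch * self.SLOT
            self.pif_ram[start:start + len(reply)] = reply
        # Phase 2: copy the whole buffer to the programmed destination.
        self.main_ram[dst:dst + self.BUF] = self.pif_ram

cop = Coprocessor()
cop.main_ram[0:4] = b"\x01\x02\x03\x04"      # device assignment data
cop.main_ram_addr = 0                         # DMA source address
cop.write_buffer_register()                   # write 64 byte DMA
cop.devices = [lambda ch: bytes([0xA0 + ch, 0x55])]
cop.main_ram_addr = 256                       # DMA destination address
cop.write_pif_macro_register()                # PIF macro
```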

There are six JoyChannels available in the present exemplary embodiment.

Each channel's transmit data and receive data byte sizes are all

independently assignable by setting size parameters. The functions of the

various port 266 registers are described in the incorporated '288

application.
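
Because each channel's transmit and receive sizes are independent, a shared buffer can be packed channel by channel. The layout used in this minimal sketch (a transmit-size byte, a receive-size byte, the transmit bytes, then space for the reply) is an assumption for illustration; the actual register formats are deferred to the incorporated '288 application. `build_buffer` and `rx_offsets` are hypothetical helpers.

```python
def build_buffer(channels, size=64):
    """Pack per-channel assignments into one buffer.

    `channels` is a list of (tx_bytes, rx_size) pairs. Hypothetical layout per
    channel: tx size, rx size, the tx bytes, then rx_size zero bytes of space.
    """
    buf = bytearray()
    for tx, rx_size in channels:
        buf += bytes([len(tx), rx_size]) + tx + bytes(rx_size)
    if len(buf) > size:
        raise ValueError("assignments do not fit in the buffer")
    return bytes(buf) + bytes(size - len(buf))

def rx_offsets(channels):
    """Offset at which each channel's received bytes will be stored."""
    offsets, pos = [], 0
    for tx, rx_size in channels:
        offsets.append(pos + 2 + len(tx))
        pos += 2 + len(tx) + rx_size
    return offsets
```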

The peripheral device channel is designed to accept various types of future

peripheral devices. The present exemplary embodiment uses an extensible

command which is to be interpreted by peripherals including future

devices. Commands are provided for reading and writing data to and from a memory card.

Backup data for a game may be stored on a memory card. In this fashion, no

backup battery need be used for this memory during game play since it

plugs into the controller. Certain of these commands contemplate an

expansion memory card module 50 that plugs into a player controller 56 as

is shown in FIG. 9. For further details relating to exemplary controllers

that may be used in system 50 and the communications protocol between such

controller and the peripheral interface 138 (and processing associated

therewith) reference is made to the incorporated '019 application and

Japanese patent application number H07-288006 (no. 00534) filed Oct. 9,

1995 naming Nishiumi et al. as inventors, which application is incorporated

herein by reference.

FIGS. 6 and 7 are external oblique-view drawings of a controller 56. The

top housing of the controller 56 comprises an operation area in which a

joystick 45 and buttons 403, 404A-F and 405 are situated adjacent to three grips

402L, 402C and 402R. The bottom housing of the controller 56 comprises an

operation area in which a button 407 is situated, 3 grips 402L, 402C and

402R and an expansion device mount 409. In addition, buttons 406L and 406R

are situated at the boundary between the top housing and bottom housing of

the controller 56. Furthermore, an electrical circuit, to be described

below, is mounted inside the top and bottom housings. The electrical

circuit is electrically connected to the video processing device 10 by

cable 42 and connection jack 41. Button 403 may, for example, be a cross

switch-type directional switch consisting of an up button, down button,

left button and right button, which may be used to control the displayed

position of a displayed moving object such as the well known Mario

character. Buttons 404 consist of button 404A (referred to herein as the

"A" button), button 404B (referred to as the "B" button), button 404C,

button 404D, button 404E and button 404F (referred to collectively herein

as the "C" buttons), and can be used, for example, in a video game to fire

missiles or for a multitude of other functions depending upon the game

program. As described herein in the presently preferred illustrative

embodiment, the four arrowhead-labeled (up, down, left, and right) "C"

buttons permit a player to modify the displayed "camera" or point of view

perspective in the three-dimensional world. Button 405 is a start button

and is used primarily when starting a desired program. As shown in FIG. 7,

button 406L is situated so that it can be easily operated by the index

finger of the left hand, and button 406R is situated so that it can be

easily operated by the index finger of the right hand. Button 407 is

situated on the bottom housing so that it cannot be seen by the operator.

In addition, grip 402L is formed so that it is grasped by the left hand

and grip 402R is formed so that it is grasped by the right hand. Grip 402C

is situated for use when grip 402L and/or grip 402R are not in use. The

expansion device mount 409 is a cavity for connecting an expansion device

to the joy port connector 446 shown in FIG. 9.
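
The camera role of the four "C" buttons can be sketched following the abstract's description (move in for a close-up, pull back for a wide view, pan right and left). Which arrow performs which action, the step sizes, the distance limits and the `Camera`/`on_c_button` names are hypothetical choices for illustration.

```python
import math
from dataclasses import dataclass

@dataclass
class Camera:
    distance: float = 10.0   # distance from the player-controlled character
    yaw: float = 0.0         # angle around the character, in radians

ZOOM_STEP = 2.0              # hypothetical step sizes and limits
PAN_STEP = math.radians(45)
MIN_DIST, MAX_DIST = 4.0, 16.0

def on_c_button(cam: Camera, button: str) -> Camera:
    """Apply one C-button press to the camera (mutates and returns it)."""
    if button == "up":        # move in for a close-up
        cam.distance = max(MIN_DIST, cam.distance - ZOOM_STEP)
    elif button == "down":    # pull back for a wide view
        cam.distance = min(MAX_DIST, cam.distance + ZOOM_STEP)
    elif button == "left":    # pan left around the character
        cam.yaw = (cam.yaw - PAN_STEP) % (2 * math.pi)
    elif button == "right":   # pan right around the character
        cam.yaw = (cam.yaw + PAN_STEP) % (2 * math.pi)
    return cam

def camera_position(cam: Camera, target=(0.0, 0.0, 0.0)):
    """Place the camera on a circle around the target at the current yaw."""
    tx, ty, tz = target
    return (tx + cam.distance * math.sin(cam.yaw), ty,
            tz + cam.distance * math.cos(cam.yaw))
```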

The internal construction of an exemplary embodiment of the controller 56

joystick 45 is shown in FIG. 8. Reference is also made to incorporated

application Ser. No. 08/765,474, for its detailed disclosure of an

exemplary joystick construction for use as a character control member.

See, for example, FIGS. 11-15 and 31-41 therein and the disclosure related

thereto. Turning back to FIG. 8, the tip of the operation member 451

protruding from the housing is formed into a disk which is easily

manipulated by placing one's finger on it. The part below the disk of the

operation member 451 is rod-shaped and stands vertically when it is not

being manipulated. In addition, a support point 452 is situated on the

operation member 451. This support point 452 securely supports the

operation member on the controller 56 housing so that it can be tilted in

all directions relative to a plane. An X-axis linkage member 455 rotates

about an X shaft 456 as the operation member 451 is tilted in the

X-direction. The X shaft 456 is axially supported by a

bearing (not shown). A Y-axis linkage member 465 rotates about a

Y shaft 466 as the operation member 451 is tilted in the

Y-direction. The Y shaft 466 is axially supported by a bearing (not

shown). Additionally, force is exerted on the operation member 451 by a

return member, such as a spring (not shown), so that it normally stands

upright. Now, the operation member 451, support 452, X-axis linkage member

455, X shaft 456, Y-axis linkage member 465 and Y shaft 466 are also

described in Japan Utility Patent Early Disclosure (Kokai) No. HEI

2-68404.

A disk member 457 is attached to the X shaft 456 and rotates with it. The disk member 457 has several slits 458 around its perimeter at a constant distance from the center. These slits 458 are holes that penetrate the disk member 457 and allow light to pass through. A photo-interrupter 459 is mounted to the controller 56 housing around a portion of the edge of the disk member 457; the photo-interrupter 459 detects the slits 458 and outputs a detection signal, which enables the rotated condition of the disk member 457 to be detected. A description of the Y shaft 466, disk member 467 and slits 468 is omitted, since they are the same as the X shaft 456, disk member 457 and slits 458 described above. The technique of detecting the rotation of the disk members 457 and 467 using light, described above, is disclosed in detail in Japan Patent Application Publication No. HEI 6-114683, filed by applicants' assignee in this matter, which is incorporated herein by reference.
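
To make the slit-disk sensing concrete, the following Python sketch counts slits as they pass a single photo-interrupter and converts the count into disk rotation. It assumes a sensor output of 1 when light passes through a slit and 0 when the disk blocks it, and a hypothetical slit count (NUM_SLITS); the description specifies neither. A single channel like this measures how far the disk has turned but not which way.

```python
# Sketch: sensing rotation of a slit disk with one photo-interrupter.
# Assumptions (not from the description): the sensor reads 1 when light
# passes through a slit and 0 when the disk blocks it, and the disk has
# NUM_SLITS evenly spaced slits.

NUM_SLITS = 32  # hypothetical slit count


def count_slit_edges(samples: list[int]) -> int:
    """Count 0 -> 1 transitions, i.e. slits entering the light path."""
    edges = 0
    for previous, current in zip(samples, samples[1:]):
        if previous == 0 and current == 1:
            edges += 1
    return edges


def rotation_degrees(edges: int, num_slits: int = NUM_SLITS) -> float:
    """Each slit-to-slit step corresponds to 360 / num_slits degrees."""
    return edges * 360.0 / num_slits


samples = [0, 1, 0, 1, 0, 1, 1, 0]
edges = count_slit_edges(samples)
print(edges, rotation_degrees(edges))  # 3 33.75
```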

In this exemplary implementation, disk member 457 is mounted directly on the X-axis linkage member 455, but a gear could instead be attached to the X shaft 456 and the disk member 457 rotated by this gear. In that case, by setting the gear ratio so that rotation of the disk member 457 is greater than rotation of the X shaft 456, a slight tilt of the operation member 451 by the operator would cause the disk member 457 to rotate substantially. This would make possible more accurate detection of the tilted condition of the operation member 451, since more of the slits 458 would pass the photo-interrupter for a given tilt. For further details of the controller 56 joystick linkage elements, slit disks, optical sensors and other elements, reference is made to Japanese Application No. H7-317230, filed Nov. 10, 1995, which is incorporated herein by reference.
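
The benefit of the gear arrangement can be shown with a little arithmetic: if the disk turns g times as far as the X shaft and carries N slits, a shaft rotation of θ degrees passes about θ·g·N/360 slits. The Python sketch below assumes, for illustration only, that shaft rotation equals the tilt angle of operation member 451, and it uses hypothetical slit counts and gear ratios.

```python
# Sketch: how a step-up gear between X shaft 456 and disk member 457
# increases the number of slits detected for a small tilt.
# Assumption: X-shaft rotation equals the tilt angle of operation member 451.


def slits_detected(tilt_degrees: float, num_slits: int, gear_ratio: float) -> int:
    """Whole slits that pass the photo-interrupter for a given tilt magnitude.

    gear_ratio is disk rotation divided by shaft rotation; 1.0 models the
    disk mounted directly on the linkage.
    """
    disk_degrees = abs(tilt_degrees) * gear_ratio
    return int(disk_degrees * num_slits // 360)


def degrees_per_slit(num_slits: int, gear_ratio: float) -> float:
    """Smallest tilt change that moves one more slit past the sensor."""
    return 360.0 / (num_slits * gear_ratio)


for ratio in (1.0, 4.0):
    print(ratio, slits_detected(10.0, 32, ratio), degrees_per_slit(32, ratio))
# 1.0 0 11.25
# 4.0 3 2.8125
```

With these example numbers, a 10-degree tilt registers no whole slit when the disk is mounted directly, but three slits with a 4:1 step-up, and the resolution improves from 11.25 to 2.8125 degrees per slit.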

An exemplary controller 56 is described in conjunction with the FIG. 9 block diagram. Data transmitted from the video processing device 52 is coupled to a conversion circuit 43, which exchanges data with the peripheral interface 138 in the video processing device 52 as a serial signal. The conversion circuit 43 forwards serial data received from the peripheral interface 138 to the receive circuit 441 inside the controller circuit 44. It also receives a serial signal from the send circuit 445 inside the controller circuit 44 and outputs this signal as a serial signal to the peripheral interface 138.

The send circuit 445 converts the parallel signal output from the control circuit 442 into a serial signal and outputs it to the conversion circuit 43. The receive circuit 441 converts the serial signal output from the conversion circuit 43 into a parallel signal and outputs it to the control circuit 442.
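
The conversions performed by send circuit 445 and receive circuit 441 behave like shift registers. This Python sketch assumes 8-bit words shifted most-significant bit first; the actual bit order, framing and line encoding are not given in this description.

```python
# Sketch of the conversions done by send circuit 445 (parallel -> serial)
# and receive circuit 441 (serial -> parallel).
# Assumption: 8-bit words, most significant bit first.


def parallel_to_serial(word: int) -> list[int]:
    """Shift an 8-bit parallel word out as a list of serial bits, MSB first."""
    if not 0 <= word <= 0xFF:
        raise ValueError("expected an 8-bit value")
    return [(word >> shift) & 1 for shift in range(7, -1, -1)]


def serial_to_parallel(bits: list[int]) -> int:
    """Shift 8 serial bits, MSB first, back into one parallel word."""
    if len(bits) != 8:
        raise ValueError("expected exactly 8 bits")
    word = 0
    for bit in bits:
        word = (word << 1) | (bit & 1)
    return word


bits = parallel_to_serial(0xA5)
print(bits)                           # [1, 0, 1, 0, 0, 1, 0, 1]
print(hex(serial_to_parallel(bits)))  # 0xa5
```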

The send circuit 445, receive circuit 441, joy port control circuit 446, switch signal detection circuit 443 and counter 444 are connected to the control circuit 442. A parallel signal from the receive circuit 441 is input to the control circuit 442, whereby it receives the data/command information output from the video processing device 52. The control circuit 442 performs the desired operation based on the received data. The control circuit 442 instructs the switch signal detection circuit 443 to detect switch signals, and receives data from the switch signal detection circuit 443 indicating which buttons have been pressed. The control circuit 442 also instructs the counter 444 to output its data and receives data from the X counter 444X and the Y counter 444Y. The control circuit 442 is connected by an address bus and a data bus to the expansion port control circuit 446. By outputting command data to the port control circuit 446, the control circuit 442 is able to control expansion device 50 and to receive output data from it.
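
The command handling of control circuit 442 can be pictured as a small dispatcher. In the Python sketch below, the command codes and the ControlCircuit class are hypothetical illustrations, not the controller's actual command set (which this description leaves to the incorporated '019 application); the status reply simply packs two switch bytes and the two counter values, following the four-byte layout described later in this section.

```python
from dataclasses import dataclass, field

# Hypothetical command codes, for illustration only.
CMD_READ_STATUS = 0x01
CMD_EXPANSION_READ = 0x02
CMD_EXPANSION_WRITE = 0x03


@dataclass
class ControlCircuit:
    """Toy model of control circuit 442 acting on received commands."""

    switch_bytes: tuple[int, int] = (0, 0)  # as reported by switch signal detection circuit 443
    x_count: int = 0                        # as reported by X counter 444X
    y_count: int = 0                        # as reported by Y counter 444Y
    expansion: bytearray = field(default_factory=lambda: bytearray(256))  # stands in for device 50

    def handle(self, command: int, args: bytes = b"") -> bytes:
        if command == CMD_READ_STATUS:
            # Two switch bytes, then X and Y counts wrapped to 8 bits.
            return bytes([*self.switch_bytes, self.x_count & 0xFF, self.y_count & 0xFF])
        if command == CMD_EXPANSION_READ:
            # args[0] is the expansion address to read.
            return bytes([self.expansion[args[0]]])
        if command == CMD_EXPANSION_WRITE:
            # args[0] is the address, args[1] the byte to store.
            self.expansion[args[0]] = args[1]
            return b""
        raise ValueError(f"unknown command 0x{command:02x}")


circuit = ControlCircuit(switch_bytes=(0x80, 0x00), x_count=-3, y_count=5)
print(circuit.handle(CMD_READ_STATUS).hex())  # 8000fd05
```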

The switch signals from buttons 403-407 are input to the switch signal detection circuit 443. When the switch signal detection circuit 443 detects that a predetermined combination of buttons has been pressed simultaneously, it sends a reset signal to the reset circuit 448. The switch signal detection circuit 443 also outputs the switch signals to the control circuit 442.
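
Detecting that several buttons are held at once reduces to a bitmask test. In this Python sketch the bit assignments are hypothetical; the combination itself (START button 405 with buttons 406L and 406R) is borrowed from the JSRST description later in this section, and whether circuit 443 uses the same combination for its reset signal is an assumption.

```python
# Hypothetical bit positions for a few of buttons 403-407.
START = 1 << 0     # button 405
L_BUTTON = 1 << 1  # button 406L
R_BUTTON = 1 << 2  # button 406R
A_BUTTON = 1 << 3  # button 404A

# Combination borrowed from the JSRST description (405 + 406L + 406R).
RESET_COMBO = START | L_BUTTON | R_BUTTON


def reset_requested(pressed: int, combo: int = RESET_COMBO) -> bool:
    """True only when every button in combo is held at the same time."""
    return (pressed & combo) == combo


print(reset_requested(START | L_BUTTON | R_BUTTON))  # True
print(reset_requested(START | L_BUTTON))             # False
```

In this version, extra buttons held at the same time do not block the reset; requiring an exact match would instead compare `pressed == combo`.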

The counter circuit 444 contains two counters. X counter 444X counts the detection pulse signals output from the X-axis photo-interrupter 459 inside the joystick mechanism 45, which makes it possible to detect how far the operation member 451 is tilted along the X-axis. Y counter 444Y counts the pulse signals output from the Y-axis photo-interrupter 469 inside the joystick mechanism 45, which makes it possible to detect how far the operation member 451 is tilted along the Y-axis. The counter circuit 444 outputs the count values of the X counter 444X and the Y counter 444Y to the control circuit 442 according to instructions from the control circuit 442. Thus, information is generated not only for determining 360° directional movement with respect to a point of origin but also for determining the amount of operation member tilt. As explained below, this information can be advantageously used to control both the direction of an object's movement and, for example, its rate of movement.
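
One way to turn the two count values into both a direction and a rate of movement is to treat them as a vector: atan2 gives the direction, and the vector length, scaled by a full-tilt count, gives the rate. The full-tilt count (max_count) and maximum speed (max_speed) below are hypothetical parameters, and this Python sketch is not presented as the method used by any particular game.

```python
import math


def stick_vector(x_count: int, y_count: int, max_count: int = 80) -> tuple[float, float]:
    """Return (direction in degrees 0-360, tilt fraction 0-1) from counter values.

    max_count is a hypothetical count reached at full tilt.
    """
    tilt = min(math.hypot(x_count, y_count) / max_count, 1.0)
    direction = math.degrees(math.atan2(y_count, x_count)) % 360.0
    return direction, tilt


def character_velocity(x_count: int, y_count: int, max_speed: float = 10.0,
                       max_count: int = 80) -> tuple[float, float]:
    """Move in the stick direction at a rate proportional to the tilt."""
    direction, tilt = stick_vector(x_count, y_count, max_count)
    speed = tilt * max_speed
    angle = math.radians(direction)
    return speed * math.cos(angle), speed * math.sin(angle)


print(tuple(round(v, 6) for v in stick_vector(0, 40)))  # (90.0, 0.5)
```

A half tilt straight along the Y-axis thus yields a 90-degree heading at half the maximum rate, while any tilt beyond max_count is clamped to full speed.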

Buttons 403-407 generate electrical signals when their key tops, which protrude outside the controller 56, are pressed by the user. In the exemplary implementation, the voltage changes from high to low when a key is pressed, and this voltage change is detected by the switch signal detection circuit 443.
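
Because a pressed key pulls its line from high to low, a raw read of the switch lines has to be inverted before a 1 can mean "pressed". This Python sketch assumes the switch levels are sampled together as one integer with one bit per key; that bit layout is hypothetical.

```python
def pressed_mask(levels: int, width: int = 8) -> int:
    """Convert active-low switch levels into a mask where 1 means pressed.

    A released key reads high (1) and a pressed key reads low (0), so the
    levels are inverted and trimmed to `width` bits.
    """
    return ~levels & ((1 << width) - 1)


def is_pressed(levels: int, bit: int) -> bool:
    """True if the key wired to `bit` is currently pulling its line low."""
    return ((levels >> bit) & 1) == 0


print(bin(pressed_mask(0b11110111)))  # 0b1000
```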

In the exemplary embodiment, the data generated by controller 56 consists of the following four bytes, where each data bit is either "0" or "1": B, A, G, START, up, down, left, right (byte 1); JSRST, 0 (not used in the exemplary implementation in this application), L, R, E, D, C, F (byte 2); an X coordinate (byte 3); and a Y coordinate (byte 4). B corresponds to button 404B and becomes 1 when button 404B is pressed and 0 when it is not being pressed. Similarly, A corresponds to button 404A, G to button 407, START to button 405, up, down, left and right to button 403, L to button 406L, R to button 406R, E to button 404E, D to button 404D, C to button 404C and F to button 404F. JSRST becomes 1 when button 405, button 406L and button 406R are simultaneously pressed by the operator and is 0 when they are not. The X coordinate and Y coordinate are the count values of the X counter 444X and Y counter 444Y, respectively.
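
The four-byte layout above can be decoded as in this Python sketch. It assumes each listed field occupies one bit, most significant bit first in the order given, and that the X and Y coordinates are 8-bit two's-complement values centered on zero; the description states neither the bit order nor the signedness of the counts.

```python
BYTE1_FIELDS = ("B", "A", "G", "START", "up", "down", "left", "right")
BYTE2_FIELDS = ("JSRST", None, "L", "R", "E", "D", "C", "F")  # None: unused bit


def to_signed8(value: int) -> int:
    """Interpret an 8-bit value as two's complement (assumed for the counts)."""
    return value - 256 if value >= 128 else value


def decode_controller_data(packet: bytes) -> dict[str, int]:
    """Decode the four controller bytes into named button bits and X/Y counts."""
    if len(packet) != 4:
        raise ValueError("controller data is four bytes")
    state: dict[str, int] = {}
    for byte, names in ((packet[0], BYTE1_FIELDS), (packet[1], BYTE2_FIELDS)):
        for position, name in enumerate(names):
            if name is not None:
                state[name] = (byte >> (7 - position)) & 1  # first field = MSB
    state["x"] = to_signed8(packet[2])
    state["y"] = to_signed8(packet[3])
    return state


state = decode_controller_data(bytes([0b10010000, 0b00100000, 0x05, 0xFB]))
print(state["B"], state["START"], state["L"], state["x"], state["y"])  # 1 1 1 5 -5
```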

The expansion port control circuit 446 is connected to the control circuit 442, and is connected to the expansion device 50 via an address, control and data bus and a port connector 46. Thus, by connecting the control circuit 442 and the expansion device 50 via an address bus and a data bus, it is possible to control the expansion device 50 according to commands from the video processing device console 52.

The exemplary expansion device 50 shown in FIG. 9 is a back-up memory card 50. Memory card 50 may, for example, include a RAM device 51, to which data can be written and from which data can be read at addresses indicated on an address bus, and a battery 52, which supplies the back-up power necessary to retain data in the RAM device 51. By connecting this back-up memory card 50 to the expansion (joy port) connector 46 in the controller 56, it becomes possible to send data to and from RAM 51, since it is electrically connected with the joy port control circuit 446.
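
RAM device 51 behaves like ordinary addressable memory. The Python sketch below models reads and writes at given addresses with a bounds check; the capacity is a hypothetical value, and the battery-backed persistence is represented only by the object retaining its contents.

```python
class MemoryCard:
    """Toy model of back-up memory card 50 holding RAM device 51."""

    def __init__(self, size: int = 32 * 1024) -> None:  # hypothetical capacity
        # In hardware, the battery keeps these bytes alive without console
        # power; here they simply live as long as the object does.
        self._ram = bytearray(size)

    def _check(self, address: int, length: int) -> None:
        if address < 0 or length < 0 or address + length > len(self._ram):
            raise IndexError("access outside RAM device 51")

    def write(self, address: int, data: bytes) -> None:
        """Write `data` starting at `address`."""
        self._check(address, len(data))
        self._ram[address:address + len(data)] = data

    def read(self, address: int, length: int) -> bytes:
        """Read `length` bytes starting at `address`."""
        self._check(address, length)
        return bytes(self._ram[address:address + length])


card = MemoryCard()
card.write(0x100, b"MARIO")
print(card.read(0x100, 5))  # b'MARIO'
```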

The memory card 50 and game controller connector 46 provide the game controller and the overall video game system with enhanced flexibility and function expandability. For example, the game controller, with its memory card, may be transported to another player's video game system console. The memory card may store, and thereby save, data relating to a player's individual achievements, and individual statistical information may be maintained during game play based on such data. For example, if two players are playing a racing game, each player may store his or her own best lap times. The game program may be designed to cause video processing device 52 to compile such best lap time information and generate displays indicating both statistics on performance versus the other player and a comparison with the best prior lap time stored on the memory card. Such individualized statistics may be utilized in any competitive game where a player plays against the video game system computer and/or against opponents. For example, it is contemplated that, in conjunction with various games, the memory card will store individual statistics relating to a professional team, such as a baseball or football team, to be utilized when playing an opponent who has stored statistics based on another professional team. Thus, RAM 51 may be utilized for a wide range of applications, including saving personalized game play data or storing customized statistical data, e.g., of a particular professional or college team, used during game play.
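
The best-lap bookkeeping described above might look like this minimal Python sketch, in which a single dictionary keyed by player stands in for the data each player has saved on his or her own memory card. The function names and the use of seconds as the unit are assumptions for illustration.

```python
def update_best_lap(card: dict[str, float], player: str, lap_time: float) -> bool:
    """Save lap_time (seconds) if it beats the player's stored best; report whether it did."""
    best = card.get(player)
    if best is None or lap_time < best:
        card[player] = lap_time
        return True
    return False


def gap_to_opponent(card: dict[str, float], player: str, opponent: str) -> float:
    """Player's best minus opponent's best; negative means the player is faster."""
    return card[player] - card[opponent]


saved: dict[str, float] = {}  # stands in for data saved on the memory cards
update_best_lap(saved, "P1", 62.5)
update_best_lap(saved, "P1", 61.25)  # new personal best
update_best_lap(saved, "P2", 60.0)
print(saved["P1"], gap_to_opponent(saved, "P1", "P2"))  # 61.25 1.25
```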

Further details of the controller, including the sending and receiving of data between the video processing device 52 and the controller 56, a description of controller-related commands, and how the controller may be reset, are set forth in the incorporated '019 application.

The present invention is directed to a new era of video game methodology in which a player fully interacts with complex three-dimensional environments displayed using the video game system described above. This methodology features a unique combination of game level organization features, animation/character control features, and multiple "camera" angle features. A particularly preferred exemplary embodiment of the present invention is the commercially available Super Mario 64 game for play on the assignee's Nintendo 64 video game system, which has been described in part above.

FIG. 10 is a flowchart depicting the present methodology's general game program flow and organization. Various unique and/or significant aspects of the processing represented in the FIG. 10 flowchart are first generally