U.S. Pat. No. 12,407,788

Video Game Engine Assisted Virtual Studio Production Process

Issue Date: October 6, 2022


Abstract

A production process involves a predetermined number of cameras simultaneously filming a background at predetermined angles, and filming actors in a studio with the same number of cameras and the same angles, used in conjunction with a virtual studio system. In the studio, the actors perform before a green screen and the virtual studio system composites the actors onto the background in real-time. Camera tracking allows the in-studio cameras to pan, tilt, focus, zoom, and make limited other movements as the virtual studio system adjusts display of the background in a corresponding manner, resulting in a realistic scene without transporting actors and crew to the background location.

Description


DETAILED DESCRIPTION

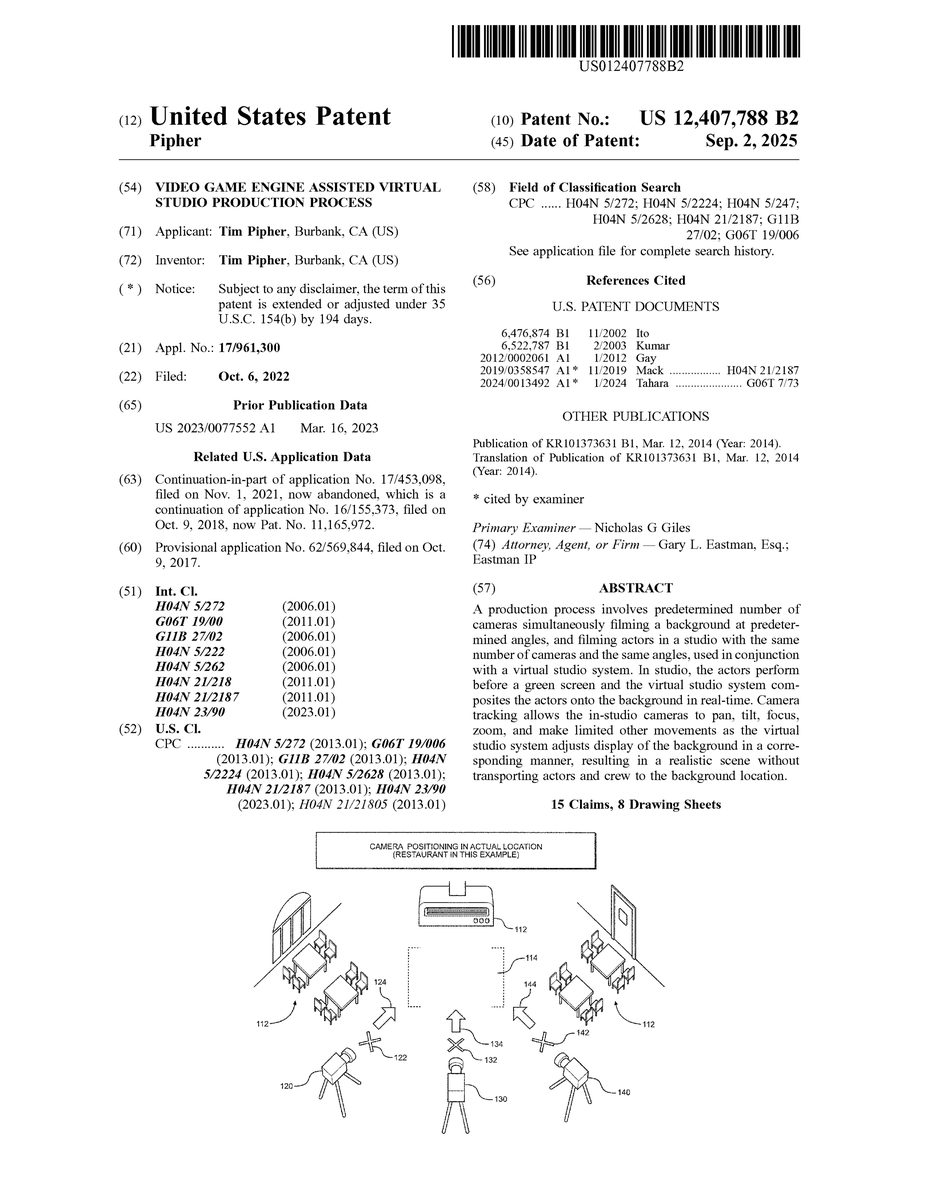

Referring initially to FIG. 1, the positioning of cameras at a background location is shown. The background location may be at any desired location, but is shown here as a background for a scene that takes place in a restaurant. As shown in FIG. 1, background elements 112 are present in the forms in which they appear in the location, including windows, tables, and a pizza oven in the present example. An empty space 114 may be prepared in some circumstances where the principal part of the foreground action will take place. For example, in a restaurant, an empty space may be created by moving away tables and chairs.

Three cameras are depicted in FIG. 1, although any number of cameras may be used, depending on the particular needs of the scene. More particularly, setups with two to four cameras are most common for TV productions, and the present invention works particularly well with such setups. Nonetheless, some films have used several dozen cameras for special purposes or effects, such as “bullet time.” The present invention also works well with setups involving large numbers of cameras, and can avoid the labor, cost, and other drawbacks involved in using computer-generated backgrounds.

As seen in FIG. 1, a left camera 120 is placed at a left camera position 122 and a left camera angle 124, while a middle camera 130 is placed at a middle camera position 132 and a middle camera angle 134, and a right camera 140 is placed at a right camera position 142 and a right camera angle 144. The left camera 120, middle camera 130, and right camera 140 all film at the same time, for at least the duration of time the corresponding scene is expected to last.

Referring now to FIG. 2, in the studio, actors 150 and props 152 are located in front of a green screen (not depicted). A left camera 160 is located at a left camera position 162 and a left camera angle 164, while a middle camera 170 is located at a middle camera position 172 and a middle camera angle 174, and a right camera 180 is located at a right camera position 182 and a right camera angle 184. The relative positions of left, middle, and right camera positions 162, 172, and 182 in the studio are the same as the relative positions of left, middle, and right camera positions 122, 132, and 142 on location. The angles 164, 174, and 184 are the same, relative to each other, as the angles 124, 134, and 144. In this way, foreground elements from a studio camera at a particular point in time can be placed against the background from a background location camera of the corresponding position, angle, and time, resulting in temporal and spatial consistency in the scene.
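The requirement that the studio rig reproduce the relative positions and angles of the location rig can be checked numerically. The following is a minimal sketch, assuming each camera is described by a hypothetical `(x, y, heading_degrees)` tuple on a floor plan; the function names, units, and tolerances are illustrative assumptions and are not part of the described process.

```python
import math

def relative_layout(cameras):
    """Express each (x, y, heading_deg) pose in the frame of the first camera."""
    x0, y0, h0 = cameras[0]
    c, s = math.cos(math.radians(-h0)), math.sin(math.radians(-h0))
    layout = []
    for x, y, h in cameras:
        dx, dy = x - x0, y - y0
        # Rotate the offset so the first camera's heading becomes zero.
        layout.append((dx * c - dy * s, dx * s + dy * c, (h - h0) % 360.0))
    return layout

def layouts_match(location, studio, pos_tol=0.01, ang_tol=0.1):
    """True if both rigs share the same relative positions and angles."""
    if len(location) != len(studio):
        return False
    pairs = zip(relative_layout(location), relative_layout(studio))
    for (x1, y1, a1), (x2, y2, a2) in pairs:
        # Compare headings on a circle so 359.9 and 0.0 count as close.
        ang_diff = abs((a1 - a2 + 180.0) % 360.0 - 180.0)
        if math.hypot(x1 - x2, y1 - y2) > pos_tol or ang_diff > ang_tol:
            return False
    return True

# Hypothetical three-camera rigs: the studio rig is the location rig shifted.
location_rig = [(0.0, 0.0, 60.0), (2.0, 0.0, 90.0), (4.0, 0.0, 120.0)]
studio_rig = [(10.0, 5.0, 60.0), (12.0, 5.0, 90.0), (14.0, 5.0, 120.0)]
rigs_agree = layouts_match(location_rig, studio_rig)
```

Because the comparison is made in the frame of the first camera, a studio rig that is shifted or rotated as a whole still matches, which reflects the text's emphasis on relative rather than absolute placement.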

Filming takes place onstage in conjunction with a virtual studio system, such as those sold in conjunction with the marks Zero Density, BRAINSTORM, or VIZRT, with camera tracking. Camera tracking is a system through which data flows from the cameras to the virtual studio system in such a way that the virtual background will automatically move around to correspond with in-studio camera movement. The studio cameras are free to pan, tilt, zoom, focus, and make a certain amount of other movement. These in-studio camera movements don't need to match movement in the on-location plates. In fact, the on-location filming is conducted with “locked down” non-moving cameras. This gives the filmmaker the freedom to make the camera moves that the story requires in-studio, allowing for artistic freedom and further enhancing the impression of reality.

Referring now to FIG. 3, the result of the process is depicted. The actors 150 and props 152 appear to be acting in the location filmed by the background cameras. As the view is switched between camera angles, background elements, including transitory elements such as people or animals, appear consistently in the expected places, resulting in a realistic, “three-dimensional” depiction of the scene. This result may be seen in real-time on studio monitors and recorded as the actors are performing. By depicting and recording it in real-time, a director can determine immediately if the scene is satisfactory, or a “live” TV show can be broadcast. The on-location plates (the background) and in-studio live action (the foreground) are composited, or combined, live, saving the expense of post-production compositing and allowing for in-studio camera movement.

More generally, the result may be prepared by a computer and depicted in real-time on studio monitors as the actors are performing, or it may be prepared in post-processing, or both. By depicting it in real-time, a director can determine immediately if the scene is satisfactory, or a “live” TV show can be broadcast. Performing or re-performing the combination during post-processing allows film editors to fine tune the effect and add or adjust any element as desired. If the foreground and background are combined in post-production, the order of filming isn't limited to doing the background first. The background and foreground elements could be filmed in any order, although one advantage of filming the background first is the ability to preview the resultant combination in real-time in the studio.

Thus, in a preferred embodiment, tracking data is used for real-time compositing, and in an alternative embodiment, tracking data is stored for compositing the in-studio actors with the backgrounds later, during post production. In some embodiments, the background and foreground are composited live on studio monitors, and the tracking data is stored and the final combination of the foreground with the background plates is performed later, during post production. In situations in which computational power may be limited, this allows the use of a more efficient compositing algorithm in real-time on the studio monitors and a higher quality compositing algorithm, or even manual intervention in the compositing process, at a later time.
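Storing tracking data for later compositing can be pictured as logging one record per camera per frame. The sketch below is only an illustration under stated assumptions: the `TrackingSample` fields, the JSON layout, and the function names are hypothetical and do not reflect the data format of any particular virtual studio system.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class TrackingSample:
    frame: int        # frame number shared with the recorded foreground video
    camera_id: str    # hypothetical label, e.g. "left", "middle", "right"
    pan_deg: float
    tilt_deg: float
    zoom_mm: float    # focal length
    focus_m: float    # focus distance

def save_tracking(samples):
    """Serialize samples to a JSON string for storage alongside the footage."""
    return json.dumps([asdict(s) for s in samples])

def load_tracking(text):
    """Rebuild samples, indexed by (camera_id, frame) for lookup in post."""
    samples = [TrackingSample(**d) for d in json.loads(text)]
    return {(s.camera_id, s.frame): s for s in samples}

recorded = [
    TrackingSample(0, "left", 0.0, 0.0, 35.0, 3.0),
    TrackingSample(1, "left", 1.5, 0.0, 35.0, 3.0),
]
index = load_tracking(save_tracking(recorded))
```

With the samples indexed by camera and frame, a higher-quality compositing pass in post-production could look up the same camera state that drove the live preview.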

Referring now to FIG. 4, a production control room is shown, in which a technician 190, such as a technical director, is shown at a workstation. The technician 190 sees the foreground, with the actors 150 and props 152 against the green screen background, on a workstation monitor 192. Another studio monitor 194 displays, at the same time, the scene including the foreground composited onto the virtual background. Before in-studio filming, each of the in-studio cameras is associated with a background plate in the virtual studio system. Then, during filming, the virtual studio system composites the foreground captured by each in-studio camera onto the corresponding virtual background, using camera tracking to adjust the virtual background in correlation with the panning, tilting, zooming, focusing, and movement of the in-studio cameras.

Referring now to FIG. 5, an outline of the primary steps in the process of the present invention is diagrammed and generally designated 200. A first step 202 involves providing a predetermined number of cameras, as well as a predetermined position and angle for each camera. A second step 204 involves filming the background on location with the predetermined number of cameras at the predetermined positions and angles. A third step 206 involves filming the foreground in the studio and in front of a green screen. The third step 206 also uses the same predetermined number of cameras at the same predetermined positions and angles. During in-studio filming, a fourth step 208 comprises camera tracking, also known as match moving. In this step, in-studio camera movements, including zooming, panning, tilting, and focus, are provided to the virtual studio system. A fifth step 210, which, as discussed above, may occur simultaneously with the third step 206 and the fourth step 208 using computer technology, involves compositing, or placing the foreground elements on the background plates. The in-studio camera movement data provided by the fourth step 208 allows the virtual studio system to adjust the display of the background (e.g., making it bigger, panning to a different part of the background image, etc.) in order to make the in-studio camera movement appear to affect both foreground and background elements. This compositing, including camera tracking and corresponding background adjustments, can be performed in real-time and appear on in-studio monitors during filming and, if desired, even be broadcast live.
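The background adjustment described for step 210 (enlarging the plate on zoom, shifting it on pan or tilt) can be approximated as selecting a window of the locked-down plate. The following is a simplified sketch under a pinhole-camera assumption; the function names and the crop-only model are illustrative choices, and an actual virtual studio system may warp the plate rather than merely crop it.

```python
import math

def plate_crop(plate_w, plate_h, plate_hfov_deg, pan_deg, tilt_deg, cam_hfov_deg):
    """Return (left, top, width, height) of the plate region to display."""
    # Focal length of the locked-down plate camera, in plate pixels.
    f = (plate_w / 2.0) / math.tan(math.radians(plate_hfov_deg) / 2.0)
    # Panning right or tilting up shifts the window centre across the plate.
    cx = plate_w / 2.0 + f * math.tan(math.radians(pan_deg))
    cy = plate_h / 2.0 - f * math.tan(math.radians(tilt_deg))
    # Zooming in narrows the field of view, so a smaller window is enlarged.
    width = 2.0 * f * math.tan(math.radians(cam_hfov_deg) / 2.0)
    height = width * plate_h / plate_w
    return (cx - width / 2.0, cy - height / 2.0, width, height)

def fits_plate(crop, plate_w, plate_h):
    """True if the requested window lies entirely inside the plate."""
    left, top, width, height = crop
    return (left >= 0 and top >= 0
            and left + width <= plate_w and top + height <= plate_h)

# Hypothetical 4K plate shot with a 90-degree lens; studio camera zoomed in
# to a 40-degree field of view and panned 10 degrees to the right.
zoomed = plate_crop(3840, 2160, 90.0, pan_deg=10.0, tilt_deg=0.0, cam_hfov_deg=40.0)
```

The `fits_plate` check illustrates why movement against a 2D plate is limited: once the requested window leaves the filmed area, there is no background left to show.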

Referring now to FIG. 6, the positioning of virtual cameras in a scene generated with a video game engine is illustrated. Scene elements 312 are placed throughout the virtual space by modelers, with an optional space 314 designated for foreground action. However, by using a video game engine to render the scene, objects can be rendered much closer to the actors without the need to be physically present in the studio.

Since the scene is computer generated, there are no physical cameras, but the scene is rendered by the video game engine as if a camera were at a location and angle that corresponds to a foreground camera's location and angle, such as locations 162, 172, and 182 and angles 164, 174, and 184 of cameras 160, 170, and 180 (shown in FIG. 2). For example, location 322 with angle 324, location 332 with angle 334, and location 340 with angle 342 correspond to an exemplary three-camera layout. The virtual camera pans, tilts, zooms, jibs, or otherwise moves to match the corresponding panning, tilting, zooming, and other movements of its corresponding foreground camera, thus displaying the scenery correctly around the foreground actors and elements. This can be performed with a single virtual camera that is relocated whenever the foreground view is switched between foreground cameras, or by multiple virtual cameras with each virtual camera corresponding to a foreground camera. Accordingly, the in-studio cameras can pan, tilt, zoom, focus, jib, and move in any other way the producer desires.
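The two arrangements mentioned above (one relocatable virtual camera versus one virtual camera per foreground camera) can be contrasted in a few lines. This is a minimal sketch with hypothetical names; `CameraPose` and both functions are assumptions made for illustration and are not the API of any game engine.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class CameraPose:
    position: tuple    # (x, y, z) in scene units
    pan_deg: float
    tilt_deg: float
    focal_mm: float

def single_virtual_camera(studio_poses, active_id):
    """One virtual camera, relocated to whichever studio camera is selected."""
    return replace(studio_poses[active_id])

def per_camera_virtual_cameras(studio_poses):
    """One virtual camera per studio camera, all updated from tracking data."""
    return {cam_id: replace(pose) for cam_id, pose in studio_poses.items()}

# Hypothetical tracked poses for a three-camera layout.
poses = {
    "left": CameraPose((-2.0, 0.0, 1.5), 20.0, 0.0, 35.0),
    "middle": CameraPose((0.0, 0.0, 1.5), 0.0, 0.0, 50.0),
    "right": CameraPose((2.0, 0.0, 1.5), -20.0, 0.0, 35.0),
}
on_air = single_virtual_camera(poses, "middle")
all_views = per_camera_virtual_cameras(poses)
```

The single-camera variant renders only the view currently in use, while the per-camera variant keeps every view available at the cost of rendering each one.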

In some embodiments, only some specific scene elements 312 are generated by the video game engine on a transparent background; the elements 312 are then composited onto background plates generated by background cameras such as background cameras 120, 130, and 140 (shown in FIG. 1). The result is three distinct layers being composited for the film or broadcast: the foreground filmed in studio, the additional scene elements generated by the video game engine, and the background filmed on location. This allows for great flexibility in generating otherwise difficult scene elements and special effects, and even allows for such effects to be generated and composited during a live broadcast.
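The three-layer result can be illustrated with the standard alpha "over" operation applied twice per pixel. The sketch below assumes straight (non-premultiplied) RGBA values in the range 0 to 1 and assumes the rendered elements sit behind the actors; both the layer order and the function names are illustrative assumptions, and a production could order layers differently per element.

```python
def over(top, bottom):
    """Composite one straight-alpha RGBA pixel over another (channels in 0..1)."""
    tr, tg, tb, ta = top
    br, bg, bb, ba = bottom
    out_a = ta + ba * (1.0 - ta)
    if out_a == 0.0:
        return (0.0, 0.0, 0.0, 0.0)

    def blend(t, b):
        # Weight each layer by how much of it remains visible.
        return (t * ta + b * ba * (1.0 - ta)) / out_a

    return (blend(tr, br), blend(tg, bg), blend(tb, bb), out_a)

def composite_three_layers(foreground, elements, background):
    """Stack keyed foreground over rendered elements over the location plate."""
    return over(foreground, over(elements, background))

# A half-transparent white element over an opaque blue plate pixel,
# with no actor covering this pixel (fully transparent foreground).
plate_pixel = (0.0, 0.0, 1.0, 1.0)
result = composite_three_layers((0.0, 0.0, 0.0, 0.0), (1.0, 1.0, 1.0, 0.5), plate_pixel)
```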

Referring now to FIG. 7, an outline of the primary steps in the process of a preferred embodiment of the present invention is diagrammed and generally designated 500. A first step 502 involves providing a predetermined number of cameras, as well as a predetermined position for each camera. Since the background will be generated by a video game engine, the virtual cameras can move in any and all directions (the movement is not limited as with 2D background plates), so the angles and movement of the in-studio cameras do not need to be pre-determined at all. A second step 504 involves filming the foreground in the studio and in front of a green screen using the predetermined number of cameras at the predetermined positions and angles. During filming in step 504, step 506 is also performed, in which the movements, zoom, and focus of the in-studio cameras are tracked. The tracking in step 506 is performed by a virtual studio system in some embodiments, and by similar routines added to the video game engine in some other embodiments. Accordingly, the in-studio cameras can pan, tilt, zoom, focus, jib, and move in any other way the producer desires.

In step 508, the scene is rendered by the video game engine using virtual cameras that track the positions, angles, movements, zoom, and focus of the in-studio cameras. Prior to this step, the scene is modeled in the same way as video game environments; this can be done by studio artists, or a pre-created scene, such as one purchased from a video game environment marketplace, can be used. The foreground is composited onto the resulting scene in step 510. In some embodiments, steps 508 and 510 are performed in real-time, that is, at the same time steps 504 and 506 are performed, in order to allow for a live broadcast.

By adjusting the lighting and camera settings prior to filming in step 504, real shadows emerge on the studio floor and are captured by the camera; these shadows in turn integrate seamlessly with the virtual floors generated in step 508, which adds significantly to the realistic nature of the result: the virtual scene looks more real by virtue of the real shadows.

In some embodiments, the video game engine used to render the scene in step 508 also performs the compositing in step 510. This is made possible by the customizability of some popular video game engines, such as the Unreal Engine, which is generally provided with source code allowing even for source-level modification. That customizability allows for the addition of routines for chroma keying that would be needed or useful in adding video from the foreground cameras to the rendered scenes.
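As a rough picture of what a chroma keying routine does, the sketch below derives a foreground opacity from how strongly green dominates a pixel and then blends the foreground over a rendered background pixel. It is a deliberately simple illustration: the thresholds and function names are assumptions, and it is not the keyer of the Unreal Engine or of any virtual studio system.

```python
def green_screen_alpha(pixel, low=0.05, high=0.30):
    """Return foreground opacity (0..1) for an RGB pixel with channels in 0..1."""
    r, g, b = pixel
    # How much greener the pixel is than its strongest other channel.
    dominance = g - max(r, b)
    if dominance <= low:
        return 1.0    # not green enough: keep as foreground
    if dominance >= high:
        return 0.0    # clearly green screen: fully transparent
    # Soft edge between the two thresholds.
    return 1.0 - (dominance - low) / (high - low)

def key_over_background(fg_pixel, bg_pixel):
    """Blend a studio pixel over a rendered background pixel using the key."""
    a = green_screen_alpha(fg_pixel)
    return tuple(a * f + (1.0 - a) * b for f, b in zip(fg_pixel, bg_pixel))

screen_pixel = (0.1, 0.9, 0.1)    # hypothetical green screen sample
skin_pixel = (0.8, 0.6, 0.5)      # hypothetical actor sample
rendered_pixel = (0.2, 0.3, 0.4)  # hypothetical rendered background sample
keyed = key_over_background(screen_pixel, rendered_pixel)
```

A practical keyer would also handle green spill and noise; the soft edge between `low` and `high` only hints at that.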

In some preferred embodiments, however, compositing is performed by the virtual studio system, such as VizRT, Brainstorm, or another virtual studio system, which in turn accesses the video game engine (e.g., through the virtual studio's plugin system) to generate the background frames onto which the foreground is composited.

Referring now to FIG. 8, an outline of the primary steps in the process of a preferred embodiment of the present invention is diagrammed and generally designated 600. Process 600 combines steps from process 200 (shown in FIG. 5) and process 500 (shown in FIG. 7) in order to provide features from both processes.

A first step 602 involves providing a predetermined number of cameras, as well as a predetermined position and angle for each camera. A second step 604 involves filming the background on location with the predetermined number of cameras at the predetermined positions and angles. A third step 606 involves filming the foreground in the studio and in front of a green screen. The third step 606 also uses the same predetermined number of cameras at the same predetermined positions and angles. During in-studio filming, a fourth step 608 comprises camera tracking, also known as match moving. In this step, in-studio camera movements, including zooming, panning, tilting, and focus, are provided to the virtual studio system. The tracking in step 608 is performed by a virtual studio system in preferred embodiments, and by similar routines added to the video game engine in some other embodiments.

In step 610, the scene is rendered by the video game engine using virtual cameras that track the positions, angles, movements, zoom, and focus of the in-studio cameras.

Step 612, which, as discussed above, may occur simultaneously with the third step 606 and the fourth step 608 using computer technology, involves compositing, or placing the foreground elements on the background plates. The in-studio camera movement data provided by the fourth step 608 allows the virtual studio system to adjust the display of the background (e.g., making it bigger, panning to a different part of the background image, etc.) in order to make the in-studio camera movement appear to affect both foreground and background elements. This compositing, including camera tracking and corresponding background adjustments, can be performed in real-time and appear on in-studio monitors during filming and, if desired, even be broadcast live.

The compositing in step 612 differs from step 210 (see FIG. 5) of process 200 in that scene elements rendered in step 610 are also added to the final product during compositing. In some embodiments, this step is performed by the same compositing software used to combine the foreground and background elements, such as the video game engine as described above in connection with step 510 of FIG. 7, while in other embodiments a separate software system is used.

While there have been shown what are presently considered to be preferred embodiments of the present invention, it will be apparent to those skilled in the art that various changes and modifications can be made herein without departing from the scope and spirit of the invention.

Claims

- A method for realistic offsite filming, comprising the steps of: preparing a scene comprising a virtual space; placing scene elements in the virtual space; designating a space in the virtual space for foreground action; determining a number of cameras with corresponding positions and angles; filming a foreground with the determined number of studio cameras at the determined positions and angles; tracking camera movements, zoom, and focus of each of the studio cameras during the step of filming a foreground; rendering a background using the scene with virtual cameras following the movements, zoom, and focus of the studio cameras; and combining the foreground and background.

- The method for realistic filming as recited in claim 1, wherein the steps of rendering a background and combining the foreground and background are performed at the same time as the step of filming a foreground.

- The method for realistic filming as recited in claim 2, further comprising the step of displaying the combined foreground and background in real-time on studio monitors during the step of filming the foreground.

- The method for realistic filming as recited in claim 3, further comprising the step of broadcasting the combined foreground and background live during the step of filming the foreground.

- The method for realistic filming as recited in claim 1, wherein the step of rendering a background is performed by a video game engine.

- The method for realistic filming as recited in claim 1, wherein the steps of rendering a background and combining the foreground and background are performed by a video game engine.

- The method for realistic filming as recited in claim 1, wherein the step of rendering a background is performed by a video game engine, and wherein the step of combining the foreground and background is performed by a virtual studio system.

- A method for realistic offsite filming, comprising the steps of: providing a studio having a green screen; determining a number of cameras with corresponding positions and angles; filming a background on location with the determined number of cameras at the determined positions and angles; filming a foreground in the studio against the green screen with the determined number of cameras at the determined positions and angles; tracking camera movements, zoom, and focus of each of the cameras during the step of filming a foreground; rendering additional scene elements with virtual cameras following the movements, zoom, and focus of the studio cameras; and combining the foreground, background, and additional scene elements, adjusting the presentation of the background to correspond to the tracked movements, zoom, and focus.

- The method for realistic filming as recited in claim 8, wherein the step of combining the foreground, background, and additional scene elements is performed at the same time as the step of filming a foreground.

- The method for realistic filming as recited in claim 9, further comprising the step of displaying the combined foreground, background, and additional scene elements in real-time on studio monitors during the step of filming the foreground.

- The method for realistic filming as recited in claim 10, further comprising the step of broadcasting the combined foreground, background, and additional scene elements live during the step of filming the foreground.

- The method for realistic filming as recited in claim 8, wherein the step of filming a background is performed prior to the step of filming a foreground.

- The method for realistic filming as recited in claim 8, wherein the step of rendering additional scene elements is performed by a video game engine.

- The method for realistic filming as recited in claim 8, wherein the steps of rendering additional scene elements and combining the foreground, background, and additional scene elements are performed by a video game engine.

- The method for realistic filming as recited in claim 8, wherein the step of rendering additional scene elements is performed by a video game engine, and wherein the step of combining the foreground, background, and additional scene elements is performed by a virtual studio system.
