U.S. Pat. No. 12,397,228

COMMUNICATION WITH IN-GAME CHARACTERS

Issue DateJuly 5, 2023

Illustrative Figure

Abstract

A method for coordinating reactions of a virtual character with script spoken by a user involves specifying keywords in the script, making a first prediction of times for individual keywords and for responses by a virtual character, displaying the script, sensing the time that the user reaches a first keyword, recalculating the predictions based on the syllables in the script the time the user reaches the first keyword, sensing the time that the user reaches a second keyword, recalculating the predictions of times based on the syllables and time to second keyword, continuing to sense times and recalculating until a last keyword is reached, and causing specific actions and responses of the virtual character according to the recalculated predictions of times.

Description

DETAILED DESCRIPTION OF THE INVENTION FIG.1is an architectural diagram100in an embodiment of the invention. Line101in the figure represents the well-known Internet network and all subnetworks and components of the Internet. An Internet-connected server102coupled to a data repository103executing software104provides a web site in this example for a video gaming enterprise. Data repository103stores client data among other data; in one example repository103stores profiles for registered members of the enterprise who are regular players of video games streamed by the enterprise from game servers, such as, server105connected to the Internet, executing software107including a game engine, and storing and processing games and game data in a coupled data repository106. In this example the gaming enterprise hosting server102and105stream games to players using game platforms such as computerized appliance108aexecuting software109a, communicating with the enterprise servers through an Internet service provider (ISP)111a. Platform108ahas a connected head-mounted display110awith input interface110bthrough which a player inputs commands during game play. This head-mounted display may also comprise a gyroscope or other apparatus for determining the head position of the player wearing the display. This is input the system may be used to decide where the user may be looking. The skilled person will understand that ISP111ais a generalized representation meaning to encompass all of the many ways that a gaming platform may connect to the Internet network. A second player platform108bexecuting software109bcommunicates through an ISP111band provides a head-mounted display110cwith input interface111dfor a second player. And a third platform113with a display and a keyboard112has a pointer (not shown) executes software109nand communicates through an ISP111c. The representations of the gaming platforms are meant to illustrate a substantial number of such platforms that may be communicating with the gaming enterprise servers in parallel with a number of players, some of whom may be engaged in multi-player games streamed by the enterprise servers. The skilled ...

DETAILED DESCRIPTION OF THE INVENTION

FIG.1is an architectural diagram100in an embodiment of the invention. Line101in the figure represents the well-known Internet network and all subnetworks and components of the Internet. An Internet-connected server102coupled to a data repository103executing software104provides a web site in this example for a video gaming enterprise. Data repository103stores client data among other data; in one example repository103stores profiles for registered members of the enterprise who are regular players of video games streamed by the enterprise from game servers, such as, server105connected to the Internet, executing software107including a game engine, and storing and processing games and game data in a coupled data repository106.

In this example the gaming enterprise hosting server102and105stream games to players using game platforms such as computerized appliance108aexecuting software109a, communicating with the enterprise servers through an Internet service provider (ISP)111a. Platform108ahas a connected head-mounted display110awith input interface110bthrough which a player inputs commands during game play. This head-mounted display may also comprise a gyroscope or other apparatus for determining the head position of the player wearing the display. This is input the system may be used to decide where the user may be looking. The skilled person will understand that ISP111ais a generalized representation meaning to encompass all of the many ways that a gaming platform may connect to the Internet network.

A second player platform108bexecuting software109bcommunicates through an ISP111band provides a head-mounted display110cwith input interface111dfor a second player. And a third platform113with a display and a keyboard112has a pointer (not shown) executes software109nand communicates through an ISP111c.

The representations of the gaming platforms are meant to illustrate a substantial number of such platforms that may be communicating with the gaming enterprise servers in parallel with a number of players, some of whom may be engaged in multi-player games streamed by the enterprise servers. The skilled person will also understand that, in some circumstances, games may be stored locally and played with local software from data repositories at the individual gaming platforms.

The skilled person will also understand the general process in the playing of video games and in other instances of individual users at local platforms interacting with virtual reality presentations. The general circumstance is that a user (player) at a gaming platform has a display, which may be a head-mounted display as illustrated inFIG.1, and has an input interface through which the player may input commands. A virtual reality presentation is presented on the display which may provide animated virtual characters typically termed avatars in the art. The player interacts with the game and may be associated with a specific avatar such that the player may input commands to move the avatar, and then the game engine receives the commands and manipulates the game data to move the avatar and stream new data to the player and/or other players in a multiplayer game so that the avatar is seen to move in the display.

In some circumstances it may be desirable to provide programming wherein a player may engage in direct voice communication with an avatar, and that avatar may respond to the voice input of the player, by a specific movement, a change of facial expression, and even a voice response. For this purpose, it is, of course, necessary that the game platform in use by the player may have a microphone such that the player's voice input may be communicated to the game engine which may then make the data edits to stream new data to the player and other players in order to display the responses of the avatar to whom voice input is directed. It is typical that platforms as shown inFIG.1have microphones.

In a circumstance of voice input by a player directed to a specific avatar, it is needed that the system recognize the player speaking and the avatar spoken to. The programming of the game or other video presentation has preprogrammed input for the player, and the player's voice input is anticipated. The responses of the avatar are also pre-programmed. The system will update the data stream for the avatar to perform whatever pre-programmed responses are associated with certain voice input.

In an embodiment of the invention with a game or video presentation in progress in which player voice input and virtual character response is programmed and enabled, it is necessary for the system to respond to a trigger event to begin listening for a player voice input. In one embodiment, the system may simply switch to “listening” mode at specific time intervals. Other triggers may comprise tracking which point on the screen a player may be looking or which point the player has concentrated activity. In another example, certain start words may trigger listening mode; for example, the player may say “Hey!” and the system in response will start a voice input-character response process.

In one embodiment, the system, when triggered, continues listening to the player until the player has stopped speaking, or until a predetermined time interval has been reached. In the case of a predetermined time interval, after the time expires the microphone starts listening for the specific start words again. Upon recognizing a trigger event, the system listens for the predetermined duration or until the user has stopped speaking. While the user is speaking, in-game responses are triggered based on the time interval of the speech.

A typical use case involves the system recognizing a trigger event. The system may display a script for the player to address to the virtual character identified as the target of the voice input. As the player begins to read the script from script displayed, rather than resorting through a voice recognition and a library of word and phases matched with character activity, the system simply tracks the players real-time position in the script and triggers the virtual character's responses accordingly. As a simple example, if the script describes details of a recent sporting event to an in-game character, the character may be caused to visibly shake the head when the user mentions a blown play or may widen the eyes with shock when the player explains how their team took the lead in the end. After the player reaches the end of the script and is finished speaking, there may be an interval where the character may speak a predetermined set of lines based on what the user said from the script, and then the system goes back to listening mode, looking for another trigger.

FIG.2is a diagram of an example script201and a corresponding timeline202illustrating time passed from 0 to 16 seconds as a player speaks script201. In this simple example, a player speaking at an “average” or ‘typical’ rate will take a bit over 15 seconds to speak the entire script. The first sentence completes at 4 seconds, the second sentence at about 9.5 seconds, and the third at just over 15 seconds.

In one embodiment, the system assumes the player talking speaks at the rate illustrated inFIG.2and controls the response of the virtual character accordingly. For example, the system may control the character to behave, as shown inFIG.3, along the same example script201. InFIG.3, character responses and actions301are illustrated in words. At 4 seconds, after the player begins speaking, the system causes the character to nod the head, acknowledging that he or she said “you should have seen last night's game.” At about 9 seconds, the system causes the character to raise the eyebrows in response to the speaking of “the shortstop fumbled a routine play.” At about 15 seconds, the system causes the character to gasp and speak, in response to hearing “we won the game with a grand slam!”

Again, this example assumes the player speaks at a particular pre-assumed rate. This creates a problem because, in some circumstances, players may speak faster or slower than this assumed rate, the coordination may be awkward.

Referring back toFIG.1, note that client data is stored in data repository103. The clients are the players/users referred to in these examples. The system may track client voice input and store data points to indicate the speaking rate of each player and use this data to coordinate the character responses to specific players so that an “average” speaking rate might no longer be assumed and coordination may be better controlled.

FIG.4is an example an audio track401on example script201of a player speaking the script ofFIGS.2and3. The variations in the audio track401may be monitored to determine number of syllables and words. In this particular example, the spaces402on the audio track401indicate the end of one sentence of example script201and the beginning of another. With proper coding, the system, knowing the script, can tell where the player speaking is in the script by the variations and quiet spaces in the audio track. The system may then control the virtual character's responses accordingly. This process is precise and does not rely on timing of a speaker.

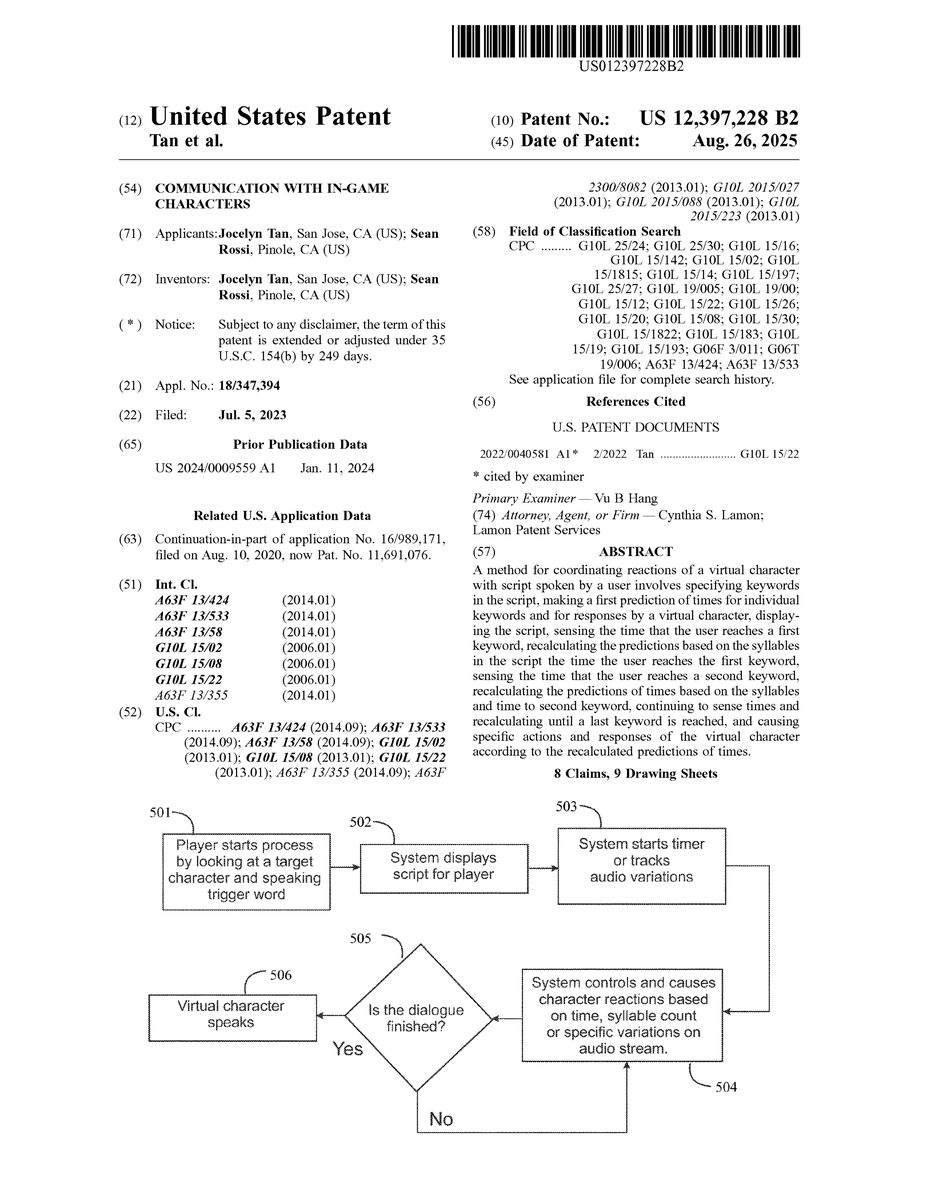

FIG.5is a flow diagram depicting steps of a method for coordinating character responses and actions derived from spoken words and phrases by a player in an embodiment of the invention. At step501a player starts the process by looking at a target character and speaking a trigger word. The system displays a script for the player at step502. The system starts a timer or tracks variations in an audio wave for the player at step503. At step504the system controls and causes character reactions, such as facial expressions or gasps, based on time, syllable count, or specific variations in the audio stream. At step505the system determines if the dialogue is finished. This may be based on a pre-programmed silent period length. If the dialogue is over, the virtual character may make a final utterance and/or movements at step506. If the dialogue is not over, the system continues control and causes character reactions based on time, syllable count, or specific variations in audio waves.

In another embodiment of the invention a method for dynamically adjusting the predicted timeline based on system recognition of keywords in a script is provided. In this method for adjusting the timeline to trigger actions, emotions or verbal responses of the virtual character are triggered at predicted times, but the predicted times for each response of action of the character is dynamically adjusted during the speaking of the script. In this method specific words in the script verbally input by the user are marked as keywords. There may be one keyword, two or more, or every word in the script may be a recognizable keyword.

At the beginning of the spoken script the predictions for times of uttering specific words and triggering actions of the virtual character are based on, in this example, the beginning predicted times may be an average standard, and there is no need to identify and access the particular profile for the speaking rate/pattern of the user identified that is speaking the script. As each keyword is uttered and recognized by the system, the system readjusts the predicted times. If there is just one keyword, the time that that keyword is uttered enables the system to determine the predicted timing for every word and event. If the user is consistent this may be adequate. However, if there are a plurality of keywords, the timing and predictions may be readjusted as the script plays out.

FIG.6is a diagram of a first example of time course correction based on key words. The timeline shows six keywords and the predicted time of 1 second for uttering the first keyword. In this example the user reaches the first keyword in 0.7 seconds instead of the predicted 1 second. Accordingly, the adjusted predicted rate is calculated to be 5.71 syllables per second. The adjusted remaining time is calculated to be 4.20 seconds

FIG.7is a diagram of a second example of time course correction based on key words. The second keyword is reached in 1.2 seconds instead of the newly predicted 1.4 seconds. The new APR is 6.6 syllables per second, and the ART is 3.00 seconds.

FIG.8is a diagram of a third example of time course correction based on key words. The fifth keyword is reached in 3.3 seconds instead of the newly predicted 3.0 seconds. The new APR is 6.06 syllables per second, and the ART is 3.30 seconds.

FIG.9is a diagram showing events on the original and adjusted timelines. The upper timeline is the original, based 28 syllables per second at 4 syllables per second. This shows how the timeline of events is adjusted based on the newly adjusted predicted rates and the adjusted predicted times based on times when specific keywords are reached.

In this method the timing of virtual character response, action, etc. is triggered based on the user's delivery of the script, even if the user changes his or her delivery rate and periods between syllables and sentences.

The skilled person will understand that the embodiments described above are exemplary and not specifically limiting to the scope of the invention. Many functions may be accomplished in different ways, and apparatus may vary in different embodiments. The invention is limited only by the scope of the claims.

Claims

- A method for coordinating reactions of a virtual character with script spoken by a user in any one of a video game and virtual reality (VR) presentation, comprising: uniquely specifying specific keywords in the script;making a first prediction of times for reaching individual keywords and for triggering responses by a virtual character, displaying the script for the user on a display of a computerized platform used by the user, and prompting the player to speak the script;sensing the time that the user reaches a first keyword;recalculating the prediction of times for reaching individual following keywords and for triggering responses by a virtual character, based on the syllables in the script up to the first keyword and the actual time the user reaches the first keyword;sensing the time that the user reaches a second keyword;recalculating the prediction of times for reaching individual following keywords and for triggering responses by a virtual character, based on the syllables in the script up to the second keyword and the actual time the user reaches the second keyword;continuing to sense times to keywords and recalculating the predicted times until a last keyword is reached;and causing specific actions and responses of the virtual character according to the recalculated predictions of times.

- The method of claim 1 further comprising a step for determining a start of a dialogue, wherein the system associates viewpoint of the player with a specific virtual character in the video game or VR presentation and selects a script accordingly.

- The method of claim 1 further comprising a step for sensing that the dialogue is finished.

- The method of claim 3 further comprising a step of playing any one of a verbal reaction and at least facial physical movement by the virtual character after the end of dialogue is sensed.

- A system coordinating reactions of a virtual character with script spoken by a player in any one of a video game or VR presentation, comprising: an internet-connected server executing software and streaming video games or presentations;an internet-connected computerized platform used by a user, the platform having a display, a command input interface, and a microphone;wherein the system uniquely species specific keywords in the script, makes a first prediction of times for reaching individual keywords and for triggering responses by a virtual character, displays the script for the user on a display of a computerized platform used by the user, and prompts the user to speak the script, senses the time that the user reaches a first keyword, recalculates the prediction of times for reaching individual following keywords and for triggering responses by a virtual character, based on the syllables in the script up to the first keyword and the actual time the user reaches the first keyword, senses the time that the user reaches a second keyword, recalculates the prediction of times for reaching individual following keywords and for triggering responses by a virtual character, based on the syllables in the script up to the second keyword and the actual time the user reaches the second keyword, continues to sense times to keywords and recalculates the predicted times until a last keyword is reached, and causes specific actions and responses of the virtual character according to the recalculated predictions of times.

- The system of claim 5 further comprising the system determining a start of a dialogue, wherein the system associates viewpoint of the player with a specific virtual character in the video game or VR presentation and selects a script accordingly.

- The system of claim 5 wherein the system determines the dialogue is finished.

- The system of claim 7 further comprising the system playing any one of a verbal reaction and at least facial physical movement by the virtual character after the end of dialogue is sensed.

Disclaimer: Data collected from the USPTO and may be malformed, incomplete, and/or otherwise inaccurate.