U.S. Pat. No. 12,296,269

SYSTEMS AND METHODS OF RENDERING TEXTURES IN VIRTUAL SPACES WITH WALLS AND ARRANGEABLE OBJECTS

Assignee: NINTENDO CO., LTD.

Issue Date: August 26, 2022

Abstract

On the basis of operation data, a wallpaper to be set on a wall object constructing a wall of a room is selected. Then, in a rendering process, a process of rendering the wall object and a first-type object arranged in the room by using a wallpaper texture corresponding to the set wallpaper, and a process of rendering a second-type object arranged in the room, are executed.

Description

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

Hereinafter, one exemplary embodiment will be described. It is to be understood that, as used herein, elements and the like written in a singular form with the word “a” or “an” attached before them do not exclude those in the plural form.

[Hardware Configuration of Information Processing Apparatus]

First, an information processing apparatus for executing information processing according to the exemplary embodiment will be described. The information processing apparatus is, for example, a smartphone, a stationary or hand-held game apparatus, a tablet terminal, a mobile phone, a personal computer, a wearable terminal, or the like. The information processing according to the exemplary embodiment can also be applied to a game system including a game apparatus, etc., as described above, and a predetermined server. In the exemplary embodiment, a stationary game apparatus (hereinafter, referred to simply as “game apparatus”) is described as an example of the information processing apparatus.

FIG. 1 is a block diagram showing an example of the internal configuration of a game apparatus 2 according to the exemplary embodiment. The game apparatus 2 includes a processor 81. The processor 81 is an information processing section for executing various types of information processing to be executed by the game apparatus 2. For example, the processor 81 may be composed only of a CPU (Central Processing Unit), or may be composed of a SoC (System-on-a-chip) having a plurality of functions such as a CPU function and a GPU (Graphics Processing Unit) function. The processor 81 performs various types of information processing by executing an information processing program (e.g., a game program) stored in a storage section 84. The storage section 84 may be, for example, an internal storage medium such as a flash memory or a DRAM (Dynamic Random Access Memory), or may be configured to utilize an external storage medium mounted to a slot that is not shown, or the like.

Furthermore, the game apparatus 2 includes a controller communication section 86 for allowing the game apparatus 2 to perform wired or wireless communication with a controller 4. Although not shown, the controller 4 is provided with various kinds of buttons such as a cross key and ABXY buttons, an analog stick, and the like.

A display section 5 (e.g., a television) is connected to the game apparatus 2 via an image sound output section 87. The processor 81 outputs images and sounds generated (by executing the aforementioned information processing, for example) to the display section 5 via the image sound output section 87.

[Outline of Game Processing of the Exemplary Embodiment]

Next, an outline of operation of game processing executed by the game apparatus 2 according to the exemplary embodiment will be described. In this game processing, a user arranges, in a predetermined area in a virtual space, an arrangement item which is a kind of in-game object and has been obtained by the user in the game. Specifically, the predetermined area is a room of a player character object (hereinafter, referred to simply as “player character”). The arrangement item is a virtual object with which the room is furnished or decorated. More specifically, the arrangement item is a virtual object having a motif of a furniture article, a home appliance, an interior decoration article, etc. The game is executed such that the user obtains such an arrangement item in the game and arranges the item in (the predetermined area set as) the room of the player character, thereby enjoying decorating (customizing) the room. A process according to the exemplary embodiment relates to a process for decorating the room (hereinafter, referred to as “room editing process”), and in particular, a process related to changing wallpaper in the room.

Regarding a method for obtaining a virtual object, the user can obtain the virtual object by, for example, performing an event of “making furniture” in the game. Moreover, the user can increase the number of virtual objects he/she possesses by performing the event a plurality of times.

Next, the outline of the game processing according to the exemplary embodiment will be described with reference to examples of screens. FIG. 2 shows an example of a screen immediately after the room editing process has been started. For convenience of description, a state where no arrangement item is arranged in a room of a player character is shown as an example of an initial state. In FIG. 2, a room 201 in the initial state is displayed. In the exemplary embodiment, the room 201 is a virtual space composed of a floor object 202, a plurality of wall objects 203, and a ceiling object (not shown). Regarding the wall objects 203, one wall object 203 is arranged along each of four sides of the floor object 202 having a rectangular shape, that is, four wall objects 203 in total are arranged. For convenience of description, each wall object 203 has a height of 2 m in terms of the size in the virtual game space. The wall object 203 arranged along the upper side of the floor object 202 has a width of 3 m in terms of the size in the virtual game space. FIG. 2 shows a game image obtained by capturing the room 201 with a virtual camera from a predetermined position. In FIG. 2, the wall object 203 on the near side as seen from the virtual camera is made to be transparent for ease of user operation. Moreover, in FIG. 2, regarding the wall object 203 arranged along the upper side of the floor object 202, only a substantially lower half part thereof appears in the screen. A part, of the wall object 203, which does not appear in the screen is indicated by a dotted line in FIG. 2 so that the entire image of the wall object 203 can be easily grasped. It is possible to make this part appear in the screen by changing the position and/or the angle of the virtual camera.

Although not shown in FIG. 2, each wall object 203 may have a window and/or a door.

In FIG. 2, a cursor 205 is also displayed. The user can designate an arrangement object (described later) arranged in the room 201 and designate various kinds of selection elements displayed on the screen by moving the cursor 205 through a predetermined operation.

In the exemplary embodiment, as wallpaper for each wall object 203, a two-dimensional image (hereinafter, referred to as “texture”) having a predetermined size is pasted. In the exemplary embodiment, one texture is an image having a size of 1 m (width) by 2 m (height) in terms of the size in the virtual game space. In the example of FIG. 2, regarding the wall object 203 arranged along the upper side of the floor object 202, three identical textures are pasted side by side in the horizontal direction (in other words, the same texture is repeatedly pasted in the horizontal direction). Although not visible in FIG. 2, the same texture as described above is similarly pasted as wallpaper to each of the wall objects 203 arranged at the left, right, and lower sides of the floor object 202. Hereinafter, when the term “wallpaper” is simply used, this term refers to the “texture” to be pasted to the wall object 203.
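The horizontal repetition described above can be sketched as follows. This is only an illustration under stated assumptions, not the implementation of the embodiment: the names `horizontal_repeats` and `tile_offsets` are hypothetical, and rounding up for a wall whose width is not a whole multiple of the texture width is an assumption that the description does not address.

```python
import math
from typing import List

# Texture size taken from the description: 1 m wide by 2 m high.
TEXTURE_WIDTH_M = 1.0
TEXTURE_HEIGHT_M = 2.0


def horizontal_repeats(wall_width_m: float,
                       texture_width_m: float = TEXTURE_WIDTH_M) -> int:
    """Number of side-by-side texture copies needed to cover the wall.

    Rounding up is an assumption for widths that are not whole multiples.
    """
    return math.ceil(wall_width_m / texture_width_m)


def tile_offsets(wall_width_m: float,
                 texture_width_m: float = TEXTURE_WIDTH_M) -> List[float]:
    """Left edge (in meters) of each pasted texture copy along the wall."""
    count = horizontal_repeats(wall_width_m, texture_width_m)
    return [i * texture_width_m for i in range(count)]
```

For the 3 m wide wall of FIG. 2, `horizontal_repeats(3.0)` yields three copies, matching the three identical textures pasted side by side.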

Next, major operations that the user can perform in the room editing process will be described. In the exemplary embodiment, the user can perform two types of operations, i.e., “arrangement object arranging operation” and “wallpaper change operation”.

[Arranging Operation]

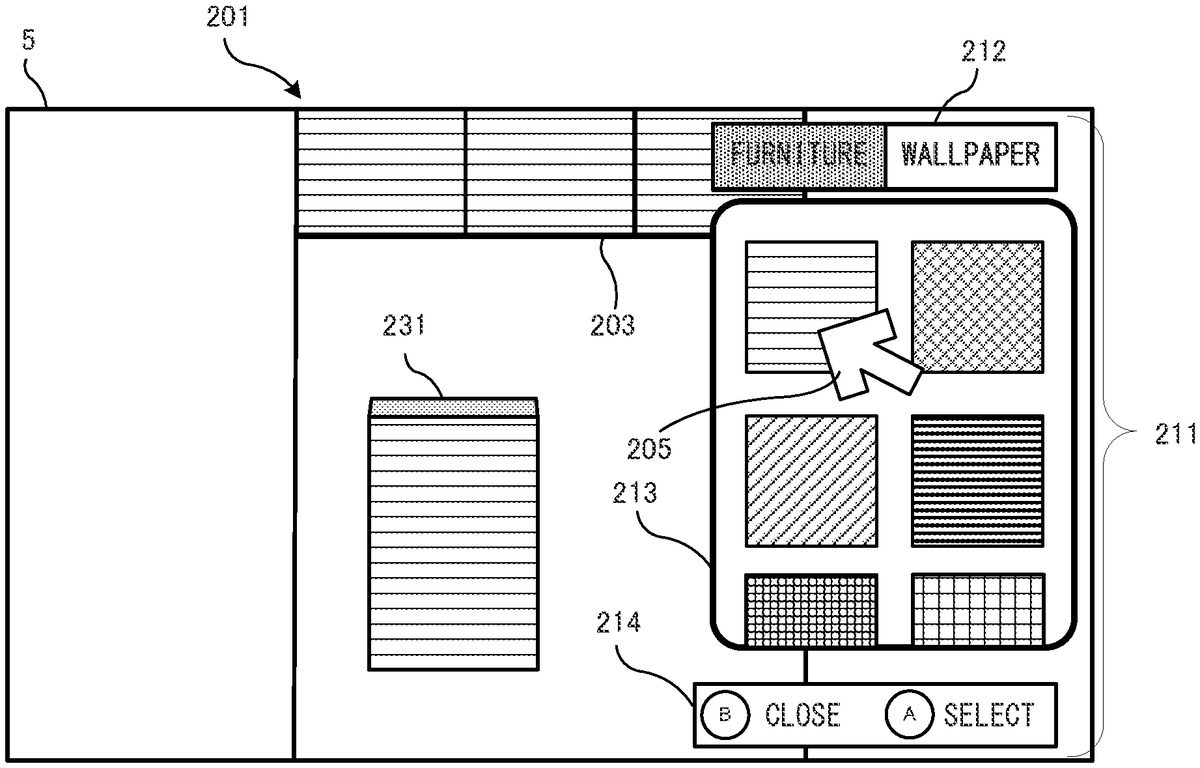

First, an example of “arrangement object arranging operation” will be described. When the user has performed a predetermined operation in the state shown in FIG. 2, a list image 211 as shown in FIG. 3 is displayed. In FIG. 3, the list image 211 includes category tabs 212, a list window 213, and an operation guide 214. The category tabs 212 are tabs for switching between the categories of items to be displayed on the list window 213. In the exemplary embodiment, there are two categories, “furniture” and “wallpaper”. In the initial state, “furniture” is selected. In another embodiment, tabs corresponding to categories into which the category of “furniture” is further classified, may be displayed. For example, tabs corresponding to categories such as “desk”, “chair”, “home appliance”, etc., may be displayed.

The list window 213 is a display area scrollable by a drag operation. The display content of the list window 213 is changed according to the selection state of the category tabs 212. When the category tab 212 of “furniture” is being selected, a list of arrangement objects that the user possesses at that time is displayed. The user selects a predetermined arrangement object from the list window 213 by using the cursor 205, and presses a predetermined button which is an A button disposed on the controller 4 in this example, thereby deciding the arrangement object being selected, as an object to be arranged. The arrangement object decided as an object to be arranged is arranged at a predetermined position on the floor object 202 as shown in FIG. 4. The predetermined position may be automatically determined according to the arrangement status at that time, or may be designated by the user.

After the user has performed the arrangement object selecting and arranging operations as described above, the user can complete the arranging operation and delete the list image 211 by pressing a B button.

[Already-Arranged Object Change Operation]

After the list image 211 has been deleted, operations as follows can be further performed on the arrangement object arranged as described above. That is, the position of the arrangement object can be changed, and the arrangement object can be rotated (the orientation thereof can be changed). Moreover, the arrangement object can also be “put away”. Specifically, when the user moves the cursor 205 to the already-arranged arrangement object and performs a predetermined operation, the arrangement object enters a currently selected state, and an arrangement editing operation guide 221 as shown in FIG. 5 is displayed. Each time the user presses the A button in the state shown in FIG. 5, the arrangement object is rotated in units of 90 degrees around an axis orthogonal to the floor object 202. Moreover, the user can change the arrangement position by performing a direction instruction input while pressing an X button. The user can decide the content of the rotation or the position change by pressing a B button. Moreover, the user can put away the arrangement object from the room 201 (so that the arrangement object is not arranged in the room 201) by pressing a Y button. In this case, the change is decided without the B button being pressed.
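The change operations above can be sketched as a small function that maps a button press to an updated placement. This is a hedged illustration only: the `Placement` type, the integer grid coordinates, and the string button names are hypothetical choices made for the example and are not specified in the description.

```python
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class Placement:
    """Hypothetical placement record: floor coordinates plus orientation."""
    x: int
    z: int
    rotation_deg: int = 0


def apply_edit(placement: Placement, button: str,
               direction: Tuple[int, int] = (0, 0)) -> Optional[Placement]:
    """Return the placement after one operation, or None if put away."""
    if button == "A":
        # Rotate in units of 90 degrees around the axis orthogonal to the floor.
        return replace(placement,
                       rotation_deg=(placement.rotation_deg + 90) % 360)
    if button == "X":
        # Direction input while X is held moves the object.
        dx, dz = direction
        return replace(placement, x=placement.x + dx, z=placement.z + dz)
    if button == "Y":
        # Put away: the object is no longer arranged in the room.
        return None
    # Any other button (e.g. B, which decides the change) leaves it as is.
    return placement
```

Returning `None` for the Y button models the point that putting an object away takes effect immediately, without a separate B-button confirmation.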

[Wallpaper Change Operation]

Next, an example of “wallpaper change operation” will be described. When the user performs an operation of selecting “wallpaper” from the category tabs 212, the content of the list window 213 is switched to a list of wallpapers that the user possesses at that time, as shown in FIG. 6. Regarding the wallpaper, like the case of the arrangement objects, the user can increase the number of wallpapers he/she possesses by obtaining wallpaper through predetermined game processing.

In the state immediately after the user has switched the category tab 212 to “wallpaper”, the cursor 205 is automatically positioned to the wallpaper that is set on the wall object 203 at that time. From this state, the user selects another wallpaper, e.g., a next wallpaper displayed on the right, and presses the A button, whereby the wallpaper set on the wall object 203 is changed to the selected wallpaper, as shown in FIG. 7. After the wallpaper has been changed, the list image 211 is deleted as described above when the user presses the B button.

[Partition Wall]

Incidentally, in the exemplary embodiment, as one of the arrangement objects described above, “partition wall object” (hereinafter, referred to simply as “partition wall”) is prepared. The partition wall is a plate-shaped object used for partitioning the inside of the room. FIG. 8 shows a game image in which one partition wall 231 is arranged through the aforementioned “arrangement object arranging operation”. In the exemplary embodiment, the partition wall 231 is a plate-shaped rectangular parallelepiped object having main surfaces and side surfaces. The main surfaces are outward surfaces having the largest area. Since the partition wall 231 has a plate shape, the partition wall 231 has, as the main surfaces, two surfaces parallel to each other and facing outward from each other. Hereinafter, when the term “main surface” is simply used, this term includes the two surfaces.

In the exemplary embodiment, the main surface has a size of 1 m (width) by 2 m (height) in terms of the size in the virtual game space. That is, the main surface has a rectangular shape, and the size thereof is the same as the image size of one texture described above. This also means that the partition wall 231 is an object having the same height as the wall object 203. In the exemplary embodiment, the user can arrange a plurality of partition walls 231 described above, and can design a layout in which the inside of the room is partitioned by the partition walls 231 by appropriately performing position change and/or rotation (in units of 90 degrees) of the partition walls 231 as described above. For example, it is possible to change the layout of 1DK (1 room + dining and kitchen area) to the layout of 2DK (2 rooms + dining and kitchen area). In the exemplary embodiment, each arrangement object can be rotated in units of 90 degrees. Regarding the orientation of the partition wall 231, the partition wall 231 can be arranged such that the main surface thereof is horizontal or vertical with respect to the wall surface of the wall object 203.

In terms of decorating the room, it is also conceivable that a predetermined pattern image is set on the main surface of the partition wall 231. For example, it is conceivable that the user sets a predetermined pattern image for each of the plurality of partition walls 231. However, such an individual setting operation is considered to take time. Therefore, in the exemplary embodiment, regarding a pattern image used for the main surface of the partition wall 231 (hereinafter, the pattern image is referred to as “main surface image”), a process of setting a main surface image in accordance with the wallpaper set on the wall object 203 is performed. That is, in the exemplary embodiment, regarding the main surface image of the partition wall 231, a process of automatically using the same texture as (the texture of) the wallpaper set on the wall object 203 at that time is performed. In the example of FIG. 8, at the time of arranging the partition wall 231, the partition wall 231 having the main surface on which the same texture as the wallpaper of the wall object 203 is pasted, is arranged. It is assumed that, from this state, an operation of changing the wallpaper is performed. When the user performs a predetermined operation to display the list image 211 and selects the “wallpaper” tab, a game image as shown in FIG. 9 is displayed. In FIG. 9, as in FIG. 6, the cursor 205 is positioned on the wallpaper that is set on the wall object 203 at this time. From this state, the user selects, for example, a next wallpaper displayed on the right and presses the A button by operating the cursor 205. Then, as shown in FIG. 10, the wallpaper of the wall object 203 is changed and the main surface image of the already-arranged partition wall 231 is also changed to (the image of) the selected wallpaper. That is, in accordance with the change of the wallpaper, the main surface image of the partition wall 231 is also changed to the same texture as the wallpaper.

In the exemplary embodiment, a texture of a single-color image is pasted to the side surfaces (surfaces other than the main surface) of the partition wall 231. The single color is determined based on the main surface image. In the exemplary embodiment, the color of a pixel at a predetermined position in the main surface image is determined to be used as the color of the side surfaces. For example, the color of a pixel at any of the corners of the main surface may be used. Alternatively, pixels at a plurality of positions in the main surface may be extracted, and the color that is most frequently used among the pixels may be selected as the single color for the side surfaces. In another embodiment, not only a single color but also a plurality of colors may be used for the side surfaces as long as the colors are determined based on the main surface image.
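The two ways of choosing the side-surface color described above can be sketched as follows. This is a minimal illustration under stated assumptions: the image is modeled as a list of rows of RGB tuples, and the names `corner_color` and `most_frequent_color` as well as the choice of the top-left corner are hypothetical.

```python
from collections import Counter
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]   # RGB triple (assumed pixel format)
Image = List[List[Pixel]]      # rows of pixels, indexed as image[y][x]


def corner_color(image: Image) -> Pixel:
    """Use the pixel at one corner (here the top-left, as an assumption)."""
    return image[0][0]


def most_frequent_color(image: Image,
                        positions: Sequence[Tuple[int, int]]) -> Pixel:
    """Sample pixels at the given (x, y) positions and pick the commonest."""
    counts = Counter(image[y][x] for x, y in positions)
    return counts.most_common(1)[0][0]
```

Either function returns one color, which would then be used to fill the single-color texture pasted to the side surfaces.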

[Details of Room Editing Process According to Exemplary Embodiment]

Next, the room editing process according to the exemplary embodiment will be described in more detail with reference to FIG. 11 to FIG. 19.

[Data to be Used]

First, various kinds of data to be used in this game processing will be described. FIG. 11 is a memory map showing an example of various kinds of data stored in the storage section 84 of the game apparatus 2. The storage section 84 includes a program storage area 301 and a data storage area 303. A game processing program 302 is stored in the program storage area 301. An arrangement object master 304, a wallpaper master 305, possession data 306, room construction data 307, a current mode 308, list data 309, operation data 310, and the like are stored in the data storage area 303.

The game processing program 302 is a program for executing the game processing according to the exemplary embodiment, and also includes a program code for executing the room editing process.

The arrangement object master 304 is data that defines all arrangement objects that appear in this game. FIG. 12 shows an example of the data structure of the arrangement object master 304. The arrangement object master 304 is a database including at least items such as an object ID 341, type data 342, model data 343, and object texture data 344.

The object ID 341 is an ID for uniquely identifying the corresponding arrangement object.

The type data 342 is data indicating the type of the corresponding arrangement object. Here, a supplemental description of the “type” will be given. In the exemplary embodiment, the arrangement objects are classified into “first-type objects” and “second-type objects” to be managed. In the exemplary embodiment, the first-type objects are the aforementioned partition walls 231. The second-type objects are the arrangement objects other than the partition walls 231. That is, the arrangement objects having factors linked with setting of wallpaper of the wall object 203 are classified as the first-type objects while the other arrangement objects are classified as the second-type objects.

The model data 343 is data that defines a 3D model (stereoscopic shape) of the corresponding arrangement object.

The object texture data 344 is data that is defined only for the corresponding second-type object and that defines a texture used as the appearance of the second-type object. As described above, since the appearance (main surface image) of the partition wall 231 as the first-type object can be set and changed in accordance with the wallpaper of the wall object 203, the first-type object does not have unique texture data. For example, the first-type object may be given a Null value as the object texture data 344. According to the above data structure, the appearance of each first-type object is rendered based on the setting of the wallpaper while the appearance of each second-type object is rendered by using the texture that is uniquely set on the second-type object.
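One possible in-memory shape for a record of the arrangement object master 304 is sketched below. The field names follow the items named in the description (object ID, type data, model data, object texture data); the Python types, the file-name strings, and the helper `uses_wallpaper` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Optional


class ObjectType(Enum):
    FIRST = 1    # partition walls: appearance follows the set wallpaper
    SECOND = 2   # all other arrangement objects: carry their own texture


@dataclass(frozen=True)
class ArrangementObjectRecord:
    object_id: int
    type_data: ObjectType
    model_data: str                      # assumed: a reference to a 3D model
    object_texture_data: Optional[str]   # None plays the role of the Null value


def uses_wallpaper(record: ArrangementObjectRecord) -> bool:
    """True when the object is rendered with the wallpaper texture."""
    return record.type_data is ObjectType.FIRST


# A tiny example master keyed by object ID (contents are made up).
OBJECT_MASTER: Dict[int, ArrangementObjectRecord] = {
    1: ArrangementObjectRecord(1, ObjectType.FIRST, "partition_wall.mdl", None),
    2: ArrangementObjectRecord(2, ObjectType.SECOND, "sofa.mdl", "sofa.png"),
}
```

Keeping the texture field empty for first-type objects means that the wallpaper setting is the single source of their appearance.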

Referring back to FIG. 11, the wallpaper master 305 is data that defines all the wallpapers that appear in this game. FIG. 13 shows an example of the data structure of the wallpaper master 305. The wallpaper master 305 is a database having at least items such as a wallpaper ID 351 and wallpaper texture data 352. The wallpaper ID 351 is an ID for uniquely identifying each wallpaper. The wallpaper texture data 352 is data of a two-dimensional image corresponding to each wallpaper. As described above, in the exemplary embodiment, this image is an image having a size of 1 m by 2 m in terms of the size in the virtual game space.

Referring back to FIG. 11, the possession data 306 is data indicating the arrangement objects and the wallpapers that the user possesses. In the possession data 306, object IDs 341 and wallpaper IDs 351 according to the possession status of the user are stored. If the user is allowed to possess a plurality of identical objects, the number of the objects may be stored in the possession data 306.

The room construction data 307 is data indicating the current content (layout, etc.) of the room 201. FIG. 14 shows an example of the data structure of the room construction data 307. The room construction data 307 includes at least room size data 371, set wallpaper data 372, and arrangement position data 373. The room size data 371 is data that defines the size of the room 201 (in a virtual three-dimensional space). For example, if the user is allowed to designate and change the size of the room 201 (according to the progress status of the game), the size designated by the user is stored. In the exemplary embodiment, a floor object 202 having a predetermined size is generated and arranged in accordance with the content designated in the room size data 371, and wall objects 203 and a ceiling object are generated and arranged in accordance with the floor object 202. Thus, (the framework of) the room 201 is constructed.

The set wallpaper data 372 is data that designates the wallpaper to be set (pasted) on the wall object 203. Moreover, as described above, in the exemplary embodiment, the set wallpaper data 372 also serves as data that designates the main surface image of the partition wall 231.

The arrangement position data 373 is data in which the arrangement positions and orientations of the arrangement objects (including both the first-type objects and the second-type objects) already arranged in the room 201, are stored. That is, the arrangement position data 373 is data in which the current content of the specific layout of the room 201 is stored. Specifically, the arrangement position data 373 includes the object IDs 341 of the arrangement objects being currently arranged, coordinate information indicating the arrangement positions, information indicating the arrangement orientations (e.g., rotation angles), and the like.

The room construction data 307 may include, in addition to the above data, information that designates textures used for the floor object 202 and the ceiling object, for example.
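The room construction data 307 and the derivation of the wall objects from the floor size can be sketched as follows. This is an assumption-laden illustration: the class names, the `(width, depth)` representation of the room size, and the helper `wall_widths` are hypothetical, while the 2 m wall height comes from the description.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

WALL_HEIGHT_M = 2.0  # wall height stated in the description


@dataclass
class PlacedObject:
    object_id: int
    position: Tuple[float, float]   # assumed: coordinates on the floor
    rotation_deg: int = 0


@dataclass
class RoomConstructionData:
    room_size: Tuple[float, float]  # assumed: (width, depth) in meters
    set_wallpaper_id: int           # also designates the partition wall image
    arrangement_positions: List[PlacedObject] = field(default_factory=list)


def wall_widths(room_size: Tuple[float, float]) -> Dict[str, float]:
    """Width of the wall along each of the four sides of the floor."""
    width, depth = room_size
    return {"upper": width, "lower": width, "left": depth, "right": depth}
```

With a floor of 3 m by 4 m, the upper and lower walls are 3 m wide and the left and right walls are 4 m wide, each 2 m high.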

Referring back to FIG. 11, the current mode 308 is data indicating the current processing state in the room editing process. In the exemplary embodiment, the “processing state” in the room editing process is roughly classified into three “modes”. The first mode is “list display mode” indicating a state where the list image 211 as shown in FIG. 3 or FIG. 6 is displayed. In this mode, the user is allowed to select an arrangement object to be arranged and set wallpaper, through the list window 213. The second mode is “arrangement change mode” indicating a state where an arrangement change operation is performed on an already-arranged arrangement object as shown in FIG. 5. In this mode, the user is allowed to move, rotate, and put away the already-arranged arrangement object. The third mode is “waiting mode” indicating a state other than the above states, that is, a state as shown in FIG. 2. In this mode, the user is allowed to perform an operation for shifting to the “list display mode” and an operation for shifting to the “arrangement change mode”. In addition, the user is allowed to operate the virtual camera to change the angle at which the room 201 is captured. Moreover, the user is allowed to perform an instruction to end the room editing process.

The list data 309 is data that defines the content to be displayed on the list image 211, in particular, the list window 213. The list data 309 is generated based on the possession data 306. That is, the content displayed on the list window 213 includes only the arrangement objects and the wallpapers possessed by the user. The list data 309 has, stored therein, object IDs 341 and wallpaper IDs 351 of the objects to be displayed on the list window 213, information that defines the display order of the objects (the display positions of images of the arrangement objects in the list window 213), and the like. Moreover, the list data 309 also has, stored therein, information indicating the category to be currently displayed (hereinafter, referred to as “display category information”) among the categories displayed as the category tabs 212 described above. In the exemplary embodiment, the categories are “furniture” and “wallpaper”, and the default of the display category information is “furniture”.
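Generating the list data 309 from the possession data 306 can be sketched as follows. This is a hedged example: the dictionary layout, the function name `build_list_data`, and the use of ascending ID order as the display order are assumptions, since the description only states that a display order is stored.

```python
from typing import Dict, Iterable, List, Union

ListData = Dict[str, Union[str, List[int]]]


def build_list_data(possessed_object_ids: Iterable[int],
                    possessed_wallpaper_ids: Iterable[int]) -> ListData:
    """Build list data holding only what the user currently possesses."""
    return {
        # Display order: ascending ID is an assumption made for this sketch.
        "furniture": sorted(possessed_object_ids),
        "wallpaper": sorted(possessed_wallpaper_ids),
        # The default display category is "furniture" per the description.
        "display_category": "furniture",
    }
```

Because the list is rebuilt from the possession data, items the user does not possess never appear in the list window.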

The operation data 310 is data indicating the contents of operations performed on the controller 4. In the exemplary embodiment, for example, the operation data 310 includes data indicating the operation states on the operation sections such as the analog stick and the buttons disposed on the controller 4. The contents of the operation data 310 are updated in a predetermined cycle on the basis of a signal from the controller 4.

In addition to the above, various kinds of data (e.g., sound data, etc.) to be used in the game processing are stored in the storage section 84 according to need.

[Details of Processes Executed by Processor 81]

Next, the room editing process according to the exemplary embodiment will be described in detail. FIG. 15 is a flowchart showing the room editing process in detail. This process is started when an operation of editing the room 201 is performed from a game menu or the like, for example. A process loop of steps S2 to S13 shown in FIG. 15 is repeatedly executed for each frame.

The flowchart is merely an example of the processing procedure. Therefore, the order of process steps may be changed as long as the same result is obtained. In addition, the values of variables, and thresholds used in determination steps, are also mere examples, and other values may be employed according to need.

[Preparation Process]

With reference to FIG. 15, first, in step S1, the processor 81 executes a preparation process. FIG. 16 is a flowchart showing the preparation process in detail. In FIG. 16, first, in step S21, the processor 81 generates list data 309 as described above on the basis of the possession data 306 (at that time), and stores the list data 309 in the storage section 84.

Next, in step S22, the processor 81 sets “waiting mode” in the current mode 308. This is the end of the preparation process.

[Rendering Process]

Referring back to FIG. 15, in step S2, the processor 81 executes a rendering process. Specifically, first, the processor 81 performs a process of constructing a room 201 in the virtual space on the basis of the room construction data 307. That is, the processor 81 generates a floor object 202 having a predetermined size on the basis of the room size data 371, and arranges it in the virtual space. The processor 81 also generates wall objects 203 and a ceiling object, and appropriately arranges them at predetermined positions based on the position of the floor object 202. Moreover, the processor 81 also performs a process of pasting the wallpaper designated by the set wallpaper data 372 to the wall objects 203. Next, the processor 81 arranges the arrangement objects designated by the arrangement position data 373 on the floor object 202 on the basis of the positions and orientations indicated by the arrangement position data 373. At this time, the processor 81 determines, based on the type data 342, whether each of the arrangement objects included in the arrangement position data 373 is a first-type object or a second-type object. Regarding the first-type object, i.e., the partition wall 231, the processor 81 performs a process of pasting the wallpaper designated by the set wallpaper data 372 to the partition wall 231, as a main surface image of the partition wall 231. Furthermore, the processor 81 determines a rendering color of the side surfaces of the partition wall 231 on the basis of some pixels in the main surface image as described above, and pastes a texture of a single-color image consisting of the determined color to the side surfaces. Meanwhile, regarding the second-type object, the processor 81 pastes a texture based on the object texture data 344 set on the corresponding arrangement object, to the arrangement object. After the room 201 has been constructed in the virtual space as described above, the processor 81 captures an image of the virtual space with the virtual camera. Furthermore, the processor 81 generates, according to need, a game image in which a predetermined image according to the current mode 308 (e.g., the list image 211 in the case of the list display mode) is synthesized with the image obtained by capturing the room 201 with the virtual camera, and outputs the game image to the display section 5.
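The texture selection in the rendering process above can be sketched as follows. This is an illustrative sketch only, not the actual rendering code: the function name `resolve_textures`, the dictionary-based masters, and the `"first"`/`"second"` type strings are hypothetical, and side-surface coloring and camera capture are omitted.

```python
from typing import Dict, List, Optional, Tuple

# Assumed shapes: wallpaper ID -> texture, object ID -> (type, own texture).
WallpaperMaster = Dict[int, str]
ObjectMaster = Dict[int, Tuple[str, Optional[str]]]


def resolve_textures(set_wallpaper_id: int,
                     wallpaper_master: WallpaperMaster,
                     object_master: ObjectMaster,
                     arranged_object_ids: List[int]
                     ) -> List[Tuple[str, Optional[str]]]:
    """Return (target, texture) pairs for the walls and arranged objects."""
    wallpaper_texture = wallpaper_master[set_wallpaper_id]
    plan: List[Tuple[str, Optional[str]]] = [("wall", wallpaper_texture)]
    for object_id in arranged_object_ids:
        object_type, own_texture = object_master[object_id]
        if object_type == "first":
            # First-type objects (partition walls) reuse the wallpaper texture.
            plan.append((f"object:{object_id}", wallpaper_texture))
        else:
            # Second-type objects use their own object texture data.
            plan.append((f"object:{object_id}", own_texture))
    return plan
```

Because the partition wall reads the same set wallpaper value as the wall objects on every frame, changing that single value changes both appearances together, which is the behavior shown in FIG. 10.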

[Determination of Current Mode]

Next, in step S3, the processor81determines whether or not the current mode308is the list display mode. When the current mode308is the list display mode (YES in step S3), the processor81executes a list mode process in step S4. The list mode process will be described later in detail.

When the current mode308is not the list display mode (NO in step S3), the processor81determines in step S5whether or not the current mode308is the arrangement change mode. When the current mode308is the arrangement change mode (YES in step S5), the processor81executes an arrangement change process in step S6. The arrangement change process will be described later in detail.

[Determination on Presence/Absence of Mode Switch Operation]

When the current mode 308 is not the arrangement change mode (NO in step S5), the processor 81 acquires the operation data 310 in step S7. Next, in step S8, the processor 81 determines whether or not an operation for displaying the list image 211 (list display operation) has been performed. When the list display operation has been performed (YES in step S8), the processor 81 sets “list display mode” in the current mode 308 in step S9, and returns to step S2.

When the list display operation has not been performed (NO in step S8), the processor 81 determines in step S10 whether or not an operation of selecting any of the already-arranged arrangement objects has been performed. When the selecting operation has been performed (YES in step S10), the processor 81 sets “arrangement change mode” in the current mode 308 in step S11, and returns to step S2.

When the operation of selecting any already-arranged arrangement object has not been performed (NO in step S10), the processor 81 determines in step S12 whether or not an operation of ending the room editing process has been performed. When the ending operation has been performed (YES in step S12), the processor 81 ends the room editing process. When the ending operation has not been performed (NO in step S12), the processor 81 executes another game process according to an operation content in step S13. For example, a process of changing the position and/or the orientation of the virtual camera is executed according to the operation content.
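
The branching of steps S3 to S13 amounts to a small mode dispatcher. The following Python sketch shows one pass through that branching. The function name `edit_step`, the string constants, and the representation of the operation data as a set of flags are all assumptions made for illustration.

```python
WAIT = "wait"
LIST_DISPLAY = "list display"
ARRANGEMENT_CHANGE = "arrangement change"


def edit_step(mode, op):
    """Return (next_mode, action) for one pass through steps S3 to S13.

    `op` is a hypothetical set of flags decoded from the operation data.
    """
    if mode == LIST_DISPLAY:              # S3 -> S4
        return mode, "list_mode_process"
    if mode == ARRANGEMENT_CHANGE:        # S5 -> S6
        return mode, "arrangement_change_process"
    if "list_display" in op:              # S8 -> S9, back to rendering (S2)
        return LIST_DISPLAY, "render"
    if "select_object" in op:             # S10 -> S11, back to rendering (S2)
        return ARRANGEMENT_CHANGE, "render"
    if "end" in op:                       # S12: end the room editing process
        return mode, "end"
    return mode, "other_game_process"     # S13: e.g. virtual camera control
```

For example, `edit_step(WAIT, {"select_object"})` switches to the arrangement change mode, and the next call with that mode dispatches to the arrangement change process regardless of the flags.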

[Process in List Display Mode]

Next, the list mode process in the above step S4 will be described. FIGS. 17 and 18 are flowcharts showing the list mode process in detail. First, in step S31, the processor 81 acquires the operation data 310. Next, in step S32, the processor 81 determines whether or not a display category change operation has been performed on the category tabs 212. When the display category change operation has been performed (YES in step S32), the processor 81 executes a process of switching between the categories of the category tabs 212 in step S33. Specifically, the processor 81 changes the content of the display category information included in the list data 309, according to the operation content. In this embodiment, the category is switched between “furniture” and “wallpaper”. Thereafter, the process proceeds to step S42 described later.

Meanwhile, as a result of the determination in step S32, if the display category change operation has not been performed on the category tabs 212 (NO in step S32), the processor 81 determines in step S34 whether or not an operation of deciding selection of any selected item (arrangement object or wallpaper) in the list window 213 has been performed. In this embodiment, this operation is pressing the A button. When the selection deciding operation has been performed (YES in step S34), the processor 81 determines in step S35 whether or not the currently displayed category is “wallpaper”. When a result of the determination is “wallpaper” (YES in step S35), this means that the selection deciding operation for any wallpaper has been performed. In this case, in step S36, the processor 81 sets the selected and decided wallpaper in the set wallpaper data 372 of the room construction data 307. If the rendering process as described above is performed later, the wall objects 203 and the partition wall 231 will be rendered by using the selected and decided wallpaper.

Meanwhile, when the currently displayed category is not “wallpaper” (NO in step S35), this means that the currently displayed category is “furniture” and the selection deciding operation has been performed on any arrangement object. In this case, in step S37, the processor 81 updates the content of the arrangement position data 373 such that the selected and decided arrangement object is arranged at a predetermined position on the floor object 202, with an orientation set as the default. Thus, if the rendering process is performed later, the room 201 in which the selected and decided arrangement object is arranged will be constructed and rendered. Thereafter, the process proceeds to step S42 described later.

Meanwhile, as a result of the determination in step S34, if the selection deciding operation has not been performed on any selected item in the list window 213 (NO in step S34), the processor 81 determines, in step S38 shown in FIG. 18, whether or not an operation for ending the list mode has been performed. As a result of the determination, if the ending operation has not been performed (NO in step S38), the processor 81 executes as appropriate another process according to an operation content in step S41. For example, a process of moving the cursor 205 (in the state where selection has yet to be decided), a process of scrolling the list window 213, or the like is executed.

Next, in step S42, the processor 81 generates a list image 211 according to the currently set category, and performs setting for displaying the list image 211 as a game image. For example, instruction setting for synthesizing the generated list image 211 with the image captured by the virtual camera, is performed. Thereafter, the list mode process is ended.

Meanwhile, as a result of the determination in step S38, if the ending operation has been performed (YES in step S38), the processor 81 sets “wait mode” in the current mode 308 in step S39. Next, in step S40, the processor 81 performs display setting for deleting the list image 211. Thereafter, the list mode process is ended. This is the end of the description of the list mode process.
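
The list mode process of steps S31 to S42 can be sketched in Python as follows. The `state` and `op` dictionaries, their keys, and the default position `(0, 0)` are hypothetical stand-ins for the list data 309, the room construction data 307, and the "predetermined position" mentioned above. Cursor movement and scrolling (step S41) are omitted.

```python
def list_mode_step(state, op):
    """One pass through steps S31 to S42. Mutates and returns `state`.

    `op` is a hypothetical dict of decoded operation data, e.g.
    {"change_category": "wallpaper"} or {"decide": "striped"}.
    """
    if "change_category" in op:                      # S32 -> S33
        state["category"] = op["change_category"]
    elif "decide" in op:                             # S34
        if state["category"] == "wallpaper":         # S35 -> S36
            state["set_wallpaper"] = op["decide"]
        else:                                        # S37: place furniture
            state["arrangement"].append(
                {"object": op["decide"], "pos": (0, 0), "rot": 0})
    elif op.get("end_list"):                         # S38 -> S39, S40
        state["mode"] = "wait"
        state["show_list"] = False
        return state
    # S41 (cursor movement, scrolling) is omitted here.
    state["show_list"] = True                        # S42
    return state
```

Because the list stays open after a wallpaper is decided, repeated `{"decide": ...}` operations in the "wallpaper" category simply overwrite `set_wallpaper`, which matches the quick wallpaper switching described for this mode.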

[Arrangement Change Process]

Next, the arrangement change process in the above step S6 will be described in detail. FIG. 19 is a flowchart showing the arrangement change process in detail. First, in step S51, the processor 81 acquires the operation data 310. Next, in step S52, the processor 81 determines whether or not an operation of moving an arrangement object (changing the arrangement position) has been performed. When the moving operation has been performed (YES in step S52), the processor 81 performs, in step S53, a process of changing the arrangement position of the currently selected arrangement object, according to the operation content. That is, the processor 81 appropriately updates the content of the arrangement position data 373 (the arrangement position of the currently selected arrangement object) on the basis of the operation content. Thereafter, the process proceeds to step S61 described later.

Meanwhile, as a result of the determination in step S52, if the moving operation has not been performed (NO in step S52), the processor 81 determines in step S54 whether or not an operation of rotating the orientation of the currently selected arrangement object has been performed. As a result of the determination, if the rotating operation has been performed (YES in step S54), the processor 81 executes, in step S55, a process of rotating the currently selected arrangement object by 90 degrees in a predetermined direction, according to the operation content. That is, the processor 81 appropriately updates the content of the arrangement position data 373 (the orientation of the currently selected arrangement object) on the basis of the operation content. Thereafter, the process proceeds to step S61 described later.

Meanwhile, as a result of the determination in step S54, if the rotating operation has not been performed (NO in step S54), the processor 81 determines in step S56 whether or not an operation of putting away the currently selected arrangement object has been performed. As a result of the determination, if the operation of putting away the arrangement object has been performed (YES in step S56), the processor 81 executes, in step S57, a process of putting away the currently selected arrangement object from the room 201. Specifically, the processor 81 deletes, from the arrangement position data 373, data of the arrangement object to be put away, thereby putting away the currently selected arrangement object from the room 201. Thereafter, the process proceeds to step S59 described later.

Meanwhile, as a result of the determination in step S56, if the operation of putting away the arrangement object has not been performed (NO in step S56), the processor 81 determines in step S58 whether or not an operation of deciding the change regarding movement or rotation has been performed. When the operation of deciding the change has been performed (YES in step S58), the processor 81 sets “wait mode” in the current mode 308 in step S59. In the subsequent step S60, the processor 81 deletes the arrangement editing operation guide 221 from the screen. Thereafter, the arrangement change process is ended.

Meanwhile, as a result of the determination in step S58, if the operation of deciding the change has not been performed (NO in step S58), the processor 81 generates an image of the arrangement editing operation guide 221 in step S61. Then, the processor 81 performs display setting so as to display the arrangement editing operation guide 221 on the screen. For example, instruction setting of synthesizing the generated image of the arrangement editing operation guide 221 with the image captured by the virtual camera, is performed. Thereafter, the arrangement change process is ended.
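
The arrangement change process of steps S51 to S61 can be sketched in the same style. The dictionary layout (`arrangement` keyed by a hypothetical object identifier, with `pos` and `rot` entries) is an assumption standing in for the arrangement position data 373, and rotation is modeled as adding 90 degrees modulo 360.

```python
def arrangement_change_step(state, op):
    """One pass through steps S51 to S61. Mutates and returns `state`."""
    selected = state["selected"]
    placed = state["arrangement"]   # stands in for arrangement position data 373
    if "move_to" in op:                        # S52 -> S53
        placed[selected]["pos"] = op["move_to"]
    elif op.get("rotate"):                     # S54 -> S55: rotate by 90 degrees
        placed[selected]["rot"] = (placed[selected]["rot"] + 90) % 360
    elif op.get("put_away") or op.get("decide"):
        if op.get("put_away"):                 # S56 -> S57: delete the entry
            del placed[selected]
            state["selected"] = None
        state["mode"] = "wait"                 # S59
        state["show_guide"] = False            # S60
        return state
    state["show_guide"] = True                 # S61
    return state
```

Both putting an object away and deciding a change end in steps S59 and S60, so the sketch shares that tail between the two branches.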

This is the end of the detailed description of the room editing process according to the exemplary embodiment.

As described above, in the exemplary embodiment, setting of the wallpaper of the wall object 203 and setting of the main surface image of the partition wall 231 are linked with each other. This allows the user to edit a room so that its atmosphere has a sense of unity, through a simple operation. In particular, changing the wallpaper is considered to have a great effect on the atmosphere of the room, and using the same pattern as the wallpaper for the partition wall 231 unifies the overall atmosphere of the room. According to the process of the exemplary embodiment, it is possible to simplify the operation of providing the atmosphere of the room with a sense of unity, thereby improving convenience for the user. Moreover, the user can select various types of wallpapers one after another by pressing the A button with the wallpaper list window 213 open. Thus, the user can see the atmosphere of the room change quickly as the wallpaper changes, with an impression similar to zapping through TV channels, which further improves convenience for the user.

[Modifications]

In the above exemplary embodiment, regarding setting of wallpaper, common data (set wallpaper data 372) is used for both the wall object 203 and the partition wall 231. However, in another embodiment, the wall object 203 and the partition wall 231 may be provided with individual pieces of data for designating wallpaper. In this case, the respective pieces of data may designate the same wallpaper according to the wallpaper change operation.

In the above exemplary embodiment, a two-dimensional image, which is a still picture image, is used as the texture for wallpaper. However, an animation texture (so-called “moving wallpaper”) may be used as the texture for wallpaper. The aforementioned processes can also be applied to the “moving wallpaper”. That is, two types of wallpapers, the “still picture wallpaper” and the “moving wallpaper”, may be used (so as to be selectable by the user). When the user selects the “moving wallpaper”, the selected “moving wallpaper” is set on both the wall object 203 and the main surface image of the partition wall 231. In this case, regarding the reproduction speed of the animation of the “moving wallpaper”, the same reproduction speed may be set for both the wall object 203 and the partition wall 231.
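
One simple way to give the wall object and the partition wall the same reproduction speed is to derive the animation frame for both from a single shared clock. The sketch below assumes a looping animation with a fixed frame rate. The function names and parameters are hypothetical, and the description does not state how the reproduction speed is actually implemented.

```python
def animation_frame(elapsed_seconds, frames_per_second, frame_count):
    """Index of the frame to show for a looping animation texture."""
    return int(elapsed_seconds * frames_per_second) % frame_count


def frames_for_surfaces(elapsed_seconds, frames_per_second, frame_count, surfaces):
    """Give every listed surface the same frame index from one shared clock."""
    frame = animation_frame(elapsed_seconds, frames_per_second, frame_count)
    return {surface: frame for surface in surfaces}
```

Because every surface reads the same frame index, the animation on the partition wall cannot drift relative to the animation on the walls.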

In the above “moving wallpaper”, an image that is temporarily displayed in a random position at a random timing may be used. In this case, an area including the wall surfaces of the wall objects 203 and the main surface of the partition wall 231 may be used as a target area on which the random position is to be set. For example, suppose that, in “moving wallpaper” representing “thunderstorm”, an image of “lightning” is temporarily displayed in a random position at a random timing. When determining the display position of the “lightning”, the wall surfaces of the wall objects 203 and the main surface of the partition wall 231 may be combined into one area and the display position may be determined within this area, instead of determining the display position only within the wall surfaces of the wall objects 203 or only within the main surface of the partition wall 231.
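
Choosing a random position within the combined area can be sketched as follows. The approach shown, picking a surface with probability proportional to its area and then a uniform point on it, is one possible way of treating the surfaces as a single target area and is an assumption, not the method stated in the embodiment. The surface names and the `(name, width, height)` tuple layout are hypothetical.

```python
import random


def pick_display_position(surfaces, rng):
    """Pick a uniformly random point over the combined area of all surfaces.

    surfaces: list of (name, width, height) rectangles, e.g. wall surfaces
              and the main surface of the partition wall.
    rng:      a random.Random instance.
    Returns (surface_name, u, v) with 0 <= u <= width and 0 <= v <= height.
    """
    areas = [w * h for _, w, h in surfaces]
    # Larger surfaces are chosen more often, so the point is uniform
    # over the union of the rectangles.
    name, w, h = rng.choices(surfaces, weights=areas, k=1)[0]
    return name, rng.uniform(0, w), rng.uniform(0, h)
```

With this weighting, a small partition wall receives the temporarily displayed image less often than a large wall, in proportion to its share of the combined area.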

In the above exemplary embodiment, the same wallpaper is set on all the wall objects 203. In another embodiment, for example, different types of wallpapers may be set on the wall objects 203 arranged at the respective sides of the room 201. For example, different types of wallpapers may be set on the right-side wall object 203 (right wall) and the left-side wall object 203 (left wall) of the room 201. In this case, also regarding the main surface image of the partition wall 231, different wallpaper images may be used for one surface and the other surface of the main surfaces of the partition wall 231. For example, FIG. 20 schematically shows the room 201 viewed from above. It is assumed that “wallpaper A” and “wallpaper B” are set on the left wall and the right wall of the room 201, respectively. It is assumed that the partition wall 231 is arranged at an orientation such that the main surfaces thereof are respectively parallel to the left and right walls of the room 201. In this case, regarding wallpaper to be used for each of the main surface images of the partition wall 231, the wallpaper of the wall object 203 to which the main surface is opposed, may be used. In the example of FIG. 20, the “wallpaper A” may be used for the main surface, of the partition wall 231, opposing the left wall while the “wallpaper B” may be used for the main surface, of the partition wall 231, opposing the right wall. In this case, the color of the side surface of the partition wall 231 may be determined on the basis of a pixel in one of the main surfaces of the partition wall 231. Moreover, the side surface may be divided into two areas, i.e., left and right areas (see FIG. 21), and the color of the left area may be determined based on a color used in the “wallpaper A” while the color of the right area may be determined based on a color used in the “wallpaper B”.
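
The per-surface selection in this modification can be sketched as a lookup from each main surface of the partition wall to the wall it faces. The sketch assumes a coordinate system in which the left wall lies in the negative x direction, so a main surface whose outward normal has a negative x component is opposed to the left wall. The function names, the face names, and the representative-color table are hypothetical.

```python
def face_wallpapers(wall_wallpapers, face_normals_x):
    """Map each main surface of the partition wall to the facing wall's wallpaper.

    wall_wallpapers: {"left": wallpaper, "right": wallpaper}
    face_normals_x:  {face_name: x component of the outward normal}
    """
    return {
        face: wall_wallpapers["left" if nx < 0 else "right"]
        for face, nx in face_normals_x.items()
    }


def side_area_colors(wall_wallpapers, representative_color):
    """Color the left and right halves of the side surface separately."""
    return {
        "left_area": representative_color[wall_wallpapers["left"]],
        "right_area": representative_color[wall_wallpapers["right"]],
    }
```

For the arrangement of FIG. 20, the surface facing the left wall receives "wallpaper A" and the surface facing the right wall receives "wallpaper B", and the two halves of the side surface take a color from the corresponding wallpaper.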

In the above exemplary embodiment, a case where the series of processes related to the room editing process are performed in a single game apparatus 2 has been described. However, in another embodiment, the series of processes above may be performed in an information processing system that includes a plurality of information processing apparatuses. For example, in an information processing system that includes a terminal side apparatus and a server side apparatus capable of communicating with the terminal side apparatus via a network, a part of the series of processes above may be performed by the server side apparatus. Alternatively, in an information processing system that includes a terminal side apparatus and a server side apparatus capable of communicating with the terminal side apparatus via a network, a main process of the series of processes above may be performed by the server side apparatus, and a part of the series of the processes may be performed by the terminal side apparatus. Still alternatively, in the information processing system, a server side system may include a plurality of information processing apparatuses, and a process to be performed in the server side system may be divided and performed by the plurality of information processing apparatuses. In addition, a so-called cloud gaming configuration may be adopted. For example, the game apparatus 2 may be configured to send operation data indicating a user's operation to a predetermined server, and the server may be configured to execute various types of game processing and stream the execution results as video/audio to the game apparatus 2.

While the exemplary embodiment has been described in detail, the foregoing description is in all aspects illustrative and not restrictive. It is to be understood that numerous other modifications and variations can be devised without departing from the scope of the exemplary embodiments.

Claims

- A computer-readable non-transitory storage medium having stored therein a game program causing a computer of an information processing apparatus to perform operations comprising: processing operation data on the basis of an operation input; positioning a wall object in a room in a virtual space; selecting, based on the operation data, an object from among a plurality of objects including a first-type object and a second-type object, and arranging, moving, or deleting the selected object in the room; selecting, based on the operation data, a wallpaper to be set on the wall object; and generating an image of the virtual space that includes the wall object, the first-type object, and the second-type object by executing, for a given frame, a render process that includes: selecting, based on the wallpaper that has been selected using the operation data, a wallpaper texture and texture mapping the wallpaper texture to the wall object, automatically selecting, based on the wallpaper that has been set, an object texture that corresponds to the wallpaper texture, and texture mapping the object texture to the first-type object that is arranged in the room in the virtual space, and texture mapping a texture that is different from the wallpaper that has been set to the second-type object that is arranged in the room in the virtual space.

- The storage medium according to claim 1, wherein the arranging, moving, or deleting of the selected object is performed on a floor object constructing a floor of the room.

- The storage medium according to claim 1, wherein the first-type object has a main surface, and the main surface of the first-type object is rendered by using the object texture corresponding to the wallpaper texture.

- The storage medium according to claim 3, wherein the main surface of the first-type object has a rectangular shape.

- The storage medium according to claim 3, wherein the wall object is positioned such that at least one of main surfaces of the wall object is along a first direction in the virtual space, and the first-type object is arranged or moved such that the main surface of the first-type object is along the first direction or a second direction perpendicular to the first direction.

- The storage medium according to claim 3, wherein the render process includes using a plurality of instances of the wallpaper texture arranged side by side on a wall surface of the wall object.

- The storage medium according to claim 1, wherein the first-type object has a height, in the virtual space, which is equal to a height of the wall object.

- The storage medium according to claim 1, wherein the first-type object has two main surfaces that are parallel to each other and facing outward from each other, and the two main surfaces are rendered by using the object texture that corresponds to the set wallpaper.

- The storage medium according to claim 1, wherein the operations further comprise: calculating a color based on a color of any pixel in the wallpaper texture used for a main surface of the first-type object, and rendering a side surface, of the first-type object, which is different from the main surface of the first-type object, by using an image that includes the calculated color.

- The storage medium according to claim 1, wherein the operations further comprise two types of wallpapers including a still-picture wallpaper and an animation wallpaper, wherein the selection of the wallpaper to be set on the wall object is based on one of the two types of presented wallpapers; wherein the animation includes an image that is temporarily displayed at a predetermined timing, and as part of the render process when the animation wallpaper has been selected, obtaining a display target area based on a main surface of the wall object and a main surface of the first-type object; and determining a position in the display target area as a display position of the image that is temporarily displayed.

- A game system comprising: a user input device; and a game apparatus that includes at least one processor being configured to execute: process operation data from the operation section; position a wall object of a room in a virtual space; select, based on the operation data, an object from among a plurality of objects including a first-type object and a second-type object, and arranging, moving, or deleting the selected object in the room; select . . . based on the operation data, a wallpaper to be set on the wall object; generate an image of the virtual space that includes the wall object, the first-type object, and the second-type object by executing, for a given frame, a render process that includes: selection, based on the wallpaper that has been selected using the operation data, of a wallpaper texture and texture mapping the wallpaper texture to the wall object, automatic selection, based on the wallpaper that has been set, of an object texture that corresponds to the wallpaper texture, and texture mapping the object texture to the first-type object that is arranged in the room in the virtual space, and texture map of a texture that is different from the wallpaper that has been set to the second-type object that is arranged in the room in the virtual space.

- A game apparatus comprising: at least one hardware processor and a memory coupled thereto, the hardware processor configured to perform operations comprising: processing operation data on the basis of an operation input; positioning a wall object in a room in a virtual space; selecting, based on the operation data, an object from among a plurality of objects including a first-type object and a second-type object, and arranging, moving, or deleting the selected object in the room; selecting, based on the operation data, a wallpaper to be set on the wall object; and generating an image of the virtual space that includes the wall object, the first-type object, and the second-type object by executing, for a given frame, a render process that includes: selecting, based on the wallpaper that has been selected using the operation data, a wallpaper texture and texture mapping the wallpaper texture to the wall object, automatically selecting, based on the wallpaper that has been set, an object texture that corresponds to the wallpaper texture, and texture mapping the object texture to the first-type object that is arranged in the room in the virtual space, and texture mapping a texture that is different from the wallpaper that has been set to the second-type object that is arranged in the room in the virtual space.

- A game processing method comprising: processing operation data on the basis of an operation input; positioning a wall object in a room in a virtual space; selecting, based on the operation data, an object from among a plurality of objects including a first-type object and a second-type object, and arranging, moving, or deleting the selected object in the room; selecting, based on the operation data, a wallpaper to be set on the wall object; and generating an image of the virtual space that includes the wall object, the first-type object, and the second-type object by executing, for a given frame, a render process that includes: selecting, based on the wallpaper that has been selected using the operation data, a wallpaper texture and texture mapping the wallpaper texture to the wall object, automatically selecting, based on the wallpaper that has been set, an object texture that corresponds to the wallpaper texture, and texture mapping the object texture to the first-type object that is arranged in the room in the virtual space, and texture mapping a texture that is not based on the wallpaper that has been set to the second-type object that is arranged in the room in the virtual space.

- The storage medium of claim 1, wherein the object texture and the wallpaper texture are based on common texture data.

- The storage medium of claim 1, wherein the object texture and the wallpaper texture are based on different pieces of texture data.

- The storage medium of claim 1, wherein the wallpaper texture and the object texture are both an animated texture.

- The storage medium of claim 16, wherein the operations further comprise: determining a target display area that is based on combining at least a main surface of the wall object and a main surface of the first-type object; and determining, within the target display area, a position at which an image for the animated texture is to be displayed.

- The storage medium of claim 17, wherein the position at which the image for the animated texture is displayed is randomized over multiple different instances.

- The storage medium of claim 17, wherein a timing at which the image for the animated texture is displayed is randomized over multiple different instances.
