U.S. Pat. No. 12,268,969

VECTOR-SPACE FRAMEWORK FOR EVALUATING GAMEPLAY CONTENT IN A GAME ENVIRONMENT

Assignee: Sony Interactive Entertainment Inc

Issue Date: November 18, 2022

Abstract

A media system employs techniques to create a framework for evaluating gameplay content in a vector-space. These techniques include monitoring frames for content streams that correspond to gameplay in a game environment, determining feature-values for features associated with the frames, mapping the content streams to position vectors in the vector-space based on the feature-values, and assigning a set of position vectors in the vector-space to an area in the game environment based on a relative proximity of the set of position vectors in the vector-space.

Description

DETAILED DESCRIPTION

As used herein, the term “user” refers to a user of an electronic device(s), and actions performed by the user in the context of computer software shall be considered to be actions to provide an input to the electronic device(s) that cause the electronic device to perform steps or operations embodied in computer software. As used herein, the terms “stream”, “content”, and/or “channel” generally refer to media content that includes visual and/or audio data. As used herein, the term “frame” refers to media frames that form part of a content stream.

As discussed above, an ever-increasing amount of diverse content presents new challenges for evaluating and indexing content as well as searching for relevant content. In the context of the video/entertainment industry, such challenges are further magnified by the evolving immersive nature of experiences, which provide a myriad of diverse features (e.g., sounds, images, videos, feedback, etc.). In the context of a game environment, users often search for videos of gameplay content to help hone their skills, watch other players of interest, identify helpful walk-through videos, and the like. However, such users typically spend a lot of time parsing through a large quantity of content to find relevant content due to the inherent limitations of text-based searches for image-based content. Accordingly, this disclosure provides techniques to create a framework in a vector-space that includes vector positions mapped to frames/content streams and to evaluate gameplay content in terms of the vector-space. With respect to evaluating gameplay content, this disclosure describes techniques to create content streams from various frames, determine user locations in a game environment, identify content streams as relevant to a particular user, and so on.

Referring to the figures, FIG. 1 illustrates a schematic diagram of an example communication environment 100. As shown, communication environment 100 includes a communication network 105 that represents a distributed collection of devices/nodes 110 interconnected by communication links 120 (and/or network segments) for exchanging data such as data packets 140 as well as transporting data to/from end nodes or client devices 130. Client devices 130 include personal computing devices, entertainment systems, game systems, laptops, tablets, mobile devices, and the like.

Communication links 120 represent wired links or shared media links (e.g., wireless links, PLC links, etc.) where certain devices/nodes (e.g., routers, servers, switches, client devices, etc.) communicate with other devices/nodes 110 based on distance, signal strength, operational status, location, etc. Those skilled in the art will understand that any number of nodes, devices, links, etc. may be included in communication network 105, and further the view illustrated by FIG. 1 is provided for purposes of discussion, not limitation.

Data packets 140 represent network traffic/messages which are exchanged over communication links 120 and between network devices 110/130 using predefined network communication protocols such as certain known wired protocols, wireless protocols (e.g., IEEE Std. 802.15.4, WiFi, Bluetooth®, etc.), PLC protocols, or other shared-media protocols where appropriate. In this context, a protocol consists of a set of rules defining how the devices or nodes interact with each other.

FIG. 2 illustrates a block diagram of an example device 200, which may represent one or more of devices 110/130 (or portions thereof). As shown, device 200 includes one or more network interfaces 210 (e.g., transceivers, antennae, etc.), at least one processor 220, and a memory 240 interconnected by a system bus 250.

Network interface(s) 210 contain the mechanical, electrical, and signaling circuitry for communicating data over communication links 120 shown in FIG. 1. Network interfaces 210 are configured to transmit and/or receive data using a variety of different communication protocols, as will be understood by those skilled in the art.

Memory 240 comprises a plurality of storage locations that are addressable by processor 220 and store software programs and data structures associated with the embodiments described herein. For example, memory 240 can include a tangible (non-transitory) computer-readable medium, as is appreciated by those skilled in the art.

Processor 220 represents components, elements, or logic adapted to execute the software programs and manipulate data structures 245, which are stored in memory 240. An operating system 242, portions of which are typically resident in memory 240 and executed by processor 220, functionally organizes the device by, inter alia, invoking operations in support of software processes and/or services executing on the device. These software processes and/or services may comprise an illustrative gameplay evaluation process/service 244. Note that while gameplay evaluation process/service 244 is shown in centralized memory 240, it may be configured to operate collectively in a distributed communication network of devices/nodes.

It will be apparent to those skilled in the art that other processor and memory types, including various computer-readable media, may be used to store and execute program instructions pertaining to the techniques described herein. Also, while the description illustrates various processes, it is expressly contemplated that various processes may be embodied as modules configured to operate in accordance with the techniques herein (e.g., according to the functionality of a similar process). Further, while the processes have been shown separately, those skilled in the art will appreciate that processes may be routines or modules within other processes. For example, processor 220 can include one or more programmable processors, e.g., microprocessors or microcontrollers, or fixed-logic processors. In the case of a programmable processor, any associated memory, e.g., memory 240, may be any type of tangible processor-readable memory, e.g., random access, read-only, etc., that is encoded with or stores instructions that can implement program modules, e.g., a module having gameplay evaluation process 244 encoded thereon. Processor 220 can also include a fixed-logic processing device, such as an application-specific integrated circuit (ASIC) or a digital signal processor that is configured with firmware comprised of instructions or logic that can cause the processor to perform the functions described herein. Thus, program modules may be encoded in one or more tangible computer-readable storage media for execution, such as with fixed logic or programmable logic, e.g., software/computer instructions executed by a processor, and any processor may be a programmable processor, programmable digital logic, e.g., a field-programmable gate array, or an ASIC that comprises fixed digital logic, or a combination thereof. In general, any process logic may be embodied in a processor or computer-readable medium that is encoded with instructions for execution by the processor that, when executed by the processor, are operable to cause the processor to perform the functions described herein.

FIG. 3 illustrates a third-person perspective view of gameplay in a game environment, represented by a frame 300. Frame 300 is part of a content stream that corresponds to the gameplay. Notably, a content stream can include any number of frames, and a collection/aggregation of frames forms a respective content stream. Here, frame 300 illustrates the third-person perspective view of a character 305 and graphically shows various features or gameplay attributes. As shown, the features include a health/status 310 (which may also represent a gameplay status), an equipment list or inventory 315, a currently selected weapon 316, a number of points 320, structures or buildings 325 (e.g., here, a castle), and the like. Notably, the features of frame 300 can also include sounds, colors or color palettes, feedback (e.g., haptic feedback, etc.), and the like. Collectively, the features of frame 300 provide important context for describing a content stream (which includes a number of frames), and further, the features provide a foundation for defining dimensions in a vector-space framework discussed in greater detail herein.
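
The features of frame 300 can be pictured as a per-frame record, and a content stream as an ordered collection of such records. The Python sketch below is a minimal illustration only. The class names `FrameFeatures` and `ContentStream`, their fields, and the mean-RGB and scalar-audio encodings are hypothetical assumptions; the disclosure does not specify a schema.

```python
from dataclasses import dataclass, field


@dataclass
class FrameFeatures:
    """Hypothetical per-frame feature record modeled on the features of FIG. 3.

    Field names and encodings are illustrative assumptions, not a defined schema."""
    frame_index: int
    health: float                                        # health/status 310
    points: int                                          # number of points 320
    selected_weapon: str                                 # currently selected weapon 316
    inventory: list[str] = field(default_factory=list)   # inventory 315
    structures: list[str] = field(default_factory=list)  # structures 325, e.g., a castle
    color_palette: tuple[float, float, float] = (0.0, 0.0, 0.0)  # assumed mean RGB
    sound_level: float = 0.0  # assumed scalar summary of the frame's audio


@dataclass
class ContentStream:
    """A content stream modeled as an ordered aggregation of frames."""
    stream_id: str
    frames: list[FrameFeatures] = field(default_factory=list)

    def add_frame(self, frame: FrameFeatures) -> None:
        self.frames.append(frame)


stream = ContentStream("stream-1")
stream.add_frame(FrameFeatures(0, health=100.0, points=320,
                               selected_weapon="sword",
                               inventory=["sword", "shield"],
                               structures=["castle"]))
print(len(stream.frames), stream.frames[0].structures)  # 1 ['castle']
```

Each field is a candidate dimension for the vector-space discussed below.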

FIG. 4 illustrates a schematic diagram of a gameplay map 400, showing frames (e.g., labeled as frame “1”, “2”, “3”, and “4”) corresponding to locations in the game environment. As mentioned above, the frames are associated with features, which, as shown, include colors indicated by the “color palette” feature, sounds, character selection, and levels. Gameplay map 400 particularly illustrates changes in feature-values corresponding to these features when, for example, a player moves between different areas or locations in the game environment.

As is appreciated by those skilled in the art, an area or a location in the game environment may be represented by multiple frames, which typically include similar feature-values for a given set of features. For example, frames representing a particular area of the game environment, such as a room in a house, will often have similar feature-values. That is, gameplay content for users in the room will often share similar features even if the underlying frames show different views of the same room. Accordingly, despite slight nuances (shown by different views of the room), the underlying frames and associated feature-values for a given set of features will be similar (e.g., similar colors, hues, sounds, objects in the room, and the like). In this fashion, frames with the same or similar feature-values for a given set of features may be assigned to or grouped within an area and/or a location in the game environment. It should be noted that the features and feature-values shown in FIG. 4 are provided for purposes of discussion, not limitation. Any number of features discussed herein may be included or excluded, as desired.

FIG. 5 illustrates a schematic diagram of a convolutional neural network (CNN) 500. CNN 500 generally represents a machine learning network and includes convolutional layers and interconnected neurons that learn how to convert input signals (e.g., images, pictures, frames, etc.) into corresponding output signals (e.g., a position vector in a vector-space).

CNN 500 may be used, for example, to extract features and corresponding feature-values for the frames in a content stream. In operation, CNN 500 receives an input signal such as a frame/image 505 and performs a convolution process on frame/image 505. During the convolution process, CNN 500 attempts to label frame/image 505 with reference to what CNN 500 learned in the past: if frame/image 505 looks like a frame/image previously characterized, a reference signal for that previous frame/image is mixed into, or convolved with, the input signal (here, frame/image 505). The resulting output signal is then passed on to the next layer. CNN 500 also performs sub-sampling functions between layers to “smooth” output signals from prior layers, reduce convolutional filter sensitivity, improve signal-to-noise ratios, and the like. Sub-sampling is typically achieved by taking an average or a maximum over a sample of the signal.
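
The convolution and sub-sampling steps can be sketched in plain Python. This is a minimal illustration that assumes a single grayscale channel and a hand-set kernel; a trained CNN such as CNN 500 learns its kernels. The function names `convolve2d` and `pool` are hypothetical. Max pooling and average pooling correspond to the "maximum" and "averages" forms of sub-sampling described above.

```python
def convolve2d(image, kernel):
    """'Valid' 2-D convolution as computed in CNN layers (technically a
    cross-correlation: the kernel is not flipped)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [sum(image[i + di][j + dj] * kernel[di][dj]
             for di in range(kh) for dj in range(kw))
         for j in range(out_w)]
        for i in range(out_h)
    ]


def pool(feature_map, size=2, reducer=max):
    """Sub-sample over non-overlapping size x size windows; reducer=max gives
    max pooling, an averaging reducer gives average pooling."""
    return [
        [reducer([feature_map[i + di][j + dj]
                  for di in range(size) for dj in range(size)])
         for j in range(0, len(feature_map[0]) - size + 1, size)]
        for i in range(0, len(feature_map) - size + 1, size)
    ]


def mean(values):
    return sum(values) / len(values)


# A hypothetical 5x5 grayscale frame with a vertical edge, and a hand-set
# vertical-edge kernel (a trained CNN would learn its kernels instead).
frame = [[0, 0, 1, 1, 1] for _ in range(5)]
edge_kernel = [[-1, 1], [-1, 1]]

feature_map = convolve2d(frame, edge_kernel)   # 4x4 map; every row is [0, 2, 0, 0]
print(pool(feature_map))                       # [[2, 0], [2, 0]]
print(pool(feature_map, reducer=mean))         # [[1.0, 0.0], [1.0, 0.0]]
```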

Generally, convolutional processes are translation invariant and, intuitively, each convolution (e.g., each application of a convolution filter) represents a particular feature of interest (e.g., a shape of an object, a color of a room, etc.). CNN 500 learns which features comprise a resulting reference signal. Notably, the strength of an output signal from each layer does not depend on where the features are located in frame/image 505, but simply on whether the features are present. Thus, CNN 500 can co-locate frames showing different views of a given area/location in the gameplay environment.
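
The position-independence described above can be checked with a small experiment. Strictly, a convolution on its own is translation-equivariant: a shifted input gives a shifted feature map. The position-independent response strength comes from pooling over positions. The sketch below is a minimal illustration under that framing. It uses a global maximum over all positions, and the helper names (`feature_response`, `blank`, `place`) and the 2x2 "object" are hypothetical.

```python
def feature_response(image, kernel):
    """Strength of a feature detector's response anywhere in the image:
    convolve (valid, unflipped), then take a global maximum over positions."""
    kh, kw = len(kernel), len(kernel[0])
    best = float("-inf")
    for i in range(len(image) - kh + 1):
        for j in range(len(image[0]) - kw + 1):
            acc = sum(image[i + di][j + dj] * kernel[di][dj]
                      for di in range(kh) for dj in range(kw))
            best = max(best, acc)
    return best


def blank(h, w):
    return [[0] * w for _ in range(h)]


def place(image, patch, top, left):
    """Return a copy of image with patch pasted at (top, left)."""
    out = [row[:] for row in image]
    for di, prow in enumerate(patch):
        for dj, v in enumerate(prow):
            out[top + di][left + dj] = v
    return out


# A hypothetical 2x2 "object" and a detector tuned to it.
obj = [[1, 2], [3, 4]]
detector = [[1, 2], [3, 4]]

view_a = place(blank(6, 6), obj, 0, 0)   # object in the top-left corner
view_b = place(blank(6, 6), obj, 3, 4)   # same object, different view/position
print(feature_response(view_a, detector), feature_response(view_b, detector))  # 30 30
```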

After multiple convolution and sub-sampling steps, the convolved/sub-sampled signal is passed to a fully connected layer for classification, resulting in an output vector 510. Fully connected, as is appreciated by those skilled in the art, means the neurons of a preceding layer are connected to every neuron in the subsequent layer. Notably, CNN 500 also includes feedback mechanisms (not shown), which typically include a validation set to check predictions and compare the predictions with the resultant classification. The predictions and prediction errors provide feedback and are used to refine the learned weights/biases. CNN 500 represents a general convolutional neural network and may include (or exclude) various other layers, processes, operations, and the like, as is appreciated by those skilled in the art.
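
A fully connected layer computes, for every output neuron, a weighted sum over all inputs plus a bias. The sketch below is minimal and assumes already-trained weights. The helpers `fully_connected` and `flatten` and every weight and bias value are hypothetical, and the feedback/training mechanism described above is not shown.

```python
def fully_connected(inputs, weights, biases):
    """Every input neuron connects to every output neuron:
    output[k] = sum_i weights[k][i] * inputs[i] + biases[k]."""
    if any(len(row) != len(inputs) for row in weights):
        raise ValueError("each weight row must match the input length")
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def flatten(feature_maps):
    """Flatten pooled 2-D feature maps into a single input vector."""
    return [v for fmap in feature_maps for row in fmap for v in row]


pooled = [[[2.0, 0.0], [2.0, 0.0]]]          # one pooled feature map
x = flatten(pooled)                          # [2.0, 0.0, 2.0, 0.0]
W = [[0.5, 0.0, 0.5, 0.0],                   # hypothetical learned weights
     [0.0, 1.0, 0.0, 1.0],
     [0.25, 0.25, 0.25, 0.25]]
b = [0.0, 0.1, -0.5]                         # hypothetical learned biases
output_vector = fully_connected(x, W, b)     # plays the role of output vector 510
print(output_vector)                         # [2.0, 0.1, 0.5]
```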

FIG. 6 illustrates a block diagram of a vector engine 605 that includes a feature extraction module 610 and a vector mapping module 620. Vector engine 605 may represent device 200 (or portions thereof) and may further operate in conjunction with CNN 500 to extract feature-values for one or more features 615 associated with frames (here, frames 1-3) for a corresponding content stream. In particular, vector engine 605 monitors frames from content streams such as the illustrated frames 1-4. For example, client devices (e.g., client devices 130 shown in FIG. 1) can generate content streams for gameplay in a game environment and communicate such content streams over a network (e.g., network 105). Vector engine 605 monitors these content streams over the communication network and extracts features using feature extraction module 610. In particular, feature extraction module 610 extracts features and corresponding feature-values from frames 1-3 for respective content streams. These features can include, for example, the illustrated features 615 such as a color palette, sounds, level, inventory, and the like.

Feature extraction module 610 passes the extracted features/feature-values to vector mapping module 620, which maps a content stream and/or a frame corresponding to the content stream to a respective position vector in a vector-space based on the extracted features/feature-values. Here, the vector-space is represented by an N-dimension vector-space 625 that includes dimensions corresponding to the features, where a position vector is mapped according to its respective feature-value(s). For example, dimension 1 may correspond to the color palette feature, dimension 2 may correspond to the sounds feature, dimension 3 may correspond to the health feature, and so on. Typically, one dimension corresponds to one feature; however, it is appreciated that any number of dimensions and any number of features may be mapped, as appropriate. In this fashion, vector engine 605 monitors frames for a content stream and maps the frames and/or the content streams (represented by the frames) to position vectors in a vector-space framework.
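
The two-stage pipeline of FIG. 6 (extract feature-values, then place each value on its own dimension) can be sketched as follows. This is a minimal illustration under stated assumptions. The dimension order, the raw frame fields (`mean_rgb`, `audio_rms`, `health`, `level`), and the normalizations are hypothetical, and in a real system the feature-values might come from a CNN such as CNN 500 rather than from precomputed fields.

```python
# Hypothetical dimension order: one dimension per feature (cf. FIG. 6).
DIMENSIONS = ("color_palette", "sounds", "health", "level")


def extract_feature_values(frame):
    """Stand-in for feature extraction module 610 (hypothetical).

    Assumes each frame is a dict that already carries raw feature data."""
    r, g, b = frame["mean_rgb"]
    return {
        "color_palette": (r + g + b) / (3 * 255),   # brightness in [0, 1]
        "sounds": frame["audio_rms"],               # assumed already in [0, 1]
        "health": frame["health"] / 100.0,          # percent -> [0, 1]
        "level": float(frame["level"]),
    }


def to_position_vector(feature_values, dimensions=DIMENSIONS):
    """Stand-in for vector mapping module 620: place each feature-value on its dimension."""
    return tuple(feature_values[name] for name in dimensions)


def map_stream(frames):
    """Map every frame of a content stream to a position vector."""
    return [to_position_vector(extract_feature_values(f)) for f in frames]


frames = [
    {"mean_rgb": (255, 255, 255), "audio_rms": 0.2, "health": 80, "level": 1},
    {"mean_rgb": (0, 0, 0), "audio_rms": 0.9, "health": 40, "level": 2},
]
vectors = map_stream(frames)
print(vectors[0])  # (1.0, 0.2, 0.8, 1.0)
```

Putting features on comparable scales matters because the later proximity tests use distances in this space. An unscaled feature would otherwise dominate.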

N-dimension vector-space 625 also shows a “query” position vector in close proximity to frame 3, indicated by a distance and an angle α. As discussed in greater detail below, the techniques herein establish a vector-space framework (e.g., N-dimension vector-space 625) and evaluate gameplay content according to the vector-space. For example, the techniques evaluate the frames of a content stream in terms of their respective position vectors in the vector-space. Evaluating the frames can include assigning position vectors to areas and/or locations in the game environment, determining a user location in the game environment based on features/feature-values extracted from user frames and proximately located position vectors, establishing criteria for storing, indexing, prioritizing, and/or ranking content streams, searching for relevant content streams based on proximate position vectors, and the like.
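
The "distance and angle α" between the query vector and a frame's position vector can be computed with Euclidean distance and the arccosine of cosine similarity. The sketch below uses those two standard measures as an assumption, since the disclosure does not fix a metric. The 2-D vectors are hypothetical.

```python
import math


def distance(u, v):
    """Euclidean distance between two position vectors."""
    return math.dist(u, v)


def angle(u, v):
    """Angle (radians) between two position vectors, via cosine similarity."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.hypot(*u) * math.hypot(*v)
    if norm == 0:
        raise ValueError("angle is undefined for a zero vector")
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    return math.acos(max(-1.0, min(1.0, dot / norm)))


query = (1.0, 1.0)     # hypothetical query position vector
frame_3 = (1.0, 0.0)   # hypothetical position vector for frame 3
print(distance(query, frame_3))                       # 1.0
print(round(math.degrees(angle(query, frame_3)), 6))  # 45.0
```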

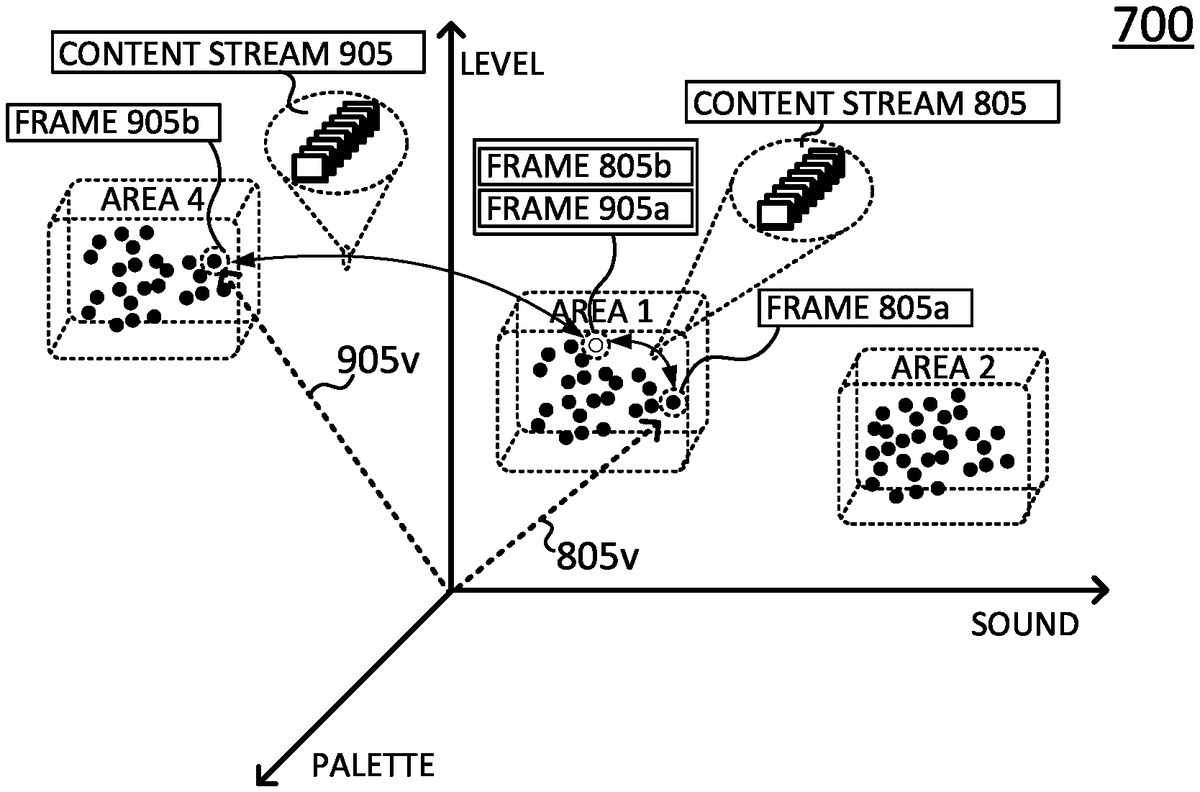

FIG. 7 illustrates a schematic diagram of a vector-space 700, showing position vectors assigned to one or more areas (areas 1-4) in the game environment. As shown, each dimension of vector-space 700 corresponds to a respective feature, and the position vectors are mapped according to feature-values for each dimension/feature. Vector-space 700, similar to the representative vector-space 625, is generated by vector engine 605, where, as discussed, vector engine 605 extracts features/feature-values from frames for a content stream and maps position vectors to respective frames/content streams.

As mentioned, vector engine 605 assigns one or more sets or groups of closely located position vectors to areas in the game environment. Typically, these areas in the game environment define one or more locations or positions in the game environment, and a number of frames may represent the same area/location in the game environment, where feature-values for these frames are often the same or substantially similar. In terms of vector-space 700, these same or substantially similar feature-values are indicated by proximately located position vectors grouped or assigned to an area/location (e.g., one of areas 1-4) in the game environment. Notably, the position vectors in vector-space 700 may be initially generated from content streams of pre-release test data and may define an initial set of gameplay transitions as well as establish transition thresholds, discussed in greater detail below.
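
One simple way to group closely located position vectors into areas is leader-style clustering. A vector joins the first area whose centroid lies within a fixed radius; otherwise it starts a new area. This clustering method and the `radius` value are swapped-in assumptions, not the technique specified by the disclosure; k-means or density-based clustering would be other options. Note that the result depends on the order in which vectors arrive.

```python
import math


def assign_areas(vectors, radius):
    """Group position vectors into areas by proximity (leader clustering).

    A vector joins the first area whose centroid lies within `radius`;
    otherwise it starts a new area. Returns (area label per vector, centroids)."""
    centroids, members, labels = [], [], []
    for v in vectors:
        for area, c in enumerate(centroids):
            if math.dist(v, c) <= radius:
                members[area].append(v)
                # Recompute the centroid as the mean of the area's members.
                n = len(members[area])
                centroids[area] = tuple(sum(col) / n for col in zip(*members[area]))
                labels.append(area)
                break
        else:
            centroids.append(tuple(v))
            members.append([v])
            labels.append(len(centroids) - 1)
    return labels, centroids


# Hypothetical 2-D position vectors, e.g., from pre-release test frames.
test_vectors = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (0.0, 0.1), (5.1, 5.0)]
labels, centroids = assign_areas(test_vectors, radius=1.0)
print(labels)  # [0, 0, 1, 0, 1]
```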

Vector-space 700 may also be used to determine a user location in the game environment. For example, vector engine 605 extracts features/feature-values from user frames for a user content stream. Vector engine 605 maps a position vector to at least one of the user frames, represented by query vector 710. Vector engine 605 identifies the closest position vectors with respect to query vector 710 by analyzing relative distances and angles between the position vectors in vector-space 700. Here, vector engine 605 determines the user location as a location within area 1. In some embodiments, the vector-space may simply indicate zones or areas as corresponding to locations in the game environment. In such embodiments, vector engine 605 determines the user location by identifying the closest zone or area in the vector-space (rather than identifying proximate position vectors). In this fashion, vector-space 700 provides a framework to determine a user location based on features/feature-values extracted from user frames for a user content stream.
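
Both location strategies can be sketched briefly. The first matches query vector 710 against individual labeled position vectors using a k-nearest-neighbour majority vote. The second matches it against zone centroids. The k-NN vote, the value of `k`, and all data values are assumptions for illustration. Ties in the vote go to the label first encountered among the nearest neighbours, which is how `collections.Counter.most_common` orders equal counts.

```python
import math
from collections import Counter


def locate_user(query, labeled_vectors, k=3):
    """Return the area whose position vectors are closest to the query vector.

    labeled_vectors: list of (position_vector, area_label) pairs.
    Uses a k-nearest-neighbour majority vote (an assumed method)."""
    nearest = sorted(labeled_vectors, key=lambda lv: math.dist(query, lv[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


def locate_user_by_zone(query, zone_centroids):
    """Zone variant: pick the closest area centroid instead of individual vectors."""
    return min(zone_centroids, key=lambda area: math.dist(query, zone_centroids[area]))


# Hypothetical labeled position vectors in a 2-D slice of vector-space 700.
labeled = [((0.0, 0.0), "area 1"), ((0.2, 0.1), "area 1"), ((4.0, 4.0), "area 2"),
           ((0.1, 0.3), "area 1"), ((4.2, 3.9), "area 2")]
zones = {"area 1": (0.1, 0.13), "area 2": (4.1, 3.95)}

query_710 = (0.15, 0.15)
print(locate_user(query_710, labeled))          # area 1
print(locate_user_by_zone(query_710, zones))    # area 1
```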

FIGS. 8A and 8B collectively illustrate a gameplay transition, showing a change between locations in the game environment. Specifically, FIG. 8A illustrates a schematic diagram of vector-space 700 showing a gameplay transition represented by content stream 805. Content stream 805 comprises a number of frames, which include an initial frame 805a and an end or terminal frame 805b. As shown, initial frame 805a and terminal frame 805b represent the first and last frames, respectively, of the gameplay transition, and the gameplay transition represents a change between game environment locations, as indicated by two different position vectors.

Vector engine 605 is operable to map content stream 805 to one or more position vectors based on its underlying content frames, namely, initial frame 805a and/or terminal frame 805b. As shown, content stream 805 is mapped to a vector 805v that points to a position vector corresponding to initial frame 805a. In this fashion, content stream 805 is indexed or stored according to initial frame 805a. However, it is also appreciated that content stream 805 can be mapped to any number of position vectors corresponding to any number of its underlying frames such that portions of the gameplay transition may be mapped to respective position vectors. In this fashion, vector-space 700 represents a framework for organizing content streams according to areas and/or locations in the game environment.
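
Indexing a content stream by the position vector of its initial frame, or optionally by every frame, can be sketched as a dictionary keyed by position vector. The `StreamIndex` class and its methods are hypothetical. For brevity the sketch uses exact-match lookup. A practical index would more likely answer "which streams are near this vector?" with a nearest-neighbour search, as in the user-location step.

```python
from collections import defaultdict


class StreamIndex:
    """Hypothetical index from position vectors to (stream_id, frame_no) entries."""

    def __init__(self):
        self._by_vector = defaultdict(list)

    def add_stream(self, stream_id, frame_vectors, all_frames=False):
        """Index a content stream by its initial frame (default) or by every frame."""
        if not frame_vectors:
            raise ValueError("a content stream needs at least one frame")
        if all_frames:
            chosen = list(enumerate(frame_vectors))
        else:
            chosen = [(0, frame_vectors[0])]
        for frame_no, vec in chosen:
            self._by_vector[tuple(vec)].append((stream_id, frame_no))

    def streams_at(self, vector):
        """Exact-match lookup; .get avoids inserting empty entries."""
        return list(self._by_vector.get(tuple(vector), []))


index = StreamIndex()
# Content stream 805: indexed only by its initial frame 805a.
index.add_stream("805", [(0.0, 0.0), (0.5, 0.5), (3.0, 3.0)])
# Content stream 905: every frame indexed, so portions of the transition are reachable.
index.add_stream("905", [(3.0, 3.0), (6.0, 1.0)], all_frames=True)
print(index.streams_at((0.0, 0.0)))  # [('805', 0)]
print(index.streams_at((3.0, 3.0)))  # [('905', 0)]
```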

FIG. 8B illustrates a schematic diagram of a gameplay map 801 that provides game environment context for the vector-space transition shown in FIG. 8A. Gameplay map 801 particularly illustrates a gameplay transition for a character moving between map spaces on gameplay map 801, which spaces correspond to locations in the gameplay environment. As shown, vector engine 605 indexes or assigns content stream 805, which comprises frames 805a-805b, to “gameplay transition 24”, which includes a transition time of 12:04 with 2,200 points achieved during the transition. Gameplay transition 24 is defined by changes in feature-values for the features associated with frames for content stream 805 (e.g., color palette, sound, level location, gameplay time, and points). Notably, vector engine 605 calculates some of these changes based on differences between the feature-values, as is appreciated by those skilled in the art. Further, the identifier “gameplay transition 24” may be a general identifier that represents any number of related transitions between specific locations in the game environment.
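
Deriving transition metrics as differences between the feature-values of an initial and a terminal frame can be sketched as below. The sketch assumes that gameplay times are "mm:ss" clock strings. The frame values for 805a and 805b are hypothetical, chosen only so that the differences reproduce the 12:04 and 2,200-point figures above.

```python
def parse_clock(text):
    """Parse an 'mm:ss' gameplay clock (assumed format) into seconds."""
    minutes, seconds = text.split(":")
    return int(minutes) * 60 + int(seconds)


def format_clock(seconds):
    """Format a non-negative number of seconds as 'm:ss'."""
    return f"{seconds // 60}:{seconds % 60:02d}"


def transition_metrics(initial, terminal):
    """Derive transition metrics as differences between feature-values of the
    initial and terminal frames of a content stream."""
    elapsed = parse_clock(terminal["gameplay_time"]) - parse_clock(initial["gameplay_time"])
    return {
        "transition_time": format_clock(elapsed),
        "points": terminal["points"] - initial["points"],
        "from_location": initial["level_location"],
        "to_location": terminal["level_location"],
    }


# Hypothetical feature-values for frames 805a and 805b.
frame_805a = {"gameplay_time": "3:10", "points": 1500, "level_location": "map space A"}
frame_805b = {"gameplay_time": "15:14", "points": 3700, "level_location": "map space B"}
metrics = transition_metrics(frame_805a, frame_805b)
print(metrics["transition_time"], metrics["points"])  # 12:04 2200
```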

In some embodiments, a user may wish to create a content stream to serve as a walk-through for a particular gameplay transition. In such embodiments, vector engine 605 receives a request (e.g., a walk-through mode request) and begins monitoring user frames that will eventually form the content stream for the gameplay transition. Vector engine 605 determines features/feature-values for the frames and identifies the gameplay transition (or gameplay transitions) based on changes in the feature-values, as discussed above. Upon completion of the gameplay transition(s) and/or upon termination of the walk-through mode by the user, vector engine 605 selects the user frames or sets of the user frames to form the content stream for a transition, and maps the content stream to a position vector (e.g., based on an initial frame for the content stream). As discussed in greater detail below, vector engine 605 may further evaluate the content stream prior to mapping it to the position vector to determine whether the content stream meets certain minimum standards indicated by one or more thresholds (e.g., transition time thresholds, point value thresholds, etc.).

FIGS. 9A and 9B collectively illustrate multiple gameplay transitions in the game environment. Specifically, FIG. 9A illustrates vector-space 700 with a first gameplay transition represented by content stream 805 (corresponding to vector 805v) and a second gameplay transition represented by content stream 905 (corresponding to vector 905v).

Content stream 905, similar to content stream 805 (discussed above), comprises a number of frames, including an initial frame 905a, which may be the same frame as terminal frame 805b, and a terminal frame 905b. Here, initial frame 905a and terminal frame 905b represent the first and last frames of the gameplay transition, and the gameplay transition indicates movement between locations or areas in the game environment, e.g., between area 1 and area 4.

FIG. 9B illustrates a schematic diagram of a gameplay map 901 that provides game environment context for the gameplay transitions shown in FIG. 9A. Gameplay map 901 particularly illustrates a gameplay transition 24 corresponding to content stream 805, and a gameplay transition 87 corresponding to content stream 905. Gameplay transition 87 indicates a transition time of 8:00 with 4,600 points achieved during the gameplay transition. As mentioned, vector engine 605 is operable to evaluate features/feature-values for frames of a content stream and, as shown here, vector engine 605 calculates the transition time and the points achieved based on differences between the features/feature-values for content frames of content stream 905. Gameplay transition 24 for content stream 805 and gameplay transition 87 for content stream 905 identify different content streams; however, it is appreciated that content streams 805 and 905 may form part of a larger content stream such that content streams 805/905 represent segments or portions thereof. In this fashion, a single content stream may comprise multiple gameplay transitions, each indexed or mapped to a respective position vector in vector-space 700.

With respect to the content streams for each transition, vector engine 605 is also operable to define a content stream by selecting a set of frames that correspond to a gameplay transition. For example, vector engine 605 identifies a number of frames for a content stream, and the number of frames may correspond to multiple transitions (or portions thereof). Vector engine 605 maps at least a portion of the number of frames to respective vector positions and evaluates the respective vector positions based on assigned areas/locations for proximate position vectors. Vector engine 605 further selects a set of the frames corresponding to one or more vector positions to represent a given gameplay transition. For example, referring again to FIGS. 9A and 9B, vector engine 605 selects initial frame 805a, terminal frame 805b, and any frames therebetween to form content stream 805, which represents gameplay transition 24. Similarly, vector engine 605 selects initial frame 905a, terminal frame 905b, and any frames therebetween to form content stream 905, which represents gameplay transition 87. Vector engine 605 typically selects the frames based on parameters for the gameplay transition. For example, the gameplay transition may be defined by parameters such as moving between locations, areas, or levels, defeating opponents in the game environment, achieving a milestone, increasing an inventory, and the like.
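
Selecting frame sets for transitions can be sketched by using only one of the parameters listed above: movement between areas. The sketch assumes that each frame has already been assigned an area (for example, via its nearest position vectors). It cuts the stream wherever the area changes. Each transition includes both boundary frames, so the terminal frame of one transition is also the initial frame of the next, as with frames 805b and 905a. The function name and the area labels are hypothetical. Other parameters, such as milestones or defeated opponents, would need their own boundary rules.

```python
def segment_transitions(frame_areas):
    """Split a content stream into gameplay transitions at area changes.

    frame_areas: the area assigned to each frame, in stream order.
    A transition runs from the first frame of one area to the first frame of
    the next area, inclusive, so consecutive transitions share a boundary frame."""
    run_starts = [i for i, area in enumerate(frame_areas)
                  if i == 0 or area != frame_areas[i - 1]]
    return [
        {"initial": s, "terminal": e,
         "from_area": frame_areas[s], "to_area": frame_areas[e]}
        for s, e in zip(run_starts, run_starts[1:])
    ]


# Hypothetical per-frame area assignments for one longer content stream.
areas = [1, 1, 1, 2, 2, 4, 4, 4]
for t in segment_transitions(areas):
    print(t)
```

The two segments printed correspond to analogues of content streams 805 and 905: frames 0-3 (area 1 to area 2) and frames 3-5 (area 2 to area 4).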

FIG. 10A illustrates a block diagram of another embodiment of a vector engine 1005 that includes a transition evaluation module 1010 as well as one or more transition thresholds 1015. Vector engine 1005 is similar to and incorporates certain modules of vector engine 605, discussed above, and performs similar operations (e.g., extracts features/feature-values, maps frames/content streams to respective position vectors in the vector-space, etc.).

In addition to the modules of vector engine 605, vector engine 1005 includes a transition evaluation module 1010, which operates as a filter prior to vector mapping module 620. Transition evaluation module 1010 analyzes gameplay transitions in the game environment and selects a content stream as a preferred content stream 1021 for a particular gameplay transition based on one or more transition threshold(s) 1015 and/or a gameplay rank/priority 1020. Transition threshold(s) 1015 and gameplay rank/priority 1020 indicate a preference for one or more features and/or one or more gameplay metrics. As used herein, the term gameplay metric encompasses and includes first-order features, such as those specifically associated with respective frames for a content stream, as well as higher-order features/criteria not specifically associated with a frame. For example, a feature for a frame can include a gameplay time, while the gameplay metric can include a threshold gameplay metric (e.g., a threshold time) measured by differences in the gameplay time between multiple frames (e.g., a total time, an average (or mean) time, and/or a median time measured between initial and terminal frames). In addition, the gameplay metric can also include other features that indicate popularity (e.g., a number of votes, a number of views, a value associated with the player that created a content stream, and the like).

Transition threshold 1015, as shown, provides a threshold transition time such that gameplay transitions that exceed the threshold time (e.g., 14:00) are filtered/discarded prior to vector mapping module 620. In addition, vector engine 1005 ranks or assigns a priority to each gameplay transition, as shown by a table (gameplay transition rank/priority 1020). The rank/priority value for each gameplay transition here indicates a preference for a lower or quicker transition time. In this example, content stream 805 is selected as a preferred content stream 1021 for gameplay transition 24 because it has the fastest or lowest transition time of 12:04. Other content streams, such as content stream 806 and content stream 807, have slower or higher transition times of 12:35 and 14:20, respectively, for gameplay transition 24. Further, content stream 807 is marked with an “X” because its transition time of 14:20 exceeds the transition time threshold (e.g., 14:00). In this fashion, transition evaluation module 1010 filters content streams for a transition based on transition thresholds 1015 and selects specific content streams to map in the vector-space based on a priority/rank 1020.
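
The filter-then-rank behavior of transition evaluation module 1010 can be sketched with the example numbers above. The sketch assumes "mm:ss" times and treats a time equal to the threshold as passing. The function `select_preferred` and its `higher_is_better` switch are hypothetical. The switch lets the same routine rank on a metric where larger is better, such as points.

```python
def parse_clock(text):
    """Parse an 'mm:ss' transition time (assumed format) into seconds."""
    minutes, seconds = text.split(":")
    return int(minutes) * 60 + int(seconds)


def select_preferred(candidates, threshold, higher_is_better=False):
    """Filter one gameplay transition's content streams against a threshold,
    rank the survivors, and return (preferred_stream_id, ranking).

    candidates: {stream_id: gameplay-metric value}. By default lower values
    are better (e.g., transition time) and values above the threshold are
    discarded; with higher_is_better=True (e.g., points) values below it are."""
    if higher_is_better:
        kept = {sid: v for sid, v in candidates.items() if v >= threshold}
    else:
        kept = {sid: v for sid, v in candidates.items() if v <= threshold}
    ranking = sorted(kept, key=kept.get, reverse=higher_is_better)
    return (ranking[0] if ranking else None), ranking


# Transition times for gameplay transition 24 from the example above.
transition_24 = {
    "805": parse_clock("12:04"),
    "806": parse_clock("12:35"),
    "807": parse_clock("14:20"),  # exceeds the 14:00 threshold
}
preferred, ranking = select_preferred(transition_24, threshold=parse_clock("14:00"))
print(preferred, ranking)  # 805 ['805', '806']
```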

While in the above example the transition threshold and rank/priority indicate a preference for a quicker/lower transition time, it is appreciated that any gameplay metric may be used to filter and/or rank gameplay transitions. For example, gameplay transitions may be evaluated according to a gameplay status, a number of points, an inventory, a character selection, a health of a character, a sound, a color palette, a level, a position, a gameplay milestone, and so on.

FIG. 10B illustrates a block diagram of another embodiment of vector engine 1005, showing vector engine 1005 compiling or aggregating content streams for multiple gameplay transitions into one preferred content stream 1022. As mentioned, a single content stream may include multiple gameplay transitions, and, as shown, vector engine 1005 selects a preferred content stream for each of gameplay transitions 24, 34, 44, and 54, and compiles the preferred content streams into a single preferred content stream 1022.

Vector engine 1005 further prioritizes content streams for a given gameplay transition according to their respective transition times, with a preference for a faster or lower transition time. The gameplay transitions include gameplay transition 24, comprising content streams 805-807; gameplay transition 34, comprising content streams 1043-1045; gameplay transition 44, comprising content streams 1046-1048; and gameplay transition 54, comprising content streams 1049-1051. Notably, in some embodiments, transition threshold 1015 may be different for each gameplay transition. Vector engine 1005 selects one content stream for each gameplay transition and aggregates the selected content streams into preferred content stream 1022. Preferably, vector engine 1005 maps each selected content stream to a respective position vector in the vector-space, such that preferred content stream 1022 is indexed or bookmarked by multiple position vectors in the vector-space.
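The per-transition selection and aggregation can be sketched as below. This is a hypothetical illustration, assuming each compiled segment lasts exactly its transition time and that a segment's start offset serves as its bookmark into the compiled stream. Only the times for transition 24 come from the text; all other times and the per-transition thresholds are invented placeholders.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    transition: int  # gameplay transition identifier, e.g. 24
    stream_id: str   # content stream the segment is taken from
    seconds: int     # transition time of that stream, in seconds
    start: int       # offset (seconds) of the segment within the compiled stream


def compile_preferred(candidates, thresholds):
    """For each gameplay transition, drop candidates over that transition's own
    threshold, keep the fastest survivor, and append it to one compiled stream."""
    compiled = []
    offset = 0
    for transition in sorted(candidates):
        limit = thresholds[transition]
        eligible = [(sid, t) for sid, t in candidates[transition].items() if t <= limit]
        if not eligible:
            continue  # no candidate satisfies this transition's threshold
        sid, t = min(eligible, key=lambda item: item[1])
        compiled.append(Segment(transition, sid, t, offset))
        offset += t  # next segment starts where this one ends
    return compiled


# Stream ids follow FIG. 10B; times for transition 24 are 12:04, 12:35, 14:20.
candidates = {
    24: {"805": 724, "806": 755, "807": 860},
    34: {"1043": 300, "1044": 280, "1045": 310},
    44: {"1046": 500, "1047": 450, "1048": 470},
    54: {"1049": 200, "1050": 260, "1051": 190},
}
thresholds = {24: 840, 34: 290, 44: 480, 54: 250}  # hypothetical, per transition

for seg in compile_preferred(candidates, thresholds):
    print(seg.transition, seg.stream_id, seg.start)
```

Each (transition, start) pair in the result could then be mapped to its own position vector, so that one compiled stream is reachable from several points in the vector-space.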

While FIGS. 10A and 10B describe vector engine 1005 operations to select one content stream as a preferred content stream (FIG. 10A) or to select one content stream for each gameplay transition (FIG. 10B), it is appreciated that vector engine 1005 may select any number of content streams as preferred content streams.

Collectively, FIGS. 10A and 10B illustrate operations to create a vector-space of vector positions for content streams, where the vector positions map to underlying frames for a given content stream. This vector-space establishes a framework for evaluating gameplay content in the game environment, where each dimension of the vector-space corresponds to a feature (and/or a gameplay metric). For example, as discussed above, the vector-space may be used to filter and rank content streams such that preferred content streams are mapped to the vector-space while other content streams are discarded (e.g., for failing to satisfy threshold conditions). Notably, this vector-space may be initially created using pre-release test gameplay, and the pre-release test gameplay may further establish baseline transition conditions or transition thresholds. For example, in the context of the game environment, an initial set of gameplay transitions can represent content streams from multiple users as a selected character moves from a first level to a second level. The gameplay transitions from the multiple users are compiled and statistically analyzed to determine a threshold transition condition, e.g., a transition time corresponding to a character moving from the first level to the second level. As is appreciated by those skilled in the art, the transition time can include an average or mean time, a median time, a total time, and so on.
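Deriving a baseline threshold from pre-release test gameplay can be sketched with Python's standard statistics module. The test times below are hypothetical, and the choice among mean, median, and total simply mirrors the options named in the text. The function baseline_threshold is an illustrative name only.

```python
import statistics


def baseline_threshold(test_times, method="mean"):
    """Derive a threshold transition time (in seconds) for one gameplay
    transition from pre-release test runs of that transition."""
    if method == "mean":
        return statistics.mean(test_times)
    if method == "median":
        return statistics.median(test_times)
    if method == "total":
        return sum(test_times)
    raise ValueError(f"unknown method: {method!r}")


# Hypothetical pre-release times (seconds) for a character moving from the
# first level to the second level, one value per test user.
level_1_to_2 = [700, 760, 820, 900, 780]
print(baseline_threshold(level_1_to_2, "mean"))    # 792
print(baseline_threshold(level_1_to_2, "median"))  # 780
```

A median is less sensitive than a mean to a few unusually slow test runs, which may matter when the test population is small.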

FIGS. 11A and 11B illustrate techniques to identify relevant content for a query vector 1105 based on proximity between query vector 1105 and one or more position vectors mapped to content streams/transitions in a vector-space 1100.

In particular, with reference to FIG. 11A, vector engine 1005 initially establishes a framework, here a vector-space 1100, to evaluate subsequent frames/content streams. As mentioned, vector engine 1005 monitors frames for content streams, extracts features/feature-values, and maps position vectors for the content streams in vector-space 1100. Further, vector engine 1005 assigns sets or groups of position vectors that are in close proximity to one another to areas/locations in the game environment (here, area 2).
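One simple way to form groups of nearby position vectors is leader clustering, sketched below. The text does not specify a grouping technique, so this choice, the radius value, the 2-D vectors, and the area names are all assumptions made for illustration.

```python
import math


def group_by_proximity(vectors, radius):
    """Leader clustering: each position vector joins the first group whose
    leader lies within `radius` (Euclidean distance); otherwise it becomes
    the leader of a new group. Returns lists of vector indices."""
    leaders, groups = [], []
    for idx, vec in enumerate(vectors):
        for g, leader in enumerate(leaders):
            if math.dist(vec, leader) <= radius:
                groups[g].append(idx)
                break
        else:  # no existing leader was close enough
            leaders.append(vec)
            groups.append([idx])
    return groups


def assign_areas(groups, area_names):
    """Label every vector index with the area name chosen for its group."""
    return {idx: name for members, name in zip(groups, area_names) for idx in members}


# Hypothetical 2-D position vectors and area names.
vectors = [(0.0, 0.0), (0.5, 0.0), (5.0, 5.0), (5.2, 5.1), (0.2, 0.3)]
groups = group_by_proximity(vectors, radius=1.0)
print(groups)  # [[0, 1, 4], [2, 3]]
print(assign_areas(groups, ["area 1", "area 2"]))
```

Leader clustering is order-dependent; a production system might prefer a method such as k-means or DBSCAN, but the single pass keeps the idea easy to follow.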

Vector engine 1005 uses vector-space 1100 to search for relevant content streams with respect to a user query, represented by query vector 1105. In the context of a game environment, a user may request or search for gameplay content, such as walk-through content, to assist in completing a gameplay transition (e.g., defeating an opponent, advancing to a new level, obtaining additional inventory, etc.). In such situations, the user may send a request for relevant gameplay content to vector engine 1005. Vector engine 1005 receives the request and monitors user frames for a current user content stream. Notably, vector engine 1005 may continuously monitor the user content stream during gameplay to improve response times to a request (e.g., by caching frames for the last 15 seconds of gameplay, etc.).

Vector engine 1005 extracts user features/feature-values from the current user content stream (e.g., from one of the user frames in the current user content stream) and maps the current user content stream to a user vector, e.g., query vector 1105. Vector engine 1005 evaluates query vector 1105 in the vector-space to determine a next-closest or proximate position vector for a relevant content stream/transition. Here, vector engine 1005 identifies content stream 1110 as the relevant content stream for gameplay transition 1124 based on a position vector mapped to an initial frame 1105a for content stream 1110.
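Finding the most proximate position vector for a query vector can be sketched with cosine similarity, which measures the angle between vectors. The text leaves the proximity measure open, so cosine similarity is an assumption here, as are the three-dimensional vectors and their values; only the stream ids echo FIG. 11A.

```python
import math


def cosine(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction).
    Assumes neither vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def most_proximate(query, index):
    """Return the stream id whose position vector makes the smallest angle
    with the query vector, i.e. the largest cosine similarity."""
    _, stream_id = max(index, key=lambda entry: cosine(query, entry[0]))
    return stream_id


# Hypothetical position vectors, each tagged with the stream it indexes.
index = [
    ((0.85, 0.20, 0.35), "1110"),
    ((0.50, 0.50, 0.50), "1115"),
    ((0.10, 0.90, 0.20), "1120"),
]
query_vector = (0.80, 0.20, 0.40)
print(most_proximate(query_vector, index))  # 1110
```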

FIG. 11B illustrates vector engine 1005 identifying multiple content streams as relevant content streams for gameplay transition 1124 in vector-space 1100. In particular, vector engine 1005 determines that content streams 1110, 1115, 1120, 1125, and 1130 are relevant to gameplay transition 1124 (and to query vector 1105) based on the position vectors mapped to the respective content streams and their proximity to query vector 1105. Here, vector engine 1005 further assigns a priority or rank to each content stream based on respective feature-values for features associated with the content streams. For example, as discussed above, vector engine 1005 can rank the relevant content streams based on a transition time for gameplay transition 1124. However, it is also appreciated that any combination of features/feature-values may be weighted or prioritized as appropriate.
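Selecting several relevant streams and then ranking them can be sketched in two stages: a proximity gate (a Euclidean radius around the query vector) followed by a sort on transition time. The radius, vectors, and times are hypothetical, and stream "2001" is an invented entry showing that a fast but distant stream is not treated as relevant.

```python
import math


def relevant_ranked(query, entries, radius):
    """Keep streams whose position vector lies within `radius` of the query
    vector, then rank them by transition time (fastest first), breaking ties
    by distance to the query."""
    hits = []
    for vector, stream_id, transition_s in entries:
        distance = math.dist(query, vector)
        if distance <= radius:
            hits.append((transition_s, distance, stream_id))
    hits.sort()
    return [stream_id for _, _, stream_id in hits]


# Hypothetical entries: (position vector, stream id, transition time in s).
entries = [
    ((0.1, 0.0), "1110", 610),
    ((0.3, 0.2), "1115", 640),
    ((0.0, 0.5), "1120", 655),
    ((0.6, 0.6), "1125", 700),
    ((0.7, 0.5), "1130", 720),
    ((3.0, 3.0), "2001", 500),  # fast, but far from the query: not relevant
]
print(relevant_ranked((0.0, 0.0), entries, radius=1.0))
# ['1110', '1115', '1120', '1125', '1130']
```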

FIG. 12 illustrates the third-person perspective view of gameplay in the game environment shown in FIG. 3, further showing portions of relevant content streams for a current gameplay transition. Here, the content streams include the above-discussed content streams 1110, 1115, and 1120, where each content stream is represented by an image or thumbnail. FIG. 12 also shows an example search feature 1205, which a user can interact with to cause vector engine 1005 to execute the search functions discussed above. Importantly, the relevant content streams are presented according to their respective rank or priority, such that content stream 1110 is displayed first or at a top portion of frame 300, content stream 1115 is displayed second or directly below content stream 1110, and so on.

FIG. 13 illustrates an example simplified procedure 1300 for evaluating gameplay in a game environment. Procedure 1300 is described with respect to a vector engine (e.g., vector engine 605 and/or vector engine 1005) performing certain operations; however, it is appreciated that such operations are not limited to the vector engine, and such discussion is for purposes of illustration, not limitation.

Procedure 1300 begins at step 1305 and continues to step 1310 where, as discussed above, the vector engine monitors frames for content streams that correspond to gameplay in a game environment. The vector engine determines, at step 1315, feature-values for features associated with the frames and selects, at step 1320, frames to form content streams representing gameplay transitions in the game environment. Next, in step 1325, the vector engine maps the content streams in a vector-space based on the feature-values for the underlying frames. In performing these steps, the vector engine evaluates the frames for respective content streams and selects certain frames for gameplay transitions (e.g., preferred content streams) based on threshold conditions/transition thresholds, as discussed above.
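Mapping a frame's feature-values to a position vector (step 1325) requires fixing which feature each dimension represents. The sketch below assumes a hypothetical three-feature schema with assumed value ranges and min-max scaling into [0, 1]; the actual features and scaling used by the vector engine are not specified in the text.

```python
# Hypothetical feature schema: one vector dimension per feature, with an
# assumed (min, max) range used to scale raw feature-values into [0, 1].
FEATURE_RANGES = {
    "health": (0.0, 100.0),
    "points": (0.0, 10000.0),
    "level": (1.0, 10.0),
}


def to_position_vector(feature_values):
    """Map a frame's feature-values to a fixed-order position vector.
    Missing features default to the bottom of their range; values outside
    the range are clamped."""
    vector = []
    for name, (low, high) in FEATURE_RANGES.items():
        raw = float(feature_values.get(name, low))
        scaled = (raw - low) / (high - low)
        vector.append(min(1.0, max(0.0, scaled)))
    return tuple(vector)


frame_features = {"health": 50, "points": 2500, "level": 4}
print(to_position_vector(frame_features))
```

Scaling every dimension into the same range keeps a feature with large raw values, such as points, from dominating distance comparisons.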

The vector engine also uses the vector-space to determine a user location in the game environment based on user frames for a user content stream. In particular, in step 1330, the vector engine assigns a set of position vectors in the vector-space to an area or location in the game environment and, in step 1335, monitors a user frame for a user stream. The vector engine maps, in step 1340, the user stream to a user vector (e.g., query vector 710) based on features/feature-values extracted from the user frame. The vector engine analyzes relative distances and/or angles between the position vectors in the vector-space to determine, in step 1345, that the user vector is proximate to the set of position vectors assigned to the area/location. Thus, the vector engine determines the user location based on the area/location assigned to those position vectors. The vector engine further presents, in step 1350, the user location in the game environment and/or portions of the content streams mapped to the position vectors in the set of position vectors.
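Steps 1345 and 1350 can be approximated by a k-nearest-neighbour vote: the user's area is taken as the most common area label among the position vectors nearest the user vector. The k-NN rule, the value k=3, and the data below are assumptions for illustration; the text requires only that proximity be judged by distances and/or angles.

```python
import math
from collections import Counter


def locate_user(user_vector, labelled_vectors, k=3):
    """Estimate the user's area as the most common area label among the k
    position vectors closest (Euclidean distance) to the user vector."""
    nearest = sorted(labelled_vectors, key=lambda item: math.dist(user_vector, item[0]))[:k]
    votes = Counter(area for _, area in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical position vectors already assigned to areas (as in step 1330).
labelled = [
    ((0.0, 0.0), "area 1"),
    ((0.2, 0.1), "area 1"),
    ((5.0, 5.0), "area 2"),
    ((5.1, 4.9), "area 2"),
    ((4.8, 5.2), "area 2"),
]
print(locate_user((5.0, 5.05), labelled))  # area 2
print(locate_user((0.1, 0.0), labelled))   # area 1
```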

Procedure 1300 subsequently ends in step 1355, but may continue on to step 1310 where the vector engine monitors frames for content streams. Collectively, the steps in procedure 1300 describe techniques to evaluate gameplay in a game environment using a framework defined in a vector-space.

FIG. 14 illustrates a simplified procedure 1400 for identifying relevant gameplay content for a game environment. As discussed below, procedure 1400 is described with respect to operations performed by a vector engine (e.g., vector engine 605 and/or vector engine 1005); however, it is appreciated that such operations are not limited to the vector engine, and such discussion is for purposes of illustration, not limitation.

Procedure 1400 begins at step 1405 and continues to step 1410 where, as discussed above, the vector engine monitors a user stream and selects a user frame. For example, in the context of the game environment, a user may request/search for relevant gameplay content to assist the user through a gameplay transition. In such a context, the vector engine monitors the user's gameplay, selects a user frame from the user stream, and extracts feature-values for corresponding features to generate, in step 1415, a user query. The vector engine further maps the user query, in step 1420, to a user-vector in the vector-space, and identifies, in step 1425, relevant content streams based on proximity between the vector positions mapped to the relevant content streams and the user-vector.

Next, in step 1430, the vector engine determines relevant feature-values for the features associated with the frames and, in step 1435, assigns priority values to the relevant content streams based on the relevant feature-values. For example, as mentioned above, the content streams may correspond to gameplay transitions in the game environment, and the vector engine can determine gameplay transition times for the content streams. In some embodiments, the vector engine compares the gameplay transition times to a threshold transition time to further filter/discard irrelevant content streams. While transition times are one example of feature filtering/weighting, it is appreciated that any number of features may be weighted or prioritized to identify relevant content streams for a given user query. In this sense, a character with specific attributes, a particular inventory of items, and/or other features may be more relevant to a specific user and may be accorded an appropriate weight/priority.
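Steps 1430 and 1435 can be sketched as a threshold filter followed by a weighted sum of feature-values. The feature names, weights, stream ids, and the assumption that feature-values are pre-normalized to [0, 1] (with 1 meaning most relevant) are all hypothetical; the text leaves the weighting scheme open.

```python
def rank_streams(streams, weights, threshold_s):
    """Discard streams whose transition time exceeds `threshold_s`, score the
    rest as a weighted sum of normalized feature-values, and return stream
    ids from highest to lowest priority."""
    scored = []
    for stream in streams:
        if stream["transition_s"] > threshold_s:
            continue  # filtered out as irrelevant
        score = sum(w * stream["features"].get(name, 0.0) for name, w in weights.items())
        scored.append((score, stream["id"]))
    scored.sort(reverse=True)
    return [stream_id for _, stream_id in scored]


# Hypothetical streams; "character_match" = 1.0 when the stream uses the
# same character as the requesting user.
streams = [
    {"id": "A", "transition_s": 700, "features": {"speed": 0.9, "character_match": 0.0}},
    {"id": "B", "transition_s": 760, "features": {"speed": 0.6, "character_match": 1.0}},
    {"id": "C", "transition_s": 900, "features": {"speed": 0.2, "character_match": 1.0}},
]
weights = {"speed": 0.4, "character_match": 0.6}
print(rank_streams(streams, weights, threshold_s=840))  # ['B', 'A']
```

With these weights, stream B outranks the faster stream A because its character matches the user, while stream C is discarded by the threshold despite matching.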

The vector engine further selects one or more relevant content streams, in step 1440, based on their respective priority values and, in step 1445, presents at least a portion (e.g., a thumbnail, etc.) of the selected relevant content streams to the user. The vector engine may provide any number of views to highlight the relevance of content streams (e.g., a list view, etc.). Procedure 1400 subsequently ends at step 1450, but may continue on to step 1410 where the vector engine monitors a user stream and selects a user frame.

It should be noted that some steps within procedures 1300-1400 may be optional, and the steps shown in FIGS. 13-14 are merely examples for illustration; certain other steps may be included or excluded as desired. Further, while a particular order of the steps is shown, this ordering is merely illustrative, and any suitable arrangement of the steps may be utilized without departing from the scope of the embodiments herein. Moreover, while procedures 1300 and 1400 are described separately, steps from each procedure may be incorporated into the other procedure, and the procedures are not meant to be mutually exclusive.

The techniques described herein, therefore, describe operations to create a framework in a vector-space and evaluate gameplay content in the context of the vector-space. In particular, the techniques to evaluate the gameplay content include, for example, creating content streams from frames, determining user-locations in a game environment, identifying content streams as relevant to a particular user, and the like. While there have been shown and described illustrative embodiments to evaluate gameplay content using the above-discussed vector-space with particular devices and/or modules (e.g., vector engines), it is to be understood that various other adaptations and modifications may be made within the spirit and scope of the embodiments herein. For example, the embodiments have been shown and described herein in relation to certain systems, platforms, devices, and modules performing specific operations. However, the embodiments in their broader sense are not so limited, and, in fact, such operations and similar functionality may be performed by any combination of the devices shown and described.

The foregoing description has been directed to specific embodiments. It will be apparent, however, that other variations and modifications may be made to the described embodiments, with the attainment of some or all of their advantages. For instance, it is expressly contemplated that the components and/or elements described herein can be implemented as software stored on a tangible (non-transitory) computer-readable medium, device, or memory (e.g., disks, CDs, RAM, and EEPROM) having program instructions executing on a computer, hardware, firmware, or a combination thereof.

Further, methods describing the various functions and techniques described herein can be implemented using computer-executable instructions that are stored or otherwise available from computer readable media. Such instructions can comprise, for example, instructions and data which cause or otherwise configure a general purpose computer, special purpose computer, or special purpose processing device to perform a certain function or group of functions. Portions of computer resources used can be accessible over a network. The computer executable instructions may be, for example, binaries, intermediate format instructions such as assembly language, firmware, or source code.

Examples of computer-readable media that may be used to store instructions, information used, and/or information created during methods according to described examples include magnetic or optical disks, flash memory, USB devices provided with non-volatile memory, networked storage devices, and so on. In addition, devices implementing methods according to these disclosures can comprise hardware, firmware and/or software, and can take any of a variety of form factors. Typical examples of such form factors include laptops, smart phones, small form factor personal computers, personal digital assistants, and so on.

Functionality described herein also can be embodied in peripherals or add-in cards. Such functionality can also be implemented on a circuit board among different chips or different processes executing in a single device, by way of further example. Instructions, media for conveying such instructions, computing resources for executing them, and other structures for supporting such computing resources are means for providing the functions described in these disclosures.

Accordingly, this description is to be taken only by way of example and not to otherwise limit the scope of the embodiments herein. Therefore, it is the object of the appended claims to cover all such variations and modifications as come within the true spirit and scope of the embodiments herein.

Claims

- A method for evaluating gameplay content in game environments, the method comprising: receiving information indicating a preference for one or more content stream features and one or more gameplay metrics; monitoring a plurality of content streams having a plurality of frames; filtering the content streams based on one or more of the frames including the content stream features that meet one or more thresholds associated with the features, wherein at least one of the monitored content streams is discarded; assigning priority values to the features of the filtered content streams based on gameplay metrics that correspond to the received preference; selecting a subset of content streams from the filtered content streams, wherein the subset of content streams is selected based on a respective ranking of the assigned priority values; mapping feature-values extracted from the frames of the selected subset of content streams in a vector-space, wherein the extracted feature-values are mapped to one or more position vectors within the vector-space, wherein the selected subset of content streams are represented as a series of indexed vectors that is an aggregation of different portions of the monitored content streams; and indexing the mapped feature-values within the vector-space based on their corresponding position vectors.

- The method of claim 1, wherein the received information includes information regarding a gameplay transition defined by one or more changes in feature-values of the features of the content streams.

- The method of claim 1, wherein the received information includes information regarding a plurality of gameplay transitions each associated with a different threshold value of the one or more thresholds.

- The method of claim 1, wherein filtering the content streams is further based on a threshold transition time, wherein a transition time of the at least one discarded stream exceeds the threshold transition time.

- The method of claim 1, wherein filtering the content streams is further based on one or more point value thresholds.

- The method of claim 1, wherein assigning the priority values to the features is further based on a transition time, and wherein a high priority value indicates a lower transition time.

- The method of claim 1, wherein assigning the priority values is further based on a combination of different feature-values, and wherein at least one of the different feature-values is weighted.

- The method of claim 1, wherein assigning the priority values is further based on gameplay metrics of the filtered content streams.

- The method of claim 8, wherein the gameplay metrics include one or more values that indicate popularity.

- The method of claim 1, wherein the position vectors represent an in-game position.

- A system for evaluating gameplay content in game environments, the system comprising: memory that stores information regarding a vector-space that corresponds to a game environment; a network interface that communicates over one or more communication networks to receive information indicating a preference for one or more content stream features and one or more gameplay metrics; and a processor that executes instructions stored in memory, wherein execution of the instructions by the processor: monitors a plurality of content streams having a plurality of frames; filters the content streams based on one or more of the frames including the content stream features that meet one or more thresholds associated with the features, wherein at least one of the monitored content streams is discarded; assigns priority values to the features of the filtered content streams based on gameplay metrics that correspond to the received preference; selects a subset of content streams from the filtered content streams, wherein the subset of content streams is selected based on a respective ranking of the assigned priority values; maps feature-values extracted from the frames of the selected subset of content streams in the vector-space, wherein the extracted feature-values are mapped to one or more position vectors within the vector-space, wherein the selected subset of content streams are represented as a series of indexed vectors that is an aggregation of different portions of the monitored content streams; and indexes the mapped feature-values within the vector-space based on their corresponding position vectors.

- The system of claim 11, wherein the received information includes information regarding a gameplay transition defined by one or more changes in feature-values of the features of the content streams.

- The system of claim 11, wherein the received information includes information regarding a plurality of gameplay transitions each associated with a different threshold value of the one or more thresholds.

- The system of claim 11, wherein the content streams are filtered further based on a threshold transition time, wherein a transition time of the at least one discarded stream exceeds the threshold transition time.

- The system of claim 11, wherein the content streams are filtered further based on one or more point value thresholds.

- The system of claim 11, wherein the priority values to the features are assigned based on a transition time, and wherein a high priority value indicates a lower transition time.

- The system of claim 11, wherein the priority values are assigned further based on a combination of different feature-values, wherein at least one of the different feature-values is weighted.

- The system of claim 11, wherein the priority values are assigned further based on gameplay metrics of the filtered content streams.

- The system of claim 18, wherein the gameplay metrics include a number of achieved points.

- A non-transitory, computer-readable storage medium, having instructions encoded thereon, the instructions executable by a processor to perform a method for evaluating gameplay content in game environments, the method comprising: receiving information indicating a preference for one or more content stream features and one or more gameplay metrics; monitoring a plurality of content streams having a plurality of frames; filtering the content streams based on one or more of the frames including the content stream features that meet one or more thresholds associated with the features, wherein at least one of the monitored content streams is discarded; assigning priority values to the features of the filtered content streams based on gameplay metrics that correspond to the received preference; selecting a subset of content streams from the filtered content streams, wherein the subset of content streams is selected based on a respective ranking of the assigned priority values; mapping feature-values extracted from the frames of the selected content streams in a vector-space, wherein the extracted feature-values are mapped to one or more position vectors within the vector-space, wherein the selected subset of content streams are represented as a series of indexed vectors that is an aggregation of different portions of the monitored content streams; and indexing the mapped feature-values within the vector-space based on their corresponding position vectors.
