U.S. Pat. No. 12,263,405

DISPLAY VIDEOGAME CHARACTER AND OBJECT MODIFICATIONS

Assignee: COLOPL, INC.

Issue Date: February 28, 2022

Illustrative Figure

Abstract

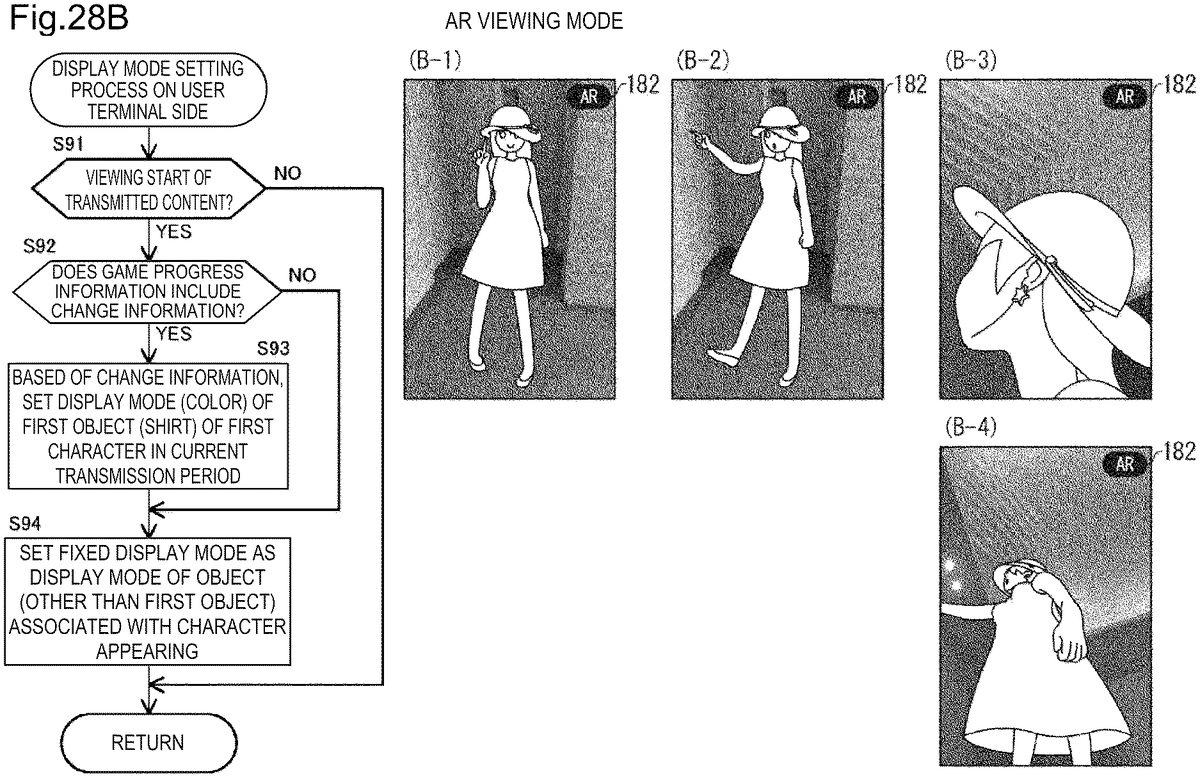

First display data is received to enable display of a video of a virtual space including a first character, which moves in cooperation with a behavior of a performer. A video is displayed in which the first character behaves in the virtual space on the basis of the first display data. The first character is associated with a prescribed first object, and a display mode of the first object is changed in accordance with a predetermined rule.
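The abstract does not say what the “predetermined rule” is. As a purely hypothetical illustration, the Python sketch below assumes the rule advances the first object's display mode as the elapsed time of the performance crosses fixed thresholds. The class `FirstObject`, the mode names, and the threshold values are all invented for this example and are not taken from the source.

```python
from dataclasses import dataclass
from typing import Sequence

# Hypothetical display modes; the source only says the mode changes
# "in accordance with a predetermined rule".
MODES = ("normal", "glowing", "sparkling")


@dataclass
class FirstObject:
    """Hypothetical model of an object associated with the first character."""
    name: str
    display_mode: str = MODES[0]


def apply_rule(obj: FirstObject, elapsed_s: float,
               thresholds: Sequence[float] = (60.0, 180.0)) -> FirstObject:
    """Assumed rule: each threshold passed moves the object one display mode up."""
    level = sum(1 for t in thresholds if elapsed_s >= t)
    obj.display_mode = MODES[min(level, len(MODES) - 1)]
    return obj


hat = FirstObject("hat")
print(apply_rule(hat, 90.0).display_mode)  # glowing
```

Any other observable quantity (for example, the amount of support received from viewers) could drive the same kind of rule; elapsed time is used here only because it is the simplest to illustrate.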

Description


DESCRIPTION OF EMBODIMENTS

A system according to the present disclosure is a system for providing a game to a plurality of users. The system will be described below with reference to the drawings. The present invention is not limited to these illustrations but is indicated by the scope of the claims, and it is intended that the present invention includes all modifications within the meaning and scope equivalent to the scope of the claims. In the following description, the same components are denoted by the same reference numerals in the description of the drawings, and will not be repeatedly described.

FIG. 1 is a diagram showing an overview of a system 1 according to the present embodiment. The system 1 includes a plurality of user terminals 100 (computers), a server 200, a game play terminal 300 (an external device, a second external device), and a transmission terminal 400 (an external device, a first external device). In FIG. 1, user terminals 100A to 100C, that is, three user terminals 100, are shown as an example of the plurality of user terminals 100, but the number of user terminals 100 is not limited to the shown example. In the present embodiment, the user terminals 100A to 100C are referred to collectively as “user terminals 100” when they need not be distinguished from each other. The user terminals 100, the game play terminal 300, and the transmission terminal 400 are connected to the server 200 via a network 2. The network 2 is composed of the Internet and various mobile communication systems built on wireless base stations. Examples of the mobile communication system include so-called 3G and 4G mobile communication systems, LTE (Long Term Evolution), and a wireless network (for example, Wi-Fi (registered trademark)) that can be connected to the Internet through a predetermined access point.

(Overview of Game)

In the present embodiment, a game mainly played by the user of the game play terminal 300 will be described as an example of a game provided by the system 1 (hereinafter referred to as the “main game”). Hereinafter, the user of the game play terminal 300 is called a “player”. As an example, the player (performer) operates one or more characters appearing in the main game to advance the game. In the main game, the user of the user terminal 100 plays a role of supporting the player's progress in the game. Details of the main game will be described below. The game provided by the system 1 may be any game in which a plurality of users participate, and is not limited to this example.

(Game Play Terminal 300)

The game play terminal 300 controls the progress of the game in response to operations input by the player. Further, the game play terminal 300 sequentially transmits information generated by the player's game play (hereinafter, game progress information) to the server 200 in real time.

(Server 200)

The server 200 sends the game progress information (second data) received in real time from the game play terminal 300 to the user terminals 100. In addition, the server 200 mediates the sending and reception of various types of information among the user terminals 100, the game play terminal 300, and the transmission terminal 400.
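The relay role of the server 200 can be pictured as a simple fan-out. The sketch below is an in-process illustration only: it assumes Python `queue.Queue` objects stand in for network connections to each user terminal 100, and the class and method names are hypothetical. A real server would add networking, authentication, and back-pressure handling.

```python
import queue
from typing import Dict


class RelayServer:
    """Hypothetical in-process stand-in for the real-time relay of server 200."""

    def __init__(self) -> None:
        # One outbound queue per connected user terminal 100.
        self._outboxes: Dict[str, "queue.Queue[dict]"] = {}

    def register(self, terminal_id: str) -> "queue.Queue[dict]":
        """Connect a user terminal and return the queue it will read from."""
        box: "queue.Queue[dict]" = queue.Queue()
        self._outboxes[terminal_id] = box
        return box

    def on_game_progress(self, info: dict) -> None:
        """Forward one game progress update from game play terminal 300 to every terminal."""
        for box in self._outboxes.values():
            box.put(info)


server = RelayServer()
inbox_a = server.register("100A")
inbox_b = server.register("100B")
server.on_game_progress({"frame": 1, "hp": 80})
print(inbox_a.get_nowait(), inbox_b.get_nowait())
```

Delivering every update to every registered terminal is what lets each user terminal 100 render the same game screen as the player at nearly the same time.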

(Transmission Terminal 400)

The transmission terminal 400 generates behavior instruction data (first data) in response to operations input by the user of the transmission terminal 400, and transmits the behavior instruction data to the user terminal 100 via the server 200. The behavior instruction data is data for reproducing a moving image on the user terminal 100; specifically, it is data for producing the behaviors of characters appearing in the moving image.

In the present embodiment, as an example, the user of the transmission terminal 400 is a player of the main game. Further, as an example, the moving image reproduced on the user terminal 100 based on the behavior instruction data is a moving image in which the characters operated by the player in the game behave. A “behavior” means moving at least a part of a character's body, and also includes speaking. Therefore, the behavior instruction data according to the present embodiment includes, for example, sound data for making the character speak and motion data for moving the character's body.
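One way to picture behavior instruction data that holds both motion data and sound data is the sketch below. The field names, the keyframe layout, and linear interpolation between keyframes are assumptions made for illustration; the source does not specify a data format.

```python
import bisect
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class MotionKeyframe:
    time_s: float                     # playback time of this pose
    joint_angles: Tuple[float, ...]   # hypothetical per-joint rotation values


@dataclass
class BehaviorInstructionData:
    """Hypothetical container: motion keyframes plus a reference to speech audio."""
    motion: List[MotionKeyframe]      # must be sorted by time_s
    sound_ref: str                    # e.g. a file name for the recorded speech

    def pose_at(self, t: float) -> Tuple[float, ...]:
        """Linearly interpolate the character pose at playback time t."""
        frames = self.motion
        if t <= frames[0].time_s:
            return frames[0].joint_angles
        if t >= frames[-1].time_s:
            return frames[-1].joint_angles
        times = [f.time_s for f in frames]
        i = bisect.bisect_right(times, t)  # frames[i-1].time_s <= t < frames[i].time_s
        a, b = frames[i - 1], frames[i]
        w = (t - a.time_s) / (b.time_s - a.time_s)
        return tuple(x + w * (y - x) for x, y in zip(a.joint_angles, b.joint_angles))


clip = BehaviorInstructionData(
    motion=[MotionKeyframe(0.0, (0.0, 0.0)), MotionKeyframe(2.0, (10.0, 4.0))],
    sound_ref="speech_001.wav",
)
print(clip.pose_at(1.0))  # (5.0, 2.0)
```

On a user terminal 100, a render loop could call `pose_at` with the current playback clock each frame while the sound data plays, keeping the character's body movement and speech aligned.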

As an example, the behavior instruction data is sent to the user terminal 100 after the main game is over. Details of the behavior instruction data and the moving image reproduced based on the behavior instruction data will be described below.

(User Terminal 100)

The user terminal 100 receives the game progress information in real time, and uses it to generate and display a game screen. In other words, the user terminal 100 reproduces the game screen of the game being played by the player through real-time rendering. Thereby, the user of the user terminal 100 can view the same game screen that the player sees while playing, at substantially the same time as the player.

In addition, the user terminal 100 generates information for supporting the progress of the game by the player in response to an operation input by the user, and sends the information to the game play terminal 300 via the server 200. Details of the information will be described below.

Further, the user terminal 100 receives the behavior instruction data from the transmission terminal 400, and generates and reproduces a moving image (video) using the behavior instruction data. In other words, the user terminal 100 reproduces the behavior instruction data by rendering.

FIG. 2 is a diagram showing a hardware configuration of the user terminal 100. FIG. 3 is a diagram showing a hardware configuration of the server 200. FIG. 4 is a diagram showing a hardware configuration of the game play terminal 300. FIG. 5 is a diagram showing a hardware configuration of the transmission terminal 400.

(User Terminal 100)

In the present embodiment, an example is described in which the user terminal 100 is implemented as a smartphone, but the user terminal 100 is not limited to a smartphone. For example, the user terminal 100 may be implemented as a feature phone, a tablet computer, a laptop computer (a so-called notebook computer), or a desktop computer. Further, the user terminal 100 may be a game device suitable for game play.

As shown in FIG. 2, the user terminal 100 includes a processor 10, a memory 11, a storage 12, a communication interface (IF) 13, an input/output IF 14, a touch screen 15 (display unit), a camera 17, and a ranging sensor 18. These components of the user terminal 100 are electrically connected to one another via a communication bus. The user terminal 100 may include an input/output IF 14 that can be connected to a display (display unit) configured separately from the main body of the user terminal 100, instead of or in addition to the touch screen 15.

Further, as shown in FIG. 2, the user terminal 100 may be configured to communicate with one or more controllers 1020. The controller 1020 establishes communication with the user terminal 100 in accordance with a communication standard such as Bluetooth (registered trademark). The controller 1020 may include one or more buttons, and sends an output value based on the user's input operation on a button to the user terminal 100. In addition, the controller 1020 may include various sensors such as an acceleration sensor and an angular velocity sensor, and sends the output values of those sensors to the user terminal 100.

Instead of or in addition to the user terminal 100 including the camera 17 and the ranging sensor 18, the controller 1020 may include the camera 17 and the ranging sensor 18.

It is desirable that the user terminal 100 allow a user who uses the controller 1020 to input user identification information, such as the user's name or login ID, via the controller 1020, for example at the start of a game. Thereby, the user terminal 100 can associate the controller 1020 with the user, and can identify, from the sending source (controller 1020) of a received output value, which user the output value belongs to.
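The association between a controller 1020 and the user who logged in with it can be kept as a small lookup table. In this sketch the controller identifiers, user IDs, and the `ControllerRegistry` class are hypothetical; how a controller identifies itself as a sending source (for example, by its Bluetooth address) is not specified in the source.

```python
from typing import Dict, Optional, Tuple


class ControllerRegistry:
    """Hypothetical map from a controller 1020 (sending source) to its user."""

    def __init__(self) -> None:
        self._owner: Dict[str, str] = {}

    def login(self, controller_id: str, user_id: str) -> None:
        """Record the user identification information entered via a controller."""
        self._owner[controller_id] = user_id

    def attribute(self, controller_id: str, value: float) -> Tuple[Optional[str], float]:
        """Tag an output value with the user it belongs to (None if unknown)."""
        return self._owner.get(controller_id), value


registry = ControllerRegistry()
registry.login("ctrl-1", "alice")
registry.login("ctrl-2", "bob")
print(registry.attribute("ctrl-2", 0.75))  # ('bob', 0.75)
```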

When the user terminal 100 communicates with a plurality of controllers 1020 and each user holds one of the controllers 1020, multiplay can be implemented with one user terminal 100 without communicating with another device such as the server 200 via the network 2. In addition, when the user terminals 100 communicate with one another directly in accordance with a wireless standard such as a wireless LAN (Local Area Network) standard (that is, without using the server 200), multiplay can be implemented locally with a plurality of user terminals 100. When the above-described multiplay is implemented locally with one user terminal 100, the user terminal 100 may further have at least some of the various functions (described below) provided in the server 200. Further, when the above-described multiplay is implemented locally with a plurality of user terminals 100, the plurality of user terminals 100 may have the various functions (described below) provided in the server 200 in a distributed manner.

Even when the above-described multiplay is implemented locally, the user terminal 100 may communicate with the server 200. For example, the user terminal 100 may send, to the server 200, information indicating a play result such as a record or win/loss in a certain game in association with user identification information.

Further, the controller 1020 may be configured to be detachable from the user terminal 100. In this case, a coupling portion for the controller 1020 may be provided on at least one surface of the housing of the user terminal 100 or the controller 1020. When the user terminal 100 is coupled to the controller 1020 by a cable via the coupling portion, the user terminal 100 and the controller 1020 send and receive signals via the cable.

As shown in FIG. 2, the user terminal 100 may be connected to a storage medium 1030 such as an external memory card via the input/output IF 14. Thereby, the user terminal 100 can read programs and data recorded on the storage medium 1030. The program recorded on the storage medium 1030 is, for example, a game program.

The user terminal 100 may store a game program acquired by communicating with an external device such as the server 200 in the memory 11 of the user terminal 100, or may store a game program read from the storage medium 1030 in the memory 11.

As described above, the user terminal 100 includes the communication IF 13, the input/output IF 14, the touch screen 15, the camera 17, and the ranging sensor 18 as examples of mechanisms for inputting information to the user terminal 100. Each of the components described above as an input mechanism can be regarded as an operation unit configured to receive a user's input operation.

For example, when the operation unit is configured by at least one of the camera 17 and the ranging sensor 18, the operation unit detects an object 1010 in the vicinity of the user terminal 100, and specifies an input operation from the detection result. As an example, a user's hand or a marker having a predetermined shape is detected as the object 1010, and an input operation is specified based on the color, shape, movement, or type of the object 1010 obtained as the detection result. More specifically, when a user's hand is detected in an image captured by the camera 17, the user terminal 100 specifies a gesture (a series of movements of the user's hand) detected based on the captured image as a user's input operation. The captured image may be a still image or a moving image.
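As a hypothetical example of turning a series of hand movements into an input operation, the sketch below classifies a swipe from hand positions tracked across successive captured images. Hand detection itself is assumed to have already happened; the function name, the pixel coordinate convention (y increasing downward), and the 50-pixel minimum distance are illustrative assumptions, not details from the source.

```python
from typing import List, Tuple


def classify_swipe(points: List[Tuple[float, float]], min_dist: float = 50.0) -> str:
    """Classify a series of hand positions (pixels, y grows downward) as a swipe.

    Hypothetical rule: use the net displacement between the first and last
    positions, and pick the dominant axis.
    """
    if len(points) < 2:
        return "none"
    dx = points[-1][0] - points[0][0]
    dy = points[-1][1] - points[0][1]
    if max(abs(dx), abs(dy)) < min_dist:
        return "none"  # movement too small to count as a gesture
    if abs(dx) >= abs(dy):
        return "swipe_right" if dx > 0 else "swipe_left"
    return "swipe_down" if dy > 0 else "swipe_up"


print(classify_swipe([(0, 0), (30, 5), (80, 10)]))  # swipe_right
```

Using only net displacement keeps the example short; a practical recognizer would also consider timing and the path shape between the endpoints.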

Alternatively, when the operation unit is configured by the touch screen 15, the user terminal 100 specifies and receives the user's operation performed on an input unit 151 of the touch screen 15 as a user's input operation. Alternatively, when the operation unit is configured by the communication IF 13, the user terminal 100 specifies and receives a signal (for example, an output value) sent from the controller 1020 as a user's input operation. Alternatively, when the operation unit is configured by the input/output IF 14, a signal output from an input device (not shown) other than the controller 1020 connected to the input/output IF 14 is specified and received as a user's input operation.

(Server 200)

The server 200 may be, for example, a general-purpose computer such as a workstation or a personal computer. The server 200 includes a processor 20, a memory 21, a storage 22, a communication IF 23, and an input/output IF 24. These components in the server 200 are electrically connected to one another via a communication bus.

(Game Play Terminal 300)

The game play terminal 300 may be, for example, a general-purpose computer such as a personal computer. The game play terminal 300 includes a processor 30, a memory 31, a storage 32, a communication IF 33, and an input/output IF 34. These components in the game play terminal 300 are electrically connected to one another via a communication bus.

As shown in FIG. 4, the game play terminal 300 according to the present embodiment is included in an HMD (Head Mounted Display) set 1000 as an example. In other words, it can be said that the HMD set 1000 is included in the system 1, and also that the player plays the game using the HMD set 1000. The device with which the player plays the game is not limited to the HMD set 1000. As an example, the device may be any device that allows the player to experience the game virtually. The device may be implemented as a smartphone, a feature phone, a tablet computer, a laptop computer (a so-called notebook computer), or a desktop computer. Further, the device may be a game device suitable for game play.

The HMD set 1000 includes not only the game play terminal 300 but also an HMD 500, an HMD sensor 510, a motion sensor 520, a display 530, and a controller 540. The HMD 500 includes a monitor 51, a gaze sensor 52, a first camera 53, a second camera 54, a microphone 55, and a speaker 56. The controller 540 may include the motion sensor 520.

The HMD 500 may be mounted on the head of the player to provide a virtual space to the player during operation. More specifically, the HMD 500 displays a right-eye image and a left-eye image on the monitor 51. When each eye of the player views its respective image, the player may perceive the images as a three-dimensional image based on the parallax between both eyes. The HMD 500 may be either a so-called head-mounted display including a monitor or a head-mounted device on which a terminal such as a smartphone or another monitor can be mounted.
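The parallax described above comes from rendering the virtual space twice, from two slightly separated viewpoints. The sketch below computes those two virtual camera positions from a head position and the head's right-pointing unit vector. The 0.064 m default interpupillary distance is an assumed typical value, and the function name is hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def eye_positions(head: Vec3, right: Vec3, ipd: float = 0.064) -> Tuple[Vec3, Vec3]:
    """Return (left_eye, right_eye) virtual camera positions.

    `right` is assumed to be a unit vector pointing to the player's right;
    each eye is offset from the head position by half the interpupillary
    distance `ipd` (metres, an assumed default).
    """
    half = ipd / 2.0
    hx, hy, hz = head
    rx, ry, rz = right
    left_eye = (hx - half * rx, hy - half * ry, hz - half * rz)
    right_eye = (hx + half * rx, hy + half * ry, hz + half * rz)
    return left_eye, right_eye


left, right = eye_positions((0.0, 1.6, 0.0), (1.0, 0.0, 0.0))
print(left, right)
```

Each eye's image would then be rendered from its own camera position with the same orientation, and the two results shown as the right-eye and left-eye images on the monitor 51.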

The monitor 51 is implemented as, for example, a non-transmissive display device. In an aspect, the monitor 51 is arranged on the main body of the HMD 500 so as to be located in front of both eyes of the player. Therefore, when the player views the three-dimensional image displayed on the monitor 51, the player can be immersed in the virtual space. In an aspect, the virtual space includes, for example, a background, player-operable objects, and player-selectable menu images. In an aspect, the monitor 51 may be implemented as a liquid crystal monitor or an organic EL (Electro Luminescence) monitor included in a so-called smartphone or other information display terminal.

In another aspect, the monitor 51 can be implemented as a transmissive display device. In this case, the HMD 500 may be an open type such as a glasses type, instead of a closed type that covers the player's eyes as shown in FIG. 1. The transmissive monitor 51 may be temporarily configured as a non-transmissive display device by adjusting its transmittance. The monitor 51 may be configured to display a part of the image constituting the virtual space and the real space at the same time. For example, the monitor 51 may display an image of the real space captured by a camera mounted on the HMD 500, or may make the real space visible by increasing the transmittance of a part of the monitor.

In an aspect, the monitor 51 may include a sub-monitor for displaying the right-eye image and a sub-monitor for displaying the left-eye image. In another aspect, the monitor 51 may be configured to display the right-eye image and the left-eye image on a single integrated screen. In this case, the monitor 51 includes a high-speed shutter. The high-speed shutter operates so that the right-eye image and the left-eye image are displayed alternately and each image is seen by only one eye.

In an aspect, the HMD 500 includes a plurality of light sources (not shown). Each of the light sources is implemented by, for example, an LED (Light Emitting Diode) configured to emit infrared rays. The HMD sensor 510 has a position tracking function for detecting the movement of the HMD 500. More specifically, the HMD sensor 510 reads the plurality of infrared rays emitted by the HMD 500 and detects the position and inclination of the HMD 500 in the real space.

In another aspect, the HMD sensor 510 may be implemented by a camera. In this case, the HMD sensor 510 can detect the position and the inclination of the HMD 500 by executing image analysis processing using image information of the HMD 500 output from the camera.

In another aspect, the HMD 500 may include a sensor (not shown) as a position detector instead of or in addition to the HMD sensor 510. The HMD 500 can use the sensor to detect its own position and inclination. For example, when the sensor is an angular velocity sensor, a geomagnetic sensor, or an acceleration sensor, the HMD 500 can use any of those sensors instead of the HMD sensor 510 to detect its position and inclination. As an example, when the sensor provided in the HMD 500 is an angular velocity sensor, the angular velocity sensor detects the angular velocity around each of three axes of the HMD 500 in the real space over time. The HMD 500 calculates the temporal change of the angle around each of the three axes based on the respective angular velocities, and further calculates the inclination of the HMD 500 based on the temporal change of the angles.
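Going from angular velocities to angles, as described above, amounts to integrating each axis's angular velocity over time. The sketch below uses simple Euler integration at an assumed fixed sampling interval. It treats the three axes independently, which is only a reasonable approximation for small rotations, and its result drifts over time; such estimates are commonly corrected with other sensors, such as the geomagnetic and acceleration sensors mentioned above. The function name is hypothetical.

```python
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


def integrate_angles(samples: Iterable[Vec3], dt: float,
                     start: Vec3 = (0.0, 0.0, 0.0)) -> Vec3:
    """Accumulate angles (rad) about three axes from angular-velocity samples (rad/s).

    Euler integration with a fixed sampling interval `dt` (seconds); each axis
    is integrated independently, so this is only accurate for small rotations.
    """
    ax, ay, az = start
    for wx, wy, wz in samples:
        ax += wx * dt
        ay += wy * dt
        az += wz * dt
    return ax, ay, az


# 0.5 rad/s about the third axis for 1 s (100 samples at 10 ms).
print(integrate_angles([(0.0, 0.0, 0.5)] * 100, dt=0.01))  # approximately (0.0, 0.0, 0.5)
```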

The gaze sensor 52 detects the directions in which the lines of sight of the player's right eye and left eye are directed. The direction of the line of sight is detected by, for example, a known eye tracking function, and the gaze sensor 52 is implemented by a sensor having the eye tracking function. In an aspect, the gaze sensor 52 preferably includes a right-eye sensor and a left-eye sensor. The gaze sensor 52 may be, for example, a sensor configured to irradiate the right eye and the left eye of the player with infrared light and to receive the light reflected from the cornea and the iris, thereby detecting the rotational angle of each eyeball. The gaze sensor 52 can detect the line of sight of the player based on each of the detected rotational angles.
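Going from the detected rotational angle of each eyeball to a line-of-sight direction can be sketched as below. The axis convention (x right, y up, z forward), representing each eye's rotation as a yaw and pitch in radians, and averaging the two eyes into one combined direction are all assumptions for illustration; the source only states that the line of sight is detected from the rotational angles.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def gaze_vector(yaw: float, pitch: float) -> Vec3:
    """Unit direction for an eye rotated by yaw (positive = right) and pitch (positive = up)."""
    cp = math.cos(pitch)
    return (cp * math.sin(yaw), math.sin(pitch), cp * math.cos(yaw))


def combined_gaze(left: Tuple[float, float], right: Tuple[float, float]) -> Vec3:
    """Average the left-eye and right-eye directions and renormalize to unit length."""
    lx, ly, lz = gaze_vector(*left)
    rx, ry, rz = gaze_vector(*right)
    sx, sy, sz = lx + rx, ly + ry, lz + rz
    norm = math.sqrt(sx * sx + sy * sy + sz * sz)
    return (sx / norm, sy / norm, sz / norm)


# Both eyes rotate slightly inward, converging on a point straight ahead.
print(combined_gaze((0.05, 0.0), (-0.05, 0.0)))
```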

The first camera 53 captures the lower part of the player's face. More specifically, the first camera 53 captures the nose and mouth of the player. The second camera 54 captures the eyes and eyebrows of the player. The side of the housing of the HMD 500 facing the player is defined as the inside of the HMD 500, and the side of the housing opposite to the player is defined as the outside of the HMD 500. In an aspect, the first camera 53 can be located outside the HMD 500, and the second camera 54 can be located inside the HMD 500. The images generated by the first camera 53 and the second camera 54 are input to the game play terminal 300. In another aspect, the first camera 53 and the second camera 54 may be implemented as one camera, and the player's face may be captured by that one camera.

The microphone 55 converts the player's speech into a sound signal (electric signal) and outputs the sound signal to the game play terminal 300. The speaker 56 converts a sound signal into sound and outputs the sound to the player. In another aspect, the HMD 500 may include earphones instead of the speaker 56.

The controller 540 is connected to the game play terminal 300 in a wired or wireless manner. The controller 540 receives, as input, commands from the player to the game play terminal 300. In an aspect, the controller 540 is configured to be gripped by the player. In another aspect, the controller 540 is configured to be wearable on a part of the player's body or clothing. In yet another aspect, the controller 540 may be configured to output at least one of vibration, sound, and light in accordance with a signal sent from the game play terminal 300. In yet another aspect, the controller 540 receives, from the player, an operation for controlling the position and movement of an object arranged in the virtual space.

In an aspect, the controller 540 includes a plurality of light sources. Each of the light sources is implemented, for example, by an LED that emits infrared rays. The HMD sensor 510 has a position tracking function. In this case, the HMD sensor 510 reads the plurality of infrared rays emitted by the controller 540, and detects the position and inclination of the controller 540 in the real space. In another aspect, the HMD sensor 510 may be implemented by a camera. In this case, the HMD sensor 510 can detect the position and the inclination of the controller 540 by executing image analysis processing using the image information of the controller 540 output from the camera.

In an aspect, the motion sensor 520 is attached to the player's hand and detects movement of the player's hand. For example, the motion sensor 520 detects the rotation speed of the hand and the number of rotations of the hand. The detected signal is sent to the game play terminal 300. The motion sensor 520 is provided in the controller 540, for example. In an aspect, the motion sensor 520 is provided in, for example, the controller 540 configured to be gripped by the player. In another aspect, for safety in the real space, the controller 540 is a glove-type controller that is worn on the player's hand so as not to fly off easily. In yet another aspect, a sensor not worn by the player may detect the movement of the player's hand. For example, a signal from a camera capturing the player may be input to the game play terminal 300 as a signal representing the behavior of the player. The motion sensor 520 and the game play terminal 300 are connected to each other wirelessly, for example. In the case of a wireless connection, the communication mode is not particularly limited, and Bluetooth or other known communication methods may be used, for example.

The display 530 displays the same image as the image displayed on the monitor 51. Thereby, users other than the player wearing the HMD 500 can also view the same image as the player. The image displayed on the display 530 does not have to be a three-dimensional image, and may be a right-eye image or a left-eye image. Examples of the display 530 include a liquid crystal display and an organic EL monitor.

The game play terminal 300 produces the behavior of a character operated by the player on the basis of various types of information acquired from the HMD 500, the controller 540, and the motion sensor 520, and controls the progress of the game. The “behavior” herein includes moving respective parts of the body, changing postures, changing facial expressions, moving, speaking, touching and moving objects arranged in the virtual space, and using weapons and tools gripped by the character. In other words, in the main game, as the respective parts of the player's body move, the respective parts of the character's body also move in the same manner as the player's. In the main game, the character speaks the contents of the player's speech. In other words, in the main game, the character is an avatar object that behaves as the player's alter ego. As an example, at least some of the character's behaviors may be executed in response to an input to the controller 540 from the player.

In the present embodiment, motion sensors 520 are attached to both hands, both legs, the waist, and the head of the player. The motion sensors 520 attached to both hands of the player may be provided in the controllers 540 as described above. In addition, the motion sensor 520 attached to the head of the player may be provided in the HMD 500. Motion sensors 520 may be further attached to both elbows and both knees of the player. As the number of motion sensors 520 attached to the player increases, the movement of the player can be reflected in the character more accurately. Further, the player may wear a suit to which one or more motion sensors 520 are attached, instead of attaching the motion sensors 520 to the respective parts of the body. In other words, the motion capture method is not limited to the example of using the motion sensors 520.
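The way the avatar mirrors the player can be sketched as a mapping from the sensor locations listed above to the character's bones. The sketch assumes each motion sensor 520 reports a position in metres and that the avatar rig has bones with the hypothetical names shown. A real system would also transfer rotations and fill untracked joints (such as elbows and knees) by inverse kinematics, which is not shown.

```python
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical mapping from sensor locations (hands, legs, waist, head) to avatar bones.
SENSOR_TO_BONE: Dict[str, str] = {
    "head": "Head",
    "waist": "Hips",
    "left_hand": "LeftHand",
    "right_hand": "RightHand",
    "left_leg": "LeftFoot",
    "right_leg": "RightFoot",
}


def retarget(readings: Dict[str, Vec3], scale: float = 1.0) -> Dict[str, Vec3]:
    """Copy each tracked sensor position onto the matching avatar bone.

    `scale` crudely compensates for a size difference between player and character.
    Readings from sensors with no mapped bone are ignored.
    """
    targets: Dict[str, Vec3] = {}
    for sensor, (x, y, z) in readings.items():
        bone = SENSOR_TO_BONE.get(sensor)
        if bone is not None:
            targets[bone] = (x * scale, y * scale, z * scale)
    return targets


frame = {"head": (0.0, 1.7, 0.1), "right_hand": (0.4, 1.2, 0.3), "elbow": (0.3, 1.3, 0.2)}
print(retarget(frame, scale=0.5))
```

Running this every frame on the latest sensor readings is what makes each part of the character's body move in the same manner as the corresponding part of the player's body.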

(Transmission Terminal 400)

The transmission terminal400may be a mobile terminal such as a smartphone, a PDA (Personal Digital Assistant), or a tablet computer. Further, the transmission terminal400may be a so-called stationary terminal such as a desktop computer terminal.

As shown in FIG. 5, the transmission terminal 400 includes a processor 40, a memory 41, a storage 42, a communication IF 43, an input/output IF 44, and a touch screen 45. The transmission terminal 400 may include an input/output IF 44 connectable to a display (display unit) configured separately from the main body of the transmission terminal 400, instead of or in addition to the touch screen 45.

The controller 1021 may include one or more physical input mechanisms such as buttons, levers, sticks, and wheels. The controller 1021 sends, to the transmission terminal 400, an output value based on an input operation performed on the input mechanisms by the operator of the transmission terminal 400 (the player in the present embodiment). Further, the controller 1021 may include various sensors such as an acceleration sensor and an angular velocity sensor, and may send the output values of those sensors to the transmission terminal 400. The above-described output values are received by the transmission terminal 400 via the communication IF 43.

The transmission terminal 400 may include a camera and a ranging sensor (not shown). The controller 1021 may alternatively or additionally include the camera and the ranging sensor provided in the transmission terminal 400.

As described above, the transmission terminal 400 includes the communication IF 43, the input/output IF 44, and the touch screen 45 as examples of mechanisms for inputting information to the transmission terminal 400. Each of the above-described components serving as an input mechanism can be regarded as an operation unit configured to receive the user's input operation.

When the operation unit is configured by the touch screen 45, the transmission terminal 400 specifies and receives a user's operation performed on an input unit 451 of the touch screen 45 as a user's input operation. Alternatively, when the operation unit is configured by the communication IF 43, the transmission terminal 400 specifies and receives a signal (for example, an output value) sent from the controller 1021 as a user's input operation. Alternatively, when the operation unit is configured by the input/output IF 44, the transmission terminal 400 specifies and receives a signal output from an input device (not shown) connected to the input/output IF 44 as a user's input operation.

The processors 10, 20, 30, and 40 control the overall operation of the user terminal 100, the server 200, the game play terminal 300, and the transmission terminal 400, respectively. Each of the processors 10, 20, 30, and 40 includes a CPU (Central Processing Unit), an MPU (Micro Processing Unit), and a GPU (Graphics Processing Unit). The processors 10, 20, 30, and 40 read programs from the storages 12, 22, 32, and 42, respectively, which will be described below. Then, the processors 10, 20, 30, and 40 load the read programs into the memories 11, 21, 31, and 41, respectively, which will also be described below. The processors 10, 20, 30, and 40 execute the loaded programs.

Each of the memories 11, 21, 31, and 41 is a main storage device configured by storage devices such as a ROM (Read Only Memory) and a RAM (Random Access Memory). The memory 11 temporarily stores programs and various types of data that the processor 10 reads from the storage 12 (described below), thereby providing a work area for the processor 10. The memory 11 also temporarily stores various types of data generated while the processor 10 operates in accordance with the program. Likewise, the memories 21, 31, and 41 temporarily store programs and various types of data read from the storages 22, 32, and 42 by the processors 20, 30, and 40, respectively, to provide work areas for those processors, and also temporarily store various types of data generated while those processors operate in accordance with the programs.

In the present embodiment, the programs executed by the processors 10 and 30 may be game programs of the main game. In the present embodiment, the program executed by the processor 40 may be a transmission program for implementing the transmission of behavior instruction data. In addition, the processor 10 may further execute a viewing program for implementing the reproduction of a moving image.

In the present embodiment, the program executed by the processor 20 may be at least one of the game program, the transmission program, and the viewing program. The processor 20 executes at least one of the game program, the transmission program, and the viewing program in response to a request from at least one of the user terminal 100, the game play terminal 300, and the transmission terminal 400. The transmission program and the viewing program may be executed in parallel.

In other words, the game program may be a program for implementing the game through cooperation among the user terminal 100, the server 200, and the game play terminal 300. The transmission program may be a program for implementing the transmission of the behavior instruction data through cooperation between the server 200 and the transmission terminal 400. The viewing program may be a program for implementing the reproduction of the moving image through cooperation between the user terminal 100 and the server 200.

Each of the storages 12, 22, 32, and 42 is an auxiliary storage device configured by a storage device such as a flash memory or an HDD (Hard Disk Drive). Each of the storages 12 and 32 stores, for example, various types of data regarding the game. The storage 42 stores various types of data regarding the transmission of the behavior instruction data. Further, the storage 12 stores various types of data regarding the reproduction of the moving image. The storage 22 may store at least some of the various types of data regarding the game, the transmission of the behavior instruction data, and the reproduction of the moving image.

Each of the communication IFs 13, 23, 33, and 43 controls the sending and receiving of various types of data in the user terminal 100, the server 200, the game play terminal 300, and the transmission terminal 400, respectively. Each of the communication IFs 13, 23, 33, and 43 controls, for example, communication via a wireless LAN (Local Area Network), Internet communication via a wired LAN, a wireless LAN, or a mobile phone network, and communication using short-range wireless communication.

Each of the input/output IFs 14, 24, 34, and 44 is an interface through which the user terminal 100, the server 200, the game play terminal 300, or the transmission terminal 400, respectively, receives data input and outputs data. Each of the input/output IFs 14, 24, 34, and 44 may input and output data via a USB (Universal Serial Bus) or the like. Each of the input/output IFs 14, 24, 34, and 44 may include a physical button, a camera, a microphone, a speaker, a mouse, a keyboard, a display, a stick, and a lever. Further, each of the input/output IFs 14, 24, 34, and 44 may include a connection portion for sending data to and receiving data from a peripheral device.

The touch screen 15 is an electronic component in which the input unit 151 and the display unit 152 (display) are combined. The touch screen 45 is an electronic component in which the input unit 451 and the display unit 452 are combined. Each of the input units 151 and 451 is, for example, a touch-sensitive device configured by a touch pad. Each of the display units 152 and 452 is configured by, for example, a liquid crystal display or an organic EL (Electro-Luminescence) display.

Each of the input units 151 and 451 has a function of detecting the position at which a user's operation (mainly a physical contact operation such as a touch operation, a slide operation, a swipe operation, or a tap operation) is input on an input surface, and of sending information indicating the position as an input signal. Each of the input units 151 and 451 includes a touch sensor (not shown). The touch sensor may adopt any method, such as a capacitive method or a resistive-film method.

Although not shown, the user terminal 100 and the transmission terminal 400 may each include one or more sensors for specifying the holding posture of the user terminal 100 and the transmission terminal 400, respectively. The sensor may be, for example, an acceleration sensor or an angular velocity sensor.

When the user terminal 100 and the transmission terminal 400 include such sensors, the processors 10 and 40 can specify the holding postures of the user terminal 100 and the transmission terminal 400 from the sensor outputs, respectively, and can perform processing depending on the holding postures. For example, when the user terminal 100 and the transmission terminal 400 are held vertically, the processors 10 and 40 may perform a vertical screen display in which a vertically long image is displayed on the display units 152 and 452, respectively. On the other hand, when the user terminal 100 and the transmission terminal 400 are held horizontally, the processors 10 and 40 may perform a horizontal screen display in which a horizontally long image is displayed on the display units 152 and 452. In this way, the processors 10 and 40 may switch between the vertical screen display and the horizontal screen display depending on the holding postures of the user terminal 100 and the transmission terminal 400, respectively.
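The posture-dependent switching above can be illustrated with a short Python sketch. It assumes hypothetical acceleration readings `ax` (along the terminal's short side) and `ay` (along its long side); the function name `screen_display_mode` and the rule of comparing the two magnitudes are illustrative assumptions, not the embodiment's method.

```python
def screen_display_mode(ax: float, ay: float) -> str:
    """Choose a screen display mode from the holding posture.

    ax, ay: hypothetical acceleration readings (m/s^2) along the
    terminal's short side and long side. At rest, gravity dominates
    whichever axis points most nearly downward.
    """
    # Held upright: gravity acts mainly along the long side.
    if abs(ay) >= abs(ax):
        return "vertical"  # display a vertically long image
    # Held sideways: gravity acts mainly along the short side.
    return "horizontal"  # display a horizontally long image


# Example: a terminal held upright, then turned on its side.
print(screen_display_mode(0.4, 9.7))   # vertical
print(screen_display_mode(9.6, -0.3))  # horizontal
```

A practical version would probably add hysteresis near the 45-degree boundary so that the display does not flicker between the two modes.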

FIG. 6 is a block diagram showing functional configurations of the user terminal 100, the server 200, and the HMD set 1000 included in the system 1. FIG. 7 is a block diagram showing a functional configuration of the transmission terminal 400 shown in FIG. 6.

The user terminal 100 has a function as an input device that receives a user's input operation and a function as an output device that outputs an image or a sound of the game. The user terminal 100 functions as a control unit 110 and a storage unit 120 through cooperation among the processor 10, the memory 11, the storage 12, the communication IF 13, the input/output IF 14, and the touch screen 15.

The server 200 has a function of mediating the sending and receiving of various types of information among the user terminal 100, the HMD set 1000, and the transmission terminal 400. The server 200 functions as a control unit 210 and a storage unit 220 through cooperation among the processor 20, the memory 21, the storage 22, the communication IF 23, and the input/output IF 24.

The HMD set 1000 (the game play terminal 300) has a function as an input device that receives a player's input operation, a function as an output device that outputs an image and a sound of the game, and a function of sending game progress information to the user terminal 100 via the server 200 in real time. The HMD set 1000 functions as a control unit 310 and a storage unit 320 through cooperation of the processor 30, the memory 31, the storage 32, the communication IF 33, and the input/output IF 34 of the game play terminal 300 with the HMD 500, the HMD sensor 510, the motion sensor 520, and the controller 540.

The transmission terminal 400 has a function of generating behavior instruction data and sending the behavior instruction data to the user terminal 100 via the server 200. The transmission terminal 400 functions as a control unit 410 and a storage unit 420 through cooperation among the processor 40, the memory 41, the storage 42, the communication IF 43, the input/output IF 44, and the touch screen 45.

(Data Stored in Storage Unit of Each Device)

The storage unit 120 stores a game program 131 (a program), game information 132, and user information 133. The storage unit 220 stores a game program 231, game information 232, user information 233, and a user list 234. The storage unit 320 stores a game program 331, game information 332, and user information 333. The storage unit 420 stores a user list 421, a motion list 422, and a transmission program 423 (a program, a second program).

The game programs 131, 231, and 331 are game programs executed by the user terminal 100, the server 200, and the HMD set 1000, respectively. The devices operate in cooperation based on the game programs 131, 231, and 331, and thus the main game is implemented. The game programs 131 and 331 may be stored in the storage unit 220 and downloaded to the user terminal 100 and the HMD set 1000, respectively. In the present embodiment, the user terminal 100 renders the data received from the transmission terminal 400 in accordance with the game program 131 and reproduces a moving image. In other words, the game program 131 is also a program for reproducing the moving image using the behavior instruction data transmitted from the transmission terminal 400. The program for reproducing the moving image may be different from the game program 131. In this case, the storage unit 120 stores the program for reproducing the moving image separately from the game program 131.

The game information 132, 232, and 332 is data referred to when the user terminal 100, the server 200, and the HMD set 1000, respectively, execute the game programs. Each of the user information 133, 233, and 333 is data regarding a user's account of the user terminal 100. The game information 232 includes the game information 132 of each of the user terminals 100 and the game information 332 of the HMD set 1000. The user information 233 includes the user information 133 of each of the user terminals 100 and the player's user information included in the user information 333. The user information 333 includes the user information 133 of each of the user terminals 100 and the player's user information.

Each of the user list 234 and the user list 421 is a list of users who have participated in the game. Each of the user lists 234 and 421 may include not only a list of users who participated in the most recent game play by the player but also lists of users who participated in each game play before the most recent one. The motion list 422 is a list of a plurality of pieces of motion data created in advance. The motion list 422 is, for example, a list in which each piece of motion data is associated with information (for example, a motion name) that identifies the motion. The transmission program 423 is a program for implementing the transmission, to the user terminal 100, of the behavior instruction data used to reproduce the moving image on the user terminal 100.

(Functional Configuration of Server 200)

The control unit 210 comprehensively controls the server 200 by executing the game program 231 stored in the storage unit 220. For example, the control unit 210 mediates the sending and receiving of various types of information among the user terminal 100, the HMD set 1000, and the transmission terminal 400.

The control unit 210 functions as a communication mediator 211, a log generator 212, and a list generator 213 in accordance with the description of the game program 231. The control unit 210 can also function as other functional blocks (not shown) for the purpose of mediating the sending and receiving of various types of information regarding the game play and the transmission of the behavior instruction data, and of supporting the progress of the game.

The communication mediator 211 mediates the sending and receiving of various types of information among the user terminal 100, the HMD set 1000, and the transmission terminal 400. For example, the communication mediator 211 sends the game progress information received from the HMD set 1000 to the user terminal 100. The game progress information includes data indicating the movement of the character operated by the player, the parameters of the character, the items and weapons possessed by the character, and enemy characters. The server 200 sends the game progress information to the user terminals 100 of all the users who participate in the game. In other words, the server 200 sends common game progress information to the user terminals 100 of all the participating users. Thereby, the game progresses on each of the user terminals 100 of all the participating users in the same manner as on the HMD set 1000.
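The fan-out of common game progress information can be sketched as a simple mediator in Python. The class and field names (`CommunicationMediator`, `GameProgressInfo`, `join`, `on_progress_from_hmd`) are hypothetical, and delivery is modeled with in-process callbacks rather than a real network transport.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class GameProgressInfo:
    """Hypothetical payload mirroring the fields listed in the text."""
    character_position: Tuple[float, float, float]
    character_parameters: Dict[str, int]
    items: List[str]
    enemy_characters: List[str]


class CommunicationMediator:
    """Sketch of a mediator that fans out one common progress update."""

    def __init__(self) -> None:
        # user_id -> callback that delivers data to that user terminal
        self._participants: Dict[str, Callable[[GameProgressInfo], None]] = {}

    def join(self, user_id: str, deliver: Callable[[GameProgressInfo], None]) -> None:
        self._participants[user_id] = deliver

    def on_progress_from_hmd(self, info: GameProgressInfo) -> None:
        # Every participating terminal receives the same object, so the
        # game advances identically on each of them.
        for deliver in self._participants.values():
            deliver(info)


inbox_a: List[GameProgressInfo] = []
inbox_b: List[GameProgressInfo] = []
mediator = CommunicationMediator()
mediator.join("userA", inbox_a.append)
mediator.join("userB", inbox_b.append)
mediator.on_progress_from_hmd(
    GameProgressInfo((0.0, 0.0, 1.0), {"hp": 100}, ["sword"], ["slime"])
)
print(inbox_a[0] is inbox_b[0])  # True
```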

Further, for example, the communication mediator 211 forwards information that is received from any one of the user terminals 100 to support the player's progress in the game to the other user terminals 100 and the HMD set 1000. As an example, the information may be item information indicating an item that is provided to the player (character) to help the player carry on the game advantageously. The item information includes information (for example, a user name and a user ID) indicating the user who provides the item. Further, the communication mediator 211 may mediate the transmission of the behavior instruction data from the transmission terminal 400 to the user terminal 100.
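The relaying of item information could look like the following Python sketch. It assumes, hypothetically, that each user terminal is represented by an in-memory inbox keyed by user ID and that the sender is recognized by the user ID carried in the item information; neither detail comes from the embodiment.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ItemInfo:
    item_name: str
    user_name: str  # user who provides the item
    user_id: str


def relay_item_info(
    info: ItemInfo,
    user_inboxes: Dict[str, List[ItemInfo]],
    hmd_inbox: List[ItemInfo],
) -> None:
    """Forward item information to every other user terminal and the HMD set."""
    for user_id, inbox in user_inboxes.items():
        if user_id != info.user_id:  # the sender already knows about its item
            inbox.append(info)
    hmd_inbox.append(info)


inboxes: Dict[str, List[ItemInfo]] = {"u1": [], "u2": [], "u3": []}
hmd: List[ItemInfo] = []
relay_item_info(ItemInfo("potion", "Alice", "u1"), inboxes, hmd)
print([uid for uid, box in inboxes.items() if box])  # ['u2', 'u3']
```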

The log generator 212 generates a log of the game progress based on the game progress information received from the HMD set 1000. The list generator 213 generates the user list 234 after the game play ends. Although details will be described below, each user in the user list 234 is associated with a tag indicating the content of the support provided to the player by that user. The list generator 213 generates a tag based on the game progress log generated by the log generator 212 and associates it with the corresponding user. The list generator 213 may also associate, as a tag, the content of the support provided to the player by each user, which is input by a game operator or the like using a terminal device such as a personal computer, with the corresponding user. Thereby, the content of the support provided by each user becomes more detailed. When a user participates in the game, the user terminal 100 sends information indicating the user to the server 200 based on the user's operation. For example, the user terminal 100 sends a user ID input by the user to the server 200. Thus, the server 200 holds information indicating each of the users who participate in the game, and the list generator 213 may generate the user list 234 using this information.
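A minimal Python sketch of how the list generator might derive tags from the game progress log follows. The log format (pairs of user ID and support content), the function name `generate_user_list`, and the rule of one tag per distinct kind of support are assumptions made for illustration.

```python
from typing import Dict, Iterable, List, Tuple


def generate_user_list(
    participants: Iterable[str],
    progress_log: Iterable[Tuple[str, str]],
) -> Dict[str, List[str]]:
    """Build a user list mapping each participant to support tags.

    progress_log holds hypothetical (user_id, support_content) entries
    extracted from the game progress log.
    """
    user_list: Dict[str, List[str]] = {uid: [] for uid in participants}
    for user_id, support in progress_log:
        tags = user_list.get(user_id)
        if tags is not None and support not in tags:
            tags.append(support)  # one tag per distinct kind of support
    return user_list


log = [("u1", "gave potion"), ("u2", "cheered"), ("u1", "gave potion"), ("u1", "gave shield")]
print(generate_user_list(["u1", "u2", "u3"], log))
# {'u1': ['gave potion', 'gave shield'], 'u2': ['cheered'], 'u3': []}
```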

(Functional Configuration of HMD Set 1000)

The control unit 310 comprehensively controls the HMD set 1000 by executing the game program 331 stored in the storage unit 320. For example, the control unit 310 advances the game in accordance with the game program 331 and the player's operations. In addition, the control unit 310 communicates with the server 200 to send and receive information as needed while the game is in progress. The control unit 310 may also send and receive information directly to and from the user terminal 100 without using the server 200.

The control unit 310 functions as an operation receiver 311, a display controller 312, a UI controller 313, an animation generator 314, a game coordinator 315, a virtual space controller 316, and a response processor 317 in accordance with the description of the game program 331. The control unit 310 can also function as other functional blocks (not shown) for the purpose of controlling characters appearing in the game, depending on the nature of the game to be executed.

The operation receiver 311 detects and receives the player's input operation. The operation receiver 311 receives signals input from the HMD 500, the motion sensor 520, and the controller 540, determines what kind of input operation has been performed, and outputs the result to each component of the control unit 310.

The UI controller 313 controls user interface (hereinafter, UI) images to be displayed on the monitor 51 and the display 530. A UI image is a tool with which the player makes an input to the HMD set 1000 that is necessary for the progress of the game, or a tool for obtaining, from the HMD set 1000, information output during the progress of the game. Examples of UI images include, but are not limited to, icons, buttons, lists, and menu screens.

The animation generator 314 generates animations showing the motions of various objects based on the control modes of those objects. For example, the animation generator 314 may generate an animation expressing a state in which an object (for example, the player's avatar object) moves as if it were actually there, moves its mouth, or changes its facial expression.

The game coordinator 315 controls the progress of the game in accordance with the game program 331, the player's input operation, and the behavior of the avatar object corresponding to the input operation. For example, the game coordinator 315 performs predetermined game processing when the avatar object performs a predetermined behavior. Further, for example, the game coordinator 315 may receive information indicating the user's operation on the user terminal 100 and may perform game processing based on that operation. In addition, the game coordinator 315 generates game progress information depending on the progress of the game and sends the generated information to the server 200. The game progress information is sent to the user terminal 100 via the server 200. Thereby, the progress of the game on the HMD set 1000 is shared with the user terminal 100. In other words, the progress of the game on the HMD set 1000 is synchronized with the progress of the game on the user terminal 100.

The virtual space controller 316 performs various controls related to the virtual space provided to the player, depending on the progress of the game. As an example, the virtual space controller 316 generates various objects and arranges them in the virtual space. Further, the virtual space controller 316 arranges a virtual camera in the virtual space. In addition, the virtual space controller 316 produces the behaviors of the various objects arranged in the virtual space depending on the progress of the game. Further, the virtual space controller 316 controls the position and inclination of the virtual camera arranged in the virtual space depending on the progress of the game.

The display controller 312 outputs, to the monitor 51 and the display 530, a game screen reflecting the results of the processing executed by each of the above-described components. The display controller 312 may display, on the monitor 51 and the display 530, an image based on the field of view from the virtual camera arranged in the virtual space as the game screen. Further, the display controller 312 may include the animation generated by the animation generator 314 in the game screen. Further, the display controller 312 may draw the above-described UI image controlled by the UI controller 313 superimposed on the game screen.

The response processor 317 receives feedback regarding the responses of the users of the user terminals 100 to the player's game play, and outputs the feedback to the player. In the present embodiment, for example, the user terminal 100 can create a comment (message) directed to the avatar object based on the user's input operation. The response processor 317 receives comment data of the comment and outputs it. The response processor 317 may display text data corresponding to the user's comment on the monitor 51 and the display 530, or may output sound data corresponding to the user's comment from a speaker (not shown). In the former case, the response processor 317 may draw an image corresponding to the text data (that is, an image including the content of the comment) superimposed on the game screen.

(Functional Configuration of User Terminal 100)

The control unit 110 comprehensively controls the user terminal 100 by executing the game program 131 stored in the storage unit 120. For example, the control unit 110 controls the progress of the game in accordance with the game program 131 and the user's operations. In addition, the control unit 110 communicates with the server 200 to send and receive information as needed while the game is in progress. The control unit 110 may also send and receive information directly to and from the HMD set 1000 without using the server 200.

The control unit 110 functions as an operation receiver 111, a display controller 112, a UI controller 113, an animation generator 114, a game coordinator 115, a virtual space controller 116, and a moving image reproducer 117 in accordance with the description of the game program 131. The control unit 110 can also function as other functional blocks (not shown) for the purpose of advancing the game, depending on the nature of the game to be executed.

The operation receiver 111 detects and receives the user's input operation on the input unit 151. The operation receiver 111 determines what kind of input operation has been performed from the action exerted by the user on a console via the touch screen 15 and the other input/output IF 14, and outputs the result to each component of the control unit 110.

For example, the operation receiver 111 receives an input operation on the input unit 151, detects the coordinates of the input position of the input operation, and specifies the type of the input operation. The operation receiver 111 specifies, for example, a touch operation, a slide operation, a swipe operation, or a tap operation as the type of the input operation. Further, the operation receiver 111 detects that the contact input has been released from the touch screen 15 when the continuously detected input is interrupted.
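The embodiment does not state how the four operation types are distinguished. The Python sketch below shows one plausible approach, classifying a contact from its sampled positions once the continuous input is interrupted; the thresholds, the sample format, the function name `classify_input`, and the reading of a "touch" as a stationary press longer than a tap are all hypothetical.

```python
import math
from typing import List, Tuple

Sample = Tuple[float, float, float]  # (time in s, x in px, y in px)

# Hypothetical thresholds; a real game would tune these per device.
MOVE_THRESHOLD_PX = 10.0
TAP_MAX_DURATION_S = 0.3
SWIPE_MIN_SPEED_PX_S = 1000.0


def classify_input(samples: List[Sample]) -> str:
    """Classify one contact, called once the continuous input is interrupted."""
    if not samples:
        raise ValueError("no touch samples")
    t0, x0, y0 = samples[0]
    t1, x1, y1 = samples[-1]
    distance = math.hypot(x1 - x0, y1 - y0)
    duration = t1 - t0
    if distance < MOVE_THRESHOLD_PX:
        # Contact stayed in place: a short contact is a tap, a longer one a touch.
        return "tap" if duration <= TAP_MAX_DURATION_S else "touch"
    speed = distance / duration if duration > 0 else math.inf
    # Moving contact: a fast flick is a swipe, a slower drag is a slide.
    return "swipe" if speed >= SWIPE_MIN_SPEED_PX_S else "slide"
```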

The UI controller 113 controls a UI image to be displayed on the display unit 152 to construct a UI according to at least one of the user's input operation and the received game progress information. A UI image is a tool with which the user makes an input to the user terminal 100 that is necessary for the progress of the game, or a tool for obtaining, from the user terminal 100, information output during the progress of the game. Examples of UI images include, but are not limited to, icons, buttons, lists, and menu screens.

The animation generator 114 generates animations showing the motions of various objects based on the control modes of those objects.

The game coordinator 115 controls the progress of the game in accordance with the game program 131, the received game progress information, and the user's input operation. When predetermined game processing is performed in response to the user's input operation, the game coordinator 115 sends information on the game processing to the HMD set 1000 via the server 200. Thereby, the predetermined game processing is shared with the HMD set 1000. In other words, the progress of the game on the HMD set 1000 is synchronized with the progress of the game on the user terminal 100. The predetermined game processing is, for example, processing of providing an item to the avatar object, and in this example, the information on the game processing is the item information described above.

The virtual space controller 116 performs various controls related to the virtual space provided to the user, depending on the progress of the game. As an example, the virtual space controller 116 generates various objects and arranges them in the virtual space. Further, the virtual space controller 116 arranges a virtual camera in the virtual space. In addition, the virtual space controller 116 produces the behaviors of the various objects arranged in the virtual space depending on the progress of the game, specifically, on the received game progress information. Further, the virtual space controller 116 controls the position and inclination of the virtual camera arranged in the virtual space depending on the progress of the game, specifically, on the received game progress information.

The display controller 112 outputs, to the display unit 152, a game screen reflecting the results of the processing executed by each of the above-described components. The display controller 112 may display, on the display unit 152, an image based on the field of view from the virtual camera arranged in the virtual space provided to the user as the game screen. Further, the display controller 112 may include the animation generated by the animation generator 114 in the game screen. Further, the display controller 112 may draw the above-described UI image controlled by the UI controller 113 superimposed on the game screen. In any case, the game screen displayed on the display unit 152 is similar to the game screens displayed on the other user terminals 100 and the HMD set 1000.

The moving image reproducer 117 analyzes (renders) the behavior instruction data received from the transmission terminal 400 and reproduces the moving image.

(Functional Configuration of Transmission Terminal 400)

The control unit 410 comprehensively controls the transmission terminal 400 by executing a program (not shown) stored in the storage unit 420. For example, the control unit 410 generates behavior instruction data in accordance with the program and the operation of the user of the transmission terminal 400 (the player in the present embodiment), and transmits the generated data to the user terminal 100. Further, the control unit 410 communicates with the server 200 to send and receive information as needed. The control unit 410 may also send and receive information directly to and from the user terminal 100 without using the server 200.

The control unit 410 functions as a communication controller 411, a display controller 412, an operation receiver 413, a sound receiver 414, a motion specifier 415, and a behavior instruction data generator 416 in accordance with the description of the program. The control unit 410 can also function as other functional blocks (not shown) for the purpose of generating and transmitting behavior instruction data.

The communication controller 411 controls the sending and receiving of information to and from the server 200, or to and from the user terminal 100 via the server 200. As an example, the communication controller 411 receives the user list 421 from the server 200. As another example, the communication controller 411 sends the behavior instruction data to the user terminal 100.

The display controller 412 outputs, to the display unit 452, various screens reflecting the results of the processing executed by each component. As an example, the display controller 412 displays a screen including the received user list 234. As another example, the display controller 412 displays a screen including the motion list 422, which enables the player to select the motion data to be included in the transmitted behavior instruction data for use in producing the behavior of an avatar object.

The operation receiver 413 detects and receives the player's input operation on the input unit 451. The operation receiver 413 determines what kind of input operation has been performed from the action exerted by the player on a console via the touch screen 45 and the other input/output IF 44, and outputs the result to each component of the control unit 410.

For example, the operation receiver 413 receives an input operation on the input unit 451, detects the coordinates of the input position of the input operation, and specifies the type of the input operation. The operation receiver 413 specifies, for example, a touch operation, a slide operation, a swipe operation, or a tap operation as the type of the input operation. Further, the operation receiver 413 detects that the contact input has been released from the touch screen 45 when the continuously detected input is interrupted.

The sound receiver 414 receives a sound generated around the transmission terminal 400 and generates sound data of the sound. As an example, the sound receiver 414 receives a sound uttered by the player and generates sound data of the sound.

The motion specifier 415 specifies the motion data selected by the player from the motion list 422 in accordance with the player's input operation.

The behavior instruction data generator 416 generates behavior instruction data. As an example, the behavior instruction data generator 416 generates behavior instruction data including the generated sound data and the specified motion data.
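The cooperation of the motion specifier and the behavior instruction data generator can be sketched in Python as below. The contents of `MOTION_LIST`, the function names, and the dictionary layout of the generated data are hypothetical placeholders for the motion list 422 and the behavior instruction data.

```python
from typing import Dict

# Hypothetical motion list 422: motion name -> pre-created motion data.
MOTION_LIST: Dict[str, bytes] = {
    "wave": b"\x01\x02\x03",
    "bow": b"\x04\x05",
}


def specify_motion(motion_list: Dict[str, bytes], selected_name: str) -> bytes:
    """Motion specifier: look up the motion data the player selected."""
    try:
        return motion_list[selected_name]
    except KeyError:
        raise ValueError(f"unknown motion: {selected_name!r}") from None


def generate_behavior_instruction_data(sound_data: bytes, selected_name: str) -> dict:
    """Bundle the recorded sound with the specified motion data."""
    return {
        "sound_data": sound_data,
        "motion_name": selected_name,
        "motion_data": specify_motion(MOTION_LIST, selected_name),
    }


data = generate_behavior_instruction_data(b"voice-pcm", "wave")
print(data["motion_data"])  # b'\x01\x02\x03'
```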

The functions of the HMD set 1000, the server 200, and the user terminal 100 shown in FIG. 6 and the functions of the transmission terminal 400 shown in FIG. 7 are merely examples. Each of the HMD set 1000, the server 200, the user terminal 100, and the transmission terminal 400 may have at least some of the functions provided by the other devices. Further, a device other than the HMD set 1000, the server 200, the user terminal 100, and the transmission terminal 400 may be used as a component of the system 1 and may be made to execute part of the processing in the system 1. In other words, the computer that executes the game program in the present embodiment may be any of the HMD set 1000, the server 200, the user terminal 100, the transmission terminal 400, and other devices, or may be implemented by a combination of two or more of these devices.

FIG. 8 is a flowchart showing an example of the flow of control processing of the virtual space provided to the player and the virtual space provided to the user of the user terminal 100. FIGS. 9A and 9B are diagrams showing a virtual space 600A provided to the player and a field-of-view image visually recognized by the player according to an embodiment. FIGS. 10A and 10B are diagrams showing a virtual space 600B provided to the user of the user terminal 100 and a field-of-view image visually recognized by the user according to an embodiment. Hereinafter, the virtual spaces 600A and 600B are referred to as “virtual spaces 600” when they need not be distinguished from each other.

In step S1, the processor 30 functions as the virtual space controller 316 to define the virtual space 600A shown in FIG. 9A. The processor 30 defines the virtual space 600A using virtual space data (not shown). The virtual space data may be stored in the game play terminal 300, may be generated by the processor 30 in accordance with the game program 331, or may be acquired by the processor 30 from an external device such as the server 200.

As an example, the virtual space 600 has an all-celestial-sphere structure that covers the entire sphere in all 360-degree directions around a point defined as its center. In FIGS. 9A and 10A, only the upper half of the virtual space 600 is illustrated as a celestial sphere to avoid complicating the description.

In step S2, the processor 30 functions as the virtual space controller 316 to arrange an avatar object (character) 610 in the virtual space 600A. The avatar object 610 is an avatar object associated with the player and behaves in accordance with the player's input operation.

In step S3, the processor 30 functions as the virtual space controller 316 to arrange other objects in the virtual space 600A. In the example of FIGS. 9A and 9B, the processor 30 arranges objects 631 to 634. Examples of other objects may include character objects (so-called non-player characters, NPCs) that behave in accordance with the game program 331, operation objects such as virtual hands, and objects imitating animals, plants, artificial objects, or natural objects that are arranged depending on the progress of the game.

In step S4, the processor 30 functions as the virtual space controller 316 to arrange a virtual camera 620A in the virtual space 600A. As an example, the processor 30 arranges the virtual camera 620A at the position of the head of the avatar object 610.

In step S5, the processor 30 displays a field-of-view image 650 on the monitor 51 and the display 530. The processor 30 defines a field-of-view area 640A, which is the field of view from the virtual camera 620A in the virtual space 600A, in accordance with the initial position and inclination of the virtual camera 620A. Then, the processor 30 defines the field-of-view image 650 corresponding to the field-of-view area 640A. The processor 30 outputs the field-of-view image 650 to the monitor 51 and the display 530 so that the HMD 500 and the display 530 display the field-of-view image 650.

In the example of FIGS. 9A and 9B, since a part of the object 634 is included in the field-of-view area 640A as shown in FIG. 9A, the field-of-view image 650 includes that part of the object 634 as shown in FIG. 9B.
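Whether an object such as the object 634 falls inside the field-of-view area 640A can be approximated by comparing angles. The Python sketch below assumes a y-up coordinate system, yaw 0 looking along +z, and hypothetical default field-of-view angles; the function names are illustrative, and the test is an angular approximation rather than a full view-frustum test.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def wrap_angle(a: float) -> float:
    """Wrap an angle in radians into [-pi, pi)."""
    return (a + math.pi) % (2.0 * math.pi) - math.pi


def in_field_of_view(
    cam_pos: Vec3,
    cam_yaw: float,
    cam_pitch: float,
    obj_pos: Vec3,
    h_fov_deg: float = 90.0,
    v_fov_deg: float = 60.0,
) -> bool:
    """Approximate test of whether obj_pos lies in the field-of-view area.

    Convention (assumed): y is up, yaw 0 looks along +z, angles in radians.
    """
    dx, dy, dz = (o - c for o, c in zip(obj_pos, cam_pos))
    obj_yaw = math.atan2(dx, dz)
    obj_pitch = math.atan2(dy, math.hypot(dx, dz))
    rel_yaw = wrap_angle(obj_yaw - cam_yaw)
    rel_pitch = obj_pitch - cam_pitch
    return (abs(rel_yaw) <= math.radians(h_fov_deg) / 2
            and abs(rel_pitch) <= math.radians(v_fov_deg) / 2)


print(in_field_of_view((0.0, 0.0, 0.0), 0.0, 0.0, (1.0, 0.0, 5.0)))  # True
```

Because yaw and pitch offsets are checked independently, objects near the corners of a strongly pitched view may be classified slightly differently than a true perspective frustum would.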

In step S6, the processor 30 sends initial arrangement information to the user terminal 100 via the server 200. The initial arrangement information is information indicating the initial arrangement positions of various objects in the virtual space 600A. In the example of FIGS. 9A and 9B, the initial arrangement information includes information on the initial arrangement positions of the avatar object 610 and the objects 631 to 634. The initial arrangement information can also be regarded as a type of game progress information.
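The initial arrangement information could be serialized, for example, as JSON. The message layout below (`type`, `objects`, `id`, `position`), the function name, and the object identifiers are hypothetical; the embodiment does not specify a wire format.

```python
import json
from typing import Dict, Tuple


def build_initial_arrangement_info(objects: Dict[str, Tuple[float, float, float]]) -> str:
    """Serialize initial object positions as a hypothetical JSON message."""
    payload = {
        "type": "initial_arrangement",  # hypothetical message type tag
        "objects": [
            {"id": object_id, "position": list(position)}
            for object_id, position in sorted(objects.items())
        ],
    }
    return json.dumps(payload)


message = build_initial_arrangement_info({
    "avatar610": (0.0, 0.0, 0.0),
    "object631": (2.0, 0.0, 3.0),
})
print(json.loads(message)["objects"][1])  # {'id': 'object631', 'position': [2.0, 0.0, 3.0]}
```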

In step S7, the processor 30 functions as the virtual space controller 316 to control the virtual camera 620A depending on the movement of the HMD 500. Specifically, the processor 30 controls the direction and inclination of the virtual camera 620A depending on the movement of the HMD 500, that is, the posture of the player's head. As will be described below, when the player moves his or her head (changes the posture of the head), the processor 30 moves the head of the avatar object 610 in accordance with that movement. The processor 30 controls the direction and inclination of the virtual camera 620A such that the direction of the line of sight of the avatar object 610 coincides with the direction of the line of sight of the virtual camera 620A. In step S8, the processor 30 updates the field-of-view image 650 in response to changes in the direction and inclination of the virtual camera 620A.

In step S9, the processor 30 functions as the virtual space controller 316 to move the avatar object 610 depending on the movement of the player. As an example, the processor 30 moves the avatar object 610 in the virtual space 600A as the player moves in the real space. Further, the processor 30 moves the head of the avatar object 610 in the virtual space 600A as the head of the player moves in the real space.

In step S10, the processor 30 functions as the virtual space controller 316 to move the virtual camera 620A so that it follows the avatar object 610. In other words, the virtual camera 620A is always located at the head of the avatar object 610, even when the avatar object 610 moves.

The processor 30 updates the field-of-view image 650 depending on the movement of the virtual camera 620A. In other words, the processor 30 updates the field-of-view area 640A depending on the posture of the player's head and the position of the virtual camera 620A in the virtual space 600A. As a result, the field-of-view image 650 is updated.
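Keeping the virtual camera at the avatar's head can be sketched as snapping the camera position to the head position on every update. The `Avatar` and `VirtualCamera` classes and the fixed 1.6-unit head offset are hypothetical simplifications; a real implementation would read the head position from the avatar's skeleton.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Avatar:
    position: Vec3
    head_offset: Vec3 = (0.0, 1.6, 0.0)  # hypothetical head height above the origin

    def head_position(self) -> Vec3:
        x, y, z = self.position
        ox, oy, oz = self.head_offset
        return (x + ox, y + oy, z + oz)


@dataclass
class VirtualCamera:
    position: Vec3 = (0.0, 0.0, 0.0)


def follow_avatar(camera: VirtualCamera, avatar: Avatar) -> None:
    """Place the camera at the avatar's head (called every frame)."""
    camera.position = avatar.head_position()


avatar = Avatar((1.0, 0.0, 2.0))
camera = VirtualCamera()
follow_avatar(camera, avatar)
print(camera.position)
```

After each call, the field-of-view area would be recomputed from the new camera position and the current head orientation.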

In step S11, the processor 30 sends the behavior instruction data of the avatar object 610 to the user terminal 100 via the server 200. The behavior instruction data herein includes at least one of motion data that captures the motion of the player during a virtual experience (for example, during game play), sound data of the sound output by the player, and operation data indicating the content of input operations on the controller 540. When the player is playing the game, the behavior instruction data is sent to the user terminal 100 as game progress information, for example.
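The "at least one of" wording suggests a record with three optional parts. A minimal sketch, assuming a hypothetical `BehaviorInstructionData` class whose field types are placeholders (the description does not define them):

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BehaviorInstructionData:
    motion: Optional[list] = None      # e.g. captured poses of the player (assumed type)
    sound: Optional[bytes] = None      # audio of the player's voice (assumed type)
    operation: Optional[dict] = None   # input on the controller 540 (assumed type)

    def is_valid(self) -> bool:
        """True if at least one of motion, sound, or operation data is present."""
        return any(part is not None
                   for part in (self.motion, self.sound, self.operation))


print(BehaviorInstructionData(sound=b"\x00\x01").is_valid())
```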

The processes of steps S7 to S11 are executed repeatedly while the player is playing the game.

In step S21, the processor 10 of the user terminal 100 of a user 3 functions as the virtual space controller 116 to define a virtual space 600B shown in FIG. 10A. The processor 10 defines the virtual space 600B using virtual space data (not shown). The virtual space data may be stored in the user terminal 100, may be generated by the processor 10 based on the game program 131, or may be acquired by the processor 10 from an external device such as the server 200.

In step S22, the processor 10 receives the initial arrangement information. In step S23, the processor 10 functions as the virtual space controller 116 to arrange various objects in the virtual space 600B in accordance with the initial arrangement information. In the example of FIGS. 10A and 10B, the various objects are the avatar object 610 and the objects 631 to 634.
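On the receiving side, arranging objects in accordance with the initial arrangement information can be sketched as decoding the message into a position table for the local virtual space. This assumes a JSON message with a hypothetical `"type"` tag and an `"objects"` list of `object_id`/`position` entries; the actual format is not specified in the description.

```python
import json


def arrange_objects(message):
    """Build an object-ID -> position table for virtual space 600B."""
    data = json.loads(message)
    if data.get("type") != "initial_arrangement":
        raise ValueError("not an initial arrangement message")
    scene = {}
    for obj in data["objects"]:
        scene[obj["object_id"]] = tuple(obj["position"])
    return scene


incoming = json.dumps({
    "type": "initial_arrangement",
    "objects": [
        {"object_id": 610, "position": [0.0, 0.0, 0.0]},  # avatar object
        {"object_id": 634, "position": [3.0, 0.0, 8.0]},  # another object
    ],
})
scene = arrange_objects(incoming)
```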

In step S24, the processor 10 functions as the virtual space controller 116 to arrange a virtual camera 620B in the virtual space 600B. As an example, the processor 10 arranges the virtual camera 620B at the position shown in FIG. 10A.

In step S25, the processor 10 displays a field-of-view image 660 on the display unit 152. The processor 10 defines a field-of-view area 640B, which is the field of view from the virtual camera 620B in the virtual space 600B, in accordance with the initial position and inclination of the virtual camera 620B. Then, the processor 10 defines a field-of-view image 660 corresponding to the field-of-view area 640B. The processor 10 outputs the field-of-view image 660 to the display unit 152 so that the display unit 152 displays the field-of-view image 660.

In the example of FIGS. 10A and 10B, since the avatar object 610 and the object 631 are included in the field-of-view area 640B as shown in FIG. 10A, the field-of-view image 660 includes the avatar object 610 and the object 631 as shown in FIG. 10B.

In step S26, the processor 10 receives the behavior instruction data. In step S27, the processor 10 functions as the virtual space controller 116 to move the avatar object 610 in the virtual space 600B in accordance with the behavior instruction data. In other words, the processor 10 reproduces, by real-time rendering, a video in which the avatar object 610 is behaving.

In step S28, the processor 10 functions as the virtual space controller 116 to control the virtual camera 620B in accordance with the user's operation received while functioning as the operation receiver 111. In step S29, the processor 10 updates the field-of-view image 660 depending on changes in the position of the virtual camera 620B in the virtual space 600B and in the direction and inclination of the virtual camera 620B. In step S28, the processor 10 may instead control the virtual camera 620B automatically depending on the movement of the avatar object 610, for example, changes in the position and direction of the avatar object 610. For example, the processor 10 may automatically move the virtual camera 620B or change its direction and inclination such that the avatar object 610 is always captured from the front. As another example, the processor 10 may automatically move the virtual camera 620B or change its direction and inclination such that the avatar object 610 is always captured from the rear as the avatar object 610 moves.
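The automatic camera control described for step S28 can be sketched as placing the camera a fixed distance along the avatar's facing direction (front view) or opposite to it (rear view), then turning the camera toward the avatar. The function name `auto_camera_position`, the ground-plane (x, z) coordinates, and the yaw convention (0 degrees faces +z) are hypothetical.

```python
import math


def auto_camera_position(avatar_pos, avatar_yaw_deg, distance, from_front=True):
    """Return ((x, z) camera position, camera yaw in degrees).

    The avatar faces along (sin(yaw), cos(yaw)) on the ground plane.
    A front view puts the camera ahead of the avatar looking back at it;
    a rear view puts it behind the avatar looking the same way.
    """
    yaw = math.radians(avatar_yaw_deg)
    fx, fz = math.sin(yaw), math.cos(yaw)  # avatar facing direction
    sign = 1.0 if from_front else -1.0
    cam_x = avatar_pos[0] + sign * distance * fx
    cam_z = avatar_pos[1] + sign * distance * fz
    look_yaw = (avatar_yaw_deg + (180.0 if from_front else 0.0)) % 360.0
    return (cam_x, cam_z), look_yaw


print(auto_camera_position((0.0, 0.0), 0.0, 5.0, from_front=True))
```

Calling this on every frame with the avatar's latest pose keeps the avatar framed from the same side as it moves.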

As described above, the avatar object 610 behaves in the virtual space 600A depending on the movement of the player. The behavior instruction data indicating this behavior is sent to the user terminal 100. In the virtual space 600B, the avatar object 610 behaves in accordance with the received behavior instruction data. Thereby, the avatar object 610 performs the same behavior in the virtual space 600A and the virtual space 600B. In other words, using the user terminal 100, the user 3 can visually recognize the behavior of the avatar object 610 that follows the behavior of the player.
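The reason the two virtual spaces stay in step can be illustrated by applying one stream of behavior instruction data to two copies of the avatar state. This is a simplified sketch, not the disclosed implementation: the `apply_behavior` function and the message layout (an absolute position and head direction under a `"motion"` key) are hypothetical, and sound and operation data are ignored here.

```python
import copy


def apply_behavior(avatar_state, behavior):
    """Update an avatar's pose from one behavior instruction message.

    Hypothetical layout: behavior["motion"] carries an absolute position
    and head direction; messages without motion leave the pose unchanged.
    """
    motion = behavior.get("motion")
    if motion is not None:
        avatar_state["position"] = tuple(motion["position"])
        avatar_state["head_direction"] = tuple(motion["head_direction"])
    return avatar_state


initial = {"position": (0.0, 0.0, 0.0), "head_direction": (0.0, 0.0, 1.0)}
space_a = copy.deepcopy(initial)  # avatar in virtual space 600A
space_b = copy.deepcopy(initial)  # avatar in virtual space 600B

stream = [
    {"motion": {"position": [1.0, 0.0, 0.0], "head_direction": [0.0, 0.0, 1.0]}},
    {"sound": b"\x00\x01"},  # sound-only message: pose unchanged
    {"motion": {"position": [1.0, 0.0, 2.0], "head_direction": [1.0, 0.0, 0.0]}},
]
for message in stream:
    apply_behavior(space_a, message)
    apply_behavior(space_b, message)
```

Because both copies consume identical data in the same order, their final poses match.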

FIGS. 11A to 11D are diagrams showing another example of the field-of-view image displayed on the user terminal 100. Specifically, FIGS. 11A to 11D show an example of a game screen of the game (main game) that is executed by the system 1 and is being played by the player.

The main game is a game in which the avatar object 610, which wields weapons such as guns and knives, and a plurality of enemy objects 671, which are non-player characters (NPCs), appear in the virtual space 600, and the avatar object 610 fights the enemy objects 671. Various game parameters, for example, the physical strength of the avatar object 610, the number of usable magazines, the number of remaining bullets in the gun, and the number of remaining enemy objects 671, are updated as the game progresses.

A plurality of stages are prepared in the main game, and the player can clear a stage by satisfying predetermined achievement conditions associated with that stage. Examples of the predetermined achievement conditions include defeating all the enemy objects 671 that appear, defeating a boss object among the enemy objects 671, acquiring a predetermined item, and reaching a predetermined position. The achievement conditions are defined in the game program 131. In the main game, the player clears the stage when the achievement conditions are satisfied according to the content of the game; in other words, the win of the avatar object 610 against the enemy objects 671 (the outcome between the avatar object 610 and the enemy objects 671) is determined. On the other hand, when the game executed by the system 1 is, for example, a racing game, the ranking of the avatar object 610 is determined when the condition that the avatar object reaches the goal is satisfied.
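The four example achievement conditions can be sketched as a small rule check against the current stage state. The `StageState` fields, the condition dictionaries, and their `"kind"` strings are hypothetical; the description only states that the conditions are defined in the game program 131.

```python
from dataclasses import dataclass, field
from typing import Set, Tuple


@dataclass
class StageState:
    remaining_enemies: int
    boss_defeated: bool = False
    acquired_items: Set[str] = field(default_factory=set)
    avatar_position: Tuple[int, int] = (0, 0)  # hypothetical grid position


def stage_cleared(state: StageState, condition: dict) -> bool:
    """Evaluate one achievement condition against the stage state."""
    kind = condition["kind"]
    if kind == "defeat_all":
        return state.remaining_enemies == 0
    if kind == "defeat_boss":
        return state.boss_defeated
    if kind == "acquire_item":
        return condition["item"] in state.acquired_items
    if kind == "reach_position":
        return state.avatar_position == tuple(condition["position"])
    raise ValueError(f"unknown condition kind: {kind}")


state = StageState(remaining_enemies=3, acquired_items={"key"})
print(stage_cleared(state, {"kind": "acquire_item", "item": "key"}))
```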

In the main game, the game progress information is transmitted live to the plurality of user terminals 100 at predetermined time intervals so that the virtual space is shared between the HMD set 1000 and the plurality of user terminals 100. As a result, the touch screen 15 of the user terminal 100 on which the user watches the game displays a field-of-view image of the field-of-view area defined by the virtual camera 620B corresponding to that user terminal 100. Further, parameter images showing the physical strength of the avatar object 610, the number of usable magazines, the number of remaining bullets in the gun, and the number of remaining enemy objects 671 are superimposed on the upper right and upper left of the field-of-view image. The field-of-view image can also be referred to as a game screen.

As described above, the game progress information includes motion data that captures the behavior of the player, sound data of the sound output by the player, and operation data indicating the content of input operations on the controller 540. In other words, these data include information for specifying the position, posture, and direction of the avatar object 610, information for specifying the position, posture, and direction of the enemy objects 671, and information for specifying the positions of other objects (for example, obstacle objects 672 and 673). The processor 10 specifies the position, posture, and direction of each object by analyzing (rendering) the game progress information.

The game information 132 includes data of various objects, for example, the avatar object 610, the enemy objects 671, and the obstacle objects 672 and 673. The processor 10 uses these data and the analysis result of the game progress information to update the position, posture, and direction of each object. Thereby, the game progresses, and each object in the virtual space 600B moves in the same manner as the corresponding object in the virtual space 600A. Specifically, in the virtual space 600B, each object including the avatar object 610 behaves in accordance with the game progress information regardless of whether the user operates the user terminal 100.

On the touch screen 15 of the user terminal 100, as an example, UI images 701 and 702 are superimposed on the field-of-view image. The UI image 701 receives an operation for causing the touch screen 15 to display a UI image 711, which receives from the user 3 an item-supply operation for supporting the avatar object 610. The UI image 702 receives an operation for causing the touch screen 15 to display a UI image (described below) that receives from the user 3 an operation for inputting and sending a comment for the avatar object 610 (in other words, the player 4). The operation received by the UI images 701 and 702 may be, for example, a tap on the UI image 701 or 702.

When the UI image 701 is tapped, the UI image 711 is superimposed on the field-of-view image. The UI image 711 includes, for example, a UI image 711A on which a magazine icon is drawn, a UI image 711B on which a first-aid kit icon is drawn, a UI image 711C on which a triangular cone icon is drawn, and a UI image 711D on which a barricade icon is drawn. The item-supply operation corresponds to, for example, tapping any one of these UI images.

As an example, when the UI image 711A is tapped, the number of remaining bullets in the gun used by the avatar object 610 increases. When the UI image 711B is tapped, the physical strength of the avatar object 610 is restored. When the UI images 711C and 711D are tapped, the obstacle objects 672 and 673 are arranged in the virtual space to obstruct the movement of the enemy objects 671. One of the obstacle objects 672 and 673 may obstruct the movement of the enemy objects 671 more than the other.
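The effects of the four example items can be sketched as updates to the game parameters. Everything concrete here is an assumption: the item-type strings, the 30 bullets per magazine, and restoring physical strength to a full 100 are illustrative values chosen for the sketch, not figures from the description.

```python
from dataclasses import dataclass, field
from typing import List

MAGAZINE_BULLETS = 30  # hypothetical number of bullets added per magazine


@dataclass
class GameParameters:
    bullets: int
    health: int
    max_health: int = 100  # hypothetical maximum physical strength
    obstacles: List[str] = field(default_factory=list)


def apply_item(params: GameParameters, item_type: str) -> GameParameters:
    """Apply one supplied item to the avatar's game parameters."""
    if item_type == "magazine":              # UI image 711A
        params.bullets += MAGAZINE_BULLETS
    elif item_type == "first_aid_kit":       # UI image 711B
        params.health = params.max_health
    elif item_type in ("triangular_cone", "barricade"):  # 711C, 711D
        params.obstacles.append(item_type)   # placed to block enemies
    else:
        raise ValueError(f"unknown item type: {item_type}")
    return params


p = GameParameters(bullets=1, health=40)
apply_item(p, "magazine")
```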

The processor 10 sends item-supply information, indicating that the item-supply operation has been performed, to the server 200. The item-supply information includes at least information for specifying the type of the item specified by the item-supply operation. The item-supply information may include other information on the item, such as information indicating the position where the item is to be arranged. The item-supply information is sent to the other user terminals 100 and the HMD set 1000 via the server 200.
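The item-supply information has one required part (the item type) and optional extras such as a placement position. A minimal sketch, assuming a JSON encoding and hypothetical item-type names for the UI images 711A to 711D:

```python
import json

# Hypothetical item-type names for UI images 711A to 711D.
VALID_ITEMS = {"magazine", "first_aid_kit", "triangular_cone", "barricade"}


def build_item_supply_info(item_type, position=None):
    """Encode item-supply information for sending to the server 200."""
    if item_type not in VALID_ITEMS:
        raise ValueError(f"unknown item type: {item_type}")
    info = {"item_type": item_type}        # required: which item was supplied
    if position is not None:
        info["position"] = list(position)  # optional: where to arrange it
    return json.dumps(info)


print(build_item_supply_info("barricade", (1.0, 0.0, 3.0)))
```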

FIGS. 12A to 12D are diagrams showing another example of the field-of-view image displayed on the user terminal 100. Specifically, FIGS. 12A to 12D show an example of a game screen of the main game and illustrate communication between the player and the user terminal 100 during game play.

In the case of FIG. 12A, the user terminal 100 outputs a speech 691 of the avatar object 610. Specifically, the user terminal 100 outputs the speech 691 of the avatar object 610 on the basis of the sound data included in the game progress information. The content of the speech 691 is "OUT OF BULLETS!" spoken by the player 4. In other words, the speech 691 informs each user that no magazines remain (0) and only one bullet is loaded in the gun, so that the means of attacking the enemy objects 671 is likely to be lost.

In FIG. 12A, a balloon is used to visually indicate the speech of the avatar object 610, but the sound is actually output from the speaker of the user terminal 100. In addition to the sound output, the balloon shown in FIG. 12A (that is, a balloon containing the text of the speech) may be displayed in the field-of-view image. The same applies to a speech 692 described below.

Upon receiving a tap on the UI image 702, the user terminal 100 superimposes UI images 705 and 706 (message UI) on the field-of-view image as shown in FIG. 12B. The UI image 705 displays a comment on the avatar object 610 (in other words, the player). The UI image 706 receives a comment-sending operation from the user 3 for sending the input comment.

As an example, upon receiving a tap on the UI image 705, the user terminal 100 causes the touch screen 15 to display a UI image imitating a keyboard (not shown, hereinafter simply referred to as the "keyboard"). The user terminal 100 causes the UI image 705 to display text corresponding to the user's input operations on the keyboard. In the example of FIG. 12B, the text "I'll SEND YOU A MAGAZINE" is displayed in the UI image 705.

As an example, upon receiving a tap on the UI image 706 after the text is input, the user terminal 100 sends comment information, including information indicating the input content (text content) and information indicating the user, to the server 200. The comment information is sent to the other user terminals 100 and the HMD set 1000 via the server 200.
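Building the comment information and fanning it out through the server can be sketched as follows. The JSON fields, the `relay` function, and the string IDs for terminals (`"100A"` etc.) and the HMD set are hypothetical; the sketch also assumes the server does not echo the comment back to the sending terminal, which the description does not state.

```python
import json


def build_comment_info(user_id, text):
    """Encode comment information: the input text plus who sent it."""
    if not text:
        raise ValueError("empty comment")
    return json.dumps({"user": user_id, "text": text})


def relay(message, sender, recipients):
    """Server-side fan-out: forward to every recipient except the sender."""
    return {r: message for r in recipients if r != sender}


msg = build_comment_info("user3", "I'll SEND YOU A MAGAZINE")
deliveries = relay(msg, "100A", ["100A", "100B", "100C", "HMD1000"])
```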