U.S. Pat. No. 12,179,111

STORAGE MEDIUM STORING GAME PROGRAM, GAME SYSTEM, GAME APPARATUS, AND GAME PROCESSING METHOD

Assignee: NINTENDO CO., LTD.; THE POKÉMON COMPANY

Issue Date: May 2, 2024


Abstract

In a first mode, an aiming direction in a virtual space is determined based on a second operation input, and a player character is caused to launch, in the aiming direction, an item that affects a field character disposed on a field in the virtual space, based on a third operation input. In a second mode, the aiming direction is determined, based on the second operation input, and the player character is caused to launch, in the aiming direction, a fighting character that fights, based on the third operation input.

Description

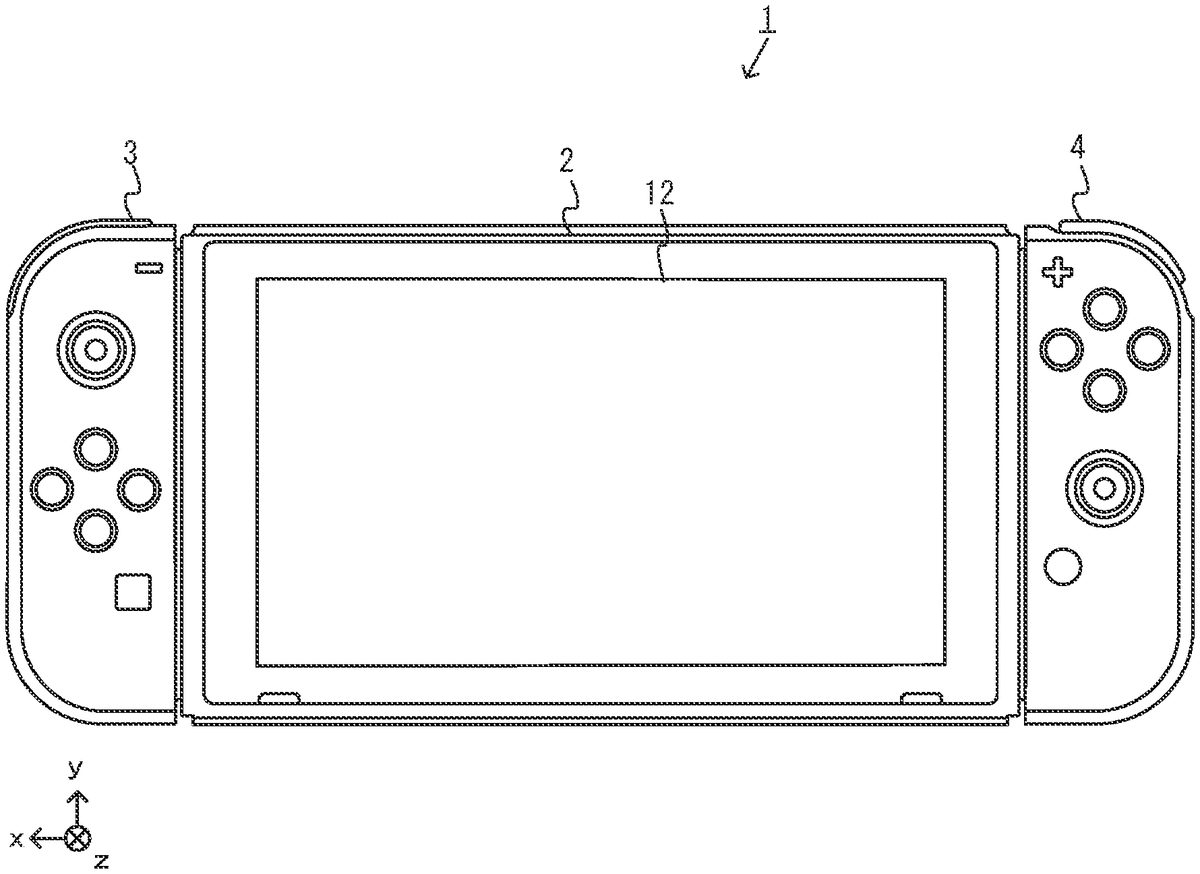

DETAILED DESCRIPTION OF NON-LIMITING EXAMPLE EMBODIMENTS

A game system according to the present non-limiting example will now be described. A non-limiting example of a game system 1 according to the present non-limiting example includes a main body apparatus 2 (an information processing apparatus serving as the main body of a game apparatus in the present non-limiting example), a left controller 3, and a right controller 4. The left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. That is, the user can attach the left controller 3 and the right controller 4 to the main body apparatus 2 and use them as a unified apparatus. The user can also use the main body apparatus 2, the left controller 3, and the right controller 4 separately from each other (see FIG. 2). In the description that follows, a hardware configuration of the game system 1 of the present non-limiting example is described first, followed by the control of the game system 1.

FIG. 1 is a diagram illustrating a non-limiting example of the state where the left controller 3 and the right controller 4 are attached to the main body apparatus 2. As illustrated in FIG. 1, each of the left controller 3 and the right controller 4 is attached to and unified with the main body apparatus 2. The main body apparatus 2 is an apparatus for performing various processes (e.g., game processing) in the game system 1. The main body apparatus 2 includes a display 12. Each of the left controller 3 and the right controller 4 is an apparatus including operation sections with which a user provides inputs.

FIG. 2 is a diagram illustrating a non-limiting example of the state where each of the left controller 3 and the right controller 4 is detached from the main body apparatus 2. As illustrated in FIGS. 1 and 2, the left controller 3 and the right controller 4 are attachable to and detachable from the main body apparatus 2. It should be noted that hereinafter, the left controller 3 and the right controller 4 will occasionally be referred to collectively as a “controller.”

FIG. 3 illustrates six orthogonal views of a non-limiting example of the main body apparatus 2. As illustrated in FIG. 3, the main body apparatus 2 includes an approximately plate-shaped housing 11. In the present non-limiting example, a main surface (in other words, a surface on a front side, i.e., a surface on which the display 12 is provided) of the housing 11 has a generally rectangular shape.

It should be noted that the shape and the size of the housing 11 are optional. As a non-limiting example, the housing 11 may be of a portable size. Further, the main body apparatus 2 alone or the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2 may function as a mobile apparatus. The main body apparatus 2 or the unified apparatus may function as a handheld apparatus or a portable apparatus.

As illustrated in FIG. 3, the main body apparatus 2 includes the display 12, which is provided on the main surface of the housing 11. The display 12 displays an image generated by the main body apparatus 2. In the present non-limiting example, the display 12 is a liquid crystal display device (LCD). The display 12, however, may be a display device of any suitable type.

In addition, the main body apparatus 2 includes a touch panel 13 on the screen of the display 12. In the present non-limiting example, the touch panel 13 is of a type that allows multi-touch input (e.g., a capacitive touch panel). It should be noted that the touch panel 13 may be of any suitable type, e.g., a type that allows single-touch input (e.g., a resistive touch panel).

The main body apparatus 2 includes speakers (i.e., speakers 88 illustrated in FIG. 6) inside the housing 11. As illustrated in FIG. 3, speaker holes 11a and 11b are formed in the main surface of the housing 11. The speakers 88 output sounds through the speaker holes 11a and 11b.

The main body apparatus 2 also includes a left-side terminal 17 that enables wired communication between the main body apparatus 2 and the left controller 3, and a right-side terminal 21 that enables wired communication between the main body apparatus 2 and the right controller 4.

As illustrated in FIG. 3, the main body apparatus 2 includes a slot 23. The slot 23 is provided on an upper side surface of the housing 11. The slot 23 is so shaped as to allow a predetermined type of storage medium to be attached to the slot 23. The predetermined type of storage medium is, for example, a dedicated storage medium (e.g., a dedicated memory card) for the game system 1 and information processing apparatuses of the same type as the game system 1. The predetermined type of storage medium is used to store, for example, data (e.g., saved data of an application or the like) used by the main body apparatus 2 and/or a program (e.g., a program for an application or the like) executed by the main body apparatus 2. Further, the main body apparatus 2 includes a power button 28.

The main body apparatus 2 includes a lower-side terminal 27. The lower-side terminal 27 allows the main body apparatus 2 to communicate with a cradle. In the present non-limiting example, the lower-side terminal 27 is a USB connector (more specifically, a female connector). When the unified apparatus or the main body apparatus 2 alone is placed on the cradle, the game system 1 can display, on a stationary monitor, an image that is generated and output by the main body apparatus 2. Also, in the present non-limiting example, the cradle has the function of charging the unified apparatus or the main body apparatus 2 alone when it is placed on the cradle. The cradle also functions as a hub device (specifically, a USB hub).

FIG. 4 illustrates six orthogonal views of a non-limiting example of the left controller 3. As illustrated in FIG. 4, the left controller 3 includes a housing 31. In the present non-limiting example, the housing 31 has a vertically long shape, i.e., is shaped to be long in an up-down direction (i.e., a y-axis direction illustrated in FIGS. 1 and 4). In the state where the left controller 3 is detached from the main body apparatus 2, the left controller 3 can also be held in the orientation in which the left controller 3 is vertically long. The housing 31 has such a shape and a size that when held in the orientation in which the housing 31 is vertically long, the housing 31 can be held with one hand, particularly the left hand. Further, the left controller 3 can also be held in the orientation in which the left controller 3 is horizontally long. When held in the orientation in which the left controller 3 is horizontally long, the left controller 3 may be held with both hands.

The left controller 3 includes an analog stick 32. As illustrated in FIG. 4, the analog stick 32 is provided on a main surface of the housing 31. The analog stick 32 can be used as a direction input section with which a direction can be input. The user tilts the analog stick 32 and thereby can input a direction corresponding to the direction of the tilt (and input a magnitude corresponding to the angle of the tilt). It should be noted that the left controller 3 may include a directional pad, a slide stick that allows a slide input, or the like as the direction input section, instead of the analog stick. Further, in the present non-limiting example, it is possible to provide an input by pressing the analog stick 32.
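As a rough illustration of how a tilt of the analog stick 32 might be turned into a direction and a magnitude, the following Python sketch assumes that each stick axis is reported as a value in [-1, 1] and applies a hypothetical dead zone. Neither the value range nor the dead-zone handling is specified in this description; the function name is illustrative only.

```python
import math
from typing import Optional, Tuple


def stick_to_direction(x: float, y: float,
                       dead_zone: float = 0.1) -> Tuple[Optional[float], float]:
    """Convert a stick deflection (each axis assumed in [-1, 1]) to (angle, magnitude).

    The angle is in degrees, counterclockwise from the +x (right) axis, in [0, 360).
    The magnitude corresponds to the tilt angle and is clamped to 1.0.
    A deflection inside the (assumed) dead zone is reported as no direction input.
    """
    magnitude = min(math.hypot(x, y), 1.0)
    if magnitude < dead_zone:
        return None, 0.0
    angle = math.degrees(math.atan2(y, x)) % 360.0
    return angle, magnitude
```

For example, a full tilt straight down, `stick_to_direction(0.0, -1.0)`, yields an angle of 270 degrees with magnitude 1.0, while a small resting offset such as `(0.05, 0.0)` is treated as no input.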

The left controller 3 includes various operation buttons. The left controller 3 includes four operation buttons 33 to 36 (specifically, a right direction button 33, a down direction button 34, an up direction button 35, and a left direction button 36) on the main surface of the housing 31. Further, the left controller 3 includes a record button 37 and a “−” (minus) button 47. The left controller 3 includes a first L-button 38 and a ZL-button 39 in an upper left portion of a side surface of the housing 31. Further, the left controller 3 includes a second L-button 43 and a second R-button 44 on the side surface of the housing 31 on which the left controller 3 is attached to the main body apparatus 2. These operation buttons are used to give instructions depending on various programs (e.g., an OS program and an application program) executed by the main body apparatus 2.

The left controller 3 also includes a terminal 42 that enables wired communication between the left controller 3 and the main body apparatus 2.

FIG. 5 illustrates six orthogonal views of a non-limiting example of the right controller 4. As illustrated in FIG. 5, the right controller 4 includes a housing 51. In the present non-limiting example, the housing 51 has a vertically long shape, i.e., is shaped to be long in the up-down direction. In the state where the right controller 4 is detached from the main body apparatus 2, the right controller 4 can also be held in the orientation in which the right controller 4 is vertically long. The housing 51 has such a shape and a size that when held in the orientation in which the housing 51 is vertically long, the housing 51 can be held with one hand, particularly the right hand. Further, the right controller 4 can also be held in the orientation in which the right controller 4 is horizontally long. When held in the orientation in which the right controller 4 is horizontally long, the right controller 4 may be held with both hands.

Similarly to the left controller 3, the right controller 4 includes an analog stick 52 as a direction input section. In the present non-limiting example, the analog stick 52 has the same configuration as that of the analog stick 32 of the left controller 3. Further, the right controller 4 may include a directional pad, a slide stick that allows a slide input, or the like, instead of the analog stick. Further, similarly to the left controller 3, the right controller 4 includes four operation buttons 53 to 56 (specifically, an A-button 53, a B-button 54, an X-button 55, and a Y-button 56) on a main surface of the housing 51. Further, the right controller 4 includes a “+” (plus) button 57 and a home button 58. Further, the right controller 4 includes a first R-button 60 and a ZR-button 61 in an upper right portion of a side surface of the housing 51. Further, similarly to the left controller 3, the right controller 4 includes a second L-button 65 and a second R-button 66.

Further, the right controller 4 includes a terminal 64 for allowing the right controller 4 to perform wired communication with the main body apparatus 2.

FIG. 6 is a block diagram illustrating a non-limiting example of an internal configuration of the main body apparatus 2. The main body apparatus 2 includes components 81 to 91, 97, and 98 illustrated in FIG. 6 in addition to the components illustrated in FIG. 3. Some of the components 81 to 91, 97, and 98 may be implemented as electronic parts on an electronic circuit board, which is contained in the housing 11.

The main body apparatus 2 includes a processor 81. The processor 81 is an information processor for executing various types of information processing to be executed by the main body apparatus 2. For example, the processor 81 may include only a central processing unit (CPU), or may be a system-on-a-chip (SoC) having a plurality of functions such as a CPU function and a graphics processing unit (GPU) function. The processor 81 executes an information processing program (e.g., a game program) stored in a storage section (specifically, an internal storage medium such as a flash memory 84, an external storage medium that is attached to the slot 23, or the like), thereby executing the various types of information processing.

The main body apparatus 2 includes a flash memory 84 and a dynamic random access memory (DRAM) 85 as examples of internal storage media built into the main body apparatus 2. The flash memory 84 and the DRAM 85 are connected to the processor 81. The flash memory 84 is mainly used to store various data (or programs) to be saved in the main body apparatus 2. The DRAM 85 is used to temporarily store various data used in information processing.

The main body apparatus 2 includes a slot interface (hereinafter abbreviated to “I/F”) 91. The slot I/F 91 is connected to the processor 81. The slot I/F 91 is connected to the slot 23, and reads and writes data from and to a predetermined type of storage medium (e.g., a dedicated memory card) attached to the slot 23, in accordance with commands from the processor 81.

The processor 81 reads and writes, as appropriate, data from and to the flash memory 84, the DRAM 85, and each of the above storage media, thereby executing the above information processing.

The main body apparatus 2 includes a network communication section 82. The network communication section 82 is connected to the processor 81. The network communication section 82 communicates (specifically, through wireless communication) with an external apparatus via a network. In the present non-limiting example, as a first communication form, the network communication section 82 connects to a wireless LAN and communicates with an external apparatus, using a method compliant with the Wi-Fi standard. Further, as a second communication form, the network communication section 82 wirelessly communicates with another main body apparatus 2 of the same type, using a predetermined communication method (e.g., communication based on a particular protocol or infrared light communication). It should be noted that the wireless communication in the above second communication form achieves the function of allowing so-called “local communication,” in which the main body apparatus 2 can wirelessly communicate with another main body apparatus 2 located in a closed local network area, and the plurality of main body apparatuses 2 directly communicate with each other to exchange data.

The main body apparatus 2 includes a controller communication section 83. The controller communication section 83 is connected to the processor 81. The controller communication section 83 wirelessly communicates with the left controller 3 and/or the right controller 4. The main body apparatus 2 may communicate with the left and right controllers 3 and 4 using any suitable communication method. In the present non-limiting example, the controller communication section 83 performs communication with the left and right controllers 3 and 4 in accordance with the Bluetooth (registered trademark) standard.

The processor 81 is connected to the left-side terminal 17, the right-side terminal 21, and the lower-side terminal 27. When performing wired communication with the left controller 3, the processor 81 transmits data to the left controller 3 via the left-side terminal 17 and also receives operation data from the left controller 3 via the left-side terminal 17. Further, when performing wired communication with the right controller 4, the processor 81 transmits data to the right controller 4 via the right-side terminal 21 and also receives operation data from the right controller 4 via the right-side terminal 21. Further, when communicating with the cradle, the processor 81 transmits data to the cradle via the lower-side terminal 27. As described above, in the present non-limiting example, the main body apparatus 2 can perform both wired communication and wireless communication with each of the left and right controllers 3 and 4. Further, when the unified apparatus obtained by attaching the left and right controllers 3 and 4 to the main body apparatus 2, or the main body apparatus 2 alone, is attached to the cradle, the main body apparatus 2 can output data (e.g., image data or sound data) to a stationary monitor or the like via the cradle.

Here, the main body apparatus 2 can communicate with a plurality of left controllers 3 simultaneously (or in parallel). Further, the main body apparatus 2 can communicate with a plurality of right controllers 4 simultaneously (or in parallel). Thus, a plurality of users can simultaneously provide inputs to the main body apparatus 2, each using a set of left and right controllers 3 and 4. As a non-limiting example, a first user can provide an input to the main body apparatus 2 using a first set of left and right controllers 3 and 4, and at the same time, a second user can provide an input to the main body apparatus 2 using a second set of left and right controllers 3 and 4.
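One way to picture the simultaneous multi-user input described above is a routing table that maps each controller to the user holding it. The sketch below is hypothetical: the integer controller IDs and the `ControllerSet` and `InputRouter` names are illustrative and do not come from this description.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControllerSet:
    """A pair of left and right controllers used by one user (IDs are hypothetical)."""
    left_id: int
    right_id: int


class InputRouter:
    """Maps each connected controller to the user who is holding it."""

    def __init__(self) -> None:
        self._owner: dict[int, str] = {}

    def register(self, user: str, controllers: ControllerSet) -> None:
        # A controller may belong to only one user at a time.
        for controller_id in (controllers.left_id, controllers.right_id):
            if controller_id in self._owner:
                raise ValueError(f"controller {controller_id} is already assigned")
            self._owner[controller_id] = user

    def route(self, controller_id: int) -> str:
        """Return the user whose input arrived from the given controller."""
        return self._owner[controller_id]
```

With a first user on controllers 1 and 2 and a second user on controllers 3 and 4, operation data arriving from controller 4 would be attributed to the second user.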

Further, the display 12 is connected to the processor 81. The processor 81 displays, on the display 12, a generated image (e.g., an image generated by executing the above information processing) and/or an externally obtained image.

The main body apparatus 2 includes a codec circuit 87 and speakers (specifically, a left speaker and a right speaker) 88. The codec circuit 87 is connected to the speakers 88 and a sound input/output terminal 25 and also connected to the processor 81. The codec circuit 87 controls the input and output of sound data to and from the speakers 88 and the sound input/output terminal 25.

Further, the main body apparatus 2 includes an acceleration sensor 89. In the present non-limiting example, the acceleration sensor 89 detects the magnitudes of accelerations along three predetermined axial directions (e.g., the x-, y-, and z-axes shown in FIG. 1). It should be noted that the acceleration sensor 89 may detect an acceleration along one axial direction or accelerations along two axial directions.

Further, the main body apparatus 2 includes an angular velocity sensor 90. In the present non-limiting example, the angular velocity sensor 90 detects angular velocities about three predetermined axes (e.g., the x-, y-, and z-axes shown in FIG. 1). It should be noted that the angular velocity sensor 90 may detect an angular velocity about one axis or angular velocities about two axes.

The acceleration sensor 89 and the angular velocity sensor 90 are connected to the processor 81. The detection results of the acceleration sensor 89 and the angular velocity sensor 90 are output to the processor 81. Based on the detection results of the acceleration sensor 89 and the angular velocity sensor 90, the processor 81 can calculate information about a motion and/or orientation of the main body apparatus 2.
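The description does not say how the processor 81 derives orientation from the two sensors. A common technique for this, shown here as a swapped-in illustration rather than the method of this description, is a complementary filter that blends the integrated angular velocity (smooth but prone to drift) with the tilt implied by gravity in the acceleration reading (noisy but drift-free). The axis conventions, the units (g and degrees per second), the function name, and the blend weight `alpha` are all assumptions.

```python
import math
from typing import Tuple


def complementary_filter(pitch_deg: float, gyro_rate_dps: float,
                         accel: Tuple[float, float, float], dt: float,
                         alpha: float = 0.98) -> float:
    """One update step of a complementary filter for a pitch estimate in degrees.

    pitch_deg: previous pitch estimate.
    gyro_rate_dps: angular velocity about the pitch axis (degrees per second).
    accel: (ax, ay, az) in units of g; at rest this is the gravity vector.
    dt: time since the previous update (seconds).
    alpha: weight given to the gyro-integrated estimate (assumed value).
    """
    ax, ay, az = accel
    # Tilt implied by gravity alone: noisy, but does not drift.
    accel_pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    # Tilt from integrating angular velocity: smooth, but drifts over time.
    gyro_pitch = pitch_deg + gyro_rate_dps * dt
    return alpha * gyro_pitch + (1.0 - alpha) * accel_pitch
```

Calling this once per sensor sample, with `dt` set to the sampling interval, lets a stale estimate decay toward the gravity-based tilt while still following quick rotations; a full three-axis orientation would more typically be tracked with quaternions.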

The main body apparatus 2 includes a power control section 97 and a battery 98. The power control section 97 is connected to the battery 98 and the processor 81. Further, although not illustrated, the power control section 97 is connected to components of the main body apparatus 2 (specifically, components that receive power supplied from the battery 98, the left-side terminal 17, and the right-side terminal 21). Based on a command from the processor 81, the power control section 97 controls the supply of power from the battery 98 to each of the above components.

Further, the battery 98 is connected to the lower-side terminal 27. When an external charging device (e.g., the cradle) is connected to the lower-side terminal 27, and power is supplied to the main body apparatus 2 via the lower-side terminal 27, the battery 98 is charged with the supplied power.

FIG. 7 is a block diagram illustrating non-limiting examples of the internal configurations of the main body apparatus 2, the left controller 3, and the right controller 4. It should be noted that the details of the internal configuration of the main body apparatus 2 are illustrated in FIG. 6 and therefore are omitted in FIG. 7.

The left controller 3 includes a communication control section 101, which communicates with the main body apparatus 2. As illustrated in FIG. 7, the communication control section 101 is connected to components including the terminal 42. In the present non-limiting example, the communication control section 101 can communicate with the main body apparatus 2 through both wired communication via the terminal 42 and wireless communication not via the terminal 42. The communication control section 101 controls the method for communication performed by the left controller 3 with the main body apparatus 2. That is, when the left controller 3 is attached to the main body apparatus 2, the communication control section 101 communicates with the main body apparatus 2 via the terminal 42. Further, when the left controller 3 is detached from the main body apparatus 2, the communication control section 101 wirelessly communicates with the main body apparatus 2 (specifically, the controller communication section 83). The wireless communication between the communication control section 101 and the controller communication section 83 is performed in accordance with the Bluetooth (registered trademark) standard, for example.
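The attach/detach rule described above can be modeled as a small state machine. The following sketch is a hypothetical model of the communication control section 101: the `Route` enumeration and the method names are illustrative only, and the actual transports (the terminal 42 and Bluetooth) are represented by labels and an in-memory log rather than real I/O.

```python
from enum import Enum


class Route(Enum):
    WIRED = "terminal 42"
    WIRELESS = "Bluetooth"


class CommunicationControl:
    """Hypothetical model of how section 101 picks a route for each transmission."""

    def __init__(self) -> None:
        self.attached = False  # assumed detached until an attach event is observed
        self.sent: list[tuple[Route, bytes]] = []

    def on_attach_changed(self, attached: bool) -> None:
        self.attached = attached

    def send(self, payload: bytes) -> Route:
        # Wired via the terminal while attached to the main body; wireless otherwise.
        route = Route.WIRED if self.attached else Route.WIRELESS
        self.sent.append((route, payload))  # stands in for the real transmission
        return route
```

Because the route is chosen per transmission from the current attachment state, attaching or detaching the controller mid-session switches the communication method without any other bookkeeping.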

Further, the left controller 3 includes a memory 102 such as a flash memory. The communication control section 101 includes, for example, a microcomputer (or a microprocessor) and executes firmware stored in the memory 102, thereby performing various processes.

The left controller 3 includes buttons 103 (specifically, the buttons 33 to 39, 43, 44, and 47). Further, the left controller 3 includes the analog stick (“stick” in FIG. 7) 32. Each of the buttons 103 and the analog stick 32 outputs information regarding an operation performed on itself to the communication control section 101 repeatedly at appropriate timing.

The left controller 3 includes inertial sensors. Specifically, the left controller 3 includes an acceleration sensor 104. Further, the left controller 3 includes an angular velocity sensor 105. In the present non-limiting example, the acceleration sensor 104 detects the magnitudes of accelerations along three predetermined axial directions (e.g., the x-, y-, and z-axes shown in FIG. 4). It should be noted that the acceleration sensor 104 may detect an acceleration along one axial direction or accelerations along two axial directions. In the present non-limiting example, the angular velocity sensor 105 detects angular velocities about three predetermined axes (e.g., the x-, y-, and z-axes shown in FIG. 4). It should be noted that the angular velocity sensor 105 may detect an angular velocity about one axis or angular velocities about two axes. Each of the acceleration sensor 104 and the angular velocity sensor 105 is connected to the communication control section 101. The detection results of the acceleration sensor 104 and the angular velocity sensor 105 are output to the communication control section 101 repeatedly at appropriate timing.

The communication control section 101 acquires information regarding an input (specifically, information regarding an operation or the detection result of a sensor) from each of the input sections (specifically, the buttons 103, the analog stick 32, and the sensors 104 and 105). The communication control section 101 transmits operation data including the acquired information (or information obtained by performing predetermined processing on the acquired information) to the main body apparatus 2. The operation data is transmitted repeatedly, once every predetermined time. It should be noted that the intervals at which the information regarding an input is transmitted from the respective input sections to the main body apparatus 2 may or may not be the same.

The above operation data is transmitted to the main body apparatus 2, whereby the main body apparatus 2 can obtain inputs provided to the left controller 3. That is, the main body apparatus 2 can determine operations on the buttons 103 and the analog stick 32 based on the operation data. Further, the main body apparatus 2 can calculate information regarding the motion and/or the orientation of the left controller 3 based on the operation data (specifically, the detection results of the acceleration sensor 104 and the angular velocity sensor 105).
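To make the flow of operation data concrete, the following sketch packs button states, stick deflection, and the two inertial sensors' readings into a fixed-size packet on the controller side and decodes it on the main body side. The byte layout, the bit assignment of the buttons, and all names are hypothetical; the actual format of the operation data is not given in this description. In a real transmission loop, `encode` would be called once every predetermined time.

```python
import struct
from dataclasses import dataclass

# Hypothetical little-endian layout: uint16 button bitmask, int16 stick x/y,
# then three float32 accelerations and three float32 angular velocities.
PACKET_FORMAT = "<Hhh3f3f"

# Hypothetical bit positions for some of the left controller's buttons.
BUTTON_BITS = {"right": 0, "down": 1, "up": 2, "left": 3,
               "L": 4, "ZL": 5, "minus": 6, "record": 7}


@dataclass(frozen=True)
class OperationData:
    buttons: frozenset  # names of pressed buttons
    stick: tuple        # raw (x, y) deflection as int16 values
    accel: tuple        # (ax, ay, az)
    gyro: tuple         # (wx, wy, wz)


def encode(data: OperationData) -> bytes:
    """Controller side: pack the acquired inputs into one packet."""
    mask = 0
    for name in data.buttons:
        mask |= 1 << BUTTON_BITS[name]
    return struct.pack(PACKET_FORMAT, mask, *data.stick, *data.accel, *data.gyro)


def decode(packet: bytes) -> OperationData:
    """Main body side: recover which buttons are pressed and the sensor values."""
    mask, sx, sy, ax, ay, az, wx, wy, wz = struct.unpack(PACKET_FORMAT, packet)
    pressed = frozenset(n for n, bit in BUTTON_BITS.items() if mask & (1 << bit))
    return OperationData(pressed, (sx, sy), (ax, ay, az), (wx, wy, wz))
```

Note that the sensor values pass through 32-bit floats, so values that are not exactly representable in single precision will come back slightly rounded after decoding.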

The left controller 3 includes a power supply section 108. In the present non-limiting example, the power supply section 108 includes a battery and a power control circuit. Although not illustrated in FIG. 7, the power control circuit is connected to the battery and also connected to components of the left controller 3 (specifically, components that receive power supplied from the battery).

As illustrated in FIG. 7, the right controller 4 includes a communication control section 111, which communicates with the main body apparatus 2. Further, the right controller 4 includes a memory 112, which is connected to the communication control section 111. The communication control section 111 is connected to components including the terminal 64. The communication control section 111 and the memory 112 have functions similar to those of the communication control section 101 and the memory 102, respectively, of the left controller 3. Thus, the communication control section 111 can communicate with the main body apparatus 2 through both wired communication via the terminal 64 and wireless communication not via the terminal 64 (specifically, communication compliant with the Bluetooth (registered trademark) standard). The communication control section 111 controls the method for communication performed by the right controller 4 with the main body apparatus 2.

The right controller 4 includes input sections similar to the input sections of the left controller 3. Specifically, the right controller 4 includes buttons 113, the analog stick 52, and inertial sensors (an acceleration sensor 114 and an angular velocity sensor 115). These input sections have functions similar to those of the input sections of the left controller 3 and operate similarly to the input sections of the left controller 3.

The right controller 4 includes a power supply section 118. The power supply section 118 has a function similar to that of the power supply section 108 of the left controller 3 and operates similarly to the power supply section 108.

As described above, in the game system 1 of the present non-limiting example, the left controller 3 and the right controller 4 are removable from the main body apparatus 2. In addition, when the unified apparatus obtained by attaching the left controller 3 and the right controller 4 to the main body apparatus 2, or the main body apparatus 2 alone, is attached to the cradle, an image (and sound) can be output on an external display device, such as a stationary monitor. The game system 1 will be described below according to a non-limiting embodiment in which an image is displayed on the display 12. It should be noted that in the case where the game system 1 is used in an embodiment in which an image is displayed on the display 12, the game system 1 may be used with the left controller 3 and the right controller 4 attached to the main body apparatus 2 (e.g., the main body apparatus 2, the left controller 3, and the right controller 4 are integrated in a single housing).

In the game system 1, a game is played using a virtual space displayed on the display 12, according to operations performed on the operation buttons and sticks of the left controller 3 and/or the right controller 4, or touch operations performed on the touch panel 13 of the main body apparatus 2. In the present non-limiting example, the user can play, through operations using the operation buttons, the sticks, and the touch panel 13, a game that employs a player character PC and field characters FC, which perform actions on a field in the virtual space, as well as characters such as a fighting character BC that fights against a field character FC on the field.

An overview of a game process executed in the game system 1 will be given with reference to FIGS. 8 to 20. It should be noted that FIG. 8 is a diagram illustrating a non-limiting example of a game image in a first stage of catching of a field character FC. FIG. 9 is a diagram illustrating a non-limiting example of a game image in a second stage of catching of a field character FC. FIG. 10 is a diagram illustrating a non-limiting example of a game image in a third stage of catching of a field character FC. FIG. 11 is a diagram illustrating a non-limiting example of a game image in a first stage of a fight between a field character FC and a fighting character BC. FIG. 12 is a diagram illustrating a non-limiting example of a game image in a second stage of a fight between a field character FC and a fighting character BC. FIG. 13 is a diagram illustrating a non-limiting example of a game image in a third stage of a fight between a field character FC and a fighting character BC. FIG. 14 is a diagram illustrating a non-limiting example of a game image in a fourth stage of a fight between a field character FC and a fighting character BC. FIG. 15 is a diagram illustrating a non-limiting example of a game image in a first stage of collection of a collection object OBJ by a fighting character BC. FIG. 16 is a diagram illustrating a non-limiting example of a game image in a second stage of collection of a collection object OBJ by a fighting character BC. FIG. 17 is a diagram illustrating a non-limiting example of a pictorial representation of a field character FC. FIG. 18 is a diagram illustrating a non-limiting example of a game image of an attack on a boss character MC. FIG. 19 is a diagram illustrating a non-limiting example of a game image in a first stage of a fight between a boss character MC and a fighting character BC. FIG. 20 is a diagram illustrating a non-limiting example of a game image in a second stage of a fight between a boss character MC and a fighting character BC.

First Non-Limiting Embodiment

A game process according to a first non-limiting embodiment will be described. In the first non-limiting embodiment, the player character PC is caused to perform different actions in different modes, switching between a first mode and a second mode. In the first mode, in response to an operation input for causing the player character PC to perform a launching action in the direction indicated by an aiming point M, the player character PC launches, at a field character FC on the field as a target, an item that affects the field character FC. In the second mode, in response to an operation input for causing the player character PC to perform a launching action in the direction indicated by the aiming point M, the player character PC launches, at a field character FC on the field, a fighting character BC that fights against the field character FC.
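The two modes share the same aiming input and the same launch input and differ only in what is launched. The sketch below shows that dispatch under stated assumptions: the `Mode`, `Launch`, `toggle`, and `launch` names are hypothetical, the aiming direction is taken as a 3D vector toward the aiming point M, and the mode is flipped by an unspecified switching input.

```python
import math
from dataclasses import dataclass
from enum import Enum, auto


class Mode(Enum):
    FIRST = auto()   # launch an item that affects a field character FC
    SECOND = auto()  # launch a fighting character BC


@dataclass(frozen=True)
class Launch:
    kind: str
    payload: str
    direction: tuple  # unit vector toward the aiming point M


def toggle(mode: Mode) -> Mode:
    """Hypothetical switching input: flip between the first and second modes."""
    return Mode.SECOND if mode is Mode.FIRST else Mode.FIRST


def launch(mode: Mode, aim: tuple, item: str, fighter: str) -> Launch:
    """Same aiming and launch inputs in both modes; only the launched object differs."""
    length = math.sqrt(sum(c * c for c in aim))
    if length == 0.0:
        raise ValueError("aiming direction must be non-zero")
    direction = tuple(c / length for c in aim)
    if mode is Mode.FIRST:
        return Launch("item", item, direction)
    return Launch("fighting character", fighter, direction)
```

Keeping the aiming and launching logic mode-independent means only the payload selection branches on the mode, which mirrors the description's point that the same operation inputs are reused in both modes.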

In FIG. 8, the display 12 of the game system 1 displays a game image showing the player character PC disposed in a virtual space. The player character PC performs actions in the virtual space according to the user's operations. The game image displayed on the display 12 also shows a field character FC disposed in the virtual space. A plurality of field characters FC are disposed on the field in the virtual space, and their actions are automatically controlled by the processor 81 based on a predetermined algorithm or the like. A user who operates the player character PC can cause the player character PC to perform an action to catch a field character FC and thereby place the field character FC in the user's possession.

In FIG. 8, the player character PC is holding an empty ball item B and is about to throw it in the virtual space. The empty ball item B serves as a catching item: hitting a field character FC on the field with it can catch that field character FC. For example, when an empty ball item B thrown by the player character PC hits a field character FC, it is determined whether or not the field character FC is successfully caught (successful-catch determination). If the result of the successful-catch determination is positive, the field character FC hit by the ball item B is caught and placed in the user's possession.

For example, by performing a predetermined operation input (e.g., pressing down the operation button (ZR button) 61), the user can cause the player character PC to get into a throwing position for throwing a chosen item (e.g., the position illustrated in FIG. 8). The direction in which the player character PC throws the chosen item is indicated by an aiming point M. The aiming point M is moved according to a predetermined operation input (e.g., the direction of inclination of the analog stick 32 or 52, the orientation of the body of the left controller 3 or the right controller 4, or the motion or pointed location of the body of the left controller 3 or the right controller 4). Thereafter, when the user ends the operation input for holding the throwing position (e.g., releases the operation button (ZR button) 61), the player character PC throws the chosen item in the direction indicated by the aiming point M.
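The hold-to-aim, release-to-throw input described above can be sketched as a small per-frame state machine. This is a minimal illustration under stated assumptions, not the patented implementation: the class `ThrowController`, its `update` method, and the representation of the aiming point M as a 2D screen position nudged by a stick vector are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vec2 = Tuple[float, float]


@dataclass
class ThrowController:
    """Hypothetical sketch: hold ZR to aim, release ZR to throw."""
    aiming: bool = False                 # True while the throwing position is held
    aim_point: Vec2 = (0.0, 0.0)         # aiming point M in screen space (assumed 2D)
    thrown: List[Tuple[str, Vec2]] = field(default_factory=list)

    def update(self, zr_pressed: bool, stick: Vec2, item: str) -> None:
        """Call once per frame with the current input state."""
        if zr_pressed:
            # ZR held: stay in the throwing position and move the aiming point.
            self.aiming = True
            self.aim_point = (self.aim_point[0] + stick[0],
                              self.aim_point[1] + stick[1])
        elif self.aiming:
            # ZR just released: throw the chosen item toward the aiming point.
            self.aiming = False
            self.thrown.append((item, self.aim_point))
```

Only the release edge triggers a throw, so holding ZR indefinitely keeps the character aiming without launching anything.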

For example, the user can switch the category of items to be thrown by the player character PC by performing a predetermined operation input (e.g., pressing down the operation button (X button) 55). In the first non-limiting embodiment, there are at least a first mode, in which a first category including a plurality of items that affect a field character FC is chosen, and a second mode, in which a second category including a plurality of fighting characters BC that fight against a field character FC on the field is chosen. The user switches between the categories (modes) by pressing down the operation button 55. The user can also choose an item to be thrown by the player character PC from the chosen category by pressing down the operation button (L button) 38 or the operation button (R button) 60. In the non-limiting example of FIG. 8, projectile information Im1 is displayed, indicating that the first category (first mode) has been chosen and that the user has chosen an empty ball item B from the first category. For example, the first category may include a plurality of ball items having different functions or appearances, or items other than ball items that, when thrown, help the user throw a ball item and catch a field character, for example, by restricting the movement of the field character.
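The category (mode) switch on the X button and the item choice on the L/R buttons could be modeled roughly as follows. The `ProjectileSelector` class, the category names, and the choice to remember the last-selected entry per category are assumptions made for illustration only.

```python
from typing import Dict, List, Tuple


class ProjectileSelector:
    """Hypothetical sketch: X switches category (mode), L/R switch the item."""

    def __init__(self, categories: Dict[str, List[str]]) -> None:
        self.categories = categories
        self.names = list(categories)             # category order, e.g. items then fighters
        self.cat = 0                              # index of the active category (mode)
        self.idx = {n: 0 for n in self.names}     # remembered choice per category (assumption)

    def press_x(self) -> None:
        # Cycle to the next category: first mode -> second mode -> first mode ...
        self.cat = (self.cat + 1) % len(self.names)

    def press_lr(self, step: int) -> None:
        # step = -1 for the L button, +1 for the R button; wraps within the category.
        name = self.names[self.cat]
        self.idx[name] = (self.idx[name] + step) % len(self.categories[name])

    def current(self) -> Tuple[str, str]:
        """Return (category, chosen entry), e.g. what projectile information Im1/Im2 shows."""
        name = self.names[self.cat]
        return name, self.categories[name][self.idx[name]]
```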

When an item chosen from the first category is the projectile, a first aiming point M1 (e.g., an aiming point in a normal display form) is displayed. When an empty ball item B that can catch a field character FC is chosen as the projectile from the first category, the first aiming point M1 can be moved by the aiming operation described above, or alternatively, can be set (locked on) to the location of a field character FC by the user performing a predetermined operation input (e.g., pressing down the operation button (ZL button) 39). When the first aiming point M1 is locked on to a field character FC, a thrown item is more likely to hit that field character FC.
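Lock-on can be thought of as snapping the aiming point to a nearby field character. The sketch below assumes a nearest-target rule within a maximum distance (`max_dist`), which the description does not specify; the function name `lock_on` and the 2D coordinates are hypothetical.

```python
import math
from typing import Dict, Optional, Tuple

Vec2 = Tuple[float, float]


def lock_on(aim: Vec2, targets: Dict[str, Vec2],
            max_dist: float = 5.0) -> Optional[Tuple[str, Vec2]]:
    """Return the field character nearest the aiming point within max_dist, or None."""
    best: Optional[Tuple[str, Vec2]] = None
    best_d = max_dist
    for name, pos in targets.items():
        d = math.dist(aim, pos)
        if d <= best_d:
            # Closer (or equally close) candidate: snap the aiming point to it.
            best, best_d = (name, pos), d
    return best
```

On a successful lock-on, the aiming point M1 would be moved to the returned position; a `None` result leaves M1 where the stick put it.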

When the first aiming point M1 is locked on to a field character FC, catch information Ig, indicating how likely the field character FC is to be caught if it is hit with an empty ball item B, is displayed near the first aiming point M1. For example, the catch information Ig is an indicator showing how likely the result of the successful-catch determination is to be positive when an empty ball item B thrown by the player character PC hits the field character FC. The catch information Ig may indicate one of a plurality of levels of that likelihood, or a numerical value representing the probability or degree of a positive result. The catch information Ig may express the likelihood through a design or text, a size or motion, a color or lightness, or the like. The catch information Ig need not be displayed on the display 12; instead, the likelihood of a positive successful-catch determination may be conveyed by sounds, by vibrations applied to the controller 3 and/or 4, or the like.
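The description allows the catch information Ig to show one of several levels. One possible mapping, assuming equal-width buckets over a probability in [0, 1] (an assumption; the patent gives no bucketing rule), is sketched below with a hypothetical `catch_indicator` function.

```python
def catch_indicator(probability: float, levels: int = 3) -> int:
    """Map a catch probability in [0, 1] to a level 1..levels (hypothetical bucketing)."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    # Equal-width buckets; a probability of exactly 1.0 falls into the top level.
    return min(int(probability * levels) + 1, levels)
```

The returned level could then drive any of the presentations the text lists (icon design, color, sound, or controller vibration).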

FIGS. 9 and 10 illustrate game images displayed on the display 12 in which an empty ball item B thrown by the player character PC hits a field character FC and the field character FC is successfully caught. For example, by ending the operation input that holds the player character PC in the throwing position (e.g., releasing the operation button (ZR button) 61), the user can cause the player character PC to throw the chosen item in the direction indicated by the first aiming point M1. When the empty ball item B hits a field character FC, a scene image showing that the field character FC is hit (and/or caught) is displayed as illustrated in FIG. 9. When the field character FC is successfully caught, a scene image showing the field character FC being put into the empty ball item B, which indicates the catch, is displayed as illustrated in FIG. 10. Thereafter, the caught field character FC is placed in the user's possession. The caught field character FC may then be used by the user as a fighting character BC in subsequent play.

When the empty ball item B thrown by the player character PC does not hit a field character FC, or when the catch fails, a scene image showing that outcome is displayed on the display 12. A failed catch may carry a disadvantage, such as the field character FC escaping, or the field character FC attacking and thereby starting a fight.

Although it is assumed above that hitting the field character FC with an empty ball item B thrown by the player character PC is a condition for catching it, the field character FC may instead be caught when the empty ball item B reaches a predetermined range including the location of the field character FC, irrespective of whether the ball actually hits it. In that case, the likelihood of catching the field character FC may differ depending on whether or not the field character FC is hit.

In addition, the likelihood of catching a field character FC may vary according to the state (emotions, endurance, remaining physical strength, size, movement, or the like) of the field character FC to be caught, or according to the type of item (e.g., the type of empty ball item B) thrown by the player character PC. Even when the likelihood changes, the user can see the change in the catch information Ig displayed near the first aiming point M1.
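The two paragraphs above say the catch likelihood may depend on whether the ball hits or merely lands in range, on the target's state, and on the ball type. The sketch below combines those factors multiplicatively. Every constant, the `catch_probability` and `try_catch` names, and the use of remaining strength as the only state factor are hypothetical choices, not values from the source.

```python
import random

# Hypothetical tuning values; the description gives no numbers.
BALL_FACTOR = {"standard": 1.0, "improved": 1.5}
HIT_FACTOR = 1.0         # the ball actually hit the field character
IN_RANGE_FACTOR = 0.5    # the ball only landed within the catch range


def catch_probability(base: float, ball: str, hit: bool, in_range: bool,
                      strength_ratio: float) -> float:
    """Chance that the successful-catch determination is positive, clamped to [0, 1]."""
    if not (hit or in_range):
        return 0.0
    p = base * BALL_FACTOR[ball] * (HIT_FACTOR if hit else IN_RANGE_FACTOR)
    # Lower remaining strength (ratio in [0, 1]) raises the chance, up to 2x.
    p *= 2.0 - strength_ratio
    return max(0.0, min(p, 1.0))


def try_catch(p: float, rng: random.Random) -> bool:
    """Perform the determination; random() is in [0, 1), so p = 1 always succeeds."""
    return rng.random() < p
```

Passing an explicit `random.Random` instance keeps the determination reproducible in tests; the same `catch_probability` value could also feed the catch information Ig.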

Although in the first non-limiting embodiment an empty ball item B is used as a non-limiting example of an item chosen from the first category (first mode), which includes a plurality of items that affect a field character FC, the first category may include other types of items. For example, the first category may include an item that slows the movement of a field character FC it hits, an item that reduces the physical strength of a field character FC it hits, an item that changes the emotions of a field character FC it hits, and an item that attracts a field character FC. By combining these items, for example, hitting a field character FC with them or placing an attracting item at a location to which the field character FC should be lured, the user can expect to make a subsequently thrown catching item (e.g., an empty ball item B) more likely to catch the field character FC.

In FIG. 11, in the second mode, the player character PC is holding a ball item Bs containing a fighting character BC and is about to throw it in the virtual space. When the ball item Bs containing a fighting character BC is thrown onto the field, the fighting character BC appears in the virtual space. For example, when the player character PC throws the ball item Bs near a field character FC, the fighting character BC emerges from the ball item Bs and starts fighting against the field character FC. The fight starts directly on the field, without a change of location.

For example, by performing a predetermined operation input (e.g., pressing down the operation button (ZR button) 61), the user can cause the player character PC to get into a throwing position for throwing a chosen fighting character BC (ball item Bs) (e.g., the position illustrated in FIGS. 11 and 12). The direction in which the player character PC throws the chosen fighting character BC (ball item Bs) is indicated by the aiming point M. The aiming point M is moved according to a predetermined operation input (e.g., the direction of inclination of the analog stick 32 or 52, the orientation of the body of the left controller 3 or the right controller 4, or the motion or pointed location of the body of the left controller 3 or the right controller 4). Thereafter, when the user ends the operation input for holding the throwing position (e.g., releases the operation button (ZR button) 61), the player character PC throws the chosen fighting character BC (ball item Bs) in the direction indicated by the aiming point M.

As described above, the user can switch to the second category (second mode), which includes a plurality of fighting characters BC, by performing a predetermined operation input (e.g., pressing down the operation button (X button) 55). The user can then choose a fighting character BC for the player character PC to throw from the second category by performing a predetermined operation input (e.g., pressing down the operation button (L button) 38 or the operation button (R button) 60). For example, in the non-limiting examples of FIGS. 11 and 12, projectile information Im2 is displayed, indicating that the second category (second mode) has been chosen and that the user has chosen a predetermined fighting character BC from the second category.

When a fighting character BC (a ball item Bs containing a fighting character BC) is chosen as the projectile from the second category, a second aiming point M2 is displayed. The second aiming point M2 is displayed in a form different from that of the first aiming point M1. As a non-limiting example, instead of the normal display form, the second aiming point M2 is displayed as a colored mark imitating a ball item Bs containing a fighting character BC. Because the second aiming point M2 looks different from the first aiming point M1, the user can tell from the aiming point alone which kind of projectile the player character PC is about to throw.

As illustrated in FIG. 12, when the second aiming point M2 overlays a range in which a field character FC and a fighting character BC can fight against each other, the second aiming point M2 changes to a third aiming point M3. The third aiming point M3 is displayed in a form different from those of the first aiming point M1 and the second aiming point M2. As a non-limiting example, the third aiming point M3 is displayed as the second aiming point M2 with a design indicating a fight added at its center. Because the third aiming point M3 looks different from the first and second aiming points M1 and M2, the user can easily recognize from the aiming point that throwing the ball item Bs containing the fighting character BC will start a fight against the field character FC.
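Choosing among the first, second, and third aiming points can be sketched as a simple selection rule. This assumes, purely for illustration, that the fight-capable range is a circle of radius `fight_radius` around each field character; the `Reticle` enum and `reticle_for` function are hypothetical names.

```python
import math
from enum import Enum
from typing import Iterable, Tuple

Vec2 = Tuple[float, float]


class Reticle(Enum):
    M1 = "normal"                    # item from the first category
    M2 = "ball mark"                 # fighting character from the second category
    M3 = "ball mark + fight design"  # M2 overlaying a fight-capable range


def reticle_for(category: str, aim: Vec2, field_chars: Iterable[Vec2],
                fight_radius: float = 3.0) -> Reticle:
    """Pick the aiming-point form from the active category and the aim position."""
    if category == "items":
        return Reticle.M1
    # Second category: upgrade to M3 when the aim point overlays a fight range.
    for pos in field_chars:
        if math.dist(aim, pos) <= fight_radius:
            return Reticle.M3
    return Reticle.M2
```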

In FIG. 13, the display 12 displays a game image in which a fighting character BC that has appeared from a ball item Bs thrown by the player character PC is fighting against a field character FC. For example, by ending the operation input that holds the player character PC in the throwing position (e.g., releasing the operation button (ZR button) 61), the user can cause the player character PC to throw the ball item Bs containing the chosen fighting character BC in the direction indicated by the third aiming point M3. When the ball item Bs reaches a range in which the fighting character BC can fight against the field character FC, the fighting character BC appears within that range and then starts fighting against the field character FC. Thus, in the first non-limiting embodiment, because the category (mode) of the projectile can be switched, the same action of the player character PC, i.e., throwing a projectile in the direction indicated by the aiming point M, can bring about a variety of situations for the field character FC on the field.

While a fighting character BC is fighting against a field character FC, a gauge G1 indicating a state of the field character FC is displayed at a position set according to the location of the field character FC. The state indicated by the gauge G1 includes at least a parameter related to the remaining physical strength of the field character FC during the fight against the fighting character BC. When an attack by the fighting character BC on the field character FC is effective, the parameter gradually decreases according to the attack. When the remaining physical strength indicated by the gauge G1 reaches zero, the fighting character BC wins the fight. As described below, the state indicated by the gauge G1 also indicates how likely it is to catch the field character FC during the fight.
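The gauge G1 behavior (decrease on effective attacks, win at zero) maps onto a small data class. The class name `FightGauge`, integer strength values, and the clamp at zero are assumptions for this sketch.

```python
from dataclasses import dataclass


@dataclass
class FightGauge:
    """Hypothetical model of the remaining-strength parameter shown by gauge G1."""
    max_strength: int
    strength: int

    def apply_attack(self, damage: int, effective: bool) -> bool:
        """Reduce strength for an effective attack; return True once the fighting character wins."""
        if effective:
            self.strength = max(0, self.strength - damage)
        return self.strength == 0

    @property
    def ratio(self) -> float:
        # Fraction of strength remaining; usable for drawing the gauge or for catch logic.
        return self.strength / self.max_strength
```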

As illustrated in FIG. 14, while a fighting character BC is fighting against a field character FC, the user can control actions of the player character PC and/or the fighting character BC by choosing commands. For example, during the fight, a plurality of command-indicating images C for choosing a command are displayed. In FIG. 14, as a non-limiting example of the command-indicating images C, an attack command, an item command, an appear/disappear command, and an escape command are displayed. The user can choose any of the commands by performing an operation input using an input section of the left controller 3 or the right controller 4, or the touch panel 13 of the main body apparatus 2.

The user can control an action of a fighting character BC by performing an operation input for choosing the attack command. As a non-limiting example, when the attack command is chosen, the user is prompted to choose one of a plurality of kinds of attack. By performing an operation of choosing one from the plurality of kinds of attack, the user can cause a fighting character BC to perform an attack action corresponding to the chosen kind of attack.

The user can control an action of the player character PC by performing an operation input for choosing the item command. As a non-limiting example, when the item command is chosen, the user is prompted to choose one of a plurality of items including a catching item. By performing an operation of choosing an item to be used from the plurality of items, the user can cause the player character PC to perform an action of using the chosen item. As a non-limiting example, by performing an operation of choosing a catching item for catching a field character FC during the fight, the user can cause the player character PC to perform an action of catching a field character FC using the catching item.

A catching item used during a fight may be the same as or similar to the above ball item B. For example, when the command to use a catching item is chosen during the fight, a scene in which the player character PC throws the catching item at the field character FC is displayed. Whether or not the field character FC is successfully caught is decided by a successful-catch determination, as in the non-fight state. During the fight, the successful-catch determination is based on the state of the field character FC as changed by the fight, i.e., the state indicated by the gauge G1. Specifically, when a state of the field character FC changed by the fight (e.g., its remaining physical strength) falls to a predetermined threshold, the result of the successful-catch determination becomes more likely to be positive. The threshold may be changed according to at least one of the type of the field character FC, the type of the chosen catching item, an ability value of the player character PC, an ability value of the fighting character BC, and the like. During the fight, too, when the result of the successful-catch determination is positive, the field character FC is caught through the catching-item command and placed in the user's possession. As another non-limiting example, while a fighting character BC is fighting against a field character FC, the user may cause the player character PC to catch the field character FC by performing the same throwing operation used outside a fight, i.e., throwing the catching item in the direction indicated by the aiming point M, instead of choosing a command.
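The in-fight catch rule, where the determination becomes more likely once remaining strength falls to a threshold that may itself be adjusted, might look like the sketch below. The step function, the default probabilities, and the additive `modifiers` are all hypothetical; the description states only that the threshold may vary with the listed factors.

```python
from typing import Iterable


def fight_catch_probability(strength_ratio: float, base: float = 0.2,
                            boosted: float = 0.7, threshold: float = 0.25) -> float:
    """Hypothetical step rule: the chance jumps once remaining strength reaches the threshold."""
    return boosted if strength_ratio <= threshold else base


def adjusted_threshold(base_threshold: float, modifiers: Iterable[float]) -> float:
    """Shift the threshold by hypothetical per-factor offsets (character type,
    catching item type, player/fighting character ability values), clamped to [0, 1]."""
    return min(1.0, max(0.0, base_threshold + sum(modifiers)))
```

In use, `strength_ratio` would come from the gauge G1 state, and the result would feed the same random determination used outside a fight.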

By performing an operation input of choosing the appear/disappear command, the user can cause a fighting character BC to disappear, or cause another fighting character BC to appear, during the fight. The newly appearing fighting character BC may replace the existing fighting character BC, or may appear in addition to it. As a non-limiting example, when the appear/disappear command is chosen, the user is prompted to choose one of a plurality of characters to appear; by choosing one of them, the user can cause the player character PC to make the chosen character appear as a fighting character BC.

By performing an operation input of choosing the escape command, the user can end the fight between the fighting character BC and the field character FC and cause the player character PC to escape from the field character FC. At that time, the fighting character BC that has appeared may be retrieved by the player character PC and removed from the field, or may be left on the field.
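The four fight commands shown in FIG. 14 can be dispatched from a single handler. In this sketch the fight state is a plain dictionary; the `Command` enum, `handle_command`, and the `add` flag (choosing between replacing and adding a fighting character) are illustrative assumptions rather than anything the description defines.

```python
from enum import Enum, auto
from typing import Any, Dict, Optional


class Command(Enum):
    ATTACK = auto()
    ITEM = auto()
    APPEAR_DISAPPEAR = auto()
    ESCAPE = auto()


def handle_command(cmd: Command, fight: Dict[str, Any],
                   choice: Optional[str] = None, add: bool = False) -> Dict[str, Any]:
    """Apply one chosen command to a hypothetical fight-state dictionary."""
    if cmd is Command.ATTACK:
        # The fighting character performs the chosen kind of attack.
        fight["log"].append(("attack", choice))
    elif cmd is Command.ITEM:
        # The player character uses the chosen item (e.g. a catching item).
        fight["log"].append(("item", choice))
    elif cmd is Command.APPEAR_DISAPPEAR:
        # Either add another fighting character or replace the current one.
        fight["fighters"] = fight["fighters"] + [choice] if add else [choice]
    elif cmd is Command.ESCAPE:
        # End the fight; the player character escapes from the field character.
        fight["active"] = False
    return fight
```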

Thus, in a fight between a fighting character BC and a field character FC, the field character FC can be caught by choosing the command to use a catching item as in the non-fight state. Therefore, the user can choose whether to catch a field character FC without fighting against the field character FC using a fighting character BC, or to catch a field character FC by performing the fight, resulting in a game having various strategic aspects.

Although in the first non-limiting embodiment, a fighting character BC is released in the virtual space by the player character PC throwing a ball item Bs containing the fighting character BC in the direction indicated by the aiming point M, a fighting character BC may be released in the virtual space by the player character PC directly throwing the fighting character BC.

The location and orientation of a virtual camera for generating the game image displayed on the display 12 may be set so that the virtual camera is behind the player character PC with the player character PC within its field of view, or so that it provides the first-person viewpoint of the player character PC. In either case, the location and/or orientation of the virtual camera may be changed according to the user's operation input. Depending on the location or orientation of the player character PC in the virtual space, a field character FC may then be outside the field of view during the fight, or the gauge G1 indicating the state of the field character FC may be outside the display range. If the location and/or orientation of the virtual camera can be changed by the user's operation input even during a fight between a fighting character BC and a field character FC, then even when a fight starts while the field character FC is not displayed, the user can bring the gauge G1, i.e., the indicator that affects catching the field character FC, into view. The player character PC may also be allowed to move freely during a fight according to the user's operation input. Therefore, whatever the situation in which a fight starts, the user can subsequently adjust the camera appropriately, so a fight can be started freely regardless of the situation.

In addition, in the first non-limiting embodiment, a fighting character BC appearing from a ball item Bs can be caused to perform an action other than fighting on the field. For example, when the player character PC throws a ball item Bs containing a fighting character BC onto the field in the virtual space, the fighting character BC may simply appear on the field, or may appear and perform a predetermined action on a virtual object OBJ disposed on the field.

For example, as illustrated in FIG. 15, a collection object OBJ is disposed on the field in the virtual space. In the first non-limiting embodiment, the player character PC may collect the collection object OBJ by directly touching it, or a fighting character BC may be caused to appear and collect the collection object OBJ.

In FIG. 15, the player character PC is holding a ball item Bs containing a fighting character BC, as in FIG. 11, and is in a throwing position for throwing the chosen fighting character BC (ball item Bs) in response to a predetermined operation input (e.g., pressing down the operation button (ZR button) 61). In the non-limiting example of FIG. 15, the projectile information Im2 is displayed, indicating that the second category (second mode) has been chosen and that the user has chosen a predetermined fighting character BC from the second category.

As described above, when a fighting character BC (a ball item Bs containing the fighting character BC) chosen from the second category is the projectile, the second aiming point M2 is displayed. When the second aiming point M2 overlays a range in which an action of collecting a collection object OBJ is possible, the second aiming point M2 changes to a fourth aiming point M4. The fourth aiming point M4 is displayed in a form different from those of the first aiming point M1, the second aiming point M2, and the third aiming point M3. As a non-limiting example, the fourth aiming point M4 is displayed as the second aiming point M2 with a design imitating a portion of the fighting character BC added at its center. Because the fourth aiming point M4 looks different from the first, second, and third aiming points M1, M2, and M3, the user can easily recognize from the aiming point that throwing the ball item Bs containing the fighting character BC will make the fighting character BC appear in a state other than the fight state.

In FIG. 16, the display 12 displays a game image in which a fighting character BC that has appeared from a ball item Bs thrown by the player character PC is collecting a collection object OBJ. For example, by ending the operation input that holds the player character PC in the throwing position (e.g., releasing the operation button (ZR button) 61), the user can cause the player character PC to throw the ball item Bs containing the chosen fighting character BC in the direction indicated by the fourth aiming point M4. When the ball item Bs reaches a range in which the fighting character BC can collect the collection object OBJ, the fighting character BC appears within that range and then starts collecting the collection object OBJ.
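What a thrown ball item Bs leads to depends on where it lands: a fight, a collection action, or simply the fighting character appearing. Assuming circular ranges and giving a nearby field character priority over a collection object (both assumptions not stated in the description), the outcome could be resolved with a hypothetical `resolve_landing` function like this.

```python
import math
from typing import Dict, Optional, Tuple

Vec2 = Tuple[float, float]


def resolve_landing(landing: Vec2, field_chars: Dict[str, Vec2],
                    collectibles: Dict[str, Vec2], fight_r: float = 3.0,
                    collect_r: float = 2.0) -> Tuple[str, Optional[str]]:
    """Decide what the fighting character does where its ball lands (hypothetical radii)."""
    for name, pos in field_chars.items():
        if math.dist(landing, pos) <= fight_r:
            return ("fight", name)        # start a fight on the field
    for name, pos in collectibles.items():
        if math.dist(landing, pos) <= collect_r:
            return ("collect", name)      # collect the collection object OBJ
    return ("appear", None)               # just appear on the field
```

The same range tests could drive the aiming-point form (M3 for a fight range, M4 for a collection range) before the throw.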

In the first non-limiting embodiment, information about a field character FC to which the aiming point M is locked on can be displayed (pictorial representation). For example, when a predetermined operation input (e.g., pressing down the operation button (down button) 34 or the down button of the directional pad) is performed while the first aiming point M1 is locked on to the field character FC as illustrated in FIG. 8, i.e., when the operation for displaying the pictorial representation is performed during the lock-on operation, information about the field character FC is displayed as illustrated in FIG. 17. This information includes mission information about the history of in-game missions, including at least the number of field characters FC of the locked-on kind that have been caught by the player character PC and the number of fights against that kind of field character FC. In the non-limiting example of the pictorial representation illustrated in FIG. 17, for a field character A, one of the plurality of field characters FC to which the aiming point M can be locked on, the history of each of the following features is displayed as information about the field character FC: the number of catches (the number of times a field character A was caught), the number of heavy-size catches (the number of times a relatively heavy field character A was caught), the number of fights (the number of fights against a field character A), the number of field characters A beaten (the number of field characters A beaten in fights), and the number of types of field characters A observed (the number of types of field characters A that appeared in the virtual space and were displayed on the display 12).
In the non-limiting example of the pictorial representation of FIG. 17, a mission to be accomplished and the progress of that mission are displayed for each feature. As a non-limiting example of the mission information, the value required to accomplish the mission (the required number of times) is displayed for each stage of each feature. For a mission that has already been accomplished, a mark indicating completion (a check mark in the non-limiting example of FIG. 17) is attached to the required value.
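The per-stage mission display with check marks reduces to comparing a recorded count against each stage's required value. The function names, the row format, and the example stage targets used in testing are hypothetical.

```python
from typing import List, Optional


def mission_rows(feature: str, count: int, stage_targets: List[int]) -> List[str]:
    """One display row per stage; a check mark marks stages already accomplished."""
    return [f"{feature}: {t}{' ✓' if count >= t else ''}" for t in stage_targets]


def next_target(count: int, stage_targets: List[int]) -> Optional[int]:
    """Required value of the first unaccomplished stage, or None if all are done."""
    return next((t for t in stage_targets if count < t), None)
```

For example, with 12 recorded catches and stage targets of 1, 10, and 25, the first two rows carry a check mark and the next target is 25.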

Thus, the mission history for a field character FC on the field is displayed and can be consulted when deciding whether to catch the field character FC or fight against it. Information about field characters FC other than the field character A to which the aiming point is locked on may also be displayed. For example, in the non-limiting example of FIG. 17, a tag is provided at the right edge of the display screen for each field character FC (e.g., field characters A to E), and information about another field character FC can be displayed by choosing the corresponding tag.

Second Non-Limiting Embodiment

A game process according to a second non-limiting embodiment will be described. In this non-limiting embodiment, the player character PC can fight against a boss character MC, which is a non-limiting example of a field character disposed on a field in the virtual space, and the game process is performed on the boss character MC. The boss character MC appears on the same field in the same virtual space as the field characters FC, moves toward the player character PC, and attacks it. The user may therefore operate the player character PC so that it evades attacks from the boss character MC, or so that it hits the boss character MC with a boss attack item AI. In the second non-limiting embodiment, the player character PC performs different actions depending on which of two modes, a first mode or a second mode, is active. In the first mode, in response to an operation input for causing the player character PC to perform a launching action in the direction indicated by an aiming point M, the player character PC launches an item that affects the boss character MC at the boss character MC on the field as a target. In the second mode, in response to the same operation input, the player character PC launches a fighting character BC, which fights against the boss character MC, at the boss character MC on the field.

In FIG. 18, the display 12 displays a game image in which the player character PC and the boss character MC are disposed in the virtual space. For example, the boss character MC appears in the virtual space in a special event (e.g., a boss fight event) as the game progresses, and, like a field character FC, its actions on the field are automatically controlled by the processor 81 based on a predetermined algorithm or the like. The user who operates the player character PC can have the player character PC or a fighting character BC fight against the boss character MC. Unlike the field characters FC described above, the boss character MC may be uncatchable.

In FIG. 18, the player character PC is holding a boss attack item AI and is about to throw it in the virtual space. The boss fight event progresses when the boss character MC on the field is hit with the boss attack item AI. For example, a boss state parameter indicating the state of the boss character MC in the boss fight event is provided, and this parameter is reduced when a boss attack item AI hits the boss character MC. As a non-limiting example, when a boss attack item AI thrown by the player character PC hits the boss character MC, the attack is assessed based on the site of the hit, the state of the boss character MC, and the like, and a reduction amount based on that assessment is subtracted from the boss state parameter of the boss character MC. When the boss state parameter is reduced to a threshold (e.g., zero), the boss character MC is beaten and the boss fight event is cleared. In the non-limiting example game image of FIG. 18, a gauge G2 indicating the remaining amount of the boss state parameter of the boss character MC is displayed at an upper portion of the display screen.
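The assessment-and-subtraction step can be sketched as follows. The patent says only that the reduction depends on the hit site and the boss state; the multiplier tables, their values, and the function name `apply_hit` are hypothetical.

```python
from typing import Tuple

# Hypothetical assessment weights; the patent gives no concrete numbers.
SITE_MULTIPLIER = {"head": 2.0, "body": 1.0, "tail": 0.5}
STATE_MULTIPLIER = {"normal": 1.0, "stunned": 1.5}

def apply_hit(boss_param: float, base_damage: float, site: str, state: str,
              threshold: float = 0.0) -> Tuple[float, bool]:
    """Subtract an assessed reduction; report whether the boss fight event is cleared."""
    reduction = (base_damage
                 * SITE_MULTIPLIER.get(site, 1.0)     # unknown sites count as neutral
                 * STATE_MULTIPLIER.get(state, 1.0))  # unknown states count as neutral
    new_param = max(threshold, boss_param - reduction)  # never drop below the threshold
    return new_param, new_param <= threshold
```

The returned flag corresponds to the boss character MC being beaten; a gauge such as G2 could display `new_param` relative to its starting value.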

In the boss fight event, the user can also cause the player character PC to get into a throwing position for throwing a chosen boss attack item AI (e.g., the position illustrated in FIG. 18) by performing a predetermined operation input (e.g., pressing down the operation button (ZR button) 61). The direction in which the player character PC throws the chosen boss attack item AI is indicated by a first aiming point M1, whose location is moved according to a predetermined operation input (e.g., the direction of inclination of the analog stick 32 or 52, the orientation of the body of the left controller 3 or the right controller 4, or the motion or pointed location of the body of the left controller 3 or the right controller 4). When the user then ends the operation input for getting into the throwing position (e.g., releases the operation button (ZR button) 61), the player character PC throws the chosen boss attack item AI in the direction indicated by the first aiming point M1.
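The press-aim-release sequence behaves like a small per-frame state machine. In the Python sketch below, `ThrowController`, the 2D aim coordinates, and the analog-stick-only input are simplifying assumptions; the patent also allows controller orientation or motion to move the aiming point.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

@dataclass
class ThrowController:
    aiming: bool = False  # True while the player character holds the throwing position
    aim: List[float] = field(default_factory=lambda: [0.0, 0.0])  # aiming point M1 (2D, assumed)
    thrown: List[Tuple[str, Tuple[float, float]]] = field(default_factory=list)

    def update(self, zr_pressed: bool, stick: Tuple[float, float], item: str,
               speed: float = 1.0) -> None:
        """Process one frame of input."""
        if zr_pressed:
            self.aiming = True                 # get into (or stay in) the throwing position
            self.aim[0] += stick[0] * speed    # stick tilt moves the aiming point
            self.aim[1] += stick[1] * speed
        elif self.aiming:
            # ZR released after aiming: throw toward the current aiming point
            self.thrown.append((item, (self.aim[0], self.aim[1])))
            self.aiming = False
```

Releasing ZR without having pressed it does nothing, so a throw only follows a completed press-and-release.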

In the boss fight event, the user can also change the category of the item to be thrown by the player character PC by performing a predetermined operation input (e.g., pressing down the operation button (X button) 55). In the second non-limiting embodiment, there are at least the first mode, in which a first category including a plurality of items that affect the boss character MC is chosen, and the second mode, in which a second category including a plurality of fighting characters BC that fight against the boss character MC on the field is chosen. The user switches between these categories (modes) by pressing down the operation button 55, and chooses the item to be thrown by the player character PC from the chosen category by pressing down the operation button (L button) 38 or the operation button (R button) 60. For example, in the non-limiting example of FIG. 18, projectile information Im3 is displayed, indicating that the first category (first mode) has been chosen and that the user has chosen a boss attack item AI from it. In the boss fight event, when a boss attack item AI is chosen as the projectile from the first category, the first aiming point M1 (e.g., an aiming point in a normal display form) is also displayed.
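Category switching with the X button and item cycling with the L and R buttons can be sketched as two wrap-around indices. `ProjectileSelector` is a hypothetical name, and remembering the last-chosen item separately for each category is an assumption, not something the patent states.

```python
from typing import Dict, List, Tuple

class ProjectileSelector:
    def __init__(self, categories: Dict[str, List[str]]):
        self.categories = categories
        self.names = list(categories)       # e.g. ["first", "second"]
        self.cat_index = 0                  # start in the first category (first mode)
        self.item_index = {n: 0 for n in self.names}  # assumed: remembered per category

    def press_x(self) -> None:
        """Cycle to the next category (mode)."""
        self.cat_index = (self.cat_index + 1) % len(self.names)

    def press_lr(self, step: int) -> None:
        """Cycle items within the current category: L passes -1, R passes +1."""
        name = self.names[self.cat_index]
        self.item_index[name] = (self.item_index[name] + step) % len(self.categories[name])

    @property
    def current(self) -> Tuple[str, str]:
        name = self.names[self.cat_index]
        return name, self.categories[name][self.item_index[name]]
```

Python's `%` returns a non-negative result for a positive modulus, so pressing L on the first item wraps to the last one.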

As described above, when the user ends the operation input for getting into the throwing position (e.g., releases the operation button (ZR button) 61), the player character PC throws the chosen boss attack item AI in the direction indicated by the first aiming point M1. If the boss attack item AI thrown by the player character PC hits the boss character MC, the boss state parameter of the boss character MC is reduced based on the attack assessment described above. If it fails to hit the boss character MC, the boss state parameter is either maintained or increased by a predetermined amount.

Although in the foregoing the condition for reducing the boss state parameter of the boss character MC is that a boss attack item AI thrown by the player character PC hits the boss character MC, the boss state parameter may instead be reduced when the boss attack item AI reaches a predetermined range including the location of the boss character MC, regardless of whether it directly hits the boss character MC.
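The hit-or-miss handling, including the range-based variant, can be sketched with a distance test. Here `hit_radius` stands for either a direct-hit collision radius or the larger predetermined range; `resolve_throw`, `miss_penalty`, and `max_param` are hypothetical, and the fixed `reduction` stands in for the assessed amount.

```python
import math
from typing import Sequence

def resolve_throw(boss_param: float, landing: Sequence[float], boss_pos: Sequence[float],
                  reduction: float, hit_radius: float = 1.0,
                  miss_penalty: float = 0.0, max_param: float = 100.0) -> float:
    """Return the updated boss state parameter after one thrown boss attack item."""
    if math.dist(landing, boss_pos) <= hit_radius:
        return max(0.0, boss_param - reduction)      # hit (or within range): reduce
    # Miss: keep the parameter (penalty 0) or raise it by a fixed amount, capped.
    return min(max_param, boss_param + miss_penalty)
```

Setting `miss_penalty` to zero reproduces the "maintained" option; a positive value reproduces the "increased by a predetermined amount" option.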

Although the second non-limiting embodiment uses a boss attack item AI as a non-limiting example of an item chosen from the first category (first mode) including a plurality of items that affect the boss character MC, the first category may include other types of items. For example, it may include an item that slows the movement of the boss character MC when it hits the boss character MC, an item that changes the emotions of the boss character MC when it hits the boss character MC, and an item that attracts the boss character MC. Combining these items, for example by hitting the boss character MC with one item and placing an attracting item at the location to which the boss character MC should be drawn, can be expected to make it more likely that a boss attack item AI hits the boss character MC.
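The supporting items can be modeled as effects that mutate the boss character's state. In this Python sketch the `Boss` fields, the item names, the halving factor, and the `"agitated"` emotion are all hypothetical placeholders for effects the patent describes only in general terms.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass
class Boss:
    speed: float = 1.0                              # movement speed multiplier
    emotion: str = "calm"                           # hypothetical emotion label
    attract_target: Optional[Tuple[float, float]] = None  # where the boss is drawn to

def apply_item(boss: Boss, item: str, location: Tuple[float, float]) -> None:
    """Apply a first-category support item to the boss character."""
    if item == "slow":
        boss.speed *= 0.5                # assumed slowdown factor
    elif item == "emotion":
        boss.emotion = "agitated"        # assumed emotional change
    elif item == "attract":
        boss.attract_target = location   # boss heads toward the item's location
    else:
        raise ValueError(f"unknown item: {item}")
```

A slowed boss drawn to a known location is easier to target, which is the combined effect the paragraph above describes.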

In the second non-limiting embodiment, a fighting character BC may also be caused to appear on the field and fight against the boss character MC. In FIG. 19, the player character PC is holding a ball item Bs containing a fighting character BC and is about to throw it in the virtual space. When the ball item Bs containing a fighting character BC is thrown onto the field in the boss fight event, the fighting character BC appears in the virtual space. For example, when the player character PC throws the ball item Bs near the boss character MC, the fighting character BC appears from the ball item Bs and starts fighting against the boss character MC. It should be noted that the fight starts directly on the field, without a change of location.

For example, in the boss fight event, the user can also cause the player character PC to get into a throwing position for throwing a chosen fighting character BC (ball item Bs) (e.g., the position illustrated in FIG. 19) by performing a predetermined operation input (e.g., pressing down the operation button (ZR button) 61). The direction in which the player character PC throws the chosen fighting character BC (ball item Bs) is indicated by a second aiming point M2, whose location is moved according to a predetermined operation input (e.g., the direction of inclination of the analog stick 32 or 52, the orientation of the body of the left controller 3 or the right controller 4, or the motion or pointed location of the body of the left controller 3 or the right controller 4). As illustrated in FIG. 19, in the boss fight event, when the second aiming point M2 overlaps a range in which the boss character MC and the fighting character BC can fight against each other, the second aiming point M2 changes to a third aiming point M3.
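Choosing which aiming point to display reduces to a category check plus an overlap test. The sketch below assumes the fight range is a circle of radius `fight_range` around the boss character; the function name and the circular shape are assumptions.

```python
import math
from typing import Sequence

def aiming_form(category: str, aim_pos: Sequence[float], boss_pos: Sequence[float],
                fight_range: float) -> str:
    """Return which aiming point form (M1, M2 or M3) to display."""
    if category == "first":
        return "M1"                      # items: normal aiming point
    if math.dist(aim_pos, boss_pos) <= fight_range:
        return "M3"                      # fighter aimed inside the fight range
    return "M2"                          # fighter aimed elsewhere
```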

In the boss fight event, the user can also change the category to the second category (second mode), which includes a plurality of fighting characters BC, by performing a predetermined operation input (e.g., pressing down the operation button (X button) 55). The user can then choose the fighting character BC to be thrown by the player character PC from the chosen second category by performing a predetermined operation input (e.g., pressing down the operation button (L button) 38 or the operation button (R button) 60). For example, in the non-limiting examples of FIGS. 19 and 20, projectile information Im2 is displayed, indicating that the second category (second mode) has been chosen and that the user has chosen a predetermined fighting character BC from it.

In FIG. 20, the display 12 shows a game image in which a fighting character BC that has appeared from a ball item Bs thrown by the player character PC is fighting against the boss character MC. For example, in the boss fight event, the user can cause the player character PC to throw a ball item Bs containing a chosen fighting character BC in the direction indicated by the third aiming point M3 by ending the operation input for getting into the throwing position (e.g., releasing the operation button (ZR button) 61). When the ball item Bs thrown by the player character PC reaches a range in which the fighting character BC can fight against the boss character MC, the fighting character BC appears in that range and then starts fighting against the boss character MC. Thus, in the second non-limiting embodiment, because the category (mode) of the projectile can be changed, the same action of the player character PC, namely throwing a projectile in the direction indicated by the aiming point M, can bring about various situations for the boss character MC on the field.
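A simple way to illustrate "the fighting character appears when the ball reaches the fight range" is to march the ball along the aim direction and stop at the first point inside the range. This is a hypothetical 2D, straight-line sketch; `throw_ball`, `step`, and `max_dist` are assumptions, and a real throw would follow a trajectory with gravity and collisions.

```python
import math
from typing import Optional, Sequence, Tuple

def throw_ball(start: Sequence[float], direction: Sequence[float],
               boss_pos: Sequence[float], fight_range: float,
               step: float = 0.5, max_dist: float = 30.0) -> Optional[Tuple[float, float]]:
    """Return where the fighting character appears, or None if the ball never enters the range."""
    norm = math.hypot(direction[0], direction[1])
    if norm == 0.0:
        raise ValueError("direction must be non-zero")
    ux, uy = direction[0] / norm, direction[1] / norm  # unit aim direction
    x, y = float(start[0]), float(start[1])
    travelled = 0.0
    while travelled <= max_dist:
        if math.dist((x, y), boss_pos) <= fight_range:
            return (x, y)  # the fight starts here, directly on the field
        x += ux * step
        y += uy * step
        travelled += step
    return None
```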

While a fighting character BC is fighting against the boss character MC, a gauge G3 indicating a state of the boss character MC is displayed at a location set according to the location of the boss character MC. The state indicated by the gauge G3 includes at least a parameter related to the remaining physical strength of the boss character MC during the fight against the fighting character BC. When an attack by the fighting character BC on the boss character MC is effective, the parameter gradually decreases according to the attack. When the remaining physical strength of the boss character MC indicated by the gauge G3 reaches zero, the fighting character BC wins the fight.

While a fighting character BC is fighting against the boss character MC, the user can control the actions of the fighting character BC by choosing commands. As a non-limiting example, by choosing one of a plurality of attack commands, the user can cause the fighting character BC to perform the attack action corresponding to the chosen command.
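The command-driven fight and the gauge G3 can be sketched together. The command names and damage values in `ATTACKS` are hypothetical, and the gradual on-screen drain of the gauge is not modeled; only the resulting strength value and the win condition are.

```python
from typing import Tuple

# Hypothetical attack commands and damage values.
ATTACKS = {"tackle": 10, "ember": 15, "growl": 0}

def fight_turn(boss_strength: int, command: str) -> Tuple[int, bool]:
    """Apply the chosen attack command; report whether the fighting character has won."""
    boss_strength = max(0, boss_strength - ATTACKS[command])
    return boss_strength, boss_strength == 0

def gauge_ratio(boss_strength: int, max_strength: int) -> float:
    """Fraction of remaining physical strength shown by a gauge such as G3."""
    return boss_strength / max_strength
```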

When a fighting character BC wins a fight against the boss character MC, the condition for clearing the boss fight event is adjusted so as to be more easily satisfied. As a first non-limiting example, the movement of the boss character MC in the virtual space is limited for at least a predetermined period of time. This makes it easier for the user to hit the boss character MC with a boss attack item AI and therefore to reduce the boss state parameter for clearing the boss fight event. As a second non-limiting example, the reduction in the boss state parameter caused by hitting the boss character MC with a boss attack item AI is relatively increased for at least a predetermined period of time. As a third non-limiting example, the boss state parameter as of the end of the fight is reduced by a predetermined amount. The second and third examples likewise make the boss state parameter easier to reduce, so that the condition for clearing the boss fight event is more easily satisfied. It should be noted that in the second non-limiting embodiment, at least two of these non-limiting examples may be combined to make the condition for clearing the boss fight event easier to satisfy.
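The three post-win adjustments, which the patent says may be combined, can be sketched as flags on one handler. `BossFight`, the 10-second period, the doubling multiplier, and the immediate reduction of 20 are hypothetical values chosen only for illustration.

```python
from dataclasses import dataclass

@dataclass
class BossFight:
    boss_param: float                 # boss state parameter
    time: float = 0.0                 # current game time in seconds (assumed unit)
    bonus_until: float = -1.0         # end of the increased-reduction window
    move_limited_until: float = -1.0  # end of the movement-limit window

    def on_fighter_win(self, period: float = 10.0, immediate: float = 20.0,
                       restrict_movement: bool = True, damage_bonus: bool = True) -> None:
        if restrict_movement:         # first example: limit boss movement
            self.move_limited_until = self.time + period
        if damage_bonus:              # second example: larger reductions per hit
            self.bonus_until = self.time + period
        # third example: reduce the parameter as of the end of the fight
        self.boss_param = max(0.0, self.boss_param - immediate)

    def boss_can_move(self) -> bool:
        return self.time >= self.move_limited_until

    def item_hit(self, base: float) -> bool:
        """Apply a boss attack item hit; return True if the event is cleared."""
        multiplier = 2.0 if self.time < self.bonus_until else 1.0
        self.boss_param = max(0.0, self.boss_param - base * multiplier)
        return self.boss_param <= 0.0
```

Passing `immediate=0.0` or disabling either flag selects a subset of the three examples.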

It should be noted that a fight between a fighting character BC and the boss character MC in the boss fight event may be allowed to start only when the boss character MC is in a predetermined state. For example, the predetermined state may be a state in which the boss character MC is in an unguarded moment, is in a predetermined position, or has a boss state parameter that has reached a predetermined value, a state in which a predetermined period of time has passed since the start of the boss fight event, or the like. Forbidding the fight to start may be realized, for example, by a state in which a fighting character BC does not appear even when the player character PC throws a ball item Bs, a state in which the player character PC does not perform a throwing action even when the user performs an operation input for causing it to throw, a state in which a ball item Bs cannot be chosen as a projectile, or the like.
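A start-permission check of this kind can be sketched as a predicate consulted when a ball item is thrown. The patent lists the conditions as alternatives; combining all of them with `or`, the dictionary keys, and the thresholds below are assumptions, and only the "fighter does not appear" form of forbidding is shown.

```python
from typing import Dict, Union

def fight_can_start(boss: Dict[str, Union[bool, float]], elapsed: float,
                    param_threshold: float = 50.0, min_elapsed: float = 60.0) -> bool:
    """True if the boss character is in a state that allows a fight to start."""
    return bool(boss["unguarded"]                    # unguarded moment
                or boss["in_position"]               # predetermined position
                or boss["param"] <= param_threshold  # boss state parameter reached a value
                or elapsed >= min_elapsed)           # time since the event started

def on_ball_thrown(boss: Dict[str, Union[bool, float]], elapsed: float) -> str:
    # One way of forbidding the fight: the fighting character simply does not appear.
    return "fighter_appears" if fight_can_start(boss, elapsed) else "no_fighter"
```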

Thus, in the second non-limiting embodiment, in the boss fight event in which the boss character MC appears, both an operation of throwing an item that affects the boss character MC (boss attack item AI) in the direction indicated by an aiming point and an operation of throwing a fighting character BC that fights against the boss character MC in the direction indicated by an aiming point are available. The user can therefore choose whether to attack the boss character MC with an item or with a fighting character BC, resulting in a game with varied strategic aspects.

In the boss fight event, the location and orientation of a virtual camera for generating the game image displayed on the display 12 may be set so that the virtual camera is located behind the player character PC with the player character PC included in its range of view, or so that the first-person point of view of the player character PC is provided. In either case, the location and/or orientation of the virtual camera may be changeable according to the user's operation input.
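The two camera placements can be sketched from the player character's position and heading. The coordinate convention (y up, yaw 0 facing +z), the default distance and heights, and both function names are assumptions; user adjustment of the camera would correspond to changing `yaw` or the offsets.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]

def third_person_camera(player_pos: Vec3, yaw: float, distance: float = 5.0,
                        height: float = 2.0) -> Tuple[Vec3, Vec3]:
    """Camera behind and above the player, looking at the player (yaw in radians)."""
    px, py, pz = player_pos
    fx, fz = math.sin(yaw), math.cos(yaw)  # player's forward vector on the ground plane
    cam = (px - fx * distance, py + height, pz - fz * distance)
    return cam, player_pos                 # (camera position, look-at target)

def first_person_camera(player_pos: Vec3, yaw: float,
                        eye_height: float = 1.6) -> Tuple[Vec3, Vec3]:
    """Camera at the player's eye, looking one unit ahead along the heading."""
    px, py, pz = player_pos
    eye = (px, py + eye_height, pz)
    target = (px + math.sin(yaw), py + eye_height, pz + math.cos(yaw))
    return eye, target
```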