U.S. Pat. No. 12,179,096

OVERLAPPING OPERATIONS AT A SERVER AND A CLIENT FOR A VIDEO FRAME TO REDUCE LATENCY IN CLOUD GAMING APPLICATIONS

AssigneeSony Interactive Entertainment Inc.

Issue DateJanuary 9, 2024

Illustrative Figure

Abstract

A method for cloud gaming. The method including receiving one or more encoded slices of a video frame at a client, wherein the video frame was generated at a server while executing a video game, and encoded by an encoder at the server into the one or more encoded slices. The method including decoding a first encoded slice at a decoder of the client before fully receiving the one or more encoded slices of the video frame.

Description

DETAILED DESCRIPTION Although the following detailed description contains many specific details for the purposes of illustration, anyone of ordinary skill in the art will appreciate that many variations and alterations to the following details are within the scope of the present disclosure. Accordingly, the aspects of the present disclosure described below are set forth without any loss of generality to, and without imposing limitations upon, the claims that follow this description. Generally speaking, the various embodiments of the present disclosure describe methods and systems configured to reduce latency and/or latency instability between source and target devices when streaming media content (e.g., streaming audio and video from video games). In particular, in some embodiments of the present disclosure, one-way latency in cloud gaming applications may be reduced by overlapping encode and transmission operations of video frames at the server when streaming video frames generated from a video game executing on the server. The server in cloud gaming applications encodes video frames, and to reduce one-way latency transmission of data from the server to the client may begin as soon as a packet is encoded by an encoder of the server. This may require information (e.g., amount of data encoded at a given time) to be shared within the server between the encode unit and those units (e.g., streamer operating at the application layer) performing the transmission of data. In some embodiments, the encoder is tuned to encode packets such that the packet size corresponds to natural quantities of data being created by the encoder. In one example, a packet size may correspond to a network based maximum transmission unit (MTU). In another example, the packet size may correspond to a MTU and also be limited to an encoded slice generated by the encoder. In still another example, a packet size may ...

DETAILED DESCRIPTION

Although the following detailed description contains many specific details for the purposes of illustration, anyone of ordinary skill in the art will appreciate that many variations and alterations to the following details are within the scope of the present disclosure. Accordingly, the aspects of the present disclosure described below are set forth without any loss of generality to, and without imposing limitations upon, the claims that follow this description.

Generally speaking, the various embodiments of the present disclosure describe methods and systems configured to reduce latency and/or latency instability between source and target devices when streaming media content (e.g., streaming audio and video from video games). In particular, in some embodiments of the present disclosure, one-way latency in cloud gaming applications may be reduced by overlapping encode and transmission operations of video frames at the server when streaming video frames generated from a video game executing on the server. The server in cloud gaming applications encodes video frames, and to reduce one-way latency transmission of data from the server to the client may begin as soon as a packet is encoded by an encoder of the server. This may require information (e.g., amount of data encoded at a given time) to be shared within the server between the encode unit and those units (e.g., streamer operating at the application layer) performing the transmission of data. In some embodiments, the encoder is tuned to encode packets such that the packet size corresponds to natural quantities of data being created by the encoder. In one example, a packet size may correspond to a network based maximum transmission unit (MTU). In another example, the packet size may correspond to a MTU and also be limited to an encoded slice generated by the encoder. In still another example, a packet size may correspond to a scanline or screen slice, in one embodiment. In that manner, one-way latency and the amount of buffering required are reduced. In addition, in some embodiments of the present disclosure, one-way latency in cloud gaming applications may be reduced by overlapping reception and decoding operations of video frames at the client when streaming video frames generated from a video game executing on the server. In other embodiments of the present disclosure, one-way latency between a cloud gaming server and a client may be reduced by overlapping reception and decode operations of compressed video frames and their display. The client in cloud gaming applications receives compressed video frames from the cloud gaming server, and is configured to decode the compressed video frames. One-way latency between the server and the client may be reduced by beginning the decode operations at the client as soon as possible. For example, each packet of an encoded slice may be decoded as it is received by the client from the server. Also, one-way latency can be reduced by beginning display of a video frame before the frame has been completely received or decoded at the client. Timing of submission for display must anticipate remaining time needed for reception and decode of the compressed video frames. As previously introduced, packet sizing may correspond to natural quantities of data being created by the encoder (e.g., MTU, scanline, encoder slice sized to the MTU, etc.), which makes it possible to decode each packet as it arrives.

In particular, latency instability may be introduced between a server and client due to the additional time needed to generate a complex frame (e.g., scene change) at the server, increased times to encode/compress the complex frame at the server, variable communication paths over a network, and increased time to decode complex frames at the client. Latency instability may also be introduced due to differences in clocks at the server and the client, which causes a drift between server and client VSYNC signals. In one embodiment, this latency instability may be removed by overlapping encode and transmission operations at the server, receive and decode operations at the client, and/or receive and decode and rendering for display operations at the client.

With the above general understanding of the various embodiments, example details of the embodiments will now be described with reference to the various drawings.

Throughout the specification, the reference to “game” or video game” or “gaming application” or “application” is meant to represent any type of interactive application that is directed through execution of input commands. For illustration purposes only, an interactive application includes applications for gaming, word processing, video processing, video game processing, etc. Further, the terms introduced above are interchangeable.

Cloud gaming includes the execution of a video game at the server to generate game rendered video frames, which are then sent to a client for display. Timing of operations at both the server and the client may be tied to respective vertical synchronization (VSYNC) parameters. When VSYNC signals are properly synchronized and/or offset between the server and/or the client, the operations performed at the server (e.g., generation and transmission of video frames over one or more frame periods) are synchronized with the operations performed at the client (e.g., displaying the video frames on a display at a display frame or refresh rate corresponding to the frame period). In particular, a server VSYNC signal generated at the server and a client VSYNC signal generated at the client may be used for synchronizing operations at the server and client. That is, when server and client VSYNC signals are synchronized and/or offset, the server generates and sends video frames in synchronization with how the client displays those video frames.

VSYNC signaling and vertical blanking intervals (VBI) have been incorporated for generating video frames and displaying those video frames when streaming media content between a server and a client. For example, the server strives to generate a game rendered video frame in one or several frame periods as defined by a corresponding server VSYNC signal (e.g. if a frame period of 16.7 ms, then generating a video frame each frame period results in 60 Hz operation, and generating one video frame for each two frame periods results in 30 Hz operation), and subsequently encode and transmit that video frame to the client. At the client, the received encoded video frames are decoded and displayed, wherein the client displays each video frame that is rendered for display beginning with a corresponding client VSYNC.

For illustration,FIG.1Ashows how a VSYNC signal111may indicate the beginning of a frame period, wherein various operations may be performed during a corresponding frame period at the server and/or client. When streaming media content, the server may use a server VSYNC signal for generating and encoding video frames, and the client may use a client VSYNC signal for displaying the video frames. The VSYNC signal111is generated at a defined frequency which corresponds to the defined frame period110, as shown inFIG.1B. In addition, VBI105defines the time period between when the last raster line was drawn on the display for a previous frame period and when the first raster line (e.g., top) is drawn to the display. As shown, after VBI105, the video frame rendered for display is displayed via raster scanlines106(e.g., raster line by raster line, from left to right).

In addition, various embodiments of the present disclosure are disclosed for reducing one-way latency and/or latency instability between source and target devices, such as when streaming media content (e.g., video game content). For purposes of illustration only, the various embodiments for reducing one-way latency and/or latency instability are described within a server and client network configuration. However, it is understood that the various techniques disclosed for reducing one-way latency and/or latency instability may be implemented within other network configurations, and/or over peer-to-peer networks, as is shown inFIGS.2A-2D. For example, the various embodiments disclosed for reducing one-way latency and/or latency instability may be implemented between one or more of server and client devices in various configurations (e.g., server and client, server and server, server and multiple clients, server and multiple servers, client and client, client and multiple clients, etc.).

FIG.2Ais a diagram of a system200A for providing gaming over a network250between one or more cloud gaming networks290and/or servers260, and one or more client devices210, in various configurations, wherein server and client VSYNC signals can be synchronized and offset, and/or wherein dynamic buffering is performed on the client, and/or wherein encode and transmit operations on the server can be overlapped, and/or wherein receive and decode operations at the client can be overlapped, and/or wherein decode and display operations on the client can be overlapped to reduce one-way latency between the server260and client210, in accordance with one embodiment of the present disclosure. In particular, system200A provides gaming via a cloud game network290, wherein the game is being executed remote from client device210(e.g., thin client) of a corresponding user that is playing the game, in accordance with one embodiment of the present disclosure. System200A may provide gaming control to one or more users playing one or more games through the cloud game network290via network250in either single-player or multi-player modes. In some embodiments, the cloud game network290may include a plurality of virtual machines (VMs) running on a hypervisor of a host machine, with one or more virtual machines configured to execute a game processor module utilizing the hardware resources available to the hypervisor of the host. Network250may include one or more communication technologies. In some embodiments, network250may include 5thGeneration (5G) network technology having advanced wireless communication systems.

In some embodiments, communication may be facilitated using wireless technologies. Such technologies may include, for example, 5G wireless communication technologies. 5G is the fifth generation of cellular network technology. 5G networks are digital cellular networks, in which the service area covered by providers is divided into small geographical areas called cells. Analog signals representing sounds and images are digitized in the telephone, converted by an analog to digital converter and transmitted as a stream of bits. All the 5G wireless devices in a cell communicate by radio waves with a local antenna array and low power automated transceiver (transmitter and receiver) in the cell, over frequency channels assigned by the transceiver from a pool of frequencies that are reused in other cells. The local antennas are connected with the telephone network and the Internet by a high bandwidth optical fiber or wireless backhaul connection. As in other cell networks, a mobile device crossing from one cell to another is automatically transferred to the new cell. It should be understood that 5G networks are just an example type of communication network, and embodiments of the disclosure may utilize earlier generation wireless or wired communication, as well as later generation wired or wireless technologies that come after 5G.

As shown, the cloud game network290includes a game server260that provides access to a plurality of video games. Game server260may be any type of server computing device available in the cloud, and may be configured as one or more virtual machines executing on one or more hosts. For example, game server260may manage a virtual machine supporting a game processor that instantiates an instance of a game for a user. As such, a plurality of game processors of game server260associated with a plurality of virtual machines is configured to execute multiple instances of one or more games associated with gameplays of a plurality of users. In that manner, back end server support provides streaming of media (e.g., video, audio, etc.) of gameplays of a plurality of gaming applications to a plurality of corresponding users. That is, game server260is configured to stream data (e.g., rendered images and/or frames of a corresponding gameplay) back to a corresponding client device210through network250. In that manner, a computationally complex gaming application may be executing at the back end server in response to controller inputs received and forwarded by client device210. Each server is able to render images and/or frames that are then encoded (e.g., compressed) and streamed to the corresponding client device for display.

For example, a plurality of users may access cloud game network290via communications network250using corresponding client devices210configured for receiving streaming media. In one embodiment, client device210may be configured as a thin client providing interfacing with a back end server (e.g., game server260of cloud game network290) configured for providing computational functionality (e.g., including game title processing engine211). In another embodiment, client device210may be configured with a game title processing engine and game logic for at least some local processing of a video game, and may be further utilized for receiving streaming content as generated by the video game executing at a back end server, or for other content provided by back end server support. For local processing, the game title processing engine includes basic processor based functions for executing a video game and services associated with the video game. The game logic is stored on the local client device210and is used for executing the video game.

In particular, client device210of a corresponding user (not shown) is configured for requesting access to games over a communications network250, such as the internet, and for rendering for display images generated by a video game executed by the game server260, wherein encoded images are delivered to the client device210for display in association with the corresponding user. For example, the user may be interacting through client device210with an instance of a video game executing on game processor of game server260. More particularly, an instance of the video game is executed by the game title processing engine211. Corresponding game logic (e.g., executable code)215implementing the video game is stored and accessible through a data store (not shown), and is used to execute the video game. Game title processing engine211is able to support a plurality of video games using a plurality of game logics, each of which is selectable by the user.

For example, client device210is configured to interact with the game title processing engine211in association with the gameplay of a corresponding user, such as through input commands that are used to drive gameplay. In particular, client device210may receive input from various types of input devices, such as game controllers, tablet computers, keyboards, gestures captured by video cameras, mice, touch pads, etc. Client device210can be any type of computing device having at least a memory and a processor module that is capable of connecting to the game server260over network250. The back-end game title processing engine211is configured for generating rendered images, which is delivered over network250for display at a corresponding display in association with client device210. For example, through cloud based services the game rendered images may be delivered by an instance of a corresponding game executing on game executing engine211of game server260. That is, client device210is configured for receiving encoded images (e.g., encoded from game rendered images generated through execution of a video game), and for displaying the images that are rendered for display11. In one embodiment, display11includes an HMD (e.g., displaying VR content). In some embodiments, the rendered images may be streamed to a smartphone or tablet, wirelessly or wired, direct from the cloud based services or via the client device210(e.g., PlayStation® Remote Play).

In one embodiment, game server260and/or the game title processing engine211includes basic processor based functions for executing the game and services associated with the gaming application. For example, processor based functions include 2D or 3D rendering, physics, physics simulation, scripting, audio, animation, graphics processing, lighting, shading, rasterization, ray tracing, shadowing, culling, transformation, artificial intelligence, etc. In addition, services for the gaming application include memory management, multi-thread management, quality of service (QoS), bandwidth testing, social networking, management of social friends, communication with social networks of friends, communication channels, texting, instant messaging, chat support, etc.

In one embodiment, cloud game network290is a distributed game server system and/or architecture. In particular, a distributed game engine executing game logic is configured as a corresponding instance of a corresponding game. In general, the distributed game engine takes each of the functions of a game engine and distributes those functions for execution by a multitude of processing entities. Individual functions can be further distributed across one or more processing entities. The processing entities may be configured in different configurations, including physical hardware, and/or as virtual components or virtual machines, and/or as virtual containers, wherein a container is different from a virtual machine as it virtualizes an instance of the gaming application running on a virtualized operating system. The processing entities may utilize and/or rely on servers and their underlying hardware on one or more servers (compute nodes) of the cloud game network290, wherein the servers may be located on one or more racks. The coordination, assignment, and management of the execution of those functions to the various processing entities are performed by a distribution synchronization layer. In that manner, execution of those functions is controlled by the distribution synchronization layer to enable generation of media (e.g., video frames, audio, etc.) for the gaming application in response to controller input by a player. The distribution synchronization layer is able to efficiently execute (e.g., through load balancing) those functions across the distributed processing entities, such that critical game engine components/functions are distributed and reassembled for more efficient processing.

The game title processing engine211includes a central processing unit (CPU) and graphics processing unit (GPU) group that may be configured to perform multi-tenancy GPU functionality. In another embodiment, multiple GPU devices are combined to perform graphics processing for a single application that is executing on a corresponding CPU.

FIG.2Bis a diagram for providing gaming between two or more peer devices, wherein VSYNC signals can be synchronized and offset to achieve optimal timing of receipt of controller and other information between the devices, in accordance with one embodiment of the present disclosure. For example, head-to-head gaming may be performed using two or more peer devices that are connected through network250or directly through peer-to-peer communication (e.g., Bluetooth, local area networking, etc.).

As shown, a game is being executed locally on each of the client devices210(e.g., game console) of corresponding users that are playing the video game, wherein the client devices210communicate through peer-to-peer networking. For example, an instance of a video game is executing by the game title processing engine211of a corresponding client device210. Game logic215(e.g., executable code) implementing the video game is stored on the corresponding client device210, and is used to execute the game. For purposes of illustration, game logic215may be delivered to the corresponding client device210through a portable medium (e.g. optical media) or through a network (e.g., downloaded through the internet from a gaming provider).

In one embodiment, the game title processing engine211of a corresponding client device210includes basic processor based functions for executing the game and services associated with the gaming application. For example, processor based functions include 2D or 3D rendering, physics, physics simulation, scripting, audio, animation, graphics processing, lighting, shading, rasterization, ray tracing, shadowing, culling, transformation, artificial intelligence, etc. In addition, services for the gaming application include memory management, multi-thread management, quality of service (QoS), bandwidth testing, social networking, management of social friends, communication with social networks of friends, communication channels, texting, instant messaging, chat support, etc.

Client device210may receive input from various types of input devices, such as game controllers, tablet computers, keyboards, gestures captured by video cameras, mice, touch pads, etc. Client device210can be any type of computing device having at least a memory and a processor module, and is configured for generating rendered images executed by the game title processing engine211, and for displaying the rendered images on a display (e.g., display11, or display11including a head mounted display—HMD, etc.). For example, the rendered images may be associated with an instance of the game executing locally on client device210to implement gameplay of a corresponding user, such as through input commands that are used to drive gameplay. Some examples of client device210include a personal computer (PC), a game console, a home theater device, a general purpose computer, mobile computing device, a tablet, a phone, or any other types of computing devices that can execute an instance of a game.

FIG.2Cillustrates various network configurations that benefit from proper synchronization and offsetting of VSYNC signals between a source device and a target device, including those configurations shown inFIGS.2A-2B, in accordance with embodiments of the present disclosure. In particular, the various network configurations benefit from proper alignment of frequencies of server and client VSYNC signals, and a timing offset of the server and client VSYNC signals for purposes of reducing one-way latency and/or latency variability between a server and client. For example, one network device configuration includes a cloud gaming server (e.g., source) to client (target) configuration. In one embodiment, the client may include a WebRTC client configured for providing audio and video communication inside a web browser. Another network configuration includes a client (e.g. source) to server (target) configuration. Still another network configuration includes a server (e.g., source) to server (e.g., target) configuration. Another network device configuration includes a client (e.g., source) to client (target) configuration, wherein the clients can each be a gaming console to provide for head-to-head gaming, for example.

In particular, alignment of VSYNC signals may include synchronizing the frequencies of the server VSYNC signal and the client VSYNC signal, and may also include adjusting a timing offset between the client VSYNC signal and server VSYNC signal, for the purposes of removing drift, and/or to maintain an ideal relationship between server and client VSYNC signals, for purposes of reducing one-way latency and/or latency variability. To achieve proper alignment, the server VSYNC signal may be tuned in order to implement proper alignment between a server260and client210pair, in one embodiment. In another embodiment, the client VSYNC signal may be tuned in order to implement proper alignment between a server260and client210pair. Once the client and server VSYNC signals are aligned, the server VSYNC signal and client VSYNC signal occur at substantially the same frequency, and are offset from each other by a timing offset, that may be adjusted from time to time. In another embodiment, alignment of VSYNC signals may include synchronizing the frequencies of VSYNC for two clients, and may also include adjusting a timing offset between their VSYNC signals, for the purposes of removing drift, and/or achieving optimal timing of receipt of controller and other information; either VSYNC signal may be tuned to achieve this alignment. In still another embodiment, alignment may include synchronizing the frequencies of VSYNC for a plurality of servers, and may also include synchronizing the frequencies of the server VSYNC signals and the client VSYNC signals and adjusting a timing offset between the client VSYNC and server VSYNC signals, e.g. for head-to-head cloud gaming. In the server to client configuration and the client to client configuration, alignment may include both synchronization of the frequencies between the server VSYNC signal and client VSYNC signal, as well as providing a proper timing offset between the server VSYNC signal and client VSYNC signal. In the server to server configuration, alignment may include synchronization of the frequencies between the server VSYNC signal and client VSYNC signal without setting a timing offset.

FIG.2Dillustrates a multi-tenancy configuration between a cloud gaming server260and one or more clients210that benefit from proper synchronization and offsetting of VSYNC signals between a source device and a target device, in accordance with one embodiment of the present disclosure. In the server to client configuration, alignment may include both synchronization of the frequencies between the server VSYNC signal and client VSYNC signal, as well as providing a proper timing offset between the server VSYNC signal and client VSYNC signal. In the multi-tenancy configuration, the client VSYNC signal is tuned at each client210in order to implement proper alignment between a server260and client210pair, in one embodiment.

For example, a graphics subsystem may be configured to perform multi-tenancy GPU functionality, wherein one graphics subsystem could be implementing graphics and/or rendering pipelines for multiple games, in one embodiment. That is, the graphics subsystem is shared between multiple games that are being executed. In particular, a game title processing engine may include a CPU and GPU group that is configured to perform multi-tenancy GPU functionality, wherein one CPU and GPU group could be implementing graphics and/or rendering pipelines for multiple games, in one embodiment. That is, the CPU and GPU group is shared between multiple games that are being executed. The CPU and GPU group could be configured as one or more processing devices. In another embodiment, multiple GPU devices are combined to perform graphics processing for a single application that is executing on a corresponding CPU.

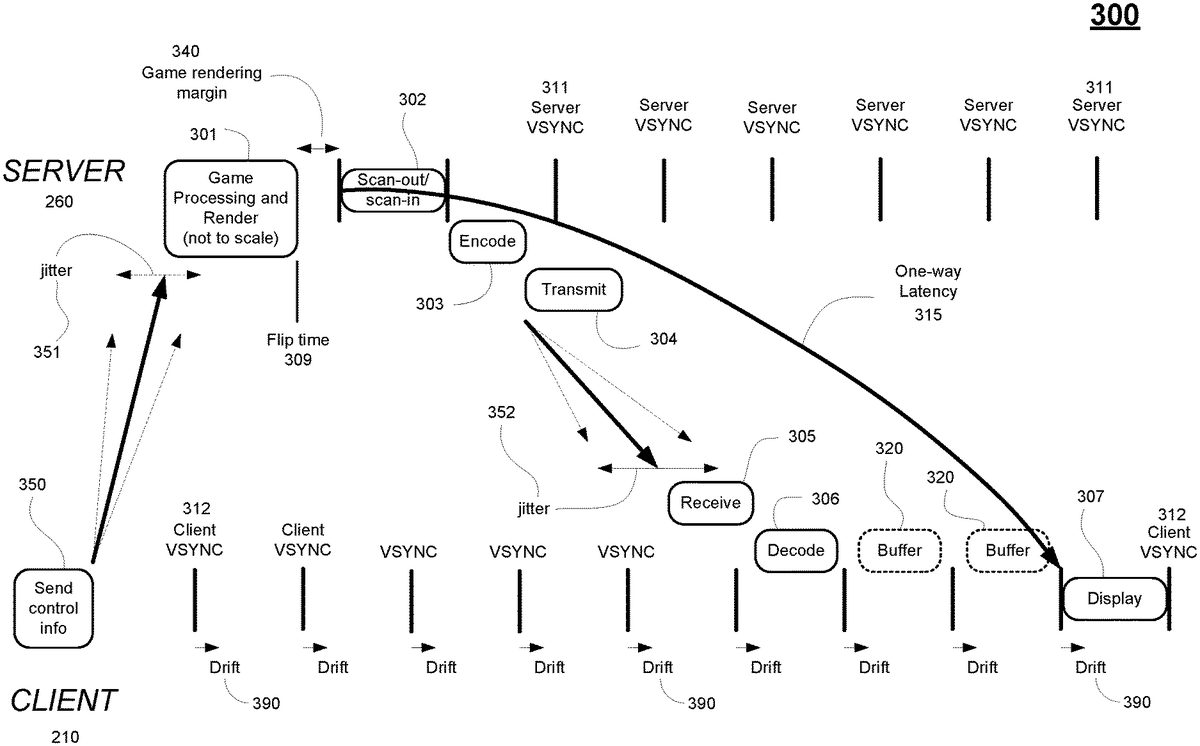

FIG.3illustrates the general process of executing a video game at a server to generate game rendered video frames and sending those video frames to a client for display. Traditionally, a number of the operations at the game server260and client210are performed within a frame period as defined by a respective VSYNC signal. For example, the server260strives to generate a game rendered video frame at301in one or multiple frame periods as defined by a corresponding server VSYNC signal311. The video frame is generated by the game, either in response to control information (e.g., input commands of a user) delivered from an input device at operation350, or game logic not driven by control information. Transmission jitter351may be present when sending control information to the server260, wherein jitter351measures the variation of network latency from client to server (e.g., when sending input commands). As shown, the bold arrow shows the current delay when sending control information to the server260, but due to jitter there may be a range of arrival times for control information at the server260(e.g. range bounded by the dotted arrows). At flip-time309, the GPU reaches a flip command that indicates that the corresponding video frame has been completely generated and placed into the frame buffer at the server260. Thereafter, the server260performs scan-out/scan-in (operation302, wherein scan-out may be aligned with the VSYNC signal311) for that video frame over the subsequent frame period as defined by the server VSYNC signal311(the VBI is omitted for clarity). Subsequently the video frame is encoded (operation303) (e.g. encoding starts after an occurrence of the VSYNC signal311, and the end of encoding may not be aligned with the VSYNC signal) and transmitted (operation304, wherein transmission may not be aligned with the VSYNC signal311) to the client210. At the client210, the encoded video frames are received (operation305, wherein receive may not be aligned with the client VSYNC signal312), decoded (operation306, wherein decode may not be aligned with the client VSYNC signal312), buffered, and displayed (operation307, wherein the start of display may be aligned with the client VSYNC signal312). In particular, the client210displays each video frame that is rendered for display beginning with a corresponding occurrence of the client VSYNC signal312.

One-way latency315may be defined as being the latency from beginning of transfer of the video frame to the encoding unit (e.g. scan-out302) at the server, to the beginning of display of the video frame at the client307. That is, one-way latency is the time from server scan-out to client display, taking into account client buffering. Individual frames have a latency from beginning of scan-out302to completion of decode306that may vary from frame to frame due to the high degree of variance of server operations such as encode303and transmission304, network transmission between the server260and client210with accompanying jitter352, and client reception305. As shown, the straight bold arrow shows the current latency when sending the corresponding video frame to the client210, but due to jitter352there may be a range of arrival times for video frames at the client210(e.g. range bounded by the dotted arrows). As one-way latency must be relatively stable (e.g. kept fairly consistent) to achieve a good play experience, traditionally buffering320is performed with the result that the display of individual frames with low latency (e.g. from beginning of scan-out302to completion of decode306) is delayed for several frame periods. That is, if there are network instabilities, or unpredictable encode/decode time, extra buffering is needed so that one-way latency is kept consistent.

One-way latency between a cloud gaming server and a client may vary due to clock drift when streaming video frames generated from a video game executing on the server, in accordance with one embodiment of the present disclosure. That is, differences in the frequencies of the server VSYNC signal311and the client VSYNC signal312may cause the client VSYNC signal to drift relative to the frames arriving from the server260. The drift may be due to very slight differences in the crystal oscillators used in each of the respective clocks at the server and client. Furthermore, embodiments of the present disclosure reduce one-way latency by performing one or more of synchronization and offset of VSYNC signals for alignment between a server and a client, by providing dynamic buffering on the client, by overlapping encode and transmit of video frames at the server, by overlapping receive and decode of video frames at the client, and by overlapping decoding and displaying of video frames at the client.

FIG.4illustrates the flow of data through a network configuration including a highly optimized cloud gaming server260and a highly optimized client210when streaming video frames generated from a video game executing on the server, wherein overlapping server operations and client operations reduces the one-way latency, and synchronizing and offsetting the VSYNC signals between the server and the client reduces the one-way latency as well as reduces variability in the one-way latency between the server and the client, in accordance with embodiments of the present disclosure. In particular,FIG.4shows the desired alignment between the server and the client VSYNC signals. In one embodiment, tuning of the server VSYNC signal311is performed to obtain proper alignment between server and client VSYNC signals, such as in a server and client network configuration. In another embodiment, tuning of the client VSYNC signal312is performed to obtain proper alignment between server and client VSYNC signals, such as in a multi-tenant server to multiple clients network configuration. For purposes of illustration, tuning of the server VSYNC signal311is described inFIG.4for purposes of synchronizing the frequencies of server and client VSYNC signals, and/or adjusting the timing offset between corresponding client and server VSYNC signals, though it is understood that the client VSYNC signal312may also be used for tuning. In the context of this patent, “synchronize” should be taken to mean tuning the signals so that their frequencies match, but phase may differ; “offset” should be taken to mean the time delay between the signals, e.g. the time between when one signal reaches its maximum and the other signal reaches its maximum.

As shown,FIG.4illustrates an improved process of executing a video game at a server to generate rendered video frames and sending those video frames to a client for display, in embodiments of the present disclosure. The process is shown with respect to generation and display of a single video frame at a server and client. In particular, the server generates a game rendered video frame at401. For example, the server260includes a CPU (e.g., game title processing engine211) configured for executing the game. The CPU generates one or more draw calls for a video frame, wherein the draw calls include commands placed into a command buffer for execution by a corresponding GPU of the server260in a graphics pipeline. The graphics pipeline may include one or more shader programs on vertices of objects within a scene to generate texture values as rendered for the video frame for displaying, wherein the operations are performed in parallel through a GPU for efficiency. At flip-time409, the GPU reaches a flip command in the command buffer that indicates that the corresponding video frame has been completely generated and/or rendered and placed into the frame buffer at the server260.

At402, the server performs scan-out of the game rendered video frame to an encoder. In particular, scan-out is performed scanline by scanline, or in groups of consecutive scanlines, wherein a scanline refers to a single horizontal line, for example of a display from screen edge to screen edge. These scanlines or groups of consecutive scanlines are sometimes referred to as slices, and are referred to in this specification as screen slices. In particular, scan-out402may include a number of processes that modify the game rendered frame, including overlaying it with another frame buffer, or shrinking it in order to surround it with information from another frame buffer. During scan-out402, the modified video frame is then scanned into an encoder for compression. In one embodiment, scan-out402is performed at an occurrence311aof the VSYNC signal311. In other embodiments, scan-out402may be performed before the occurrence of the VSYNC signal311, such as at flip-time409.

At403, the game rendered video frame (which may have undergone modification) is encoded on an encoder slice by encoder slice basis at the encoder to generate one or more encoded slices, wherein an encoded slice is unrelated to a scanline or screen slice. As such, the encoder generates one or more encoded (e.g., compressed) slices. In one embodiment, the encoding process begins before the scan-out402process has fully completed for a corresponding video frame. Further, the start and/or end of encode403may or may not be aligned with the server VSYNC signal311. The boundaries of an encoded slice are not restricted to a single scanline, and may be comprised of a single scanline, or multiple scanlines Additionally, the end of an encoded slice and/or the start of the next encoder slice may not necessarily occur at the edges of the display screen (e.g., may occur somewhere mid-screen or in the middle of a scanline), such that the encoded slice need not traverse fully from edge to edge of the display screen. As shown, one or more encoded slices may be compressed and/or encoded, including “encoded slice A” that is compressed having hash marks.

At404, the encoded video frame is transmitted from the server to the client, wherein the transmission may occur on an encoded slice-by-slice basis, wherein each encoded slice is an encoder slice that has been compressed. In one embodiment, the transmission process404begins before the encoding process403has fully completed for a corresponding video frame. Further, the start and/or end of transmission404may or may not be aligned with the server VSYNC signal311. As shown, encoded slice A that is compressed is transmitted to the client independently of the other compressed encoder slices for the rendered video frame. The encoder slices may be transmitted one at a time, or in parallel.

At405, the client receives the compressed video frame, again on an encoded slice-by-slice basis. Further, the start and/or end of receive405may or may not be aligned with the client VSYNC signal312. As shown, encoded Slice A that is compressed is received by the client. Transmission jitter452may be present between the server260and client210, wherein jitter452measures the variation in network latency from the server260to the client210. A lower jitter value exhibits a more stable connection. As shown, the bold straight arrow shows the current latency when sending the corresponding video frame to the client210, but due to jitter there may be a range of arrival times for video frames at the client210(e.g. range bounded by the dotted arrows). Variation in latency may also be due to one or more operations at the server such as encode403and transmission404, as well as networking issues that introduce latency when transmitting video frames to the client210.

At406, the client decodes the compressed video frame, again on an encoded slice-by-slice basis, producing decoded Slice A (shown without hash marks) that is now ready for display. In one embodiment, the decode process406begins before the receive process405has fully completed for a corresponding video frame. Further, the start and/or end of decode406may or may not be aligned with the client VSYNC signal312. At407, the client displays the decoded rendered video frame on the display at the client. That is, the decoded video frame is placed in a display buffer which is streamed out on a scanline-by-scanline basis to a display device, for example. In one embodiment, the display process407(i.e. the streaming out to the display device) begins after the decode process406has fully completed for a corresponding video frame, i.e. the decoded video frame is fully resident in the display buffer. In another embodiment, the display process407begins before the decode process406has fully completed for a corresponding video frame. That is, streamout to the display device begins from the address of the display buffer at a time at which only a portion of the decoded frame buffer is resident in the display buffer. The display buffer is then updated or filled in with remaining portions of the corresponding video frame in time for displaying, such that the updating of the display buffer is performed prior to streamout of those portions to the display. Further, the start and/or end of display407is aligned with the client VSYNC signal312.

In one embodiment, the one-way latency416between the server260and the client210may be defined as the elapsed time between when scan-out402begins and when display407begins. Embodiments of the present disclosure are capable of aligning the VSYNC signals (e.g. synchronize the frequency and adjust the offset) between the server and the client, to reduce one-way latency between the server and the client, and to reduce variability in the one-way latency between the server and the client. For example, embodiments of the present disclosure are able to calculate an optimal adjustment to the offset430between server VSYNC signal311and client VSYNC signal312such that even in the event of near worst case time needed for server processing such as encode403and transmit404, near worst case network latency between server260and client210, and near worst case client processing such as receive405and decode406, the decoded rendered video frame is available in time for the display process407. That is, it is not necessary to determine the absolute offset between server VSYNC and client VSYNC; it is sufficient to adjust the offset so that the decoded rendered video frame is available in time for the display process.

In particular, the frequencies of the server VSYNC signal311and the client VSYNC signal312may be aligned through synchronization. Synchronization is achieved through tuning the server VSYNC signal311or the client VSYNC signal312. For purposes of illustration, tuning is described in relation to the server VSYNC signal311, though it is understood that tuning could be performed on the client VSYNC signal312instead. For example, as shown inFIG.4the server frame period410(e.g., the time between two occurrences311cand311dof the server VSYNC signal311) is substantially equal to the client frame period415(e.g., the time between two occurrences312aand312bof the client VSYNC signal312), which indicates that the frequencies of the server VSYNC signal311and client VSYNC signal312are also substantially equal.

To maintain synchronization of the frequencies of the server and client VSYNC signals, the timing of the server VSYNC signal311may be manipulated. For example, the vertical blanking interval (VBI) in the server VSYNC signal311may be increased or reduced over a period of time, such as to account for the drift between the server VSYNC signal311and the client VSYNC signal312. Manipulation of vertical blanking (VBLANK) lines in the VBI provides for adjusting the number of scanlines used for VBLANK for one or more frame periods of the server VSYNC signal311. Dropping the number of scanlines of VBLANK reduces a corresponding frame period (e.g., time interval) between two occurrences of the server VSYNC signal311. Conversely, increasing the number of scanlines of VBLANK increases a corresponding frame period (e.g., time interval) between two occurrences of the VSYNC signal311. In that manner, the frequency of the server VSYNC signal311is adjusted to align the frequencies between the client and server VSYNC signals311and312to be at substantially the same frequency. Also, offset between server and client VSYNC signals can be adjusted by increasing or reducing the VBI for a short period of time, before returning the VBI to its original value. In one embodiment, the server VBI is adjusted. In another embodiment, the client VBI is adjusted. In yet another embodiment, instead of two devices (server and client), there are a plurality of connected devices, each of which may have a corresponding VBI that is adjusted. In one embodiment, each of the plurality of connected devices may be independent peer devices (e.g. without a server device). In another embodiment, the plurality of devices may include one or more server devices and/or one or more client devices arranged in one or more server/client architectures, multi-tenant server/client(s) architecture, or some combination thereof.

Alternatively, the pixel clock of the server (e.g., located at the southbridge of a northbridge/southbridge core logic chipset of the server, or in the case of a discrete GPU, it would generate a pixel clock by itself using its own hardware) may be manipulated to perform coarse and/or fine tuning of the frequency of the server VSYNC signal311over a period of time to bring the synchronization of frequencies between server and client VSYNC signals311and312back into alignment, in one embodiment. Specifically, the pixel clock in the south bridge of the server may be overclocked or underclocked to adjust the overall frequency of the VSYNC signal311of the server. In that manner, the frequency of the server VSYNC signal311is adjusted to align the frequencies between the client and server VSYNC signals311and312to be at substantially the same frequency. Offset between server and client VSYNC can be adjusted by increasing or reducing the client server pixel clock for a short period of time, before returning the pixel clock to its original value. In one embodiment, the server pixel clock is adjusted. In another embodiment, the client pixel clock is adjusted. In yet another embodiment, instead of two devices (server and client), there are a plurality of connected devices, each of which may have a corresponding pixel clock which is adjusted. In one embodiment, each of the plurality of connected devices may be independent peer devices (e.g. without a server device). In another embodiment, the plurality of connected devices may include one or more server devices and one or more client devices arranged in one or more server/client architectures, multi-tenant server/client(s) architecture, or some combination thereof.

FIG.5Ais a diagram illustrating overlapping of encode and transmit operations at the server260, and receive and decode operations at the client210when streaming video frames generated from a video game executing on the server, in accordance with one embodiment of the disclosure. In particular, the overlapping of the encode and transmission operations of video frames at the server260, and the overlapping of receive and decode operations of video frames at the client210can be performed at the encoder slice level with reference to a timeline501.

In particular, a rendered video frame is generated by server260that is executing a video game. For instance, video frames are generated as the video game is executing on a CPU in combination with a GPU graphics pipeline of the server260. Each video frame may be split into one or more encoder slices that are then compressed—as one or more encoded slices. As previously described, the boundaries of an encoded slice are not restricted to a single scanline, and may be comprised of a single scanline, or multiple scanlines. Additionally, the end of an encoded slice and/or the start of the next encoded slice may not necessarily occur at the edges of the display screen (e.g., may occur somewhere mid-screen or in the middle of a scanline).

Further, each encoded slice (e.g., compressed) may include one or more packets (e.g., user datagram protocol—UDP). If an encoded slice exceeds a maximum transmission unit (MTU), then the encoded slice is split into multiple packets, each sized to the MTU or smaller. The MTU defines the maximum size of a packet when sent over UDP. For example, encoded slice A may be split into one or more packets503-A. Similarly, encoded slice B, encoded slice C, and encoded slice D may each be split into one or more packets.

At the server260, one or more packets of an encoded slice may be compressed and delivered separately (and possibly grouped by the slice) without waiting for all the encoder slices (e.g., of a video frame) to be compressed (e.g., into encoded slices) and packetized. This may require information (e.g., amount of data encoded at a given time) to be shared within the server260, such as between the encode unit and those units (e.g., streamer operating at the application layer) performing the transmission of data. In particular, at operation403the encoder receives the generated video frame and encodes the video frame on an encoder slice-by-slice basis to generate one or more encoded slices. In some embodiments, the encoder receives a video frame generated by a video game that has been modified with additional layers or overlays (e.g., during a scan-out process), as previously described. As shown, each of the encoded slices of the generated video frame is compressed separately at the encoder. For example, in the encoding process of operation403the generated video frame is encoded into encoded slice A (e.g., compressed), encoded slice B, encoded slice C, and encoded slice D. Depending on the number of processors or the hardware, multiple slices can be encoded simultaneously (e.g., in parallel), in some embodiments. For example, some gaming consoles can generate four encoded slices in parallel. More particularly, hardware encoders may be configured to compress multiple encoder slices (e.g., to generate one or more encoded slices) in parallel due to the hardware pipelining.

At operation404, the encoded slices are transmitted over a network to a client210. In particular, the encoded slices are delivered from the encoder to a streamer device that is configured to transmit the encoded slices over the network to the client210. For example, the streamer device is configured to operate at the application layer of a transmission control protocol/internet protocol or UDP/IP computer networking model. As shown, the encoding and transmission processes may be overlapped, in accordance with one embodiment of the present disclosure. For example, each of the encoded slices (e.g., compressed) is transmitted separately, such that the one or more packets of a corresponding encoded slice are sent as a group before the packets of the next encoded slice can be sent. In particular, once compressed, a corresponding encoded slice (e.g., one or more packets of the encoded slice) is then ready for transmission by the streamer at operation404without waiting for all the encoder slices to be compressed for the corresponding video frame. As shown, in timeline501encoded slice A is transmitted by the streamer while encoder slice B is being compressed (e.g., into encoded slice B) by the encoder, and before encoder slices C and D have begun the encoding process. More specifically, packets of encoded slice A is transmitted by the streamer over the network to client210before encoder slice B has been compressed by the encoder. Similarly, encoded slice B is transmitted by the streamer while encoder slice C is being compressed by the encoder, and before encoder slice D has begun the encoding process. In the case where multiple slices can be encoded simultaneously (e.g., X number of slices encoded in parallel), then X number of encoded slices could be sent while the next X number of encoder slices are being encoded (e.g., compressed). As such, in embodiments of the present disclosure, transmission of data (e.g., encoded slices and/or packets of corresponding encoded slices) from server to client may begin as soon as a corresponding encoder slice and/or packets of an encoder slice have been encoded and/or compressed, and before the full video frame has been encoded (as is traditionally done). In that manner, one-way latency between the server and client may be reduced by beginning transmission of data from the server to the client as soon as a packet's worth of data is encoded by the server.

At the client210, the process includes receiving the compressed video frame (e.g., the encoded slices of the video frame), and then decoding the compressed video frame. At the client, the receiving and decoding process may also be performed at the encoded slice level, such that once the one or more packets of a corresponding encoded slice are received, that encoded slice is then decoded using those one or more packets. As such, the receiving and decoding processes may be overlapped at the client. In particular, at the client210one or more encoded slices of a corresponding video frame are received separately at operation405. For illustration purposes, in the timeline501encoded slice A may be received first, then encoded slice B, and so on until encoded slice D is received last.

In particular, at operation406, the received encoded slices are then decoded. In particular, once received, a corresponding encoded slice that is received is then ready for decoding by the decoder at operation406without waiting for all the encoded slices to be received for the corresponding video frame. For example, in timeline501the one or more packets of encoded slice A are decoded while encoded slice B is being received, and while encoded slice C and encoded slice D are still in transmission over the network. Similarly, one or more packets of encoded slice B are decoded while encoded slice C is being received, and while encoded slice D is in transmission over the network. As such, in embodiments of the present disclosure, decoding of data (e.g., encoded slices and/or packets of encoded slices) from server to client may begin as soon as the encoded slice and/or packets of an encoded slice are received, and before the full complement of encoded slices and/or corresponding packets of those encoded slices for the video frame have been received. In that manner, one-way latency between the server260and client210may be reduced by beginning decoding of data as soon as a packet's worth of data is received by the client210.

It is appreciated that encoded slices of a corresponding video frame may be received at the client in various random orders (e.g., due to network jitter), and may be received in parallel depending on the hardware configuration at the client. That is, in operation405the encoded slices that are transmitted may be received at client210out of sequence due to jitter. In that case, it may be possible to decode encoded slices out of order to account the jitter (e.g., receiving encoded slices of a corresponding video frame out of order). For example, in a sequence of encoded slices for a corresponding video frame being transmitted over the network, when one encoded slice is received out of order, the decoding is also performed out of order. That is, the decoding still occurs as each encoded slice is received.

More particularly, each of the encoded slices of a corresponding video frame may be split into one or more network packets at the encoder of the server260, which are then transmitted over a network to the client210. As such, client210receives the one or more packets for each of the one or more slices of a corresponding video frame before slices and/or corresponding packets of slices of the next video frame are received. That is, the one or more encoded slices for a corresponding video frame may be received one at a time or in parallel at the client, and before the one or more encoded slices of the next video frame are being received, in one embodiment. More specifically, the packets for the one or more encoded slices of a corresponding video frame may be received one at a time or in parallel at the client, and before the one or more encoded slices of the next video frame are being received.

With the detailed description of the various client devices210and/or the cloud gaming network290(e.g., in the game server260) ofFIGS.2A-2D, flow diagram500B ofFIG.5Billustrates a method for cloud gaming in which server operations may be overlapped to reduce one-way latency between the cloud gaming server and the client, in accordance with one embodiment of the present disclosure.

At510, the method includes generating a video frame when executing a video game at a server. For example, the server may be executing a video game in a streaming mode, such that the CPU of a server executes a video game responsive to input commands from a user in order to generate game rendered video frames using a graphics pipeline that can be used for streaming. In particular, a CPU executing the video game in cooperation with a GPU graphics pipeline are configured to generate a plurality of video frames. In cloud gaming, the game generated video frames are typically rendered for display on a virtual display. The server may perform additional operations on the game generated video frames. For example, one or more overlays may be added to a corresponding game generated video frame, such as during a scan-out process.

At520, the method includes scanning-in the game generated video frame to an encoder at the server scanline-by-scanline. As previously described, the game generated video frame may be modified during a scan-out process, such as adding one or more overlays. As such, the optionally modified game generated video frame is scanned into the encoder for compression in preparation of streaming the video frame to a client.

At530, the method includes encoding one or more encoder slices for the corresponding video frame as one or more encoded slices. That is, the video frame is being compressed into a compressed video frame including one or more encoded slices. As previously described, each encoded slice may be configured into one or more scanlines, wherein the minimum size of an encoded slice is one scanline, and wherein the end of an encoded slice may not necessarily occur at the edge of a display screen.

After encoding, the one or more encoded slices are delivered to a buffer. The rate of filling the buffer is dependent on the capabilities of the encoder, such that each encoded slice is delivered to the buffer at an encoder fill rate. For example, the buffer may be filled as fast as the encoder is able to encode video frames, and more particularly as fast as the encoder is able to generate encoded slices and/or packets of the encoded slices.

In addition, one or more forward error correction (FEC) slices may be generated for the corresponding video frame that is being compressed by the encoder. The one or more FEC slices may be used by the client to correct any encoded slices that are lost during transmission to the client. In one embodiment, the one or more FEC slices may be interleaved within the one or more encoded slices during streaming. That is, for a corresponding video frame having multiple encoded slices and multiple FEC slices, the FEC slices may be interleaved within the encoded slices during streaming. For example, a FEC slice used to correct a set of encoded slices may be delivered right after each of the encoded slices in the set is delivered.

At540, the method includes draining a first encoded slice from the buffer to stream the first encoded slice at a buffer drain rate over a network to a client. That is, the first encoded slice is delivered over the network to the client, wherein the client is configured to receive and decompress the compressed video frame for rendering to a display. More particularly, one or more packets of the first encoded slice are drained from the buffer at the drain rate for streaming. In one embodiment, at least one encoded slice of the video frame is drained before the whole video frame is encoded. In one embodiment, the first encoded slice is streamed over the network before fully encoding the one or more slices of the corresponding video frame.

In one embodiment, the drain rate is dynamically adjusted to be approximately equal to the measured maximum receive bandwidth of the client. In particular, transmission of a plurality of encoded slices of a plurality of video frames that is compressed is performed by spacing the encoded slices according to the measured maximum receive bandwidth of the client. In that manner, the buffer drain rate is decoupled from the encoder fill rate, in one embodiment. In particular,FIG.6is a diagram illustrating the spacing of a plurality of encoded slices of a plurality of video frames, wherein the spacing is based on the maximum receive bandwidth of the client210when streaming video frames generated from a video game executing on the server, in accordance with one embodiment of the disclosure.

In one embodiment, it is beneficial to tune an encoder/decoder (codec)610or codec usage so that the size of packets for corresponding encoded slices corresponds to natural quantities of data being created by the codec in order to reduce buffering and latency. An encoded slice (e.g., compressed) may include one or more packets that are then delivered over the network to a client. In one embodiment, packet size is tied to the maximum transmission unit (MTU). When a video encoder slice that is compressed (into an encoded slice) exceeds the MTU, the encoded slice is split into several packets, each sized to be equal to or less than the MTU.

One consequence of encoded slices that are larger than the MTU is that the incurred packet loss rate and the amount of visual corruption will increase when packet loss occurs. For example, if a compressed encoder slice is split into three packets during encoding, and one of the packets is lost enroute to the client, the entire encoder slice becomes invalid at the client, such that losing a single packet results in an effective loss of three packets. As such, in one embodiment the packet size can be tuned to natural quantities of data being created by the codec (e.g., the MTU). In one implementation the packet size is tuned to correspond to a UDP packet size. In another implementation, the packet size can be tuned to correspond to a screen slice (e.g., from edge to edge of a screen), wherein the packet size and encoded slice correspond to the MTU. That is, an encoded slice is sized to the MTU, and can be delivered as one packet. This may increase the number of encoded slices of a corresponding video frame. When the packet size is tuned to correspond to something natural in the codec at the server side (e.g., MTU size and/or encoded slice), it makes it possible to decode the packets on the client side as soon as each of the packets arrive.

In one embodiment, the compressed video frames are transmitted from the server at a rate based on the maximum available bit rate or bandwidth over the network to the client at a particular point in time. That is, the rate of transmission of encoded slices of the compressed video frames is adjusted dynamically according to the currently measured maximum bandwidth. As such, packets655of the encoded slices650are spaced out for delivery according to the maximum available bit rate or maximum bandwidth to the client. That is, instead of spacing out the packet deliveries according to the bit rate of the codec (e.g., coupling encoder fill rate615to the buffer drain rate635), the packets655are delivered (at a buffer drain rate635that is tied to the maximum bandwidth of the client) according to the maximum bandwidth of the client210(e.g., decoupling the encoder fill rate615from the buffer drain rate635). In that manner, packets655can be received at the client210at a higher rate. In one embodiment, the encoding of packets is performed at the same rate that they are transmitted, such that both operations are dynamically tuned to the maximum available bandwidth available to the client.

In addition, at the server additional information may include the maximum send rate which defines the total network throughput (e.g., bandwidth) that the server thinks is available to the client. This maximum send rate may be determined by the server by tracking the amount of bandwidth sent to the client for a range of packets, coupled with a message from the client to the server, indicating how much packet loss occurred for the same range of packets. This may be used to determine the maximum rate that encoded slices of video frames can be sent out. The maximum rate will fluctuate based on the stability of the network connection to the client, and the offset can be dynamically adjusted to accommodate the fluctuation. Further, the maximum send rate can be adjusted independently of encoder parameters, such that slices can be sent out quicker if the encoder is configured to not produce slices at the maximum send rate.

In one embodiment, the maximum bandwidth at the client is determined by means of a feedback mechanism from the client. In particular, the streamer620operating at the application layer measures the maximum bandwidth of the client, such as using bandwidth tester625. As previously described, the application layer is used in the UDP suite of protocols used to interconnect network devices over the internet. For example, the application layer defines the communications protocols and interface methods used for communicating between devices over an IP communications network. During the test, the streamer620provides extra FEC packets640so that the buffer630(e.g., first-in/first-out FIFO buffer) can stream packets at a predefined bit rate, such as a tested maximum bandwidth. In one embodiment, the client returns as feedback690to the streamer620a number of packets it has received over a range of incremental sequence identifiers (IDs), such as for a range of video frames. For example, the client may report something like, 145 of 150 video frames received for sequence IDs100to250(e.g., 150 video frames). As such, the streamer620at the server260is able to calculate the packet loss, and since the streamer620knows the amount of bandwidth that was being sent (e.g., as tested) during that sequence of packets, the streamer620can dynamically determine what the client's maximum bandwidth is at a particular moment in time. The measured maximum bandwidth of the client may be delivered from streamer620to the buffer630as control information627, so that the buffer630can dynamically transmit packets at a rate that is approximately equal to the maximum bandwidth of the client.

The client210is unable to make this determination because the amount of bandwidth being sent is constantly fluctuating due to variable bitrate, scene complexity, etc. As such, the client210does not know if the server is sending the maximum bandwidth that the client can handle at any given moment. For example, the maximum bandwidth may be 15 Mbps (megabits per second), but because the scene complexity may be low due to the user being on a menu, as a result, only 2 Mbps is being sent. Hence, if the client reports 0% packet loss, this does not tell the server260if the client210can handle a maximum bandwidth of 15 Mbps. Hence, only when the server260is sending the maximum bandwidth can the true maximum bandwidth be determined.

FIG.7Ais a diagram illustrating the overlapping of receive and decode operations at the client when streaming video frames generated from a video game executing on the server to reduce one-way latency between the server and client, in accordance with one embodiment of the disclosure. In addition, receive, decode, and the rendering of decompressed video frames for display (e.g., display operations) may be overlapped at the client to reduce one-way latency between the server and client, in accordance with one embodiment of the present disclosure.

In particular, the client210receives a compressed video frame705at operation405, wherein a server (not shown) executes a video game to generate a game rendered video frame which is then encoded at an encoder at the server and delivered to the client as the compressed video frame705. The compressed video frame705includes one or more encoded slices, such as encoded slice A (which is hashed), as previously described. For example, the compressed video frame705includes encoded slices A through G. Each of the encoded slices may be of different sizes. For example, encoded slice D is larger than encoded slice E. Further, each of the slices may be split into one or more packets.

The client210includes a decoder that is configured for decoding the one or more encoded slices (e.g. decoded slice A—which is not hashed) of a corresponding compressed video frame at operation406. In one embodiment, the decode process begins before the corresponding compressed video frame is fully received at the client. That is, decoding of an encoded slice for a corresponding video frame begins before all of the encoded slices of the corresponding video frame is received. Because the decoder decodes on an encoded slice-by-slice basis, the decoded video frame706includes one or more encoder slices (as decoded). Thereafter, the decoded video frame706is then prepared for displaying, such as rendering the information in the decoded video frame706into scanlines or screen slices. As such, the client rendered video frame707is ready for display.

In a fault intolerant mode, one-way latency between the server and client can be reduced by having the client210begin the display of a video frame at operation407before the video frame has been completely decoded at operation406, in accordance with one embodiment of the present disclosure. In particular, one or more decoded slices of a video frame may be prepared for rendering to a display before the compressed video frame has been fully decoded. That is, the display operation at407overlaps the decode operation at406. In particular, the first encoded slice (e.g., slice A) must arrive and be decoded before client scan-out begins to the display. In addition, all subsequent encoded slices must arrive and be decoded before their respective decompressed data is rendered and scanned out for display.

Further, in addition to overlapping the receiving and decoding operations at the client, the display of one or more decoded slices that are then rendered in preparation for displaying can occur even before the compressed video frame sent by the server has been fully received at the client210. That is, one or more of the receive, decode, and display operations at the client may be overlapped for a corresponding video frame.

Moreover, when overlapping multiple operations at both the server and the client, one or more decoded slices of a video frame that are then rendered in preparation for displaying can be displayed at the client even before the scan-out operation at the server has fully completed, in accordance with one embodiment of the present disclosure. As previously described, scan-out delivers the game rendered video frame to the encoder at the server, wherein the game rendered video frame may be optionally modified (e.g., adding overlays) during a scan-out process.

With the detailed description of the various client devices210and/or the cloud gaming network290(e.g., in the game server260) ofFIGS.2A-2D, flow diagram700B ofFIG.7Billustrates a method for cloud gaming in which client operations may be overlapped to reduce one-way latency between the cloud gaming server and the client, in accordance with one embodiment of the present disclosure. In particular,FIG.7Bis a flow diagram illustrating the overlapping of receive and decode operations at the client to reduce one-way latency between the server and the client when streaming video frames generated from a video game executing on the server, wherein the rendering of decompressed video frames may optionally also be overlapped with receive and decode operations, in accordance with one embodiment of the disclosure.

At710, the method includes receiving one or more encoded slices of a compressed video frame at a client. In particular, a plurality of video frames is generated at the server responsive to processing of a video game by the server in a streaming mode during a session with the client. The plurality of video frames is encoded at the encoder into a plurality of compressed video frames, each corresponding compressed video frame including one or more corresponding encoded slices. The plurality of compressed video frames is transmitted to the client over a network, and more particularly, the encoded slices of the plurality of compressed video frames are transmitted to the client.

At the client, the method includes receiving the plurality of compressed video frames at a decoder, and decompressing the plurality of compressed video frames for rendering to a display associated with the client, wherein these operations may be overlapped. In particular, at720, the method includes decoding a first encoded slice at the client before fully receiving the one or more encoded slices of the corresponding compressed video frame.

FIG.8Ais a diagram illustrating the interleaving of forward error correction (FEC) packets within encoded slices of a video frame when streaming video frames generated from a video game executing on the server, in accordance with one embodiment of the disclosure. Interleaving of FEC data between encoded slices of a corresponding video frame allows for overlapping of receive, decode, and render for display operations at the client, which may be performed on an encoded slice-by-slice basis. In particular, forward error correction provides for reconstruction of lost encoded slices and/or reconstruction of lost packets of encoded slices of a corresponding video frame. As such, forward error correction allows for the display of an encoded slice that is received, decoded and rendered for display at the client before one or more subsequent encoded slices have been received and/or decoded.

For example, an encoded slice may be partitioned into one or more network packets. A FEC packet may be used to correct one or more packets associated with a slice. As such, FEC packets may be interleaved within the data packets of a corresponding compressed video frame (e.g., within packets of encoded slices) to speed up recovery of lost packets using FEC. That is, instead of putting FEC packets at the end of the packet grouping for a corresponding compressed video frame, FEC packets are interleaved within the data packets. In that manner, as soon as one or more data packets of a corresponding compressed video frame are lost, those lost packets can be recovered almost immediately using corresponding FEC packets. In particular, the recovery of a lost packet of a corresponding compressed video frame can occur without waiting for the entire set of data packets to be delivered. This is because a corresponding FEC packet is delivered after data packets that that FEC packet can recover. As such, the FEC packet that is received earlier (e.g., during transmission of encoded slices of a corresponding compressed video frame) and possibly before all data packets have been received for the compressed video frame can be used to recover one or more lost packets. That is, in embodiments of the present disclosure, forward error correction can be performed earlier (e.g., during the receiving of a compressed video frame) to correct for missing and/or corrupted packets of encoded slices without waiting for the entire set of packets of a compressed video frame (e.g., data and FEC) to be received by the client. Typically, forward error correction of any packet of a compressed video frame is performed after receiving all of the packets for that compressed video frame—thereby adding to one-way latency between the server and client, wherein embodiments of the disclosure perform forward error correction while the client is still receiving the compressed video frame—thereby reducing one-way latency. As such, by interleaving FEC packets within data packets of a corresponding compressed video frame, this provides for overlapping the decode and display operations at the client.

In addition, when packets are tuned to the MTU, this may allow for easier recovery from packet loss, in accordance with one embodiment of the present disclosure. That is, since a packet is tuned and/or sized to an encoded slice (i.e., one-to-one relationship between an encoded slice and corresponding packet), a complete encoded slice that is lost may be recovered using a corresponding FEC. In that manner FEC creation is done across a data packet, such that a FEC packet can be used to correct one or more corresponding data packets, wherein each data packet corresponds to an encoded slice. As shown inFIG.8A, each of the encoded slices is sized at or below the MTU850, such that each encoded slice is transmitted over the network using one corresponding packet.

FIG.8Ashows the interleaving of FEC packets within packets of compressed encoder slices of an encoded video frame, in accordance with one embodiment of the present disclosure. Purely for example, the encoded video frame may include encoded slice0, encoded slice1, encoded slice2, and encoded slice3. Also, the encoded slices may be sized to be an MTU850or smaller. As shown, FEC-0and FEC-1are interleaved with data packets for encoded slice0, encoded slice1, encoded slice2, and encoded slice3.