U.S. Pat. No. 12,175,617

INFORMATION PROCESSING SYSTEM AND METHOD FOR JUDGING WHETHER TO ALLOW A MOBILE MEDIUM TO MOVE WITHIN A VIRTUAL SPACE

Assignee: GREE, INC.

Issue Date: September 30, 2022

Illustrative Figure

Abstract

An information processing system comprises processing circuitry configured to render a virtual space; render each mobile medium associated with each user, each mobile medium being able to move within the virtual space; associate designated movement authority information to a first mobile medium, the first mobile medium being associated with a first user based on a first input from the first user; switch the designated movement authority information from association with the first mobile medium to association with a second mobile medium, the second mobile medium being associated with a second user different from the first user and based on a second input from the first user; and judge, based on the designated movement authority information, whether to allow one mobile medium to move toward a designated position within the virtual space.

Description

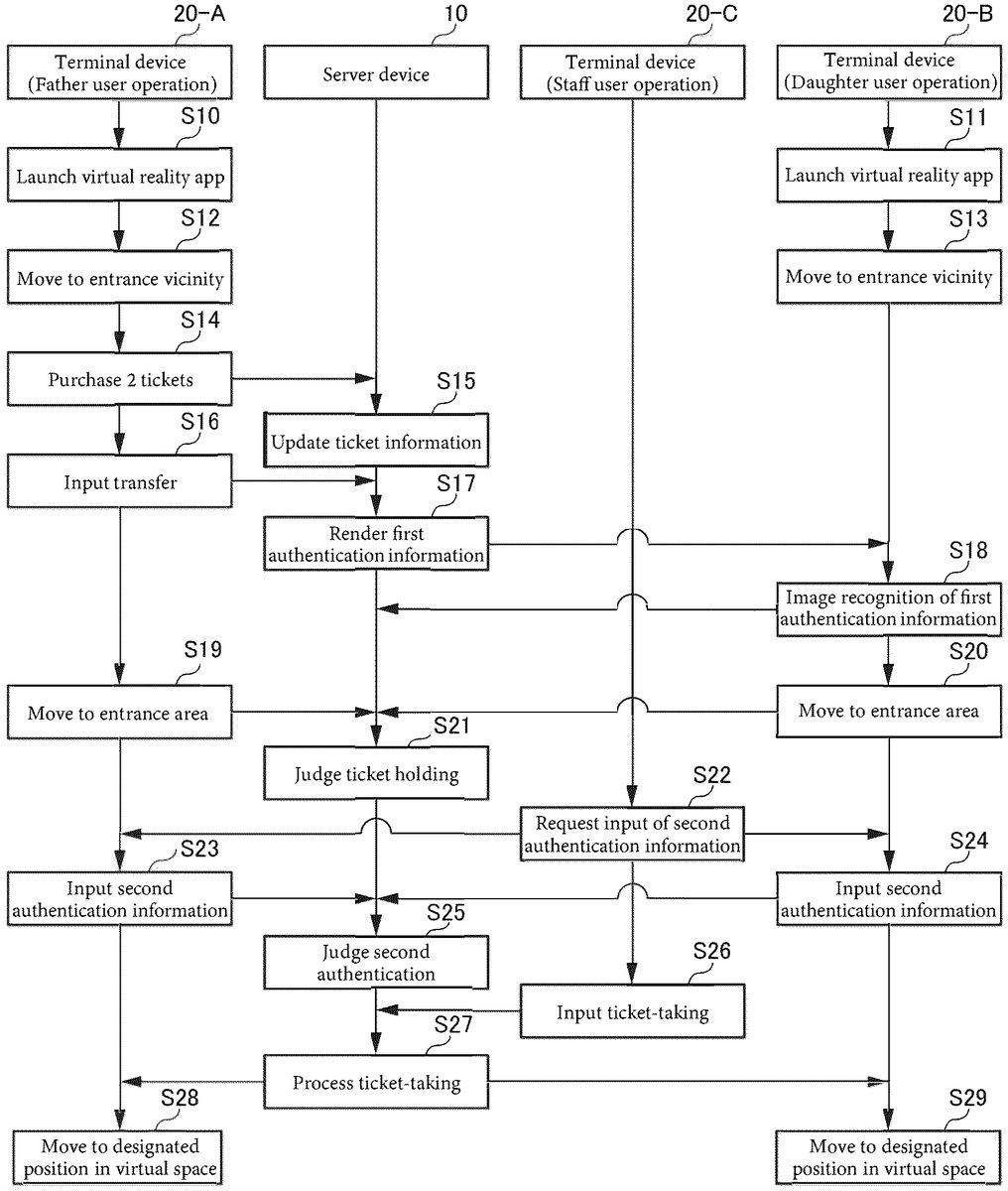

DETAILED DESCRIPTION

The inventors have recognized that, with conventional technology, it is difficult to transfer, from one user to another user, information on authority to move to a location that provides specific content, such as a ticket, safely using a simple configuration.

The inventors have developed the technology in this disclosure to make it easier and safer to transfer, from one user to another user, movement authority information.

In accordance with the present disclosure, an information processing unit is provided that includes: a space rendering processor that renders a virtual space; a medium rendering processor that renders each mobile medium associated with each user, each mobile medium being able to move within the virtual space; a first movement authority processor that associates designated movement authority information to a first mobile medium associated with a first user based on a first input from the first user; a second movement authority processor that switches an association of the designated movement authority information associated with the first mobile medium by the first movement authority processor to a second mobile medium associated with a second user different from the first user based on a second input from the first user; and a judging processor that judges whether to allow one mobile medium to move toward a designated position within the virtual space based on whether or not the designated movement authority information is associated with the one mobile medium.
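
The associate, switch, and judge steps summarized above can be illustrated with a minimal Python sketch. It is not the disclosed implementation: the class `AuthorityRegistry`, its methods `associate`, `switch`, and `may_move_to`, and the use of string identifiers for tickets, avatars, and positions are all assumptions introduced here for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class AuthorityRegistry:
    """Hypothetical store that ties movement authority information to one mobile medium."""

    # ticket_id -> avatar_id currently holding the authority (assumed layout)
    holder: dict[str, str] = field(default_factory=dict)
    # ticket_id -> designated position that the authority unlocks (assumed layout)
    position: dict[str, str] = field(default_factory=dict)

    def associate(self, ticket_id: str, avatar_id: str, position: str) -> None:
        """First step: bind the authority to the first user's mobile medium."""
        self.holder[ticket_id] = avatar_id
        self.position[ticket_id] = position

    def switch(self, ticket_id: str, from_avatar: str, to_avatar: str) -> bool:
        """Second step: re-associate, but only when requested by the current holder."""
        if self.holder.get(ticket_id) != from_avatar:
            return False
        self.holder[ticket_id] = to_avatar
        return True

    def may_move_to(self, avatar_id: str, position: str) -> bool:
        """Judging step: allow movement only if a matching authority is associated."""
        return any(
            self.holder[t] == avatar_id and self.position[t] == position
            for t in self.holder
        )


registry = AuthorityRegistry()
registry.associate("ticket-1", "avatar-A", "SP2-gate")
before = registry.may_move_to("avatar-B", "SP2-gate")
switched = registry.switch("ticket-1", "avatar-A", "avatar-B")
after = registry.may_move_to("avatar-B", "SP2-gate")
print(before, switched, after)
```

In this sketch the association is moved rather than copied, so the first mobile medium no longer passes the judgment once the switch succeeds.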

Each embodiment will be described in detail below with reference to the attached drawings.

Embodiment 1

(Overview of a Virtual Reality Generating System)

An overview of a virtual reality generating system 1 according to an embodiment will be described with reference to FIG. 1. FIG. 1 is a block diagram of the virtual reality generating system 1 according to the present embodiment. The virtual reality generating system 1 includes a server device 10 and at least one terminal device 20. For the sake of simplicity, three of the terminal devices 20 are illustrated in FIG. 1; however, there may be any number of the terminal devices 20 so long as there are two or more.

The server device 10 is an information processor such as a server, or the like, managed by an operator that provides, for example, one or more virtual realities. The terminal device 20 is an information processor, for example, a cell phone, a smartphone, a tablet terminal, a Personal Computer (PC), a head-mounted display, or a game device, or the like, used by a user. A plurality of the terminal devices 20 can be connected to the server device 10 through a network 3 by a mode that typically differs by user.

The terminal device 20 is able to execute a virtual reality application according to the present embodiment. The virtual reality application may be received by the terminal device 20 from the server device 10 or a designated application distribution server through the network 3, or may be pre-recorded in a memory device included in the terminal device 20 or in a memory medium, such as a memory card, or the like, that the terminal device 20 can read. The server device 10 and the terminal device 20 are communicatively connected through the network 3. For example, the server device 10 and the terminal device 20 operate jointly to execute various processes related to a virtual reality.

Note that the network 3 may include a wireless communication network, the Internet, a Virtual Private Network (VPN), a Wide Area Network (WAN), a wired network, or any combination thereof, or the like.

An overview of the virtual reality according to the present embodiment will be described here. The virtual reality according to the present embodiment is a virtual reality, or the like, relating to any reality such as, for example, education, travel, role playing, a simulation, or entertainment, or the like, such as a concert, and a virtual reality medium such as an avatar is used in conjunction with the execution of the virtual reality. For example, the virtual reality according to the present embodiment is realized by a 3D virtual space, various types of virtual reality media appearing in the virtual space, and various types of content provided in the virtual space.

A virtual reality medium is electronic data used in the virtual reality including, for example, a card, an item, a point, a currency in a service (or a currency in a virtual reality), a ticket, a character, an avatar, a parameter, or the like. Furthermore, the virtual reality medium may be virtual reality-related information such as level information, status information, virtual reality parameter information (a physical strength value, attack power, or the like), or ability information (a skill, an ability, a spell, a job, or the like). The virtual reality medium is also electronic data that can be acquired, owned, used, managed, exchanged, combined, strengthened, sold, disposed, granted, or the like, within virtual reality by a user, and a use mode of the virtual reality medium is not limited to that specified in the present specification.

In the present embodiment, users include a general user and a staff user (an example of a third user). The general user is a user who is not involved in operating the virtual reality generating system 1, and the staff user is a user who is involved in operating the virtual reality generating system 1. The staff user takes a role (of agent) of supporting the general user within the virtual reality. The staff user includes, for example, a user referred to as a so-called game master. Below, unless otherwise stated, the user refers to both the general user and the staff user.

Furthermore, the user may also include a guest user. The guest user may be an artist or influencer, or the like, who operates a guest avatar that functions as content (content provided by the server device 10) to be described later. Note that there may be cases where some of the staff users become the guest users, and there may be cases where not all user attributes are clear.

Content (content provided in the virtual reality) provided by the server device 10 can come in any variety and in any volume; however, as one example in the present embodiment, the content provided by the server device 10 may include digital content such as various types of video. The video may be video in real time, or video not in real time. Furthermore, the video may be video based on actual images or video based on Computer Graphics (CG). The video may be video for providing information. In such cases, the video may be related to a specific genre of information providing services (information providing services related to travel, living, food, fashion, health, beauty, or the like), broadcasting services (e.g., YouTube (registered trademark)) by a specific user, or the like.

Furthermore, as one example in the present embodiment, the content provided by the server device 10 may include guidance, advice, or the like, to be described later, from the staff user. For example, the content may include guidance, advice, or the like, from a dance teacher as content provided by means of a virtual reality relating to a dance lesson. In this case, the dance teacher becomes the staff user, a student thereof becomes the general user, and the student is able to receive individual guidance from the teacher in the virtual reality.

Furthermore, in another embodiment, the content provided by the server device 10 may be various types of performances, talk shows, meetings, gatherings, or the like, by one or more of the staff users or the guest users through a staff avatar m2 or a guest avatar, respectively.

A mode for providing content in the virtual reality may be any mode, and, for example, if the content is video, the video is rendered on a display of a display device (virtual reality medium) within the virtual space. Note that the display device in the virtual space may be of any form, such as a screen set within the virtual space, a large-screen display set within the virtual space, a display, or the like, of a mobile terminal within the virtual space.

(Configuration of the Server Device)

A specific description of the server device 10 is provided next. The server device 10 is configured of a server computer. The server device 10 may be realized through joint operation of a plurality of server computers. For example, the server device 10 may be realized through joint operation of a server computer that provides various types of content, a server computer that realizes various types of authentication servers, and the like. The server device 10 may also include a web server. In this case, some of the functions of the terminal device 20 to be described later may be executed by a browser processing an HTML document, or various types of similar programs (JavaScript), received from the web server.

The server device 10 includes a server communication unit 11, a server memory unit 12, and a server controller 13. The server device 10 and its components may include, be encompassed by, or be a component of control circuitry and/or processing circuitry. Further, the functionality of the elements disclosed herein may be implemented using circuitry or processing circuitry which includes general purpose processors, special purpose processors, integrated circuits, ASICs (“Application Specific Integrated Circuits”), conventional circuitry and/or combinations thereof which are configured or programmed to perform the disclosed functionality. Processors are considered processing circuitry or circuitry as they include transistors and other circuitry therein. The processor may be a programmed processor which executes a program stored in a memory. In the disclosure, the circuitry, units, or means are hardware that carry out or are programmed to perform the recited functionality. The hardware may be any hardware disclosed herein or otherwise known which is programmed or configured to carry out the recited functionality. When the hardware is a processor which may be considered a type of circuitry, the circuitry, means, or units are a combination of hardware and software, the software being used to configure the hardware and/or processor.

The server communication unit 11 includes an interface that sends and receives information by communicating wirelessly or by wire with an external device. The server communication unit 11 may include, for example, a wireless Local Area Network (LAN) communication module, a wired LAN communication module, or the like. The server communication unit 11 is able to transmit and receive information to and from the terminal device 20 through the network 3.

The server memory unit 12 is, for example, a memory device that stores various information and programs necessary for various types of processes relating to a virtual reality. For example, the server memory unit 12 stores a virtual reality application.

The server memory unit 12 also stores data for rendering a virtual space, for example, an image, or the like, of an indoor space such as a building or an outdoor space. Note that a plurality of types of data for rendering the virtual space may be prepared and properly used for each virtual space.

The server memory unit 12 also stores various images (texture images) to be projected (texture mapping) on various objects arranged within a 3D virtual space.

For example, the server memory unit 12 stores rendering information of a user avatar m1 as a virtual reality medium associated with each user. The user avatar m1 is rendered in the virtual space based on the rendering information of the user avatar m1.

The server memory unit 12 also stores rendering information for the staff avatar m2 as a virtual reality medium associated with each of the staff users. The staff avatar m2 is rendered in the virtual space based on the rendering information of the staff avatar m2.

The server memory unit 12 also stores rendering information relating to various types of objects that are different from the user avatar m1 and the staff avatar m2, such as, for example, a building, a wall, a tree, a shrub, or a Non-Player Character (NPC), or the like. These various types of objects are rendered within the virtual space based on relevant rendering information.

Below, an object corresponding to any virtual medium (e.g., a building, a wall, a tree, a shrub, or an NPC, or the like) that is different from the user avatar m1 and the staff avatar m2, and that is rendered within the virtual space, is referred to as a second object m3. Note that, in the present embodiment, the second object may include an object fixed within the virtual space, a mobile object within the virtual space, or the like. The second object may also include an object that is always arranged within the virtual space, an object that is arranged therein only in a case where designated conditions have been met, or the like.

The server controller 13 may include a dedicated microprocessor, a Central Processing Unit (CPU) that executes a specific function by reading a specific program, a Graphics Processing Unit (GPU), or the like. For example, the server controller 13, operating jointly with the terminal device 20, executes the virtual reality application corresponding to a user operation with respect to a display 23 of the terminal device 20. The server controller 13 also executes various processes relating to the virtual reality.

For example, the server controller 13 renders the user avatar m1, the staff avatar m2, and the like, together with the virtual space (image), which are then displayed on the display 23. The server controller 13 also moves the user avatar m1 and the staff avatar m2 within the virtual space in response to a designated user operation. The specific processes of the server controller 13 will be described in detail later.

(Configuration of the Terminal Device)

The configuration of the terminal device 20 will be described. As is illustrated in FIG. 1, the terminal device 20 includes a terminal communication unit 21, a terminal memory unit 22, the display 23, an input unit 24, and a terminal controller 25. The terminal device 20 and its components may include, be encompassed by, or be a component of control circuitry and/or processing circuitry.

The terminal communication unit 21 includes an interface that sends and receives information by communicating wirelessly or by wire with an external device. The terminal communication unit 21 may include a wireless communication module, a wireless LAN communication module, a wired LAN communication module, or the like, that supports a mobile communication standard such as, for example, Long Term Evolution (LTE) (registered trademark), LTE-Advanced (LTE-A), a fifth-generation mobile communication system, Ultra Mobile Broadband (UMB), or the like. The terminal communication unit 21 is able to transmit and receive information to and from the server device 10 through the network 3.

The terminal memory unit 22 includes, for example, a primary memory device and a secondary memory device. For example, the terminal memory unit 22 may include semiconductor memory, magnetic memory, optical memory, or the like. The terminal memory unit 22 stores various information and programs that are received from the server device 10 and used in processing a virtual reality. The information and programs used in processing the virtual reality are acquired from the external device through the terminal communication unit 21. For example, a virtual reality application program may be acquired from a designated application distribution server. Below, the application program is also referred to simply as the application. Furthermore, part or all of, for example, information relating to a user, information relating to a virtual reality medium of a different user, or the like, as described above, may be acquired from the server device 10.

The display 23 includes a display device such as, for example, a liquid crystal display, an organic Electro-Luminescence (EL) display, or the like. The display 23 is able to display various images. The display 23 is configured of, for example, a touch panel that functions as an interface that detects various user operations. Note that the display 23 may be in the form of a head-mounted display.

The input unit 24 includes, for example, an input interface that includes the touch panel provided integrally with the display 23. The input unit 24 is able to accept user input for the terminal device 20. The input unit 24 may also include a physical key, as well as any input interface, including a pointing device such as a mouse, or the like. Furthermore, the input unit 24 may be capable of accepting contactless user input such as audio input or gesture input. Note that the gesture input may use a sensor (image sensor, acceleration sensor, distance sensor, or the like) for detecting a physical movement of a user body.

The terminal controller 25 includes one or more processors. The terminal controller 25 controls the overall operation of the terminal device 20.

The terminal controller 25 sends and receives information through the terminal communication unit 21. For example, the terminal controller 25 receives various types of information and programs used in various types of processes relating to the virtual reality from, at least, either the server device 10 or a different external server. The terminal controller 25 stores the received information and programs in the terminal memory unit 22. For example, a browser (Internet browser) for connecting to a web server may be stored in the terminal memory unit 22.

The terminal controller 25 starts the virtual reality application based on a user operation. The terminal controller 25, operating jointly with the server device 10, executes various types of processes relating to the virtual reality. For example, the terminal controller 25 displays an image of a virtual space on the display 23. For example, a Graphic User Interface (GUI) that detects the user operation may be displayed on a screen. The terminal controller 25 is able to detect the user operation with respect to the screen through the input unit 24. For example, the terminal controller 25 is able to detect a user tap operation, long tap operation, flick operation, swipe operation, and the like. The tap operation involves the user touching the display 23 with a finger and then lifting the finger off therefrom. The terminal controller 25 sends operation information to the server device 10.
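
As a rough illustration of how the listed touch operations might be told apart, the following Python sketch classifies a single finger-down to finger-up touch. The text defines only the tap operation; the `Touch` record, the `classify` function, and all three threshold values are hypothetical and chosen here only so that the example runs.

```python
import math
from dataclasses import dataclass

# Assumed thresholds; the text does not specify any values.
LONG_TAP_SECONDS = 0.5
MOVE_TOLERANCE_PX = 10.0
FLICK_SPEED_PX_PER_S = 1000.0


@dataclass(frozen=True)
class Touch:
    """One touch from finger-down to finger-up (hypothetical record)."""

    down_xy: tuple[float, float]
    up_xy: tuple[float, float]
    duration_s: float


def classify(touch: Touch) -> str:
    """Return 'tap', 'long_tap', 'flick', or 'swipe' under the assumed thresholds."""
    distance = math.dist(touch.down_xy, touch.up_xy)
    if distance <= MOVE_TOLERANCE_PX:
        # The finger barely moved: distinguish by how long it stayed down.
        return "long_tap" if touch.duration_s >= LONG_TAP_SECONDS else "tap"
    # The finger moved: distinguish by average speed.
    speed = distance / max(touch.duration_s, 1e-6)
    return "flick" if speed >= FLICK_SPEED_PX_PER_S else "swipe"


# Operation information that a terminal could send to the server (assumed shape).
operation_info = {"type": classify(Touch((0.0, 0.0), (2.0, 1.0), 0.1))}
print(operation_info)
```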

Examples of a Virtual Reality

The server controller 13, operating jointly with the terminal device 20, displays an image of a virtual space on the display 23, and then updates the image of the virtual space based on progress of a virtual reality and a user operation. In the present embodiment, the server controller 13, operating jointly with the terminal device 20, renders an object arranged in a 3D virtual space expressed as seen from a virtual camera arranged in the virtual space.

Note that although the rendering process described below is realized by the server controller 13, in another embodiment, all or part of the rendering process described below may be realized by the terminal controller 25. For example, in the description below, at least part of the image of the virtual space displayed on the terminal device 20 may be a web display displayed on the terminal device 20 based on data generated by the server device 10, and at least part of a screen may be a native display displayed through a native application installed on the terminal device 20.

FIG. 2A through FIG. 2C are explanatory drawings of several examples of the virtual reality that can be generated by the virtual reality generating system 1.

FIG. 2A is an explanatory drawing of a virtual reality relating to travel, and a conceptual drawing of a virtual space when viewed in a plane. In this case, a position SP1 for purchasing and receiving a ticket (in this case an airline ticket, or the like) and a position SP2 near a gate have been set within the virtual space. FIG. 2A illustrates the user avatars m1 that are associated with two separate users.

The two users decide to travel together in the virtual reality and to enter into the virtual space, each through the user avatar m1. Then, the two users, each through the user avatar m1, obtain the ticket (see arrow R1) at the position SP1, arrive at (see arrow R2) the position SP2, and then pass through (see arrow R3) the gate and board an airplane (the second object m3). Then, the airplane takes off and arrives at a desired destination (see arrow R4). During this time, the two users experience the virtual reality, each through the terminal device 20 and the display 23. For example, an image G300 of the user avatar m1 positioned within the virtual space relating to the desired destination is illustrated in FIG. 3. Thus, the image G300 may be displayed on the terminal device 20 of the user relating to the user avatar m1. In this case, the user can move and engage in sightseeing, or the like, within the virtual space through the user avatar m1 (to which the user name “fuj” has been assigned).

FIG. 2B is an explanatory drawing of a virtual reality relating to education, and a conceptual drawing of a virtual space when viewed in a plane. In this case too, the position SP1 for purchasing and receiving the ticket (in this case an admission ticket, or the like) and the position SP2 near the gate have been set within the virtual space. FIG. 2B illustrates the user avatars m1 that are associated with two separate users.

The two users have decided to receive specific education in the virtual reality and to enter into the virtual space, each through the user avatar m1. Then, the two users, each through the user avatar m1, obtain the ticket (see arrow R11) at the position SP1, proceed (see arrow R12) to the position SP2, and then pass through (see arrow R13) the gate and arrive at a first position SP11. A specific first content is provided at the first position SP11. Next, the two users, each through the user avatar m1, arrive at (see arrow R14) a second position SP12, receive provision of a specific second content, then arrive at (see arrow R15) a third position SP13, and receive provision of a specific third content, the same following thereafter. A learning effect of the specific second content is enhanced by being provided after receipt of the specific first content, and a learning effect of the specific third content is enhanced by being provided after receipt of the specific second content.

For example, in the case of software that uses certain 3D modeling, the education may be a condition where a first content includes an install link image, or the like, for the software; a second content includes an install link movie, or the like, for an add-on; a third content includes an initial settings movie; and a fourth content includes a basic operations movie.

In the example illustrated in FIG. 2B, each user (a teacher, for example), by moving in order through the user avatar m1 from the first position SP11 to an eighth position SP18 and receiving the provision of various types of content in order, is able to receive specific education by a mode through which a high learning effect can be obtained. Alternatively, the various types of content may be tasks on a quiz, or the like, in which case, in the example illustrated in FIG. 2B, a game such as Sugoroku or an escape game can be offered.
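
The ordered progression from the first position SP11 to the eighth position SP18 can be sketched in Python as follows. This is only an illustration under an assumption: the text states that ordered provision enhances the learning effect, but does not state that the order is enforced. The names `ORDER`, `next_position`, and `can_receive` are hypothetical.

```python
from typing import Optional

# Position labels SP11 ... SP18, as used for FIG. 2B.
ORDER = [f"SP{n}" for n in range(11, 19)]


def next_position(completed: list[str]) -> Optional[str]:
    """Return the earliest position whose content has not been received yet."""
    for pos in ORDER:
        if pos not in completed:
            return pos
    return None  # all content has been received


def can_receive(completed: list[str], target: str) -> bool:
    """Assumed rule: content at `target` is provided only after all earlier positions."""
    return next_position(completed) == target


print(next_position([]))
print(can_receive(["SP11"], "SP12"), can_receive(["SP11"], "SP13"))
```

A quiz or Sugoroku-style variant could reuse the same ordering and replace the `completed` check with a task result.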

FIG. 2C is an explanatory drawing of a virtual reality relating to a lesson, and a conceptual drawing of a virtual space when viewed in a plane. In this case too, the position SP1 for purchasing and receiving the ticket (in this case an admission ticket, or the like) and the position SP2 near the gate have been set within the virtual space. FIG. 2C illustrates the user avatars m1 that are associated with the two separate users.

The two users decide to receive a specific lesson in the virtual reality and to enter into the virtual space, each through the user avatar m1. Then, the two users, each through the user avatar m1, obtain the ticket (see arrow R21) at the position SP1, arrive at (see arrow R22) the position SP2, and then pass through (see arrow R23) the gate and arrive at a position SP20. The position SP20 corresponds to each position within a free space that excludes positions SP21, SP22, SP23, or the like, said positions corresponding to each stage, in an area surrounded by, for example, a round peripheral wall W2. When the users, each through the user avatar m1, arrive at (see arrow R24) a first position SP21 corresponding to a first stage, the users receive provision of a first content for the lesson at the first position SP21. Furthermore, when the users, each through the user avatar m1 in the same way, arrive at (see arrow R25) a second position SP22 corresponding to a second stage, the users receive provision of a second content for the lesson at the second position SP22, and, when the users arrive at (see arrow R26) a third position SP23 corresponding to a third stage, the users receive provision of a third content for the lesson at the third position SP23.

For example, in a case where the lesson is a golf lesson, the first content for the lesson may be a video describing points for improving the users' golf swings, the second content for the lesson may be practice of a sample swing by the staff user who is a professional golfer, and the third content for the lesson may be advice from the staff user who is a professional golfer on practice of the users' swings. Note that the practice of the sample swing by the staff user is realized by the staff avatar m2, and that the practice of the users' swings is realized by the user avatar m1. For example, when the staff user actually performs a movement of a swing, that movement is reflected in the movement of the staff avatar m2 based on data (e.g., gesture input data) of the movement. The advice from the staff user may be realized using a chart, or the like. Thus, each of the users can receive various types of lessons, for example together with a friend at home, or the like, from a teacher (in this case, a professional golfer) in the virtual reality at an adequate pace and in adequate depth.

Thus, in the present embodiment, as is illustrated in FIG. 2A through FIG. 2C, a user can receive provision of useful content in a virtual reality at a designated position through the user avatar m1, at a time required by, and in a viewing format of, each user.

By the way, just as in reality, a mechanism is required for ensuring that only a user who has satisfied certain conditions can receive provision of content in the virtual reality as well. For example, in reality, for a person to arrive at a position where it is possible to receive provision of a given content (e.g., of an experience in an amusement park), the person must sometimes pay a price for the content and obtain a ticket. In this case, for example, in a case of a parent with a child, the parent buys a ticket for the child and enters a venue to a position (e.g., inside a facility, or the like) where the provision of the given content can be received together with the child.

In the case of the virtual reality as well, just as in reality, when a plurality of users is to receive the provision of content together in the virtual reality, convenience is enhanced when one user is able to obtain a movement authority, such as a ticket, for companion users so that all users can enter to a position (e.g., within a facility, or the like) where the provision of relevant content can be received.

However, in the case of the virtual reality, unlike reality, a plurality of users scheduled to accompany one another need not, in reality, be near each other, and are typically unable to make physical contact.

Thus, in the present embodiment, the virtual reality generating system 1 realizes a mechanism or a function (hereinafter, a “ticket transferring function”) in the virtual reality that enables the transfer of movement authority information that indicates this type of movement authority between users safely and with a reduced operational load. This ticket transferring function is described in detail below.

Although the server device 10 that relates to the ticket transferring function realizes an example of an information processing system in the following, as is described later, each element (see FIG. 1) of one specific terminal device 20 may realize an example of an information processing system, and a plurality of terminal devices 20, operating jointly, may realize an example of an information processing system. Furthermore, one server device 10 and one or more terminal devices 20 may, operating jointly, realize an example of an information processing system.

Detailed Description of the Ticket Transferring Function

FIG. 4 is an example of a functional block diagram of the server device 10 related to the ticket transferring function. FIG. 5 is an example of a functional block diagram of the terminal device 20 (the terminal device 20 on a transfer receiving side) related to a ticket transferring function. FIG. 6 is a diagram for describing data in a user database 140. FIG. 7 is a diagram for describing data in an avatar database 142. FIG. 8 is a diagram for describing data in a ticket information memory unit 144. FIG. 9 is a diagram for describing data in a spatial state memory unit 146. Note that, in FIG. 6 through FIG. 9, “***” indicates a state in which some sort of information is stored, “−” indicates a state in which no information is stored, and “ . . . ” indicates repetition of the same.

As is illustrated in FIG. 4, the server device 10 includes the user database 140, the avatar database 142, the ticket information memory unit 144, the spatial state memory unit 146, a space rendering processor 150, a user avatar processor 152, a staff avatar processor 154, a terminal image generator 158 (an example of the medium rendering processor), a content processor 159, a dialogue processor 160, a first movement authority processor 162, a second movement authority processor 164, a judging processor 166, a space information generator 168, and a parameter updating unit 170. As discussed above, each sub-component of the server device 10 and each processor may include, be encompassed by, or be a component of control circuitry and/or processing circuitry.

Note that some or all of the functions of the server device 10 described below may be realized by the terminal device 20 when appropriate. Note further that the demarcation of the user database 140 through the spatial state memory unit 146 and the demarcation of the space rendering processor 150 through the parameter updating unit 170 are for the convenience of description, and that some functional units may realize the functions of other functional units. For example, the functions of the space rendering processor 150, the user avatar processor 152, the terminal image generator 158, the content processor 159, the dialogue processor 160, and the space information generator 168 may be realized by the terminal device 20. Furthermore, some or all of the data in the user database 140 may be integrated with the data in the avatar database 142, and stored in a different database.

Note that the user database 140 through the spatial state memory unit 146 can be realized by the server memory unit 12 illustrated in FIG. 1, and that the space rendering processor 150 through the parameter updating unit 170 can be realized by the server controller 13 illustrated in FIG. 1. Note further that part (a functional unit that communicates with the terminal device 20) of the space rendering processor 150 through the parameter updating unit 170 can be realized by the server controller 13 together with the server communication unit 11 illustrated in FIG. 1.

User information is stored in the user database140. In the example illustrated inFIG.6, the user information includes user information600relating to a general user, and staff information602relating to a staff user.

The user information600associates a user name, authentication information, a user avatar ID, and position/orientation information, and the like, to each user ID. The user name is any name registered personally by the general user. The authentication information is information for showing that the general user is a legitimate general user, and may include, for example, a password, email address, date of birth, watchword, biometric, or the like. The user avatar ID is an ID for specifying a user avatar. The position/orientation information includes the position information and orientation information of the user avatar m1. The orientation information may be information that indicates an orientation of a face of the user avatar m1. Note that the position/orientation information is information that can change dynamically based on operational input from the general user, and may include, in addition to the position/orientation information, information indicating a movement of a hand or leg, or the like, or an expression, or the like, of a face of the user avatar m1.

The staff information602associates a staff name, authentication information, a staff avatar ID, position/orientation information, staff points, and the like, to each staff ID. The staff name is any name registered personally by the staff user. The authentication information is information for showing that the staff user is a legitimate staff user, and may include, for example, a password, email address, date of birth, watchword, biometric, or the like. The staff avatar ID is an ID for specifying a staff avatar. The position/orientation information includes the position information and orientation information of the staff avatar m2. The orientation information may be information that indicates an orientation of a face of the staff avatar m2. Note that the position/orientation information is information that can change dynamically based on operational input from the staff user, and may include, in addition to the position/orientation information, information indicating a movement of a hand or leg, or the like, or an expression, or the like, of a face of the staff avatar m2.
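As a non-limiting illustration, the user information 600 and the staff information 602 may be sketched as simple records keyed by ID. The class names, field names, and sample values below are assumptions made for this sketch and do not appear in FIG. 6.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class PositionOrientation:
    """Dynamic state that changes with operational input from the user."""
    position: Vec3 = (0.0, 0.0, 0.0)
    face_orientation: Vec3 = (0.0, 0.0, 1.0)


@dataclass
class UserInfo:  # one row of the user information 600
    user_id: str
    user_name: str
    authentication: str  # e.g. a password hash (assumed representation)
    user_avatar_id: str
    pose: PositionOrientation = field(default_factory=PositionOrientation)


@dataclass
class StaffInfo:  # one row of the staff information 602
    staff_id: str
    staff_name: str
    authentication: str
    staff_avatar_id: str
    pose: PositionOrientation = field(default_factory=PositionOrientation)
    staff_points: int = 0


# The user database 140 is modeled here as two in-memory dictionaries.
users: Dict[str, UserInfo] = {}
staff: Dict[str, StaffInfo] = {}

users["U001"] = UserInfo("U001", "alice", "<hash>", "A001")
staff["S001"] = StaffInfo("S001", "bob", "<hash>", "B001")
users["U001"].pose.position = (1.0, 0.0, 2.0)  # updated by operational input
```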

The staff points may be a parameter that increases with each fulfillment of the staff avatar role (staff job) in a virtual reality. That is, the staff points may be a parameter for indicating how the staff user moves in the virtual reality. For example, the staff points relating to one of the staff users may be increased each time this staff user assists the general user in the virtual reality through the corresponding staff avatar m2. Or, the staff points relating to one of the staff users may be increased corresponding to a time during which this staff user is able to assist (that is, in an operating state) the general user in the virtual reality through the corresponding staff avatar m2.
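The two ways of increasing the staff points described above may be sketched as follows. The function names, the per-assist increment, and the per-minute rate are hypothetical values chosen only for illustration.

```python
def add_points_for_assist(points: int, per_assist: int = 1) -> int:
    """Increase the staff points each time the staff user assists a general user."""
    return points + per_assist


def add_points_for_availability(points: int, seconds_available: int,
                                per_minute: int = 1) -> int:
    """Increase the staff points according to the time spent in an operating state.

    Only whole minutes are counted in this sketch.
    """
    return points + (seconds_available // 60) * per_minute
```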

Avatar information relating to the user avatar m1and the staff avatar m2is stored in the avatar database142. In the example illustrated inFIG.7, the avatar information includes user avatar information700relating to the general user, and staff avatar information702relating to the staff user.

The user avatar information700associates a face, a hairstyle, clothing, and the like, with each of the user avatar IDs. Information relating to the appearance of the face, hairstyle, clothing, and the like, is a parameter that assigns characteristics to the user avatar, and is set by the general user. For example, the information relating to the appearance of the face, hairstyle, clothing, and the like, relating to an avatar may be granted an ID according to the type thereof. Furthermore, with respect to the face, a part ID may be prepared for each type of face shape, eye, mouth, nose, and the like, and information relating to the face may be managed by means of a combination of an ID for each part configuring the face. In this case, information relating to the appearance of the face, hairstyle, clothing, and the like, can function as avatar rendering information. That is, each of the user avatars m1can be rendered on both the server device10side and the terminal device20side based on each ID relating to an appearance linked to each of the user avatar IDs.
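As one possible sketch of the management by part ID described above, the appearance of an avatar may be held as a combination of IDs, which can then serve as avatar rendering information on either the server device 10 side or the terminal device 20 side. All identifiers and ID values below are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Face:
    shape_id: str
    eye_id: str
    mouth_id: str
    nose_id: str


@dataclass(frozen=True)
class Appearance:
    face: Face
    hairstyle_id: str
    clothing_id: str

    def part_ids(self) -> List[str]:
        """Flatten the appearance into the list of part IDs needed for rendering."""
        f = self.face
        return [f.shape_id, f.eye_id, f.mouth_id, f.nose_id,
                self.hairstyle_id, self.clothing_id]


# User avatar information 700, keyed by user avatar ID (sample values).
avatar_info: Dict[str, Appearance] = {
    "A001": Appearance(Face("F02", "E11", "M03", "N01"), "H07", "C21"),
}


def rendering_info(avatar_id: str) -> List[str]:
    """Return the part IDs that a renderer would resolve to stored part data."""
    return avatar_info[avatar_id].part_ids()
```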

The staff avatar information702associates a face, a hairstyle, clothing, and the like, with each of the staff avatar IDs. Information relating to the appearance of the face, hairstyle, clothing, and the like, is a parameter that assigns characteristics to the staff avatar, and is set by the staff user. The information relating to the appearance of the face, hairstyle, and the like, just as in the case of the user avatar information700, may be managed by means of a combination of each part ID, and can function as the avatar rendering information. Note that the staff avatar m2may have a common characteristic that makes distinction thereof from the user avatar m1easy. For example, having each of the staff avatars m2wear common clothing (uniforms) makes distinction thereof from the user avatars m1easy.

Thus, in the present embodiment, in basic terms, one of the user IDs is associated with one of the general users, and one of the user IDs is associated with the user avatar ID. Accordingly, a state where certain information is associated with one of the general users, a state where the information is associated with the relevant one of the user IDs, and a state where the information is associated with the user avatar ID associated with the relevant one of the user IDs, are synonymous. This applies to the staff user in the same way. Accordingly, for example, unlike the example illustrated inFIG.6, the position/orientation information on the user avatar m1may be stored associated with the user avatar ID relating to the user avatar m1, and, in the same way, the position/orientation information on the staff avatar m2may be stored associated with the staff avatar ID relating to the staff avatar m2. In the description below, the general user and the user avatar m1associated with the general user, are in a relationship in which each can be read as the other; and the staff user and the staff avatar m2associated with the staff user, are also in a relationship in which each can be read as the other.

Ticket information relating to a ticket is stored in the ticket information memory unit 144. The ticket is a virtual reality medium indicating a movement authority of the user avatar m1 to move to a designated position within a virtual space. As one example in the present embodiment, the ticket takes a form that can be torn according to a ticket-taking operation input by the staff user. Here, the acts of “ticket-taking” and “taking a ticket” basically indicate that staff receive a ticket from a general user, who is a customer, at an entrance, or the like, of an event venue, or the like, and then tear off a stub from the ticket. The ticket being torn off enables the general user to gain admission to the event venue. Note that, in a modified example, in place of ticket-taking, the ticket may take a form where a stamp, mark, or the like, indicating the ticket has “been used” is applied thereupon.

In the example shown inFIG.8, the ticket information associates a designated position, an owner ID, purchase information, transfer authentication information, ticket-taking authentication information, transfer information, validity flag, a ticket-taker ID, ticket-taking information, and the like, to a ticket ID.

The ticket ID is a unique ID assigned to each ticket.

The designated position indicates a position that can be positioned within the virtual space based on a movement authority relating to a ticket. The designated position includes a position at which the provision of a specific content can be received. The designated position may be defined by a coordinate value of one point, but is typically defined by a plurality of coordinate values forming a group of regions or spaces. Furthermore, the designated position may be a position on a flat surface or a position in space (that is, a position represented by a 3D coordinate system that includes a height direction). Typically, the designated position may be set for each specific content according to a position for providing, or an attribute of, the specific content. For example, in the examples illustrated inFIG.2AthroughFIG.2C, the designated position is a position within the virtual space that can be entered through each gate. The designated position may be defined by a specific Uniform Resource Locator (URL). In this case, the general user, or the like, can move the user avatar m1, or the like, to the designated position by accessing a specific URL. In this case, the general user can access the specific URL to receive provision of the specific content using a browser on the terminal device20.
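As a minimal sketch, a designated position defined by a group of regions may be modeled as a set of axis-aligned boxes in a 3D coordinate system, optionally paired with a URL. The box representation, the class names, and the example URL are assumptions for illustration, not a definition taken from the embodiment.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float, float]


@dataclass(frozen=True)
class Box:
    lo: Point  # minimum corner (x, y, z)
    hi: Point  # maximum corner (x, y, z)

    def contains(self, p: Point) -> bool:
        return all(l <= c <= h for l, c, h in zip(self.lo, p, self.hi))


@dataclass(frozen=True)
class DesignatedPosition:
    regions: Sequence[Box]
    url: Optional[str] = None  # set when the position is defined by a URL

    def contains(self, p: Point) -> bool:
        """True when p lies in any of the regions forming the designated position."""
        return any(box.contains(p) for box in self.regions)


venue = DesignatedPosition(
    regions=(Box((0.0, 0.0, 0.0), (10.0, 3.0, 10.0)),),
    url="https://example.com/rooms/venue-a",  # hypothetical URL
)
```

With this representation, `venue.contains((5.0, 1.0, 5.0))` evaluates to true for a position inside the gate, while a position outside every region evaluates to false.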

Note that it is preferable that the ticket information shown inFIG.8be prepared with a plurality of types of designated positions such as, for example, the designated position inside a gate like that illustrated inFIG.2Aor the designated position inside a gate like that illustrated inFIG.2B. In cases where there is only one type of designated position, the designated position in the ticket information may be omitted.

The owner ID corresponds to the user ID relating to the general user holding the ticket at the present time. Since, as described above, the ticket is transferable, the owner ID can be changed after the fact.

The purchase information shows purchaser ID, purchase date and time, purchase method, seller ID, and the like. Note that the purchaser ID is the user ID associated with a user who has made a purchasing input. The seller ID is the staff ID of the staff user who sold the ticket. Note that in a case where the ticket is not sold face-to-face by the staff user within the virtual space (e.g., when the ticket is sold in advance), the seller ID may be omitted.

The transfer authentication information is authentication information that is required for transfer and that differs by ticket ID.

The ticket-taking authentication information is authentication information for authenticating that the ticket is legitimate, and differs by ticket ID. Although the ticket-taking authentication information may take any form, as an example in the present embodiment, the form thereof is a four-digit code consisting of numbers and/or symbols. Note that the ticket-taking authentication information may take the form of a pre-issued unique identifier. Furthermore, the ticket-taking authentication information may be set by the user who purchased the ticket.

The transfer information indicates the presence or absence of one or more transfers, and may also indicate transfer date, time, and the like. Note that “−” as shown inFIG.8indicates that no transfer has taken place.

The validity flag is flag information indicating the validity of the ticket. As one example in the present embodiment, the ticket is valid when the validity flag is “1,” and invalid when the validity flag is “0.” A state where the ticket is valid corresponds to a state where the user avatar m1associated with the ticket can move to the designated position associated with the ticket (and to an accompanying state where the user can receive provision of a specific content at the designated position).

The validity of the ticket may be set according to each ticket attribute. For example, a ticket with a certain attribute may be invalidated when taken (or when arriving at the designated position immediately thereafter). Furthermore, a ticket with a different attribute may be invalidated once a designated period of time has passed since the ticket was taken. Furthermore, a ticket with a different attribute may be invalidated once separated from the designated position after being taken. Or a mechanism that enables re-admission using the same ticket may also be realized. In this case, the validity of the ticket may be maintained until the designated period of time has passed since the ticket was taken. Or, the ticket may be invalidated when a number of movements (admissions) to the designated position equal to, or exceeding, a designated number are detected.
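The attribute-dependent invalidation rules above may be sketched as a single function that returns the validity flag. The attribute names, the parameters, and the treatment of a ticket that has not yet been taken are assumptions made for this sketch.

```python
from enum import Enum, auto
from typing import Optional


class Attribute(Enum):
    SINGLE_USE = auto()       # invalidated when taken
    TIME_LIMITED = auto()     # invalidated a designated period after being taken
    UNTIL_EXIT = auto()       # invalidated on leaving the designated position
    ADMISSION_COUNT = auto()  # invalidated after a designated number of admissions


def validity_flag(attribute: Attribute, *, taken_at: Optional[float], now: float,
                  inside: bool = False, admissions: int = 0,
                  period: float = 0.0, max_admissions: int = 1) -> int:
    """Return 1 (valid) or 0 (invalid) for an already activated ticket."""
    if taken_at is None:
        return 1  # assumption: the ticket stays valid until it is taken
    if attribute is Attribute.SINGLE_USE:
        return 0
    if attribute is Attribute.TIME_LIMITED:
        return 1 if now - taken_at < period else 0
    if attribute is Attribute.UNTIL_EXIT:
        return 1 if inside else 0
    if attribute is Attribute.ADMISSION_COUNT:
        return 1 if admissions < max_admissions else 0
    raise ValueError(f"unknown attribute: {attribute}")
```

Under this sketch, re-admission with the same ticket corresponds to the `TIME_LIMITED` or `ADMISSION_COUNT` attributes, since the flag remains "1" after the ticket is taken until the respective limit is reached.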

The ticket-taker ID is the staff avatar ID associated with the staff user who took the ticket. In the present embodiment, the ticket may be taken without the direct involvement of the staff user. In this case, information indicating that the ticket was taken automatically may be stored as the ticket-taker ID.

The ticket-taking information indicates whether or not the ticket was taken, and may also indicate the date, time, and the like, the ticket was taken. Note that “−” as shown inFIG.8indicates the ticket was not taken.
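Taken together, the items of ticket information described above may be sketched as one record per ticket ID. The field names and types are assumptions for illustration; a field left as `None` (or an empty list) stands for a state in which no information is stored.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class PurchaseInfo:
    purchaser_id: str
    purchased_at: str                # purchase date and time
    method: str                      # purchase method
    seller_id: Optional[str] = None  # omitted when not sold face-to-face


@dataclass
class TicketInfo:
    ticket_id: str
    owner_id: str                    # may change after a transfer
    purchase: PurchaseInfo
    transfer_auth: str               # transfer authentication information
    taking_auth: str                 # ticket-taking authentication information
    designated_position: Optional[str] = None  # may be omitted if only one type
    transfers: List[str] = field(default_factory=list)  # transfer dates and times
    validity_flag: int = 1
    ticket_taker_id: Optional[str] = None
    taken_at: Optional[str] = None

    @property
    def transferred(self) -> bool:
        return bool(self.transfers)

    @property
    def taken(self) -> bool:
        return self.taken_at is not None
```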

Spatial state information relating to a state within a space relating to the designated position in the virtual space is stored in the spatial state memory unit146. Note that, in the following, the space relating to the designated position in the virtual space is defined as a room that can be defined by the general user with a URL. A user accessing the same room is managed as a session linked to the same room. An avatar entering the space relating to the room may be expressed as entry to the room. Although the number of users that can gain access to one room at a time may be limited by processing capacity, processing that creates a plurality of rooms with the same settings to disperse the processing load is permitted. Furthermore, entry to the room may be managed based on access authority, or the like, and processes that confirm possession of, or require consumption of, a valid ticket are permitted. In the example illustrated inFIG.9, the spatial state information includes user state information900relating to a general user, and staff state information902relating to a staff user.
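As a non-limiting sketch of dispersing the processing load, a plurality of rooms with the same settings may be created on demand when the room being filled reaches its limit. The class name, the capacity value, and the example URL are assumptions for illustration.

```python
from typing import List, Set


class RoomPool:
    """Rooms sharing one URL and one set of settings, created on demand."""

    def __init__(self, url: str, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.url = url
        self.capacity = capacity
        self.instances: List[Set[str]] = [set()]

    def enter(self, user_id: str) -> int:
        """Place the user's session in a room and return the room index."""
        for index, members in enumerate(self.instances):
            if user_id in members:
                return index  # the session is already linked to this room
        for index, members in enumerate(self.instances):
            if len(members) < self.capacity:
                members.add(user_id)
                return index
        self.instances.append({user_id})  # every room is full: add a new one
        return len(self.instances) - 1


pool = RoomPool("https://example.com/rooms/live", capacity=2)  # hypothetical URL
assigned = [pool.enter(u) for u in ("U1", "U2", "U3")]
```

Here the third user is placed in a second room because the first room has reached its assumed capacity of two.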

The user state information 900 associates the ticket ID and information on content to be provided, and the like, with an entering user. The entering user is the general user relating to the user avatar m1 positioned in the designated position, and information on the entering user may be any information (the user ID, the user avatar ID, or the like) that can be used to specify the general user. Note that, for the entering user, the position information of the user avatar m1 corresponds to the designated position (one of a plurality of coordinate values when this position is defined by said plurality of coordinate values). In other words, in a case where the position information of one user avatar m1 does not correspond to the designated position, the general user relating to the one user avatar m1 is excluded from being the entering user. The ticket ID of the user state information 900 represents the ticket ID associated with the ticket used when the entering user enters the room. The information on content to be provided may include information on whether the provision of a specific content has been received, or information on whether the provision of the specific content is being received. Furthermore, the information on content to be provided relating to a virtual space where a plurality of specific content can be provided may include historical information showing which provided specific content has been received or information on which specific content is being received. The user state information 900 may include information such as “Validated_At” (date and time it was confirmed that the ticket was valid) or “Expired_On” (date and time the ticket expired), and the like.
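The determination of the entering user may be sketched as follows, assuming for illustration that the designated position is defined by a plurality of discrete coordinate values and that the ticket used on entry is known per user. The function and parameter names are hypothetical.

```python
from typing import Dict, Optional, Set, Tuple

Cell = Tuple[int, int, int]


def user_state_information(avatar_positions: Dict[str, Cell],
                           designated_cells: Set[Cell],
                           ticket_used: Dict[str, str]) -> Dict[str, Dict[str, Optional[str]]]:
    """Keep only users whose avatar position matches one of the coordinate values."""
    return {
        user_id: {"ticket_id": ticket_used.get(user_id)}
        for user_id, position in avatar_positions.items()
        if position in designated_cells
    }


state = user_state_information(
    avatar_positions={"U001": (1, 0, 1), "U002": (9, 0, 9)},
    designated_cells={(0, 0, 0), (1, 0, 1)},
    ticket_used={"U001": "T001"},
)
```

In this example only "U001" is treated as an entering user, since the position of the avatar of "U002" does not correspond to the designated position.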

The staff state information902includes information on operation staff. The operation staff is the staff user relating to the staff avatar m2positioned in the designated position, and the information on operation staff may be any information (the staff ID, the staff avatar ID, or the like) that can be used to specify the staff user.

The space rendering processor150renders the virtual space based on the virtual space rendering information. Note that, despite being generated in advance, the virtual space rendering information may be updated, or the like, either after the fact or dynamically. Each position within the virtual space may be defined using a spatial coordinate system. Note that although any method for rendering the virtual space is acceptable, rendering may be realized by, for example, mapping a field object, or a background object, on an appropriate flat surface, curved surface, or the like.

The user avatar processor152executes various types of processes relating to the user avatar m1. The user avatar processor152includes an operation input acquiring unit1521and a user operation processor1522.

The operation input acquiring unit1521acquires operation input information from the general user. Note that the operation input information from the general user is generated through the input unit24of the terminal device20described above.

The user operation processor1522determines the position and orientation of the user avatar m1in the virtual space based on the operation input information acquired by the operation input acquiring unit1521. The position/orientation information on the user avatar m1determined by the user operation processor1522may be stored associated with, for example, the user ID (seeFIG.6). The user operation processor1522may also determine various types of movement of a hand, a foot, or the like, of the user avatar m1based on the operation input information. In this case, information on such movement may also be stored together with the position/orientation information on the user avatar m1.

Here, the function of the user operation processor1522, as described above, may be realized by the terminal device20instead of the server device10. For example, movement within the virtual space may be realized by a mode in which acceleration, collision, and the like, are expressed. In this case, although each user can make the user avatar m1jump by pointing to (indicating) a position, a decision with respect to a restriction relating to a wall surface or a movement may be realized by the terminal controller25(the user operation processor1522). In this case, the terminal controller25(user operation processor1522) processes this decision based on restriction information provided in advance. Note that, in this case, the position information may be shared with a required other user through the server device10by means of real time communication based on a WebSocket, or the like.

The staff avatar processor154executes various types of processes relating to the staff avatar m2. The staff avatar processor154includes an operation input acquiring unit1541and a staff operation processor1542.

The operation input acquiring unit1541acquires operation input information from the staff user. Note that the operation input information from the staff user is generated through the input unit24of the terminal device20described above.

The staff operation processor1542determines the position and orientation of the staff avatar m2in the virtual space based on the operation input information acquired by the operation input acquiring unit1541. The position/orientation information on the staff avatar m2, the position and orientation of which have been determined by the staff operation processor1542, may be stored associated with, for example, the staff ID (seeFIG.6). The staff operation processor1542may also determine various types of movement of a hand, a foot, or the like, of the staff avatar m2based on the operation input information. In this case, information on such movement may also be stored together with the position/orientation information on the staff avatar m2.

The terminal image generator158renders each of the virtual reality media (e.g., the user avatar m1and the staff avatar m2) that are able to move within the virtual space. Specifically, the terminal image generator158generates an image relating to each user displayed by means of the terminal device20based on the avatar rendering information (seeFIG.7), the position/orientation information on each of the user avatars m1, and the position/orientation information on the staff avatar m2.

For example, the terminal image generator158generates, for each of the user avatars m1, an image (hereinafter referred to as a “general user terminal image” when distinguished from a staff user terminal image to be described later) displayed by means of the terminal device20relating to a general user associated with one of the user avatars m1, based on the position/orientation information on this one of the user avatars m1. Specifically, the terminal image generator158generates an image (an image with part of the virtual space cut out) of the virtual space as seen from a virtual camera having a position and orientation corresponding to position/orientation information based on the position/orientation information of one of the user avatars m1as a terminal image. In this case, when the virtual camera is positioned and oriented corresponding to the position/orientation information, a field of view of the virtual camera substantially matches a field of view of the user avatar m1. However, in this case, the user avatar m1is not reflected in the field of view from the virtual camera. Accordingly, when the terminal image in which the user avatar m1is reflected is generated, a position of the virtual camera may be set behind the user avatar m1. Or, the position of the virtual camera may be adjusted in any way by the corresponding general user. Note that, when the terminal image is generated, the terminal image generator158may execute various types of processes (e.g., a process that bends a field object, or the like) to provide a sense of depth, and the like. Furthermore, in a case where a terminal image in which the user avatar m1is reflected is generated, the user avatar m1may be rendered by a relatively simple mode (e.g., in the form of a 2D sprite) in order to reduce a rendering process load.
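The placement of the virtual camera described above may be sketched as follows, assuming for illustration that the orientation is given as a yaw angle about the vertical axis, with y as the height direction. With a distance of zero the camera coincides with the user avatar m1, and a positive distance moves the camera behind the user avatar m1 so that the avatar falls within the field of view. The function name and parameters are hypothetical.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def virtual_camera(position: Vec3, yaw: float,
                   distance: float = 0.0, height: float = 0.0) -> Tuple[Vec3, float]:
    """Return the camera position and yaw for an avatar at `position` facing `yaw`.

    yaw is in radians; yaw = 0 faces the +z direction (assumed convention).
    """
    forward_x, forward_z = math.sin(yaw), math.cos(yaw)
    x, y, z = position
    camera_position = (x - distance * forward_x,
                       y + height,
                       z - distance * forward_z)
    return camera_position, yaw  # the camera looks in the avatar's direction
```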

Furthermore, in the same way, the terminal image generator158generates an image (hereinafter referred to as a “staff user terminal image” when distinguished from the general user terminal image described above) displayed by means of the terminal device20relating to a staff user associated with one of the staff avatars m2, based on the position/orientation information on this one of the staff avatars m2.

In a case where another of the user avatars m1 or staff avatars m2 is positioned within the field of view from the virtual camera, the terminal image generator 158 generates a terminal image that includes the other user avatar m1 or staff avatar m2. However, in this case, the other user avatar m1 or staff avatar m2 may be rendered by means of a relatively simple mode (e.g., in the form of a 2D sprite) in order to reduce the rendering process load.

Here, the function of the terminal image generator158, as described above, may be realized by the terminal device20instead of the server device10. For example, in this case, the terminal image generator158receives the position/orientation information generated by the staff avatar processor154of the server device10, information (e.g., the user avatar ID or the staff avatar ID) that can be used to identify an avatar to be rendered, and the avatar rendering information (seeFIG.7) relating to the avatar to be rendered from the server device10, and renders an image of each avatar based on the received information. In this case, the terminal device20may store part information for rendering each part of the avatar in the terminal memory unit22, and then render the appearance of each avatar based on the part information and avatar rendering information (ID of each part) to be rendered acquired from the server device10.

It is preferable that the terminal image generator158render the general user terminal image and the staff user terminal image by different modes. In this case, the general user terminal image and the staff user terminal image are rendered by different modes even when the position/orientation information on the user avatar m1and the position/orientation information on the staff avatar m2match perfectly. For example, the terminal image generator158may superimpose information that is not normally visible (e.g., information on the ticket validity flag), which is information useful for fulfilling various types of roles granted to the staff user, onto the staff user terminal image. Furthermore, the terminal image generator158may render the user avatar m1associated with, and the user avatar m1not associated with, the owner ID by different modes based on the ticket information (seeFIG.8) in the staff user terminal image. In this case, the staff user can easily distinguish between the user avatar m1associated with, and the user avatar m1not associated with, the owner ID.

The content processor159provides specific content to the general user at each designated position. The content processor159may output the specific content on the terminal device20through, for example, a browser. Or, the content processor159may output the specific content on the terminal device20through a virtual reality application installed on the terminal device20.

The dialogue processor 160 enables dialogue among the general users through the network 3 based on input from a plurality of the general users. The dialogue among the general users may be realized in text and/or voice chat format through each general user's own user avatar m1. This enables general users to converse with one another. Note that text is output to the display 23 of the terminal device 20. Note that text may be output separate from the image relating to the virtual space, or may be output superimposed on the image relating to the virtual space. Dialogue by text output to the display 23 of the terminal device 20 may be realized in a format that is open to an unspecified number of users, or in a format open only among specific general users. This also applies to voice chat.

The dialogue processor160enables dialogue between the general user and the staff user through the network3based on input from the general user and input from the staff user. The dialogue may be realized in text and/or voice chat format through the corresponding user avatar m1and staff avatar m2. This enables the general user to receive assistance from the staff user in real time.

The first movement authority processor162generates the ticket (designated movement authority information) based on the purchasing input (an example of the first input), and then associates the ticket with the user avatar m1associated with the general user.

The purchasing input includes various types of input for purchasing the ticket. The purchasing input typically corresponds to the consumption of money or a virtual reality medium having monetary value. The virtual reality medium having monetary value may include a virtual reality medium, or the like, that can be obtained in conjunction with the consumption of money. Note that the consumption of the virtual reality medium refers to the removal of an association between the user ID and the virtual reality medium, and may be realized by reducing, or the like, the volume or number of the virtual reality medium that had been associated with the user ID.

When the ticket is issued, the first movement authority processor162generates a new ticket ID (seeFIG.8) and updates the data in the ticket information memory unit144. In this case, the designated position, the owner ID, and the like, are associated with the new ticket ID (seeFIG.8). In this case, the owner ID becomes the user ID relating to the user who made the purchasing input described above.

Specifically, the first movement authority processor162includes a purchasing input acquiring unit1621, a ticket ID generator1622, an authentication information notifier1623, and a ticket rendering unit1624.

The purchasing input acquiring unit1621acquires the purchasing input from the general user described above from the terminal device20through the network3.

In a case where the user avatar m1 is in an entrance vicinity relating to the designated position, the purchasing input can be input by the general user associated with the user avatar m1. Although it is not necessary to clearly stipulate the entrance relating to the designated position in the virtual space, the entrance may be associated with a position corresponding to an entrance in the virtual space by rendering characters spelling “entry” or “gate.” For example, in the examples illustrated in FIG. 2A through FIG. 2C, the position SP1 relating to a ticket purchasing area, the position SP2 relating to an entrance area, and a vicinity of these correspond to an entrance vicinity.

In this case, the general user wishing to purchase the ticket can move their own user avatar m1 to the entrance vicinity and make the purchasing input through dialogue with the staff avatar m2 arranged in association with the position SP1.

Or, the purchasing input by the general user may be made possible in advance (before the user avatar m1is positioned in the entrance vicinity relating to the designated position). In this case, the general user who has purchased the ticket in advance can move their own user avatar m1to the entrance vicinity and activate the advance purchasing input through dialogue with the staff avatar m2arranged in association with the position SP1. Note that the advance purchasing input may be activated automatically (that is, without requiring any additional input) when the general user who has purchased the ticket in advance moves their own user avatar m1to the entrance vicinity (by searching for the input using the user ID and the ticket ID linked therewith).

As described above, the ticket ID generator1622generates a new ticket ID (seeFIG.8) and updates the data in the ticket information memory unit144based on the purchasing input. For example, when the general user, wishing to purchase the ticket, makes the purchasing input in a state where their own user avatar m1has been positioned in the entrance vicinity, the ticket ID generator1622immediately generates the new ticket ID. In this case, an initial value of the validity flag associated with the ticket ID may be set to “1.” Furthermore, when the general user who has purchased the ticket in advance performs an activating input in a state where their own user avatar m1has been positioned in the entrance vicinity, the ticket ID generator1622may update the value of the validity flag already associated with the ticket ID from “0” to “1.”
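The two paths described above (immediate purchase in the entrance vicinity, and advance purchase followed by activation) can be sketched as follows. This is a minimal illustration only: the class `TicketStore`, the record fields, and the ticket ID format are hypothetical names chosen for the example, and the store merely stands in for the ticket information memory unit 144.

```python
from dataclasses import dataclass
from itertools import count
from typing import Dict


@dataclass
class TicketRecord:
    ticket_id: str
    purchaser_id: str
    owner_id: str
    validity_flag: int  # 1 = valid, 0 = purchased in advance, not yet activated


class TicketStore:
    """Hypothetical stand-in for the ticket information memory unit 144."""

    def __init__(self) -> None:
        self._records: Dict[str, TicketRecord] = {}
        self._sequence = count(1)

    def purchase(self, user_id: str, in_entrance_vicinity: bool) -> TicketRecord:
        # A new ticket ID is generated for every purchasing input.
        ticket_id = f"T{next(self._sequence):06d}"  # assumed ID format
        # Purchase at the entrance: flag starts at 1. Advance purchase: 0.
        flag = 1 if in_entrance_vicinity else 0
        record = TicketRecord(ticket_id, user_id, user_id, flag)
        self._records[ticket_id] = record
        return record

    def activate(self, ticket_id: str, user_id: str,
                 in_entrance_vicinity: bool) -> bool:
        # Activation updates the flag from 0 to 1 only when the owner's
        # avatar is positioned in the entrance vicinity.
        record = self._records.get(ticket_id)
        if record is None or record.owner_id != user_id:
            return False
        if not in_entrance_vicinity:
            return False
        record.validity_flag = 1
        return True
```

In this sketch the caller is assumed to have already decided, from the avatar position information, whether the user avatar m1 is in the entrance vicinity.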

Based on the purchasing input, the authentication information notifier1623reports the ticket-taking authentication information (seeFIG.8) associated with the purchased ticket to the general user who purchased the ticket. Note that, as described above, as an example in the present embodiment, the form of the ticket-taking authentication information is a four-digit code consisting of numbers and/or symbols. For example, the authentication information notifier1623sends the ticket-taking authentication information through the network3to the terminal device20relating to the general user who purchased the ticket. At this time, the ticket-taking authentication information may be reported using automated audio, or the like, by email or telephone. Or, as described above, the ticket-taking authentication information may be set by the general user when making the purchasing input. In this case, the authentication information notifier1623may be omitted.
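As an illustration of the four-digit form mentioned above, the ticket-taking authentication information could be generated on the server side as sketched below. The symbol set in `ALPHABET` and the function name are assumptions made for this example; the disclosure states only that the code consists of numbers and/or symbols.

```python
import secrets
import string

# Assumed character set: decimal digits plus a few symbols.
ALPHABET = string.digits + "#*@&"


def generate_ticket_taking_code(length: int = 4) -> str:
    """Return a random code drawn from ALPHABET using a CSPRNG."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))
```

The `secrets` module is used here instead of `random` because the code serves as authentication information.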

The ticket rendering unit1624renders a ticket (virtual reality medium) for each ticket ID based on the purchasing input. For example, the ticket rendering unit1624may render the ticket in association with a hand of the user avatar m1relating to the owner ID in the terminal image that includes the user avatar m1relating to the owner ID. In this way, a state where the user avatar m1holds (owns) the ticket can be realized in the virtual reality. Note that, in a case where a plurality of ticket IDs are associated with the same owner ID, the user avatar m1relating to the owner ID may be rendered in a state that indicates the plurality of tickets are being held.

The second movement authority processor 164 switches the association of the ticket, which the first movement authority processor 162 has associated with the user ID relating to a specific general user, to the user ID relating to a different general user based on a transferring input (an example of the second input) from the specific general user. That is, the second movement authority processor 164 changes the owner ID (see FIG. 8) associated with the ticket from the user ID associated with the purchaser ID to the user ID relating to a different transferee general user. In this way, the second movement authority processor 164 switches the ticket associated with the specific general user (purchaser ID) by the first movement authority processor 162 to a general user (transferee general user) who is different from the specific general user based on the transferring input. As a result, the ticket changes from a state of being associated with the user avatar m1 (an example of the first mobile medium) relating to the general user on a transferring side to a state of being associated with the user avatar m1 (an example of the second mobile medium) relating to the general user on a transfer receiving side based on the transferring input.

Specifically, the second movement authority processor164includes a transferring input acquiring unit1640, an authentication report guidance unit1641, a first authentication information rendering unit1642, a first authentication information receiver1643, and a ticket information rewriting unit1644.

The transferring input acquiring unit 1640 acquires the transferring input from the general user on the transferring side described above from the terminal device 20 through the network 3. The transferring input includes the ticket ID relating to the ticket to be transferred. Note that the general user who is able to make the transferring input is the general user who owns the ticket, that is, the general user who holds the user ID relating to the owner ID in the ticket information (see FIG. 8).

In a case where the user avatar m1 on the transferring side is in an entrance vicinity relating to the designated position, the transferring input can be input by the general user associated with the user avatar m1. The entrance vicinity relating to the designated position is as described above in relation to the purchasing input. For example, in the examples illustrated in FIG. 2A through FIG. 2C, the position SP1 corresponds to the entrance vicinity. Note that although the entrance vicinity relating to the designated position where the transferring input can be input and the entrance vicinity relating to the designated position where the purchasing input can be input are the same in these examples, they may also be different.

Or, the transferring input can be input by the general user together with the purchasing input. This is because, for example, in most cases where the ticket is purchased by a parent with a child, the parent purchases the ticket with the intent of transferring the ticket to their child.

In response to the transferring input described above, the authentication report guidance unit 1641 guides the general user on the transferring side to report the ticket-taking authentication information to the general user on the transfer receiving side. Note that this guidance may be realized either at the time of the purchasing input or at some other time. Furthermore, in a case where this fact is reported to all general users during use of the virtual reality generating system 1, the authentication report guidance unit 1641 may be omitted. Upon receiving this guidance, the general user on the transferring side reports the ticket-taking authentication information to the general user on the transfer receiving side by chat, email, Short Message Service (SMS), or the like. Note that in a case where the general user on the transferring side and the general user on the transfer receiving side are in a relationship where the same ticket-taking authentication information is used many times, like a relationship between a parent and a child, it may not be necessary to report the ticket-taking authentication information itself. Furthermore, in a case where the general user on the transferring side and the general user on the transfer receiving side are, in fact, near one another, the ticket-taking authentication information may be reported directly face-to-face.

The first authentication information rendering unit 1642 renders first authentication information corresponding to the transfer authentication information in response to the transferring input described above. Specifically, the first authentication information rendering unit 1642 detects the transfer authentication information (see FIG. 8) associated with the ticket ID based on the ticket ID relating to the ticket to be transferred. Then, the first authentication information rendering unit 1642 renders the first authentication information corresponding to the detected transfer authentication information. A method for rendering the first authentication information may be any method so long as the transfer authentication information and the rendered first authentication information match, but it is preferable that the first authentication information be rendered in a form that can be recognized via image recognition.

As one example in the present embodiment, the first authentication information rendering unit 1642 renders the first authentication information in the position associated with the user avatar m1 on the transferring side using a form of coded information such as a 2D code. For example, the first authentication information rendering unit 1642 renders the first authentication information in a state where said information is superimposed onto part of the user avatar m1 in the terminal image that includes the user avatar m1 on the transferring side. Or, the first authentication information rendering unit 1642 may render the first authentication information in association with a held item (e.g., the ticket) rendered in association with the user avatar m1 on the transferring side. In this way, the rendered first authentication information can be recognized via image recognition by the terminal device 20 relating to a different general user. For example, in a case where the first authentication information is included in the terminal image supplied to a certain terminal device 20, the first authentication information can be recognized via image recognition in the certain terminal device 20. This image recognition processing will be described in detail later with reference to FIG. 5.

Note that the coded information relating to the first authentication information may be a 2D image code that includes the following information.
(1) A URL of an authentication server
(2) The user ID on the transferring side
(3) The ticket ID
(4) A current time
(5) A URL of the current virtual space
(6) Current coordinates
(7) Personal Identification Number (PIN)
(8) The user ID on the transfer receiving side

In this case, the (7) PIN may be used as the ticket-taking authentication information. Also, in this case, a user on the transferring side can complete a second authenticating judgment (described later) by accessing the URL of the authentication server and inputting a PIN code.
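The eight items listed above can be pictured as a single payload that is serialized before being drawn as a 2D image code. The sketch below is an assumption-laden illustration: the field names, the use of JSON as the serialization format, and the helper functions are all hypothetical, and the step that actually draws the 2D code is omitted.

```python
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class TransferCodePayload:
    auth_server_url: str          # (1) URL of an authentication server
    transferor_user_id: str       # (2) user ID on the transferring side
    ticket_id: str                # (3) ticket ID
    current_time: str             # (4) current time, assumed ISO 8601 text
    space_url: str                # (5) URL of the current virtual space
    coordinates: Tuple[float, float, float]  # (6) current coordinates
    pin: str                      # (7) PIN
    transferee_user_id: str       # (8) user ID on the transfer receiving side


def encode_payload(payload: TransferCodePayload) -> str:
    """Serialize the payload to text that a 2D code could carry."""
    return json.dumps(asdict(payload), sort_keys=True)


def decode_payload(text: str) -> TransferCodePayload:
    """Rebuild the payload from text recovered by image recognition."""
    data = json.loads(text)
    data["coordinates"] = tuple(data["coordinates"])  # JSON has no tuples
    return TransferCodePayload(**data)
```

A terminal device that recognizes the code would, under these assumptions, call `decode_payload` on the recovered text and forward the result to the server.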

Note that, as described above, the function of the first authentication information rendering unit1642may be realized on the terminal device20side. In this case, the terminal device20may, for example, include a Graphics Processing Unit (GPU), and the GPU may generate an image code relating to the first authentication information.

Furthermore, the coded information relating to the first authentication information need not be visible to the user. Accordingly, the coded information relating to the first authentication information may include, for example, a code that fluctuates at ultra-high-speed using time information, a high-resolution code, a code of a hard-to-see color, a code that confuses using a pattern of clothing worn by the user avatar m1, or the like.

The first authentication information receiver1643receives first authentication information image recognition results from the terminal device20through the network3. The first authentication information image recognition results include the user ID, and the like, associated with the sending terminal device20.

When the first authentication information receiver1643receives image recognition results, the ticket information rewriting unit1644rewrites the owner ID of the ticket information based on the image recognition results. Specifically, when the first authentication information receiver1643receives the image recognition results from the terminal device20associated with a certain general user, the ticket information rewriting unit1644specifies the transfer authentication information corresponding to the first authentication information based on the first authentication information of the image recognition results. Furthermore, the ticket information rewriting unit1644associates the user ID included in the image recognition results with the owner ID associated with the ticket ID relating to the specified transfer authentication information. At this time, in a state where the user ID relating to the user who made the transferring input is associated with the owner ID, a fact of the transfer may be added to the transfer information (seeFIG.8) of the ticket information.
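The rewriting step described above can be sketched as a lookup by transfer authentication information followed by an owner ID update. The names `Ticket`, `transfer_log`, and `apply_recognition_result` are hypothetical, and the in-memory dictionary stands in for the ticket information of FIG. 8.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Ticket:
    ticket_id: str
    owner_id: str
    transfer_auth_info: str
    transfer_log: List[str] = field(default_factory=list)


def apply_recognition_result(tickets: Dict[str, Ticket],
                             recognized_auth_info: str,
                             sender_user_id: str) -> Optional[Ticket]:
    """Rewrite the owner ID of the ticket matching the recognized code.

    Returns the updated ticket, or None when no ticket matches.
    """
    for ticket in tickets.values():
        if ticket.transfer_auth_info == recognized_auth_info:
            previous_owner = ticket.owner_id
            # The user ID reported with the image recognition results
            # becomes the new owner ID.
            ticket.owner_id = sender_user_id
            # Record the fact of the transfer in the transfer information.
            ticket.transfer_log.append(f"{previous_owner}->{sender_user_id}")
            return ticket
    return None
```

A production design would also need to guard against replayed or stale recognition results; that concern is outside this sketch.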

When the owner ID relating to one ticket ID is changed based on the image recognition results in this way, the ticket information rewriting unit1644may instruct the ticket rendering unit1624to reflect the change. In this case, the ticket rendering unit1624renders the ticket in association with the user avatar m1relating to the new owner ID. For example, the general user can recognize a state in which the ticket is being held in the terminal image for the user displayed on their own terminal device20by confirming a state wherein the ticket is rendered in association with their own user avatar m1.

The judging processor166can judge whether the user avatar m1can move to the designated position based on the ticket information and the ticket-taking authentication information.

Specifically, the judging processor166includes a ticket holding judgment unit1661, a second authentication information receiver1662, a second authenticating judgment unit1663, and a movability judgment unit1664.

The ticket holding judgment unit 1661, when judging whether a given user avatar m1 can move toward the designated position, first judges whether the user avatar m1 is holding a ticket that permits movement to the designated position. This type of judging process is also referred to as a “ticket holding judgment” below.

The ticket holding judgment can be realized based on the ticket information shown in FIG. 8. In this case, the general user (or the user avatar m1 thereof) for which the user ID is the owner ID can be judged as holding a ticket with the ticket ID to which the owner ID is associated. Furthermore, in a case where the validity flag “1” is associated with the ticket ID of the ticket, the ticket can be judged to be a ticket that permits movement to the designated position.
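The ticket holding judgment can be sketched as a predicate over the ticket information. The record layout below is an assumption for illustration; in particular, the `designated_position` field is a hypothetical way of tying a ticket to the position it admits to.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class TicketInfo:
    ticket_id: str
    owner_id: str
    designated_position: str  # assumed identifier of the admitted position
    validity_flag: int        # 1 = valid


def holds_valid_ticket(user_id: str, designated_position: str,
                       tickets: Iterable[TicketInfo]) -> bool:
    """Ticket holding judgment: owner ID matches and validity flag is 1."""
    return any(
        t.owner_id == user_id
        and t.designated_position == designated_position
        and t.validity_flag == 1
        for t in tickets
    )
```

For example, a user whose only ticket still has validity flag 0 (purchased in advance but not activated) would fail this judgment.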

The ticket holding judgment is preferably executed with respect to the user avatar m1 positioned at the entrance area relating to the designated position based on the position information (see FIG. 6) of the user avatar m1. The entrance area relating to the designated position may be, for example, the position SP2 in the examples illustrated in FIG. 2A through FIG. 2C, or in the vicinity thereof. The entrance area relating to the designated position is the area in which the user avatar m1 to be moved to the designated position is to be positioned, and thus positioning the user avatar m1 in the entrance area may be used to indicate an intention of the general user to move the user avatar m1 to the designated position. Or, in addition to positioning the user avatar m1 in the entrance area, a ticket presenting operation, or the like, may be treated as indicating the intention of the general user to move the user avatar m1 to the designated position.